From YouTube: IETF101-LWIG-20180321-1520

Description

LWIG meeting session at IETF101

2018/03/21 1520

https://datatracker.ietf.org/meeting/101/proceedings/

A

Okay, Rahul, thanks. So here's the working group status. We had two drafts that cleared the IETF last call and all the IESG comments, and they are now in the RFC Editor queue. The third draft is also with the RFC Editor, but it has a missing reference to SenML, so it will also move ahead at some point.

C

So basically, this part is mostly a reorganization of content, with just a small new subsection, for Sections 4 and 5. Okay, so let's go through the updates in this last revision. The first update is in Section 1, the introduction, where there is a new paragraph: some text intended to address a comment by Hannes in Singapore on the reasons why TCP is perceived as a not-so-good, or a bad, protocol for IoT scenarios. We've also added a reference to a more detailed document on this topic.

C

Well, multicast is important in some IoT applications, and TCP also provides an always-confirmed data delivery service. For example, you may compare this with what happens in CoAP, where a sender may choose whether an acknowledgment is required for a message or not. This flexibility can be useful for some scenarios and applications, especially applications where you can tolerate some loss ratio: you can just skip waiting for an acknowledgment, and by that you can get some energy savings for energy-constrained devices.
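The per-message choice in CoAP shows up directly in the message header. The following is a minimal sketch, assuming the fixed 4-byte header layout and type codes of RFC 7252; the helper name `coap_header` and the message ID are illustrative and not taken from the talk.

```python
import struct

# Message type codes from RFC 7252, Section 3.
CON, NON, ACK, RST = 0, 1, 2, 3
GET = 0x01  # request code 0.01


def coap_header(msg_type: int, code: int, message_id: int, token: bytes = b"") -> bytes:
    """Build the fixed 4-byte CoAP header followed by the token (hypothetical helper)."""
    if len(token) > 8:
        raise ValueError("CoAP tokens are at most 8 bytes")
    version = 1
    # First byte: 2-bit version, 2-bit type, 4-bit token length.
    first = (version << 6) | (msg_type << 4) | len(token)
    return struct.pack("!BBH", first, code, message_id) + token


# The sender picks per message whether it wants an acknowledgment.
confirmable = coap_header(CON, GET, 0x1234)      # receiver must ACK
non_confirmable = coap_header(NON, GET, 0x1234)  # no ACK expected
```

Only the type bits differ between the two messages; with TCP there is no equivalent switch, since every segment is subject to acknowledgment.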

C

However, for TCP this flexibility does not exist. On the other hand, there have been many typical reasons for criticizing TCP which we believe are invalid or unfair. One of the most typical ones is the complexity of TCP. It is true that there are many mechanisms defined for TCP. However, it is also true that much of the functionality is optional, so not all of that functionality is required for interoperability, and in fact it's possible to implement TCP in quite a simple way, for example with an implementation oriented to a window size of a single MSS.
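To make the single-MSS trade-off concrete, here is a small sketch of what such an implementation costs and saves. It assumes an idealized sender that keeps exactly one segment in flight per round trip; the function names and the numbers in the usage note are illustrative, not from the draft.

```python
def single_mss_throughput(mss_bytes: int, rtt_seconds: float) -> float:
    """Upper bound on throughput (bytes/s) with one MSS in flight per RTT."""
    if rtt_seconds <= 0:
        raise ValueError("RTT must be positive")
    return mss_bytes / rtt_seconds


def send_buffer_bytes(mss_bytes: int, window_segments: int = 1) -> int:
    """Memory needed to hold unacknowledged data for retransmission."""
    return mss_bytes * window_segments
```

With a hypothetical MSS of 100 bytes and an RTT of 0.5 s, the bound is 200 bytes per second, while the retransmission buffer only ever holds 100 bytes. That is the simplicity-for-throughput exchange a single-MSS design makes.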

C

Secondly, another claim that sometimes appears in the literature is that the connection-oriented approach of TCP is incompatible with radio duty cycling. The latter is a technique that's quite typically used in low-power wireless technologies for energy-constrained devices. It is based on keeping the constrained device in sleep mode during some intervals, or maybe by default, and waking it up occasionally for communication. The point is that there is no actual problem with having the constrained device in sleep mode for some intervals.

C

Okay. Well, the device is in a TCP connection, so the consequence of having radio duty cycling in place is that delivering data to the sleepy device will sometimes incur some additional delay. However, if radio duty cycling is suitably configured, there is no further problem than that. For example, when the device goes to sleep, it does not necessarily break the connection.

Another reason for criticizing TCP is actually a bit more general and has been well known for quite some time: the performance of TCP over wireless links, for example. It is true that TCP activates congestion control mechanisms whenever there is a packet loss, and the problem is that sometimes packet losses happen not due to congestion but due to corruption, for example with high bit error rates, which are quite typical in IoT scenarios. So the problem here is that TCP behaves quite conservatively, assuming that any loss is due to congestion, and therefore there will be this underperformance.

C

However, the main point here is that this problem is not specific to TCP. The actual problem is that the transport layer protocol doesn't know the reason for a packet loss. So this problem will also happen in other protocols, for example in CoAP: it is the same issue.
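As a rough illustration of how link corruption alone produces losses that any transport would see, the sketch below converts a bit error rate into a packet loss probability. It assumes independent bit errors and no link-layer retransmission or error correction; the frame size and bit error rate in the usage note are hypothetical.

```python
def packet_loss_probability(bit_error_rate: float, packet_bytes: int) -> float:
    """Probability that at least one bit of the packet is corrupted."""
    if not 0.0 <= bit_error_rate <= 1.0:
        raise ValueError("bit error rate must be in [0, 1]")
    bits = 8 * packet_bytes
    return 1.0 - (1.0 - bit_error_rate) ** bits


# Hypothetical example: a 127-byte frame on a link with a 1e-4 bit error rate.
p = packet_loss_probability(1e-4, 127)
```

Under these assumptions the loss probability comes out just under 10 percent with no congestion at all, and neither TCP nor CoAP can tell from the loss itself which cause was responsible.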

C

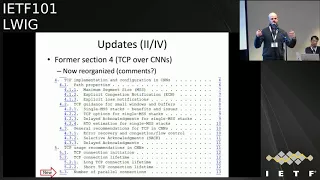

Okay, then we have a quite major update in former Section 4, which was entitled "TCP over constrained-node networks". The update is mostly editorial and relates to how we organize the content. Basically, the content is now split into two sections. The first one is now entitled "TCP implementation and configuration in constrained-node networks", while Section 5 is entitled "TCP usage recommendations in constrained-node networks". The last section deals with how an application will use or handle connections in constrained-node network environments, in contrast to the previous section.

C

By the way, the only actual update in terms of content here is the small subsection 5.3, entitled "Number of parallel connections". Basically we just say that a device that has limited resources needs to keep a low number of parallel connections, since each connection will consume some resources.

C

Then we have some other updates in the annex of the document, which is the part where we collect details on TCP implementations for constrained devices. The first couple of updates are in the subsection on the TCP implementation for TinyOS. We have now added that this implementation provides a subset of the socket interface; there was a comment by Rahul in Singapore on this. We also found that this implementation appears to support multiple TCP connections in parallel. Then we've also added details for two additional TCP implementations for constrained devices.

C

This is basically after some comments by Hannes in Singapore: we've added the implementations for FreeRTOS and for uC/OS. These two are real-time operating systems for embedded devices, which are supported by microprocessors of up to 32 bits. So now we are covering implementations for somewhat more powerful platforms than the ones we covered in the previous version of the document, and both are basically based on a window size of multiple MSS.

C

So, first of all, two rows have been deleted from the table. Those were the rows for TFO and ECN, because the entry was actually "No" everywhere, so now the same information is provided as one sentence in the table caption. On the other hand, we have added two rows: one for whether there is support for the socket interface, and another one on whether it's possible to have a number of concurrent connections.

C

Those were all the updates, or the main updates, in this last revision, and we have identified a number of potential additions for -03. For example, we plan to add some discussion on the support, or lack of support, of ECN in the Internet and also specifically for IoT scenarios. Then, with regard to the security considerations section, we'd like to possibly expand it, and one thing we would be interested to know is whether there are any known code vulnerabilities due to implementing TCP in a constrained way.

C

So that's a question that we would also like to ask the working group right now, and we also plan to maybe post an email on the mailing list about this. Then, in the annex, we also plan to maybe separate the TCP implementations that appear to have been updated and maintained recently from those that have not been updated or maintained for some time. And again, it would be great to try to complete the summary table.

D

I had a specific question about the appendix; I think it's Section 8, right? One other thing that worries me is that it's highly dynamic content, and I had a thought that I want to run by the working group to see what they think. Usually, this kind of stuff is tracked in something called an implementation status section that's removed before publication as an RFC. It's RFC 7942, or something like that, that describes it.

E

I understand that it's very difficult to get the data about data size and code size, but since there are lots of people in the room who always focus on code size, RAM utilization, and over-the-wire transmission size, I think it would be a good exercise to actually get some real data. If you get the data from some papers, I expect that this will be tricky, because they will probably measure it differently and include different values, so you will have a not very representative figure in the end.

E

I understand that, as authors of the document, you're probably not super thrilled about the idea that you have to get all these things running and then do a comparison. For the code size, that may not be the biggest issue, but it would still take you some time; measuring the RAM size, though, will be tricky. I'm sure you have noticed that.

A

Yeah, I think I agree with what Hannes said. It makes sense to have these numbers and not remove them before publication. Once it is an RFC, we just update it; we don't remove it. It's like when we keep updating the curves, right? Today it's this elliptic curve, tomorrow it's some other curve, and things will change, but it makes sense to say: at this point in time, this is what we know.

E

Actually, there are ways to measure the RAM utilization and to get some real data without being overly intrusive, but it will require a sophisticated setup. I've seen tools; in fact, I wanted to use a tool myself. Without making an advertisement (it's actually not an Arm product), it's a company, an Austrian company, that provides an analyzer, and they basically give you all sorts of data. You instrument the code and you can then retrieve the data from the board. But I haven't used it myself.

E

I want to play with it to see how easy it is; maybe that could help. Thank you. I don't know if you said that already, but maybe at the beginning of this section you just want to add a disclaimer that this was the work done at the time of writing of this document, and that it may be incorrect at the time when people read it later.

E

I don't expect these implementations to change on a daily basis. Maybe in a few years things could be revisited, like you guys do, or like Carsten does, at least trying to redefine the classes and be more descriptive after a couple of years of experience. I could see that following similar lines, as we do for crypto as well.

G

Regarding the question of implementations getting changed: one point is that I think these are all design aspects, so the implementations might change at a different level, but these things will remain the same. For example, I don't see why uIP or RIOT would support slow start or any congestion control algorithm in the future.

D

So I'm not sure I agree, right? The thing is, a lot of these "No"s could change into a "Yes" at some point, so that's the part I'm concerned about: that we are giving misleading information. The code numbers probably won't change by much, and the "Yes"es are probably not going to become "No"s at some point, but there's some stuff where we say "No" and at some point people will implement it if something becomes important, like delayed ACKs, for example.

D

Okay, so this is the kind of information you try to push into a working group wiki rather than an RFC. A lot of the time we ask: is this going to be valid in five years? That's the kind of test we do to see what goes in a wiki and what goes in an RFC, and I think something here is going to be wrong in five years. That's just the feeling; I might be wrong, you might be wrong, but that's the thinking. So just put it somewhere and say that it may not be up to date and that the current status for this is tracked wherever, and then somebody maintains it. That would be no biggie.

G

So the only update that has been done since the past revision is in the security considerations. There had been a discussion in 6TiSCH about maintaining untrusted neighbor entries for some time while the pledge joins the network, or, in the case of PANA authentication, while the PANA client joins the network. This specific security consideration has been added to the document, thanks to Malisa for citing this in the context of 6TiSCH, and today there was again some discussion.

G

RIOT does not have this implementation as of now, and other operating systems also don't have it. Contiki doesn't have it currently either. We already have an implementation, but it's a proprietary implementation. We are planning to add this support to Contiki, and hopefully to RIOT as well, as a pull request.

G

What Contiki currently doesn't have is any key management protocol. I'm talking not about Contiki-NG but about the Contiki operating system, which is the older version. So it cannot distinguish between a neighbor cache entry that is authenticated and one that is unauthenticated. There is a difference there, and it doesn't handle that currently, so there is no timer. The update that we made in the security considerations is that you have a different timer.
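One way to picture the "different timer" idea is a neighbor cache that gives unauthenticated entries a shorter lifetime than authenticated ones. This is only a sketch under stated assumptions: the class names, the promotion step, and both lifetime values are hypothetical and are not taken from the draft or from Contiki.

```python
from dataclasses import dataclass

# Hypothetical lifetimes, chosen only for illustration.
TRUSTED_LIFETIME_S = 3600.0
UNTRUSTED_LIFETIME_S = 60.0


@dataclass
class NeighborEntry:
    authenticated: bool
    expires_at: float


class NeighborCache:
    def __init__(self) -> None:
        self.entries: dict[str, NeighborEntry] = {}

    def add(self, addr: str, authenticated: bool, now: float) -> None:
        # Untrusted entries (e.g. a joining node) get the short timer.
        lifetime = TRUSTED_LIFETIME_S if authenticated else UNTRUSTED_LIFETIME_S
        self.entries[addr] = NeighborEntry(authenticated, now + lifetime)

    def promote(self, addr: str, now: float) -> None:
        # Called once the join/authentication exchange has succeeded.
        self.add(addr, True, now)

    def purge(self, now: float) -> list[str]:
        # Remove and return every entry whose timer has run out.
        expired = [a for a, e in self.entries.items() if e.expires_at <= now]
        for addr in expired:
            del self.entries[addr]
        return expired
```

A node that never completes authentication then occupies a cache slot only for the short interval, which limits how long an attacker can hold scarce neighbor entries.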

G

So what are the next steps? We plan to do this implementation in Contiki: we plan to align the Contiki implementation with the draft's proposition and do some performance tests. The performance tests would be in terms of how many times the network had to change its routing adjacencies and how the convergence time was impacted because of such an implementation.

H

Hello. So in six days this working group will be seven years old. I cannot congratulate yet, because it's six days away, but I wish this working group had been around 11 or 12 years ago, because then I wouldn't have to stand here now. So this is really a blast from the past. At the insistence of Thomas, I wrote this document. It's something that I thought had been common knowledge for more than a decade now, but apparently it hadn't.

H

It came up again when the 6lo working group built the 6lo fragmentation design team, and one of the objectives was to finally document this. So this is about memory-efficient fragment forwarding with virtual reassembly. But first let me quickly talk about the standards this is based on; from the standard's number you can see this is really about something that's more than a decade old. Then: why we are doing this, how it works, and what we're going to do about it.

H

So you know that RFC 4944 defines a link-layer fragmentation, or adaptation-layer fragmentation, as I usually call it, mechanism. We are able to take an IP packet, turn it into multiple adaptation-layer fragments, and then hand them over to 802.15.4. The fragmentation format is inspired by IPv4: we have a datagram size and a datagram tag, and for any fragment that is not the first one we also have a datagram offset, in units of eight bytes.
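The three fields just described can be sketched as the two fragment headers of RFC 4944. This is a minimal illustration assuming the field widths from RFC 4944 Section 5.3 (5-bit dispatch, 11-bit datagram size, 16-bit tag, 8-bit offset); the helper names and example values are hypothetical.

```python
import struct

# Dispatch bit patterns from RFC 4944, Section 5.3.
FRAG1_DISPATCH = 0b11000  # first fragment
FRAGN_DISPATCH = 0b11100  # subsequent fragments


def frag1_header(datagram_size: int, datagram_tag: int) -> bytes:
    """4-byte header of the first fragment: size and tag only."""
    if not 0 <= datagram_size < 2**11:
        raise ValueError("datagram_size must fit in 11 bits")
    return struct.pack("!HH", (FRAG1_DISPATCH << 11) | datagram_size, datagram_tag)


def fragn_header(datagram_size: int, datagram_tag: int, offset_bytes: int) -> bytes:
    """5-byte header of a later fragment: size, tag, and offset in 8-byte units."""
    if not 0 <= datagram_size < 2**11:
        raise ValueError("datagram_size must fit in 11 bits")
    if offset_bytes % 8 != 0:
        raise ValueError("offset must be a multiple of 8 bytes")
    return struct.pack(
        "!HHB", (FRAGN_DISPATCH << 11) | datagram_size, datagram_tag, offset_bytes // 8
    )


first = frag1_header(1280, 0x0001)
second = fragn_header(1280, 0x0001, 96)  # offset 96 bytes is encoded as 12
```

Because the offset counts 8-byte units, every fragment except the last has to carry a multiple of eight bytes of the original datagram.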

H

So basically you can think about this as a form of IP fragmentation that is undone at the place where the fragments are received, so that when the packet leaves the 6LoWPAN domain, it's still a whole packet and not a lot of IP fragments, which would be much larger. Now, how do you implement fragmentation? You do it by providing reassembly buffers: you build a reassembly buffer when you get the first fragment, and then you have something like a timeout while you are waiting for additional fragments to come in. Then either you reassemble it completely, and you can drop the reassembly buffer because you have processed it all, or you have a timeout and you drop it.

These timeouts have to be pretty long for a number of reasons; usually they are dozens of seconds, 60 seconds in some cases. So we're talking about buffers of about a kilobyte that are reserved for 60 seconds and that are living on a constrained node.

H

This is really a bit of a hard proposition. RFC 6282, which defined a refined header compression, did not change anything about the fragmentation mechanism, so we are using the same mechanism as in RFC 4944. Now, what's the problem? Well, first of all, because our links are not particularly fast and media access may take some time, the idea of reassembling everything at every hop and then fragmenting it out again increases the latency. It can essentially double the latency, or more. And there is also reliability: fragmentation is bad for reliability.

H

So basically, what we need to find is a way to actually get rid of the fragment in the forwarding node as quickly as possible, and that's known as fragment forwarding. We actually want to send fragments onwards before we have received the whole packet. That of course relieves the intermediate nodes of memory requirements, and the only one that really reassembles things is the final destination, or the LBR, for instance, when the packet is supposed to leave the 6LoWPAN domain. Okay.
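The latency argument can be approximated with a simple timing model. The sketch below is deliberately idealized: it assumes every fragment takes the same time per hop, no contention or loss, and that a node can pass one fragment on while the next one arrives, which a real half-duplex radio cannot fully do. The function names and numbers are illustrative.

```python
def per_hop_reassembly_latency(hops: int, fragments: int, t_frag: float) -> float:
    """Every hop waits for all fragments before sending any of them on."""
    return hops * fragments * t_frag


def fragment_forwarding_latency(hops: int, fragments: int, t_frag: float) -> float:
    """Fragments are pipelined: the last one leaves right behind the others."""
    return (fragments + hops - 1) * t_frag
```

For a hypothetical 10-fragment packet over 4 hops with one time unit per fragment, the first model gives 40 units and the second 13. Over two hops the ratio approaches two as the fragment count grows, which matches the "double the latency, or more" remark.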

H

We can actually emulate the function of a reassembly buffer, so we don't actually implement the reassembly buffer; we just act as if we had one, and that's called a virtual reassembly buffer. A real reassembly buffer is going to sit there and put the fragments together, and only when it's full does it start to burst out fragments again. The idea of the virtual reassembly buffer is that you don't let the data sit there: you send the fragments on as soon as you have enough information to actually do that.

H

So in a virtual reassembly buffer environment, you essentially reduce the reassembly buffer to the information that you need to identify the packet you are working on, which is the layer-2 source and the datagram tag. All the other information you just don't keep; you send the fragments out immediately when you get them. Of course, there's a little catch here: you have to have media access to send something, and while you're waiting for media access, you might be receiving packets.

H

So you cannot count on getting rid of your fragments at the same speed at which you receive them. The reliability problem and the requirement for buffer space remain, but in many cases nodes will be able to get media access at about the same speed at which they are receiving things. So the virtual reassembly buffer never actually stores actual data, and that's good, because now suddenly we might keep twenty of them or something like that. It's no longer as costly, because it's just a couple of bytes.
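A virtual reassembly buffer can be sketched as a small lookup table keyed by layer-2 source and datagram tag. The code below is an illustration under assumptions: the `route_lookup` callback, the rewriting of the tag on the outgoing link, and the class name are hypothetical details that the talk does not spell out, and timeouts and cleanup are omitted for brevity.

```python
import itertools
from typing import Callable, Optional


class VirtualReassemblyBuffers:
    def __init__(self, route_lookup: Callable[[bytes], str]) -> None:
        # route_lookup (assumed): maps the first fragment's bytes to a next hop.
        self.route_lookup = route_lookup
        # (l2_source, datagram_tag) -> (next_hop, outgoing_tag); no payload is stored.
        self.table: dict[tuple[str, int], tuple[str, int]] = {}
        self._tags = itertools.count(1)

    def forward(
        self, l2_source: str, datagram_tag: int, is_first: bool, payload: bytes
    ) -> Optional[tuple[str, int, bytes]]:
        key = (l2_source, datagram_tag)
        if is_first:
            # Only the first fragment carries the headers needed for routing.
            next_hop = self.route_lookup(payload)
            self.table[key] = (next_hop, next(self._tags) & 0xFFFF)
        elif key not in self.table:
            return None  # no state for this datagram: the fragment is dropped
        next_hop, out_tag = self.table[key]
        # The fragment is handed on immediately instead of being buffered.
        return next_hop, out_tag, payload
```

Each entry holds a few bytes of state rather than a kilobyte of packet data, which is why a node can afford to keep many of them at once.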

H

This figure, by the way, is from Thomas, who wrote another draft documenting virtual reassembly buffers. As I said, it's not always trivial to do this. For instance, you might have a situation in which the first fragment does not contain enough information to forward the packet. If you have a very long source route in your packet, you might have to wait for the first two fragments.

H

So what do you do in that case? You would actually send three fragments, 128, 1, and 50, and that doesn't make a lot of sense. So there's a well-known trick: the original fragmenter doesn't send 128 and 50, it sends 50 and 128. So you have all the slack in the first fragment, and this means that if you have to go from 50 to 51 or 55, that's not a problem. Security considerations: this is pretty much the same.
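The fragment-ordering trick with the 128 and 50 figures can be sketched as follows. The sketch assumes a link that carries at most 128 bytes per fragment and treats the sizes as on-the-wire fragment sizes; it ignores the 8-byte offset alignment of the uncompressed datagram, and the function names are hypothetical.

```python
def fragment_sizes(total: int, capacity: int, slack_first: bool) -> list[int]:
    """Split `total` bytes into fragments of at most `capacity` bytes."""
    full, rest = divmod(total, capacity)
    sizes = [capacity] * full
    if rest:
        # The short fragment goes first (slack up front) or last (naive split).
        sizes = [rest] + sizes if slack_first else sizes + [rest]
    return sizes


def first_fragment_slack(sizes: list[int], capacity: int) -> int:
    """Bytes by which the first fragment may grow before it overflows."""
    return capacity - sizes[0]


naive = fragment_sizes(178, 128, slack_first=False)   # [128, 50]
tricked = fragment_sizes(178, 128, slack_first=True)  # [50, 128]
```

With the naive order the first fragment has no room to grow, so one extra byte at a forwarding hop forces a third fragment; with the short fragment first there are 78 bytes of headroom.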

H

It doesn't really change very much: getting a node to exhaust its reassembly buffers is just as easy, but it's at least not as severe, because we can have many more of these virtual reassembly buffers. And finally, we are not solving all the problems. This is just an implementation trick; it's not a new protocol, so it cannot help us with the packet drop probability. It doesn't provide fragment recovery. It doesn't provide things like per-fragment routing, which you may want to have.

H

You cannot do things like sending fragments multiple times; well, you can do it, but it doesn't really help as much as if you have a real, special protocol for fragment recovery. So this is a completely different item, it is out of scope for this group, and it is being looked at by 6lo. 6lo has a fragmentation design team that was charged to produce two documents: one giving an overview of what we have, and originally the idea was to have much of the information about the virtual reassembly buffer implementation technique in that document.

H

But, more importantly, this is also supposed to discuss it, analyze it, and tell us how far that takes us, so it's supposed to have some numbers in it, an actual summary of research. So that's one thing that the design team wants to do, and the other thing is to get a standards-track document out with a new protocol that adds fragment recovery.

H

Okay. So how do we do this? As I said, Thomas wrote another document that also documented virtual reassembly buffers and a few other things, and that might become this document here, with a small section about the VRB itself. It also has a section discussing it: what are the limitations, and so on. We would add numbers there, and that may continue to be a good topic for 6lo, because it's the forward-looking part, while this is the backward-looking part, documenting things that happened more than a decade ago.

H

So yeah, this description was first published as a draft; a week later, Thomas wrote another description, and after some back-and-forth and discussion we came up with the idea to keep a document about the implementation technique in LWIG and put the analysis, including some analysis of how new protocol proposals can avoid the limitations of the implementation technique, into a 6lo document, which will then be accompanied by one fragment recovery technique that has been chosen by 6lo. So that's where we want to go. Questions?

K

No, so what Carsten said is exactly the plan, and I think that works. So the pure implementation details, the VRB, go in LWIG; 6lo discusses that and the limits. We have someone working on simulation results where we show how the thing diverges and how you can actually get a hundred percent reliability, stuff like this. And then the second 6lo draft is about a new protocol. So it makes perfect sense.

F

We corrected some errors that were in the document, such as the legacy version number, which was 0x0301; the correct number is 0x0303. That doesn't change the overhead, but it was a mistake, so that's fixed. We added the encrypted content type for both TLS 1.3 and DTLS 1.3. We removed the nonce from TLS 1.3, and we added the consideration of GHC, which increases the overhead for TLS 1.3 and DTLS 1.3. So you can see all these changes.

F

So this is the main body's table of contents. We now have a section for DTLS 1.2, with and without GHC and connection ID and a combination of those; the same for DTLS 1.3, including the short header format; then TLS 1.2 and TLS 1.3 version 27, with and without GHC again; and then OSCORE version 11, which is the last one.

F

This is the new table that we added. This is just for the overhead without the MAC. The table before is a function of how long the sequence number is (okay, I'm going to finish), and it includes the MAC. So this is without the MAC, for all the protocols, with and without GHC again. Green is for a lower number than before, and this is because we updated according to updates in the documents and fixed a few mistakes.
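The "with MAC" and "without MAC" views of per-record overhead can be reproduced by summing field sizes. The sketch below uses the TLS 1.2 and DTLS 1.2 record layouts and assumes an AES-CCM cipher suite with an 8-byte explicit nonce and an 8-byte tag (as in the CCM_8 suites of RFC 6655); the numbers are not copied from the table on the slides.

```python
# Record header fields and their sizes in bytes.
DTLS12_RECORD_HEADER = {
    "content_type": 1, "version": 2, "epoch": 2, "sequence_number": 6, "length": 2,
}
TLS12_RECORD_HEADER = {"content_type": 1, "version": 2, "length": 2}

EXPLICIT_NONCE = 8  # assumed: AES-CCM explicit nonce per record
MAC_TAG = 8         # assumed: truncated 8-byte tag (CCM_8)


def record_overhead(header_fields: dict[str, int], include_mac: bool = True) -> int:
    """Bytes added to each application payload by the record layer."""
    total = sum(header_fields.values()) + EXPLICIT_NONCE
    return total + MAC_TAG if include_mac else total


dtls12_with_mac = record_overhead(DTLS12_RECORD_HEADER)        # 29
dtls12_without_mac = record_overhead(DTLS12_RECORD_HEADER, False)  # 21
tls12_with_mac = record_overhead(TLS12_RECORD_HEADER)          # 21
```

Separating the tag from the rest makes it easier to compare protocols when the tag length is a deployment choice rather than a property of the protocol.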

N

There are some simple suggestions, and those are on these slides. One remark: the draft is about 12 pages, of which probably 11 pages are appendix, and only one page is just a description, but still I managed to make 15 slides. Most of these slides are just things to glance over, just to get an appreciation of some problems and, hopefully, a solution direction. Next slide, please.

N

"Can you hear me?" "Yes, go ahead." Okay, sorry. So currently in the IETF we have quite some interest in implementing ECC on all kinds of devices: it's in TLS 1.3 and in a lot of IoT-style devices. I will discuss the kinds of algorithms that have evolved over time and what to do if we want to cram as much onto a device as possible. So I will go over the NIST curves, which have been around for at least a decade, I think, and some more recent curves specified through the CFRG process, both for key agreement and for signature schemes.

Then I will zoom in a little bit. I have a few slides with mathematical detail, but you don't have to learn it by heart. Then I will compare some implementation pitfalls, and then I will suggest an approach for how to actually still cram as much onto one device as possible.

N

Just: how can we evolve from here, and can we potentially make our standardization process somewhat easier? My kind of hidden claim is that lots of the documents that have been produced in crypto land in the IETF over the last few years could be moot documents if they had the document that I submitted in November. And then I will conclude with some final remarks. Next slide, please.

N

Okay, so these are the NIST curves. There's lots of detail on the slides, but there is an overview a few slides down the road. These are widely implemented; there are lots of hardware implementations for this, it's all over the place. The important thing to notice is that they use a particular shape of elliptic curve. This is the so-called Weierstrass model, and you see this equation.

N

The curve equation is taken mod p, with the x and y parameters in there, and this particular shape of the equation triggers all kinds of other design decisions, including how you do the so-called group operations on this curve, how you represent points, and so on. Lots of standardization bodies have made some choices for that. What I just want to highlight is that the points are always transmitted in most-significant-bit and most-significant-octet order, and that the objects that are on this curve are always in so-called non-compressed form.
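Both points, the shape of the curve equation and the uncompressed big-endian encoding, can be illustrated with a short sketch. It uses the short Weierstrass form y^2 = x^3 + ax + b (mod p) on a deliberately tiny toy curve rather than real NIST parameters, and it assumes the common 0x04 || X || Y layout for uncompressed points; the function names are hypothetical.

```python
def on_weierstrass_curve(x: int, y: int, a: int, b: int, p: int) -> bool:
    """Check y^2 = x^3 + a*x + b (mod p)."""
    return (y * y - (x * x * x + a * x + b)) % p == 0


def encode_uncompressed(x: int, y: int, field_bytes: int) -> bytes:
    """0x04 prefix, then X and Y as fixed-width big-endian integers (assumed layout)."""
    return b"\x04" + x.to_bytes(field_bytes, "big") + y.to_bytes(field_bytes, "big")


# Toy curve for illustration only: y^2 = x^3 + 2x + 3 over GF(97).
A, B, P = 2, 3, 97
assert on_weierstrass_curve(3, 6, A, B, P)

# With a 256-bit field, an uncompressed point takes 1 + 32 + 32 bytes.
encoded = encode_uncompressed(3, 6, 32)
```

Carrying both coordinates costs bandwidth, but the receiver can use the point directly without first recomputing the missing coordinate.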

N

So

it

means

you

have

all

any

information

that

you

need

to

run.

A

calculation

is

not

something

that

you

have

to

mentally

reconstruct

I.

Then

we

have

ECB's

a

it's

all

over

the

place

as

well.

It's

a

signature

scheme

there.

We

we

use

the

same

elliptic

curve

as,

for

example,

expect

we

specified

the

NIST.

We

use

a

hash

function

and

then

we

pop

out

a

signature

of

in

this

case,

512

bits

again

in

a

particular

format.

So

this

is

just

basically

legacy.

These

curves

have

been

around

since

probably

around

2000.

N

So

one

important

remark

there

is

that

some

of

these

speed-ups

actually

may

work

less

well

in

an

IoT

setting.

So,

for

example,

on

an

ATmega328

device,

which

is

an

8-bit

device,

most

multiplication

is

done

on

an

8-bit

level

and

all

the

papers

that

are

written

in

academia

about

speed

records

are

all

about

32-bit

or

64-bit

architectures,

which

is

nice

for

TLS,

but

maybe

not

nice

for

every

IoT

application.

N

So

one

thing

to

note

here

would

be

that

the

calculations

are

done

on

only

one

of

the

X&Y

coordinates

of

the

points

there,

so

you

basically

throw

away

information,

so

you

don't

really

reconstruct

the

entire

thing

that

you

would

otherwise

need

in

the

Weierstrass

form

in

the

NIST

curve

case,

but

it

still

is

enough

for

lots

of

key

agreement

protocols.

Next

slide,

please.

N

Can

we

go

to

the

next

slide?

Okay,

so

I

just

walked

over

these

three

different

curve

models.

So

the

NIST

curve

is

the

Weierstrass

model,

Curve25519

is

a

Montgomery

curve,

and

then

the

Edwards

curve.

They

all

have

different

underlying

formulas

on

how

to

do

group

operations,

they

have

different

representations

of

how

the

points

are

represented

internally

and

also

how

they

are

sent

over

the

wire

and

they

even

have

different

bit

and

byte

orderings.

So,

for

example,

if

you

look

at

the

left

column,

that

is

the

NIST

P-256.
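
For reference, the three curve models being compared can be written side by side. This is a sketch using the conventional textbook symbols ($a$, $b$, $A$, $B$, $d$), which are assumptions here and do not come from the slides; all equations are taken modulo the field prime $p$.

```latex
\begin{align*}
\text{short Weierstrass (NIST curves):} \quad & y^2 = x^3 + a x + b \\
\text{Montgomery (Curve25519):}         \quad & B v^2 = u^3 + A u^2 + u \\
\text{twisted Edwards:}                 \quad & a x^2 + y^2 = 1 + d x^2 y^2
\end{align*}
```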

N

N

So

what

are

we

going

to

do

now

if

you

want

to

implement

or

have

to

implement,

for

example,

NIST

curves

and

NIST

signature

schemes,

and

we

also

want

to

use

the

CFRG

stuff

for

almost

mobile

guys.

So

there

seems

to

be

quite

a

pain

right

because

even

the

formatting

is

different,

like

the

bit

or

octet

ordering;

the

underlying

formulas

are

different.

Arithmetic

is

different,

so

it

seems

like

oh,

we

need

to

have

triple

the

implementation

size

or

the

sum

of

the

implementation

sizes

of

the

individual

components.

N

N

Can

we

go

back

down?

I

didn't

see

it

on

my

screen.

Okay,

so

I,

just

recopied

the

comparison

of

all

these

curves

and

for

now

I

want

to

ignore

the

bit

and

byte

ordering

because

it's

kind

of

a

mess,

but

it's

only

a

slim

layer

to

convert

one

to

the

other.

So

let's

focus

on

the

elliptic

curve

cryptography.

So,

to

make

the

exposition

a

little

clearer,

I

assume

that

all

the

information

is

there.

We

don't

do

anything

compressed.

N

N

Okay!

So

you

see

that

I

have

a

blue

item

there,

where

it

used

to

say

NIST,

P-256,

and

what

is

that?

So

we

have

this

Curve25519

and

this

Edwards

curve.

If

you

look

at

all

these

facts,

you

would

think

that

all

these

curves

are

completely

different

mathematical

animals,

but

they're

not;

they're

just

different

ways

of

representing

the

same

mathematical

objects,

and

it

turns

out

that

with

all

these

three

curve

models,

once

you

actually

have

something

like

Curve25519,

N

You

can

easily

convert

the

points

to

something

on

a

different

curve

shape,

like

the

Edwards

curve

or

the

curve

that

I

specified

in

my

draft,

which

is

something

in

the

traditional

Weierstrass

format,

and

just

for

the

record,

every

curve

can

always

be

represented

in

Weierstrass

form,

but

it's

not

always

representable

in

Montgomery

or

Edwards

form.

So

you

see

this

little

diagram

on

the

bottom.

Essentially,

we

have

a

way

to

move

between

different

representations

of

the

same

objects.

N

So

these

seemingly

different

things

are

just

different

ways

of

representing

the

same

mathematical

objects

and

these

mappings

between,

for

example,

Curve25519

and

this

new

thing

that

I

introduced

in

the

spec,

in

the

draft,

are

very

easy

to

implement.

So

let's

look

at

it

on

the

next

slide.

N

So

here

I

just

first

focus

on

the

bottom.

So

we

have

a

Curve25519

point,

which

people

are

using

in

IoT

applications.

It

has

two

coordinates:

U

and

V.

Actually,

x

and

y.

If

you

look

at

the

red

arrow

going

to

the

left.

How

do

you

move

toward

something

that

looks

like

the

NIST

N

Weierstrass

format?

You

just

add

some

number

to

the

x

coordinate.

That's

it.

Same

thing:

If

you

want

to

move

the

other

way

around,

you

just

subtract

the

number.

That's

it.

So

the

computational

workload

of

moving

from

one

to

the

other

direction

is

0.1

percent

at

most,

maybe

even

far

less.

Something

similar

happens

if

you

move

between

this

Edwards

curve

and

Curve25519.
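
The "add some number to the x coordinate" step can be sketched in a few lines. This is an illustrative sketch, not the draft's reference code: it assumes the standard Curve25519 constants (prime 2^255 - 19, Montgomery coefficient 486662, base point u = 9), takes the fixed number to be A/3 mod p, and uses hypothetical helper names throughout. The v coordinate of the base point is recomputed rather than hard-coded.

```python
# Sketch: move a Curve25519 point (Montgomery form) to short Weierstrass
# form and back by adding or subtracting one fixed constant.
P = 2**255 - 19      # field prime shared by all three representations
A = 486662           # Montgomery coefficient of Curve25519 (B = 1)

INV3 = pow(3, P - 2, P)          # 1/3 mod p (Fermat inversion)
DELTA = (A * INV3) % P           # the "fixed number": A/3 mod p

# Coefficients of the isomorphic curve y^2 = x^3 + a*x + b (assumed names)
WEI_A = ((3 - A * A) * INV3) % P
WEI_B = ((2 * A**3 - 9 * A) * pow(27, P - 2, P)) % P


def sqrt_mod_p(n):
    """Square root mod p for p = 5 (mod 8); None if n is not a square."""
    r = pow(n, (P + 3) // 8, P)
    if (r * r - n) % P == 0:
        return r
    r = (r * pow(2, (P - 1) // 4, P)) % P   # multiply by sqrt(-1)
    return r if (r * r - n) % P == 0 else None


def montgomery_to_weierstrass(u, v):
    # One modular addition; the second coordinate is unchanged.
    return ((u + DELTA) % P, v)


def weierstrass_to_montgomery(x, y):
    # One modular subtraction undoes the mapping.
    return ((x - DELTA) % P, y)


def on_montgomery(u, v):
    return (v * v - (u**3 + A * u * u + u)) % P == 0


def on_weierstrass(x, y):
    return (y * y - (x**3 + WEI_A * x + WEI_B)) % P == 0


# Base point with u = 9, mapped to the Weierstrass representation.
U0 = 9
V0 = sqrt_mod_p((U0**3 + A * U0 * U0 + U0) % P)
X0, Y0 = montgomery_to_weierstrass(U0, V0)
```

Each direction costs a single modular addition or subtraction, which is consistent with the remark that the conversion is a negligible fraction of the total work.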

N

So

that

means,

for

example,

if

you

can

do

this

very

efficiently,

you

can

have

a

single

implementation

for

the

signature

scheme

that

came

out

of

CFRG

and

this

X25519

implementation,

as

long

as

you

convert

it

to

the

other

format

and

then

at

the

end

of

the

computation

just

convert

it

back

and

the

reason

why

this

works

is

that

it's

a

so-called

isomorphism,

which

means

that

you

maintain

the

same

mathematical

structure.

So

now

we

have

essentially

three

representations

of

the

same

mathematical

objects,

one

of

them

is

Curve25519,

the

Edwards

curve

N

That's

in

the

EdDSA

scheme

and

the

thing

I

specified

in

the

draft

and

they

all

have

the

same

parameters

and

the

same

prime

number.

So

you

can

just

borrow

all

the

arithmetic

for

it

and

same

group

size.

The

only

thing

is

the

representation:

the

x,

y

coordinates

are

morphed

a

little

bit.

So,

for

example,

in

Curve25519

you

have

an

x

coordinate;

nine

is

the

so-called

base

point,

but

it's

nine

plus

the

fixed

number.

N

That

is

a

little

bit

long

to

write

on

the

slide,

but

it's

nevertheless

simply

an

addition,

and

that

transforms

it

to

the

other

format.

So

the

important

lesson

from

this

slide

and

the

previous

few

slides

is

that

we

have

all

these

seemingly

different

objects

that

essentially

are

the

same,

except

they

are

representing

their

coordinates

slightly

differently.

We

want

to

exploit

that

to

essentially

stick

it

into

an

existing

implementation,

for

example,

for

the

NIST

curves.

Next

slide,

please.

So

now,

I

have

condensed

all

these

different

new,

improved

curves

to

just

this

one

N

Weierstrass

format.

I

mean,

you

now

see

that

if

we

compare

this

with

the

NIST

curves

that

have

been

around

forever,

and

there

are

probably

tons

of

hardware

implementations

for

that

already

available,

we

have

the

same

curve.

Normally,

if

the

same

base

point

now,

we

have

the

same

internal

representation,

the

same

formulas,

and

the

same

wire

format,

the

same

bit

and

byte

ordering.

So

that

means

that,

suppose

you

are

kind

of

late

in

the

game

and

only

want

to

start

implementing

the

Montgomery

curve

or

the

Edwards

curve.

N

Now

maybe

you

can

just

transform

to

the

Weierstrass

format,

dust

off

an

old

hardware

implementation

for

the

NIST

curves

and

you're

done.

The

only

thing

you

need

to

do

is

that

you

represent

it

in

a

different

way,

and

you

use

other

so-called

domain

parameters.

So

the

NIST

curve

uses

another

prime

number

than

the

other

curves

and

a

few

other

parameters,

but

these

are

constant

and

they

are

easily

loaded

into

memory.

Okay,

next

slide,

please.

N

So

I

cheated

a

little

bit

because

we

also

actually

had

all

these

encoding

problems,

because

the

bit

and

byte

ordering

in

all

these

different

models

has

been

picked

kind

of

badly

because

they're

all

like

incompatible.

So

if

you

really

want

to

implement

all

these

different

models

in

the

same

way,

we

need

to

at

least

solve

this

encoding

mess.

So

if

the

octet

ordering

was

least

significant,

byte

first,

instead

of

most

significant

byte

first,

we

have

to

have

a

little

translation

layer

there.
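
The "little translation layer" for octet ordering can be sketched as below. This is a minimal illustration that assumes fixed-length field elements (32 octets for a 255-bit prime) and only handles octet order; the function names are hypothetical and not taken from any draft.

```python
def le_to_be(octets: bytes) -> bytes:
    """Re-encode a fixed-length little-endian integer as big-endian."""
    return int.from_bytes(octets, "little").to_bytes(len(octets), "big")


def be_to_le(octets: bytes) -> bytes:
    """Re-encode a fixed-length big-endian integer as little-endian."""
    return int.from_bytes(octets, "big").to_bytes(len(octets), "little")


# The value 9 as a 32-octet little-endian string (least significant byte first).
NINE_LE = (9).to_bytes(32, "little")
NINE_BE = le_to_be(NINE_LE)
```

For fixed-length strings this amounts to reversing the octets, so the layer adds no arithmetic cost of its own.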

N

So

what

is

the

efficiency

of

this

whole

exercise?

So

one

advantage,

of

course,

is:

oh,

we

only

need

one

implementation

instead

of

all

these

flavors,

except

for

a

little

bit

and

byte

ordering

mess.

The

conversion

is

really

low

cost,

as

I

said

in

the

first

bullet.

It's

like

really

negligible,

and

there's

one

caveat,

though,

is

that

if

you

look,

for

example,

TLS

I

saw

today

that

TLS

1.3

was

just

approved,

and

TLS

1.3

uses

this

Curve25519

and

for

reasons

that

I

really

don't

understand.

N

They

only

format

the

x

coordinate

of

the

point,

instead

of

both

coordinates.

This

handicaps

my

mapping

between

these

curve

formats,

because

now

the

y

coordinate

has

to

be

computed.

So

if

TLS

1.3

had

solved

a

little

bit

more

of

the

representation

issues,

then

this

0.1

percent

would

be

the

final

cost,

but

because

they

didn't

do

that

now

we

have

a

very

expensive

calculation.

N

We

have

to

add

to

transfer

to

this

Weierstrass

format,

which

adds

roughly

one

seventh

of

the

total

scalar

multiplication

time

and

then