From YouTube: IETF101-CFRG-20180319-1550

Description

CFRG meeting session at IETF101

2018/03/19 1550

https://datatracker.ietf.org/meeting/101/proceedings/

C

C

D

All right, thank you. My name is Karthik, Karthikeyan Bhargavan, and I'm going to be talking about a new initiative called hacspec, which stands for high-assurance cryptographic specifications, for which I need your help. So I'm going to describe a little bit of this. This is an initiative that came out of a group of researchers who met on the sidelines of Real World Crypto this year. This is a problem that we identified, and we have a proposed solution that we want your help with.

D

So most of us will agree that implementing crypto software, by which I mean implementations of crypto primitives and crypto constructions, correctly is hard. OK. So if you go look at the last two or three years of OpenSSL CVEs, you'll see about half of them are memory safety bugs like buffer overflows, about a quarter are side-channel bugs like branching on secrets, and about a quarter are functional correctness bugs like carry propagation bugs. The thing about these kinds of bugs is that they're low probability, which means that if you test and test and test the code,

D

it's still unlikely that you will hit the case where this bug is triggered. But an attacker who knows that the bug exists might be able to drive the implementation towards the bug. So testing doesn't work. What works better is formal verification, but formal verification requires a lot of effort and expertise. So the group came up with this idea that maybe we can try to make the effort required for verifying modern crypto primitives lower.

D

The good news is that a whole bunch of research groups are right now able to use existing research tools to verify fairly sophisticated cryptographic implementations. So there are a bunch of tools that will verify C implementations of your primitives, including all the ones that you can imagine. There are also a few tools that will verify optimized assembly implementations, and these tools have now reached a level of maturity that they're being included in production software: BoringSSL, NSS, s2n and so on are actually using formal verification techniques.

D

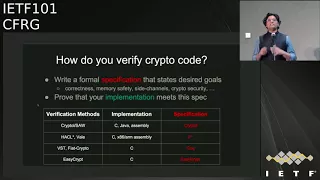

D

It could be functional correctness, memory safety, some specific kinds of side-channel resistance that you need, even your cryptographic security goals. And then you write your implementation and prove that this implementation meets the spec that you set out first. OK, so both of these steps actually require work. If you go look at the various tools that are available right now, most of us agree on what the implementation language should be.

D

It's usually C or assembly. But there is a wide variety of specification languages. Some people write specifications in general-purpose logical languages like Coq and F*. Other people write them in domain-specific languages like EasyCrypt and Cryptol. And the reason we write in these languages is that these specification languages are particularly geared towards the specific verification method that these tools are using.

D

However, what it means is that the first step any of us has to do when verifying code is to take the beautiful pseudocode or text written in your RFCs and encode it in the specific specification language that you have. This step is quite error-prone and quite painful, but it is needed, because this is what we need in order to get the verification tool to work. On the other hand, the negative is that the resulting spec actually looks quite different from the RFC, so it's difficult to understand what exactly we are proving, right?

D

So this is the kind of problem that came up, and a whole bunch of us met with crypto developers in a series of workshops called HACS, which is held on the side of Real World Crypto. The developers essentially said: well, you guys have lots and lots of proofs, but we don't understand what you're proving, OK, because the proofs rely on a specification written in some obscure language that we don't understand and don't have the time to learn.

D

So this is where the idea for hacspec came from: the idea that we need a language with a well-understood syntax and semantics that developers, crypto designers and people who are writing specs, like the IETF, the CFRG and so on, can understand, read and write, and that can also be used as a basis for formal verification.

D

So the design goals for hacspec, which is a new high-assurance crypto specification language, are the following. We want the specs that we write to be really succinct and readable, so that the people in this room can read and write these specs, and so that they can be integrated as pseudocode into RFCs. We want the specs to be executable, so they can actually be treated as reference implementations that pass test vectors and so on. And we want them to have a clean syntax and compact formal semantics,

D

so we all can understand them and they can be used as a basis for formal verification by a variety of tools. OK, so that's the goal that we're going for. We have a first design. It's a very preliminary design, and we're looking for feedback on it. We went around and looked at various RFCs that have been standardized recently, and we found that most symmetric crypto RFCs seem to be using pseudocode plus C reference implementations, but anything that uses field arithmetic seems to be using Python reference implementations.

D

So we said: OK, maybe Python is a language to start with. It seems to be used by quite a few developers and designers for prototyping and testing. So we picked a subset of Python 3.6 extended with type annotations. What is the subset? Well, it's a really, really minimal subset. We have machine integers, we have big numbers, we have arrays, and not much else. OK, we might add things as we need them, but we really want to keep this minimal, because we want this to be a really compact and minimal domain-specific language.

D

The types are useful in two ways. They allow us to catch very simple and silly errors like writing into an array out of bounds, or forgetting to add one or subtract one, and so on. But they also help us to do very precise translations into various other formal languages, which are usually typed. In particular, we have compilers right now to F*, which is already working, to EasyCrypt, which is on the way, and other compilers that we are developing for languages like Cryptol.

D

So if you write one spec in hacspec, which looks like Python with a few more type annotations, you will be able to get, for free, formal specifications in all of these different languages. We are also building a library of common constructions and specifications. We have written quite a few examples, but we need help to write more, because we want to exercise this language quite a bit more. What does it look like? Well, here's an example.

D

This is the arithmetic of Poly1305. It's a very small fragment of the spec. As you can expect, it's Python; there's nothing special happening here. There's a prime, and there's addition, subtraction and multiplication on this field, which is modulo the prime. But the interesting thing there is that we're defining a type called felem, which is a refinement type. It says this is the type of elements between zero and prime minus one.

D

This means that everywhere in the spec where we use the type felem, we will be implicitly asking the verification tool to prove that, at this point, the value we are dealing with is in the field, that is, between zero and p minus one. And we can put many other such constraints.
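The slide itself is not captured in this transcript. As a rough illustration only, the refinement-type idea can be sketched in plain Python as follows. The names `Felem`, `fadd` and `fmul` are hypothetical and are not taken from the hacspec library, and the range check here runs at runtime, whereas a verification tool would discharge it statically.

```python
P130_5 = 2**130 - 5  # the Poly1305 prime


class Felem(int):
    """An integer refined to the range 0 <= x < P130_5.

    Hypothetical stand-in for a refinement type: the constraint is
    checked every time a value of this type is constructed.
    """

    def __new__(cls, value: int) -> "Felem":
        if not 0 <= value < P130_5:
            raise ValueError("value is not a field element")
        return super().__new__(cls, value)


def fadd(a: Felem, b: Felem) -> Felem:
    # Addition in the field, reduced modulo the prime.
    return Felem((a + b) % P130_5)


def fmul(a: Felem, b: Felem) -> Felem:
    # Multiplication in the field, reduced modulo the prime.
    return Felem((a * b) % P130_5)
```

Forgetting the modular reduction in `fadd` or `fmul` would make the `Felem` constructor fail, which is the kind of simple error the type annotation is meant to surface.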

Here's a fragment of the ChaCha20 spec that we wrote. Again, it looks just like Python. It's nothing very surprising, except it has a few more type annotations.

D

In particular, we are asking that all the array indexes that you use are between 0 and 15, which means that you will never accidentally access the state array outside these bounds. OK, again, this is just a sanity check, but it's useful to put this in, and we need them for our formal models later on anyway.
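Since the ChaCha20 slide is not reproduced here, the following is a minimal sketch of a quarter round with bounded indices, written in plain Python rather than hacspec. The helper `check_index` is a hypothetical runtime stand-in for the index type described above; the quarter-round arithmetic follows the ChaCha20 definition in RFC 7539.

```python
from typing import List

MASK32 = 0xFFFFFFFF


def rotl32(x: int, n: int) -> int:
    # Rotate a 32-bit word left by n bits.
    return ((x << n) | (x >> (32 - n))) & MASK32


def check_index(i: int) -> int:
    # Hypothetical runtime version of an index type restricted to 0..15.
    if not 0 <= i <= 15:
        raise IndexError("state index must be between 0 and 15")
    return i


def quarter_round(a: int, b: int, c: int, d: int, state: List[int]) -> List[int]:
    a, b, c, d = (check_index(i) for i in (a, b, c, d))
    s = list(state)  # work on a copy so the input state is not mutated
    s[a] = (s[a] + s[b]) & MASK32
    s[d] = rotl32(s[d] ^ s[a], 16)
    s[c] = (s[c] + s[d]) & MASK32
    s[b] = rotl32(s[b] ^ s[c], 12)
    s[a] = (s[a] + s[b]) & MASK32
    s[d] = rotl32(s[d] ^ s[a], 8)
    s[c] = (s[c] + s[d]) & MASK32
    s[b] = rotl32(s[b] ^ s[c], 7)
    return s
```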

So once you write specs in this language, here is what you get for free.

D

You get a compilation into models like this, which is a model in F* of ChaCha20, and then you'll be able to prove that C code that looks like this implements the spec that we started off with. So you just write the spec, and then there will be various tools that will be able to prove that assembly, C, Java, LLVM or whatever meets this high-level spec. And yes, we can verify code that uses highly optimized instructions, including vectorization and so on.

D

There are tools that will do that for you. So, coming to the end of my talk, why I am here is that we need some help. We want your help in promoting high-assurance implementations for the standards that are coming out of this body. In particular, if any of you is writing a new crypto primitive or has just recently standardized something, maybe you want to write your pseudocode in hacspec.

D

Maybe, in addition to your pseudocode, you want to write a reference implementation in hacspec. So come look at our specs, read them, comment on them, add more specs if you can. Give us feedback on the language: what are the kinds of features that you might need to write specs for your new crypto primitive in this language? We are happy to evolve our design depending on what people say. And help us test out our various compilers to various languages and our various specs.

D

E

Hello, I'm Daniel Kahn Gillmor. Thank you for this. This is really great and I'm pleased to see it. One of the things that maybe you don't have an example of in your slides is how to express the formal cryptographic properties that you want from the code, right? The examples that you showed made it really clear how to express numeric constraints and things like that. Can you just explain a little bit about how you use hacspec to express those things?

D

Right. So right now, different tools have different ways of writing crypto security guarantees, and we haven't really resolved how we would do that in a language like hacspec. A classic way to do that is to define two specs, one which represents the ideal functionality and one which represents the concrete functionality, and show that the ideal functionality is what we want the concrete one to achieve. The ideal functionality would not actually be executable.

D

F

D

So, for example, for our ChaCha20 spec and for any symmetric spec like that, because we have a type system, we can restrict the uses of all the machine integers in this world to be secret independent, in the sense that you cannot, for example, compare two machine integers. You can only compare array indices and so on, but you cannot compare the data, which is represented by uint8. You cannot compare it. You cannot branch on it.

D

You cannot use it as an index to an array.
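The restriction described here can be illustrated with a small Python sketch. The class `SecretU32` and its `declassify` method are hypothetical names chosen for this illustration, not the hacspec API; the checks happen at runtime, whereas the talk describes enforcing them through the type system.

```python
class SecretU32:
    """A 32-bit machine integer that supports arithmetic but refuses
    comparison, branching and use as an array index."""

    def __init__(self, value: int) -> None:
        self._v = value & 0xFFFFFFFF

    def __add__(self, other: "SecretU32") -> "SecretU32":
        return SecretU32(self._v + other._v)

    def __xor__(self, other: "SecretU32") -> "SecretU32":
        return SecretU32(self._v ^ other._v)

    def _forbidden(self, *args):
        raise TypeError("secret-dependent operation is not allowed")

    # Comparisons, truth tests, hashing and indexing would all leak
    # information about the value through control flow or memory access.
    __eq__ = __lt__ = __le__ = __gt__ = __ge__ = _forbidden
    __bool__ = __index__ = __hash__ = _forbidden

    def declassify(self) -> int:
        # Explicit escape hatch, for example to print a final result.
        return self._v
```

Relaxing the rule for something like an S-box lookup would then require an explicit `declassify` call, which keeps the exception visible in the spec.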

You can write these kinds of things, but it'll make things like AES with an S-box impossible to write in the language, so you have to relax it in some places. So you might say: OK, for the S-box I'll relax it. For the bignum arithmetic, because you're using bignums, there is no side-channel guarantee there. But once you exit that world and come down into the world where you actually have concrete bytes and concrete integers...

D

F

D

G

Phill Hallam-Baker. Yeah, I like this a lot because, you know, we've used Python in a couple of specs, and I've spent a lot of time trying to convert them into C# or C. It'd be nice if you had compilers to executable languages. If you can get C and C# and maybe Java, that basically covers all the things you need to cover, because everything else can hook into those. That's a good point. Thanks.

H

J

Alright, hello, everyone. My name is Chris Wood from Apple, here to talk about some work we're doing with Cas Cremers, Luke Garratt, Stanislav (I'm not going to try to pronounce your last name, so as not to butcher it, I apologize) and Nick from Cloudflare. This is something we talked about at secdispatch at the last IETF, and we were encouraged to come over here to present it to you. So, without further ado.

J

J

The first one being the Debian bug, where some developer tried to silence some warnings that were brought up by Valgrind and mistakenly removed a critical seeding step in the random number generation, which basically, you know, hosed everyone and made the output sort of predictable, which is not so great. And then there's the Dual EC bug, or design flaw, or whatever you want to call it, which could have been actively exploited by a TLS server.

J

Also, if you have a systemic implementation flaw or deployment flaw for some pseudo-random number generator, this technique ensures that the failures themselves are localized to each individual secret key instance. So, for example, the randomness that you might get from one particular server would be different from the randomness that you would get from a different server, even if they happen to be running the exact same RNG, Dual EC or PRNG, with the same state. So not everyone is hosed, just a small fraction.

J

However, in today's deployments private keys are not readily accessible. If you're a server, you typically store them in some HSM, for good reason, and if you're a client, you might keep them in an enclave. Depending on what APIs you are using to interface with the private keys, they might not be readily available to you. The keys themselves might be of varying types: you could have RSA or elliptic curve or whatever, meaning that the output size, or whatever is fed into this mixing step, can vary.

J

J

So you would sign some fixed tag, tag1, with your secret key (which can be in your HSM, or behind whatever API happens to manage your private key access for you) and hash the output. Then, any time you want to extract some randomness, you call the PRNG, get the randomness, append it to the hash of the signature, and extract from that a key (the KDF step). Then you expand, using the PRF, however much randomness

J

you need. We use KDF and PRF notation here, but really it's just an extract-and-expand kind of design. You could probably swap in whatever expander you want, of course subject to analysis to be sure that's safe. So, some details about the particular parameters. Tag1 is intended to be a fixed string that's used across a particular deployment of this technique. So a server that starts up and starts,

J

you know, pumping out randomness would only compute one signature with the secret key over a fixed tag, and it can just cache that value, or cache the hash of that value, and then never do anything with it ever again except append it to whatever randomness is generated. Tag2 is a dynamic string. It changes on every single invocation so that you don't have colliding randomness output. That's critically important and fairly simple to implement as well. One comment: the signature absolutely must not be exposed.
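The construction described in this part of the talk can be sketched as follows. This is one reading of the spoken description, not the text of the draft: the class name `WrappedRNG`, the choice of HMAC-SHA256 for the extract and expand steps (in the style of HKDF), the all-zero salt and the counter used as tag2 are all assumptions. The `sign` argument is any callable that returns a signature over its input; `demo_signer` below is an HMAC-based stand-in used only so the example runs without a real signing key.

```python
import hashlib
import hmac
import os
from typing import Callable


def extract(salt: bytes, ikm: bytes) -> bytes:
    # Extract step (the "KDF" in the talk), HKDF-style.
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def expand(prk: bytes, info: bytes, n: int) -> bytes:
    # Expand step (the "PRF" in the talk), HKDF-style.
    if n > 255 * 32:
        raise ValueError("requested output is too long")
    out, block, counter = b"", b"", 1
    while len(out) < n:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        out += block
        counter += 1
    return out[:n]


class WrappedRNG:
    def __init__(self, sign: Callable[[bytes], bytes], tag1: bytes,
                 rng: Callable[[int], bytes] = os.urandom) -> None:
        # One signature over the fixed tag; only its hash is cached,
        # and the signature itself is never exposed.
        self._sig_hash = hashlib.sha256(sign(tag1)).digest()
        self._rng = rng
        self._counter = 0

    def random_bytes(self, n: int) -> bytes:
        raw = self._rng(32)                           # output of the wrapped PRNG
        prk = extract(bytes(32), raw + self._sig_hash)
        tag2 = self._counter.to_bytes(8, "big")       # changes on every invocation
        self._counter += 1
        return expand(prk, tag2, n)


def demo_signer(key: bytes) -> Callable[[bytes], bytes]:
    # Stand-in for an HSM or enclave signing call; NOT a real signature scheme.
    return lambda msg: hmac.new(key, msg, hashlib.sha256).digest()
```

With a deliberately broken generator such as `lambda n: bytes(n)`, two instances holding different keys still produce different output, which is the localization property described earlier in the talk.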

J

J

There was, you know, a flurry of comments on Twitter, the first one being: why bother with this at all? RNGs are very easy to get right. I'd just point back to the previous slide. Yes, in principle they should be easy to get right. However, things do go wrong, and this gives us an extra insurance policy to protect against those particular instances.

J

The second major one is: why would you ever use your private key for something that's not its intended use? My response to that is simply that it's not an unintended use of the private key. You're still computing a signature with a signing key. It's just not used in the same way that you would use it in the protocol originally; for example, you're not signing the key share in TLS 1.3 or whatever. And I'm sure other people will come up with other criticisms.

J

I'd be very happy to hear them here or on the list, however you like. So, some open issues in the draft. Right now, the randomness wrapper as presented is not an easy drop-in replacement for something like /dev/random, where you just expand however much randomness you want. So we're looking at various other extraction alternatives to make it so that it's very easy to do so.

J

J

We have a very trivial implementation of this technique; if you're interested, go check it out. Then, just a comment that we could probably experiment with this technique in popular open-source libraries like BoringSSL and NSS, among others, that have user-space PRNG implementations that you could easily amend or wrap to make this happen. So that's it. Very simple idea. I'd love to hear any comments and criticisms you have.

G

F

K

L

J

J

J

L

That leads me to the conclusion that perhaps this leads to a signing oracle; I'm not sure. I was thinking this would be a fixed tag based on, you know, the use: I'm using this for HTTP server authentication, so I just pick a string that is constant across that particular application, and then I deal with the problem of making tag2 unique across all of the uses of the same... yeah.

J

L

M

H

The short answer is as many as you like: if you have a secure PRF and you model the hash function as a random oracle, I mean, there's really no bound. OK, but you don't have forward security, though, I mean, once X is compromised. Yes, but we just haven't specified the tightness of the bound. Thank you.

H

A couple of questions too, if I may. At the start, you used Dual EC as part of your motivation for this. I'm not sure that motivation really works, because it's known by now that a backdoored generator of that kind is actually equivalent to public key encryption, which means you can spot it in source code. If you have access to source code, it stands out a mile, because it's basically a public key encryption scheme.

H

On the other hand, if you don't have access to source code, you can't see it and you can't detect it. OK, fair point. So that might be something you want to look at in, you know, section zero of your draft or something, your motivation. Yeah. The other question I had is, if you go back to the equation for a moment: suppose we use this process to generate randomness for a signature scheme which is itself the signature scheme that's being used to generate the randomness. What can you say about security?

J

N

Hello, Stanislav Smyshlyaev, CryptoPro. One comment on Kenny's question. Yes, this is a very important question. That's why, in the new version of the draft, at least a SHOULD appears about deterministic signature algorithms, and I think we should discuss whether this SHOULD can be switched to MUST in the future.

N

A non-deterministic signature algorithm can be used only once, for this exact usage, and not for any other means. And about Martin's question on the signing oracle: of course, we should continue improving the draft with regard to the form of tag1 and tag2, the possible forms, such that no obvious attacks with a signing oracle are possible. So these two points must be taken into account while we improve our draft. Thank you very much for the questions.

N

H

J

L

H

Excellent, thank you. I think you're sort of getting to this point, but thank you, Martin, for accelerating the process. So maybe we can just take a hum in the room. Are people in favor of the research group adopting this as a research group draft, with the intention of it being a starting point? Yeah. Potentially other techniques will come forward and other people will want to get involved, and that's how it always is with an Internet-Draft in a research group. Yep.

H

H

J

H

J

Alright, so the next one is about hashing to elliptic curves. This is work that Nick and I (I did not intend to do that) put together. There has been some work, especially in relation to the VRF draft that was recently adopted, to nail down exactly how you might want to hash arbitrary strings to elliptic curves, and there are a variety of different ways to go about doing this,

J

if you just look in the literature. So we wanted to write down what we thought were reasonable mechanisms for doing so and exactly how you do them. And hopefully, depending on how the hacspec stuff goes, maybe use that as a vehicle to drive that particular work forward as well; so, Karthik, I'll sync up with you later to talk about it. So, for some background: hashing to curves is not uncommon. It's used in a wide variety of protocols.

J

PAKEs use it quite often to hash, for example, the private key or the secret password onto the curve itself. BLS signatures use it as well. As I mentioned, the VRF draft has a placeholder for the elliptic curve variant of the VRF that uses this particular technique, and the Privacy Pass work from Cloudflare and others also has this baked in at one step of the protocol.

J

So the common approach to doing this, the one that's written down in the VRF draft and, I think, the one that's implemented in Privacy Pass, is the try-and-increment approach, where you simply iterate until you have something on the curve. So you have some string, you hash it together with some counter, and check to see if the output can be decoded to a point on the curve. If so, return it; otherwise keep going indefinitely.
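A minimal sketch of try-and-increment, under stated assumptions: the curve is secp256k1 (chosen here only because its equation and square-root computation are short), the hash is SHA-256 over the input followed by a 4-byte counter, and the function name and the `max_tries` cap are hypothetical. None of these choices is taken from the VRF draft or from Privacy Pass.

```python
import hashlib
from typing import Tuple

P = 2**256 - 2**32 - 977  # secp256k1 field prime, P % 4 == 3
B = 7                     # curve equation: y^2 = x^3 + 7


def try_and_increment(alpha: bytes, max_tries: int = 256) -> Tuple[Tuple[int, int], int]:
    """Return a curve point derived from alpha and the number of attempts used."""
    for ctr in range(max_tries):
        digest = hashlib.sha256(alpha + ctr.to_bytes(4, "big")).digest()
        x = int.from_bytes(digest, "big")
        if x >= P:
            continue  # not a valid field element, try the next counter
        rhs = (pow(x, 3, P) + B) % P
        # For P % 4 == 3 this yields a square root whenever one exists.
        y = pow(rhs, (P + 1) // 4, P)
        if (y * y) % P == rhs:
            return (x, y), ctr + 1
    raise ValueError("no point found within max_tries")
```

The second return value makes the timing issue visible: the number of loop iterations depends on the input string.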

J

The problem with this particular approach is that it's obviously not constant time. The number of iterations that you do depends on the input, and so will reveal something about it. Constant time is not always necessary, but when we can make it constant time we might as well. So some of the requirements we set out for this particular draft are: number one, we want it to be constant time; number two,

J

Privacy Pass, for example, requires that the mapping not be invertible. So we want to call out (it's not yet written down in the draft) which of these algorithms are invertible and which are not, but it's not a requirement for the ones that we write down right now. So the methods that we chose, and we started with four, are Icart, SWU (I forget their names), simplified SWU, and Elligator 2, and each of them has its particular requirement on the curve itself.

J

J

So that's what we're doing. In the draft we focus on one particular interface for this function: simply a hash function, which I shortened to H2C here, which takes some input alpha, which is at least nonzero, and maps it to some point on the curve. So alpha can be arbitrary input. We use q to denote the prime order of the base field. u is a point of order 2; we need it specifically in the case of Elligator 2, which is the one that has this lovely little criterion.

J

f(x) is the curve equation, and then H of x (or alpha) is something that we use to hash a string into the prime-order subgroup of the curve, not hash to the curve, which are two distinct things. So the Icart method is fairly simple. You can, you know, write some Sage code fairly quickly to compute this; in fact, one of the appendices in the draft has this. It takes some modest number of exponentiations, some scalar multiplications, and additions and subtractions. The exact cost we still need to work out in the draft.

J

There's a big to-do at the end, just to compare the arithmetic complexity of each of the algorithms, but you can just take a glance at it and see what it's like. Elligator is sort of similar, but a little bit simpler: compute this value d, compute the Legendre symbol, and then, depending on whether the output of that is negative one or not, choose one value or the other. So there's some constant-time stuff you need to worry about when implementing this particular technique.
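The steps just described (compute d, take the Legendre symbol, pick one of two candidates) can be sketched for Curve25519 as below. The parameter choices are assumptions for illustration: the fixed non-square is taken to be 2, and the input `r` is assumed to be a field element already derived from the string by some hash. Python integers are not constant time, so the arithmetic selection at the end only shows the shape of a branch-free choice.

```python
P = 2**255 - 19     # Curve25519 base field prime
A = 486662          # Montgomery form: v^2 = u^3 + A*u^2 + u
NON_SQUARE = 2      # assumed fixed non-square modulo P


def curve_rhs(x: int) -> int:
    # Right-hand side of the curve equation evaluated at x.
    return (x * x * x + A * x * x + x) % P


def legendre(a: int) -> int:
    # Euler's criterion: 1 for a nonzero square, P - 1 for a non-square, 0 for 0.
    return pow(a, (P - 1) // 2, P)


def elligator2(r: int) -> int:
    """Map a nonzero field element r to the u-coordinate of a curve point."""
    d = (-A * pow(1 + NON_SQUARE * r * r, P - 2, P)) % P
    e = legendre(curve_rhs(d))
    other = (-A - d) % P
    mask = int(e != 1)  # 0 keeps d, 1 selects the other candidate
    return (d * (1 - mask) + other * mask) % P
```

Exactly one of the two candidates gives a square on the right-hand side for nonzero `r`, so the map never needs to loop.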

J

We just wanted to get something written down, something that people could talk about. We need to complete the cost analysis. There's a huge empty section on the SWU algorithm, particularly details of an implementation, which are empty. We'll get to it eventually; simply, the draft deadline crept up on us mighty quick, so next time around we'll get to it.

J

Sharon Goldberg, who we collaborated with on this particular document, pointed out that it would be useful if there were security reductions in this particular work where possible. So of course that's something we should consider and integrate. The interface details are sort of skimmed over, in particular how you convert octet strings to integers and points. So we just need to look at the VRF draft, where she and her co-authors did a great job laying out the foundations, and maybe apply that here, and also work

J

alongside her to see if there's something like that in that draft, or if she can point her VRF stuff over to this particular document, so we don't duplicate work. And then, as I was alluding to earlier, it'd be really nice if we could use this as a driver for hacspec to produce some verifiable implementations and, you know, test out this emerging language. And the last thing is that I want to clarify that not all mappings are reversible, and specifically lay out, potentially in the security considerations section,

J

what happens if you are using a hash function that is invertible. So, some open issues. In particular, the language around hashing to a prime-order subgroup of the curve leaves much to be desired; it certainly needs to be fleshed out. In particular, many of the techniques that we see always multiply the output by the cofactor of the curve, so that you make sure that you always land in the prime-order subgroup.

J

J

The

other

point

raised

by

Mike

amber

goes

that

you

don't

get

pure

indistinguishability

from

random

from

a

random

point

in

the

curve

for

each

algorithm,

that's

not

always

needed

for

every

single

protocol,

so

we

just

need

to

spell

it

very

clearly.

You

know

with

this

much

technical

precision

as

possible,

what

the

indistinguishability

guarantees

are

and

potentially

list

some

algorithms

or

some

protocols

in

which

this

is

and

is

not

needed.

J

So

that's

still

sort

of

an

open

task

and

it'd

be

interesting

to

hear

what

people

have

to

say

or

think

about

that,

and

so

that's

it

so

I

encourage

you.

If

you're

interested

go,

take

a

gander

at

the

draft;

it

simply

lists

the

different

algorithms

has

some

implementations

and

has

some

TODOs

that

we

need

to

fill

in

and

yep.

H

Q

We

should

so

actually

have

a

question:

Richard

Barnes

question

about

variants,

so

whether

this

is

related

to

different

problems,

I've

got

in

different

contexts,

so

in

the

MLS

that

I'll

be

discussing

the

BoF

on

Thursday,

we

have

a

need

to

map

from

a

random

string

to

a

curve

point,

but

also

with

a

known

discrete

log.

So

you

know

map

to

an

EC

key

pair

instead

of

just

a

point

now

that

draft

has

something

in

it

that

I,

just

sort

of

made

up

and

I

think

looks

kind

of

okay.

Q

H

Q

J

It

is

also

interesting

in

the

VRF

draft.

The

interface

is

not

exactly

equivalent

to

this;

they

take

in

a

public

key

and

a

string,

and

they

use

both

in

hashing

to

a

point

in

the

curve.

So

the

question

of

what

exactly

is

the

right

interface

here

is

still,

of

course,

up

for

discussion.

We

just

chose.

You

know

one

input,

the

arbitrary

input

because,

as

Kenny

said,

we

kind

of

want

that

to

be

the

thing

that

we

hashed

onto

the

curve,

nothing

else,

but

of

course

we

can

talk

about

it.

If

we

need

to

change

it.

J

R

J

We

don't

have

a

proposal.

This

is

simply

a

collection

of

all

of

the

existing

techniques

and

algorithms

that

have

been

already

published

into

a

single

document

in

an

attempt

to

kind

of

nail

down

exactly

how

you

would

do

this.

If

you

were

to

do

this

in

an

IETF

protocol,

because

it's

used

quite

often

and

as

you

point

to

the

VRF

draft,

there's

one

particular

use

case

so

that

I'm

sure

somewhere

in

like,

for

example,

in

the

Elligator

paper

and

in

the

Simplified

SWU

paper

where

they

presented

that

particular

algorithm.

J

E

S

J

S

J

To

give

you

an

example

of

the

extreme

Elligator

2

only

hashes

onto

half

of

the

points

on

the

curve,

so

that's

slightly

distinguishable.

Sorry

we're

not

more

precise

than

that.

We

would

certainly

like

to

be,

though,

and

if

you

have

suggestions,

we'd

love

to

hear

them.

Thanks.

T

Dan

Harkins

yeah

I

think

this

is

a

really

good

idea.

It's

very

important,

as

you

mentioned,

it

is

used

in

VRF.

It's

used

in

PAKEs.

I

got

beaten

up

pretty

severely

for

using

a

really

simple

and

lame

way

of

hashing

to

a

curve,

and

had

this

been

around,

I

wouldn't

have

gotten

beaten

up

so

badly,

though

I'd

probably

get

beaten

up

anyway.

F

J

T

Hi

I'm

Nick

Sullivan

from

CloudFlare.

This

is

sort

of

the

Nick

and

Chris

show

right

now,

but

right

now,

I'd

like

to

introduce

this

draft

that

we

wrote

that

introduces

a

new

construction

called

verifiable

oblivious

pseudo-random

functions.

Now

it

builds

on

the

intuition

from

two

very

well-known

constructions.

One

is

VRFs,

which

are

a

public-key

analog

to

hashing,

so

you

can

compute

a

VRF,

and

then

someone

can

verify

that

with

your

public

key

that

this

was

done

correctly.

T

T

You

lose

some

properties,

but

essentially,

if

you

go

through

what

a

VRF

or

what

a

PRF

is

supposed

to

be,

is

that

it's

a

function

that

given

F

X,

it's

infeasible

to

compute

this

function

without

knowing

a

secret

key

okay,

and

this

F

is

also

supposed

to

be

indistinguishable

from

random.

And

the

technique

that

we

want

to

incorporate

from

VRFs

here

is

that

this

should

also

be

verifiable.

So

somebody

who

requests

this

VOPRF

to

be

computed

can

verify

that

it

was

actually

used.

T

It

was

computed

using

a

specific

private

key,

that's

associated

with

a

known

public

key.

We

would

also

like

this

to

be

oblivious

in

that

the

signer,

if

the

value

Y

is

revealed

to

them,

cannot

identify

which

X

was

actually

used

or

which

computation

was

actually

used

to

compute

Y.

So,

yes,

if

X

is

revealed

to

the

signer,

they

can't

actually

identify

which

signature

or

which

of

these

computations

is

the

one

that

was

used

to

compute

it.

T

So

this

is

very

similar

to

the

VRF

and

the

OPRF,

but

when

the

verifiable

and

oblivious

properties

are

used

together,

you

lose

the

ability

to

have

this

publicly

verifiable.

So

if

you

do

actually

expose

this

as

a

public

value,

then

the

signer

can

then

break

the

obliviousness.

So

the

way

that

it

will

fit

into

a

protocol

is

as

follows:

the

verifier

will

construct

a

message.

T

M

will

blind

that

message,

and

send

it

to

the

prover,

which

will

then

compute

a

signature

S,

which

is

the

VOPRF

computation.

The

verifier

can

then

take

this

and

verify

that

this

is

associated

with

the

prover's

public

key,

and

then

they

can

remove

the

blind

and,

if

they

want

to,

can

confirm

the

output

here.

This

signature

is

associated

with

the

original

message.

M

send

that

back

to

the

prover,

they

can

verify

the

same

thing

and

this

original

VOPRF

computation

versus

the

confirmation.

T

T

Eduroam

authentication

failed,

great,

ok,

let's,

let's

go

forward

so

I'm!

This

instantiation

actually

came

from

a

paper

from

a

few

years

ago

from

Jarecki,

Kiayias,

and

Krawczyk,

and

this

describes

the

algorithm

which

lets

you

compute,

a

discrete

logarithm

equivalence.

So

if

you

have

Y

modulo

point

G

and

Z

modulo

point

M,

you

can

show

that

they

actually

have

been

exponentiated

with

the

same

scalar

K,

and

these

are

the

details

of

the

algorithm

and

in

the

protocol,

some

intuition

you

can

get

around

this?

T

Is

you

take

your

string,

which

is

your

sort

of

secret

value?

You

hash

this

into

a

curve

and

you

apply

a

blinding

factor

which

is

just

an

exponent,

which

is

just

a

multiplicative

value

here.

So

your

message

that

you're

sending

to

the

server

or

to

the

prover

in

this

case

is

the

hash

of

the

input

X

onto

the

curve.

So

you

have

a

curve

point

and

you

multiply

it

by

a

blinding

value

R.

On

the

prover

side,

you

take

that

value

and

you

multiply

it

by

the

secret

key

in

the

case

of

hash

curve.

T

This

is

just

another

secret

scalar,

and

then

you

generate

discrete

log

equivalence

proof

between

this

multiplication

of

the

point

and

a

public

pair

that

represents

a

base

point

and

the

base

point

multiplied

by

again

K,

which

is

the

secret

value

in

the

prover.

You

can

send

this

back

and

the

verifier

can

verify

this

discrete

logarithm

proof

and

divide

out

its

multiplicative

factor

R

by

multiplying

by

the

inverse,

and

you

end

up

with

the

original

point,

hash

to

a

curve

multiplied

by

the

prover's

private

key.
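The blind, evaluate, prove, verify, and unblind steps described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the draft's algorithm: it uses a toy multiplicative group modulo a small safe prime in place of an elliptic curve (so scalar multiplication becomes exponentiation), a Chaum-Pedersen style discrete log equivalence proof made non-interactive with a hash, and hypothetical names (`blind`, `evaluate`, `verify`, `unblind`). It needs Python 3.8 or later for the modular inverse via `pow`.

```python
import hashlib
import secrets

# Toy safe-prime group (assumption, far too small for real use): P = 2*Q + 1.
P, Q, G = 1019, 509, 4   # G generates the subgroup of prime order Q


def hash_to_group(data: bytes) -> int:
    """Hash to the order-Q subgroup by squaring (cofactor 2)."""
    u = int.from_bytes(hashlib.sha256(data).digest(), "big") % (P - 1) + 1
    return pow(u, 2, P)


def challenge(*elems: int) -> int:
    """Fiat-Shamir challenge derived from the proof transcript."""
    data = b"|".join(str(e).encode() for e in elems)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def blind(x: bytes):
    """Verifier: hash the input to the group and apply a random blinding exponent r."""
    r = secrets.randbelow(Q - 1) + 1
    return r, pow(hash_to_group(x), r, P)


def evaluate(k: int, m: int):
    """Prover: apply secret key k and prove log_G(Y) == log_M(Z) == k."""
    y, z = pow(G, k, P), pow(m, k, P)
    t = secrets.randbelow(Q - 1) + 1
    a, b = pow(G, t, P), pow(m, t, P)
    c = challenge(G, y, m, z, a, b)
    s = (t - c * k) % Q
    return z, (c, s)


def verify(y: int, m: int, z: int, proof) -> bool:
    """Verifier: rebuild the commitments and check the challenge matches."""
    c, s = proof
    a = pow(G, s, P) * pow(y, c, P) % P
    b = pow(m, s, P) * pow(z, c, P) % P
    return c == challenge(G, y, m, z, a, b)


def unblind(r: int, z: int) -> int:
    """Verifier: remove the blind by raising to r^-1 mod Q."""
    return pow(z, pow(r, -1, Q), P)


k = 123                               # prover's secret key (illustrative)
public_key = pow(G, k, P)
r, m = blind(b"token")
z, proof = evaluate(k, m)
print(verify(public_key, m, z, proof))
print(unblind(r, z) == pow(hash_to_group(b"token"), k, P))
```

The prover only ever sees the blinded element `m`, while the verifier ends up with the hashed input raised to the prover's key, which is the unblinded output the talk refers to as Y.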

T

T

T

Alternatively,

you

don't

have

to

send

the

value

Y;

you

can

send

a

value

that

can

only

be

computed

with

the

knowledge

of

Y

and

the

prover

can

compute

that

same

y

as

well

by

multiplying

that

x,

hash

to

the

curve,

okay

and

then

you

can

validate

that

both

parties

share

this

Y

bound

to

a

specific

message.
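The alternative just described, where the unblinded value Y is never sent and instead keys a value bound to a message, might look roughly like this. It is a sketch under assumptions: the same toy group as a stand-in for a curve, HMAC-SHA256 as the binding function, and hypothetical helper names (`derive_key`, `client_redeem`, `server_check`); the talk does not specify these details.

```python
import hashlib
import hmac

# Toy group (assumption, far too small for real use): P = 2*Q + 1.
P, Q = 1019, 509


def hash_to_group(data: bytes) -> int:
    """Hash to the order-Q subgroup by squaring (cofactor 2)."""
    u = int.from_bytes(hashlib.sha256(data).digest(), "big") % (P - 1) + 1
    return pow(u, 2, P)


def derive_key(x: bytes, y: int) -> bytes:
    """Derive a MAC key from the input x and the shared value Y."""
    return hashlib.sha256(x + y.to_bytes(2, "big")).digest()


def client_redeem(x: bytes, y: int, message: bytes) -> bytes:
    """Client: prove knowledge of Y by MACing the message, without sending Y."""
    return hmac.new(derive_key(x, y), message, hashlib.sha256).digest()


def server_check(k: int, x: bytes, message: bytes, tag: bytes) -> bool:
    """Prover: recompute Y = H(x)^k from the revealed x and check the tag."""
    y = pow(hash_to_group(x), k, P)
    expected = hmac.new(derive_key(x, y), message, hashlib.sha256).digest()
    return hmac.compare_digest(tag, expected)


k = 123                                   # prover's secret key (illustrative)
x = b"token"
y = pow(hash_to_group(x), k, P)           # stands in for the client's unblinded output
tag = client_redeem(x, y, b"GET /page")
print(server_check(k, x, b"GET /page", tag))
```

Because the tag depends on both Y and the message, a tag captured for one message does not validate for a different one.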

T

So

that's

generally

how

this

construction

works

and

as

was

mentioned

before

we

have

an

application

of

this

called

Privacy

Pass,

which

is

in

an

upcoming

PETS

paper

with

myself

and

Alex

Davidson

and

a

few

other

folks.

The

way

that

this

works.

This

is

for

reducing

the

amount

of

CAPTCHAs

seen

on

the

Internet

in

a

privacy-preserving

way.

So

the

way

it

would

work

is

that

you

would

compute

a

token

or

a

set

of

tokens

and

when

solving

a

CAPTCHA.

T

The

CAPTCHA

server

will

compute

the

VOPRF

over

your

blinded

tokens

and

send

them

back

to

you

and

then

the

next

time

you

see

a

CAPTCHA

on

a

completely

different

site

on

a

completely

different

origin,

you

can

submit

the

unblinded

token,

and

then

the

server

has

no

way

to

actually

identify

which

site

was

the

one

that

actually

issued

these

tokens.

So

it

allows

you

to

send

data

to

prove

that

you

have

solved

a

CAPTCHA

on

another

site

without

revealing

which

origin

originally

presented

it.

T

So

this

allows

a

sort

of

bypass

of

the

same

origin

policy

due

to

the

blinding

ability

of

the

VOPRF,

and

this

is

implemented

in

a

browser

extension

for

Chrome

and

Firefox,

as

well

as

on

CloudFlare

servers.

Another

potential

application

of

this

that

we've

looked

into

is

privacy-preserving

password

leak

checks.

T

So

if

a

service

has

a

lot

of

passwords

that

have

been

leaked,

what

they

can

do

is

they

can

hash

these

to

a

curve

and

exponentiate

by

a

private

value

and-

and