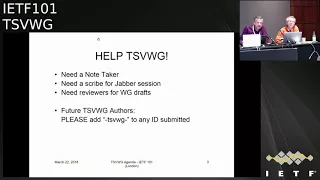

From YouTube: IETF101-TSVWG-20180322-1550

Description

TSVWG meeting session at IETF101

2018/03/22 1550

https://datatracker.ietf.org/meeting/101/proceedings/

C

D

C

D

F

B

Okay, we have note-takers I want to thank, [name unclear] and Paul Congdon. Gorry is going to try to stay on top of Jabber from up front here. Welcome back to the three-ring circus, better known as TSVWG, the Transport Area Working Group. I'm David Black, one of your chairs.

C

B

[Name unclear] is a chair in absentia. Okay, this is the new and improved Note Well, complete with BCP numbers. This applies to everything that goes on in this meeting; you're expected to be aware of it. Okay, we have a couple of note-takers. We have Jabber session scribes. We will be soliciting, as we go along, reviewers for various drafts that come up in the meeting. Reminder:

B

Please use tsvwg as part of the draft name, draft-yourname-tsvwg-da-da-da-da-da, and that way the chairs will notice it when we're trying to figure out what's going on. Reminder: document quality relies on reviews, so please review documents in your working group and at least one document of another working group. If you'd like documents you care about reviewed, please return the favor and review other people's documents. They may be yours. Okay, on to document status. I

B

think at one point I saw a slide from Spencer that only had two RFCs on it. I found two more by carefully setting the search date to the beginning of the Singapore IETF meeting week. We've had four RFCs published since I last put a set of slides like this together. The best of them are the first two, which are a couple of SCTP RFCs that are part of the massive WebRTC draft hairball, or tarball if you like. We managed to extract those two out.

B

The really, really good news is that, thanks to the diligent efforts of a number of people in the room, we are no longer on the critical path to getting WebRTC done. Thank you very much, everybody. We've still got one draft that's stuck against some other WebRTC drafts, but WebRTC will sort that out. Two more: the ECN experimentation draft and the Diffserv to Wi-Fi mapping draft. The draft on the screen is the remaining draft that's stuck in the WebRTC hairball. Okay, so Spencer, right now you get it easy.

B

G

B

I believe so. Okay, we have an ID in working group last call, which ends March 30th: the DSCP IANA process changes. This is needed for the Diffserv Lower Effort PHB draft that we're going to talk about later in the session. I think I heard Spencer say something about a tidal wave earlier. We are about to send five Internet-Drafts to working group last call, and we hope to do last call on all of these before Montreal. The good news is they only come in three batches. After we get the DSCP IANA draft dealt with, we're going to...

B

We expect to working-group-last-call the Diffserv Lower Effort PHB draft. The ECN drafts on encapsulation for lower-layer protocols and on tunnels that use shim headers will be last-called together; I believe those are about ready for last call. In addition, the two FEC update drafts also might be ready for working group last call. We expect to working-group-last-call those before Montreal.

B

So there are another seven working group drafts that we'll talk about: an SCTP NAT draft, three drafts on the L4S low-latency service, the UDP options draft, tunnel congestion feedback, and datagram packetization layer path MTU discovery, which is a recently adopted draft. A couple of slides on related drafts.

B

There are two drafts that you will see on today's agenda. One is the transport header encryption impact draft, which is Gorry's draft, and the other is the priority switching scheduler draft, draft-finzi. Let me say a word about what we're going to do with that. There's been some offline discussion, and it appears the best route for that draft is publication as an independent submission.

B

However, when that draft is presented, we're going to be asking for reviewers, because what we'd like to do is, in essence, empower the draft authors to send it off to the Independent Submissions Editor with a stamp of approval from TSVWG that says we've looked at it and we think this is a good thing for the Independent Submissions Editor to publish. So that's what's going to happen later this session. Three more drafts, not on today's agenda:

B

A generic multiplexing draft, draft-[author name unclear]. Tom Herbert has a new draft on firewall and service tickets; he's trying to do things in band. I'm looking at Tom and assuming he's going to sort out how this relates to all the work going on in the I2NSF working group. Congestion control for guaranteed bandwidth: this draft actually turned out to be a TCPM-related draft, but I left it here because they put tsvwg in its title. There was some discussion of this in TCPM

B

on Monday, and there's been quite a bit of list discussion over in intarea. There are three more drafts that are relevant to us. Tunnel MTU considerations: there's actually a whole draft on tunnels in the Internet architecture in intarea. Fragmentation fragility: that's also a draft in intarea. And SOCKS version 6. All of these drafts are being handled by the intarea working group.

B

Okay, so you're now in the middle of the chair slides. We're going to be bashing the agenda in a little bit. We've done the Note Well and just went through document accomplishments and status, so what comes next is the milestones review. We have a few milestones that have gone past, but we're close.

B

The RFC 4960 errata and issues draft should be submitted to the IESG in the next week or two. The other two orange drafts up here are the two ECN encap drafts; for those, we're going to move the dates out to June 2018. Both drafts have new versions. Bob, both have new versions, or just 6040? Both have new versions. Both are believed to be very close to ready for working group last call. Our intent is not to have

B

H

B

B

During the L4S session, we could discuss likely timing for those. And then there's this December date on the remaining drafts; as we get to Montreal, we'll try to figure out what's going to get done this year and what's going to get new dates in the next year. Okay, a little bit on the agenda. We've done document status and accomplishments. This is agenda bashing, and I'm going to use the opportunity to say a few words about the tunnel congestion feedback draft right now. This draft is currently sort of stuck. I

B

think we had a discussion with our ADs about this draft back in Prague, and the determination at that point was that a mechanism without a worked example of usage doesn't get us much of anywhere. With luck, Bob, who is going to the mic, can tell us that there's a worked example of its usage about to emerge.

B

I

I'm not trying to repeat your words for you. Okay. [Name unclear], I happened to be talking to him: the Service Function Chaining working group wants to use this with MPLS for doing load balancing, essentially, across service functions, and I pointed him at that draft, and so he's willing to jump in and help those guys finish it.

B

B

What they were referring to is the BANANA drafts, which are intarea stuff. There are some individual submissions, and there's a BANANA draft on ECN that is also trying to use what is effectively tunnel congestion feedback to do load balancing. Okay, right after I get done with this wonderful monologue, we're going to do the Lower Effort PHB draft, which will be Gorry and Roland. We have a presentation of the priority switching scheduler, which is [unclear] material.

B

We have the packetization layer path MTU discovery draft, the two ECN encap drafts, the L4S drafts, and then there is some new activity that's been proposed over in IEEE 802.1 called congestion isolation; Paul Congdon is going to talk about that. That should get us to the break. We may pick up the FECFRAME drafts before the break if we do well on time. That would be [unclear] items.

B

He sent me some slides and I think I know what they're about. A couple of SCTP drafts, which I think is going to be fairly quick. And then, if you were at the plenary last night, you heard a little bit of discussion about the overall impact of encryption on the Internet; we have a draft on transport header encryption. I think that's it.

B

C

C

Pool 3 values to require publication of a Standards Track or Best Current Practice RFC. And if my AD wants to bash the name, you can do; I don't care. There's a photo of Balmoral; it's close to Aberdeen. And this is the purpose. Oh, he wants to try and bash the name already. Okay, here we go. As I said, Spencer Dawkins [unclear] his response.

J

L

C

I am, seriously, and we might have to get a name before we finish it. And which castle did I use? That's Balmoral. That's where we are, and the overview of the draft is: it simply changes this pool in the IANA registry, which already exists, from being a local-use registry to being a Standards Action registry. We believe that there are no bad effects of doing this, but we're the IETF, so we write a document to make sure everyone else believes that there are no bad effects of doing this. That's the purpose of the document.
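
As a rough illustration of the registry pools being discussed, the sketch below classifies a 6-bit DSCP by its low-order bits, following the pool layout defined in RFC 2474 (Pool 1 is `xxxxx0`, Pool 2 is `xxxx11`, Pool 3 is `xxxx01`). The function name `dscp_pool` is made up for this example and is not taken from the draft or from any library.

```python
def dscp_pool(dscp: int) -> int:
    """Return the RFC 2474 pool number (1, 2 or 3) for a 6-bit DSCP.

    Illustrative helper only; the name and interface are assumptions.
    """
    if not 0 <= dscp <= 63:
        raise ValueError("a DSCP must fit in 6 bits")
    if dscp & 0b1 == 0:
        return 1  # xxxxx0: assigned by Standards Action
    if dscp & 0b11 == 0b11:
        return 2  # xxxx11: experimental or local use
    return 3      # xxxx01: the pool the draft opens for Standards Action


# Example: 0b000001 and 0b000101 both fall in Pool 3.
pools = [dscp_pool(0b000001), dscp_pool(0b000101)]
```

A network that currently uses a Pool 3 value locally could use a check like this to find which of its configured code points are affected by the registry change.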

C

Yeah, apologies if you read the last version, the -00 version: it had everything talking about Iona, and this is a picture of Iona in Scotland as well, not IANA. My spellchecker had a wonderful time over the draft and I was submitting it very quickly. Don't read that version; read -01. And seriously, because it's only one registry item we're changing, I think this is now ready for any comments you may have. It's in working group last call. Thanks to the people who have already sent me comments. I know of one or two changes I

C

do have to make, so I will include those in the final version, and I've already fixed one typo. I realize there's a "May" with a capital M but a small a and y, and I realize there's some inconsistency with the IANA registry in terms of the placement and the ordering of some of these things. If you've got any other comments, please tell me. Yeah.

L

I

C

Yeah, we have talked about this in previous meetings, and the reason for presenting this here is to try and make sure of that. When we go to IETF last call, we will make sure that we also tell other working groups: heads up, look, there is a change here. We don't think it's bad, but there is a change.

B

J

Spencer Dawkins. David answered the question that I was going to ask, so let me ask my next question, which is: if those networks are using the local-use DSCPs now, don't they have to explicitly shoot themselves in the foot to start having problems?

B

Diffserv asserts that it behaves best when you have a completely perfect configuration of the network perimeter. Now, a network with a completely correct configuration of its perimeter is in the same category as Santa Claus, the Easter Bunny, and the Tooth Fairy, but I don't think there are going to be major problems, and a network that is using these as local use will have the ability to reconfigure to some other local-use code points, the ones ending in 11.

M

K

L

B

L

C

The summary is still basically that when this completes working group last call, we're going to do a write-up and we're going to make sure we have feedback from everyone in the IETF. It's much more important that this is well known before it's published than when it's published. So the purpose of this document is to tell everyone in the IETF that it's happening, and then for IANA finally to make the change. And that's my slide, David. Okay.

L

L

The just-presented draft from Gorry is now a working group draft, in order to open Pool 3 for Standards Action. Several editorial changes, so not really much new here. I actually added "Updates" also for the recently published Diffserv to IEEE 802.11 mapping RFC, and, yeah, I think there's one more draft or RFC to be updated, but I'll come to that later. So, review comments: Rüdiger Geib sent some comments, mainly editorial, so I'll try to maybe shorten some

B

phrases as suggested. Roland, before you go to Bob's comments, could you go back to the previous slide and let me do a real quick quasi-administrative item? I want to make sure that we can report that the sense of the room is that the first bullet is what we want to do. We've had long discussions about that, and I think it came down to code points 1 or 5, and there's some data that I think Brian Trammell sent to the list that suggests that, as we suspected, 1 is probably the better choice.

L

L

So I just sent an email to the list in which I try to clarify that. My proposal is to write "LE users SHOULD use a lower-than-best-effort congestion control because blah blah blah", and then also what happens if you don't do that. So the implications may be negative: if you don't use such a kind of congestion control, then you can expect some problems. Bob Briscoe.

I

I just wanted to try and explain face-to-face what I was trying to say, and that is that at the moment the text essentially says: if you don't want to harm people, you shouldn't harm people. The condition shouldn't be what you want to do or what the application wants to do; it should be whether there will be harm.

I

You know, so it's about: if the traffic is unlikely to harm anyone, then you don't need to do an LBE congestion control, but otherwise you do. It's not about whether you want to harm people, because that's just saying psychopaths are okay, and psychopaths, they're going to do it anyway. Sure.

C

Well, okay, so Gorry Fairhurst from the floor mic. Yeah, I much prefer an approach that says you SHOULD use a LEDBAT-style, less-than-TCP-type congestion control if you're using the LE class. SHOULD means you don't have to. If you don't, then there are going to be interactions between an application that uses multiple classes, and maybe interaction with other traffic, and if we explained that more clearly in the wording, then I think that's much better guidance than simply kind of waving

C

our hands. I'm a little bit scared, as an individual, about the use of "MUST do something" when we can't actually force anyone to do it, and it's not clear how you check that interaction. But I do like the idea of saying SHOULD and really meaning SHOULD, and then explaining what the ramifications are, and then people can make an intelligent decision. Oh, great.

B

Chairs running around. Yes, we've now done the chair change. This is David from the floor mic, and what I was going to observe is that, from an operator's point of view, SHOULD versus MUST is kind of irrelevant, as the operator is going to have to defend against this regardless of which word we put into the RFC. I'm inclined to agree with Gorry that a SHOULD is appropriate, because I don't think anything changes in what the network is going to have to do if we put a MUST in.

I

My point wasn't about SHOULD or MUST. I don't care about the SHOULD. Well, I do a bit, but it's more that it just doesn't make sense saying that if you don't want to do harm then use an LBE, because if you don't want to do harm, you will use an LBE. It's about whether you're going to do harm. Well,

B

And I think we take a closer look at the wording, because the real concern for the network is not whether a flow does harm; it's whether the flows using this in aggregate would do harm. That's a little bit different, and not something an end user always has enough visibility into.

I

Let me explain where I'm coming from. What I have in mind: I'm thinking about L4S, and I'm writing mappings from Diffserv to L4S. At the moment, you can do a really good less-than-best-effort congestion control with a scalable congestion control, because it jumps up really fast, but I don't want anything that doesn't have an LBE in the L4S queue, because it will screw the latency. So, you know, it affects that. But, I mean, that's a special case, maybe, but it's from the network operator's point of view.

I

C

C

B

L

The mailing list is fine, yeah. So, yeah, maybe we can discuss this on the mailing list. I checked the PHB guidelines from RFC 2475 and I just came across G.7, guideline 7, which talks about tunneling. I don't know whether we have to state any extra text about tunneling. I don't know; I'm not a tunneling expert. I know they use tunneling [unclear].

L

L

C

L

J

J

C

B

J

Speaking, Spencer Dawkins. For any normal draft I would be happy to handle stuff in AUTH48, but I'm not sure that all the authors will be living when the hairball is published, which makes it less likely that that's going to be effective. I'm happy to drop a note to the RFC Editor now. If you would send me what I need to tell them, I'm happy to do that and let them do the things that they do.

B

C

We probably should let the RTCWEB people know that we've decided the code point pretty soon, because we shouldn't change the document that they think they've been referring to. So we will let other people know of the consensus this IETF finally confirmed about which code point it is, and they will then be aware of this upcoming change. So we can handle that bit.

B

B

Quick reminder: the destination of this draft is publication by the Independent Submissions Editor, and the goal of this is for people to understand this draft. I'm going to be soliciting a few reviewers, because we'd like to be able to say that TSVWG thinks that publication of this draft as an independent submission is a good idea. So, Anaïs, is that how it's pronounced? Your first name, Anaïs, I mean.

N

I'm going to talk about our priority switching scheduler. Sharing the capacity offered is an important issue for mixed traffic, as you all know, and there are many existing solutions like weighted fair queuing, deficit round robin, and so on, but they are complex to configure and provide only soft guarantees. So our objective with this new priority switching scheduler is to achieve a service closer to PGPS and obtain more predictable available capacities.

N

So in fact we want to ensure a more predictable output rate. We have a use case to show how we want to use it, as an example. The idea is to make the AF class more predictable in the Diffserv core architecture. Just as a teaser, I have shown here two figures with the AF output rate as a function of the EF input rate when you vary the scheduler weight. Here you can see that the range is larger with the WRR, in red, compared to ours.

N

N

Next, the use case I just talked about, showing the benefit of using PSS in a Diffserv core network. And finally, the security considerations are still a work in progress. So, PSS in a nutshell: the PSS is based on the burst limiting shaper, and the key idea is to use a credit counter to change the priority viewed by the priority scheduler. So you have queues with a non-active PSS, which are regular priority scheduler queues, and other queues with an active PSS.

N

N

So that's the key idea. As you can see, there are two things to establish: how does the credit change, and how do we select the priorities we use? We have three parameters per controlled queue: a max level for the credit, a resume level, and the reserved bandwidth, which is used to compute the credit slopes. I will explain more how it works. Here is an example: on the x-axis you have the time, and on the y-axis the credit of the controlled queue we are looking into.

N

We are transmitting several packets from here, and when we are transmitting packets from the controlled queue, we are increasing the credit with a rate I_send. When the credit reaches the max level, we change the priority from the high priority to the low priority, and due to the fact that we are using a non-preemptive static priority

N

scheduler, there is some non-preemption, leading to the saturation of the credit at the maximum level. Then we send other types of traffic, so we decrease the credit with a rate I_idle. When the credit reaches the resume level, the priority is switched back to the high priority, and again, due to non-preemption, we keep decreasing the credit until the credit reaches zero or until the end of the transmission of the packet.
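
The credit behavior described above can be sketched as a small time-stepped model. This is only an illustration under stated assumptions: the class name `PssQueue`, its field names, and the choice to pass the two slopes in directly (rather than deriving them from the reserved bandwidth) are all hypothetical, and packet-level non-preemption is approximated by simply clamping the credit between zero and the max level.

```python
from dataclasses import dataclass


@dataclass
class PssQueue:
    """Toy model of one controlled queue's credit counter (names are assumptions)."""
    l_max: float      # max credit level: reaching it switches to low priority
    l_resume: float   # resume level: falling to it switches back to high priority
    i_send: float     # credit gained per second while the controlled queue transmits
    i_idle: float     # credit lost per second while it does not transmit
    credit: float = 0.0
    high_priority: bool = True

    def advance(self, seconds: float, sending_controlled: bool) -> None:
        """Advance time and update credit and priority."""
        if sending_controlled:
            # Credit grows while controlled traffic is sent, saturating at l_max.
            self.credit = min(self.l_max, self.credit + self.i_send * seconds)
        else:
            # Credit drains otherwise, never going below zero.
            self.credit = max(0.0, self.credit - self.i_idle * seconds)
        # Hysteresis: between l_resume and l_max the priority is left unchanged.
        if self.credit >= self.l_max:
            self.high_priority = False
        elif self.credit <= self.l_resume:
            self.high_priority = True


q = PssQueue(l_max=10.0, l_resume=4.0, i_send=2.0, i_idle=1.0)
q.advance(6.0, sending_controlled=True)    # credit saturates at l_max, priority goes low
q.advance(2.0, sending_controlled=False)   # credit drains but stays above l_resume
```

The two thresholds give the switching its hysteresis: the queue stays at low priority until the credit has drained all the way to the resume level, not merely below the max level.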

N

So this is an example that is true, but I think it's a bit misleading. I think it's the best way to explain it first, but a bit misleading because it may give you the impression that when the priority is high, we only send the controlled traffic, and when the priority is low, we only send other traffic.

N

So, another example. Here we start by sending a few packets, and so we increase the credit with the rate I_send. Then, for some reason, either because other traffic has a higher priority or because there is no more controlled traffic enqueued, we can send other types of traffic, so we decrease the credit with the rate I_idle. Then maybe we can again send the controlled traffic, so again the credit increases.

N

So we have again the change of priority to the low priority, and so we can continue with sending other types of traffic. If all the queues with higher priority are empty, then we can send the controlled traffic even though the priority is low. And finally, the last example is if all the queues again are empty, so the credit decreases. So you can see that we have a fairly simple

N

So I hope I have convinced you that it's an interesting scheduler, but here is a use case to emphasize it. We used the current core architecture, which I think you all know, and here is what we usually obtain with such an architecture. On the x-axis is the weight of the AF class, and on the y-axis is the output rate. So the aim is to make the AF class more predictable.

N

So we first study how it works with the current architecture. Let's say you know that the input rate of the EF class is around 50% and it varies from 25% to 75%. Then, as you know, the output rate will also vary. For example, if you set the weight at 0.5, the AF output rate will vary between 12.5% and 37.5%, so the range is quite large, and we can conclude that the AF output rate is uncertain when the EF input rate is unknown.

N

Our goal is to make the rate of the AF class more predictable. Our proposal here is to add the PSS and to use it to change the priority of the AF class. The EF class still has the first priority, so no change here, and the AF class sometimes has a higher priority than the default class and sometimes a lower priority than the default class. As a result, we obtain more fairness from the priority scheduler. Through simulations,

N

we obtain these curves. We set the parameters to obtain more or less the same red line as before, and what we obtain is that, for any EF input rate below 50%, we stay on the red line, which is very good. Then, if we increase the EF input rate further, we obtain the minimum between the remaining capacity and our red line. So here, at 75%, the remaining capacity is 25%.
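
The arithmetic behind these curves can be reproduced with two small functions. This is a simplified sketch, not the simulation used in the talk: it assumes a saturated link, rates expressed as percentages of link capacity, and an AF class that always has traffic to send. The function names and the 25% target used for the PSS curve are assumptions chosen to match the figures quoted by the speaker.

```python
def af_output_wrr(ef_input: float, af_weight: float) -> float:
    """AF output (% of link) when AF gets a fixed weight of what EF leaves over."""
    return af_weight * (100.0 - ef_input)


def af_output_pss(ef_input: float, target: float) -> float:
    """AF output (% of link) as the minimum of a target rate and the remaining capacity."""
    return min(target, 100.0 - ef_input)


# EF input varying from 25% to 75% of the link, as in the talk.
wrr_range = (af_output_wrr(75.0, 0.5), af_output_wrr(25.0, 0.5))    # (12.5, 37.5)
pss_range = (af_output_pss(75.0, 25.0), af_output_pss(25.0, 25.0))  # (25.0, 25.0)
```

Under these assumptions the weighted scheduler's AF output moves with the EF load, while the min-based curve stays flat until the remaining capacity drops below the target.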

N

So if we compare both schedulers, we obtain a much larger area of uncertainty with the WRR compared to our proposal of PSS. And what is interesting is that if we want to provide a minimum guarantee for the AF output rate, with the WRR scheduler we have to set the weight at 0.5 to obtain a 12% output rate, but with our proposal we only have to set it at 0.5% [sic], meaning we have 0.75% [sic] that can be given to other priorities if needed.

N

So, to conclude on this use case: the EF class is not impacted by the change, which I think is important. When we know the EF input rate, we can easily have the same behavior with PSS and with weighted round robin. When the EF input rate varies, that is where our PSS has a better behavior, because the AF output rate is much more predictable. This is a result that we corroborated with simulations on ns-2.

N

L

N

We ran simulations and we didn't see any changes, and even if you look at it here, you see the EF class has the first priority, and it's exactly the same with our PSS. So the maximum impact is only the maximum frame size of a lower priority. So on the EF, yeah, that doesn't change. Okay.

B

P

P

B

As mentioned in the introduction, this is not going to be published as a standard; this is going to be an independent submission. We're looking to provide some reviews so that we can give the authors, effectively, a note to send to the Independent Submissions Editor that says TSVWG thinks this is a fine thing to publish, it's good work. Right. Okay, would you be interested in reviewing the draft?

B

P

B

L

N

N

B

C

E

G

G

So since this was last presented in Singapore there have been several updates. The draft has been adopted. We have gained some implementation experience and we have updated our state machine: we've renamed some states and we've added a new state for UDP transports. We've added text on considering search algorithms, based on discussion from the list, and probably the most important thing there is providing useful growth while you are still searching, so we can feed that forward. And we've added text on QUIC; we think QUIC can support everything we need in the draft.

G

Well, that was QUIC last week, not necessarily QUIC this week; they run experiments and get rid of things. We've also added text on PTB handling and other mechanisms that signal packet-too-big-style messages. So in Singapore the state machine looked like this. Just a quick overview:

G

We enter through PROBE_NONE; we have this for unconnected transports. Then we validate connectivity and move to PROBE_BASE. In PROBE_BASE we need to validate that a base MTU is going to work, and once we do that, we can move into a search state. From there we can perform a search, and we search up until we hit a maximum MTU, and then we are done and we can settle on that.
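
The walk through the states can be written down as a small transition table. This is a minimal sketch of the path described in the talk, not the state machine from the draft: the state names follow my reading of the recording, and the event names and the `step` helper are invented for illustration. Error handling, timers, and PTB processing are left out.

```python
from enum import Enum, auto


class State(Enum):
    PROBE_NONE = auto()    # entry state, used for unconnected transports
    PROBE_BASE = auto()    # confirming that the base MTU works
    PROBE_SEARCH = auto()  # probing upward for a larger usable size
    PROBE_DONE = auto()    # search finished


class Event(Enum):
    # Hypothetical event names, one per transition mentioned by the speaker.
    CONNECTIVITY_CONFIRMED = auto()
    BASE_MTU_CONFIRMED = auto()
    MAX_MTU_REACHED = auto()


TRANSITIONS = {
    (State.PROBE_NONE, Event.CONNECTIVITY_CONFIRMED): State.PROBE_BASE,
    (State.PROBE_BASE, Event.BASE_MTU_CONFIRMED): State.PROBE_SEARCH,
    (State.PROBE_SEARCH, Event.MAX_MTU_REACHED): State.PROBE_DONE,
}


def step(state: State, event: Event) -> State:
    """Return the next state, staying put if the event does not apply."""
    return TRANSITIONS.get((state, event), state)


state = State.PROBE_NONE
for event in (Event.CONNECTIVITY_CONFIRMED,
              Event.BASE_MTU_CONFIRMED,
              Event.MAX_MTU_REACHED):
    state = step(state, event)
```

Keeping the transitions in a table makes it easy to see which state and event pairs are still undefined as new states are added.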

G

So

what

we've

changed

is

we

have

renamed

the

the

initial

state

from

program

to

protect

where

we

are

we've

also

added

a

timer

mechanism,

so

we

can

figure

out

if

we

can

actually

establish

connectivity

and

then

one

thing-

that's

probably

still

missing

from

this-

is

in

the

in

the

probe

search

state.

We

need

a

pointer

out

so

that

we

can

incorporate

different

search

algorithms.

G

G

G

We

know

we

need

to

at

least

rebuild

the

5-tuple,

so

we

know

it's

coming

to

the

right

connection

and

we're

pretty

sure

that

the

the

implementations

we

have.

We

cannot

not

do

this,

but

we

also

have

questions

about

how

we

consider

the

the

PTB

signals

we

receive

and

what

we

should

do

with

with

the

new

information

we

get

from

the

network.

B

Comment

on

this

one

I

don't

have

any

good

answers

for

you,

but

have

a

place

to

dig

in

in

the

the

slides

I

started

the

meeting

with,

you

saw

a

reference

to

draft-bonica-intarea-frag-fragile.

Take

a

look

at

that

that

that

will

probably

tell

you

a

little

more

than

a

little

more

about

PTB

signals.

Thank

you.

I.

H

Jackson,

can

you

go

yeah

so

at

least

for

SCTP

we

can

do

a

verification

and

we

do

this

based

on

the

verification

tag.

So

it's

not

just

a

five

tuple,

but

we

have

something

that

a

blind

attacker

would

have

to

guess

and

I

think

we

have

to

validate

the

Packet

Too

Big

messages,

because

this

can

just

come

from

a

middlebox

in

the

middle.

So

you

only

know

you

can

reach

it

gives

you

an

upper

limit,

so

you

have

to

probe

it.

Yes,.
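
The checks discussed in this exchange (match the quoted 5-tuple, apply a sensibility filter, then treat the reported size only as an upper limit still to be confirmed by probing) might look something like the sketch below. It is an illustration under stated assumptions: `FiveTuple`, `ptb_search_ceiling`, and the `floor_mtu` default are hypothetical names and values, and a transport such as SCTP could add a stronger check on its verification tag.

```python
from typing import NamedTuple, Optional


class FiveTuple(NamedTuple):
    src_ip: str
    dst_ip: str
    src_port: int
    dst_port: int
    protocol: int


def ptb_search_ceiling(
    quoted: FiveTuple,
    connection: FiveTuple,
    reported_mtu: int,
    probed_size: int,
    floor_mtu: int = 68,  # hypothetical lower bound for plausibility
) -> Optional[int]:
    """Return a new upper limit for the search, or None to ignore the PTB.

    The result is only a ceiling: the sender still has to probe to
    confirm that a packet of that size actually gets through.
    """
    # The packet quoted inside the PTB must belong to this connection.
    if quoted != connection:
        return None
    # A PTB that reports a size no smaller than what we sent tells us
    # nothing useful, so filter it out.
    if reported_mtu >= probed_size:
        return None
    # Implausibly small values are also ignored in this sketch.
    if reported_mtu < floor_mtu:
        return None
    return reported_mtu
```

Ignoring PTB messages entirely remains an option, as mentioned later in the discussion; the filter above only decides whether a message is worth feeding into the search.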

J

R

I

can

get

all

those

words

out:

I

didn't

catch

the

semi

horizon

soon

enough

to

rid

of

them.

Sorry

I

do

intend

to

do

that.

The

issue

of

authenticating

messages

from

the

network

to

the

end

system,

of

course,

is

much

broader

than

just

this

problem,

and

it

would

be

good

to

revisit

whether

or

not

there

might

be

some

new

solutions.

There

are

some

more

work

there.

R

R

R

There's

always

I

believe

the

other

draft

admitted

the

possibility

of

just

completely

ignoring

ICMP

messages

yeah.

We

have

that

in

this

draft

as

well.

You

have

that

in

this

draft-

and

we

did

definitely

see

cases

where

they

were

byte-swapped

yeah.

There

definitely

is

like

a

sensibility

filter

on

the

the

signals

you

get

out.

R

I,

don't

remember

other

cases,

the

other

thing.

This

may

not

be

evident

from

reading

the

other

document,

but

alright,

but

our

intent

all

along

had

been

to

facilitate

jumbo

discovery,

and

it

turns

out

that,

after

putting

all

that

work

into

that

draft,

what

it

really

did

was

move

the

problem

down

a

level

because

the

NICs

need

to

do

buffer

carving

before

they

can

talk

to

the

switch

to

ask

the

switch.

R

what

MTU

you

can

just

do

and

by

the

time

you've

buffer

carved

the

NIC,

it's

too

expensive

to

restart

it,

and

so

it

never

attained

that

goal.

So

that

was

a

and

I'll

say

an

off

topic

agenda

that

we

didn't

adequately

vet

her

I

was.

It

was

our

motivation

for

doing

all

that

work,

but

it

didn't

didn't

pan

out

thanks

for

the

author

of

reviewing

this,

send.

S

H

D

H

Q

A

T

I

think

one

thing

I've

come

to

is

the

PTB

is

a

nice

optimization

rather

than

a

must-have

and

but

I

think

one

thing

that,

in

order

to

make

it

useful

as

an

optimization,

that's

worth

really

calling

out

and

thinking

about,

and

it's

got

we

started

discussion

on

QUIC

on

this

is

how

does

it

interact

with

load

balancers

because

dogmas

routing

it

back

to

the

you

know,

from

a

in

most

in

server

clustered

environment,

see

how

do

you

get

that

to

the

back

to

the

right?

Server

is

an

interesting

challenge.

T

That's

there

there's

some

there's

a

few

different

approaches

that

may

be

worth

calling

out

of

pointing

people

to,

but

certainly

on

the

validation

side.

I've

seen

both

NATs

do

interesting

things

to

PTB

messages

causing

them

to

not

be

useful

anymore

or

not

rewrite

them

properly,

as

well

as

at

least

one

TCP

optimizer

that

did

creative

face,

as

it

was

passing

back.

The

the

PTB

message

so.

C

Two

bits

of

feedback

immediately

from

me

on

those

two

main

count

on

my

also

card,

but

the

the

first

thing

is:

please

tell

us

about

strange

Packet

Too

Big

messages,

because

it

might

help

us

verify

things

correctly.

If

you

want

to

send

us

an

email,

anybody

who's

seen

a

really

strange

Packet

Too

Big

message

relating

to

this.

It

would

be

really

helpful

input.

The

second

thing

is

them.

G

G

We

still

have

tweaks

to

do

to

the

state

machine,

and

so

there

are

still

constants

and

timers

which

don't

have

values,

and

this

says

when

to

set

the

maximum

packet

size,

but

I

think

we

really

mean

when

to

feed

this

new

MTU

to

applications,

we're

providing

with

service.

And

then

we

have

a

bigger

issue

trying

to

deal

with

with

inconsistent

results,

as

we've

spoken

about.

G

G

We

will

then

come

back

into

checking

with

implementations,

and

that

was

updating

their

SCTP

implementation,

the

UDP

options,

implementation

is

tracking

and

then

I

think

we

hope

to

have

at

least

a

prototype

in

one

of

the

QUIC

implementations

for

Montreal

and,

as

Gorry

said,

we're

very

interested

in

people's

experience

with

weird

networks

and

weird

MTUs,

and

and

when

things

get

really

strange

in

their

networks.

Thank.

J

But

having

says

what

I

think

Lars

is

going

to

say,

I

do

hope.

We

can

make

some

progress

on

this

because

you

know

I

mean

it

does

matter

you

know,

and

when

we,

when

we

tried,

we

tried

to

advance

IPv6

to

full

Internet

Standard,

this

is

what

broke

you

know

this

is.

This

is

the

one

where

this

is

the

one

where

we

did

the

IETF

Last

Call,

people

came

back

and

said:

you

know

Path

MTU

discovery

using

ICMP,

yeah

I'll

get

right

on

it.

You

know

so

I

mean

this

this

this

is

broken.

U

Yeah

I

wonder

to

what

I

will

say

so

at

the

blogger.

At

the

moment,

most

of

the

QUIC

implementations

do

something

very

simple

I.

Just

basically

you

try

to

send

a

packet

of

a

larger

size,

and

if

that

works

you

too

charactery

and

move

on

so

and

that's

okay,

it's

not

great,

but

it's

okay,

the

other!

It's

also

having

a

functional

and

and

and

better

Path

MTU

scheme.

It's

not

necessarily

something

we

need

to

ship

with

very

first

version

of

QUIC.

U

It

would

obviously

be

nice

right,

but

it's

not

sort

of

blocking

us

from

from

from

doing

so,

because

we

can

quickly

rev

that

said,

right,

I,

currently

so

I

haven't

read

the

draft

right

because

of

other

things

to

do

this

week,

but

I

don't

have

a

good

feeling

how

complicated

these

schemes

would

be

right.

If

we're

talking

about

something

that

that

is,

you

know

a

paragraph

of

text

I'm,

pretty

sure

that

many

implementations

will

probably

try

to

put

it

in

for

v1.

U

If

it's

something

that

is,

you

know

more

transport

in

complexity

like

usual,

maybe

not,

but

we

can

build

this

later

right.

So

in

my

mind-

and

this

is

sort

of

not

with

the

chair

and

on

but

I-

see

us

after

we

do

v1

I

see,

is

actually

work

on

two

things:

we're

going

to

probably

do

a

v1

dot

on

that.

It's

just

bug

fixes

and

little

things

like.

C

So

speaking

as

a

chair,

rather

than

an

author,

I

think

it'd

be

good.

If

the

this

document

has

very

small

paragraphs

in

about

how

the

individual

transports

in

key

would

implement

this,

it

would

be

good

if

the

QUIC

working

group,

at

least

somebody

in

there

reviewed

those

bits

to

check

they

align.

Currently

they're

just

pulled

from

the

QUIC

spec,

and

that

might

be

all

that's

needed.

They

don't

define

how

it

works,

but

they

say

there

are

mechanisms

in

QUIC

which

can

do

these

things

that

you

need.

So

we

can

get

that

fixed.

C

U

B

Prefer

the

the

earth,

the

slightly

earlier

discussion,

where

QUIC

would

would

would

likely

be

upwards

compatible

with

to

come

with

this

likely

not

coming

in

quickly

when

I

put

the

state

machine

diagram

up

Lars,

because

this

is

a

this

this.

This

is

not

the

same

this.

This

is

not

a.

This

is

not

not

a

paragraph

of

text

right,

it's

a

little

more

work.

B

C

G

G

If

you

go

past,

my

last

slide,

there's

a

there's,

a

pointer

to

our

our

GitHub.

You

keyboard

this

one

yeah,

there's

a

pointer

to

our

GitHub

and

all

of

the

the

work

we're

doing

on

this,

and

my

later

presentation

is

just