From YouTube: IETF101-BESS-20180320-1550

Description

BESS meeting session at IETF101

2018/03/20 1550

https://datatracker.ietf.org/meeting/101/proceedings/

A

A

A

Blue sheets are circulating, so please make sure that they get back to us at the end of the meeting. We would like to have a minute taker, if possible, to help us. We have many sessions to manage today and we would like to be able to interact, so it would be really great to have someone take some notes here.

A

Okay, here is our agenda. We will try to do quite a long working group status to catch up on all the work that is currently being done in BESS. We would like to do some cleaning, so we will spend some time on the document status. Then Andrew should present a kind of coordination regarding the TCP MD5 option for LDP, which is planned to be deprecated.

A

Then we will have some MVPN drafts and a lot of sessions about EVPN, talking about the YANG model. There is a huge item, the DF election framework, where we know we need a consensus, and also some drafts that are related to BGP load balancing. So, quite a huge agenda. Presenters, please be on time, because the session is really full.

A

A

A

A

A

A

We also have one document ready for submission to the IESG, which is the Layer 2 / Layer 3 VPN multicast MIB, and we also have on our end some documents that we need to progress, which are post working group last call: the optimized one, yeah. So Matt, you should do the shepherd write-up on this. For explicit tracking, there will be a session today on the agenda, and for the MVPN MIB, I think we are waiting for some updates on the document that should be done, I think, by the end of the month.

A

We have several documents that are also ready for working group last call. Most are already known, and there is a new one, which is the DF election framework. There is a session on the agenda on this one. To give a bit of history for people who missed the discussion on the mailing list: I requested the authors of the AC-DF draft and the initial DF election draft to come up with a merged solution. So this DF election framework is a merge of the two documents, the AC-DF document and the DF election document.

A

We also adopted a document as a working group document, on EVPN multicast, and we have several documents that should be ready for working group adoption. If you think they are not ready for working group adoption, or if you change your mind, please advise us. Just one comment on the MSDP SA draft: normally the poll for working group adoption ended yesterday, but we received very few replies to the poll on the mailing list.

A

A

MSDP SA interoperation, okay. Yeah, very few replies. People who think that this document should be adopted, raise your hand. And is there someone else in the room who thinks that this document should not be adopted, because there is something wrong or there is no interest for the working group?

A

A

C

B

A

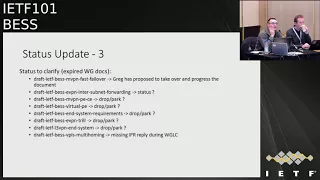

Okay, we have a lot of working group documents that are expired, unfortunately, and we would like to get feedback on each document to see: do we need to progress them, or do we need to drop them? So, first of all, the MVPN fast failover. Greg told us that he would like to take over and progress the document, which is good news. I don't know if Greg is in the room or not. Unfortunately not. For the EVPN inter-subnet forwarding.

D

D

E

B

A

So we would also like to get the status of some documents that are not on the agenda today. First, the Layer 3 VPN YANG. Do we have an author in the room who can provide an update? We received by email a request to try to progress the YANG models as soon as possible, but we would like to ensure that they are really ready.

A

F

For the Layer 2 VPN: basically we have had two updates. We now comply with the network instance model, and we also added the interface reference, so the attachment circuits now refer to the interface. The authors believe that the draft is complete, and we would like to request a last call.

A

One comment regarding the YANG models. As chairs, we would like to ensure that all the YANG models that are related to VPNs are modeled in the same way. So I don't know if the authors of the different YANG models (L2VPN, L3VPN, MVPN and EVPN) are talking to each other to ensure that, from an operational point of view and a configuration point of view, the user will have the same experience. Is this work already done or not?

H

A

B

A

I would also like the authors to maybe discuss with the routing policy YANG people in RTGWG to see how the routing policy can be used or extended for the VPN YANG models, because you may need to use export or import policies at a certain point. So you need to know how to do it. Do you need to extend it? Yeah, just discuss with them.

I

Jeffires, not speaking as, you know, the policy editor, and mostly as a ghostwriter on the L3VPN draft. The problem that we're going to have with the generic policy module is that, while we can extend policy elements that can match things, many vendors do very different things in terms of the VPN technologies, in terms of how leaking happens between the various tables. This is where we ran into problems, which is simply in the routing config module, and they decided to solve it by punting it out of the module.

A

I

The tack that was at least taken in the L3VPN module is similar to a lot of vendors, in the sense that route targets become a property of the VRF to some extent, but many people also do policy algebra on top of that to do things like, for example, hub-and-spoke. So the challenge becomes not just how to make a VRF have the property that allows routes to be attracted to it appropriately, which BGP could pick up, but also how to describe route leaking without formalizing somebody's policy algebra as being canonical. Okay.

F

Just to add to that: if you meant pegging the L2VPN or EVPN to the policy, then I don't know whether you meant it just for L3VPN or not. At least for L3VPN? Okay. Our drafts have progressed quite well, and we wouldn't want to peg our drafts to the policies. Okay, that's not complete! Okay! Thank you.

J

E

Adrian Farrel. Yeah, I will just respond, not to fix some silly nit. This is at the sort of stage of building implementation, and is linked to a draft that's in the SPRING working group that explains how all the pieces fit together, so it has to be sort of seen in that context. But "getting there", I think, is the status.

E

The NSH BGP control plane document, we think, is fairly stable. We'd like it to be dependent on the MPLS SFC work as well, but failing that, it's applicable to NSH on its own. That also, then, is at the sort of "implementing, getting stability" stage. And then the next one, the BESS service chaining document, which I'm not an author of but for which I wrote quite a lot of the text (I think you can work that out), that, I think, is actually in the field.

E

A

H

Thank you. I'm Andy Malis. I'm here as co-chair of PALS and also representing the MPLS working group. This is not to start a discussion here in BESS. Rather, this is an advertisement for a discussion which will be happening on Thursday in the MPLS working group, and so we just want to make you aware. So if you have an opinion, we invite you to please come to the MPLS working group, where the discussion will actually take place.

H

However, this is a bigger issue than just LDP. This actually affects a number of working groups and a number of protocols. They're all up there, so I won't bother to read them to you. What we're trying to do in the routing area is have a coordinated approach to this, and so, as I said, we're in discussions with the security ADs and the security area as to the best way to go forward as well. And, as I said, the discussion will be in the MPLS working group on Thursday. So with that, thank you.

K

Today we had a routing area chairs lunch, because, if you have noticed, not only for LDP but in general for many of the routing area drafts, there's been a lot of pushback from the security area in the form of "we need better authentication, fidelity and everything else". We believe, or I believe at least, that many of the reasons for what we don't necessarily do or implement are operational circumstances, operational issues. So what we were doing at lunch.

K

K

So one of the first steps we're going to take is to create some templates that we can pick and put into drafts. That doesn't replace any other security considerations that you should have in your drafts, but it's the first step to help you, or to help everyone, get to where we want, and to avoid long discussions with the security area late in the process. If we can attack that here, early in the process, it helps us publish a lot faster. Thanks.

J

So, first of all, tunnel aggregation in MVPN or EVPN. We could use P2MP tunnels, whether LDP P2MP tunnels or RSVP P2MP tunnels, and we can use one tunnel for multiple VPNs or for multiple BDs. With that, the ingress PE needs to impose a label that identifies the VPN or BD, which is a broadcast domain, and then impose a tunnel label. In the current specifications,

J

that label is upstream-allocated from the ingress PE's label space, and that label is advertised in the corresponding PMSI routes or, in the EVPN case, the IMET route. Then the egress PEs will maintain context label tables, say one table for each ingress PE, and the label entries in the context label tables are basically the upstream-allocated labels advertised by the ingress PE.

J

So when the egress PE gets a packet, it looks at the tunnel label first, and from the tunnel label it can identify which context label table to use. Then it goes into the context label table to look up the inner label and identify which VPN or BD this packet is for. Another case is multihoming segment support in EVPN, if it uses the split horizon procedure. When the ingress PE sends a BUM packet that it receives from a multihomed segment using a P2MP tunnel,

J

it also needs to impose a label that identifies the source segment, so that when the egress PE receives a packet, it knows that the packet originated from a particular Ethernet segment and won't send it back to that segment again. This is also upstream-allocated, and this is another form of tunnel aggregation, because a single tunnel, even though it is used for a single VPN or a single broadcast domain, is actually used for multiple segments, and we need to use the label to identify the segment. So that works in theory.

J

J

If you have 1,000 VPNs and each has 1,000 PEs, you will need 1 million labels just to identify those VPNs. That issue has not surfaced before, because P2MP tunnel aggregation has not really been deployed, or maybe the scaling has not come to that step yet. However, BIER transport is inherently an aggregation tunnel, and it is getting adopted more and more, probably very soon.

J

So this becomes a serious scaling issue, and similarly, this is also a problem for MP2MP tunnels. The proposed solution here is that PEs will coordinate their label allocation. The allocation will be from a common label pool carved out of the downstream-allocated label space. This is no longer upstream-allocated, and this means simpler forwarding, because you no longer need the context label tables here.

J

We refer to that label pool as a domain-wide common block. It's very much like an SRGB block. In addition to that, they also use the same label for the same VPN, or for the same BD, or for the same Ethernet segment. With that we can reduce the number of labels as well: if you have X VPNs, you only need X labels.
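
The scaling argument in this part of the talk can be checked with a few lines of arithmetic. This is a minimal sketch, assuming one label per VPN per ingress PE under upstream allocation and one shared label per VPN under the common block; the function names are illustrative and do not come from any draft.

```python
def labels_upstream_allocated(num_vpns: int, num_pes: int) -> int:
    """Labels needed when every ingress PE allocates its own label per VPN.

    An egress PE keeps one context label table per ingress PE, so the
    total number of entries grows with the product of the two counts.
    """
    return num_vpns * num_pes


def labels_common_block(num_vpns: int) -> int:
    """Labels needed when all PEs agree on a single label per VPN."""
    return num_vpns


if __name__ == "__main__":
    # The figures quoted by the speaker: 1,000 VPNs, 1,000 PEs each.
    print(labels_upstream_allocated(1000, 1000))
    print(labels_common_block(1000))
```

With the speaker's numbers, the first function gives one million labels and the second gives one thousand.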

J

So what if that common block is not large enough? We may just happen to have a deployment where you cannot carve out a block large enough. The solution there is that you can use a separate label space. It's very much like an upstream-allocated label space, but it's no longer one for each ingress PE. You can just, say, use one or two, just a couple of separate label spaces, and the PEs will still coordinate their label allocation from that space. Now, how do you identify this label?

J

A

new label space. So in the data plane, the label stack will look like this. At the outermost you have the tunnel label, and following that you have a label that identifies that label space, and that label is allocated from the common block. Then, following that, you have the label identifying the VPN or BD or ES. So what does that mean in signaling?
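
The three-label stack described here (tunnel label, then a label naming the label space, then the VPN/BD/ES label) suggests a two-step lookup on the egress PE. The sketch below is only an illustration of that idea: the label values, the table layout, and the names `COMMON_BLOCK` and `CONTEXT_SPACES` are all hypothetical.

```python
from typing import Dict, List

# Hypothetical tables on an egress PE.
# Labels taken from the common block that name a separate label space.
COMMON_BLOCK: Dict[int, str] = {1001: "space-A"}

# One table per separate label space, mapping the innermost label
# to the VPN, BD, or ES it identifies.
CONTEXT_SPACES: Dict[str, Dict[int, str]] = {
    "space-A": {20: "VPN-red", 21: "BD-7"},
}


def classify(label_stack: List[int]) -> str:
    """Resolve a stack given outermost-first: [tunnel, space, service]."""
    _tunnel_label, space_label, service_label = label_stack
    # Step 1: the second label selects which label space to use.
    space = COMMON_BLOCK[space_label]
    # Step 2: the innermost label is looked up inside that space.
    return CONTEXT_SPACES[space][service_label]
```

In this sketch the tunnel label plays no part in selecting the table, which reflects the point that the space is no longer tied to a particular ingress PE.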

J

So today the labels are advertised in the IMET routes in the EVPN case, or in the PMSI routes in the MVPN case, in the PMSI Tunnel Attribute (PTA) label field. With P2MP tunnels it is assumed that the label is upstream-allocated. So now, because we are no longer using the upstream-allocated label, we need to indicate that this is not from the upstream-allocated label

J

space, and is instead from the common block. So we introduce a bit in the flags field of the PTA attribute. And then, if you are not using a common block label, and instead you're using a separate common label space, then the PMSI or IMET routes need to carry an additional extended community. We call it the context label space identifier extended community. It is a transitive opaque extended community in the form of an ID type plus ID value tuple.

J

J

D

J

J

D

J

We haven't seen a customer having this issue already, most likely because P2MP tunnel aggregation was probably not deployed at all. But with BIER coming, the BIER tunnel is an aggregation tunnel. So if your number of VPNs times your number of PEs goes up, then the problem will show up for sure. If you have a small deployment where X times Y is not large, then sure, no problem.

D

Another question, with respect to the point that you brought up in terms of the actual customer issues. Typically, customers use non-aggregated tunnels because, I guess, in terms of their scale, even though it increases the state in the core, the number of S-PMSI or I-PMSI tunnels is not large enough for them to be concerned. Have you actually seen a scale issue with respect to some customer deployment where they need to do aggregation?

J

So, as I said, today we haven't seen actual P2MP tunnel aggregation yet, probably because they don't have that scale issue yet, or just because so far they can tolerate that many tunnels in their core. But now, with BIER coming, BIER is an aggregation tunnel. So you are using aggregation with BIER, and as soon as your X times Y gets large enough, you will have a scaling issue.

A

J

G

So

I

saw

Cisco.

So if you do the aggregation over a single tree like this, you can argue that you have less state, but, let's say, the downside of that is that you will have flooding, right? All the packets that go over the tree go everywhere. But with BIER you can still identify each destination separately, right? So with BIER you have the advantage of less state and no flooding.

G

D

J

D

A

D

J

J

J

In this way, the tunnel type says "no tunnel information", and then it has the Leaf Information Required flag set. The purpose of this is to provide a mechanism for an ingress node to learn, to find out, which egress PEs want to receive the traffic. In the meantime, it is not actually binding the flows to any particular tunnel.

J

This provides some useful information for monitoring purposes. The second topic is a new flag in the PTA: the Leaf Information Required per-Flow flag. Why do we use that? Let's say the ingress PE is sending out some selective PMSI wildcard routes saying that it wants to send traffic to (*,*) or (*,G) flows, and it sets that per-flow flag. That means that I want each egress PE to send back individual Leaf A-D routes for each flow.

J

For example, the ingress can say that the S-PMSI is for a particular (*,G) or (*,*), but the egress PEs will send back individual (S,G) Leaf A-D routes. This kind of leaf tracking at the (S,G) level benefits BIER or ingress replication, because now the ingress PE can use the Leaf A-D routes to know exactly which egress PEs need to receive that particular (S,G) traffic. So you can do more precise replication towards the egress PEs, and this is without the need of sending individual (S,G) S-PMSIs around.
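
The per-flow leaf tracking described here amounts to the ingress PE keeping, for each (S,G), the set of egress PEs that sent back a Leaf A-D route. The following is a minimal sketch of that bookkeeping only; the class and method names are hypothetical, and real BGP route handling is not modeled.

```python
from collections import defaultdict
from typing import Dict, Set, Tuple

Flow = Tuple[str, str]  # (source address, group address)


class LeafTracker:
    """Tracks which egress PEs asked for which (S,G) flow."""

    def __init__(self) -> None:
        self._leaves: Dict[Flow, Set[str]] = defaultdict(set)

    def on_leaf_ad(self, egress_pe: str, flow: Flow) -> None:
        # An egress PE answered the wildcard S-PMSI with a per-flow leaf route.
        self._leaves[flow].add(egress_pe)

    def on_leaf_withdraw(self, egress_pe: str, flow: Flow) -> None:
        # The egress PE no longer wants this flow.
        self._leaves[flow].discard(egress_pe)

    def replication_set(self, flow: Flow) -> Set[str]:
        # The PEs that should receive a copy of traffic for this flow.
        return set(self._leaves.get(flow, set()))
```

With BIER or ingress replication, the set returned by `replication_set` is what the ingress would use to decide where to send a given (S,G) packet.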

J

J

J

There was a more complex solution, with some unintended side effects. The new way is that, if a Leaf A-D route is sent in response to that Leaf Information Required per-Flow flag, then it will carry a PTA attribute with the flag set. That PTA was not mandatory before, but now it is mandatory if the Leaf A-D route is sent in response to the LIR per-flow flag. There are a lot more detailed changes in the draft; I'll skip those. So this document updates the wildcard RFCs.

J

So, with all these changes, we believe the document is ready for working group last call. In fact, this document is deemed a normative reference of the BIER MVPN spec, which is ready to move forward. So if this document could progress quickly, that would be great.

L

L

J

J

A

Okay, thank you. Thank you. Regarding the next steps: this document already passed working group last call, and these comments were introduced as part of the shepherd review. As working group chair, I would like to issue a short working group last call of just one week. Is it fine for everyone in the room if we go this way?

A

A

The issue that we may have is that, as far as we know, there is no current implementation of this explicit tracking draft, which means that, based on our BESS policy, we should not progress this document to a further step, because we don't have any implementation. But if we go this way, we will also block the BIER document.

D

M

N

M

O

Hi, I'm Patrice Brissette. This is for the EVPN YANG. I think it's going to be pretty quick. We didn't present last time in Singapore, so I just want to give you a quick update on where we are. There has been a lot of work and back and forth among the authors, and, well, you can read all the things that have been updated here about the YANG model. I think the main one that we have done is we

O

D

So this is rev 1 of the IGMP/MLD proxy draft, which basically tries to solve the issue when you have all-active multihoming in EVPN: an IGMP join or leave can get hashed to one of the links, so the join can arrive at one PE and the leave can go to another PE. Here we're trying to solve that issue by syncing the IGMP (S,G) and (*,G) states between the multihoming PEs. So, changes since last.

D

We reduced the selective multicast procedures to those of ingress replication, because there was an overlap for point-to-multipoint between this draft and the BUM procedures draft. We're going to defer the selective multicast to that draft and reference it here, instead of trying to cover it in two places. There was

D

There was a section that talked about multiple tunnel type discovery. Again, in order to tidy up this draft and get it ready for last call, we decided that, since it is also missing certain procedures, it is easier to cover it separately rather than in here. The remaining changes were basically tidying up the draft and replacing some of the terminology throughout the draft. Now, instead of pointing to the (EVI, BD),

D

we just simply refer to the BD as a bridge domain, because we already know in context what EVI that is. So this draft has been around for about two and a half years. It has been implemented by multiple vendors, and we think it is ready for working group last call, and we'd like to request a last call for it.

P

D

H

D

P

Yeah, my second comment is that I also sent a few comments to the list a long time ago, and one of my comments that wasn't addressed in the draft is to simplify the IGMP leave sync procedure. In particular, there are a lot of use cases where you don't need to have the whole leave procedure, especially when you've got the receivers directly attached to the PE, right? Or even when the CE that is directly attached supports proxy itself.

D

P

P

Q

Mankamana from Cisco. So, a quick comment: will it not be very difficult to have a selective decision on when to sync the leave and when not to sync the leave, with respect to implementation? So would it not be a good idea to be symmetric? Or maybe we can discuss offline in which cases you want it not to be. Thanks.

D

D

Okay, this is an old draft and I refreshed it, and I think it is already in your queue for working group last call. I just wanted to quickly go over the status of it. So, a little bit of history: it's been around for five years, it got adopted three years ago, and it has also been deployed for a few years. From the previous review, which was very thorough, till now, there have been no changes, so it is pretty stable, and it is

D

This is basically an important solution in order for EVPN and PBB-EVPN to get deployed in brownfield scenarios where you already have VPLS or PBB-VPLS, and you want to introduce EVPN or PBB-EVPN into that network. How do we do that without any requirement on, or any changes to, the existing legacy PEs, while making sure that the whole thing works seamlessly?

D

D

P

But as we had deployments, we found some inefficiencies in that election. So my name is Jorge Rabadan, and this is the list of co-authors, and basically this is the way we addressed some of those issues that we saw in the baseline RFC 7432. So in RFC 7432, as I said before, we have this designated forwarder election procedure.

P

Now, more than three years ago, we started working on a couple of documents. One is the DF election document, and the other one was the AC-DF document. Those two documents were addressing different issues that we had with the default DF election procedure. By the way, they may work together perfectly well. So during the AC-DF election draft shepherd review, basically Stephane strongly suggested that these two drafts should be merged, and basically this is the result of it.

P

We merged the content of both drafts, and we clarified the definition of the DF election extended community that we are using to signal what type of DF election we are doing. We also added some little improvements that we are going to discuss here today. So the first thing we are going to comment on is the HRW, which is part of the DF election draft, and this is Satya.

F

F

Yeah, in the base HRW, what we have is that, in the hash, the Ethernet segment value itself did not enter into the equation. So what happens is, if you have exactly the same VLANs configured on different ESes, then the carving will be exactly the same. So the thinking was that, if we want a more uniform distribution, then we have to enter the ES itself into the hash.

F

F

F

So that's the change, and these are the advantages. If the same set of PEs is multi-homed to the same set of ESes, then whether you use the modulo DF election in RFC 7432 or, in fact, the base HRW, they would result in the same PE being elected the DF for the same set of customer VLANs. This can have some adverse side effects on both load balancing and redundancy, because now for every ES it's the same PE which is the DF. And so we thought that

F

Okay, if we put the ESI into the DF election algorithm, it will introduce some additional entropy, which will make it more uniform. But there are cases in which one would want the carving to remain the same, in the sense that the HRW actually does a ranking of the PEs, and by this method you would lose that control on the ranking. But that has some specific use cases, which I think Sandy is going to explain in one of the next talks. Thank you.
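
The difference between the modulo election and HRW, with and without the ES in the hash, can be sketched in a few lines. This is an illustration under stated assumptions: SHA-256 is used here only as a convenient stand-in hash, not the function the draft specifies, and the names `modulo_df`, `hrw_df` and the ESI strings are hypothetical.

```python
import hashlib
from typing import List, Optional


def modulo_df(pes: List[str], vlan: int) -> str:
    """RFC 7432 style default: order PEs by IP address, pick index V mod N."""
    ordered = sorted(pes, key=lambda ip: tuple(int(x) for x in ip.split(".")))
    return ordered[vlan % len(ordered)]


def _weight(*fields: str) -> int:
    # Stand-in hash for the sketch; the real algorithm may use another one.
    digest = hashlib.sha256("|".join(fields).encode()).digest()
    return int.from_bytes(digest[:8], "big")


def hrw_df(pes: List[str], vlan: int, esi: Optional[str] = None) -> str:
    """Highest random weight: every PE gets a score, the highest score wins.

    Without `esi`, the score depends only on (VLAN, PE), so the same VLAN
    elects the same PE on every ES. With `esi`, the ES adds entropy.
    """
    def score(pe: str) -> int:
        fields = [str(vlan), pe] if esi is None else [str(vlan), esi, pe]
        return _weight(*fields)

    return max(pes, key=score)
```

Calling `hrw_df(pes, vlan)` for two different Ethernet segments necessarily returns the same PE, which is the load-balancing concern raised by the speaker; passing a different `esi` for each segment lets the results differ.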

P

P

Let's say that an individual attachment circuit in PE2, which is the DF for Ethernet segment 12, fails. What RFC 7432 says is that PE2 should actually withdraw the A-D per EVI route for that attachment circuit. But what the RFC doesn't say is that, based on that, the PEs that are also part of the Ethernet segment should actually rerun the DF election and remove PE2 from consideration for Ethernet segment 12. So this is precisely what we are doing with this draft.

P

We are actually taking that into consideration, and we are rerunning the DF election, removing PE2 for Ethernet segment 12. Now, that was the initial draft, but what we are improving now in the framework draft, the new merged draft, is that we are also modifying the DF election procedure for VLAN-aware bundle services. Now the DF election is per (ES, VLAN), whereas before it was based on (ES, VLAN bundle), so you can actually take full advantage of this capability to detect black holes and solve them.
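
The AC-DF behaviour described here can be pictured as filtering the candidate list before running the election. The sketch below is a simplification under stated assumptions: A-D per EVI state is modeled as a set of VLAN IDs per PE, PEs are ordered by name rather than by IP address, and the plain modulo rule is used for the election itself. All names are hypothetical.

```python
from typing import Dict, List, Optional, Set


def acdf_candidates(es_pes: List[str],
                    ad_per_evi: Dict[str, Set[int]],
                    vlan: int) -> List[str]:
    """Keep only PEs that still advertise an A-D per EVI route for the VLAN."""
    return [pe for pe in es_pes if vlan in ad_per_evi.get(pe, set())]


def elect(es_pes: List[str],
          ad_per_evi: Dict[str, Set[int]],
          vlan: int) -> Optional[str]:
    """Run a modulo election over the filtered candidate list."""
    candidates = sorted(acdf_candidates(es_pes, ad_per_evi, vlan))
    if not candidates:
        return None  # nobody on this ES can forward for the VLAN
    return candidates[vlan % len(candidates)]
```

If PE2 is the DF for a VLAN and its attachment circuit fails, removing that VLAN from PE2's entry and calling `elect` again moves the DF role to one of the remaining PEs, which is the rerun the speaker describes.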

P

The other thing we are doing now in the framework is that we are signaling this capability in a bit in the DF election extended community, and in fact, the DF election extended community is defined in this draft. You have a section that basically shows you the format. It's an already deployed extended community, with the type and subtype already allocated by IANA, and this extended community has two fields: you have the DF type and a bitmap. The extended community is advertised and processed along with the ES route. And in the DF type,

P

what you encode is the DF election algorithm. The values we are defining, or requesting from IANA, in this draft are: value 0, which is backwards compatible with the RFC 7432 default modulo DF election; value 1, which is the HRW that we are defining in this draft; and we are also allocating value 255 for experimental use.

P

There

are

other

values

already

defined

in

other

specifications

like

a

value

2

in

the

preference-based

DF

election

draft

and

value

3

and

4,

and

now

Ali

is

going

to

talk

about

some

of

them

today.

And

for

the

bitmap,

here

we

are

encoding

capabilities,

so

it's

not

a

DF

election

algorithm

per

se.

It's

not

choosing

who

the

DF

is

in

the

ethernet

segment,

but

it's

actually

modifying

somehow

the

DF

election

procedure

and

as

an

example,

we

have

the

bit

25.
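
A hedged Python sketch of how a DF type and a capability bitmap could be packed into an 8-octet extended community. The byte layout used here (type 0x06, sub-type 0x06, a one-octet DF type, a two-octet bitmap starting at bit offset 24, three reserved octets) is an assumption made for illustration and is not stated in the talk; the function names are hypothetical. The only values taken from the talk are DF types 0 and 1 and the capability bit at offset 25.

```python
import struct

DF_TYPE_DEFAULT = 0  # default modulo election, per the talk
DF_TYPE_HRW = 1      # HRW election, per the talk

def encode_df_election_ec(df_type, capability_bit_offsets=()):
    """Pack the extended community under the assumed layout."""
    bitmap = 0
    for offset in capability_bit_offsets:
        if not 24 <= offset <= 39:
            raise ValueError("bitmap bits are assumed to sit at offsets 24..39")
        # Offset 24 is the most significant bit of the 16-bit bitmap.
        bitmap |= 1 << (39 - offset)
    # type, sub-type, DF type, 2-octet bitmap, 3 zeroed reserved octets
    return struct.pack("!BBBH3x", 0x06, 0x06, df_type, bitmap)

def decode_df_election_ec(data):
    """Return (df_type, list of set capability bit offsets)."""
    _type, _sub_type, df_type, bitmap = struct.unpack("!BBBH3x", data)
    offsets = [24 + i for i in range(16) if bitmap & (1 << (15 - i))]
    return df_type, offsets

ec = encode_df_election_ec(DF_TYPE_HRW, capability_bit_offsets=[25])
```

Keeping the algorithm in the type field and the modifiers in the bitmap lets a capability such as the one at bit 25 be combined with any election algorithm, which is the separation the speaker is describing.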

P

It's

actually

signaling

this

AC-DF

capability,

which

is

compatible

with

most

of

the

DF

election

types

that

we

can

think

of

in

the

DF

type

field,

and

there

are

also

other

bits

already

defined

in

other

specifications.

So,

some

conclusions

and

next

steps.

As

you've

seen,

this

is

actually

a

pretty

old

new

document

in

the

sense

that

it

is

merging

two,

you

know,

documents

that

we've

been

discussing

and

they

already

even

passed

a

working

group

last

call.

So

for

this

new

document,

basically,

what

we

are

asking

for

is

an

immediate

working

group

last

call.

P

A

Thank

you

and

thanks

a

lot

for

the

work

to

do

the

merge.

Thank

you.

Any

comment?

Yes,

this

document

is

coming

from

two

documents

that

were

almost

ready

for

working

group

last

call.

Very

few

changes

have

been

introduced,

except

for

the

merge.

So,

yes,

we

would

like

to

proceed

to

the

working

group

last

call

as

soon

as

possible,

because

this

is

an

important

piece

of

work

and

we

have

a

lot

of

drafts

that

are

coming

after.

So

we

need

to

finalize

this

one.

Thanks

a

lot

for

the

work.

Thank

you.

A

Q

Q

So

is

there

really

need

of

the

third

one?

So

let

us

consider

that

whatever

DF

election

procedure

today,

we

have

whether

it

is

RFC

7432

or

whatever

we

spoke

in

the

previous

presentation.

So

the

way

it

works

today:

in

this

topology

CE1

is

multihomed

to

PE1

and

PE2,

and

we

have

only

one

VLAN

configured

there,

VLAN

1.

Q

So

if

any

IGMP

join

coming

from

receiver

side,

basically

it

could

go

to

either

of

the

PEs

and

we

have

IGMP

proxy

procedure

which

says

that

we

can

sync

the

join

to

the

other

PE

in

the

redundancy

group

and

what

is

going

to

happen,

and

here

we

can

see

that

PE1

has

been

elected

as

the

DF

and

PE2

is

non-DF.

So

when

multicast

traffic

comes,

it

will

be

PE1

who

is

going

to

forward

towards

the

receiver,

and

PE2

will

keep

dropping.

But

what

is

the

problem?

Q

The

problem

is

the

scenarios

where

there

are

customers

who

are

provisioning

the

complete

multicast

traffic

in

one

VLAN.

We

are

actually

not

utilizing

the

other

link

at

all,

so

it

is

always

PE1

who

will

be

forwarding

the

traffic,

and

PE2

will

come

into

the

picture

only

in

case

of

failure.

So

this

is

where

we

are

coming,

that

there

is

a

need.

Can

we

improve

something

in

the

DF

election

procedure

so

that

you

can

load

balance

even

in

this

case?

So

the

requirement,

briefly,

would

be:

can

we

distribute

the

multicast

flows,

Q

even

if

the

whole

multicast

traffic

has

been

provisioned

in

a

single

VLAN?

And

it

should

be

working

for

IGMPv2,

IGMPv3,

MLDv1

and

MLDv2

receivers.

IGMPv1

we

are

not

considering

in

this

document

because

we

don't

see

any

practical

deployment

as

of

now.

And

the

new

DF

election

procedure

definitely

should

have

backward

compatibility

with

all

of

the

other

DF

election

procedures.

Q

So

the

solution,

the

way

we

are

proposing

it,

yeah.

So,

let's

say

we

have

some

unicast

traffic

flow

here

and

now

there

will

be

a

default

DF

election.

When

I

say

default

DF

election,

basically

we

are

using

the

HRW-based

DF

election,

and

let's

say

PE1

gets

elected

as

the

DF

and

PE2

is

non-DF.

For

all

of

the

BUM

traffic,

PE1

will

be

the

DF

and

PE2

will

be

non-DF

at

this

point

of

time.

So

I

have

a

new

membership

request

coming

in,

and

membership

request

reached.

Q

Q

So

it

means

that

if

you

see

the

left

side

table,

the

forwarding

state

will

be

for

(S1,G1),

PE1

will

be

in

the

non-DF

mode

and

PE2

will

be

in

the

DF

mode.

For

the

rest

of

the

BUM

traffic,

PE2

will

still

be

in

the

non-DF

mode.

So

when

multicast

traffic

starts

coming,

it

will

be

PE2

who

will

be

forwarding

the

traffic

towards

the

receiver.

And

in

a

similar

way,

let

us

say

we

have

the

next

(S2,G2)

request

coming

in,

and

again.

Q

If

the

join

gets

hashed

to

PE2,

PE2

will

sync

the

join

to

PE1.

Again

they

are

going

to

rerun

the

DF

election

for

this

particular

(S,G),

and

this

time

the

(S,G)

DF

becomes

PE1

and,

for

the

same

flow,

PE2

becomes

non-DF.

In

this

case

now,

multicast

traffic

will

be

forwarded

by

PE1,

and

the

same

thing

can

be

extended

for

(*,G)

as

well,

so

in

the

next

section.

Q

Okay,

so

with

respect

to

the

solution

which

we

are

proposing,

it

is

an

enhancement

of

the

existing

HRW-based

algorithm.

And

why

are

we

using

(S,G)

or

(*,G)

info?

Because,

apart

from

the

ESI

and

EVI

info,

this

is

the

key

information

in

a

multicast

flow

which

can

differentiate

between

different

flows,

and

by

including

(S,G)

or

(*,G)

info

we

are

going

to

get

better

distribution.
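
A minimal Python sketch of per-flow highest-random-weight (HRW) election, to illustrate why adding (S,G) or (*,G) to the hash input spreads flows of a single VLAN across the PEs. The function names, the key format and the use of SHA-256 are assumptions chosen for the illustration; the draft defines its own hash function and inputs, which are not reproduced here.

```python
import hashlib

def hrw_weight(pe, esi, vlan, source, group):
    """Pseudo-random weight of one PE for one flow (illustrative hash only)."""
    key = "|".join([esi, str(vlan), source, group, pe]).encode()
    return int.from_bytes(hashlib.sha256(key).digest()[:8], "big")

def elect_flow_df(pes, esi, vlan, source, group):
    """Every PE computes the same winner: the PE with the highest weight.

    Pass source="*" for a (*,G) flow. Ties are broken on the PE address.
    """
    return max(pes, key=lambda pe: (hrw_weight(pe, esi, vlan, source, group), pe))

pes = ["192.0.2.1", "192.0.2.2"]
for group in ("233.252.0.1", "233.252.0.2", "233.252.0.3"):
    print(group, "->", elect_flow_df(pes, "ES12", 1, "198.51.100.1", group))
```

Because each PE evaluates the same function over the same inputs, no extra signaling is needed to agree on the per-flow DF, and removing a PE that was not the winner for a flow leaves that flow's DF unchanged.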

D

D

P

D

A

D

So

what

we

did

in

this

draft

is

basically

now

that

we

got

the

type

versus

capability

straightened

out

in

our

DF

framework,

it

was

very

easy

to

leverage

that

and

use

it

in

this

draft,

and

basically

we

are

introducing

two

capabilities,

one

for

handshaking

and

one

for

synchronization.

We

are

assuming,

we

are

requiring,

that

all

the

PEs

in

the

redundancy

group

have

this

capability

in

order

for

it

to

get

exercised.

D

If

one

of

the

PEs

doesn't

have

this

capability,

then,

you

know,

they

all

go

back

to

the

default

mode.

And

when

I

was

looking

at

this

draft,

I

think

what

is

missing

is,

if

both

capabilities,

both

synchronization

and

the

handshake,

are

available,

the

draft

doesn't

currently

mention

which

one

should

supersede.

I

need

to

add

that

to

the

draft.
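
A small Python sketch of the fallback rule stated here: a capability is exercised only if every PE in the redundancy group advertises it, otherwise all PEs revert to the default mode. The function name and the capability labels `"handshake"` and `"sync"` are hypothetical. The sketch deliberately does not decide which capability wins when both are advertised, since the speaker notes that the draft does not yet say.

```python
def negotiated_mode(advertised, capability):
    """Return the capability if all PEs signalled it, else "default".

    advertised: map PE name -> set of capability labels that PE signalled.
    """
    if advertised and all(capability in caps for caps in advertised.values()):
        return capability
    return "default"

group = {
    "PE1": {"handshake"},
    "PE2": {"handshake"},
    "PE3": set(),  # PE3 does not advertise the capability
}
mode_with_pe3 = negotiated_mode(group, "handshake")
mode_without_pe3 = negotiated_mode(
    {pe: caps for pe, caps in group.items() if pe != "PE3"}, "handshake"
)
```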

H

D

D

The

initial

version

was

introduced

in

IETF

96

about

three

years

ago

and

got

updated

last

year,

and

this

is

again

another

draft

that

is

being

requested

by

multiple

service

providers

and

has

been

implemented

by

multiple

vendors.

What

I

did

in

this

draft

is

clarify

some

of

the

terms

which,

you

know,

I

found

confusing

in

it.

D

The

draft

was

talking

about

VLAN-unaware

FXC

and

VLAN-aware

FXC,

for

single-homing

and

multihoming,

and

I

found

that

confusing,

because

when

you

talk

about

VLAN-aware

or

VLAN-unaware,

are

you

talking

in

terms

of

the

control

plane

signaling,

or

are

you

talking

in

terms

of

the

data

plane?

So

that

I

D

Basically,

we

have

only

two

modes:

one

is

FXC,

flexible

cross-connect,

and

with

that

one

we

don't

do

any

VLAN

signaling,

and

then

there

is

FXC

with

VLAN

signaling,

and

that

makes

it

very

clear

what

we

are

talking

about

in

FXC.

The

difference

between

FXC

and

VPWS,

in

a

nutshell,

if

somebody

asks

you

what

is

the

difference:

FXC

requires

a

VID-VRF

or

VLAN

VRF.

It

is

introduced

as

VLAN

VRF

there.

D

D

So

at

the

egress,

when

you

receive

a

packet,

you

need

to

be

able

to

know

based

on

the

VLAN

which

port

you're

going

to,

and

that's

why

we

need

a

VID-VRF

to

do

a

lookup

to

know

what

we

are

going

to.

So

that's

in

a

nutshell

the

difference

between

FXC

and,

you

know,

EVPN-VPWS.

EVPN-VPWS

was

between

two

ports.

There

was

not.

D

Then,

if

one

of

the

ports

fails,

we

need

to

know

which

VLAN

got

impacted,

and

for

that

we

need

VLAN

signaling,

and

that's

why

we

call

it

FXC

with

VLAN

signaling.

We

had

a

failure

scenario

section

which

was

pretty

slim,

and

thanks

to

Jorge,

he

provided

the

text

and

we

expanded

it

with

a

nice

figure

and

Alba.

Thank

you

and

I

think

the

draft

is

now

in

pretty

good

shape,

because

I

went

through

the

whole

thing

and

I

modified

the

terminology

to

make

it

consistent

and

to

get

rid

of

ambiguity.

D

This

is

my

last

one,

so

you

don't

have

to

worry

too

much.

So

this,

again,

is

one

of

the

drafts

that

we

realized

was

needed

some

time

back,

four

years

ago

and

we

started

with

the