►

From YouTube: IETF102-BMWG-20180717-1330

Description

BMWG meeting session at IETF102

2018/07/17 1330

https://datatracker.ietf.org/meeting/102/proceedings/

A

And

all

all

we

ask

is

that

during

the

course

of

the

meeting,

someone

occasionally

captures

all

the

text,

because

there

are

experiences

in

our

not

so

distant

past,

where

the

whole

thing

is

disappeared,

but

this

is

but

it's

really

easy.

We're

gonna

stick

to

this

agenda.

It's

all

right

here

and

for

example,

you

just

you

know,

stick

your

cursor

there

and

press

return

and

then

you're

typing

notes.

A

B

C

B

A

B

A

This

is

Sarah

banks

and

we

are

the

co-chairs

and

we're

going

to

entertain

you

as

much

as

we

possibly

can

today.

With

all

the

things

that

we've

prepared

to

hand

off

the

mic

to

our

friends

and

neighbors,

who

have

worked

so

hard

to

prepare,

slides

drafts

and

done

research

at

home

to

make

this

an

interesting

meeting,

our

ad

advisor

is

right

here

in

front

Warren.

Kumari

welcome

back

Warren,

incidentally,

did

you

ever

get

your

benchmarking

setup

running

cool

cool?

A

Obviously,

all

our

slides

are

available

on

the

meeting

materials

page

so

feel

free

to

grab

all

of

them

and

there's

actually

going

to

be,

probably,

especially

in

my

decks

of

slides,

there's

gonna,

be

backup,

slides

that

you

might

want

to

refer

to

later,

so

feel

free

to

do

that?

Okay,

the

note!

Well,

it's

still

early

in

the

week

we

have

an

IPR

policy.

It

means

that

you

have

to

disclose

all

your

IPR

in

a

timely

manner.

A

B

D

E

B

A

Here's

our

agenda.

First,

we

do

status

and

we're

gonna

look

at

our

new

charter

and

and

then

we've

got

this

one

draft,

which

is

I,

think

it's

been

working

group

accepted

actually

at

least

that's

what

I

did

the

last

time,

but

if

the

draft

name

doesn't

reflect

that,

so

that's

on

the

EVP

NPPB

we're

gonna

look

at

the

vnf

benchmarking

methodology,

which

is

now

about

automation

according

to

Rafael,

the

title

has

changed:

I

didn't

change

it

here.

Sorry

about

that

consideration

for

benchmarking,

Network

platforms,

actually

Jacobs

going

to

talk

about

that

in

the

online

agenda.

A

I

fixed

that

benchmarking

for

modern

firewalls

is

Tim

winters

here,

yes,

Tim

welcome.

Thank

you

for

joining

us

and

for

filling

in

for

the

rest

of

net

SEC

open,

much

appreciated.

I'll

talk

about

the

back

to

back

frame

benchmark,

which

is

followed

up

with

some

real

testing,

and

this

is

what

gets

everybody

exciting

here

when

we

really

talk

about

laboratory

work,

that's

been

done

and

right

behind

me

is

Paul

Emmerich

who's

going

to

also

talk

about

real

stuff

and

the

implications

of

the

real

testing

on

traffic

generator,

calibration

accuracy

and

precision.

A

So

that's

our

planned

agenda.

What

I'd

like

to

do

is

I'd

like

to

I'd

really

like

to

get

to

item

7

about

20

after

2,

something

like

that.

So

that

gives

us

about

40

minutes

for

the

testing

stuff,

which

I

think

is

going

to

get

really

interesting

in

Rawkus.

So,

let's,

let's

leave

plenty

of

time

for

that

and

and

two

minutes

again

for

Warren

to

tell

us

about

his

benchmarking

experience.

A

Okay,

any

questions

about

the

agenda.

Any

bashing

needed

everybody's

looking

at

their

laptops.

Let's

move

on

okay,

so

here's

the

quick

status,

the

SDN

controller

drafts

for

benchmarking,

their

approved

array,

congratulations

to

the

authors.

Sarah

was

one

of

them

and

and

I

supposed

to

the

you

know

that

approval

process

will

not

approval,

but

the

editing

process

will

continue

now

so

you'll

eventually

be

contacted

by

the

RFC

editor

to

clarify

a

whole

bunch

of

stuff

I'm

sure

nothing

goes

unscathed

there.

So

the

proposals

keep

coming.

I've

already

talked

about

these

industry

discussion

topics.

A

This

is

what's

going

on

everywhere

in

our

industry,

we're

talking

about

buffer

sizes,

the

assessment

of

them

search,

algorithms

and

the

traffic

generation

calibration.

That's

it's

actually

happening

everywhere,

but

we've

got

some

good

talks

on

all

of

this

today.

So

here's

our

current

milestones

boom.

A

Excuse

me

and

I

I,

let's

see

so

the

one

that

we

originally

planned

to

get

going

very

quickly

was

in

August

2018

methodology

for

next-generation

firewall,

I

think

we're

probably

gonna,

be

a

little

bit

behind

and

missed

that,

but

that's

alright.

All

of

these

were

really

aspirational,

and

the

next

step

here

is

to

I

wanted

to

I

wanted

to

mention

that

you

know

our

reach

are

during

discussions.

A

Basically,

two

things

happened.

One

is

that

we

codified

our

permanent

attention

to

the

virtualized

network

platform.

Benchmarking.

Here,

that's

now

sort

of

a

written

part

of

our

charter.

It

was

always

it

was

a

bullet

item

for

the

last

three

years,

and

now

it's

a

explicit

part

when

we,

where

is

it?

Oh

yeah,

the

one

in

the

middle

there

draft

on

selecting

and

applying

models

for

benchmarking

to

iesg

review.

That

was

actually

the

the

thing

in

our

Charter.

A

Where

we

got

the

most

comments,

it

actually

blocked

the

approval

of

our

Charter

for

a

while

and

and

the

reason

that

happened

was

that

in

fact,

there's

lots

of

models

being

prepared

for

network

services

and

possibly

applicable

models

being

developed

around

the

ITF

that

we

need

to

pay

attention

to

so

so

we

have

this

draft

on

the

table.

It

was

updated.

It

was

updated

back

in

May

actually,

but

the

author,

Shaun

Wu,

didn't

send

anything

to

the

list

about

it.

A

So

it

actually

kind

of

surprised

me

to

see

the

update

there,

but

we're

still

I

mean

we're

still

looking

at

this

topic,

and

what

we

need

to

do,

though,

is

look

a

little

more

broadly

than

the

proposal

we've

received.

That's

the

feedback

we

got

from

iesg,

it's

good,

it's

good

cross,

ietf

feedback.

Otherwise

we

might

get

all

the

way

up

there

with

something

that

is

just

gonna

get

crushed

with

discusses

blocking

comments,

any

questions

about

the

milestones,

I

think,

actually,

that

that

draft

on

selecting

and

applying

models.

A

A

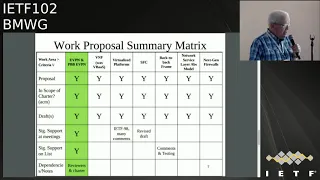

We

sort

of

keep

track

with

the

work

proposals.

We've

adopted

the

work

on

evpn

PBB

evpn,

but

we

still

have

to

have

you

know

more

review

of

this

and

more

comments

and

so

forth

before

we

go

further

with

it.

It's

definitely

on

the

Charter,

though,

and

and

so

that's

good.

The

rest

of

this

stuff

is

kind

of

represented

in

the

Charter

as

well.

Although

we

haven't

seen

much

on

SFC

service

function,

chain,

chaining,

lately

and

actually

vnf

is

gonna

have

to

be

really

titled,

vnf

benchmarking

automation.

A

A

We

also

have

a

standard

paragraph

that

helps

people

understand

who

review

our

drafts

from

outside

the

area.

This

is

kind

of

like

you

know

this

is

this:

is

testing

for

the

laboratory

security

people

don't

flip

out,

because

we're

saturating

interfaces

and

stuff

like

that

I

mean

it's

just.

It's

just

advice

to

the

world

that

that

if

you

haven't,

read

our

Charter,

it's

kind

of

encapsulated

right

here

in

these

few

sentences.

A

A

An

absolute

requirement

must

is

an

absolute

requirement

and

when

those

you

see

those

capitalized

words,

that's

exactly

what

it

means.

That's

now

clear,

with

8174

and

there's

slightly

new

version

of

this

typical

terminology,

paragraph

so

I

encourage

everybody

to

adopt

that

I.

Just

had

two

graphs,

two

drafts

go

through

the

iesg

and

got

dinged

on

this,

for

both

of

them,

so

I

went

back

and

fixed

all

of

my

stuff

and

I

encourage

all

you

draft

authors

to.

Please

do

that

and

that's

it

for

the

chairs,

unless

you

want

to

add

anything

there.

A

B

A

H

H

So

evpn

enter

PBB

VPN

became

the

RFC

in

best

workgroup

and

it

is

widely

deployed

in

service

provider

network

since

to

the

2014

it

started

as

a

draft.

Then

it

became

RFC

in

2016,

so

it

is

widely

deployed.

So

there

was

no,

you

know

benchmarking

criteria

to

rate

this

particular

services

when

deployed

in

two

different

service

promised

ly

the

service

power

consumed

this

EVP

an

MPLS

and

PB

ve

VPN.

So

we

defined

certain

parameters

to

benchmark

this.

A

H

I

caught

it

correctly,

I

was

pressing

the

other

key

okay

yeah.

This

were

the

comments

received

from

the

previous

I

mean

IETF,

so

the

terminology

is

sections

expansions

and

the

ordering

of

the

test

cases

and

objectives.

So

you

know

the

placing

of

terminology

section

about

the

topology

and

the

topology

requires

some

more

dig

into

it,

and

so

this

were

all

the

comments.

So

we

have

addressed

that,

and

these

are

the

basic

you

know

we

had

defined

certain

parameters.

You

know

we

define

eight

parameters

to

benchmark

these

services,

then

deploy

it.

H

How

how

to

differentiate

you,

one

particular

when

your

duty,

when

we

are

testing

a

one

particular

box

from

other

howdy.

How

do

you

differentiate

this

so

based

on

this

particular

euro

parameters?

We

defined

it

based

on

that

we

benchmark

this,

so

it

is

based

on

the

Mac

learning

the

Mac

fresh,

the

Mac

aging

high-availability

are

scaling

the

scale

and

the

scale,

convergence

and

the

soak

test.

H

So

these

are

the

parameters

we

define

to

benchmark

these

services,

so,

based

on

that,

it

will

be,

you

know

it

will

be,

test

will

be

conducted

and

the

measuring

measurements

will

be

taken

and

it

will

be

plotted

and

rated

on.

You

know

how

the

each

box

is

performing

it

so

acknowledgement

as

thanks

Sarah

for

helping

us

and

alpha

the

support

and

a

lot

of

feedbacks

and

back

and

forth

changing

this

and

thank

Sarah

and

Al

for

this

support

and

next

as

her

option.

A

B

Good,

does

anybody

in

the

room

have

routing

experience

testing

wise

besides,

the

folks

will

raise

their

hands

so

it'd,

be

very

helpful

for

the

folks

who

have

the

experience

to

read

will

definitely

cross

pollinate

this

outside

of

the

working

group,

but

from

an

editor

perspective

on

helping

students

sort

of

lock

into

place.

The

different

steps

so

I

think

this

dropped.

This

version

and

the

next

version

student

has

coming,

will

be

really

approachable

and

and

I

think

you'd

be

able

to

go

on

and

read

this

and

say

yep.

B

E

Jimmy

taro

AT&T

just

a

question,

so

evpn

encompasses

a

family

of

services,

one

of

them's

VPLS,

there's

access,

there's

a

number

of

different

sorry:

vp,

&,

v,

p,

WS

c

d,

pn,

f,

XE,

v,

pn,

v

pls.

So

when

you

talk

about

benchmarking,

it

is

it

specific

to

the

EVP

NV

pls

with

the

multihoming

and

all

of

those

features

or

intending

also

to

do

these

other

aspects

of

evpn.

No.

H

E

7432

actually

articulates

the

control

plane

to

realize

not

only

the

creation

of

a

VPLS

but

also

a

vp

WS,

and

also

an

FX

c,

so

I'm

just

curious.

Maybe

you

might

want

to

I

mean

what

I'm

seeing

is

many

people

using

a

VPN

for

a

lot

of

different

use

cases.

One

of

them

certainly

is

in

the

data

center

with

active,

active,

active,

active,

active,

active

multihoming

and

that's

a

huge

thing,

but

there's

a

lot

of

people

also

using

it

for

access

like

bring

me

circuits

across.

You

know,

layer,

3,

network

and

land

somewhere.

That's.

H

E

Guess

if

you

can

efficacy

takes

VLANs

and

treats

them

as

state

and

appetize?

Is

that

state

to

index

up

and

says

these

VLANs

live

at

this

next

half

in

this

context

of

fxa?

So

you

know

if

you're,

if

you're

looking

at

programming,

that

on

a

box

and

that's

part

of

the

evpn

scope

that

might

be

useful

to

people

but.

H

Currently,

anyway,

that's

a

good

point,

because

that

cannot

be

taken

into

the

VPLS

per

se,

because

the

parameters

we

defined

it

that's

a

good

point,

because

the

fxe

came

later,

it's

still

running

as

a

ietf

drop

zero

zero.

Earlier

it

was

individual

draft.

Now

they

adopted

it,

zero

geez,

I'm,

sorry

yeah!

This

is

a.

This

is

a

basically

benchmarking,

this

RFC,

because

it's

widely

deployed

since

2040.

So

we

want

to

make

this

as

the

platform,

then

the

this

add-on

services

come

so

so

we

are

benchmarking.

The

base

RFC's

this

tool

so.

B

H

B

I

think

what

I'm

hearing

Jim

say

is:

if

we're

not

clear,

you're,

not

clear

on

what

your

scope

is

in

the

draft,

and

could

you

clarify

it?

Would

that

be

correct?

Slash

I,

think

he's

also,

maybe

potentially

saying

you

may

want

to

consider

a

larger

scope

and

I

think

adds

an

author.

It's

your

right

to

not

do

that.

B

However,

you

also

made

the

point

so

my

comment

as

a

personal

contributor,

if

you're

going

to

say

it's

them

widely

deployed

since

2014

I

think

Jim

just

laid

out

two

very

typical

use

cases

you

may

want

to

consider.

One

I

agree

define

the

scope,

but

I

do

potentially

think.

Maybe

you

should

broaden

that

scope

because

it's

been

out

there

for

four

years.

So

if

we're

gonna

put

out

something

that

says,

here's

how

you

benchmark

it

you'd

want

to

cover

the

use

cases

of

what

folks

are

deploying

right.

H

At

this,

at

this

point,

I

cannot

comment.

I

need

to

think

because

the

parameters,

because

the

services,

what

Jim

mentioned,

is

totally

different.

What

evpn

is

kind

of

a

you

know

super

VPLS,

and

this

is

a

point-to-point

service

with

VLAN

features

and

all

so.

The

parameters

will

change,

but

I

need

to

think.

I

cannot

comment.

Jim,

I,

take

your

point,

but

I

cannot

comment

at

present

because

I.

B

H

I

really

appreciate

your

point

just

give

me

some

time,

because

you

know

I

need

to

think

I'll

get

back

because

at

present,

I

really

apologize.

I

can't

give

you

the

comments

on

that,

because

the

parameters

I

need

to

think,

because

you

know

it

right.

The

services

are

different,

but

it

won't

so

name

a

pin,

but

that

one

of

the

key

points

I

need.

Give

me

some

time,

sir.

So

I

get

back.

Thank

you.

So.

A

That's

it

that's

a

good

ending

point.

I

think

well,

well,

sort

of

leave

it

to

you

to

think

about

this.

There's

two

ways

to

proceed.

We

could

add

the

stuff

to

your

graph

if

you

prefer

to

go

that

way,

Sudi,

but

also

it

could

be

a

it

could

be

an

additional

effort

that

folks

propose

if

they

see

a

need

for

that.

So

that's

a

you

know

there

could

be.

You

know

a

sort

of

a

part,

1

and

part

2

kind

of

approach

here

and

I

think

that

that

could

work

well.

Yeah.

H

C

So

some

of

the

changes

so

one

thing

that

I

think

Samuel,

the

co-author

of

the

draft

did

we

went

to

the

nvo

three

working

group

off

and

kind

of

talked

a

bit

about

this.

So

one

thing

that's

actually

coming

in

this

next

revamp

is

quite

a

bit

of

changes

and

actually

some

of

the

scope

as

well

as

some

of

the

terminology.

C

What's

already

there

in

the

nvo

three

working

group

it'll

make

this

draft

a

bit

more

clear

of

what

exactly

we're

going

after

in

term

of

benchmarking

and

then

once

we

update

the

actual

good

terminology

as

well

in

this

it'll

dice

the

aligned

and

then

one

thing,

the

split

MBE

is

one

thing

we

haven't

covered

that

we're

adding

in

it's

kind

of

a

work

in

progress.

The

split

NDE

is

actually

the

network,

then

de

for

those

unfamiliar

as

a

network

virtualization

edge.

C

Exactly

that's

what

that's

what

their

needs?

That's

exactly

like

why

we

want

to

kind

of

define

in

each

test

like

here's,

here's

if

it's

co-located

with

hypervisor

vs.

but

and

have

kind

of

different

considerations

for

each

and

that's

kind

of

the

big.

The

big

updates

that

we're

going

after

in

this

specifically

I

mean,

if

you

look

at

the

83

94,

there's,

there's

some

ambiguity

and

how

things

come

to

an

agreement.

C

They

there's

literally

the

one

sentence:

it's

like

okay,

when

when

they

come

to

some

sort

of

agreement-

and

it's

like

okay,

hey,

that's

an

interesting

yeah

okay,

so

we

need

to

kind

of

come

up

with

benchmarks

to

say:

okay,

how

do

okay,

I

get

it?

This

ambiguous

and

every

implementation

is

different,

but

how

do

you

benchmark

it

so

that

you

know

exactly

what's

happening

when

it

happens

for.

A

It

so

so

Jakub

this

is.

This

is

the

start

talking

about

the

overlay

layer?

Yes

in

the

previous

slide,

which

I

don't

even

want

to

try

to

go

back

to

yes,

there

you're

talking

about

application

layer

benchmarks.

Yes,

so

we've

got

kind

of

this.

You

got

overlays

an

application

layer,

I'm

trying

to

get

the

scope

clear

in

my

head.

Here

it's

got

its

got

application

layer,

stuff

and

over

weary

stuff,

which

is

I.

Don't

know,

I

mean

I'm,

not

sure

how

that

fits

together.

Yet

I

think.

C

The

the

application,

meaning

you

could

call

application

or

layer

benchmarks

but

I,

think

the

goal

is

that

defines

also

some

benchmarks

that,

when

you're

benchmarking

from

a

server

itself,

what

are

those

have?

Those

benchmarks

change

so,

instead

of

like,

instead

of

just

having

a

traffic

generator,

that's

blasting

traffic

at

unlit,

Sun

stateful.

You

can

actually

go

in

and

okay,

here's.

What

are

the

application

layer

benchmarks?

You

can

do

to

actually

benchmark

this

in

a

different

way.

I

think

that's

the

okay.

C

A

I

I

C

C

C

B

B

J

C

A

C

So

they

yeah

even

in

the

84.

What

is

it

80

14?

They,

they

kind

of

call

that

out

as

well.

It's

like

these

things

could

be

used

for

non

hypervisor.

Workloads

like

just

need

a

container

workloads,

but

it's

very

specific

to

hypervisor

today

yeah,

but

though

I

think

they

have

to

do

even

further

work

to

get

to

that

point

where

it's

container

but

I

mean

it's

they're

actually

in

the

world

today,

so

it

just

will

have

to

work

its

way

way

in

there.

Now,

no

yeah,

that's

pretty

much.

C

J

A

K

In

the

race

of

convenience,

my

only

concern

is

like

I'll,

say

initially,

I.

Think

I

read

the

draft

like

like

six

months

ago,

but

my

main

concern

was

like

about

the

approaching

not

only

VMS

but

also

containers.

So

I

thought

that

the

the

draft

was

like

very

folks

on

a

hypervisor,

but

if

you

bring

that

world

I

provide

her

two

containers,

it

might

might

change

the

terminology

little

bit,

yeah.

C

Absolutely

I

think

I'm

yeah.

Definitely

the

I

don't

know

if

it.

If

in

this

scope

of

this

document

or

if

it's

like,

then

containers

or

other

workloads

outside

of

the

hypervisor,

and

if

there

is

no

hypervisor.

What

does

that

change

from,

because

we've

known

being

very

specific

around

like

okay,

here's

the

or

for

the

splits

there's

like

there's

at

env

and

then

the

and

then

enemies,

and

this

external

and

II

thing

and

and

there's

very

specific

things

to

like

okay?

How

the

hypervisor

does

this,

but

they're

so

I

mean

it's

it's.

C

C

J

C

B

J

B

G

E

I

Well

correct,

so

this

is

next-generation,

firewall

benchmarking.

This

is

actually

the

work

of

a

group

called

net

sec

open.

That

is

a

group

of

about

15

individuals

who

are

working

to

getting

together.

We

get

together

once

a

week,

have

a

comments,

call

and

talk

about

these

types

of

test

cases.

So

we've

done

two

drafts.

This

is

a

third

wrap

I'm,

really

going

to

focus

on

the

differences

that

we've

had.

I

If

you

want

to

ask

any

questions

about

any

of

it

feel

free,

there

might

be

some

of

the

test

cases

where

I'm

not

slightly

less

involved,

where

I

might

defer.

I'll

go

back

to

the

group

and

come

back

to

you

guys

if

you

have

any

specific

questions,

so

the

first

thing

we

did

in

the

third

work

of

this

draft,

we

reordered

it.

I

So

if

you

look

at

the

diff,

it

looks

like

a

mess

because

we

deleted

like

entire

sections

and

move

them

to

the

bottom

and

move

them

around

so

they're

just

kind

of

hard

to

read

worse,

I

won't

do

it

again.

This

was

a

one-time

thing,

so

there's

a

lot

of

reordering,

so

it

makes

it

look

like

they

were

massive

changes,

the

only

other

section

we

touched

editorialize

that

was

worded

kind

of

funny

because

different

authors

and

this

kind

of

gave

it

one

voice.

So

7

7

through

7

one

got

a

little

bit

of

a

rework.

I

Those

are

mostly

editorial

changes,

not

major

detailed

tests,

test

cases

7.

We

had

a

bunch

of

test

cases

in

particular

HTTP

throughput

in

HTTP

throughput,

and

we

removed

the

binary

search

requirement.

After

talking

to

some

of

the

test

and

measurement

guys,

this

was

kind

of

overly

complicated.

It

was

just

easier

to

take

out

that

requirement

that

we

always

do

a

binary

search,

so

we

removed

that

from

the

version

to

the

other

draft.

We

cleaned

up

the

objectives

of

72

and

73.

I

In

particular,

we

had

a

spirited

conversation

about

object,

sizes

and

those

are

the

ones

we

settled

on.

If

anyone

has

any

feedback

about

those

types

of

things

feel

free

to

read

the

draft

and

send

comments

or

get

up

to

the

mic.

Those

are

what

we've

chosen

for

now.

The

second

version

of

the

draft

did

not

have

any

real

test

cases

for

7

3

through

7

6.

I

Those

are

all

brand

new

in

this

draft,

where

we

wrote

down

the

individual

test

cases,

so

we're

closing

in

on

getting

some

of

these

wrapped

up

some

of

them

also,

it's

sort

of

a

rinse

and

repeat

situation

where,

once

we

wrote

it

for

one

technology,

we

could

just

input

input

the

different

parameters

just

made

it

pretty

easy.

So

once

we

got

the

general

setting

up,

we

were

able

to

update

those

test

cases

before.

A

I

Complicated

was

what

the

test

and

measurement

guys

were

very

concerned.

In

particular,

we

work

with

this

group

includes

xes

by

those

types

of

test

and

measurement

vendors

said

the

binary

search

will

really

complicated.

Some

of

the

results

that

we

were

going

to

have

to

get

out

like

there

is

easier

ways

for

us

to

get

to

the

throughput,

instead

of

actually

making

a

binary

search,

be

done

for

over

all

of

the

sets

of

results.

I

A

I

A

I

I

So

you

know

going

forward,

I,

don't

know

how

many

people

have

read

the

draft.

Obviously

we

would

love

to

get

some

on

lists.

Revision.

I

will

say

there

is

lots

of

review

going

on

inside

of

this

group

before

it

gets

listed.

There's

like

I,

said

there's

somewhere

between

ten

and

fifteen

participants

who

are

reading

these

test

cases

as

we

write

them,

but

the

more

the

merrier

you.

A

B

A

If

people

were

willing

and

in

this

group

to

join

our

mailing

list

and

to

share

comments

that

the

during

the

development

of

this,

that

will

look

a

lot

more

yeah

it'll,

look

a

lot

more

engaging

to

our

group

yeah.

The

folks

who

are

kind

of

being

you

know

just

presented

with

a

big

document

once

in

a

while,

yeah

and

and

an

activity

on

the

list

is

the

best

way

to

get

some

attention.

Pay.

I

Yeah

I

think

we're

getting

to

the

point

now

where

the

test

cases

are

stabilizing.

That

I

think

we

should

start

to

move

the

conversations

over

I

think

before

we

were

working

out

lots

of

details.

Might

it

kill

the

list

but

I

think

maybe

after

this

next

revision

I

think

absolutely

they

should

start

to

do

all

of

the

stuff

on

the

lists.

Great

again,

like

you

said

just

to

show

interest,

because

there

are

people

that

are

interested

absolutely.

A

A

I

So

I

will

make

that

suggestion.

Yeah

we're

working

right

now,

one

of

the

things

missing

before

we

think

this

is

ready

to

go.

Is

it

doesn't

have

the

security

effectiveness

in

particular

you're

talking

about

vulnerabilities

and

things

you

need

to

do.

The

list

is

closed.

We

have

an

idea

of

what

we

want

to

say

about

this

or

how

we're

gonna

go

about

getting

that

list,

so

that'll

be

in

the

next

revision

of

the

draft,

and

then

we

missed

a

cup.

I

I

C

C

I

C

I

A

C

G

A

Thank

you,

Rafael

I

completely

blew

the

agenda.

You

were

supposed

to

go

before

Jacob

and

him,

and

and

and

I'm

now,

gonna

demonstrate

how

it

happened.

Let's

see

here

so

I

got

to

back

this

thing

up

and

I

thought

I

had

them

all

in

order

here,

but

that's

now

see

that's

mine,

that's

mine

and

somehow

that

one

got

mixed

missed.

So,

let's

see

here

yeah,

that's

right

right

there.

It's

that

one

all

right.

So

this

magic

button

helps

and

then

go

this

magic.

One

else.

K

Yeah,

okay,

go

so

I'm

here

to

talk

about

the

the

updates

that

we

made

in

this

draft

starting

by

the

title.

I

think

we

we

had

a

lot

of

reviews

in

the

last

meeting

about

okay,

you're,

addressing

generic

ways

of

vnf,

Mitch

marking

soul,

and

it's

like

based

on

the

research

behind

this

dis

draft,

there's

a

lot

of

focus

on

automation,

so

we

said

yeah.

We

agree

with

that.

K

I

think

that's

some

consensus

we

had

and

then

we

change

the

title

for

methodology

of

net

marking

automation,

so

why

we

dated

basically

the

main

changes

are

about

automation.

As

we

discussed

in

the

last

meeting,

I

mean

there

there's

the

new

title:

the

scope

has

changed.

We

added

there's

some

modifications

in

the

methodology.

The

idea

is

that

we

do

not

aim

to

explore

all

the

UNF

marking

methodologies

but

ring.

We

aim

to

create

this

kind

of

like

approach

of

methodology,

for

if

you

have

a

DNF

benchmarking

methodology.

K

K

So

if

you

provide

all

the

tools

and

kind

of

like

a

generic

methodology

for

that

to

be

automated,

we

we

understand

that

the

developer

can

do

that

better

than

us

and

I

think

that

new

coming

gnf

method,

benchmarking

methodologies-

might

use

this

draft

as

a

reference

implementation.

For

that

we

have

also

new

contributors

so

from

Paderborn

university

that

are

doing

similar

work

as

we

are

doing.

We

have

for

its

implementation.

Also

added

to

the

draft.

B

That's

live

for

just

a

sec.

For

me,

the

the

biggest

problem

I

have

with

what's

written

here.

It's

a

large

part

of

what

we

want

to

be

able

to

do

in

BMW

is

have

repeatable

results

and

have,

if

I,

execute

the

test,

and

you

execute

the

test

and

we

were

to

execute

them

against

the

exact

same

thing.

We

come

out

with

the

exact

same

results.

The

problem

is,

if

you

leave

the

metrics

in

methodology

up

to

the

developer,

I'm,

not

entirely

sure

I'd

trust.

My

developers,

I

mean

I

love

my

developers

by

trust.

B

Can't

trust

but

verify

if

I

follow

this

methodology,

so

I

have

some

I.

Have

some

sympathy,

I

think

I've

been

at

the

receiving

end

of

these

comments.

I

think

I'll

gave

them

to

me

once

upon

a

time

to

you.

So

I

definitely

have

some

sympathy

there,

but

I

do

suggest.

Maybe

we

take

a

look

at

how

to

have

at

least

something

be

automated

and

give

yourself

some

wiggle

room,

but

something

needs

to

be

there

so

that

I

could

repeat

this

and

get

the

exact

same

apples

to

apples.

Comparison,

okay,.

K

Okay,

let

let

me

get

there,

okay,

all

right.

No

no

I

mean

this.

Is

a

generic

I

think

it's

a

major

concern

about

the

graph,

how

much

how

it

can

be?

Not

generic

or

not

to

be

specific

about

the

automation

but

I

mean,

and

here

he

is

we're

trying

to

restrict

it

to

have

this

developer

or

I.

Don't

know

this

the

creator

of

the

benchmark

methodology

to

come

here

and

specify

what

we

call

it,

the

the

benchmarking

descriptor-

and

this

comes

the

final

I

mean

the

main

of

the

changes

in

the

draft.

K

Then

we

focus

on

say,

like

the

main

focus

of

the.

The

draft

is

to

orbiting

a

vnf

niche

marketing

report

in

an

automated

way-

and

we

do

this

by

having

the

benchmarking

report

composed

of

two

parts.

One

is

the

benchmarking

descriptor

and

the

other

is

the

profile.

So

the

descriptor

is

that

one

that

is

the

one

that

I

give

it

to

you

and

you

say

like

now:

you

can

repeat

it

and

it

gives

some

kind

of

like

boundaries

and

and

I

Street

kind

of

like

environment

and

requirements,

parameters

that

you

can

say.

K

If

you

put

these

in

the

environment

that

you

describe

you,

it

will

give

you

the

same

performance

profile.

So

that's

what

you're

trying

here

I

know

it's

like.

We

are

in

a

zero

two

version,

but

we

will

get

there.

So

then

we

in

the

benchmarking

descriptor

made

mainly

we

described

the

procedures,

configuration

the

overall

description

of

the

the

benchmarking.

The

automated

benchmarking

scenario,

the

target

informations

about

the

vnf

itself.

Diversion

I

mean

that

the

target

vnf

diversion

the

model.

K

Although

the

specific

specifics

of

the

vnf,

we

have

the

deployment

scenario

that

basically

I

forget

to

tab

here.

It's

basically

the

topology,

the

requirements

and

the

parameters

for

each

one

of

the

components

that

we

have

described

it

in

the

BNF

for

benchmarking

setup

decision

in

the

draft,

and

then

we

have

the

vnf

performance

profile

that

it's

the

result

of

the

execution

of

the

benchmarking

descriptor

I

mean

it

gives

you

the

execution

environment.

That

means

where

the

benchmarking

descriptor

was

but

and

then

and

how

it

was

executed

to

create

that.

K

So

it

gives

you

hardware,

specifications

and

software

specifications.

This

is

also

detailed

in

the

draft

and

also

the

measurement

results.

We

we

put

the

measurement

results

as

active,

matrix

and

passive

matrix.

The

active

ones

are

from

the

direct

relationship

of

agents

in

the

vnf

and

the

passive

is

the

monitoring

or

the

infrared

matrix

from

the

dns

when

it's

possible.

So

here's

the

basic

I

mean

scenario

I'm.

A

B

B

K

Then,

in

this

case,

you

just

need

specify

how

to

attach

moonshine

to

as

a

probe

into

agent

and

put

the

parameters

to

configure

that,

and

that

goes

as

a

I

mean

a

requirement

for

the

test

itself.

I

mean

you

have

must

have

an

interface

to

an

agent

with

moonshine

and

you

must

have

required

the

parameters

to

execute

with

certain

I'd

say,

generic

guidelines

and

packet

traces.

So.

A

B

K

I

totally

do

new

contributors

now

I

mean

we

have

a

lot

of

psychos

offi

yeah

I

mean

what

do

you

find

the

draft

and

giving

the

ideas

and

the

terminology

was

changed

from

the

last

draft

and

I

think

it

will

you

come

out

to

change

again,

so

nothing

is

written

in

stone

in

a

draft.

So

basically

the

idea

here

is

that

I

told

you

have

this.

This

picture

of

you

know

you

give

the

vnf

benchmarking

descriptor

as

the

definition

of

the

method,

how

to

benchmark

the

vnf.

K

You

have

the

benchmarking

process

that

generates

the

benchmarking

report

that

contains

basically

the

descriptor

and

the

performance

profile,

and

with

all

of

that,

you

can

compare

repeat

experiments

for

further

this

benchmarking

descriptor.

In

this

case,

we

considering

the

Draft

at

multiple

steps

or

procedures

of

this

benchmarking

of

the

benchmarking

itself

can

be

automated

as

and

we

define

like

orchestration

of

the

components

itself.

The

placement

can

be

manually

or

automated,

please

put

in

your

menu

or

automated

way

the

management

and

the

configuration

of

the

components

also,

and

then

you

have

the

execution.

K

After

of

the

the

benchmark

itself

and

the

output,

we

consider

also

possible

ways

of

pricing

the

matrix

in

a

in

a

row

format

in

a

specific

format,

extracting

some

new

analytics

ways,

for

example,

clustering,

matrix

or

or

so

that

might

be

specified

in

the

vnf

paint

performance

profile,

and

there

are

many

issues

that

are

unresolved

in

this

draft

I

mean

at

least

now.

We

we

understand,

we,

the

co-authors,

that

we

have

a

good

skeleton

of

I

thinks

that

we

can

work

from

now.

K

On,

for

example,

we

need

to

clarify

the

automated

benchmarking

procedures,

I

mean

which

what

is

happening

actually

in

each

one

of

the

bullets

that

we

have

in

the

draft.

We

need

to

specify

clearly

each

one

of

this

particular

case

in

subsection,

5.4

I

mean,

for

example,

when

you

have

the

case

of

a

noisy

neighbor.

K

When

you

have

the

case

of

failures,

or

you

have

the

case

of

flexible

V&F

that

inside

of

the

NF

itself,

it

might

have

multiple

components

that

might

scale

depending

depending

on

the

the

traffic

workload,

and

this

must

be

represented

in

the

VN

MH

marking,

report,

yeah

and

so,

what's

still

missing.

We

think

that

we

must

detail

these

interfaces

like,

like

you

say,

of

the

agent

monitor

with

the

prober

and

listener

this

terminology

might

might

be

updated.

We

need

specify

how

these

interfaces

work

actually

in

the

draft.

K

The

the

action

of

the

each

one

of

the

components

like

the

manager,

agent

and

monitor

might

take

on

the

messages

and

how

they

parse

the

the

actions

that

they

must

take

to

run

the

benchmarking

stimuli

and

parse

the

metrics.

The

possible

issues

of

the

automation

approach.

I

think

we

need

to

find

out

like,

for

example,

there

if

you

are

going

to

but,

for

example,

a

binary

search

as

some

way

of

a

procedure

in

this

automation,

scope,

I

think

we

need

to

say

hey.

K

This

might

be

a

problem

here

here

and

there

and

I

mean

as

a

consideration

for

for

the

reader

of

the

draft.

In

parallel,

we

really

the

quarters

we

are

developing

an

information

model

that

we,

because

we

have

this

reference

implementations.

There

are

a

gene,

the

1i

I

coded

in

the

other

one

TNG

bench

from

from

the

manual

the

other

quarter,

and

we

are

doing

tests

side

by

side

and

we

are

creating

this

half

restore

model

information

model

that

we

might

be

useful

further.

K

The

whole

draft

I

don't

know

if

it's

in

this

as

we

talked

earlier,

it

might

be

in

the

scope

of

the

draft

or

not.

We

need

to

still

see

how

we

we

gonna

put

in

inside

the

draft

or

or

as

a

half

restore

I.

Don't

know

if

formative

reference

for

the

draft

and

now

we

he

I,

put

that

RFC

21

19,

but

it's

no

8174,

so

yeah

we

are

going

to

update

the

draft.

B

K

K

K

A

K

A

A

B

A

All

right,

so

this

is

the

draft

on

the

updates

for

the

back

to

back

frame

benchmark.

This

is

where

we're

desperately

trying

to

measure

the

size

of

the

buffer

of

the

device

under

test

and

RFC

25:44.

Our

most

fundamental

RFC

specifies

a

method

to

do

this

and

that

the

thing

we're

trying

to

measure

is

actually

the

longest

burst

of

frames

that

a

device

under

test

can

process

without

loss.

It's

intended

to

examine

the

extent

of

data

buffering

the

the

material

there

was

extremely

concise

in

terms

of

the

procedure

and

reporting.

A

In

other

words,

there

wasn't

much

there.

It

was

like

one

page

so

so

we

ran

some

tests

in

the

vias

perf

project

as

part

of

the

open

platform

for

nfe

last

year,

publish

the

results

at

the

at

the

summit

and

what

we,

what

we

basically

decided

there

was

that

a

quite

a

few

considerations

could

be

improved.

I've

skipped

over

a

lot

of

those

here,

but

the

main

one

is

this

idea

that

when

we

measure

the

longest

burst

that

a

device

can

accommodate,

while

that

burst

is

being

transmitted

into

the

device,

the

device

is

also

thumb.

A

Reading

packets,

basically

forwarding

frames

and

the

previous

calculation

didn't

account

for

that

at

all.

In

other

words,

by

the

time

you

try

to

assess

the

size

of

a

buffer,

and

you

count

the

number

of

packets

which

have

been

sent

some

of

those

packets

aren't

in

the

device

anymore,

they've

actually

gone

out.

So

so

that's

that's

what

the

correction

factor

is

simple

and

that's

what

it's

all

about,

accounting

for.

So

when

we

tried

to

calculate

the

number

of

packets

in

the

buffer,

we

calculated

the

average

length

of

this

burst

the

number

of

back-to-back

frames.

A

We

divided

it

by

the

maximum

theoretical

frame

worried

because

they've

been

sent

at

the

back-to-back

rate,

and

that

gives

us

basically

the

time

of

that

is

represented

by,

though

all

those

back-to-back

packets

put

together,

but

now

I've

explained

the

second

part

of

this

that

basically,

we

know

that

the

throughput

over

that

amount

of

time

is

going

to

have

extracted

the

maximum

throughput.

It's

going

to

extracted

some

number

of

frames.

So

that's

why

we

have

this

corrected

factor

here.

A

So

we

have

the

implied

buffer

time

and

we

correct

it

by

this

fraction,

which

is

the

measured

throughput

over

the

maximum

theoretical

frame

rate,

and

it

turns

out

that

reduces

the

buffer

size

quite

nicely

to

something.

That's

more,

probably

a

little

closer

to

accurate.

Now

this

this

test

only

ever

intended

to

work

with

a

single

egress

port,

where

we're

sending

traffic

and

and

a

both

a

single

ingress

and

egress

port.

So

it's

completely

different

from

the

test

that

was

described

in

the

data

center,

benchmarking

80

to

39

I.

Think

it

was.

A

A

So

we

had

some

questions

in

the

draft

for

discussion

and

we

said,

should

just

particular

search

algorithm

be

used.

We

think.

Yes,

the

answer

is

yes,

we

think

it

should

probably

be

binary,

search

and

and

and

also

should,

search

trial.

Repetition

should

include

trial,

repetition

whenever

there's

a

loss

observed

to

avoid

the

effects

of

background

loss

unrelated

to

buffer

overflow.

A

So

now

we're

thinking

of

two

kinds

of

sources

of

loss

here:

kind

of

a

transient

loss

that

might

be

present

on

the

links

really

high-speed

links

or

in

the

devices

itself,

if

it's

a

virtualized

device

and

and

and

so

yes,

we

think

the

answer

is

yes

there

as

well.

So

we're

gonna

see

some

results

about

that

in

a

moment,

so

the

next

steps,

who's

read

the

draft

one

hand.

Thank

you

Oh

two

hands.

Thank

you.

A

So

if

you

guys

have

comments

that

would

be

great

to

hear

about

now,

but

otherwise

we

need

more

readers

before

we

can

adopt.

This

we've

got

a

milestone

on

our

Charter,

but

we

can't

adopt

this

if

it's

of

course

I'm

speaking

as

a

participant

now

I'm

glad

to

qualify

that

so

other

ideas

are

always

welcome

here

for

doing

this

kind

of

benchmarking,

we'll

hear

more

I

think

a

little

more

about

that

later.

To

possibly

so

comments

are.