►

From YouTube: IETF102-MAPRG-20180719-0930

Description

MAPRG meeting session at IETF102

2018/07/19 0930

https://datatracker.ietf.org/meeting/102/proceedings/

A

A

But

given

you

probably

already

have

found

the

slides,

you

might

already

know

whether

it

is

ok

before

we

actually

start

with

our

agenda,

as

we

usually

do,

and

we

have

a

lot

of

nice

presentations

today.

I

would

like

to

quickly

talk

about

the

AME

review

we

had

at

the

last

IETF

meeting.

So

every

research

group

gets

like

reviewed

from

time

to

time

by

the

IAB

to

have

some

kind

of

feedback

mechanism

discuss

how

to

develop

the

group

and

see

like

if

it

makes

sense

to

continue.

A

We

had

our

very

first

review

with

the

IEP

and

in

general,

as

was

was

very

positive,

so

they

all

kind

of

liked

it.

But

we

also

discussed

about

how

to

develop

the

group

into

certain

directions,

because

what

we

do

right

now,

because

that's

kind

of

the

feedback

we

got

from

the

beginning

from

the

group

is,

we

focus

very

much

on

measurement

results.

Getting

people

from

academia

presenting

measurement

results,

then

kind

of

you

can

consume

this

information

and

talk

to

the

people

and

and

get

a

connection

there.

A

So

what

we

do

in

more

and

more

detail

is

that

we

usually

try

to

solicit

eight

contributions

from

academia

or

also

industry

research.

We

did

so

by

going

to

different

conferences

whenever,

like

day,

four

I

happen

to

be

at

a

conference,

we

try

to

announce

that

Mataji

exists

and

people

should

send

contributions.

We

also

had

some

Lightning

talks

which

were

like

explicitly

on

gender

and

these

kind

of

things

we

use.

A

The

way

we

select

the

contributions

is

mainly

driven

really

by

the

Charter.

So

first

check

is

always

is

a

contribution

in

charter,

and

then

we

also

prefer

contributor

presentations

that

provide

data

over

a

presentation

that

only

talked

about

methodology

or

something

because

that's

kind

of

the

feedback

I

think

we

got

from

this

group.

We

in

general

try

to

fit

as

much

contributions

into

the

agenda

as

possible,

but

it's

also

sometimes

a

logistic

question.

A

So

usually

we

try

to

prefer

in-person

presentation

over

remote

presentation

just

so

that

you

also

have

a

chance

to

interact

with

the

speaker

after

the

session

or

during

the

whole

week,

or

sometimes

it's

just

like

this

person

can

only

present

at

a

European

meeting

or

just

present

at

a

u.s.

meeting.

So

we

have

to

figure

out

if

we

do

it

this

time

or

next

time

or

whatever.

A

What

we

really

try

to

avoid

is

having

any

presentations

in

here

that

I

presented

in

other

working

groups.

That

can

still

be

interesting,

but

usually

we

have

a

nice

presentation,

so

we

think

it

would

be

unfair

to

give

time

to

somebody

who

already

has

a

slot

somewhere

else,

and

we

also

really

prefer

presentations

which

are

kind

of

Newars

or

cannot

be

discovered

somewhere

else

like.

If

there's

a

video

of

your

presentation,

then

we

might-

and

we

don't

have

time

for

you-

then

we

might

like

maybe

decide

for

a

different

presentation.

A

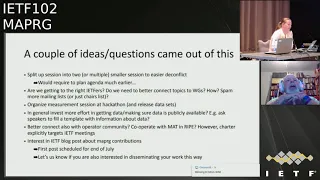

That's

also

kind

of

what

we

presented

to

the

IAB

and

what

came

out

of

this

meeting

was

a

couple

of

ideas.

What

we

could

maybe

change,

or

improve

and

I

want

to

quickly,

just

like

it's

all

written

on

the

slides,

and

maybe

you

have

ideas

or

we

can

also

discuss

later,

but

like

give

a

overview

for

you,

so

there

was

one

idea

to

instead

of

having

one.

A

A

Does

everybody

know

if

there

is

a

presentation

here

that

is

relevant

to

their

own

work,

that

the

presentation

happens

and

do

they

actually

come

and

we

had

like,

because

of

rumors

were

usually

worried,

for

we

had

a

feeling

that

my

bride,

she

is

well-known,

but

of

course

we

can't

do

more.

We

can

send

like

more

emails

to

medalists.

We

could

send

emails

to

the

chair

madness

to

the

chairs,

can't

judge

if

it's

relevant

for

the

working

group

or

not

so

yeah.

Any

input

is

welcome

here.

C

D

Is

okay?

Talk

about

this

now

yeah

Aaron

Fox,

so

one

thing

I

recently

did

in

taps

was:

do

a

reset

on

the

conflict

list,

and

so

what

you

might

do

send

a

message

out

to

the

list

asking

for

people

who

wanted

to

be

here

but

couldn't

because

of

a

conflict.

And

then

you

can,

you

know,

maybe

get

some

new

entries

for

your

list,

but.

A

D

A

E

G

E

A

submission

cut

off,

but

here

or

you

guys

know

what

research

you

know.

You've

done

results

you've

got

potentially

a

long

time

before

two

weeks

before

the

IETF

meetings,

so

you

might

be

able

to

give

Mary

a

more

of

a

heads-up

so

that

she

could

not

have

to

struggle

so

hard

to

it

with

a

conflict

list.

So.

A

Spencer,

actually

we

don't

like

this

time.

We

really

knew

which

presentations

are

in

at

the

time

where

the

a

draft

gender

gender

deadline

was

requested,

because

it's

it's

maybe

we

started

too

late

to

ask

for

contributions,

but

people

are

also

deadline

driven

right.

Whenever

you

put

a

deadline,

you

get

all

contributions

at

the

deadline

so

and

then,

like

even

after

the

debt

and

whistles

going

to

people

in

trying

to

talk

to

them

and

figure

out

what

they

want

present

and

there

was

a

lot

of

frozen

back.

A

H

Colin

Perkins

I,

don't

think

this

has

been

a

problem,

but

I

don't

get

something

we

should

look

at

going

forward.

Is

that

we're

getting

increasing

amounts

of

research

group

measurement

research

coming

in

to

the

ATF,

with

things

like

the

a

and

iw

in

the

a

and

a

P?

We

should

make

sure

that

there's

a

clear

story

for

where

this

research

goes

and

where

its

presented,

we

don't

want

to

be.

Having

the

same

talk

several

times.

No.

A

Sir

I

mean

definite,

so

we

had

actually

this

case

that

somebody

who

presented

at

the

workshop

on

Monday

also

while

ago

actually

submitted

a

contribution

to

us,

and

we

knew

that

this

was

coming

up,

and

so

we

didn't

have

that

presentation.

So

that's

something

we

definitely

try

to

avoid

how

to

redirect

people

to

the

right

group

is

actually

kind

of

more

difficult,

because

not

everybody

at

the

workshop

is

interested

in

the

rest

of

the

ietf

right

in

these

kind

of

things.

So

we

also

I

mean

it's

also

like.

A

A

A

We

don't

know

what

that

means,

yet,

if

we

just

say

like,

if

you

want

to

do

something

on

measurement

come

to

this

table

or

if

we

actually

think

about

like

very

specific

project

where

we

say

like

we

want

to

hack

on

this

measurement,

or

we

want

to

run

like

this

kind

of

small

measurement

study

today

and

and

see

if

people

are

interested.

So

if

you're

interested

in

contributing

that

that

part

or

if

ideas,

what

you

would

like

to

do

at

the

hackathon,

please

talk

to

us.

A

Another

thing

that

was

discussed

was-

and

that

was

discussed

in

is

working

group

at

the

very

beginning-

is

how

do

we

get

actually

hands

on

the

data?

How

can

we

make

sure

that

all

the

data

that

is

presented

is

available

for

further

research

and

analysis,

and

how

can

we

support

this

kind

of

data

exchange?

As

this

group

I.

A

But

of

course

we

could

also

do

a

little

bit

more

effort

and

try

to

figure

out

like

put

in

the

wiki,

for

example,

where

this

data

is

available,

which

data

or

let

everybody

who

presents

fill

out

a

template

and

tell

us

about

the

data.

What

kind

of

data

you

have?

What

format,

how

much

data

out

where

to

find

it?

These

kind

of

things?

Would

they

be

interest

in

this

kind

of

effort.

A

A

That's

also

focused

on

measurements,

so

we

could

kind

of

try

to

like

more

closely

work

with

them

together,

but

I

have

to

say,

like

I'm,

actually

not

shortened

up

certain

about

that

point,

because

first

of

all

our

Charter

says

were

targeted

for

IETF

meetings

and

second

is

given.

We

have

this

mode

where

we

kind

of

present

data

I,

don't

think,

there's

something

where

you

can

like

a

lot

of

do

a

lot

of

common

work,

because

it's

just

a

forum

for

discussion

Brian,

who

is

the

mad

chair

like.

I

Brian

Dremel

right

at

Polizzi,

co-chair,

yeah

I

think

that's

probably

the

right

way

to

do

it.

I

mean,

like

you

know,

we

we

talk

occasionally

you

and

I,

and

the

meeting

cycles

don't

line

up.

You

know

in

such

a

way

that

there's

much

conflict

so

yeah.

If

there's

stuff,

that's

here

that

I

think

should

also

go

too

ripe.

I

will

approach

people

and

you

know

vice

versa.

So

I

think

that

there's

you

know

a

lot

of

the

stuff.

I

That's

here

is

I

mean

they're

slightly

different

focuses

right

lecture

than

that

stuff

is

things

that

are

meant

to

be

a

little

bit

more

operationally

relevant,

but

also

things

they're

sort

of

you

know

even

just

interesting,

so

yeah

I

have

one

of

the

reasons

I'm

here

and

I

cover.

This

is

looking

for

interesting

things

to

encourage

to

come

to

Matt

insell

yeah.

A

So

I

mean

like

this:

one

is

easy

because

you're

sitting

in

the

same

office-

but

maybe

there

are

other

groups

that

are

focused

on

measurements

and

I'm,

not

aware

of

so.

If

you

like,

involved

or

you

know

these

groups,

let

me

know

we

can

see

that

makes

sense

to

incorporate,

and

then

a

very

last

point

was

also

that,

like

some

of

these

presentations

might

be

interesting

for

an

IETF

blog

post.

A

So

in

general

we

see

like

more

posts

on

the

IETF

block

and

I

already

did

this

and

talked

to

some

of

the

people

who

presented

the

last

time

and

they

will

write

a

blog

post.

So

if

you

are

presenting-

or

you

have

some

data

that

you

think

is

interesting

and

you

would

like

to

write

a

blog

post

just

come

to

me.

A

No

you're

all

waiting

for

the

presentations.

Okay,

that's

the

agenda

for

today,

so

we

have

to

head

up

talks

and

both

are

more

focused

on

on

tools

of

methodologies

and

our

measurement

data.

That's

why

their

head

up

talks

because,

as

I

said

earlier,

we

are

like

trying

to

focus

more

on

the

extra

data

and

then

we

have

six

torques

all

over

the

place.

So

it's

like

it's

about

privacy,

ipv6,

DNS,

packet

sizes,

so

the

typical

stuff.

We

are

interested,

let's

start

Johnny.

Where

are

you.

J

J

One

situation

we

face:

SSID

n,

it's

like

if

you

wanted

to

measure

a

lot

of

properties

associated

to

a

domain

name,

let's

say

wikipedia.org.

If

you

want

to

know

stuff

like

where's,

the

DNS

services

are

located

information

about

SMTP

HTTP.

Where

is

hosted

it's

very

hard

to

do

that

with

current

tools,

there's

a

bunch

of

open

datasets,

but

currently

there's

no

two

that

do

that

automatically

for

you.

So

if

you

want

to

measure

all

these

different

protocols

associated

with

any

domain

name,

we

would

end

up

to

have

something

like

that.

J

You

have

to

use

the

map

dig

mask

and

a

bunch

of

other

tools.

You

can

do

it,

but

it

ain't

pretty

and

that's

a

problem

because

you

end

up

wasting

a

lot

of

time

doing

repetitive

tasks.

You

have

to

do

with

very

different

data

formats

wasting

a

lot

of

time.

More

complexity,

as

we

know,

need

some

more

errors.

It

is

very

hard,

as

if

you're

academic,

a

reviewer

to

review

papers

and

try

to

reproduce

the

studies,

because

they're

gonna

be

wastes

a

lot

of

time

in

just

doing

the

measurements.

J

So

what

we

decided

to

do

is

to

build

a

new

tool.

It's

called

demamp

domain

name,

echo

system

mapper

and

what

it

does

is

just

automate

the

measurements

of

five

protocols

and

also

take

a

screenshot

of

a

domain

name

over

websites

of

exists

and

what

it

the

way

works

is

different

from

say,

map

Zimmy,

usually

you

have

a

list

of

IP

addresses

already

just

choices

for

you,

but

this

one,

you

have

to

provide

a

CSV

with

a

list

of

domain

names

can

be

as

long

as

we

use

for

data.

J

Now,

it's

almost

six

million

domain

names

and

in

a

single

machine

we

do

1

million

domains

a

day,

but

this

application

is

also

distributed.

So

you

can

scale

up

very

quickly

and

easily.

This

is

the

website.

Let

me

see,

there's

a

bunch

of

stuff

in

a

paper

applications,

demos,

whatever

in

C

Coco

to

journalize

death.

Oh

yeah,

the

good

thing

about

this

tool

that

later

once

you

they

do

the

to

do

those

all

the

measurements.

It's

very

easy

for

a

researcher

just

to

analyze

the

results

who's

in

sicko.

J

So

we

have

a

demo

dataset

on

the

website.

Selex

1

million.

The

queries

are

there,

you

can

download

and

analyze

and

I

just

want

to

show

one

thing:

this

is

a

table

that

shows

the

various

properties

that

we

have

measured

using

Alexa,

and

one

thing

we

found

is

that

currently,

and

unlike

some

1

million

seven,

seventy

percent

of

all

the

domains

support

HTTP

and

one

in

five

are

using.

Let's

encrypt

there's

way

more,

there

were

many

more

finders

in

the

paper.

Just

would

like

to

encourage

the

download.

What

do

you

think?

J

We

would

only

think

we

make

it

like.

We

make

it

only

open

source

for

researchers,

because

this

has

some

kind

of

commercial

application

possibility.

So

we

just

don't

want

to

incentivize

that

so

just

click

on

it

to

have

to

register

we're

gonna,

give

you

the

access,

repository

and

github,

and

that's

it.

Thank

you

very

much.

K

K

So

I'm

gonna

use

your

key

overview

of

elementary

Indiana

speedups

of

solutions.

This

was

presented

on

the

asari

connecting

American

well,

so

DNS

is

currently

monitored

in

two

different

ways.

One

is

doing

a

pretty

tag

regression

and

that

is

done

by

original

information

onto

the

NS

servers

and

10

standing

into

a

central

server

47.

K

This

method

is

used,

Festival,

IDs

C

was

developed

by

org

and

we

have

DNS

data

these

vital

root

servers.

The

second

method

is

during

the

series

in

data

setting

and

story

stretch

it

like

stirring

it

around

package

of

the

US.

These

another

use

by

an

example,

use

a

Hadoop

cluster

to

process

and

store

all

the

information

now

to

bring

me

this

metal

is

that

he

doesn't

give

too

much

information

out

the

current

status

of

the

DNS

servers.

So

we

started

looking

about

Oracle

in

properties.

K

They

take

our

methods

and

for

this

we

first

try

to

develop

our

own

structure.

We

develop

rapid

en

esta,

did

captured

storage

any

sensation

of

as

data,

but

we

resulted.

We

were

permitted

the

wheel,

many

of

the

things

that

we

were

developing,

where

I'll

raise

done

by

someone

else

and

also

when

we

presented

this

to

DNS

and

mr.

Eaton

Tate.

It

was

like

to

white

being

like

it,

so

we

start

looking

to

events

or

solutions.

K

We

thought

that

many

of

the

promised

that

we

were

presenting

we're

array

done

by

someone

were

paid

owning

production

and

we

start

analyzing

different

software

for

the

captor

storage

and

service

Asia

for

the

cattle

we

serve.

We

use

he

compare

different

software

like

packet,

big

collective

DSC,

and

we

saw

that

maintained,

answer

I'll

transfer

or

network

protocol

study

Ennis

use.

K

Finally,

when

end

up

with

that

AB

sector

like

this,

where

we

had

the

DNS

servers

and

we

cut

around

information,

we

our

own

solution-

that

was

DNS,

sibling,

I'll

deformation

resonated

to

a

clique

cluster

and

finally,

it

was

presented

in

a

graph,

a

novice

obsession

parasol.

You

ask

something

like

this,

where

you

have,

for

example,

topiary

domains

that

some

frightened

I

was

never

analyzed

directly

in

real

time.

We

also

have

the

any

key

domains

names

that

are

precise.

K

Some

of

the

performance

and

a

single

server

setup

we

test

with

real

data

from

technique

Chile,

and

we

found

that

these

we

could

process

like

CentOS

and

packet

per

seconds,

and

we

found

I

used

30

40

about

the

data

and

we

could

start

adding

40.78

every

day.

So

we

kiss

it's

entirely

possible

to

running

crying

like

and

store

like

at

year

of

the

eight-hour

more.

K

We

also

try

to

flu

the

wrong

service

experiment

and

we

found

that

we

can

handle

like

had

a

20,000

packets

per

second

on

one

single

server,

and

you

never

go

up

more

than

30%

of

the

cpu,

and

this

was

like

a

true

course

computer.

So

it's

kinda

scale

a

lot

more.

So

this

is

well

and

when

money

doesn't

be

in

a

service

thanks.

L

K

M

K

M

K

K

N

So

I

have

some

recent

data

on

UDP

packet,

reordering

that

we're

seeing

in

quick,

so

obviously

I

can't

say

whether

this

is

representative

of

you

know

what

what

someone

else

might

see.

I

can't

say

it's

representative

of

TCP,

but

at

least

for

this

data

set

it's

fairly

representative.

It

wasn't

chrome

stable,

so

we're

talking

fairly

large

sample

sizes.

You

know

millions

of

users

and

many

many

servers

and

we

have

some

from

the

server

side

and

some

from

the

client

side.

N

The

core

metric

is

actually

exactly

the

same,

and

the

measurement

code

is

exactly

the

same,

but

it

turns

out

the

data

does

end

up

displaying

a

little

bit

differently

and

I

apologize

for

that.

That

difference

makes

a

little

bit

more

difficult

to

interpret

but

we'll

walk

through

it

anyway,

so

I

probably

should

have

just

gone.

This

slide

also

we're

using

bbr

congestion

control

on

the

server

side

from

server

to

client,

but

we

are

using

cubic

from

client

to

server

so

that

actually

may

affect

the

measurement.

Data

may

potentially

change

the

likelihood

of

reordering.

N

Also,

the

client-side

data,

which

is

so

client,

is

the

client

receiving

and

it's

measuring

on

the

receive

side

and

the

server

is

sending

so

those

flows

tend

to

be

longer

their

CDN

flows

in

this

particular

case,

like

large

video

playback

things

like

that,

the

server's

idea

that

tends

to

be

you

know,

obviously

less

data

intensive

with

a

few

exceptions,

so

there's

an

arrant

asymmetry,

at

least

in

this,

but

it

kind

of

reflects

typical

web

traffic.

So

hopefully

it's

informative.

N

So

the

first

fact

is

just

how

many

percent

of

what

percent

of

connections

have

at

least

one

reordered

packet

on

the

client

side?

It's

only

5.4

percent

on

the

server.

It's

nine

point,

four

percent

I

can't

really

explain

why

there

would

be

more

reordering

going

upstream

and

downstream.

Personally,

maybe

someone

else

can

could

be

Wi-Fi

is

we

can

blame

everything

on

Wi-Fi.

N

So

this

is

on

the

client

side,

so

on

chrome

chrome

is

receiving

packets

and

this

is

in

packet

number

space,

and

this

is

actually

pretty

brutal

and

depressing

so

I

had

to

put

the

bottom

scale

at

a

log

scale,

because

there's

a

still

like

a

decent

amount

of

energy.

Around

100

and

and

I

thought

I

mean

I.

N

Think

we

don't

actually

get

to

like

point

under

0.1%

until

like

a

few

hundred,

so

things

are

pretty

bad

in

a

packet

number

space

and

in

particular,

as

you

can

see,

10

is

like

a

few

percent

right

there,

so

the

default

reading

threshold

of

3

is

kind

of

woke,

fully

and

sufficient

for

something

like

half

the

time

when

reordering

actually

does

happen.

So

it's

sort

of

you

know

you

pick

a

number

and

you

go

with

it,

but

it's

it's

sort

of

a

weird

number

things.

Look

a

little

bit

tomi.

O

N

As

only

on

the

client

side,

so

well,

okay,

I'll

go

the

server

side

later

after

I'm

gonna

do

all

the

client-side

data

and

then

all

the

server

side,

data

so

and

I

think

this

metric

I

can't

remember.

If

I

have

this

metric

on

the

server

side

or

not

yes,

so

on

the

client

side,

we

also

happen

to

record

it

as

a

fraction

of

min

RTT.

N

So

that's

just

kind

of

an

indication

of

like

if

I

was

to

do

it

in

time

domain.

What

would

it

look

like?

And

here

it

looks

quite

a

bit

quite

a

bit

nicer,

so

you

see

you

know,

there's

almost

no

energy

before

25%

or

sorry

after

25%,

except

for

that

blip

at

the

very

end

which

this

is

running

in

user

space,

so

I'm

gonna

suspect

that

that's

chrome

basically

hanging

for

some

long

period

of

time

and

and

thread

jank

or

something

like

that

yeah.

So

this

this

looks

quite

nice

like

there's,

there's

very

little

energy.

N

You

can

past

12.5%,

so

so

we're

doing

much

better

I

did

have

to

filter

the

men

RTT

to

greater

than

a

hundred

milliseconds.

To

get

this

data

to

be

like

sensible,

it

turns

out

that

there

are

some

clients

that

measure

like

sub

millisecond,

RT

T's

and

obviously,

if

you

have

a

sub

millisecond

RTT,

your

fraction

of

Manara

TT,

just

kind

of

goes

crazy.

N

It'd

be

interesting

to

get

data

for

like

greater

than

10

milliseconds

as

well,

which

kind

of

is

a

more

sensible

thing.

So

the

fact

that

it

was

so

crazy,

though,

is

probably

motivation

that

we

need

a

min

like

if

you're

gonna

start

using

time

based

loss

detection.

Like

probably

you

need

something.

That's

on

the

order

of

like

your

clock,

granularity

or

a

timer

granularity

like

1,

millisecond,

so

amount

of

minimum

threshold,

just

to

kind

of

ensure

some

basic

sanity

in

the

network

to.

D

N

N

This

is

an

effort

of

saying

if

you,

if

I,

had

an

adaptive

kind

of

time-based

loss,

detection

that,

like

ratcheted

up

the

time

threshold

to

whatever

was

necessary

to

never

experience,

MIT

a

packet

kind

of

how

much

time

of

reading

window

would

I

need

to

make

that

happen.

That's

my

internal

logic,

yeah.

D

I

guess

it's

just

like

when

you

the

like

the

PDS

that

you're

showing

before

when

you're

when

you're,

showing

like

numbers

of

events

that

you're

measuring

is

it

is

it

confused?

Do

you

have

like

a

single

flow

has

multiple

times

or

single

path

has

multiple

times

I'm

just

trying

to

understand

how

pervasive

are

this

like

is.

N

N

N

N

N

N

So

over

40%

of

packets

were

just

like

Twitter

as

they

are

sometimes

referred

to

as

and

then

you

get

a

fair

amount

of

energy

around

two

and

three

and

then

you

know

kind

of

things

go

off

the

off

the

chart

on

the

top

end

from

there.

So

it

seems

on

the

server

side.

The

the

maximum

distance

in

packet

number

is

smaller.

I

think

that's

mostly

a

product

of

the

fact

that

usually

we

have

a

smaller

condition

window

going

from

client

to

server

than

from

server

to

client.

N

N

N

So

the

best

majority

of

connections

seen

over

here

during

the

tail

is

very

very

long.

I

mean

packet

number

space.

It's

depressing

really

long,

quick

runs

in

user

space,

so

you

know

small

amounts

of

network

where

during

may

occasionally

get

amplified

into

much

larger

amounts

of

networking

ordering

jutsu

like

thread

jank,

particularly

true

on

the

client

side,

and

so

hopefully,

TCP

actually

might

see

a

little

bit

whispering

if

things

are

going

well.

P

N

H

N

H

N

H

N

I

Brian

Trammell

anecdotally,

we

have,

we

would

also

play

my

wife,

I

I

mean

so

where

we've,

where

we've

done

stuff

for

like

in

the

lab,

where

we're

looking

at

different

connection

things,

it's

always

like

the

dodgy

little

a

wireless

router

thing

and

they

suck.

If

you

come

over,

if

you

come

over

the

wired

interface,

but

they

suck

a

lot.

I

Q

Cory

Fair

has

thank

you.

It's

fun

to

have

real

reordering

information,

I

wonder

whether

this

is

partly

a

function

of

what

you

measured

and

the

fact

I

see.

Tcp

I

mean

maybe

having

less

reordering.

Maybe

it's

the

footage

of

your

congestion

controller

actually

pushing

hard

for

a

little

while

so

I

wonder

whether

as

a

community,

we

could

kind

of

gather

more

stuff,

and

do

you

think

that

would

be

useful

to

kind

of

look

at

other

other

transports

of

the

links

and

see

what

we

can

actually

derive

from

this

yeah.

S

C

N

I

Good

morning,

everyone

hi

I'm

Brian,

Trammell

eh.

This

is

work

actually

did

with

Miriah,

but

she's

chairing

so

I'll

present,

and

we

asked

a

very

simple

question

and

we

have

a

very

simple

answer:

is

buffer

bloat,

a

privacy

issue?

Actually

I

want

to

see

there's

buffer

board

of

privacy

su

yes

or

no

so

hands

up

for

yes,

yeah

hands

up

for

no,

oh,

yes,

buffer

bloat

has

a

potential

privacy

impact.

Okay,

that's

interesting,

but

there's

a

big

asterisk

on

this.

Yes,

everything's

a

privacy

issue

right.

I

So

if

you

have

significant

buffering

on

a

link

right,

so

yeah,

that's

buffer

bloat.

If

you

have

a

public

IP

address

that

is

associated

only

with

that

link.

If

the

public

IP

address

is

responds

to

an

ICMP

echo

request

and

that

echo

request

and

reply

fair,

the

buffered

queue,

then

I

can

ping

you

and

figure

out

how

big

your

queue

is.

This

is

very

surprising

to

me,

so

I

decided

to

come

and

talk

about

it

for

networks

that

we

examined.

This

is

a

this

is

I

would

almost

call

this

an

ik

data.

I

This

is

a

a

extremely

bias

study

of

people

that

I

could

get

to

clink

on

a

link

in

a

tweet,

but

for

one

in

seven

of

the

networks.

They're,

these

conditions

hold.

So

this

is

actually

you

know,

an

advice

we've

been

giving

in

the

transfer

area

for

a

long

time

is

like

fixed

buffer

bloat,

but

now

we

can

say

actually

no

seriously

fixed

buffer

bloat,

because

this

is

a

problem.

How

did

we

get

here

on?

This

is

sort

of

a

recap.

I

I

didn't

actually

set

off

to

try

and

answer

this

question

I

tried

to

answer

a

completely

different

questions,

so

this

was

you

know

this

is

the

quick

portion

of

the

morning

I

guess

so.

This

was

a

question

that

was

into

the

quick

RTT

design

team

in

the

spin

bit.

Discussions

is

RTT

data

privacy,

sensitive

passive

RTT

data

privacy

sensitive

and

the

idea

is

yeah.

If

I

continue,

I

can

know

where

you

are

not

what

you're

doing,

and

you

know

you

essentially

two

very

simple

trilateration.

You

know

the

you

basically

take.

I

The

radius

is

equal

to

the

time

on

each

of

these

pings

and

multiply

it

by

the

speed

of

light

in

the

internet

run.

You

know,

do

some

very

basic

math

and

actually

it

turns

out

that

the

data

is

usually

fuzzy

enough,

that

when

you

do

this

very

basic

math

you

divide

by

zero

somewhere

and

if

you're

not

dealing

with

complex

numbers.

Bad

things

happen.

I

Usually

this

is

done

in

a

more

approximate

way

right

like

so.

These

are

the

RT

T's

by

color.

So

green

is

faster

to

a

particular

anchor

in

the

right

that

lists

network,

which,

if

you

just

look

at

the

colors

he'll,

guess

it's

kind

of

yeah.

It's

probably

there

in

Europe

right

and

you'd

be

right.

Yes,

it

is

indeed

in

Europe.

I

When

we

looked

at

this,

we

actually

found

the

Internet

Artie

T.

Is

that

some

of

delays

at

each

hop

a

lot

of

these

are

variable?

You

can

only

derive

distance

when

you're

queuing

stack

and

application

delay

or

held

the

zero,

which

basically

never

happens.

The

network

operations

rule

of

thumb

that

one

millisecond

of

Artie

T

is

a

little

bit

less

than

100

kilometer

as

a

distance

holds.

I

So

if

you

know

the

IP

addresses

then

trying

to

do

a

geolocation

by

exclusion

based

on

Artie

T

bait

data

is

somewhat

more

erroneous

than

even

the

cheapest

lowest

quality.

Ip

geolocation

database

right.

If

your

Artie

T's

are

over

ten

milliseconds,

which

doesn't

happen

in

the

internet,

all

that

often

then

you're

getting

at

best

exclusion

information

for

national

level

right.

So

this

isn't

a

problem,

but

we

were

concerned

in

general

about

the

gia

privacy

implications

of

of

passive

observation.

Murky.

I

T

turns

out

to

be

not

that

scary,

because

of

all

of

this

variation,

but

can

we

we

flipped

this

question

around?

Does

active

observation

of

our

TT

pose

a

problem

and

it's

one

of

these

things

were

actually

just

sort

of

like

cleaning

up

the

we're

writing

a

paper

on

this

and

we

were

sort

of

cleaning

up

the

you

know.

Looking

at

all

the

loose

ends,

you

know

we're

actually

going

to

get

this

question.

I

So

let's

actually

go

ask

that

question

and

we

asked

the

question

about

remote

load,

telemetry

kind

of

as

an

Africa

right

can

a

remote

entity

armed

only

with

ping

get

information

about

the

operation

of

machines

on

me

networks.

Oh

no,

this

is

me,

is

the

internet,

and

here,

if

we

look

at

that,

you

know

the

equations

before

about

how

we

actually

what

these

components

of

of

RTT

do.

Then

the

load

on

the

network

is

equal

to

the

sum

of

the

queuing

delay

and

one

direction

in

the

sum

of

the

queuing

delay

in

the

other

direction.

I

If

I

have

a

cheap,

router

and

somebody's

going

to

ping

me

from

very

far

away,

can

I

actually

get

information,

so

what

I

did

is

my

set

stuff

up

on

my

cheap

router

and

I

started

downloading

my

kernel

from

somewhere

and

then

I

pinged

myself

from

Singapore

in

Amsterdam

and

I

got

this

that

trough

Topeka.

So

that's

like

basically

zero.

It

turns

out

I'm

in

Zurich,

which

is

not

that

far

from

Amsterdam.

I

You

know,

boom

I

see

this

peak

of

800

milliseconds

I'm

like

wow,

okay,

I'm,

an

Internet

measurement

researcher

I,

probably

should

have

noticed

that

I

have

a

second

of

buffer

bloat

in

my

own

network

and

I

hadn't

before

that,

and

it

was

gonna

like

wow,

that's

scary

and

then

I

went

down

here

and

I

actually

started

using

rate

limiters

to

limit

the

rate

to

other

than

full

rate.

Let's

not

full

up,

fill

up

the

entire

pipe

and

I

was

even

able

to

actually

see

some

some

variance

here

when

I

was

pulling

down.

I

300

kilobits

a

second

on

a

a

or

330

kilobyte

300

kilobytes,

a

second

on

a

40

megabit

link

right.

So

even

if

I'm,

only

at

10%

I

can.

Actually.

You

know

that

signal

looks

different

than

that

signal

looks

different

than

that

signal

can

actually

estimate

the

rate

across

there.

So

my

connection

sucks

good.

How

widespread

is

this

phenomenon?

So

we

stood

up

a

piece

of

software

if

you

go

and

you

click

on

that

link

right

now,

you're

probably

going

to

cause

it

to

fall

over

because

I've

never

actually

had

a

room.

I

This

big

going,

click

on

it

so

feel

free.

It

actually

kind

of

does

work,

I'll!

Warn

you!

The

JavaScript

was

me

learning

JavaScript

over

Christmas

vacation,

so

you

might

get

in

a

situation

where

you

give

me

data

and

I.

Don't

show

you

the

graph

if

it

fails

and

says

that

I

can't

ping,

you

send

back

the

link

to

me

and

I

can

give

you

your

data

if

you're,

if

you're

interested

the

way

that

this

work

is

that

you

know

I

have

a

ping

server

somewhere,

it

has

its

the

clients

and

JavaScript

sends

a

ping

request.

I

The

ping

server

starts

pinging,

then,

after

a

delay,

I

start

downloading,

actually

I

think

I

start

downloading.

The

Hubble

Deep

Field

from

a

CDN

somewhere

I

keep

pinging,

and

then

you

know

that

the

thing

server

itself

keeps

the

ping

information

it'll

only

actually

ping

the

public

IP

address

from

where

the

request

comes

from,

because

you

don't

want

to

turn

this

into.

Essentially,

a

botnet

and

I

left

this

up

and

ran

a

you

know,

kept

this

running

and

updated

stuff

from

just

before.

I

33%

of

these

networks

always

block

ICMP,

and

you

know

so

7/8

of

of

definitely

mobile

network.

So

basically

we

classified

these

by

autonomous

system

number

to

see.

Are

you

an

access

network?

Are

you

a

you

know,

or

your

trust?

Relax

its

network

or

a

mobile

access

network,

see

a

lot

more

ICMP

blocking

in

mobile

access

networks

because

there's,

you

know

generally

some

sort

of

nat

somewhere

along

the

path

on

33%

of

these

networks.

I

There's

no

indication

of

load

dependent

RTT,

which

means

that

last

mile

segment

is

either

there's

actually

not

so

you're

you're

you're

hitting

a

thing

that

is

not

the

congested

queue

on

the

way

down.

There's

a

queue

in

the

way

or

the

queues

are

tuned

in

such

a

way

that

you

don't

actually

have

buffer

bloat

and

we're

in

a

remote

load

telemetry

which

might

work

on

about

14%

of

these

networks.

So

you

know

what

this

looks

like,

so

you

know

we

start

pinging.

Here

we

stopped

pinging

there.

I

I

It's

not

maximum

maximum

load

I'm

only

seeing

300

seconds

of

variance

there

as

opposed

to

800

milliseconds,

and

this

is

a

very

easy

signal

to

sort

of

see

so

coming

out

of

that

you

know,

recommendations

for

protocol

design,

remote,

local

imagery.

Anyone

who

can

ping

you

from

anywhere

in

the

internet

can

measure

Network

activity.

I

will

leave

why

this

might

be

a

thing

that

you

don't

want,

isn't

exercise

to

you

the

audience

and

you

can

take

away

sort

of

like

two

bits

of

advice

here.

I

I

That

will

fix

it

great

and

there's

at

least

anecdotally

one

of

those

lines

where

everything

is

good,

that's

how

they

fixed

it

right

like

because

I

know

that

guy

who

built

the

network

bad

advice,

you

could

also

just

roll

out

CG

and

everywhere

and

block

up

block

ICMP.

That

would

also

fix

this

and

other

forces

are

causing

that

to

happen

as

well.

So

with

that

I'm

done

and

we'll

take

questions,

how

much

time

do

I

have.

I

The

pattern

of

I'm

doing

a

bulk

download

looks

somewhat

different

than

the

pattern

of

I'm

doing

adaptive,

bitrate

multi-streaming.

So

so

there's

there's

a

difference

between

web

web

and

netflix

and

chill'

on

this

graph.

Now,

in

this

case,

this

was

ten

pings

a

second.

So

if

I

had

a

competent

network

in

front

of

me,

they

would

probably

actually

report

that

as

abuse

but

I

also

have

800

milliseconds

of

buffering

on

my

cable

modem,

so

I'm

not

sure

I

have

a

copy

competent

network

in

front

of

me.

I.

D

D

E

I

Could

also

do

that.

The

reason

that

I

did

ICMP

is

because

I

was

using

ICMP

and

the

rest

of

the

study

and

I

wanted

to

wanted

to

compare

ICMP,

ICMP

ICMP

to

ICMP.

Another

reason

not

to

do

I

mean

so.

If

I

seem

pious

block,

you

can

use

TCP,

syn

and

reset

know

you

could

San,

and

then

you

get

a

reset

if

there's

no

server

yeah

right,

the

TCP

Center

reset

gives

you

the

gives

you

that

the

I

mean

really

that

the

short

answer

is

I

was

lazy.

J

Giovanni

said,

the

end

yeah

thanks

for

the

presentation

coming

back

to

the

the

big

thing

I

think

this

stuff

and

just

settle

it.

The

good

thing

about

paying

is

just

like

it's

less

blocked

in

other

applications

as

well

as

less

invasive,

so

there's

the

good

side

of

it.

The

bad

sides,

as

many

networks

treat

things

differently.

They

put

like

in

great

look

exactly.

I

I

Something

doesn't

hold

and

actually

I'm

I'm

gonna

take

Stefan's

idea

and

actually

hack

up

the

tooling

to

be

able

to

do

this

in

RCT

hack

as

well,

because

that'll

that

should

go

through

the

same

queue

in

ways

that

that

ICMP

won't

and

if

I

rerun,

that

on

the

networks

that

I

already

have

information

on,

then

that

could

I

could

see

if

I

can

get

any

out

of

that

no

indication

of

load

dependent,

Artie

T

space

thanks.

That's

good

good

advice,

I.

T

Wes

heard

occur

is

I

with

respect

to

the

privacy

problem,

so

in

and

ESS

the

DNS

workshop

this

year,

I

showed

that

just

by

studying,

DNS

packets

leaving

my

house

I

could

determine

things

like

sleep-wake

cycles

and

great

great

stuff,

like

that.

So

this

sort

of

actually

augments

that

even

further,

because

you

know

certainly

we

all

stream

Netflix

starting

at

8:00

p.m.

at

night

and

rates

when

we

watched

most

of

our

bandwidth

and

well

notice

when

you're

not

at

home

and

things

like

that

and

when

you're

at

work

and

and

this

those

do

become

important.

T

I

I

T

I

I

G

K

V

A

W

Thank

You

Mia

hi

everyone,

I'm

clearance

at

Lee,

I'm,

a

PhD

candidate

at

Technical,

University

of

Munich

and

I'll

talk

about

a

quite

specific

and

quite

technical

topic

today,

which

is

the

aliasing

ipv6

hit

lists

and

I'll

briefly

get

into

what

precisely

that

even

means.

It's

based

on

two

papers

that

I'm

listing

for

completeness

or

if

you

want

a

background,

go

to

these

and

have

a

look

and,

of

course,

it's

as

everything

academic.

W

It's

joint

work

with

Alabama

New,

York,

Pawel

cars

in

much

Steven,

Luke

and

Georg

again,

so

I

do

a

lot

of

security

scanning

in

the

internet

and

IP

before

that

has

become

fairly

easy

recently,

because

you

can

simply

ping

all

the

addresses

in

ipv6

that

doesn't

work.

The

address

space

is

just

too

large,

so

we

are

back

to

using

hit

list

what

we

did

for

ipv4

10

years

ago

to

have

this

hit

list.

There's

basically

two

approaches.

W

One

is

first,

you

need

to

collect

addresses

from

whatever

source

you

can

get

DNS

passive

observations

whatever

and

there's

also

some

papers

that

then

use

these

lists

to

generate

more

addresses

like

learn

the

structure

of

IPS

in

those

lists

and

generate

new

IPs

and

see

if

they

respond

and

there's

plenty

of

related

work

going

on

so

I'm.

Really

not

the

only

one

doing

something

in

that

space

and

the

question

is

truckers-

is:

are

these

lists

biased?

W

We

call

this

aliases

because

it's

one

IP

address

and

or

many

IP

addresses,

and

just

one

host

for

which

you

have

many

aliased

addresses,

so

you

can

call

it

an

alias

prefix

of

all

the

IPS

in

that

prefix

belong

to

the

same

host,

so

just

a

brief

intro.

So

this

is

what

our

current

ipv6

hit

list

looks

like

it's

also

published.

W

So

if

you

want

I

P

addresses

to

here

there,

it

consists

of

many

components

like

domain

lists

in

DNS

domain

list

from

certificate

transparency,

but

also,

for

example,

running

trace

routes

to

all

the

IPS

and

finding

router

IPS

in

between.

So

let's

just

a

brief

overview,

we

have

around

50

to

60

million

of

IP

addresses.

The

question

is

how

many

of

these

are

real

and

not

just

aliases,

so

the

state

of

the