►

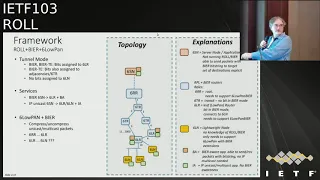

From YouTube: IETF103-ROLL-20181105-0900

Description

ROLL meeting session at IETF103

2018/11/05 0900

https://datatracker.ietf.org/meeting/103/proceedings/

A

A

Yeah:

okay:

we

have

new

new

tickets

consequence

of

the

last

meeting,

so

if

we

need

a

new

version

of

6550,

like

so

I

think

every

per

observation

drive

is

going

to

help

to

us

for

that.

And

if

we

need

a

new

mode

of

operation

for

ripple

and

if

we

have

to

include

this

lbr

us

into

the

dock

route

and

I

think

we

should.

A

B

B

So

so,

this

is

about

the

whole

idea

of

using

its

strings

as

addressing

in

role,

and

here

is

a

little

bit

the

marketing

slide

of

what

we

are

trying

to

achieve

high

level.

You

know

as

something

to

sell

it

to

a

user,

so

the

design

team

has

the

following

email

address.

So

if

you

want

to

contribute

ly

subscribe

to

it

and

a

little

bit

too

small

tools

to

see

it

completely,

but

let

me

quickly

try

to

summarize

what

the

goal

is.

B

C

B

B

C

B

I've,

just

you

know,

introduced

in

some

slides

down

the

terminology

for

the

components

but

yeah

we

I,

don't

know

what

the

right

terminology

is

at

the

application

side,

so

yeah,

so

that,

basically,

would

be

one

of

the

things

we've

never

done

in

in

in

beer

working

group

proper

so

far,

which

is

bringing

the

ability

to

set

bits

up

to

the

application

level,

and

especially

here

in

these

control

applications

where

the

control

software

would

like

to

be

able

to.

You

know

for

individual

commands

very

quickly

changed

the

set

of

receivers.

E

D

E

Level

great

Shepherd

Cisco

on

behalf

of

the

veer

working

group

Taurus,

you

mentioned

that

we

haven't

specified

in

position

in

the

application

layer

within

the

working

group,

architectural

II.

It's

always

been

the

intent,

so

I,

don't

think,

there's

anything

omitting

that

if

you

think

we

need

to

do

something

document,

something

in

a

way

that's

unique

to

what

the

architectures

not

cover.

Please

bring

that

up,

because

there's

a

lot

of

work

in

that

space

right

now

not

necessary.

E

E

B

The

way,

much

more,

fundamentally

and

I

think

I

forgot

to

put

this

into

the

slides,

but

I

wouldn't

like

to

have

role

do

any

things

that

should

better

be

done

in

the

beer

working

group.

So

anything

on

this

I

level

for

the

application

or

so

I

think

should

not

be

specific

to

role.

But

let

us

in

the

design

team

I'd

rather

get

far

along

to.

C

E

C

B

B

You

know

how

you

build,

what

is

a

controlling

server

and

then

these

client

devices

so

yeah,

okay,

so

that

was

basically

the

idea

to

to

market

the

scope

of

the

work,

but

it's

not

only

multicast,

but

it's

also

meant

to

support

the

efficient

transmission

of

unicast

and

then,

of

course,

the

cool

thing

coming

over

from

the

beer

working

group

is.

We

don't

have

to

bother

about

the

constraints

of

working

in

Asics

that

support

terabits

of

bandwidth,

rather

the

opposite.

B

B

Then

the

root

node.

That

would

basically

be

one

of

the

roles

of

these

blue

boxes,

which

are

the

ripple

routers,

so

6rr

as

a

proposed

term

for

the

root

60

r

would

be

a

transit

note

that

doesn't

necessarily

have

to

have

a

in

the

the

beer

string,

so

it

wouldn't

necessarily

have

to

be

a

receiver

in

beer.

This

is

just

called

a

VFR

BIA,

forwarding,

router

and

then

6lr

would

be

the

leaf.

B

The

receiver

router,

which

has

a

bit

which

can

act

as

a

receiver,

which

you

can

explicitly

address

and

then

also

Pascal,

brought

up

the

need

that

we

also

want

to

support

even

more

lightweight

notes

that

wouldn't

be

part

of

the

routing

domain.

That

would

just

be

hosts

receivers

that

just

would,

and

that

I

think,

is

the

conclusion

that

we're

reaching

so

far

we'll

just

be

receive

able

to

receive

IP

multicast,

but

wouldn't

have

the

feature

of

being

directly

addressed

by

bits.

B

So

that's,

basically

you

know

the

current

state

of

the

overall

architecture,

and

then

that

means

there

are

two

type

of

applications

right.

One

is

the

bureau,

where

application

that

does

know

its

address

through

a

bit

and

then

basically

the

applications

here

that

would

only

be

able

to

see

IP,

multicast,

Greg.

E

B

B

E

B

B

B

But

on

the

forwarding

side

really,

maybe

we

could

think

about

it

in

this

simplified

manner,

where

no

distinction

between

I

actually

think

I

missed.

One

update

here,

I,

think

I,

said:

okay

yeah,

so

I

thought

I

had

written

something

here,

but

so

the

the

interesting

idea

here

was

that

we

may

not

need

any

distinction

in

the

forwarding

plane

between

the

te

notion

and

the

native

beer

notion,

because

in

the

end

the

only

thing

we're

trying

to

do

is

you

have

a

bit

for

that

bit.

You

are

trying

to

figure

out

where

to

send

a

copy.

B

What

is

your

next

hop?

The

question

is:

how

is

the

next

hop

calculated

right

in

the

beer

te

model?

The

next

hop

comes

from

an

explicit

assignment

in

the

controller

and

would

be

different

on

every

node,

whereas

in

beer

proper

it's

calculated

locally

from

the

routing

table.

The

next

hop

right

so

that

that

fundamentally

means

that

the

control

plane

could

be

different,

but

hopefully

we

can

come

up

with

a

forwarding

plane,

which

we

call

you

know

beer

forwarding

table

that

wouldn't

have

to

distinguish

between

beer

and

beer

tea.

B

That's

detailed

in

in

the

further

slides,

so

I've

basically

put

these

resets

bit

in

here,

but

we

also

know

we

can't

do

the

reset

of

the

bits

when

we're

compressing

the

bit

string

in

a

lossy

fashion.

So

here

we

wouldn't

have

it,

and

the

question

also

in

the

non

compressed

forwarding,

is

whether

we

want

to

have

this

reset

bit

mask

because

it

maybe

if

we

don't

compress

it,

it

may

be

fairly

expensive

right.

So

if

we

have

a

bit

string

table

of

256

bit,

let's

say

this

is

kind

of

here

the

example.

B

Then

every

forwarding

entry

would

need

to

have.

Of

course

they

could

be

compressed

right.

But

if

we

look

at

uncompressed,

we

have

256

by

256

bit

for

the

reset

bit

strings

for,

for

these

fbms

forwarding

bit

masks

which

are

basically

resetting

the

bits,

and

maybe

that

is

too

much

state.

So

we

don't

want

to

have

it

from

the

state

perspective

now

they

could

be

very

well

compressed

right

so,

but

in

any

case,

this

is

kind

of

the

current

state

of

the

slide

of

a

slider,

not

right

Pascal.

Yes,.

F

B

F

And

then

the

benefit

of

Greg

being

here

and-

and

you

know

the

draft

right-

we

have-

we

have

another

draft

which

has

nothing

to

do

with

this

work,

also

written

so

far,

which

is

about

in

the

tea

world,

managing

duplicates

and

replication

and

finding

out

which

transmissions

failed

and

that's

going

to

be

very,

very

useful.

In

the

wireless

space

I

mean

you've

presented

the

drafted

beer,

I

guess

III

had

a

collision

but

yeah

yeah

and

and

so

actually

Thursday.

F

We

laughs

a

bar

buff

that

I

could

power

for

predictable

unavailable

Wireless,

and

this

is

the

sort

of

work

which

would

be

useful

for

both

the

bath

years

at

eleven,

and

we

don't

have

a

room

yet.

But

if

you

want

to

attend

because

you're

not

I

own,

the

data,

at

least

I,

don't

think

so.

I

copied

six

I

copied

six

lo

I

copied

that

Matt.

Now,

if

she

just

dropped

me

an

email,

you

want

to

see

what

Paul

is

doing.

F

B

Let's

I

mean

they're

bad,

and

these

are

you

know

not

really

finished

slides

for,

for

these

are

working

slides

right

by

the

way

there

there

is

a

version

update

missing,

so

I

had

already

replaced

all

6lowpan

with

six

low

RH

in

in

the

slide

deck

that

I

thought.

I'd

sent

you

so

sorry

for

for

the

mistake.

So

basically

the

kind

of

encapsulation

that

we're

planning

to

use.

B

B

B

Right

so

I

I

think

the

question

of

where

do

we

have

duplicates

and/or

loops

right

so

in

the

in

normal

I

GPS

we're

saying

we

have

micro

loops

right

so

and

we

wanted

to

avoid

creating,

duplicates

and

problems

there

with

with

the

reset

right.

So

you

say

Pascal:

are

you

saying

that,

for

example,

because

of

the

way

a

ripple

works,

we

would

never

have

micro

loops.

F

Let's

get

in

summary,

yes

practice

in

ripple,

we

have

a

additional

header

which

allows

us

to

to

track

if

you're

going

up

or

down

the

structure,

and

we

have.

We

have

this

rule

which

says

that

if

you're

going

down,

you

cannot

go

up

again

and

even

if

the

nodes

are

not

in

sync

in

the

routing

table,

they

still

have

a

sense

of

up

and

down

and

the

mismatch

is

found,

and

so

the

packet

will

never

move.

F

F

F

Yes,

I

mean

the

bits:

the

bits

is

kind

of

a

match.

Right,

you've

got

some

bits

which

might

match

next

a

child,

and

so

you

go

through

your

children

and

you

kind

of

and

the

bits

which

identify

this

next

hub.

So

all

the

routes

which

goes

through

this

guy,

if

it's

during

mode

or

this

thing,

if

it's

non

storing

mode

and

and

you

it

could

be

a

blue-

and

so

you

add

the

back

yet

and

this

thing

and

then

gives

you

whether

this

child

or

not,

is

next

time

right.

B

F

B

F

F

In

storing

mode

in

non

storing

mode,

do

you

have

a

bit

per

interface,

which

is

well

anyways

for

adjacency

right?

So

so,

without

the

same

wire

or

wireless?

Or

if

it's

multiple

different

interface,

you

don't

really

care.

You

have

a

bit

per

adjacency

a

bit

a

bit

mass

progestin,

see

in

non

stirring

that

bit

mask

will

be

basically

the

bloom

filter

of

this

link

or

the

bit

of

this

link.

F

If

you

are

not

using

bloom

filters

and

in

storing

mode,

it's

going

to

be

the

collection

of

all

the

bits

of

all

the

leaves

which

are

somewhere

down

the

dyrdek

via

this

guy

anyway,

you

take

the

bit

mask

the

bits

in

your

packet.

You

end

that

with

the

stage

here

for

that

child,

if

the

and

operation

is

not

a

zero,

you

have

a

match.

If

you

have

a

match,

your

four

okay,

so.

F

C

B

So

yeah

so,

but

this

was

the

slide

that

I

was

missing

right,

specifically

just

to

figure

out

if

I

have

the

complete

understanding

of

the

documents

that

have

been

written

for

the

scope

of

this

work

here

right.

So

a

Pascal

has

the

you

know

his

proposed

architecture,

purely

the

beer

and

row

level,

not

the

you

know:

IP

multicast

overlay

Carsten

has

the

role

C

cast,

which

talks

purely

about

the

solution

assuming

IP

multicast,

on

top

of

it.

B

For

the

bloom

filters,

there

is

a

Pascal's

beer

dispatch

in

6lo,

which

talks

about

the

six

low

RH

extensions

for

beer

to

the

best

of

my

understanding,

and

then

the

unaware

leaves

is

I.

Is

that

related

to

basically

these

these

notes

that

are

supposed

to

not

be

doing

so

this

this

I

think

starts

this

work.

It's

not

meant

to.

You

know,

define

right

now

the

multicast

part

of

it

yeah,

so

so

that

would

basically

be

the

part

of

these

leaves

that

should

be

very

lightweight.

B

B

Right

so

there

a

lot

of

things

here

and

I

think

I

was

trying

to

yeah,

so

we're

trying

to

continue

limit

the

work

scope

by

eliminating

below

the

line

or

it

doesn't

work,

options

and

I

have

more

detailed

slide

on

those,

so

I

think.

First

consideration

was

even

when

we

want

to

address

receivers

directly

with

bits

from

the

server

application.

B

We

could

still

put

everything

into

an

IP

multicast

header,

because

that

one

would

be

compressed

by

six

low

RH

anyhow,

and

you

know

we

already

have

socket

API,

so

it

helps

a

lot

if

we

don't,

at

this

point

in

time,

try

to

figure

out

how

to

receive

native

beer

packets

that

don't

have

IP

multicast

in

them.

I

was

first

afraid

of

the

overhead

of

IP

multicast.

B

We

don't

need

it,

but

you

know

that

would

be

the

proposal

of

just

thinking

that

the

stack

always

has

an

IP

multicast

header

and

that

were

appropriately

compressing,

that

with

a

six

low

RH,

then

we

did

discuss

to

an

extent

how

we

can

basically

send

packets

optimally.

I

I

was

thinking

fake,

IP,

packets

or

we

don't

have

an

IP

multicast

destination

and

we

figured

out

that

we

don't

want

to

do

it

right.

So

remember

all

these

discussions

that

that

we

had

when

we're

multi

casting

a

unicast

packet

and

figured

out.

F

G

B

F

B

B

C

B

F

Beer

is

critical

for

storing

mode

because

it

allows

you

to

save

a

lot

of

state.

I

mean

it.

Basically,

it

allows

storing

mode

in

constrained

devices.

I

mean

Kirsten

has

been

telling

us

so

many

times

that

he

has

never

seen

any

storing

mode

ripple.

Network

in

IOT,

right,

I've

seen

them

in

non

iut,

because

it's

a

rotting

protocol,

you

can

use

it

everywhere.

We

ship

it

with

storing

mode

with

Cisco,

but

in

in

IOT.

You

usually

don't

see

it

because

of

the

load

of

information.

You

have

to

store.

F

F

G

Michael

Richardson,

so

if

I

understood

Pascal,

what

you

said

is

that

beer

reduces

the

cost

of

routing

entries

in

storing

mode

to

such

that

it's

now

low

enough

that

it's

maybe

workable,

but

it

doesn't.

It

does

not

change

the

order

in

dependency

that

storing

mode

has

it

just

reduces

the

constant

significantly,

not

not

not

true.

G

F

You

still

need

to

have

information

per

leaf,

but

the

point

is:

if

you

have

sized

your

bitmap

to

say

256

just

for

this

example,

because

you'll

know

your

network

is

going

to

be

256

or

something,

then

you

need

one

of

such

bitmap

per

child,

regardless

of

how

many

leaves

you

have

now,

you

cannot

have

more

than

256

leaves

because

that's

the

size

of

your

bitmap

right.

If

you

want

to

go

beyond

that,

then

then

you

you

have

the

she

want

some

elasticity.

F

F

C

I

just

wanted

to

point

out

two

things:

there's

a

small

state

of

end

here,

because

by

limiting

the

thing

to

256,

of

course,

we

make

story

mode,

much

more

manageable

and

then

by

reducing

the

state

by

a

factor

factor

of

64.

We

also

gain

something.

The

other

answer

is

the

bloom

filter

is

one

important

parameter

for

room

filter

is

how

many

members

you

actually

have

in

the

whole

group.

So

if

you

have

sparse

groups,

you

can

address

the

whole

network

with

a

pretty

small

filter.

C

B

Let's

go

back

so

let

me

say

what

I

just

want

to

make

sure

is

that

for

every

option

that

we're

saying

we

want

to

work

on,

let's

make

sure

we

have

sufficiently

agreed-upon

value

proposition

right

and

I

I

think

this

discussion

was

good.

It

I

think

showed

that

that

is

necessary

and

that

I

think

we

should

reconverge,

for

example,

on

exactly

the

value

proposition.

Pascal

claims

for

the

beer

mode,

so

I

I

had

started

here

to

kind

of

look

at.

You

know

the

stacking.

B

How

this

stuff

works-

probably

not

correct

here,

but

this

is

basically

what

what

what

what

I

think

we

would

need

to

work

out.

So

this

was

the

IP

multicast

overall

beer

to

ward,

in

this

case

it

6ln

right.

So

in

this

case

we

have

the

server

application.

I

mean

in

this

section.

That's

saying:

IP

multicast

should

mean

SSM

really

so

then,

basically

there

should.

We

should

be

able

to

have

a

user

level

SDK

that

the

whole

stuff

can

be

written

at

user

level

that

you

know

on

the

application

server.

We

can

just

send

things

into.

B

Let's

say

UDP

tunnel,

which

is

typically

the

encapsulation.

We

can

do

at

application

layer

that

would

have

to

end

up

at

the

root

node

and

then

from

there

on.

We

would

have

to

hop

by

hop

forwarding

beer,

Purity

and

then

basically

on

the

6lr

node,

where

the

bit

is

set.

It

would

basically

be

uncompressed

must

be

compressed

as

a

non

bit

string.

Multicast

packet

again

gets

into

the

six

an

application

right,

so

that

was

kind

of

a

little

bit.

B

The

starting

point

of

these

are

the

type

of

diagrams

I

think

we

need

to

work

through

the

end-to-end

solution

of

the

stacking

of

pieces

and

to

me

the

core

part

is

really

also

understanding

the

six

low

RH

kind

of

how

small

these

headers

can

become

with

the

bit

strings

and

confessing

the

IP

multicast

overhead

away.

So

the

unica

stuff

wasn't

done

this

worse.

All

these

consideration

I

think

running

out

of

time.

B

These

were

the

things

that

we

think

wasn't

working

and

yeah,

so

I

basically

started

to

write

down

the

multicast

layer

and

yeah,

so

yeah

so

way

too

much

text,

but

I

think

one

of

the

discussions

were

having

in

the

multicast

architecture

sat

in

the

ITF

overall

is:

can

we

do

things

more

efficiently,

better

with

SSM?

Only

and

yet

now?

Obviously

that's

you

know

one

of

the

things

I

wanted

to

review.

B

C

C

Brought

that

so

the

price

like

there

would

be

different

application

servers

the

direction.

That's

that's

why

you

now

can

steal

that

and

use

it

for

something

else,

which

is

an

interesting

idea.

I

haven't

thought

about

much,

but

basically

the

reason

why

my

recon

says

is

to

solve

the

root

problem

and

since

everything

has

to

go

through

the

root,

we

don't

have

that

problem.

No

I

yep.

B

Well

now

I

think,

but

the

the

rendezvous

point

is

primarily

overhead

in

administration

and

paying

for

the

network

operator.

It's

not

an

application-level

issue

right.

The

application

level

issue

is

kind

of

indicating

which

source

am

I

wanting

to

get

traffic

from

right

now

and,

as

you

said,

we

can

nicely

abuse

that

to

basically

have

the

server

source

discovery

built

from

the

application

level.

That

way.

Okay.

C

B

B

E

Greg

again,

I'm

gonna

avoid

that

last

piece,

but

just

going

back

for

clarification

if

the

intent

with

SSM

is

to

use

it

to

identify

one

of

a

number

of

servers

or

source

discovery.

That

makes

a

lot

of

sense,

but

don't

forget

the

discussions

around

an

RP

or

moot

with

beer.

In

fact,

beer

doesn't

care

of

his

ASMR

SM

and

actually

becomes

effectively.

The

beer

domain

is

like

a

virtual

RP.

E

B

F

F

We

would

not

like

to

see

it

in

the

packet

if

we

can

avoid

it,

because

we

need

to

compress

it

or

if

we

don't

compress

it

sixteen

bits

in

year,

sixteen

bytes

in

year,

so,

for

instance,

having

every

multicast

packet

sourced

at

the

root

for

us

can

be

comprised

with

six,

where

extra

one

bit

right,

because

it

comes

from

the

root

bit.

You

know

so

that's

very

efficient.

If

every

multicast

packet,

which

is

compressed

this

way,

can

be

sourced

at

the

root

and

this

nation

set

of

bits,

then

we

have

the

most

efficient,

efficient

compression.

F

B

Argument

would

be

that

the

amount

of

state

that

I

think

I

need

here

in

the

last

hop

ripple.

Router

is

the

same.

That

I

would

need

for

the

clients

having

unicast

IP

traffic

to

a

particular

application

server.

So

if

to

you

know

improve

the

performance

of

the

system,

all

these

application

servers

should

use

the

IP

address

of

the

root

node

so

that,

basically,

you

don't

have

to

keep

state

for

new

IP

unicast

addresses

that's

fine

with

me

that

doesn't

change

the

concept

right.

H

H

B

I

H

J

J

So

this

is

the

first

one

I'm

presenting

so

one

of

the

one

of

the

major

changes

that

has

been

done,

or

one

of

the

thing

that

has

been

explained

more

is

related

to

how

to

handle

multiple

parents

so

ripple.

Has

this

notion

that

you

can

have

multiple

routing?

You

can

send

the

same

Dao

to

multiple

parents

and

in

such

a

case,

how

will

DC

you

or

the

D

COO

handle

the

route

invalidation?

This

is

explained

with

an

example,

so

this

is

the

major

update

other

updates.

J

J

Then

there

are

some

clarification.

The

d

KO

sequence

number

choosing

the

initial

value.

Some

terminology,

especially

the

require

that

order

into

link

failures.

So

this

is

this

is

the

section

titled

we

had

used

and

this

caused

a

lot

of

confusion.

We

had

to

reword

the

whole

thing

based

on

the

comments

from

Peter

and

George's.

J

J

J

So

this

is

the

draft

which

talks

about

most

of

the

most

of

the

observations

that

we

had

while,

while,

while

developing

a

solution

around

ripple

for

metering

solution,

having

said

that,

it's

not

now

limited

only

to

the

matrix

metering

solution,

but

more

more

other

problems

as

well.

So

this

working

this

document

was

out

of

10,

but

we

have

clearly

mentioned

in

the

document

that

maybe

this

document

might

not

get

published.

E

J

This

can

explain

this

explains

the

problem

in

more

details

like

it

gives

tech

wise

explanation

of

what

the

problem

is.

Okay,

so

there

had

been

a

big

discussion

on

whether

DTS

n

is

a

lollipop

counter,

or

no

most

of

the

things

I'm

going

to

repeat

from

the

last

presentation

so

yeah.

This

is

in

continuation

to

what

we

had

presented

before

whether

DTS

n

is

the

lollipop

counter

or

no

now,

implementations

are

clearly

confused

about

it.

J

We

have

two

implementations:

making

use

of

different

I

mean

different

notions;

basically,

quantity

is

using

DTS

and

as

a

value

pop

counter

and

Wright

is

not,

and

this

is

going

to

I

mean

this

will

have

issues

on

interoperability.

So

we

had

a

big

discussion

on

the

mailing

list.

Eventually,

we

realized

that

maybe

DTS

n

could

be

a

lollipop

counter

or

there

are

pros

and

cons

with

each

of

the

approach.

J

The

primary

problem

with

using

a

lollipop

counter

is

that

within

the

linear

part

of

this

lollipop

country,

you

have

to

maintain

the

state

in

a

persistent

storage.

Now

this

this

problem

is

in

general,

with

all

the

lollipop

counters,

including

DTS,

into

our

sequence,

or

not.

Now

sequence

is

not

a

lollipop

photo,

but

all

the

other

sequence

numbers.

The

problem

is

for

the

linear

part.

You

have

to

maintain

the

state

in

the

persistent

storage.

Now

you

have

a

application,

which

is

a

very

minimalistic

application

and

you

just

are

hosting

that

application

in

a

mesh

network.

J

J

Okay,

so

the

next

slide

is

about

the

DTS

in

so

we

had

a

discussion

right.

The

primary

problem

with

non

storing

mode

is

the

memory

efficiency

on

6

l

RS,

but

there

are

other

issues

that

we

found.

We

observe

while

deploying

storing

mode

of

operation

and

DTS

intends

to

be

one

of

the

major

I

mean

the

way

DTS

Sinha

is

handled,

eventually

decides

what

kind

of

control

overhead

you

have

in

the

network,

so

DTS

in

is

Dao

trigger

sequence

number.

J

Essentially,

what

it

means

is

whenever

a

new

DTS

end

is

received

by

the

6lr,

it

will

in

turn

generate

its

6

lr

6

7.

It

will

in

turn,

generate

its

own

Dao.

So

what

happens

is

when,

in

case

of

parent

switching

node

switching

should

the

DTS

end

be

incremented

should

so

the

first

implementers

dilemma

is

that

should

the

DTS

and

be

incremented

with

ability

I

have

to

cut

people

timer.

J

If

it

is

not

incremented

with

regards

to

DI

electrical

timer,

then

we

don't

have

enough

doubt

redundancy.

Now.

There

is

another

problem

with

regards

to

doubt

that

the

acknowledgement

mechanism

of

dau,

which

I'm

going

to

represent

in

the

subsequent

slide

because

of

that

issue,

we

cannot

really

increment.

We

cannot

afford

to

not

increment

DTS

in

on

every

iota,

and

you

can

see

implementations

struggling

with

that.

J

J

J

So

doing

parents

which

also

the

same

procedure

the

primary

problem

with

doing

parents

which,

if

the

6l

r,

which

is

switching

if

it

increments

a

DTS

in

the

question,

is

other

sellers

downstream,

should

also

increment

it

or

not.

If

it

don't,

if

they

don't

increment,

then

how

how

how

would

the

route

updation

for

all

the

subdued

attack

take

into

a

will

be

handle

now?

J

F

F

So,

even

if

you

don't

increment

the

DTS

and

each

time

you

Treecko,

if

you

don't

do

that,

then

you

have

the

expectation

that

anyway,

the

children

soon

enough

on

their

own

will

decide

to

trigger

her

dau.

So

the

the

basic

spec

doesn't

tell

you

that,

and-

and

it's

probably

because

we

we

could

not

agree-

and

we

really

did

not

know

if

there

would

be

use

cases

for

repo

where

the

push

model

that

the

child

pushes

the

down.

F

Theoretically,

whenever

it

likes

or

the

pool

model

where

the

parent

pulls

with

a

new

DTS

and

all

the

dolphin,

the

children,

which

one

was

the

right

model

for

which

use

case,

we

were

not

clear

right

ripple

was

there

before

we

had

enough

experience.

So

we

left

that

open.

So

what

role

is

telling

us

is

is

now

we

need

to

be

more

specific

Oh.

Is

there

a

type

of

shoes

case?

Well,

one

applies,

the

other

applies,

what

benefits

of

each

model

etc.

I

mean

ripple,

is

fat

enough

like

this.

F

We

didn't

have

much

text

on

that,

but

this

is

a

great

great

discussion

to

have,

and

you

know

at

some

point

ripple

will

be

implemented

in

some

vertical

standards

like

I,

don't

know

TVA

or

whatever

else,

and

and

when

you

implement

in

RFC

in

an

Aryan

standard,

then

you

actually

say

I'm

using

this

feature

this.

It

show

this

teacher

like

this,

so

they

will

design

is

like

they

will

have

to

decide

if

they

want

to

push

pool

or

to

both

what

kind

of

timers

you

have

for

the

sending

down.

F

G

So

Michael

Richardson

here

and

we

have

microphones

I,

didn't

we

had

local

AV,

so

so

we

could

have.

We

should

have,

as

Pascal

just

said,

some

things

I'm

not

really

fond

of

alliance

profiles,

because

I

just

I

just

think

they're

dumb,

but

we

have

applicability

statements

that

we

wrote

and

clearly

this

was

something

we

should

have

a

should

have

covered

about

push/pull

and

this

kind

of

stuff.

G

If

that

was

a

clearly

articulated

option

and

I,

don't

think

in

65

50,

that's

clearly

articulated

that

there's

two

models:

rather

here's

some

here's,

some

tools

put

them

together

in

some

way

right.

So

I've

advocated

this

before

and

a

kind

of

bit

lost

as

to

why

we're

not

further

down

and

I

said

that

to

you

last

week

right.

This

should

be

a

draft.

We

were

talking

about

leaving

observations

as

a

collection

and

I

would

really

like

you

to

pull

that

text

out.

G

G

To

revise

it

and

I

mean

if

we

were

to

do

Biss.

Sorry,

one

of

the

things

you

probably

would

rip

out

is

all

the

security

part,

because

no

one's

implemented

it

okay.

However,

that

statement

is

no

longer

true.

I've

learned

that

someone

has

implemented

that

the

security

parts

of

ripple-

and

so

that's

kind

of

interesting,

so

so

I'm

glad

we

haven't

started

this

because

we

would

have

no

I

think.

G

G

J

J

One

is

the

hop-by-hop

acknowledgement,

so

in

this

case

the

acknowledgement

is

immediately

sent

by

the

receiving

6lr.

There

is

a

so

what

happens

is

if,

if,

if

the

acknowledgement

is

sent

here

and

then

the

acknowledgement

is

sent

above

like,

for

example,

in

one

sense,

a

two

border

router

or

some

other

6lr,

if

it

gets

rejected,

then

there

is

no

way

of

informing

the

the

6lr

no

downstream,

whether

that

the

dow

has

been

rejected.

So

there

is

no

way,

so

this

is

much

much

easier

to

implement.

J

The

primary

reason

why

dow

AK

is

needed

is

so

that

an

application

on

the

node

or

the

node

knows

that

it

has

connected

in

the

in

the

whole

network

and

that

it

can

start

its

application

traffic.

What

I'm

trying

to

say

is

the

current

action

acknowledgment

mechanism

doesn't

help

you

with

that,

and

that

there

has

been

a

lot

of

debate

on

this,

but

so

so

kontiki

initially

implemented.

This

then

went

on

to

this

style

of

aking,

which

allowed

like

and

tools

in

the

Dow

to

N,

1

and

N.

J

1

won't

respond

back

with

an

acknowledgment

immediately.

It

will

wait

for

the

above

upstream

parent

to

send

back

the

acknowledgement

and

then

send

an

acknowledgement

in

response,

so

this

model

is

okay,

but

it

has.

It

has

issues

like

in

terms

of

it

has

to

maintain

state.

It

has

to

maintain

state

for

a

long

time,

and

that

is

that

is

costly.

J

Another

employee

interpretation.

Now

this

the

the

this

discussion

I

had

with

Pascal.

So

one

of

the

things

that

he

mentioned

was

that

whenever

an

upstream

node

takes

up

a

Tau,

it

accepts

the

responsibility

of

pushing

that

Dao

all

the

way

upstream

well,

and

if

it

sends

an

acknowledgment

downstream,

it

means

that

it,

the

Dow,

will

reach

upstream

well.

Well

it

the

the

problem

with

this

approach

is

that

if,

if

there

is

a

negative

acknowledgment

anywhere

upstream,

this,

this

node

doesn't

know

that

there

will

be

a

negative

acknowledgment

upstream.

F

Right

so

two

things:

first

I

try

to

get

from

the

kontiki

people,

but

they

are

not

in

the

room

right

now

why

they

did

that

they

said

it's

a

hack.

They

made

for

a

particular

customer

so

yeah

because

they

are

actually

shipping

their

code

and

they're,

actually

products

being

built

out

of

that

and

I

think

this

one

was

for

intelligent

plugs

or

something

and

they

they

really

wanted

to

have

like

very

quick

acknowledgment,

and

it's

a

very

popular

use

case

where

this

behavior

kind

of

works.

F

It's

a

dairy

case

I,

don't

believe

it

would

work

just

because

of

scalability,

your

timers

or

all

those

things

right.

So

don't

I,

don't

think

it's

right

to

say

that

kontiki

understood

this.

It's

just

that

they

knowingly

did

that

hack

for

a

particular

use

case

right.

It

was

actually

better

for

them

easier

and

just

do

the

trade

more

nicely

right.

So

the

design

in

the

left

is

the

official

one

in

repo

and,

like

you

said

you,

the

official

thing,

is

you

take

responsibility,

meaning

you

take

it

to

the

end?

F

If

you

can't,

if

you,

if

you

push

it

to

your

parent

and

your

parent,

cannot

accept

that

you

have

to

repair

it

yeah

and

if

you're

completely

broken,

then

you

you

have

to

poison,

but

so

so

it's

it's

wrong

to

say

that

the

child

will

never

know

either

the

parent

will

and

all

the

responsibility

and

push

it

or

it

will

detach

it

might

find

a

new

parent

and

push

it

through

that

new

part,

and

even

if

that

fails,

then

it

will

completely

have

to

detach,

because

now

it

cannot

end.

Oh

it's

rotting

right.

F

J

F

E

F

J

F

J

F

Repo

doesn't

give

you

that,

so

you

know

that

the

state

is

progressing

through

the

network.

You

don't

know

if

it

reached

the

destination,

so

we

discussed

about,

and

that

could

be

in

one

of

those

draft

that

Michael

is

talking

about,

but

an

acknowledgement

by

the

route.

You

know

all

the

way

down.

Okay,

now

I

get

it

you

could

you

could

add

a

mechanism

like

this?

Maybe

it's

one

of

your

side,

yeah.

J

Okay,

okay,

so

so,

essentially,

what

we

so

the,

although

all

the

observations

that

we

have

on

what

we

have

quoted

is

in

context

to

quantity

all

right.

We

also

have

our

own

private

implementation,

though,

which

which

ran

into

similar

problems,

and

then

we

check

how

open

sources

are

handling

it

and

that's

when

we

actually

went

ahead

and

actually

included

a

lot

of

other

things

that

are

not

handled

properly,

no

processes.

We

have

not

quoted

those,

because

those

are

just

implementation

works.

J

You

know,

so

we

have

not

included

those

points,

so

the

easiest

way

to

handle

this

is

and

without

much

storage

requirement

or

any

state

requirement

is

by

having

route

acknowledged

the

DAO.

This

will

be

much

easier

much.

The

only

problem

with

this

approach

is

that

you

cannot

do

tag

aggregated

acts.

You

can't

aggregate

multiple

acknowledgments

in

the

same

in

the

same

package,

so

you'll

have

to

send

an

individual,

because

this

is

essentially

unicast

traffic

back

from

the

root

to

the

know.

So

that

is

one

of

the

downside.

J

J

There

is

no

clear

explanation,

other

implementations

already,

which

enable

aggregated

targets

by

default

in

the

implementations,

and

it

is

absolutely

impossible

to

get

anything

to

interrupt

the

open

source

implementation

at

multiple

hops,

multiple

hops,

if

you

put

five

notes

on

the

table,

all

the

odds

are

talking

to

the

water

out

of

everything

works.

Fine,

but

the

moment

you

try

to

scale

a

little

bit.

Nothing

works.

That

is

how

it

assisted

a

handling

node

reboots.

Now

this

we

had

a

big

discussion,

lollipop

counter

sort

of

handles

again.

J

The

point

here

that

I'm

trying

to

make

is

lollipop

counter

requires

some

sort

of

persistent

storage,

even

though

it

is

a

little

very

small.

Only

it

is

only

required

for

the

linear

part.

It

still

requires

purses

or

storage.

If

you

want

to

get

a

if

you

want

to

get

a

deterministic

way

in

which

the

node

can

join

the

network,

deterministic

means,

within

a

particular

time

bound

not

like

in

a

few

milliseconds,

but

at

least

in

a

few

seconds

it

should

be

possible

yeah.

J

The

primary

question

here

is:

should

deployment

provision,

persistent

storage

for

network

stack,

even

though

app

does

not

require

any

persistent

storage,

so

this

is.

This

is

a

big

debate

that

we

had

internally

inside

our

deployment

solution,

handling

resource

owner

ability.

Now

this

is

the

this

problem

is

with

I.

Think

this

problem

is,

you

know

we

handed

by

other

drafts.

Now

it's

about

neighbor

table

and

routing

table

getting

full

and

how

to

handle

it.

J

If,

if,

if

the

routing

table

or

neighbor

table

at

an

upstream

node

gets

full,

so

how

would

the

down

sink

node

come

to

know

about

it

and

how

to

handle

all

this

ignorance.

I

won't

go

into

the

details

of

this,

because

there

is

some

work

going

on

in

the

context

of

this

decision.

Should

transit

information

be

optional

now,

currently,

the

transit

information

is

optional.

Transit

information

contains

key

elements

like

path,

sequence

and

the

path

lifetime

parent

address

for

non

storing

mode

of

operation.

It

is

optional.

J

There

is

no

way

parent

address

can

be

an

optional

element

for

non

story

mode

of

operation,

so

it

has

to

be

mandatory.

So

these

are

some

of

the

points

that

you

know.

Aggregate

a

target

container

aggregation

can

be

optional,

but

at

least

the

reception

of

aggregated

doubt

should

be

made

mandatory

so

that

the

implementations

care

to

follow

that

how

to

do

it?

I

mean

this

is

something

that

is

left

to

discussion,

but

this

is.

There

is

some

idea

that

we

have

proposed

here.

J

Maybe

some

of

the

work

will

require

separate

drafts,

like

Michael

mentioned,

even

the

acknowledgement

or

acknowledgement

work

might

be

a

separate

draft

because

it

requires

a

different

is.

It

is

a

different

discussion

for

all

the

other

parts,

some

the

handling

resource

owner

ability.

There

is

already

a

work

in

progress.

I

feel

six

dish,

the

enrollment

around

sort

of

handles

it

for

the

six

dish,

the

rank

priority.

The

same

mechanism

can

be

used

here

in

ripple

as

well,

so

that

it

informs

the

downstream

nodes

whether

there

is

enough

capacity

on

the

upstream

path

there.

J

So

our

plan

was

to

actually

work

on

these

problems.

Get

some

data

statistics

prove

that

the

control

over

it

is

much

large

in

certain

cases

and

then

come

back

to

the

working

group.

That

is

the

way

that

we

had

thought

about.

One

more

thing

that

we

wanted

to

discuss

is

there

are

other

implications

when

it

comes

to

multiple

link

like

use

of

multiple

link.

K

K

So

there

were

some

questions

about

sequence

numbers,

and

that

was

a

clarified

in

the

draft.

There

was

a

sort

of

it's

been

mentioned.

A

couple

of

times

have

requested.

Why

don't?

We

use

the

NotI

version

number

and

D

TSN?

Well,

the

main

reason

was

because

early

on,

when

the

draft

was

being

done,

the

large

part

of

it.

We

didn't

understand

exactly

how

DTS

n

was

supposed

to

work

and

I

asked

a

couple

of

people

and

still

didn't

get

a

full

understanding.

K

But

right

now

you

just

refers

to

the

event

that

the

lollipop

counter

is

done,

the

same

way

as

it's

done

in

ripple,

so

that

that

might

be

good

enough,

but

I

think

we

should

be

sure

about

that

and

then

to

answer

some

other

questions

about

how

the

sequence

number

is

used.

So

the

originating

node

increases.

K

The

sequence

number

same

way

and

whenever

it

wants

to

find

a

new

route

and

that's

in

the

request

d

io

message

also,

our

originating

node

can

put

in

a

target

sequence

number,

which

is

meant

to

say

that

it's

not

interested

in

getting

routes

unless

the

sequence

number

is

greater

than

what

it

already

has

for

the

target

sequence

node,

and

that

is

intended

to

eliminate

the

possibility

of

accepting

route

updates

for

operations

where

the

packets

are

still

somehow

being

forwarded.

In

the

end,

the

network.

K

This

is

all

pretty

much

modeled

on

the

way

Mao

Devi

did

it

in

RFC

35,

65,

35,

61

and

also

iota

V

version

2,

which

is

currently

under

review.

But

so

that's

that's

how

that's

supposed

to

work,

and

then

the

intermediate

routers

also

follow

pretty

much

the

same

sort

of

the

philosophy

they

only

use.

They

only

update

their

route

entries

when

the

sequence

number

is

greater.

And

finally,

the

target

note

includes

its

sequence

number

in

the

route

reply

when

it

sends

a

rail

reply

back

to

the

originating

node.

K

Another

one

thing

that

I

didn't

put

in

a

slides,

but

I

at

least

like

to

mention,

is

that

there

was

in

the

earlier.

There

was

requested

that

a

ODB

ripple

also

enable

handling

of

multiple

targets,

and

that

was

done

by

having

an

AO

DV

ripple

target

option

and

one

of

the

larger

editorial

changes

in

this

version

of

the

draft

was

to

just

instead

of

always

writing

out

a

devii

ripples

target

option.

Now

we

have

the

AR

T

option

so

that

made

some

of

the

text

a

little,

perhaps

easier

to

read.

K

K

K

Question,

okay,

so

the

and

it's

worth

routing

just

this

is