From YouTube: IETF103-LWIG-20181107-1120

Description

LWIG meeting session at IETF103

2018/11/07 1120

https://datatracker.ietf.org/meeting/103/proceedings/

A

B

B

B

B

Then we had an update to the TCP constrained-node networks draft, which was also presented in TCPM. We were hoping to issue a joint working group last call with TCPM, but there were some issues raised, which Carles will present today. So I guess we need another revision of this document before we do the working group last call. Then we had a new working group document adopted, and Rene will present more on this remotely during the meeting.

B

There were no updates to the working group document on lightweight CoAP implementation, so I had some feedback for the authors. Since we have all these new options and extensions in CoAP, like Hop-Limit and Too Many Requests, maybe it would make sense that at least some document tells me which ones I should implement for which scenarios. So if it's a very small sensor, do I need to implement Too Many Requests?

B

Do I need to implement Hop-Limit, for example? So I'm hoping Olaf or Matthias can use this feedback and bring an update of the document to the next meeting. We had no updates to the LWIG security protocol comparison. I guess they are waiting for DTLS 1.3 to stabilize before they come up with the numbers.

B

B

Then we had this draft-ietf-lwig-cellular, which has now been in MISSREF for more than 1,000 days, which is a bit sad. So I discussed with one of the authors, Jari, whether it makes sense (and this is, Suresh, also a question to you) to move all the normative references to informative. This is an informational document. I don't see any reason why we should be stuck waiting for the Resource Directory people to finish their things, and it's not like...

B

D

D

Okay, so if that's something you would like, we can probably just pull it back and do this, and I can talk to the IESG about it. One thing I want to talk about is really normative references in informational documents. I think it's not that straightforward, right? Just saying "this is an informational document, so we don't need normative references." But if you think somebody can follow this document without reading that, then we can seriously push it to informative. That's really the question.

B

D

That's fine, so I'm willing to pull it out; it's not a problem pulling it out. But there is existence proof for this kind of thing: there's stuff in there that's close to a thousand days old. There's this cluster 238, I think. If you look at it, there's this intertangled thing of about 40 documents, I would say, that have been stuck in there for a very long time, and unfortunately that's how it is.

C

Ari Keränen, as one of the authors. Yeah, I guess the references we were missing were SenML and RD, and we did think back in the day that those would be RFCs way earlier. If we had known it was going to take a thousand days, we probably would have taken another look at whether they are informative or normative references. So that is an option, of course, but I guess it's a matter of [unclear]. If we can make the change, that's okay, but RD should be ready.

E

Peter van der Stok. I'm not so pessimistic about RD as a thousand days ago. You know, we had interops, which passed very nicely. There are two modifications we have exchanged [unclear], and I think we can have good interops according to the specification. The last call will not be very far away, and then it'll be very quick, because there were so many comments before.

C

C

The editor's queue for now. I think it makes sense to see how this final question goes, on whether the RD last call is started in the next few weeks. We'll know that in a few weeks, okay? And if it looks like it's going to take longer, then let's have a look at whether we should pull out that normative reference. I think that makes sense.

C

B

Then the last thing on the status since IETF 102. Well, we had this presentation from Daniel Migault on doing IPsec on small devices, and I think we have done two calls for adoption in two IETF meetings, and then we tried to confirm it on the list. There were some comments from Tero, from Paul Wouters and Henrik, but we never closed the last call.

B

B

All right, so this is the agenda for today. Carles will present TCP usage guidance in the Internet of Things. This was presented in TCPM on Monday, and we will see what feedback he got. Then it's Rahul presenting two things: the neighbor management policy update, and then the performance report for 6LoWPAN fragment forwarding, which was also presented in the 6lo working group. Rene will do a remote presentation on alternative curve representations, and then we...

B

B

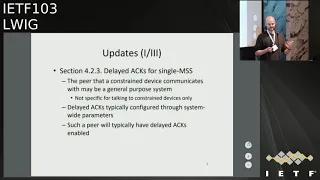

F

So, first of all, let's take a look at the status of the document. Since the last IETF, the draft has been updated once. The latest revision is -04, and this last update intends to incorporate into the document the feedback received in the room at the last IETF. It also addresses the last remaining editorial to-dos from the authors. Also, we received a review of -04 by Yoshifumi Nishida, who is the TCPM co-chair; I'll refer to the review later. And at this same IETF, as Mohit explained, I already gave a presentation in TCPM. That was a longer presentation, with the goal of providing an overview of the main contents of the draft in preparation for requesting a working group last call. In that session we also received a few comments from Gorry and David Black, which I'll also present later.

G

F

OK, so now let's take a look at the updates in the last revision of the draft. A first set of updates is in Section 4.3, which discusses the use of delayed acknowledgments for single-MSS windows. Here we have added that the peer that the constrained device communicates with may be a general-purpose system.

H

I promised to do a review as well, but [unclear] I'm a few days late. Regarding the delayed ACKs, there are other things that should also be taken into account. Unfortunately, when using a single-MSS advertised window, it doesn't play very nicely if you turn the delayed ACKs off, because now, when the message or the data packet arrives, you immediately ACK, and that goes with a zero window advertisement. And now, when the application reads the data from the receive buffer...

H

H

...not that clear. Another thing is that when you have request-response kind of traffic and the request comes, you immediately ACK, and soon after that you send the response. If you are using delayed ACKs, in most cases the ACK could go together, piggybacked, with your response. So once again, you kind of send an unnecessary ACK in that case. So it is not that clear whether it's advisable to turn it off. Okay.

F

F

First, we have reorganized the content in this section: Sections 5.2 and 5.3 have been swapped in order, and there have also been mostly editorial updates in the current Section 5.3, which discusses the TCP connection lifetime. Previously it was split into two specific parts, one discussing short lifetimes and another one for long lifetimes.

F

Now the content is mostly the same, but it's presented in a more natural way, possibly better for the reader. On the other hand, in Section 5.2, which provides guidance on the number of concurrent connections used, we have added some actual new content. We now explain that using several concurrent connections between two applications may help avoid head-of-line blocking problems for the application.

F

However, it may also be harmful in congested networks. So, considering what we already explained in the previous version of the draft, that each additional TCP connection will consume potentially scarce RAM resources, the overall recommendation is to be conservative with regard to the number of concurrent connections used. Then, finally, we also have an update in the annex of the document. You may recall that this is the section where we collect details on a number of TCP implementations for constrained devices. Basically, the update is that we have removed the section on OpenWSN, since it appears that that implementation was actually mostly based on uIP, so it was quite redundant with that. So we have removed that section from the text, and also the corresponding column from this table.

I

Rahul Jadhav from Huawei. Nice to see the socket row getting added here, and it's good that we have removed OpenWSN. One thing that I wanted to check is regarding RIOT: it says the interface is "inspired by POSIX". I have seen the latest APIs, and they are indeed POSIX compliant. There are certain options that are not available, but I...

J

I

It is very difficult for me to convince my team that lwIP 2.0 takes less than 40 KB, or approximately 40 KB. So will it be possible for you to push the open source, with the configuration that you are checking, to the LWIG GitHub or something? I don't know what the right place is, but I want to cross-check whether lwIP 2.0 can fit in 40 KB. Of flash, of course, yes.

F

So the sources for this information are different, or heterogeneous. In some of the cases, we do have actual references that indicate the source for some of these details. In some cases it could be academic papers; in other cases, it's more related to information available on some web pages.

I

The reason why I am asking this is because people have started using this to compare things, you know, and if there has to be a fair comparison, the code size has to be checked on the same platform. Maybe we can do a cross-compilation; I mean, there are ways to get it done. I don't know whether the LWIG GitHub is the right place to keep this information.

B

So, Mohit. When we did this crypto sensors document, which is now RFC 8387, we did experiments with a bunch of libraries, and we actually got a lot of people asking us offline: how do you compile this library on this platform or that platform? What settings do you use? So what I did was publish the configurations; we had three or four different configurations. One was for the least amount of execution time, irrespective of how much memory it consumes. One was for the least amount of memory consumption, irrespective of how much execution time it takes. And I put them on my personal GitHub so that people could use the same configuration file to build the libraries. What I guess Rahul wants is something similar, because he is using this OS for his products or for some of his experiments. So if we do say that this is 40 kilobytes, is there some way to reproduce these results? Whether you put it on your personal GitHub or whether we use the LWIG GitHub is another question.

B

F

F

What we can do is make sure that the references and the sources for this information really reflect what is intended. The numbers for code size here are not measured by ourselves; in some cases they have been obtained from academic papers. In some cases, for example for RIOT, we have the details provided by the main developer of the TCP implementation of RIOT. So yeah, we have this problem with heterogeneity here, so I don't know. Sure.

B

So, for lwIP it is the whole protocol stack on mbed. So is it the mbed folks who reported this number? Because now I see in the annex that for lwIP 2.0 it says the whole protocol stack on mbed. So is it the mbed folks who said that this is how much space it takes, or did you do some...

B

F

Okay, so then we have a number of comments received after the Internet-Draft submission cutoff date. One set of comments was provided by Yoshifumi Nishida, TCPM co-chair. He sent a review on the mailing list; by the way, thanks a lot for the review. His general assessment was that the draft looks fine and mostly ready, although he gave a number of detailed comments. The first one was a suggestion to cite a TCPM document which discusses the trade-offs and requirements around RTO algorithm design, so it is useful.

F

F

Then he also pointed out a typo in Section 5.3, and in the same section he mentioned the problem that TCP Fast Open (TFO) may sometimes deviate from TCP semantics, in the sense that a packet may be repeated at the application. So this is something we need to discuss here.

F

F

Then, on Monday in the TCPM session, we also received some comments. David Black explained that mentioning TCP MD5, which we just mention in the Security Considerations section, is not a good idea, since it's no longer considered a secure option. So the proposed action for this is actually removing the related part. And Gorry Fairhurst explained that he had read some part of the document. He said that he would provide more comments, and anyway, his general assessment so far was that it was more or less in good shape for a working group last call.

B

I

I'm Rahul Jadhav. Basically, this presentation is about an update on the neighbor management policy. What it deals with is: you have neighbor cache entries, and how do you manage those neighbor cache entries? With star networks, star topologies, it was much easier to manage the neighbor cache entries. With bus-like topologies, it is slightly more complicated. With mesh topologies, it is extremely complicated, and with additional resource constraints it becomes much more difficult to handle the NC entries.

I

So this draft talks about how you manage these neighbor cache entries in a mesh topology like 6LoWPAN, with a limited neighbor cache table size. We received a good, substantial review from Mohit. We have updated the document and pushed the next version. We have clarified a lot of the terminology that we depended upon.

I

We have given examples about RPL signaling and PANA signaling, which we make use of for network access. There was one thought that came to our mind: whether we should remove RPL and PANA from this document and make it more generic. It is possible to do that, but then it is very difficult to give concrete examples in the document. So I would like to check if there is any specific feedback from this working group on this point.

I

If not, we can go to the next point: the points to discuss. While going through the document, while discussing with several other people, and while implementing it ourselves, there was one more key thing that came to our mind. The document tries to do two things. One is the reservation policy for the neighbor cache entries, and the other is the signaling recommendations.

I

There are no signaling changes that we specify, but there are some signaling recommendations that the document has added. We feel now that maybe it is difficult to have all the signaling recommendations, or maybe it is not a good idea to give all the recommendations in this draft; it's better to concentrate only on the reservation policy. As mentioned here, there is a fixed table, and there is a reason for each entry that is added. I mean, you need to know the reason for the entry in the neighbor cache, and then you can have a reservation-based policy. With this, you can have a stable network even if the node density varies across deployments. So we intend to remove the signaling recommendations, because it's very difficult to have them generally applied across all deployment scenarios. In some cases we might cite some examples, but we are trying to remove the signaling recommendations from the draft in the subsequent version.
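The reservation idea described here (a fixed table in which every entry carries the reason it was added, with slots reserved per reason) can be illustrated with a minimal sketch. This is not taken from the draft: the class name, the reason labels `"parent"` and `"child"`, and the quota values are all hypothetical and chosen only for illustration.

```python
from typing import Dict


class ReservedNeighborCache:
    """Fixed-size neighbor cache with a per-reason quota (hypothetical sketch)."""

    def __init__(self, quotas: Dict[str, int]) -> None:
        # quotas maps a reason for adding an entry to the slots reserved for it.
        self._quotas = dict(quotas)
        self._entries: Dict[str, str] = {}  # neighbor address -> reason

    def add(self, address: str, reason: str) -> bool:
        """Try to add an entry; return False when the quota for `reason` is used up."""
        if reason not in self._quotas:
            raise KeyError(f"no reservation configured for reason {reason!r}")
        if address in self._entries:
            return True  # already cached; the existing entry is left untouched
        used = sum(1 for r in self._entries.values() if r == reason)
        if used >= self._quotas[reason]:
            return False
        self._entries[address] = reason
        return True

    def remove(self, address: str) -> None:
        """Free the slot held by `address`, if any."""
        self._entries.pop(address, None)

    def __len__(self) -> int:
        return len(self._entries)


# Illustrative quotas only: two slots for parents, three for children.
cache = ReservedNeighborCache({"parent": 2, "child": 3})
print(cache.add("fe80::1", "parent"))
```

Because each reason has its own quota, a burst of requests for one kind of entry cannot evict or crowd out entries added for another reason, which is one way to read the stability argument made in the talk.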

B

Mohit. So I'm not a 6lo expert, but isn't the neighbor cache entry based on the signaling that you receive, right? You put something in the entry based on some signal you receive. So even if it's for your specific scenario, at least that's a scenario that you are deploying, and you actually have products that you are deploying. So you could write: this is our specific deployment model, and these are the signals we use when putting things into the cache.

I

So let me give a specific example in this case. Yes, we have a deployment in place which makes use of implicit signaling. Basically, we make use of RPL signaling to add entries to the neighbor cache. The problem, even with RPL as a signaling mechanism, is that if we specify that you can add neighbor cache entries based on RPL signaling, it may be interpreted that you can do that across all deployment scenarios. One problem is that in the case of NS/NA, or ND-based signaling, there are a lot of other things happening.

I

For example, now we have [unclear], we have AP-ND, we have the RFC 6775 update, which is doing a lot of stuff. None of this is applicable with this implicit signaling mechanism. So that was what I meant. We can cite a deployment, we can cite a specific example, but that would be too limited in scope, I feel.

B

I

Yeah, so one implementation can do something like this: the preferred parent is selected based on the DIO message, and you also add a neighbor cache entry based on it. Another implementation can decide to do explicit NS/NA signaling before adding a neighbor cache entry. This is also possible, and NS/NA signaling gives you some additional control over other things, which RPL signaling alone cannot give.

B

B

Put it there and, you know, make it clear that this is how we are deploying, and this is how we intend to do it. It may not be generally applicable, and you need to do some of these things if you are running devices which have hundreds, not thousands, of kilobytes of memory and flash.

I

Thank you for the feedback. I'll take this into consideration, and maybe I will discuss this point with the other authors as well, who are Contiki developers as well. Okay, the implementation progress. We have an implementation which is almost finished. One of the things that we found during implementation is that we are unable to handle NC entries for authentication-related reasons with Contiki, because Contiki doesn't support any network access protocol.

I

One way to do it is [unclear]. By the way, we have a private implementation which does all this, but we don't have an open-source one. What we are trying to do here is push an open-source implementation as part of Contiki, and this is the issue that we have faced, so I hope it is okay to do it this way. It definitely stabilizes the network; to what extent, we will be able to report with data by the next meeting. Good.

I

So I have another presentation, right? Okay, so this is about the fragment forwarding draft. I presented this a day before in the 6lo working group. Please let me clarify, before I go into the details of this draft, that this is specific to the deployment that we are doing, so consider the numbers, all the figures that I am quoting here, only in the context of what we are trying to achieve. I will be quoting two specific characteristics, and the numbers are relevant only with those two specific characteristics. For people who don't know about fragment forwarding: the existing RFC 4944 does per-hop reassembly. When a node sends a packet, at every intermediate 6LR it is reassembled, then subsequently fragmented again, and then sent upstream or downstream, whatever. In the case of fragment forwarding, it is possible to have a train of fragments such that each fragment is forwarded as it is to the next hop.

I

I

So, our motivation. We were, and are, really excited about this particular work. We wanted to check whether it can bring improvements in our scenario. Our primary motivation was to check the latency and PDR implications, not the memory utilization on the bottleneck node. We have been using EAP-PANA signaling for network access, and EAP-PANA signaling is relatively bulky, which causes fragmentation in the network. So we were hoping that if fragment forwarding can solve that issue, it will improve our network convergence time.

I

Okay, the test. We started this implementation even before the drafts were adopted, so we were really excited about this work. The test configuration that we used: we used 802.15.4 in unslotted single-channel mode of operation. It had carrier sensing, it had clear channel assessment enabled, but no RTS/CTS. I don't know if anyone here uses 6LoWPAN with RTS/CTS; it has a high control overhead, and I don't know of any implementation or any deployment which uses RTS/CTS.

I

I

I

The reason why we did it like this was that we wanted to have an equal amount of data traffic in the air, but more or fewer fragments in the air. For example, the 160-second scenario will generate a larger number of fragments per payload than the 40-second one, but the amount of fragments in the air at any given time should remain the same. The data traffic in the air should remain the same.

I

Please note that there was a random delay of between 0.5 seconds and 5 seconds before transmitting each of these payloads, not each fragment, and all the data here is destined to the border router. I think that's OK. So the packet delivery rate that we found with fragment forwarding, it actually... yeah.

I

This is the delay that is added by the application there, yes. So the packet delivery rate that we found was surprising, actually. We found that per-hop reassembly performs much better. It turned out that fragment forwarding is almost equal to or better than per-hop reassembly when there is some sort of pacing involved. And I'll come to this point: what is pacing? Before every fragment is sent on the sender side, we would add some fixed delay.

I

It's a fixed delay of 50 milliseconds or 100 milliseconds, because it is much easier, or trivial, to implement this kind of delay mechanism on the sender side. It's very difficult to implement such a delay on intermediate hops, because on intermediate hops you might have multiple fragments coming from multiple streams, so it's non-trivial to implement. So this delay was implemented on the sender side, and we had a great discussion on the design team mailing list about implementing this.
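The sender-side pacing described here can be sketched in a few lines. This is only an illustration of the idea, not the implementation used in the experiment: the function names, the fragment size, and the choice to skip the delay before the first fragment are assumptions. The `sleep` parameter is injected so the sketch can be exercised without real waiting.

```python
import time
from typing import Callable, List, Sequence


def fragment_payload(payload: bytes, fragment_size: int) -> List[bytes]:
    """Split a payload into consecutive fragments of at most fragment_size bytes."""
    if fragment_size <= 0:
        raise ValueError("fragment_size must be positive")
    return [payload[i:i + fragment_size] for i in range(0, len(payload), fragment_size)]


def send_paced(
    fragments: Sequence[bytes],
    send: Callable[[bytes], None],
    pacing_delay_s: float = 0.05,  # 50 ms, one of the two values quoted in the talk
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Send fragments in order, waiting a fixed delay between consecutive fragments."""
    for index, fragment in enumerate(fragments):
        if index > 0:
            # Assumption: no delay is needed before the very first fragment.
            sleep(pacing_delay_s)
        send(fragment)


# Example: record what would be sent and which delays would be applied.
sent: List[bytes] = []
delays: List[float] = []
send_paced(fragment_payload(b"x" * 250, 100), sent.append, 0.05, delays.append)
print([len(f) for f in sent], delays)
```

A single sender only has to space out its own fragment train, which is why this is simple at the source. An intermediate hop would need per-flow timers to do the same for interleaved trains from several senders, which matches the difficulty mentioned in the talk.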

I

The pacing improved the PDR drastically for fragment forwarding, but naturally it also induced latency, and the latency was a big factor. As for the reasoning: the MAC transmit failures. If you see, the MAC transmit failures were very high in the case of fragment forwarding without any pacing. It reduces if you add some pacing. With per-hop reassembly, it's much better. So the observations here are these.

I

All the observations quoted here are in the context of the setup that we have, and it's not a very general setup; it's very specific to our deployment. Fragment forwarding seems to depend on pacing, and if you add pacing, the latency is negatively impacted, naturally. At least in this case, per-hop reassembly seems to be doing better, both in terms of PDR and latency. But clearly, L2 primitives have a bigger impact than anything else on this whole scheme.

I

So one of the key takeaways from this experiment was that if you are going to implement fragment forwarding without considering your L2 primitives, I mean without considering what your L2 is, you might have some surprises. So it's better to get your L2 characteristics in place before thinking about such a scheme. Fragment drops due to memory unavailability were, in our case at least, nil, and the reasoning was that we had a grid topology. It was a sufficiently balanced network, and the traffic patterns were sparse, so there was not much of a memory issue for us. But if you have constant payloads going out of the node, then you might run into memory unavailability issues, and fragment forwarding might perform much better in that case. Secondly, one more important thing is that fragment recovery might actually help in this case, because we found that as the number of fragments per payload increased, it resulted in an exponential decrease in PDR.
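The exponential relationship mentioned here follows from a simple model, sketched below under the assumption that fragment losses are independent and that there is no fragment recovery: a payload is delivered only if every one of its fragments arrives, so the payload delivery ratio is the per-fragment success probability raised to the number of fragments. The function name and the 0.95 figure are illustrative and not measurements from the presentation.

```python
def payload_delivery_ratio(fragment_success: float, fragments: int) -> float:
    """Probability that all fragments of one payload arrive (independent losses assumed)."""
    if not 0.0 <= fragment_success <= 1.0:
        raise ValueError("fragment_success must be a probability")
    if fragments < 1:
        raise ValueError("a payload has at least one fragment")
    return fragment_success ** fragments


# Illustrative per-fragment success probability, not a measured value.
for n in (1, 2, 4, 8):
    print(n, round(payload_delivery_ratio(0.95, n), 3))
```

Under this model, recovering individual lost fragments instead of losing the whole payload removes the exponent, which is consistent with the remark that fragment recovery might help.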

I

I

The framework that we used was Whitefield. Basically, it's NS-3 lr-wpan in the back end. And there are some implementation quirks that we mentioned: we needed some slack to be added in the first fragment. Clearly, more experiments are needed. There are different [unclear] that have to be tried out, and more optimal pacing algorithms that have to be thought about, and we are hoping to do the same experiment on an actual hardware setup. Thank you. Carsten?

K

Carsten Bormann, a quick question. If I understand your parameters right, a packet takes about four milliseconds to be transmitted, and then we add a little bit of inter-frame spacing, so we have something like five milliseconds per packet. And it's interesting that 50 milliseconds is kind of okay and 100 milliseconds is perfect.

K

...about the actual propagation delay, or...? No, I'm talking about the serialization time of the packet; it is in the air for about five milliseconds. So that means it arrives at the next hop, and the next hop sends it. If it were able to do this immediately, then the channel would be clear again after five more milliseconds, right? But you have to wait fifty milliseconds or, even better, a hundred milliseconds. So do you have a hunch what the relationship between this theoretical 5 milliseconds and the real 50 or 100 might be?
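The "about four milliseconds" figure in this question can be reproduced with a back-of-the-envelope calculation. The sketch below assumes the 2.4 GHz IEEE 802.15.4 PHY at 250 kbit/s, a maximum-size 127-byte frame, and 6 bytes of PHY overhead (preamble, start-of-frame delimiter, length field); the transcript itself only says 802.15.4, so these parameters are assumptions.

```python
def frame_airtime_ms(
    phy_payload_bytes: int,
    bitrate_bps: int = 250_000,   # assumed: 2.4 GHz 802.15.4 PHY
    phy_overhead_bytes: int = 6,  # assumed: preamble + SFD + PHY length field
) -> float:
    """Serialization time of one frame on the air, in milliseconds."""
    total_bits = (phy_payload_bytes + phy_overhead_bytes) * 8
    return total_bits / bitrate_bps * 1000.0


# A maximum-size frame: (127 + 6) * 8 bits at 250 kbit/s.
print(round(frame_airtime_ms(127), 3))
```

With inter-frame spacing, backoff, and an acknowledgment added on top, a per-fragment channel occupancy of roughly five milliseconds is plausible, which is the number the question contrasts with the 50 ms and 100 ms pacing delays.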

I

K

I

Right, so one of the things that we looked at was the MAC transmit failures. Here only the MAC transmit failures are mentioned, but even the second and third attempts... we have a MAC max retry of three. The second and third attempts were also much higher in the case of fragment forwarding. So what this tells me is... and we had clear channel assessment enabled, CCA was enabled, we had carrier sensing in place. Even then, there were a lot of failures with fragment forwarding. So in that case, I think, yeah.

I

K

A

L

L

So the idea is to evaluate the security of the device itself with a reduced number of attributes, let's say five Boolean attributes: the firmware loader, one-time programmable memory, secure firmware loader, tamper-resistant key, and diversified key. One question could be: is it necessary to add more attributes? But if you add more attributes, it means we will define a lot of subclasses of devices.
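To make the point about subclasses concrete: with n Boolean attributes there are 2^n possible combinations, so five attributes give 32 and each additional attribute doubles the count. The sketch below is only an illustration; the field names are a reading of the spoken list and are hypothetical, not identifiers taken from the draft.

```python
from dataclasses import dataclass, fields
from itertools import product


@dataclass(frozen=True)
class DeviceSecurityAttributes:
    # Hypothetical field names based on the five attributes listed by the speaker.
    firmware_loader: bool
    otp_memory: bool
    secure_firmware_loader: bool
    tamper_resistant_key: bool
    diversified_key: bool


def all_classes() -> list:
    """Enumerate every combination of the Boolean attributes."""
    n = len(fields(DeviceSecurityAttributes))
    return [DeviceSecurityAttributes(*bits) for bits in product((False, True), repeat=n)]


def class_count(num_attributes: int) -> int:
    """Number of combinations for a given number of Boolean attributes."""
    return 2 ** num_attributes


print(len(all_classes()))   # combinations with five attributes
print(class_count(6))       # combinations if one more attribute were added
```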

L

L

So what is the goal of this draft? When you talk about secure software updates, one guy from the French national security agency said: if you don't have updates for IoT, it's not serious; it means nothing will work and your plan to deploy an IoT system will completely fail. So updates are needed. But if your update can be hacked easily, then it's worse than having no update: it means what you are going to deploy is potentially a hacked network. So that is what is in this draft.

L

L

It means it's a memory, and usually it's flash or E2PROM, and it means you can erase this memory or write this memory. Okay, it's non-volatile memory, but it means it can be erased. And this is quite fundamental, because taking a look at what is sold today, you will find simply that a lot of devices can be completely erased. So it means you believe you have a bootloader in the device, but it's very easy to simply erase the bootloader and to reflash with another bootloader.

L

That's why the only assurance, the only security assurance you may have in a device, is the use of one-time programmable memory. And I prefer this OTP term rather than the ROM term, because read-only memory doesn't really mean that you can only read the memory; usually it means you can write the memory by some means, and in normal operating conditions you cannot erase the memory. And so when you use the term one-time programmable memory, it means you have something like fuses. I don't say that fuses are perfect.

L

You have many physical protocols, serial, parallel and so on, and these protocols are used to put code and data in the non-volatile memory. And because you need communication anyway, at least for the update, you have a communication processor. So in all IoT devices you have a communication processor. Sometimes the communication processor is in fact merged with the main processor, so it's the same thing: you have a system-on-chip device. But the communication processor, too, has the same structure. So, to go further.

L

The first thing is to identify why the bootloader is needed. If you have no bootloader, it means you need to use a physical protocol in order to put the code in your device. So you have a lot of physical protocols available for the chip, and it means the firmware loader is not the same thing as that.

L

You may use the device without a firmware loader, and this will give you, for example, other security problems. The existence of physical protocols for flashing the device creates a supply chain attack, and the supply chain attack today is a very sensitive issue for all devices, including networking devices, IoT devices, and so on. It means...

L

If you have no permanent memory in the device, you can reflash whatever code you want in the device, and this is very easy to do during the supply chain process, because your device could be handled by many people, and these people will have the ability, for example, to erase your original bootloader and to flash another one inside. So, yeah, basically there are these two kinds of devices, with and without bootloader.

L

L

L

No OTP: it means, in fact, you have a bootloader, but you will never know if the bootloader is the right bootloader, and through a supply chain attack your device could be a malicious device. And this means all you want to do for software updates would likely completely fail. The alternative is the option that you have an OTP in the device, and then it means you will have a minimum guarantee about your device behavior. So, that said, this draft is only concerned with devices that include a bootloader, with and without OTP. Okay.

L

L

Usually the hash of the public key is in fact in this memory. So part of the security comes from the fact that you have this kind of one-time programmable memory. And if you google, for example, microprocessors and ROM, what you will likely get is the datasheet of a secure element, which is a datasheet that you could not have without a non-disclosure agreement, because the specifications of this kind of device are not public.
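The role of the public-key hash in one-time programmable memory can be sketched as follows: the bootloader recomputes the hash of the public key it is about to trust and compares it with the digest fixed in OTP. This is a minimal sketch under stated assumptions; SHA-256, the function name, and the byte strings are illustrative choices, not details given by the speaker, and the subsequent signature check on the firmware itself is omitted.

```python
import hashlib
import hmac


def public_key_matches_otp(public_key: bytes, otp_digest: bytes) -> bool:
    """Return True if the key hashes to the digest stored in OTP (assumed SHA-256)."""
    computed = hashlib.sha256(public_key).digest()
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(computed, otp_digest)


# Illustrative values only: a placeholder key and the digest "burned" at manufacture.
vendor_key = b"example vendor public key bytes"
otp_value = hashlib.sha256(vendor_key).digest()

print(public_key_matches_otp(vendor_key, otp_value))            # genuine key
print(public_key_matches_otp(b"attacker key bytes", otp_value))  # substituted key
```

Because the digest cannot be rewritten, replacing the bootloader's key in erasable memory is detected even if the rest of the flash has been reprogrammed.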

B

We are running out of time. Could you wrap it up?

L

Okay. So do you have security or not? Some bootloaders have no security, for example, and it's very common to have no security. And even if you put security in your bootloader, the issue is: is your device tamper-resistant or not? That is to say that all symmetric cryptography may be broken by side-channel attacks, and asymmetric too. So it means even if you have a secure bootloader...

L

L

Cryptography: there was a competition last year, and the goal was to write a program with an AES key inside. It lasted six months, and all programs were broken, let's say in between a few seconds and three weeks. So it means there is no way today to securely compute a symmetric or asymmetric algorithm in software. And the last attribute is a diversified key. It means, because you know that you have side-channel attacks if you use hardware without a tamper-resistant key.
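One common way to obtain diversified keys, shown here only as an illustration and not necessarily what the draft specifies, is to derive each device's key from a master key and a unique device identifier, so that extracting one device's key through a side-channel attack does not reveal the keys of other devices. HMAC-SHA-256, the function name, and the sample values below are assumptions.

```python
import hashlib
import hmac


def diversify_key(master_key: bytes, device_id: bytes) -> bytes:
    """Derive a per-device key from a master key and a device identifier."""
    return hmac.new(master_key, device_id, hashlib.sha256).digest()


# Illustrative values only; the master key never leaves the issuer.
master = b"\x00" * 32
key_a = diversify_key(master, b"device-0001")
key_b = diversify_key(master, b"device-0002")

print(key_a.hex())
print(key_b.hex())
print(key_a != key_b)  # each device ends up with its own key
```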

B

L

B

J

B

J

What I will do is I will probably go to slide number four, because I think I have two minutes or so. So I presented this document in Montreal, and since then, I think, it became a working group document. There were some missing pieces at the time because I had to run some computations, which actually took quite some while, but the results are now included in the document, version zero, that I posted by the cutoff date.

J

Essentially: how can we deal with these different elliptic curve flavors in small devices? These are either the NIST curves or one of the CFRG curves, which is either the one used for Diffie-Hellman key agreement or the one used for signatures. And the main crux of the draft is really to try to morph them into something that decreases the code size requirement and also makes it easier to specify new things.

J

So I provided two main examples. One of them is: if you have a small device and you run, for example, key agreement on it, like in the COSE protocol, then maybe you can also implement the so-called Edwards signature scheme, which is also in use in the same COSE scheme and also in PKIX, all using the same implementation. And let's go to the last slide.

J

So basically, I show that you can use all these different flavors of curves by bringing them into the same shape, so that you can take the NIST implementations and reuse them to also implement the CFRG curves, but also use a single implementation for both the Edwards signature scheme and the Montgomery ladder that is used in the COSE scheme.
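The idea of bringing curves into the same shape can be illustrated with the standard change of variables from a Montgomery curve B·v² = u³ + A·u² + u to a short Weierstrass curve y² = x³ + a·x + b, namely x = u/B + A/(3B), y = v/B, with a = (3 − A²)/(3B²) and b = (2A³ − 9A)/(27B³). The sketch below applies it to Curve25519 (A = 486662, B = 1, p = 2²⁵⁵ − 19) and checks that the mapped base point satisfies the Weierstrass equation. It is a minimal sketch with hypothetical helper names, not the draft's reference code, and it is not constant-time.

```python
P = 2**255 - 19   # field prime of Curve25519
A = 486662        # Montgomery coefficient A
B = 1             # Montgomery coefficient B


def inv(x: int) -> int:
    """Modular inverse via Fermat's little theorem (P is prime)."""
    return pow(x, P - 2, P)


def weierstrass_params() -> tuple:
    """Coefficients (a, b) of the equivalent short Weierstrass curve."""
    a = (3 - A * A) * inv(3 * B * B) % P
    b = (2 * A**3 - 9 * A) * inv(27 * B**3) % P
    return a, b


def montgomery_to_weierstrass(u: int, v: int) -> tuple:
    """Map a Montgomery point (u, v) to Weierstrass coordinates (x, y)."""
    x = (u * inv(B) + A * inv(3 * B)) % P
    y = v * inv(B) % P
    return x, y


def sqrt_mod_p(n: int):
    """Square root modulo P (P = 5 mod 8); returns None for non-residues."""
    r = pow(n, (P + 3) // 8, P)
    if r * r % P == n % P:
        return r
    r = r * pow(2, (P - 1) // 4, P) % P   # multiply by a square root of -1
    return r if r * r % P == n % P else None


def on_montgomery(u: int, v: int) -> bool:
    return (B * v * v - (u**3 + A * u * u + u)) % P == 0


def on_weierstrass(x: int, y: int, a: int, b: int) -> bool:
    return (y * y - (x**3 + a * x + b)) % P == 0


# Base point of Curve25519 has u = 9; recover a matching v from the curve equation.
u = 9
v = sqrt_mod_p((u**3 + A * u * u + u) * inv(B) % P)
a, b = weierstrass_params()
x, y = montgomery_to_weierstrass(u, v)
print(on_montgomery(u, v), on_weierstrass(x, y, a, b))
```

Once points are in Weierstrass form, the same point-arithmetic routines written for the NIST curves can in principle be reused, which is the code-size argument made above.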

J

The main question I have: the draft perhaps looks quite technical, but the first five pages are really what the main content is, and everything else is essentially a huge tutorial on all kinds of transformations. So I would really like to have a little bit more feedback. I already got some good feedback from Nicholas Reznor, who I think did a thesis on this subject, and I also would like to have some implementation insight.

J

B

Rene, we are already three minutes over time. I think it's time to close the meeting, but it's for the group to provide more feedback on this draft; I think it provides some useful information. Carsten, a quick comment? Thank you, Rene. Thank you, Carsten. Okay, so I guess we need a few more reviews before we progress on this document. That's it for LWIG for this session. Where are the blue sheets?