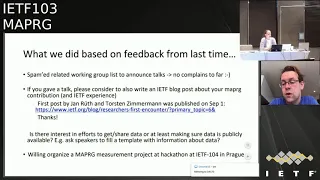

From YouTube: IETF103-MAPRG-20181106-1610

Description

MAPRG meeting session at IETF103

2018/11/06 1610

https://datatracker.ietf.org/meeting/103/proceedings/

A

Yeah, and then last time I presented a little bit of the feedback we got from the IAB review, because every research group has, from time to time, a review with the IAB. We had a good discussion about what we have done so far and what we could change or improve.

A couple of things came up, and I presented them last time. One thing we did change this time is that I did a little bit of spamming: I tried to send a pointer to those working groups which might be interested in the talks we have here today. I got no complaints, but I got a little bit of positive feedback, so that seems to be useful. People told me, "I was not aware of this, thank you for sending it," so I will continue that.

A

The other thing we discussed with the IAB, or that came up from the IAB, was that this research group has many nice talks which are interesting for a lot of people in and outside the IETF, and there is the possibility to write blog posts. We already had one blog post from people presenting their work here. If you have a presentation at MAPRG, or other interesting measurement results, you might be interested in writing an IETF blog post as well.

A

The third point on here, where the bullet point is actually missing somehow, is the thing that I brought up last time and didn't get a lot of feedback on, so I would like to ask again: is this group interested only in consuming measurement results, or is this group also interested in being a venue for sharing and collecting data? And if there is interest, what should we do about it? How can we get the people in here to bring their data, and which data do you want to see?

A

One simple idea was to push everybody who gives a talk a little bit more towards making their data available, and to make it easy to at least find the data for the talks that we have here. I don't see anybody rushing to the mic, so it's kind of a no. Okay. Well, okay, there is somebody in the remote queue.

D

Hello, everyone. I would be the one to say: yeah, actually, we should consider doing this. There was some work that we did in the MAMI project on building sort of reference databases of intermediate measurement results, which I could possibly talk about in Prague, or which we could talk about at a hackathon in Prague, looking for sets of intermediate measurement results that it might make sense to share over a platform like that.

D

Data collections were talking about what it would take to get their APIs together, so that you could use essentially a single calling convention for all of those particular things. So maybe I could propose a talk on the state of these things in Prague, and then we could go from there.

A

Okay, and then the last point on the slide brings me also to the next slide. One proposal was to interact more closely with the community, for example by having a MAPRG-focused hackathon table. Dave, my co-chair, especially liked this idea. He's not here today, unfortunately, but he will push this idea for the next meeting in Prague. The two measurement ideas he has brought up so far are, first, to check the current state of ECN, because there was a hackathon table at IETF 101.

A

So this is kind of continuous measurement work, which would be nice to do. The other one he's interested in is measuring DNS, and there's also a related paper at IMC, which is linked here. If you're interested in these topics, watch out for a hackathon project at the next meeting, or if you have more measurement topics that you would like to hack on next time, please talk to us.

E

Tom Jones. I support the idea of a hackathon table; I would sit there. Comcast have previously offered virtual machines on their DOCSIS backbone for the hackathon. This probably needs to be organized in advance, because it wasn't getting utilisation, but it would be a good platform to have for measurements.

E

F

A

That's easy. Okay, cool. We will also post a notice on the mailing list, so if you watch out a little bit, you shouldn't miss it. And talking about IMC: this is one of the reasons why Dave is not here, because IMC, the Internet Measurement Conference, was last week in Boston, so he went there and I'm here. We talked a little bit about it, and he enjoyed the meeting a lot. It was a good meeting.

A

There were a lot of people, a packed agenda, and more papers than previously, and he put in the effort to pick out some of the papers that might be most interesting for this audience, so that it is easier for you to find the stuff that might impact your work directly. The other presentations were probably also very interesting, so check out the agenda.

G

A

Okay, and that's our agenda today. We have a quick heads-up talk, and then we have five presentations, and the last two are actually IMC papers that were presented last week in Boston and are now here for you, brand new, basically. So we go ahead and start with Tobias, unless you have any questions. I don't think we do agenda bashing here; actually, I don't have time for that.

H

Hey, so, welcome. This is together with Dave, and it's about some things we found in our research on privacy, on IPv6 scanning and on IPv6 deployment. Basically, we stumbled a lot over EUI-64 addresses. Actually, there's one error on the slide: RFC 4941 has by now been overruled, but I forgot to update the slides. Technically, the problem of having EUI-64 addresses on the Internet should be kind of solved.

H

However, when we were looking at actual measurements out there, we found that, for example, if you do traceroutes across the Internet for IPv6, you find up to 45% of hosts responding with EUI-64 addresses for ICMP Time Exceeded, which is quite a lot, although these are mostly CPEs. The other example came from a work where we looked at how we can enumerate reverse DNS. Where the reverse DNS was auto-populated, we could actually track people walking across buildings, for example from 8 a.m. to 1 p.m.
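As a rough illustration of why EUI-64 addresses matter for privacy: a modified EUI-64 interface identifier carries the interface's MAC address with `ff:fe` inserted in the middle and the universal/local bit flipped, so the MAC can be read back out of the address. The sketch below is not the speakers' tooling; the function name `eui64_mac` and the example address (from the documentation prefix `2001:db8::/32`) are assumptions made for illustration.

```python
import ipaddress


def eui64_mac(addr: str):
    """Return the MAC embedded in a modified EUI-64 interface identifier,
    or None if the lower 64 bits do not have ff:fe in the middle."""
    iid = int(ipaddress.IPv6Address(addr)) & ((1 << 64) - 1)
    b = iid.to_bytes(8, "big")
    if b[3] != 0xFF or b[4] != 0xFE:
        return None
    # Undo the universal/local bit flip on the first octet and drop ff:fe.
    mac = bytes([b[0] ^ 0x02]) + b[1:3] + b[5:8]
    return ":".join(f"{octet:02x}" for octet in mac)


example = eui64_mac("2001:db8::211:22ff:fe33:4455")
not_eui64 = eui64_mac("2001:db8::1")
```

A device that keeps the same interface identifier while moving between networks can be recognised by that identifier alone, which is what makes the building-to-building tracking described above possible. Note that the `ff:fe` test is only a heuristic: a randomly generated identifier matches it by chance once in 65,536 cases.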

H

In addition, the addressing practices in IPv6 can have privacy implications, because now you can actually do scanning in a structured way. You have a lot less noise in there, which aids topology discovery. For example, in one of our works we could discern the topology of a .mil installation quite accurately.

H

There are two related publications. One was at IMC, and I think it was also in the list from Dave that Mirja showed; it is mostly about measuring IPv6 adoption, and it is where they found these 45% of responses from EUI-64 addresses. Then there's the paper where we found, for example, the building stuff, together with Borgolte and some other people, which was at Security and Privacy this year.

H

This is basically a call for measurements and observations. If you have anything which also falls in the context of IPv6 security and privacy implications, we would really like to hear your input. Even more, we would like to hear about datasets. So if you have any others, please drop us an e-mail, either to Dave Plonka or to my funny institutional address. That's actually already the last slide, if there are no comments.

A

E

Hello, I am Tom Jones. At the University of Aberdeen we've been working on an implementation of UDP Options. UDP Options adds transport options to UDP. It does this by taking advantage of the fact that when UDP is carried inside IP, there are two fields which describe the length of the UDP datagram.

E

There is a length field in the UDP header, which describes the length of the UDP header and the data, and there's a length field in the IP header, which gives the payload length. Normally these two numbers are the same, so we can create surplus space after the UDP datagram by increasing the IP payload length while keeping the UDP length the same. We get a surplus area, and in the surplus area we can stick transport options.

Transport options look like this. We have some options for structuring the option space and creating a checksum for the option space, and then the rest of the options are TLVs: they have a type, a length and then a value they carry. We've been working on this for about a year, and at the IETF, at TSVWG in London back in March, I showed this slide as an extra slide about a potential issue.
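The surplus-area idea described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the wire format of the UDP Options draft: the option layout used here (one byte of type, one byte of length covering the whole option, then the value) is a simplification, the option type `0x7F` is hypothetical, and the function names are made up for the example.

```python
import struct


def build_ip_payload(sport: int, dport: int, payload: bytes, surplus: bytes) -> bytes:
    """UDP header + payload, followed by a surplus area.
    The UDP Length field covers only header + payload, so a receiver that
    trusts the (larger) IP payload length finds extra bytes after the datagram."""
    udp_len = 8 + len(payload)
    header = struct.pack("!HHHH", sport, dport, udp_len, 0)  # checksum left as 0 here
    return header + payload + surplus


def split_surplus(ip_payload: bytes):
    """Split an IP payload into the UDP datagram and the surplus area."""
    udp_len = struct.unpack("!H", ip_payload[4:6])[0]
    return ip_payload[:udp_len], ip_payload[udp_len:]


def parse_tlvs(area: bytes):
    """Walk simplified (type, length, value) options in the surplus area."""
    options, i = [], 0
    while i + 2 <= len(area):
        kind, length = area[i], area[i + 1]
        if length < 2 or i + length > len(area):
            break  # malformed option: stop parsing
        options.append((kind, area[i + 2:i + length]))
        i += length
    return options


ip_payload = build_ip_payload(5000, 53, b"hello", bytes([0x7F, 4, 0xAB, 0xCD]))
datagram, area = split_surplus(ip_payload)
```

Here `len(datagram)` is 13 (the UDP length) while the IP payload is 17 bytes long; the 4-byte difference is the surplus area that `parse_tlvs(area)` walks.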

E

The conclusion from this was: don't offload UDP checksums to hardware, so that this doesn't go wrong. I'd fix it in code and I'd be done. Great, you know: fixing FreeBSD, the IETF, making the Internet better. I went to the pub. And, of course, that didn't really work out. So we've been doing measurements for UDP Options for the last couple of months, and UDP Options are fun to measure: there are no hosts on the Internet yet which support UDP Options, so we've had to come up with some other solutions.

E

We have a tool called Mobile Tracebox, a continuation of the tool called tracebox. It performs a traceroute-style TTL ring search and allows us to see where in the network packets are modified or dropped. We've been using this for doing measurements, and we also find that UDP is quite difficult to measure.

E

We filter out the hosts which don't respond to this, and we get a large set of hosts we can measure against. With this we've done some measurements for UDP Options, and they look sort of like this. This plot shows the chance, for each of these measurement types, of a path passing a UDP Options datagram end to end.

E

You might see that there's about a 50 percent chance of failure, and that's not very good, not very promising for UDP Options. We've thought about this and we've been trying to figure out why. Ron Bonica brought this up: when the checksum is wrong for a packet, a lot of stateful boxes drop the packet, because they shouldn't feed more broken stuff into the Internet. But these packets are being incorrectly detected as broken, and why is this?

E

So we investigated this, and we figured out some pathologies for what's going on. Our favorite pathology is that everything is treated correctly and it works. Then, in descending order of frequency, we find that the checksum on the UDP datagram is being computed over the full IP payload length. This is the same as the bug we saw in FreeBSD, so this issue that we thought would be easy to solve turns out to be a big chunk of the failures we find. The next two cases are weird.

E

We see the full-payload checksum, but with the pseudo-header created using the length in the UDP header; and then we see the correct checksum performed, but with a pseudo-header created from the IP length. We see UDP Options payload being passed as long as the option space only contains zeros, and while that's cool, we're not really sure how to use that. And then we see hard checks by middleboxes, where they compare the IP length to the UDP length, and this one we don't think we can solve.

E

So today I'd like to introduce the CCO. The CCO is an option for UDP Options. It uses a modified pseudo-header and the checksum across the UDP option space, and it creates a checksum such that, when you calculate the checksum across the UDP datagram and the option space, you get the same checksum as you would get if you correctly calculated the UDP checksum.
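The compensation idea can be checked with the ordinary Internet checksum arithmetic. The sketch below is an illustration of the principle as described in the talk, not the encoding from the draft: the addresses, ports, option bytes and the placement of a bare 2-byte compensation value at the end of the surplus area are all assumptions. It models a middlebox that wrongly verifies the UDP checksum over the full IP payload with the IP payload length in the pseudo-header.

```python
import struct


def ones_sum(data: bytes) -> int:
    """16-bit one's-complement sum (the core of the Internet checksum)."""
    if len(data) % 2:
        data += b"\x00"
    total = sum(struct.unpack(f"!{len(data) // 2}H", data))
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)
    return total


def pseudo_header(src: bytes, dst: bytes, length: int) -> bytes:
    """IPv4 pseudo-header: addresses, zero byte, protocol 17 (UDP), length."""
    return src + dst + struct.pack("!BBH", 0, 17, length)


def verifies(src: bytes, dst: bytes, covered: bytes, length: int) -> bool:
    """A checksum verifies when pseudo-header plus covered bytes sum to 0xFFFF."""
    return ones_sum(pseudo_header(src, dst, length) + covered) == 0xFFFF


SRC, DST = bytes([192, 0, 2, 1]), bytes([198, 51, 100, 7])  # documentation addresses
payload = b"measure!"            # even length keeps the surplus area word-aligned
udp_len = 8 + len(payload)

# Sender: normal UDP checksum over header + payload, using the UDP length.
header = struct.pack("!HHHH", 40000, 9, udp_len, 0)
csum = ~ones_sum(pseudo_header(SRC, DST, udp_len) + header + payload) & 0xFFFF
datagram = struct.pack("!HHHH", 40000, 9, udp_len, csum) + payload

options = bytes([0x7F, 4, 0xAB, 0xCD])  # hypothetical option bytes, even length


def with_compensation(opts: bytes) -> bytes:
    """Append a 2-byte value that cancels the surplus bytes and the extra length."""
    surplus_len = len(opts) + 2
    comp = ~ones_sum(struct.pack("!H", surplus_len) + opts) & 0xFFFF
    return opts + struct.pack("!H", comp)


plain = datagram + options
compensated = datagram + with_compensation(options)

ok_endpoint = verifies(SRC, DST, datagram, udp_len)           # correct receiver
ok_box_plain = verifies(SRC, DST, plain, len(plain))          # full-payload check, no CCO
ok_box_cco = verifies(SRC, DST, compensated, len(compensated))  # full-payload check, CCO
ok_zero_surplus = verifies(SRC, DST, datagram + bytes(4), udp_len)  # zeros, UDP-length pseudo-header
```

In this model the correct receiver accepts the datagram, the full-payload check rejects the plain options and accepts the compensated ones, and an all-zero surplus area passes a box that sums the full payload but keeps the UDP length in the pseudo-header, which matches the observation about zeros in the talk.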

E

We see a huge increase in the number of paths which successfully pass traffic. A bit better than that, it actually works through CPE. [name unclear], at the University of Oslo, has a testbed he's made out of the strangest things he can find in flea markets. He goes to second-hand shops and he picks up everything.

E

He has a testbed, and he ran his tests through 23 of these devices. At first pass, 17 of the 23 would pass UDP Options, but 6 would drop them. When we add in the CCO, all 23 devices pass it. Great. Okay, so this is what we're proposing. We have a draft on this. We'd love it if you read the draft, and we'd love it if you passed on comments about it, sending them to the authors directly or to TSVWG. We'd love to hear what you think. Questions?

I

Okay, hello, everyone. My name is Nicolas Kuhn, and I'm presenting work we have been doing with all these people listed underneath. Something I need to make clear before I start: we have not been working on IETF QUIC, but rather on the Google QUIC implementation. Basically, why we wanted to work on it is, first, because QUIC is here already. This is a chart lots of you may know; the people working on these things have a website where you can extract these figures quite easily for your talks. We just picked one point where QUIC was actually 25 percent of the traffic; it's based on the MAWI traces. So this is one of the main reasons why we wanted to work on it, but also because we, as SATCOM, split end-to-end connections with TCP. Basically, every TCP connection is split into three independent TCP connections. And why do we do that?

I

Basically, when you look at the picture underneath, we have three different websites with different characteristics. The three pages are downloaded with an initial congestion window of 10 or an initial congestion window of 60, and we just split the connection with the PEP. We just keep CUBIC inside the PEP; we don't do any specific optimization. Just by splitting TCP, we halve the time needed to download the web page.

I

So, basically, that's why, in the SATCOM community, we will go on splitting TCP connections, but the problem is that we can't split QUIC at the moment. So that's why we work on it. This is a slide to show, basically, a SWOT analysis of QUIC and SATCOM. What could be good for us is that we have problems making TCP Fast Open work when we split TCP connections, so the 0-RTT handshake is very interesting for us. The problem is that we may not be able to adapt the congestion control.

I

The good thing is that with it we can support lots of new congestion controls, and without PEPs our ground segments would be a lot cheaper. Also, we don't deploy PEPs because we want to split, but because we have to, and that's a big problem for us, because we have to follow all the end-to-end trends to actually support the good innovations, and that's a cost for us.

I

Another threat we have at the moment, though more from an operator point of view, is that when you have all different kinds of traffic, with different characteristics, under the same port, it gets complicated to do quality-of-service management for the different needs of the different applications. Another threat, as I will show later on, is that we have some issues with end-user quality of experience. So basically the question we have is: is QUIC doing any better than split TCP for SATCOM public access?

I

Gorry already mentioned at the mic, during some talk this week, I don't remember when it was, that it's certain that split TCP is doing better. But we just wanted to make simple measurements to see by how much it is doing better. Our testbed follows from the different questions we had. How can we test QUIC in our experiments? We can't just replicate any QUIC implementation available today, because we wouldn't have all the smart congestion control that may be embedded in it.

I

Another thing we did, to have an actual end-user perception, is that we have, at CNES, public SATCOM accesses. We just have a terminal: we connect our laptop to the satellite terminal and go to the different web pages. The good thing is that we have real end-user experience, but the problem, and I will come back to that later at multiple moments, is that there are lots of things happening, lots of operator tuning, that we don't actually understand.

I

So we understand that our experiments cannot be generalized to lots of cases. We just wanted to report our own experience. Although we are a small community, we want to make everything we do available to anyone. All the scripts run on whatever VM, so using any VM and connecting to a server that you know enables QUIC, you can reproduce our results.

I

All the experiments we have are available, so feel free to use them. This is just a slide to explain a little better how we ran our experiments. We focus on W3C metrics to measure the page load time and the time to response start, and we compare those two. We made different web page downloads, then we purge the browser profile, and we use Selenium to automate our measurements.
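For readers unfamiliar with the W3C timing metrics mentioned here, the following is a minimal sketch of how two such metrics can be derived from a Navigation Timing record. It is not the authors' script: the choice of fields (`navigationStart`, `responseStart`, `loadEventEnd`), the function name `page_metrics` and the sample values are assumptions. With Selenium, such a record is typically obtained with something like `driver.execute_script("return window.performance.timing")`, which is not executed here.

```python
def page_metrics(timing: dict) -> dict:
    """Derive two metrics (in milliseconds) from a Navigation Timing record.
    All timestamps are milliseconds since the epoch, as reported by the browser."""
    start = timing["navigationStart"]
    return {
        # Time until the first byte of the response arrives.
        "time_to_response_start_ms": timing["responseStart"] - start,
        # Time until the load event has finished.
        "page_load_time_ms": timing["loadEventEnd"] - start,
    }


# Hypothetical sample record, for illustration only.
sample = {"navigationStart": 1_000, "responseStart": 1_650, "loadEventEnd": 5_200}
metrics = page_metrics(sample)
```

Purging the browser profile between loads, as described above, matters because a warm cache or a remembered QUIC-capable server would change both numbers.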

I

For the results, we show this kind of diagram. We don't have lots of points, but it still shows the disparity and how the measurements are distributed. Basically, for the first load we almost always have the same page load time. For the first part I will focus on the big page download, and what we observed is that in the Chrome implementation we always start with a TCP connection.

I

So, the first answer to our question, whether QUIC is doing any better than split TCP for a large page over satellite: it's not the case. Sorry for the CDF with French words, but that's the CDF, and basically it shows that we have two completely separate CDFs, so we are quite sure about what we are showing here.

To better understand, we tried to come up with a sequence number view: this is the received sequence number, in bytes, as a function of the time since the connection start. The first triangle is when we actually start to download data, and the second triangle at the top is when we finish downloading the page. We will come back to that later, but the first bytes arrive earlier with QUIC, though not by much.

Also, if we look at the derivative, that is, the throughput, we can see that with the PEP we have a very high and stable throughput. We get up to speed directly, because we know our conditions. With QUIC, the Google QUIC congestion control doesn't know that it is running over a satellite link, so it takes a while to actually come up to the available bandwidth.

I

This is part of the things we don't understand, but we want to be honest. When we look at the different loads, we see a huge disparity in the Chrome no-QUIC page load time. Basically everything that's happening in the response is strange. That may be due to the channel capacity or to the way this access is done; there are lots of things happening, so we don't actually know, and we couldn't dig into the details.

I

The thing is, basically, QUIC is doing better here. I don't know how much time I have left, if it's fine. But basically we can see that the fact that the first bytes of data arrive faster with QUIC is a huge gain in this case. Again, there are a lot of things we don't understand. We just wanted to report some results and share the code we have, so that anyone who wants to replicate this with any other kind of access can take the code and try it out.

I

So, the conclusion: for small files, QUIC is winning even over satellite, because the very first data bytes arrive earlier. For large files, split TCP wins, and the big issue is how to get up to speed with QUIC in this case. The paper is here, and we are here for any discussion on it. Whoever wants to can jump in now; that's my last slide.

J

That's a pretty large number by Internet standards, at least in my experience. Our numbers indicate that a median congestion window for a typical connection is somewhere around 20 to 40 for most users, so you're two orders of magnitude off the typical user. Combine that with the longer RTT, and slow start is going to take a while to fix that. So I'm very interested to see what you might come up with to make this situation better.

J

I think there might be some things on the server side we could potentially do, but this is one of those cases where end-to-end is actually worse, because you have a network that's so different from the typical network, and web connections are typically relatively short.

I

Thanks. As for the small solutions we may have: we already have measurements showing that just increasing the initial congestion window a lot helps. So if we can just pace a very large congestion window over the RTT, that already helps a lot. We're not aiming for something that we know would be impossible, but we will try to find some small tricks that would help us, or not. Yeah, I think we did one IW.

L

M

Wes Hardaker, ISI. Some 15-plus years ago, which means that the data is no longer valid, I actually looked into studying UDP versus TCP, long before QUIC. One of the conclusions that I came to... Specifically, I was looking at satellite links and things like that, which at the time were really lossy, like 33% lossy. I suspect that that's gotten better and my knowledge of the situation has gotten worse.

I

We are working on GEO satellite fixed access, where the loss ratio is lower than in LTE and Wi-Fi. We have very high delay, but we have no loss now, with quasi-error-free transmission. But when it comes to satellite constellations or mobile users, that's not the same: we have bursts of losses, so the pattern of losses is not the same depending on the case. But we are focusing on GEO broadband Internet access. Right.

M

N

I think it would be really useful to also try to take some measurements of the effects that those large initial windows have on low-latency traffic on the other side of the hop, because you're going to be very unresponsive to congestion events then. I think that not only documenting the performance increase that you'll see over the satellite link, but also looking at how bad you are making it for everybody else who's sharing the network on the other side, is going to be part of the story.

I

The large initial congestion window: I mean, we can increase it a lot if we pace it. If you send one packet every five milliseconds during 500 milliseconds, you have already sent lots of data without introducing too much burst into the network. So that's one thing we work on. It's true that we don't have the same initial congestion window as in LTE.

As for the point about latency-sensitive traffic: we have end users with SLA contracts and requirements for latency-sensitive traffic, so we have quality-of-service management where we actually prioritize this kind of traffic, so that it has low latency and is not affected by the problem. Then there is the question of how your congestion control is affected by the quality-of-service management you have underneath, but that's a generic topic, not only specific to our case, I believe.
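The pacing example given above (one packet every five milliseconds during 500 milliseconds) can be put into numbers. This is only back-of-the-envelope arithmetic: the 1500-byte packet size is an assumption that does not come from the talk, and `paced_burst` is a made-up helper name.

```python
def paced_burst(interval_ms: int, duration_ms: int, packet_bytes: int = 1500):
    """Return (packets sent, bytes sent, sending rate in Mbit/s) for a paced
    initial window: one packet every interval_ms for duration_ms."""
    packets = duration_ms // interval_ms
    total_bytes = packets * packet_bytes
    rate_mbps = packet_bytes * 8 / (interval_ms / 1000) / 1e6
    return packets, total_bytes, rate_mbps


packets, total_bytes, rate_mbps = paced_burst(5, 500)
```

Under these assumptions the sender puts 100 packets (150 kB) into the network within the first 500 ms at a steady rate of about 2.4 Mbit/s, instead of releasing the same amount as a single burst.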

N

Then the question that I had, going to your environment: when you were talking about your test environment, it sounded like you said that on the satellite link you weren't actually sure whether they were running some sort of TCP optimization, whether they were carrying your UDP packets, your QUIC packets, over some TCP optimization. So do you know for a fact that there is some TCP running in there, or were you just saying that you are uncertain?

N

I

O

[name unclear]. I just thought I'd come up and say, and I don't think it's a controversial point, that I wanted to correct the people who are saying that this is a problem in satellite. It's actually a canary in the cage for what will be the problem as links get faster: bandwidth-delay product is about bandwidth as well as delay.
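To make the bandwidth-delay-product point concrete, here is a small sketch of how many round trips idealised slow start (congestion window doubling once per RTT, no losses) needs before the window covers the path. The link rate of 20 Mbit/s, the 600 ms RTT and the 1500-byte packet size are illustrative assumptions, not figures from the session; only the initial windows of 10 and 60 were mentioned in the talk.

```python
def bdp_packets(rate_bps: float, rtt_s: float, packet_bytes: int = 1500) -> float:
    """Bandwidth-delay product expressed in packets."""
    return rate_bps * rtt_s / (8 * packet_bytes)


def slow_start_rounds(initial_window: float, target_window: float) -> int:
    """Number of RTTs of per-RTT doubling before cwnd reaches the target."""
    rounds, window = 0, initial_window
    while window < target_window:
        window *= 2
        rounds += 1
    return rounds


target = bdp_packets(20e6, 0.6)           # assumed GEO-like path
rounds_iw10 = slow_start_rounds(10, target)
rounds_iw60 = slow_start_rounds(60, target)
```

With these assumed numbers the path holds about 1000 packets, so a sender starting at 10 packets needs 7 round trips (over 4 seconds at 600 ms each) to fill it, and one starting at 60 packets needs 5. The same arithmetic applies to a short-RTT path once its bandwidth is high enough, which is the point being made about bandwidth as well as delay.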

I

Basically, since we have more time, here is something slightly different. I didn't want to make too much noise about it, but we are here. We are a public institute, so we believe a lot in open source. So we have this tool for running experiments: we use lots of available open-source tools to orchestrate them and produce results.

I'm not speaking about having a common TCP evaluation suite document in the IETF; it's just that we have tools to experiment with lots of things, like TCP fairness. So whenever we have a new congestion control we want to try out, we just put it in this box, and we have lots of scenarios we can run. We think it is useful for the community, because we always complain about how to evaluate the fairness or performance of different congestion controls.

I

P

A

Q

Hello. You probably really hate it when someone is presenting somebody else's work, because they don't know in deep detail what it is about, and this is one of those cases. I will be presenting on behalf of two colleagues, Roberto Morabito and [name unclear]. The topic is vehicular communications and how different application-layer protocols may affect them. You have all the information in the reference below; this was presented at the IEEE CSCN conference this year.

Q

So, let's start with the purpose. The main idea is to see whether MQTT, CoAP or HTTP, in the vehicular network, might affect the performance, and also whether using edge or cloud to provide the service may affect the performance. We use vanilla MQTT, CoAP and HTTP. That means MQTT over TCP, with the QoS level, which also affects the results you will see later on, and with HTTP we do not use QUIC, which probably would have shown much better results.

Q

Please,

playing

mqt

TCP,

co-op,

UDP

and

HTTP

TCP

as

well

in

the

scenario

is

rather

simple,

so

we

have

vehicle

a

car

with

an

onboard

unit

that

is

contacting

an

inert

P

a

base

station

relatively

close

to

the

base

station.

We

have

the

edge

server

that

is

provisioned

with

the

same

services

as

in

the

cloud.

We

do

not

modify

the

base

station

in

any

way

or

we

don't.

Q

Q

The

setup

is

done

in

Finland,

so

we

have

for

future

work.

We

would

like

also

to

check

vehicle

vehicle

to

vehicle

communication

as

well,

so

we

will

need

more

edge

entities.

At

the

moment,

we

have

only

one

edge

entity

which

is

located

nearby

the

path

of

the

vehicle

the

car

will

connect

to

the

edge

entity

when

is

nearby,

and

it

will

connect

to

the

cloud

through

the

normal

mobile

operator

when

it's

not.

The

data

center

is

in

Lund

in

Sweden

about

850

kilometers

away

and

yeah.

Q

So

the

the

participating

entities,

so

we

have

this

system

with

the

data

center.

So,

as

I

said,

that's

in

Lund,

we

run

OpenStack

and

inside

we

have

a

VM

with

the

set

of

software

and

protocols

that

are

required

to

send

and

receive

data

payload

size,

rather

small

about

11

kilobytes.

Not

not

so

small

in

the

IoT

scenario,

of

course,

but

relatively

small

for

other

environments.

The

edge

entity

is

a

Dell

server,

d5500

15

gigabytes

of

RAM

pretty

powerful.

Q

Q

So

it's

we

are

running

it

on

the

normal

network

infrastructure

in

Finland,

and

the

same

thing

goes

for

the

mobile,

which

is

the

mobile

operator

DNA,

and

no

specific

tweaks

there

and

the

onboard

unit

is

a

Raspberry

Pi,

3,

running

Raspbian

and

again

running

a

CoAP

and

MQTT

and

HTTP.

It

is

connected

to

a

Sixfab

shield

and

we

have

a

4G

LTE

module

and

onion

is

transmitting

there.

This

so

very

simple

setup.

It's

unit

on

board,

sorry,

edge

server

on

board

unit

and

the

cloud

side.

Q

Q

Also,

as

I

will

continue

later

on,

we

do

comparison

of

the

various

application

layer

protocols,

but

in

the

future

we

will

also

look

into

a

bit

larger

size

payloads

and

how

does

the

edge

operate

in

indoor

environment?

We

haven't

finished

the

empirical

evaluation

there

and

we

also

test

with

all

the

radio

interfaces

other

than

4G,

so

locally.

Q

We

would

like

to

use

Wi-Fi

and

for

vehicle-to-vehicle

Wi-Fi

802.11p.

Moreover,

later

on

also,

we

will

test

with

other

application

layer

protocol

modifications,

just

like

we

did

for

MQTT

QoS

settings

so

other

than

what

I

already

mentioned.

We

would

like

to

we

check

on

all

the

factors

like

the

vehicle

speed,

the

number

of

clients

and

again

the

QoS,

so

on

kind

of

repeating

the

same

thing.

Q

The

background.

Alright,

just

I

didn't

check

this

like

before,

but

here

you

can

see.

Basically,

the

setup

we

have

for

HTTP,

CoAP

and

MQTT,

so

the

architecture

of

CoAP

is

client

to

server

RESTful

type

of

interaction.

The

CoAP

server

is

running

on

the

device,

the

CoAP

client

on

the

cloud,

although

in

practice

it's

device

to

device

with

a

peer-to-peer

setup,

it

has

a

bit

of

a

larger

header

size

compared

with

HTTP

as

well.

Q

No

sorry

compared

with

MQTT.

The

paradigm

of

communication

between

MQTT,

HTTP

and

CoAP

is

a

bit

different.

MQTT

is

designed

for

pub

sub

type

of

communication.

However,

CoAP

also

supports

the

same

kind

of

pattern

with

observe

option.

And

HTTP

is

also

RESTful,

then

on

the

semantics.

So,

basically

yeah.

There

are

different

methods

to

do

similar

operations.

We

do

the

basic

one

so

get

post,

put

delete

basic

operations

on

resources

on

the

devices

and

similarly

basic

operations

when

it

comes

to

the

MQTT

side,

so

connect/disconnect,

publish/subscribe

and

so

on.

Q

There

is

a

bit

of

a

larger

handshake

if

you're,

using

TCP

that

is

not

contemplated

here,

but

yeah

so

that'll

be

basically

it

on

the

QoS

side,

as

I

mentioned.

I

can

go

on

later

on

it,

but

basically

QoS

0

has

no

delivery

guarantee,

QoS

1

is

basically

that

at

least

the

message

has

arrived

once,

and

QoS

2.

There

is

a

very

high

guarantee

that

the

message

has

arrived

one

time,

but

it

implies

Q

that

there

are

four

messages

of

overhead

and

that's

pretty

much

it

I

mean

it

is

well

known

already.
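
The QoS levels described above can be summarized with a minimal sketch. It assumes the standard MQTT 3.1.1 publish flows (PUBLISH alone for QoS 0, PUBLISH/PUBACK for QoS 1, PUBLISH/PUBREC/PUBREL/PUBCOMP for QoS 2). The names `QOS_FLOWS`, `packets_per_message` and `relative_overhead` are illustrative and not taken from the talk or from any MQTT library.

```python
# Control packets exchanged for one application message at each MQTT QoS level.
# Assumption: standard MQTT 3.1.1 publish flows; this is not code from the study.
QOS_FLOWS = {
    0: ["PUBLISH"],                                  # at most once, no acknowledgment
    1: ["PUBLISH", "PUBACK"],                        # at least once
    2: ["PUBLISH", "PUBREC", "PUBREL", "PUBCOMP"],   # exactly once
}


def packets_per_message(qos: int) -> int:
    """Number of control packets needed to deliver one message at this QoS."""
    return len(QOS_FLOWS[qos])


def relative_overhead(qos: int, baseline: int = 0) -> float:
    """Packet count at `qos` relative to the packet count at `baseline`."""
    return packets_per_message(qos) / packets_per_message(baseline)


if __name__ == "__main__":
    for level in sorted(QOS_FLOWS):
        print(level, packets_per_message(level), " -> ".join(QOS_FLOWS[level]))
```

The four-packet flow at QoS 2 is one plausible reason for the lower throughput reported for that setting, although the sketch counts packets only and says nothing about the measured numbers.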

For

the

evaluation,

So

the

set

up

is

on

the

protocol

side

we're

running

Mosquitto,

which

is

very

well

known.

It

has

a

broker,

it

has

a

client

server.

It

has

a

benchmark

tool

that

allows

you

to

edit

the

QoS,

and

source

code

to

do

modifications

on

the

broker

as

well.

Q

Q

So

then,

as

far

as

empirical

results

go

so

for

the

vehicle

speed.

Sadly,

we

couldn't

test

at

really

high

speeds.

The

variability

was

only

from

30

to

50

kilometers

per

hour

because

of

the

speed

limitations.

So

obviously

there

was

no

correlation

I

believe

probably

I

mean

we

will

need

to

run

this

in

another

place

where

the

speed

limits

are

a

bit

higher,

but

at

least

you

can

see

some

interesting

results

as

far

as

performance

goes

in

which,

basically,

the

CoAP

outperforms

in

terms

of

this

is

when

they

grab

it.

Q

On

the

on

the

left

side,

you

have

the

throughput

in

messages

per

second

and

on

the

right

side

you

have

the

latency

in

milliseconds

and

in

the

bottom

you

can

see

how

the

edge

and

the

cloud

perform

in

each

of

the

cases.

So

you

can

see

there

that

the

performance

when

it

comes

to

core-

yes,

just.

R

R

Q

Use

a

confirmable

messages,

and,

and

that's

it

confirmable

messages.

There

was

not.

The

acknowledgment

was

not

confirmable,

so

you'll

be

matching,

I

would

say

quality

of

service

one,

but

we

don't

really

have

that

terminology

in

CoAP

I

should

that

would

be

something

interesting

for

further

work

as

well

to

see

how

the

CoAP

could

match

the

MQTT

quality

of

service

terminology

as

well.

Essentially,

you

could

send

confirmable

acknowledgment

so

that

you

receive

the

confirmation

back,

and

you

know

that

it

has

been

received

only

one

time,

so

yeah

so

again,

CoAP

over

UDP

with

the

observe

option.

Q

Sorry

with

the

observe

no,

sorry,

with

the

confirmable

option

on

so

that

you

receive

the

acknowledgment

yeah.
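
To make the confirmable and acknowledgment message types concrete, here is a small sketch of the 4-byte CoAP fixed header as defined in RFC 7252 (2-bit version, 2-bit type, 4-bit token length, 8-bit code, 16-bit message ID). The function names `build_header` and `parse_type` are hypothetical helpers written for illustration; they are not from the talk or from a CoAP library.

```python
import struct

COAP_VERSION = 1
# Message types from RFC 7252: confirmable, non-confirmable, acknowledgment, reset.
TYPE_CON, TYPE_NON, TYPE_ACK, TYPE_RST = 0, 1, 2, 3
CODE_EMPTY = 0x00  # 0.00, used for an empty acknowledgment
CODE_GET = 0x01    # 0.01


def build_header(msg_type: int, code: int, message_id: int, token: bytes = b"") -> bytes:
    """Encode the CoAP fixed header followed by the token (0 to 8 bytes)."""
    if len(token) > 8:
        raise ValueError("CoAP tokens are at most 8 bytes long")
    first_byte = (COAP_VERSION << 6) | (msg_type << 4) | len(token)
    return struct.pack("!BBH", first_byte, code, message_id) + token


def parse_type(header: bytes) -> int:
    """Extract the 2-bit message type from an encoded header."""
    return (header[0] >> 4) & 0x03


if __name__ == "__main__":
    request = build_header(TYPE_CON, CODE_GET, 0x1234)
    ack = build_header(TYPE_ACK, CODE_EMPTY, 0x1234)  # same message ID as the request
    print(request.hex(), ack.hex())
```

A confirmable request is retransmitted until an acknowledgment with the same message ID arrives, which is roughly the at-least-once behaviour the speaker compares to quality of service one.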

What

was

yeah

so

the

throughput

was

about

in

the

in

the

edge

case

for

CoAP

25

messages

per

second,

which

was

almost

in

one

of

the

cases

almost

twice

as

the

messages

per

second

for

HTTP

and

MQTT

performed

a

bit

better

than

HTTP

and

in

terms

of

latency

again

CoAP.

Q

Q

The

difference

is

not

as

dramatic

as

in

this

case

and

as

it

was

expected,

the

cloud

also

had

a

bit

of

a

higher

latency

and

a

bit

of

a

lower

throughput,

so

no

surprises

here

and

then

the

next

one

we

did

so

assuming

that

you

have

a

this

kind

of

onboard

unit

and

this

kind

of

vehicle

connected

to

the

Internet.

You

may

have

different

type

of

services,

so

you

have

an

infotainment

system

with

video.

You

may

have

some

telemetry

being

sent

so

they

have

different

requirements.

Q

So

we

wanted

to

test

not

not

those

requirements

in

particular,

but

having

multiple

clients

connected

at

the

same

time

and

see

how

the

performance

varies

so

again.

On

the

left

side,

the

throughput

on

the

right

side,

the

latency

and

the

as

we

increase

the

number

of

clients

and

the

throughput

greatly

decreases,

especially

in

the

in

the

case

of

HTTP,

so

that

the

tenth

client,

actually

the

throughput,

is

almost

one

third

of

the

first

one.

Q

In

the

case

of

CoAP,

the

throughput

was

much

higher

and

actually

had

a

very

good

efficiency,

because

it

was

only

10%

difference

between

the

first

and

the

last

client

latency

wise.

It

was

pretty

high

for

HTTP

again.

I

believe.

The

fact

that

we

were

using

TCP

has

something

to

do

with

that.

I'm

not

sure

why,

actually,

but

anyway,

it's

something

to

look

into.

But

again

the

latency

was

much

better

for

well

a

slightly

better

for

CoAP

than

for

MQTT

and

much

better

for

both

of

them

than

for

HTTP

in

the

next

one.

Q

Q

We

could

have

used

QoS

1,

and

we

did

I

will

continue

on

that

to

be

a

bit

more

fair,

since

we

were

using

CoAP

UDP

with

plain

confirmable

messages

and

no

equivalent

guarantee

of

delivery,

QoS

2

was

actually

designed

for

satellite

communications.

Actually,

so

it

has

quite

a

lot

of

message

transmission

and

therefore

it

performs

a

bit

worse,

but

it's

the

safest,

obviously.

Q

So

this

was

the

results.

The

throughput

greatly

increased

when

using

QoS

1,

so

QoS

2

greatly

reduces

the

throughput

and,

and

conversely

same

thing

happens

with

the

latency

still

the

results,

if

you

remember

from

the

previous

slide

so

here

the

throughput

for

QoS

1

was

about

17

messages

per

second.

In

the

case

of

CoAP,

it

was

20

24,

25

messages

per

second,

so

still

CoAP

outperforms