
From YouTube: IETF104-NWCRG-20190328-1350

Description

NWCRG meeting session at IETF104

2019/03/28 1350

https://datatracker.ietf.org/meeting/104/proceedings/

A

Everybody, so this is the Network Coding... sorry, the Coding for Efficient Network Communications research group. We are on time, but maybe we can wait one more minute. This is the first afternoon session, so maybe it makes sense to wait just a little bit. The agenda is not totally full: we have 20 minutes of buffer time at the end, so if we need to, we can discuss a little bit more. That's feasible this time.

C

So, good afternoon, everyone. As I was saying, this is the Coding for Efficient Network Communications Research Group. If you're here for this, great. If you're not, you're going to have a great meeting anyway, and you're going to learn about something fantastic. The goal that we repeat at every meeting is that we would like to foster research in network- and application-layer coding to improve network performance. I would say that, looking at the people in this room, a lot of them have been very successful at this.

C

We obviously want to focus on the codes themselves and on the libraries needed to implement them. We want to look at the protocols beyond the codes, the protocols that facilitate the use of coding in existing systems and existing applications. We are always welcoming real-world use cases and work in progress in the field, and you're going to see some of this presented today.

C


So if you go to the wiki by any chance, you will see that you're essentially sent to GitHub. There you'll find all the latest documents and the latest comments, including the code that we've been developing over the weekend. Okay. So the agenda is kind of very simple. After I'm done talking, we're going to talk about what we did on Saturday and Sunday, and we're going to have a quick update on the satellite draft.

C

That one is very much ready to publish now, frankly. We're going to talk about another update, on something that is also very close to being ready to publish, which is network coding for CCN and NDN. We're going to have a fairly extensive presentation from Muriel and Kerim, who are on Meetecho, on basically RLNC, random linear network coding.

C

We want to talk about what's happening with the RLC work, or whatever we now call it. It is related to the SWiF codec and to the related standardization in the transport working group, and we want to raise some of the issues. Obviously, future meetings: we will have another hackathon. We had so much fun that we want to repeat the experience. And by the way, we would like more women to come to the hackathon.

C

I was at the Systers lunch just now. We said that the problem with the hackathon was that you could see huge numbers of men, but very few women. So I encourage more women to come, although I'm speaking to a room here that also does not have a strong number of women. We are obviously going to meet in Montreal. If anybody wants any hints about the city, it's my hometown, so send me an email. And without further delay, we're going to go to the first presentation, which is Nico's, right?

C

E

A


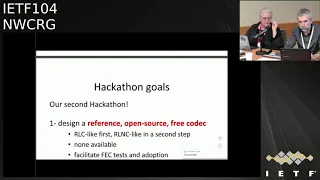

So, as I said, this is the second hackathon for this project. The goal is to design an open-source, reference, and free codec. The first goal is to be able to do an RLC-like codec, and the second goal is an RLNC-like codec. So at first we will only focus on end-to-end communications, with one encoder and one decoder. As a second goal, we will add RLNC network coding, potentially with the capability to do recoding within the network.

A

So that's the goal, and of course the final objective is to make this technology easier to test, to integrate, or to benchmark, to facilitate adoption. Another goal is to challenge our generic API Internet-Draft; I will come back on this aspect. During the first hackathon, at IETF 103, I was almost alone. This time there was a great team. As you can see, it's almost balanced in terms of gender: we had two women, including Marie-José, so it was great.

A

So we made a lot of progress. The main story is that we did not manage to finish the encoder, but we are not very far from the end on the encoder side. We are capable of submitting new symbols, computing random linear combinations of the symbols, and closing the session, even if some tests are still ongoing. We are not very far from the end. And the encoder is the simple part; next time we will have to do the decoder.
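The encoder-side steps mentioned above can be sketched in Python: submitting new source symbols to a window, then computing random linear combinations over that window. This is a hypothetical illustration, not the SWiF codec API. The class and method names are invented for the example. Coefficients are drawn uniformly from the non-zero elements of GF(2^8), and the reduction polynomial 0x11D (x^8 + x^4 + x^3 + x^2 + 1) is an assumption.

```python
import random

# Assumed reduction polynomial for GF(2^8): x^8 + x^4 + x^3 + x^2 + 1.
GF_POLY = 0x11D


def gf_mul(a: int, b: int) -> int:
    """Multiply two GF(2^8) elements (shift-and-add, reduced modulo GF_POLY)."""
    result = 0
    while b:
        if b & 1:
            result ^= a  # addition in GF(2^8) is XOR
        a <<= 1
        if a & 0x100:  # reduce as soon as a leaves the 8-bit range
            a ^= GF_POLY
        b >>= 1
    return result


class SlidingWindowEncoder:
    """Hypothetical sliding-window RLC encoder, for illustration only."""

    def __init__(self, window_size: int, seed: int = 0):
        self.window_size = window_size
        self.window = []  # list of (symbol id, payload), oldest first
        self.next_id = 0
        self.rng = random.Random(seed)

    def add_source_symbol(self, payload: bytes) -> int:
        """Submit a new source symbol; the oldest one leaves a full window."""
        symbol_id = self.next_id
        self.next_id += 1
        self.window.append((symbol_id, payload))
        if len(self.window) > self.window_size:
            self.window.pop(0)
        return symbol_id

    def build_repair_symbol(self):
        """Return (ids, coefficients, payload) of one random linear combination."""
        size = max(len(p) for _, p in self.window)
        coefs = [self.rng.randint(1, 255) for _ in self.window]
        out = bytearray(size)  # shorter symbols are implicitly zero-padded
        for c, (_, payload) in zip(coefs, self.window):
            for i, byte in enumerate(payload):
                out[i] ^= gf_mul(c, byte)
        return [sid for sid, _ in self.window], coefs, bytes(out)


if __name__ == "__main__":
    enc = SlidingWindowEncoder(window_size=3, seed=1)
    for chunk in (b"abcd", b"efgh", b"ijkl", b"mnop"):
        enc.add_source_symbol(chunk)
    ids, coefs, repair = enc.build_repair_symbol()
    print(ids)  # [1, 2, 3]: symbol 0 has slid out of the window
```

A real codec would also signal the window bounds and coefficients (or a seed) to the decoder. In the generic API approach described in this session, that signaling is left to the application.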

A

In fact, we started the decoder part, but it's not yet very well advanced, so we have this as a goal for the next IETF.
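For the decoder side, a common approach is Gauss-Jordan elimination over the received coding vectors. The sketch below assumes a fixed set of k source symbols and GF(2^8) arithmetic with the same assumed polynomial 0x11D. The function names are hypothetical, and the code is not taken from the hackathon codebase. It returns None while the received packets do not yet provide k degrees of freedom.

```python
GF_POLY = 0x11D  # assumed GF(2^8) reduction polynomial


def gf_mul(a: int, b: int) -> int:
    """Multiply two GF(2^8) elements."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= GF_POLY
        b >>= 1
    return result


def gf_inv(a: int) -> int:
    """Inverse of a non-zero element: a^254, because a^255 = 1 in GF(2^8)."""
    if a == 0:
        raise ZeroDivisionError("0 has no inverse in GF(2^8)")
    result, base, exp = 1, a, 254
    while exp:
        if exp & 1:
            result = gf_mul(result, base)
        base = gf_mul(base, base)
        exp >>= 1
    return result


def combine(coefs, sources):
    """Linear combination sum_i coefs[i] * sources[i] over GF(2^8)."""
    out = bytearray(max(len(s) for s in sources))
    for c, s in zip(coefs, sources):
        for i, x in enumerate(s):
            out[i] ^= gf_mul(c, x)
    return bytes(out)


def decode(k, received):
    """Gauss-Jordan decoding of (coefficient list, payload) pairs.

    Returns the k source payloads (zero-padded to a common length), or None
    while the received packets span fewer than k dimensions.
    """
    size = max(len(p) for _, p in received)
    rows = [(list(c), bytearray(p) + bytearray(size - len(p))) for c, p in received]
    for col in range(k):
        pivot = next((r for r in range(col, len(rows)) if rows[r][0][col]), None)
        if pivot is None:
            return None  # not enough degrees of freedom yet
        rows[col], rows[pivot] = rows[pivot], rows[col]
        inv = gf_inv(rows[col][0][col])
        pc = [gf_mul(inv, x) for x in rows[col][0]]
        pp = bytearray(gf_mul(inv, x) for x in rows[col][1])
        rows[col] = (pc, pp)
        for r in range(len(rows)):
            f = rows[r][0][col]
            if r != col and f:  # eliminate this column from every other row
                rc, rp = rows[r]
                rows[r] = ([a ^ gf_mul(f, b) for a, b in zip(rc, pc)],
                           bytearray(a ^ gf_mul(f, b) for a, b in zip(rp, pp)))
    return [bytes(rows[i][1]) for i in range(k)]


if __name__ == "__main__":
    sources = [b"abcd", b"efgh", b"ijkl"]
    # Fixed, linearly independent rows (upper triangular, non-zero diagonal).
    coef_rows = [[3, 7, 1], [0, 5, 9], [0, 0, 11]]
    received = [(c, combine(c, sources)) for c in coef_rows]
    print(decode(3, received) == sources)  # True
    print(decode(3, received[:2]))  # None: only two degrees of freedom
```

A sliding-window decoder would additionally track which source symbols each repair symbol covers and run this elimination incrementally. The sketch only shows the core linear algebra.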

We also wrote a client-server demo application, which is another way to test the codec itself, with threads. We have almost finished this part of the work. We also started a Python wrapper on top of the API, to add the ability to integrate this C codec into Python applications. I won't come back on the design principles of this codec.

A

We already discussed this in the past: the idea is that this is just the core of the FEC codec. Many parts of it are left to the application, so the signaling and all this stuff are managed within the application. So I won't go into these technical details. Something great about this

A

hackathon project is that it also enables us to make progress on the generic API Internet-Draft.

A

As we do the real technical work, we find a few problems. We solved some of them, so we made a few fixes in this Internet-Draft, four or five. We also identified open points, that is to say technical aspects that we need to figure out how to solve in the coming weeks. All of this is documented in this GitHub document.

A

If we can achieve this... but maybe it could be possible. Also, there is a lot of cleanup, all sorts of things to do on the side aspects of this codec, but that's the goal for the next IETF. Then, for IETF 106, we'll start working on the capability that we want to add to this project: extending it to RLNC, to have an RLNC-like solution.

A

So at the end, we will have support both for RLC, purely end-to-end coding, and for RLNC, with the capability to have recoding within the network. So that's all for this hackathon project. I just want to say something else: yesterday there was an email asking for research group adoption of this document, I mean the generic API document. This is something we already discussed at the previous IETF, and there were two positive opinions on adoption.

A


Having this as a research group document does not mean that we necessarily need to publish it as an Informational RFC. That's a side question, which we'll see later on. It's not very usual for the IETF or the IRTF to have API documents, so we will see later on if it makes sense. But anyway, I think it's important to have both works progressing in parallel, since there are major connections between the two. So that's it. If you have comments or questions, please indicate so.

F

Hello, everyone. This is a quick update on what we have been doing on this draft since the last IETF. Next. The only slide is basically this: we had lots of comments at the last IETF. We presented this document in front of the TTN working group as well, to see if anyone wanted to review it. So we had lots of comments from Riderhood and lots of emails exchanged.

F

He had lots of good points, and I'll try to sum up here what we have done. Basically, at the beginning, we wanted to have lots of people on board, speaking about actual deployments of network coding at higher layers in SATCOM systems. We failed to do that, so we have focused more on identifying opportunities. As a result, some wording in the introduction was not really accurate.

F

Also, we had normative references, which are not relevant for a research document. We had an acronym list that was not up to date, and we were not clear on whether physical-layer coding was involved or not. So, basically, we removed any mention of physical-layer coding. We haven't had much feedback since. If the draft is still wanted for publication as an RFC, we believe it is ready.

A

So, if nobody objects, maybe we can start a research group last call on this. We'll see that on the list anyway, since the three-week research group last call takes place on the list. Hopefully people will come and comment; I will anyway. Does anybody intend, or know, that they will read and comment on this document during this last call? How many people? Yes? Great. Okay, so at least two, including me. That's great: that's the minimum. I hope there will be more people, but anyway.

G

E

H

Hello, I'm Matsuzono. Let me give a quick update on network coding for CCN and NDN: requirements and challenges. First, a summary of this draft. The main objective is to introduce research results related to this topic.

H

By doing so, we hopefully provide useful guidance for anyone who tries to implement network coding in CCN and NDN. This draft now focuses on the requirements, so describing specific solutions and mechanisms is out of the scope of this draft. Actual protocol proposals will be done in another draft.

H

Regarding the main update, we addressed missing content. For example, we added a backward compatibility section, because there was no description of these requirements. We describe network coding compatibility with existing network operation, with consideration for migration. We also added content regarding security, privacy, and routing in the challenges section. This covers network coding operations and in-network caching in relation to content poisoning and cache pollution attacks. It also notes that network coding may allow encoding vector modification by attackers.

B

E

I

A

J


My suggestion is to just go ahead and do that now, and then we'll send that draft around. I'll make sure that it gets some review in the ICNRG, to see if there are any further things that the ICNRG and the NWCRG think. The goal is to turn it into a last call well before the next IETF. Let's see if we can turn this around in a month or two. Does that make sense? Thank you. Thank you.

C

K


This document contained a large background section and the symbol representation. It was focused on symbol representation, and it still is. We submitted two updates to that document, separating the background information from the symbol representation itself. One is now a complete RLNC background document. The second one, updated to version 01, is dedicated to the representation. This is collaborative work with Code On and others, and we are speaking on behalf of all the authors.

L

K

Moving to the agenda slide, then: as I mentioned, we split the document into the background information and the symbol representation itself. Just so you know, the 00 and 01 updates bring new definitions and two important sections; I'll cover them. Then, for the next version, we need to look at modifications, mostly from comments from the list. And of course, if you guys have comments on this, we will add them and respond to them. So, going to slide 3.

K

So, the first document, informational, is, as I said, a general background. It's supposed to be on RLNC; that's the goal, and it's a document in development. Right now it's really focusing on the background required to read the symbol representation. An important section there is the one that presents symbol representation as an important standardization target; we actually argue for that in this document. In the second document, which is the symbol representation specification itself, we added definitions and improved the figures. Most of these comments come from...

K

So, on the definition of symbol representation, we really spelled out the definition. You see there's a slight difference, but it's basically the same. We are talking about the little units carrying data; that means this is what you're going to have on the wire. It is not, of course, what's going to be used in the encoder or the decoder; that's different. So if you guys have any comments on this definition,

K

this is a good time to say so. A disclaimer here: most of the points I'm going to make after this really touch upon some of the comments on the background section. I'm also going to cover what we added in the background section, the section about symbol representation. As you see here, the figure is really the fundamental concept of the experimental draft, which is the second document, now at version 01.

K

B

K

I'm on slide 5 now. The major argument for standardizing symbol representation, according to us, is that there is a lot of flexibility in RLNC, so we really need the standard. I mean, that's for sure, but once we have it, it's going to be highly reusable. The point is, of course, that RLNC is very dynamic: the structure is dynamic, and the symbol set is very reconfigurable. Examples of reconfigurability are given.

K


You can send a seed, which is something completely different. You can also do an indexed representation: if you have just a few coefficients, you can really juxtapose them one next to the other, each with an index indicating which symbol it refers to. So there's a lot of flexibility. The reason for this flexibility is that RLNC-coded symbols can be operated on.

K

You can use linear operations on them without necessarily knowing their location. Once you put them there, it's the same operations, tailored for the positions themselves, which is a really unique aspect that RLNC adds. That was point one. Point two, of course: the number of coefficients is quite dynamic, and so is the number of symbols. You can have dense codes, you can have sparse codes, and the sparsity can even be dynamic itself.

K

The third point of flexibility is the symbol size. Clearly, you can have larger symbols or smaller symbols. I will actually define some of these concepts later, because we've had questions about this. Fragmentation, padding, and even encapsulation are standard procedure everywhere. If you're expecting anything like that in your network, this flexibility is very useful. And finally, the field: even the field, which you can think of as something fixed in most applications, can be flexible.

K


You can have flexible fields, and one of the interesting things about RLNC is that the same operations can hold for different fields. That's another discussion. So this is what I have on this slide. On slide 6, I was going to tackle just one example: as we said, network operations will be affected by the symbol representation.

K

An example is fragmentation, and it's very simple: either you know where your coefficients are, or you don't. If you know them, you can separate the coefficients and do the fragmentation. That's what you see in the figure. After fragmentation, you can actually run recoding and modify the code on the different fragments, and then you can do the decoding. The case where the coefficients are unknown can also happen.
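The fragmentation case where the coefficients are visible can be sketched as follows. Assume, hypothetically, that each fragment carries a copy of the coding vector. An intermediate node can then recode fragments by mixing them with fresh random weights, without decoding anything, and by linearity the recoded payload stays consistent with the recoded coefficients. The layout, the GF(2^8) polynomial 0x11D, and the function names are illustrative assumptions, not the format in the symbol representation draft.

```python
import random

GF_POLY = 0x11D  # assumed GF(2^8) reduction polynomial


def gf_mul(a: int, b: int) -> int:
    """Multiply two GF(2^8) elements."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        if a & 0x100:
            a ^= GF_POLY
        b >>= 1
    return result


def lin_comb(weights, vectors):
    """Element-wise sum_j weights[j] * vectors[j] over GF(2^8)."""
    out = [0] * len(vectors[0])
    for w, vec in zip(weights, vectors):
        for i, x in enumerate(vec):
            out[i] ^= gf_mul(w, x)
    return out


def fragment(packet, frag_len):
    """Split a coded packet with visible coefficients into smaller coded packets.

    Hypothetical layout: every fragment keeps a copy of the coding vector, so
    each fragment is still a valid coded symbol on its own.
    """
    coefs, payload = packet
    return [(list(coefs), payload[i:i + frag_len])
            for i in range(0, len(payload), frag_len)]


def recode(packets, rng):
    """Mix coded packets with fresh random weights, without decoding them."""
    weights = [rng.randint(1, 255) for _ in packets]
    new_coefs = lin_comb(weights, [c for c, _ in packets])
    new_payload = lin_comb(weights, [p for _, p in packets])
    return new_coefs, new_payload


if __name__ == "__main__":
    rng = random.Random(42)
    sources = [[1, 2, 3, 4], [5, 6, 7, 8]]  # two 4-byte source symbols
    coded = []
    for _ in range(2):
        c = [rng.randint(1, 255) for _ in sources]
        coded.append((c, lin_comb(c, sources)))
    # Fragment each coded packet into 2-byte pieces and recode the first pieces.
    first_pieces = [fragment(p, 2)[0] for p in coded]
    coefs, payload = recode(first_pieces, rng)
    # By linearity, the recoded fragment matches its coefficients applied to
    # the first two bytes of each source symbol.
    print(payload == lin_comb(coefs, [s[:2] for s in sources]))  # True
```

If the coefficients were not visible, the node could not compute new_coefs. It would have to add its own coefficients on top, which is the extra coding layer described next.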

K

I've also received comments on that on the list and replied to some of them. Suppose you have a file which was precoded at a different layer: you know it's coded, but you don't know where the coefficients are. Then, if you need to do coding on the different fragments, you basically have to add new coefficients; that's what we call adding a new layer. You don't use the same coefficients. You're adding another layer of RLNC that you need to deal with, of course, at the decoder.

K

Okay. So if there are questions here, we're happy to respond. I'm moving to slide seven. So here's slide seven. It's still arguing for the standardization of symbol representation. There was a figure similar to this one in Janus's presentation in London. As Janus mentioned, symbol representation can of course be reused by multiple protocols. Symbol representation also affects the RLNC architecture, including the topology that you can use.

K

Alright, we've received some questions on this too. One is architecture, of course, the coding-related architecture: where you put the coding. You can be using the coding for routing, or you can just do the encapsulation, as mentioned earlier. These aspects are affected by where the coefficients are, for example, and by other aspects. On the topology side, we are talking about the logical coding topology. A good example is whether you need to do recoding or not: if you need to do recoding, then the coefficients need to be apparent.

B

K

Now I'm moving to the next section, an overview of suggested changes, on slide 8. We have received multiple comments on this from Dave and Salvatori, mainly Salvatori, thanks, and also from Dave Oran, thank you very much. On the background draft, we're looking at substantial changes. There'll be a bunch of definitions that we will add. A lot of networking terminology needs clarification or correction. They've also mentioned trade-offs related to coding parameters.

K

We talked about that a little bit. And of course, there is the security section; we'll discuss what we put in the security section of the RLNC draft. On the second document, the symbol representation, it's really just some clarification of the definitions that are required there. So again, if you have more comments on them, send them to the list. I'm going to slide 9, just an overview of the kind of definitions we're going to be looking into. But first, before the definitions: networking terminology.

K

So, yes, there is a little bit of wishy-washy use of the word "connection", as I mentioned in an email just earlier. The same goes for link versus channel, and losses versus errors. Most of this stuff really comes from the different backgrounds of people, such as communication theory, or maybe information theory, versus networking, each with different terminology. So I'm going to say from the get-go that yes, we're going to look at the taxonomy document, RFC 8406, and maybe connect our document with it.

K

We will refer to it a little bit more. Take some of the terms that I think we're going to be using. We're going to say "field elements" rather than "symbols", since "symbol" is used in communication theory to mean something related to modulation. So it really means field elements here, and we're going to use "field elements". What we mean by "symbol" here is more a network coding implementation term: really, the coded data unit. Then there's "raw data"; yeah, Janus mentioned that.

K

This is a good term, I think. Raw data means anything like uncoded, systematic, source symbols, input symbols, or application data. All of these have been used, so check the taxonomy, but we'll probably be using "raw data". So again, as I mentioned, the representation is what goes on the wire, which is different from the input you can have. So this is not necessarily the data structures in the nodes; it's really what's on the wire. We agree on that.

K


There are two other aspects we'll be mentioning on the next slide. One is the coding vector, which comes back to a point from Salvatori. The other is the concept of hidden coefficients, which is related to security. So, slide 10, on the coding vector; I'm just going into a little bit of detail. Yes, I already explained all of this. The raw vector is the coding vector. The coding vector is really what is used in the encoder and the decoder.

K

What we mean by coding vector is really what you have there on the left: it's the full vector, including as many zeros as needed. If you have sparse coding, it's definitely not going to be sent on the wire like that; it doesn't make sense for a sparse code. There are different representations that you can use, and that's actually an important aspect of the second draft that you can look at. So I think I mentioned all of that. Yes, yeah.

K

There are really two main aspects for the representation. You need to have the value of each coefficient, but you also need the mapping between each coefficient and the symbol it's related to. Any way you devise of doing that is a representation. And yes, Muriel is pointing to the fact that there are examples of representations. If you're using dense coding, you send the raw vector, which is the whole thing. If it's sparse, you can just go with coefficient value and symbol index pairs.
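Here is a minimal sketch of two ways to carry the same coding vector over GF(2^8), to make the dense versus indexed trade-off concrete. The dense form uses one byte per coefficient, zeros included. The indexed form lists (symbol index, coefficient) pairs for the non-zero entries only. The 16-bit index and 8-bit coefficient widths are assumptions chosen for illustration, not the header format of the draft.

```python
import struct

# Assumed field widths, for illustration only: 16-bit symbol index, 8-bit coefficient.
PAIR = struct.Struct("!HB")


def encode_dense(coding_vector):
    """Dense form: one byte per coefficient, zeros included (GF(2^8) assumed)."""
    return bytes(coding_vector)


def decode_dense(data):
    return list(data)


def encode_indexed(coding_vector):
    """Indexed form: (index, coefficient) pairs for the non-zero entries only."""
    return b"".join(PAIR.pack(i, c) for i, c in enumerate(coding_vector) if c)


def decode_indexed(data, k):
    """Rebuild the full length-k coding vector from indexed pairs."""
    vector = [0] * k
    for index, coef in PAIR.iter_unpack(data):
        vector[index] = coef
    return vector


if __name__ == "__main__":
    k = 100
    sparse = [0] * k
    sparse[3], sparse[41], sparse[97] = 7, 200, 1
    print(len(encode_dense(sparse)), len(encode_indexed(sparse)))  # 100 9
    assert decode_indexed(encode_indexed(sparse), k) == sparse
    assert decode_dense(encode_dense(sparse)) == sparse
```

For a sparse vector over 100 symbols with three non-zero coefficients, the dense form takes 100 bytes and the indexed form 9 bytes. For a fully dense code, the indexed form would be three times larger than the dense one, which is why the best representation depends on the code.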

K

The document... and yes, you can send the seed, which is also specified in the symbol representation draft. So that depends on the coding trade-offs.
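The seed alternative replaces the coefficients on the wire with a PRNG seed from which both ends regenerate the coding vector. In the sketch below, Python's built-in `random.Random` (a Mersenne Twister) stands in for whatever generator a protocol would actually specify. The function name and the optional density parameter, used to obtain sparse vectors, are hypothetical. The essential assumption is that encoder and decoder run exactly the same generator and procedure.

```python
import random


def coefficients_from_seed(seed, k, density=1.0):
    """Regenerate a length-k GF(2^8) coding vector from a seed.

    random.Random (Mersenne Twister) is only a stand-in for the PRNG a real
    protocol would mandate. Both ends must run the same generator and the same
    procedure, in the same order, or they will disagree on the coefficients.
    density < 1.0 yields sparse vectors (hypothetical parameter).
    """
    rng = random.Random(seed)
    vector = []
    for _ in range(k):
        if rng.random() < density:
            vector.append(rng.randint(1, 255))  # non-zero coefficient
        else:
            vector.append(0)
    return vector


if __name__ == "__main__":
    # Sender side: pick a seed and put only the seed (plus k) on the wire.
    seed, k = 12345, 16
    sent = coefficients_from_seed(seed, k, density=0.25)
    # Receiver side: regenerate the identical coding vector from the seed.
    received = coefficients_from_seed(seed, k, density=0.25)
    print(sent == received)  # True
```

The trade-off is that the seed is compact, but the coefficients are no longer directly visible. Any node that wants to recode must know and run the same generator, which ties the representation to the PRNG choice.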

So that's an important point. We have to say that we tried to avoid this a little bit, because we didn't want to go into that discussion, but these trade-offs are related to many of the parameters that are explained. So we agree, and I think we're going to mention at least the fundamental trade-offs. So, basically, the field size.

K

Having a larger field size means higher diversity: dependence is less likely, but the complexity is higher. That's a basic trade-off. Also, if you have a smaller field size, you'll probably need to send more redundancy, because of the higher probability of linear dependence. We're working on that. I think that's an important trade-off, and a fundamental one.
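The field-size trade-off can be quantified with a standard result. Assume each coefficient is drawn uniformly at random from GF(q), zero included. Then a k x k coefficient matrix is invertible with probability prod over i = 1..k of (1 - q^-i). The expected number of received packets needed to reach rank k is the sum over j = 1..k of 1 / (1 - q^-j). The sketch below simply evaluates both expressions. The uniform-coefficient assumption is ours; real codes that avoid zero coefficients behave slightly differently.

```python
from math import prod


def prob_full_rank(q: int, k: int) -> float:
    """P[k x k matrix of i.i.d. uniform GF(q) entries is invertible] = prod_{i=1..k} (1 - q^-i)."""
    return prod(1.0 - q ** -i for i in range(1, k + 1))


def expected_extra_packets(q: int, k: int) -> float:
    """Expected packets beyond k needed to reach rank k with uniform coding vectors.

    When the current rank is r, a new uniform vector is innovative with
    probability 1 - q^(r - k), so the expected total is sum_{j=1..k} 1 / (1 - q^-j).
    """
    return sum(1.0 / (1.0 - q ** -j) for j in range(1, k + 1)) - k


if __name__ == "__main__":
    k = 32
    for q in (2, 16, 256):
        print(f"q={q:3d}  P(full rank)={prob_full_rank(q, k):.4f}  "
              f"extra packets={expected_extra_packets(q, k):.4f}")
```

With q = 2, the expected overhead approaches about 1.6 extra packets as k grows. With q = 256, it stays below 0.004, at the cost of more expensive field arithmetic.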

Then there's the symbol size and the generation size. The generation size one is pretty clear: the larger the generation, the higher the latency.

K

B

K

So that's important: there is a latency-throughput trade-off there that we can explain, and there's extensive literature about this too. And, of course, there is the granularity of your redundancy. The larger the generations are, and the larger the window is, the more specific you can be with your feedback.

K

Sorry, I mean with your redundancy. If you lose one packet, for example, you can have better, finer granularity, and we will explain that in the document. I'm looking at the time, and frankly I need to move on. There are other trade-offs we might touch on, but we don't think they are really necessary at this point. One is, of course, whether you use a block code or a sliding window. That really is a design issue for the application; we might brush on that.

K

L

This would be an interesting topic. There's a huge amount of work that has been done on it, by this group and others, and we won't survey it here. Really, what we're concentrating on is, again, just how this connects to the representation issue. So this is not a comprehensive view of security. Our initial assumption is that we're looking at the security aspects within the coding layer, so we're really just focusing on the coding-operational

L

aspects, the coding-specific ones. Network coding, of course, operates by allowing the mixing of data, and the natural question is: what are the security consequences? One, are there extra vulnerabilities that open up? But two, what are the extra possibilities that this provides? One of the things it allows is, of course, data hiding. Encoded data, in the absence of decoding, is actually very well protected. This is related to a sort of Shannon-style security.

L

It also relates to post-quantum security, with strong applications such as, basically, trusted storage over untrusted networks. What people sometimes worry about, because of the mixing, is the issue of pollution attacks, or more generally Byzantine attacks. These are insider attacks, inside the network, in a P2P system, or when you're recoding, if one of the intermediate routers is compromised. The possibilities then range from detection to correction. Some of them affect the representation, some of them do not.

L

There is work on that. The other aspect, of course, is verification. I just want to say, for Byzantine attacks, that of course you can do detection either end to end or at intermediate nodes. That includes nodes that don't have enough degrees of freedom to decode. You can also do correction by combining it with an error-correcting code.

L

Also, verification is something that touches upon homomorphic encryption. I just want to point out that you don't necessarily need the more sophisticated elliptic-curve verification with encryption; the network coding aspect fits very well with homomorphisms. Basically, what you need is a homomorphism over the linear operations, and very simple discrete-log systems are perfectly compatible with that.
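The remark that simple discrete-log systems are compatible with network coding can be illustrated with homomorphic hashing. With H(x) = prod over j of g_j^(x_j) mod p, the hash of a linear combination equals the same combination of the hashes. A node holding trusted hashes of the source vectors can therefore check a coded packet without decoding it. The sketch below uses toy parameters (p = 2039, with a subgroup of prime order q = 1019) that are far too small to be secure. It also assumes coding over the prime field GF(1019) rather than GF(2^8), so that the coefficients match the group order. All names are hypothetical.

```python
# Toy parameters, far too small to be secure: P = 2*Q + 1 is a safe prime, and
# the squares modulo P form a subgroup of prime order Q.
Q = 1019
P = 2 * Q + 1  # 2039


def make_generators(n):
    """n elements of the order-Q subgroup (squares of 2, 3, ... modulo P)."""
    return [pow(h, 2, P) for h in range(2, n + 2)]


def hash_vector(gens, vector):
    """Homomorphic hash H(x) = prod_j g_j^(x_j) mod P, with x_j in GF(Q)."""
    h = 1
    for g, x in zip(gens, vector):
        h = h * pow(g, x % Q, P) % P
    return h


def combine_vectors(coefs, vectors):
    """Network-coding operation on payloads: sum_i c_i * x_i over GF(Q)."""
    return [sum(c * v[j] for c, v in zip(coefs, vectors)) % Q
            for j in range(len(vectors[0]))]


def combine_hashes(coefs, hashes):
    """prod_i H(x_i)^(c_i) mod P, which equals H(sum_i c_i * x_i)."""
    h = 1
    for c, hx in zip(coefs, hashes):
        h = h * pow(hx, c % Q, P) % P
    return h


def verify(gens, trusted_hashes, coefs, payload):
    """Check a coded packet against trusted source hashes, without decoding."""
    return hash_vector(gens, payload) == combine_hashes(coefs, trusted_hashes)


if __name__ == "__main__":
    gens = make_generators(4)
    sources = [[1, 2, 3, 4], [10, 20, 30, 40], [7, 0, 7, 0]]
    trusted = [hash_vector(gens, s) for s in sources]  # distributed out of band
    coefs = [5, 17, 300]
    payload = combine_vectors(coefs, sources)
    print(verify(gens, trusted, coefs, payload))  # True
    payload[0] = (payload[0] + 1) % Q  # a polluted packet
    print(verify(gens, trusted, coefs, payload))  # False
```

The check needs the coefficients in the clear, so hiding them, as with the hidden-coefficient concept mentioned earlier, interacts directly with this kind of in-network verification.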

So again, you know,

L

K


that needs to be looked at. RFC 8406 is the taxonomy. Maybe a couple more references on related symbol representations. There's also a question on the code, right? We saw that from Salvatori. We will definitely respond to all of these. And of course, on the last slide: if you have any comments, questions, or suggestions, do not hesitate to send them to the list, or just come to the microphone and tell us. That's it.

A


On slide 5, you mentioned that there is this dynamic number, K, of coefficients and symbols. Yes. But if I'm looking at the header, I see a four-bit field, meaning that you cannot exceed 15 or 16, depending on how you count. Okay, so is it less flexible? It really depends on what you want to achieve, with which field size, things like that. That much, yeah.

K

B

K

A

Yep, which leads to another comment. It's great to have a full specification of the ideas, but if we want to go in that direction, we should be careful not to close doors by being too specific on this aspect. It's a difficult exercise to keep the flexibility of RLNC that you mentioned, which I fully agree with. At the same time, you want to specify something very detailed in terms of header format. It's a difficult exercise.

K

Yeah, but again, I mean, this is one simple representation. What I said in Montreal is that we might end up with a couple, three or four different simple representations, each geared to a class of applications. So, I mean, it doesn't have to be full flexibility, and it doesn't have to be too rigid either. So yeah, definitely a discussion for the list. We agree, and...

K

These documents... So not necessarily. I mean, I think representations can be added in different documents. I think it's not a bad idea to have maybe a certain number of documents, each addressing maybe a class of applications. This is the first effort. I mean, it can go either way, frankly, so we can discuss it, but as far as I see it now, clarifying this one and putting it down is useful, and then we can take it from there. Yeah.

A

I agree. Since you're mentioning the possibility to send a seed, that means you have a PRNG associated with this. Do you also intend to provide a list of suggestions in terms of PRNG? I'm asking the question because the last item on the agenda today is about our experience in terms of PRNG, with TinyMT32. I will have to come back to this, but what do you think? Okay.

K

So yeah, that's a good question. We had it on the list as well. Our initial thought was to have that as part of the protocol specification and negotiation; I think that's a natural place for it. We are really specific to the symbol representation here, so I think that can remain outside. Yeah, I mean, we're open to discussion here. If you think it really has to be part of it... but I don't see now why it should be part of it. That's all. Yeah.
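Keeping the PRNG outside the symbol representation works because only the seed needs to travel. Both ends feed it to the same, separately negotiated generator and obtain the same coefficients. This is a sketch under assumptions. Python's `random.Random` (a Mersenne Twister) only stands in for whichever PRNG the protocol would specify. The GF(2^8) coefficient range and the function name are illustrative.

```python
import random

FIELD_SIZE = 256  # assume coefficients in GF(2^8), i.e. byte values 0..255


def coefficients_from_seed(seed, k):
    """Derive K coding coefficients from a seed.

    random.Random (Mersenne Twister) is only a stand-in for the PRNG the
    protocol would negotiate; it is not a recommendation.
    """
    rng = random.Random(seed)
    return [rng.randrange(FIELD_SIZE) for _ in range(k)]


# Encoder and decoder share only the seed, yet derive identical coefficients.
sender_side = coefficients_from_seed(1234, 8)
receiver_side = coefficients_from_seed(1234, 8)
assert sender_side == receiver_side
```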

M

Hi, I just can't get rid of... So, here I present our work on implementing a forward erasure correction extension to the QUIC protocol. First, a quick reminder about the QUIC protocol. It is a transport protocol providing reliable and secure transport of data. It also provides stream multiplexing in order to avoid problems such as head-of-line blocking. FEC was part of the early QUIC versions, but it was dropped due to bad experimental results from the early designs.

M

So what does a QUIC packet look like? It's just composed of a header and a payload, and the payload is itself a sequence of frames. Let's take an example: here we have three frames in the QUIC payload. The first one is an ACK frame, which acknowledges the received packets, and the two others are what we call STREAM frames. They carry user data for two different streams here: stream X and stream Y.

M

We will discuss the differences here. We have two implementations: one uses the quic-go implementation, which is based on the Google QUIC design, and the second works on picoquic, which is based on the IETF QUIC design, draft 14. I will show some frame formats, but we do not intend to propose them as a standard. We just want to discuss the idea, not the details of these frame formats. So let's first take a look at what the draft is proposing.

M

So we want to define the source symbols, which are the data units that we want to protect. The draft proposes to chunk a stream into blocks of fixed size. The size is called E, so we chop the stream into several fixed-size chunks and consider these equal-size chunks as our source symbols. Here on the figure we can see that we have ten source symbols, and the last one is shorter because the stream finished before the end of the chunk.

M

There are some advantages to proceeding like this. First, we don't need any signaling to identify the source symbols: the server and the client only have to agree on the size of the chunks, and then they don't need to advertise which part of a stream is a source symbol, because it's implicit. Another advantage is that there is no control overhead, because we only protect the user data. We don't protect any frame header or anything like that; we focus on user data.
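The implicit identification described above can be sketched as follows. The sketch assumes that E is expressed in bytes and that stream offsets start at zero. The function names are hypothetical and are not taken from the draft. Because both endpoints know E, the index of the source symbol that holds any stream byte follows from its offset alone, with no extra signaling.

```python
def chunk_stream(data: bytes, e: int) -> list[bytes]:
    """Chop stream data into source symbols of E bytes; the last one may be shorter."""
    return [data[i:i + e] for i in range(0, len(data), e)]


def symbol_index(stream_offset: int, e: int) -> int:
    """Index of the source symbol holding the byte at stream_offset.

    Both endpoints can compute this from E alone, so no signaling is needed.
    """
    return stream_offset // e


stream = bytes(range(95))               # a 95-byte stream (hypothetical)
symbols = chunk_stream(stream, 10)
print(len(symbols), len(symbols[-1]))   # 10 5
print(symbol_index(42, 10))             # 4
```

With E = 10, this hypothetical 95-byte stream gives ten source symbols, and the last one is only 5 bytes long. That matches the situation described for the figure.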

M

Here you can see on the image that the packet header is not really part of the symbol; only the sequence of frames is the source symbol. This approach also has some advantages. First, we don't need to agree on a symbol size: if two packet payloads have different sizes, we can consider that one of those two source symbols is padded to match the size of the other, and QUIC naturally handles padding.
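This sketch shows why unequal payload sizes are not a problem. Each QUIC payload is zero-padded to the size of the largest one before coding. In QUIC the PADDING frame has type 0x00, so trailing zero bytes in a recovered payload are parsed as padding. Simple XOR parity stands in here for the actual FEC scheme and can repair a single loss. The payload bytes are placeholders, not real frames.

```python
def pad(payload: bytes, size: int) -> bytes:
    """Zero-pad a payload; in QUIC each 0x00 byte is a one-byte PADDING frame."""
    return payload + bytes(size - len(payload))


def xor_repair(payloads: list[bytes]) -> bytes:
    """XOR parity over payloads padded to a common length (stand-in FEC scheme)."""
    size = max(len(p) for p in payloads)
    repair = bytearray(size)
    for p in payloads:
        for i, b in enumerate(pad(p, size)):
            repair[i] ^= b
    return bytes(repair)


def recover(repair: bytes, received: list[bytes]) -> bytes:
    """Rebuild the single missing payload (padded to the repair symbol's length)."""
    return xor_repair([repair, *received])


p1, p2, p3 = b"abc", b"hello!", b"xy"   # placeholder payloads of different sizes
repair = xor_repair([p1, p2, p3])
restored = recover(repair, [p1, p3])    # suppose p2 was lost
assert restored == pad(p2, len(repair))
```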

M

This additional padding will be understood as PADDING frames. Not having to agree on a symbol size also helps us solve the silent period problem, which was pointed out by the authors of the draft. If you look at the figure, you can see that the last symbol is not complete, so we are unable to protect it. If this last symbol is lost, we cannot recover it, because we cannot add padding in STREAM frames: that would amount to adding data to the user data.

M

Another advantage is that we can protect more than just the stream data. For example, we can protect the flow control frames, and we can also protect other frames, like the DATAGRAM frames that were discussed yesterday, which will provide a message mode for QUIC users. We can also protect frames that are not currently part of the design because, as we protect a QUIC payload, we are agnostic of what it contains.

M

This packet-based approach still has some drawbacks. First, we need explicit signaling to identify the source symbols, because they are no longer implicit. For that, we propose to add a new frame that identifies this QUIC payload as a source symbol. We call it the source FEC Payload ID frame, and it contains just one field, the FEC Payload ID field. This field is populated by the FEC scheme and identifies the source symbol in the coding window.
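The new frame only needs to carry one identifier. This sketch of its encoding assumes that the FEC Payload ID is carried as a QUIC variable-length integer, the 2-bit length-prefix scheme that QUIC uses for most frame fields. The frame type value `0x20` is a hypothetical placeholder, not an allocated code point. The helper names are illustrative.

```python
def encode_varint(v: int) -> bytes:
    """QUIC variable-length integer: 2-bit length prefix, then the value."""
    if v < 1 << 6:
        return v.to_bytes(1, "big")
    if v < 1 << 14:
        return (v | 0x4000).to_bytes(2, "big")
    if v < 1 << 30:
        return (v | 0x8000_0000).to_bytes(4, "big")
    if v < 1 << 62:
        return (v | 0xC000_0000_0000_0000).to_bytes(8, "big")
    raise ValueError("value too large for a QUIC varint")


def decode_varint(buf: bytes) -> tuple[int, int]:
    """Return (value, number of bytes consumed)."""
    length = 1 << (buf[0] >> 6)          # prefix 0..3 -> 1, 2, 4 or 8 bytes
    value = buf[0] & 0x3F
    for b in buf[1:length]:
        value = (value << 8) | b
    return value, length


SOURCE_FPI_TYPE = 0x20  # hypothetical frame type, for illustration only


def source_fpi_frame(fpi: int) -> bytes:
    """Frame type followed by the FEC Payload ID identifying the source symbol."""
    return encode_varint(SOURCE_FPI_TYPE) + encode_varint(fpi)
```

For example, `encode_varint(15293)` gives the bytes `7b bd`, which is the two-byte example used in the QUIC specification.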

M

Another disadvantage of this packet-based approach is the increased overhead: as we are protecting a sequence of frames, we also protect the frame headers, so we are no longer protecting only the user data. With this increased overhead, we are more likely to be in the case where a source symbol has a full packet size, so the repair symbol might not completely fit into one packet. So, in our implementation, we restrict the size of a source symbol a little, so that the repair symbol fits into one packet.
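The size restriction comes down to simple arithmetic. A repair symbol is as long as the largest (padded) source symbol it protects, and it must share a packet with its own frame header. The source symbol budget is therefore the packet payload minus that overhead. The numbers in this sketch are hypothetical. The actual repair frame overhead depends on the frame format, which is not detailed here.

```python
def max_source_symbol_size(max_packet_payload: int, repair_frame_overhead: int) -> int:
    """Largest source symbol whose repair symbol still fits in a single packet.

    A repair symbol is as long as the largest (padded) source symbol it covers,
    and it travels inside a repair frame whose header also needs room.
    """
    budget = max_packet_payload - repair_frame_overhead
    if budget <= 0:
        raise ValueError("packet payload too small for a repair frame")
    return budget


# Hypothetical numbers: 1200-byte packet payloads, 20 bytes of repair frame header.
print(max_source_symbol_size(1200, 20))  # 1180
```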

So now we have talked about the source symbols. Let's talk about how we send repair symbols. Here we have an approach that is similar to the one described in the current Coding for QUIC specification: we add a new frame that basically contains a repair symbol. I won't dig into the details of this frame.

M

Then we had to think about what to do when we recover a packet, because in a transport protocol, when we recover a packet, we can do three different things. First, we can ACK the recovered packet. This makes the sender think that the packet has been successfully received, so it will not retransmit the lost data, which is fine, but it won't adapt its congestion window. So if this loss was due to congestion, the congestion window won't be adapted.

M

However, if we do not ACK it, the problem is that the sender retransmits the lost data even though it has been successfully recovered. So we propose a third way to react, which consists in explicitly advertising to the sender that we recovered packets. We send a new kind of frame, called the RECOVERED frame, which announces the range of packets that have been successfully recovered.
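Announcing ranges rather than individual packet numbers keeps the frame compact, as ACK frames do. This sketch shows how a receiver might collapse recovered packet numbers into ranges, largest first. The real ACK frame encodes gaps and range lengths relative to the largest acknowledged packet. This simplified, hypothetical helper does not attempt that encoding.

```python
def to_ranges(packet_numbers):
    """Collapse recovered packet numbers into (first, last) ranges, largest first."""
    ranges = []
    for pn in sorted(set(packet_numbers), reverse=True):
        if ranges and ranges[-1][0] == pn + 1:
            # pn directly precedes the current range: extend it downwards.
            ranges[-1] = (pn, ranges[-1][1])
        else:
            ranges.append((pn, pn))
    return ranges


print(to_ranges([3, 4, 5, 9, 10, 12]))  # [(12, 12), (9, 10), (3, 5)]
```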

M

That way, the sender knows that a packet has been lost and then recovered, so it can adjust its congestion window while avoiding retransmitting the recovered data. The format of this frame is currently nearly identical to that of an ACK frame: instead of announcing which packets have been acknowledged, it announces which packets have been recovered.
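This sketch contrasts, from the sender's point of view, a plain loss with a recovered packet. It is a simplified model under stated assumptions. Halving the window down to a floor is a Reno-like placeholder, not QUIC's actual congestion controller. The `Sender` class, its fields and the numeric values are hypothetical.

```python
from dataclasses import dataclass, field

MIN_CWND = 2_000  # hypothetical floor for the congestion window, in bytes


@dataclass
class Sender:
    cwnd: int = 10_000                                  # congestion window (bytes)
    in_flight: dict = field(default_factory=dict)       # packet number -> payload
    retransmit_queue: list = field(default_factory=list)

    def _reduce_cwnd(self) -> None:
        # Reno-like halving, used here as a placeholder congestion response.
        self.cwnd = max(self.cwnd // 2, MIN_CWND)

    def on_lost(self, pn: int) -> None:
        """Plain loss: react to congestion AND queue the data for retransmission."""
        self._reduce_cwnd()
        self.retransmit_queue.append(self.in_flight.pop(pn))

    def on_recovered(self, pn: int) -> None:
        """Packet listed in a RECOVERED frame: react to congestion, skip retransmission."""
        self._reduce_cwnd()
        self.in_flight.pop(pn, None)
```

In this model, a RECOVERED frame shrinks the window exactly as a loss does but leaves the retransmission queue untouched.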

So now, let's take a look at some experimental results that we obtained with our implementations. We are doing a simple request... You have a question? Excuse me.

M

So yeah, I