►

From YouTube: IETF104-TLS-20190326-1610

Description

TLS meeting session at IETF104

2019/03/26 1610

https://datatracker.ietf.org/meeting/104/proceedings/

B

Our

chairs

Chris

Wood

Joe,

Sal

alia,

myself,

Sean

Turner.

This

is

the

note.

Well,

you

should

have

seen

it

a

couple

times

by

now.

You

know

what

Oh

closer

okay

I

can

do

that

too.

This

is

the

no

well

super

loud

up

here

but

anyway.

Basically,

if

you

get

to

the

microphone

you're

gonna

get

recorded.

There's

video

in

here.

If

you

get

IP,

are

supposed

to

disclose

it

don't

be

a

bad

person.

You

can

read

the

rules

next

requests.

We

need

a

minute

taker

and

a

jabber

scribe

before

we

proceed.

B

B

Okay,

I

think

someone's

phone's

gonna

blow

up

in

a

second

Richard's

gonna.

Do

it?

Thank

you

very

much,

sir.

We

need

to

the

microphone.

Please

state

your

name,

let's

keep

it

professional

and

let's

keep

it

succinct

to

you,

because

we

have

a

lot

of

presentations

today,

Thanks

that

was

the

first

day

the

next

day.

So

this

is

the

administrative

part

and

we

had

a

whole

lot

of

presentations.

The

ones

between

the

two

lines,

our

working

group,

jobs

and

the

ones

at

the

end

are

not.

B

We

did

have

another

none'll,

we

did

I,

don't

know

another

non

working

group

draft

called

CTLs

for

compact

Els,

but

the

agenda

bashed

it

off

it's

out

there,

so

you

can

go,

read

it,

but

it

wasn't

really

ready

for

part

time

and

without

further

ado

is

anything

else

we

want

to

talk

about

before

turning

it

over,

which

is

deprecating.

Tell

us

one

then

nope

all

right:

let's

do

it

so

I

think

it's

Kathleen.

C

C

C

1.0

and

so

a

result

of

this

conversation

was,

should

we

be

more

explicit

about

DTLS

1.0

deprecation

in

light

of

this

work

being

recent,

and

the

work

in

this

case

is

that

I

believe

it

recommends

D

TLS

1.2,

but

doesn't

do

a

hard

deprecation,

and

so

the

update

made

was

in

the

abstract

here

to

add

mention

of

DTLS

1.0.

So

are

there

objections

to

that

update?

Do

people

need

time

to

look

at

this?

D

E

F

B

The

only

point

I

would

add

is

that

obviously

the

system

was

not

one

of

our

documents,

so

we

should,

you

know,

make

sure

that

we

tell

them

before

IETF

last

call

that

we've

done

this

right,

isn't

80

261

am

I.

Looking

at

the

right

one,

more

details,

encapsulation

of

SCTP

packets

done

by

the

transport

area

ad.

B

E

C

Okay,

so

the

next

question

discussed

on

the

list

was

about

nntp

and

some

should

not

and

must

not

language

for

using.

I

think

it

was

1.0

in

this

case,

and

the

response

Stephen

gave

on

list

was

that

it

was

good

to

do

this

update

because

should

not

must

not

are

not

the

same

things

right.

So

we

are

deprecating

its

usage,

and

so

this

makes

it

a

little

more

clear

by

doing

that

update.

Does

anyone

have

a

problem

with

that?

C

Okay,

wonderful,

a

reference

for

3gpp,

deprecating

TLS,

one,

oh

and

one

one

was

updated

and

then

so

I

think

at

the

last

meeting,

I'm

pretty

sure

Stephen

already

went

through

the

70

different

documents

that

this

updates-

and

these

are

just

some

notes

on

additional

updates

to

other

documents.

I,

don't

think,

there's

anything

here

to

really

comment

on,

but

it's

here

in

case

they're,

something

that's

you

feel

is

important

to

comment

on

before

we

move

along.

C

G

Violence

support,

perhaps

Martin

Thompson

I

dropped

a

link

to

our

current

stats

on

where

things

are

at

in

terms

of

turning

yourself

for

the

web

into

the

Java.

If

anyone's

interested,

they

should

take

a

look

at

that.

It's

pretty

bad.

There's

a

lot

of

sites

out

there

that

still

don't

support.

Tell

us

one

I,

don't

mind

that

people

accept

TLS,

one,

oh,

but

not

supporting

TLS

one

is

kind

of

bad

in

this

situation,

and

that's

really

what

we're

pushing

for

here

by

deprecating

one

one.

Oh

one,

one

wait.

B

F

All

right,

this

may

be

even

shorter,

certainly

more

that

I

said

so.

I

just

put

a

rush

to

31

draft

literally,

like

I,

think

yesterday,

I

was

doing

other

things

for

the

meeting.

This

has

basically

a

bunch

of

a

deferral

improvements

thanks

to

Martin,

especially

date,

samples

has

sort

of

an

annoying

bug

with

the

pizza

for

the

original

orders

that

the

new

order,

the

new

orders

better,

but

the

urgent

workers

confusing

so

I

fixed

that

and

I

chef

I,

said

twice:

1

fix

it

then

I

fixed

it.

So,

hopefully

people

funnies

an

implement.

F

F

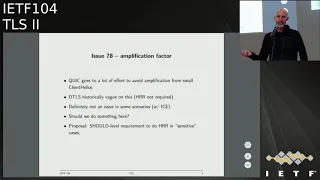

So

quick

like

there's

a

lot

of

effort

and

says

basically

like

the

client,

hello

has

to

be

a

certain

maximum

size

and

when

initial

Latasha

client

alone

in

this

case,

and

that

you

only

let

us

send

in

packets

in

response

on

DTLS,

has

been

like

historically

kind

of

vague

on

this

and

I

said.

Has

this

on

this

path,

validation

that

has

been

nice

to

be

called.

However,

if

I

request

now

it's

called

hollow

retail

request

on,

but

you're

not

required

to

do

it.

F

G

So

I

think

the

advice

can

be

a

little

more

than

that,

but

sorry,

Martin,

Thompson,

the

the

advice

we'll

be

having

in

quick,

is

basically

don't

send

significantly

more

than

what

you've

received

for

this

apparent

address,

and

so

in

the

z-row

ICT

case.

There's

there's

this

huge

potential

for

amplification,

but

if

you're

not

careful

simply

say

don't

do

that

unless

you've

validated

that

the

other

side

would

be

fine,

I

think

you,

you

probably

want

to

have

a

must

level

requirement

in

here.

F

G

H

How

dishonest

the

only

environment

where

we

have

seen

DDoS

attacks

in

the

IOT

context

was

when

co-op

was

used

without

didi

Ellis.

Actually,

we

had

not

seen

any

amplification

attacks

with

based

on

the

use

of

Detailers

and

specifically

thinking

about

1.3

there's,

also

depreciates

secret

based

mechanism,

where

there's

actually

no

need

and

to

use

the

H

or

the

Hillary

fury

request,

because

you're

already

authenticating

the

client

on

the

first

backup

anyway,

we.

F

I

G

I

G

This

is

great

for

the

handshake,

but

there

is

also

the

addition

of

connection

IDs

to

the

protocol,

which

means

that

you

can

use

the

protocol

on

new

paths

based

on

an

existing

handshake

and

we

don't

have

any

explicit

pass

validation

mechanism

in

the

protocol.

Sure

which

makes

it

a

little

interesting

that

that

I

feel

comfortable

saying

as

an

application

issue.

G

F

Know

you

have,

but

basically

there's

no

on

like

if

there's

no

instructions,

if

you

get

if

you

get

a

new

IP

addresses

or

sending

another

P

address,

and

do

we

not

it

wouldn't

be

good

to

because

then

you

because

then

you

have

like

you

have

active.

You

have

information

attacks,

so

we

assume

that's

gonna,

look

like

when

we

just.

We

need

connections,

plus

it

aside

to

keep

our

Gration

out

of

the

protocol

right.

So.

K

L

L

Given

that

correction

ID

is

a

construct,

that's

India

TLS

is

not

available

to

the

application.

It

might

be

something

it

may

be

worth

saying

something

about

how

some

sort

of

a

signal

from

the

application

if

the

path

has

changed

might

be

useful

for

DTLS

in

details

can

then

use

that

to

change

the

connection

ID

sure

I

can

I.

Can

you

know

that

someone

you

know

I

can

do

that,

assuming

that.

L

F

You

you

okay

next

slide.

This

is

me

I

think

this

is

just

like

Millia

to

toriel

the

spec

doesn't

say

what

to

do

so

in

details.

1.2.

There

was

a

cookie

and

details

1.3

that

got

moved

into

the

cookie

extension

because

we

had

a

cookies

for

hvhr,

so

there's

no

good

reason

to

have

a

non-empty

cookie

and

the

cooking

extension.

So

my

proposal

is

just

to

forbid

doing

this

and

require

the

server

to

detect

it

and

choke.

F

So

I

think

this

is

really

just

a

troll

issue

at

some

point:

okay,

72

Martin

at

that

Martin

Mastiff,

Weeki

separation

with

TLS

first

to

GCLs.

My

proposals

no

and

the

reason

is

falling

on

the

transcripts-

is

different.

The

headers

are

different

because,

because

the

handshake

headers

are

constructed,

so

there's

no

chance

that

you

can

have

it

and

and

the

record

layers

are

as

well

so

there's

no.

Thank

you

there's!

No!

So,

even

though

you

even

that,

so

you

you

can't

get

the

same

key.

F

You

can't

have

a

TLS

CG

on

you

all

have

at

each

Ellison

DTLS

like

proxy.

That

would

take

it

like

the

TLS

messages

and

send

the

details.

Connection

and

get

the

same

keys

out,

and

even

if

you

did

messages

wouldn't

be

correctly

formed

when

you

talking

use

them

so

I

think

this

is

gonna,

be

fine,

so

I

posed

in

noting

its

if

Kitty

or

cuff,

that

comes

and

tells

me

is

any

different.

I

will

change

my

opinion.

F

Implementation

status

liking

a

bit

on

on

tales

for

my

three,

but

we

now

have

implementations

in

an

SS,

mint

and

embed

I

thought

I

said

she

knew

size

which

had

better

in

AB

story

on

them.

So

you

know,

F

story

is

improved

slightly

from

this

slide

and

that

we

got

new

out,

but

can

we

mint

and

SS

I

think

yesterday?

F

I

want

to

run

but

I.

Think

that

looks

basic

okay,

so

post

next

times

here,

I

want

to

update

the

issues

as

discussed

above

there's

a

few

more

soil

issues

which

I

just

haven't

dealt

with,

but

are

easy

to

deal

with.

Ornery

new

draft

and

multiplied

Nolan

run-up

testing

and

send

a

report

to

the

list

and

I

think

we're

ready

to

go.

Guess

you

yeah.

B

So

I

mean

that's

the

question

will

if

they

come

back

and

do

another

last

call

and

I

didn't

see

anything

that

actually

kind

of

warrants

that

so

I

think

if

you

guys

just

continue

dinner

up,

and

if

you

find

anything

great,

if

you

don't,

then

we

can

just

push

it

forward.

Yeah

we're

also

going

to

start

to

deploy

this

in

Firefox

all

right.

Thank

you.

H

H

Boring-Ass

asalaam

integrated

some

support

some

time

last

year

and

chrome

shifted

in

July

I,

don't

remember

the

specific

version

CloudFlare

also

integrated

that

boringest

south

support

and

deployed

it

on

a

last

year

in

September,

there's

been

also

some

work

from

Apple

I

think

also

boring

itself

and

from

Facebook.

So

we

were

able

to

collect

some

data

from

the

CloudFlare

point

of

view.

What

we

see

is

an

average

1.5

kilobyte

reduction

in

the

certificates

for

both

ECDSA

and

ever

say.

H

Ecdsa

went

from

about

3.5

kilobytes

to

2

kilobytes

RSA

from

about

4.9

kilobytes

to

3.5.

So

one

of

the

main

reasons

why

we

did

this

work

was

to

reduce

the

potential

amplification

factor

during

the

quick

handshake

which

uses

TLS

1.3.

The

quick

specification

allows

for

allows

the

server

to

send

about

three

times

the

amount

of

bytes

the

client

sent

in

its

first

light.

Before

the

address

verification,

so

the

client

is

is

supposed

to

send

at

least

1200

bytes,

so

the

server

can

then

send

back

something

like

3.6

kilobytes.

H

What

certificate

with

certificate

compression

we

get

quite

a

good

Headroom

with

ECDSA,

but

for

RSA

there's

it's

not

there.

We

still

need

more

space.

I

forgot

to

point

out

that

cloud.

Fair

use

is

partly

brought

recompression

with

the

with

the

default

compression

level,

so

tweaking

the

the

either

the

compression

level

or

the

dca

used.

We

can

probably

save

more

bytes.

K

F

Dina

asada

is

really

interesting.

D

know

offhand

how

much

of

that

value

you're

getting

is

because

your

Co

compressing,

like

you,

know

the

issuer

in

the

subject.

Sorry,

what

so

I

mean?

Do

you

know

how

much

so

do

you

know

offhand

if

you

just

compressed

like

the

EE

certificate,

how

that

would

compare

to

compressing

the

chain

like

if

you

get

as

high

compression

ratio

most.

H

F

Now

joins

wondering

like,

for

instance,

the

name

like

the

issuer

name

is

duplicated

between,

as

it

is

renamed

this.

Your

name

is

like

duplicate,

but

in

the

subject

of

this

years

to

forget

in

it

as

I

was

wondering

yeah.

Are

you

if

part

of

the

value

you're

getting

that

you're

compressing

redundancy

between

the

chicken

inside

the

chain

or

not?

No.

N

H

So

so,

there's

just

one

remaining

open

issue,

which

is

the

attacks

about

presented

some

time

last

year.

The

idea

is

that

if

the

decompression

function

produces

different

results

based

on

the

timing,

it

receives

the

data

an

attacker

might

be

able

to,

for

example,

delay,

network

packets

and

then

cause

the

the

receiver

of

the

certificate

to

to

physically

process

a

different

certificate,

and

what

the

what

the

sender

sent

it

was

pointed

out.

The

disease

did

the

same

problem

basically

applies

to

a

s,

and

one

parsing

is

just

that.

H

So

so

one

potential

solution

is,

to

just

add

the

decompress

certificate

today

handshake

transcript

Azaz,

either

by

adding

both

the

compress

certificate

and

the

decompressed

certificate,

or

just

the

decompressed

certificate.

So

the

question

is:

do

we

do

we

want

to

fix

it?

We

actually

care

from

the

mailing

list

discussions

and

from

talking

to

people

in

person

there.

It

didn't

seem

like

there

was

a

lot

of

interest

in

this,

but

the

discussions

were

like

pretty

small

anyway,

so

you

tell

me

I

guess.

H

B

B

O

So

Victor

vasila

of

Google

what

I

wanted

to

notice

as

the

issues

with

potential

attack

and

compression

while

the

issue

is

itself,

is

a

very

theoretical

in

nature

in

nature,

fixing

it

will

lead

in

various

ways

of

fixing

it

to

various

forms

of

headaches

and

which

are

not

so

radical.

Unlike

illicit

post

is

an

issue

which

is

theoretical,

which

is

why

I

would

advocate

for

not

fixing

it.

H

On

this

on

the

open

issue,

I

wouldn't

want

to

have

the

decompress

certificate

in

the

in

the

transcript,

because

it

makes

the

implementation

much

more

complicated

because

in

a

way

how

most

flowery,

including

ours,

works.

So

at

that

time,

when

you

process

the

packet

and

create

the

transcript,

we

actually

don't

pass

the

content.

So

maybe

not

good.

A

separate

question

did

you

if

you

look

at

the

code

size

of

the

functionality

in

specifically

the

compression

algorithm

and

the

van

requirements

that

would

be

I

would

be

curious

about

this.

H

In

light

of

many

of

the

discussions

we

have

currently

in

the

idea

for

that

TLS

being

too

heavy

weight,

and

so

on

and

so

on

right,

so

we

do

add,

some

numbers

on

the

actual

compression

I

think

it's

kind

of

like.

If

I

we

saw

some

some

of

this,

some

incrementing

in

CPU

usage

I

think

it

was

about

1/2

percent

globally,

I,

don't

I,

don't

know

if

that

means

anything

to

you,

but

so

this

was

on

on

clawed

floors,

edge,

Network,

okay,

odd,

you

didn't

do

anything

on

a

sort

of

more

client-side

device.

No.

C

I

Benkei

doc

is

interesting.

The

house

was

up

before

me

to

say

that

he

doesn't

want

the

decompressed

certificate

in

handshake,

because

I

was

sort

of

considering

the

case,

also

for

IOT,

where

you

might

have

an

extreme

compression

function,

which

is

like

a

one

byte

index

into

a

table

certificates,

and

in

that

case

it's

a

lot

easier

because

you

might

have

some

miss

binding

problems.

G

H

I

H

G

H

F

Yeah

I'm

much

less

concerned

about

this.

This

particular

flavor

attack,

I

I,

think

that

they

they're

relevant

concern.

Is

it

somehow

possible

to

have

I'm,

not

sure

I'm,

just

taking

my

feet

on

a

compression?

It's

not

closing

the

compression

function

where

there

are

two

things

that

compression

the

same

thing,

but

that

seems

like

it's.

Gonna

have

other

problems

like

operationally

yeah

aside

for

us,

aside

from

like

any

miss

binding

issues,

so

I

think

we

have

to

convince

ourselves

that

the

crush

pressure

function

is

collision

free.

So.

H

F

F

You

know

that

has

to

be

injected

right,

but

I'm

not

concerned

of

this

defect.

Issue

I.

Think

it's

like

I

think

it's

like,

as

you

say

it

had

the

a

bug

in

the

deacon

pressure.

I

think

it's

fasting

more

likely

that

bug

in

the

deacon

pressure

is

like

it's

like

an

undersized

memory.

You

know

a

UAF

for

something

else,

so

I

think

much

more

likely

than

this.

If

you

have

been

much

more

serious,

so

I

I

think

we

should

proceed

with

this

as

it

is,

and

just

talking

with

Rome.

Isn't

it

yeah,

then

the.

B

O

Victor

Vasily,

if

I

wanted

to

us

answers

a

question

about

the

binary

size,

so

the

binary

size

of

broadly

is

quite

substantial

because

it

carries

a

giant

dictionary

with

it,

which

is

why

this

is

mostly

intended

for

clients

and

servers

which

serve

web

content,

because

when

you

serve

web

content,

you

want

perfectly

for

other

reasons-

and

this

is

like

this

is

three

really.

Both

clients

and

conservers

can

trivially

choose

to

not

implement

this

and

they

will

work.

Just

fine

yeah.

P

B

Q

R

All

right,

hello,

I'm,

going

to

talk

about

a

draft

that

is

on

draft

number.

Three.

It's

been

adopted

as

of

very

long

time.

We

haven't

talked

about

it

for

a

while,

so

it's

due

for

an

update,

so

just

as

a

bit

of

background,

if

folks

remember

what

this

is,

it's

meant

to

help

protect

interface

internet

facing

applications

from

having

their

long-term

TLS

keys

in

memory,

allowing

them

to

instead

have

a

shorter

lead,

lived

key

in

memory

and

keep

their

long-term

key

somewhere

else.

R

There

was

I

believe

a

buff

or

lurk

about

this,

but

the

motivation

behind

this

change

is

to

do

so

is

to

not

introduce

the

latency

that

these

changes.

These

proposals

introduce

so

just

to

kind

of

spell

that

out.

If

you

want

to

have

your

key

in

a

very

secure

location

in,

say

west

coast

of

United

States

and

have

traffic

in

Europe,

you

may

have

to

incur

additional

latency

to

do

the

handshake

itself.

This

latency

comes

here

in

delegated

credentials.

The

way

it

works

is

that

a

short-lived

key

is

issued

to

the

web

server.

R

Every

X

number

of

hours,

or

every

periodically

that

is

signed

by

the

certificates

key,

so

it

comes

with

some

additional

parameters.

So,

quite

specifically,

it's

the

way

to

think

about.

This

is

not

as

a

sub

certificate,

it's

more

as

a

time

bounded

key

swap.

This

is

how

Richard

bonds

described

it

so

on

the

right

here,

you

can

see

all

the

the

the

aspects

of

it:

it's

not

an

x.509.

It

doesn't

have

all

the

different

details

of

this.

It

has

specifically

a

this

see

where

it

says

protocol

version

this

has

been.

This

is

the

part.

R

R

There's

a

requirement

for

the

certificate

to

have

a

specific

object,

ID

that

enables

this

feature

but

other

than

that

all

certificate

content

constraints

still

apply.

So

the

subject

alternative

names

any

of

the

key

usages,

all

everything.

That's

that's

on

the

certificate

still

applies

to

the

validation

of

the

connection,

including

revocation

any

certificate,

transparency

requirements.

They

all

apply

to

this

certificate.

That's

delegated

the

credential

structure

itself

is

validated

validated

against

this

public

key

in

the

delat

in

the

end

entity,

certificate

and

and

then

obviously

the

certificate

verifies

is

checked

against

the

key

in

the

delegation.

R

So

this

has

several

benefits.

You

can

put

the

signing

key

far

away.

You

can

centralize

the

control

of

it.

You

can

kind

of

split

your

operations

for

key

management

into

short-lived,

fast

rotating

keys

and

a

long-lived

more

secure

key,

and

this

doesn't

have

the

risk

of

expanding

the

scope

of

the

certificate,

unlike

say,

adding

additional

certificate

at

the

end

of

the

chain

and

relying

on

name

constraints.

S

S

R

There's

several

options:

there's

a

if

you

want

to

keep

the

long

term

key

far

away.

You

can

continue

to

do

that.

If

you

want

to

refuse

connections

from

clients

that

don't

support

this

extension,

you

can

choose

to

do

that

as

well

in

and

those

would

be

the

two

ways

to

not

have

the

long

term

key

in

the

local

memory.

F

It's

worth

knowing

there

couple

other

benefits

here,

aside

from

sorry,

I

thought

I

was,

is

what

other

benefits

here

was

the

security

benefits

adjusting

so

I

mean.

Basically

the

idea.

Is

you

have

these

remote

datacenters

right,

and

so

you

can

remote

key

port

O'call.

The

way

nick

says

you

can

backhaul

the

data

all

the

way

back

to

your

main

datacenter

on,

but

you

point

easier

motivated.

F

You

can

have

a

lower

security

level

than

then

you

mean

datacenter

another,

but

though

this

worth

noting

in

the

method

is

deployment

algorithms,

so

like

et

tu

509

is

like

very

slowly

rolling

out

in

cab,

F,

in

fact

not

really

more

young

cab

Beth.

But

this

lets

you

deploy

teacher

thought

of

a

ninth

day,

yeah.

F

G

Then

this

would

support

that.

So,

madam

Thompson

and

the

case

that

you

wanted

to

use

a

new

type

of

signature

algorithm,

the

client

would

have

to

support

both

the

signature

algorithm

on

the

end

entity,

cert

and

the

signature

algorithm

on

the

delegated

credential,

and

so

that

that's

a

little

limiting.

But

it's

it's

certainly

a

lot

better

than

it

has

been

really

sauce.

You're,

not

worried

about

the

size.

G

No,

no,

it

was

just

a

point.

That's

one

way

of

thinking

of

this

is

an

extension

of

the

certification

path,

but

it's

not

it's

it's

an

in

protocol

thing,

which

just

makes

it

now

that

you

have

to

deal

with

two

signatures.

You

have

to

deal

with

potentially

two

different

signature,

algorithms,

and

that

was

that

was

all

as

a

feature,

but

it's

also

means

that

you

need

to

have

the

code

for

those

things

and

assess

doesn't

have

eighty

two

thousand

nine

at

the

moment.

But

you

know

it's.

R

So,

on

the

client

side,

the

validation

of

the

handshake

and

the

validation

of

the

PKI,

if

they're

separate

like

they

are

a

lot

of

plot,

this

allows

you

to

rely

on

upgrades

to

the

TLS

stack

without

necessarily

needing

upgrades

to

the

PKI

stack

and

and

the

certificate

I

guess.

The

signature

algorithm

extension

is

reused

in

this

case,

so

this

is

the

same

thing

that

you

rely

on

so

updates

in

oh

three,

there

was

some

short

discussion

on

the

list

about

protocol

version,

so

this

is

this

was

removed.

R

This

is

TLS

1.3

only

and

there

are

no

non

TLS

1.3

versions,

but

in

any

case

it

is

potentially

useful

for

future

versions

of

TLS

1.3.

Without

changing

the

structure

of

the

credential.

There

is

now

server

implementation

of

oh

3

in

boring,

SSL.

There

is

a

server

deployment

here

at

this

as

this

website

with

the

proposed

ID,

which

is

issued

by,

did

you

cert?

We

there's

a

hiccup

in

deployment,

so

we

we're

going

to

try

to

fix

that

by

by

tomorrow.

So

this

is.

This

is

optimistic.

R

The

one

thing

that

we

wanted

to

have

here

is

is

some

sort

of

formal

security

analysis,

so

we've

grabbed

somebody's

to

do

that,

essentially,

the

properties.

We

want

to

prove

that

this

is

equivalent

from

a

security

perspective

of

having

an

additional

certificate

in

your

chain

as

well

as

we

want

to

have

this

brings

stronger

binding.

Then

you

would

for

a

PKI

chain

and

PKI

chain,

so

you

can

change

the

intermediate

and

use

the

same

key,

and

it's

still

fine

in

this

case,

delegated

credentials

are

specifically

bound

back

to

the

credential

itself.

So

the

next.

R

T

R

F

U

V

Alright,

hello

you're

here

to

give

you

enough

the

unencrypted

at

SN

I

for

those

that

are

unfamiliar

just

a

quick

summary

of

the

problem

as

it

exists

today,

assuming

you're

not

using

an

encrypted

DNS

or

using

a

legacy

version

of

TLS.

Your

network

traffic

leaks

a

lot

of

information

to

any

passive

observer,

you'd

buy

at

the

DNS

query

itself

in

the

equator

answer

or

in

the

TLS

S&I

or

in

the

certificate

that

comes

back.

V

We

also

added

a

rule

that

prohibits

sending

a

cached

info

search,

so

we

specifically

because

they

don't

want

to

leak

what

certificate

the

client

has

previously

cached

and

responds

to

a

connection.

We

add

some

clarifying

words

around

HR

our

behavior,

although

they're

still

a

little

bit

more

than

needs

to

be

done

there.

We

added

also

an

initial

simple

st.

design

for

the

multi

CDM

problem.

V

That

is

the

combined

records

approach,

along

with

a

mandatory

extension

mechanism

in

a

way

to

specify

with

a

particular

extension

in

the

s

and

I

record

is

mandatory

by

mandatory

I

mean

not

mandatory

to

implement

but

clients

which

get

a

mandatory

extension,

and

they

don't

understand

it.

They

must

ignore

the

East

and

I

record

that

comes

back

so

I'll

describe

this

solution.

V

A

little

bit

more

in

a

couple

of

slides

and

in

kind

of

landing,

this

initial

PR,

we

also

dropped

the

S&I

prefix

for

the

the

special

DNS

query

and

moved

from

a

text

record

to

a

new

are

type

per

advice

that

we

got

back

from

various

stakeholders.

So

there

are

also

some

pending

changes

that

are

probably

ready

to

land,

particularly

the

Greece

one

from

David

management

as

well.

It

just

needs

is

it

up

to

date?

V

I,

it

is

okay,

so

we

should

merge

that

relatively

shortly,

there's

also

APR

to

swap

the

version

in

check

some

fields

in

the

sni

keys

record.

If

you

believe

that

the

check

sum

adds

value

for

version,

one,

it

likely

adds

value

for

any

kind

of

version

of

es

ni,

so

by

swapping

them

you're

effectively,

making

the

check

summit

invariant,

which

seems

okay.

V

You

can

end

up

in

a

situation

in

which

the

addresses

that

you

get

back

from

your

a

or

quanta

queries

are

effectively

provided

by

one

CDN

or

one

provider

and

the

PSNI

records

and

says

Texas

should

be

not

text.

The

PSNI

records

you

get

back

are

provided

by

another

CDN

provider

and

that

doesn't

work

because

CDN

two

in

this

case,

doesn't

our

CDN

one

doesn't

have

the

SMI

keys

receiving

and

two

and

vice-versa

and

the

robustness

technique

that

we

added

does

not

allow

you

to

fall

back

from

this

particular

scenario.

V

So

either

you

have

to

hard

fail

in

this

case

or

you

fall

back

to

point

excess

and

I,

both

of

which

are

not

great

and

ideally,

we'd

like

to

avoid

heart

failure

in

order

to

make

this

work.

So

the

simple

PR

that

landed

effectively

works

as

follows:

combine

the

PSNI

keys

and

a

in

quantity

records

into

a

single

simple

record

by

adding

the

addresses

to

an

es,

ni

extension

that

marked

as

mandatory

and

then

when

you

query

for

the

es

ni

records,

and

you

get

back

one

of

these.

V

V

You

sent

our

records

because

you're

simply

not

using

the

a

in

quality

records

at

all

the

you

know.

The

mismatch

rate

is

completely

irrelevant.

The

count,

of

course,

then

is.

It

requires

an

a

provider,

that's

able

to

actually

make

these

records

and

make

sure

that

the

contents

of

the

ESN

I

record

matched

those

that

match

match

the

addresses

that

the

for

the

hosts

that

they

happen

to

service,

so

some

providers

out

there

can

do

it.

Some

cannot.

So

that's

the

trade-off.

There

is

a

the

general

PR

or

the

generalized

PR.

That's

out

there

right

now.

V

This

is

what's

written

is

not

effectively

what's

in

the

text.

What's

in

the

text

is

arguably

a

lot

more

complex,

but

effectively.

What

we're

trying

to

do

is

allow

for

the

addresses

that

are

contained

in

the

sni

records

to

filter

or

to

act

as

a

filter

for

or

ability

check

for,

the

addresses

you

get

back

and

the

Inc

wide

a

records.

So

the

algorithm

basically

works

as

follows.

V

You

would

query

for

your

a

in

quantity

records

for

yeeess,

and

I

record

and

assume

you

get

everything

back

and

you

get

back

in

a

sign,

a

record

that

has

one

of

these

address

pointers,

structures

which

contains

a

netmask

inside

of

it

or

one

one

or

more

net

masks.

You

use

them

to

match

against

the

addresses

that

you

get

back

from

your

in

quasi

records

if

they

match

go

ahead

and

use

those

addresses

and

connect.

V

Just

fine

if

you're,

a

or

quad-a

answers

happen

to

resolve

in

a

cname

chain

of

some

sorts

and

the

cname

are

the

canonical

name

ur.

We

didn't

quite

not

quite

sure

what

to

call

it.

The

name

inside

the

ESN

I

address

pointer

record,

happens

to

match

the

cname

that

you

got

back

from

the

a

or

quad

a

record

used

the

address.

V

Otherwise,

you

have

to

take

the

name,

that's

in

the

es

and

I

record

and

resolve

that

to

an

address

and

in

connect

to

that

one,

because

presumably,

that

particular

record

has

been

constructed

in

such

a

way

that

the

name

will

ultimately

resolve

or

must

resolve

to

a

host

that

is

serviced

by

the

same

CDN

provider.

Otherwise,

you've

royally,

screwed

up

and

things

will

go

badly.

Of

course.

Now.

The

efficacy

of

this

particular

approach

depends

on

how

often

the

mismatch

occurs.

So

if

you

have

a

scenario

in

which

mismatch

occurs

say

50

percent

of

the

time.

V

That

means

you

will

be

doing

a

second

sequential

DNS

query

to

resolve

the

name:

that's

inside

the

ace

and

I

record

to

an

address,

50

percent

of

the

time.

If

you

don't

want

to

run

into

heart

failure,

it's

also

problematic,

because,

depending

on

how

you're

doing

your

happy

eyeballs

like

querying

for

your

a

and

your

quad-

and

you

use

my

records,

you

might

introduce

a

delay

of

some

sorts

in

order

to

get

your

initial.

V

Yes

and

I

responds

back

so

the

time

you

it

actually

takes

to

go

from

nothing

to

you,

know,

es

and

I

keys

and

address.

Some

addressing

information

could

potentially

be

quite

long,

so

not

only

you

paying

the

hit,

but

the

time

it

takes

to

actually

start

connecting

could

substantially

increase,

which

is

not

ideal.

V

It

makes

the

mismatch

rate

irrelevant,

provided

that

you

can

actually

bend

these

particular

simpler,

es

ni

records

that

have

the

full

addresses

in

them.

We

propose

moving

forward

with

that

taking

and

then

taking

the

generalized

version

that

is

in

137,

making

that

an

extension

potentially

in

a

separate

draft-

and

you

know,

working

on

that

and

I

guess

either

bringing

it

back

to

the

working

group

or

something

so

I'm

I'm

hopeful

that

people

will

people

who

are

opinion

about

this

particular

topic.

V

Well,

come

to

the

mic

now

and

tell

me

whether

or

not

they're,

okay

with

this

or,

if

not

because

the

multi

CDN

issue

was

the

big

thing

we

needed

to

address,

order

to

move

forward

with

the

s

and

I.

So

I

would

really

like

to

see

something

happen

here,

so

just

that

we

can

get

ourselves

on

block

to

move

forward.

So

Stephen.

E

E

C

E

Extensions

in

ES

and

I

or

yeah,

which,

if

anybody

bought

into

that

which

I

don't

think

they

do

that,

would

rule

out

this.

Up

to

this

password

on

the

1:03

has

I

just

kind

of

worried.

It

will

cause

another

fest

with

a

bunch

of

people

who

have

dependencies

on

how

a

and

quad

a

records

a

manage.

That,

therefore,

wouldn't

be

like

able

to

use

yes

and

I,

but

if,

because.

V

We're

deploying

this

to

an

extension

is

assuming

you

by

the

extension

thing

and

because

we're

deferring

it

to

an

extension

it

just

not

prohibit

them

from

doing

that

later.

In

extension,

right,

it

simply

enables

the

people

who

want

to

do

yes

and

I

and

me

the

way

it's

currently

specified

to

do

it

right.

If

you

assume

that

everybody

that

implements

both

not

everyone

might

implement

both.

V

So

if

you're,

a

client

and

what

is

the

incentive

for

a

client

to

do

the

more

general

one

if

you're

paying

a

potential,

but

it's

the

server

side

who

has

the

dependency

on

the

a

in

for

a

management

right?

But

if,

if

the

clients

are

paying

a

substantial

performance,

if

a

large

fraction

of

time

for

one

of

the

signs

versus

the

other

there's

not

much

incentive

them

to

do

the

the

other

one,

regardless

of

how

hard

or

easy

it

is

for

the

server

yet.

Actually,

if

you

it

right.

E

W

V

Response

to

that

would

be

as

a

client,

so

I'm.

Now

speaking

as

a

client

as

Apple,

we

would

not

it's

very

unlikely

that

we

would

implement

a

solution

that

had

a

performance

regression

in

this

that

PR

137

potentially

provides

or

gives

us.

So,

regardless

of

whether

or

not

it's

in

the

draft

or

in

it

in

this

draft

or

in

a

separate

draft,

it

doesn't

change

the

fact

whether

or

not

we'll

implement

it

we're

looking

for

something

to

implement.

D

C

C

D

Know

but

that's

kind

of

what

the

question

is

asking

as

well.

You

know

it's

not

intuitive

to

me

so

I'd

like

to

understand

it

more

deeply.

The

current

solution

in

the

graph

actually

does

impose

a

performance

penalty

as

well

right

because

you're

essentially

racing

an

a

lookup

of

the

es

and

I

look

up

right

and

you're,

giving

it

you're

tolerating

a

certain

amount

of

time

right.

Isn't

the

connection

delay

apart?

D

C

D

V

F

Doing

a

lookup

and

just

telling

the

TLS

they

were

to

go

I

mean

quite

serious.

That's

how

I

simple

emitted

like

these

two

Firefox,

so

I

guess

like

try

to

reframe

this

discussion.

Perhaps

on

the

situation

we

have

at

hand

is

that

we

have

some

evidence

and

some

work

experience

that

will

t

see

the

ends.

We

have

a

real

problem

and

the

yes

and

is

not

to

playable

in

the

current

state,

because

it

causes

wait

because

it

was

a

hard

fail

on.

F

Now

it

may

be

the

case

that

there's

some

mechanism

that

will

be

easier

for

some

people

employ

on

the

server

side

and

will

not

induce

a

major

performance

regression

on

and

I

welcome

that

solution

and

that

solution

is

available

today.

I

prefer

solution

to

this

appear,

even

if

it's

not

more

complicate

for

the

client

to

play.

However,

my

impression

is

that

another

situation

and

then

available

solutions

like

you,

have

no

performance,

regressions

or

seem

likely.

A

performance,

regressions

and

I

can

tell

you.

This

is

a

client.

F

Do

a

connection,

that's

like

not

as

a

non-starter

for

us

yeah

for

like

a

very

ain't

like

for

any

substantial

fraction

of

a

fraction

of

requests,

or

all

that

happens

like

will

liability

for

any

server

like

you

know,

if

it

was

like,

you

know,

like

some

1%

of

requests,

and

it

was

I

suppose

across

servers

like

including

with

new

server,

was

always

taking

regression

like

me,

but

like

if

it's

gonna

be

like

a

major

regression

of

all

time.

We're

signers

then

so

on

that.

F

That's

that

the

requirement

for

solution

on

the

as

far

as

like

the

design

of

the

system,

the

I

think

I,

was

personal,

closest

attention

version,

this

invention

approach,

I

think.

The

advantage

of

that

is

that

lets

us

get

some

deployment

Springs

with

this

with

something

we

know,

will

work

for

the

people

can

play

wall

and

then,

if

something

awesome

comes

along,

that

makes

us

work

better

like

that.

Like

has

the

properties

version

we're

more

than

interested

in

like

taking

that

as

well.

F

B

F

I

forgot

to

mention

one

more

thing:

also

Chris

didn't

mention

I'm

being

nice

I'll.

Probably

this

extension

is

that

that,

because

we

have

that

the

bit

indicating

it's

mandatory,

it's

quite

straightforward

for

you,

advertise

yes

and

I

keys

with

the

like

some

sort

of

peel

and

3070

thing

and

then

conform,

clients

will

should

reject

it

if

they

don't

know

about

it.

So,

like

that

great

quite

smoothly

yeah,

thank

you.

X

I

think

the

on

that

topic

of

the

they,

what

happens

when,

when

it's

mandatory

I

think

it's

some

of

that

will

be

to

be

a

function

of

how

comfortable

are

we,

then,

if

adding

that

extension

is

unintentional,

Ike

one

137

is

mandatory.

If

that

means

that

clients,

using

that

than

that

happened,

implemented

137,

basically

can't

use

yes

and

I

anymore

yeah

when

they

just

I

guess

it

was

enough

being

in

echo,

Eckerson

said

that

that

was

intended.

X

Behavior

and

I

think

that,

where

that

starts

becoming

important,

is

that,

like

with

136

I,

think

the

biggest

challenge

is

going

to

be

cases

where

the

sheer

number

of

a

and

quad

a

record,

the

more

that

might

get

used

in

any

one

point

of

time,

is

more

than

necessarily

fit

in

I.

Think

to

Patrick's

comment.

I

think

there

is

a

bit

of

a

I.

X

Also

do

have

some

anxiety

of

what

of

what

are

going

to

be

the

operational

issues,

we're

going

to

run

into

of

having

the

a

and

quad

a

records

that

people

use

be

something

that

comes

from

another

record.

That's

not

a

or

quad

a

that's

something

that

does

have

a

big

enough

operational

change

and

breaks

a

lot

of

assumptions

that

could

have

interesting

side

effects,

but

I

think

until

we

try

to

deploy

that

we

don't

know

what

the

side

effects

are

doing

that

are

yet

so

to.

V

X

Operational

stuff

of

all

the

complexities

of

how

the

internet

works

between

that,

like

the

example

would

be

like

the

known

known

case,

would

be

dns64

of

dns64

relies

on

being

able

to

rewrite.

Do

those

rewrite.

We

can

put

specify

that

one

in

the

draft

to

cover

that

case

if

clients

implement

that

properly,

but

it's

very

likely.

There

are

other

things

we

haven't,

thought

of

which

were

worked

by

accident

and

not

for

an

undocumented

manners.

It

will

start

tripping

over

as

we

start

trying

to

roll

this

out.

Sure.

U

V

Y

Wester

occur,

I,

say

so:

I

I

need

to

go,

read

the

document

in

greater

detail

and

I'm

glad

that

I

was

in

the

room

so

that

I

could

see

this

issue

propping

up

so

I'll

speak

at

a

sort

of

a

higher

level,

which

is

that

I

think

this

is

especially

to

the

chairs.

If,

if

this

is

gonna

go

forward,

we

need

significantly

more

review

from

expertise

that

is

not

in

this

room.

There's

some

rather

large

interesting

ramifications

of

doing

this

type

of

stuff,

where

we

have

to

some

extent

your

fracturing

the

namespace

right.

Y

So

the

dns

has

considered

the

this

global

naming

system

and

and

there's

already

some

fracturing

because

because