
From YouTube: IETF104-LWIG-20190326-1120

Description

LWIG meeting session at IETF104

2019/03/26 1120

https://datatracker.ietf.org/meeting/104/proceedings/

A

So, let's briefly go through the working group status since the last IETF in Bangkok. We have the TCP constrained-node networks draft, which received quite good feedback during the previous IETF. The authors have submitted a new version, which we will be discussing today, and hopefully it's now ready for a working group last call. We also have a presentation on the neighbor management policy draft from Rahul.

A

There was an update to the virtual reassembly document, but there isn't any presentation planned, unless you want to say something at the mic. We will have a presentation from John on the security protocol comparisons document, and Rene will also present the curve representations remotely. He submitted a new version, so it's, I guess, at -03. Now, we also have a few other documents that were updated: Carsten Bormann has this draft on lightweight terminology, and Pascal also has a document on the security classes, which seem to be related, but we won't be discussing them in the meeting. If you want to review, please send your comments on the mailing list. There was also discussion on the minimalist document, and we had issued a call for adoption.

A

B

This is Mathias. My main reason was that there was not sufficient feedback on it. We wrote a lot of stuff, we wrote and wrote, and it might be useful, but nobody came back to us and said, "Yes, this is really good," or "This is still a question that I have." So it would be really nice to move forward with this.

C

A

A

A

D

We received quite a lot of feedback: for example, two full reviews, one by Yoshifumi Nishida and another one by Ilpo Järvinen. We also received comments from David Black and Emmanuel Baccelli, and, more recently, we received code size measurements of TCP implementations for constrained devices from Rahul Jadhav. So thank you very much to everyone for the feedback and all the inputs. We produced version 05.

D

Now we have added explicitly that an advantage of ECN is that congestion can be signaled without incurring packet drops. We also added that an RTO may incur a wake-up action, in contrast with clock-triggered sending. This is relevant for devices that have energy constraints and apply energy-saving techniques based on being in sleep mode at times. Then, Section 4.2.

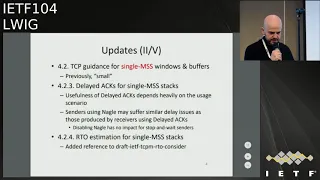

Here we have modified the title. Previously it was specific items for small windows and buffers.

D

Now we have replaced "small" by "single-MSS", which is hopefully more accurate. Within this section we have updates in the part on delayed acknowledgments for single-MSS stacks. First, we have an additional sentence, which is quite general, saying that the usefulness of this mechanism depends heavily on the usage scenario. In the context of a single-MSS sender that transmits to a receiver using delayed acknowledgments, we've also added that senders using the Nagle algorithm suffer similar delay issues as those produced by receivers using delayed acknowledgments. We have also added that disabling Nagle has no impact in the case of stop-and-wait senders.

Then there's subsection 4.2.4, which discusses the RTO algorithm for single-MSS stacks. The point was that, in this kind of stack, the RTO algorithm may have a greater impact than with greater window sizes. Here we have added a reference to a TCPM working group document which discusses RTO considerations: all the trade-offs and requirements around the design of RTO algorithms.

D

Then, in Section 5.2, which discusses the number of concurrent connections, we have added something which is actually obvious but was not written in the document: we need to take into account the overhead of the three-way handshake of each additional TCP connection. This is yet another reason why we may want to keep the number of concurrent connections in a constrained device low. We have also updated the TCP connection lifetime section.

D

Also, we have added that the timely detection of a dead peer may allow memory savings, which may be useful for memory-constrained devices. Then we've added another sentence, which is quite obvious but was not explicitly written: sending TCP keep-alives frequently drains power on energy-constrained devices.

D

Then we have also updated the security considerations. Basically, we have removed the reference to the TCP MD5 signature option, because it is no longer considered safe. We have also updated the annex, which tries to collect the details of some implementations of TCP for constrained devices.

In the section that talks about uIP, we now explain that if multiple connections are used, they need to share the same global buffer. Also in this section, we have added, for the TCP implementation in Contiki-NG, that the code size of the implementation is 3.2 kilobytes on the CC2538 platform. Rahul kindly performed these measurements just for the sake of the document, so thank you very much. The section about RIOT has also been improved. We have now added that 32-bit...

D

Then, in the section about TinyOS, we have clarified a couple of sentences that were not so clear, and now we state, hopefully more clearly, that a send buffer is provided by the application. As the last update, we have also modified the summary table, which is in the annex. First, we have changed how we express the content in the first row of the TCP features section of the table. Now we express it in terms of whether an implementation for constrained devices is a single-segment implementation or not.

D

Formerly we had another value, which was 40 kilobytes, but that was for an older version of lwIP. So again, Rahul performed measurements and kindly provided this result, which is 38 kilobytes. It is still aligned with the previous result, but it's based on an up-to-date version of lwIP.

D

After the submission deadline, there were a number of comments that we received from Stuart Cheshire on version 05. First, he suggested adding some more text in the document about what options 0, 1, and 2 are, rather than referring the reader to another document for that. Then, about the recommendation of setting the MSS not larger than 1220 bytes, he suggested explicitly stating that there is an assumption here.
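The assumption itself is not spelled out in the talk. A plausible reading, labeled here as an assumption, is that 1220 bytes comes from the IPv6 minimum MTU of 1280 bytes minus an IPv6 header without extension headers (40 bytes) and a TCP header without options (20 bytes). A minimal sketch of that arithmetic, with illustrative names:

```python
# Assumption (not stated in the talk): the 1220-byte figure corresponds to the
# IPv6 minimum MTU with minimal IPv6 and TCP headers.
IPV6_MIN_MTU = 1280      # bytes, minimum link MTU required by IPv6
IPV6_HEADER = 40         # bytes, fixed IPv6 header, no extension headers
TCP_HEADER = 20          # bytes, TCP header without options


def max_mss(mtu: int, ip_header: int = IPV6_HEADER, tcp_header: int = TCP_HEADER) -> int:
    """Largest TCP payload that fits in one packet of the given MTU."""
    return mtu - ip_header - tcp_header


print(max_mss(IPV6_MIN_MTU))
```

Any extension header or TCP option reduces the usable payload further, which is presumably why the assumption is worth stating explicitly.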

D

So our plan is to incorporate the feedback from Stuart quite quickly, hopefully within this week, and release version 06. Pending that, the authors believe that the document is ready for working group last call, so I would like to ask the working group and the chairs whether that is something that can be confirmed.

E

I'm Stuart Cheshire from Apple. I just want to thank you for writing this. This is an enormously useful document. As more and more companies that have not been in that space before get into making network products, there is this widely circulated myth that TCP is too complicated to implement, and I don't know where that comes from. Maybe it was true on 8-bit processors in the 1980s, but that myth persists, and it leads to some very bad design decisions where people think that they can build their own protocol on UDP that's as good as TCP. We've seen from the work done in QUIC that you can build a protocol on UDP that's as good as TCP, but it's an enormous amount of work, and if you're new to networking and don't know anything about it, you're probably not going to do better than TCP. So using this document as a way of bringing these people up to speed and explaining some of the misunderstandings to them is really, really useful. Thank you.

D

Thank you.

A

I think we will discuss this with the TCPM chairs after this meeting, and we will issue a joint last call. My request is to those who have already commented on the document, including Professor Markku, Ilpo, and Stuart: when we do issue a last call, please comment if you have any remaining issues or if you think the document is ready, because that would help the shepherd to move this forward.

G

H

Hello, I'm Rahul Jadhav, and I'll be talking about the neighbor management policy. I won't be going into the details, but I'd just like to give an overview. This comes into the picture for constrained nodes which have limited memory resources when the node density in the network is really high. You then have to manage the neighbor cache in an efficient way, such that the churn in the neighbor cache is as little as possible and your routing adjacencies are stable enough.

H

This directly impacts the overall latency that you can achieve for the applications and the packet delivery rates. What I'm going to show today is the performance data. We have the implementation in Contiki, which is already mainstream, but it doesn't take into consideration some of the aspects of the draft. We also have a private implementation, and the data is based on this private implementation. I will talk about the data in the subsequent slides. Before going to the data, there are a few updates in the draft.

H

There is something called minimum priority, which is being discussed in ROLL, and which is quite a useful bit of information based on which a node can decide which neighbors might be prioritized. We are making use of this information.

H

We have added more clarifications on route cleanup and its corresponding impact on the neighbor cache, and how to handle it. We have also added the performance results in the appendix. For the performance test, we have used lwIP as the network stack and we have integrated RPL with it. What we have done is carve out the RPL implementation of Contiki and make it work with Linux, lwIP, as well as uIP.

H

This is something that we talked about at Netdev conf as well, last week. lwIP is where we have added this neighbor management policy module. Whenever a neighbor cache entry is getting added, the reason why it is getting added is also sent along, and then a reservation-based policy kicks in, which decides whether this entry should be added or not.

H

If it is not added, then a corresponding negative status is sent back, depending upon where the neighbor cache entry is getting added from. For example, if it is getting added in the context of NS/NA messaging, then a negative acknowledgement is sent in the NA. If it is getting added in the context of RPL messaging, then a negative message is sent. We have used the Whitefield framework, which does realistic wireless simulation using NS-3 in the back end.
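The reservation-based admission described above can be illustrated with a small sketch. This is not the lwIP module from the talk: the class, the reason names, and the slot split are all hypothetical, and the only behavior taken from the talk is that each add request carries a reason, a per-reason reservation decides admission, and a rejection lets the caller send a negative status back.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class NeighborCache:
    # Slots reserved per "reason" an entry is added. The reason names used in
    # the example below are hypothetical, not taken from the draft.
    reserved: Dict[str, int]
    entries: Dict[str, str] = field(default_factory=dict)  # address -> reason

    def used(self, reason: str) -> int:
        """Number of entries currently held for this reason."""
        return sum(1 for r in self.entries.values() if r == reason)

    def add(self, address: str, reason: str) -> bool:
        """Return True if the entry is (or already was) in the cache,
        False if the caller should send a negative status back."""
        if address in self.entries:
            return True
        if self.used(reason) >= self.reserved.get(reason, 0):
            return False
        self.entries[address] = reason
        return True


cache = NeighborCache(reserved={"rpl_parent": 1, "rpl_child": 2})
print(cache.add("fe80::1", "rpl_parent"))  # admitted
print(cache.add("fe80::2", "rpl_parent"))  # rejected: reservation exhausted
```

Because a rejected neighbor is told so explicitly, it can try another candidate instead of repeatedly evicting existing entries, which is the churn the policy is meant to avoid.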

H

The testbed contains 64 nodes, and you can see that the density is quite high: we have packed all the nodes into 80 x 80 square meters. We have used 802.15.4 at 2.4 GHz in single-channel mode and slotted CSMA mode. Data transmission is every 10 seconds: every node sends data every 10 seconds to the border router, and the border router echoes the packet back.

H

We wanted to understand what data we need to take. Packet delivery rate (PDR), we clearly understood, is something that we have got to measure, so we wanted to check how the neighbor management policy impacts it. But the network convergence time is difficult to quantify.

H

How do you define network convergence time? Do you define it as the time when all the nodes in the network finally join the border router? Well, even if they join the border router, it doesn't mean that all the subsequent hops in between still retain the entries, so there could be a certain churn. So how do you define the network convergence time? What we finally decided was that the routing table should be stable enough for X amount of time.

H

That is how we define it, and this stability should hold in the overall network, not necessarily only on the border router. You can see here what we have done: the neighbor cache size is 10, 20, and 40, without the neighbor management policy and with the neighbor management policy. We can see that in the single-channel mode of operation we could achieve greater than 95% PDR, even with an extremely constrained neighbor table.
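The two metrics mentioned above can be written down as a short sketch. The function names and the input format (a list of timestamps at which any routing table in the network changed) are assumptions made for illustration; the talk only states that convergence means the routing tables stay stable for X amount of time across the whole network.

```python
from typing import Iterable, Optional


def convergence_time(change_times: Iterable[float], stable_for: float,
                     observed_until: float) -> Optional[float]:
    """Time of the first routing-table change that is followed by at least
    `stable_for` seconds without any further change anywhere in the network.
    Returns None if no such quiet period was observed."""
    times = sorted(change_times)
    for i, t in enumerate(times):
        next_change = times[i + 1] if i + 1 < len(times) else observed_until
        if next_change - t >= stable_for:
            return t
    return None


def packet_delivery_rate(sent: int, received: int) -> float:
    """Fraction of sent packets that were delivered."""
    return received / sent if sent else 0.0


print(convergence_time([1, 5, 8, 30], stable_for=20, observed_until=60))
print(packet_delivery_rate(sent=100, received=96))
```

The value chosen for `stable_for` is a measurement parameter; the talk does not say which value of X was used.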

H

The important thing to note here is that, without the neighbor management policy, there is absolutely no convergence. You cannot get any convergence even with a neighbor cache size of 20, so it is quite a big deal. We finally had to do this implementation to get our pilot working in the first place.

H

There are some observations. Of course, with the neighbor management policy there is additional control overhead that is going to get incurred because of the proactive maintenance. The nodes in the network now have to additionally signal some more information so as to make this proactive management possible. That is the control overhead that we are talking of here. It increases with the neighbor management policy, and the convergence time is also high.

H

If you compare with a neighbor cache size of 40, with a neighbor cache size of 10 the convergence time is more than double, so it also has an impact on the convergence time. We feel that there are possibilities to improve on these numbers. Having said that, we feel that this work will have to keep on rolling to improve such numbers. What we are trying to do here is put in a basic framework first, such that we have some sort of framework to work with in the first place.

Without neighbor management, the BR could get all the routes, but the neighbor table size was never enough, and we could see that whenever a packet was getting dropped, it was getting dropped because there was no corresponding neighbor cache entry in the neighbor table. We have been working on this for the past two and a half years, and we have a pilot ready.

H

The Contiki implementation already has some form of neighbor restriction policy based on this, a little bit. But, like I mentioned, the Contiki implementation doesn't consider the authentication phase, in which the neighbor cache might be populated because of the authentication signaling, or rather the PANA signaling, in progress. There have been certain reviews from other people, and based on those and on the implementation we have updated the document in the previous versions.

H

A

H

A

H

H

H

A

I

A

J

So let's go to the next slide. OK. The current draft contains lots of background material; that's all these appendices. Right now I have Appendix A up to L, but most of it is just there to make the document self-contained. The Weierstrass curve corresponds to Curve25519, which is also used in the signature scheme Ed25519. The specification itself is only about three lines.
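To give a feel for why the core of such a specification can be short, here is a sketch of the standard change of variables between a Montgomery curve v^2 = u^3 + A*u^2 + u and a short Weierstrass curve y^2 = x^3 + a*x + b. It uses the published Curve25519 parameters (p = 2^255 - 19, A = 486662); the function names are illustrative, and this is the textbook mapping rather than a quotation of the draft.

```python
P = 2**255 - 19          # field prime of Curve25519
A = 486662               # Montgomery coefficient of Curve25519


def inv(n: int) -> int:
    """Modular inverse via Fermat's little theorem (P is prime)."""
    return pow(n, P - 2, P)


DELTA = (A * inv(3)) % P                         # shift: x = u + A/3
W_A = (1 - A * A * inv(3)) % P                   # a = 1 - A^2/3
W_B = ((2 * A**3 - 9 * A) * inv(27)) % P         # b = (2A^3 - 9A)/27


def montgomery_to_weierstrass(u: int, v: int):
    return ((u + DELTA) % P, v % P)


def weierstrass_to_montgomery(x: int, y: int):
    return ((x - DELTA) % P, y % P)


def montgomery_rhs(u: int) -> int:
    """Right-hand side u^3 + A*u^2 + u of the Montgomery equation."""
    return (u**3 + A * u * u + u) % P


def weierstrass_rhs(x: int) -> int:
    """Right-hand side x^3 + a*x + b of the Weierstrass equation."""
    return (x**3 + W_A * x + W_B) % P
```

Since the second coordinate is unchanged, a point satisfies one equation exactly when its image satisfies the other, so checking that the two right-hand sides agree is enough to validate the mapping.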

J

You could call those test vectors, and the test vectors are designed to also show that, for example, the Montgomery ladder that is specified in some of the CFRG documents can be used to recover the whole elliptic curve point, which allows reuse of the signature scheme that's defined in one of these other CFRG documents. So it gives a single implementation, even if you don't care about Weierstrass curves.

J

The detailed examples also provide for a compressed representation of Weierstrass curve points. What I also did in the last revision, which I just posted this weekend, is expand a little bit on the security considerations section, mainly to preclude reuse of some of the key material, which would directly expose the private key, and to warn against the kind of stupid combinations of use of the private key with different schemes. I also expanded the IANA considerations section, because some people suggested I should ask for a code point.

J

So that's what I did, and I think the document is now in pretty good shape. I'm sure it's not your average topic area, but I think it could be useful for some of the applications that we're considering. Next slide, please. I think the document is kind of ready. Of course, I could give it another read, but more important is to have other people look at it. Some people have looked at it in the past. For example, in the earlier drafts, Nikolas Rösener had a look at it.

J

Then I had some comments based on email traffic from Hallam-Baker, and Stanislav had a very detailed review. I also shared all my scripts to redo all the computations and to check all the details. The only remaining item is to check all the examples that I stuffed in the back. It's probably about eight pages or so, but it's basically just numerical values.

J

A

If he sends that to the mailing list, then maybe we can have only a review from the Security Directorate and ship this, because clearly not many of us can understand the math behind this. I promise to review the security considerations and the IANA text, but the rest is, I guess, Stanislav's domain. He will provide comments and then we will see from there. OK.

A

J

A

K

One update is that the comparison has been updated based on updates to DTLS 1.3. An earlier version of DTLS 1.3 specified two different DTLS ciphertext structures, one like in DTLS 1.2 and one short ciphertext structure. The latest version of DTLS 1.3 unifies these two into a single structure. It's called the DTLSCiphertext structure, but it has more in common with the short ciphertext structure: they dropped the large ciphertext structure. The ciphertext structure is now always quite compressed.

K

Then, between -02 and -03, the main addition is that we have finally added message sizes for key exchange protocols. This has been the number one request since this was sent as the -00 version here, and also before, when this was in CoRE. The protocols we have added are TLS 1.3, DTLS 1.3, and EDHOC. Then, based on the new numbers for DTLS, we have reformulated some of the summaries for the protection of application data.

K

The new table of contents looks like this, so basically all of this is new. We have a new section on the overhead of key exchange protocols, Section 2. The overhead for protection of application data is moved to Section 3, with subsections for the different protocols. Another change is that the summary has now been moved to be the first subsection, so in each section you get the summary first and then the details.

K

We have chosen to go with point compression. I think point compression is not really implemented anywhere for this, as far as I have heard, but we chose to go with the smallest size that is standardized, to do a fair comparison. There are only mandatory TLS and DTLS extensions, except for connection ID. We feel that connection ID is a very important use case for big deployments, and it also makes the comparison with EDHOC better. And there's no DTLS fragmentation; that would add more.

K

The structure of the document is similar to that for the protection of application data. It's quite a lot of information, and we have noticed that this is requested by people reading this. It also gives credibility to the numbers: you can verify them, and you can see why the numbers are like they are, so you can compare.

K

This is how the document looks for DTLS. You list the record layer, the handshake header, and then all the different fields in the handshake header, and then the extensions, and it continues like that. This is how the RPK in TLS looks in ASN.1, and this was borrowed from a master's thesis at the Royal Institute of Technology in Sweden. And this is how the format looks for the EDHOC messages.

K

Here's a CBOR sequence, and here is the CBOR diagnostic notation. This is a subset of all the information. There are two main tables in this comparison. Here's the first: this is DTLS 1.3 and TLS 1.3, and this is without connection ID, so only the mandatory extensions, the minimal amount to do this. The extensions depend on whether you do PSK or RPK, and we have compared RPK and PSK.

K

So, as you can see, for DTLS and TLS... I don't know how these compare to earlier versions of TLS and DTLS. That might be something to add in the future. Actually, there have already been requests for that: it was a university that contacted me and asked if I had numbers for that. The main difference between TLS and DTLS is that DTLS adds sequence numbers.
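The effect of explicit sequence numbers is easiest to see in the older record headers. As an illustration only (the talk's tables are about the 1.3 versions, where the DTLS header is compressed), this sketch sums the field sizes of the TLS 1.2 and DTLS 1.2 record headers; the dictionary names are illustrative.

```python
# Record header fields and their sizes in bytes.
# TLS 1.2: content type, protocol version, length.
TLS12_RECORD_HEADER = {"type": 1, "version": 2, "length": 2}

# DTLS 1.2 adds an explicit epoch and sequence number to the same fields.
DTLS12_RECORD_HEADER = {
    "type": 1,
    "version": 2,
    "epoch": 2,
    "sequence_number": 6,
    "length": 2,
}


def header_size(fields: dict) -> int:
    """Total header size in bytes."""
    return sum(fields.values())


tls = header_size(TLS12_RECORD_HEADER)
dtls = header_size(DTLS12_RECORD_HEADER)
print(tls, dtls, dtls - tls)
```

The difference comes entirely from the epoch and sequence number fields, which a datagram protocol needs because records can be lost or reordered.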

K

The next table compares DTLS with EDHOC. The numbers for DTLS are the same, and there is EDHOC with RPK and ECDHE and EDHOC with PSK and ECDHE. As you can see, the numbers for EDHOC are drastically smaller. We're also showing what you get if you have a cached certificate, so this is a combination where the client has cached the server certificate.

K

And the question to the working group is: what's next? First, what does the working group think about the changes that were made? Is it structured in the correct way, or would the working group like it structured in some other way, with some other comparisons in the table, and so on? Also, right now we are comparing the byte sizes of the protocols themselves. Are there any other deployment scenarios that should be considered, any extensions that are important for LWIG use cases? And what would the working group like to be added in future versions, if anything? I think there's a lot that could be added, and we need to decide on what should be added. We cannot add everything; then the draft will never be done.

K

That's a good suggestion. I have listed it: there are actually two different compressed TLS drafts recently submitted. I guess it's Compact TLS 1.3, or cTLS, submitted by Eric Rescorla to the TLS working group, and there's also a CBOR encoding of the TLS handshake submitted by Jim Schaad to the ACE working group. These are definitely potential additions in the future, but I would, in that case, wait a couple of meetings to give them some stability before adding them, so we don't have to make changes every meeting.

J

The other question on the document itself: you had some comparisons, and that always raises the question of how we know those keys are authentic. I haven't really checked right now, but did you also provide a figure where you had, let's say, a cert, and what the comparison is then?

K

J

K

K

J

J

The final comment I have is on the security considerations. It may be good to state whether the protocols that you compare have the same security properties, at least from a practitioner's point of view, or whether they differ, for example with respect to denial of service or things like that. It could be just a one-liner if the protocols are presumed to have the same security properties.

J

J

F

J

G

F

K

I

think

that

yeah,

some

short

in

there

probably

I,

think

the

converse

is,

is

quite

close

to

comparing

another.

It

depends

tell

us

it's

on

a

much

lower

layer

than,

for

example,

Oh

score

in

the

old

section.

Let's

put

a

key

exchange,

I

think

it's

more

similar

I

will

think

about

what

to

say,

I.

Think

it's

a

good

idea,

so

don't

think

it's.

They

have

slightly

different

use

cases,

yeah

different

problem,

hi.

I

Francesca

Lynette,

co-author

of

this

document,

I

just

wanted

to

point

out

that

in

the

conclusion

subsection

of

the

draft,

we

do

start

some

of

these

consideration

about

things

that

impact

these

message

sizes.

And

it's

not

it's

a

first

draft.

It's

not

complete.

We

can

probably

do

better,

but

that

was

some

of

it.

Isn't

it

in

there.

J

K

It's a good comment, thanks. So, coming back to Rene's comments on what to add in future versions: as I said, what could be reasonable to add is older versions of TLS and DTLS, that is, DTLS 1.2 and TLS 1.2. I have already seen requests for this. I would be very happy to hear what the working group thinks. Does the working group want that? It's a reasonable amount of work. What do we want to focus on?

A

But I think that it could definitely make sense to have numbers for older versions. I also wanted to comment on what Rene was saying earlier, and perhaps there is text on it in the conclusion. I was reading through the document, and things like DTLS 1.2 having explicit sequence numbers and so on, which causes this overhead: I think that is perhaps the most valuable information I get from the document. If I just see numbers, OK, I get some information that this is more lightweight than the other, but I think the most useful information is why something is better than the other. So perhaps having more text like that, and perhaps even in the main body and not just in the conclusion, would be useful.

K

That's a good comment. I think that's definitely missing from the key exchange part, and probably from the protection of application data as well. Then, as Rene said, the second opportunity is to add these new compressed TLS handshakes. For that, I think I would wait until they are a little bit more stable. It was painful enough to add DTLS 1.3 when it was a draft, because it changed basically every other meeting, but I think that's very useful to do in the future.

K

K

A

F

A

I think client-server, and that's it. We don't do group communication, and we don't do all the other things. So, as an individual contributor, I think my recommendation would be not to have group, because that's one way of limiting what we will have in the document and what we won't. It seems reasonable to have client-server, and then the rest can be in another document. I think just getting the numbers for these, and making sure those specs themselves are stable enough, will take quite some time. Yeah.

F

A

A

K

F

As working group chair, I have a very general question about these drafts. These are basically very good insights into the overhead of each security protocol, but have the protocol designers, for example of OSCORE, taken any recommendation from these insights in designing their security protocols? Are there good examples here?

K

Yes, I think so. I think actually starting to compare was a big push for OSCORE to get leaner. I think it was also a very big push for DTLS to get leaner: the DTLS 1.3 record layer went from, I think, 26 bytes in DTLS 1.2 to 11 in DTLS 1.3. And I think now we're seeing the same thing. Yes, publishing things like this makes the authors think: what can we optimize more?