►

From YouTube: IETF104-BMWG-20190327-1120

Description

BMWG meeting session at IETF104

2019/03/27 1120

https://datatracker.ietf.org/meeting/104/proceedings/

A

B

D

C

B

B

So,

let's,

let's

begin

by

doing

the

the

usual

stuff,

we're

gonna

circulate

the

blue

sheets.

Our

area

director

is

right

here

in

front

signing

the

blue

sheet

right

now:

Warren

Kumari.

If

you

have

an

interest

or

a

something

you'd

like

to

see

done

differently,

Warren's

also

a

person

you

can

talk

to

because

we

work

for

him

in

effect

and

we're

happy

to

to

take

on

new

actions.

So,

let's

see

here,

I

guess

it's

a

no!

It's

a.

E

B

F

F

B

Yeah,

alright,

so

the

note

well,

if

you've

been

to

an

IETF

meeting

before

you've

seen

this,

it

basically

means

that

everything

you

say

and

do

in

this

room

is

a

contribution

to

the

IETF.

Also,

you

know

messages

to

the

mailing

list

and

all

sorts

of

things,

but

the

most

important

thing

is

something

I've

added

to

the

top

of

the

note.

Well,

we

work

as

individuals

and

we

try

to

be

nice

to

each

other.

This

needs

to

be

said.

More

often,

that's

what

the

working

group

chairs

have

agreed

on.

B

So

we're

saying

it

now

at

the

beginning

of

every

meeting,

those

of

us

who

picked

up

on

this-

and

let's

also

note

that

well,

thank

you

so

on

to

the

agenda.

First

off,

we

need

some

volunteer

note

takers

and

the

reason

I

ask

for

that.

Now

is

that

we've

I've

just

managed

to

post

the

entire

agenda

into

our

etherpad.

There

are

some

updates

to

the

agenda.

Some

items

that

we're

gonna

flip

around

a

little

bit

and.

B

B

A

A

B

That's

you

know,

that's

the

kind

of

help

we

need.

We

mean

I've

been

I've,

been

working

till

late

last

night,

posting

slides

from

everybody

and

reading

people's

drafts,

and

you

know

it's

it's

not

a

two-person

operation

here

we

need

the

community

to

work

with.

So

thank

you

all

right.

So

let's

has

anybody

on

jabber,

so

we've

had

problems

with

this

in

the

past,

my

machine

is

blocked,

I,

think

your

machine

is

blocked

or

somebody

I

mean

we've

had

a

lot

of

trouble

with

this.

B

All

right,

so

what

so

we'll

use

meet

echo

for

the

for

the

jabber

and,

and

that

means,

if

you're

a

remote

participant

today

and

it

looks

like

we

have

three

or

four

who

are

not

the

organizers

of

meet

echo.

Then

please

use

the

please

use

the

jabber

equivalent

on

that

Thank

You

Warren

great

suggestion.

All

right,

the

blue

sheets

are

going

around.

We've

done

the

IPR

sort

of

note.

B

Well,

if

there's,

if

you

have

IPR

associated

with

your

internet

draft,

please

disclose

that

just

close,

it

frequently

disclose

it,

often

and

and

in

a

timely

fashion,

so

make

sure

the

blue

sheets

don't

get

lost

and

sort

of

keep

them

in

the

back

where

people

will

walk

in

and

see

them

and

hopefully

saw

we

need

to

keep

as

many

names

on

the

blue

sheets

as

possible.

Thank

you.

B

So

our

quick,

a

working

group

status,

that's

what

we'll

cover

first,

we'll

look

at

our

Charter

and

milestones

and

then

we'll

hear

from

Carsten

and

his

team

on

the

benchmarking

methodology

network

security

device

performance,

nice,

big

draft

there.

We

have

six

continuing

proposals,

one

from

me

on

back

to

back

frame

methodology

for

vnf

benchmarking,

water,

business.

Automation

is

manual

here

and

well

yes,

nice

to

meet

you

man

well

and

then

San,

Juan

and

Jacob

are

going

to

present

on.

B

That's

a

really

quick

one

for

me,

in

fact,

it's

got

zero,

slides

and

then

the

multiple

loss

ratio

search

and

the

probabilistic

plus

you

less

ratio

search

from

the

team

of

Ratko

and

ma

check.

Welcome

guys.

So

then

we

have

a

whole

bunch

of

new

proposals.

Evpn

multi

casting

is

a

remote

presentation.

Mr.

young

ye

son

is

that

close,

close

to

correct

pronunciation,

okay

and

and

then

on

containerized

infrastructure,

and

if

he

service

benchmarking

from

Mott

Jack

I

have

a

comment

on

that

that

I

haven't

fully

typed

up.

B

B

Benchmarking

methodology

for

evpn

PWS,

that's

a

it's

an

another

draft.

It's

been

around

actually

no

presentation

this

time,

so

those

are

additional

drafts.

You

can

take

a

look

at

any

bashing

of

the

agenda

needed

I.

Think

we've

already.

We've

actually

already

done

one

this

morning

rearranging

a

couple

of

talks

so

seeing

no

requests

for

them

phone.

Let

us

continue

so

here's

the

status

I

hope

by

by

looking

at

a

full

page

of

an

agenda

items.

B

There

are

fourteen

of

them

that

you'll

agree

with

me

that

proposals

keep

coming

and

they're

in

all

these

different

areas

and

I

mean

I'm

starting

to

see

some

interesting

interactions

between

the

proposals.

So

I

think

that

that's

that's

one

of

the

things

we

might

look

for

today's

some

synergies,

some

areas

where

author

teams

could

work

together

and

and

produce

a

larger,

better

draft.

That

would

that's

one

of

the

things

that

I

would

like

to

suggest.

B

B

Well,

I.

Think

we're

doing

fairly

well

on

on

all

of

these.

So

it's

up

to

the

chairs

to

go

in

and

do

a

reassessment

a

job

on

on

each

one

of

these

I

mean

the

bottom

line.

Is

we

haven't?

We've

only

adopted

drafts

for

two

of

these

I

think

the

methodology

for

next-gen

firewalls

and

the

evpn

benchmarking.

So

you

know

we've

gotta,

move

we're

gonna

move

up

and

you

know

pick

up

pick

up

the

work

that

would

help

us

to

satisfy

these.

B

B

So

no

new

RFC's

we're

still

working

on

a

charter

that

little

I

think

it's

a

little

less

than

a

year

old.

Now

we

have

a

supplementary

BMW,

G,

page

who's,

new

and

BMW

G

tending

for

the

first

time.

Please

raise

your

hand

great.

It's

like

five

six

people.

That's

that's

good!

So

you'll

find

this

is

a

very

easy

group

to

join,

especially

if

you

spend

any

time

in

the

lab

doing

testing.

B

If

you

read

some

of

our

fundamental

dress

like

or

there

are

seas

like

RC,

25,

44

or

RFC

2889

I

mean

these

are

the

these

are

the

real

pillars

of

our

work

that

we're

leaning

on

still

today,

so

I

suggest

that

you

get

involved

in

the

group

that

way

and-

and

if

you

have

any

questions

about,

you

know

things

that

you

might

get

started

on

drafts

to

review

and

in

your

area

of

expertise,

please

see

either

Sarah

or

or

I

after

the

meeting.

We

would

be

glad

to

help

you

out

so

welcome.

B

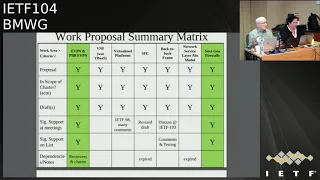

So

here's

our

work

proposal,

summary

and

I

haven't

added

all

the

new

proposals

here.

In

fact,

I

mean

there's

two

that

are

in

green,

which

we've

actually

adopted

so

they're,

not

proposals,

there's

some

other

stuff

in

here,

the

SFC,

that's

kind

of

expired.

Now

that's

gone.

The

network

service,

our

abstract

model,

that's

gone,

but

we

have

other

proposals

that

might

take

the

place

of

that

in

the

and

the

things

that

we

looked

at

in

the

milestones

here,

so

a

fairly

good

activity

on

back-to-back

frame

testing.

B

B

Eventually,

the

security

area

will

review

our

dress

and

the

security

area

doesn't

review

our

Charter

when

they

review

our

dress

and

sometimes

the

security

area

reviewer

flips

out

when

they

see

that

we're

doing

all

this

stuff

and

sending

traffic

across

the

network

and

congesting

the

hell

out

of

network

devices.

It's

a

it's

a

real

shame

that

they

don't

read

the

Charter.

But

then,

if

we

put

this

in

our

security

considerations

section,

it

immediately

alleviates

all

the

issues.

Oh

wow,

this

is

just

in

the

lab.

I

get

it

now

all

right

all

right.

B

We

still

get

some

comments

from

them,

occasionally,

which

is

fine,

but

we're

and

we're

happy

to

deal

with

that.

But

but

this

dispels

a

lot

of

problems,

so

I

I

strongly

suggest

that

folks

use

this.

But

it's

up

to

you

I

think!

That's

it

that's

it!

So

that's

the

Chairman's

slide

any

any

questions.

Additional

comments:

Sara!

Okay!

Thank

you.

It's

good

to

hear

that

from

someone

all

right

so

off

to

the

next

year.

Let's,

let's

see

alright

so

on

on

my

version

of

the

agenda,

which

is

somewhere

here,

yeah

I'm.

B

Really

sorry,

when

I

put

when

I

pasted

this

into

etherpad,

all

my

text

came

out

pink.

So

those

of

you

who

looked

at

that,

no

not

nothing

wrong

with

it.

But

it's

like

I

mean

it's

a.

It

ends

up

being

a

low

contrast

thing

for

me

yeah.

So

so

as

part

of

our

status,

we

said

we

were

gonna

cover

the

status

of

the

benchmarking

methodology

for

evpn

and

PBB

evpn.

B

So

that's

not

good.

That

means

we

can't

close.

The

working

group

last

call

in

fact,

I

think

I

think

it

means

we

have

to

have

another

one.

So

so

that's

what

we'll

do

we'll

have?

Another

working

group

last

call

on

this

and

I'll

ask

Sarah

to

strike

that

when

you

get

access

to

your

email,

please

and

then-

and

we

should

cross

post

I-

think

to

the

bier

working

group

where

they

work

on

evpn,

so

we

should

have

there.

The

benefit

of

their

input

is

always

good.

B

It's

always

good

for

our

group

to

cross

post

to

a

group

that

has

effective

expertise

in

the

area

as

well.

I

mean

we're

we're

all

testers.

We

can't

expect

to

be

experts

on

everything

like

next-generation

firewalls,

for

example.

So

in

in

that

topic,

which

is

coming

up

right

now,

I've

actually

requested

an

early

review

from

the

security

directory

and

hopefully

and

I've

asked

them

to

find

somebody

who's

a

who's.

A

real

firewall

expert

to

provide

us

some

additional

comments

there.

So

that's

the

beginning

of

our

interactions

with

IETF

on

unworked

like

this.

B

C

B

Thank

you,

sorry

about

that

brain

all

right,

so

our

so

that

covers

yeah

thanks,

so

that

basically

covers

the

status.

The

charter

of

the

milestones.

So

now

we're

gonna,

hear

from

Karsten

ro,

Sandoval,

I'm,

sorry,

Carson,

I,

couldn't

spell

your

whole

name

out

and

the

agenda

slide

there

and

so

I

ended

up

truncate,

truncating

everybody's

name,

so

that

so

they

didn't

go

across

to

two

lines.

All

right

here

we

go

so

now

wait

a

minute.

B

G

I

think

we're

ready

to

go

okay,

so

thanks

yeah,

so

the

main

author,

Bala

Bala

Raja,

is

also

in

the

meeting

or

training

remotely.

He

wasn't

able

to

come

in

person,

unfortunately,

and

Brian

moment

from

the

net

tech.

Open

group

is

also

on

remotely

available

and

Tim

from

IOL

who's

also

contributed

heavily

present

here.

So

any

questions

I

think

I

hope

we

should

get

covered

yeah.

So

this

draft

has

been

presented

before

I

think

at

Ike,

if

one

of

three

and

one

or

two

and

not

sure

about

101,

so

she

can

move

the

first

slides.

G

We've

continued

to

refine

this.

So,

unfortunately,

many

of

our

discussions

are

not

usually

seen

over

the

BMW

G

mailing

list,

because

we

have

a

separate

group,

it's

called

net

sec

open

and

we

have

like

discussions

and

weekly

calls

and

actually

we

use.

They

have

two

weekly

calls

until

a

short

time

ago

and

we've

basically

had

a

lot

of

internal

draft.

So

before

we

upload

anything

to

the

ITF

official

document

repository,

we

basically

have

internal

reviews.

We

used

to

have

a

separate

set

of

test

cases

for

transaction

per.

G

Second,

we

figured

in

the

proof

once

a

test.

We

can

merge

them.

So

now

we

go

down

from

11

main

chest

areas

to

9,

because

we

emerged

transactions

per

second

with

a

true

protest,

so

that's

actually

making

things

more

efficient.

We

added

more

system

under

test

features.

We

clarified

more

stuff

and,

in

general,

our

draft

guru

in

with

the

attempt

to

make

things

as

precisely

defined

as

possible.

G

So

the

main

goal

here

is

to

make

sure

that

anybody

who

applies

this

document

to

a

firewall

test

will

yield

the

same

results

so

basically

to

iron

out

any

uncertainties

any

black

spots

of

not

being

defined.

We

also

improved

the

test

procedures,

so

we

actually

found

when

working

with

multiple

labs

and

multiple

tests

with

vendors,

that

some

of

the

text

was

not

precise

enough.

G

Yet

so

we

improved

the

test

procedures

both

to

make

them

more

reproducible

and

also

to

make

them

more

precise

on

how

exactly

to

set

up

things,

and

we

to

this

end,

we

also

defined

more

TCP

parameters

like

we

had

a

long

debate

about

the

window

size

that

was

also.

There

were

also

some

discussions

on

the

mailing

list

about

delayed

AK

and

congestions

windows

and

so

on

so

I

think

we

have

now

defined

the

precise

set.

G

So

please

look

at

the

document

and

see

if

you're

agreeing

with

that

and

this

exact

parameters

ation

actually

enables

test

automation.

So

there

are

two

commercial

testable

vendors

in

the

process

of

automating

all

of

these

tests

right

now

one

of

them

has

reached

90%

and

the

other

one

has

said

they

should

be

ready

by

the

end

of

q2.

So

it

seems

like

that's

another

good

proof.

That

standard

is

actually

implementable

and

is

precise

enough.

G

So

next

slide,

please

just

for

those

who

might

remember

RC

3511

the

test

methodology

for

firewall

performance,

benchmarking,

that's

a

couple

years

old,

so

we

don't

really

we've

never

really

discussed

whether

we

actually

want

to

formally

supersede

it

I

think:

that's

not

my

goal,

but

just

to

put

things

in

comparison.

So

some

things

like

traffic

requirements,

we're

not

defined

back

then,

and

here

we

have

a

precise

requirement

about

the

object

sizes

and

the

traffic

mix.

G

That's

very

important

because

when,

if

I

were

Venice

went

to

like

very

small

object

sizes

for

some

tests

or

very

large

one

for

other

tests,

optimize

the

results

and

it's

important

to

have

this

comparable

in

an

apples-to-apples

way.

We

also

define

the

cipher

suites.

That's

actually

very

important,

as

HTTP

becomes

much

more

widely

used,

and

these

cipher

suites

are

defined

for

individual

test

cases.

We

had

a

big

debate

about

cipher

suites,

because,

obviously

you

can

imagine

from

the

ITF

perspective.

G

We

want

to

have

the

most

advanced

cipher

suites,

but

from

the

vendor

perspective

we

want

to

have

the

easiest

ones

and

then,

from

the

reality

check

perspective

with

enterprises.

They

want

to

have

something

that's

already

robust

and

it's

not

just

the

latest

and

greatest,

but

is

actually

used

in

the

field.

So

next

to

please.

G

Regarding

the

rule

sets

we

defined

like

ACLs

and

yeah,

so

basically

rule

sets

for

for

firewall

rules.

In

a

specific

way,

we

tried

to

come

up

with

different

definitions

for

different

sized

firewalls.

So

again,

I

asked

you

to

review

this.

So

there

is

a

table

in

the

document

which

says

there

are

XS

extra

small

types

of

firewalls.

There

are

small,

medium

and

large

firewalls.

They

are

characterized

by

their

maximum

throughput

and,

of

course,

the

larger

firewall

is

the

more

advanced

it

is

normally.

G

So

we

cannot

expect

the

same

CPU

power,

the

same

feature

set

from

a

very

small

firewall.

That's

usually

used

at

a

very

small

branch

office

or

at

the

cpe

side

or

whatever.

So

that's

why

we

define

different

rule

sets,

but

of

course

that's

our

tree

and

it

depends

on

you

know

manufacturers.

So

if

you

have

any

comments

there,

that's

welcome.

H

Wrap

from

game

we're

looking

at

the

word

that

the

rule

set

as

well

as

the

throughput

recommendations,

and

as

can

we

is

there

a

better

way

to

classify

it

in

large,

medium,

small

extra

small,

because

I

think

over

time.

Those

definitions

will

change

like

throughput

as

you

should.

We

should

measure

throughput

or

measure

how

many

rules,

but

to

put

it

in

the

category,

is

kind

of

like

that's

it'll.

It

might

go

away

tomorrow

or

I

may

have

a

very

definition,

different

definition

of

how

many

rules

are

large

or

small

yeah.

I

G

C

Sarah

banks

all

add

on

to

what

Jacob

saying

I

agree:

I

think

specifying

as

extra

small

small

medium

large

is

fine,

but

it's

loose

right

so

having,

in

my

opinion,

two

approaches,

one

is

to

say,

look

for

each

one

of

those.

We

think

that

they

are

roughly

this

many

rules

and

this

much

of

throughput

and

then

also

because

the

I

think

everybody's

definition

might

vary

as

to

what

small,

medium

and

large

is.

The

other

alternative

is

to

say

and

I.

C

D

C

The

guidelines

is

not

a

bad

idea,

but

the

second

thing

that

I

like

to

see

sometimes

is

look

if

you're

gonna

test

make

sure

if

you're

gonna

put

in

your

number

that

each

time

you're

running

the

test,

that

you

noted

how

many

rules

and

what

the

throughput

was

and

that

you

repeated

those

so

that

you

have

apples

to

apples

comparisons

when

you're

done

for

each

iteration

and

I.

Think

those

two

things

set

you

free

because,

as

testers,

what

else

it

makes

sense,

yeah.

G

H

D

H

C

I

that

a

little

I'd

say

small,

medium,

large

and

custom,

and

that

way

when

somebody's

reporting

their

stuff,

you

can

ask

them

hey.

Did

you

use

the

small

medium

large

or

did

you

go

custom

and

then

it's

crystal

clear

that

if

you're

trying

to

game

it?

Well,

maybe

you

want

custom,

but

it's

clear

versus

if

you

use

small

medium

large,

presumably

now

we're

going

to

have

the

same

route.

It's

a

decent

apples

to

apples,

comparison

of

different

vendors

because

you

use

the

exact

same

number

of

rule

sets

and

throughput

yeah.

B

B

G

So

next

one

it's

a

it's

actually

animated

same

flight.

Okay,

the

last

thing

is

TCP

sticks

we

in

RC,

35

11.

It

wasn't

foreseen

that

details

of

the

TCP

stack

should

be

defined

nowadays,

it's

pretty

obvious.

These

things

need

to

be

defined,

as

I

said:

T

V

window

size

and

so

on,

algorithm

maximum

segment

size

and

all

of

these

things

that

can

be

used

to

twist

the

results

or

to

make

them.

B

As

a

participant,

al

Morden

has

a

comment,

and-

and

that

is

that

TCP

is

dynamics

are

affected

by

the

round-trip

delay,

even

even

with

cubic,

which

tries

to

be

independent

of

it.

And,

let's

see

for

one

thing,

I

don't

see

in

this

list

a

specification

of

the

congestion

control,

algorithm,

I

think

it's

in

the

draft,

though.

So,

if

it's

not,

it

should

be.

D

B

G

Okay,

yeah

good

point,

I

think

yeah

I'm,

not

sure

whether

this

would

be

another

test

or

just

another

parameter

to

be

applied

to

all

tests,

because

ultimately,

I

think

this.

This

document

is

probably

going

to

be

used

in

the

lab

and

by

vendors

to

define

datasheet

numbers

and

if

we

add

delays,

they

are

our

trait

for

a

use

case

scenario,

I'm,

not

sure

Tim.

J

D

J

B

G

Okay,

so

next

slide,

please

so

last

aspects

of

the

comparison

with

RC

3511.

So

we

have

multiple

test

validation

criteria,

so

we

don't

really

define

pass

or

fail,

but

we

define

criteria

to

measure

expected

results

against

and

we

also

make

sure

that

the

same

system

and

the

test

features

are

switched

on

or

off

in

the

test

and

that's

actually

quite

important,

because

the

features

of

modern

firewalls

are

increasing.

G

H

Can

is

there

a

way

to

reframe

that

a

bit

of

like

not

making

a

recommendation

of

just

benchmarking

them?

So,

instead

of

saying

these

are

mandatory,

or

these

are

optional

and

making

a

recommendation

over

which

features

should

be

there.

Just

saying

here's

a

list

of

features

and

here's

a

test

to

go

after

them,

because

I

think

I,

don't

know

if

we

want

to

be

in

the

business

of

making

them

recommendation

of

what

vendors

should

implement

in

terms

of

features.

H

I,

don't

have

an

answer

to

that,

because

it's

it's

up

to

interpretation

right

I

mean

it

may

be

all

of

them,

but

you

put

some

of

them

as

optional

as

well.

Right

where

you

say

some

are

mandatory.

Some

are

optional,

but

then

maybe

you're

saying

that

all

are

mandatory,

so

I

would

just

put

him

on

the

list

saying:

hey,

here's

all

the

list,

universal

features

and

here's

how

to

go

test

them.

Not

that

not

not

make

a

recommendation

of

what's

mandatory

or

optional.

G

Yeah,

well

obviously,

from

the

lab

perspective,

we

want

to

maintain

as

much

stringent

requirements

as

possible.

So

I

see

there

is

a

usual

conflict

of

goals

and

in

the

end

you

know,

if

you

switch

off

everything

you

could

you

could

test

a

router

with

only

layer,

three

enabled

and

apply

this

methodology

selectively

this

wonderfully

blazingly

fast,

because

it

doesn't

do

anything

yeah,

but

the

reporting

wouldn't

show

anything.

But

the

problem

is

the

problem

is

typically.

If

you

look

at

I

will

inspect

it

a

lot

of

data

sheets.

G

C

A

compromise

then

be

perhaps

to

have

two

cases:

the

first,

where

we

say

turn

everything

on

and

test,

and

the

second

is

define

what

the

features

were

that

work

turned

on

when

you

test,

because

the

other

thing

is:

let's

fast

forward

to

the

point

where

this

goes

to

the

queue

and

we

publish

the

RFC.

What

happens

if

I

don't

know?

Tls

one

seven

comes

out,

and

it's

not

an

option

here

in

the

RC.

Yes,

you

could

go

back

and

update,

but

another

way

to

cover.

C

That

is

to

say

well

if

the

RFC

still

covers

this,

because

now

you

have

to

say

TLS,

one

seven

was

a

feature

we

enable

it

or

we

disabled

it.

So

your

second

case

is,

you

tell

me

all

of

the

features

your

firewall

has

and

then

tell

me

the

ones

that

were

turned

on

or

off

and

that

way

I

can

make

an

intelligent

decision.

But

the

first

case

still

covers

your

okay.

We

don't

want

everybody

to

turn

everything

off

and

have

it

be

a

all

this

was

this

was

RFC

bla,

bla,

bla,

tested

and

look.

C

G

Think,

and

from

my

experience

as

a

you

know,

lab

writing

marketing

just

reports.

People

are

vendor

state

commissioners

with

tests

that

are

beyond

the

state

of

art,

they're

very

proud,

and

they

asked

us

has

to

you

know,

put

it

in

bold

and

large

funds

that

they

actually

went

above

and

beyond.

But

it's

more

a

problem

with

that

want

to

stay

at

the

bottom,

so

I'm

very

much

concerned

about

the

lower

limit.

G

C

It's

a

suggestion.

You

know

how

you

want

to

phrase

the

first

case

where

you

somehow

define

what

the

the

features

are,

but

the

second

case,

I

think

gives

us

the

out

to

your

point

as

well,

which

at

least

tells

me

what

are

your

features

or

which

ones

were

the

ones

that

you

had

turned

on

in

off,

so

that

I

can.

If

I

go.

G

G

H

No

I

agree

I

think

that

I'm

more

thinking

about

where

we

may

handle

one

of

these

cases

in

a

different

way

that,

because,

if

there's

your

think

that

comes

back

to

a

couple

other

things

I

wanted

to

mention

too

it's

like.

Are

we

trying?

Are

you

trying

to

define

what

a

next-gen

firewall

is

in

this

document?

Are

you

trying

to

define

the

methodology

to

test

what

a

next-gen

firewall?

It

is

well.

G

H

Be

yeah,

so

that's

what

I

wanted

to

maybe

I'll

take

it

on

the

list

as

well

of

I,

was

definitely

interested.

So

I

read

the

draft

Oh

quite

a

bit

of

it.

You

know

how

do

we?

How

does

this

change

as

the

firewall

isn't

one

centralized

thing

and

it's

spread

out

on

the

hyper

visor,

not

just

within,

like

what

VMware

does,

but

in

the

public

cloud

as

like,

Azure

or

Google

get

better

at

doing

like

ids/ips

all

this

stuff

at

the

host

level

and

actually

covers

most

of

this

list

in

that

level

of

how?

H

K

H

I

also

think

that

there's

probably

some

more

definitions

we

can

put

in

this

draft

to

to

make

it

also

exhaustive

for

that.

It's

like

there's

I,

think

like

stateful,

is

often

misused

and

there's

a

bunch

of

other

terminology

that

they

actually

defined

and

that

2647

that

could

be

yeah

I

did

as

well

yeah

part

of

that

mechanic.

G

I

think

those

of

course

these

comments

would

be

very

welcome,

because

this

is

stuff

that

we

just

discussed

and

defined

in

an

etic

open

group

more

than

a

year

ago.

So

at

the

very

beginning,

and

since

then,

we've

mostly

worked

at

the

individual

test

methods.

So

maybe

some

of

it

is

benefits

from

a

good

review

and

expansion

thanks

thanks.

So

any

more

questions

on

this

slide.

Okay,

so

then

we

actually

went

into

proof-of-concept

testing

and

L

wrote

in

the

agenda

like

we're

going

to

have

actual

test

results.

G

I

have

to

probably

disappoint

you

I,

don't

come

up

with

individual

numbers

with

commercial

vendor

names

at

this

point,

but

we

ran

a

pretty

extensive

POC

testing

program

with

two

goals,

basically

make

sure

that

the

test

procedures

are

actually

producing,

correct

and

expected

results

accurately

and

second

goal

to

make

sure

that

this

is

all

comparable,

and

this

is

actually

quite

important.

Most

of

the

security

benchmarking

tests

that

exist

in

the

industry

are

proprietary

single

lab.

G

Two

things

are

actually

all

of

them,

so

it's

comparison

is

not

a

problem,

because

it's

only

one

lab

and

with

one

tool

winner

who

governs

this

program,

but

in

this

in

our

program

that

we

want

to

create

based

on

this

document

in

net

SEC

open,

we

want

to

have

multiple

labs.

We

want

to

have

multiple

tools

we

want

to

have.

You

know,

of

course,

many

vendors,

and

that

requires

that

all

of

the

methodologies

precise

enough

that

it

always

creates

the

same

results

independent,

which

left

runs

the

test

and

which

tool

they

use.

G

So

that

was

the

second

goal

to

make

sure

that

is

possible,

and

actually

that's

that

yields

quite

a

number

of

interesting

challenges.

So

we

started

with

this

in

October

it

was

a

NTC

and

il

running

these

tests.

They

were

aspiring

onyxia

involved

and

for

firewall

vendors,

which

I

will

not

name.

Some

of

them

also

did

test

there

only

ups,

and

we

have

initial

results

that

we

have.

We

are

discussing

under

NDA

in

the

net

SEC

open

group,

so

you're

welcome

to

join,

but

I

don't

want

to

pitch

it.

G

So

if

you

go

to

the

next

slide,

so

what

what

what

I

was

able

to

provide

here

is

the

results

of

a

test

or

analysis

of

the

results

of

the

tests

that

we

did

at

the

MTC

with

one

commercial

firewall

vendor

and

we

went

through

protests

according

to

the

draft

with

an

automated

test

tool

and

the

results

were

actually

40%

higher

than

the

vendor

had

published

in

the

datasheet,

and

that's

quite

interesting

because

this

is

one

of

the

vendors

who

I

said

earlier

like

they.

They

want

to

go

above

and

beyond.

G

They

want

to

be

industry

leader,

so

they

switch

on

everything

and

then

they

report

a

number.

But

of

course

that

puts

the

number

fairly

low

in

comparison

to

others

and

since

some

of

the

stuff

like

propriety

stuff

that

they

used

to

switch

on

to

create

their

own

datasheet

number

was

not

switched

on.

As

per

that

draft

standard,

our

numbers

were

40%

higher

than

the

vendor

datasheet.

G

So

the

next

topic

was

the

session

capacity,

and

the

session

capacity

is

less

of

an

issue

because

it's

a

fairly

static

number,

so

the

vendor

they.

She

didn't

provide

any

details

parameters,

but

the

results

were

identical

and

the

last

topic

were

the

connections

per

second,

and

in

this

case

the

vendor

actually

used

very

small

HTTP

transactions

with

one

byte

content,

which

are

unrealistic,

and

we

debated

this

in

the

group

for

a

long

time.

There

is

no

real

use

case

with

one

bite-sized

ATP

transactions.

G

Although

vendors

really

love

it

and

a

lot

of

them

fought

for

it

heavily

and

they

also

had

the

classification

of

the

applications

suppressed,

so

they

basically

said

for

connections

for

a

second.

We

want

to

be

as

fast

as

possible,

so

that

goes.

That

explains

maybe

a

little

bit

Jacob.

Why

why

I

am

a

little

resistant

to

switch

a

lot

of

switching

of

things,

because

if

you

switch

off

things,

then

you

get

very

large

numbers

so

in

in

our

POC

the

results

were

only

half

of

the

vendor

datasheet

numbers.

G

G

B

G

I

hope

so

so

further

findings.

There

were

some

false

positives,

so

we

actually

tested

CVS

like

we

test,

it's

a

vulnerability

attacks

and

these

are

actually

run

under

load

and

that's

actually

also

new,

so

any

other

public

tests

that

have

been

published

so

far.

Our

testing

vulnerabilities,

like

the

denial

of

service

attacks

or

whatever

in

a

functional

scenario.

So

basically

the

labs

typically

set

up

a

performance

test

and

they

create

numbers,

and

then

they

remove

the

performance

test.

They

use

an

idle

firewall

and

then

they

attack

it.

G

And,

of

course

the

file

sister

has

nothing

to

do

and

has

all

resources

to

analyze

the

attack

in

in

our

recipe

we're

actually

putting

background

traffic

in

parallel

to

these

attacks.

So

that's

more

challenging

and

we

also

use

the

NIST

database

of

vulnerabilities

with

certain

parameters

that

are

precisely

defined

in

the

document

to

create

a

selection

of

it

was

what

was

it

a

couple

of

hundred

potential

CVS

and

not

all

of

them

always

work

for

all

vendors

and

similarly

well,

let's

put

it

that

way.

G

So

we

needed

to

run

a

lot

of

manual

tests

for

troubleshooting

and

that's

another

reason

why

we

automated

things

so

with

any

new

methodology.

Of

course,

there

are

there's

a

lot

of

ramp

up

its

first

about

understanding.

You

know

each

vendor

is

used

to

doing

things

in

a

certain

way.

Now

they

need

to

do

things

in

a

different

way,

so

it's

also

of

finding

new

problems

with

new

methodology.

So

that's

why

automation

is

critical

and

we

had

also

some

latency

issues

and

especially

delay

variation

questions

here.

G

So

I

agree

with

your

point

about

latency

and

delay

variation

al.

We

just

need

to

find

out

like

what's

acceptable

and

what's

reasonable

for

each

use

case.

So

that's

basically

all

regarding

the

POC

test.

The

next

steps

will

be.

We

will

continue

to

review

this

draft,

which

we

consider

already

pretty

pretty

stable,

will

add

more

security,

effectiveness,

testing

details.

We

will

focus

on

traffic

profiles.

G

Currently,

we

have

one-and-a-half

traffic

profile,

we

have

one

which

is

fairly

detailed,

which

is

represents

an

enterprise

parameter

test,

but

we

of

course,

also

want

to

focus

service

by

their

mobile

operator

firewalls,

a

little

bit

of

that

is

mentioned

in

the

document,

but

not

too

much,

and

we

also

need

to

prepare

continue

preparing

an

open

certification

program

because

I

we

figured

there

are

always

two

different

levels

of

doing

things

right.

It's

the

same

as

with

the

good

old

RC

2544.

G

The

document

is,

has

a

lot

of

things

and

then

in

the

industry,

they're

established

practices

started.

That

say,

we

use

it

in

this

way

and

we

probably

need

to

have

two

stages,

because

we

cannot

guarantee

exact

represented

by

itself.

We

need

to

also

have

a

group

that

reviews

and

approves

these

results,

and

that's

this

certification

program

from

me

in

DC's

perspective.

We

also

want

to

elaborate

open

source

implementations

and

the

problem

is-

or

it's

not,

maybe

not

a

problem.

G

The

fact

is

that

for

any

domain,

specific

layer,

7

testing,

we

have

to

use

commercial

test

tools

at

this

point,

which

is

fine,

we're

getting

great

support

from

them

and

there

are

actually

not

only

the

HSU.

There

are

three

more

that

are

in

the

queue

of

joining

and

but

still

it

would

be

useful

to

do

some

testing

with

open

source

test

tools

and

what

these

configurations

are

quite

complex

and

for

us

at

least

overwhelming.

G

So

we

ask

for

support

and

help

from

some

groups,

for

example,

for

our

use

of

tier

X

or

other

open

source

testers.

So

if

there

are

any

open

source

groups

here

that

are

interested

in

participating

in

the

POC

testing,

that

would

be

very

much

welcome

and

we

would

work.

We

would

certainly

add

from

a

testing

perspective

and

probably

also

from

our

L

like

to

work

with

them.

So

any

questions.

M

Hi

Tim,

Chang

I

think

this.

All

this

work

is

is

really

good.

My

perspective

on

this

would

be

from

coming

from

a

national

research

network

operator

JISC,

and

we

work

with

a

number

of

universities

trying

to

help

them

move

large

volumes

of

research

data

around

well

I.

Think

what

I

see

with

this

draft

as

it

stands?

M

D

M

Because

of

all

the

background,

traffic

and

processing

that

and

that's

what

that's

a

fairly

well-known,

modern

firewall.

That's

suffering

in

that

way,

I

mean

one

view.

Is

you

mentioned

a

tack

on

the

file

and

in

one

wait

view

these

large

flows

are

kind

of

an

attack

on

the

capability

of

the

files

is

probably

also

bringing

down

its

capacity

to

do

the

business

processing.

So

that's

a

little

bit

of

a

little

bit

of

a

fluffy

words.

Well

I

would

like

to

know

is

whether

there's

scope

in

this

specification

to

allow

the

traffic

mix.

M

I've

looked

in

the

draft,

and

you

mentioned

certain

types

of

mix,

whether

the

traffic

mix

could

include

small

numbers

of

very

high

throughput

flows

and

part

of

the

performance

evaluation

is

the

impact

on

those

flows,

while

the

other

things

you

were

already

defined

are

happening

and

the

impact

of

the

larger

flows

may

be

on

the

business

traffic

as

well.

Yeah

yeah.

G

Absolutely

I

think

we

briefly

discussed

elephant

flows

if

I

remember

correctly,

yeah

Tim

is

nodding,

so

we

did

discuss

them

and

the

good

thing

is

that

this

document

is

modular.

So

we

don't

need

to

change

the

methodology.

We

only

need

to

change

the

profile

or

add

one.

So

we

could

say

we

have

an

enterprise

parameter

profile.

That's

that's

what

we've

been

working

on,

then

we

could

have

a

mobile

operator.

G

Let's

say

whatever

you

know,

file

a

profile

with

a

lot

of

T's

terminals,

sending

small

amounts

of

traffic,

and

then

we

could

have

a

that

say,

academic

area

profile,

and

maybe

if

someone

from

from

your

group

of

universities

could

help

us

to

contribute

it

could

help

contributing

it.

That

would

be

really

much.

M

Practice

at

the

moment,

universities

try

to

tend

to

now

engineer

their

networks

of

the

research

traffic

doesn't

go

through

the

business

firewall.

They

know

they

apply

security

policy

to

it

in

a

sorry,

say,

Lina

way

the

sort

of

security

of

a

social

science

dmz

type

of

approach

you

may

have

come

across

so,

but

it

would

be

much

more

interesting

to

try

and

encourage

the

performance

of

these

firewalls

and

whatever

their

internal

architectures

are

to

not

be

hampered

by

these

types

of

larger

flows

and

process.

G

M

G

M

G

M

Sort

of

aggregate

degradation

I

was

talking

about

was

for

a

sub

ten

millisecond

RT

tiers

between

two

sites,

not

far

apart

in

the

UK

right.

Obviously,

when

you're

sharing

research

traffic

with

the

states

or

something

you're

going,

seventeen

hundred

milliseconds,

then

the

the

impact

obviously

will

be

greater.

How

you

might

simulate

it'll.

Do

that?

There's

an

interesting

question

but

I

think

if

you're

trying

to

get

practical

results

for

university

wants

to

buy

a

file

and

no

as

research

traffic

is

going

to

performance

through

it.

B

B

N

D

N

But

it's

an

interesting

work

thanks

very

much

for

driving

it

home

and

on

the

open

source.

Benchmarking

tool

point

you

called

out

t-rex,

so

I

would

be

very

much

interested

to

to

collaborate

to

see

to

what

degree

t-rex

can

apply

here,

we're

using

t-rex

extensively

in

in

our

project,

and

it's

got

a

stateful

capabilities

with

API

is

now

enabled

so

I'll.

Take

you

on

the

on

flowing

up

on

that

excellent

and

second

question.

What

I

haven't

seen

from

what

you

present

it

and

also

from

the

draft?

N

G

Internally,

at

NTC,

we've

run

this

methodology

with

three

virtualized

firewalls

and

it

works

as

well.

I

mean

there's

shouldn't

really

be

a

difference

from

the

black

box

perspective

because

from

the

application

layer

the

goals

are

the

same.

You

know

if

a

customer

wants

to

have

a

firewall

function

for

a

certain

application

use

case

scenario:

they

don't

really.

They

shouldn't

really

see

any

difference,

whether

it's

an

appliance

or

a

virtualized

solution

in

the

traffic

streams

are

the

same.

The

expected

performance

is

the

same.

So

maybe

is

Jacob

has

said

you

know

if

you

really

split.

G

N

Excellent,

so

you

actually

guessed

my

second

point,

which

is

we're

spending

quite

some

time.

Looking

at

the

distributed

network

functions,

these

need

to

be

in

the

cloud

space

with

container

networking

function

and

that,

thank

you.

The

question

asked

earlier

towards

the

degree

and

the

methodology

may

change

and

because

the

the

way

that

the

composite

device

is

built

is

different

and

I

know

that

you

want

to

also

capture

that

in

the

in

the

draft,

where

the

actual

functions

that

you

are

testing

or

this

with

across

multiple

deities

formulas

of

the

cloud

of

of

the

UTS.

G

Right

so

yeah

any

contribution

is

welcome

either

just

here

via

the

mailing

list

or

if

you

want

to

be

more

in-depth

involved.

You

know

in

netic

open

there

are

currently

quite

a

number

of

firewall

vendors

participating,

but

none

that

already

just

focused

on

cloud

firewalls.

So,

but

because

there

this

in

scope,

we

consider

ssandsk.

Okay,

thank

you.

So.

C

There

are

two

comments,

one

from

actually

both

from

Bala.

The

first

is

that,

in

the

future,

net

sec

open

will

also

create

multiple

traffic

profiles

and

Brian

agrees.

The

second

is

for

virtual

test

reporting

may

be

different,

and

we

need

to

specify

the

number

of

V

CPUs

the

amount

of

memory,

etc,

etc.

After

yes,

thank.

N

You

that

would

actually

that

think

that

I

for

long

is

the

resource

utilization,

which

is

one

of

the

critical

things

in

the

nav

space.

You

measure

performance,

but

you

know

there's

a

difference

that

there

are

two

cores

used

or

five-course

and

and

and

other

and

other

resources.

So

it's

not,

as

with

it

will

have

to

capture.

G

B

D

B

The

process

that

goes

beyond

that

which

is

ITF

last

call