
From YouTube: IETF104-TCPM-20190325-1350

Description

TCPM meeting session at IETF104

2019/03/25 1350

https://datatracker.ietf.org/meeting/104/proceedings/

A

Okay, I'd like to welcome all of you to the TCPM session here at this IETF meeting. First of all, for those of you who don't know me, my name is Michael Scharf, and next to me is Michael Tüxen. I'd like to start today's session with the usual Note Well. This is not the first session today, so I assume that all of you know that this is an IETF working group, so everything that's done here is covered by the Note Well.

A

A

A

Okay, we have one. Okay, thanks a lot. Hopefully you use the Etherpad; I will also, as far as I can, pay attention to the Etherpad. Maybe you can do this in a collaborative style, but anyway, we do need note-taking. I will monitor Meetecho myself, but of course, if something shows up there, we would appreciate it if somebody else can also relay that. Do we have another volunteer as Jabber scribe?

A

Here is the agenda; this is what has been sent out before the meeting. Our proposal is first, of course, to do the usual status update. Then we have two presentations for working group items: first Olivier on the converter draft, and then Mirja and Bob on AccECN and ECN++. And then we have today quite a couple of other presentations that are not about working group items.

A

Okay, that's not the case, so I will move forward with our usual status update. First of all, we have one recent RFC: it's the alternative backoff. It's only one recent RFC, but we have a couple of documents that are getting closer to completion in the working group, so hopefully in the next meetings this list will have a couple more entries.

A

Then I'd like to briefly go through all working group documents. For the first three ones we will have presentations here, so I will not say too much about them. We will see a presentation on the converter draft, which has been recently updated. We will have status updates on Accurate ECN and, briefly, on generalized ECN, or ECN++, so I will also postpone the discussion on that one to the actual presentations.

A

A

A

In any case, having expired working group documents is not a good state for such a document, so hopefully we get a resubmission of this document. Definitely we have to have a discussion on whether that document is in a stable state and whether it can move forward, but the first thing for that to happen is that we need an active document.

A

The second document, EDO, has not changed its status recently, so I assume there is still an ongoing testing effort on, basically, the question of whether that scheme can be implemented and what the potential issues in this implementation are. And then, last but not least, we have the 793bis document. This is a document that has recently been updated, and I've put here on the slide a comment from Wes, who believes that the document is getting into pretty decent shape.

A

We will not discuss this document in this meeting, but we, the chairs, are discussing with the editor what to do, possibly in one of the next meetings, as the document is moving forward. I'd just like to point out that in his last email to the mailing list, Wes has flagged a couple of sections in the most recent version of the document, and he is looking for feedback on those sections. So please have a look at those questions.

A

We have recently performed the working group adoption call on the 2140bis document, and the working group adoption call ran until Friday last week. We didn't get a lot of feedback, but there has also been no pushback on working group adoption. So the understanding of the chairs is that there is consensus in the working group to adopt this document.

A

Okay, I see no comments, so the consensus is to adopt it as a TCPM working group item. And then this is my last slide. This is just a heads-up on a document that is not in TCPM, but we have repeatedly presented the document here in TCPM because it's quite related to our work here.

A

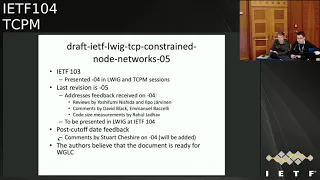

It's the document on constrained-node networks. This document was presented in detail at the last meeting, and this triggered quite a few excellent reviews after the last presentation. Specifically, I'd like to highlight that we got a pretty good list of feedback comments. We are working on addressing these comments. There will be some technical changes in the document to address all the comments that have been made, and I assume the main author will send a summary of the changes to the list, but other than that the feedback in general seems positive.

A

So the authors believe that this document is getting pretty close to working group last call, and that's why I ask the working group to carefully review the most recent version. It's not a TCPM document, but it has been agreed in the past that there will be a joint working group last call in the LWIG working group and TCPM. So you will see traffic on this on the mailing list. And that was actually the last slide in the chairs' part.

B

B

...transport between the client and the converter. The motivation for this work is to allow a TCP connection to be established through a proxy so that you can learn whether a remote server supports a given TCP option or not. The main use case is Multipath TCP, so that you can get Multipath TCP on the access network through the proxy and then learn whether the final destination supports MPTCP or not. Next slide. Let me just show you how it works. Next slide.

B

So the client sends a SYN to the converter to connect to a specific server, and the converter message is encoded as a TLV in the SYN payload. The converter will send a SYN to the server. The server will reply (next slide), and then the converter can confirm (next slide) the establishment of the connection by using TLV information in the payload to confirm that the connection to the remote server has been established. Next slide.
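
The idea of carrying a connect request as a TLV inside the SYN payload can be sketched roughly as follows. This is only an illustration: the 1-byte type / 2-byte length layout, the `CONNECT` type value, and the field order are assumptions made for the example, not the wire format of the converter draft.

```python
import ipaddress
import struct

CONNECT = 0x0A  # hypothetical type code, chosen for illustration only


def encode_tlv(tlv_type: int, value: bytes) -> bytes:
    """Pack one TLV: 1-byte type, 2-byte big-endian length, then the value."""
    if not 0 <= tlv_type <= 0xFF:
        raise ValueError("type must fit in one byte")
    if len(value) > 0xFFFF:
        raise ValueError("value too long for a 2-byte length")
    return struct.pack("!BH", tlv_type, len(value)) + value


def decode_tlvs(payload: bytes) -> list:
    """Split a payload into a list of (type, value) pairs."""
    out = []
    offset = 0
    while offset < len(payload):
        if offset + 3 > len(payload):
            raise ValueError("truncated TLV header")
        tlv_type, length = struct.unpack_from("!BH", payload, offset)
        offset += 3
        if offset + length > len(payload):
            raise ValueError("truncated TLV value")
        out.append((tlv_type, payload[offset:offset + length]))
        offset += length
    return out


def build_connect(server_ip: str, server_port: int) -> bytes:
    """Build a hypothetical connect TLV naming the final server."""
    addr = ipaddress.ip_address(server_ip).packed
    return encode_tlv(CONNECT, struct.pack("!H", server_port) + addr)
```

A client would place the bytes returned by `build_connect` in the SYN payload, and the converter would run `decode_tlvs` on what it receives.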

B

At this stage we have a transparent byte stream through the converter between the client and the server in both directions. Next slide. So what are the main changes since the last presentation of this work? We got feedback from different implementers, who asked us to tweak the protocol a bit.

B

In the previous version we relied on TFO, but we know that TFO has issues in some deployment use cases. What we did is that, instead of using the TFO option to encode a cookie to secure the connection between the client and the server, we moved the cookie to user space by using a specific TLV. So the same functionality is there, but it's not using TCP options anymore. Next slide. So let me show you how the cookie works. Next. The client sends the SYN with the Connect message to the converter.

B

There is no cookie inside the SYN, so what the converter will do is compute a cookie. A way to compute a cookie could be like we compute the cookies in TFO, so by using a hash of the IP source address. Then you can return the cookie in the SYN+ACK with an error message that indicates the cookie that the client should use. Next slide. So we can restart the connection again. In the SYN we encode the cookie that was returned by the converter.
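
A cookie derived from the client's source address, as described above, could look roughly like this. The sketch swaps the plain hash for a keyed HMAC so that clients cannot compute cookies themselves; the secret, the 16-byte truncation, and the function names are assumptions for illustration, not taken from the draft.

```python
import hashlib
import hmac
import ipaddress
import os

# Per-converter secret; rotating it would expire all outstanding cookies.
SECRET = os.urandom(32)


def make_cookie(client_ip: str, secret: bytes = SECRET) -> bytes:
    """Derive a cookie from the client's source IP address."""
    packed = ipaddress.ip_address(client_ip).packed
    return hmac.new(secret, packed, hashlib.sha256).digest()[:16]


def check_cookie(client_ip: str, cookie: bytes, secret: bytes = SECRET) -> bool:
    """Recompute the cookie for this source address and compare in constant time."""
    return hmac.compare_digest(make_cookie(client_ip, secret), cookie)
```

Because the converter only recomputes the value, it keeps no per-client state between the first SYN and the retry.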

B

Now the converter can check the cookie by doing a hash computation again, for example, but there are other possibilities. Then, if the cookie is valid, it can send the SYN to the server. Next slide. We get the response from the server, and (next slide) you get the SYN+ACK and the ACK, and the connection is established as before.

A

next.

C

B

Then there are also use cases where the client would send an invalid cookie. Next slide. So you send the SYN with an invalid cookie, and this can be checked by the converter, and the converter can return an error message saying that this cookie was not authorized because, for example, the cookie has expired on the converter and the converter wants the client to get a new cookie.

B

The client owns the IP address from which the SYN is received, so it's exactly the same as what we do in TFO by using TCP options, except that you do that inside the payload. So we can have cookies that are much larger than the ones that we use in the TFO option. The main use case for this converter draft is to support Multipath TCP in access networks.

B

B

We will see what happens when the server supports Multipath TCP. Next slide. So again we send a SYN. Next slide. We send it to the server. The server (next slide) replies with the MP_CAPABLE option, and then the converter will return, in the extended TCP header TLV, the MP_CAPABLE option that was returned by the server. So the client now knows that, to reach this specific server, it can use Multipath TCP for the next TCP connections.

B

It can send a SYN directly to the final server without using the proxy. So that's a way to be able to use MPTCP on the access network through the converter when the server does not support Multipath TCP, and when the server supports Multipath TCP, you can learn it and use a direct connection to the server. Next slide.
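
The client-side decision described here (go through the converter until a server is known to support Multipath TCP, then connect directly) might be sketched like this. The class and method names are hypothetical, and a real client would also need to expire what it has learned.

```python
class ConverterPolicy:
    """Remember which servers answered with MP_CAPABLE via the converter."""

    def __init__(self, converter: str):
        self.converter = converter
        self._direct_ok = set()

    def record(self, server: str, server_sent_mp_capable: bool) -> None:
        """Store what the converter reported about the server's SYN+ACK."""
        if server_sent_mp_capable:
            self._direct_ok.add(server)
        else:
            self._direct_ok.discard(server)

    def next_hop(self, server: str) -> str:
        """Connect directly if the server is known to support MPTCP, else use the converter."""
        return server if server in self._direct_ok else self.converter
```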

So, to summarize what happened: we believe that we now have a simplified design which takes into account all the feedback we received from implementers and on the mailing list.

B

There is ongoing work in different standardization bodies about using this Convert protocol, and it's being adopted by two standardization bodies. One is the Broadband Forum, which uses it as one of the solutions for hybrid access networks based on Multipath TCP. This is WT-378, which, after approval, is now being finalized. It's also used by 3GPP for the ATSSS service, which will allow combining 5G and Wi-Fi, but also other types of networks. This is part of the work in 23.701.

B

D

Praveen, Microsoft. I'm very curious why you decided not to use TCP Fast Open, because Fast Open does have an option where the cookie size can be zero. If the server doesn't care about enforcing security, the cookie size can be zero, and the advantage of using Fast Open is that most operating systems, at least on the client side, would already support this and handle it for you. Otherwise you're looking at using raw sockets or something to do so.

B

So we have a specific protocol, and we want to be able to confirm that the SYN comes from a client that we know can receive the SYN+ACK. For that we need to have a cookie, and there are two ways to encode the cookie. One is to encode it in the TCP header as a TCP option, or we can encode it in the payload.

B

D

E

Just one addition to the comment about the support of the zero cookie for TFO. We used to have that in a previous version of the specification, since version 4, but we had discussions with other implementers and vendors, and they say that, at least for their user equipment, it's really difficult to have TFO supported directly as an option. This is why we started with Olivier to rework the design and to provide the same functionality in a TLV instead of TFO. So we used to have that.

E

D

I'd be very curious what kind of middlebox issues you see when you include data in the SYN without the TFO option, because you're going to see two patterns of traffic on the network now: one has the TFO option and the data, and one where there is no option but there's going to be data. So it just seems to me that TFO is the cleaner approach, but yeah.

B

Yeah, we have not yet tested that in real networks with middleboxes, but I think the deployment use cases that I mentioned on the last slide are use cases where the network operator will control the path, and so we know that there is no middlebox that will mess things up. And whether we have a middlebox that messes up with having TCP data in the payload or with having a TFO option, I think it's the same problem, and I don't see a difference.

F

Yeah, so that part I agree with: I think there are already problems with middleboxes even if you have the option. But I think having the option is the better design approach here, if you're talking about an application protocol, because otherwise it changes the semantics of TCP as a transport. But I also need to look at the draft in detail, because I don't think you rule it out completely, so it depends strongly on the exact phrasing in the draft what makes sense.

B

So TFO was inserted in TCP to support web applications and to have a way to support sending data in the SYN for applications that cannot encode the cookie information in the application itself. We are here in a different situation: we have an application that can support the cookie inside the application itself, and I think it's cleaner to put the cookie information inside the application.

F

B

B

G

Christoph Paasch, Apple. I want to address the comment about the middlebox issues when using SYN data without the TFO option, independent from the converter. We have done experiments, and it's not much better, but it's better than with the option. So you can use SYN with data and have roughly a 10% higher success rate.

D

G

A

So this is Michael, speaking as chair. We have here on this slide a very unusual thing: other SDOs directly reference our work. So my question to the author is: can you, or maybe other people in the room, share more details on how this spec is used by the Broadband Forum or 3GPP? And the specific question that I'd like to raise is whether there is an issue that this is experimental work, because we made a call some time ago that this is an experimental protocol.

A

E

So, for information, on the first question that you asked, about the current status in the 3GPP specifications: so far, the converter specification is the only specification which is used for the aggregation there, so they are relying exclusively on the solution we are developing here in this working group for their work, and they have, I would say, some time constraints in finalizing their specification. So they are waiting for us to finalize the RFC here.

E

So if we can finalize it by May this year, this will really be great for their work, because there is, I would say, only an editor's note there saying that they need the RFC to be published, with a number, so that they can refer to it. This is for your first question. For the second one, experimental versus standards track: just for the record, when we started this work, we had done that in the MPTCP working group, and at that time the MPTCP

E

specification was experimental. So we left that header at the experimental level there. For us, we are open here: if there is no extra delay in the development of the specification, I would personally be in favor of considering this work as a candidate for standards track. But if this will induce, I would say, additional discussions and a longer time to have the discussion finalized, we can go with experimental and then see the feedback from the field and the deployment.

E

F

A

So this is about between 5 and 10. My understanding of the feedback is that we do need an update of the document, but other than that, we are hearing that this document is moving forward and there seems to be both an ongoing implementation effort as well as use in other SDOs. So that's a good sign. From a chair perspective, we would head for working group last call relatively soon.

A

B

A

F

Hello, I'm Mirja. My co-authors are Bob and Richard, who are here as well today. This is a quick update, and I hope we're done with this document. So first, a quick recap: what was the problem that we're solving here? The problem is that ECN has been around for a while, and there is feedback from the receiver to the sender to tell whether there were any markings seen on the path, and then the sender is required to, or should be, reacting to it with some kind of congestion reaction.

F

To provide this feedback, there are already three bits in the TCP header, but the feedback itself is limited, providing just one signal per round-trip time. So with the classic ECN approach you don't know how many markings there have been: has it been severe congestion or just very light congestion? If you have this information, however, you can adapt your congestion control to it and make it more reactive. Next slide. So, what is AccECN?
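
The difference between one signal per round-trip time and a count of markings can be illustrated with a small sketch. It assumes, hypothetically, that the receiver echoes a small wrapping counter of marked packets; the `bits=3` default mirrors the three header bits mentioned above, but the exact encoding and the function names are assumptions for this example.

```python
def counter_delta(prev: int, curr: int, bits: int = 3) -> int:
    """Number of new marks between two readings of a counter that wraps at 2**bits."""
    return (curr - prev) % (1 << bits)


def marked_fraction(prev: int, curr: int, acked_packets: int, bits: int = 3) -> float:
    """Estimate the share of newly acknowledged packets that carried a congestion mark."""
    if acked_packets <= 0:
        return 0.0
    marks = counter_delta(prev, curr, bits)
    # Cap at 1.0 in case the ACK covers fewer packets than the counter advanced.
    return min(marks, acked_packets) / acked_packets
```

A sender that knows the fraction can scale its reaction to the extent of congestion instead of treating every marked round trip the same way. Note that a counter this small is ambiguous if it advances by a full wrap between two readings.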

F

Last time, I think, the main question was: what if this experiment fails? We would then have burned all the bits in the header for the negotiation. So we make sure that all unused combinations still lead to some kind of Accurate ECN negotiation, so we have a way open to further extend and update the Accurate ECN specification in the future. Do you want to interrupt? No.

A

Michael, speaking from the floor. We have discussed this question in the IETF quite a bit. To me, what is presented here for forward compatibility makes a lot of sense if you assume that Accurate ECN is the right thing to do forever, but it's experimental. So we don't know what the experiment's outcome will be.

A

The last sentence, that we make the behavior of Accurate ECN predictable for future protocols: that assumes, to me, that the future protocol is Accurate ECN. As an individual contributor, I personally could foresee a world where the AccECN experiment fails and we will do something else, and then, to me, this specific wording doesn't help at all. I don't mind at the end of the day; as I said, to me this is for an experiment.

I

Bob Briscoe. Yeah, that last sentence, which I wrote: obviously I didn't write it in a way that Michael would find unambiguous, because I meant to say "for future ECN feedback protocols". In other words, if this fails, what do existing Accurate ECN servers do? They've got,

I

you know, one of three choices: do they do not-ECN, RFC 3168 ECN, or what they've been coded to do, which is Accurate ECN? When a client talks AccECN, it only does so to an Accurate ECN server; this isn't about any other server. It's what an AccECN server does if it gets a field, a value, that currently doesn't exist. That's all this question is asking.

J

Gorry Fairhurst, as TSVWG co-chair. This document is kind of related to the L4S work; it is an experiment and L4S is an experiment, so I'm just calling out that there are now two experiments which have some sort of dependency on each other, and maybe it is necessary to have some form of ECN feedback for L4S to work. So I understand this; I'm just asking for a comfort level amongst the editors, and maybe most of the people, in saying that there are two experiments here.

F

F

Okay. In the recent version, we also made an update based on a discussion from last time, which was triggered mainly by Praveen. We tried to clarify that the option is really optional, and that also means that, depending on your use case, you might have benefits in not even implementing it, because that can optimize your code. So we did change the wording a little bit to hopefully make this more clear.

F

This is the wording, or check the draft. Next slide. The other discussion was triggered by Yuchung, who was favoring the DCTCP-style feedback over the feedback we are providing right now. We had a lot of discussion about it, and part of the problem is that the design we provide right now is probably more optimized for an Internet scenario where you expect a low number of congestion markings.

F

In a data center scenario, by contrast, you might have a high number of congestion markings, and therefore in some cases the DCTCP feedback might be more optimal. However, the optimization really depends on the offloading you're implementing, and there is a way to also optimize the offloading for AccECN, so we talk a little bit more about this in the draft. But more importantly, there was also work at the hackathon.

F

There were people working on the Accurate ECN patch, trying to get this into the kernel and, at the same time, also working on offloading. The problems we see with offloading, we believe, are mostly historical. We don't even know how this got in there, and so they're trying to fix that and provide patches for that as well. And that's kind of related to what I already said: Olivier Tilmans is working based on my original prototype,

F

proof-of-concept implementation. He's basically implementing it completely, which is great, and he's bringing this into the kernel. The first step will actually bring it into the kernel without the option, to really minimize the changes we have to do in the kernel, and then later on provide another patch to have the option part as a compile option, if you want to use it. So that's ongoing. I don't think the patch or the request for comments has landed yet, but it should be this week, probably.

F

A

Okay, any further comments? First of all, as we've seen here at the mic line, specifically last time, quite a few of the comments are actually about implementation details such as GRO. So that's why I wonder, specifically for the implementers in this room: do we have any further comments on the most recent version of the protocol, or do you feel comfortable moving this forward?

D

I

A

A

A

And specifically, among those who have read the document, is there anybody who has concerns about starting a working group last call relatively soon, I'd say after we've seen the next update addressing the minor remaining things? This would be an excellent opportunity to speak up. Okay, so for the note-taker: we have no concerns regarding starting a working group last call.

A

So, regarding the next step: first of all, I think we are waiting for an update, even if it's small editorial things, so that's definitely something that should be done, and then the chairs will check whether we can start working group last call. We can do this on the list anyway in that case, so that doesn't have to wait for the next meeting.

A

So now I'm speaking from the presenter line, and I'd like to present a new document that has not been presented in TCPM before. It basically raises the question of whether we have to start working on a YANG model for TCP. Next slide, please. The background of this work is mostly TCP implementations on embedded devices. That is something that in TCPM we actually hardly discuss, because we focus a lot on the TCP stacks that run on hosts.

A

That is something that has triggered a lot of work in the IETF outside the transport area, but there's one specific issue that now possibly matters to this working group, namely the fact that we do have a TCP MIB. It's standardized in RFC 4022, and I tried to figure out who has implemented it. I've found data sheets of at least three different devices from different vendors claiming that they have an implementation of that MIB.

A

Now the IETF has decided to deprecate MIBs, more or less, or at least to move to NETCONF as a superior technology, and that raises the question of what happens with the management of TCP stacks on devices that are at the moment managed via the MIB, as the management moves to NETCONF and YANG. There is one candidate solution for devices that have used the MIB: there is a way to translate a MIB to a YANG module.

A

So there's an algorithm to do that, and in fact I have found signs that vendors might even do that. But of course, that is a translation of a pretty old MIB; if you check the details of that MIB, you will see that it's actually a very old RFC. That brings up the question of whether this is indeed the right thing to do, and that's specifically the question I'd like to raise here. I want to put up a disclaimer right from the beginning.

A

This talk is really about devices that are managed by network management protocols such as NETCONF or RESTCONF. I'm not necessarily speaking about an end-host operating system here, because there, you know very well that there are other means to configure a TCP stack. So I'm talking here about devices that are natively managed by YANG modules. In order to analyze in a little bit more detail what we have right now, I've put on the next slide

A

the content of the TCP MIB, but I decided not to use the MIB itself and instead to convert it to a YANG module. I'm not saying that this is a reasonable thing to do, but it at least gives an overview of what the TCP MIB originally standardized, and it also provides a sketch of what a YANG model could hypothetically look like if we decided to standardize a YANG module for TCP. You can see the auto-generated YANG model here or, more specifically, the tree version of it. There's no editing here.

A

It's a fully automatic conversion of the TCP MIB, and if you're a little bit familiar with the tree structure of YANG modules, you can see here the key pieces that we have in this YANG module. The first important point to note is that most information in the TCP MIB is read-only, so it's actually mostly a MIB for monitoring. There is hardly any configuration in that MIB, except for one detail, as you can see if you go into another part of this slide.

A

It contains more or less four different sorts of entries. First, there is a little bit of information about the TCP configuration, but it's limited to the configuration of the retransmission timeout, most notably the minimum timeout, and if you look in more detail at what's actually in the MIB, it's pretty outdated; there are pretty outdated references to TCP specs back there.

A

Second, the MIB includes a number of stat counters, for example on connection statistics, and then there are two tables: there's a connection table that basically lists the active connections of that device, and there's a TCP listener table. That's basically what the MIB is all about. So we see it's a pretty simple MIB after all, and that is probably also one of the reasons why it might have been implemented, since it's a relatively simple model. That obviously brings us to the question on the next slide.

A

So

is

this

something

that

we

have

to

care

about

and

I

have

started

this

document,

mostly

as

a

placeholder

I

mean

I

will

not

argue

that

this

is

something

that

can

be

adopted

at

that

point

in

time.

But

still

if

we

look

at

what

happens

in

other

working

groups,

how

much

effort

is

spent

on

designing

YANG

modules?

So

there

are

to

me

there

is

a

question

in

the

room

whether

we

need

something

in

that

space.

A

C

A

We

have

the

wording

in

there

that

talks

about

interfaces

that

maintain

TCP's

utility.

This

is

the

network

management

interface,

so

I

believe.

If

we

decided

to

do

something

in

the

longer

run,

it

would

be

in

the

Charter

and

second

other

IETF

work

in

that

space

is

to

be

standards

track,

which

is.

We

have

a

very

pretty

high

bar

here

in

TCPM

for

standards

track

and

with

that

I

think

we

have

a

question.

K

Mikael

Abrahamsson,

it's

less

of

a

question

and

more

of

a

statement.

Actually,

so

we

are

using

NETCONF/YANG

to

manage

everything,

we're

trying

to

move

in

that

direction

whether

it's

administrating

servers,

home,

routers,

core

routers,

whatever.

Most

of

these

things

actually

speak

TCP.

I

would

like

a

comprehensive

YANG

model,

so

please

do

not

just

auto-translate

whatever

we

had

lying

in

the

cupboard,

but

a

comprehensive

work

on

typical

settings

that

is

available

on

devices.

Okay.

A

That's

a

fair

point:

I

mean

one

of

the

things

that

I

could

offer

is

that

I

would

try

to

reach

out

to

vendors

in

that

space,

because

this

is

something

obviously

where

we

need

input

from

vendors

on

what

this

set

of

typical

things

is,

of

course,

we

know,

and

there

are

a

couple

of

parameters

that

are

pretty

common

for

TCP

configuration

that

are

probably

uniform,

but

obviously

that

is

something

where

typically

vendor

input

would

be

highly

appreciated.

K

L

Lars

Eggert,

so

I

remember

when

we

did

the

MIBs,

right,

because

that

was

the

cool

thing

that

everybody

needed

to

manage

their

networks

and

it

was

super

painful

because

nobody

actually

cared

and

ESTATS

was

even

more

painful

than

the

basic

MIB,

right.

And

so,

unless

there's

like

a

line

of

operators

outside

the

room

that

scream

for

this

I

would

not

want

to

do

this.

Simply

because

it's

gonna

be

very,

very

painful

and

very

very

hard,

and

you

know

yeah.

You

can.

L

L

E

So

I

think

that

we

can

find

a

balance.

This

work

I

think

that's

something

which

is

really

interesting.

That

can

be

useful.

It

is

useful

because

if

we

see

some

simple

existing

models,

we

will

see

that

they

are

touching

on

TCP

stuff.

Take,

for

example,

the

BGP

ongoing

YANG

model.

You

will

see

that

they

are

defining

some

generic

TCP

parameters

there.

E

So

what

personally

I

would

like

to

see

if

you

have

something

here,

anticipate

that

this

are

I

would

say

our

first

level

bar

in

terms

of

the

generic

parameters

that

we

we

can

use

to

tune,

the

TCP

stack

for

instance.

It

misses

this

kind

of

stuff

and

so

on.

So

these

modules

can

reuse

what

you

you

can

define

here.

A

generic

I

would

say,

parameters

for

configure

on

TCP

M,

so

I

provided

the

example

of

of

BGP.

E

YANG

model,

but

you

can

can

find

other

models

in

which

they

are

touching

exactly

on

the

same

stuff.

So,

instead

of

having

something

which

is

spread

among

a

list

of

YANG

models,

I

prefer

to

have

something

which

is

ready.

I

would

say

the

reference

not

have

everything.

As

mentioned

by

thickness

this

one,

it

will

be

really

painful

to

have

something

which

is

already

comprehensive

from

day

one.

Just

start

with

something

which

is

really

I

would

say,

balanced

and

then

take

the

work

from

there.

I

support

this

work.

K

Mikael

Abrahamsson

again,

of

course,

I'll

take

less

if

I

actually

get

anything.

It

is

not

an

all-or-nothing

proposition

and

I

know.

There

are

a

few

people

who

like

or

the

best

thing

that

they

know

is

to

create

the

YANG

model

for

management.

This

has

never

been

a

sexy

thing.

Very

few

people

enjoy

it.

It's

painful.

Okay

I'll

give

you

that,

but

there

must

be

a

bunch

of

like

basic

functionality.

that

most

TCP

stacks

share.

I've

turned

on

SYN

cookies

in

a

bunch

of

different

operating

systems,

so

I

mean

there.

K

There

must

be,

at

least

like

I,

don't

know,

30

to

50

parameters.

That

is

very

common,

so

we

can

start

there.

That's

a

perfect

first

delivery,

just

the

knobs

that

are

typically

there

in

most

operating

systems,

and

we

can

augment

later.

It

doesn't

have

to

be

perfect

from

day

one

and

it's

better

to

deliver

something

in

six

months

and

then

another

thing

in

a

year

more

enhancements

in

a

year

just

keep

the

pipeline

going

than

to

get

a

prayer

to

sit

on

it

for

four

years

until

we

think

it's

perfect.

So.

A

Just

to

be

clear,

I

don't

believe

that

we

can

ever

come

up

with

a

very

comprehensive

model.

I'm

so

because

I

mean

TCP

stacks

do

differ.

What

is

possibly

doable

is

to

do

the

basic

knobs

that

directly

map

to

specs.

So

a

couple

of

things

where

we

have

an

RFC

that

basically

specifies

that

there

is

something

that's

optional

that

can

be

turned

on

and

off,

and

that

is

something.

A

L

Logically,

I

want

a

pony,

so

this

is

this

is

gonna

be

the

same

thing

we

did

with

the

MIB

model

right.

It's

gonna

get

super

complicated

very

quickly,

even

with

SYN

cookies,

but

even

like

something

very

simple:

it

changes

from

kernel

version

to

kernel

version,

potentially

right.

It

changes

from

operating

system

to

operating

system

multiply

this

with

all

the

different

options

and

parameters.

We

have

that

this

is

and

and

given

that

we

don't

have

one

now

and

we

don't

seem

to

have

huge

operational

problems.

A

M

So

I'm,

looking

at

this

at

the

moment

from

the

TAPS

perspective

and

we

will

soon

have

for

TAPS

to

define

some

way

to

set

TCP

specific

options

and

I

think

having

a

YANG

model

to

import

there

and

just

say:

okay,

this

is

the

list

of

properties.

We

can

import

into

TAPS

and

say

these

are

the

TCP-specific

options

would

be

really

really

cool

so

but

I'm

only

seeing

this

from

a

consumer

of

that

model

and

not

as

the

one

who

was

doing

the

hard

work.

Sorry.

H

M

It

is

connection-specific,

but

I

think

going

that

through

that

direction

could

also

be

interesting

to

see

which

of

the

settings

of

this

YANG

model

can

be

applied

on

a

per-connection

basis

and

which

can

only

be

set

as

a

system

default.

So

I

think

these

are

two

different

kinds

of

settings

on

different

branches

of

a

tree

which

might

make

sense

to

say.

Okay,

these

are

connection

specific

settings,

and

these

are

settings

that

are

globally

for

the

system.

H

A

Okay,

so,

as

I

said

before,

I

mean

at

the

moment

this

is

a

placeholder,

so

we

don't

have

to

come

to

a

conclusion

in

this

meeting.

So

technically

I

think

we

can

decide

to

do

it.

These

four

things

here

in

this

working

group.

The

first

thing

is

what

Lars

has

just

suggested

to

do

nothing,

the

other

two

options.

A

B

A

A

Know

that

my

proposal

is

I

would

want

you

to

have

a

second

talk

on

that,

maybe

in

the

next

IETF

meeting,

with

some

additional

insight

on

my

side.

So

that

would

be

my

offer

to

this

working

group.

But

if

the

working

group

believes

that

you

should

stop

this

activity

just

right

now,

then

I

will

also

be

fine.

So

that

is

if

you

in

particular,

if

you

believe

that

I

should

not

invest

any

further

cycles

on

that.

So

it

would

be

perfect

to

say

that

now.

H

So

I

think

it's

still

an

individual

contribution,

so

you

can

do

whatever

you

want

all

right,

but

I

got,

but

the

feedback

I

got

or

the

impression

I

got

is.

If

you

can

reach

out

to

some

operators

and

figure

out

if

there

is

a

need

for

using

this

stuff,

then

it

might

help

us

to

address

the

concerns

raised

by

Lars.

I

mean

we

had

positive

and

negative.

If

we

can

figure

out

if

we

will

have

consumers

of

this

work,

then

you

might

make

a

positive

decision.

I

A

I

H

I

I

If

you

set

ECT

on

the

SYN,

since

work

out

the

solution

written

the

patch,

it's

gone

into,

the

Linux

mainline

pipeline,

Bentham

feed

bang

come

out

and

gone

back

in

again,

but

basically,

we've

worked

our

way

so

that

when

you

set

ECT

on

the

SYN,

if

you're

using

Accurate

ECN

or