
From YouTube: IETF104-IEPG-20190324-1000

Description

IEPG meeting session at IETF104

2019/03/24 1000

https://datatracker.ietf.org/meeting/104/proceedings/

A

A

A

A

B

C

[...] network. I haven't been to an IEPG in a while. It's cool that so many people show up; it used to be smaller. Okay, I'm here to tell you about buffers. There was sort of a plea for agenda items that were neither DNS nor BGP, so I tried to fill that. I don't have nice slides, unfortunately, but I hope that I can interest you a little in this awesome, exciting topic of buffer sizes. So this is about the sizes of the buffers in routers and switches.

C

I think you probably all know why we need buffers. The Internet is packet-switched, and flow control is mostly done end-to-end by transport protocols such as TCP, so you need buffers to accommodate fluctuations in traffic rate and bursty traffic. You need them when you go from fast links to slow links, or when you aggregate many links of the same speed. I don't have time to go into the details, because it's a huge space of situations where the buffer needs are very different.

C

C

[reference unclear, transcribed as "3489 TS"], which ended up recommending buffer space in the router, or at least in the bottleneck router or switch, that corresponds to the overall end-to-end round-trip time (the average, I think) times the bottleneck bandwidth. On today's networks, on wide-area paths, that can result in quite big buffers. I think this recommendation was implemented by many people in the ISP space.

C

C

There was some additional work to push this to even smaller buffers by making some trade-offs, for example when you don't insist on being able to load the bottleneck link at 99.9-whatever percent, or when you can assume some pacing of the connections. I won't get into this. Anyway, there is quite a big spectrum in what we operators are told about how we should dimension our buffers.
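As a rough worked example of the two ends of that spectrum, the sketch below computes the classic "round-trip time times bottleneck bandwidth" buffer, and a reduced size using the commonly cited refinement of dividing by the square root of the number of concurrent flows. The speaker does not name that refinement, so treating it as the "additional work" is an assumption, and the RTT, link speed and flow count are made-up illustration values.

```python
import math


def bdp_buffer_bytes(rtt_s: float, link_bps: float) -> float:
    """Rule-of-thumb buffer: round-trip time times bottleneck bandwidth, in bytes."""
    return rtt_s * link_bps / 8


def reduced_buffer_bytes(rtt_s: float, link_bps: float, n_flows: int) -> float:
    """Assumed refinement: BDP divided by sqrt(number of long-lived flows)."""
    return bdp_buffer_bytes(rtt_s, link_bps) / math.sqrt(n_flows)


# Illustrative values only: 100 ms RTT, one 100 Gbit/s port, 10,000 flows.
rtt = 0.1
link = 100e9
full = bdp_buffer_bytes(rtt, link)
reduced = reduced_buffer_bytes(rtt, link, 10_000)

print(f"BDP buffer:     {full / 1e6:.1f} MB")
print(f"Reduced buffer: {reduced / 1e6:.1f} MB")
```

Under these assumed numbers the first figure is in the gigabyte range, while the second lands in the low tens of megabytes, the same order as the on-chip buffers discussed in the talk.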

C

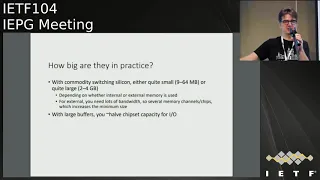

So in practice, how big are the buffers in the switches? I'm looking specifically at these new commodity switching chipsets, like Broadcom or Mellanox, the kinds of things that are used by many vendors these days. Basically, there are two types. There are switching ASICs that have the memory on chip, or maybe on package in the future, and those have very limited buffers, usually on the order of two-digit megabytes. I know some of the early ASICs had about nine, I think that's the least, and the biggest ones have around 64.

C

If I'm not mistaken, these are the on-chip buffers. Note that these switches have something like 32 × 100 Gig ports, so they have a lot of bandwidth, and usually they're deployed in places where people don't think the network is the bottleneck. But of course it can be, due to things like incast or other situations that happen in the data center.

C

So you need at least four channels, or four sets of DIMMs, and the smallest DIMMs you can get for memory are already quite big. That's how you end up with much bigger buffer memory. But of course there's a cost, because on the chipset you need about half the pins and the SerDes, or whatever external memory bandwidth, of the chip.

C

You now need them for this memory channel and you can't use them for ports, so you get about half as much switching bandwidth if you do that. Building these systems is also very hard: you have these channels to external memory, and there are MMUs, memory management units, on the switching chipset that now have to somehow accommodate the bandwidth and latency limitations of this memory. So it gets very tricky, and many ASIC designers don't like that.

C

They would rather build simple switches with on-chip memory, but there the size is very limited. Of course, you may ask: why do I care? If your buffers are too big, you're probably wasting money, and there is also the potential for interfering with TCP's, or whatever transport's, rate adaptation, which is actually bad for performance. It will often be better to drop packets earlier. I haven't put this on the slides, but we all know that buffers should be managed actively, right? That's active queue management, which is also a lot [...]

C

C

Also, for distributed systems like you find in the cloud, people are often interested in the high percentiles, the so-called tail latency. When you type a search query into Google, it fans out to, I don't know, a thousand servers, and you only get the result once the last server has sent its response. It's not exactly like this, but you get the basic idea. So tail latency is important.
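To make the fan-out argument concrete, here is a small sketch of why high percentiles dominate. It assumes each server independently answers "fast" with some probability (a simplification chosen for illustration, not something stated in the talk) and computes the chance that at least one of the servers in the fan-out is slow.

```python
def p_request_is_slow(p_server_fast: float, n_servers: int) -> float:
    """Probability that at least one of n independent servers is slow.

    The request waits for the slowest response, so it is fast only if
    every single server is fast.
    """
    return 1 - p_server_fast ** n_servers


# Each server is within its latency target 99% of the time (assumed).
for n in (1, 100, 1000):
    print(n, round(p_request_is_slow(0.99, n), 4))
```

With a 99% per-server target, a fan-out of 100 already makes most requests hit at least one slow server, which is why operators of such systems look at the 99.9th percentile and beyond rather than the average.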

The big thing I found in the discussions is that people optimise their networks.

C

Of course, they do so for very different goals. Traditionally, 20 years ago or so, when links were super expensive, people wanted to utilize them to the absolute maximum. Ideally, the links should all be running full at 100% all the time, and people should still buy my service. But of course, today most people don't run their links full, and bandwidth has become a bit cheaper.

C

It has become sort of an accepted approach to throw bandwidth at performance problems. And the metrics that people optimize for are quite different. For many of the cloud or content providers, the figure of merit is page load time, and a page today is a very complex thing, of course, that can include many TCP connections and software interactions. Some people may be interested in low latency, if you do gaming or other interactive AR stuff, or connected cars. So there's no consensus.

C

C

I think exchange points are a particularly interesting case, because they probably have even less control over what people send them than other operators. I found it a bit frustrating when I tried to study this problem. I found that even the commodity chipsets that are in all the products now have some instrumentation of what's going on in the buffers. Broadcom has some sort of high-watermark recording where you can see,

C

okay, in the last period the buffer went up to this level. And Mellanox has a nice feature where you can split the buffer into slices of equal size, and the chipset, in the fast path, counts how many times the buffer was encountered in each size range. I found that very nice. The frustrating thing is that the software we use on those switches doesn't allow me to look into this.

C

This instrumentation is there in the hardware, though, so that's a bit frustrating. In the past, twenty years ago, I would have said: well, it's clear, the IETF needs to define a MIB for accessing buffer statistics. These days, people don't necessarily implement this as MIBs anymore, but I think this whole story of how to give operators visibility into what the buffers are used for, or how much they are used, is quite interesting.

C

I wonder whether someone in the IETF would like to work on that. Of course, it's not just how full your buffers are, although I think that is sort of the critical question. If you're running a network and someone tells you that you don't need all these large buffers that you have, you really want to know: okay, how much of the buffers am I actually using, and how often?

C

Maybe the average utilization of the four-gigabyte buffer in my switch is only 32 kilobytes, but that doesn't tell me it's safe to reduce the buffer to [figure unclear in the recording], because sometimes there will be peaks that go to 2 megabytes or something, though maybe not as much for ISPs. But you also want to know what kind of traffic, what flows, occupy that space. That's a much harder problem, to really look into the structure of the stuff in the queues.
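The gap between average and peak occupancy, and the kind of per-slice counting described for the Mellanox hardware, can be sketched as follows. This is a software illustration over made-up queue-depth samples, not the vendor's interface; the function name, buffer size and slice count are hypothetical.

```python
from statistics import mean


def occupancy_histogram(samples, buffer_size, n_slices):
    """Count how often the observed occupancy fell into each equal-size slice."""
    width = buffer_size / n_slices
    counts = [0] * n_slices
    for s in samples:
        # Clamp so that a completely full buffer lands in the last slice.
        idx = min(int(s // width), n_slices - 1)
        counts[idx] += 1
    return counts


# Made-up samples in bytes: almost always shallow, with a few large bursts.
samples = [26_000] * 997 + [2_000_000] * 3
buffer_size = 4_000_000  # hypothetical buffer, split into 8 slices

print("mean occupancy:", mean(samples))
print("peak occupancy:", max(samples))
print("histogram:     ", occupancy_histogram(samples, buffer_size, 8))
```

The mean here is about 32 kilobytes, yet the histogram shows the buffer occasionally reaching the 2-megabyte slice. Sizing the buffer from the mean alone would drop those bursts.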

C

So, some developments that might make it interesting to look at this problem again. Other than these modern chipsets and their design problems, and links always getting faster, which is not new, there are interesting approaches to limiting the queuing in the network on the transport protocol side, with things like Data Center TCP, or other attempts at making TCP more, I don't know, smooth, if you like, and limiting some of these bursts. Some of this is going on here in the IETF and is super promising.

C

So if I've actually interested you in the topic, which I hope, there are a few pointers here where you can look at everything that people have studied over the last years. The reason I'm standing here is that two or three weeks ago there was a small workshop at Stanford, organized by the people who basically initiated this work about 12 years ago, and they're willing to do more research with operators.

C

There were a few very interesting presentations, which are not really online yet, and I apologize for that. I need to hunt down the organizers; I hope they will make them public. (I should stop playing with this thing.) There was a lot of interesting content from operators of content distribution networks and cloud networks, and also from some more traditional ISP people, about measurements they did, for example artificially limiting the buffers in their switches to see whether they got a performance loss, or even a performance improvement in some cases, on the things that interest them, which were all different.

C

The goal of the workshop was to organize a wider workshop for the wider community, maybe in fall 2019. So, as they say, watch this space. I don't know what the space is, but if you're interested, you could have the opportunity to talk more about this towards the end of the year. And of course, if you have ideas for measuring buffers, buffer utilization, the impact on transport protocol performance and so on, there are people interested in this, certainly a few groups. You can also contact me if you want pointers.

E

One of the things you should think about for the next version of this presentation is to include pointers to the older presentations that ran through the IETF on bufferbloat, because too much buffer, as you said, causes problems. It's not the fact that the box has too much buffer; it's that TCP, using that without any bounds, tends to have really bad back-pressure problems. As you mentioned, paced TCP is really what you're trying to optimize for, rather than burstiness.

E

Second bit, speaking with a vendor hat on: measuring buffers in an abstract way through models is not going to work for you. The problem you're going to run into is that for easy software-based systems, like a Linux box or something like that, sure, you can get an easy exposure of those buffers and map that to the resources used. But that changes the minute you start getting to chipsets that have different types of buffer resources.

E

Based on how a chipset does different types of forwarding, you're going to find that you can't get a useful abstract model. With existing modeling tools like YANG, protobufs, etc., you can get nice modeling of what the resources are on the box, but even then, mapping the resources to how they are utilized will be tricky. Think of Broadcom as an example: they have a host table that has certain types of resources for fast hits on host-level routes, and different resources for everything else.

E

D

C

As for the buffers, it's time to reconsider this. In comparison, I mentioned this AQM story, which is sort of a sad story in our community, I think, because there were a lot of research results and also some IETF work, and in the end there's very little deployed practice. About the difficulties of modeling buffer utilization, you're right. If you look into how the buffers are managed,

C

it gets very complex. What I didn't mention is that these chipsets with many ports and a blob of memory have to allocate that memory to all the different traffic flows between ports, and that's very tricky. So it's hard to do the modeling, I agree. But I'm just frustrated that I can't look at these statistics at all, even though they are being taken. So maybe even a bad model would be something I'd consider big progress. I don't know, maybe it confuses people.

E

D

E

F

Geoff Huston, at the mic. This is a really old topic, as you're well aware. I seem to recall one of the seminal pieces of work was actually by Doug Comer, back in the late 80s, where he had a 150-megabit ATM switch with no buffer and managed to get a maximum of about two megabits per second out of it, and then the consequent investigation as to what was going on. Part of this is the connection between the way TCP traditionally does rate control and how switches work. For most of our lives

F

we've worked on loss-based rate control mechanisms. I don't understand that a queue is building up until the queue has overflowed. When the queue has overflowed, i.e. the buffer is full and is dropping packets, I am too fast. And if you think about it (a huge amount of work by Van Jacobson), by the time I get the loss signal, one RTT has gone past and I'm probably going even faster.

F

To what extent do I need to rate-adapt in order to drain the buffer? The crude mechanism that we came up with, which seems to have worked for the last 20-odd years, is a factor of two: I halve my rate, and then I slowly build up again until, effectively, I'm filling up the buffers. When I get the signal, I halve my rate again. That's metastable, and it probably needs to be, because the world runs CUBIC. For that, you need big buffers.

F

If you don't want to leave bandwidth on the table, you need buffers that are equal to the delay-bandwidth product, because of this halving and doubling property. The real issue is actually the work on BBR. There was old work on latency sensitivity that never quite worked. BBR has taken a different view, and it is possible to run BBR extremely fast with extremely small buffers, because your signal is the formation of a queue in the buffer, not the overflow of the buffer.
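The halving argument can be checked with a little arithmetic. The sketch below models a single idealized loss-based flow: the loss happens when the window equals the delay-bandwidth product plus the buffer, the sender then multiplies its window by a backoff factor (0.5 for the halving described here), and the link stays busy only if the reduced window still covers the delay-bandwidth product. The single-flow model and the function names are simplifying assumptions for illustration.

```python
def window_after_backoff(bdp_pkts: float, buffer_pkts: float, beta: float = 0.5) -> float:
    """Window right after a loss: the loss occurs at BDP + buffer, then back off."""
    return beta * (bdp_pkts + buffer_pkts)


def link_stays_full(bdp_pkts: float, buffer_pkts: float, beta: float = 0.5) -> bool:
    """The link keeps running at full rate only if the reduced window >= BDP."""
    return window_after_backoff(bdp_pkts, buffer_pkts, beta) >= bdp_pkts


def min_buffer_for_full_link(bdp_pkts: float, beta: float = 0.5) -> float:
    """Solve beta * (BDP + B) >= BDP for B."""
    return bdp_pkts * (1 - beta) / beta


bdp = 1000  # packets, illustrative
print(link_stays_full(bdp, buffer_pkts=1000))  # buffer equal to the BDP
print(link_stays_full(bdp, buffer_pkts=100))   # much smaller buffer
print(min_buffer_for_full_link(bdp))
```

With a backoff factor of one half, the minimum buffer works out to exactly one delay-bandwidth product, matching the rule stated above; a gentler backoff factor lowers that requirement in this simple model.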

F

Now, if I were building chipsets, I'd be pushing BBR like crazy, because all of a sudden I don't need large buffers if I've got TCP being latency-sensitive. And if I were running large applications on today's network, I would use BBR, hell yeah. Why? Because it knocks all those loss-based systems off the table, because they basically flood the network. So is there an evolution going on? Yes. As for loss-based systems, are they losing it?

F

G

Jana Iyengar. Thanks for that talk. I was actually going to step up and say something quite similar to what Geoff said, in terms of the interaction between the congestion controller and the queue management system. Ultimately, what we're talking about here is not simply buffer management in the network, but the whole system of sender congestion control, rate control, how buffers are managed in the network, and what those signals of drop or ECN mean to the endpoints. So whoever wants to look at this problem,

G

I strongly encourage them to look at the entire system. Now, coming to the entire system and congestion controllers: Geoff is right that BBR does a better job of managing the buffers, but I would push back gently on one point: BBR does not actually kill CUBIC. It's super important to say that. And I want to look at those traces for sure, because it can.

G

There are certain conditions under which that can happen. And, as he pointed out, BBR v2, if you're interested in it, will be presented at ICCRG this week, and you're welcome to talk to the people who are working on it. It will be friendlier, is my understanding; I haven't actually seen exactly what's been happening over the past several months. But in general, the congestion controller, not just BBR, a better congestion

G

controller is something that we want. So to reduce the bufferbloat / buffer management problem, the two sides of this are basically going to be: how do you do good AQM, and how do you do good congestion control? I don't think BBR is the final answer here, but I think it's in the right direction.

B

B

G

G

G

G

So what are we talking about? For people who've been under a rock for the past 20 years, that's the HTTP stack. For people who've been under a rock for the past three years, that's what QUIC replaces. QUIC is basically replacing all of TCP. It doesn't replace TLS, but it subsumes TLS, so TLS sits inside of QUIC in a slightly strange way. And then there's a new HTTP that's being built to run on top of QUIC, and that's HTTP/3.

G

So the surface that's exposed to applications on top will remain the same, but HTTP/3 is basically the HTTP mapping over QUIC. TLS 1.3 sits in this strange way right there because QUIC uses TLS, as HTTP does in the other stack, to do the key exchange, and then, when the keys come out, QUIC uses the keys to encrypt its own headers in addition to the application data. So, as compared to the previous stack, where only application

G

data was encrypted and the transport headers were visible in the network, in the QUIC world the transport headers are also encrypted and not visible in the network, most of them anyway. Those are the drafts; if you enjoy reading drafts, they are available. Before I move on: there are several problems that QUIC set out to solve, but the most relevant one to this conversation is the one around middleboxes.

G

Presumably everybody in this room is familiar with this, so I won't go into the details of what exactly I'm talking about. The problem that we're trying to solve is that of ossification: we have been unable to change TCP in quite a while. You've seen TCP Fast Open, you've seen MPTCP, a number of extensions to TCP come out of this community. How many people would claim that if you put a probe in the network today, you would see a TCP Fast Open SYN?

G

H

G

And this is not what I'm here to litigate. I'm here to talk about the tooling that's available for QUIC. We've had this discussion [...], but in general, right, it's not easy. So when I try to deploy it, I'm unable to deploy it. And if you see TCP Fast Open packets on the wire, I'd love to see what fraction of TCP is actually using TCP Fast Open, because that's a number that we are actually interested in. To be clear, I'm interested in it.

G

I've been pushing for TCP Fast Open for quite a while. It's super difficult to deploy. Apple's found it hard to deploy, Microsoft's found it hard to deploy, Google's found it hard to deploy, and it's not because we've not been trying. We've tried, and we've tried, and we've tried, and middleboxes still break it in various insidious ways, and we're unable to deploy it.

H

Sometimes you have to go and do what, for example, Mark Andrews over here has done with the DNS protocols. He's taken the time, he's researched, he's identified which TLDs, ccTLDs, etc. are not compliant with the standards, publishing the list, publishing the research, and actually doing the work to go and clean up some of these devices, as opposed to saying fixing these is too hard, so I'll [...]

H

G

[...] protocol instead. [crosstalk] Let me have the last word on that point, and then I'm going to move on, because we're not going to relitigate this point here. The point here is that this was one of the core motivations for why we developed QUIC. And it is deployed, and it has taken less than nine years to see any bits on the wire.

G

G

This was a few years ago, when Google's version was deployed. Before Fastly I was at Google. The first byte of this packet header was the flags byte, and you all know what a flags byte means, right? A flags byte has bits that you turn on and turn off. We decided to flip a bit, because we wanted to change a particular behavior; we wanted the header to change a bit. We changed it. And this was before QUIC was published as an IETF draft.

G

It was before any of this had happened publicly, but Google QUIC was deployed in Chrome. This byte was unencrypted, and we had left it at seven for a while. We flipped a bit and everything went to hell. Basically, we had calls coming in saying: users can't reach any Google property using Chrome, and by the way, this is Chrome's problem, because guess what, we can using Firefox. So we had to go and find out what the problem was. We did.

G

This firewall would let it through, the response would come back from the server, the firewall would let that through, and then, when Chrome decided QUIC was working, the firewall would black-hole everything. So this was exactly the perfect mismatch between these two mechanisms. Of course, we spoke to the firewall vendor; we spoke to them in detail about exactly what had happened.

G

We actually talked with the team that implemented the code that did this. They wanted to ship a feature that did QUIC blocking, and the way they determined what QUIC traffic was is that they looked at tcpdump for a little while and found that the first byte was unchanging. And the way they implemented a filter for QUIC was basically this.
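The slide itself is not captured in the transcript. As a purely hypothetical reconstruction of the kind of filter described, the sketch below classifies a UDP payload as QUIC by comparing its first byte against a constant observed in packet captures. The function name and the sample payloads are invented for illustration; the values 7 and 9 are the ones quoted in the talk.

```python
OBSERVED_FIRST_BYTE = 0x07  # value seen "for a while" in captures, per the talk


def naive_is_quic(udp_payload: bytes) -> bool:
    """Hypothetical brittle classifier: 'QUIC' means 'first byte equals 7'."""
    return len(udp_payload) > 0 and udp_payload[0] == OBSERVED_FIRST_BYTE


old_packet = bytes([0x07]) + b"\x00" * 20  # flags byte as originally deployed
new_packet = bytes([0x09]) + b"\x00" * 20  # flags byte after the change

print(naive_is_quic(old_packet))  # recognised
print(naive_is_quic(new_packet))  # no longer recognised by the same rule
```

A rule like this encodes an accident of one deployment rather than anything the protocol promised, so any change to the flags byte silently changes how the traffic is classified.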

G

I don't want to put too fine a point on this, except to note that the one byte that didn't change on the wire ended up becoming the QUIC identifier. And I will say this, for what it's worth: we still don't know what's going to happen when we change this byte tomorrow. Right now, the Google QUIC that's deployed doesn't have seven, it has nine in the first byte. That is the bit we flipped, by the way; the second bit is the bit we flipped.

G

It is nine right now, so I don't know if the filter has changed to "equals seven or equals nine", or if it has changed to something broader. We have to see what happens next. However, this was a pretty strong reinforcement of something we already knew: that the only way we could actually protect a protocol from getting completely ossified, and be able to deploy without having to wait for 10 years as we waited with TCP Fast Open, was to encrypt. Yes?

G

I

G

It's a fair point. So look, there are a couple of things they could have done. It was published as a public document. It wasn't a standard, I should have said that clearly, but the document was available. They hadn't even looked at it; they hadn't even googled QUIC, to be very clear. When we asked them, they had no idea the document even existed. And then they asked us: the next time you make a change,

G

G

Yeah, but if you want to ship a feature, I would expect that there'd be some amount of due diligence. I'm not putting them on the spot. They're probably serving their business purpose adequately well, to continue to survive and exist, right, and even to thrive; it's a big company. But incentives are different in different places, and they aren't incentivized to go look up the spec.

G

G

So what's the status of this work? We've been working at the IETF for the past two years on standardizing this, two and a half years now. Everybody understands that there are obviously privacy and security implications, as well as network operational and management concerns, and it's unfair to say that those haven't been considered. Those have absolutely been considered.

G

It's just that I think the community is worn down by the inability to move transport or end-to-end bits, and we are trying really hard to figure out how to make this work. This means that there might be fundamental changes in how operational work happens in the future.

G

Unfortunately, we didn't have the experience that we have now. So, as an operator, if you're thinking "what can I do here?", I would recommend that you think about what exactly you need, and the working group will be open to having a discussion on that. There are several implementation efforts.

G

The long header format looks like this. It's only used during the handshake, to bootstrap and set up the connection, and the bits you see there which are not marked out with "xx xx" are visible in the network. Those are the unencrypted bits visible in the network in the long header. When you get to the short header,

G

actually, that's not a great picture, because it doesn't show you everything that's encrypted: the packet number here is encrypted, and only some bits are actually visible, which I'll show. The short header is the common header. That's what's used during the rest of the connection, after the handshake is complete. It has a bit here called the spin bit, which you may have heard of. If you haven't heard of it, you've again been under a rock for the past few years; climb out of it.

G

The spin bit is basically a bit that is available in the short header, and it's meant to be used for passive measurement of round-trip time. I won't get into the details of how, but it's visible in the network and available for the network to use to measure round-trip time. Folks at Ericsson and other places are doing a ton of work on figuring out how to use this, how to build filters and tools around it, and so on.
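The speaker skips the mechanics, so here is a minimal sketch of how an on-path observer could turn spin-bit observations into round-trip-time samples. The bit flips roughly once per round trip, so the time between successive flips seen in one direction approximates one RTT. The bit positions follow the short-header layout in RFC 9000, which was published after this talk; the drafts current at the time may differ. The function names and sample timestamps are illustrative, and loss and reordering are ignored.

```python
from typing import Iterable, List, Optional, Tuple


def spin_bit(first_byte: int) -> Optional[int]:
    """Return the spin bit of a short-header packet, or None for a long header."""
    if first_byte & 0x80:  # header form bit set: long header, no spin bit
        return None
    return (first_byte >> 5) & 1  # spin bit is 0x20 in the RFC 9000 layout


def rtt_samples(observations: Iterable[Tuple[float, int]]) -> List[float]:
    """Estimate RTTs from (timestamp_s, spin_value) pairs seen in one direction.

    Each change of the spin value is an "edge"; the gap between two
    consecutive edges is taken as one RTT sample.
    """
    samples: List[float] = []
    prev_value: Optional[int] = None
    last_edge: Optional[float] = None
    for t, value in observations:
        if prev_value is not None and value != prev_value:
            if last_edge is not None:
                samples.append(t - last_edge)
            last_edge = t
        prev_value = value
    return samples


# Illustrative observations: the spin value flips every 50 ms.
obs = [(0.00, 0), (0.01, 0), (0.05, 1), (0.06, 1), (0.10, 0), (0.11, 0), (0.15, 1)]
print(rtt_samples(obs))
```

This needs no keys and no packet numbers, which is the point of exposing this bit when everything else in the packet is encrypted.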

G

Again, they had to make a very strong case that this was a super useful and super important bit of information in the network, and that eventually came out of that discussion, a very heated one, I'd say. So that's the packet header. People who are familiar with TCP might say: well, I can see a packet number that looks like a sequence number, but where is the acknowledgment information? Where is the flow control information?

G

Well, as it turns out, none of it is in the packet header; it is all inside the packet. A packet is basically made up of frames, and you can have different frames. There are many different frame types, among them a STREAM frame that carries application data and an ACK frame that carries acknowledgment information.

G

And

now

we

just

look

through

a

whole

bunch

of

information

out

at

you

here,

but

I'm

going

to

skip

these

and

show

you

basically

what

a

quick

packet

looks

like

there's

the

packet

header,

the

grayed

out

bits

are

ones

that

are

encrypted,

so

the

packet

number

is

in

fact

encrypted.

You

can't

see

the

sequence

number

on

the

wire

anymore

and

pretty

much

everything

else

inside

the

packet,

which

is

the

stream,

the

acknowledgment

information

is

all

encrypted.

G

So

clearly,

Wireshark

is

not

going

to

be

enough

because

really,

you

can't

see

very

much

without

having

an

endpoint

key

and

that's

how

it's

going

to

be

with

Wireshark.

You

won't

be

able

to

get

a

lot

of

information,

but

there's

been

a

lot

of

tooling

work

that's

going

on

for

doing

endpoint

tracing

of

QUIC,

and

that's

seen

a

lot

of

traction.

So

if

you're

a

server-side

G

network

or

if

you're

a

client-side

network,

then

there's

a

bunch

of

tooling

that

work's

been

going

into,

and

if

I

may

just

very

quickly

show

these

two

bits:

quic-trace

and

quicvis

are

two

tools

that

have

been

under

serious

development

and

they

are

very,

very

promising

tools.

The

first

one,

quic-trace,

is

written

by

Victor

at

Google,

is

available,

it's

open

source

and

basically

is

a

packet

trace

that

shows

you,

G

You

know

like

if

you've

seen

tcptrace,

it's

quite

similar

to

that.

It's

basically

a

time-sequence

diagram

of

packets

and

ACKs

and

losses

and

things

like

that.

quicvis

is

a

much

more

elaborate

tool

being

written

by

Robin

Marx

at

Hasselt

University,

and

this

shows

some

seriously

interesting

information

that

you

can

get

from

doing

stuff

logging

at

the

endpoints.

These

are

all

available

to

see.

These

will

be

discussed

in

much

more

detail

at

the

TSV

area

this

week.

G

G

So

I

can

speak

to

my

operational

experience

at

Google

with

this,

which

is

that

when

we

deployed

QUIC

at

first,

we

found

about

93%

reachability

with

QUIC,

so

in

terms

of

black

holing,

that

may

have

been

the

7%

that

we

missed,

and

that

was

perfectly

fine,

because

we

had

a

TCP

fallback.

So

if

QUIC

didn't

work,

that

was

perfectly

fine.

The

rate-limiting,

this

is

the

more

nefarious

one

because

well

I'm,

sorry,

how

is

like

rate

limiting

denial.

A

D

H

G

H

J

G

Between

you

and

me,

what

I

can

say

is

that

operationally

our

experience

at

Google

was

that

when

we

turned

on

QUIC

globally

and

right

now

to

be

clear,

QUIC

is

about

40-ish

percent

of

Google's

traffic.

Last

count,

it

was

40

percent

two

and

a

half

years

ago,

and

that's

about

seven

percent

of

Internet

traffic,

so

I'm

sure

that

you're

seeing

QUIC

packets

right

now

flowing

over

the

networks.

Rate-limiting

was

something

that

was

observed.

G

It

was

definitely

observed

and

what

we

did

was

we

cold-called

operators,

and

most

of

them

were

in

enterprise

networks,

and

they

basically

said

we

didn't

even

know

that

we

had

a

rate

limit

on.

We

got

3%

back

just

from

doing

that.

So

yeah,

we

did

get

people

to

turn

off

the

rate-limiting,

because

it

was

on

by

default

in

their

firewalls,

not

because

they

had

turned

it

on.

G

We

have

mostly

got

rid

of

the

rate-limiting

at

this

point.

The

most

recent

data

was

from

last

year,

which

shows

that

most

of

the

rate-limiting

that

Google

sees

has

gone

down.

It

doesn't

exist

at

this

point.

It's

quite

possible

that

it's

still

happening

in

corners

that

I

haven't

seen

or

heard

about.

But

if

it's

happening

in

a

big

way,

then

it's

certainly

missing

Google

traffic.

Okay.

H

D

H

G

G

So,

to

be

very

clear:

I'm

not

the

working

group

I'm

here

doing

a

presentation

about

QUIC,

tooling

you

are,

this

is

I

think

the

reason

the

conversation

is

breaking

down

is

because,

if

you

want

to

have

a

conversation

at

the

QUIC

working

group,

you

need

to

talk

to

the

chairs,

get

a

slot,

get

discussions

made.

I'm

not

representing

the

working

group

here,

I'm

representing

the

discussion

I'm

having

this

is

I

don't

think

this

is

a

productive

conversation

at

this

point

here.

Listen.

E

G

E

Part

of

the

point

is

in

your

slides

sort

of

approaching

this

a

different

way:

leave

my

packets

alone.

The

contents

are

secret.

Routers

have

to

actually,

you

know,

find

some

level

of

entropy

within

the

packet.

They

actually

need

to

hash

stuff,

and

the

trouble

with

UDP

is

that

most

boxes

that

are

actually

doing

ECMP

on

UDP

have

a

limited

amount

of

entropy

that

they'll

actually

use;

they

don't

tend

to

get

to

the

contents

of

things;

they

try

to

stick

mostly

to

the

headers.

So

one

of

the

considerations

therefore

becomes

ECMP.

E

G

So

I

would

say

that

ECMP

is

still

usable,

and

a

lot

of

deployments

actually

do

use

ECMP

and

are

planning

to

use

ECMP

for

sharding

traffic

for

QUIC

traffic

coming

in,

but

that

is

if

you

are

willing

to

build

in

the

support

for

it.

There

is

a

connection

ID

that

is

in

fact

visible

on

the

wire

and

that's

at

a

fixed

point

in

the

packet

header

that

can

be

used

for

sharding,

and

that

can

be

as

long

as

seventeen

bytes.

So

you

can

certainly

use

that

for

sharding

as

well

right.
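A small sketch of sharding on the connection ID rather than on the address and port tuple. It assumes the load balancer has already extracted the destination connection ID bytes from the packet header. The function name, the backend list and the choice of SHA-256 are illustrative assumptions, not a description of any deployed balancer.

```python
import hashlib


def pick_backend(connection_id: bytes, backends):
    """Map a connection ID to one backend in a stable way.

    The same connection ID always maps to the same backend, even if the
    client's IP address or UDP port changes during the connection.
    """
    if not connection_id:
        raise ValueError("no connection ID available to shard on")
    if not backends:
        raise ValueError("no backends configured")
    digest = hashlib.sha256(connection_id).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]
```

A plain modulo like this reshuffles connections whenever the backend list changes; a production design would more likely use consistent hashing or have the server encode routing information into the connection ID it chooses.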

E

E

Eric

one

so,

given

that

everything

is

now

hidden,

of

which

I

am

a

fan,

one

performance

enhancing

proxy

that

does

strike

me

as

having

been

useful

as

the

things

that

are

at

the

TCP

used

to

be

managing

for

satellite

links.

Are

you

aware

of

any

measurements

or

experience

of

how

QUIC

behaves

over

satellite

links?

No.

G

B

G

Just

whatever

it

sees

itself

yeah

so

at

the

moment,

and

that's

a

conversation

that

I

mean,

we

can

and

we

need

to

have

going

forward.

But

it's

something

that

at

the

moment,

if

this

is

again

why

we

expect

that

I

mean

HTTP,

for

example,

every

single

implementation

of

it

is

probably

going

to

have

a

TCP

fallback.

So

ultimately,

if

you

need

those

devices,

then

that's

the

way

to

get

those

performance

benefits

out.

Well,

I.

G

G

I

mean

QUIC,

so

not

a

lot

of

work

has

been

done

in

there.

There's

probably

you

know,

congestion

control

and

other

work.

The

protocol

doesn't

offer

additional

hooks

for

things

like

that

to

happen

at

the

moment,

but

it's

certainly

possible

to

imagine

that

an

extra

version

of

the

protocol

might

have

more

things

to

support

other

network

types.

Thank

you.

I

L

L

My

name

is

Job

Snijders.

I

work

for

NTT

Communications,

an

IP

transit

provider,

and

in

this

presentation,

I

would

like

to

discuss

some

ideas

or

considerations

related

to

BGP

black

holing.

Quick

recap

of

what

black

holing

is:

black

holing

in

general

is

that

you

signal

to

an

adjacent

network,

usually

your

transit

provider:

L

Please

do

not

send

me

packets

destined

to

this

IP

address,

and

the

common

use

case

is

that

you

receive

say

a

DDoS

attack

targeted

towards

one

of

the

IP

addresses

originating

from

your

network

and

you

sort

of

disable

this

IP

address

in

order

to

allow

the

other

IP

addresses

to

remain

reachable.

In

other

words,

you're

sacrificing

the

victim

of

the

DDoS

attack,

so

that

the

rest

of

your

services

remain

online.

Usually

black

holing

is

implemented

through

one

of

two

methods.

L

Another

downside

is

that

in

most

BGP

implementations

you

will

need

two

filters:

one

filter

to

catch

the

black

holes

and

figure

out

which

ones

you

would

want

to

allow

and

the

other

filter

is

for

normal

BGP

routing.

To

put

this

in

perspective,

today's

largest

config

in

NTT's

network

is

57

megabytes

and

roughly

50%

of

that

is

because

of

black

hole

related

prefix

lists.

L

Now

most

providers

will

use

IRR

or

RPKI

or

WHOIS

data

to

construct

these

filters,

and

we've

noticed

that

some

of

our

customers,

either

maliciously

or

accidentally,

have

requested

black

holing

for

IP

addresses

that

are

not

theirs.

We've

seen

customers

that

would

add

ASNs

of

their

competitors

to

their

own

AS-SETs,

so

they

make

it

into

the

filters

and

then

they

signal

black

holes

into

our

network.

Of

course,

this

upsets

some

customers.

L

We've

also

seen

incidents

where

a

DDoS

mitigation

system

misidentifies

a

DDoS

flow

and

it

starts

black

holing

for

IP

addresses

that

are

outside

that

customer's

network.

In

any

case,

all

of

these

situations

should

be

proactively

prevented,

rather

than

reactively,

where

we

disable

black

holing

capabilities

for

such

customers.

L

This

is

an

example

routing

policy.

You

can

see

we

have

two

lists,

one

for

black

holes,

one

for

normal

routing.

If

the

destination

that

we

receive

over

the

eBGP

session

is

in

the

black

hole

list

and

the

community

is

our

black

hole

community,

then

set

a

specific

next

hop

and

jump

out

of

the

policy.

If

that

conditional

requirement

is

not

met,

it

may

be

a

normal

routing

signal

and

then

we

would

accept

it

now.
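The policy just described can be sketched in a few lines. This is only an illustration under stated assumptions: the community value is the well-known BLACKHOLE community from RFC 7999 (a provider may use its own), the discard next hop is a documentation address, the /24 limit for normal routes is a common IPv4 convention, and the final reject branch is added here for completeness. None of these values come from the slide.

```python
import ipaddress

# Assumptions for illustration only (not taken from the presentation).
BLACKHOLE_COMMUNITY = "65535:666"   # RFC 7999 well-known BLACKHOLE community
DISCARD_NEXT_HOP = "192.0.2.1"      # hypothetical next hop that is routed to discard


def covered(prefix, allowed, max_len):
    """True if prefix lies inside an allowed network and is no longer than max_len."""
    net = ipaddress.ip_network(prefix)
    return net.prefixlen <= max_len and any(
        net.version == a.version and net.subnet_of(a) for a in allowed
    )


def evaluate(prefix, communities, blackhole_list, normal_list):
    """Return (action, next_hop) for one announcement received over eBGP."""
    host_len = ipaddress.ip_network(prefix).max_prefixlen
    # First filter: black hole list plus black hole community.
    if BLACKHOLE_COMMUNITY in communities and covered(prefix, blackhole_list, host_len):
        return ("blackhole", DISCARD_NEXT_HOP)
    # Second filter: ordinary routing, here limited to /24 or shorter (IPv4).
    if covered(prefix, normal_list, 24):
        return ("accept", None)
    return ("reject", None)
```

Both lists have to be generated and kept in sync per customer, which is why the two-filter approach inflates the configuration size.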

L

My

proposal

to

this

community

is