►

From YouTube: IETF104-MAPRG-20190328-1050

Description

MAPRG meeting session at IETF104

2019/03/28 1050

https://datatracker.ietf.org/meeting/104/proceedings/

B

Okay,

so

just

to

sanity

check

I'm,

not

wrong

that

this.

This

was

supposed

to

start

three

minutes

ago.

Right

all

right,

we're

gonna

have

to

get

started.

We've

got

a

ton

of

stuff

to

jam

into

the

hour

and

a

half

time

we

have

so

so

fight

find

your

places,

assign

the

blue

sheets

they're

circulating

from

either

side

here

welcome

everyone.

This

is

map

RG

the

measurement

and

analysis

from

protocols,

Research

Group

I'm,

Dave

Wonka.

B

B

So

so

what

we

have

on

deck

for

today

is

what

we'll

well

here's

the

links

to

the

things

everything

map

RG,

if

you've

not

been

to

map

RG

before

it's

the

measurement

and

protocols

of

measurement

analysis

for

protocols,

research

group,

what

we

do

is

do

measurements

that

inform

the

engineering

or

operation

of

IETF

protocols,

so

everything

you

see

here

should

be

attached

to

some

IDF

protocol

or

the

operation

of

it.

We

have

a

mailing

list.

Today's

slides

are

up

at

that

link.

There's

the

etherpad

the

audio

to

meet

echo,

the

jabber

room,

I'll

link

there.

B

So

the

way

we

run

mapper

G

is

we

put

out

a

call

for

contributions

this

this

time

we

the

same

thing

On,

January

28th.

We

put

out

a

call

for

contributions

to

four

presentations

that

have

measurement

results

here

in

mapper

G,

and

we

received

about

ten

proposed

contributions.

A

couple

of

them

are

out

of

scope.

Seven

ohm

were

invited

to

present

here

today,

of

which

I

think

you'll

see.

Six

and

because

one

of

them

was

presented

a

couple

a

day

or

two

ago

at

the

quick

working

group.

B

Thanks

for

the

proposals,

we

got

some

really

strong

stuff.

Unfortunately,

this

time,

because

of

the

way

they

changed,

the

the

the

the

week

around

and

had

the

the

free

time

during

Wednesday,

we

couldn't

get

a

two

hour

time

slot,

so

we're

in

an

hour

and

a

half,

so

I

think

it'll

go

pretty

quick

one

of

the

things

we

do

in

mapper

G.

B

If

you

have

a

project

that

has

a

tool

or

your

results

aren't

particularly

mature

yet

or

you

just

want

to

tell

something

about

an

upcoming

measurement

conference

or

symposium,

we'll

run

ads

for

you

and

you'll

see

some

of

those

here

so

that

the

tail

end

of

the

intro

slides

will

be

those

ads.

The

other

thing

that

we

did

new

this

time

was

map

of

participated

in

the

hackathon.

B

So

we

had

a

measurement

analysis

for

protocols

table

at

the

hackathon

and

we

had

I

think

six

of

us

at

the

table

and

we'll

give

a

short

rundown

on

what

happened

there.

But

basically,

we

did

the

call

for

participants

and

projects

there.

We

had

three

projects

there

and

two

people

responded

in

advance,

two

more

showed

up

there

and

with

Miri

and

I.

That

was

the

six

of

us

that

spent

Saturday

and

Sunday

preparing

content

for

the

the

four

today.

B

It

has

a

draft

about

safe

internet

measurements,

and

it's

certainly

pertinent

to

this

group.

It's

about

what

would

be

essentially

like

best

current

practices

for

how

you

would

do

measurements

in

the

first

place

and

then

perhaps

also

how

you

would

catalog

the

results

and

what

sort

of

things

should

and

shouldn't

be

in

those

results.

So

there's

a

link

to

that

draft.

B

There

please

review

it,

contribute

to

help

me

in

with

that

one

of

the

proposed

presentations

for

today,

that

is

just

a

in

an

early

state,

and

we

asked

Luke

to

come

back

when

he

had

some

measurement

results.

Is

this

project?

It

sounds

like

he's

doing

some

really

neat

stuff,

with

line

rate

up

to

hundreds

of

gigabits

per

second

in

impecca

capture,

and

so

Luke's

got

his

email

address

there

if

you're

interested

in

a

product

project

or

that

software

get

in

touch

with

Luke

the

advanced

network

research

workshop

for

next

year.

B

The

dates

have

been

set,

so

let

students

in

and

and

potential

researchers

that

would

bring

work

there.

Let

them

know

about

that.

You

can

find

it

on

the

ITF

website

and

it'll

be

in

this

slide

set.

There's

a

quick,

quick

interoperability

and

performance

a

workshop

coming

up

next

year.

The

dates

are

here

as

well.

That's

the

second

venue

for

that

kind

of

work.

After

that,

then

we

have.

We

have

here's

Miriah.

B

B

So

certainly

one

of

my

favorite

venues

that

that's

up

also

the

internet

measurements

workshop

is

running

a

shadow

PC

again

so,

especially

if

you're

a

graduate

student

and

you

want

to

learn

how

to

be

on

a

PC

they'll

run

it

on,

in

parallel

with

the

with

the

regular

reviews,

really

neat

experience

and,

of

course

fascinating

when

they

pick

different

things

than

the

real

PC

would

pick

so

I

get

involved

in

that

for

you

or

your

students.

If

you

have

them

and

that's

the

end

of

the

advertisements,

could

you

switch

to

the

next

slide.

B

B

So

when

we

first

conceived

of

mapper

G

one

of

the

things

that

Mary

and

I

had

as

a

goal

was,

could

we

get

these

private

measurements

that

sometimes

happen

in

industry

where

they

can't

quote

it

disclose

the

details

because

it

might

be

about

customers

or

users

on

the

Internet?

Could

we

take

some

of

those

private

measurements

and

work

together

with

people

in

map

RG

in

conversations

and

then

digest

the

results

and

come

up

with

some

sort

of

summary

results?

So

that's

what

Oliver

and

I

gave

ourselves

as

a

goal.

B

B

So

the

nice

IMC

paper

about

how

they

developed

the

the

v6

hit

list

and

how

they

mean,

and

then

we

updated

and

released

a

couple

analysis

tools

that

I've

used

over

some

years

to

do

v6

analyses

and

so

we're

taking.

Essentially

what

we

think

is

the

largest

publicly

known

data

set

of

ipv6

topology

and

reach

ability,

measurements

and,

as

far

as

I

know

again,

the

largest

private

one

from

what

I

do

is

inside

Akamai

and

bring

them

together.

So

what

did

we

learn?

Well,

the

first

thing

we

learned

is

between

our

two

sets.

B

Just

accidentally

doing

these

topology

and

reach

ability

studies,

we

found

1.2

million

UI

64

addressed

ipv6

routers,

so

these

are

routers

that

we

know

the

manufacturer

of

them.

Ostensibly

many

of

them

are

reachable

on

the

internet

and

we

think

this

could

be

potentially

present

a

vulnerability

in

the

v6

Internet.

If

any

of

these

classes,

advices

are

known

to

have

have

weaknesses.

The

discovery

of

these

was

completely

accidental.

We

were

doing

a

topology

study

to

discover

the

core

of

the

v6

internet.

He

was

doing

a

reach

ability

study

and

then

we

decided

as

a

side

effect.

B

There

were

these

router

addresses

in

there.

We

also

found

that

the

public

data

set

the

results

that

team

Munich

prepared

and

our

set

inside.

They

were

focused

on

different

parts,

the

v6

internet,

again

sort

of

accidentally,

so

that

the

results

were

complementary

and

you

know

something

like

forty

percent

came

from

one

of

the

set

and

sixty

percent

came

from

the

other.

Another

thing

that

was

surprising

that

we

found

is

in

one

of

the

studies.

B

B

Do

you

add

it

with

what

you

hadn't

removed

from

them

and

some

in

some

addresses

some

sieve

addresses

hidden

culled

from

the

the

hit

list

and

last

year,

and

it

turned

out

those

were

actually

useful

to

find

more

of

these

v6

routers

of

interest

and

then

so

I'm

gonna

I'm

gonna

go

through

quickly

a

couple.

The

the

similar

results,

but

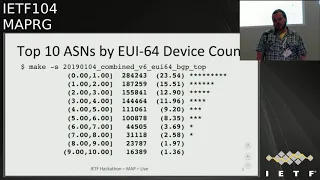

basically

the

the

middle

column

here

is

a

device

count,

and

this

is

a

GUI.

Sixty

UI

64

numbered

devices

that

have

you

know

I

believe

MAC

addresses

embedded

in

them

in

in

various

asns.

B

B

We

found

in

that

of

the

top

ten

vendors

we

found

two

and

thirty-five

unique

vendors,

but

the

top

10

you

can

see

here

make

up

something

like

99%

of

it.

So

there

are

particular

brands

of

equipment

that

that

networks

are

choosing

to

deploy

in

their

v6

deployments,

235

and

a

different,

unique

vendors

in

total

and

what

we

decided

to

do

so

I

said

there

was

there's

privacy

concerns

with

the

data.

So

what

what

Oliver

and

I

did?

B

Is

we

anonymize

the

ASNs

here

and

we

anonymize

the

vendors,

so

I

took

a

bunch

of

fictitious

company

names

from

movies

and

television

like

Wonka,

Ollivanders

wand

shop,

those

are

the

manufacturers

of

the

devices

and

then

we

made

classes

of

them

where

we

paired

up

the

ASN

and

that

particular

brand

of

equipment.

So

here

23.5%

of

the

UI

64

numbered

v6,

routers

were

manufactured

by

wonka

industries

and

we're

in

a

si,

and

what

you

can

see

here

is,

for

instance,

here's

two

different

ASNs

that

use

the

same,

ostensibly

popular

brand.

B

In

this

case

we've

substituted

acting

Corp

brand

equipment

in

there

and

that

represented

some

twenty

plus

percent

of

it.

And

then

you

can

see

that

same

brand

is

used

by

ASG

down

there

as

well.

So

just

trying

to

give

you

an

idea,

and

then

we

also

see

things

like

here's.

An

a

s

that

uses

has

a

high

population

to

two

different

brands

of

this

equipment.

B

Now

there's

many

many

of

these

brands.

So

let's

try

to

dive

in

and

visually

in

a

couple

of

these.

So

what

Oliver

did

is

over

the

over

about

the

past

six

months.

He

did

a

time

series

plot

here

of

the

the

five

asns

in

these

five

different

colors

again

anonymized

that,

but

this

is

a

on

the

vertical

axis.

The

number

of

devices

that

were

numbered

using

UI

64

addresses

and

the

top

of

it

is

of

five

hundred

thousand

there.

B

So

so

this

this

blue

line

at

the

top

saying

over

the

last

half

a

year,

there

was

a

decrease

in

the

number

of

UI

UI

c64

number

robberies

there,

but-

and

we

saw

some

anomalies

like,

for

instance,

where

a

number

of

ASNs

the

number

seemed

to

drop,

and

we

unfortunately

found

that

that's

just

an

artifact

of

the

way

the

measurements

were

done.

What

we

were

seeing

instead

is

over

the

last

half

here,

there's

a

number

of

a

ascends

that

the

number

of

eui-64

numbered

routers,

which

is

a

legacy

addressing

technique,

they're,

actually

increasing.

B

So

we

think

that

current

v6

deployments

our

numbering

routers

this

way

and

a

lot

of

them

are

customer

premise

equipment.

So

there

may

be

potentially

PII

concerns

there.

Another

way

we

visualized

it

is

on

the

horizontal

axis

here:

I

have

the

top

20

a

a

sense

that

had

the

most

the

most

you

I

64

numbered

routers,

discovered

from

the

this

private

Hawk,

my

data

set

and

what

I

want

to

show.

You

is

just

a

couple

of

the

the

modes

of

results

we

see

here

so

here.

B

The

green

line

there

at

about

20,000

is

the

number

of

target

addresses

that

we

traced

towards

in

these

networks,

and

you

can

think

of

these

as

web

clients

that

the

touch,

for

instance,

Akamai,

hosted

properties

and

by

tracing

towards

20,000

hosts.

We

discovered

110,000

eui-64

numbered

routers.

That's

the

only

data

point

in

that

particular

TSN,

where

you

trace

only

220

20,000

endpoints,

but

you

discover

more

routers.

Well,

what

does

that

mean?

B

It

means

that

they

must

be

using

you

I-64,

when

they're

numbering

things

in

the

distribution

layer

of

their

network,

that

we

see

multiple

eui-64

hops

on

a

path

towards

a

web

client.

The

other

thing

we

see

is

here

where

the

the

reticle

is.

The

number

of

you

is

64

numbered

routers.

We,

the

red

line,

is

the

more

Bui

64

routers

that

we

discovered

the

green

is

the

the

number

of

addresses

that

we

probe

towards

well.

Here

they

matched

up

exactly.

That

means

when

we

trace

towards

a

client

in

that

network.

B

We

pick

up

one

eui-64

numbered

router,

ostensibly

the

one

that's

in

the

customer

premise,

and

then

then

there's

also

these

bimodal

results

where

we

here

we

scan

less

than

we

scan

towards

about

40,000,

and

we

call

about

10,000

you

I-64

routers,

so

there's

quite

a

distance,

not

every

not

every

path

towards

when

the

client

seems

to

have

that

kind

of

router

numbered

router,

but

then

there's

other

ones

where

the

red

and

the

green

are

really

close

together.

So

we

almost

harvest

in

in

survey,

one

eui-64

numbered

router

for

every

target.

We

traced

towards.

B

And

then

so

that

those

are

basically

the

early

results,

we

got

from

the

this

v6,

so

security

and

privacy

study

and

basically,

what

we're

finding

is

that,

because

things

were

all

over

the

place

with

different

AAS

ends,

we

really

need

to

do

a

more

focus

study

that

tries

to

figure

out

how

many

of

these

are.

There,

they're,

really

just

being

accidentally

discovered,

so

we're

gonna

set

ourselves

up

for

some

follow-on

work

and

we'll

bring

that

to

metallurgy

when

we

have

it.

B

Another

project

was

al

Morton

at

a

project

at

the

same

table

where

I'll

just

leave

the

slide

here,

but

you

contact

and

contact

him

about

what

he's

doing.

It's

basically

new

ways

to

do

performance

measurements

at

at

the

Highline

rates

that

are

in

the

last

mile

in

modern

internet

and

then

Miriah

and

Marian

ended

a

path

spider

survey

as

well.

So

we

set

ourselves

up

with

a

what

wired

internet

access

at

the

table

and

you

can

do

some

active

measurements.

B

B

C

Thank

you

well,

I've

I've

been

using

the

internet

for

a

while.

You

can

see

my

my

here.

My

hair

is

white

and

I

was

you

know,

I

had

all

these

sessions.

You

know

my

bed

blossom

freezes.

My

video

session

hangs.

My

companions

fell

out

on

the

video

and

I

had,

you

know

always

thought.

Well,

it's

my

shitty

operating

system,

so

rebooted,

my

PC

I

I,

restarted

my

browser

and

whatever,

but

then

and

a

niche

that

they

could

be

problems

in

the

networks.

C

C

Well,

just

one

more

thing:

initially,

we

set

this

up

as

a

ratio

project

and

we

had

people

at

university.

That's

you

know,

run

a

couple

of

PhDs

and

some

thesis

on

this

and

I

was

helping

out.

Oh

no,

the

MSC

is

Monday

and

I

discovered

that

the

share

engineering

numbers

out

of

this

was

huge.

I

discovered

that

you

know

each

week

there

was

around

1,000

outages

in

this.

You

know

mesh

of

10

notes.

C

C

We

we

started

to

investigate

in

let's

say

each

let's

say

event

and

we

found

quite

clear

some,

you

know

short

engineering

measures

to

to

to

solve

some

other

problems.

It

turned

to

be

mostly

these.

These

kind

of

problems

related

to

BGP

boundary

crossings.

So

you

know

we

had

the

passive

mode

which

appear

to

our

customers.

That's

resulted

in

two

minutes

oth

when

you

have

the

redundant

collection.

C

You

had

been

miss,

miss

miss

done

with

rhetoric

rates

when

we

started.

You

know

evading

the

reads

from

the

routiers

before

being

bitten,

you

don't

see

any

outages,

so

I

also

saw

that

a

shear

fiber

cut

or

or

instability

costed,

79

seconds,

and

that

is

probably

due

to

bgp

or

whatever

the

riveter

rewriting

the

forwarding

table

with

the

fuel

bgp

routing

sec.

So

so

you

really

can't

afford.

C

Saying

kid,

you

know

it's

faster,

but

definitely

we

don't

afford

can't

afford

one

minute

outage

a

everything

change.

So,

as

you

can

see

here,

here's

a

distribution,

all

the

entities-

and

you

know

a

lot

of

oddities

in

in

the

air

over

50,

milliseconds

or

less-

and

that's

probably,

let's

say

randomly

be

scarred

so

whatever.

So,

that's

probably

a

that's

a

fact.

C

Network

solution

care

too

much

about

seven,

but

you

can

see

that

the

violet

figure

shows

that

the

most

of

time

goes

to

the

bigger

entities

which

in

is

in

the

order

of

50

seconds

or

more

so

minutes,

and

that

is

to

me

looks

like

we'll

be

lets,

say:

BD

related

configuration,

related

problems,

so

I

guess

we

need

to

do

all

of

us

do

better

which

be

to

tuning

and

configuration.

We

need

to

have

a

faster,

better

hardware

for

PSC

or

stuff,

like

that

faster

convergence

yeah.

C

So,

as

you

can

see,

I

have

a

limited

number

unknowns

and

if

anyone

are

interested

to

join

or

help

this

project

with,

let's

say

bye

power

and

also

some

hardware

I

just

need

an

UNIX

account.

So

so

let

me

know

and

I

hope,

there's

a

lot

of

stuff

to

be

discovered

here

about

how

the

internet

actually

behaves.

B

C

D

Hello,

everybody,

my

name,

is

your

coachman.

This

is

my

first

talk

at

an

idea.

Thank

you,

and

the

topic

is

satellite

internet,

which

was

also

on

the

agenda

at

the

previous

map.

Archy.

So

what's

it

about

it's

about

you

stationary

satellites,

we

have

five

propagation

delays,

so

our

duties

above

600

milliseconds,

and

that's

why

we

deployed

performance

enhancement

proxies

to

do

split

ECP.

Unfortunately,

we've

encrypted

transport

layer

headers.

We

cannot

use

split

tcp

anymore

and

at

the

previous

memory,

nickelodeon

already

presented

the

principal

problem

of

this.

D

He

compared

HTTP

to

too

quick

and

our

finals

complement

his

results.

We

agreed

with

him

and

yeah.

If

you

look

have

a

look

at

this,

so

there

are

three

major

operators

across

europe.

Sadly,

none

of

them

has

ipv6

support,

which

is

not

that

important

for

our

performance

measurements,

but

I

still

wanted

to

have

imagined.

D

D

Ok,

let's

start

off

the

one

way,

you

repeat,

delays.

We

send

one

body

DP

packets.

In

one

second

intervals,

we

use

the

same

physical

house

for

sending

and

receiving

packets,

which

simplifies

clock

synchronization

and,

of

course,

we

have

the

impact

of

the

backbone

network,

which

we

are

seem

to

be

neglected.

For

now.

D

D

We

have

no

insight

into

the

system,

so

the

reason

might

be

suddenly

a

true

channel

access

mechanisms

are

some

some

impact

in

the

access

network

of

operator.

But

let's

keep

these

delays

in

mind

next

or

the

page

load

times.

So

we

had

a

look

at

different

HTTP

flavors.

We

considered

Google

quick

ref

to

different

implementations

for

all

tests.

We

also

set

up

a

openvpn

UDP

tunnel

which

just

allows

us

to

disable

the

performance,

enhancing

properties

and

we

designed

two

static.

D

So

here

are

the

results

and,

as

expected,

the

TCP

connections

optimized

for

the

PAP,

provide

lowest

page

load

times,

in

contrast

to

the

ones

going

through

the

UDP

channel

again

for

all

experiments

operator,

C

shows

the

most

stable

results

and

even

gets

more

rigorous

when

looking

at

quick.

So

let's

have

a

look

at

chromium,

quick

with

chromium,

quick

operator,

a

and

P

suffer

from

I

would

say:

is

their

insanely

large

variations,

whereas

chromium,

quick

with

operators,

C

works

quite

well,

even

better

than

the

TCP

connections

going

through

the

VPN

tunnel.

D

But

still,

if

you

compare

HTTP

2

to

chromium

quick

for

operator

C,

you

see

a

the

page

load.

Time

is

roughly

twice,

and

this

is

exactly

the

same,

which

we

also

saw

at

the

previous

map

Archie

for

quick

go.

The

variation

is

not

that

high,

but

also

the

total

Pedro

time

for

when

using

operator.

C

is

not

that

good

for

large

large

website.

The

key

messages

are

all

the

same.

Therefore,

I

won't

go

into

detail

here,

but

I

think

it's

still

remarkable

that

the

order

of

magnitude

for.

E

D

D

So

the

first

row

is

all

reference:

hep-2

optimized

by

peps.

You

can

see

that

the

object

is

more

or

less

received

at

line

rate

and

the

second

row

is

in

PNG

TCP

again

the

operators

from

left

to

right

or

a

PC

like

before

so

aunty

TCP.

You

see

some

retransmission

bursts,

which

is

not

the

case

for

the

third

row,

which

is

quickly

quickly

works

quite

well

for

operator

C,

but

somehow

fails

completely

with

operator

P.

We

do

not

have

an

explanation

for

this

yet,

and

I

have

to

emphasize

that.

D

Of

course,

all

the

quick

implementations

are

work

in

progress

and

they're

all

great.

So

this

is

meant

not

meant

to

rate

the

implementations.

It's

just

like

first

impression

what

awaits

us

with

quick

over

satellite

and

these

results

match

the

previous

results

and

also

match

the

results

from

the

previous

map

arch

imaging.

D

B

D

Paper

so

roughly

we've

used

okay,

the

questions

like

what

was

the

setup

of

the

operators.

Like

did

we

yeah

for

this

comparable

setups

and

the

answers?

We

have

chosen

some

tariffs

for

the

operator

so

say

again.

It

just

went

similar

tariffs

so

like

similar

products,

the

download

dollar

rates

were

all

between

20

and

30

megabit

per

second,

and

so

I

would

say

they

are

comparable

with

a

dedicated

channels

or

was

there

sharing.

F

D

H

D

I

Do

you

have

an

S

at

the

end,

thanks

for

a

presentation

very

interesting

for

the

measurements

point

of

view,

I

mean

either

I?

Usually

don't

do

satellites

measurements

because

I

don't

have

an

access

to

satellite

network.

Do

you

know

if

there's

any

like

public

measurement

platforms,

if

ripe

Atlas,

for

example,

is

there

any

probes

in

Atlas

that

actually

have

a

satellite

connection

or

any

operators

in

your

own?

If

it's

not

a

case

would

like

to

host

one.

J

In

Korea

Montenegro,

so

you

mentioned

the

so

there

are

some

some

quick

and

different

populations

of

quick

testing.

There's

also

been

some

work

and

I,

don't

Iike

rock

units

involved

in

those

those

that

work

to

make

quick

quote,

unquote,

aware

of

satellite

lanes,

you

don't

increasing

BP

or

increasing

market

here

or

whatever

do

you

implement

any

of

those

mechanisms?

Experiment

with

overall

or

it's

just

pure,

quick,

off-the-shelf.

D

What

can

we

do

for

certain

learnings

I

thought

I

think

that

was

already

discussed

last

time

yesterday

there

was

a

interesting

site

meeting

about

localized,

optimization

of

Eggman's,

which

may

might

help

to

it's

a

satellite

link,

more

robust,

but

also

you

have

to

touch

the

end,

the

implementation

parameters

for

quick,

like

large,

initially

window

and

so

on.

Okay,.

J

B

F

E

Yes,

so

hi,

my

name

is

Pavel.

This

is

Oliver.

We

are

here

to

present

you

a

new

research

project

of

our

site,

security,

DNS

observatory.

The

goal

is

to

gain

insight

into

the

global

DNS

space,

using

kind

of

a

telescope

and

eventually

to

give

access

to

data

to

researchers,

because

we

found

that

it's

not

so

often

that

researchers

have

such

access

to

such

data.

E

To

begin

with,

we

start

by

analyzing

a

large

stream

of

passive

DNS

observations

from

recursive

observers

around

the

world,

that

is,

we

analyze

the

traffic

between

the

recursive

resolvers

to

alternative

name

servers.

That

is

what

happens

above

the

recursive

resolvers.

For

those

of

you

who

have

heard

about

far

site

security,

dns

DB,

that's

completely

new

machinery,

and

we

track

only

the

big

guys,

the

top

and

objects

for

that

research.

We

focus

on

top

10,000

name

servers,

but

also

other

objects.

I

will

describe.

E

We

focus

on

the

data

collected

in

the

first

three

months

of

this

year

that

is

marking

on

three.

Don't

cache

misses

and

we

are

just

starting.

We

just

want

to

preview

the

data

and

ask

you

what

would

you

like

to

see

in

the

final

work

so

as

to

the

galaxies

we

track

using

this

telescope?

We

tracked

DNS

objects.

We

aggregate

the

DNS

traffic,

that

is

the

query

response

traffic

and

by

few

kinds

of

keys

formost.

E

The

server

IP

address

the

IP

address

of

the

out

relative

name

server,

but

also

effective,

TLDs

SLD

SFPD

ends

q

types,

but

also

the

or

I've

already

before

or

v6

address.

We

have

seen

in

DNS

responses

to

a

quad-a

or

any

queries.

Every

minute

we

dump

a

list

of

top

ten

thousand

ten

thousand

objects,

ranked

by

the

number

of

queries

we

have

seen

and

we

aggregate

these

media

files

into

larger

files

like

one

I'll

revise,

daily

and

monthly,

and

so

on.

E

Each

tract

object,

for

instance,

each

authoritative,

nameserver

or

each

TLD

is

characterized

using

furtive

features

of

few

kinds.

First

of

all

counters,

like

the

number

of

DNS

exile

responses,

we

have

seen

from

a

particular

name

server,

also

cardinality

estimates

which

from

us

is

the

hyper

lock,

lock

algorithm

like

the

number

of

distinct

fqd

ends.

We

have

seen

from

particular

s

LD

and

histogram

estimates,

which

allows

us

to

get

median

response

delay,

including

the

quartiles

top

three

TTLs.

We

have

seen

four

particular

records

or

all

records

we

have

seen

in

responses

and

so

on.

E

So

the

very

first

result

we'd

like

to

show

you

this

graph.

This

graph

presents

you

the

rest,

four

top

10,000

name

servers.

Seen

during

the

first

quartile

is

the

distribution

of

traffic

on

the

x-axis.

You

see

the

server

rank,

so

the

big

guys

are

the

left.

These

were

the

root

name,

servers

and

TLD

name

servers

live

on

the

right

hand,

side.

E

You

are

more

likely

to

find

his

own

authorities

for

SLDS

and

so

on,

and

we

found

that

60%

of

the

queries

we

have

analyzed

are

handled

by

just

top

10

top

1000

name

servers

and

when

you

filter

by

the

response

code,

for

instance,

an

X

domain

which

is

visible

in

the

red

curve

and

traffic,

is

more

shifted

to

the

left,

which

kinds

of

story

that

no

propeller

name

servers

are

the

first

line

of

defense

from

the

garbage

traffic.

I

mean

queries

for

non-existent

domains,

they

would

servers.

E

E

E

We

put

the

names

aside,

because

there

is

no

particular

reason

to

blame

this

organization.

Organizations

for

wilt

rather

want

to

ask

question:

is

the

NS

really

distributed

I

mean

just

to

start

discussion

and

just

to

start

research?

We

found

that

just

8

organizations

and

53%

of

DNS

traffic.

We

analyzed

here.

We

showed

the

median

response

delay

so

on

the

x-axis

you

now

see.

The

response

delayed

by

axis

is

the

CDF.

E

Although

50%

of

the

server's

server

IPs,

we

have

analyzed

respondent

less

than

25

milliseconds,

but

still

1/5

needed

more

than

100

milliseconds.

To

respond,

which

suggests

many

recursive

need

to

cross

an

ocean

to

get

to

as

an

authority.

We

of

course

disregard

the

health

of

the

name

server,

but

while

there's

clearly

space

for

improvement

and

it

may

motivate

replicating

some

zones

closer

to

recursively,

solvers,

obviously

and

now

time

for

oliveira,

alright,.

K

What

to

note,

however,

is

that

the

overall

traffic

is

not

significantly

increasing

over

time.

This

means

that,

and

no

it's

a

large

service,

yet

its

operating

with

under

this

new

gTLD

now

to

another

interesting

effect

that

we

saw,

namely

the

combination

of

the

heavy

eyeballs

Algar

with

negative

caching,

which

leads

to

some

interesting

effects.

So

happy

I

was,

as

you

all

know,

is

if

you

have

a

v6

connectivity

as

well

as

IP

before

you

will

send

a

and

quad-a

queries

to

resolve

an

fqdn

and

negative.

K

K

The

second

example

that

we

look

at

is

actually

two

specific

FTD

ends

which

belong

to

the

to

a

large

at

serving

network,

where

we

see

a

regular

TTL

of

five

minutes

by

the

negative

caching

TTL

of

only

one

minute,

which

then

again

results

in

to

two-thirds

of

all

responses

being

empty

quality

responses,

and

the

last

one

that

I

want

to

show.

You

is

two

specific

FGD

ends

again,

but

this

time

for

an

OS

time

service-

and

here

we

have

the

effect

I

would

say

so.

K

We

have

a

regular

TTL

of

15

minutes,

but

the

negative

catching

TTL,

which

is

60

times

lower,

namely

15

seconds

only

which

results

in

90%

of

all

responses

being

quality,

no

data.

So

this

example

show

you

that

the

effect

that,

if

you

have

the

happy

eyeballs

algorithm

in

play

with

short-

caching

TTLs,

can

lead

to

a

large

number

of.

Basically,

let's

say

useless

queries,

okay,.

E

So

you

have

probably

heard

of

this

DNS

hijacking

at

X

right,

if

not,

please

download

these

slides

and

click

on

these

links.

The

short

story

is

that

DNS

second

rpki

properly

deployed

should

make

these

attacks

much

more

difficult,

and

this

is

why

we

analyzed

in

a

sec,

an

RPG

I

support

for

in

the

top

10,000

name

service

on

the

Internet.

E

Let's

start

with

the

red

line,

which

is

DNS

SEC

support,

I

mean

percentage

of

DNS

responses

with

the

SEC

signed,

DNS

SEC

signatures,

and

in

order

to

make

this

plot

sane,

we

represent

servers

in

by

averages

for

groups

of

100

servers

that

are

adjacent

on

the

trees,

because

otherwise

you

would

see

10,000

points

and

that

would

be

unreadable.

So

the

red

circle

shows

you

where

Dina's

sack

is

most

likely

to

be

deployed,

I

mean

for

the

most

rural

guys.

That

is

usually

the

named

the

root

name,

servers

and

deal.this,

which

is

I,

think

common

knowledge.

E

Nowadays,

however,

when

you

look

at

the

rest,

the

guys

it's

not

so

well,

and

so,

when

you

measure

the

adoption

by

server

IP,

it's

just

4%,

but

when

you

measure

by

traffic

its

16%,

which

kinds

of

alliance

with

the

evening

starts

which

are

linked

below

for

RPI.

For

some

reason,

the

guys

in

the

middle

are

more

likely

to

sit

in

prefixes

signs

using

RPI.

E

We

also

analyzed

well

what

if

a

server

is

secured

using

DNS,

SEC

or

RPI

I

mean

I,

wrote

the

two

or

both

and

that's

the

black

line,

so

intuitively

the

distance

between

the

blue

and

the

black

tells

you

how

these

two

techniques

complement

each

other

if

the

distance

high

or

in

they

are

deployed

at

the

same

time.

So

sorry.

G

E

Does

the

Union

not

the

intersection,

so

when

you

average

by

server

IP,

is

44%

and

by

the

traffic

49%,

so

I

think

for

now?

That's

it

just

to

summarize

the

observatory's

any

project

provides

aggregated

view

in

time

and

we

found

the

different

sector

might

need

more

work

in

performance

security.

As

I

said,

we

are

just

starting

and

we'd

like

to

hear

a

feedback

for

most.

What

evolution

would

you

like

to

see

in

our

final

work?

E

B

L

To

make

it

quick,

Alex

may,

over

from

Nikita

DT

what

I

would

great

work.

What

I

would

really

like

to

see

is

a

function

that

actually

allows

me

to

estimate

the

traffic

change

depending

on

a

TTL

change,

so

that

would

be

I.

Suppose

it's

something

like

this

yeah.

If

you

can

get

that

function

out

of

the

data

that

you

have,

and

that

would

be

a

greater

estimated

to

recommend

customers

and

also

to

compensate

for

measurements

between

domains

with

different

details.

L

M

N

O

You

go

back

to

the

first

graph

on

a

black

graph

and

I'm

less

hurt

occur.

Si

so

I

don't

understand

your

conclusion

from

this

one,

because

the

response

delay

doesn't

go

up.

Well,

the

DNS

traffic

goes

up

and

you

were

I

mean

there's

a

shift

between

the

green

and

the

red

line

and

and

you

your

statement

was

sounded

like

a

conclusion

that

they

were

aligned

so.

K

K

It

didn't

the

first

day,

that's

correct,

so,

as

we

also

mentioned

so

this

is

preliminary

work.

We

don't

have

all

the

knowledge

that

plays

into

the

DNS.

Dns

is

a

very

complex

system.

As

you

all

know,

the

it

could

also

play

in

that

the

people

at

this

company

added

new

servers

and

those

servers

were

maybe

configured

strangely.

K

P

I

Right

so

this

response

delay

in

this

graph

you're,

actually

measuring

traffic

from

resolver

to

authoritative,

right

so

I,

don't

think

would

be

influenced

by

TTL,

because

if

there

will

be

any

cached

orbino

queries

for

to

start

with.

You

know

what

I

mean

if

it's

in

a

cache,

you're

not

gonna,

see

the

queries

coming

from

the

resolver

story

straight

to

the

client.

Gonna

get

an

answer

right:

you're

measuring

the

response

time

from

resolver

to

authoritative.

E

So

we

are

assuming

that

what

actually

made

the

response

will

be

higher

was

right

of

the

traffic,

not

the

TTL,

because

TTL

of

0

increases

traffic

and

we

assumed

that

it

was

so

high

that

response

did

I

went

up,

I,

don't

know:

okay,

okay

and

anyway.

The

the

point

of

the

graph

on

our

plots

is

rather

to

show

that

we

have

software

for

finding

the

trends

in

automatic

way,

not

ready

to

drill

down

into

these

problems,

because

it's

premier

network

all

right,

but

thanks

for

interesting

work.

Thank

you.

Thanks.

Q

There's

my

first

a

IDF

and

thanks

for

giving

me

the

opportunity

to

present

here.

My

name

is

Jason

Sanchez

I'm,

a

master's

student

from

the

University

of

Chile

in

the

electrical

engineering

department

and

I'm,

going

to

present

the

Iranians

compliance

data

so

in

different

results

before

they

eat

DNS

flag

they

on

after

the

NS

like

the

well

some

background.

First,

what

is

it?

Ens

are

the

extension

mechanisms

and

to

the

play

new

futures

in

the

DNS

protocol.

Q

What

is

the

problem

with

a

DNS?

There

is

some

in

the

operational

world

there

is

some

work

around,

as

you

know,

and

also

there

is

a

prolific

walls

that

block

invalid

traffic

and

some

authoritative

servers

block

the

response

or

answer

with

a

gram

packet

and

what

that

means

and

the

EDA

the

result

birds

have

to

send

again

the

query

away

from

a

timeout

and

all

this

is

by

the

body

inflammation

inflammation

of

DNS

that

not

following

the

standards.

Q

The

solution

was

provided

by

the

DNS,

provided

the

most

common

providers

around

the

world

this

day

is

to

remove

all

the

workaround

was

to

remote.

All

the

workaround

was

the

1st

of

February

of

this

year

and

I'm

going

to

show

you

some

results

before

the

Status

Bay

before

the

DNS

Flag

Day

and

after

the

deer

slug

day,

ok

I'm

going

to

show

you

the

energy

status

of

19

and

19,000

and

61

resolvers.

We

develop

an

algorithm

to

test

design

in

forest

age.

Q

Q

It's

test

was

performing

3

child

before

the

dns

flag

day

and

two

times

after

readiness

like

day

two

as

mode

the

network

failures

like

timeout

and

others.

The

test

number

one

and

second,

some

of

the

tests

in

number

one

and

second

stage

was

base.

It

is

based

on

the

DNS

up

draft

a

common

operational

problems

in

DNS

servers

that

is,

and

an

advanced

version.

Well,

the

first

stage

we'd

get

some

information

about

of

the

DNS

version

of

the

servers,

also

with

so

bad

as

the

ethernet

support.

Q

Q

Also,

we

can

see

here.

The

ideon

is

our.

This

is

the

comment

made

by

dick

and

you

can

see

that

the

most

common

response

codes,

the

final

by

the

RFC,

10

and

35.

You

can

see

that

the

most

common

response

code

here

is

no

Aurora

CO

our

code.

Also,

we

can

see

that

the

OP

code,

according

to

the

draft

the

most

they

recommended

a

week.

We

can

we

have

to

see

the

option

code

in

the

additional

section

and

the

IDNs

version

number

0

in

the

in

the

answer.

Q

According

to

the

algorithm

classification,

we

can

see

that

the

most

of

the

resolvers

has

content

with

the

alienness

version.

0,

but

not

complete

is

d2.

For

instance,

we

don't

see

the

option

code

in

additional

section,

but

also

we

can

see

that

almost

9,000

or

soldiers

has

a

complete

compliance

with

the

ad

with

the

draft.

Q

The

first

test

here

was

Adina's

number

one.

We

expected

an

bad

version

according

to

the

draft,

and

also

we

expect

an

option

code

in

additional

section

and

the

version

number

zero.

Well,

not

our

role

of

the

results

are

according

to

the

draft,

as

you

can

see

here,

the

bad

version

only

3,500

for

soldier

responds

according

to

the

draft.

Also

we

test

the

DNS

SEC.

This

is

a

simple

command

to

send

here,

but

all

not

at

all

of

the

resolvers

has

the

flag

do

DNS,

SEC,

okay,

and

also

we

expect

this

flag.

Q

If

they

are

are

sick,

are

in

the

answer.

This

is

the

classification

of

the

organ

that

we

will

up.

Almost

10,000

has

okay

the

response

with

the

the

old

number

one

flag

and

almost

400

thousand

four

thousand

result.

God

has

they

know

to

flag

here.

The

terror

stage

was

the

to

taste

different

extensions

I'm,

going

to

present

two

of

that.

For

the

time

one

of

these

is

a

chain

Corinne

DNF

in

DNS.

Q

That

is

how

experimental

RFC

think

worry

is

today,

is

to

deploy

the

DNS

like

a

client

validation,

and

you

can

test

by

the

pipe

tool.

We

expect

here

no

error

code

and

also

another

flags.

This

is

made

by

a

simple

or

one

TCP

session

or

two

supposition.

According

to

the

implementation,

as

you

can

see,

almost

the

result

bars

are

no.

Compliance

with

this

extension

is

so

new.

Also

with

this,

the

client

subnet

is

the

result

for

this

extension

and

the

digital

solvers

are

chilean

resolver.

Q

You

can

see

that

it

is

not

content

with

the

most

common

extensions,

but

the

main

idea

is

made

a

comparison

between

the

D&S

flag

day

before

after

the

DNS,

like

the

complains

and

after

the

DNS

like

they.

This

is

a

comparison

of

the

minimal

it

in

ears

after

shoulders.

We

can't

see

that

then

no

error

code

increase

in

some

of

these

servers

and

I

believe

that

is

so

important

because,

most

of

the

result,

worse

has

a

increase

of

according

to

the

draft

that

I

talked

before

and

I

believe

that

is

a

barrier.

Q

Well,

we

can

check

here

that

almost

10,000

of

the

servers

increase

the

network

or

here

in

the

chain,

query

the

before

the

Green

Line

is

there

before

the

DNS,

like

the

dark

line.

Is

there

after

that

NSLog

day?

Also,

this

is

a

for

the

client,

subnet

and

also

on

to

finally,

is

they

recognize

the

server

recognize

the

opcodes?

M

Q

M

O

John

reed

ACMA,

I

noticed

early

on

the

slide.

For

example,

you

identified

various

resolver

versions

to

the

extent

that

they

answered

was

there

any

further

breakdown?

You

can

do

of

correlating

this

type

of

behavior,

either

with

specific

resolver

vendors

or

with

specific

other

cohorts

that

might

appear,

such

as

consumer

ISPs,

business

ISPs

things,

you

know

any

further

correlation

or

breakdown.

You

can

do

with

that.

Yeah.

Q

We

could,

with

we'd,

get

some

information

about

it

on

all

these,

about

I

showed

some

results

here

we

recover

all

all

the

resolvers

by

by

a

ACN

or

by

the

some

kind

of

version

we

have.

We

get