
From YouTube: IETF105-LPWAN-20190726-1000

Description

LPWAN meeting session at IETF105

2019/07/26 1000

https://datatracker.ietf.org/meeting/105/proceedings/

A

OK, so this is the LPWAN working group. We have Dominique Barthel here, acting as chair. Alex is not with us: he's the proud father of a young Leonardo, so we welcome Leonardo to this group, and hello, Alex. Alex will be joining us remotely, but it was much easier to have Dominique stand with me at the chair. As usual, we won't distribute the blue sheets immediately; we'll wait 10 to 15 minutes for the usual late people.

So, this is an official IETF meeting. All the best practices of IETF meetings apply, in particular the rules about patents: if you're aware of any IPR, that applies to the discussion that takes place in this room, whether it comes from your sponsors or otherwise. Please let us know or refrain from talking about it, and if you don't want to speak during the meeting, you can always talk to the chairs after the meeting. The other best practices also apply, anti-harassment and all the others. They are listed on this page.

The meeting is being filmed and recorded, and it will be published on YouTube. What's very cool is that you can extract the transcripts from the YouTube page, and I'm sure you could feed them to a translator that fixes my mistakes. So, yes, everything is recorded, minutes are being taken, and blue sheets will be distributed. I want to make sure that we have people joining the Etherpad. That's the means we use to take minutes.

What's very cool is that you can join it online. If you speak at the mic, please check afterwards that what you said was captured correctly and that your name was captured correctly, so that we publish accurate minutes. OK, so please all join the pad and review the minutes as they are being taken, especially if you've been speaking. So, who can take minutes? OK, we have two, three. Is there somebody on Jabber?

Even you? Yes, OK, thank you. OK, and the other material is available on this page. It's also available from the agenda page on the IETF site, and that's actually what we use to go from presentation to presentation. We have a lot on our plate today, but we have two hours, so I'm fairly confident that we won't have any problems. I won't be pressing people, I don't think so.

The new thing compared to the agenda that we had published is that we have an interesting presentation on a SCHC implementation coming from Chile. This one will actually be appended to the presentation by Dominique, so in those 15 minutes, I guess Dominique will speak for about 10 minutes and then we will be able to discuss. [unclear] That will have value at some point. Then [unclear], no one.

This time there was a lot of work done on the LoRaWAN specification, with many discussions on the mailing list, so we gave more time to Ivaylo, so that he can tell us the best and latest about how the LoRaWAN profile of SCHC is shaping up. Then the data model. Actually, I just got a text: she said she won't be joining the meeting immediately. "Go ahead, start without me," she said. So, since she's not there at the time of the YANG item, I would like to push it until she arrives, but I guess she will be there, because it's an hour from now. Then the CoAP static context, Ana, with the minimal steps to discuss. Ana again, and [name unclear] will show us the progress, then the discussion.

Charlie was supposed to present, to give us an IEEE status as it goes. Charlie did not join Meetecho, so I don't know if he will be with us. Charlie, if you can join, let us know. Is he shown in Meetecho? All right, no. So I'm not too sure that we will have Charlie this time, but OK, we'll get the news next time. And then we'll discuss rechartering, because we are pretty much done with the basic [unclear].

C

Hello, good morning. This is a status update on the basic SCHC draft, draft-ietf-lpwan-ipv6-static-context-hc; we're currently at version 21. I'll spend just a minute or two reminding people what this draft is about, for those who are new to the working group. If you haven't looked at it before, here's your chance to understand what it is about, and then I'll go over what we've done since the last IETF.

Otherwise, just skip it. So, what is SCHC, after all? I like to say there are three deliverables in one draft. One deliverable is a generic header compression mechanism that uses a static context. This gives the name SCHC to the whole technology: Static Context Header Compression. It's the part in blue in my protocol stack here, in this case IPv6/UDP, but actually nothing in this header compression mechanism is dedicated to these protocols, so we can apply it to anything that regularly has things that look like headers.

In the same draft, we also have the specification of a fragmentation protocol, with three modes of fragmentation that address different underlying network requirements. These are the three boxes described here, and it's kind of compression over fragmentation; it's not really encapsulation. That gets people a little bit confused sometimes, and we need to be better at explaining it. And in the same draft we have a simple example, I would say, of how we can apply compression to IPv6/UDP.
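To make the "static context" idea concrete, here is a minimal Python sketch of rule-based compression, assuming a context of rules whose field descriptors carry a target value, a matching operator, and a compression/decompression action (CDA), as SCHC describes. Only the `equal`/`ignore` operators and the `not-sent`/`value-sent` actions are modeled; the field names, the rule, and the function names are illustrative and are not taken from the draft or from any implementation.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional, Tuple

@dataclass(frozen=True)
class FieldDescriptor:
    field_id: str  # illustrative field name, e.g. "UDP.DevPort"
    target: Any    # target value stored in the static context
    mo: str        # matching operator: only "equal" and "ignore" in this sketch
    cda: str       # compression/decompression action: "not-sent" or "value-sent"

@dataclass(frozen=True)
class Rule:
    rule_id: int
    fields: Tuple[FieldDescriptor, ...]

def matches(rule: Rule, headers: Dict[str, Any]) -> bool:
    # A rule applies only if it describes exactly the fields present...
    if set(headers) != {fd.field_id for fd in rule.fields}:
        return False
    # ...and every matching operator succeeds ("ignore" always succeeds).
    return all(fd.mo == "ignore" or headers[fd.field_id] == fd.target
               for fd in rule.fields)

def compress(context: List[Rule], headers: Dict[str, Any]) -> Optional[Tuple[int, List[Any]]]:
    for rule in context:
        if matches(rule, headers):
            # Only "value-sent" fields travel as residue; the rest is elided.
            residue = [headers[fd.field_id] for fd in rule.fields if fd.cda == "value-sent"]
            return rule.rule_id, residue
    return None  # no rule matched; a real compressor would need a fallback rule

def decompress(context: List[Rule], rule_id: int, residue: List[Any]) -> Dict[str, Any]:
    rule = next(r for r in context if r.rule_id == rule_id)
    values = iter(residue)
    # Elided fields are rebuilt from the target values in the shared context.
    return {fd.field_id: (next(values) if fd.cda == "value-sent" else fd.target)
            for fd in rule.fields}

# The same static context must be provisioned on both ends of the link.
CONTEXT = [
    Rule(1, (
        FieldDescriptor("IPv6.NextHeader", 17, "equal", "not-sent"),
        FieldDescriptor("UDP.DevPort", 5683, "equal", "not-sent"),
        FieldDescriptor("UDP.AppPort", None, "ignore", "value-sent"),
    )),
]

headers = {"IPv6.NextHeader": 17, "UDP.DevPort": 5683, "UDP.AppPort": 40000}
compressed = compress(CONTEXT, headers)
restored = decompress(CONTEXT, *compressed)
```

Only the Rule ID and the residue cross the link, and nothing in the mechanism itself refers to IPv6 or UDP: those appear only as field names inside the rule.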

Then these red boxes: how do we apply, mostly, fragmentation over the various technologies? They have their own specificities, so each one picks one, two, or three of the fragmentation modes and sets parameters so that it fits the characteristics of the underlying technology. So that's my view of the whole work we're doing in this working group. So far, the draft I'm talking about has specified the format of the frames that go over the air for these three pieces, down to the underlying layer.

But so far we haven't defined how we express, how we represent, the rules that are used for compression and fragmentation. Some of the other drafts propose a representation of the context: the rules, the parameters for fragmentation, and everything. That is not in this draft, OK.

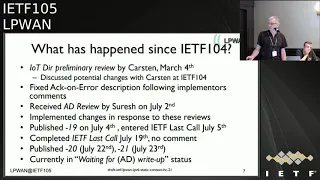

What has happened since the last meeting: a fairly long list of technical stuff, but also an IoT Directorate review by Carsten, which was a [unclear] review of the document. We discussed it with Carsten in Prague and agreed on most of the changes. We improved the description of the IANA [unclear].

D

Some of the IESG read an older version and some of them read the newer version, and I wanted to avoid that. During IETF week, usually you don't do anything, because nobody's going to start working on it. So this afternoon it should be balloted, and then we get onto the next telechat, given the number of pages. Previously you placed documents explicitly on a telechat, but now it's automatic: it looks at the reading load and then puts it on the telechat, so that people have time to read the material, all the directorate reviews and everything, whatever is left over. Some of the directorates do last call and telechat reviews separately; GenART, for example, does them separately, so they do a last call review, look at the updates, and do a telechat review. OK, so I don't expect anything to come out of it, but they do want the time to do that, so you can expect a two-week window. That's why I'm saying the 22nd would be the target date.

If you want, Dominique, if you want to be on the call, you can be on the call. You cannot speak unless I let you in, but you can be on the call. Anybody can be an observer on the call, but I could bring you in if I have some questions for you, and you can actually answer them, so it gets done quicker rather than taking another two weeks. OK.

C

Also since the last meetings, compression and fragmentation are now integrated into the same [unclear], which wasn't the case before; I'll talk about that later. Coming up next: well, nothing beyond what Suresh described, but more is going to come in terms of the draft itself, and we want to communicate more about SCHC and educate people about SCHC. We get lots of questions like "I don't quite understand how this works", etc.

There was CoAP/UDP/IPv6 compression over LoRaWAN and over Sigfox already a couple of years ago, and CoAP compression over LTE-M: there we're not compressing UDP/IPv6, just the CoAP part, in an IP-enabled network, and still getting the benefit of the compression. That's just to mention that SCHC is not dedicated to the IP layer, or to sitting below the IP layer. It can sit at any point in the stack, as [unclear]. And SCHC is being evaluated by the LoRa Alliance for [unclear] transmission over LoRaWAN.

Daniel here is interested in using SCHC for IPsec ESP compression. In terms of implementations, we have OpenSCHC, which I already mentioned. We have Acklio: they have commercial code, in both C and Go, for both the devices and the network, so commercial-grade and operational. And I just heard last week about a new implementation, so Sandra is going to tell us a little bit about it.

F

OK, hello everyone. I'm Sandra Céspedes from Universidad de Chile, and I will present a work from Rodrigo, a master's student. This year we started implementing the SCHC over LoRaWAN draft, so I'll just give a brief report on the status of this implementation.

So basically, this is the architecture we are using, our experimental setup. We have a LoPy end device connected to a RAK Wireless gateway, which connects to The Things Network, and the other part of SCHC is implemented in Amazon Web Services: he's using API Gateway, talking via REST to the network server, and the actual implementation of SCHC is in a Lambda function inside AWS.

So this is the status of the implementation. What you see here on the right are the modules that are part of the implementation. Basically, we have a big module for compression and decompression, and the other module is for fragmentation and reassembly. The one that is almost ready is the first part, compression and decompression.

All the rules are defined outside, in a dictionary, and the rule manager just loads the specific rule that we need to apply for compression, and so on. This is around 90 percent ready. Then we have the fragmentation and reassembly module. This one is in the charge of another student, so I will see his work next week and then I can tell you whether it is also working or not; I can't say yet. As I already said, so far we have tested the uplink for compression, and it's working.

We haven't yet tested the downlink, but the code is already there; we just need to do the testing. Also, for fragmentation we haven't done any testing yet. What we still have pending: this 90% comes from the fact that we haven't yet implemented two compression/decompression actions, mapping-sent and LSB. Rodrigo, who says he's connected, told me he wasn't using them, so he just skipped their implementation for now. Then, as I said, we need to test the downlink for compression, and we want to do end-to-end testing with full IP connectivity. That's going to be the last part to test. We plan to do it first only for compression, and then, once fragmentation is ready, for the complete thing. And I was going to say something else.
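The two actions still missing from that implementation, mapping-sent and LSB, can be sketched as follows. This is a minimal Python illustration assuming integer field values: mapping-sent pairs with the match-mapping operator and sends an index into a list of target values, while LSB pairs with the MSB(x) operator and sends only the low-order bits the target does not already fix. The function names and the example port values are hypothetical.

```python
from typing import List, Tuple

def mapping_sent_compress(value: int, mapping: List[int]) -> Tuple[int, int]:
    """match-mapping + mapping-sent: send the index of the value in the target list.
    Returns (index, residue_length_in_bits)."""
    index = mapping.index(value)                    # ValueError: the operator does not match
    residue_bits = (len(mapping) - 1).bit_length()  # just enough bits to code every index
    return index, residue_bits

def mapping_sent_decompress(index: int, mapping: List[int]) -> int:
    return mapping[index]

def lsb_compress(value: int, target: int, field_len: int, msb_len: int) -> Tuple[int, int]:
    """MSB(x) + LSB: the top msb_len bits must equal the target's; send the rest.
    Returns (lsb_value, residue_length_in_bits)."""
    lsb_len = field_len - msb_len
    if (value >> lsb_len) != (target >> lsb_len):
        raise ValueError("MSB(x) matching operator fails")
    return value & ((1 << lsb_len) - 1), lsb_len

def lsb_decompress(lsb: int, target: int, field_len: int, msb_len: int) -> int:
    lsb_len = field_len - msb_len
    # Keep the target's most significant bits and append the received ones.
    return ((target >> lsb_len) << lsb_len) | lsb

PORTS = [5683, 5684, 61616, 61617]
index, index_bits = mapping_sent_compress(61616, PORTS)  # index 2, sent on 2 bits
lsb, lsb_bits = lsb_compress(0xF0B3, 0xF0B0, 16, 12)     # value 3, sent on 4 bits
```

For a 16-bit UDP port matched with MSB(12) against 0xF0B0, only 4 bits of residue travel over the air.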

A

I hope it's very clear to everyone in the room that what's very critical now is to get a common format for the rules, so we can feed the rules into both implementations and run one implementation on one side and the other implementation on the other side. Right now that's kind of blocking us for interop, because we can't express the rules in a form that both codebases can digest. So that's really the next important step for us.
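One way to picture the common rule format being asked for: both implementations read the same serialized rule file. The sketch below assumes a JSON layout whose per-field keys mirror the entries of a SCHC field descriptor (Field ID, Field Length, Field Position, Direction Indicator, Target Value, Matching Operator, CDA); the top-level key names and the layout itself are hypothetical, not a format the working group adopted.

```python
import json

# Hypothetical shared rule file; only the per-field entries follow SCHC terminology.
RULES_JSON = """
[{"RuleID": 1, "RuleIDLength": 8,
  "Compression": [
    {"FID": "UDP.DevPort", "FL": 16, "FP": 1, "DI": "Bi", "TV": 5683, "MO": "equal", "CDA": "not-sent"},
    {"FID": "UDP.AppPort", "FL": 16, "FP": 1, "DI": "Bi", "TV": null, "MO": "ignore", "CDA": "value-sent"}
  ]}]
"""

DESCRIPTOR_KEYS = {"FID", "FL", "FP", "DI", "TV", "MO", "CDA"}

def load_rules(text: str) -> list:
    """Parse the shared file and reject field descriptors with missing entries."""
    rules = json.loads(text)
    for rule in rules:
        for fd in rule["Compression"]:
            missing = DESCRIPTOR_KEYS - fd.keys()
            if missing:
                raise ValueError(f"rule {rule['RuleID']}: missing {sorted(missing)}")
    return rules

rules = load_rules(RULES_JSON)
# A second implementation loading a re-serialized copy must see the same rules.
assert load_rules(json.dumps(rules)) == rules
```

Whatever the final encoding (JSON, YANG-derived, or otherwise), the point is that both codebases digest the same artifact, so interop tests can pair one implementation's compressor with the other's decompressor.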

H

Good morning everyone, Juan Carlos Zúñiga from Sigfox. I'm going to talk about draft-ietf-lpwan-schc-over-sigfox. Just a quick note to welcome the new member of the group, since Alex is not here; I think I saw Alex in the notes, so congratulations, Alex, on the arrival of the new member.

So, yes, a very quick update. There are no modifications to the draft. What we've been doing is working on optimizing the SCHC parameters for payload fragmentation and data integrity over Sigfox. As a reminder, Sigfox has a fixed payload size: 12 bytes in the uplink and 8 bytes in the downlink, so basically we can optimize for that.

The first option is a single-byte header that is currently optimized for minimum overhead, providing an ACK size of 13 bits in the downlink. The other one is a two-byte SCHC uplink header that is optimized for maximum payload, with an ACK size of 43 bits in the downlink. This is more or less what the simulations look like.

We can support all the way up to 2,000 bytes. Sorry, not that it's recommended, but that's what would work. Then again, as little as one single ACK would be needed to support all that payload, and in this case it would be our 43-bit ACK, which fits perfectly in the Sigfox downlink. So we plan to keep working on this and, of course, bring it to implementation, to test the limits of the protocol and see how we can... Sorry.
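The sizes quoted above lend themselves to a quick back-of-the-envelope check. This Python sketch uses only the figures from the talk (12-byte uplink, 8-byte downlink, 1- or 2-byte headers, 13- and 43-bit ACKs) and deliberately ignores padding, the integrity check, and window/FCN details, so its fragment counts are rough illustrations rather than the draft's numbers.

```python
import math

SIGFOX_UPLINK_BYTES = 12   # fixed uplink payload size quoted in the talk
SIGFOX_DOWNLINK_BYTES = 8  # fixed downlink payload size quoted in the talk

def fragments_needed(packet_bytes: int, header_bytes: int) -> int:
    """Uplink fragments for one SCHC packet, ignoring padding and integrity-check overhead."""
    per_fragment = SIGFOX_UPLINK_BYTES - header_bytes
    return math.ceil(packet_bytes / per_fragment)

def ack_fits_downlink(ack_bits: int) -> bool:
    """True if an ACK of this size fits in a single downlink frame."""
    return ack_bits <= 8 * SIGFOX_DOWNLINK_BYTES

for header, ack in ((1, 13), (2, 43)):
    print(f"{header}-byte header: {fragments_needed(2000, header)} fragments for 2000 bytes; "
          f"{ack}-bit ACK fits downlink: {ack_fits_downlink(ack)}")
```

Both ACK sizes fit comfortably in the 64 bits of a downlink frame. The second byte of header trades about 9% more fragments (200 versus 182 for 2,000 bytes) for the larger, 43-bit ACK.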

E

Hi everyone, my name is Ivaylo Petrov, and I will be presenting the progress on the SCHC over LoRaWAN draft. I will start by giving you a little bit of context about LoRaWAN. Then I will present the changes that happened since the last IETF, then I will tell you some of the rationale for the changes we made, and we can have a discussion if you have any ideas or comments.

One of the FPorts can also be used for compression: this is FPortUpDefault, whereas FPortUpShort is only for fragmentation. Basically, with FPortUpShort you don't have any space for additional Rule IDs, so you effectively have only one Rule ID. In the case of FPortUpDefault, you have space for other Rule IDs: one of them is for fragmentation and the rest of them are for compression. So.

C

It's basically the way... and we've had this discussion on the mailing list. The way you describe it, it looks like you actually have three instances of SCHC, of the context, separately. And of course, for one of the instances, for example FPortUpShort, this needs one context which has only one rule, so you need zero bits, while FPortUpDefault has an 8-bit space for the Rule ID. So either you... I mean, there's nothing wrong with that.

But then you should describe it this way: we have three contexts that are selected by FPort. Or, the way I proposed on the mailing list: you describe Rule IDs as including this FPort field, so we use Rule ID numbers that have at least eight bits, which overlap with the LoRaWAN FPort, and maybe have some other bits, so variable length.
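The suggestion above, Rule IDs of at least 8 bits whose leading byte is the LoRaWAN FPort and which may continue into the payload, can be sketched like this. Everything here is hypothetical: the function name, taking the extra bits from the most significant bits of the first FRMPayload byte, and the 8-extra-bit limit are assumptions for illustration, not what the SCHC over LoRaWAN draft specifies.

```python
from typing import Tuple

def parse_rule_id(fport: int, frm_payload: bytes, extra_bits: int) -> Tuple[int, int]:
    """Hypothetical: Rule ID = 8-bit FPort followed by `extra_bits` bits taken
    from the most significant bits of the first FRMPayload byte.
    Returns (rule_id, rule_id_length_in_bits)."""
    if not 0 <= fport <= 255:
        raise ValueError("FPort is an 8-bit field")
    if not 0 <= extra_bits <= 8:
        raise ValueError("this sketch handles at most 8 extra bits")
    if extra_bits == 0:
        return fport, 8  # the FPort alone is the Rule ID
    if not frm_payload:
        raise ValueError("no payload to read the extra Rule ID bits from")
    extra = frm_payload[0] >> (8 - extra_bits)
    return (fport << extra_bits) | extra, 8 + extra_bits

print(parse_rule_id(20, b"\xa0\x00", 2))  # (82, 10): FPort 20 followed by bits 0b10
```

With zero extra bits this degenerates to "the FPort is the Rule ID", which is one way to read the "three contexts selected by FPort" description as a special case of variable-length Rule IDs.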

E

This is in order to be able to optimize for different radio conditions. As I said earlier, in some regions the maximum packet size is 11 bytes, and if some FOpts are present, this might be reduced even further. That's why we decided to keep some flexibility there. And yes, we updated the terminology to match that of version 18 of the SCHC draft, and added extra examples.

Yes, and here is an example of the packet format. At the top of the slide we have the regular packet for the short case, which doesn't have [unclear] and the window number, and at the bottom of the slide we have fragmentation, where we have those two additional fields. One is for FPortUpShort and the other for FPortUpDefault.

L

So we selected just [unclear] recovery, because it's faster to detect an issue than to detect a change, but we specify that we don't want to mix two sessions at one time, because we are in embedded contexts with a lot of constraints. This reduces the processing and/or memory and RAM needed in microcontrollers, and so simplifies implementations.

I

Another point: for example, I saw that things are not byte-aligned in the SCHC compression. I'm a bit afraid of the implementation issues; it can break. The compression residue is not byte-aligned, and the payload comes right after. So I guess this is the decision you made here, but it would be good to get some more rationale.

E

Thank you. So now, things that have been discussed as possible upcoming changes. One is to remove the restriction on the Rule ID size. Right now we are saying we recommend that people use the two FPorts and a Rule ID size of six bits, but in some applications that might be insufficient, and in others it might be more than enough.

So the comments we received pushed us to try to really reorganize the draft so that we don't have these restrictions, and this is something we intend to do: have some specified values that are recommended for fragmentation, maybe still keeping two of them. That is something we still have to decide. I think we'll keep two of them, but in any case implementers should have the flexibility to decide.

We explicitly state that we don't want to rely on this, because it is not handled very well by some of the implementations, so it might cause some issues. Similarly for confirmed versus unconfirmed messages: some stacks may send a confirmation before the SCHC layer, while the SCHC layer could actually append the extra payload, so the confirmation could very well be piggybacked on the SCHC payload. So it didn't make much sense to use confirmed messages in this respect: we might be sending more messages than needed.

Now a little bit more detail on why we decided on a tile size of three. After some consideration and running some experiments, we found that this seems to be the optimum for us, if we want to be able to send some commands from time to time using the FOpts header in LoRaWAN and, at the same time, be able to send big payloads without using too much space in the bitmaps: bigger tiles reduce the bitmap size.

What remains right now is to finalize the [unclear] computation. There is already some text on this, but I believe it still needs to be improved, and I think this is the only pending item, except that if we receive any more reviews, we'll be more than happy to discuss whether there are other things to change. But this is the only thing that came up.

C

By definition, the tile size is the fixed value that fits all the MTUs you might use, and that's how the system was built. So now, if you're suggesting a variable minimum size, that's kind of redesigning the protocol. No: the tile size is really this minimum constant, otherwise ACK-on-Error doesn't work. Are we talking about ACK-on-Error, by the way? So...

So, in ACK-on-Error, you need to be able to resend data that was lost, and if the MTU has been reduced, you cannot send the same message as you sent before; you have to send smaller messages. In the new messages, the data you are resending needs to fit somewhere in the reassembly buffer, so the tile is this minimum piece with which you pave the whole reassembly buffer. That's why it's fixed.
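The argument for a fixed tile size can be illustrated with a small simulation. Tiles are the fixed slots of the reassembly buffer, so when the MTU shrinks, the sender simply packs fewer tiles per fragment, and each resent tile still lands in its original slot. This Python sketch simplifies heavily: there are no headers, no FCN/window encoding, and no real ACK bitmap, and the `missing()` list stands in for what ACK-on-Error would report. The class and function names are hypothetical.

```python
from typing import List, Optional, Tuple

def make_tiles(packet: bytes, tile_size: int) -> List[bytes]:
    # Cut the packet into fixed-size tiles (the last tile may be shorter).
    return [packet[i:i + tile_size] for i in range(0, len(packet), tile_size)]

def group(indices: List[int], tiles_per_fragment: int) -> List[List[int]]:
    # How many tiles fit in one fragment depends on the MTU at sending time.
    return [indices[i:i + tiles_per_fragment]
            for i in range(0, len(indices), tiles_per_fragment)]

class ReassemblyBuffer:
    def __init__(self, n_tiles: int) -> None:
        self.slots: List[Optional[bytes]] = [None] * n_tiles

    def receive(self, fragment: List[Tuple[int, bytes]]) -> None:
        for index, data in fragment:
            self.slots[index] = data  # a tile always has the same slot, whichever fragment carried it

    def missing(self) -> List[int]:
        return [i for i, slot in enumerate(self.slots) if slot is None]

    def packet(self) -> bytes:
        if self.missing():
            raise ValueError("reassembly incomplete")
        return b"".join(self.slots)

packet = bytes(range(20))
tiles = make_tiles(packet, 4)              # 5 tiles of 4 bytes
buf = ReassemblyBuffer(len(tiles))

# First pass: the MTU allows 3 tiles per fragment, and the first fragment is lost.
first_pass = group(list(range(len(tiles))), 3)
for frag in first_pass[1:]:
    buf.receive([(i, tiles[i]) for i in frag])

# The receiver reports tiles 0-2 missing; meanwhile the MTU has dropped to 1 tile.
for frag in group(buf.missing(), 1):
    buf.receive([(i, tiles[i]) for i in frag])
```

If the tile size changed along with the MTU, the retransmitted pieces would no longer line up with the slots the receiver is tracking, which is the point being made about keeping it constant.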

G

OK, so the data model. To remind you: what we have done in the draft is to have a very abstract way to represent the rule. We never say it's YANG or anything; we just define it as an array. Now we need something more formal, for different reasons. First, as Pascal said before about interoperability: if we want to do interop testing between two implementations, it's better to have a common format. We may also need it for management.

Another example is Christian, who says that maybe we can hash the rules, for security reasons, to be sure that we are using the same rules. Of course, if we compute a hash, we need the same representation at both ends. So we have to study a way to represent this information compactly, because we are on constrained devices, and in an easy way. That's why we are going to investigate a YANG model, to see if it fits all the requirements we have for this management. We already have a draft, which will expire soon, but it presents a first YANG model. At the last IETF, I also presented something more evolved, based on a JSON model, but we are reaching our limits with JSON.

G

Thank you. So that's the first point: we will soon submit a new version of the YANG model, and this one can be the basis for discussion. The other point came up during the last call with the CoRE working group. We had a proposal from Carsten. For example, we have CoAP options, and for the moment we define in LPWAN a name for each CoAP option. For now that's OK, because it's something internal to an application.

But when we move to the YANG model, of course, we don't want to do the work twice: CoRE is defining CoAP options, and then in LPWAN we would have to define another space for these CoAP options. So one proposal, which we have to discuss, is, for example, to reserve some space for the CoAP options in the SID space that represents all the values we manipulate in SCHC; for example, here, Uri-Path would have value 11.

Currently, the drawback is that we would have to take 65 thousand places in the SID space just for this, which can be a lot. I don't know whether it's reasonable to do that, because the SID space can be very, very large. So it's a possibility. Do we have to do it for CoAP options? Do we have to do it for the other values we will find in the protocols we compress? Currently that's an open question, and I would like to have your opinion on it.
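The size of that reservation follows from CoAP itself: option numbers are 16-bit values (Uri-Path is option 11 in RFC 7252), so one SID per possible option number means 65,536 SIDs. Here is a minimal sketch of such a mapping, assuming a made-up base SID for the reserved block; the base value and the function name are hypothetical.

```python
COAP_OPTION_SPACE = 1 << 16  # CoAP option numbers are 16-bit unsigned integers
URI_PATH = 11                # Uri-Path option number (RFC 7252)

def coap_option_sid(base_sid: int, option_number: int) -> int:
    """Hypothetical: one SID per CoAP option number, offset from a reserved base."""
    if not 0 <= option_number < COAP_OPTION_SPACE:
        raise ValueError("not a valid CoAP option number")
    return base_sid + option_number

BASE_SID = 100_000  # made-up start of the reserved block
print(coap_option_sid(BASE_SID, URI_PATH))  # 100011
print(COAP_OPTION_SPACE)                    # 65536 SIDs would be reserved
```

The benefit is that no per-option table has to be maintained in LPWAN: a new option defined by CoRE gets a SID automatically. The cost is the large, mostly unused block, which is the trade-off raised above.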

So this is the result of the working group last call on CoAP compression. It was also sent to the CoRE working group for their feedback, and here is the result of this review: we are now at version 9 of the draft, with comments from both groups about what we are doing in this document. So thank you to all the reviewers. We also have comments from Christian, but we received them this week, so they're not here. They're about making a hash of the rules, the context, on both sides when you are doing OSCORE, and including this hash in the AAD when you are doing the encryption. This way you are sure that both devices, or the device and the SCHC C/D, are using the same rules for the compression, which avoids confusion. If, for example, [unclear] is understood differently, of course you will have some bad behavior. So that's something we also have to include.

So from my point of view, it's quite difficult to push this right now, because we need the data model before doing it. Of course, if both sides use JSON, and you have one space in one JSON definition and two spaces in the other, the hash will be different, and that would be difficult to manage. So it's something interesting, but right now I don't really see how to integrate it.
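The whitespace problem just described is why a hash over the rules would have to be computed over a canonical serialization rather than over the raw text. Here is a minimal Python sketch, assuming rules exchanged as JSON and using sorted keys with compact separators as the canonical form. This is a simplification, not a full canonicalization scheme, and `rules_digest` is a hypothetical name.

```python
import hashlib
import json

def rules_digest(rules) -> str:
    # Re-serialize the parsed rules canonically: sorted keys, no insignificant whitespace.
    canonical = json.dumps(rules, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

device_side = '{"RuleID": 1, "TV": 5683}'
gateway_side = '{ "TV":5683,  "RuleID":1 }'  # same rule, different spacing and key order

raw_match = (hashlib.sha256(device_side.encode()).digest()
             == hashlib.sha256(gateway_side.encode()).digest())
canonical_match = rules_digest(json.loads(device_side)) == rules_digest(json.loads(gateway_side))
print(raw_match, canonical_match)  # False True
```

This only normalizes JSON text. Both ends still have to agree on what the rules contain and how they are modeled, which is why the data model is a prerequisite.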

So, in v8 we have only some text edits, so nothing new, and in v9 we also addressed a comment from the CoRE working group. It was about ossification: the risk of saying "OK, I am compressing this way", and then the CoAP protocol no longer evolves, because we have defined it for version 1, or we have a rule that says we have this and this and this. If something new appears in the CoRE working group, it may be blocked by the fact that SCHC cannot evolve.

This is not really a problem from the SCHC point of view, because SCHC is very generic: when the device evolves, the rules evolve, and when everything is designed as a field, we have a way to compress it, to manipulate it. So it is not a real problem, but we added some text to say that there is no risk of ossification with SCHC.

OK, so here is what I said before about Carsten's remark: put the CoAP space into SCHC. This way you don't have trouble, and I covered this point with the data model. So we finished the working group last call. All the tickets have been closed and we answered all the questions, so now I turn to the chairs: what's the next step? Well.

A

The next step is to find a shepherd, so probably Alex. Otherwise, if somebody is interested in trying the exercise of being the shepherd of this document, we can help that person through the process. It's an interesting learning curve, so we're always open. The shepherd is not named right now, so [unclear], if anybody wants to try the game.

C

So people expect ICMP error messages when something goes wrong along the path; they want to be able to ping the destination and maybe run a traceroute to troubleshoot initial problems. We want to discuss and define what we want to do in the context of IoT. Does that all make sense?

We all know that, at first, we should keep it simple. We want to address the methods and messages in RFC 4443, not the extended stuff. We'll come to a question shortly. These are the kinds of scenarios we considered; this is already a year old. Oops, wrong button. Obviously, from the Internet, if you have IP connectivity, people want, as I said, to get ICMPv6 error messages back, and want to be able to do a ping or a traceroute.

So what do we do if the problem happens somewhere here on the LPWAN? What kind of response do we send back? Do we want to propagate those into the LPWAN network or not? Is it safe? Is it reasonable? Etc. And also in the other direction: we probably want an ICMPv6 error message back from the device if it sends an IPv6 message, but we don't expect the device to run a traceroute, because there's nobody there, no keyboard, whatever.

A

So, this is Pascal, speaking as an individual contributor. Since you've mentioned [unclear]: I'm aware of [unclear], which indicates why it's not [unclear] in the context of SCHC or LPWAN. But similarly, in something like [unclear], the gateway would actually offload the processing from the device and generate [unclear] on behalf of the device, since it's a logical function [unclear]. But do that? OK.

C

Yeah, what we're trying to describe here is the scenario. We're saying we think it would be interesting for a device, when it sends an IPv6 message out and there's some issue with that IPv6 message in the network, to be able to receive the ICMPv6 error message back, and conversely on the other side. But definitely, we have IPv6 flowing both ways and ICMPv6 flowing both ways. This is a scenario on the device side and a scenario [unclear].

C

That's why the [unclear] comes with it. Thank you for the comment anyway. Honestly, we haven't worked at all on this since the last meeting, except for this hackathon, where I implemented this part. So we got the code into the SCHC implementation on this side, and when we send a ping from the Internet, the SCHC compressor, instead of compressing the ping and forwarding it to the device, responds with an Echo Reply on behalf of the device. The way we did it, which is interesting, is by reusing the compressor implementation, which has a matching part: we look at the rules, we have a matching pattern, and then we apply it for compression. We added a few hooks such that when this matching part matches, we call special code that sends an Echo Reply back. So, just as a point of reference, we have some of this working.
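
The hackathon approach (reuse the compressor's rule matching and attach a hook that answers an Echo Request instead of compressing and forwarding it) can be sketched roughly as follows. This is a minimal sketch, assuming a packet already parsed into a flat dict of header fields. Real SCHC rules use field descriptors with matching operators and direction indicators, which are reduced here to plain equality. `Rule`, `hook`, `handle_downlink` and the field names are hypothetical, and the ICMPv6 checksum is not computed.

```python
from dataclasses import dataclass
from typing import Callable, Optional

ICMPV6 = 58                      # IPv6 Next Header value for ICMPv6
ECHO_REQUEST, ECHO_REPLY = 128, 129


@dataclass
class Rule:
    rule_id: int
    expected: dict                                  # field -> value (equality only)
    hook: Optional[Callable[[dict], dict]] = None   # hypothetical: runs instead of compression


def echo_reply_on_behalf(pkt: dict) -> dict:
    """Build an Echo Reply as if the device had answered (checksum omitted)."""
    return {
        "ipv6.src": pkt["ipv6.dst"],                # the device's address
        "ipv6.dst": pkt["ipv6.src"],
        "ipv6.next_header": ICMPV6,
        "icmpv6.type": ECHO_REPLY,
        "icmpv6.code": 0,
        # RFC 4443: identifier, sequence number and data are copied from the request
        "icmpv6.identifier": pkt["icmpv6.identifier"],
        "icmpv6.sequence": pkt["icmpv6.sequence"],
        "icmpv6.data": pkt.get("icmpv6.data", b""),
    }


def handle_downlink(pkt: dict, rules: list) -> tuple:
    """Return ('internet', reply) when a hook answers, ('device', rule_id) when the
    packet would be compressed with that rule, or ('none', None) if no rule matches
    (a real implementation might fall back to a no-compression rule)."""
    for rule in rules:
        if all(pkt.get(field) == value for field, value in rule.expected.items()):
            if rule.hook is not None:
                return "internet", rule.hook(pkt)
            return "device", rule.rule_id           # compression itself is not shown
    return "none", None


DEVICE = "2001:db8::1"
rules = [
    Rule(1, {"ipv6.dst": DEVICE, "ipv6.next_header": ICMPV6,
             "icmpv6.type": ECHO_REQUEST}, hook=echo_reply_on_behalf),
    Rule(2, {"ipv6.dst": DEVICE}),
]
ping = {"ipv6.src": "2001:db8:ffff::5", "ipv6.dst": DEVICE,
        "ipv6.next_header": ICMPV6, "icmpv6.type": ECHO_REQUEST,
        "icmpv6.code": 0, "icmpv6.identifier": 7, "icmpv6.sequence": 1}
where, reply = handle_downlink(ping, rules)
print(where, reply["icmpv6.type"], reply["ipv6.dst"])  # internet 129 2001:db8:ffff::5
```

Rule order matters here: the more specific echo rule must be checked before the generic rule for the device, otherwise the ping would be compressed and forwarded as usual.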

C

That's a very valid question. It's all part of the discussion that needs to take place, and it revives this discussion: what do we want to do? What do we expect? Certainly, not responding to the ping at all is probably a bad idea in general, because people expect, you know, that if they have an IPv6 device, a host with an IPv6 address, they will get something back from the ping. But what kind of answer do we want to send? How long has the device been [unclear], I mean?

C

How far back has the device been seen for us to decide that we want to send a reply? I don't know; it could be an hour, it could be a day. It very much depends on the network and on the class of device, whether it's Class A or Class C. Of course, if it's LoRaWAN Class C, you expect an immediate answer back; if it's Class A, you understand that it may be sleeping.
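
One way to frame the open question of how recently the device must have been seen is a freshness check that the gateway applies before answering a ping on the device's behalf. The sketch below is hypothetical. `may_answer_on_behalf` is an illustrative name, and `max_age` is left as a parameter because the threshold (an hour, a day, class-dependent) is exactly what is still undecided.

```python
from datetime import datetime, timedelta


def may_answer_on_behalf(last_seen: datetime, now: datetime, max_age: timedelta) -> bool:
    """Hypothetical policy: reply to a ping for the device only if the network
    has heard from it within `max_age`."""
    return now - last_seen <= max_age


now = datetime(2019, 7, 26, 10, 0)
last_uplink = datetime(2019, 7, 26, 8, 30)          # device last heard 90 minutes ago
print(may_answer_on_behalf(last_uplink, now, timedelta(hours=1)))  # False
print(may_answer_on_behalf(last_uplink, now, timedelta(days=1)))   # True
```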

O

The default RTO in TCP is currently one second, and the default RTO in CoAP is randomly chosen between two and three seconds. So we have these particular RTT characteristics in LPWANs; however, we also have retransmission timers. For example, when we use CoAP in LPWAN and we send a CON message, there will be a retransmission timer running, and SCHC also defines two modes, ACK-Always and ACK-on-Error, that use retransmission timers as well. So the question here is: how do we deal with these particular characteristics of our RTTs in LPWAN?
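
To make the default timers concrete: RFC 7252 draws the initial retransmission timeout for a CoAP CON message uniformly from [ACK_TIMEOUT, ACK_TIMEOUT * ACK_RANDOM_FACTOR] = [2, 3] s and doubles it on each retransmission, while RFC 6298 sets TCP's initial RTO to 1 s. The sketch below uses the RFC 7252 default constants. The 100-second LPWAN RTT is only an illustrative value, not a measurement.

```python
import random

# Default transmission parameters from RFC 7252 (CoAP), section 4.8
ACK_TIMEOUT = 2.0        # seconds
ACK_RANDOM_FACTOR = 1.5
MAX_RETRANSMIT = 4
TCP_INITIAL_RTO = 1.0    # seconds, RFC 6298


def coap_con_timeouts(rng: random.Random) -> list:
    """Timeout armed for each transmission of one CON message: the original send
    plus MAX_RETRANSMIT retransmissions, doubling the timeout each time."""
    initial = rng.uniform(ACK_TIMEOUT, ACK_TIMEOUT * ACK_RANDOM_FACTOR)
    return [initial * 2 ** i for i in range(MAX_RETRANSMIT + 1)]


timeouts = coap_con_timeouts(random.Random(1))
lpwan_rtt = 100.0  # illustrative LPWAN round-trip time, seconds
print("CoAP timeouts (s):", [round(t, 1) for t in timeouts])
print("total wait before giving up (s):", round(sum(timeouts), 1))
print("timeouts shorter than the RTT:", sum(t < lpwan_rtt for t in timeouts))
```

The total wait is at most 93 s (RFC 7252's MAX_TRANSMIT_WAIT), so with an RTT of this order every retransmission would be spurious.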

O

So the status of the document is as follows. The initial version of the draft was presented in Prague. It contained an analysis of what we now call the uplink RTT, which is a round trip where the first message is sent in the uplink and the response comes in the downlink. It also contained a proposal for an RTO algorithm. In this update we have added the terminology of uplink RTT and downlink RTT.

O

So regarding the downlink RTT analysis, we can identify that it comprises two components. The first one is the wait time until the next uplink transmission. This is because in technologies such as Sigfox or LoRaWAN Class A, a downlink packet can only be sent in a dedicated time window that has been opened as a result of a previous uplink transmission. Therefore this component, the wait time, will depend on the application, and it might take values in the order of seconds, minutes, or hours.

O

Although it's true that there are also some special cases: if we know in advance when we want to perform a downlink transmission, it would also be possible to program an uplink transmission beforehand, so that this component can be minimized, ideally even down to zero. The second component of the downlink RTT is the time from when the uplink has been completed until the whole downlink RTT has been completed, and we call this second component the basic downlink RTT.
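
The decomposition can be written as: downlink RTT = (wait until the next uplink opens a receive window) + (basic downlink RTT). The sketch below is minimal and rests on a hypothetical traffic model: the device sends an uplink every `uplink_period` seconds starting at t = 0, and a downlink that becomes ready exactly at an uplink instant still fits in that uplink's window. The basic downlink RTT is taken as an input rather than derived from LoRaWAN or Sigfox timing.

```python
import math


def wait_until_next_uplink(t_ready: float, uplink_period: float) -> float:
    """Seconds from when a downlink packet is ready at the gateway until the
    next periodic uplink (at 0, P, 2P, ...) opens a receive window."""
    next_uplink = math.ceil(t_ready / uplink_period) * uplink_period
    return next_uplink - t_ready


def downlink_rtt(t_ready: float, uplink_period: float, basic_downlink_rtt: float) -> float:
    """Downlink RTT = wait for the next uplink + basic downlink RTT."""
    return wait_until_next_uplink(t_ready, uplink_period) + basic_downlink_rtt


# Downlink ready 10 s after an uplink, uplinks every 10 minutes, 3 s basic RTT (illustrative)
print(downlink_rtt(t_ready=10.0, uplink_period=600.0, basic_downlink_rtt=3.0))   # 593.0
# Special case: an uplink is scheduled right when the downlink is ready
print(downlink_rtt(t_ready=600.0, uplink_period=600.0, basic_downlink_rtt=3.0))  # 3.0
```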

H

So this is after the uplink has been done?

O

Yeah. So we have another theoretical analysis, similar to the one that already existed in the document for the uplink RTT. Now we have added these numbers, which correspond to the basic downlink RTT for LoRaWAN Class A and also for Sigfox, for a variety of data rates and uplink bit rates, respectively. The conclusion is similar to the one that we identified for the uplink RTT values: in many cases we have RTTs that are already greater than the default CoAP RTO.

O

However, if delay is actually relevant for the application, meaning that it makes a difference to retry after one second compared to retrying after 100 seconds, and also if we expect to find RTTs of a higher order of magnitude in our scenario, then we might consider using an algorithm such as the one presented here. We call it the Dual RTO algorithm, and it is basically a two-state machine where two separate RTO algorithms are used.

O

There is the high RTO state, which uses one RTO algorithm initialized to values that are compatible with higher RTTs, and there is the low RTO state, which uses a separate RTO algorithm consistent with lower RTT values. So, for example, if we are in the high RTO state and we then obtain a number of consecutive low RTT samples, the mechanism switches to using the low RTO algorithm, and vice versa in the other case.
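
A minimal sketch of the two-state idea follows. It makes several assumptions. Each state keeps its own RFC 6298 estimator (the talk says the simulation used two separate TCP RTOs). An RTT sample counts as "low" or "high" against a fixed `threshold`, and each sample only updates the estimator of its own class. The state flips after `switch_after` consecutive samples of the other class. The threshold, switch count, clock granularity and the high state's initial RTO are hypothetical values, not the draft's parameters.

```python
from dataclasses import dataclass
from typing import Optional

ALPHA, BETA, K = 1 / 8, 1 / 4, 4   # RFC 6298 constants


@dataclass
class Rfc6298Estimator:
    """One RTO estimator using the RFC 6298 SRTT/RTTVAR update rules."""
    initial_rto: float = 1.0    # RTO used before the first RTT sample (seconds)
    granularity: float = 0.1    # clock granularity G (assumed)
    min_rto: float = 1.0        # RFC 6298 lower bound
    srtt: Optional[float] = None
    rttvar: Optional[float] = None

    def update(self, r: float) -> None:
        if self.srtt is None or self.rttvar is None:
            self.srtt, self.rttvar = r, r / 2          # first measurement
        else:
            # RTTVAR uses the previous SRTT, so it is updated first
            self.rttvar = (1 - BETA) * self.rttvar + BETA * abs(self.srtt - r)
            self.srtt = (1 - ALPHA) * self.srtt + ALPHA * r

    def rto(self) -> float:
        if self.srtt is None or self.rttvar is None:
            return self.initial_rto
        return max(self.min_rto, self.srtt + max(self.granularity, K * self.rttvar))


class DualRto:
    """Two-state RTO: a 'low' and a 'high' state, each with its own estimator."""

    def __init__(self, threshold: float, switch_after: int, high_initial_rto: float):
        self.threshold = threshold        # hypothetical low/high RTT boundary (s)
        self.switch_after = switch_after  # consecutive opposite samples needed to switch
        self.estimators = {
            "low": Rfc6298Estimator(),
            "high": Rfc6298Estimator(initial_rto=high_initial_rto),
        }
        self.state = "low"
        self._streak = 0                  # consecutive samples of the other class

    def on_rtt_sample(self, r: float) -> None:
        cls = "low" if r < self.threshold else "high"
        self.estimators[cls].update(r)    # each estimator learns only from its own class
        if cls == self.state:
            self._streak = 0
            return
        self._streak += 1
        if self._streak >= self.switch_after:
            self.state, self._streak = cls, 0

    def rto(self) -> float:
        return self.estimators[self.state].rto()


dual = DualRto(threshold=10.0, switch_after=3, high_initial_rto=150.0)
for r in [1.0] * 20 + [100.0] * 20 + [1.0] * 3:
    dual.on_rtt_sample(r)
    # a sender would arm its retransmission timer with dual.rto() here
print(dual.state, round(dual.rto(), 2))
```

Because the low estimator never sees the 100-second samples, the RTO drops back to about one second as soon as the state switches, instead of decaying slowly.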

O

So this is just an example to illustrate what we could get when we compare against a standard single TCP RTO, which corresponds to the curve in red. In this figure, we show the RTO values produced by this standard TCP RTO compared with the ones produced by the Dual RTO algorithm, which are the ones in green, when the input is the sequence of RTT values shown in blue.

O

As you can see, the sequence in this example is basically that RTTs are low by default, around one second, and then there are a couple of intervals in which the RTTs are around 100 seconds. We can observe that if you use a single TCP RTO here, it struggles with the variance of the RTT that results from the change of the RTT state, and the TCP RTO produces very large values, even up to 300 seconds.
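
The large values a single estimator produces can be reproduced with the standard RFC 6298 update rules: SRTT and RTTVAR gains of 1/8 and 1/4, RTO = SRTT + max(G, 4 * RTTVAR), and a 1 s minimum. This is a minimal sketch that assumes an illustrative RTT trace (20 samples at 1 s, then 20 at 100 s, then 20 at 1 s again) and a hypothetical clock granularity of 0.1 s. It is not the trace behind the figure.

```python
def rfc6298_rto_trace(samples, granularity=0.1, min_rto=1.0):
    """RTO after each RTT sample for a single RFC 6298 estimator."""
    srtt = rttvar = None
    rtos = []
    for r in samples:
        if srtt is None:
            srtt, rttvar = r, r / 2                        # first measurement
        else:
            rttvar = 0.75 * rttvar + 0.25 * abs(srtt - r)  # beta = 1/4, uses old SRTT
            srtt = 0.875 * srtt + 0.125 * r                # alpha = 1/8
        rtos.append(max(min_rto, srtt + max(granularity, 4 * rttvar)))
    return rtos


trace = [1.0] * 20 + [100.0] * 20 + [1.0] * 20
rtos = rfc6298_rto_trace(trace)
print(f"peak RTO: {max(rtos):.0f} s")
print(f"RTO at the first 1 s sample after the high phase: {rtos[40]:.0f} s")
print(f"RTO at the end of the trace: {rtos[-1]:.0f} s")
```

On this trace the RTTVAR term inflates at each transition, so the peak exceeds 200 s, and the RTO is still above 100 s when the RTT has already dropped back to 1 s.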

O

You can also see that the single TCP RTO takes some time to converge. The worst case is when the RTT is already back at one second while, during this convergence phase after a transition of the RTT values, the TCP RTO remains at values of 300, 200, 100 seconds. In contrast, with the Dual RTO, as soon as a change of the RTT state is detected, it switches to the appropriate RTO algorithm. By the way, in this simulation, what we use is two separate TCP RTOs.

O

So one consideration here is that it is actually possible, or that's the assumption, to know a priori which will be the higher RTT values. For example, if the high-RTT intervals are due to packets being buffered in a gateway that can only be sent after some uplink transmission allows that downlink transmission, then the RTT is actually related to, and depends on, the time between uplink messages as well.

O

Another observation is that the amount of improvement that can be achieved will depend on the actual statistics, for example the duration of the