From YouTube: IETF105-ICCRG-20190723-1330

Description

ICCRG meeting session at IETF105

2019/07/23 1330

https://datatracker.ietf.org/meeting/105/proceedings/

A

What's that? Double feature? Thank you. Yeah, my brain's also not really functional at this point. Yeah, a double feature on LEDBAT: we've got Praveen from Microsoft talking about LEDBAT++. Some of you may have seen that before, but it was popular, so we're bringing it back. And we have rLEDBAT from Marcelo, which will build on this to do receiver-side congestion control.

A

A

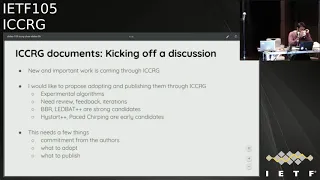

So this is just kicking off a discussion; this is getting the conversation started. ICCRG has published documents in the past, and we would like to start doing that again. The context is that there's new and important work coming through ICCRG now, and this is something that we are seeing as consistent presentations coming through. These are experimental algorithms. They need continuous review, they need feedback from the community, they go through iterations and they come back. This seems more and more like a process that should turn into some sort of adoption, some sort of publication eventually.

BBR and LEDBAT++ are strong candidates at this point; the brand-new HyStart++ and also paced chirping are early candidates. But we'd like to see this sort of practice start to happen. I'm completely aware of the fact that this needs a few things and has implications. This needs commitment from the authors to keep coming back, doing things that they might otherwise not do. This needs us to agree on what it means to adopt something, what it means for a research group to work on a document, so to speak.

It also means that we need to agree on what it means to publish a document. If ICCRG says that something is going to get published as an ICCRG document, what should that signal to the community? We do need to figure this out, but I think we can solve these problems. This is not intractable.

A

C

Hi, I was closer to the microphone, so I stood up. Brian Trammell. [unclear] so I can borrow my PANRG chair hat. So, PANRG basically has a more focused set of research questions that it's looking at, at least right now; it might, you know, mutate into something a little bit broader. And we've sort of kept [unclear] Internet-Drafts, which were adopted Internet-Drafts.

For the research group, the process runs a whole lot like a working group, right? You basically just put the process over it and it runs fine. One question that we have, in terms of the types of documents that we have in the research group, is: are these things where we basically just want to keep the document as an Internet-Draft and keep it alive within the research group? Where, you know, that draft-irtf label at the front means this is something that the research group is thinking about, not necessarily that it's ever going to be published as a document, right? Like something that's talking about frameworks for how you would consider research in congestion control algorithms. We're not really sure that we're going to publish any of the documents out of PANRG as RFCs. I think that, clearly, Experimental RFCs make sense to publish here for some of the experimental algorithms.

C

D

In my experience, the issue with doing this, with telling people that we can publish things directly in ICCRG, was that their response usually was that they have this business in the other room. It's a very interesting idea, but I don't know. Probably it just smells too much of "this is a useless IRTF document", or, I don't know.

E

Gorry Fairhurst at the mic. I'm a TSVWG chair. I think I want to try and understand what the difference is between publishing something here and taking one of these to TSVWG, which you could do as well. So there is a difference, because these groups are different and the process is different. That doesn't mean you shouldn't do it here and be able to do it there, but we need to be clear to the community that reads these what that difference is. And I don't know. Yeah.

F

G

Magnus Westerlund. I get to almost put on my former Transport AD hat here. This actually goes back to when I was AD in a previous term, because that's when Lars Eggert and I worked quite hard [unclear] to establish this process of trying to bring things into ICCRG, do an experimental specification first, and then, when algorithms were proven, we could move them on into the IETF.

That was the intention of the process back then, ten years ago, and I think there's definitely value in publishing specs as Experimental as a starting point and then seeing what we reach. When we get high maturity and deployment experience and all these things, we can move them into the IETF when they're suitable.

H

David Black, the other TSVWG chair who happens to be here in Montreal. I mostly want to plus-one Magnus's view of how the world should work. There's a very nice analogy between ICCRG's relationship to the transport area and CFRG's relationship to the security area. This is where the deep expertise exists on the congestion control algorithms, including every last little detail that you have to get right, or else. And like Magnus, I'd be very comfortable.

H

A

I

I jumped up when Michael said, you know, there wasn't much interest in people wanting to write things in ICCRG. So, I'm Bob Briscoe. Going back to Magnus's point: where this process came from was that an IETF group would ask ICCRG to do expert review, and that has sort of broken apart, because there are so many transport protocols now that would use the same congestion control. And so it occurred to me that TSVWG might be the place.

J

Mirja Kühlewind, as an individual. My opinion is that we should not talk about processes right now; it doesn't really matter. I would be super excited to work on congestion control in a cooperative manner, where we all talk about problems and find solutions together, write them down, make sure that we have a description which is readable and implementable and which we all like, and then we can figure out what to do with it.

K

So my name is Michael Scharf, speaking as TCPM chair. I'm sorry I came late; this slide was not on the agenda, so I haven't seen it. I was in another room. I just want to point out that I disagree with what is written here. It would require rechartering TCPM, which I also disagree with right now. I just want to put a big minus one on this one here. I don't know what has been said before, but I disagree with what is written on the slide.

I disagree with the publication of experimental algorithms such as HyStart in this RG, because this is in the charter of TCPM. So if the authors want to come to TCPM, the charter allows that, and I disagree with changing that part of the charter right now without having a bigger process discussion.

J

K

A

A

K

F

Matt Mathis. I think at the end of the day, what we're arguing about is what you need to do in order to put ICCRG in a document name, and maybe that should just be a self-selection process to help some of us find other documents more easily. Just recognizing that the rule is that anybody can put ICCRG in a file name is sufficient to address the topics on this slide.

L

B

L

Informational RFCs, if we believe something is mature enough: I think that is something ICCRG could certainly do. Equally, this is the IRTF, and it doesn't set standards. So we need to be clear on the boundaries and the scope of what we're doing, and we need to very much understand the relationship with the IETF groups. Someone brought up the Crypto Forum Research Group earlier.

L

A

M

N

N

Just a quick outline. I wanted to talk about an open-source alpha, or preview, release that we sent out last night. We'll talk about the status of the code, some quick lab test results to give a flavor of the behavior and where we're trying to head, and then talk about the deployment status at Google and wrap it up.

So just yesterday we released an open-source alpha, or preview, release of the TCP BBR v2 code, and the QUIC release is coming very soon as well, I think probably later on today. The goal of this particular release is to enable research collaborators to do some testing, try out their ideas, and get more real-world test miles on BBR, and we encourage researchers to dive in and evaluate the algorithm.

Those can perhaps serve as a starting point for experimentation. I would just remark that these tests use network emulation with the Linux netem qdisc. For an overview of the algorithm itself, I would point people to the slides and the video for the IETF 104 presentation, as well as some discussion in the IETF 102 slides.
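As a rough illustration of the kind of netem-based emulation mentioned here, the sketch below only assembles a `tc` command line for a netem qdisc; it does not execute anything. The helper name `netem_command`, the interface name `eth0` and the parameter values are assumptions chosen for illustration, not the presenters' actual test configuration.

```python
def netem_command(dev: str, delay_ms: int, loss_pct: float,
                  rate_mbit: int, limit_pkts: int) -> str:
    """Build (but do not run) a tc command that installs a netem qdisc.

    delay, loss, rate and limit are standard netem options; the values
    passed in are up to the experimenter.
    """
    return (
        f"tc qdisc add dev {dev} root netem "
        f"delay {delay_ms}ms loss {loss_pct}% "
        f"rate {rate_mbit}mbit limit {limit_pkts}"
    )


if __name__ == "__main__":
    # Hypothetical path: 100 ms added delay, 1% random loss, 1 Gbit/s.
    print(netem_command("eth0", 100, 1, 1000, 8333))
```

Running the resulting command needs root privileges, and the delay is added only in the direction of the interface it is installed on.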

N

So what's new, and what's changed between BBR version 1 and BBR version 2? We've talked about this a little bit before, but just to recap: the properties that we are maintaining between version 1 and version 2 are obtaining high throughput with a targeted level of random packet loss, and also being able to bound the amount of queuing delay that a flow is causing, despite the fact that it might be traveling through a very bufferbloated path.

V2 includes support for DCTCP- or L4S-style ECN signals, and we've also reduced the throughput hit that the flows take for entering the PROBE_RTT phase, where the flows try to cooperate to expose the min RTT of the path. In the following slides we'll run through a few tests just to illustrate the general flavor of the behavior and some of the core properties that we've maintained or, in some cases, added in v2.

Throughout these tests, the metrics that we're looking at include throughput, queuing latency, retransmit rate, fairness, that sort of thing. So, just a tour through some lab test results. First, if we think about surviving random loss, v2 continues to maintain the property that v1 had: it's able to achieve high throughput even with a targeted level of random packet loss.

So here's an example of an experiment where we take one flow, and it's either CUBIC or BBR or BBR version 2, and we send it through an emulated path where the link bandwidth is one gigabit, the min RTT is a hundred milliseconds, and there's a BDP of buffer. Then we do a bulk transfer with a particular level of random packet loss, spanning orders of magnitude, so the x-axis is log scale here.

We can see that CUBIC is achieving low throughput; as is well known, it's very sensitive to packet loss. BBR version 1, by contrast, achieves high throughput up to about a fifteen percent loss rate. We'll see the corollaries of this in some of the follow-on tests, but with BBR version 1 that level of loss tolerance is sort of implicit in the magnitude of the bandwidth probing that it's doing. BBR version 2, on the other hand, is an algorithm designed around an explicit loss threshold, a level of packet loss that it's trying to target. So, for example, here the sender was configured with a loss threshold of two percent, which means that it acts on the short-term, or sort of instantaneous, loss rate that it has measured over the last round-trip time reaching two percent.

So you can see that at low random loss rates it's achieving high throughput, but not quite as high as BBR version 1, because it is trying to explicitly leave some headroom in the path for other flows to discover available bandwidth, and to keep queuing and losses low. And then you can see that the BBR version 2 throughput is reduced right around the 1% to 2% range there, which is because of that explicit loss threshold of 2%. Why does it fall off at that point? Well, if you configure 1% random loss, then obviously the losses are going to vary from round trip to round trip, so you get this sort of fall-off. So that's one example.
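The fall-off below the configured threshold can be illustrated with a small calculation. Assuming, purely for illustration, that each packet in a round trip is lost independently and that a round "hits" the threshold when its measured loss fraction is at or above it, the number of losses per round is binomial. The function name, the 100-packet round and the "at or above" rule are assumptions for this sketch, not details of the BBR v2 code.

```python
from math import comb


def prob_round_hits_threshold(pkts_per_round: int, loss_prob: float,
                              threshold_pct: int) -> float:
    """Probability that one round trip sees a loss fraction at or above
    threshold_pct percent, with independent per-packet loss."""
    # Smallest loss count whose fraction reaches the threshold (integer ceil).
    min_losses = (threshold_pct * pkts_per_round + 99) // 100
    below = sum(
        comb(pkts_per_round, k)
        * loss_prob ** k
        * (1 - loss_prob) ** (pkts_per_round - k)
        for k in range(min_losses)
    )
    return 1.0 - below


if __name__ == "__main__":
    # 1% average random loss, 2% threshold, 100 packets per round trip.
    print(prob_round_hits_threshold(100, 0.01, 2))
```

Under these assumptions, with an average loss rate of 1% roughly a quarter of the 100-packet rounds still reach 2%, which is consistent with throughput starting to drop before the average loss rate reaches the threshold.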

Another one here: if we take a scenario where we've got a bottleneck with deep buffers, one property that we've carried over from v1 to v2 is that BBR is able to use its model of the network path to generate an estimate of the BDP of the path, and then bound the in-flight data based on that estimate to keep queuing latency reasonably low.

So here's a test that's very similar to a test that we showed way back at IETF 97. This is showing either two CUBIC, or two BBR, or two BBR v2 flows entering at staggered times. The link bandwidth is 50 megabits, the min RTT is 30 milliseconds, and then we run a bulk test for a while at various buffer depths. Here, the x-axis is the buffer size as a multiple of the BDP, and the y-axis is the median smoothed round-trip time sample that we obtained.

You can see that, as is well known, CUBIC will fill any buffer you give it quite quickly and sort of hang out at that buffer occupancy. So, as the buffers get bigger, your RTTs are always bigger. BBR version 1 and 2, by contrast, no matter the buffer depth, will have their estimate of the BDP, and they'll bound their in-flight data based on that to keep the latency within a more reasonable range.
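To put numbers on this test setup, here is a minimal sketch of the BDP arithmetic and of the round-trip time a flow would see if a FIFO buffer sized as a multiple of the BDP were kept completely full. The function names are made up for this example, and the "buffer always full" model is a simplifying assumption rather than a description of measured CUBIC behavior.

```python
def bdp_bytes(link_bps: float, min_rtt_s: float) -> float:
    """Bandwidth-delay product in bytes."""
    return link_bps * min_rtt_s / 8


def rtt_with_full_buffer(min_rtt_s: float, buffer_in_bdp: float) -> float:
    """RTT when a buffer of buffer_in_bdp * BDP stays full.

    Draining k * BDP bytes at the link rate takes k * min_rtt, so the
    queuing delay simply adds k minimum RTTs.
    """
    return min_rtt_s * (1 + buffer_in_bdp)


if __name__ == "__main__":
    # The 50 Mbit/s, 30 ms path from the talk.
    print(bdp_bytes(50e6, 0.030))            # BDP in bytes
    print(rtt_with_full_buffer(0.030, 4.0))  # RTT with a full 4 x BDP buffer
```

For the 50 Mbit/s, 30 ms path the BDP is about 187,500 bytes, so a full buffer of four BDPs would push the RTT to about 150 ms, while a sender that caps its in-flight data near the BDP stays close to the 30 ms minimum.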

N

N

The bottleneck here is essentially a FIFO with a maximum depth of 1,000 packets, which is 12 milliseconds at this bandwidth. But the interesting thing here is that it's doing DCTCP- or L4S-style ECN marking on packets that had more than a 242-microsecond sojourn time, which is basically saying that once the queue gets to be 20 packets or more, there's a sort of step up, and you get ECN marks on packets for queues of that length or longer.
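The relationship between queue depth and sojourn time quoted here can be checked with a short calculation. The link rate of 1 Gbit/s and the packet sizes (1,500-byte packets for the buffer depth, 1,514-byte Ethernet frames for the marking threshold) are assumptions inferred from the quoted figures, not values stated in the talk.

```python
def sojourn_time_us(queue_pkts: int, pkt_bytes: int, link_bps: float) -> float:
    """Time in microseconds to drain queue_pkts packets at the link rate."""
    return queue_pkts * pkt_bytes * 8 / link_bps * 1e6


if __name__ == "__main__":
    LINK_BPS = 1e9  # assumed bottleneck rate
    print(sojourn_time_us(1000, 1500, LINK_BPS))  # full FIFO, in microseconds
    print(sojourn_time_us(20, 1514, LINK_BPS))    # marking threshold, in microseconds
```

Under these assumptions a full 1,000-packet FIFO corresponds to about 12 ms of queuing, and 20 packets to roughly 242 microseconds, matching the step-marking threshold described above.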

N

[...] is unable to bound the in-flight data in the way that we'd like in this experiment, in this chart. By contrast, if we look at DCTCP and BBR version 2, they were able to use those ECN signals to keep the queue right around the ECN marking threshold for smaller numbers of flows, and only at very large numbers of flows do you start to see them make excursions above that target range, with BBR v2 being slightly more aggressive in its probing and thus having slightly higher RTTs with larger numbers of flows. So that's the latency picture in this kind of experiment. If we look at the loss rate picture, it's even more dramatic.

I

N

N

So another property of BBR version 2 is that it has an explicit strategy for coexisting with Reno and CUBIC flows within a particular performance envelope, and this slide is just to illustrate the flavor of that. Obviously, if you're talking about doing a complete evaluation, it's a very large N-dimensional space with lots of tests you need to run, but this is just one to illustrate some of the key dynamics here. So this is a bulk throughput test where there's one CUBIC flow and one flow that's either BBR version 1 or BBR version 2.

It's the 50-megabit bottleneck with a 30-millisecond min RTT, and it's a three-second bulk throughput test with varying buffer depths here on the x-axis. We're showing the throughput of the BBR version 1 or version 2 flow, and basically CUBIC gets the rest. So we can see here that at lower buffer depths BBR version 1 grabbed, you know, 43 to 45 megabits, whereas BBR version 2, because it has an explicit strategy about how it's going to probe for bandwidth and bound its in-flight data, is able to have a throughput that's much closer to the approximate fair share at reasonably low buffer depths.

And, of course, as the buffers get deeper and deeper, CUBIC tends to build such enormous queues that the BBR throughput does tend to gradually fall off. But we expect that in the common case the buffers should tend to be around one BDP or two BDPs in well-provisioned networks, so we expect that the common case should be quite reasonable.

N

So another aspect that we wanted to highlight was that BBR version 2, because it uses loss as a signal and uses that to dynamically adapt the amount of in-flight data it's willing to maintain, has considerably lower loss rates than v1 in shallow-buffer situations. In this experiment, we're looking at the retransmit rate for an ensemble of bulk flows. Here, the link bandwidth is a gigabit and the min RTT is 100 milliseconds, so it's quite a big BDP, and it's a five-minute test. The buffer here is very tiny relative to the BDP.

It's about 2% of the BDP, but that can be quite representative of the situation you have if you build a high-speed WAN out of commodity switches with shallow buffers. This is the kind of scenario that the flows will need to deal with. Here we can see that CUBIC, by virtue of its design and sensitivity to loss, keeps the loss rate quite low. BBR version 1, because it is agnostic to loss and because of a couple of other factors, tends toward a 15% loss rate if there are large numbers of flows, and you can see that in the BBR version 1 line. By contrast, because BBR version 2's model is richer and includes loss as a signal to feed that model, it's able to keep the loss rates bounded in the region that is below its targeted loss threshold.

Was there a question that you wanted to ask? Okay, thank you.

N

So those are the lab tests that we wanted to run through to convey a flavor. Obviously the algorithm and the code are still a work in progress, and obviously more tests will need to be run, but we wanted to convey the flavor of where we're at, where we would like to have it, and the properties that we'd like to maintain and to add. So, a quick note on the status of the algorithm and the code.

N

N

We can have queue pressure that's higher than desired when there are large aggregates of BBR v2 flows. It's also the case that the ECN response is not really well tuned for very long RTTs, in the hundreds of milliseconds, and it's also not quite tuned for cases with more flows than slots in the BDP. I would note that both of those issues are sort of shared with DCTCP, which is also so far intended and deployed for cases within a data center.

N

So where are we in our deployment with this code? As I mentioned in March, we do have a global experiment running on YouTube for a few percent of users, and what we see so far is that it has much lower queuing delays than CUBIC, and even slightly lower than BBR version 1. It considerably reduces the packet loss rates versus v1 and gets them closer to CUBIC than v1.

We're also using this code internally in experiments between and within some Google data centers, and what we're seeing there is that BBR v2 has lower tail latency compared to the previous DCTCP-style congestion control that was deployed for TCP within Google data centers. Also, in our deployment and testing, we found a really interesting performance issue with the Linux ACK implementation that turned out to be a surprisingly high-impact issue for data center performance, which we can talk about offline.

But the basic flavor is kind of interesting: the Linux acknowledgement code does not just check to see if you've accumulated more than one MSS of unacknowledged data. It also wants to be able to advertise a receive window that's as big as the previous receive window it advertised, which essentially means that it very often ends up waiting for the application to read all the data out of the socket before it decides to send an acknowledgement advertising the same window. And I can see Matt frowning over here. That can sort of include the application in the RTT loop of the congestion control and cause some serious latency issues. Anyway, that was an interesting issue, and it's a common issue in the core TCP stack, but we happened to run into it during the testing of this transition. We can talk about it offline if you're curious.

Anyway, we're continuing to iterate, using both production experiments as part of the gradual rollout and also controlled lab tests. So, in conclusion, we've open-sourced a first alpha, or preview, release of BBR version 2. It's ready for folks to take it out for a spin in a research experiment context, and we obviously invite researchers to share ideas for test cases, test suites, or metrics that they think we should be looking at, and to share their test results.

E

Gorry Fairhurst. Thanks for bringing this here, because it's significantly different to the previous BBR instances and it seems to be heading in a really good way. How much depth have you on different experimental results in your labs? Because it looks like your points didn't have confidence intervals or anything, so I'm kind of wondering whether you did lots of different scenarios, different delays, different traffic mixes, or whether it was really just a first cut. Yeah.

N

I think I would characterize this as a first cut to give a sense of the flavor of the behaviors that we're looking for, the properties that we're looking for. And I agree and take your point that a congestion control needs a lot more experiments than the ones we showed on the slides before you can say, you know, that it's ready. Yes.

E

E

N

You mean the ECN-at-high-RTT question? Yeah, absolutely. I'm working with an intern right now to actually do experiments in this direction. I think my hunch is that what makes sense would be to include a response to ECN that is in the rate space, so that flows with different RTTs, if they're responding in the rate dimension, can all respond in a similar way despite having different RTTs.

The issue with long RTTs and a purely cwnd-based algorithm, which sort of happens immediately, is that the long-RTT flows, in the stream of ECN signals they get, will see an oscillation between ECN-marked and clear, and it can happen on the scale of milliseconds. But if your RTT is long, you know, if your RTT is 100 milliseconds, you may see a whole saga of up and down and up and down.

But that means that every RTT of yours has ECN marks, and so, using a classical-style algorithm, you do a multiplicative decrease every single RTT of your life until you converge down to a cwnd of two. And if you're sharing with a flow that has an RTT of 50 microseconds and it has a cwnd of [unclear], it's quite happy.
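The collapse described here can be sketched in a few lines. This assumes a classic response that halves the congestion window once per round trip whenever that round trip contained at least one ECN mark, and a floor of two packets; the function name and the starting window are hypothetical, and a DCTCP-style sender that scales its reduction by the marked fraction would behave differently.

```python
def rtts_to_floor(cwnd_pkts: int, floor_pkts: int = 2) -> int:
    """Count the round trips needed to reach the floor when every round
    trip carries an ECN mark and the window is halved once per RTT."""
    rounds = 0
    while cwnd_pkts > floor_pkts:
        cwnd_pkts = max(floor_pkts, cwnd_pkts // 2)
        rounds += 1
    return rounds


if __name__ == "__main__":
    # Hypothetical long-RTT flow starting at 1,000 packets.
    print(rtts_to_floor(1000))
```

Starting from 1,000 packets, such a flow is down to two packets within about ten round trips, which is why a response expressed in the rate dimension is attractive when flows with very different RTTs share a marking queue.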

N

Yes, so one of our next to-do items, now that the code is out there, is to update the Internet-Draft that currently describes v1 to cover v2. That is definitely something we agree is important, and we know it's particularly important for folks that are part of the open-source ecosystem, so yes, that's on our to-do list.

O

O

O

O

N

A

P

A

Q

N

Yeah, so it's referring to both the fact that it's expecting the ECN marks to happen at a low threshold of queue, and the fact that we are expecting the receiver to reflect the ECN marks on a sort of per-packet basis, rather than echoing the CE marks as having been experienced for an entire round-trip time, until that's acknowledged with the CWR bit. So it's essentially DCTCP-style ECN, yeah.

N

A

A

T

T

More or less. So, I guess everyone here knows what LEDBAT is, right? LEDBAT is a congestion controller that provides less-than-best-effort service. It essentially defines a queuing delay target T, right, and it increases and decreases the congestion window based on whether the measured queuing delay is above or below the target, all right? The queuing delay is inferred from the one-way delay experienced by the packets.
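For readers who want the window rule spelled out, here is a minimal sketch of the per-ACK update described in RFC 6817, where the window grows when the queuing delay is below the target and shrinks when it is above. The constant values, the function name and the way delays are passed in are choices made for this example; a real implementation also needs the base-delay history, current-delay filter and loss handling that the RFC specifies.

```python
TARGET_S = 0.100   # queuing delay target; RFC 6817 allows at most 100 ms
GAIN = 1.0         # RFC 6817 requires GAIN <= 1
MSS = 1460         # assumed segment size in bytes
MIN_CWND = 2       # minimum window, in segments


def ledbat_cwnd_update(cwnd: float, base_delay_s: float,
                       current_delay_s: float, bytes_acked: int) -> float:
    """One LEDBAT-style window update on receipt of an ACK (cwnd in bytes)."""
    queuing_delay = current_delay_s - base_delay_s
    off_target = (TARGET_S - queuing_delay) / TARGET_S
    cwnd += GAIN * off_target * bytes_acked * MSS / cwnd
    return max(cwnd, MIN_CWND * MSS)


if __name__ == "__main__":
    # Queuing delay of 50 ms (below target): the window grows.
    print(ledbat_cwnd_update(14600.0, 0.020, 0.070, 1460))
    # Queuing delay of 200 ms (above target): the window shrinks.
    print(ledbat_cwnd_update(14600.0, 0.020, 0.220, 1460))
```

Because the adjustment is proportional to the distance from the target, the controller backs off before a loss-based flow sharing the queue would see drops, which is what makes it less than best effort.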

T

U

T

OK, sorry. So what we are proposing here is a receiver-driven version of LEDBAT, right? The idea is that we're going to make a congestion controller that runs on the receiver, that is less than best effort, and that is inspired by LEDBAT; well, it's very close to LEDBAT++. That's why it would have been interesting for Praveen to present LEDBAT++ before this; I'm presenting first and he's presenting later, but that's fine, right?

So the idea here is that congestion control would run in the receiver, and the receiver will control the sender through the receive window. OK. One significant change is that we're going to measure the RTT instead of measuring the one-way delay, so we're going to estimate the queuing delay using the RTT rather than the one-way delay. So what are the motivations? Why do we want to do this? We have essentially three motivations, regarding deployment models.

T

One is that LEDBAT is widely used for distributing operating system updates, right? Some operating system updates are distributed through CDNs, and it has been observed that in many cases CDN surrogates do not support LEDBAT. Also, even if they did support it, there is no signal to convey which content must be distributed using TCP CUBIC and which could be distributed using LEDBAT, right? So the situation is that the operating system update gets uploaded to a CDN using LEDBAT, because the source actually supports that, but the distribution from the CDN surrogate to the client is not benefiting from LEDBAT, right?

If we have a receiver-based version of LEDBAT, this could enable a LEDBAT transfer from the surrogate, which is oblivious to LEDBAT, to the client, which will actually be doing the less-than-best-effort congestion control. And there's a similar situation, of course, when you have something like a proxy or a firewall or something like that, which terminates the TCP connection, right?

T

R

T

So, let's suppose in this case that you have a mobile phone, you're watching a video, and then you receive a WhatsApp call. In general, when you're watching the video you use TCP, and if you're downloading files or whatever, that's also using TCP. Then, when a phone call comes in, you may want to start using less than best effort for your background download, all right?

So if you enable the client to determine which flows are LEDBAT and which flows are regular TCP, then you empower the client to manage its incoming link capacity by assigning different congestion controllers to different flows, right? So these are the three main use cases that we have in mind: CDN distribution, proxies, and enabling the user to define its preferences by setting different congestion controllers. OK.

T

So this is, in general, about the algorithm; this piece probably falls more into LEDBAT++. The goals for the design are essentially what I assume the original LEDBAT design goals were. That is, if you have some latency-sensitive, delay-sensitive traffic like voice while you're using LEDBAT, the delay that LEDBAT adds should be bounded by some target. And if you have several LEDBAT flows, they should be fair to each other.

T

So the algorithm that we're proposing is essentially this: we use the round-trip time in order to estimate the queuing delay. We measure the base round-trip time as the historical minimum, and we measure the current round-trip time as a filter of the last few samples. From that we estimate the current queuing delay. We define a target for what the queuing delay should be, and we essentially build an additive increase, multiplicative decrease based on how much you are above the target.
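
The estimation and window update just described can be sketched roughly as follows. This is only an illustration under stated assumptions: the class name, the 60 ms target, the 1460-byte segment size, the four-sample minimum filter, and the proportional decrease factor are all hypothetical choices made for the example, not values given in the talk.

```python
from collections import deque


class DelayBasedWindow:
    """Toy RTT-based additive-increase, multiplicative-decrease controller."""

    def __init__(self, target=0.060, mss=1460, min_factor=0.5, filter_len=4):
        self.target = target            # assumed queuing-delay target, seconds
        self.mss = mss                  # assumed segment size, bytes
        self.min_factor = min_factor    # assumed bound on a single decrease
        self.base_rtt = float("inf")    # historical minimum RTT
        self.recent = deque(maxlen=filter_len)  # last few RTT samples
        self.window = 10 * mss          # assumed initial window, bytes

    def queuing_delay(self):
        # Current RTT: a minimum filter over the last few samples (assumption).
        return min(self.recent) - self.base_rtt

    def on_rtt_sample(self, rtt):
        self.base_rtt = min(self.base_rtt, rtt)
        self.recent.append(rtt)
        excess = self.queuing_delay() - self.target
        if excess > 0:
            # Decrease more the further the delay is above the target.
            factor = max(self.min_factor, 1.0 - excess / self.target)
            self.window = max(self.mss, int(self.window * factor))
        else:
            # Additive increase of one segment per update (simplification).
            self.window += self.mss
        return self.window
```

The sketch updates the window on every sample for brevity; a real controller would limit the decrease to at most once per round-trip time, as discussed later in the talk.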

T

T

So this was mostly about LEDBAT++, that is, the congestion control itself. Now I'm going to dive deeper into the details of how you do it from the receiver side. We're going to do this by controlling the receive window. The first observation is that the receive window is already being used for something: you use it for flow control. The idea here is that you will calculate a congestion window on the receiver.

T

T

Then there is the interaction with the sender's congestion control. The window actually used by the sender is the minimum of the congestion window and the receive window. Because the receive window is carrying the LEDBAT-calculated window, and LEDBAT will be more aggressive in reducing, it is likely to have a smaller window than normal congestion control. What will usually happen is that the LEDBAT congestion control takes over and is the one that prevails, because it will be the one expressing a smaller window.
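
A minimal sketch of this interaction, with hypothetical function names. That the receiver advertises the smaller of its flow-control window and its LEDBAT-calculated window is an assumption made here for illustration; the talk only states that the receive window carries the LEDBAT window and that the sender uses the minimum of its congestion window and the receive window.

```python
def advertised_window(flow_control_window, rledbat_window):
    """Window the receiver puts on the wire (assumed: the smaller of the two)."""
    return min(flow_control_window, rledbat_window)


def sender_effective_window(sender_cwnd, receive_window):
    """The sender is limited by the smaller of its cwnd and the receive window."""
    return min(sender_cwnd, receive_window)
```

For example, with a sender congestion window of 64000 bytes, a flow-control window of 100000 bytes, and a LEDBAT window of 20000 bytes, the sender ends up limited to 20000 bytes, so the receiver-side controller prevails.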

T

So the other thing that we need to take care of, if we try to do this, is that we should avoid shrinking the window. Because we have this multiplicative decrease, what may happen is that at some point we need to express a window that is half its previous value. In most cases, the amount by which you need to reduce the window is likely to be more than the number of bytes that you have

T

received in the packet that you will send the ACK for. So if you actually reduce the window by half right away, you will end up shrinking the window, which is not recommended. So essentially, what we're going to do is drain enough packets from those in flight to accumulate enough space to be able to reduce the window without shrinking it. This can be done because you don't do a multiplicative

T

decrease more than once per round-trip time. Because you reduce the window at most once per round-trip time, you have enough packets to drain in order to then be able to express whatever smaller window you want. So that should be feasible.
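
The drain-before-reduce idea can be sketched as below. This is a simplified model under assumptions: function names are hypothetical, every ACK is taken to cover one 1460-byte segment, and "not shrinking" is modelled as never moving the right edge of the window (acknowledged bytes plus advertised window) backwards.

```python
def next_advertised_window(prev_adv, bytes_acked, desired):
    """Step toward `desired` without pulling back the right window edge."""
    # Smallest window that leaves the right edge where it was.
    floor = max(prev_adv - bytes_acked, 0)
    return max(desired, floor)


def drain_to(prev_adv, desired, segment=1460):
    """Return the final window and the right edge seen after each ACK."""
    acked, adv = 0, prev_adv
    edges = [acked + adv]
    while adv > desired:
        acked += segment
        adv = next_advertised_window(adv, segment, desired)
        edges.append(acked + adv)
    return adv, edges
```

Halving a 20000-byte window this way takes seven ACKs in the model, and the right edge never decreases along the way.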

And then the other problem, which Mirja actually suggested when I was talking to her about this a while ago, is regarding the window

T

scale option. Window scale is, as you are probably aware, a change of the units in which you express the receive window. Small window scale values result in units that are smaller than the usual maximum segment size. So that's not a big problem, because usually you want to decrease or increase your window size by one MSS, more or less.

T

If you have values of 12 or larger, which basically means 12, 13, and 14, a change of one unit in the receive window will be more than one MSS. That may generate problems, in the sense that your congestion control is now much coarser, because the units in which it can express itself are much larger than one MSS.
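
To make the coarseness concrete, here is a small sketch of the arithmetic. The function names are hypothetical, and rounding the window down to a multiple of the unit is an assumption made for the example. The window scale shift count is limited to 14, and one unit of the 16-bit window field represents 2 to the power of the shift count bytes.

```python
def window_unit(shift):
    """Bytes represented by one unit of the window field for a given shift."""
    if not 0 <= shift <= 14:
        raise ValueError("window scale shift must be in 0..14")
    return 1 << shift


def advertisable(window_bytes, shift):
    """Largest expressible window not exceeding window_bytes (rounds down)."""
    return (window_bytes >> shift) << shift
```

With shift counts of 12, 13, and 14 the unit is 4096, 8192, and 16384 bytes respectively, so a controller that wants a window of ten 1460-byte segments (14600 bytes) can only express 12288 bytes when the shift count is 12.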

So we have made a set of measurements.

T

B

T

Doing this has a fundamental problem: you include the queuing delay on the reverse path. I don't think there's much we can do about this, or at least I haven't come up with anything we can do about it. This basically means that you will be overly conservative, in that you will also react to congestion on the reverse path. That's it. And we need to deal with a few other issues in order to make this work, in particular a fairly common case.

T

I guess it will be pure receivers, in the sense that you're only receiving packets. If you're only receiving packets and you want to measure the round-trip time, you cannot send data and match it with the ACK, because you're only receiving packets and sending ACKs. In order to do that, we will use the timestamp option to match ACKs that we have sent with data packets that are coming back. So that should work.
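
A minimal sketch of this matching, assuming each ACK carries a distinct timestamp value. The class and method names are hypothetical. The receiver remembers when it sent an ACK carrying a given TSval and computes a sample when a data packet arrives echoing that value in TSecr.

```python
class ReceiverRttMeter:
    """Measure RTT at a pure receiver using the TCP timestamp option."""

    def __init__(self):
        self.sent_at = {}  # TSval placed in an outgoing ACK -> local send time

    def on_ack_sent(self, tsval, now):
        self.sent_at[tsval] = now

    def on_data_received(self, tsecr, now):
        # A data packet echoing our TSval closes the loop: ACK out, data back.
        sent = self.sent_at.pop(tsecr, None)
        return None if sent is None else now - sent
```

An echoed value that was never recorded, or that has already produced a sample, yields no measurement.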

T

The other situation that we may have, because we are handling pure receivers, is that, because we are going to match an ACK with a packet, we may see an artificially increased RTT because the source is not sending packets. We have identified two reasons why the source may not be sending packets.

T

One is because it has no data to send. In this case, either you don't care, because you're not managing anything since there is no traffic, or this happens once in a while. For instance, you are sending blocks of data, and every time a block ends you have some period of time without data. The way we deal with this is essentially that we filter. Instead of using only the current round-trip time, you apply a filter over the last 10 packets. So you probably get rid of that sample

T

that is the outlier with this artificially increased RTT. So that should probably work.
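
One way such a filter could discard the inflated sample is sketched below. The talk only says that a filter over the last 10 packets is used; taking the minimum of those samples is an assumption made here, and the class name is hypothetical.

```python
from collections import deque


class RecentRttFilter:
    """Report the minimum of the most recent RTT samples (assumed filter)."""

    def __init__(self, size=10):
        self.samples = deque(maxlen=size)

    def update(self, rtt):
        self.samples.append(rtt)
        return min(self.samples)
```

A single sample inflated by an idle sender does not change the output as long as at least one recent sample is unaffected, while a sustained increase shows up once the window of 10 samples has turned over.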

The other case where the sender may not be able to send is when you are clamping down the receive window. If you are artificially telling the sender that it cannot send because you're reducing the receive window, it won't be able to send, and at that point the RTT will be increased because you're not allowing it to send.

T

But that's fine, because you actually know when you're doing that, so you can simply avoid measuring while you're reducing the receive window. So you can accommodate this. The other problem that we have encountered while doing this concerns the granularity of the timestamp values. The timestamp values, depending on the clock that you use for them, have a given granularity.

T

You may end up with multiple packets going out with the same timestamp value. That makes it harder to match, because you don't know which one matches with which one. Because we don't really need to measure all the packets, what we simply do is measure the first one: we take the first packet that we send with a given timestamp value and the first one that we receive with the same value.

B

T

We drop all the other samples that we have, and that gives us a proper measurement of the RTT.
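
The first-sent, first-received rule can be sketched as follows. The class and method names are hypothetical, and keeping a set of already-measured timestamp values is one possible way to drop the remaining samples, chosen here for illustration.

```python
class FirstMatchRttMeter:
    """Use only the first ACK sent and first echo received per timestamp value."""

    def __init__(self):
        self.first_sent = {}   # TSval -> time the first ACK with it was sent
        self.measured = set()  # TSvals that already produced a sample

    def on_ack_sent(self, tsval, now):
        if tsval not in self.measured:
            # Later ACKs sharing the same coarse TSval do not overwrite this.
            self.first_sent.setdefault(tsval, now)

    def on_data_received(self, tsecr, now):
        sent = self.first_sent.pop(tsecr, None)
        if sent is None:
            return None  # unknown value, or a repeat echo: sample dropped
        self.measured.add(tsecr)
        return now - sent
```

With two ACKs sent at times 1.0 and 1.5 under the same timestamp value and echoes arriving at 2.0 and 2.5, only the pair (1.0, 2.0) produces a sample.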

So, more design choices. Inter-LEDBAT fairness has been addressed using multiplicative decrease. There is a paper that explains why this is the case; I guess it is fairly well understood. On reacting to packet loss, what we do is similar to LEDBAT: we do a multiplicative decrease.

T

The coefficients for the multiplicative decrease for delay and for loss do not have to be the same. I mean, we actually