►

From YouTube: ANRW-DNSandSecurity

Description

DNSANDSECURITY meeting session at ANRW

A

So

this

is

the

session

on

DNS

and

security.

It's

a

fairly

packed

agenda.

We

have

an

invited

talk

on

dragonblood,

discussing

some

problems

with

WPA

three

that

will

hopefully

be

a

very

much

interest

to

the

security

and

Qatar

a

few

people

in

the

room,

and

we

have

several

talks

on

DNS,

both

with

the

lens

on

privacy,

performance

and

security.

So

without

further

ado

like

to

introduce

Matthew

fennhoff,

he

is

a

postdoc

at

NYU.

Let's

give

him

a

round

of

applause

as

you're

started,.

B

So

thank

you

for

the

introduction,

so

I'm

going

to

be

talking

about

WPA

three

on

specifically

about

the

dragonfly

handshake

that

is

used

now

in

this

protocol

on

this

dragonfly

handshake.

It's

also

used

in

EEP

PWD

on

this

research

was

done

in

collaboration

with

al

Ronan.

So

to

give

a

very

quick

background,

dragonfly

is

a

password

authenticated

key

exchange,

which

means

it

provides

mutual

authentication.

B

It

negotiates

a

session

key

which

you

can,

after

the

handshake

used

to

encrypt

actual

communications

and,

more

importantly,

it

is

a

handshake

that

provides

forward

secrecy

on

it,

defense

against

dictionary

attacks.

Now,

in

the

case

of

dragonfly,

there

is

no

protection

against

a

server

compromise.

So

concretely,

let's

say

in

the

case

of

WPA

3,

if

not

occur,

would

somehow

get

access

to

the

access

points.

B

So

the

handshake

consists

of

two

main

phases

not

going

to

go

into

detail.

Basically,

we

first

have

the

commit

phase

where

the

actual

session

key

is

being

negotiated,

and

then

there

is

at

least

in

the

case

of

Wi-Fi,

a

confirmed

phase

that

confirms

that

both

parties

indeed

used

the

same

password

on

that

day,

negotiated

the

same

session

key.

The

important

question

here

is:

how

is

this

password

converted

to

a

group

element

and

I'm,

going

to

start

with

a

simple

case

here,

where

we

are

using

so-called

mod

p

groups?

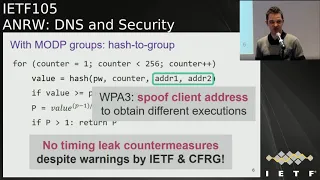

B

So

an

intuitive

and

naive

way

to

convert

the

password

into

a

group

element

is

to

simply

take

the

hash

of

the

password

in

the

case

of

Wi-Fi.

We

also

combine

it

with

MAC

addresses

of

the

client

on

the

server,

so

in

other

words,

we

include

the

identities

of

the

peers.

We

then

take

the

output

of

the

that

hash

value.

We

perform

some

calculations

to

get

a

p-value.

B

B

This

would

be

the

intuitive

way

to

convert

the

passwords

into

a

group

elements,

but

there

is

one

thing

that

is

missing

here.

The

problem

is

that

our

hash

output

here

our

value

it

can

be

bigger

than

the

prime

of

the

group

that

is

being

used,

and

in

this

case

the

calculations

that

we

did

here

wouldn't

be

valid.

So

how

do

we

avoid

this?

Well,

the

way

that

it

was

decided

to

do

this

for

dragonfly

was

to

simply

include

an

if

test

here.

If

the

value

is

bigger

than

the

prime,

then

well,

we

try

again.

B

In

other

words,

we

include

a

counter

here.

We

always

incremented

by

one.

Until

we

get

a

hash

output

that

is

bigger

than

the

prime

and

then

we

can

continue

now,

of

course,

this

leaks

time,

there's

a

side

channel

here

and

in

fact,

an

in

mailing

lists

of

the

ietf

on

the

CFR

gee

people

warned

about

this.

They

said

this

doesn't

look

good.

B

B

One

thing

that

makes

this

timing

attack

a

bit

more

interesting

here

is

that

we

can

pretend

to

be

clients

a.

We

can

then

measure

how

many

iterations

it

took

to

find

the

password

and

because

the

identities

of

the

clients

are

included

here

in

the

case

of

Wi-Fi,

we

can

then

spoof

another

MAC

address.

We

can

try

to

connect

with

the

access

points

and

then

we

can

see

how

many

iterations

are

then

to

accusing

another

MAC

address.

Then

we

can

spoof

and

we

can

spoof

a

lot

of

clients

on

each

time.

B

So

we

tried

to

dis

attack

by

setting

up

Wi-Fi

access

point,

so

it

wp8

three

access

points

on

a

bit

of

older,

raspberry

pi.

The

reason

we

pick

the

raspberry

pi

here

is

because

it's

CPU

is

actually

similar

to

the

one

of

a

professional

Wi-Fi

access

point

and

when

we

then

the

dist

IMing

measurements,

we

found

out

that

indeed,

these

differences

can

be

easily

measured

simply

over

Wi-Fi.

B

So

you

can

see

the

example

here

where

we

have

the

blue

full

line.

That

corresponds

to

the

timing

response.

If

the

algorithm

only

takes

one

iteration,

then

we

have

the

case

where

it

takes

two

iterations,

three

iterations

and

so

on.

In

practice,

we

found

that

against

our

target.

If

we

make

75

timing

measurements,

then

we

can

accurately

tell

how

many

iterations

it

did.

So

this

is

the

case

against

WPA

3

and

if

we

would

perform

a

similar

attack

against

the

EEP

PWD

protocol

against

so-called

IWD

clients,

which

is

a

Wi-Fi

client

in

linux.

B

B

So

we

now

know

that,

there's

a

timing

leak,

we

can

derive

the

number

of

iterations

that

were

being

used,

but

now

the

question

is:

does

this

really

leak

important

information?

In

other

words,

can

we

abuse

this

to,

for

example,

perform

a

dictionary

attack

on

the

answer

is

yes,

we

can.

Let

me

take

the

following

example.

We

are

say

again

attacking

a

WPA

three

access

point.

We

spoofed

a

client's

MAC

address

of

a

and

we

measured

that

in

this

case

the

access

point

took

two

iterations

to

derive

this

elements.

B

Well,

what

we

can

then

do

is

we

can

guess

some

passwords

and

our

example

here

we're

trying

three

different

passwords

and

we

can

then

simulate

this

method

that

converts

the

password

to

a

group

map

element

we

convert.

We

can

simulate

it

offline

on

our

own

PC

and

we

can

notice

here

that,

for

example,

for

password

one,

it

only

uses

one

iteration

which

doesn't

correspond

with

our

measurements,

so

we

can

exclude

the

password

based

on

that

on

the

other

two

passwords

they're

still

possible.

B

So

then

we

can

spoof

know

MAC

address

B.

We

can

again

measure

how

many

iteration

it

takes

in

a

real

world.

We

can

compare

this

to

our

simulated

results

and

we

can

continue

this

way

on

excludes

passwords

that

don't

match

our

observation,

and

we

can

continue

doing

this

until

we

uniquely

determines

the

the

password

that

is

being

used

so

in

this

example

its

password

three

that

matches

our

observations

and

in

general.

B

B

B

Unfortunately,

dragonfly

also

supports

rain

walkers

and,

interestingly

initially,

we

didn't

look

at

his

brain

pool,

curse

because

dragonfly

supports

of

a

lot

of

parameters.

So

it's

hard

to

analyze

every

possible

scenario,

but

the

reason

we

did

look

at

brain

pool

curse

is

because,

after

our

initial

disclosure

to

the

Wi-Fi

Alliance,

they

privately

created

some

recommendations

on

how

to

avoid

our

attacks

and

in

those

recommendations

they

said.

Ok,

you

can

use

brain

pool

curves

they

are

safe

to

use.

There

are

no

timing,

attacks

against

them.

B

Unfortunately,

when

we

check

that

there's

bad

news,

actually,

if

you

do

use

brain

pool

curves,

you

are

vulnerable.

So

here

we

also

fall

back

to

the

typical

security

advice.

Don't

write

recommendations

on

security

advice

privately,

but

we

should

all

know

that

already

so.

I

want

to

briefly

give

some

insight

into

why

these

brain

pool

curves

also

have

timing

leaks.

So

to

do

this

I'm

going

to

do

the

same

thing

like

I

did

with

the

mod

P

group

I'm,

going

to

briefly

explain

how

the

algorithm

works

to

convert

a

password

into

an

elliptic

curve.

B

Point

and

I'm

then

going

to

explain

where

this

timing

information

is

leaked.

So

if

you

would

want

to

hash

passwords

to

an

elliptic

curve

point,

a

naive

ID

would

again

me

to

just

take

the

passwords.

This

case,

we

again

combine

it

with

the

MAC

addresses

of

the

client

and

the

access

point.

We

take

the

hash

output

as

the

x

value

and

then

we

just

find

the

corresponding

Y

value.

I,

don't

use

that

as

the

elliptic

curve

point,

but

you

may

probably

now

already

have

one

remark

about

this,

namely

there's

not

always

a

solution

for

why?

B

So

what

can

we

do?

Well,

the

first

thing

we

can

do

is

we

can

calculate

this

value

here,

which

is

basically

Y

squared

and

we

can

see

if

it

is

a

so-called

quadratic

residue.

If

this

value

is

a

quadratic

residue,

then

we

know

that

the

solution

for

Y

exists

to

handle

the

case.

If

there

is

no

solution,

we

can

just

perform

a

loop.

In

other

words,

we

include

the

counter

in

our

values

that

are

being

hashed

on.

We

continue

executing

loops

until

we

found

a

solution

where

we

can,

where

the

square

root

exists.

B

Now,

there's

one

problem

with

this,

and

this

is

that

now

different

passwords

again

have

a

different

execution

time

now.

Luckily,

in

the

case

of

elliptic

curves,

they

listen

to

the

warnings

and

they

include

it

suggested.

The

defense

of

always

executing

K

iterations,

no

matter

when

the

password

was

found,

I,

don't

practice

here.

They

generally

use

a

value

of

k

equal

to

40.

B

There

is

one

other

extra

defense

that

they

included

here,

which

is

that

once

the

password

has

been

found,

they

execute

these

extra

iterations

based

on

a

random

password.

Now

this

is

just

defense

in

depth

in

case

that

there

is

something

wrong

with

the

code.

That's

the

best

way

to

explain

that

for

now

now

what

is

the

problem

here?

The

problem

is

quite

similar

to

the

mod

P

case,

so

maybe

some

of

you

can

already

figure

it

out.

The

problem

is

again

here.

B

This

hash

value

that

we

get

here

as

output

can

be

bigger

than

the

prime

that

is

being

used

in

the

scripted

graphic

group.

What

was

the

solution

here?

Well,

they

again

just

say

that

if

the

value

is

bigger

than

the

prime

just

go

to

the

next

loop-

and

you

can

see

the

problem

here-

the

problem

is

that

if

this

value

is

indeed

bigger

than

the

prime,

then

we

don't

do

these

quadratic

residue

tests

and

we

again

get

different

execution

times

now.

B

The

interesting

thing

here,

if

we

use

NIST

curves,

the

probability

of

this

condition

being

true,

is

very

low,

but

with

rain

pool

curves,

this

has

a

probability

of

say

between

10,

between

20

to

close

to

50

percent,

depending

on

the

specific

curve

you're

using

on.

In

that

case,

no,

the

quadratic

quadratic

test

may

be

skipped

so

again

we're

in

trouble.

Now.

B

The

timing

attack

against

this

case

is

a

bit

less

straightforward,

because

we

do

still

have

these

extra

iterations

that

are

being

executed,

and

on

top

of

that,

these

extra

iterations

that

are

executed

after

the

passwords

element

has

been

found.

They

are

based

on

a

random

password

so

to

quickly

illustrate

the

impact

of

this.

In

practice.

Let's

say:

I

perform

some

executions

using

the

same

MAC

address

then,

in

this

case

here

green

illustrates

that

I

executed.

The

quadratic

residue

test

in

a

loop,

the

small

bar

here,

illustrates

that

output

was

bigger

than

the

prime.

B

So

there's

no

quadratic

test

say

that

after

four

iterations

we

found

the

password.

So

at

that

point,

extra

iterations

are

executed

based

on

a

random

password.

So

we

get

some

amount

of

extra

time

that

the

algorithm

takes.

If

you

now

execute

the

algorithm

again,

we

always

have

that

the

first

iterations

they

are

identical

because

they're

based

on

the

real

password,

but

these

extra

iterations

they

use

a

random

password.

So

each

time

we

also

get

a

random

amount

of

extra

time

that

is

being

added

to

the

execute.

B

For

example,

if

the

password

is

immediately

found

after

the

first

iteration,

you

get

a

lot

of

variance,

but

if

the

password

was

found

say

after

20

iterations,

you

get

a

low

amount

of

variance

on

top

of

that.

The

average

execution

time

also

still

leaks

information,

because

that

depends

on

the

number

of

iterations

needed

to

find

the

password

and

how

many

of

those

iterations

had

a

hash

output

bigger

than

the

prime.

So

there

was

no

quadratic

residue

test.

B

So

again,

we

have

the

same

case

here

that

this

forms

a

signature

of

the

password,

and

we

can

use

that

in

an

offline

dictionary

attack,

and

if

we

perform

this

test

again

against

a

raspberry

pi.

We

again

notice

that

this

timing

information

can

be

measured

over

Wi-Fi

in

the

case

of

brain

pool.

We

need

to

make

more

measurements

or

MAC

address,

because

the

timing

differences

are

smaller,

but

it's

still

feasible

to

do

this

in

practice

and

a

few

sorry

in

a

few

hours.

B

So

another

possibility

here

and

I'm

going

to

cover

this

very

briefly.

Instead

of

doing

timing

attacks,

we

can

also

do

cache

attacks.

Basically,

we

have

the

same

algorithm

as

before.

We

can

basically

use

flush

plus

reload

to

detect

when

the

password

was

found.

We

can

do

the

same

thing

with

brain

pool.

We

can

use

flush

unreal

out

to

detect

when

the

hash

output

was

bigger

than

the

prime

now

I'm

not

going

to

discuss

much

more

because

I

don't

have

time

for

this.

We

actually

found

a

lot

more

interesting

things.

We

also

found

some

implementation.

B

Specific

vulnerabilities

and

EEP

PWD

on

the

one

I

want

to

highlight

is

that

if

you

use

bad

randomness

in

dragonfly,

then

you

can

recover

the

plaintext

password.

So

that's

one

thing

to

take

into

account.

Maybe

if

you

design

a

handshake

like

this

I,

think

it's

good

to

also

discuss

what

would

be

the

impact

if

there

is

a

flawed

source

of

randomness.

B

We

also

found

a

text

specific

to

Wi-Fi,

but

I'm

not

going

to

mention

them

here,

and

the

interesting

thing

here

is

that.

Finally,

the

Wi-Fi

standard

is

now

being

updated

to

use

a

constant

time

algorithm

to

find

the

password

elements,

and

maybe

this

will

be

included

in

WPA,

3

I'm,

not

sure,

but

at

least

on

the

Wi-Fi

on

the

altitude

11

group.

They

are

working

on

it.

So

with

that

I'd

like

to

conclude

my

talk,

thank

you

for

your

attention.

A

C

The

first

two

attacks

you

showed

looks

like

they

could

be

easily

beat

well

ative

lee

simply

worked

around

by

just

by

redesigning

exactly

how

the

code

works

by

iterating

as

many

times

and

always

performing

the

the

the

quad

the

QR

test,

even

if

P

is

greater

than

if

the

point

is

greater

than

P

have.

Are

your

other

issues

that

you

haven't

addressed?

B

B

But

the

first

problem

is

that

this

is

very

tedious

in

practice,

so

there's

a

high

chance

of

developers

from

accidentally

still

introducing

a

direct

cache

attack

or

a

timing

leak,

so

I

would

not

recommend

that

at

all

the

other

problem

is

that

a

lot

of

times

the

Wi-Fi

handshake

is

offloaded

to

the

Wi-Fi

chip

itself,

which

is

which

has

which

is

basically

resource-limited

and

then

always

executing

40

iterations.

It's

just

way

too

costly.

If

you

wouldn't

recommend

it,

what

solution

would

you

recommend?

A

D

Okay,

thank

you

for

the

nice

introduction

and

welcome

to

my

talk.

So

my

name

is

Tony

I'm,

currently

an

assistant

professor

at

the

UC

Irvine,

and

this

is

a

joint

work

with

my

causers

from

Shanghai

University

for

the

universities

in

China

and

UT

Dallas,

and

actually

one

of

the

causer

as

in

do

I

sit

in

there.

So,

if

you

guys

later

has

have

questions

regarding

our

talks,

you

can

come

to

us

during

the

break.

So

let's

get

it

started.

So

the

topic

of

this

talk

is

about

the

D

s,

security

and

let's

first

give

some.

D

D

So

typically

you

work

out

your

recursive

resolver

and

then

the

recursive

resolver

will

handle

all

those

resolution

process

for

you

like

going

to

the

different,

authoritative

servers

like

the

to

the

name,

server

top-level

domain

name

server

and

a

second

level

to

my

name,

is

over

to

cut

the

IP

address

of

you

to

me

and

then

give

back

to

the

client.

So

when

you

have

a

contract

with

ICP

are

typically,

they

will

give

you

a

default

recursive

resolver

for

you

to

use,

but

now

it

turns

out

and

one

more

users

prefer

to

use

is

public.

D

The

answer

is

over.

We

have

Google,

we

have

open.

Yes,

we

also

have

called

flare.

They

are

very

good,

so

I

believe

them.

The

major

three

reasons

for

the

users

to

switch

the

public

theater

observers

could

be

the

performance.

Could

it

be

the

battery

security

and

also

the

support

for

the

t?

As

extensions

turn

out

to

be,

may

be

better,

so

the

security

issues

we

investigate

English

talk

is

that

say

as

a

users,

you

choose

Public

DNS

resolvers

and

you

won

this

publicly

as

resolvers

to

handle

your

request.

D

You

still

send

that

dooming

request

the

to

this

resolver,

but

there

are

some

unpassed

middleboxes

that

can

see

your

request

and

assume.

Uts

request

is

not

encrypted.

So

what

can

really

happen?

And

in

particular

we

look

into

this

specific

case,

say:

there's

a

guy

on

a

path

and

basically

intercept

you

OTS

request

and

then

redirected

to

one

alternative

resolver

and

that

this

alternative

resolver

to

handle

the

resolution.

D

So

then

say

the

public

das

Google

will

be

kicked

out

completely

from

his

picture,

and

this

authoritative

resolver

well,

do

the

query

and

the

response

Hannity

and

I

finally

gave

the

request

to

the

user.

So

that's

the

limb

problem

when

we

look

into

in

this

work-

and

it

turns

out

this

kind

of

a

request.

D

So

we

found

that

there

are

four

types

of

it

potential

interceptors

doing

this

network

provider.

Isp

is

only

one

of

them:

censorship.

Fair.

Also

doing

that

now

there

have

have

already

been

some

reports.

Talking

about

that

and

then

have

our

software

now

well

doing

that,

and

also

Enterprise

proxies

are

doing

that

as

I

example

for

the

SPE

we

found

there

has

been

some

reports

and

news

about

these

practices,

and

actually

this

type

of

middleboxes

is

named

as

transparent,

yes,

proxy

by

those

parties.

D

So

it's

kind

of

to

be

something

known

to

the

community,

but

in

this

work

we

try

to

do

a

large-scale,

more

comprehensive

analysis

instead

of

doing

individual

news

reports.

So

that's

the

main

contribution

of

this

work.

Okay,

so

basically

the

two

main

questions

we

want

to

answer

in

this

research

is

first,

how

prevalent

is

this

approach

is

practice?

How

prevalent

is

the

as

interception

and

second,

what

are

the

characteristics

of

the

tea

interceptions?

Was

their

strategy

and

what

do

they

really

like

in

practice?

So

let's

first

come

to

the

threat

model.

D

So

actually,

in

a

previous

example,

we

only

introduced

one

way

to

do

that.

Yes,

interception

and

it

turns

out

there

are

a

few

more

ways

to

do

that.

So,

let's

first

come

to

our

basic

picture

say

we

have

those

five

parties.

We

have

a

client,

Public

DNS

and

the

authoritative

server.

They

are

the

normal

parties

handling

you

audience,

requests

and

responses,

and

then

now

we

have

found

has

device

and

then

one

alternative

resolvers

likely

to

belong

to

a

same

owner

of

this

on

hosta

on

pass

device.

D

So

when

the

on

pants

device

doesn't

do

anything

anomalous,

anything

suspicious

either

shoot

just

a

forward.

Your

request

that

to

the

public

dns

and

like

the

public,

the

s

to

handle

everything.

So

the

bad

things

happen

when

this

some

positive

eyes

try

to

change

your

a

request.

So

the

first

example

we

look

into

eases

request

retraction.

So

in

that

case

the

unpassed

device

were

simply

block.

You

request

to

public

TS,

of

example,

from

google

and

then

instead

either

word.

D

I

mean

whitter

actual

request

to

its

own

alternative,

resolver

and

then

daughter,

deliveries

over

well

duty

resolution

and

response

handling,

actually

there's

another

case

for

the

TS

interception.

So

in

that

case

the

unpassed

device

were

replicated

requested

to

different

places.

So

first

you'll

request

the

worst

still

go

to

the

public

dns

and

then

gather

resolved.

In

the

meantime,

damned

past

device

will

copy

the

same

requests,

an

issue

that

true

to

his

own

alternative

resolvers.

D

So

from

the

prospective

authoritative

server,

there

are

be

two

requests

and

then

two

responses

were

go

to

the

clients

and

typically

the

first

one

go

to

the

client

will

be

cached

and

used

by

the

client

and

there's

also

a

third

category

of

TS

interception.

So

in

that

case

the

request

to

public

TS

is

still

blocked

and

then

the

request

or

basanta

2.30,

authoritative

resolver.

D

But

when

this

happens,

the

alternative

resolver

stops

from

being

from

ascending

your

request

that

you

do

certain

ative

server,

but

instead

you

it

work

directly,

give

you

the

response

to

the

to

the

to

the

clients.

So,

in

the

end,

we

are

looking

to

those

three

types

of

issues

during

TS

interception

and

next

let

let

us

take

a

look

at

the

methodology.

We

try

to

detect

those

kind

of

das

interception

practices

so

actually

from

the

previous

three

examples.

D

You

may

already

have

some

idea

to

detect

this

because

say

if

you

are

able

to

control

the

clients-

and

you

are

also

able

to

control

some

other

authoritative

nameservers

and

you

are

able

to

direct

your

client

to

send

them

requests

to

your

own,

authoritative

servers

and

based

and

by

looking

to

the

differences

between

the

requests,

the

patterns

from

the

public,

the

ESRI

servers

and

not

arterial

resolvers.

You

are

able

to

discern

those

cases.

D

The

main

reason

is

like

if

you,

if,

by

looking

to

the

source

IP

address

of

the

requests

that

go

into

associative

servers

if

the

source

IP

address

does

not

really

belong

to

you,

for

example,

Google

belongs

to

something

you

have

no

idea

about

nothing

relevant

to

Google,

and

then

you

can

figure

out.

There

may

be

some

issues.

We

see

that

yes

resolution

process,

so

actually

this

is

what

we

do.

D

We

do

this

and

to

end

data

collection

and

the

comparison

we

do

control

some

clients

and

item

to

send

a

large

number

of

requests,

and

then

we

also

can

show

some

associative

servers

and

to

receive

those

requests

and

then

do

some

comparison.

But

still

there

are

two

major

challenges

we

need

to

address.

First,

how

can

we

gather

those

large

number

of

the

vantage

points?

I

mean

those

middle

boxes.

Some

of

them

are

very

close

to

the

clients.

D

So

if

you

want

to

observe

in

novel

of

those,

yes

interceptions

will

lead

an

odd

number

of

1h

points,

so

we

actually

leverage

the

two

platforms.

The

first

actually

comes

from

proxy

rack,

which

is

a

Sox

residential

proxy

networks.

Actually,

as

a

client,

a

customer,

you

can

buy

it

services

and

it's

a

peer-to-peer

proxy

network,

so

you

can

actually

send

your

request.

You

skate

away

under.

We

were

fun

to

appear

in

his

pourraient,

and

that

appeared

to

redirect.

D

You

request

that

to

some

places

else,

so

we

had

to

actually

leverage

a

large

number

of

IP

for

us

to

do

this

measurement

Authority.

So,

in

the

end,

this

is

the

first

one

we

use,

but

the

limitation

for

that

it

only

supports

TCP,

and

we

will

note

yes,

is

major

based

on

UDP,

so

to

measure

UDP

IDs

request.

D

What

do

we

do

is

to

actually

work

with

a

company

in

China

who

is

who

we

have

a

good

relationship

with

and

allows

us

to

write

to

to

put

our

code

in

it's

a

network,

debugger

modules,

so

we

actually

implement

how

much

Amanda

logic

and

Latta

to

run

on

the

client

devices.

So

by

doing

that,

we

are

able

to

measure

both

TCP

and

UDP

and,

and

then

second,

the

Challenger

we

need

to

address

is

how

able

to

see

the

policies

of

interceptions

of

the

middle

boxes

because

they

are

kind

of

black

box.

D

We

cannot

really

go

there

and

at

the

open

the

box

and

see

how

the

implement

there

are

rules.

So

what

we

are

trying

to

do

in

the

end

is

to

enumerate

or

the

possible

policies-

and

this

is

a

best-effort

approach,

so

we

actually

focus

on

five

types

of

fields.

First

is

the

public

IDs

resolvers

that

I

mean

a

destined

by

the

users

to

handle

the

requests

and

second,

the

different

protocols

and

third

of

different

types

of

a

requests,

and

also

we

look

into

the

different

types

of

a

TR,

DS

and

finally,

there's

a

particular

challenge.

D

Here

is

how

we

able

to

link

the

request

from

the

clients

to

the

requests

into

the

associative

servers,

because

when

the

requests

that

come

from

comes

through

what

those

meter

boxes,

the

source

IP

address

were

be

changed.

So

there's

an

obvious

way

to

to

to

link

that.

So,

in

the

end,

the

with

you

develop

this

trick

say

we

actually

encounter

the

unique

ID

for

the

source

into

the

to

my

name,

and

it

turns

out

that

those

middle

boxes

don't

really

change

the

to

me

name.

D

So

by

doing

that

from

the

perspective

or

associative

name

servers,

so

we

can

link

the

request

from

e

Sorrentino

cinders

to

the

ones

received

by

by

themself

okay.

So

so,

in

the

end,

by

using

these

two

platforms,

we're

able

to

send

a

six

million

requested

to

the

public,

Athena's

resolver,

send

it

to

our

authoritative

nameservers

and

we

have

a

good

coverage

of

the

geolocation.

So

actually

we

have

more

than

170

countries

observe

we

order

that

in

our

dataset

and

the

more

than

3000

autonomous

systems.

Yes

in

order.

D

That

said

so,

we

believe

this

is

a

really

good

data

sets

to

look

into

so

due

to

the

time

limit.

I

will

only

talk

about

three

major

observations

during

our

study.

So

first

question

we

want

to

answer

is

how

many

queries

are

intercepted,

actually

we're

looking

to

the

two

different

platforms

for

the

global

wide

analysis

we

have

about

so

1,700,

yes,

19

turns

out

in

the

end

there

were,

there

are

198

is

doing

interception

and

the

for

the

experiments

in

China

we

found

from

those

356.

D

Yes,

there

are

61,

yes

to

interception,

so

those

numbers

actually

are

not

small.

So

we

think

this

is.

This

is

contents

out

to

be

an

issue.

We

really

need

to

take

good

care

of,

and

then

we

also

look

into

the

differences

between

the

public

es.

Resolver

sign

turns

out.

If

you

are

trying

to

go

to

a

more

public,

more,

maybe

more

popular

like

well

known

publicly

as

resolvers

the

chances

you'll

request

to

get

interception

that

may

be

higher.

D

And

the

second

question

we

want

to

answer

is:

how

are

my

queries

intercepted?

We

talked

about

three

types

of

TS

interceptions:

the

Qwest

redirection

request,

replication

and

direct

responding

in

terms

of,

in

most

cases,

those

middleboxes

who

are

due

to

request

a

redirection

of

smooth

as

mauricio.

Well,

to

that

request

the

replication

and

then

for

the

directory.

Responding

is

really

really

rare,

and

the

third

question

we

want

to

answer

is:

are

my

response

that

tempered

I

think

for

the

security

perspective.

D

This

is

the

most

important

question

we

want

to

know,

and

it

turns

out

that

actually,

the

message

is

kinda

positive

I

mean

in

some

sense,

because

most

the

responses

are

not

tempered

from

the

six

media

and

responses

responses.

Only

hundreds

of

them

are

going

to

change

the

result,

users,

consent

and

there's

the

one

interesting

case

we

want

to

briefly

go

through

here

is

traffic

monetization.

D

So

actually,

if

you

are

in

China,

and

then

you

belong

to

this

China

mobile

group

of

Yunnan

autonomous

system,

if

you

use

Google's

public

dns

and

you

send

the

requested

of

Yahoo's

IP

address

and

then

your

response

will

be

chained

to

this

app

advertisements

IP.

So

actually

this

app

advertisements

also

belong

to

the

same

yes,

so

they

actually

somehow

monetize.

They

are

traffic

from

your

from

UTS

requests.

D

Okay,

so

I

will

quickly

go

through

some

way.

We

think

about

that

can

address

this

issue.

So,

first

we

think

I

mean

this

issue

should

still

be

taken

care

of,

even

though

it

turns

out

the

response.

Temporary

is

not

too

much

because

I

think

from

users

perspective

is

their

rise

to

know

who

is

really

the

the

person

that

the

party

handling

their

request

and

so

fathers

in

a

way

for

user

to

to

to

know

that,

and

certainly

we

found

those

open,

resolvers

security

is

not

really

good.

D

Actually,

only

43%

of

them

support

the

s

sec

and

we

found

for

those

resolvers

using

the

pant.

Yes,

resolution

to

kids,

it

turns

out.

All

of

those

versions

should

be

deprecated

well

before

2009,

so

they

are

using

all

those

of

very

vulnerable

versions

that

are

really

not

good,

so

two

tips

about

addressing

this

problem.

First,

we

think

we

think

the

attack

is

still

a

relevant

technology

to

address

this

issue.

I

mean

you,

those

recursive

resolvers

may

ignore

the

asset

as

a

client.

D

If

you

do

verifying

your

response

from

I'm

using

PS

ik

there's

a

chance,

you

can't

detect

such

response

tempering

and

then

prevents

these

bad

things

from

being

happening.

An

attack

in

the

suggestion

we

want

to

give

is

to

use

encrypted

DNS.

For

example,

if

you

set

up

a

tea

house,

TRS

connection

between

you

and

recursive

resolvers,

and

you

can

somehow

use

the

certificate

to

verify.

Ok,

this

turns

out

to

be

the

right,

recursive

resolver.

What

this

turns

out

to

be

something

I

have

no

idea

about.

D

So

we

we

know

there

are

some

very,

very

good,

very

interesting,

RFC's

working

on

this

direction

and

we

believe

this

is

right

way

to

go

to

and

in

the

meantime,

we

also

provide

this

online

checking

torso

even

without

encryption.

If

you

go

to

our

website,

you

can

clearly

see

who

is

the

real

TS,

recursive

resolver,

so

you

are

using

and

to

conclude,

we

to

the

first

large-scale

measurement

and

to

in

the

measurement

on

this

issue

of

the

s,

interception

based

on

32,

alternative

resolving,

and

we

have

some

interesting

findings.

D

For

example,

we

found

there

are

259

s

doing

this,

and

if

you,

if

you

are

in

China-

and

you

try

to

use

coupons

publicly,

s

should

be

really

careful

about

that,

and

then

there

are

some

security

concerns.

And

finally,

we

think

there

are

some

medications

and

we

also

propose

online

checking

tor

and

in

the

end

we

should.

We

think

this

issue

should

be

I

mean

address

that

by

the

efforts

of

the

community.

So

we

have

more

details

in

our

paper,

which

was

published

in

unique

security

last

year,

and

here

are

some

my

personal

informations.

E

D

E

A

F

F

So

you

can

easily

put

together

a

database

of

users

and

what

they're

doing

like

one

users

going

to

Google

and

Amazon

and

others

going

to

Bing.

They

can

also

see

what

types

of

devices

you

have

on

your

network

and

so

because

of

that

these,

these

recursive

resolvers

can

be

the

targets

for

data

requests.

So

an

oppressive

regime

can

simply

say:

hey.

You

need

to

hand

over

all

of

your

DNS

logs,

and

so

it's

this

dangerous

position

where

we're

sort

of

trusting

these

ISPs

or

any

recursive

resolver

to

hold

all

of

this

information.

F

There

are

these

cloud

services,

Google

quad,

nine

CloudFlare

that

are

offering

recursive

resolvers

openly,

and

they

say

you

know

obviously

they're

not

going

to

keep

logs,

which

is

likely

true,

there's

too

much

data.

It's

actually

sort

of

stored,

this

kind

of

thing,

but

it

doesn't

actually

solve

the

problem

there

they're

promising

to

throw

things

away.

It

doesn't

fix

anything,

we're

still

trusting

them,

we're

just

shifting

trust

from

our

ISPs

to

these

resolvers,

and

so

that

you

know,

that's

fundamentally,

there's

still

a

problem

there.

F

You

may

have

heard

of

other

DNS

privacy

focused

work

like

DNS

over

TLS

or

HTTPS.

That's

just

encrypting

the

transport.

So

that's

going

to

fix

things

like

eavesdroppers

between

you

and

the

resolvers,

but

their

resolve

are

still

gets

to

see

all

of

your

queries

and

your

identity

queue

minimization.

It

hides

things

from

the

the

rest

of

the

DNS

hierarchy,

but

it

doesn't

solve

the

fundamental

problem

here

and

so

what

we've

done

is

we've

designed

the

system

that

we

call

oblivious

DNS,

which

essentially

separates

the

user

identity

from

the

queries

at

the

recursive

resolver.

F

We

built

this

with

requirements

that

we

had

to

be

compatible

with

existing

infrastructure.

It's

very

hard

to

change.

Dns

software

on

Thor

taters

or

at

recursive

servers.

It's

a

really

old

ecosystem.

It's

just

not

simple,

to

sort

of

throw

things

out

and

build

a

new

protocol

altogether.

We

also

had

to

minimize

overhead

because

DNS

underpins

basically

all

web

traffic.

So

what

we

did

was

we

made

a

couple

changes.

The

first

was

we

modified.

The

stub

stubs

normally

operate

as

lightweight

processes

on

your

OS.

F

They

take

care

of

DNS

resolution

for

the

applications

on

your

on

your

machine

and

what

we

did

was

we

use

AES

symmetric

keys

that

we

generate

on

the

fly

and

we

encrypt

your

query

to

a

ciphertext.

We

then

append

a

a

domain

that

we

own.

Something

like

imagine

we

own

that

the

TLD

Oh

DNS,

so

we

append

the

this

clear

text

domain,

which

means

the

recursive

servers

not

going

to

be

able

to

understand

your

query.

F

It's

just

cipher

text,

but

it

will

use

the

existing

DNS

infrastructure

to

eventually

reach

our

authoritative

that

we

own

say

dot

Oh

DNS.

At

that

point,

we've

got

this

Oh

DNS,

authoritative

server,

which

is

both

an

authoritative

server

and

a

recursive

server.

It

holds

the

public

keys,

and/or,

the

private

key

to

decrypt

the

session

key

session

key

decrypt,

the

query.

It

then

acts

as

a

recursive

and

goes

does

the

entire

process

over

again

going

to

route

TLD

and

the

actual

genuine

plaintext

authoritative.

F

Of

course,

we're

introducing

these

operations

and

there's

going

to

be

some

some

overhead.

So

what

we

did

was

we

measured

this

we

implemented

a

stub

and

our

Oh

DNS

resolver

and

go,

and

these

tests

were

done

on

the

same

machine.

So

there's

no

sort

of

LAN

latency

and

what

we

see

is

as

expected,

the

symmetric

crypto

is

really

lightweight

you.

Can

you

can

generate

your

keys?

You

can

encrypt

your

domain,

so

you

can

decrypt

the

domains

very

very

quickly.

We

used

elliptic

curve,

cryptography.

F

F

F

So

that's

one

piece

of

the

latency,

the

the

other

thing

that

we

introduced

is

LAN

latency,

because

we're

tunneling

to

this

ode,

ENS

resolver,

essentially

that

round-trip

time

to

to

that

resolver,

is

added

to

every

query.

And

so

you

can

see

this

illustrated

in

this

figure.

We've

got

conventional

DNS.

This

is

our

client

was

in

New

Jersey

and

you

can

see

cloud

players

doing

quite

well.

F

You've

got

Google

and

quad.

Nine

are

the

other

solid

lines

and

then

we

we

used

OD

NS

resolvers

one

was

in

New

York

City,

which

is

about

4

and

1/2

milliseconds

away

from

us,

and

the

other

was

in

Georgia,

which

is

about

19

milliseconds

away,

and

what

happens

is

basically

that

our

TT

is

just

appended

to

all

queries.

So

this

obviously

motivates

some

kind

of

we

need

a

widespread

deployment

of

OD

NS

resolvers.

F

You

can't

just

have

a

single

DNS

resolver

out

there

in

the

world,

because

you

introduced

a

huge

amount

of

latency

for

a

large

number

of

people,

so

we

argue

for

widespread

anycast

employment

in

order

to

sort

of

get

nearby

because

we're

using

anycast.

This

introduces

a

problem

because

we're

using

this

public

key

crypto

to

decrypt

the

queries

or

decrypt

the

session

keys,

and

we

can't

just

hand

out

the

same

public

key

to

all

servers

on

any

caste.

That

would

be

incredibly

unwise,

and

so

what

we

do

is

we.

F

We

have

a

special

request

that

is

sent

on

that

anycast

address

and

at

that

point

the

OD

NS

resolver

that

your

nearest

according

to

bgp

will

respond

with

its

name,

which

is

you

know

us

one

dot,

ODS

something

like

that,

and

it

sends

back

a

it's

public

key

at

that

point.

Your

client

can

do

this.

You

know

once

a

day

once

an

hour

whatever

in

order

to

find

the

nearest

server

and

then

use

that

domain

specifically

to

append

all

queries

in

the

future

and

you'll

know

the

correct

public

key

to

use.

F

So

that's

all

fine

and

good.

But

what

happens

when

you

actually

use

this

on

the

internet

and

web

page

load

time

is

the

metric

we

decided

to

look

at

here.

We

use

38,

op,

alexa,

alexa

top

30

web

sites

and

loaded

them

using

both

conventional

dns

using

the

princeton

resolver

and

the

OD

NS

resolver

that

we

had

said

you

can

see

a

few

of

the

right-hand

bars

for

each

is

is

OD

NS

you

can

see,

for

a

few

pages

were

were

decent

amount

slower,

so

Craigslist,

Instagram

Facebook.

F

Those

mostly

things

that

had

a

lot

of

little

objects

are.

What

we

are

a

little

bit

slower

on

but

overall,

were

performing

pretty

closely.

There

was

one

that

was

very

odd

live.com.

It

turns

out

that's

because

we

were

directed

to

entirely

different

CDN

and

in

the

ODN

s

case,

we

were,

we

had

a

single

giant,

javascript

bundle

and

the

traditional

resolver

went

to

a

CDN

where

there

are

lots

and

lots

of

little

javascript

objects

to

download.

So

that

was

a

little

bit

slower.

F

The

other

one

that

we

were

curious

about

is

reddit

in

new

york

times.

How

could

we

possibly

be

faster

than

the

conventional

dns

in

those

cases,

because

we

are

introducing

this

latency

and

it

turns

out

if

you

look

at

the

time

to

first

byte

for

those

sites,

it's

it's.

It

starts

to

make

sense.

Essentially

we

were

directed

to

CBN's

that

were

closer.

We

just

happened

to

be

our

DNS

resolver,

directed

directed

us

to

a

more

optimal

CDN

for

us

rather

than

the

princeton

resolver.

F

So

we

wanted

to

understand

what

this

would

look

like

in

terms

of

traffic,

so

we

took

a

trace

of

around

8

million

queries

and

simulated

users

as

we're

turning

up

and

down

the

the

percent

of

users

that

are

using

au

dns,

and

you

can

see

when

you

have

zero

percent

of

ODS

users,

so

the

cache

misses

that

the

recursive

are

relatively

small

as

you

increase

the