From YouTube: IETF106-CBOR-20191121-1740

Description

CBOR meeting session at IETF106

2019/11/21 1740

https://datatracker.ietf.org/meeting/106/proceedings/

A

Finally, we will wrap up. Any agenda bashing? Nope. Okay, so, status update. We had six interims since IETF 105. I thought these were really useful; we progressed the documents. You can find all the information about them: the recordings are on YouTube, and minutes, slides, everything we have is on this link. The chartering has been finalized since IETF 105. The CBOR array tags and CBOR sequences documents are in the RFC Editor queue.

A

So great job with those. And finally, we have CBORbis version 09, which started its working group last call last week, Thursday last week, and that will go on until December 12. It's a long working group last call because we have IETF week and also some holidays going on after that. So please do send comments about this.

A

Before we move on to CBORbis, I wanted to discuss the interims. Now that CBORbis is basically almost done, we might want to stop the interims every two weeks, but Jim and I were suggesting to have these three interims up to the end of January. These are all on Wednesdays: December 18, January 15, and January 29, in the same time slot. And then we can have interims when we need them, if we need them. Do you have any opinions about that?

C

So, just as a reminder of what this was about: we were planning to take CBOR to Internet Standard. So this is not the time for great innovations. This is the time for fixing things, looking at [unclear], and in particular making improvements in specification quality, because that is probably the biggest source of potential interoperability problems. And we had some 29 issues and pull requests closed since IETF 105. So there was some work; the document does look a bit different now.

C

So I think the biggest thing that we managed to do is understand the various kinds of errors in a better way, and also understand where these errors could potentially be handled. So we clearly separated not-well-formed from not-valid (invalid) situations, and the document just says: if the data item is not well-formed, there's not really that much you can do. And we now have corrected pseudocode for that; there even was a small bug in the pseudocode in the appendix for well-formedness. And then the other question is the semantics.

C

Okay, and semantic errors are, of course, also errors, but it may still be possible to present a data item with semantic errors to the application if there is a way to indicate those errors. So those are the first two of the three levels, which happen at the level of CBOR, in the generic decoder, for instance. And then there is the third level.

C

Of course, most applications will need some form of validation on top of the validation the generic decoder can do, and this is out of scope for this document. That's where either handwritten application code comes in, or some form of data definition language like CDDL, or JSON Schema (json-schema.org), or JDDF (or however it's called right now), or JCR. There are lots of these out there that can potentially be used for CBOR data items.

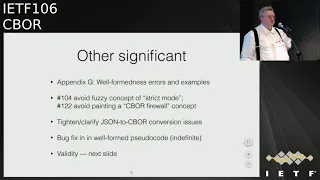

C

So I think that's a good thing that has happened, and I think we have pretty much completed this change. Other significant changes: we now not only have an appendix with working examples, we also have an appendix with examples that have well-formedness errors. We have discarded the concept of strict mode, because it meant too many different things, and we also no longer paint the idea of a CBOR firewall.

C

We had a bit of tightening on the JSON-to-CBOR conversion issues. Since CBOR has a richer data model than JSON, there is sometimes the question of how exactly we interpret some JSON in a JSON-to-CBOR conversion. We are not standardizing this, but we are defining enough that an application or a protocol can essentially just reference this section and maybe provide a little bit more information on how it wants its conversion done.

C

We fixed a bug in the appendix with the pseudocode for detecting well-formedness, and we worked on validity. There we now have the validity in the basic generic data model, which is exactly two things: correct UTF-8 syntax and non-duplication of map keys. And then we have tag validity. Of course, since we have dozens of tags, we have dozens of different things that can go wrong there, and given that the number of tags is supposed to grow, the idea that a generic decoder is a completely validity-checking decoder is rather unlikely.
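
A minimal Python sketch of the two basic validity checks named here, assuming a decoder has already produced raw text-string bytes and a list of key/value pairs. The function names are hypothetical, and key equality is taken to be Python equality, which is an assumption: Python treats `1` and `1.0` as equal, whereas a CBOR application may not.

```python
from typing import Any, Hashable, Iterable, Tuple


def is_valid_utf8(raw: bytes) -> bool:
    """Return True if the bytes of a text string are syntactically correct UTF-8."""
    try:
        raw.decode("utf-8", errors="strict")
        return True
    except UnicodeDecodeError:
        return False


def has_duplicate_keys(pairs: Iterable[Tuple[Hashable, Any]]) -> bool:
    """Return True if any key occurs more than once (keys must be hashable here)."""
    seen = set()
    for key, _value in pairs:
        if key in seen:
            return True
        seen.add(key)
    return False
```

Tag validity is deliberately left out of the sketch, since it depends on which tags a given decoder knows about.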

C

So that is pretty much what has changed. Of course, with 29 issues there were also many things on the editorial level, even more complex editorial things. And there is one open issue. Actually, there are two open issues, but one is just the tasks that we have to do; it doesn't have anything to do with the document. And the other open issue is: what exactly?

C

So besides, it's interesting that we are not even discussing this for UTF-8 validity, but for the map keys it's a little bit more icky, because different implementations handle it in different ways. That's not something that we have invented or misdesigned; it's something we inherited from JSON, and interestingly, nobody in the JSON world is as concerned about this issue. So how do we handle it?

C

And my proposal is to essentially punt and say: well, we live in a world where not all decoders will be validating in this space. So it will be the unusual case that an application protocol says "must error out on a duplicate map key". The problem really is that many decoders, by construction, just silently discard duplicates. I spent a couple of days between the IETFs trying to fix my main CBOR implementation to detect duplicate map keys, and it just turns out it's much more difficult in the platform I'm coding in.

C

So the generic decoder may be discarding duplicates, and may be discarding the early ones or the late ones or something in between; we don't know. The application has little control, because it only sees a very nice, well-formed map. So that's the normal situation. There may be some applications that actually have to require validity checking, so that's not outlawed, but this is the unusual case.
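
The behaviours described here can be sketched as follows. This is an illustration only, assuming the decoder hands map entries over as a sequence of key/value pairs; `build_map` and its `on_duplicate` parameter are hypothetical names, not part of any CBOR library.

```python
from typing import Any, Dict, Hashable, Iterable, Tuple


def build_map(pairs: Iterable[Tuple[Hashable, Any]],
              on_duplicate: str = "error") -> Dict[Hashable, Any]:
    """Build a dict from decoded map entries.

    on_duplicate selects the policy:
      "error": validity checking, reject the map
      "first": silently keep the earliest entry
      "last":  silently keep the latest entry
    """
    if on_duplicate not in ("error", "first", "last"):
        raise ValueError(f"unknown policy: {on_duplicate!r}")
    result: Dict[Hashable, Any] = {}
    for key, value in pairs:
        if key in result:
            if on_duplicate == "error":
                raise ValueError(f"duplicate map key: {key!r}")
            if on_duplicate == "first":
                continue  # keep the entry already stored
            # "last": fall through and overwrite
        result[key] = value
    return result
```

With the "first" or "last" policy the application only ever sees an ordinary map and cannot tell that a duplicate was dropped, which is the situation the speaker calls normal.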

C

So there is actually a MUST in the text, and the MUST here is: the application protocol designer needs to make up their mind. Is it one that will work with most decoders, or is it one that actually needs a decoder that does the validity checking? So my proposal would be to put [unclear] on number three. And Jeffrey, who put this issue in, luckily is here, so maybe you can have an opinion on that.

D

Okay, Sean Leonard. I actually just have an observation or a question. Given the extensive use of JSON in protocols and so forth, is there a class of known security vulnerabilities that have been reported that exploit duplicate map key issues and the different ways that decoders handle them? Because I'm not personally aware of cases where it's actually been shown to be an exploitable problem. I think that if it is, then it's much more important, but if it's not, then maybe that just shows it's actually not that big of a deal.

B

I do feel like, even if we don't want to require applications to, say, pick the first key or something, the text still needs to change, because right now it says "make an intentional decision", and it's not clear what they could decide. If these are the two choices, either pick an arbitrary one or error, if those are the choices that are available, we should just list them, I think.

E

Paul Hoffman. So it's not an arbitrary choice. At least in JSON, we've seen ones where, once you hit a duplicate, it swaps in the duplicate and forgets the first one, and we've seen them where, once it sees one, it ignores it. So going back to your question, Sean: if there is a security vulnerability found, it's likely to be in just one of those, not both of them.

E

So if we say you've got to be careful, you've got to do it... Given that we have indefinite-length maps, there is a whole class of applications that are very appropriate for CBOR and completely inappropriate for JSON, where we're now saying you have to remember every key you've seen. Whereas for some of these it would make great sense to say: I'm only going to look at, let's say the key is numeric, I'm only going to look at ones that are divisible by 10, or... wait.

C

Yeah, I think that's exactly the situation where the security problem comes in: where you have one data item that is interpreted by multiple different implementations. So, for instance, player A generates some data structure, player B signs it, and player C interprets it, and that's where these problems come in.
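
To make the multi-party scenario concrete, here is a small Python sketch, under the assumption that one party's decoder keeps the first occurrence of a key and another party's decoder keeps the last. The function names and the sample message are invented for illustration.

```python
from typing import Any, Dict, Hashable, List, Tuple

Pairs = List[Tuple[Hashable, Any]]


def decode_first_wins(pairs: Pairs) -> Dict[Hashable, Any]:
    """Decoder that keeps the earliest value for a repeated key."""
    out: Dict[Hashable, Any] = {}
    for key, value in pairs:
        out.setdefault(key, value)
    return out


def decode_last_wins(pairs: Pairs) -> Dict[Hashable, Any]:
    """Decoder that keeps the latest value for a repeated key."""
    return dict(pairs)


# The same encoded map, as seen by two different implementations.
message: Pairs = [("amount", 10), ("amount", 10_000)]
checker_view = decode_first_wins(message)   # the party that checks or signs
consumer_view = decode_last_wins(message)   # the party that acts on the data

# Both views look like perfectly ordinary maps, yet they disagree.
assert checker_view["amount"] != consumer_view["amount"]
```

Neither decoder is wrong on its own; the disagreement only appears when both process the same bytes.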

G

Laurence Lundblade. So I actually wrote the code that Jim was just talking about since the last IETF, and had to think about what was going to happen with duplicates, because JOSE specifically says no duplicate header parameters. So to do that detection, I wound up implementing it in the COSE layer, not in the CBOR layer. So it was not a characteristic of the decoder; it's a characteristic of the thing above the decoder, above the generic decoder.

H

My question, and my brain's not working very well: with an unbounded map... that's not the right word. Indefinite; I knew that was the wrong word. So, with a pull parser and an indefinite map, until you got to the end you wouldn't know if there was another key. So in a pull parser you would return the first one and you're done, you found it, right? And so any ability to say you should validate means that you always have to go to the end of the stream, right?
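
The trade-off raised here can be sketched in Python with a generator standing in for a pull parser over an indefinite-length map. Everything below is a hypothetical illustration: `counting` only exists to show how many entries were pulled from the stream, and the checked lookup only detects duplicates of the one key it is looking for.

```python
from typing import Any, Hashable, Iterable, Iterator, List, Tuple

Pair = Tuple[Hashable, Any]


def counting(pairs: Iterable[Pair], consumed: List[int]) -> Iterator[Pair]:
    """Yield pairs one at a time, recording how many were pulled."""
    for pair in pairs:
        consumed[0] += 1
        yield pair


def find_first(pairs: Iterable[Pair], wanted: Hashable) -> Any:
    """Return the first value for `wanted` and stop reading (None if absent)."""
    for key, value in pairs:
        if key == wanted:
            return value
    return None


def find_checked(pairs: Iterable[Pair], wanted: Hashable) -> Any:
    """Return the value for `wanted`, reading to the end to reject a repeat."""
    found = False
    result = None
    for key, value in pairs:
        if key == wanted:
            if found:
                raise ValueError(f"duplicate map key: {wanted!r}")
            found, result = True, value
    return result
```

The unchecked lookup can return after the first match, while the checked lookup cannot answer until the stream has ended.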

H

I'd have to say something like this: that's a fundamental issue with maps, yeah. And so I would say that I think we should make some recommendation as to whether you should keep the first or the last, okay. But I also think that we should say very strongly that, you know, it's probably [unclear]. We should make a recommendation, not a MUST.

D

So, if you ask for user-supplied or attacker-supplied data, and the attacker provides the map data as opposed to the map keys, it's not really a big deal, because the keys are chosen by the secured code base, more or less. It's when you ask a potential attacker to supply the keys themselves, or an open-ended production of keys, where it could intentionally construct pairs that have repeated keys.

I

Hi, this is Henk. So I think there's almost nothing left to say from here, except that I'm pro "no change", which is strange when you say it out loud. And I always assumed that the last key was the valid key with indefinite maps. I think you should be very careful about allowing them in any case, because they can be a real big issue, but still I would not recommend first hit, but last hit in that case. But that's just a personal opinion.

C

And so actually, if you look at JSON objects, they are a bit like indefinite maps in CBOR, because you don't have information from the beginning how long they're going to be. So I think we are quite close to what happens in JSON here. And I haven't done a serious search about the check-versus-use problems that we are talking about, so I'm not aware of any such problem that exists in the JSON world, but that doesn't mean it won't exist at some point, as Jim pointed out.

J

My name is Stuart Cheshire, from Apple. Listening to this discussion as a lurker, it made me think to check RFC 6763, for DNS Service Discovery, to see what language we had there, and there we said: if there are duplicates, you take the first and ignore the rest. So there is some precedent for doing that, and I think with the discussion of indefinite maps...

F

I don't know that I agree that it'd be twice the work, since you kind of have to do the lookup as part of inserting it into the dictionary. I mean, I think that the answers are: one, take the first; two, error; or three, return all of them and let the application deal with it. Because there are cases, you know, when you're doing streaming, where you are going to want to be able to return all of them.

H

Michael. So if you are processing a big map and you are interested in a subset of the keys, which is sort of a variation of the pull parser, then for the subset of the keys you don't have to store them all. You just care about the subset that you know. So: a gigabyte of map data, and you're interested in twelve keys. Okay, so you may have to read to the end of the data stream anyway to find out if all those keys are there.

H

If there are some that just don't appear, right, you're still looking and still looking and still looking, and so that may not be so terrible. Most dictionary lookups under the hood, as everyone said, have to find out where to put the item, and so probably the check whether it's already there while you're inserting it, in other words "insert if not already there", probably does not require two tree traversals if you have a good implementation. Unfortunately not [unclear].

H

So not all platforms are that smart, but under the hood they all do that, right? They're all looking up. If they have a tree of some kind for a dictionary, they all have to find out where to put the item and see if it's there or not, and it's not twice as much work. It's order of the number of entries in the map, right?
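
As a rough illustration of "insert if not already there" as a single operation, here is a Python sketch. `insert_if_absent` is a hypothetical helper; it relies on `dict.setdefault`, which stores the value only when the key is missing, and it compares the dictionary size before and after to learn whether an insert happened. Whether this costs one probe or two depends on the dictionary implementation, so the cost claim is an assumption rather than a guarantee.

```python
from typing import Any, Dict, Hashable


def insert_if_absent(table: Dict[Hashable, Any], key: Hashable, value: Any) -> bool:
    """Insert key -> value unless key is present. Return True if inserted."""
    size_before = len(table)
    table.setdefault(key, value)  # no effect when the key already exists
    return len(table) != size_before


entries = [(1, "a"), (2, "b"), (1, "c")]
table: Dict[Hashable, Any] = {}
duplicates = [key for key, value in entries
              if not insert_if_absent(table, key, value)]
```

After the loop, `table` holds the first value seen for each key and `duplicates` lists the keys that appeared again.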

G

Laurence Lundblade. But it seems there are cases where you're not going to store the whole thing, ever. You just don't want to do that, because of the way your protocol works or something like that. I don't know, maybe every time you get an entry, you flash a light, and that's all you're going to do. Then you have no way to... I mean, you can't do any detection, or the first or the last, because you just don't know. If you're not storing it, you can't do anything, right?

E

We might find one that relies on decoders that do this and one that relies on decoders that do... I guess we have three choices. I have a hard time believing... anyway, there could be two, and so us finding one and going "oh, we'll do the other one" isn't... As a point of reference, though, we argued about this for I-JSON, and what I-JSON ended up saying was "must not produce", whatever. I think we punted once; it's okay to punt again, I mean.

B

Yes, I guess we could say protocols have to decide, or they could leave that choice up to the application. But there would be two valid choices, which I think disagrees with Carsten's statement that maybe you want to pick the last sometimes. But it's not picking...

K

Yeah, the comment about, you know, some implementations will be [unclear]. So yes, they might, but I'm just thinking: we have a protocol which has uncovered an issue of this kind. Okay. Well, but now that most of us are aware of it, we could make implementations which we consider to be broken be fixed by adding new requirements.

H

We close the exploit because we make everyone interpret it the same way. So now we're just simply arguing as to which is computationally more efficient or more convenient, and how many implementations we would obsolete. Okay, so that's why I don't feel comfortable leaving it as is, because I think that the exploit is there because of the ambiguity, not because of a specific choice, A or B. We require the ambiguity to be exposed in order to make an exploit.

F

What Michael said is true. For COSE, the only place that this is an issue is with the protected attributes. If you have it happen in the unprotected attributes, well, you're not supposed to trust them anyway. So if your code is screwing up because it picked up the wrong one, it doesn't really matter at all.

G

Right, so I think whether or not there is a security vulnerability is dependent on the protocol. Just as Jim said, in the protected headers in COSE it matters; in the unprotected headers it does not. JOSE says you can't have duplicate header parameters. So the text maybe should be: if you are designing a protocol that has a security issue with duplicate headers, pick one: pick first, or pick error, or whatever you do.

D

Sean Leonard. I think, from Jeffrey's description, the security problem comes when a second piece of software, or a second process, looks at and parses the data item from the original source material, versus taking the results of the decoded item from the first pass, when it was doing validation or authentication.

E

Paul Hoffman. So I think we've gone down a rat hole here, because this whole discussion is about decoders that validate. Decoders that don't validate are perfectly valid; they are perfectly good decoders. So we can't make assumptions about receivers of the data, because it might not be a validating decoder. So whoever looks at this and says, if we do this, we can make it safer: we can't make it safer. We can only make it safer for decoders that are validating, and we don't know if decoders are validating.

B

One of the mistakes is parsing the same data twice with two different parsers. But the other one is a mistake in the specification of the format, that there are two legal parses. Or even, in zip, one of the parses is not legal, but it's still possible because the data is duplicated. And so we can avoid that second mistake by changing the definition of the format, or the definition of the protocol, to require that it pick one of the map keys.

B

Paul said I was claiming that all parsers need to be validating. I don't believe I'm claiming that. I'm saying a validating parser will error. A non-validating parser must return one of the values, a particular one of the values, and we need to pick which value a non-validating parser returns.

B

One is saying that in CBOR all generic non-validating decoders pick the first, or saying the protocol has to say which to pick, or the protocol has to either say which value to pick or declare that it's not a security-critical value. Explicitly saying that, if the protocol decides it's not important for security, it doesn't have to say, I think would be enough warning for me to be happy with it.

A

So now we can talk about CDDL ways forward, and we have ten minutes, so we'll try to be quick. We probably won't have time to go into the details that I was hoping we would. What we started doing, Jim and I, is identifying interesting features for CDDL users going forward, so CDDL features that are not in the CDDL RFC. These are additional features, like going to a CDDL 2.0, and today I'm going to give a report of the first investigations.

A

They started at the hackathon. The discussion today will probably not happen, but if it did, it shouldn't be focused on the technical solution, rather on the scope of the features, and possibly on identifying pitfalls. So this is the list of new features that were identified. Those were taken from the mailing list and from Carsten's document, the CDDL freezer.

A

So I'm probably not going to read them all, but this is the result from the 11 responses. In green you have the "yes" responses: yes, I want this, or I'm interested. The yellow is a "maybe in the future, possibly", and the red is either "no, don't do this" or, mostly, "this worries me". So it might not be a "no, don't do this", but it might be an "if we do this..."

A

So these are points that the working group would have to decide on, if we get this. And, if I don't get this wrong, yeah, so we also have some "this worries me" about this feature, so we need to get this right if we want to put it in. Next feature. Let's see if there's anything from the Jabber. Okay, so Carsten says "piece of cake" about this feature.

A

So these are all computed literals. Right now CDDL is not defined to be able to compute, and we are considering adding the functionality to define integers as the result of a computed operation, or string literals, with the operation being concatenation or [unclear], or string literals tailored to their semantics rather than their serialized CBOR. So these are three options.

I

This is Henk. I'm to blame for one, and this is because I'm writing a lot of CDDL, and a lot of the content comes from the old world, and sometimes they have discrete offsets. I'm already creating scripts to write my own CDDL, and I can compute them, and so this is why one exists. This is a convenience feature, and CDDL per design should be full of convenience features.

I

So that is why I'm really in favor of one. And you can do one thing and still not prevent the other. So when you can do both, I say [unclear]. I think it's not a bad thing, and it might be cluttering. But if you're coming from another specification and you're new to CBOR, and therefore to CDDL, and are translating, it makes the work so much easier for you.

D

Sean. So I'm all in favor of syntactic sugar that makes things easier to read and understand. For one specifically: can we have a list of consecutive items where increment is implied, or essentially part of the sugar? Are we talking about plus 1, plus 2, plus 3, plus 4, plus 5? Or are we actually talking about complicated mathematical operations, like times 2, mod 3, whatever, for your different symbols? I mean, increment is great; that makes a lot of sense. But if you want a general-purpose Turing machine for the math, then that's a different thing.

I

It's not what I want, but this is what one implies, as an item. So I would not love to see something I cannot parse anymore because the equation is like two lines long. No, no, no. This was not my problem. But, as we figured out, you're right: there's no scope to item one, and naturally we would have to have a scope. So yeah, I'm with you on the complexity, and I'm for the convenience in one.

A

We have two minutes left, so we will just continue on the mailing list anyway for all these features, and we'll get more data with the survey as well. Variants, both CBOR and JSON variants in one spec; that's pretty self-explanatory, I think. And co-occurrence constraints. There's a lot of text. We don't have time, so I will stop here; feel free to go in and read.

E

So this is Paul Hoffman. I'm going to possibly volunteer other people. I just remember, during the end of the CDDL discussion, there were lots of people who aren't, you know, the people in the room, who seemed interested in it, and they might be interested in this as well. So I don't think we're restricted to it. Okay.

A

So please, let's continue this discussion. We will send out the survey to the mailing list, and to several other mailing lists, and collect more input and feedback. Also, there were several general comments about CDDL that we want to take into account, about usability and readability and learnability, so this will also be reported. And blue sheets, if anybody hasn't signed. If Jim wants to say anything else, now is the time; otherwise I think we're done. Thank you, everybody.

C

Designing a good co-occurrence mechanism probably also is a significant exercise, because we need to understand what the actual requirements are here. And we don't want to invent this stuff; just as we didn't invent CDDL, it's all stolen from other things, but reuse things like OCL and Schematron. There are lots of things to pick from, and we need to understand which of these we want to pick. But first: can you bring up my slides? I don't...

C

Okay, so yeah, that's why I wanted to point to this freezer document. It hasn't been updated very much in a while, but it's useful in that there are pieces of solutions already in it. I mentioned that existing extension points can be used for this, and the two candidates that I think are pretty much no-brainers here are computed literals and embedded ABNF. Of course, embedded ABNF is a significant amount of implementation work, but it's actually almost trivial to specify.

C

So let's talk about computed literals first. Those of you who have programmed in Fortran will almost feel at home here; for the others, yes, it's not beautiful. And maybe we can limit it to the things that we really need at the moment, which I think are plus, minus, and concatenation, but maybe we need a few more. So let's find out. So that's really easy. ABNF as well.

C

Okay, and a completely different animal is taking CDDL, which is essentially just a predicate on, let's say, CBOR instances, just a predicate on a CBOR instance that tells you whether that instance matches or not, and turning it into something different, which returns quite different pieces of information. So defaulting is part of this, semantic augmentation, transformations, and so on. This is a big thing, and that also requires significant design.

C

Extending the expressiveness of CDDL requires design, and that would be the cuts work that we have started, and the co-occurrence constraints. So essentially, the two things that need to be done for co-occurrence constraints are predicates, because we need to be able to say whether something is okay or not, and some form of selector. So if we say that one number here needs to be less than some other number, we need to have a way to point to that other number in the data item, and that's sometimes significant complexity.
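
The two ingredients named here, a selector and a predicate, can be pictured with a small Python sketch over an already decoded data item. This is not CDDL syntax and not a proposal from the session; `select`, `less_than`, and the path notation (a list of map keys and array indices) are assumptions made purely for illustration.

```python
from typing import Any, Sequence, Union

Step = Union[str, int]


def select(item: Any, path: Sequence[Step]) -> Any:
    """Selector: follow map keys and array indices down into a data item."""
    for step in path:
        item = item[step]
    return item


def less_than(item: Any, left: Sequence[Step], right: Sequence[Step]) -> bool:
    """Predicate: the value at `left` must be less than the value at `right`."""
    return select(item, left) < select(item, right)


# Example data item: the constraint relates two different places in it.
doc = {"range": {"min": 3, "max": 7}, "values": [3, 5, 7]}
ok = less_than(doc, ["range", "min"], ["range", "max"])
```

Even this toy version shows where the complexity comes from: the predicate is trivial, but a usable selector language has to address arbitrary positions in nested maps and arrays.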

C

Syntactic sugar: that requires a way to do transformations. So we could pretty easily say the identifier in front of a single-quoted string is another extension point of CDDL. So that would be one way to get away without having an expression language, but to do it in a general way requires a transformation mechanism. And then finally, the module superstructure and the variants are things that should be composed from components that are reusable in some form. So that requires some design.

C

Yeah, and that's one of the features that most people want, so yeah, we should start the design now, but that shouldn't keep us from doing no-brainers like this. And finally, the JSON stuff really is coming from the other side, where we have a number of JSON-related requirements. The JSON operator is a no-brainer: like we have .cbor, we should have .json, and maybe .jsonseq as well.

C

The variants part essentially requires a way to switch. And then finally, we have this CDDL-in-JSON thing, and I would love to hear from people who are worried by that. This is the description of CDDL in CDDL, so this is the content of RFC 8610, and then you can take an existing CDDL specification and make it almost as ugly as JSON Schema.

C

Yeah, and one question is whether we actually should expose our planning here a little bit: maybe take the freezer document and actually say what we are setting out to do, and put some of the things that we might be doing in the freezer a little longer while we are working on the other things. So that would be my proposal, once we are a little bit further advanced, to actually make this a working group document.

H

That's [unclear], yes, I realize that. But I'm trying to understand the use case better, so that I understand what it is that we're trying to do. Because if we're making a JSON representation, then I presume that part of the reason is we want to write programs that both read and write CDDL.

H

And it's one thing to say: I want to read CDDL produced by a human. And then it's another thing if I start saying I want to produce CDDL by a machine, which I'm then going to feed into something to produce JSON or something else from it, right? At which point I'm worried about what happens to the debuggability of the resulting protocol, which has been produced by sort of two layers of machine interpretation, right?

C

Let me give you two reasons where this is useful. One is simply: you want to write a tool that does some form of consistency check, some form of search on a CDDL specification. That's just easier if you don't have to write a parser but can just ingest the CDDL in parsed form. The other example is what I really want to write, and have written most of the code for already...

C

Obviously, the output of that conversion will not be beautiful, but I did this once already in 2016 with the OCF specification at the time, and I found tons of bugs in their JSON Schema as soon as I could look at it in CDDL form. So it's a really useful thing to do. And this conversion again has two parts: the actual conversion and the writing out of the human-readable CDDL, and again, I would like to have a tool that does the latter part.

D

Sean Leonard. I just also want to say, since Carsten's list includes incorporating ABNF things: I've actually done some work, along with Paul Kyzivat and others, on improving and extending ABNF so that it can handle things like Unicode and a few other things that are kind of directly related to what CDDL is trying to do. So I'll try to post some of those drafts to the mailing list.