From YouTube: IETF106-SECDISPATCH-20191119-1710

Description

SECDISPATCH meeting session at IETF106

2019/11/19 1710

https://datatracker.ietf.org/meeting/106/proceedings/

B

A

You may notice that there are some different faces on the stage here. Kathleen was unfortunately unable to make it, so Nancy is filling in in her place, and we have one brand new co-chair, Francesca Palombini. Can we have a round of applause for her, please? All right. So, the Note Well is the same as usual. Please note it well, including your obligations with regard to IPR and disclosures.

A

A

We have this range of possible outcomes, so keep those in mind. Our goal for each of the presentations today is going to be to come to one of these conclusions, so as you're watching the presentations, think about which of these conclusions might be appropriate. Here's our agenda for the day. We're in the first slot right now. We have five topics looking for dispatch. We have a bit of an overflow slot, because we are a little short on time.

A

C

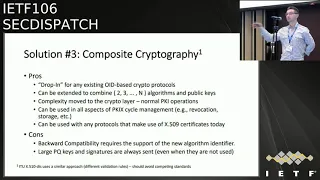

Okay, everybody, welcome to SECDISPATCH. I'm Max Pala, I work with CableLabs, and I'm going to present about addressing public key algorithm uncertainties with composite crypto. This work started a couple of years ago, in collaboration with other companies: Entrust Datacard, Cisco Systems, ISARA and DigiCert. So what is the problem here? Well, you know, with the announcements about quantum computing and lots of investment in this technology, we are living in an era of uncertainty, specifically in cryptography.

C

We face a problem where the algorithms that we use today might fall apart, right? But this is a very uncertain period. We don't know if that happens, and we don't know if the new schemes, for example, are strong enough. So a possible workaround would be to use hybrid solutions, where you have multiple algorithms that can be used together to secure some data, like certificates. Some initial experiments deploying some quantum-resistant algorithms have been published. I sent an email before to the SECDISPATCH mailing list. So just keep this in mind.

C

These results are not for all the algorithms. These are algorithms whose keys are relatively small, but still, it seems that the situation might not be that tragic. So why are we here? Well, I work in cable, and we use a lot of certificates for many different things, and their use is just going to increase with the new platform that we're going to deploy for 10 gig. One of the questions that we're facing today is: is it possible to protect our infrastructure in a transition period?

C

So what applies here is what could be described as further algorithm agility. So, are your PKIs going to be secure in the next 20 years, or 30? We have this problem: we have to protect ourselves in a transition period that's full of uncertainty, right? There are currently different solutions for this, and we're going to go through them.

C

These solutions have been discussed on the mailing lists, and some considerations are useful to make. So: multi-chain, that is, using multiple certificates; using extensions in certificates to carry additional keys and signatures; or using composite crypto, the one that we work on with all these other companies. All of these have some merits and some drawbacks, so let's go through them. Using multiple certificates seems a very easy solution.

C

Well, now you have multiple certificates. So if you want to protect the same data, you have to change the protocol to allow for multiple signatures to be used, and for non-negotiated protocols it might be a little bit harder. You know the usual example, S/MIME, where you don't know whether or not the client supports the new algorithms. The other problem would be, you know, when you have multiple certificates, you apply multiple signatures to the same data.

C

How to link these identities in multiple certificates, especially if your PKI is distributed, might be a problem, and it definitely complicates the operational side of PKIs. A second solution was adopted by ITU and uses extensions in the certificate. It was also discussed here, and basically, what they do is use an extension to carry additional public keys and/or signatures, so that the certificate is backward compatible. It's a non-critical extension, so it can be ignored by clients that don't know how to process it. However, it is very backward compatible.

C

So clients don't even know that they are missing something, so it could give a false sense of security. It requires changes to the protocols to validate multiple signatures and, of course, in this case the problem of transferring the keys is present, because the large keys are tied to the traditional ones, so you cannot separate them. Probably there is something that can be done there, but it would complicate a lot

C

the schema. And so a third solution is the one that we've been working on in the past couple of years, actually, and we try to leverage the beauty of the mechanism for algorithm agility that is in certificates and all PKIX data structures. How do we do that? We define a new public key algorithm, so a new OID, and define the encoding rules and processing rules.

C

So the trick here is that, instead of using a data structure that contains the generic key, we say: now this is a sequence of generic data structures. This allows you to extend the use of public keys and signatures in all the PKIX data structures, as I said before, and therefore provides compatibility with existing certificate processing rules, in the sense that one composite key generates one composite signature, and this plays well with protocols, for example. What are the pros of this solution?
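The "sequence of generic data structures" idea can be sketched as follows. This is only an illustration, not the encoding from the drafts: the algorithm names are hypothetical, HMAC is used purely as a placeholder for real signature algorithms, and the rule that every component must verify is an assumption made for the sketch.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, Dict, List

# Placeholder primitives: HMAC with different hashes stands in for real
# algorithms (e.g. RSA, ECDSA, a post-quantum scheme). HMAC is symmetric and
# is NOT a public-key signature; it only keeps the sketch self-contained.
def _mac(hash_name: str) -> Callable[[bytes, bytes], bytes]:
    def sign(key: bytes, message: bytes) -> bytes:
        return hmac.new(key, message, hash_name).digest()
    return sign

# Hypothetical algorithm identifiers (real ones would be OIDs).
ALGORITHMS: Dict[str, Callable[[bytes, bytes], bytes]] = {
    "toy-alg-1": _mac("sha256"),
    "toy-alg-2": _mac("sha512"),
}

@dataclass
class ComponentKey:
    algorithm: str  # which component algorithm this key belongs to
    key: bytes

def composite_sign(keys: List[ComponentKey], message: bytes) -> List[bytes]:
    """One composite key yields one composite signature: a sequence of
    component signatures in the same order as the component keys."""
    return [ALGORITHMS[k.algorithm](k.key, message) for k in keys]

def composite_verify(keys: List[ComponentKey], message: bytes,
                     signature: List[bytes]) -> bool:
    """Assumed policy for this sketch: every component must verify."""
    if len(keys) != len(signature):
        return False
    return all(
        hmac.compare_digest(ALGORITHMS[k.algorithm](k.key, message), s)
        for k, s in zip(keys, signature)
    )
```

From the caller's point of view there is still a single key and a single signature, which is the property the speaker relies on for compatibility with existing processing rules.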

C

Well, you can drop in any of the algorithms that are already defined, so you can imagine a composite key using RSA, ECDSA and a post-quantum algorithm, and you have different classes of devices that will use the different algorithms they support, for example. It can be extended to combine not just two but three or four, depending on your use cases. And it's not just related to quantum: think about this as a tool that can be used any time you have this type of uncertainty. You don't know

C

how much you can rely on the old algorithms, you don't know if the new algorithms are ready for you, so you can try to combine them. And, of course, by using one signature and one public key, it is also compatible with revocation information, so you can do OCSP, you can do CRLs. You don't have to define anything else.

C

The downside: backward compatibility requires the support of a new algorithm identifier. This is not new. We do that all the time when we deploy a new algorithm, so it actually fits into PKI operations very well. The downside here is that the large keys are sent every time, because they are part of the certificate, so you cannot really excise them from there.

C

One note: the ITU just published, or it was published on the mailing list, the X.510, which uses a similar approach. So we hope that if some work is adopted, we can try to limit the incompatibilities there. I hope that we can do a good job. So what's the current status of the discussion? Of course, messages have been sent to many different lists, SECDISPATCH, LAMPS and PKIX, to try to gather some feedback. Of course, mixed reactions, as usual.

C

However, one of the things that came out fairly clearly is that this is a problem that needs to be solved. Many people noticed that, because of the time frame that we're looking at, the sooner we start to work on this, the better. PKI providers (I work in an industry where we leverage a lot of certificates, in the billions) and others, I suppose, like DigiCert and other CSPs, actually want to work on this, because they want to be able to have at least one solution that they can deploy.

C

Other proposals may emerge that lean more towards "let's reinvent the wheel". I'm not saying it's not possible; it's always possible. I would like to have one solution that is easy, like the one that we propose, right away, and then we can look at how we can optimize. OK, we can have more optimized solutions in this space. Largely, we have not heard from the TLS community about this idea, mostly because we didn't engage with the community, but if you guys want to start engaging and telling us what you think...

C

Of course, we are aware of the fact that large keys are a problem for performance here. However, this could be one tool that doesn't require many changes in protocols today. So, OK, conclusions: this is a problem that we should try to solve, and composite crypto is a possible tool; not the only one, but one simple tool that we can standardize. We have two I-Ds. One is for the problem statement and an overview of the solution.

D

Well, a question: are we in the discussion phase now, Richard? So, I can't speak for other applications, but it's a little hard to understand how this brings a lot of value to, at least, the Web PKI story, and the reason for that is that, basically, we have two choices. So imagine you have a situation where we are now.

D

We know there's not a viable quantum computer, and then someday there will be a quantum computer, perhaps, and we're trying to get past that transition point. So now we can either accept non-composite signatures or we cannot; that is, standard RSA signatures and EC signatures, or we cannot. If we do, then the vast majority of servers in the world will only have those signatures, and when there's a quantum computer, we're screwed.

D

Conversely, if we don't, then we're basically forcing everybody in the world to get composite signatures, which doesn't seem super likely. And so, as a practical matter, I don't see how to use this in a Web PKI environment. So, like I said, TLS is used everywhere, so I'm sure in some closed environments this is useful, but it's hard to understand how this works in the web environment.

A

D

C

I respectfully disagree with you. I think that this solution is very important specifically for protecting keys that may be vulnerable to factorization; not so much the server side, but think about CAs, the root CAs that we need to protect. By using this approach... usually, verifying with this type of algorithm is a lot easier than signing. So with software updates, you can actually verify and protect the chain.

C

I don't think there's going to be that much of an impact. Or you could think about a solution where you deploy the multi-algorithm approach at the CA level, with end entities just using the crypto that they want to use at that time, because at that point it is easy to revoke one CA whenever the algorithms are going to be broken. And you know that you can actually, if you want to, revoke a CA and then spin up a new one, I think.

D

No, no, it's not, because the situation here is that someday in the future all classical algorithms are broken, and now I cannot trust any signature made with a classical algorithm. And so, if you want me to flip that switch, you want me to turn off all classical algorithms, and I haven't already pre-seeded the environment with everybody having non-classical signatures, then I just destroyed the web, which is exactly what happened

D

when we tried to switch over from SHA-1. And so, in order to actually make this work, what we have to do is go forward by requiring everybody to have post-quantum signatures on every certificate, and then only once that is done are we like, well, we're going to stop trusting classical-only signatures. And I don't see us doing that, I don't see the browsers requiring that, and therefore...

A

D

Yeah, I was just answering the question about TLS. I think that for software-update-type applications like this, this kind of thing makes sense. Although I do wonder, in those cases, why bother with hybrid? Basically, why not just use hash signatures, which we all agree are fine? So we have two minutes.

E

Benjamin. So, similar to that, also on the TLS side of things, there was some talk of the hybrid key exchange stuff, and I think one of the things that came up was how to do the encoding. Do you add this generic combinator thing, or do you just allocate code points for some pairs and move on with life? And the preference, well, my preference at least, was overwhelmingly to

E

just allocate some code points for pairs or triples or whatever you want and move on with life. And I think this is going in the wrong direction here, where, if you want to do a hybrid thing, building these combinators and saying, oh, if you have subsets then it's okay, and all this stuff, just adds a whole lot of complexity which bleeds throughout the entire system. We went through a lot of trouble in TLS 1.3 to simplify the signature algorithm negotiation, and it's not clear to me...

E

But if the client trusts an ABC trust anchor, presumably it knows what C is, so that scenario seems kind of bizarre. And the cost of all of this stuff is that we need to be able to express the combinators; the verifiers need this complicated matching thing. As you note in the slides, we have to move the policy into the crypto libraries, which is actually the wrong direction, because the crypto libraries are fairly low-level and don't really know what's being used. I don't see the benefit of this.

C

Read the draft carefully; I think that it will answer most of your questions. Pushing this into the crypto library, actually, I think is a very good idea, in the sense that, operationally, for PKIs... you're talking about TLS, but I'm protecting my PKI. I need to protect my clients and I need to protect my infrastructure. So my consideration for now is: I need to protect against factorization. All these considerations for TLS are very important, and they have their place.

C

I think that there might be some other solutions that we can come up with that are optimized for these use cases, like for TLS, for example, with specific algorithms, like some experiments have tried to do. I think we should have a generic solution that doesn't complicate operations. Actually, for the application layer it is easier compared to multiple certificates or multiple keys: as I showed before, protocols don't need to be changed. This could be a tool that you can deploy whenever other tools are not available or don't fit.

C

C

A

I think we've gone through this with some other stuff in the past, where LAMPS wasn't sure if there was a broader applicability and they bumped it up to us to scope that. And I think that the conclusion we have here, based on the comments from the floor, is that this is not really appropriate to, you know, broader situations like TLS, so it's appropriate to consider in that narrow scope. Does that seem generally okay to people? We'll confirm this. I'll tell you what, let's not have further discussion.

C

A

C

C

Some considerations for this work. The first one is: the larger the PKI, as I said before, the higher the cost of providing revocation information. In general, as a best practice, today we pre-sign one response for each certificate in every period, and in particular, what we notice is that what we sign over and over again is: there's no revocation information.

C

The only difference is the serial number, but it's the same type of signature. And think about the situation for CSPs, large CSPs, where they manage hundreds of thousands of different CAs, each of which can have millions of certificates. So one consideration is: can we do something to optimize for the most common case, where the certificate is valid, so there's no additional information that we actually want to provide? The second consideration is about when you ask the status of a certificate.

C

Usually what you really want to know is: is this certificate, the whole chain, valid up to something that I trust? Can we limit the number of needed round trips? Some discussion went on on the mailing list about this, and, you know, some people pointed out that OCSP, since RFC 2560, allows the responder to attach multiple responses

C

even if the client doesn't ask for multiple requests, but this has never been used to convey the full status of the chain because of, I think, some trust issues that we're going to look into a little later. But one of the points that I've tried to make is that maybe we can do something to provide the full chain information to the client in such a way that the client can really trust that information. The third and last consideration is that in the IETF we focus on,

C

you know, the Internet PKI, where the trust model uses mostly certificates on the server side and other techniques to authenticate the client side. But, you know, recently we've seen the birth of new environments, for example IoT, industrial, and the industry that I work with, cable, where we have huge PKIs. PKIs tend to grow in size, and in these environments, signing revocation information is even more costly and can drive up the cost of certificates. Now what?

C

How do we address the distribution of revocation information today? Mostly through CRLs or OCSP, and other proprietary methods, but I'm not going through those, because this would be infinite. So how do we do it with CRLs? Well, a CRL is an authenticated list of serial numbers that's signed by the CA or a delegated CA and says that these certificates should not be trusted anymore, for different reasons, and there's a syntax for that.

C

The problem with CRLs, and this is why we actually shifted mostly towards OCSP, is that the size of the CRL is fairly unpredictable and tends to grow beyond acceptable sizes. You know, it grows with revocation events and shrinks with expiration events, so it's very unpredictable. It was noted on the mailing list that we can use the issuing distribution point to bound the worst-case scenario. However, this is a static partition, as was noted. So maybe, you know, some approach with ranges could fix this problem. So, OCSP responses.

C

We have to calculate every single OCSP response. There's a problem in conveying the full chain here, because, you know, an OCSP response today could be signed by the CA, and if you try to say that the CA says "this certificate is not revoked, I'm not revoked, and my parent is not revoked", that is probably not a great trust model. But we could potentially attach OCSP responses signed by a responder further up in the chain, and so limit the round trips.

C

Just to give you an example of the proposal: the proposal here is to do range queries in a PKI. This is an extreme example of a PKI where you have 11.5 million certificates and only 3 revocations. You know, when doing range queries you have to sign the three different revoked certificates plus the intermediate ranges between them. So with 2x + 1 signatures you can provide the same information, instead of signing 11.5 million responses. It's an extreme example, but this is the type of optimization that we're looking for.
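The 2x + 1 count from the example above can be reproduced with a small sketch. It assumes serial numbers form one contiguous integer range and that each tuple would be covered by one signed response; the function names and the tuple layout are illustrative, not taken from the proposal.

```python
from typing import List, Tuple

Range = Tuple[int, int, str]  # (first serial, last serial, status)

def status_ranges(revoked: List[int], first: int, last: int) -> List[Range]:
    """Partition the serials [first, last] into units that each need one
    signature: one per revoked serial, plus one per non-empty gap of good
    serials. With x revocations this yields at most 2x + 1 units."""
    ranges: List[Range] = []
    cursor = first
    for serial in sorted(set(revoked)):
        if not first <= serial <= last:
            raise ValueError(f"serial {serial} outside [{first}, {last}]")
        if cursor < serial:
            ranges.append((cursor, serial - 1, "good"))
        ranges.append((serial, serial, "revoked"))
        cursor = serial + 1
    if cursor <= last:
        ranges.append((cursor, last, "good"))
    return ranges

def lookup(ranges: List[Range], serial: int) -> str:
    """Find the status of one serial by locating the range that covers it."""
    for lo, hi, status in ranges:
        if lo <= serial <= hi:
            return status
    raise KeyError(serial)

# The extreme case from the talk: 11.5 million certificates, 3 revocations.
# The three revoked serial numbers here are made up for the illustration.
example = status_ranges([1000, 5_000_000, 9_999_999], 1, 11_500_000)
```

The number of entries depends only on the revoked serials, not on the size of the population, which is the cost property the speaker returns to in his closing comments.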

C

C

C

Last comments. There were some requests for some figures: how much can we save in costs, right? Probably the real dollar costs will be difficult to provide, because this is usually secret sauce, but we can provide the ratio of revoked against population, because this solution actually changes one property: the costs are not related to the active population in this case, but are related to the revoked entries in the population.

C

A

So, to kick off discussion here, and again, keep this focused on dispatch outcomes: I think the chairs would propose that this piece of work looks about the right size for something like a small, focused working group. It does not seem like something that fits in an existing working group, and it's probably too big to be something AD-sponsored. So that's our initial proposal. With that, please discuss.

D

Part of the discussion is whether this needs any protocol engineering, because it seems that there is a really obvious solution to this problem that doesn't require much protocol engineering, which is to do Merkle signatures, Merkle trees, and then you would basically have one signature, and all you need is a way to handle that. So I'm not sure we need a working group. I guess I'd like to see some evidence that this is actually a real problem before we spin up a working group for it.
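The Merkle-tree alternative raised here can be sketched as follows: the responder signs only the tree root, and each status entry is accompanied by a short inclusion proof. This is a minimal illustration under assumptions, not a specified protocol; the leaf contents are placeholders, and duplicating the last node on odd levels is a simplification that a real design would replace with a more careful padding rule.

```python
import hashlib
from typing import List, Tuple

Proof = List[Tuple[bytes, bool]]  # (sibling hash, sibling is on the left)

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def merkle_root_and_proof(leaves: List[bytes], index: int) -> Tuple[bytes, Proof]:
    """Return the root of the tree over `leaves` (non-empty) and the
    inclusion proof for the leaf at `index`."""
    level = [_h(b"\x00" + leaf) for leaf in leaves]  # domain-separate leaves
    proof: Proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # simplification: duplicate the last node
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = [_h(b"\x01" + level[i] + level[i + 1])
                 for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof

def verify_proof(leaf: bytes, proof: Proof, root: bytes) -> bool:
    """Recompute the path from the leaf to the root and compare."""
    node = _h(b"\x00" + leaf)
    for sibling, sibling_is_left in proof:
        if sibling_is_left:
            node = _h(b"\x01" + sibling + node)
        else:
            node = _h(b"\x01" + node + sibling)
    return node == root
```

Only the root would need a signature, so the signing cost stays constant while the proof length grows logarithmically with the number of entries.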

A

D

G

Hallam-Baker. I support this work, and I actually proposed this exact solution in '98, when we had the issuing distribution point patent dispute issue. To respond to Ekr: yes, the Micali revocation trees or Kocher's revocation trees are another approach. I'm not sure; I think we'd have to consider which one. But the other thing that you might want to consider is the work that I did with Rob Stradling, which was on CRL compression.

G

H

Labs. I'll mention what we did for ATSC, which is the over-the-air television broadcast standard that's commonly used in North America and other countries. We actually used OCSP as is, but we staple the OCSP responses to specific broadcast emissions. So if you're interested in, for instance, channel A, you set your tuner on channel A, and the client only receives the OCSP responses that are related to the certificates associated with channel A.

H

If you want channel B's responses, you go and tune to channel B. We were able to use OCSP as is, just using it in the stapled way it was intended to be used for OCSP stapling. I don't think that you necessarily have to change OCSP for that. I think it's more a question of how you actually narrow the amount of responses that the client is actually interested in, and allow the client to discover places to obtain those responses, rather than coming up with new signalling.

D

D

For this kind of work, what I think is different and very valuable, for those who are skeptical of this work to be convinced otherwise, is to see the data, right? In practice, the telemetry of how much time, resources, energy, round trips, whatever, can be saved by, you know, the different techniques that are being proposed, whether it's Web PKI or other PKI deployments. You know, that will help, I think, motivate a lot more support for different use cases and different kinds of data structures. So I'd love to see that data.

I

J

K

Daniel Kahn Gillmor. I'm curious to know whether you've thought about how this interacts with OCSP stapling. Are you expecting to ship the range in the stapled region during the TLS handshake, and if so, what is the client going to do who doesn't understand this? When they see a range, is it going to break the stapling? I'm not sure you need to answer that question right now, since you have negative 60 seconds, but it seems like a potentially problematic situation.

A

On finding a solution: so yeah, I think it sounds like nobody objected, at least, to this idea that if we're going to do any work here, then it should be in a new small working group. I think we can have some discussion on the list to confirm that there's energy to do work here at all. So thanks, Max. I'm going to call Nick Sullivan next, if that's all right. We've had a last-minute agenda change proposed to the chairs directly; I'm just going to swap the next two proposals, so we're going to do Nick and then Justin, if that's all right. Okay.

A

L

J

J

The motivation here is: wouldn't it be nice to have some online equivalent to cash? Something like talking to one service and being issued some sort of credit that you could spend at another service, such that it's unlinkable: the person who issued the credit to you is unable to know where you spent it. This would be something that would be nice to have, and to have work at Internet scale.

J

Imagine having an envelope, writing a serial number on it, adding a piece of carbon paper in there, sealing it up and sending it to an issuer that would sign the envelope, saying "this is legal tender", without knowing what the serial number is. You'd open it up, and then you have legal tender that you could spend somewhere else. So the issuer does not know the serial number, and it's something that you could use somewhere else.

J

J

The problem space here was solving CAPTCHAs, specifically solving CAPTCHAs on multiple different websites, so that your experience is that you see fewer CAPTCHAs. You can imagine there are a hundred different websites that all have the same CAPTCHA provider. If you solve a CAPTCHA on one website, you get a cookie that proves that you solved that CAPTCHA. This cookie is not transferable from one website to the other without leaking your identity.

J

J

You send it to the CAPTCHA provider, they sign the blinded token and send it back to you, and then the next time that you encounter a CAPTCHA, you unblind the token and you send it to the CAPTCHA provider, and it's able to verify that this was a valid token, but not know which site it was that issued it or who the issuer was. There are two constructions underneath that make this possible. Blinded RSA: this is the original ecash scheme.
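The blind-then-sign-then-unblind flow with blinded RSA can be sketched with textbook arithmetic. This is a toy illustration only: the key is the classic tiny textbook example (far too small for real use), there is no padding, and the function names are invented for the sketch. It needs Python 3.8 or later for the modular inverse via `pow(r, -1, N)`.

```python
import hashlib
from math import gcd

# Tiny textbook RSA key, for illustration only.
P, Q = 61, 53
N = P * Q    # 3233, public modulus
E = 17       # public exponent
D = 2753     # private exponent, E * D = 1 (mod 780)

def token_to_int(token: bytes) -> int:
    """Map a token (the 'serial number') to an integer modulo N."""
    return int.from_bytes(hashlib.sha256(token).digest(), "big") % N

def blind(m: int, r: int) -> int:
    """Client: hide m behind a blinding factor r (the 'envelope')."""
    if gcd(r, N) != 1:
        raise ValueError("blinding factor must be invertible modulo N")
    return (m * pow(r, E, N)) % N

def issuer_sign(blinded: int) -> int:
    """Issuer: sign without learning m."""
    return pow(blinded, D, N)

def unblind(blind_sig: int, r: int) -> int:
    """Client: remove the blinding factor to obtain a signature on m."""
    return (blind_sig * pow(r, -1, N)) % N

def verify(m: int, sig: int) -> bool:
    """Anyone holding the public key (N, E) can verify."""
    return pow(sig, E, N) == m
```

Because verification uses only the public key, any party can check the token, which matches the "publicly verifiable" property mentioned for this construction.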

J

This has the feature that these tokens are publicly verifiable, so one party can issue and any party can verify. The version that we came up with for Privacy Pass is called VOPRF. This is actually currently being looked at at the CFRG. It's been adopted as a working group item to define how this works. It's an oblivious pseudo-random function with verifiability.
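The oblivious evaluation at the heart of such a scheme can be sketched as below. Several things here are assumptions for illustration: a small multiplicative group modulo a safe prime stands in for the elliptic curve, the parameters are toy-sized, the function names are invented, and the "verifiable" part (a proof that the server used its committed key) is left out entirely. It needs Python 3.8 or later.

```python
import hashlib
import secrets

# Toy group: the squares modulo the safe prime P = 2*Q + 1 form a subgroup
# of prime order Q. A real deployment would use an elliptic-curve group.
P = 2039
Q = 1019

def hash_to_group(data: bytes) -> int:
    """Hash into the order-Q subgroup, avoiding the identity element."""
    h = int.from_bytes(hashlib.sha256(data).digest(), "big") % (P - 3) + 2
    return pow(h, 2, P)  # h is in 2..P-2, so the square is neither 0 nor 1

def client_blind(data: bytes):
    """Client: pick a random exponent r and send H(data)^r."""
    r = secrets.randbelow(Q - 1) + 1  # 1..Q-1
    return r, pow(hash_to_group(data), r, P)

def server_evaluate(blinded: int, k: int) -> int:
    """Server: raise the blinded element to its secret key k."""
    return pow(blinded, k, P)

def client_unblind(evaluated: int, r: int) -> int:
    """Client: strip r, leaving H(data)^k, which the server never saw."""
    return pow(evaluated, pow(r, -1, Q), P)

def server_redeem_check(data: bytes, token: int, k: int) -> bool:
    """Server: at redemption, recompute H(data)^k with the secret key."""
    return token == pow(hash_to_group(data), k, P)
```

Redemption needs the secret key `k`, so only the issuer can check a token, in contrast to the publicly verifiable blinded RSA construction.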

J

Essentially, the only difference with blinded RSA is that it uses elliptic curves, it's more efficient, and it's verifiable by only the issuer, which is a slight constraint that gets you the performance. Now, Privacy Pass itself has running code. We have the code open source. Oh, I have one minute? Okay, great. So there's a Firefox and Chrome extension. This is actively used by over one hundred and thirty thousand people per day, per week.

J

Half a million redemptions or so; this number has probably gone up quite a bit, and we recently released a new version. The reason I'm coming here is that this technology seems to be useful more generally, and there have been a number of different applications of this that have popped up, specifically within the Brave browser, doing ad confirmations. The Privacy Sandbox proposal from Chromium is interested in using this specific technology.

J

J

Okay,

so

I

know

I'm

sort

of

a

little

bit

over

or

not

okay,

okay,

great

so

I'm

almost

done

here.

So

what

is

the

scope

of

work

to

be

done?

What

am

I

asking?

Well,

defining

the

basic

privacy

protocol

in

terms

of

HTTP

headers?

How

do

you

integrate

the

crypto

and

if

there's

specific

features

to

the

use

cases

listed,

can

we

support

those?

And

so

perhaps

this

is

a

mini

working

group

sized

amount

of

work,

maybe

a

full

working

group

sized

amount

of

work?

It's

not

clear.

This

is

why

we're

here

at

dispatch.

J

J

M

M

I

remember

talking

to

you

about

this,

and

one

of

the

differences

is

the

fact

that

the

original

proposal

could

be

verified

offline,

and

this

can't,

right?

That's

correct.

So

I

was

wondering

whether

that

could

be

brought...

Is

there

a

way

to

bring

that

back?

I've

got

use

cases

which

this

would

be

perfect

for,

but

offline

verification

would

be

really

really

useful.

Yeah.

J

D

J

D

D

I

didn't

foresee

FRG,

but

again

so

I

guess

the

question

the

question

I

do

have

is:

is

there

any

new

crypto

engineering

here

that

we're

gonna

need

to

round-trip

through

CFRG?

My

understanding

is

VOPRFs

are

already

being

done

and

that

we

understand

how

to

do

the

one

or

two

bits

of

metadata

with

scheme

without

having

to

like,

add

in

a

bunch

of

these

zero-knowledge

proofs

and

stuff

like

that,

right?

Yeah.

J

N

It's

interesting.

I

think

including

in

the

charter

the

ability

to

have

further

extensions

like

that

private

metadata,

additional

bits

of

data

would

be

useful.

I

think

there

is

still

crypto

work

to

be

done.

We

had

a

scheme

for

the

private

metadata,

but

there

was

an

issue

with

that

that

you

were

able

to

discover

whether

or

not

two

users

had

the

same

bit

there

and

I

think

we

probably

want

to

get

more

eyes,

both

from

CFRG

and

like

people

looking

at

these

protocols

specifically

before

we

call

anything

working

and

not

needing

work.

O

P

J

Q

R

M

A

S

J

Yeah,

so

that's

a

clarifying

point

on

the

interop

point

of

view.

The

recent

release

that

we

did

is

interoperable

with

multiple

CAPTCHA

providers

rather

than

just

Cloudflare

and

the

Cloudflare

extension.

So

it's

one

extension,

multiple

providers,

but

the

mechanism

for

federation

is

not

in

scope.

Cool.

A

All

right,

so

it

sounds

like

there's...

I

think

you're

probably

about

right.

This

is

about

micro

working

group

size,

and

it

sounds

like

there's

several

expressions

of

interest

from

the

floor,

so

I

think,

let's

workshop

the

charter

on

the

list

and,

ADs

willing,

we

can

probably

just

charter

this

directly

without

further

BoF-itude.

ADs

to

the

mic,

please.

L

T

T

What

I'm

talking

about

is

a

message

level

signature

over

an

HTTP

request

and

that's

a

signature

that

would

cover

some

subset

of

the

headers,

the

body,

other

request-line

elements

like

the

URL

and

verb,

and

all

of

that

other

stuff,

and

in

particular,

we're

interested

in

talking

about

detached

signatures

and

not

encapsulated

signatures.

More

on

that

in

just

a

bit.

The

reason

that

there's

a

lot

of

people

out

there

interested

in

solving

this

is

pretty

varied

as

it

turns

out.

People

have

been

approaching

this

in

order

to

prove

possession

of

a

specific

key.

T

There

are

security

protocols,

extensions

to

OAuth

and

the

like

that

are

you

know

you

want

to

have

some

way

to

say

that

I

have

a

particular

application

level

key.

You

want

to

be

able

to

authenticate

a

piece

of

software

which

is

a

slightly

different

but

related

problem

to

key

proofing

possession.

You

want

to

have

sometimes

message

level

integrity.

This

goes

beyond

the

TLS

transport

layer,

integrity

and

also

non-repudiation

and

audit

kind

of

you

know.

I've

got

a

signature

over

the

request

itself

that

might

last

in

logs

and

things

you

know

going

forward.

T

So

the

reason

that

this

is

a

hard

problem,

and

we

don't

already

have

something

built

in,

is

actually

not

the

signature

part.

The

reason

that

this

is

hard

is

because

of

HTTP.

HTTP

gets

transformed,

and

you

know

terminated

and

parsed

and

reconstituted

and

replayed

all

over

the

place.

It's

designed

to

be

able

to

do

that,

and

we

kind

of

expect

it

to

be

able

to

do

that

in

our

systems.

T

Things

like

a

JSON

body

is

gonna

be

handed

to

you

as

an

object

and

not

as

the

series

of

bits

of

the

JSON,

the

HTTP

message

entity

payload

itself,

right?

Parsing

and

reserialization

is

really

really

super

common.

All

of

this

makes

signing

any

of

that

really

really

really

difficult.

You

inevitably

need

to

have

some

type

of

canonicalization

and

normalization

process

in

order

to

make

this

thing

work.
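
To make the canonicalization point concrete, here is a small sketch of a detached signature computed over a normalized subset of a request (verb, target, and selected headers) rather than over the raw bytes on the wire. The `@method`/`@target` line format, the function names, and the use of HMAC-SHA256 as a stand-in for whatever signature algorithm a real scheme would pick are all assumptions for illustration; this does not follow any of the drafts mentioned in the talk, and it does not cover the body.

```python
import hashlib
import hmac


def signature_base(method: str, target: str, headers: dict, covered: list) -> bytes:
    """Build a canonical byte string from the covered request components."""
    # Header names are case-insensitive, so index them in lowercase.
    by_name = {name.lower(): value for name, value in headers.items()}
    lines = [f"@method: {method.upper()}", f"@target: {target}"]
    for name in covered:
        key = name.lower()
        # Collapse whitespace so intermediaries that re-serialize do not break us.
        value = " ".join(by_name[key].split())
        lines.append(f"{key}: {value}")
    return "\n".join(lines).encode("utf-8")


def sign(key: bytes, method: str, target: str, headers: dict, covered: list) -> str:
    """Detached signature: travels next to the request, not around it."""
    base = signature_base(method, target, headers, covered)
    return hmac.new(key, base, hashlib.sha256).hexdigest()


def verify(key: bytes, signature: str, method: str, target: str,
           headers: dict, covered: list) -> bool:
    expected = sign(key, method, target, headers, covered)
    return hmac.compare_digest(expected, signature)


# What the client sent, and what arrived after a proxy rewrote the headers.
key = b"demo-shared-secret"
covered = ["host", "content-type"]
sent = {"Host": "api.example.com", "Content-Type": "application/json"}
received = {"content-type": "application/json  ", "host": "api.example.com",
            "Via": "1.1 proxy"}
sig = sign(key, "POST", "/items?id=1", sent, covered)
still_valid = verify(key, sig, "post", "/items?id=1", received, covered)
```

Because only the listed components are signed, and only after normalization, the reordered, re-cased, and padded headers on the receiving side still verify, while a changed verb or target would not.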

T

So,

first

question:

why

aren't

we

solving

this

using

other

existing

technologies?

So

why

can't

we

just

use

TLS

for

everything?

Well,

TLS

stops

at

the

TLS

terminator,

thus

the

name,

and

in

a

lot

of

cases

in

a

lot

of

applications,

you

need

both

the

integrity

protection

and

the

key

proofing

to

go

beyond

the

TLS

terminator.

Additionally,

there

are

a

lot

of

applications

where

mutual

TLS

doesn't

really

fly.

Anything

that's

being

generated

inside

of

a

web

browser,

so

in

an

SPA

or

something

like

that,

doing

mTLS

from

inside

there

really

doesn't

work.

T

It

is

a

horrible

user

experience.

People

get

prompted

for

personal

certificates

because

of

assumptions

that

we

have

made

on

the

Internet

about

what

a

certificate

in

a

browser

means

and

stands

for

that

don't

really

map

to

the

way

that

we'd

like

to

potentially

use

the

technology.

All

right.

What

about

S-HTTP?

This

got

brought

up,

I

believe,

on

the

dispatch

list,

not

on

secdispatch,

I

forget,

but

there's

and

no

I

don't

mean

HTTPS

I

mean

S-HTTP.

T

T

Are

we

taking

questions

in

the

middle

here,

or

should

we

wait

till

the

end?

Okay,

awesome.

Thank

you

all

right.

So

what

about

using

JOSE?

A

lot

of

people

love

this

for

lots

of

things.

The

thing

about

JOSE

is

that

it's

really

really

good

at

signing

its

payload

that

it

carries

along

with

it

and

again,

if

you're

doing

some

type

of

detached

signature,

you

need

a

way

to

get

that

payload

into

the

JOSE

world

and

then

get

it

back

out

so

that

you

can

carry

the

signature

along.

T

It's

not

really

set

up

to

do

this

kind

of

thing,

and

if

we

were

to

encapsulate

all

of

the

HTTP

stuff

so

put

the

verbs

and

the

actions

and

the

URLs

and

queries

and

everything

inside

a

JOSE

object

and

just

stuff

that

inside

of

an

HTTP

request,

you

end

up

with

SOAP

with

curly

braces

and

let's

really

not

go

there.

All

right

so

looking

around

there

and

the

reason

I

wanted

to

bring

this

into

sec

dispatch

is

that

there

are

at

least

five

options

out

there.

T

There

are

actually

now

seven

thanks

to

Annabel

publishing

two

more

this

week,

but

to

be

fair,

those

are

a

remix

of

one

of

the

ones

up

here,

so

I

didn't

update

the

slides

and

I

am

personally

responsible

for

two

of

these,

which

are

different

from

each

other,

because

reasons

and

all

of

these

exist

today

and

are

incompatible

with

each

other

and

solve

slightly

different

slices

of

the

overall

problem.

So

Cavage

signatures,

which

is

probably

one

of

the

Yes?

So,

Martin

Thomson.

I

can

add

at

least

two

more

to

that

list.

T

Yeah,

I'm

sure.

I

said

at

least.

People

have

been

hashing

this

in

different

ways

So,

I

should

add,

these

are

specifically

the

detached

non-encapsulated

versions.

If

you

want

to

go

encapsulated

signed

HTTP

requests,

it's

like

you

can't

throw

a

dead

rat

around

here

without

running

into

one.

There's

a

lot.

So

I'm

talking

about

specifically

detached

stuff

here.

Cavage

signatures

are

probably

one

of

the

most

widely

used

ones

out

there.

They're

a

little

bit

limited

about

what

they

can

canonicalize

and

sign,

but

it

kind

of

works.

T

One

of

the

worst

things

about

this

particular

draft,

though,

is

that

it

is

entirely

detached

from

the

IETF

process.

It

is

an

I-D

that

people

outside

the

IETF

have

been

working

on

and

updating

and

changing

more

on

that

in

a

moment,

and

it's

just

kind

of

there,

but

people

are

using

it.

OAuth

DPoP

and

OAuth

PoP,

which,

yes,

those

are

two

completely

different

things.

T

And,

as

you

can

see,

they

attack

different

parts

of

the

problem.

DPoP

is

all

about

key

proof

of

possession,

whereas

OAuth

PoP

is

about

token

presentation

and

a

message

integrity

component,

with

a

key

proof

of

that

presentation,

that

kind

of

comes

along

for

the

ride,

and

that

latter

one

is

also

an

expired

working

group

draft,

because

you

know

it

never

really

kind

of

caught

on.

The

new

XYZ

project

that

I've

been

working

on,

which

we

talked

about

at

the

TxAuth

BoF

the

other

day.

T

All

that

that

does

is

it

signs

the

body.

It

doesn't

touch

anything

else,

so

that

is