►

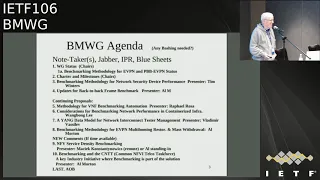

From YouTube: IETF106-BMWG-20191120-1330

Description

BMWG meeting session at IETF106

2019/11/20 1330

https://datatracker.ietf.org/meeting/106/proceedings/

If anybody's really tall, when you get up here, feel free to make adjustments. Hi, everybody, welcome to the Benchmarking Methodology Working Group. My name is Al Morton, one of the co-chairs. My fellow co-chair here (well, not a fellow) is Sarah Banks. Say hi, Sarah. She has said hello, so our session is starting now, a little late.

It's Wednesday, so we have the Note Well slide, which you should have seen a few times by now. We work according to certain IETF processes and policies. One of the most important is the video recording, because that's going on right now, and then the disclosure of IPR, which we ask you to make as quickly as possible. But the most important thing is the item I've added at the top.

Thank you. If you come to the mic to speak or make a comment, make sure Louise can see your badge so that he can get the spelling of your name; we're taking minutes today in the Etherpad allocated to our working group. Oh, yes: say your name as the little tag says, and Louise will be able to spell it as correctly as possible.

So we've got the note-taker, and everybody can help on the Etherpad. We've had trouble with Jabber in the past. Is anybody connected to Jabber for this group? Yeah. I doubt there'll be anybody there, but I appreciate that you've helped us out with this in the past; it's a big help, because we're supposed to provide it. I've just mentioned the IPR, and the blue sheets are going around.

This is where you sign your name. There's only one blue sheet, because we're a fairly small group, so make sure you get your name on it, along with your company affiliation and so forth. That covers the administrative stuff, except for agenda approval. First we're going to do the working group status; we'll look at our charter and milestones briefly.

We have some sins to apologize for there. Then we'll look to Tim Winters, who I think is here (yes, hi Tim), for a presentation on next-generation firewall and network security device performance. I'm authoring a draft on back-to-back frame benchmarks, which updates that benchmark in RFC 2544. That gets us through the working group drafts, and then I think we'll have plenty of time for the continuing proposals, which include the methodology for VNF benchmarking automation. Raphael, are you here? Raphael is at lunch. Okay, hopefully he joins in time.

We may need to shuffle that a little bit. We have Wang Bong Lee, who is going to give us a presentation on considerations for benchmarking in containerized infrastructure, right on topic. Then there's a YANG data model for network interconnect tester management; Vladimir Vassilev is here. He's had his draft available for quite a while, and this is the first time Vladimir has been asked to present it, following a successful hackathon over the weekend. I think he also showed it at the Hackdemo Happy Hour.

The last one we originally wanted to cover here was the benchmarking methodology for EVPN multihoming devices and restoration, which Jim Uttaro and I have updated, and there are new comments on service density benchmarking. But we don't have anybody here who can really take them.

That's a much better volume. Thank you. Okay, all right! Thank you for that agenda bash and for being ready to go here, much appreciated, and thank you for lending your audio expertise. I should also introduce our area director advisor, Warren Kumari, here at the front, dressed today like a strawberry, but that's cool. Very cool, actually. So, good.

So let's go ahead with those changes. The quick status is that, along the way, we adopted the back-to-back frame benchmarking draft, and that meant we got some comments from folks, which I almost forgot to incorporate; they've been coming in very nicely. So all I had to do was wait until the submission window opened again, and now there is a new version of that draft available. I apologize for missing the regular deadline.

I don't expect a lot of comments on that today, but we'll talk about the ones we got and how we've resolved them. The new proposals keep coming; if you don't see your name here, it means I missed it somehow, but the point is that there are a lot. And here's where we get to apologize and work with our area director to fix all our milestones, which we keep forgetting to do. Yeah, yeah, yeah.

So let's do that. But these are all things where, in fact, some of them (for example, the EVPN benchmarking methodology) have moved on: I think we've pushed the button on the publication request, right? So for that one we have a write-up to do, the document shepherd's write-up. All done? Oh, cool, cool, okay! Great!

So then I guess it's in Warren's hands. That's fine! That's fine! It's a busy week, I know, so let's all be nice to each other, as I said at the start. No problem. So we're woefully behind in fixing this, but we will do it. Okay: new RFCs, none; charter update, none.

But also, if you know anything about testing, you can read our fundamental RFCs (you'll quickly find out from the list what they are) and get on board with what we try to accomplish here. It's laboratory testing, primarily of data planes, sometimes control planes, and so forth. For folks who've just joined us: the blue sheets have made it to the front, so please sign the blue sheet so that we don't get a broom-closet-sized room next time.

Yeah, well, probably. You know, we try to keep expectations realistic, and that August 2018 milestone was always aspirational. So, okay, I think that's... oh yeah, and this came up. I just sent comments to the author team of the considerations for containerized infrastructure draft. We do have a standard paragraph that helps us get through security considerations review. Feel free to modify it, but always try to put something like this in, because the key part is that the activities described in this memo are limited to technology characterization using controlled stimuli in a laboratory environment.

We had a bunch of people in security go, "What the hell are you doing?" and then they finally get to the end paragraph and they go, "Oh." By then they've done a bunch of typing. It's better if they look at this first, I guess; I don't know. So anyway, it's a good starting place. I don't think this is the final word; feel free to edit it as you go. I guess that's it. Yeah.

So I just want to make a quick note, especially for newcomers with new drafts. BMWG only issues, I believe, informational-track drafts, or rather RFCs. We are not on the standards track. I bring this up because the last document we just sent in for publication went in as standards track, and Cynthia actually caught it and came back and said, "Did you intend this, or did you mean for it to be informational?" And even I missed that.

It's easy to do, because so much of the IETF tends to be on the standards track, so we tend to overlook it. So I just wanted to call out: while you're cutting and pasting the paragraph that Al just had on the screen into your draft, make sure that the status is informational. If you feel there's some particular need for an exception, I guess you could let us know, but we would generally expect it to be informational.

By the way, the history and the reason for that is that at the time it was decided (this group goes back to 1989, by the way), there was no process to move testing Internet-Drafts or RFCs along the standards track. You had to show interoperability and deployment in order to advance along the standards track.

In the IPPM working group, the IP Performance Metrics working group, we figured out a way to get our drafts and RFCs on the standards track by proving interoperability in the form of measurement equivalence. We have a process for doing that, and that process would very much apply to benchmarking methodology drafts; we could modify it.

Let me tell you, it was a lot of work, but it was worthwhile in the end. So this is something we could talk about some more. The last time I raised it, when the IPPM process was new, people weren't so interested. In fact, our industry has been completely satisfied with taking these informational documents, even though they contain all these requirements terms, and building their equipment to satisfy those requirements. So if there's no need from the community, we won't pursue it.

Okay, so I'm presenting on a working group draft, next-generation firewall performance testing. We have a couple of updates. We posted a new draft yesterday (yeah, I think a new one got pushed yesterday), and I'm going to talk about some of the changes and highlight them. Just a quick reminder; this is the reminder slide. This is really about creating performance testing for next-generation firewalls. The last IETF document for benchmarking firewalls was 15 years ago, so it's probably time we updated it.

Most of the work hasn't been happening directly on the benchmarking working group mailing list. It's been happening through a group of companies that have gotten together in NetSecOPEN: basically two labs, three tool vendors, and eight firewall members, who have been developing all of the sections in this draft. So I'm going to report on our updates. The first thing is that we've actually started beta testing. We have several firewalls in labs, both at the UNH-IOL and at EANTC, that are actually running the tests.

One of the things we're doing, and this is going to come up, is comparing measurements from multiple tools. We have multiple tools at multiple labs, and we're finding that, surprise, surprise, not everything was measured exactly the same. So we're going through and figuring out what makes the most sense. For things like time to first byte, we're negotiating how to measure them properly.

One thing we want to make sure of as part of this is that no matter what lab you go to or what tool you use, you're going to get the same answer. That's a big part of the effort right now. It's a beta test, and we're also comparing measurements from different firewalls to see what affects them and what doesn't; I'll talk more about some of that output in a second.
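To make "same answer regardless of lab or tool" checkable, one simple option (our illustration, not something the draft specifies) is to compute each KPI's relative spread across (lab, tool) sources and flag those that exceed a tolerance. The function names, source labels, and the 5% default tolerance below are hypothetical placeholders.

```python
from statistics import mean
from typing import Dict, List


def relative_spread(values: List[float]) -> float:
    """(max - min) / mean of one KPI as reported by different labs or tools."""
    if not values:
        raise ValueError("need at least one measurement")
    center = mean(values)
    if center == 0:
        raise ValueError("cannot normalize the spread around a zero mean")
    return (max(values) - min(values)) / center


def flag_disagreements(results: Dict[str, Dict[str, float]],
                       tolerance: float = 0.05) -> List[str]:
    """Return the KPIs whose relative spread across sources exceeds the tolerance.

    results maps a KPI name to {source label: measured value}, where a source
    is a (lab, tool) combination. The 5% default is an arbitrary placeholder.
    """
    return sorted(
        kpi for kpi, by_source in results.items()
        if relative_spread(list(by_source.values())) > tolerance
    )


if __name__ == "__main__":
    # Hypothetical numbers for illustration only.
    example = {
        "throughput_gbps": {"lab_a/tool_1": 9.8, "lab_b/tool_2": 9.9},
        "time_to_first_byte_ms": {"lab_a/tool_1": 2.0, "lab_b/tool_2": 3.0},
    }
    print(flag_disagreements(example))
```

A KPI that keeps getting flagged, like time to first byte in the made-up example, is a candidate for tightening the measurement definition rather than for blaming a device.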

That's all ongoing right now. I think we're going to wrap up probably before the end of the year, and we'll have our first set of results for the companies to publish; that's where we are timeline-wise. I know that in the charter we have a date that we've missed. I think we're probably going to finish the actual testing and be able to finalize the draft by the next IETF. We should have everything in a better spot to go to working group last call.

We're calling it beta because we're going through multiple runs: we do a run, then we adjust, exchange emails, and discuss. You're going to see two of the things we saw in the first round on firewalls, where we said, "Oh, thank you, yeah!" That's really why we're calling it beta. Updated firewall configuration: these are two of them.

The original draft had a SHOULD for configuring ACLs, and now it says MUST. Basically, what we found is that ACLs obviously affected performance, and realistically most deployed firewalls will have some number of ACLs. So we said every firewall should do this, so they can all be measured correctly with the right ACLs. At one of the labs, the firewall vendor didn't have them configured originally, and the other labs did. We've worked that out.

Another one that came up was traffic logging. The original draft said all traffic, which obviously is going to have a big impact on firewall performance. We updated it to say flows, so it doesn't have to be every packet that goes through the firewall. The performance was going to change drastically if every packet had to be logged during the actual testing.

The other updates we made to the draft: there are two main ones. I'm going to talk about the second bullet first, because it's easier to talk about. We had a lot of extra KPIs that weren't necessary, and as we went through the beta testing, we said, "This is of zero value," so we removed a lot of those, a lot of the original ideas. As we actually ran the tests, we found there wasn't a whole lot of value in them, so we're removing the extraneous KPIs.

If you do a diff, you can see which ones came out; I gave an example of a couple of test cases where it just made more sense. The other thing we removed, which we weren't currently measuring, was application transaction latency. We weren't sure it was the best KPI to measure things with, so we took it out. When we took it out, there were several places where we had wanted to measure it.

We found better ways to measure those values, so we basically took it out and are using the better measurements going forward. We think we've got it better now, and like I said, we're hoping to wrap up the testing for the first set of firewalls; after that, it will be more turn-the-crank going forward. Yeah, I think that's everything.

Your ideas? Yeah, well, one of them is definitely this. We have a change log, and we've made some decisions; I think some of that should go directly to the benchmarking list as we go forward and as we decide things. Some of these things are happening within our group, and when we make changes to the draft we should be clear about what's going on. I think we're now at the point where we should be doing that, so that's something I'm going to bring up with the benchmarking working group.

On these items, I appreciate there may be a need to anonymize the specific pieces of equipment involved. Yeah, that's fine. We don't really care whose device broke what, and so forth. It's really just about how we clarify things so that the measurements are clear, and it ends up ensuring the kind of multi-device equivalent measurements I was talking about. That would be great. Yep.

And another thing we did: we started putting the draft in Git to help us track changes. We can definitely make that link available so that people can see commits and pull requests as they go in, and easily see what changes are made. That doesn't give the context, but I think it will help. The other thing we need to do a better job on is communicating when we decide things as a group.

Yeah, thanks for the presentation, Tim. I wouldn't expect too many changes anymore, hopefully. And yes, I completely agree: we should have done a better job, and we will try to do a better job of keeping the WG mailing list informed. But from my point of view, I would rather try to progress the draft toward last call or something like that. Not sure what your advice is on that, Al.

Yeah. I don't think we've had the list discussion yet to warrant that. So let's get some reviews from the benchmarking community, and then we can justify the working group last call, I think. And I appreciate, Carsten, that you've given us lots of opportunity to do that. I know that I've provided comments; I know that others have sort of held back.

I think the precondition for us is always to have the conversation on the list, so we can get eyeballs on it and get folks weighing in. Then, as chairs, we can say, yep, there's clear consensus here, or rough consensus, I suppose, but some kind of consensus to move this along to working group last call. I realize I'm saying that out loud and you probably already know it. I guess that's an always-precondition; there's nothing special about it here.

Yeah, okay, thank you. All right, too many buttons. Let's see. There have been lots of comments on this, including from the authors of the search algorithm draft, Maciek and Vratko, and at the last meeting Tim Carlin from UNH offered to provide some comments. Since the original submission deadline, we have addressed Vratko's comments, and you can see all of that discussion in the email on the list.

We know what this device can do in terms of its header processing rate. Frames that have been processed are clearly not in the buffer, so the longest burst you can send before it is too big and packets or frames are lost overstates the buffer; the buffer estimate has to be reduced to account for the header or frame processing. That's the calculation we're trying to put together here, so that we get a more accurate view of the actual buffer size and the buffer time that's available.

So, a few clarifications here in the text that we talked about with the previous testing. The number of back-to-back frames with zero loss reported for large frame sizes was unexpectedly long. These are cases where the large frames, at their frame header rate, didn't exceed the maximum frame header processing rate.

So basically, there were few or no frames in the buffer when you were sending these large frames at the maximum rate you could, and the measurement devices would not necessarily detect that. So we've now got prerequisite testing: any time you get a throughput equal to the maximum theoretical frame rate, you don't test for buffer size there. It's just not possible.

That's one of the ways this update improves things. Then, let's see, this one here: it was found that the actual buffer time in the DUT could be estimated using the results from the throughput tests conducted according to Section 26.1 of RFC 2544. It's apparent that the DUT's frame processing rate tends to increase the implied estimate obtained according to Section 26.4 (that's what I've just been talking about), and a calculation using the throughput measurement can reveal a corrected estimate.

What we have now is a table for the longest burst length of back-to-back frames over repeated tests. We ask that the minimum, maximum, and standard deviation be reported in addition to the average length, and the table makes that possible.
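As an illustration of the reporting this table calls for, here is a minimal Python sketch that summarizes the longest loss-free burst length over repeated trials. The function name and the input values are hypothetical, and it assumes the sample standard deviation (n - 1); the draft's table may define the statistic differently.

```python
import statistics
from typing import Dict, Sequence


def summarize_b2b_trials(burst_lengths: Sequence[int]) -> Dict[str, float]:
    """Summarize the longest loss-free back-to-back burst (in frames) per trial.

    Reports the average plus minimum, maximum and standard deviation.
    Uses the sample standard deviation (n - 1), which is an assumption.
    """
    if len(burst_lengths) < 2:
        raise ValueError("need at least two trials to estimate a standard deviation")
    return {
        "trials": len(burst_lengths),
        "average": statistics.mean(burst_lengths),
        "minimum": min(burst_lengths),
        "maximum": max(burst_lengths),
        "std_dev": statistics.stdev(burst_lengths),
    }


if __name__ == "__main__":
    # Hypothetical burst lengths from five repeated trials.
    print(summarize_b2b_trials([30_210, 30_180, 30_250, 30_195, 30_230]))
```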

Then Vratko asked: hey, look, you're sending these frames at the maximum theoretical rate, the back-to-back rate on the ingress interface.

What if I, with my tester, want to understand the buffer time at a lesser rate? We talked about that a little bit. This is the calculation that lets you figure that out: the actual buffer time for a rate lower than the back-to-back frame rate for that frame size. You can report this value, as it now says in Section 6, but properly labeled, so that it doesn't confuse the situation. And yeah, this is basically what I talked about here.

There are two factors in creating this corrected buffer time. The first is the original implied buffer time, during which some of the frames had been removed from the buffer. We figure out how many have been removed by taking the measured throughput over the maximum theoretical frame rate, and that's the factor we reduce the implied buffer time by. So I think that's it.
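A minimal Python sketch of one reading of the correction described here, for illustration only (consult the draft for the normative formula). It assumes the implied buffer time is the average longest burst divided by the maximum theoretical frame rate, and that the fraction of frames the DUT forwarded during the burst is measured throughput divided by that maximum rate; the implied time is then scaled by the remaining fraction. The Ethernet overhead of 20 bytes per frame (preamble, SFD, inter-frame gap) is standard; the function names and example numbers are hypothetical.

```python
# Assumption: corrected = implied * (1 - measured_throughput / max_rate).
# This follows the spoken description, not necessarily the draft's text.

ETHERNET_OVERHEAD_BYTES = 20  # 7-byte preamble + 1-byte SFD + 12-byte inter-frame gap


def max_theoretical_frame_rate(line_rate_bps: float, frame_size_bytes: int) -> float:
    """Maximum Ethernet frames per second for a given line rate and frame size."""
    return line_rate_bps / ((frame_size_bytes + ETHERNET_OVERHEAD_BYTES) * 8)


def implied_buffer_time(avg_b2b_frames: float, max_rate_fps: float) -> float:
    """Seconds of line-rate traffic in the average longest loss-free burst."""
    return avg_b2b_frames / max_rate_fps


def corrected_buffer_time(avg_b2b_frames: float, max_rate_fps: float,
                          measured_throughput_fps: float) -> float:
    """Implied buffer time reduced by the share of frames the DUT forwarded."""
    if measured_throughput_fps >= max_rate_fps:
        # Throughput at the theoretical maximum: the buffer never fills,
        # so buffer size cannot be measured with this test.
        raise ValueError("throughput equals the maximum theoretical frame rate")
    forwarded_fraction = measured_throughput_fps / max_rate_fps
    return implied_buffer_time(avg_b2b_frames, max_rate_fps) * (1.0 - forwarded_fraction)


if __name__ == "__main__":
    rate = max_theoretical_frame_rate(10e9, 64)  # 10GbE, 64-byte frames
    # Hypothetical results: 30,000-frame average burst, 10 Mframe/s throughput.
    print(implied_buffer_time(30_000, rate), corrected_buffer_time(30_000, rate, 10e6))
```

The guard in `corrected_buffer_time` mirrors the prerequisite mentioned earlier: when throughput equals the maximum theoretical rate, there is no buffer time to measure.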

If you go back to the previous slide: in 2544 (I know this is so nitpicky), there's one instance of standard deviation. I had to go back and look to see if it actually calls it out, and it does; it says it should be reported... excuse me, yes, it says SHOULD be reported. And I think your text calls for... go back one more.

So I see one nod; that's good. I think it's good to characterize the variability as well. Very often the difference between MAY and SHOULD was determined, in this particular case twenty years ago, by the ability to look over the individual results. I think it's not a bad idea to make this a RECOMMENDED. It's still not a MUST, so yeah.

You know, that's good; I'm glad you made that check. Section 26.4's description of this test is extremely concise, and we've now got about a six-page memo that goes into it, doing a lot more than 26.4 ever envisioned. I can't wait to get Scott Bradner's review.

Okay, again, just want to check: does the mic work? Yes? Okay, great. Hello, everybody. My name is Manuel Peuster. I'm going to stand in for Raphael, because he was not able to make it, and I'll try to present our updates to our draft on methodology for VNF benchmarking automation, which is now submitted as version 05. If you go to the next slide...

This is basically what has happened so far. Let me briefly put you in the picture. This draft is about automating the benchmarking of virtualized network functions. It's not about which measurements are actually performed on these virtualized network functions; it's more about how to specify the benchmarking experiments, how to specify the results, and how to automate everything end to end. The general idea is that if our network functions are developed entirely in software, we can make much more use of automation and run those experiments fully automated. On the next slide:

We have an overview of what was updated in the draft. First of all, in the draft we present different models that describe, on the one hand, how the experiment should be performed. Those models are called VNF Benchmarking Descriptors, or VNF-BDs for short. Those descriptors can then be used by automation tools that actually run the benchmarks.

We addressed comments that were sent to the mailing list by Luis. I think there were even more comments coming up on the mailing list, and I remember some comments that have not been addressed yet, but there are a number of upcoming reviews on the mailing list, which we are working on. If you go to the next slide...

Yeah, these are the main points in our update this time. First of all, as I said, there is the VNF-PP. This is basically a model that gives you the result of such an automated benchmarking procedure; it's a kind of template into which the automation tools can fill in the measurement results. In general, measurements are divided into tests, subdivided into trials, and so on, and this model describes how you can give those results in a structured manner, so they become machine-readable. So you can define different metrics.

You can define how many trials are executed, things like this, and all of this is captured by the model. So you have a kind of machine-readable outcome of your benchmarking.
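To picture the "tests subdivided into trials" structure, here is a small Python sketch that serializes such results to JSON. It is not the draft's VNF-PP YANG model: every class and field name below (`PerformanceProfile`, `BenchmarkTest`, `Trial`, `metrics`) is hypothetical and only mirrors the hierarchy described above.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass
class Trial:
    """One execution of a test; metric values keyed by (hypothetical) metric name."""
    index: int
    metrics: Dict[str, float]


@dataclass
class BenchmarkTest:
    """One parameter setting of the experiment, repeated over several trials."""
    name: str
    parameters: Dict[str, str]
    trials: List[Trial] = field(default_factory=list)


@dataclass
class PerformanceProfile:
    """Top-level container for the results of one automated benchmarking run."""
    vnf: str
    tests: List[BenchmarkTest] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses inside lists and dicts.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


if __name__ == "__main__":
    profile = PerformanceProfile(vnf="example-ids")
    test = BenchmarkTest(name="throughput-64B", parameters={"frame_size": "64"})
    test.trials.append(Trial(index=1, metrics={"throughput_mbps": 940.0}))
    test.trials.append(Trial(index=2, metrics={"throughput_mbps": 935.5}))
    profile.tests.append(test)
    print(profile.to_json())
```

Because the output is plain JSON, any tool that fills in the same template produces results another tool can parse, which is the property the VNF-PP is after.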

Then the next point is these YANG models, as I said; those models are now available on GitHub. We put the link here, so you can go there and check all those YANG models. This is also something we have a question about: we are not yet sure how to attach those YANG models to an IETF draft.

We know that in the IETF there are YANG modeling rules, and we try to follow them, and we know that in the Datatracker there are existing drafts that contain YANG models. So our question would be how to do that: how to get our YANG models attached to this. Maybe we can get an answer to this later. In addition to the draft, we also updated our two reference implementations: one of them is Gym, and the other one is tng-bench.

This was also an issue that came up, I think at IETF 104: the tools were not yet available as open source. This has changed. Now both are available and linked from the draft. On top of that, we did a first test run with both tools, and the results of those test runs are also available on GitHub; you can check them out. The idea here is to compare what happens if we give both of our tools the same benchmarking description.

We are still trying to update those models a bit, because we got some additional feedback from our colleagues, so there might be some minor changes. I think there are a lot of synergies with other drafts in this working group, especially the YANG data model for network interconnect tester management, so I'm quite interested in that presentation. Maybe we have some similarities, or can make our models compatible with each other, or something like this.

The reference tools are now finally available, and from our side, yeah, we think the draft is becoming more and more stable. Once we are able to add the YANG models to the IETF draft in the Datatracker, we would be interested in what the next step could be: whether we should aim for a last call or something like that. That's more of an open question. And that's basically everything. Thank you.

Hi, Vladimir Vassilev, Lightside Instruments. This is really relevant: your draft meets the need for a formal way to represent test results, and the idea is good, but I think you need some help with YANG. I have looked into the YANG models, and they really need some review and refactoring. So I can join the discussion, and I think there are going to be a lot of changes.

But if you are dedicated to working on this draft, I can help you. One thing: I think it is very easy to add the YANG code to the draft. Just look at another draft that has YANG in it. I don't know if you're using XML or writing it directly as text (probably XML), so just check some of the sources. It is a five-minute effort, and you can have your YANG models in. Okay, okay, to discuss. And one more thing: YANG. You should write it in capital letters, because otherwise it gives a bad impression.

D

And Vlad is being very modest. One of the drafts where you can look to see how a YANG model is incorporated in an Internet-Draft is his draft on a YANG model for control of traffic generators. Okay, so that's a good place to start, and it's right within our working group, as a good example. Okay.

E

Yeah, what we noticed: all those experiments are testing an IDS system, which is deployed as a Docker container, and we run traffic traces through the system. The difference is, on the one side, of course, the absolute numbers, because the experiments might be performed on slightly different hardware. At first we were more interested in seeing whether the trends are the same, and this is something we could confirm here. Don't be confused by the colors: exactly what is blue in the one plot is orange in the other plot. That's an artifact of the plotting library, I guess, but the general trends seem to be the same, and this is what motivated us to continue this work.

I think the goal is that if we run the experiments on the exact same machine, because it always depends on your virtualization infrastructure, we then want to achieve the exact same result. I think with our methodology this should be possible, but this needs to be confirmed with additional experiments. Yeah.

B

Exactly. So I think one of the things that we've struggled with in BMWG, with virtualization, is: how do we confirm the ability to run the same test over and over and over? And I know Al and I sometimes differ a little on this, but on one hand, if the exact same variables are run ten times, one would expect the same result.

Sometimes we don't get it, and I think there's a lot of angst around virtualization: how do you confirm that, hey, I can run that same test ten times, so that I have ten apples-to-apples comparisons and ten numbers that I can actually point to and say, yep, this makes sense? And so, because you've done a lot of heavy lifting here, and you said you were digging into it, I thought: okay, if you guys could continue as you update the draft, with Vlad helping you, then when you come back next time, in Vancouver possibly, and present again, just, you know, I'd like to keep the focus on that, because it's our heritage. You're also helping us prove out and show that, yep, we can actually benchmark, here's how we went about it, and here's what we're seeing as we're doing it, which is exactly what you've got here. I just want to make sure you continue to do so. Yeah.
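To make the "ten runs, ten apples-to-apples numbers" idea concrete, a repeatability check can be sketched as summary statistics over repeated trials of one identical configuration. This is a minimal sketch, not a BMWG-defined procedure: the function name `repeatability` and the 5% coefficient-of-variation tolerance are hypothetical choices for illustration, and the inputs are assumed to be positive measurements such as throughput.

```python
import statistics


def repeatability(results, max_cv=0.05):
    """Summarize repeated trials of one identical benchmark configuration.

    results: measured values (e.g. throughput) from runs with the exact same variables.
    max_cv:  hypothetical tolerance on the coefficient of variation (stdev / mean).
    Returns (mean, sample standard deviation, coefficient of variation, within_tolerance).
    """
    if len(results) < 2:
        raise ValueError("need at least two trials to estimate spread")
    mean = statistics.mean(results)
    if mean == 0:
        raise ValueError("coefficient of variation is undefined for a zero mean")
    stdev = statistics.stdev(results)  # sample (n - 1) standard deviation
    cv = stdev / mean  # unitless spread relative to the mean
    return mean, stdev, cv, cv <= max_cv
```

Passing the values from ten identical runs returns their mean, sample standard deviation, and coefficient of variation, plus a flag saying whether the spread stays within the chosen tolerance. The coefficient of variation is used because it is unitless, so one tolerance can be applied to throughput or latency alike; what counts as an acceptable spread remains a methodology decision, not something the code settles.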

E

It's Suricata, I think it was version three, if I'm right. I think these example graphs show the number of dropped packets, and they are made for different traces with different flow sizes and so on. In the experiment folders of the GitHub repository, we have specified more; the information on which exact setup was used is available there. We couldn't put everything on the slide.

D

So many... well, there's enough variability across that list of VNFs that I feel like you must be, in some sense, defining your own VNF-specific benchmarks to measure as you do that. That's, I mean, that's an important part of our work as well, and it's usually something for which we have a complete Internet-Draft, sometimes a set of Internet-Drafts, to agree upon. So, I guess, for a load balancer, for example, I don't think we've done any benchmarking work on load balancers.

D

Anything else from the group assembled here? Seems not. Okay. Well, one thing I always want to encourage people about: one way that folks who are proposing new work can help each other is to read each other's drafts and perform reviews. Even if it's work you're not familiar with, you may learn something, and that's always a good thing. So consider that as you come to the microphone today and stand here boldly asking the working group to adopt your draft: you get some help by helping others.

G

Thank you. I'm Alan Lee from HP. Our draft's title is "Considerations for Benchmarking Network Performance in Containerized Infrastructures". I'm briefly presenting some minor updates on hugepages and NUMA, which are part of our interest in this draft. In this draft, we are trying to find out how to configure those things and what impact they have on containerized networks.

We added some more explanation about hugepages and NUMA, and noted that the container is isolated at the application level and the administrator can set which pages are used. Kubernetes, for example, allows us to use 512 megabytes, but we are still trying to find the right value. The next resource being considered is NUMA, and we are still trying to find out about it.

As for what's going on from this hackathon: we are still trying to verify CPU allocation using current container engines, with techniques including CPU scheduling, and we are measuring throughput under CPU variations. Next, we are still trying to define hugepage values. Our results and our test environment are in the slides on the web page, so you can find them there.

This is our basic testbed. We use a traffic generator for the traffic, the container networking technology is DPDK, and we use Kubernetes, with the pod for the application. For the application we use a simple one, just a forwarding application. During this testing we had some problems at one point, between the two ports of the interfaces we prepared.

We had some packet drops, with errors reported; most packets were dropped at that point, and we still don't know where the problem is. We regenerated some traffic and reinstalled some components, but after that there was still no visible improvement. Finally, we changed the interfaces, which were the Mellanox 100-gig ones, to Intel interfaces, and it works.

These are some of our learnings from the hackathon, and we still keep trying to update with new technologies. We still need to find out what's going on with the Mellanox interface; the problem seems to be on that interface, but I'm not quite sure. We're going to find out. The next step is to take your comments and update our draft, and then at the next BMWG hackathon we will continue our experiments.

D

Thank you. Thank you very much. Any questions or comments about this benchmarking? I mean, containerization is going to be kind of the future of virtualized deployments. We've got, you know, a pretty good understanding of hypervisor-based virtualization, but we have a lot to learn about containers. There are obvious advantages, and obviously a lot more things to learn about now in making that work in general. I think some people are very good at it, but they don't talk about performance, except in loading the images; everybody knows that's going to be quicker.

So one additional point I wanted to make, and I think I mentioned this to you all at the hackfest, is that your considerations draft could include some information on troubleshooting test setups. I think you guys are generally going to get some good experience doing that, and some of the tricks and tools for getting a setup working are things that you could recommend.

B

What you're seeing in the hackathon in BMWG would be helpful, and then extending that: hey, as you're learning things and troubleshooting through the hackathon, adding that in terms of your troubleshooting or things learned or best practices