From YouTube: IETF106-CFRG-20191120-1330

Description

CFRG meeting session at IETF106

2019/11/20 1330

https://datatracker.ietf.org/meeting/106/proceedings/

B

SPAKE2 is waiting for Kenny. We have lots of documents in flight. We have a couple of new documents now: one is pairing-friendly curves, and the ristretto document was adopted by the CFRG, but the ristretto document wasn't published yet. I just got email from one of the editors that they are working on this.

B

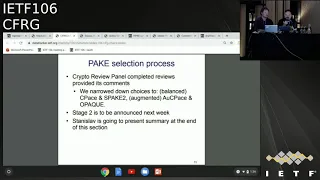

Right, and with the PAKE selection process, Stanislav will talk more about what we've done and what the next steps are. Basically, with the Crypto Review Panel's help, we narrowed down the choices to two balanced and two augmented PAKEs. Because we didn't end up with one and one in each category, we need to do a bit more work. By "we", it's the whole community, with the Crypto Review Panel and a little bit of work from the submitters. So this will be presented next.

F

It started in May, and we had two stages, one and two, before the Montreal meeting, and in Montreal we discussed this. We had eight good nominated PAKEs, nominated by the persons enumerated on the slide. After that we had four more stages. On stage three, we asked people to be the reviewers for the nominated PAKEs. We had a number of additional questions to be asked, to be taken into account.

F

The questions are on the slide, and we had a really great team of reviewers with really deep, really wise reviews. All of these people are on the slide; many thanks to all of them. These reviews show, in fact, I would say, a most wise and wide and deep understanding of PAKEs, and not only of the process, I would say. There were reviews related to IPsec and TLS protocols, about other applications of PAKEs, about security, including security proofs, including digital security issues, and about some other uses with some additional applications.

F

With some special properties of PAKEs. So again, many thanks to all these people on the slide. As the process went on, overall reviews were made based on these reviews. Then on stage four the reviewing process started, and many thanks to Yaron Sheffer for organizing the PAKE selection GitHub repository, so all the reviews of the nominated PAKEs and all intermediate results are public here. So please go there and read everything throughout the process.

F

We tried to be perfectly transparent here. Then, on stage five, we had four people from the Crypto Review Panel review all of these, so we had four overall reviews for all eight PAKEs, from Björn Tackmann, Russ Housley, Yaron Sheffer [inaudible], and all of them are made available, again, on GitHub. To be very short, these four reviews state the following: all of them have some preferences about PAKEs, and I highlighted them on the slide, blue for balanced PAKEs and magenta for augmented PAKEs.

F

So there was a decision to start round two, with four PAKEs going to round two. These four PAKEs are SPAKE2, CPace, OPAQUE and AuCPace: two balanced PAKEs and two augmented PAKEs. So four candidates are left, with all reviewers more or less in favor of these candidates. Another reason for going to round two is that at the last stages of the PAKE selection process there were some additional issues discussed on the mailing list, for example quantum annoyance, post-quantum readiness.

F

Some issues about whether one discrete logarithm being broken can make the whole protocol broken, or just some instances of it. So we had some particular questions and some overall questions, and they were not addressed in the reviews because they were raised later, so we decided to start round two.

F

So, in fact, [inaudible] the proposed plan and timeline for round two: stage one of round two starts tomorrow. We plan to collect additional questions for all four candidates. These questions can be about particular issues or modifications for each candidate, for example some issues about reduced versions of CPace and AuCPace, about possible modifications of SPAKE2 with selection of its parameters based on hash-to-curve, and some other particular questions about the nominated PAKEs, as well as overall questions that can be taken into account additionally.

F

So these questions are collected at crypto-panel@irtf.org. This mailing list is public, so everyone can read everything there. At stage two, which will last until the 17th of December, the list of questions will be published on GitHub, and the CFRG will be asked if anything else should be added to the list at this stage.

F

Let me comment that in one of the reviews, by Yaron Sheffer, there was a really great summary of why we still need a CFRG document about recommended PAKEs. Even after we select one or two or zero PAKEs, we'll have protocols, we'll have clarifications, but we'll still need to make all recommendations for integration into IETF protocols really clear, with test vectors, addressing all issues that can be raised by IETF people.

F

So we'll need additional work to make this document for the selected PAKEs, and this seems to be our next action after we select PAKEs. So what's now? Now we ask all of CFRG and also, of course, Crypto Review Panel members to send their additional questions that must be considered during round two for all PAKEs. Please send them to crypto-panel@irtf.org, and I'll repeat this request today or tomorrow on the CFRG mailing list. So many thanks. Any questions?

H

All right everyone, my name is Chris Wood. This is a quick update on the hash-to-curve draft. Since the last meeting we've made several major updates. I guess perhaps the most important one is that we've removed an algorithm that was redundant with other algorithms already present in the document. That's Icart, so that should help simplify things moving forward. We promoted the simplified SWU map, which was specifically meant for pairing-friendly curves, up to the main part of the document.

H

Previously it was in an appendix, and it has applications to other curves, so that's useful. Then we generalized the SW algorithm to apply to basically any curve in the document, whereas previously it only applied to curves with a base field whose prime was congruent to 1 mod 3. So not much more to say beyond that. And then a lot of minor updates went into the document as well.

H

So in particular, we had this issue for a long time about adding a sort of flow chart for how you choose the particular hash-to-curve algorithm for a particular curve, and we replaced that idea with a simple block of text that basically says: if you want to target a pairing-friendly curve, use the SW variant that makes the most sense. If you want to target a Montgomery curve, use Elligator 2. In all other cases, use the variant of SW that is the most efficient.
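
The block of text described above amounts to a small decision rule. A minimal sketch of that rule follows, assuming hypothetical family names and return labels that are not taken from the draft; treating the pairing-friendly case the same as "all other cases" is also an assumption made here for brevity.

```python
def choose_mapping(curve_family: str, simplified_swu_applies: bool) -> str:
    """Return an illustrative label for the suggested map.

    The family names and labels are hypothetical; they only mirror the
    selection rule as stated in the talk.
    """
    if curve_family.lower() == "montgomery":
        return "elligator2"
    # Pairing-friendly and all remaining curves: pick a map from the SW
    # family, preferring the simplified variant whenever the curve allows it.
    if simplified_swu_applies:
        return "simplified-swu"
    return "general-sw"
```

For example, `choose_mapping("montgomery", False)` yields the Elligator 2 label, while a curve for which the simplified map is not available falls through to the general SW label.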

H

So it's simplified SWU being preferred in some cases, and then the general one being used, or being recommended, in all other cases. There was also a request, I forget from whom, to sort of allow other hash-to-base implementations to be plugged in, and while we didn't actually specify alternative constructions for hash-to-base, we basically laid down what the criteria would be for that particular function.

H

You know, including things like: it must be collision resistant, and it must not use rejection sampling or the try-and-increment thing to hash to the curve or hash to the base field. So if someone wanted to do something other than, you know, the HKDF-based function that we have defined, they should follow the guidelines and hopefully everything will work out just fine.

H

We also added some clarity around how to do domain separation in the document, particularly how to construct the domain separation tag when adding hash-to-curve to other protocols. You know, listing things like including a protocol version number or some other kind of unique identifying information. And, I guess, with relevance to domain separation, we did tweak the hash-to-base function a little bit. In particular, previously we would hash the input message repeatedly in the iteration step.

H

There's like the initial extract on the message, and then, I guess if you're looking at, I think it was extension fields, for each element, or each coordinate in the polynomial representation of an extension field element, you would do an expansion with HKDF with the full message, which was possibly redundant. So now what we do is just hash the message once, immediately upon calling hash-to-base, and then, for each element in the polynomial representation,

H

you would just hash something, or call HKDF-Expand, with a smaller input. But to make sure that no calls to HMAC in that function collide with one another, in the initial hash step that we do, the initial HKDF-Extract, we append a zero byte to the end of the message, because the internal HMAC invocations from HKDF-Expand only append non-negative numbers larger than or equal to one. Anyways, you can take a look at the document to sort of figure out what I mean there.
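
The extract-once, expand-per-coordinate structure just described can be sketched as follows. This is a minimal illustration, not the draft's exact encoding: the `info` label layout, the default output length, and the example tag `EXAMPLE-PROTOCOL-V01` are assumptions, and HKDF is written out per RFC 5869 using only the standard library.

```python
import hashlib
import hmac


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    # RFC 5869: PRK = HMAC-Hash(salt, IKM)
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    # RFC 5869: T(i) = HMAC-Hash(PRK, T(i-1) || info || i), with i starting at 1
    out, block, counter = b"", b"", 1
    while len(out) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        out += block
        counter += 1
    return out[:length]


def hash_to_base(msg: bytes, dst: bytes, p: int, degree: int = 1, length: int = 48) -> list:
    """Map msg to `degree` coordinates modulo p (illustrative sketch)."""
    # Hash the (possibly long) message exactly once. The trailing zero byte
    # keeps this HMAC input distinct from the inputs HKDF-Expand builds,
    # whose final byte is a counter that starts at 1.
    prk = hkdf_extract(dst, msg + b"\x00")
    coords = []
    for i in range(1, degree + 1):
        info = b"H2C" + bytes([i])  # short per-coordinate label (assumed layout)
        coords.append(int.from_bytes(hkdf_expand(prk, info, length), "big") % p)
    return coords
```

A call such as `hash_to_base(b"msg", b"EXAMPLE-PROTOCOL-V01", 2**255 - 19, degree=2)` returns two field elements; changing the version string inside the tag changes both outputs, which is the point of including a version number in the domain separation tag.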

H

We did also specify, and make very clear, how to compute the sign of the resulting point, you know, the output of a hash-to-curve operation, and we made it part of the cipher suites, so there's no ambiguity. This was done specifically to help alignment with the VRF draft. So hopefully now everything should be in good shape and they can adopt it as, you know, a single call to hash-to-curve, rather than respecifying Elligator or SW in their doc, and that work is currently underway.

H

We added some optimized and constant-time square root computations, because those were useful. Previously we didn't really have any kind of concrete algorithm for doing so. We just said, you know, compute the square root and do it in a constant-time way. But now we've really fleshed that out for different primes.
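
As one concrete instance of a prime-specific square root, the well-known formula for primes congruent to 3 mod 4 is a single fixed exponentiation. The sketch below shows only the arithmetic; Python's big-integer `pow` is not constant time, so this illustrates the formula rather than a side-channel-safe implementation, and it is not copied from the draft.

```python
def sqrt_3mod4(x: int, p: int) -> int:
    """Candidate square root of x modulo a prime p with p % 4 == 3."""
    if p % 4 != 3:
        raise ValueError("this formula only applies when p is 3 mod 4")
    # One exponentiation with a public exponent, no branching on x.
    return pow(x, (p + 1) // 4, p)


def is_square_root(r: int, x: int, p: int) -> bool:
    """Check the candidate; if x is a non-residue, r*r equals -x instead."""
    return (r * r - x) % p == 0
```

The caller checks the result with `is_square_root`, because for a non-square input the exponentiation still returns a value, just not a root of `x`.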

H

So there are basically two major items that we need to do before moving this forward. The first is to add back the test vectors that we took out before, because the doc was changing so much, we were adding new algorithms, and so on. We have proof-of-concept Sage code in the repository where the document is hosted, and most likely we would just generate the test vectors from there.

H

There are ongoing implementations in Go, C and Rust, and we'd like to finish them and make sure they all interoperate with the test vectors. Assuming all goes well, assuming those two things happen in the near short term, we'd then like to potentially consider starting the last call for this particular document.

J

ECIES, renewed and modernized. I'd like to thank Chris Wood for using the official standard theme for this session, as well as in the hash-to-curve session. So this is a draft where, I guess, we're on -02 now, because we published -01 and then published -02 a few hours later, as I recall, because we found some obvious errors and rejiggered some things. But as of now I think we are getting pretty stable.

J

So in -01 and -02 of the research group version of the draft we've been just kind of polishing things. There are a couple of minor technical changes based on some feedback from MLS implementers, so we're using hashes of values instead of the values themselves in some cases, because people didn't want to keep the whole values around. Chris sent us a PR for a, quote, single-shot API. So we had had this decoupled structure where you do,

J

you set up an encryption context that you can use multiple times to amortize the cost of the public key operation. But if you're just doing a single encryption, it's nice to just compose that all together at once, so we've got a kind of simplified profile in the doc now too. In general, we're kind of cleaning up the doc, getting it ready to get done. You know, with the core mechanism firming up, the next logical step is to start looking at implementation and interop.
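
The relationship between the reusable context and the single-shot call can be sketched structurally. Everything below is a placeholder: `CountingSetup` and `CountingContext` are hypothetical stand-ins that perform no cryptography at all, and the function names are not the draft's. The sketch only shows that single-shot is "set up, seal once" composed together, and how a context amortizes the public-key step.

```python
from typing import Tuple


class CountingContext:
    """Placeholder context: seal() just labels its input with a sequence
    number. A real context would run an AEAD here."""

    def __init__(self) -> None:
        self.seq = 0

    def seal(self, aad: bytes, pt: bytes) -> Tuple[int, bytes, bytes]:
        out = (self.seq, aad, pt)
        self.seq += 1
        return out


class CountingSetup:
    """Placeholder for the public-key step; counts how often it runs."""

    def __init__(self) -> None:
        self.public_key_ops = 0

    def __call__(self, recipient_pk: bytes, info: bytes):
        self.public_key_ops += 1
        return b"encapsulated-key", CountingContext()


def seal_single_shot(setup, recipient_pk: bytes, info: bytes, aad: bytes, pt: bytes):
    # Compose setup and one seal; the context is discarded afterwards.
    enc, ctx = setup(recipient_pk, info)
    return enc, ctx.seal(aad, pt)
```

Sealing three messages through one context costs one setup call, while three single-shot calls cost three, which is the trade-off the simplified profile accepts for convenience.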

J

The latest version of the draft has test vectors for all four of the modes and several of the cipher suites. We didn't do the full combinatorial explosion of all the different possible combinations of algorithms, but we took a couple of slices through the algorithm space and did all the modes for those cases. Based on those test vectors, we verified interop on at least the first two modes, the base mode and PSK mode, between a Go implementation that I've been using, from which we generated the test

J

So you have IND-CCA2 as a public key encryption, so you have that indistinguishability property, and you get the authentication properties that you would expect based on the mode. So in the PSK mode, the ciphertext can only be generated by someone who has that PSK; in the auth modes, only by someone who has the relevant private key. So the status here is that Benjamin

J

has proofs done for these base cases, for all four modes, in the case where you're not considering key compromise. The key we mean here is the authentication key, so that's the difference between the left-hand side and the right-hand side columns. In the right column, what we're verifying is that the confidentiality property, the indistinguishability property, holds even when the authentication key is leaked, whether that's the PSK or the initiator's private key, which are both used for authentication and not for confidentiality.

H

Yeah, Christopher Wood. I didn't want to comment on anything said, just one note for people in the room. We are also trying to generalize the analysis to cover just KEMs in general. The assumptions here are specifically the gap Diffie-Hellman problem for the Diffie-Hellman KEM, but since one of the hallmarks of this document is that you can plug in any KEM, presumably, and still have things work, we're trying to make it a bit more generic. So that's work in progress, but for right now we're just kind of focusing on the Diffie-Hellman variants. Yeah.

J

Yeah, so if other people would like to help out with the analysis and contribute, I'm glad to have help here. So that's basically the status. The document is starting to get complete. We think the core construction is pretty sound and interoperably specified, and the analysis is progressing. So far we have proved out some of the core properties and we're trying to get the remainder,

J

you know, cover the remaining cases with some other proofs. So I think we're getting close to a point of research group last call here. I don't know how you guys want to arrange it. I mean, I think the main outstanding thing is the analysis. The timeline might need a little while longer for those proofs to come in, but that could potentially be done in parallel with the research group last call. So it's up to the chairs how you'd like to process this. Chris, did you have a comment?

H

Quickly, for timeline things: it's very likely that encrypted SNI will depend on this, given the way things are going, and we're aiming to have that done early next year, at least to where we start experimenting. There are also other things that have started using HPKE. So we may want to get this out sooner rather than later.

L

So basically, we are trying to propose three different primitives. The first one is a tweakable block cipher, so it's the core primitive that we really designed, and on top of that we have two modes that are going to be using this internal primitive. So what is authenticated encryption? Basically it's authentication and encryption in a single primitive. The goal is basically to try to avoid some weird security issues you can have if you use them separately in the wrong way.

L

So if you have everything unified in one primitive, then you hope to be sure that it's going to be fine, and the goal is also to have some efficiency gain. If you do both at the same time, you can save some operations and have something much more efficient. Actually, what I'd like to propose is something called AEAD, authenticated encryption with associated data, which means some data will be encrypted and authenticated, and some data will be only authenticated, not encrypted. So we would like to have this feature as well.

L

So note that this was a hot topic in the crypto research community. There was a competition organised from 2014 to 2019 called the CAESAR competition, organised by Dan Bernstein, and a lot of people from the community participated. It was a worldwide competition, with 50-something submissions, and after five years they selected some candidates for the three portfolios. What I'm proposing here is the winner of one of the portfolios.

L

So, first, what is beyond-birthday security? Actually, for most of the cipher modes, if you take, say, a block cipher like AES inside a mode like OCB or GCM, what you're going to get is what's called birthday security, which means that the advantage of the adversary will grow as the square of the number of queries that you actually make to the internal cipher. So rather quickly, after some amount of data you're going to query to your encryption oracle,

L

the security will drop rather fast. Actually, in the case of AES, if you do 2^64 data, which is quite a huge number, I have to say, but after this amount of data basically all the security is lost completely. You can really have many different types of attacks and everything falls apart. So beyond-birthday secure modes are modes trying to go beyond that. Even if you use a lot of data, you still maintain the security at a very good level, so there is a whole spectrum of security.
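
The quadratic growth mentioned above can be made concrete with the usual back-of-the-envelope estimates. This is a sketch under simplifying assumptions: constants and message-length factors from real proofs are dropped, and the two function names are introduced here for illustration only.

```python
from fractions import Fraction


def birthday_advantage(log2_queries: int, block_bits: int = 128) -> Fraction:
    """Rough birthday-type estimate q^2 / 2^n, capped at 1."""
    q = 2 ** log2_queries
    return min(Fraction(1), Fraction(q * q, 2 ** block_bits))


def full_security_advantage(log2_queries: int, block_bits: int = 128) -> Fraction:
    """Rough full n-bit estimate q / 2^n, capped at 1."""
    return min(Fraction(1), Fraction(2 ** log2_queries, 2 ** block_bits))
```

With a 128-bit block, `birthday_advantage(64)` reaches 1, matching the remark that security is essentially gone at 2^64 data, whereas `full_security_advantage(64)` is still only 2^-64.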

L

Some will be close to birthday, but some can maintain full security completely, the full security of an n-bit block cipher. So that's what we'd like to propose today. Just to show you some curves: basically, the x-axis is the adversary's number of queries, and the y-axis is the advantage of the adversary. So when it's high, it's highly secure, and here the security is reducing. Most modes will be the blue line, basically like OCB or GCM. What we're trying to propose are modes like this.

L

The second property that we'd like to propose is nonce-misuse resistance. I think this is quite important. For most of the authenticated encryption modes you're going to be using, you need a nonce, so an IV that's not repeating, and for most, like OCB or GCM, if you reuse the nonce just a single time, then again all security falls apart. You can have universal forgery attacks, you can have decryption. So you really must make sure that the nonce is never, ever repeated, and that's a strong constraint on the implementers.
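
One way to see why "never repeat the nonce" is a strong constraint: if nonces are drawn at random, the chance of at least one repeat follows the birthday approximation. The sketch below uses that standard approximation; the 96-bit size in the usage note is the nonce length commonly used with GCM and is chosen here only as an example.

```python
import math


def nonce_collision_probability(num_messages: int, nonce_bits: int) -> float:
    """Approximate probability that random nonces repeat at least once.

    Uses the birthday approximation 1 - exp(-q(q-1) / 2^(b+1)).
    """
    exponent = -num_messages * (num_messages - 1) / 2 ** (nonce_bits + 1)
    return -math.expm1(exponent)
```

For 96-bit random nonces the probability is negligible at 2^32 messages under one key but reaches roughly 39% at 2^48 messages, which is why random nonces need to be large and keys rotated.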

L

So basically, you have two general ways of handling this. Either you generate the nonce randomly, and then you hope that the nonce is big enough and your entropy source is also good enough, so you don't have collisions too often. Otherwise you can use a counter for the nonce, but then you must be stateful and maintain the state: as long as the key is not changed between the two parties, you must maintain a state for the counter, and this is also maybe quite a strong constraint. So the goal is to design primitives

L

that do not have this issue: even if you are reusing the nonce, you still maintain a good level of security, and the security will not fall apart. And again, you have a big spectrum of possibilities here. Some will be a little bit secure if you reuse the nonce, and some can go up to: it makes no difference whether you repeat the nonce or not. So I will go very quickly through our design. The first thing we designed is basically an internal primitive, and it's something a little bit special.

L

So what is a tweakable block cipher? A block cipher, like AES, looks like this: you have a plaintext and a key, and it outputs a ciphertext. You must be able to decrypt. A tweakable block cipher is exactly the same thing. You just have an extra tweak input in here that allows you to select a family, basically, of block ciphers.
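
The interface just described, a keyed permutation with an extra tweak input that must still be invertible, can be illustrated with a toy construction. The sketch below is a small Feistel network whose round function is HMAC-SHA-256 over the round number, the tweak and one half of the block. It is an assumption-laden teaching toy, not Deoxys-BC and not suitable for real use; it only demonstrates the `(key, tweak, block)` shape and that each tweak selects a different permutation.

```python
import hashlib
import hmac

HALF = 8    # bytes per Feistel half, giving a 16-byte block
ROUNDS = 4  # arbitrary small number of rounds for the toy


def _round(key: bytes, tweak: bytes, rnd: int, half: bytes) -> bytes:
    # The tweak is length-prefixed so (tweak, half) pairs cannot be confused.
    data = bytes([rnd]) + len(tweak).to_bytes(2, "big") + tweak + half
    return hmac.new(key, data, hashlib.sha256).digest()[:HALF]


def _xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def tbc_encrypt(key: bytes, tweak: bytes, block: bytes) -> bytes:
    if len(block) != 2 * HALF:
        raise ValueError("block must be 16 bytes")
    left, right = block[:HALF], block[HALF:]
    for rnd in range(ROUNDS):
        left, right = right, _xor(left, _round(key, tweak, rnd, right))
    return left + right


def tbc_decrypt(key: bytes, tweak: bytes, block: bytes) -> bytes:
    if len(block) != 2 * HALF:
        raise ValueError("block must be 16 bytes")
    left, right = block[:HALF], block[HALF:]
    for rnd in reversed(range(ROUNDS)):
        # Undo one round: the old right half is the current left half.
        left, right = _xor(right, _round(key, tweak, rnd, left)), left
    return left + right
```

Encrypting the same block under the same key with two different tweaks gives unrelated ciphertexts, and decryption only recovers the plaintext when the same tweak is supplied.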

L

This tweak can be public, and it actually changes a lot of things when you are designing modes. Having this extra tweak input makes things much, much simpler, and you can also have much better bounds. So basically you can build a lot of things very easily with a tweakable block cipher. The problem is that, as far as I know, there's no standardized tweakable block cipher so far. So what we tried to do is start with AES, of course, and try to put some tweak inside AES.

L

So it looks like this, very quickly. The blue part is the AES round function, which we use kind of as a black box, and we just basically redesigned the key schedule of AES, trying to get something a bit stronger, with stronger guarantees than the AES key schedule, and also to be able to use a tweak inside. Okay, so that's basically how it works.

L

So we have lots of security guarantees, a lot of analysis that we did in a paper. You can take a look at our website and our paper to see all these arguments. The important point is that the trust in a block cipher, I mean in any crypto primitive, is built by how much cryptanalysis has been done. Actually, our candidate has been one of the most scrutinized algorithms in the competition.

L

A lot of people tried to analyze it, and there is still a very comfortable security margin, but there are a lot of cryptanalysis papers that have been published on this. The goal was also to reuse AES, so that we can reuse the security arguments of AES. There have been decades of analysis on AES, so we hope to leverage this, so we don't have to redo everything again. Also, it's quite efficient, because we're using the AES round as a black box.

L

So you can use the hardware acceleration that you have on recent processors, so we can run at less than one cycle per byte, basically. And we have no patents; there's no patent on this. So that's the primitive. Now we need to define a mode that's going to use this primitive to provide authenticated encryption. That's what we call SCT mode. It's something that we published at CRYPTO with Yannick Seurin, and this is also part of the winning candidate of the CAESAR competition.

L

Basically, our candidate was Deoxys-II, which is composed of the internal cipher I just described and this mode here. I'm going to describe very quickly how it works, but these are the main arguments and features, I think, of our mode. A very, very key thing for me is that it's extremely simple. I can really describe this in basically one slide. If you look at some other authenticated encryption algorithms, they are quite complex, there are a lot of details, and implementation is not so easy. This is very different in our candidate.

L

It's a two-pass mode, which means you need to go through the message twice. Not the authenticated data, only the message you have to pass through twice, which is quite a strong constraint, but this is required if you want to provide nonce-misuse resistance. There's no way to provide nonce-misuse resistance if you don't go twice through the message. It provides full n-bit security, so beyond-birthday security, up to actually the maximum,

L

as long as you respect the nonce. If you do repeat the nonce, so that's the nonce-misuse case, then it provides birthday security. Actually, in practice it provides full n-bit security. The reason is that our bound here goes to birthday only if you always repeat the same nonce, which means you've got to query 2^64 data, every time using the exact same nonce. So that's a very, very bad randomness source, I would say. So in practice

L

Okay, I'm going to go quick. And again, no patent on this. All right, so, yeah. Okay, then one last thing about this: we also have an extra tweak input. Because it's a tweakable block cipher, you can use this tweak for the cipher to build the mode, but you can also use it for other protocols that might plug on top of this primitive. This is actually a pretty nice feature, because you can use it, for example, for leakage resilience, and you can use it also, for example, for disk encryption.

L

You can use it for hashing. So by using this tweakable block cipher, there are lots of things you can do very easily and with very strong bounds. So this is the slide basically explaining how it works. Everything is there in just one slide. So that's my data I want to authenticate, and that's my message I would like to encrypt and authenticate. What I'm doing is simply making it go in parallel through all my tweakable block cipher calls. It's keyed with my secret key, and you have a counter inside.

L

That's it. I just process this in parallel. When I'm done, I do one call to create the authentication tag. When I'm done with this part, I take that authentication tag and put it in the tweak to process all my message blocks, which I'm basically encrypting with a kind of counter mode. And again I'm going to have the counter, but this time in the tweak, not in the classical way where you have it in the plaintext. Here, I have the counter in the tweak. Basically, that's it, that's how the mode works.

L

I'm not going to go into details for this one, but it's something I proposed in 2017 with three colleagues, and it's a little bit more complex. I'm not going to describe it here in detail, but basically what it provides is: it's a little bit more efficient, and it's more complex to implement. So that's why I would say it's a trade-off, but it's a bit more efficient and it provides full n-bit security, both in the nonce-respecting and in the nonce-misuse setting.

L

So in order to compare it, I think one key point is really the fact that Deoxys-II has actually been chosen by the cryptographic research community as the winner of the competition, which is kind of, first, like a message saying that we have been analyzing these candidates and we believe that this is the one offering the best trade-off in terms of efficiency, features and actual security. As I said, it's much simpler and more flexible, I think, for implementers. This is really something important.

L

There's also potentially the issue of timing attacks. The GCM family is quite sensitive to timing attacks. If you don't have an instruction for it, you have to use big tables in order to make it really efficient, so for constrained devices that might not be so good. In our case, it's very different. You basically just use a bitsliced implementation of AES and you directly put this in the mode, and that's it. There's no extra stuff you need to end up with a constant-time implementation.

L

It

also

provides

higher

security.

So

when

you

look

at

the

bounds

compared

to

AES-GCM-SIV,

they

are

better

in

our

case

than

in

the

case

of

AES-GCM-SIV,

so

which

means,

if

you

use

a

certain

amount

of

data,

the

guarantee

you

get

on

the

advantage

of

the

adversary

is

higher,

both

in

the

nonce-respecting

and

in

the

nonce-misuse

scenario,

it's

more

efficient

in

in

Al.

L

L

Sorry

well,

I

think

so

in

terms

of

efficiency.

This

is

in

software.

Okay,

so

I

think

our

comparison

is

much

better,

in

the

case

of

hardware,

but

in

the

case

of

software,

of

course,

this

is

probably

one

of

the

most

interesting.

So

this

is

for

really

latest

Intel

processors

that

have

AES-NI

acceleration,

so

as

you

see,

GCM-SIV

is

actually

more

efficient,

especially

for

long

messages,

but

it

has

also

quite

a

big

initialization

process.

It

has

to

create

some

keys,

it

has

some

rekeying

inside.

L

So

all

this

takes

quite

a

few

AES

rounds

to

compute.

So

when

you

look

at

the

efficiency,

this

is

in

terms

of

how

many

AES

rounds.

You

need

to

do

for

the

message

block,

or

the

AD

block,

the

initialization

and

tag.

So

those

are

the

three

schemes.

I

was

talking

about,

but

actually

when

you

look

at

some

like

Internet

mix,

for

example

like

size

of

packets,

you

can

expect

et

cetera.

You

can

see

that

actually

for

small

packets.

This

is

actually

faster.

L

M

L

E

L

K

L

The

light

is

the

latest

version

dates,

so

we

publish

this

there.

The

first

one

was

in

2014.

We

made

some

modifications

in

2015

I

think

in

the

late

2015

and

since

then

I

don't

think

we

have

changed

anything

then.

The

other

cryptanalysis

that

has

been

done

is

actually

independent

of

all

the

change.

The

change

were

like

small,

are

basically

small

changes,

but

the

cryptanalysis,

all

the

work,

not

all

the

work,

but

some

of

the

work

is

listed

on

our

website.

If

you

click,

then

it

releases

all

the

cryptanalysis

that

has

been

performed.

F

Maybe

we

can

repeat

our

experience

not

yet

ended

with

PAKEs,

when

we

started

with

requirements

for

PAKEs

and

then

the

contest

and

maybe

organizing

the

AEAD

selection

process

in

IETF

can

be

a

good

idea

and

as

soon

as

that,

Deoxys-II

will,

of

course,

can

be

one

of

the

strongest

competitors

here,

because

I

really

like

this

and

I

think

that

the

structure

itself

is

very

mature

or

very

good.

And

thank

you.

L

Thank

you,

yeah

I

mean

I

again,

I'm

not

sure

about

the

process,

so

the

crypto

community

went

through

this

work

already

so

without,

but

as

I'm

one

of

the

representatives

who

served

in

crypto

committee

I

participate

a

lot

and

I

think

a

lot

of

people

actually

already

worked

on

this

competition.

tried

to

analyze

it

and

came

up

with

three

different

portfolios

to

try

to

cover

different

use

cases,

there's

one

portfolio

for

like

very

fast

software,

authenticated

encryption,

there's

one

portfolio

for

constrained

devices,

and

there's

one

portfolio

for

robust

defense

in

depth.

F

F

G

G

So,

unfortunately,

the

authors

of

this

presentation

weren't

able

to

make

it

so

they

asked

me

to

come

and

read

their

slides.

So

that's

what

I'm

going

to

do

so

committing

authenticated

encryption

is

what

we're

talking

about

right

here

as

a

high-level

summary

of

what

we're

going

to

cover

in

the

next

five

minutes

or

so

what

is

committing

authenticated

encryption

and

how

is

it

different

than

regular

authenticated

encryption,

we're

all

familiar

with

things

like

AES-GCM

and

AES

in

different

modes,

ChaCha-Poly;

we've

standardized.

G

Well,

we

have

filed

RFCs,

published

RFCs

about

multiple

ones

of

these.

We

just

saw

a

great

presentation

about

an

AEAD

mode,

Deoxys-II.

So

what

is

committing

authenticated

encryption?

It's

an

authenticated

encryption

where

it's

hard

to

find

a

ciphertext

with

multiple,

correct

decryptions.

So

in

a

committing

AE,

ciphertexts

are

binding

commitments,

and

one

way

to

think

of

this

is

with

the

physical

intuition

of

physical

encryption.

So

thinking

of

a

safe

and

a

key.

G

G

So

if

a

this

is

sort

of

where

the

physical

analogy

breaks

down

with

regard

to

authenticated

encryption,

if

the

keys

can

be

adversarial,

then

authenticated

encryption

has

no

security

with

the

attacker

control

of

the

keys,

so

ciphertext

can

have

multiple,

correct

decryptions,

so

you

can,

if

you

can

swap

out

the

key

you

can

make,

it

seem

like

you're

decrypting,

something

else

that

you

didn't

decrypt.

A

committing

AE

binds

the

ciphertext

to

a

specific

single

decryption

like

I'll

go

a

little

bit

more

into

what

that

means

going

forward.

So

why

is

this

important?

G

G

If

you

have

the

key

and

adding

a

Mac

does

not

help

so

Galois/Counter

Mode

itself

is

not

committing,

in

fact,

most

of

the

modes

that

we

have

here

that

we

use

in

protocols

in

the

IETF

are

not

committing

so

AES-GCM,

ChaCha20-Poly1305,

OCB

mode.

Encrypt-then-HMAC

with

distinct

keys,

is

also

not

committing.

You

could

also

throw

AES-GCM-SIV

in

this

bucket,

the

one

mode

that

we

do

have

that

actually

is

key

committing

is

this

encrypt-then-HMAC

with

derived

keys?
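A minimal sketch of what "encrypt-then-HMAC with derived keys" can look like, assuming a single master key from which the encryption key and the MAC key are both derived. The names `derive_keys`, `seal`, and `open_sealed`, the labels `b"enc"` and `b"mac"`, and the HMAC-based keystream are all illustrative assumptions, not taken from the slides or from any RFC. The intuition for key commitment is that the tag is an HMAC under a key tied to the master key, so making one ciphertext verify under two different master keys would require an unlikely coincidence in HMAC-SHA256; this is an informal intuition, not a proof.

```python
import hashlib
import hmac

NONCE_LEN = 12  # fixed nonce length assumed by this sketch
TAG_LEN = 32    # full HMAC-SHA256 output


def derive_keys(master: bytes):
    """Derive separate encryption and MAC keys from one master key."""
    enc_key = hmac.new(master, b"enc", hashlib.sha256).digest()
    mac_key = hmac.new(master, b"mac", hashlib.sha256).digest()
    return enc_key, mac_key


def keystream(enc_key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream: HMAC over nonce and a block counter (stand-in for a cipher)."""
    out = b""
    counter = 0
    while len(out) < length:
        out += hmac.new(enc_key, nonce + counter.to_bytes(8, "big"),
                        hashlib.sha256).digest()
        counter += 1
    return out[:length]


def seal(master: bytes, nonce: bytes, plaintext: bytes) -> bytes:
    """Encrypt, then MAC the nonce and ciphertext body with the derived MAC key."""
    if len(nonce) != NONCE_LEN:
        raise ValueError("unexpected nonce length")
    enc_key, mac_key = derive_keys(master)
    pad = keystream(enc_key, nonce, len(plaintext))
    body = bytes(a ^ b for a, b in zip(plaintext, pad))
    tag = hmac.new(mac_key, nonce + body, hashlib.sha256).digest()
    return body + tag


def open_sealed(master: bytes, nonce: bytes, sealed: bytes) -> bytes:
    """Verify the tag first; decrypt only if it matches."""
    if len(nonce) != NONCE_LEN or len(sealed) < TAG_LEN:
        raise ValueError("malformed input")
    body, tag = sealed[:-TAG_LEN], sealed[-TAG_LEN:]
    enc_key, mac_key = derive_keys(master)
    expected = hmac.new(mac_key, nonce + body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed")
    pad = keystream(enc_key, nonce, len(body))
    return bytes(a ^ b for a, b in zip(body, pad))
```

A ciphertext produced by `seal` under one master key is rejected by `open_sealed` under a different master key, which is the behaviour a committing scheme is meant to guarantee even against an attacker who chooses the keys.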

G

So

this

is

surprisingly

something

that

we've

known

has

been

a

good

model

for

a

long

time,

but

one

that

many

protocols

have

moved

away

from

okay.

So

where

is

this

needed

where's

this

used?

The

work

that

led

up

to

this

presentation

was

presented

and

it's

based

on

a

feature

in

messaging

services

called

message

franking.

This

is

specific

to

the

Facebook

encrypted

messenger.

It's

a

method

of

reporting

abuse

for

encrypted

messages.

G

So

this

is

one

use

case.

A

second

use

case

is

OPAQUE,

which

is,

as

we

just

mentioned,

still

in

the

PAKE

process.

It

becomes

more

fragile

if

the

AE

is

not

committing,

so

in

particular,

it

reduces

the

security

of

this

PAKE

significantly.

If

you

don't

have

this

primitive

that

has

this

feature

itself.

G

A

committing

AE

ensures

transcript

consistency

in

group

messaging,

so

this

is

potentially

of

interest

to

MLS

and

the

MLS

working

group,

and

it's

widely

used

in

research

that

may

be

deployed

in

the

future.

So

the

the

process-

I

guess

here-

was

something

that

we're

kind

of

clarifying,

but

in

this

slide

it

says

why

is

an

RFC

needed?

G

What

should

the

RFC

say,

I'm

not

recommending

that

this

is

exactly

a

piece

of

work,

that's

going

to

be

adopted,

but

this

is

more

of

a

general

presentation,

and

this

is

kind

of

suggestions

from

the

authors

as

to

what

the

benefit

would

be

of

having

a

published

document

in

CFRG

about

this.

So

specifically,

RFCs

mean

fewer

mistakes.

Better

designs,

misunderstanding

is

widespread.

You

can

implement

something

incorrectly.

If

you

have

a

solid,

readable

and

well

vetted

RFC,

it's

a

lot

easier

to

get

things

right

and

to

make

things

interoperable

a

committing.

G

F

F

It

was

terrifying

and

I

would

say

that

in

theoretical

probability,

cryptography,

commitment

schemes

and

committing

schemes

are

one

of

the

main

building

blocks

good

scheme,

so

I

think

that's

really

do

need

James

and

I

would

just

say

that

Larry

said

we

need

to

encourage

people

to

be

involved

in

the

process

of

thinking

of

how

to

do

this

in

MO

community.

Thank

you

very

much,

I

think

it's.

It

was

so

very

important,

great

presentation.

Thank

you.

Thanks

go.

N

Phillip

Hallam-Baker,

yeah.

This

is

very

helpful

to

me

so

I'd

like

to

see

go

forward

as

an

RFC

and

I'm

designing

a

cryptographic

message

system,

you

know

PKCS#7

in

JSON.

One

of

the

things

I'm

trying

to

do

is

to

only

provide

options

that

are

secure,

so

I

always

use

derived

keys

and,

if

anything

out

time

could

look

at

it

and

see

whether

my

way

of

deriving

the

keys

is

solid.

I'd

appreciate

that.

J

J

So

what

we're

going

to

propose

here

is

updating

this

scalar

multiplication

operation

so

that

it

ends

up

being

compatible

with

the

kind

of

the

other

side

of

this

diagram.

So

the

underlying

problem

here

is

that

these

curve

groups,

X25519

and

X448,

do

what's

called

clamping

so

before

they

do

the

actual

point

multiplication.

J

They

ensure

that

the

high-order

bit

in

the

scalar,

is

set,

so

the

scalar

always

has

a

certain

bit

set,

which

means

that

it,

when

you

multiply

two

values,

you

have

like

a

50-50

chance

of

that

bit

being

set

or

not,

so

the

multiplication

doesn't

work.

That's

kind

of

the

core

of

the

brokenness

here,

but

what

this

draft

does

is

proceed

from

that

from

two

operations.
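For readers unfamiliar with clamping, here is a minimal sketch of the X25519 scalar clamping step as RFC 7748 describes it: the three low bits are cleared, the top bit is cleared, and bit 254 is set. The helper names `clamp_x25519` and `is_clamped` are illustrative assumptions. The sketch deliberately does not implement the draft's fix, since the exact reduction it uses is not spelled out in this part of the talk; it only shows what "clamped" means and that a plain integer product of two clamped scalars is no longer in clamped form.

```python
def clamp_x25519(scalar_bytes: bytes) -> int:
    """Clamp a 32-byte little-endian scalar the way X25519 does."""
    if len(scalar_bytes) != 32:
        raise ValueError("X25519 scalars are 32 bytes")
    k = bytearray(scalar_bytes)
    k[0] &= 248   # clear the three lowest bits (multiple of the cofactor 8)
    k[31] &= 127  # clear bit 255
    k[31] |= 64   # set bit 254, the "high-order bit" mentioned in the talk
    return int.from_bytes(k, "little")


def is_clamped(k: int) -> bool:
    """True if k has the clamped shape: multiple of 8, bit 254 is the top bit."""
    return k % 8 == 0 and (k >> 254) == 1


a = clamp_x25519(bytes(32))        # smallest clamped scalar
b = clamp_x25519(b"\xff" * 32)     # largest clamped scalar
product_is_clamped = is_clamped(a * b)  # plain integer product, no reduction
```

Here `a` and `b` are both clamped, while their unreduced product is far too large to have the clamped shape, which is why a compatible scalar multiplication needs some extra step.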

J

One

is

that

if

you

multiply

the

two

values

and

you

get

a

value

that's

not

clamped,

then

if

you

subtract

that

value

that

product

from

the

curve

order

in

here,

then

almost

always

you

get

a

clamped

value.

So

that's

observation,

number

one.

The

second

value

of

observation

is

that,

in