
From YouTube: IETF106-AVTCORE-20191122-1220

Description

AVTCORE meeting session at IETF106

2019/11/22 1220

https://datatracker.ietf.org/meeting/106/proceedings/

B

A

B

A

D

A

A

A

A

Agenda. So Rachel is not here. She is switching jobs, switching roles at Huawei, so it's not clear she'll be able to keep coming to the IETF. Actually, I think it is clear that she will not be able to come to the IETF anymore. So we probably want to talk to Barry about that at some point, and whether he still thinks we need three chairs.

E

A

A

B

So here I'm speaking as one of the authors, as the document editor: during the process we missed addressing Bernard's comment, and hopefully I will do it next week. I'll probably have to ask Bernard for some clarification, but I'll try to do it next week. And the last one, the CC feedback message: that's the one that Colin is going to present when he comes.

F

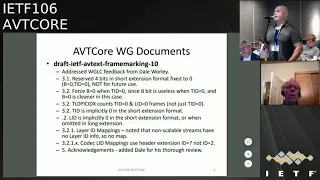

Mo Zanaty, editor of the frame marking draft. We updated to version 10 based on Dale's substantial feedback. Let me preface this: there were quite a few comments, and I think several of them required some substantive changes, so unfortunately I think we do need to go through another working group last call to make sure that those changes are OK with everyone. I'll summarize them briefly, but I think people are going to need some more time to digest it and make sure that we're not breaking anything with these changes.

F

So the first major item was, and I guess, Roni, you should pay attention to this, the four reserved bits that used to be in the short format. You know, where the temporal layer ID and the base layer sync bit are: those bottom four bits were all zeros in the short extension format. Since we added the ability to omit the other parameters in the long format, that made it ambiguous whether something was TID zero with B equals zero, or whether something was a non-scalable

F

extension, where you should ignore those. So it's really not possible to reserve them for future use, which Roni's draft was relying on: them being available for future use for the non-scalable extensions. Only if you knew beforehand, through some other signaling, that you're only ever going to use non-scalable streams, that's the only time you can really reuse those bits. If you had a case where there could be scalable and non-scalable streams, then there's no way you could reuse those bits, because there's no way to distinguish the two.

A

F

I mean, the thing is just to have a different URN, or a URN parameter after frame marking, but that's essentially a different extension ID. So you could differentiate them that way, but that essentially is a different header extension at that point. So, you know, if Roni wanted to pursue the priority work, you just have a different.

F

So everything only depends on the base layer, and Dale pointed out that it was an oddity that the B bit would always need to be one in that case, because you only depend on the base layer, but in other cases we say that the B bit is zero, for example for non-scalable streams. So to clean that up, I just forced the B bit to always be zero when the TID is zero. That way, it's clear that the natural case, when something is ignored, is zero.

F

So then you don't have to always set it to 1 in the base layer; it's just always zero in the base layer. That seems a little bit cleaner. That's a substantive change. The next change is the TL0PICIDX. The older draft said that it counts the TID-equals-zero frames, and that's not completely accurate: it's the combination of TID equals zero and LID equals zero.

F

The next change is that if you don't have a TID, it's implicitly zero, so basically non-scalable streams are flat and they only have that simple base TID of zero. Similarly, LIDs are implicitly zero when you're using the short extension. So if you don't specify it, you're in the base spatial layer, and if you omit it in the long extension, same thing, it's implicitly zero. There is some text to clarify that; that's not substantive, it's just clarification. The layer ID mappings: that's also just clarification.
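To make the bit discussion above concrete, here is a minimal sketch of a receiver-side parser. It assumes the first byte is laid out as S, E, I, D, B, then a 3-bit TID, optionally followed by one byte of LID and one byte of TL0PICIDX. That layout, the names `FrameMarking` and `parse_frame_marking`, and the choice to reject B=1 with TID=0 are assumptions made for illustration, not text quoted from the draft.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FrameMarking:
    start: bool             # S: first packet of a frame
    end: bool               # E: last packet of a frame
    independent: bool       # I: frame can be decoded on its own
    discardable: bool       # D: frame can be dropped safely
    base_layer_sync: bool   # B: frame depends only on the base layer
    tid: int                # temporal layer ID, 0 when absent or non-scalable
    lid: int                # layer ID, implicitly 0 when omitted
    tl0picidx: Optional[int]  # None when omitted


def parse_frame_marking(data: bytes) -> FrameMarking:
    """Parse the extension payload (1 to 3 bytes) under the assumed layout."""
    if not 1 <= len(data) <= 3:
        raise ValueError("expected 1 to 3 bytes of extension data")
    first = data[0]
    tid = first & 0x07
    b_bit = bool(first & 0x08)
    # Rule described in the discussion: B is always zero when TID is zero.
    # Rejecting a violation is this sketch's choice, not a stated requirement.
    if tid == 0 and b_bit:
        raise ValueError("B must be 0 when TID is 0")
    return FrameMarking(
        start=bool(first & 0x80),
        end=bool(first & 0x40),
        independent=bool(first & 0x20),
        discardable=bool(first & 0x10),
        base_layer_sync=b_bit,
        tid=tid,
        lid=data[1] if len(data) >= 2 else 0,          # omitted LID is 0
        tl0picidx=data[2] if len(data) == 3 else None,
    )
```

With this reading, a one-byte extension from a non-scalable stream and a one-byte extension from the base layer of a scalable stream parse identically (TID 0, B 0, LID 0), which is the ambiguity the change is meant to make harmless.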

F

D

F

Obvious to some other readers that, if you have a non-scalable stream, there are no layer IDs, and so there are no mappings; that is clarified as well. And then finally, an editorial change, just to not show the header extension ID with a specific value. It was shown with a specific value in some of the sections, and it should be a question mark, since that's negotiated by signaling. That's all the changes, but I think we do have to go through the working group last call again and have everybody digest those.

G

Bernard Aboba, Microsoft. I have just a clarifying question for you, Mo. Because the frame marking draft is relatively simple to implement, it's attractive to play with for things like AV1, and the question has arisen as to which modes of AV1 it is applicable for. So my take, tell me if I'm wrong or not, is that it applies for the temporal modes and the normal kind of spatial modes, but not for the K-SVC or shift modes or any of those weird things.

F

So if people wanted to go outside of what AV1 specifies and bind to this specification, yeah, the cases where you have, you know, nested temporal hierarchies would be usable by this. But it's kind of difficult to say that we should recommend it and have a section for AV1 about this, because, first of all, there is no AV1 RTP payload draft, at least not an IETF draft, for that, and if there ever were one, my understanding is that it's likely not to specify a binding to frame marking.

G

I mention this because frame marking is currently in libwebrtc, and so when AV1 is added in, you have to figure out what you do with it. And even if we don't mention AV1, just understanding what kinds of modes, because some of these modes also exist in VP9; just understanding the applicability of it.

H

B

Fine, thank you. So just for clarification: as far as I understand, the frame marking for any new codecs which are not part of this document will need to be specified elsewhere, because we are closing this one. So if you do need it for AV1, you need someplace to write it.

A

Yeah, I mean, I think the VP9 draft does mention its frame marking mappings in it. But again, I think frame marking is pretty clear that it's only for these simple and common scalability structures. You know, all codecs can do weird things; the only difference is how easily they can describe them. But they can all be weird, and frame marking is only for the things we thought were the simple cases when we were writing it.

G

H

G

F

I mean, you know, given that those are just recent, within the last few months, and not widely deployed, I'd be a little averse to starting to enumerate things that are newish, because that list could get really long, and if they never see deployment, then it's kind of, you know.

F

If we mention those things, I think it's cleaner than enumerating things and then having someone say, "Oh well, you didn't write this, so it must be in scope." It becomes kind of ambiguous whether something that's not enumerated is in or out of scope. So I think it's cleaner just to say only these very, very well-defined streams are in scope and everything else is out of scope.

B

Okay, but what will happen is that either we make a new definition for frame marking or, like you suggested, we may need a different structure, a different header extension for a specific one. But we don't have to decide it now, because now we are closing based on the current text, with the current codecs that are in there.

E

G

A

G

F

A

F

I mean, the creator of the structure knows better than we do whether or not it would work with frame marking, so I think to actually prohibit it would be the wrong thing. You know, if I'm creating a complex structure but I'm clever enough to make it work the same as nested structures, then I shouldn't be prohibited from being able to use the frame marking approach. But I could go either way. I prefer to leave the text as is, "not recommended"; prohibiting it seems a little too strong.

A

A

B

A

B

B

E

B

I'm not sure. I'll have to look at it, because my understanding is that there was the issue about how it's related to the text that's in the TSVWG document on that point: is it a normative reference or informative, what to do, or whether to include the text. I think that's the point that is open there, right?

E

B

B

A

Yeah, so I did a minor refresh, and I think there was one minor update, but it's still basically waiting on a bunch of informative text, which I was hoping that my co-authors would write, and they haven't. So it might be that we need to find some other volunteer to write that text, just sort of an overview of how the VP9 codec as a whole works. I mean, this has been.

A

J

A

B

I

B

B

B

We have to go back to the goals and milestones and see what we need to update. Currently we have dates that have already passed. The RTP header extension for video frame marking: hopefully by January or February we'll be able to send it for publication, so we need to update this date. The June 2019 one, that's the draft from Colin, which hopefully is done.

B

We'll hear about it when he does his presentation, because we will ask why it's not finished yet, and it's mostly because Colin is still trying to add some text. As for VP9, which we discussed just now, we will update the milestone. For JPEG XS we still have time, and the TTML one is already done, so we just have to change it to done.

A

E

F

In AVTCORE, I'm sure there's a lot of energy around new transport formats, and potentially media over those new transport formats, and whether or not it uses the same formats as AVT has defined is still an open question. So if anybody here is not aware of that, I'd just strongly suggest you follow, you know, the QUIC work and the RIPT work and all that.

A

C

Okay, hi, I'm Colin Perkins. I want to talk about RTP congestion control feedback. The technical content of the draft has not changed in the slightest in this revision. There have been precisely three updates: one is to fix my co-authors' contact details; one is to mention the REMB format, along with, my mind's blanking, TMMBR and that sort of thing; and the other is to add a paragraph which discusses the relation with the Holmer draft.

C

And essentially all this points out is that the Holmer draft adds an RTP header extension to give a packet sequence number, and then reports that as a sequence number for the aggregate in the reports, whereas this uses the sequence numbers which are already in the packets and reports the SSRC and the per-flow sequence numbers. And it just points out the obvious thing that, if you're adding a per-packet sequence number, this adds overhead per packet but simplifies the reporting.

C

That is the case if what you want to do is congestion control the aggregate. The approach in this mechanism has less overhead per packet but slightly more complex reporting; it makes it easier to congestion control the individual flows, and perhaps also integrates better with the rest of RTP, which also reports sequence numbers per SSRC. So hopefully this is a sufficiently neutral description of the trade-offs and will not cause great objections. Hopefully that addresses the action item.
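The trade-off described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function names, the tuple shapes, and the use of `None` for a packet that was not received are all hypothetical, sequence number wraparound is ignored, and the input is assumed to be non-empty. Neither function reproduces the wire format of either draft.

```python
from collections import defaultdict


def per_ssrc_report(received):
    """Group arrivals by SSRC using the RTP sequence numbers already in the packets.

    received: iterable of (ssrc, rtp_seq, arrival_ms) tuples.
    Returns {ssrc: [(seq, arrival_ms or None), ...]} over each flow's seen range.
    """
    arrivals = defaultdict(dict)
    for ssrc, seq, arrival_ms in received:
        arrivals[ssrc][seq] = arrival_ms
    report = {}
    for ssrc, seen in arrivals.items():
        lo, hi = min(seen), max(seen)
        # One block per flow: more structure in the report, no extra per-packet bytes.
        report[ssrc] = [(seq, seen.get(seq)) for seq in range(lo, hi + 1)]
    return report


def aggregate_report(received):
    """Single list keyed by an extra sequence number shared by all flows.

    received: iterable of (transport_seq, arrival_ms) tuples, where
    transport_seq would be carried in an added header extension.
    """
    seen = dict(received)
    lo, hi = min(seen), max(seen)
    # One flat list: simpler report, at the cost of the extra per-packet field.
    return [(seq, seen.get(seq)) for seq in range(lo, hi + 1)]
```

In the per-SSRC form a gap in one flow shows up inside that flow's block, which is what makes per-flow congestion control easier; in the aggregate form the sender sees one ordered list for everything it sent.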

C

A

A

A

Any problem? Yeah, basically, I guess we can do them both; the same considerations apply. We want to avoid, you know, the holiday season, I think. Unless we think we can get about a fortnight in between the holidays, right? Yeah. So probably overlapping this with frame marking is fine. Neither of them is terribly long, so I don't think either of them will require, yeah.

C

F

On the prior note about the new transport formats: thank you, Colin, for writing all this up concisely, because I think this will be very informative for people trying to design those new formats and thinking about how feedback and reliability and recovery work. It helps to really distill what is needed for a media application. So people that are reviewing those new protocols should review Colin's draft in parallel and understand what's missing in the current transport drafts.

C

F

I would greatly doubt that those other groups are paying attention to something like this, because media is kind of off to the side for them right now, so it wouldn't hurt to ask them to consider it. But I think specifically you should ask the QUIC folks that are working on the recovery draft to take a look at this, because recovery mechanisms and reliability mechanisms and acknowledgments and things like that are critical for media, and they should understand why and how they're critical for media, which this draft lays out.

C

B

K

A

A

F

C

H

K

A

F

F

A

C

L

L

L

So our approach to the VVC payload format design is to really start from RFC 7798. The HEVC payload format is widely deployed and generally works, even though there are still some open issues and some stuff that is not exercised at all. But that's our starting point, mostly adapted to the changes in the NAL unit header.

L

Our current first draft doesn't reflect the newest changes, but we will update it after the January JVET meeting in 2020. Our design principle was really to remove as much complexity as we can, based on our studies; a lot of things in the spec now are not applicable at all. Okay, so that's our way of trying to go forward with a clean design.

L

So our first step is to do the transport layer first, and all the signaling is going to come last. It's not because we forgot it; we'll do that later. We are targeting this for right after the first version of VVC, which is expected after July. So that's pretty much the overview. If there are no questions, I'll just move forward.

B

J

The ITU's system layer standards are pretty much dead, so I don't expect that the dependency we had in the past on H.323 and things like that will be an issue here. We're not under time pressure, not an artificial time pressure. There's discussion over in 3GPP SA4, where there is actually a work item, that they want to perhaps reference that, and they would need it. But, you know, that's not for the codec itself; it's just for the reference to it.

J

L

So this is the overview of the open issues that we have identified so far. I have a slide for each of them, so I'm just going to go through what we are concerned about in the open issues. The first is really a concern, well, something we need to discuss: the two transport modes, MRST and MRMT.

L

Then the second is really a discussion of whether we need the PACI packet anymore for VVC, which is defined in 7798: do we really need this for VVC? Then there is the DON-based signaling for interleaving support from 7798. And the last one: we hope we can leave it to the chairs whether we can adopt this as a working group draft and move forward. So the first one is whether we need support for

L

MRST or MRMT. SRST is simple, everybody knows it, and it is widely deployed. So, MRST: for those of you who don't know, that is where you carry multiple RTP streams over a single transport. RFC 7798 doesn't have any SDP signaling for it at all. There's a long history behind that, longer than I have been a participant in this standard, so I will leave that out. But the question is whether we add the SDP signaling to make MRST useful in our RTP context, right?

L

G

L

L

A

F

Mo Zanaty. I think we can safely omit MRMT; I don't believe anyone is interested in that. I forget what the complications are with MRST. I remember the motivations were clear: you could more easily detect losses and things like that, because you have separation of the layers and therefore sequence numbering that is independent for each of those layers. But I suppose that with something like frame marking, maybe the ambiguities that you get with SRST aren't as bad as they used to be.

F

But I don't know about completely shutting the door on it. For simplicity, I'm all for eliminating as many modes as we can. I just wonder whether anybody has actually thought about whether people are actually already doing MRST and don't even realize it. Certainly simulcast is going across different RTP streams, right? Nobody's bundling.

G

Bernard Aboba, Microsoft. So we implemented MRST for H.264, and one of the arguments is that you don't have to rewrite the sequence numbers, so there's a little bit of an efficiency there, but there's a lot of complexity that you get out of doing it. I am not aware of anybody doing that with HEVC; it is done with H.264. But still, please take a look.

J

J

J

We had references, informative references, to drafts and stuff in there, in the hope that once certain drafts that were kind of clogged up over in RTCWEB were done, someone would get their act together and do a bis or something. No one ever had the energy; no one cared. And that's not only because of the success, or lack thereof, of HEVC in the video conferencing business, but also because no one cared, right?

J

No one cared. I hate to have stale text. But we read what question one says: should we do it now? Should we wait for a companion document to do it later, if anyone cares? Or should we do the same thing that we did with 7798 and do it in a bis? Both later options are something which I'm kind of, hmm, maybe. To the first one I just say: no, we shouldn't, right?

L

A

L

Great, all right. So MRMT is out of the question, right? So we're done with that. And then the next one. All right, so again there is a history, and I don't want to go through the history again. One of the uses of the extension is really for temporal layer structure signaling; there are actually no other uses that we have identified. Correct me if I'm wrong, but we would like to hear if there's any other usage for it.

G

So this one is a little bit different. I do understand the argument that some kind of RTP extension is likely to be the way that SFUs operate generically. But, you know, the thing is, there are situations in which SFUs will parse the bitstream, and kind of prohibiting that from working, basically requiring you to negotiate an RTP header extension to function, doesn't seem quite right to me. And there are also other things in here, I would say, that can be supported as part of this.

G

J

The right spot to put the information that an SFU would typically need to do something smart with a scalable bitstream is, I think, not the payload header, because the payload header is encrypted. The right spot is in an extension to the frame marking draft, right, where that thing is not encrypted. The scope of this thing is only the payload format. So, I mean, we can volunteer. Please ship the frame marking draft yesterday, right, independently.

J

So let's get that thing out of the door. But I officially volunteer here to think about an extension mechanism of the frame marking draft for generic stuff, where we can possibly make AV2 happy, if not AV1, and where we can possibly put this type of stuff in. But throwing in a generic extension mechanism of the payload header when we don't have a single use case that makes sense in today's world, where media is supposed to be encrypted? I'm sorry, I don't.

B

J

Okay, so in other words, we may want to have in here a section that says how to use the frame marking draft for the... Absolutely agree. Thank you very much for reminding me. Yeah, that's what we're going to do, and we volunteer to do some work there. Yeah, go ahead. Are you in line? Next one. Yeah, go. It's muted. Okay, yeah, go. He'll probably do it; he volunteered to do some work there, and he wants to be involved, so he can do it.

F

One follow-on on that. Thank you for bringing up the distinction between having things in payload headers versus RTP headers. I'd like to reiterate that in the new transport work, all of this stuff is going in an encrypted, basically, tunnel, and so we would lose the ability to have authenticated.

F

M

N

So I think Mo is somewhat right in that direction, but I would think that the model is more like what PERC has; that is actually what we're discussing. Yes, you're going to have to encrypt the layer between the nodes in a centralized conferencing structure, and things like that. So what you're really talking about is maybe more like in PERC: how do you expose the information when you have the payload being encrypted, and I mean really the media payloads.

N

I think, if I remember correctly, that would mean that the payload part of the RTP packet is end-to-end encrypted, while the header extensions are not; they are still in the hop-by-hop context. I think that's the right way of thinking about this, but I wouldn't see a need for necessarily doing this in the base spec for this payload format.

F

Got it, Magnus. The highest-order case is media nodes being able to access this. But I think one of the other motivations for having RTP headers in the clear was not necessarily a conference bridge, but even on-path elements being able to see and do things intelligently with this. And of course you lose that ability if the transport doesn't allow on-path elements to see, you know, anything about the header extensions, if it's really going over DTLS rather than over SRTP.

A

J

I have two comments. One comment is that the typical lifetime of a video codec is about ten years. We'll see whether we see initial deployment of all this modern stuff that Mo cites within the lifetime of this codec. I mean, come on, we're not the fastest organization on the planet. That's number one. Number two, and that's probably more important.

J

The key argument for all this encryption stuff is, or, hey, you know, one of the key arguments for the frame marking draft has been, as far as I understand it, that the middlebox that sits there in the middle of the network, and is operated by someone else who is not supposed to see the traffic itself, can act on it.

J

You still need to have some stuff, on the one hand, encrypted, so that the middlebox can't see, say, the video signal, the compressed media signal; but on the other hand, it has to see some control information like this, right? So just putting them all in the same kind of giant bucket, where it's either encrypted or not, is wrong in that scenario.

J

B

L

G

O

H

J

I think, okay, as it's formulated, the answer to question five is no, because I don't think we need to extend the payload header itself, ever. However, there should be a question 5.5, which is: do we need a mapping of the mechanisms of the new VVC codec to the frame marking draft? And the answer to that question is a resounding yes. And then there is a third question, whether we need that in this document here, and the answer to that question is also a resounding yes. Good.

E

L

All right, so that will be the last one: we're thinking about removing the DON-based signaling for interleaving support. The trend for the newer codecs such as AVC, HEVC, and also VVC is that the codec itself is already good enough that there is less of an argument for interleaving for the sake of error concealment.

L

A

I

B

B

A

B

A

A

A

P

P

P

P

It's used, or specified, in next-generation emergency services, in regular SIP VoIP services, and in relay services. So, next slide please. But multi-party real-time text is not specified. It's mentioned in many specifications that we need to do multi-party, but it has never been settled in any standards document. It has been said that, well, you use regular RTP mechanisms, but we need to settle it, so everybody knows what is the best way to do it. Therefore, the current user terminals only support two-party real-time text calls.

P

So what we need to go from two-party to multi-party is that we need to identify the source of text, we need the receiver to separate text from different sources, and we shouldn't delay the text; the presentation should still have the flow. And since there are many implementations already out there doing two-party, we need to have a fallback method for mixing when you have a receiver that is not capable of sorting out the received stream into separate presentations.

P

One way, which I think is becoming the most common, is a view like this, where you have labels in front of text chunks from different parties. You see, probably, at the three lowest icons here, you have people typing, so the text is coming from multiple parties, and once you have completed something that can be called a message, you let it flow up. In the next slide, we have another way of displaying real-time text, that is, to have a column for each user.
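The labeled view described above can be sketched as a small mixing function, for example as a fallback for a receiver that can only present a single text stream. This is a minimal sketch under assumptions: the function name `mix_with_labels`, the `[name] ` label format, and starting a new line on each source change are all hypothetical choices for illustration, not something the draft is stated to specify. It also ignores erasure and loss marking.

```python
def mix_with_labels(chunks):
    """Merge text chunks from several parties into one labeled stream.

    chunks: iterable of (source, text) pairs in arrival order.
    A label is emitted only when the source changes, so consecutive
    chunks from the same party read as one continuous line.
    """
    parts = []
    current = None
    for source, text in chunks:
        if source != current:
            if parts:
                parts.append("\n")         # start a new line for the new speaker
            parts.append(f"[{source}] ")   # hypothetical label format
            current = source
        parts.append(text)
    return "".join(parts)
```

For example, `mix_with_labels([("Alice", "Hel"), ("Alice", "lo"), ("Bob", "Hi")])` keeps Alice's two chunks on one labeled line and puts Bob's text on a new labeled line.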

P

K

P

It should not delay display. We have an overall requirement that display should happen not more than 500 milliseconds after sending. Then we also have a slight risk of text loss even when redundancy is used, and we have a standard saying how we shall indicate that to the receiving user, by a mark in the stream, and we need to have that mark still available. And we shall collect text from different users so that it's readable and the source is identified.

P

We also support erasure, and that complicates the display for the multi-party case. We must not let it erase other parties' text, for example. Then there is security. And we should not have something that is too complicated: there is some urgency for getting this done, so it should be implementable in the near future with current implementations. And we also need to describe this fallback method for cases when we only have a two-party-capable receiving terminal.

B

P

P

And since there are already implementations out there, we need to have a method for capability negotiation for multi-party, and there are a couple of methods to select from. We need to discuss how we identify the source. We have some presentational aspects; we have the discussion of robustness and the indication of loss, and performance, security, and IANA considerations. Next slide, please.

P

P

Now, the draft touches topics of many working groups: it's some mixing aspects for AVTCORE, it's negotiation for MMUSIC, it might be something for SIPCORE, or, I don't know. So we need to arrange the discussions in a practical way. I wanted to take advice on that: shall we have it in MMUSIC, even if it touches AVT topics, or shall we distribute the discussions? Please comment.

P

P

Examples from the draft of some discussion topics: how we coordinate RTP. There are different mixing methods: translator or mixer mode, having the source indication in the stream, or having a mesh between the endpoints, or even multiple RTP sessions. And we also have the discussion on conference-unaware user agents, whether we provide some sense of multi-party to them. Next slide, please.

P

Another discussion topic in the draft is the capability negotiation, where I have identified three possibilities. One is to somehow make it implicit, by saying that if you have a multi-party-aware device declaring text in the stream, it must of course be multi-party aware also for the text, and you can start sending multi-party contents to it. Another is to have a new media tag for declaring multi-party capability. And the third one is to have an SDP media attribute.

P

Now, there are pros and cons for these three, and maybe other ways to indicate capability. Next slide, please. So I think IETF is the right place to do this, because we have needs from different pockets. We have NENA and we have 3GPP and others, and they often refer to IETF. Currently I think it's a Best Current Practice document that should be produced.

P

And it is a bit urgent to get this ready. The urgency has been announced from NENA and the next-generation emergency services,

so

at

least

only

2021

that

I

want

to

see

implementations

running

Ollie's

and

max

like

this,

which

is

lost.

I look forward to rapid progress on this and hope that we can get discussions going. Thanks.

B

Okay, so I think we'll have to go and discuss with the AD how to progress it, because I'm not even sure if it's in MMUSIC, because it has a spectrum, like we said, from SIPCORE, MMUSIC and some AVT. So typically we do it through DISPATCH, but I'll consult with the AD about what to do, and we'll come back and say how we can progress it and where, and then we'll have to decide afterwards.

B

I

E

E

Taking it to DISPATCH is a reasonable thing, but if there are two pretty clear working groups, where it would go in one or the other, then you should work that out first. And if neither of them thinks the answer is clear, then we'll take it to DISPATCH, or I'll chat with Adam about it and we'll see where we go. And Magnus obviously has something to say, so.

N

So I actually have a question, which I think defines part of the scope, to Gunnar. Are you feeling that the multi-stream multi-party solution is clear for this, that is, the current payload format and its use for multi-party when you actually have multiple SSRCs, multiple RTP streams? Is that clear enough in the specifications?

N

P

N

I mean, if I take the RTP model, the obvious multi-party model for real-time text is that each user who writes something has its own SSRC. The stream is forwarded the whole way to all receivers, and it becomes solely a question of how you display these users; it's an implementation issue. Is that the right assumption here for what you would say is the right solution for updated users, etc.? And then it's the question of how we deal with legacy implementations.

P

N

From that perspective, I know the result is this: we get into the whole BUNDLE and multiple streams and all this, and you get a lot of m-lines, which is interesting in these cases where you have, I would say, large groups, conferencing, etc. I think this is a downside of the result. So, therefore, in some sense, it is a question of the complications of using RTP as intended for this use.

N

E

I

N

So, I mean, this is tough, I think, because some of the solutions to this legacy problem are in the space where you actually define the internals of the text format, and the rest is very much signaling, I think. So I think there's a large part which is probably more in MMUSIC territory, and then you have this media-specific part, how to deal with it in media. And that section, it might be that it's small enough and it's not really changing the RTP stuff anyway.

N

B

P

P

K

K

You might want to take a step back, or you might want to cut down the scope so that it's okay to first do a point solution that you can get to quickly, because you don't consider either other requirements or other technologies. But this is multi-party chat. It will have all the problems of.

B

I'm trying to say, you had multiple solution architectures in the document, and we have to figure out which one will be simple to implement, and start with that. Because I assume, maybe, when you're having a conference with an MCU, that may make it simple, because the signaling would be simple, like point-to-point, handling all the mechanisms we have today to do multi-party conferencing, like the focus, the one defined in SIP.

F

What I wanted to ask, as a clarifying question, is: in all of those deployments, are they really purely nothing but text? Or are you sometimes trying to mix the text with other media? That would be the only rationale that I could see for why you would go to any kind of RTP media solution. If it's purely text in all these deployments and no other media type, I think this has to be a hack. It's an accident; worse than an accident, it's a giant mistake. Oh yeah, I can.

E

G

Bernard Aboba, Microsoft. A clarifying question: I believe this is part of the next-gen 911 architecture, is it not? Because, as I recall, you can have real-time text and audio and video, and it can be conferenced. That's how the recording works, kind of, through conferencing, so typically it can include both audio and video.

A

K

P

A

Hmm, yes. I mean, I guess the point there is that what implementation solution spaces are likely to be easy for that community affects what we choose to do. Yes. So I think it does sort of seem like people are feeling this is more in scope for MMUSIC than here.