
From YouTube: IETF106-RMCAT-20191119-1330

Description

RMCAT meeting session at IETF106

2019/11/19 1330

https://datatracker.ietf.org/meeting/106/proceedings/

A

B

C

F

C

C

Today's slides are all in the datatracker, if you want to follow along. We have Meetecho. Is anyone in the Jabber room, in the room? Jonathan? Okay, if you can keep an eye out for comments in there. If I forget to press the red button, someone shout at me; I know I need it to enable remote participation.

C

C

If you did take notes, please send them to me. So we've got a reasonably light agenda today. We've got this introduction and status update to start with, then Xiaoqing will give an update on the NADA implementation and some new evaluation results, and then we'll finish up. I have a very brief presentation about the congestion control feedback draft, which will mostly be discussed in AVTCORE on, I think, Friday.

C

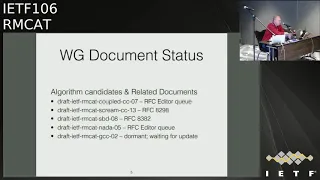

The Google congestion control draft has been dormant for some time and I'm not expecting that to be updated, although we would be open to an update if the authors care about that. All of the other drafts, the NADA algorithm, SCReAM, the shared bottleneck detection and coupled congestion control, are either in the RFC Editor queue or have been published as RFCs already. The requirements draft, the congestion control requirements, has been in the RFC Editor queue for a very, very long time.

C

I believe that's part of cluster 238, so hopefully it will appear before too long. The evaluation drafts: the video traffic model was published recently; the eval test draft has been approved and is with the RFC Editor; and the eval criteria and the wireless tests went to the IESG since the last meeting and they're waiting for processing. The congestion control feedback message: I'll give you a brief update about that later today, and it will be discussed in AVTCORE on Friday here.

C

My belief is that this is now ready for working group last call in AVTCORE, and hopefully we will be able to wrap that up pretty quickly. The RMCAT congestion control feedback draft had a very brief update prior to this meeting, which just fixed a bug I noticed, to make the calculations actually match the description in the text. It turns out that you have to specify the dependencies in the Makefile if you want things to be updated correctly.

C

The way forward for that draft: once the feedback message has been finalized, it will then get revised with the accurate numbers. The congestion control framework and codec interactions drafts have both expired, and I think we previously decided that we'd reconsider those once the candidates had got to the standards track, and that may be some time. So these are basically dormant.

F

C

Yeah, the milestones have been delayed a little, obviously. The evaluation results were supposed to go to the IESG in July, we were to publish the first draft of the standards-track congestion control in July, and we are supposed to submit the final version of congestion control and the interactions to the IESG by this meeting. I think it's reasonably likely that those are not going to happen, especially the ones in the past.

C

So the suggestion from the chairs, after a little bit of discussion, was that we would spend the next 12 months or so gathering evaluation results, implementation experience and practical experience using some of these algorithms, and keep the working group alive. And if there are things to report, then we can.

C

We can have meetings and have some reports and some discussion, and if there isn't anything to report for a particular meeting, then we can not meet at that time. Then, in a year or so, we can evaluate the status, see where things are, and see if we should continue in pursuit of the work, if there's any interest in taking any of these to standards track. I don't know if there are any opinions on that from the rest of the group.

G

Zahed. Yeah, I think this sounds good to me, but what I was wondering is: what do you do with gathering information? That is, how do you gather it? I mean, we have been showing results when we were developing the congestion control algorithms; you have quite an amount of evaluation results there. So basically, what exactly would you like to gather that was not already there, and how would you like to gather that? So, where do we have the discussion?

G

H

D

Mirja Kühlewind. So what we usually do for congestion control, not that we have a bunch of congestion control schemes standardized in the IETF, but we've been careful about sending them to proposed standard, because you want to make sure it's safe to use them on the Internet. So I think the only way to figure out it's safe to use them on the Internet is to actually use them on the Internet. So what you want is deployment. You want not only evaluation results.

D

You actually want, like, traces of live deployments, or whatever. If you can't get that, then maybe we shouldn't go for proposed standard, which I don't think is a bad thing, because you can use congestion control without having interoperability problems or whatever. It doesn't really matter if it's experimental or proposed standard. But that would be my bar.

G

What is the spread of the deployment? Like, this amount of traffic needs to use some congestion control, or something like that? Or, I mean, how many deployments? Of course, what we can do, what exactly we did for SCReAM: basically we have it open source, we have a couple of cases where we used it in different trials and tests in a live network, and we also know some people are working on it.

G

It is on [unclear], and stuff like that, but if you want me to collect all the instances of using SCReAM, it will be very hard for me to come and give an answer. So what I would have to rely on is that anybody using NADA, SCReAM or GCC comes and shows up here, and that's also not really realistic, I'm saying. So what do you do here? That's my question, basically. I mean, for one year; we said, like, one year.

C

E

Hi, actually I meant to ask about it after my presentation, but since we're talking about the plan for the working group, I might as well. I have sort of a similar question as Zahed, in the sense that, for the evaluation stage, we're no longer talking about evaluating the performance, right? For that we have the test cases; we have a collection of some preliminary sets of results.

E

Now that we're talking about trying to gather deployment results, I was wondering whether that's a good expectation, but I was also wondering whether there are any sort of strict guidelines around it. For instance, the question I wanted to ask after my presentation was that we basically put in the implementation as kind of an experimental branch, if you like, in the browser, putting in an alternative method inside WebRTC.

E

I was kind of curious to know whether any of the owners of either the WebRTC module or inside Firefox are interested in trying it out on a larger scale. So maybe those types of things. So maybe this is more a call for participation, either inside this working group, and I guess we can also reach out to people who are potentially interested. So that's my comment, maybe trying to encourage evaluation of this method. But the question is: do we also have guidelines, let's say, if someone is interested in contributing to large-scale evaluation?

E

I

C

Which lists the type of things we look to evaluate. Now, obviously, what you can do in a simulation testbed is not the same as what you can do in a real deployment, but, you know, metrics along those lines, or just general experience: "we have used it", or "we have seen other people using it and it doesn't seem to break anything". Just some general experiences from people actually trying it out and seeing if it really works. Yeah.

D

Let me add one point. We also discussed, or rather we decided a long time ago, that we don't need to pick one that is the winner, right? We can document all of them, and then we see which deployment will happen. And so I think at this point it's also not about comparison anymore. It's not to say this one is better than the other one; it's just, like:

D

If you can prove that there has been deployment and it didn't melt the Internet, then we can move to standards track. If not, it's fine as well; I don't think it's a problem. So I really like the plan of having the group open for 12 months, seeing if there's anybody who can report on these things in this timeframe, and then, if so, we can move ahead. That would be great. If not, I don't think it would be a failure for this working group. It would be fine as well.

J

I

Jonathan Lennox. I think I mentioned on the mailing list that I'm working for the Jitsi open-source conferencing project now, and I think I mentioned that we would be interested in trying out various algorithms in our open-source SFU, and we could probably, you know, we could certainly even try various algorithms in production. I mean, the thing about an SFU.

G

H

G

I think I agree with Mirja. I mean, this is fine. If we have the results, we have standards track; if we don't have results, we have experimental. And I think we have somebody here saying that they are doing experiments, and hopefully they are doing more than GCC, because I don't think GCC is the experimental one right now.

G

G

J

So I just want to add that, at the time when I submit the proposal, maybe the chairs can decide whether it's fine or not, but our work is not just purely evaluating GCC. We actually applied it over QUIC instead of UDP, and we applied it to a different use case than video calling, so there might be some interesting learnings for the group. I can submit a proposal and then we can decide, yeah.

C

I mean, I think that would be interesting, and we'll see whether this group is the right place to do it, or whether we should possibly point you at the congestion control research group. It sounds like interesting work, and it would be useful to hear it presented at least somewhere in one of these meetings.

C

Okay, so how I'm reading that is that there is general agreement that this is a reasonable approach, and we will have to see how things go with the evaluation. It is clearly difficult to be prescriptive; we're not going to say you need so many hundred million users and minutes of deployment experience, but if people are using it and getting some experience, then please report back. Okay, so the only other thing I have... I think that's the last of the chairs' slides. I mean, obviously, since we're talking about implementation experience.

I

Jonathan Lennox. So the main thing I worked on at the hackathon is to try to get more visibility into tracing and debugging of what these algorithms are doing, because often it's very hard to say: okay, what actually happened here? Is the fact that my bandwidth just dropped some weird quirk of the algorithm, or really a reflection of my network? So there were, there were no.

I

I

One thing that would be useful to us is to have a practical way to turn some of the guidance in the test cases into, for instance, actual commands we can type into Linux to turn on the various traffic shaping algorithms, to say: okay, let's make something that looks like this, so we can actually test on our real systems what's actually happening. And there are these various tools; there's this tool, tc, on Linux, which is nearly incomprehensible to mere mortals.

I

There are various front ends to it, but it's not always clear whether the front ends are doing something realistic, because it's very easy to pick the wrong traffic class or traffic shaping and get something that's completely unrealistic compared to what the test cases are trying to do. Particularly for things like the wireless test cases, we have no idea how to make something look like a wireless base station using tc on Linux. That is extremely non-obvious. Yes, that's not simple!
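As a rough illustration of the kind of "actual commands" asked for here, the sketch below only builds `tc` command strings for a simple wired bottleneck: a `netem` qdisc for delay and loss with a `tbf` (token bucket) qdisc underneath for the rate limit. The function names, the interface name, and the burst and latency values are assumptions made for this example, the numbers are not taken from any RMCAT test case, and nothing here attempts to emulate a wireless base station.

```python
from typing import List


def build_tc_commands(dev: str, rate_kbit: int, delay_ms: int, loss_pct: float) -> List[str]:
    """Return tc command lines for a netem + tbf bottleneck on `dev`.

    Nothing is executed; the strings are meant to be reviewed and then
    run by hand (as root) on a Linux test machine.
    """
    # netem at the root adds a fixed delay and random packet loss.
    netem = (
        f"tc qdisc add dev {dev} root handle 1: "
        f"netem delay {delay_ms}ms loss {loss_pct}%"
    )
    # tbf hangs under netem and caps the rate; the burst and latency
    # values are illustrative guesses, not tuned to any test case.
    tbf = (
        f"tc qdisc add dev {dev} parent 1:1 handle 10: "
        f"tbf rate {rate_kbit}kbit burst 32kbit latency 300ms"
    )
    return [netem, tbf]


def build_tc_teardown(dev: str) -> str:
    """Return the command that removes the shaping set up above."""
    return f"tc qdisc del dev {dev} root"


if __name__ == "__main__":
    for line in build_tc_commands("eth0", rate_kbit=1000, delay_ms=50, loss_pct=1.0):
        print(line)
    print(build_tc_teardown("eth0"))
```

Generating the strings instead of running them keeps the example safe to try on any machine and makes it easy to check which qdisc and parameters a front end would actually be installing.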

I

C

I

The other thing we found is that a lot of both the algorithms and the test cases are written from the point of view of endpoints, whereas the things that the people who are working on it are actually working on are SFUs. Sometimes you get things where the algorithms will say: okay, when this happens, tell the encoder to send more, and I can't do that, I'm an SFU. So I think sort of more clarity somewhere on.

F

E

E

E

Basically, right now, the draft is in the RFC Editor's queue, and most of the changes to the draft are editorial, in the sense of trying to either be more inclusive or trying to edit and revise the discussions for more clarity. There are no algorithmic changes to the draft, and the details of the revisions were summarized on the mailing list when we had version updates, so folks, feel free to check them out. And I think, usually,

E

The diff view also gives a very quick and concise comparison of revision updates. Next one, please. Implementation-wise, compared to the last round of our presentation, we have updated our implementation to now include all the following algorithm features. This updated version of the implementation includes the nonlinear delay warping in the mapping to the congestion signal, as well as incorporating loss as part of the congestion signal penalties.
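To make the two features just mentioned a little more concrete, here is a minimal sketch of one possible shape for them: queuing delay is passed through unchanged below a threshold and warped nonlinearly above it, and packet loss is added as an extra delay-equivalent penalty. The functional form and every constant below are assumptions chosen for illustration, not values quoted in this talk; the draft itself is the place to look for the normative definition.

```python
import math

# All constants are illustrative assumptions for this sketch.
QTH_MS = 50.0     # queuing-delay threshold above which warping starts
LAMBDA = 0.5      # how quickly the warped value decays above the threshold
DLOSS_MS = 10.0   # delay-equivalent penalty at the reference loss ratio
PLR_REF = 0.01    # reference packet loss ratio


def warp_delay(d_queue_ms: float) -> float:
    """Pass small queuing delays through; warp large ones nonlinearly."""
    if d_queue_ms < QTH_MS:
        return d_queue_ms
    return QTH_MS * math.exp(-LAMBDA * (d_queue_ms - QTH_MS) / QTH_MS)


def congestion_signal(d_queue_ms: float, loss_ratio: float) -> float:
    """Combine warped delay and a loss penalty into one signal (in ms)."""
    loss_penalty = DLOSS_MS * (loss_ratio / PLR_REF) ** 2
    return warp_delay(d_queue_ms) + loss_penalty
```

With these assumed constants, a 20 ms queuing delay with no loss gives a signal of 20 ms, while the same delay with 1% loss gives 30 ms, so loss shows up to the rate controller as additional "virtual" delay.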

E

So this way the evaluation results I show today do reflect all the features, the bells and whistles, that are in the draft. The other things are mostly for convenience. We basically added similar logging mechanisms to the default rate adaptation module inside the WebRTC module, so this way we can have similar logging for comparison of stats, and we also enabled my colleague Sergio.

E

This is basically a branch that I forked off the WebRTC, the entire Firefox browser code, and we added the NADA rate adaptation module there. We actually have two versions: we have both the round-trip-time-based version, which is somewhat more like a fallback if you don't have the updated version of the transport feedback, and the one-way-delay-based NADA, which better reflects all the features in the draft. And people, feel free to go check out the code.

E

You know, sort of take a look at the code. Next slide, please. So in terms of evaluation results: in this round, we basically took the feedback we gathered from our previous presentation, in the sense that previously, when we presented results, we always had bidirectional calls and always compared the forward direction versus the reverse direction, because the senders were running different algorithms.

E

Basically, all the monitoring logs come from the direction from client A to B, and we switch back and forth between the default behavior and the NADA-based rate adaptation. As mentioned before, for convenience, we've added logging on the sender side to gather feedback from the receiver, as well as per-packet feedback information, which is embedded in this new form of transport feedback, which Chrome already supports. And the other, maybe, side note is that for NADA, the algorithm, we do impose both max and minimum rates for the rate adaptation.

E

So with that, and on the side: since we gather all the logging information on the sender using our own code, we also try to corroborate that information by taking screenshots on the receiver, because Chrome's webrtc-internals tab does provide you with figures of various metrics of interest. So with that, we can probably now start taking a look at some of the evaluation results. Question? Yes.

I

E

That's right. I believe that's the fruit of one hackathon, maybe a few meetings ago. It was mostly work from another co-author, Sergio Mena; due to the time zone difference, he is not attending the meeting today. He was mostly working with Nils from Mozilla, if I remember correctly. Basically, they enabled transport-cc inside Firefox, so we're building upon that, and Chrome already supports it, so it works well for us.

E

The other thing I want to call out is that, for this type of feedback, the default interval seems to be 50 milliseconds, so every 50 milliseconds you get a feedback, and the feedback contains per-packet acknowledgment information. So we basically have all the information we need for running the NADA algorithm.

E

E

We still can't really do an idealized comparison. So what we do is we try two different mechanisms. One is back-to-back sessions: basically we run one call using the default, immediately followed by another call of similar duration using NADA. The other thing we do is that we also try running two parallel sessions between the sender and the receiver, one of them running NADA, the other one running the default.

E

So this way at least we subject them to the same path and the same loss and delay pattern, and we also get to observe a little bit how NADA competes against another default WebRTC call. In terms of evaluation scenarios, we are presenting two different settings. In one setting, it is a call between... I'm based here in Austin, Texas, so the sender is based in Austin, Texas and the receiver is based in California. So it's kind of a cross-continental, or, within the US, a domestic long-distance call.

E

E

So these are the two settings. For each of the settings we tried both the back-to-back sessions and the parallel sessions, so we're looking at four sets of comparison results. I think we can move on to the next slide. So this one is for back-to-back. Even though we do the default first, followed by NADA, I thought it would be easier to show both of them side by side for ease of comparison. So we have the rates, the delay and the packet loss ratio. For the rate,

E

The blue lines show the target rate calculated by the rate adaptation algorithm, whereas the red dots are the measured acknowledged rate based on the per-packet feedback information. The red rates are calculated using an interval of 200 milliseconds, so they're somewhat noisier compared to the calculation of the target rate.
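The acknowledged-rate dots described here can be thought of as a simple windowed byte count. The sketch below assumes the per-packet feedback has already been turned into `(receive_time_seconds, payload_bytes)` pairs; that input format and the function name are assumptions for this example, not the logging format used in the experiments.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def acked_rate_kbps(
    acks: Iterable[Tuple[float, int]], window_s: float = 0.2
) -> Dict[int, float]:
    """Map window index -> acknowledged rate in kbit/s.

    Each entry of `acks` is (receive_time_seconds, payload_bytes) for one
    acknowledged packet. Packets are bucketed into fixed windows of
    `window_s` seconds (200 ms by default, as in the talk).
    """
    bytes_per_window: Dict[int, int] = defaultdict(int)
    for recv_time, size in acks:
        bytes_per_window[int(recv_time // window_s)] += size
    # bytes -> bits, per window length, then bits/s -> kbit/s
    return {
        window: total * 8 / window_s / 1000.0
        for window, total in sorted(bytes_per_window.items())
    }
```

A short window like this reacts quickly but only averages over a handful of packets, which is why such dots look noisier than the algorithm's own target rate.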

E

The middle two graphs show the delay: the round-trip time, marked in red, and the queuing delay, which is derived within the algorithm by subtracting out the long-term baseline one-way delay, in blue. The final two graphs show the instantaneous packet loss rates. Basically, we take the packet loss rate within a window of the 50-millisecond feedback, look at the gaps in the sequence numbers, and also try to do a temporal smoothing.
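The three quantities just described (queuing delay relative to a baseline, loss inferred from sequence-number gaps, and temporal smoothing) can be sketched as follows. This is a simplified illustration: the baseline is taken as the minimum over the samples given, the loss estimate assumes no reordering or sequence wrap-around inside a feedback window, and the smoothing is a plain exponential average whose weight `alpha` is an assumed value.

```python
from typing import List, Sequence


def queuing_delays(one_way_delays_ms: Sequence[float]) -> List[float]:
    """Subtract the baseline (minimum observed) one-way delay."""
    if not one_way_delays_ms:
        return []
    baseline = min(one_way_delays_ms)
    return [d - baseline for d in one_way_delays_ms]


def window_loss_ratio(received_seqs: Sequence[int]) -> float:
    """Loss ratio in one feedback window, inferred from sequence gaps.

    `received_seqs` must be in increasing order; packets missing between
    the first and last received sequence numbers count as lost.
    """
    if len(received_seqs) < 2:
        return 0.0
    expected = received_seqs[-1] - received_seqs[0] + 1
    return 1.0 - len(received_seqs) / expected


def smooth(values: Sequence[float], alpha: float = 0.1) -> List[float]:
    """Exponentially weighted moving average over per-window values."""
    out: List[float] = []
    avg = None
    for v in values:
        avg = v if avg is None else (1 - alpha) * avg + alpha * v
        out.append(avg)
    return out
```

For example, a window that reports sequence numbers 10, 11, 13 and 14 spans five expected packets with four received, which gives a loss ratio of 0.2 before smoothing.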

E

E

Maybe the main observation... and here at the bottom I list out the baseline RTT we observe, which is the minimum RTT over the path, which is around 16 milliseconds between Austin and California, and the maximum RTT we observe is around 2.2 seconds, so when things do queue up, apparently some of the routers along the way have deep queues. So with that, maybe the main observation I want to call out on this slide is that, obviously, they are back-to-back.

E

So we don't really have an exact comparison, but maybe qualitatively we still see that the amounts of losses they experience are similar. They both experience sporadic hiccups over the network. The default algorithm seems to be a little bit slower: when it backs off and then ramps up again, it takes a bit longer, and it actually incurs a little bit less delay, so it is somewhat less aggressive, whereas the NADA call.

E

What we do notice is that it's very fast to ramp up, and whenever there's a hiccup, it recovers from the hiccup quite fast. And interestingly, even though these two calls are between two enterprise offices, it turns out the Wi-Fi situation can be quite noisy and busy in both offices. So, next one, please.

E

So this is from the same experiment. I'm just showing the screenshots captured from the Chrome browser for each of the sessions, more as a corroboration, and I guess qualitatively both the rate profile and the frames per second do reflect the rate we have measured internally at the sender. So these are really all corroboration plots to make sure that our logging mechanism is not broken. Next one.

E

So under the same setting, we now have two calls side by side, both the default and NADA. They actually share the path over the same time; that's why, if you take a look at both the delay and the packet loss ratio, we do see very synchronized behavior, which is not surprising at all, and again, occasional delay spikes in this one.

E

So in this one, the red dots of the NADA call, in terms of how much rate actually gets through, are a little bit hidden behind the busy blue lines, but they're mostly there. So there's one big dip between 0 and 100 seconds, but after that, most of the time it sustains quite well, whereas the default call took a bit longer to gain a greater share of the bandwidth.

E

E

E

E

Yeah, one thing I'm not sure about is the measurement interval of these bytes received per second. By the way, everything is bytes received, so all the numbers would need to be multiplied by 8 to corroborate with the numbers on the previous page. I'm not too sure at what time interval they measure the rate, but other than that, both the rate and the resolution and frame rate do correspond to what we observe on the sender side in terms of the acknowledged received rate. Next slide.
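The unit conversion mentioned here is small but easy to get wrong when comparing browser graphs against sender logs, so a one-line helper makes it explicit. The function name is made up for this example, and the sketch assumes decimal kilobits (1 kbit = 1000 bits).

```python
def bytes_per_s_to_kbps(bytes_per_second: float) -> float:
    """Convert a bytes-per-second reading to kilobits per second."""
    return bytes_per_second * 8 / 1000.0
```

For instance, a reading of 125,000 bytes per second corresponds to 1,000 kbit/s, that is, 1 Mbit/s.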

E

So for this round I thought it would also be interesting, since we're mostly interested in reducing latency, or at least confining latency, for conferencing calls. So what I did is I basically plotted the CDF of the queuing delay from each of the sessions and put them side by side, for both the back-to-back sessions and the parallel sessions, to form a comparison. I called out some numbers here, but I think maybe the main observation for this round is that there's not really a main advantage of the NADA scheme with respect to the default.

E

In fact, for the back-to-back session, since it was a little bit more aggressive, its CDF is actually somewhat worse compared to the blue curve. But that said, we can also see that if you take a look at the 95th percentile of queuing delay, we can limit it below a hundred milliseconds for sure, and that's, I guess, good news both for the default option and for NADA. So next we can move on to the cross-Atlantic calls.
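For readers who want to reproduce this kind of comparison from their own logs, the sketch below computes an empirical CDF and a nearest-rank percentile from a list of queuing-delay samples. The function names are illustrative, and nearest-rank is just one of several common percentile definitions; it is not known which one was used for the slides.

```python
import math
from typing import List, Sequence, Tuple


def empirical_cdf(samples: Sequence[float]) -> List[Tuple[float, float]]:
    """Return (value, fraction of samples <= value) pairs, sorted by value."""
    xs = sorted(samples)
    n = len(xs)
    return [(x, (i + 1) / n) for i, x in enumerate(xs)]


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% at or below it."""
    if not samples:
        raise ValueError("percentile of empty sample set")
    xs = sorted(samples)
    rank = max(1, math.ceil(p * len(xs) / 100.0))
    return xs[rank - 1]
```

Checking a claim such as "the 95th percentile of queuing delay stays below 100 ms" then amounts to evaluating `percentile(delays_ms, 95) < 100` for each session's samples.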

E

E

E

Well, if it does experience a delay spike, then it will take, you know, on the order of tens of seconds to ramp up, whereas in NADA, even though it is subject to similar types of delay spikes, the ramp back up is much faster because of the accelerated ramp-up feature in the rate adaptation algorithm. The other thing you might notice is that there is kind of a floor of the round-trip time around 190 milliseconds. So, you know, all the red curves are kind of floating above the blue ones.

E

For this set of results, for plotting purposes, all the delay graphs are capped at 600, but as reported below, some of the spikes actually reach as high as 4.5 seconds in terms of round-trip time. Next one. So, similarly, screenshots to corroborate our observations. Interesting for this round, even though we see.

E

Okay, I'm not too sure about the screenshots for the default behavior, because it seems to be a little bit different from the previous... well, no, I think it's right. So basically we have two dips in the default algorithm, and then I guess this screenshot missed the second ramp-up duration for that one, so it's not qualitatively corresponding as well. Next one. Now, this is in parallel: the default competing against NADA.

E

In parallel. So again, we can see that both the delay and the packet loss observations from both of the flows are very comparable, and for the default algorithm, even though the target rate can sometimes ramp up very high and go back and forth, the actual received rate is quite stable, whereas for NADA we see very similar behavior as before. And the next slide is for the Chrome observation.

E

And there was one figure that we forgot to capture, so I no longer have a bit rate, but other than that we see, for instance, the Chrome-reported bit rate for the default algorithm. One thing to note is that it is actually kind of below... basically, typically, the max rate is 300 kilobytes per second. So this one, the received rate for the default algorithm, is quite stable, but stays somewhat lower than its full potential, and the NADA graph.

E

Next slide shows the comparison of the queuing delay. So for this round, for both the back-to-back sessions and the parallel sessions, we do see an improvement in terms of the queuing delay distribution of NADA with respect to the default algorithm. I suspect part of it is attributed to the big spikes and then the slow ramp-up time. And so overall, if we look at the percentile numbers for the blue NADA stream, it's also quite good. Actually, interestingly, for this cross-continental, sorry, cross-Atlantic call.

E

If

we're

looking

at

queuing

delay

just

because

the

center

side

is

in

a

more

in

a

less

noisy

wireless

environment,

this

performance

is

actually

better

than

the

previous

one.

So

it's

not

really

dominated

by

the

past.

Arty

keys

were

mostly

measuring

to

yelling

here,

I

think,

that's

the

end

of

our

my

observation,

slides.

So

in

summary,

I

think.

Maybe

some

of

the

consistent

observations

is

that

the

proposed

another

algorithm

and

do

have

a

fast

initial

ramp

up

to

achieve

the

maximum

allowed

grades,

and

typically

that's

within

a

few

seconds,

whereas

the

default

algorithm.

E

E

So

in

those

environments,

we

don't

really

starve

a

competing

call.

So

that's

probably

relatively

safe

to

Mirja's

earlier

point

or

concern

about

how

you

know

how

it

works

against

existing

traffic

over

the

Internet.

And

for

further

investigations,

the

main

things

of

interest

would

probably

be

to

try

it

out

over

bandwidth-limited

connections.

There

were

some

suggestions

about

trying

it

out

over

LTE

links

that

we

haven't

got

a

chance

to

fully

investigate,

and

we're

also

interested

in

understanding

kind

of

coexistence

of.

E

If

we

have

multiple

calls

running

our

algorithm,

how

it

works,

that

is

something

we'll

be

able

to

get

evaluation

results

on

even

using

our

existing

setup,

and

the

other

thing

of

interest

would

be

to

try

it

out

with

running

some

kind

of

a

TCP-like

background

long-lasting

file

transfer

type

of

traffic.

So

that's

kind

of

the

update

I

have

for

this

meeting

for

the

matter

of

person,

posting

terms

of

evaluation

and

kind

of

some

additional

ideas.

I'm

also

here

to

maybe

gather

further

feedback

and

suggestions.

E

Sorry,

yeah,

so

that

was

the

question

I

asked

in

the

very

beginning.

Frankly

speaking,

we

currently

don't

have

any.

We

haven't

laid

out

a

plan

to

try

it

out

in

a

large-scale

deployment

yet.

That's

something

obviously

we

would

be

very

interested

in

gathering

results

on,

but

also

like

these

are

the

type

of

tests

we

I

run.

We

can

I

run

with

my

colleagues

kind

of

manually

and

I

find

them

to

be

here

always

surprising

me

thinking.

Bloggers

have

you

know

a

little

bit

awkward

yeah.

E

J

E

K

E

No,

I

mean,

to

be

honest,

this

and

the

parallel

setting

is

the

only

one

we

tried

in

a

mix,

well,

mixture,

meaning

one

versus

one.

That's,

I

guess,

that

goes

back

to

the

question

of

larger-scale

deployments.

If

we

get

to,

let's

say,

configure,

I

guess

the

ideal

case

is

that

if

we

get

to

configure,

you

know,

different

browsers

running

different

traffic,

then

we'll

get

to

look

at

the

mix.

That

would

be

much

more

interesting,

yeah,

but

we

don't

have

results

on

percentage-wise

comparison,

yeah.

K

C

F

C

Don't

know.

There

we

go.

All

right,

okay,

so

the

only

other

thing

we

have

on

the

agenda

for

today

is

a

very

brief

update

on

the

congestion

control

feedback

draft

from

myself.

So

this

is

a

draft

which

started

out

in

this

group.

We

had

a

design

team

in

this

group

for

quite

a

while,

and

then

it

moved

over

to

AVTCORE

and

has

been

developed

there

and

it's

work

that

I've

been

doing

along

with

Zahed,

Varun,

and

Michael

Ramalho.

C

The

draft

seems

pretty

stable;

the

format

hasn't

changed

for

a

reasonable

length

of

time

and

there's

been

only

details

for

quite

a

while.

Now,

since

the

last

meeting,

the

only

update

has

been

to

expand

the

design

rationale

section.

We

had

an

action

item

to

explain

how

this

relates

to

the

other

congestion

control

feedback

mechanisms.

So

there

were

two

changes

there.

There

was

a

small

one,

just

a

mention

of

the

receiver

estimated

maximum

bitrate

option.

C

The

draft

already

mentions

the

TMMBR

mechanism

for

signaling

the

feedback,

and

REMB

seems

to

be

a

very

similar

mechanism.

So

I

just

added

that

in

the

same

section

to

say

it

behaves

broadly

similarly

and

that

there

are

details,

but,

you

know,

at

the

level

of

comparison

we're

looking

at

here,

they

didn't

make

a

whole

lot

of

difference.

And

I

also

added

a

paragraph

discussing

the

relation

to

the

transport-wide

congestion

control

extensions

in

the

Holmer

draft.

Now,

what

that

draft

does

is

C

If

your

goal

is

to

do

rate

calculations

per

SSRC,

then

obviously

the

format

which

reports

per

SSRC

is

simpler.

So

that's

the

fundamental

trade-off

here:

it's

trading

off

additional

header

overhead

for

simplicity.

If

you're

doing

aggregate-level

congestion

control,

it

also.

We

also

note

that

the

transfer

white

feedback

on

a

per

SSRC

basis

is

perhaps

a

more

natural

fit

for

RTP,

since

everything

else

in

RTP

is

done

per

SSRC,

whereas

this

aggregate

feedback

in

here.