From YouTube: IETF108 TCPM 20200727 1100

Description

TCPM session at IETF108

2020/07/27 1100

A

A

Nonetheless, I hope that we can have a successful meeting. My name is Michael Scharf, I'm one of the chairs of TCPM, and I will present the working group status as the first presentation in this meeting, and then we will just go into the meeting. In the meantime, please make yourself familiar with Meetecho. It's the first meeting today.

A

A

A

A

A

A

Okay, we have a note taker, Neil. Thanks a lot, that is very useful. As I said, I will also try to help with the note taking during the later talks, and if all of us contribute it's easier, but it's very important to have one note taker. Then, regarding logistics, another important comment: if you speak, first of all you have to queue, and even if we can all see your name, please state your name before you speak.

A

A

So this is the usual agenda bashing. We have two sessions today. Basically, we asked for more time this time than before. This makes sense simply because we got new topics with MPTCP, and we have some related presentations later in this slot. So we have a pretty packed agenda, and I ask all presenters to please try to present within the time slot that you've got, so that we can indeed run through all presentations. We will, as usual, start with the working group items.

A

I will start off after this talk with a brief summary of 2140bis. Then Wes will give a brief update on 793bis. Then we have two longer talks about RACK and HyStart++, and then, in the second part of the first slot, a couple of non-working-group items: the ACK rate request and two MPTCP-related presentations. In the second slot, the plan is to have two talks that are related to the ongoing YANG discussions. The first one is about a working group item in the NETCONF working group.

A

A

A

If not, I assume that the agenda is okay for the moment. We've approved all requests, so actually everybody who has requested a slot got a slot, so I assume that should be fine.

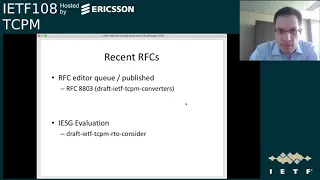

Then I will quickly run through the working group status. We have one RFC that is almost finished. I think it's not officially published as RFC 8803 yet, but I guess that will happen within a few days. That's the TCP converter document.

A

This is almost finished; I think it will be published within days. We have the RTO-consider draft in IESG evaluation. That probably needs a new update, but there has been quite a bit of discussion, and it seems most of the comments are doable for the authors, but it requires an update. So that's work in progress.

A

So for quite a few of the documents we have presentations in this meeting, so I will not comment a lot on them. We have a presentation on RACK. RACK has completed the working group last call, but there were quite a large number of comments, and we are at the moment finishing addressing all these comments. So there's been quite a bit of discussion on that, and we will have a talk on that.

A

We have also run a working group last call for the 2140bis document. There has been a bit of feedback, but not a lot, so in the next talk I will summarize, on behalf of the authors, the working group feedback that we have received so far. The proposal in that presentation is to move the document to the IESG.

A

We will also have a presentation on 793bis. That has been out there for a long time already, and it is getting very stable. One of the main open points was the IANA considerations section, and Wes will talk about that. Other than that, the document seems very stable, and I'm thinking about the working group last call relatively soon, that is, within the next one or two months.

A

My proposal for this document would be to have a longer working group last call, with a duration of three or four weeks, because it's a complex document to review, so I think it makes sense if we have a little bit more time to review the document. But other than that, at least from my point of view as shepherd, I believe that the document is ready for working group last call. If there are any comments on that, of course, you could comment on this right now.

A

We have a couple of other documents that were not updated recently. The Accurate ECN one was discussed during the interim meeting, but there has been no update in the meantime and we have no presentation request, so I guess this will probably have to wait either for an interim meeting or for the next official meeting. The same holds for generalized ECN.

A

A

A

The next ones on the milestone list are 2140bis and RFC 793bis, and you've seen that we either have already done the working group last call or are planning it. Further out we have Accurate ECN, ECN++, and the other documents on our roadmap. So that's the current planning for the milestones.

A

A

A

A

A

So I've already mentioned the status: we have done a working group last call, and at the moment we are about to finish addressing the comments that we received. This talk is about the comments that were received during the working group last call. If you can go to the next slide, please. This is a brief summary of the history of this document. There was a bigger editorial update in version 03, with a lot of mostly editorial clarifications and updates.

A

The current version is version 05, and we ran a working group last call on that version at the end of May and the beginning of June, and we got a couple of comments during the working group last call. So maybe you can go to the next slide, which summarizes most of the technical comments that we got. Most of the comments actually arrived right at the end of the working group last call or afterwards. So there was one comment, or actually two comments.

A

First, the security considerations section: this section has changed in earlier versions, and there was a comment on that one. I've tried to understand that comment, and it's a bit unclear to me what exactly that comment was about. At the moment, I think, without any further information, we just keep the document as it is, as what was commented on is actually in the document.

A

It just got reverted. But of course, if there are any further explanations for this comment, we would be happy to receive them. I've tried to figure it out on list and off list, but I didn't get any further information on that comment, so there's not much more I can do on that as shepherd. Second, there was a comment on Appendix C, and this is something that has been in the document for quite some time already. It documents a specific algorithm as, more or less, an example of how sharing could be done.

A

B

B

B

Sorry, somehow my audio wasn't correctly connected. Yeah, I think both of the comments were actually from me, about the security considerations and the appendix. So, quickly, about the security considerations and linkability: the thinking here was that if you use state of different connections, or reuse state from a previous connection for a next connection, that could potentially reveal that there's some linkability between the two connections, letting an observer know that both of the connections belong to the same system, even if, for example, the source IP address has changed in the meantime.

B

Yeah, sorry for not replying, I somehow missed that. On Appendix C: I apparently never reviewed the appendix previously or read the appendix properly, so I never noticed that this was completely included, and it seemed very weird to me that we include a full other draft in a bis document, and especially a draft that was discussed at length in the group and was denied.

B

So this is kind of trying to sneak this in there, and it doesn't really make sense to me. I'm okay with taking a summary of, or a reference to, the expired draft, because it's relevant, but having a full copy and paste from that draft, which was previously discussed, seems a little bit weird to me.

A

Okay, on that one I explicitly asked for comments from other people as well, because I know that, on that feedback, the authors probably disagree. So it would actually help a lot if others could comment on that specific issue, whether Appendix C makes sense in the document or not. I've explicitly asked for further feedback.

A

For the moment, I will leave open the question of what to do with Appendix C. As I said, if you have any opinion on Appendix C, please comment on the list so that we can sort that out. I would definitely look forward to further opinions on whether the appendix should stay as it is or whether we should, for example, do what Mirja has suggested, to summarize it and take out the specific algorithm details. Also, please comment on that.

A

That is, if you have any opinion. Other than that, there was a smaller comment on TFO that will be addressed in the next release. So if you can move to, I think, the last slide, we will see the text proposal that should address the comment on TFO. These are the pending changes that should be added to the next version. The security considerations section is unchanged at the moment, as far as I understand, so if we should add something, please write it to the list. On TFO:

A

The change is the small editorial change that is shown here and, as far as I understand, that working group last call comment would be sorted out by that change. So we would be fine on that one, and that's all that I am aware of as comments during the working group last call. It's not a lot.

A

To be honest, we usually have more comments during a working group last call, but since it's an informational document, and since it's a bis document, my conclusion as shepherd is that, once we have sorted out all the comments that are still pending, we will move the document forward. If you have any opinion on that, or any suggestion or any other request, now is more or less your last opportunity to state it. So, as I said, once we have sorted out Mirja's comments.

D

D

E

D

D

D

D

D

So this table I pulled from the draft, and what you see is what's in that, and I thought that would make it easy to discuss whether this looks right to people or whether it needs any more work. Since there had been a lot of discussion on this in the past, on the mailing list and in prior meetings, we thought it would make sense just to get a quick acknowledgement that this is what people expected to happen. And that's all I've got, actually. This is my only chart, so feedback on that is welcome.

D

D

A

G

D

D

H

D

A

C

A

A

A

But the interesting question is, of course, what happens with bits 8 and 9, because they have a separate meaning in Accurate ECN. So maybe we could record that in the assignment notes. That would be a way, for example, to explain that those bits have a separate meaning if they are used by Accurate ECN.

A

B

Yes, and somehow I couldn't change the queue, so I'm now on audio. We actually don't change the names of bits 8 and 9, because we keep them as they are for negotiation in the handshake, and then only use this as a field later on. So it's not proposed to change the name. The only thing we will do is assign bit number seven with a new.
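For readers following the bit numbering, here is a minimal Python sketch of where bits 7, 8 and 9 sit in the 16-bit word that holds the data offset and the flags (bytes 12 and 13 of the TCP header), counting bit 0 as the most significant bit. Bits 8 and 9 are CWR and ECE as defined in RFC 3168. Reading bits 7 to 9 together as one 3-bit field is an illustration of "use this as a field later on"; the function and constant names are hypothetical and are not taken from any draft.

```python
# Masks within the 16-bit "data offset + reserved + flags" word
# (TCP header bytes 12-13), with bit 0 as the most significant bit.
BIT7 = 0x0100  # bit 7: the bit that would get a new assignment
CWR = 0x0080   # bit 8: Congestion Window Reduced (RFC 3168)
ECE = 0x0040   # bit 9: ECN-Echo (RFC 3168)
SYN = 0x0002


def flag_bits_7_to_9(offset_flags: int) -> int:
    """Return bits 7..9 as one 3-bit value (bit 7 is the high bit)."""
    return (offset_flags >> 6) & 0x7


def describe(offset_flags: int) -> dict:
    """Split the word into the pieces discussed in the talk."""
    return {
        "data_offset": offset_flags >> 12,
        "bit7": bool(offset_flags & BIT7),
        "cwr": bool(offset_flags & CWR),
        "ece": bool(offset_flags & ECE),
        "syn": bool(offset_flags & SYN),
        "bits_7_to_9": flag_bits_7_to_9(offset_flags),
    }


# A SYN with data offset 5 and both ECE and CWR set (ECN setup per RFC 3168).
syn_word = (5 << 12) | CWR | ECE | SYN
info = describe(syn_word)
```

The individual flag names stay meaningful on the SYN, while the same three bit positions can be read as a single small integer on later segments.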

B

A

D

D

A

A

A

Okay, so I'm not seeing any further comments on that one. To me this implies that we will execute the plan that we've outlined, that is, I will start a working group last call. I will still have to think about the timing. Maybe I will wait a bit so that it's not colliding with the summer holiday season, but please expect the working group last call for 793bis within the next two months.

A

I will work out with the other chairs and the AD when exactly we run the working group last call. But, as I said, please expect a working group last call, and please prepare to review the document. This is one of the most important deliverables that TCPM has worked on in the last years, so we do need reviews to ensure that the document is the best document we can publish. So please comment during this working group last call. Thanks a lot.

A

A

I

I

So the main change is that it's really a substantial rewrite of over 90% of the text. After looking at all the comments we received during the last call, Neal and I decided that we really have to significantly change the RACK draft and really focus on integrating RACK and TLP as a whole, in an integral design, to incorporate all the comments.

I

So there are a few new sections: a new motivation section that clearly explains why we are doing RACK and what the problems are that we are trying to solve, and a high-level design section, including a very explicit reordering rationale section, to avoid people feeling like we're just taking two drafts and literally stapling them together. Also, a few reviewers suggested it would be good to have an explicit time-sequence kind of graph or other examples, so we added that too.

I

So for the motivation, there are really three things we're trying to solve that the current standard DupACK heuristic does not do very well. One is that if there are packet drops in a short flow, or toward the end of an application data flight, then three DupACKs simply don't work that well, because you need three DupACKs.

I

I

So one thing we do want to emphasize is that we're trying to be as specific as possible about the scenarios we're trying to fix, and to avoid any kind of statement that this is a common scenario or that this is the prevalent scenario, any kind of comment about how frequently it happens, because we understand that in different networks a particular scenario may not be that common.

I

The last one is that we're trying to tolerate reordering, but only a very specific type, that is, reordering with a very low degree in time. Let me give you an example. Let's say you send 100 packets, named P1 all the way to P100, and the last one is delivered first, then the rest are delivered in order. So in this case the reordering degree is actually 99.

I

But if you look at the time for this particular reordering, it could be that the last one is delivered just a fraction earlier than the rest. So in terms of time, on the RTT scale, it could be just a very small fraction. This is the kind of reordering that RACK is trying to address. For the other kinds of reordering,
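The difference between reordering measured in packets and reordering measured in time can be made concrete with a small Python sketch. The arrival times and the 50 ms RTT are made-up numbers chosen only to mirror the P1 to P100 example, and both helper functions are hypothetical ways of quantifying reordering, not definitions from the draft.

```python
def reordering_in_packets(arrivals):
    """Largest gap, in sequence numbers, between an arriving packet and
    the highest-numbered packet that arrived before it."""
    highest, worst = 0, 0
    for seq, _time_us in arrivals:
        worst = max(worst, highest - seq)
        highest = max(highest, seq)
    return worst


def reordering_in_time_us(arrivals):
    """Largest delay, in microseconds, between an out-of-order arrival and
    the arrival of the first higher-numbered packet that overtook it."""
    worst = 0
    seen = []  # (seq, arrival time) of packets that have already arrived
    for seq, time_us in arrivals:
        overtakers = [t for s, t in seen if s > seq]
        if overtakers:
            worst = max(worst, time_us - min(overtakers))
        seen.append((seq, time_us))
    return worst


# P100 arrives first; P1..P99 follow in order, 10 microseconds apart.
arrivals = [(100, 50_000)] + [(i, 50_000 + 10 * i) for i in range(1, 100)]

RTT_US = 50_000  # assumed round-trip time for the example
degree_packets = reordering_in_packets(arrivals)
degree_time_us = reordering_in_time_us(arrivals)
fraction_of_rtt = degree_time_us / RTT_US
```

With these numbers the packet-based degree is 99, while the time-based degree is under one millisecond, roughly 2% of the assumed RTT.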

I

The overarching goal is to do fast recovery as much as possible, and since that is ACK-triggered, we're talking about the RTT time scale, and to use RTO recovery only as a last resort, because, first, the RTO generally wants to be more conservative, and second, with standard congestion control it has the consequence of resetting the cwnd. In order to achieve fast recovery, the idea is to use per-packet timestamps and to use the ACKs to adjust the RTT measurement and the reordering measurement, and simply look at, okay,

I

I

I

Now we get a lot of ACK events, but sometimes you do run out of ACKs, because the segments get lost and you don't have further segments to trigger ACKs. So what do you do? What you do is that, two RTTs after you send an application data flight, you send one more packet to trigger an ACK. I think the slide needs to move forward.

I

Now you get a SACK, then you get an idea of the RTT, and you know all these packets, P0 to P3, were sent more than three RTTs ago. So you would conclude that these packets must have been lost, and then you will retransmit. Remember, in this case, with the three-DupACK heuristic, because you don't get any DupACK, you basically would have to do an RTO recovery. This is the basic idea of how RACK and TLP work together.
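The tail-loss case walked through above can be sketched in a few lines of Python. This only illustrates the time-based idea as described in the talk: a segment is declared lost once a later-sent segment has been delivered and the segment itself was sent more than one RTT plus a reordering window ago. The class, field, and function names are hypothetical, and the timings are invented.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    name: str
    sent_ms: float
    delivered: bool = False


def rack_detect_losses(segments, now_ms, rtt_ms, reo_wnd_ms):
    """Return the names of segments to mark lost under a RACK-style rule."""
    delivered_sent = [s.sent_ms for s in segments if s.delivered]
    if not delivered_sent:
        return []  # no ACK information yet; only a timer can help here
    newest_delivered = max(delivered_sent)
    lost = []
    for s in segments:
        sent_before = s.sent_ms < newest_delivered
        expired = now_ms - s.sent_ms > rtt_ms + reo_wnd_ms
        if not s.delivered and sent_before and expired:
            lost.append(s.name)
    return lost


RTT_MS = 100.0
# P0..P3 are the tail of a flight and are all dropped, so no ACK returns.
flight = [Segment(f"P{i}", sent_ms=float(i)) for i in range(4)]
before_probe = rack_detect_losses(flight, now_ms=200.0, rtt_ms=RTT_MS, reo_wnd_ms=25.0)

# About two RTTs later a probe is sent; its SACK comes back one RTT after that.
flight.append(Segment("probe", sent_ms=200.0, delivered=True))
after_probe = rack_detect_losses(flight, now_ms=300.0, rtt_ms=RTT_MS, reo_wnd_ms=25.0)
```

Before the probe is acknowledged nothing can be marked lost, because no delivery information exists; once the probe's SACK arrives, all four tail segments qualify at once without waiting for DupACKs.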

I

I

Should

we

step

to

based on the SACK information, if eligible. And there should be a reordering timer. The idea of a reordering timer is this: let's say you know that a packet has not expired yet, but it is, you know, two milliseconds from expiring. Then the only way that you mark this packet as expired is either through a timer or through another ACK event that happens two milliseconds later. So in this case it's always safer to install a timer.

I

So how do TLP and RACK depend on each other? TLP does require RACK, and RACK requires SACK, but you can implement just RACK. You don't have to implement TLP. That's not recommended, but it will work, because you simply don't send those probe packets, and for the other packets RACK will still do its work. There is also a clarification about how to manage all these timers. The idea is that all these timers, the TLP timer, the RTO timer, the RACK timer, the zero window probe timer,

I

they are all timers that trigger sending one packet of some sort, so you don't really need to manage and hold all these timers. What you do is, at any time, you maintain one of them; you can just keep a state variable to point to what kind of timer you want to trigger. Because think of it: in the case of TLP and RACK, any packet that is being used to probe for losses can trigger the loss recovery, so you don't necessarily have to send the tail loss probe packet.

I

You can just send a zero window probe; as long as it elicits an ACK, you are good. So you don't need that many timers, and it's actually very, very complex to manage all of them together. One thing we do remove is the ACK delay of 200 milliseconds. A few people did ask: why is it 200 milliseconds?

I

It is from Linux, which we suspect in turn took it from an older version of the BSD implementation, but because of a lot of recent discussions we know that this value really needs to be adjusted, so it doesn't make sense to hard-code it further. So we make this implementation-specific, trying to avoid yet another magic number, which is likely outdated as well.
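The point made a little earlier, that one timer plus a state variable can stand in for separate TLP, RTO, RACK reordering, and zero window probe timers, can be sketched as follows. This is a hypothetical structure for illustration only; it is not taken from the draft or from any particular stack, and all names and delays are assumptions.

```python
from enum import Enum, auto


class TimerMode(Enum):
    NONE = auto()
    RACK_REORDER = auto()
    TLP_PROBE = auto()
    RTO = auto()
    ZERO_WINDOW_PROBE = auto()


class SingleTimer:
    """One deadline plus a mode that says what to do when it fires."""

    def __init__(self):
        self.mode = TimerMode.NONE
        self.deadline_ms = None

    def arm(self, mode, now_ms, delay_ms):
        # Re-arming replaces whatever was pending: only one timer exists.
        self.mode = mode
        self.deadline_ms = now_ms + delay_ms

    def poll(self, now_ms):
        """Return the mode that fired, or None if nothing is due yet."""
        if self.deadline_ms is None or now_ms < self.deadline_ms:
            return None
        fired = self.mode
        self.mode, self.deadline_ms = TimerMode.NONE, None
        return fired


timer = SingleTimer()
timer.arm(TimerMode.TLP_PROBE, now_ms=0, delay_ms=200)
early = timer.poll(now_ms=150)

# An ACK shows a segment two milliseconds from expiring, so the same
# timer is re-armed as a reordering timer instead of a probe timer.
timer.arm(TimerMode.RACK_REORDER, now_ms=150, delay_ms=2)
fired = timer.poll(now_ms=152)
```

Whichever mode is armed, the action on expiry is of the same kind (send or retransmit something that will elicit an ACK), which is why a single deadline is enough.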

I

Next slide, please. Also, we're trying to clarify the relationships to other RFCs, because the previous draft had text like, oh, TLP may work, or you may not need RACK, or RACK can just be implemented as an additional algorithm to DupACK. So we cleared that up. This was all text that was left over and never got revised correctly.

I

So RACK-TLP is an alternative that replaces the conservative loss recovery based on SACK, which I believe is the major implementation in most of the stacks that use SACK, and it can replace early retransmit. So if you implement RACK and TLP, you don't need to implement these two. And for the other, sorry, loss recovery mechanisms, RACK-TLP is complementary and compatible with, say, limited transmit, because limited transmit is simply sending more packets.

I

I

F-RTO is to say, when you time out, if you do have new data to send, send this new data, because it helps disambiguate whether this is a real timeout or not. That's the same spirit as RACK-TLP, which is sending more packets to probe, to sort of double-check if something is really lost. And for the RTO and the RTO restart, these are simply computing the timer values, similar to the draft that's becoming an RFC, the RTO considerations.

I

It's all the same; these are all compatible. RACK-TLP does not manage the value of these timers. And Eifel is essentially detecting spurious retransmissions and then undoing the congestion window reduction, so it works with RACK and TLP too, because RACK and TLP may have spurious retransmissions. They are not always accurate.

I

So in this case we do encourage that, if you find that a loss recovery is spurious, you undo the congestion window reduction. Next slide. I think this is probably the last slide. So that's the end of my talk. We are really thankful to all the people who spent so much time reviewing our drafts, and I know the rewrite will probably take a lot of time to review again, but I hope it has improved quite a bit.

I

A

I

I

I

A

Neutron,

if not, I guess we don't have Michael Tüxen, who's the shepherd, in the call. But I guess the proposal is that once all people who have commented during the working group last call have confirmed that their comments are sorted out, we will finish the document and move it to the IESG. I guess that's the plan, as far as I know. There's still quite a bit of ongoing discussion on the mailing list to get confirmation on that, because it's a significant amount of changes.

A

J

J

J

In many cases this can overshoot the ideal send rate for the bottleneck, and that causes massive packet losses. This can cause problems like increased retransmissions, because more packets are lost, and then time spent in recovery. Particularly for flows that don't exit slow start or are short-lived, this causes a latency increase, and if the packet loss is high enough, this can also result in a retransmission timeout. Particularly, I think, for implementations that don't have RACK, which can recover from lost retransmits, this becomes a very serious problem for those TCP implementations.

J

J

The particular thing that we do here is that we measure the increase in delay and use that as a signal to exit early from slow start. In some cases, if we get a delay spike, particularly on, like, Wi-Fi links, or due to temporary congestion, this can cause an exit from slow start very early, and that can cause performance problems as well.

J

So after exiting the traditional slow start, we begin what we call the Limited Slow Start phase, and we use the congestion window computed by RFC 3742 and also compute the congestion window as it would have been computed by the congestion avoidance algorithm. So, for example, if you're using CUBIC, this would be the CUBIC congestion avoidance computation of the congestion window. The max of these two is used until we see a congestion signal.
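As a rough illustration of the rule just described, the following Python sketch grows the window in the limited slow start phase by taking the larger of an RFC 3742-style increase and whatever the congestion avoidance algorithm would have produced. The per-ACK increase follows the K-based formula of RFC 3742, with the slow start exit point used in place of `max_ssthresh`, which is an assumption about how the draft applies it. `ca_cwnd` stands in for the CUBIC (or other) congestion avoidance computation, the divisor default of 0.5 is the RFC 3742 value, and all function and parameter names are illustrative.

```python
def lss_cwnd_on_ack(cwnd, ssthresh, acked_bytes, smss, ca_cwnd, lss_divisor=0.5):
    """New congestion window (bytes) after one ACK in limited slow start."""
    # RFC 3742-style growth: the larger cwnd is relative to the exit
    # point, the larger K gets and the smaller the per-ACK increase.
    k = max(1.0, cwnd / (lss_divisor * ssthresh))
    lss_candidate = cwnd + min(acked_bytes, smss) / k
    # Use whichever is larger: limited slow start or congestion avoidance.
    return max(lss_candidate, ca_cwnd)


SMSS = 1460
exit_cwnd = 40 * SMSS  # window at which traditional slow start was exited
cwnd = exit_cwnd

# Case 1: congestion avoidance would grow more slowly, so LSS wins.
lss_wins = lss_cwnd_on_ack(cwnd, exit_cwnd, SMSS, SMSS, ca_cwnd=cwnd + 10)

# Case 2: congestion avoidance asks for more, so its value is used.
ca_wins = lss_cwnd_on_ack(cwnd, exit_cwnd, SMSS, SMSS, ca_cwnd=cwnd + 2 * SMSS)
```

At the exit point K is 2 with the 0.5 divisor, so each full-sized ACK adds half a segment, compared with a full segment per ACK in traditional slow start.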

J

J

So this is sort of an English-language description of the algorithm in its simplest form. As you can see, it fits on one slide; it's a very simple modification. On each ACK in the slow start phase, we update the congestion window as usual, and we also keep track of what the min RTT is. A round here is defined in terms of RTT, approximately; you can use the sequence numbers to measure this, as the draft formulas show. So for each round,

J

J

Then, in the limited slow start phase, what we do is, for every ACK, as I described earlier, we update the congestion window as the max of what is computed by RFC 3742 and by congestion avoidance for the particular congestion control algorithm that's in use. This could be New Reno, this could be CUBIC, it could be any other congestion control algorithm. And then, upon the first congestion signal, which could be ECN, packet loss, etc.,

J

We don't currently recommend that this be used for subsequent slow starts, because the slow start threshold value has already been determined, and that's usually good enough to not cause the problem we're trying to solve, which is the overshoot of the ideal send rate. Next slide, please.

J

J

J

So we now specifically have text stating what happens when you change these constants, and we also have a section on deployments and performance evaluations, which have been presented to the working group in the last few presentations. I'll quickly go over the tuning constants part. The first one I will talk about is the low congestion window. We picked the value 16; this basically prevents the algorithm from kicking in too early. So imagine you started with an initial congestion window of 10 MSS.

J

This at least prevents the algorithm from kicking in before we have even measured the last round's RTT, as well as a very early exit from slow start. You can use higher values here, but the higher the value you use, the more it may cause overshoot on certain networks that have a very low BDP.

J

F

J

J

So that's sort of the trade-off here in adjusting the value. Then there are the two threshold values. It's basically like a floor and a ceiling for the threshold. Again, a small min here can cause a very early exit from slow start, and a higher max can cause the algorithm to possibly miss any kind of delay

J

spikes if you increase the value too much. So the sensitivity of the algorithm is basically controlled by these parameters, and these are again very tunable depending on network conditions, but these values typically tend to work very well in practice. The last one is the LSS divisor. We specifically state that it should not exceed the value that the RFC says it should not exceed, because otherwise the algorithm becomes too aggressive.
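The tuning knobs discussed above can be put together in a short Python sketch of the slow start exit test. LOW_CWND = 16 is the value mentioned in the talk. The floor and ceiling values and the fraction of the previous round's minimum RTT used below are placeholders, since the talk does not state them; consult the draft for the actual constants. The function names are illustrative.

```python
LOW_CWND_SEGMENTS = 16     # value given in the talk
MIN_RTT_THRESH_MS = 4.0    # floor for the threshold: placeholder (assumption)
MAX_RTT_THRESH_MS = 16.0   # ceiling for the threshold: placeholder (assumption)
RTT_FRACTION = 1 / 8       # scaling of last round's min RTT: placeholder (assumption)


def rtt_threshold_ms(last_round_min_rtt_ms):
    """Clamp a fraction of the last round's min RTT between floor and ceiling."""
    scaled = last_round_min_rtt_ms * RTT_FRACTION
    return min(MAX_RTT_THRESH_MS, max(MIN_RTT_THRESH_MS, scaled))


def should_exit_slow_start(cwnd_segments, last_round_min_rtt_ms, current_round_min_rtt_ms):
    """True when the delay increase between rounds signals an early exit."""
    if cwnd_segments < LOW_CWND_SEGMENTS:
        return False  # too early: the algorithm does not kick in yet
    if last_round_min_rtt_ms is None:
        return False  # no previous round to compare against
    threshold = rtt_threshold_ms(last_round_min_rtt_ms)
    return current_round_min_rtt_ms >= last_round_min_rtt_ms + threshold


small_window = should_exit_slow_start(10, 50.0, 60.0)
wan_spike = should_exit_slow_start(32, 50.0, 57.0)
wan_steady = should_exit_slow_start(32, 50.0, 52.0)
tiny_rtt = should_exit_slow_start(32, 0.1, 1.0)
```

With these placeholder values, a 7 ms rise on a 50 ms path triggers the exit while a 2 ms rise does not, and a window below LOW_CWND never triggers it. Because the floor is expressed in milliseconds, a path whose RTT is a fraction of a millisecond needs a very large relative increase before the test fires.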

J

J

J

J

J

We would also like to do more measurements, more end-to-end measurements on workloads, as well as comparing with BBR startup, which is the other congestion control algorithm that's being worked on in ICCRG, so that would make for a very good comparison. That's something that we would like to do in the coming period.

J

For the community, I would say: please review and provide feedback on the draft. More feedback is welcome, and if you have implementations, please share performance data. We would love to get more data from the community, and once we can get more data, I think we can move forward.

K

So I had a question about the threshold to detect when the round-trip delay is growing. If I understand that right, that threshold is a fixed time in milliseconds, rather than being relative to the round-trip time, and I'm wondering if it makes sense to make it relative, to make it a multiplicative factor. The reason I'm saying that is a data center network with 10 gigabit Ethernet or higher, where you've got very low round-trip times.

J

J

That is something we should experiment with. Currently most of our testing has been very much focused on wide area networks and the Internet, which is where this problem is particularly bad, because if you look at data center networks, the RTT is low enough that, even with the overshoot, the time spent in recovery is not that large, so the latency increase is not that bad. Whether this is an algorithm that helps in those cases with a dynamic threshold is something we should experiment with and see.

J

So that's something we can certainly put on our plate and measure. This algorithm is very effective, particularly for large latency; that's where the impact of the overshoot is pretty bad. But great suggestion. I would say that other delay-increase algorithms, like LEDBAT, have used fixed constants there as well. In general, on using dynamic values, I do agree that it makes the algorithm more universal. So thanks for the feedback.

K

K

H

L

H

So

this

HyStart

draft

has

two

normative

references

to

experimental

RFCs,

and

I

thought

we

reached

something

like

consensus

last

time

that

ABC

could

move

to

Proposed

Standard

to

solve

that

problem

and

I'm

curious

about

RFC

3742

and

what

the

group

thinks

about

that

as

being

mature

enough

to

advance

to

standard,

and

if

the

answer

is

no,

then

maybe

we

need

to

consider

going

back

to

experimental

with

this.

L

J

I

mean,

yes,

we

have

a

dependence

on

using

that

algorithm.

I'm

guessing

that

we

should

be

able

to

subsume

the

portion

that

we

need

here.

So

in

that

sense,

maybe

it

doesn't

have

to

be

normative,

but

as

it's

written

right

now,

it

references

it

as

normative.

L

L

L

L

J

It's

been

enabled

by

default

for

all

connections

for

about

two

years

now,

all

connections

meaning

data

center,

Internet,

everywhere,

so

it

is

in

very,

very

wide

use.

In

terms

of

data,

I

think

in

the

previous

two

TCPM

working

group

meetings

we

presented

the

data.

The

draft

also

has

a

section

where

we

sort

of

summarize

what

has

been

found

so

far,

but

yeah.

It's

very

widely

deployed

at

this

point.

J

C

A

F

I

wanted

to

definitely

support

moving

forward

with

this

work,

but

I

also

wanted

to

second

Stuart's

comment

that

the

fixed

threshold

in

a

number

of

milliseconds

gives

me

some

pause

and

is

something

I'd

probably

at

least

want

to

make

sure

we

re-evaluate

before

we

go

forward

with

it.

I'd

be

very

interested

in

the

comparison

to

BBR's

startup.

F

There

are

some

changes

there

in

BBR

versus

BBRv2

that

I

think

are

also

worth

comparing.

So

I

think

if

you

are

willing

to

do

that

comparison,

a

three-way

comparison

would

be

appropriate

and

I'm

sure

I

or

Neal

or

Yuchung

would

be

happy

to

chat

with

you

about

that.

But

yeah,

thanks

for

doing

this.

It's

nice

to

have

alternatives

in

startup

that

are

not

quite

so

aggressive.

J

F

A

A

M

Okay,

great

yeah.

I

just

wanted

to

also

express

support

for

making

the

RTT

threshold

a

relative

multiple

of

the

min

RTT,

and

also

wanted

to

note

that

that

is

the

behavior

in

the

Linux

code

and

has

been

since

the

original

HyStart

in

Linux,

in

about

2008.

The

multiple

has

changed,

and

I

think

right

now

it's

one

eighth,

or

12.5

percent,

of

the

min

RTT,

and

that

has

worked

well

in

practice

and

in

production.
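
The difference between the two threshold styles discussed here can be sketched as follows. This is only an illustration, not the draft's or Linux's actual code: the function names, the 4 ms fixed value, and the sample RTTs are assumptions chosen for the example, and only the one-eighth multiple comes from the comment above.

```python
# Illustrative comparison of a fixed versus a relative RTT-increase threshold.
# All names and the 4 ms constant are hypothetical, chosen for this sketch.

def delay_increased_fixed(min_rtt_ms, curr_rtt_ms, threshold_ms=4.0):
    """Signal a delay increase when the RTT grew by a fixed number of milliseconds."""
    return curr_rtt_ms >= min_rtt_ms + threshold_ms


def delay_increased_relative(min_rtt_ms, curr_rtt_ms, fraction=1 / 8):
    """Signal a delay increase when the RTT grew by a fraction of the min RTT."""
    return curr_rtt_ms >= min_rtt_ms + fraction * min_rtt_ms


# Data center path: the RTT doubles from 0.1 ms to 0.2 ms.
dc_fixed = delay_increased_fixed(0.1, 0.2)
dc_relative = delay_increased_relative(0.1, 0.2)

# Wide area path: the RTT grows from 100 ms to 105 ms.
wan_fixed = delay_increased_fixed(100.0, 105.0)
wan_relative = delay_increased_relative(100.0, 105.0)
```

Under these assumed numbers, the fixed threshold does not react to a doubling of a very low data center RTT, while the relative one does; on the wide area path the situation is reversed, because 5 ms exceeds the fixed constant but is less than one eighth of 100 ms.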

A

Okay,

I'd

like

to

close

the

queue

here,

so

in

any

case

I

mean

this

document

is

fairly

new

as

a

working

group

item.

So

I

assume

this

will

not

be

the

last

opportunity

to

discuss

the

content.

So

my

proposal

is

to

move

the

follow-up

discussion

to

the

list

and

have

the

next

discussion

at

the

next

meeting.

Maybe

some

of

the

algorithm

details

need

some

work

and

some

other

work

is

needed,

but

I

think

that's

usual

for

a

working

group

document.

A

N

N

So

the

document

I'm

presenting

today

is

a

new

draft.

However,

there's

a

couple

of

recent

related

documents.

The

first

one

was

a

proposal

intended

to

enable

the

sender

with

the

ability

to

trigger

immediate

ACKs

from

the

receiver,

and

the

second

one

is

a

document

focusing

on

a

similar

area

which,

however,

focused

a

bit

more

on

requirements

rather

than

solutions,

and

in

that

second

document

we

included,

among

other

things,

the

idea

of

providing

the

sender

with

the

ability

to

request

some

ACK

frequency

from

a

receiver.

N

So

this

second

document

was

presented

in

the

last

interim

meeting,

which

was

a

replacement

for

IETF

107,

and

after

that

there

was

some

discussion

on

the

mailing

list.

By

the

way,

thanks

to

everyone

for

the

very

valuable

feedback

that

we

received.

The

discussion

converged

to

the

idea

of

defining

a

new

TCP

option

serving

two

purposes.

The

first

is

providing

the

sender

with

the

ability

of

requesting

some

ACK

frequency

from

the

receiver,

and

the

other

purpose

is

allowing

a

sender

to

request

some

immediate

ACK

from

a

receiver.

N

So

this

new

document

defines

such

a

new

TCP

option.

Next,

please.

So

on

the

content

of

the

document

itself.

We

start

with

the

motivation

by

explaining

that

delayed

ACKs

is

a

mechanism

intended

to

reduce

protocol

overhead

and

in

many

cases

this

is

actually

achieved.

However,

delayed

ACKs

may

also

contribute

somehow

to

suboptimal

performance

in

a

number

of

scenarios.

N

Here

we

have

identified

two

main

categories

of

such

scenarios

in

terms

of

the

congestion

window

size,

the

first

one

being

what

we

call

here

large

congestion

window

scenarios,

meaning

a

congestion

window

size

much

greater

than

the

MSS,

and

in

these

scenarios,

saving

up

to

one

of

every

two

ACKs,

which

is

what

may

be

achieved

with

delayed

ACKs,

may

be

insufficient.

For

example,

there

may

be

a

goal

of

mitigating

performance

limitations

due

to

asymmetric

path

capacity,

for

example,

when

the

reverse

path

capacity

is

significantly

limited

compared

to

the

forward

path.

N

So

the

other

main

category,

where

delayed

ACKs

may

also

contribute

to

suboptimal

performance,

is

what

we

call

here

small

congestion

window

scenarios.

This

means

congestion

window

size

in

the

order

of

one

MSS

or

less.

So,

for

example,

in

data

centers,

the

bandwidth

delay

product

may

be

precisely

in

the

order

of

one

MSS

or

less.

So.

N

So

in

this

document

we

define

a

new

option,

which

is

called

the

TCP

ACK

Rate

Request

(TARR)

option,

with

the

following

associated

behavior:

a

sender

may

request

the

receiver

to

modify

the

ACK

rate

of

the

latter

to

one

ACK

every

R

full-sized

data

segments,

where

the

value

of

R

would

be

carried

in

a

TARR

option

field,

and

also

a

sender

is

allowed

to

request

an

immediate

ACK

by

setting

R

to

the

special

value

of

0.

And

then

the

receiver

behavior

would

be

as

follows.

N

A

receiver

complying

with

this

specification

and

receiving

a

segment

with

the

TARR

option:

when

the

R

field

of

the

TARR

option

is

different

from

zero,

according

to

the

current

wording

in

the

document,

the

receiver

MUST

modify

its

ACK

rate

to

one

ACK

every

R

full-sized

data

segments,

and

when

R

is

equal

to

zero,

the

receiver

MUST

send

an

immediate

ACK.

By

the

way,

we

have

a

question

here

for

the

working

group,

which

is

that

perhaps,

instead

of

the

MUST

term

that's

used

here

for

the

receiver,

we

were

wondering

whether

maybe

SHOULD,

in

capital

letters,

could

be

appropriate.
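
The receiver behavior described above can be sketched roughly as follows. This is a simplified illustration under several assumptions that are not stated in the presentation: the class and method names are hypothetical, the default of one ACK per two full-sized segments stands in for ordinary delayed ACKs, a request with R equal to zero is assumed to leave the configured rate unchanged and to reset the segment counter, and the delayed ACK timer is not modeled at all.

```python
class TarrReceiver:
    """Hypothetical receiver-side ACK pacing driven by the R value of a TARR option."""

    def __init__(self, default_rate=2):
        # Assumed default: one ACK for every two full-sized segments.
        self.rate = default_rate
        self.pending = 0  # full-sized segments received since the last ACK

    def on_full_sized_segment(self, tarr_r=None):
        """Return True if an ACK should be sent now.

        tarr_r is the R value carried by the segment, or None if the
        segment carries no TARR option.
        """
        if tarr_r == 0:
            # R == 0: the sender asks for an immediate ACK.
            self.pending = 0
            return True
        if tarr_r is not None:
            # R != 0: switch to one ACK every R full-sized segments.
            self.rate = tarr_r
        self.pending += 1
        if self.pending >= self.rate:
            self.pending = 0
            return True
        return False


rx = TarrReceiver()
# The first segment requests R = 4; the next seven carry no option.
acks = [rx.on_full_sized_segment(4)] + [rx.on_full_sized_segment() for _ in range(7)]
# A later segment requests an immediate ACK.
immediate = rx.on_full_sized_segment(0)
```

In this sketch the receiver acknowledges the fourth and eighth segments, and the segment carrying R equal to zero is acknowledged at once without changing the previously requested rate.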

N

N

So

this

is

the

format

for

the

TARR

option.

As

you

can

see,

it

follows

the

shared

experimental

options

format

defined

in

RFC

6994.

So

the

first

field

is

a

Kind

field,

which

would

take

one

of

the

experimental

code

points,

either

253

or

254.

The

Length

field

would

indicate

that

this

is

a

five-byte

format.

N

Next

is

a

two-byte

Experiment

Identifier,

which

we

are

considering

setting

to

0x00AC

as

a

way

to

stand

for

ACK

somehow,

and

the

final

field

would

be

R,

which

would

carry

the

binary

encoding

of

the

R

value.

By

the

way,

a

comment

here

is

that,

in

the

long

term,

we

would

like

to

pursue

using

a

three-byte

format.

However,

we

also

understand

that,

in

the

initial

stages,

the

right

way

to

go

is

using

the

shared

experimental

options

format

in

RFC

6994.

So,

well.
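
The five-byte layout described above (Kind, Length, a two-byte Experiment Identifier, and R) can be sketched as follows. This is an illustration only: the function and constant names are hypothetical, the identifier value 0x00AC is the one the presenters say they are considering, and R is assumed to occupy the single remaining byte because the stated total length is five.

```python
import struct

# Hypothetical names; the values follow the description in the presentation.
EXPERIMENTAL_KINDS = (253, 254)  # experimental TCP option code points
TARR_EXID = 0x00AC               # Experiment Identifier under consideration
TARR_LENGTH = 5                  # Kind (1) + Length (1) + ExID (2) + R (1)
TARR_FORMAT = "!BBHB"            # network byte order, no padding


def encode_tarr(r, kind=253):
    """Build the five bytes of a TARR option carrying the value r."""
    if kind not in EXPERIMENTAL_KINDS:
        raise ValueError("kind must be an experimental code point")
    if not 0 <= r <= 255:
        raise ValueError("r must fit in one byte")
    return struct.pack(TARR_FORMAT, kind, TARR_LENGTH, TARR_EXID, r)


def decode_tarr(data):
    """Return the R value from a five-byte TARR option, or raise ValueError."""
    if len(data) != TARR_LENGTH:
        raise ValueError("unexpected option size")
    kind, length, exid, r = struct.unpack(TARR_FORMAT, data)
    if kind not in EXPERIMENTAL_KINDS or length != TARR_LENGTH or exid != TARR_EXID:
        raise ValueError("not a TARR option")
    return r


option = encode_tarr(4)
```

The Experiment Identifier is what lets a receiver tell this experiment apart from other options sharing the same experimental Kind value, which is why the decoder checks it before trusting R.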

N

K

If

you

send

one

segment,

then

the

other

end

won't

ACK

it

until

it

gets

a

second

segment,

but

you

don't

have

enough

RAM

to

send

the

second

segment

and

you

end

up

waiting

for

the

delayed

ACK

timer,

and

this

is

often

used

as

an

argument

for

why

constrained

devices

can't

use

TCP,

and

of

course,

if

you

do

that,

you

throw

away

all

the

other

benefits

of

TCP.

N

K

I

apologize,

I'm

doing

the

terrible

thing

which

we

should

never

do

at

IETF

meetings:

commenting

on

a

draft

that

I

have

not

read.

I

have

not

read

the

draft.

I

didn't

know

about

it

until

your

presentation,

so

thank

you

for

the

presentation.

I've

learned

about

something

potentially

useful

for

other

work

that

I'm

doing,

so

thank

you.

L

Thank

you

for

the

presentation.

This

is

Jana

Iyengar.

We

are

working

on

something

quite

similar

for

QUIC.

I

don't

know

if

you

have

seen

that

draft

and

that's

again

a

very

early

draft

at

the

moment,

with

a

lot

of

back

and

forth

going

on

with

folks

who

are

implementing

and

interested

in

it.

I'd

recommend

taking

a

look

at

that

one

as

well.

In

particular,

there's

one

bit

that

we

have

in

there

that

I

don't

know

if

you've

considered

and

I'd

like

to

bring

it

up

for

you

to

consider.

L

There's

a

debate

going

on

about

exactly

what