
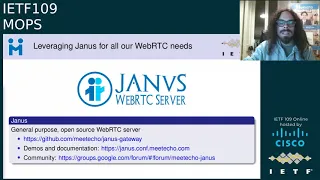

From YouTube: IETF109-MOPS-20201117-0500

Description

MOPS meeting session at IETF109

2020/11/17 0500

https://datatracker.ietf.org/meeting/109/proceedings/

A

A

A

C

A

A

B

B

A

B

B

A

E

Can I start? Oh okay, yeah. Okay, thanks for the introduction. I'll give a quick overview of how we changed the way that we stream IETF meetings from how we did it before, when it was mostly an in-person meeting and remote participation was, of course, less prevalent than it is now. If we think about how we did things before, it was basically harder from a setup perspective but easier in terms of participation and in terms of setup

E

E

This was a few years ago, and it was basically about how to achieve large-scale broadcasting via WebRTC in the first place, in an architecture that we call SOLEIL. The approach was basically taking advantage of a tree-based architecture where you can have a participant injecting something via WebRTC. Everything in the middle doesn't necessarily need to be WebRTC.

E

It can be plain RTP, for instance, as it is in our case, as long as, when you trickle the streams down, at the end you feed servers that are able to turn this feed into a WebRTC broadcast again. That, in a nutshell, is what I'll try to explain today. First of all, in terms of audio: there are challenges for both audio and video, because the IETF is not just a plain broadcast. It is very much conversational.

E

In that case, the broadcaster was remote, the guests were remote and the audience was remote as well. Of course, WebRTC is definitely a good fit for the conversation part, because it's easy to have a chat using a browser. It's what we are doing right now. But in principle broadcasting can be done via WebRTC as well, and there are a few advantages to having everything mixed. First of all, it's easier to broadcast and distribute, it's easier

E

And so what we wanted to do was figure out a way to put these two things together: on one side, broadcasting to a lot of people; at the same time, allowing some of those people to interact with each other. The way that we do this with Janus is using the AudioBridge plugin, which is basically an audio MCU. So if you imagine these three participants are all active and talking to each other, as Mikhail and Leslie were doing up until a few minutes ago, there's actually something in the AudioBridge plugin

E

That is always relaying a mix of this conversation to a separate component. We call this feature RTP forwarding, and we use it for a plethora of different use cases. In this specific use case, we use it to facilitate the broadcasting of the stream. So imagine that you have the AudioBridge, which is only mixing the active participants at any time, and so it weighs very little on the CPU, because you only have a limited number of participants being mixed.
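
The mixing step described above can be illustrated with a minimal sketch. It assumes 16-bit PCM frames of equal duration per active participant; the participant names and sample values are hypothetical, and this is not the AudioBridge plugin's actual code, only the general idea of an audio MCU summing a small set of active speakers.

```python
from typing import Dict, List

INT16_MIN, INT16_MAX = -32768, 32767


def mix_frames(frames: Dict[str, List[int]]) -> List[int]:
    """Sum the 16-bit PCM samples of all active participants, clipping on overflow."""
    if not frames:
        return []
    # Only mix as many samples as the shortest frame provides.
    length = min(len(samples) for samples in frames.values())
    mixed = []
    for i in range(length):
        total = sum(samples[i] for samples in frames.values())
        mixed.append(max(INT16_MIN, min(INT16_MAX, total)))
    return mixed


# Hypothetical frames from three active speakers (three samples each).
active = {
    "alice": [1000, -2000, 30000],
    "bob": [500, 500, 10000],
    "carol": [-100, 0, 0],
}
broadcast_mix = mix_frames(active)
print(broadcast_mix)
```

The cost of the loop grows with the number of active speakers, not with the size of the passive audience, which is the property the talk relies on.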

E

E

This is a much more optimized way of doing things. But again, as I said, we want people to be able to actually participate in the conversation, which means that any time one of the passive attendees must become an active participant instead, for instance to ask a question or to become the presenter themselves, we escalate them to an active role. So any time you press the mic button

E

I mean, just last week we had the ENOG meeting by RIPE, and they actually needed two separate channels for two different languages. Using two different mixes for the two languages, and an interpreter that can listen on one and speak on the other, makes it very easy to do something like this and broadcast it seamlessly, so that the audience can very easily switch from one language to another any time they need to. That would, of course, be much more complex if all the audio streams were separated from each other.

E

For video, the approach is a bit different because we, of course, don't do any video mixing here. As you can see from the UI, we are using an SFU approach, so each video stream is separate from the others. For this we basically took some lessons from the so-called webinar approach, where we typically have a one-to-many scenario with a single presenter injecting media, which is then distributed to a lot of passive viewers. And of course, again, the IETF is mostly conversational.

E

We can have multiple active streams at the same time, and it's not only different people speaking. In this case, I'm sending two different streams myself, because I'm sharing my webcam and the slides at the same time, and so of course you need to take this into account. But if you look at it from an architectural perspective, you can see each video stream as its own separate broadcast. So each video stream is a one-to-many broadcast, if you want, that is then tied together at the application level, by the application itself. So it's the application

E

We are using a different plugin: not the AudioBridge plugin, which only does audio mixing, but the VideoRoom plugin, which is an SFU instead, so it basically just relays packets. This means that if we have a participant publishing media in a video room, then other participants can subscribe to this stream, if you want, which is basically how all these SFUs work. The interesting part is, of course, that this plugin, just like the AudioBridge plugin, does support the so-called RTP forwarding.

E

So we are able to take the stream from this publisher and relay it as a plain RTP stream to a separate component, to do whatever we want with it. Some people are using it for identity verification, others are using it for post-processing or live recording, and in this specific case we are using it, again, just to facilitate the broadcasting part, because the VideoRoom plugin itself is perfectly capable of serving a number of passive attendees itself.
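
Since the forwarded media is plain RTP, any component that understands the RTP fixed header can consume it. The following is a minimal sketch of parsing that 12-byte header as laid out in RFC 3550; the sample packet and its field values are made up for illustration, and nothing here is specific to Janus.

```python
import struct
from typing import NamedTuple


class RtpHeader(NamedTuple):
    version: int
    marker: bool
    payload_type: int
    sequence_number: int
    timestamp: int
    ssrc: int


def parse_rtp_header(packet: bytes) -> RtpHeader:
    """Parse the 12-byte fixed RTP header (RFC 3550, section 5.1)."""
    if len(packet) < 12:
        raise ValueError("RTP packet shorter than the 12-byte fixed header")
    first, second, seq, timestamp, ssrc = struct.unpack("!BBHII", packet[:12])
    return RtpHeader(
        version=first >> 6,             # top two bits of the first byte
        marker=bool(second & 0x80),     # top bit of the second byte
        payload_type=second & 0x7F,     # remaining seven bits
        sequence_number=seq,
        timestamp=timestamp,
        ssrc=ssrc,
    )


# Hypothetical packet: version 2, marker set, dynamic payload type 96.
sample = struct.pack("!BBHII", 0x80, 0x80 | 96, 4242, 90000, 0x1234ABCD) + b"payload"
header = parse_rtp_header(sample)
print(header)
```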

E

But the problem is that we can't anticipate how many attendees we will have in any room, and we want to scale as much as possible. For instance, you have the plenary, where you have hundreds of participants instead, and of course the video room on a single Janus instance may not be able to sustain that load by itself.

E

So it's much easier to instead use the VideoRoom plugin just for ingest, and then pass the RTP stream as it is to a Streaming plugin for distribution. Then it's up to the Streaming plugin, which is more optimized for broadcasting functionality, to distribute this to a wider audience. And again, we can take advantage of using multiple Streaming plugin instances for distribution, which brings us back to the previous slide that I had on tree-based distribution.
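
As a rough back-of-the-envelope sketch of why tree-based distribution scales, the number of edge nodes grows with the fan-out at each level. The fan-out, depth and per-node viewer count below are hypothetical figures chosen for the arithmetic, not measurements from the deployment described in the talk.

```python
def edge_nodes(fanout: int, depth: int) -> int:
    """Number of leaf (edge) nodes when every node forwards to `fanout` children."""
    return fanout ** depth


def audience_capacity(fanout: int, depth: int, viewers_per_edge: int) -> int:
    """Viewers served if only the edge nodes talk WebRTC to the audience."""
    return edge_nodes(fanout, depth) * viewers_per_edge


# Hypothetical tree: one ingest node, two levels of relays, fan-out of 4.
print(edge_nodes(4, 2))              # 16 edge nodes
print(audience_capacity(4, 2, 500))  # 8000 viewers
```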

E

So the wider the tree is, the more attendees you can serve, of course, because you can use multiple nodes for the distribution part. And since we are just working with plain RTP, it's also very easy to distribute without actually using other WebRTC components in the middle, as long as you have WebRTC functionality on the edge, which is what we do. And so, from a functionality perspective

E

As I was saying, video can be treated as multiple one-to-many broadcasts, which is exemplified in this very ugly picture that you see over here. In this case, we have two different participants injecting video, and they are also talking to different VideoRoom instances, which isn't happening in this setup of the IETF but will probably happen soon.

E

In this case, both participants inject their own stream, which is then distributed to all four of the distribution nodes that you see, and the same happens for both of them. This means that, from an audience perspective, it really doesn't matter that the different participants are being fed to different instances. They are both seen as two separate streams that the viewers are receiving from the same server they are connected to.

E

This is how it looks in a nutshell. You have active participants connected to the AudioBridge for speaking and to the VideoRoom plugin for sending their own video streams, and all of those streams are then forwarded to a plethora of Streaming plugins for the distribution part. This means that no matter where you are ingesting streams, the moment you hit a Streaming plugin instance you are able to receive those streams.

E

We just need to update the distribution tree behind the curtains accordingly. All of this effort eventually led to what you saw at IETF 108 in Madrid. The interface maybe didn't change much from the one that you were used to when the meetings were actually in person and you were attending remotely, but there were actually a lot of changes, especially in terms of how the streams were managed.

E

C

E

E

So we had this multicast support available for a long time, but we just couldn't take advantage of it, which we now can. This means that, in these instances that you see over here, you still have one AudioBridge and you still have one VideoRoom, but they are just sending a single stream via RTP forwarding to a multicast group instead. Any streaming instance, any distribution instance that you see over here, just needs to subscribe to the right multicast group in order to receive the stream.
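
Subscribing to a multicast group, as the distribution instances are described as doing, can be sketched with standard sockets. This is a minimal IPv4 illustration using Python's `socket` module; the group address and port in the usage comment are placeholders, and the sketch says nothing about how the Streaming plugin itself is configured.

```python
import socket
import struct


def build_membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Pack the ip_mreq structure (group address + local interface) for IP_ADD_MEMBERSHIP."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def open_multicast_receiver(group: str, port: int) -> socket.socket:
    """Open a UDP socket that joins `group` and receives datagrams sent to `port`."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(
        socket.IPPROTO_IP,
        socket.IP_ADD_MEMBERSHIP,
        build_membership_request(group),
    )
    return sock


# Usage (placeholder group and port; blocks until a packet arrives):
#   receiver = open_multicast_receiver("239.1.2.3", 5004)
#   packet, sender = receiver.recvfrom(2048)
```

The sender only emits one copy of each packet; every receiver that has joined the group gets it, which is why a single forwarder can feed any number of distribution instances.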

C

E

E

We are all capturing our own webcams, our own screens and so on. In that case, instead, we had several pre-recorded presentations that we wanted to play through the same Meetecho interface as if they were live, although they were pre-recorded. This was actually pretty straightforward to do in terms of how the architecture worked in the first place, because the Streaming plugin that I was mentioning, which basically allows you to do

E

The broadcasting part actually works by taking plain RTP inputs as a source, which is why it works so nicely with the AudioBridge and VideoRoom plugins as sources themselves. So all we needed to do was basically take those pre-recorded files and stream them via RTP, using a tool like FFmpeg or GStreamer or any other open source tool that is available, to the Streaming plugin instances that were associated with the room. That would basically become a new broadcast that people in the room could subscribe to.
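
One way to picture the "stream a pre-recorded file via RTP" step is a small helper that assembles an FFmpeg command line. This is only a sketch under assumptions: the file name, host and port are placeholders, the file is assumed to already contain video in a codec the receiver accepts (so it is copied rather than re-encoded), and the exact options used in the deployment described above are not given in the talk.

```python
import shlex
from typing import List


def build_rtp_command(path: str, host: str, video_port: int) -> List[str]:
    """Build an ffmpeg invocation that sends the video track of `path` as plain RTP."""
    return [
        "ffmpeg",
        "-re",            # read the input at its native frame rate (simulate live)
        "-i", path,
        "-an",            # drop audio: the RTP muxer carries one stream per output
        "-c:v", "copy",   # assumption: no re-encoding needed
        "-f", "rtp",
        f"rtp://{host}:{video_port}",
    ]


# Placeholder values for illustration only.
command = build_rtp_command("presentation.mp4", "192.0.2.10", 5004)
print(shlex.join(command))
# To actually run it: subprocess.run(command, check=True)
```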

E

So from the AudioBridge, and in this case we were bypassing that component, which made for a slightly awkward experience, and so we actually changed that in 109. Right now we are injecting pre-recorded presentations as regular WebRTC participants, which means that they go directly into both the AudioBridge and the VideoRoom, and they are distributed exactly as a regular participant would be, which makes it much more transparent to the infrastructure in place.

E

Some sessions always need to be broadcast to YouTube as well, which means that, in that case, some mixing needs to take place because, of course, any CDN provider like YouTube needs to have a single audio and a single video stream to be able to broadcast them to their audience. The way this works is that we take advantage of the same RTP forwarding functionality that I was mentioning before. So in this case, we have an MCU component.

E

E

It will still work pretty much the same way for 109 as well, and this basically covers my presentation. I hope I didn't go too fast, especially because I had a lot of slides and I wanted to make sure that I had enough time to present them all. So I'm happy to answer any questions, if you want. And by the way, I already see Callan asking something. He's asked how many participants are reasonable to combine in this way. In principle, let's say I don't know the maximum layout.

E

E

E

But of course, the more people you have active in the same AudioBridge instance, the harder it is going to weigh on the CPU itself, which is why we tried to optimize things as much as possible and broadcast as much as possible, rather than everybody turning on their mics. That, of course, also makes little sense, because 40 people talking at the same time is going to be chaos anyway. So

E

E

Yeah, we actually had a few meetings that shared very much the same architecture in the past. So, for instance, we had the meeting for AFRINIC here in session, we had a couple of RIPE meetings this past couple of weeks, and you could say that 95% of the code was exactly the same; we just had to tailor some specific functionality to some use cases.

E

So, for instance, for this ENOG meeting we had to support these bilingual streams, which was just a minor change, because the architecture already allowed for it. Most of the time it's just visual changes, so it's a matter of adapting the user interface for some specific things or some functionality that is very much specific to some use cases. For instance, the virtual hum functionality that we had in 108 was very much an IETF requirement and was never needed for any other use case.

E

The functionality that we have here for raising your hand may be more generic, and so may be reusable in other contexts, but it is again something that we started working on specifically for the IETF. As far as the audio and video distribution is concerned, it's very much the same in other contexts, and so that part is basically reusable in several different contexts.

C

E

Oh, Jake is asking if there is a write-up on the multicast setup in the cloud, and whether this was in Azure. Yeah. Basically, the setup that we had in 108 was, I think, using Google Cloud servers, and we do know that that setup didn't have multicast support, at least at the time, while Azure instead does support it. Again, Alessandro can provide more insight about specifically why Azure supports it now and how we could take advantage of it. But this was definitely the first time that we could

F

E

Yeah, as Eric is pointing out, the streams work over IPv6, and this is thanks to Janus in the first place. As I anticipated, all that you are seeing is actually based on a completely open source component. The component that is responsible for the audio and video streaming is completely open source and you're completely free to use it on your own terms, and that component does support IPv6 out of the box.

E

B

B

C

B

G

G

G

G

G

G

G

The edge device providing the computation and storage is itself limited in such resources compared to the cloud, and can be overwhelmed by a sudden surge in demand. Additionally, the throughput to the user over the wireless link will suffer. The user will perceive a fall in QoE of the video being rendered on her AR device.

G

G

A

A

G

G

G

Next is dynamically changing ABR parameters. The ABR algorithm must be able to dynamically change parameters, given the heavy-tailed nature of network throughput. In such distributions, the law of large numbers works too slowly, the mean of the sample does not equal the mean of the distribution and, as a result, standard deviation and variance are unsuitable as metrics for such operational parameters.
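
A small numeric sketch can show why the sample mean is a shaky summary when throughput has rare, very large values. The sample values are invented for illustration, and the median and harmonic mean are shown only as examples of more robust summaries; the talk does not say which estimator an ABR algorithm should use.

```python
import statistics

# Hypothetical throughput samples in Mbit/s: mostly ~2, with one rare burst.
samples_mbps = [2.0] * 9 + [200.0]

mean = statistics.mean(samples_mbps)
median = statistics.median(samples_mbps)
harmonic = statistics.harmonic_mean(samples_mbps)

print(f"mean={mean:.2f} median={median:.2f} harmonic={harmonic:.2f}")
# A single outlier drags the mean to roughly ten times the typical value,
# while the median and the harmonic mean stay close to what the link
# delivers most of the time.
```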

G

Fourthly, handling conflicting QoE requirements. QoE goals often require high bit rates and a low frequency of buffer refills. However, in practice this can lead to a conflict between those goals. For example, increasing the bit rate might result in the need to fill up the buffer more frequently, as the buffer capacity might be limited on the AR device.
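
The conflict can be made concrete with simple arithmetic: a fixed-size buffer holds fewer seconds of video as the bit rate rises, so it has to be refilled more often. The buffer size and bit rates below are hypothetical.

```python
def seconds_of_video_in_buffer(buffer_bytes: int, bitrate_bps: int) -> float:
    """How long a full buffer lasts at a given encoded bit rate."""
    return buffer_bytes * 8 / bitrate_bps


def refills_per_minute(buffer_bytes: int, bitrate_bps: int) -> float:
    """How many times per minute a buffer of that size must be refilled."""
    return 60 / seconds_of_video_in_buffer(buffer_bytes, bitrate_bps)


BUFFER_BYTES = 4_000_000  # hypothetical 4 MB buffer on the AR device

for bitrate in (4_000_000, 16_000_000):  # 4 Mbit/s and 16 Mbit/s
    print(
        bitrate,
        seconds_of_video_in_buffer(BUFFER_BYTES, bitrate),
        refills_per_minute(BUFFER_BYTES, bitrate),
    )
```

With these numbers, quadrupling the bit rate cuts the buffered duration from 8 seconds to 2 seconds and raises the refill rate from 7.5 to 30 per minute.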

G

The ABR algorithm must be able to handle the situation. Finally, handling the side effects of deciding on a specific bit rate. For example, selecting a bit rate of a particular value might result in the ABR algorithm not changing to a different rate, so as to ensure a non-fluctuating bit rate and the resultant smoothness of video quality. The ABR algorithm must be able to handle the situation. Next slide, please.

G

So, as a conclusion, I would like to point out these two references. The first reference is an outcome of a project that streamed over 38 years of data with over 63,000 distinct users and compared the performance of several ABR algorithms, listed in the slide. The second reference documents the problems with current ABR algorithms.

A

A

A

A

A

D

So I had a question about the requirements, I mean this few-millisecond requirement. I see it quoted regularly, but I do work a lot with these devices and I don't understand where that comes from. Could you walk me through that a little bit more? I mean, I understand why, when you rotate your head, you need to update your display at probably over 150 hertz, but I don't understand why that means the video flow needs to be that rapid.

G

Yeah, no, that's a very good question, and I actually agree with your observation that the devices themselves are quite fast these days. The problem is actually the fact that you have to do a lot of processing in these devices to overlay the scene that the viewer is viewing with something like, as we are envisaging, a historical figure, for example, and to synchronize that with the view of the observer is quite challenging, and at the same

G

D

G

D

G

D

D

G

G

G

I

C

Hi Ryan, thanks for this, it was an interesting document. I'm curious: are the sort of requirements described based on a particular set of systems? Like, is it defined whether you're using a point cloud for the overlay of the AR objects, or whether you're trying to render a video from a point of view, as part of this? Or are these like two possible implementations of the same

G

G

We will have more specific requirements, but this document was essentially looking at the big picture, where we didn't really go into very deep specifics of what particular algorithm or what particular technique we are using. But definitely point cloud, viewport adaptation, all those things are, at least at the moment, part of the requirement. But as you go down that particular branch of technique, you will need some specific requirements for those as well. Yeah.

C

G

So yeah, we view our draft as being a root of a taxonomical tree of media operations, and one of the branches out of that root is ABR algorithms, whose sub-branches are use cases. So we'd definitely like to have feedback from the working group, and I think this question-and-answer session is a very good opportunity to look at various directions that the draft could go in, and definitely look for an adoption at some point.

J

J

J

G

K

G

Excellent question. With applications such as AR and VR, the problem is that the various parameters that such algorithms take, most importantly network throughput and the size of the buffer, change very rapidly in such use cases as the user is moving around. This is often referred to as volatility of the conditions: logical associations between the software components break up and then form again.

G

So the problem is specific to the AR and VR use case in that there is a very rapid change in these parameters, and so the input to the ABR algorithm is changing very rapidly, and the ABR algorithm needs to adapt accordingly, as opposed to the traditional scenarios where the parameters are changing, but not as fast as in an

H

C

A

G

B

So I think that, with 40 people here, we're probably not, well, we aren't quorum. It sounds like you've had some good feedback, and maybe the next step is to do a turn of the draft, a new version of the draft with those updates, and then we can take it to the mailing list and see if people want to adopt this as something to move forward within the working group, or have other thoughts.

B

B

G

Again, a fantastic question. From our perspective, we would like to do both. The question is: is the MOPS working group the appropriate working group to do the protocol, or is it just that we identify the operational issues that lead to certain questions that can then be taken to other working groups?

G

G

B

Thank you. I think that it will work well for this collection of people to help work through the operational aspects and thereby also bring clarity to the protocol questions to take to other working groups. So the protocol work doesn't get done here, but we can probably be helpful in terms of making sure that the questions get asked in a useful way in other groups.

A

L

L

That is, I'll explain what it is. But next slide. So the goal of WebTransport is to fill a gap: WebSockets exclusively provide reliable, ordered delivery over TCP, and there is an unreliable or unordered way to deliver data called RTCDataChannel, but it's designed around a peer-to-peer use case and it has a lot of rough edges. Next slide.

L

So as a solution to that, we propose a new thing called WebTransport, which is essentially trying to expose the semantics of a QUIC connection to the web API. It does not let you create direct QUIC connections, but it gives you similar semantics, and it is different from WebSockets in the sense that WebSocket is message-oriented and WebTransport is stream-oriented. Next slide.

L

So the target applications are anyone who wants something like WebSockets but for UDP, or who wants to have multiple streams of independent data and to send them without head-of-line blocking. There are a lot of various use cases we have in mind.
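
Head-of-line blocking can be illustrated with a toy model. It is not the WebTransport API: it only compares when chunks become readable if they all share one ordered stream versus each travelling on its own independent stream. The arrival times are invented, with the second chunk delayed as if it had to be retransmitted.

```python
from typing import List


def ordered_delivery(arrival_ms: List[float]) -> List[float]:
    """Single ordered stream: chunk i is readable only once chunks 0..i have all arrived."""
    delivered = []
    latest = 0.0
    for arrival in arrival_ms:
        latest = max(latest, arrival)
        delivered.append(latest)
    return delivered


def independent_delivery(arrival_ms: List[float]) -> List[float]:
    """One stream per chunk: each chunk is readable as soon as it arrives."""
    return list(arrival_ms)


# Hypothetical arrival times in milliseconds; chunk 1 was lost and resent.
arrivals = [10.0, 250.0, 30.0, 40.0]
print(ordered_delivery(arrivals))      # later chunks wait behind chunk 1
print(independent_delivery(arrivals))  # only chunk 1 is late
```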

L

L

So WebTransport, as I said, provides QUIC semantics. It provides streams and datagrams. Streams are QUIC streams. They are essentially just like TCP connections, except it is very cheap to create them and it's very cheap to cancel them because of the multiplexing, and they all share a congestion control context. By cancel, I mean that when you reset a QUIC stream, it is a form of unreliability, because none of the underlying data is retransmitted, even if it gets lost.

L

L

So for WebTransport, we have a lot of design requirements. What we require of our protocol is this: we require it to use TLS. We require it to use congestion control, which is important because regular UDP does not have any form of congestion control, but since we're in the browser, we have to ensure that the resulting connection will not overload the network. We require the server to be able to know the origin of the web page which originated the request, in order to enforce the ors.

L

L

The server has to continuously indicate to the browser that it still wants to talk to the client, and that's important because, if you do not have that, it is very easy to use your API for a DDoS attack. And every WebTransport endpoint has a URL, and that allows it to integrate with a lot of web features like Content Security Policy, etc. Next slide. So why would you use WebTransport for media? Well, compared to WebRTC, there are multiple advantages.

L

L

That means whenever you want to push a media chunk, you do not need to send a request and then get that media chunk. You can just push it and simplify things. This also means that you can use the same protocol in both directions. So, for instance, something like DASH or HLS can be used to stream video from an HTTP server, but if you try to use it for contributing video, you have to configure the server to which you send video to pull from your

L

B

B

L

A

A

L

L

L

L

So, regarding specifically standardizing media over WebTransport, there is no actual current effort to standardize this, and the reason for that is that WebTransport is just being developed. So I don't think, while people are building things on top of it, that any of the things they are building on top of it are at a stage where they're ready for this.

L

I am aware of efforts to do both video live streaming and real-time applications. Yesterday Fox from Twitch presented on their efforts to do live streaming over WebTransport at the WebTransport working group meeting, and I am aware of multiple attempts, I don't remember specifically, but there are definitely multiple attempts to build game streaming systems on top of this. And so it is unclear what would be the way forward to standardize something.

L

L

F

Hey, so I'm the guy at Twitch that gave the presentation yesterday. We're mostly exploring it as an HLS or contribution alternative, just as a way to reduce head-of-line blocking, and it might be something that Twitch would be interested in trying to standardize as well, but we're still at the prototype stage. We haven't released it or anything yet. But yeah, I'd love to talk more about WebTransport as well, if anybody has any questions. I've been involved in the staff alpha and the working group pretty heavily now.

N

L

L

Some of them might eventually get released as open source, but I do not believe there is a specific example. But even if you read the WebTransport explainer, it has some examples of just how you could take a chunk of video and connect it to a Media Source Extension. So to build something really simple is not particularly hard.

A

B

B

This is like, well, that's interesting, which is not really a good reaction, but there you are. I don't think we're going to conclude a discussion here, but it seemed like this was an appropriate time to at least launch a discussion of whether or not we have the right milestones for this working group and, for the items that we haven't particularly embarked on, whether we have volunteers or other thoughts. And yes, in case you're scrambling to find our charter and what the darned milestones are anyway: next slide, please. Thank you.

B

Naturally, some of that is because it's 2020 and what was the question, but some of it is also just because we haven't pursued it. So there are two key documents. There was a theory that we were gonna talk about the relationship between the work that the SMPTE folk are doing and what the IETF does, and also the Streaming Video Alliance.

B

We have had some cross chatter between the SVA work and this working group, but nothing so formal as a document. So I guess my questions are: do we want to? And then the final bullet point, I have no idea what that is. It's a bogon. That is as it is on our charter, but I don't know whether the edge network considerations document is the same as the operational considerations document. Anyway, it's a bogon. So with that, by way of rousing intro, I throw open the floor for comment.

C

C

A

O

But

on

the

other

end,

there

will

be

an

evaluation

indeed

in

about

a

year

from

now.

Even

documents

was

not

the

main

goal

of

MOPS.

It

was

the

documents

here

are

more

to

document

what

we

have

been

doing

and

to

basically

have

an

archive

and

not

to

lose

the

time

you

spend

specifically

as

an

auto

check

on

this

document.

So

there

is

no

link

really

between

achieving

document

publication

and

the

survival

of

this

working

group.

B

O

J

L

O

So

if

you

don't

mind,

I

confirm

what

you

just

said.

Lastly,

having

the

right

milestone

now

having

fuzzy

milestone

like

there

is

on

this

charter,

if

you

don't

intend

to

work

on

SMPTE

and

SVA

simply

remove

them

right,

it's

nothing

as

bad

as

having

a

wrong

milestone.

We

can

delay,

we

can

change

them,

but

even

milestone

where

nothing

is

moving

there.

Just

remove

that.

That's

easy

and

you

have

the

power

to

do

it.

This

chairs,

as

you

know.

A

No,

I

was

just

going

to

say

I

mean

it

just

the

the

we.

We

put

these

milestones

in

place

for

a

reason

right,

so

I'm

I'm

just

sort

of

wondering

if

the,

if

the

reasons

have

changed,

I

don't

I

don't

think

that's

the

case

and

if

it's

just

a

matter

of

of

you

know

getting

you

know

the

energy

of

activation

to

get

them

started

right.

You

know

having

the

right

having

the

right

people

in

the

working

group

to

to

help

advance

the

help

advance

them.

B

Yeah,

so

I

think

I

think

that

that's

part

of

it-

and

I

think

part

of

it

is

in

the

case

of

SMPTE.

There

were

folks

that

were

maybe

more

involved

a

year

ago

or

going

to

be

involved

a

year

ago

and

then

and

then

2020

happened.

So

that's

one

that

we

might

want

to

sort

of

put

in

the

parking

lot

and

and

should

the

right

people

become

involved

again,

we

could

pursue

it.

Streaming

Video

Alliance,

we've

had

people

present

work

from

there.

B

You

know

both

Sanjay

Mishra

talking

about

the

work

of

the

the

Open

Caching

stuff,

that's

being

done

there,

and

also

last

at

our

last

virtual

meeting

virtual

108.

We

had

the

presentation

about

the

the

the

measurements,

the

metrics

for

video

quality

of

experience

presented

here.

So

I

think

it's

a

question

of

whether

or

not

there

is

interest

and

volunteers

to

actually

do

some

work

on

on

documenting

what

where

there

is

clearly

overlapping

work

and

Glenn.

Why

I

see

a

technical.

D

M

I

you

know

why

this

is

because

2020,

so

I

do

think

that

both

of

them

are

reasonable

things

to

to

continue

within

the

milestones,

maybe

with

some

different

dates.

Obviously,

since

I

think

we

missed

July

2020,

but

both

of

these

topics

to

me

seem

still

relevant

right,

SMPTE's

still

got

their

2110

specification,

which

is

you

know,

using

IP

networks

for

their

work.

SVA,

as

Leslie

said,

has

the

CDN

work

going

on

over

there

as

well

as

some

other

stuff

that

is

ongoing.

M

So

I

I

think

we

should

keep

both

of

these,

but

maybe

be

more

conscious

and

putting

some

energy

into

working

out

both

of

them

and

if

one

of

them

turns

out,

you

know

that

one

or

the

other

isn't

really

getting

a

lot

of

energy

back

from

the

other

side

of

that

relationship

that

then

we

can

talk

again

about

killing

it

off.

I

think

I

I

think

it's

premature

to

come

off

right

now.

B

M

I

think

Sanjay

and

I

should

have

a

conversation-

I'm

not

volunteering

him,

I'm

not

volunteering

me,

but

I

think

the

two

of

us

together

can

have

that

conversation

about

who

either

both

of

us

or

somebody

else

might

be

the

right

person,

because

both

of

us

are

clearly

active

in

the

IETF

and

we're

both

active

here.

So,

although

I

don't

see

Sanjay

on

the

call

tonight,

he

may

be

asleep.

C

J

B

J

B

J

B

K

K

Go

ahead:

hey

this

is

trey

just

kind

of

reply

to

Spencer.

I

think

Spencer

also

knows,

I

think,

in

3GPP,

there

are

multiple

works,

work

on

edge

computing

aspect,

right

mostly

SA2

and

SA6,

but

I

think

in

in

this

work,

pretty

much

close

to

SA6

work,

application

level,

edge,

computing

considerations

and

I

totally

agree

with

Spencer,

the

current

documentation

missing

lots

of

existing

edge.

K

Networking

architectures

right

talked

about

the

media

streaming

and

compute

computation

and

edge

server

location

and

resources,

and

those

still

need

to

be

considered

a

document

somewhere

either

in

the

same

documentation

or

in

separate

documentations.

Talk

about

that,

and

I

mean

I

think

the

current

draft

is

too

broad

and

to

to

you

know

to

comprehend

it.

J

J

Both

are

both

our

person

to

the

3GPP

and

3GPP's

person

to

us

to

talk

about

this

a

little

bit.

Maybe,

but

my

impression

is

that

3GPP

does

a

lot

more

with

how

to

with,

with

with

mechanisms

to

do

things,

than,

maybe

how

you

know

operational

considerations

for

them,

and

things

like

that,

so,

if

they're,

using,

if

they're

using

IETF

protocols,

especially

for

for

any

of

this,

that

it

might

be,

that

might

be

a

really

valuable

thing

for

the

operations

area

to

do

whether

it's

in

MOPS

or

someplace

else.

B

B

J

B

Yes,

and-

and

I

agree

with

Chris

Lemmons'

comment-

that

given

the

amount

of

cross-pollination

between

the

SVA

and

IETF,

the

esp82

is

particularly

useful.

It's

we

just

need

to

provoke

it

at

this

point

I

think

yeah.

So

I

think

we've

we

are

exhausted.

We

have

exhausted,

we

have

exhausted

our

list

of

things,

so,

yes,

any

other

other

business.