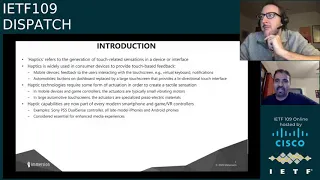

From YouTube: IETF109-DISPATCH-20201116-0500

Description

DISPATCH meeting session at IETF109

2020/11/16 0500

https://datatracker.ietf.org/meeting/109/proceedings/

A

A

A

My name is Patrick McManus. I've got my co-chairs here: Ben Campbell and an incoming co-chair, Kirsty Paine. We'll talk about that in a moment as we go. Here's our agenda for today. We can have just a look over it, but from the top, we of course need a note taker for this session. Braum, I believe, offered to do that on the mailing list.

A

B

A

At Jabber now. All right, with those preliminaries out of the way, you can see our agenda in front of us. We're going to have a short discussion from our ADs. We have two dispatch items to talk about. As a reminder, the purpose of DISPATCH is really to be a friendly and welcoming place for new work coming to the IETF, and to help it find the permanent home where the work will really be developed.

A

This working group itself very rarely adopts a draft. The more likely and desirable outcomes are to find an existing working group for the draft, or to discuss whether perhaps a mini working group would be in order, or perhaps a BoF would be in order, and to make a recommendation amongst those things, or even perhaps a recommendation not to proceed at all with the work, to the ADs, who, of course, get to make the actual decisions.

A

This is a joint meeting with the ART area, which means we'll also highlight some other stuff going on in the ART area of the IETF this week and have an FYI discussion of work that may be too early for being dispatched, but is of interest and could take feedback from this audience as well. Alexey will be presenting on media types, or rather content types, to distinguish between forwarded and encapsulated encrypted emails.

A

I believe. Okay, a reminder of our mailing lists: art@ietf.org and dispatch@ietf.org. We don't have our Note Well in these slides. I'm sorry, that's my oversight, but I will refer everyone, especially given that this is the first meeting of the week, to your favorite search engine: look up "IETF Note Well". Those are the important terms under which your contributions to the IETF are governed.

A

A contribution is almost anything you do that interacts with other folks in a semi-public IETF context, so that could be a mailing list, that could be this meeting. Those rules cover things related to IPR as well as your code of conduct during these meetings. So if you're not familiar with that, please do take a look. All right, that's the usual getting-started stuff out of the way, and with that we can move over to our first speaker, which I think is going to be our ADs.

C

C

Most of you know that fairly recently our beloved colleague and good friend, Jim Schaad, died, and I'd like to just take a moment of silence to recognize Jim and his contributions: the working groups he's chaired, the documents he's edited, the smiles he's shared, and the wine he's made. So, a bit of silence for Jim.

C

C

Thanks, and I won't do the full minute for that, but we all remember him fondly. Sorry. The next thing is a bit of happier news. We have a new chair, as many of you also know. We've been interested in rotating chairs in this particular working group to give different people a shot at having something to do with bringing new work into the ART area and being more exposed to things in the ART area. So Ben is rotating out, and Kirsty Paine from the UK

C

National Cyber Security Centre is rotating in, and she will be our new chair starting at this meeting. So as we speak, right now, I am clicking the button to update the Datatracker for chair state, and at the end of the week I will remove Ben from the chair list. And there you have some video from Kirsty. Kirsty, say hi. Yes.

D

A

Awesome, Kirsty, that's great! I don't want to overlook thanking Ben either, who's really done the yeoman's work here the past few months, which I've really appreciated, and you've left us in a great place. So thank you, Ben, for your efforts. We can do sort of the Meetecho golf clap. I'm sure everyone who's muted is showing appropriate appreciation, but sincerely, Ben.

A

A

E

A

E

A

E

Away. Thank you. Great, thanks, Patrick, and hi everyone. Good morning, good evening, good afternoon, if you are in a time zone where it is afternoon. My name is Yeshwant Muthusamy. I work for Immersion, and I'm going to be presenting our I-D on a proposal for haptics as a top-level media type. Next slide, please, Patrick.

E

E

It's used widely in a number of consumer devices, for example when you're using your virtual keyboard, or to get any kind of vibrating notifications. And of late, in many cars, the dashboard buttons are usually replaced by a large touchscreen that provides a bidirectional touch interface. The thing about haptics is that it requires some kind of actuation in order to create that tactile sensation. In a mobile device, that actuation is provided by small vibrating motors or actuators, or, in a larger automotive touchscreen,

E

these actuators are piezoelectric materials. And haptics these days seem to be everywhere. The most recent example is the Sony PS5 DualSense controller, which has just been released, and it's getting a lot of good press because of its haptics. Pretty much all of the smartphones on the market have haptics in them, and haptics is considered pretty essential for enhanced media experiences.

E

Next slide, please. Okay, this is just an indication of the differences between version 00, which has been on the DISPATCH mailing list since the middle of October, and version 01, which I uploaded a few hours ago. I apologize for this late-breaking update, but until late yesterday evening I didn't know I was going to be presenting here.

E

So, thanks to an email snafu at our end, I wasn't aware of this presentation, so I kind of had to scramble and get the new version out. The new version tries to address all of the comments we have received on version 00 on the DISPATCH mailing list. Specifically, I've added prose to dispel the inadvertent misconception that some people seemed to have gotten from version 00, that the following haptic subtypes were already in use.

E

That is not the case. The haptic data formats, like AHAP, OGG, IVS, and HAPT, are indeed widely in use, and we expect them to live under the haptics top-level type once it's approved. So I made that point clearer. And based on feedback from more than one person, I've added sections on the subtype registrations for haptics/ivs and haptics/hapt, just to illustrate how the subtype registrations would be handled once we have haptics approved at the top level.

E

I've added a number of new references that show the progression of the standardization of haptics in MPEG and the availability of documents for the various haptic data formats that we envision living under the haptics top-level type. So those are the basic differences. If people have read version 00, I just want to point out that my presentation is going to be based on version 01, and this slide gives you an indication of what is different, which you may not have gone through. Next slide, please.

E

Okay, in the next series of eight slides, I basically go through various justifications for why we believe haptics needs to be treated as a top-level type, and that's what you're going to be seeing. The first slide here is basically more of an introduction, so to speak. Haptic signals provide an additional layer of entertainment and overall sensory immersion, and the user experience and enjoyment of media content can be significantly enhanced if you add haptics to the audio/video content. In ISO...

E

E

And when that happens, it will become part of ISO/IEC 14496-12, sixth edition. And this is a question we get asked often: what is going to be the designation for haptics in MP4 files, given that video/mp4 is already taken and audio/mp4 is already taken? We do realize that, and so, if an MP4 file has got video, audio, and haptics, then we are not going to mess with the designation. It's still going to be video/mp4.

E

The same goes if a file has audio and haptics and happens to be a .mp4 file: we are not going to change the designation. But we do envision MP4 files with just haptic tracks in them. Examples of such files would be MP4 files used for streaming games, or haptic files for haptic vests, bodysuits, belts, and gloves. For those files, we envision the designation haptics/mp4. Next slide, please. Okay.
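
Editor's sketch: the designation rule described in the last two paragraphs can be written as a small decision function. This is only an illustration of the rule as stated in the talk; the function name `mp4_media_type` is hypothetical, and `haptics/mp4` is the designation proposed by the speaker, not a registered media type at the time of this session.

```python
def mp4_media_type(has_video: bool, has_audio: bool, has_haptics: bool) -> str:
    """Pick a media type for an MP4 file from the kinds of tracks it carries."""
    if has_video:
        # Video present (with or without audio/haptics): designation unchanged.
        return "video/mp4"
    if has_audio:
        # Audio without video (with or without haptics): designation unchanged.
        return "audio/mp4"
    if has_haptics:
        # Haptic tracks only: the designation proposed in the talk.
        return "haptics/mp4"
    raise ValueError("file has no video, audio, or haptic tracks")
```

Under this sketch, adding a haptic track never changes the type of an existing audio or video file; only a haptics-only file gets the new designation.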

E

E

Vibrotactile, which is touch vibration, is just one of them. The force feedback, which you will feel in the Sony PS5 DualSense controller, is called kinesthetic haptics. And then there is surface friction, where you can actually get the feel of different textures.

E

So you can actually get the feeling that you're touching rock, or soft silk, or a sharp object, all because of the surface friction that you feel on your fingertips. Then you have spatial non-contact haptics, which is based on ultrasound, and last but not least, you have thermal haptics. So these are all the different submodalities of haptics that you need to take into account, and designating haptics as a top-level media type would allow us...

E

Please. And this, in my opinion, is a pretty compelling reason; your mileage might vary. For the human sense of hearing, we have the top-level media type audio. For the human sense of seeing, we have the top-level media type video. And for the equally important human sense of touch, I think it makes perfect sense to have the top-level media type haptics. And more to the point...

E

E

Okay, and there is a considerable amount of commercial uptake, as I mentioned in passing earlier. It is rapidly becoming a standard feature of many CE devices, and these bullet points basically give you an idea of the extent of the uptake. iPhone, as you see here, is over 191 million. This is data from last year, the last year for which full-year data is available. It does have native support for haptic encoded data, the AHAP format, as well as the Core Haptics API.

E

The W3C has got an HTML Vibration API, which provides haptic support in mobile web browsers. I've already mentioned the game consoles, and those remain a very popular CE device, with close to 40 million units sold. And then XR is an upcoming market, helped in large part by the first version of the Khronos OpenXR haptic API. So haptic media is expected to be commonly exchanged between these devices, and in total these devices represent the majority of CE devices around the world.

E

And haptic data formats are already in use. These are prevalent in a large number of devices around the world, and these would live as subtypes under the proposed haptics top-level media type once it's standardized, or once it's registered. The AHAP format, which is the Apple Haptic and Audio Pattern data format, is the de facto standard encoding on all iOS devices and iOS-connected game peripherals, and of late it has seen usage and adoption beyond Apple devices as well.

E

Very recently, in Android 11, Google has introduced a proprietary extension to the OGG format, specifically to enable the encoding of haptic media in OGG files. The IVS haptic data format is a vendor-specific format, as of now, that is in use in mobile phones from LG Electronics, in a number of models like the V30, V40, and the newest V50, which are sold worldwide, and in gaming phones from ASUS, especially the ROG one, the ROG II, and the ROG 3, which are also available worldwide.

E

E

Now, these subtypes haven't yet been standardized, but they live in an informative annex that we made as part of our MPEG ISOBMFF proposal, and we propose the following distinct 4CC codes for them. Once again, these codes are not registered yet, but the standardization pertaining to these is ongoing in some cases, and the plan is to standardize these haptic coding formats in the near future. The first one is hmpg.

E

E

...haptic coding format that's based on MIDI, and havc is an audio-to-vibe haptic coding format, which basically refers to the growing area of automatic A2V, or audio-to-vibration, conversion algorithms. So once standardized, these formats will live as subtypes under the proposed haptics top-level media type. Next slide, please.

E

The top-level type haptics connects to a sensory system, touch or motion, directly and is more specific than the abstract application type. Application historically has been used for applications, that is, code, which means it's viewed and treated with great care for security. Haptics is not code, in much the same way that audio and video are not code either. Haptics is a property of a media stream; it's not an application under any normal definition.

E

This is a somewhat involved slide, but I felt it necessary to make these points because we were told, as part of the instructions for RFCs, that we need to be very explicit. So I'm going to try and just touch on the highlights here. Haptics data can be represented as collections of signal data, or it could be represented as descriptive text in XML or JSON or a similar format.

E

Signal data is typically not executed by endpoint processors, so there is not much risk there. Descriptive text, however, is parsed and represented in memory using XML data structures. This data is then further utilized to construct one or more signals, which are then sent to the actuation hardware. Because of the media-rendering nature of the data path for haptic coded data, you can think of the security profile of haptic data as being pretty consistent with the security profile of visual or audio media data.

E

So in theory you could have malicious instructions present in the descriptive haptic media, and those could execute arbitrary code in kernel space, effectively bypassing system permission structures or execution sandboxes. So, as with audio, video, and other media data in widespread use, careful attention needs to be paid by operating system and device driver implementers to ensure that the synthesis and rendering paths do not provide attack surfaces for malicious payloads.
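
Editor's sketch: one way to read the advice above is that descriptive haptic text should be treated as untrusted input and checked before any signal is synthesized from it. The snippet below is a minimal illustration under stated assumptions. The JSON layout (a list of objects with `time` and `intensity`), the limits `MAX_EVENTS` and `MAX_TIME_S`, and the function names are all hypothetical and do not come from any haptic format mentioned in the talk.

```python
import json
import math

MAX_EVENTS = 1024    # assumed cap on events per effect description
MAX_TIME_S = 600.0   # assumed cap on an event's time offset, in seconds


def _finite_number(value) -> float:
    """Return value as a finite float, or raise ValueError."""
    # bool is a subclass of int, so reject it explicitly.
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        raise ValueError("expected a number")
    try:
        number = float(value)
    except OverflowError:
        raise ValueError("number too large") from None
    if not math.isfinite(number):
        raise ValueError("number must be finite")
    return number


def load_effects(text: str) -> list[tuple[float, float]]:
    """Parse untrusted descriptive text into (time, intensity) pairs."""
    events = json.loads(text)
    if not isinstance(events, list) or len(events) > MAX_EVENTS:
        raise ValueError("expected a bounded list of events")
    checked = []
    for event in events:
        if not isinstance(event, dict):
            raise ValueError("each event must be an object")
        time_s = _finite_number(event.get("time"))
        intensity = _finite_number(event.get("intensity"))
        if not 0.0 <= time_s <= MAX_TIME_S:
            raise ValueError("event time out of range")
        # Clamp instead of trusting the file: only 0.0..1.0 reaches the actuator.
        checked.append((time_s, min(1.0, max(0.0, intensity))))
    return checked
```

The point of the design is that the synthesis stage only ever sees bounded, finite values, whatever the file claimed.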

E

F

E

E

Okay, these are the last two slides, I believe. We just give examples. For the IVS haptic type, the subtype would be ivs and the file extension would be .ivs or .ivt, and this is a device-independent haptic effect coding. I think the rest I will let you read on your own time. I will point out one thing, that there is a...

E

E

This is the HAPT format, and the file extension would be .hapt. This is a device-dependent haptic effect coding that's based on the RIFF standard, the Resource Interchange File Format standard. And that's pretty much all I want to say about that. I believe that brings me to the last slide. Next slide, please. That's it.
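
Editor's sketch: the two example registrations pair a subtype with file extensions. Assuming the spellings `haptics/ivs` (extensions `.ivs` and `.ivt`) and `haptics/hapt` (extension `.hapt`), which is how the spoken names appear to map onto the draft, a private lookup table can be built with Python's standard `mimetypes` module. The helper name `haptic_media_type` and the sample filenames are hypothetical, and none of these types were registered at the time of the session.

```python
import mimetypes

# A private registry, so the process-wide defaults are left untouched.
registry = mimetypes.MimeTypes()
registry.add_type("haptics/ivs", ".ivs")    # device-independent effect coding
registry.add_type("haptics/ivs", ".ivt")    # second extension named in the talk
registry.add_type("haptics/hapt", ".hapt")  # device-dependent, RIFF-based coding


def haptic_media_type(filename: str):
    """Return the media type for a filename, or None if it is unknown."""
    media_type, _encoding = registry.guess_type(filename)
    return media_type
```

For example, `haptic_media_type("rumble.ivs")` looks up the `.ivs` extension in the private registry.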

E

E

Okay, I think I've given a few examples there. In the case of streaming games, if you wanted to send the haptic data by itself to the controller, it will not have any audio or video, because it's mainly meant for your controller, which most likely deals with kinesthetic feedback. The other time where you can expect haptics to be sent over the wire just by itself:

E

If you had a wirelessly connected bodysuit or a vest, for example. This might seem a bit far-fetched, but in reality there were at least three or four companies at last year's CES which were actually showing these kinds of haptic bodysuits, with between 12 and 24 different...

E

I

I... RFC 6381, so the codecs parameter. For example, with the ISO base media file format. So this is the codecs parameter. I mean, you have the four-character ISO codes for haptics, which already allow you to say this is a container file with these haptics types in it. But do we have a dependency in the other direction that we need to do something about?

E

Okay, I will have to admit to not having gone through 6381, but if you are referring to haptic codec-specific parameters, we do envision those being standardized once the MPEG CfP, which deals specifically with an MPEG coding format standard, is finished and that standard is finalized, next April or June, in that kind of time frame. So at that point I would envision that 6381 would need to be updated.
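
Editor's sketch: RFC 6381 defines a `codecs` parameter on container media types that lists the codecs of the tracks inside. The snippet below shows how such a parameter can be read using Python's standard `email.message` module. The helper name `codecs_of` is hypothetical. The `avc1.42E01E, mp4a.40.2` value is a commonly cited H.264 plus AAC example; any haptic codec code such as `hmpg` is, per the talk, proposed and not yet registered.

```python
from email.message import Message


def codecs_of(content_type: str) -> list[str]:
    """Return the entries of the 'codecs' parameter, or [] if it is absent."""
    msg = Message()
    msg["Content-Type"] = content_type
    raw = msg.get_param("codecs")  # surrounding quotes are removed for us
    if not isinstance(raw, str):
        # Parameter missing, or present in a form this sketch does not handle.
        return []
    return [entry.strip() for entry in raw.split(",")]
```

A receiver could use the returned list to decide whether it can render every track before fetching the file.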

I

A

So this might be a good time to jump in and remind folks to try and be responsive to the fundamental dispatch questions we're dealing with, as well as the merits of the proposal. If we get fairly nuanced questions, this might not be the right venue to work them through, and actually what we're trying to do is find the right venue. So please be responsive to that question as we explore this.

J

Hi, this is [inaudible]. If you think about applications that use media types, the idea is that you could say haptics/blah instead of application/blah or audio/blah. You have to make up some new MIME types for the individual formats, because audio doesn't carry them. It does nothing to their character. But if you think about generic applications that know that if something is audio/*, it's audio and they can do something with it...
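
Editor's sketch: the "generic application" behaviour raised in this comment amounts to wildcard matching on the top-level type. A minimal illustration follows; the function name `matches` is hypothetical, and media type parameters are ignored for brevity.

```python
def matches(media_type: str, pattern: str) -> bool:
    """True if media_type fits a pattern such as 'audio/*' or '*/*'."""
    type_, _, subtype = media_type.lower().partition("/")
    p_type, _, p_subtype = pattern.lower().partition("/")
    return p_type in ("*", type_) and p_subtype in ("*", subtype)
```

A player registered for `audio/*` would accept `audio/ogg` under this rule but not `haptics/ivs`, which is the trade-off being discussed: a new top-level type is invisible to tools that only key on existing top-level types.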

J

E

Okay, yeah, I can respond to your questions and comments. The first point is that haptics is not typically encoded in the same way as audio. Point number two is that the whole point of our MPEG ISOBMFF proposal, which has progressed to the DAM stage, which is the draft amendment stage, is to treat haptics as a first-order media type at the same level as audio and video. And we actually have a working implementation of our proposal that works on both iOS and Android devices.

E

That shows that you could have an MP4 file which now has three tracks. As far as your point about the audio tools, all of those tools ingest MP4 files. Now, it is true that once you have haptics as part of the ISOBMFF standard, they are going to be a third track in an MP4 file, and there might need to be changes made in the media player engine.

E

That now recognizes the hapt box in the ISOBMFF header and makes allowance for that. But that's just the progression of the standard; it's no different than the next version of any other MPEG standard. Now, that being the case, the third point I would make is that haptics, in many cases, like if you take the AHAP format: if you've ever looked at an AHAP file, it's actually a bunch of JSON.

E

So it's a very descriptive file format that basically says at what offset, with respect to the audio timeline, you want to play out a haptic effect, which could be a short click or a buzz or some kind of a transient, and at what percentage strength you want that transient to be played.
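
Editor's sketch: to make the description above concrete, the snippet below builds a small JSON document with two transient events, each with a time offset and a strength. The key names are modeled on the editor's recollection of Apple's AHAP layout and should be treated as assumptions rather than a verified rendering of the format; the helper name `transient` is hypothetical.

```python
import json


def transient(time_s: float, intensity: float) -> dict:
    """One short 'click' at time_s seconds, played at intensity (0.0 to 1.0)."""
    return {
        "Event": {
            "Time": time_s,
            "EventType": "HapticTransient",
            "EventParameters": [
                {"ParameterID": "HapticIntensity", "ParameterValue": intensity},
            ],
        }
    }


# A full-strength click at the start, then a softer one half a second later.
pattern = {"Version": 1.0, "Pattern": [transient(0.0, 1.0), transient(0.5, 0.4)]}
text = json.dumps(pattern, indent=2)
```

Nothing here is sampled signal data: the file only describes when effects occur and how strong they are, which is the contrast with audio formats that the speaker is drawing.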

E

So those kinds of things you can't really do with an audio format, and it's for those reasons we didn't want to shoehorn haptics into any of the existing audio formats. We felt that you could get the most flexibility and benefit from the growing field of haptics if it were its own top-level type.

A

E

I mean, that seems like you are asking me if I know the procedure in the IETF. I would be looking to you for that. What would you consider to be the next step? We have made a case, and we have addressed all of the comments we have gotten so far. I really appreciate the thorough

A

presentation. I think I asked the question just to refocus the conversation we're having on that dispatch question, and to make sure, if you had an opinion on that, as some folks do when they're making their presentation, that it was clear to the group. But I want to make sure...

A

I mean, fundamentally that will be driven through IETF consensus, and the purpose of this exercise here is just to get it into the room that will start forming that consensus. We will not reach consensus in DISPATCH on the merits of it. So Jana, you're next. If you can speak to this part of the question, I would appreciate it. We've only got about six minutes left.

K

Okay, I was going to ask, and this is probably a question for the area directors or the other DISPATCH chairs: given that this draft has gone through one iteration and there's been, presumably, some engagement on the mailing list, which is what I gathered (I've not paid attention to that), are there other folks besides Immersion that are interested? Presumably there are. And are they willing to come here and engage at the IETF?

K

The reason I ask this question is because, to me at least, where this lands kind of depends on who the other interested parties are. I can read the draft, and to me it makes sense to have haptics as a high-level thing, but that doesn't matter, because I'm not the one building this stuff. So I'm curious to hear whether there are others who have expressed interest in having a standard on this.

K

If they've expressed an interest in actually building to the standard, were it to be described. Because, ultimately, what you're trying to do is generate consensus among people who are engaged on the details of the draft, and for that you'd need people who are not just interested on the side but are actually implementing and deploying this stuff. Are there people like that?

E

...BMFF. In fact, the chair of the MPEG Systems file format subgroup is David Singer. He's from Apple, and Apple has their own haptic format, and they have actually been quite active because they have a big business interest in haptics. So they've been quite active in creating this MPEG CfP, which I mentioned, the call for proposals. Now, outside of that, a little bit of anecdotal evidence might be that we, Immersion, founded...

E

E

A

A

M

A

N

You with me, Richard? Hey, yeah, I was just busy granting permission prompts. Real quick comment here: I think this is a very natural AD sponsor. I think we should not waste the bandwidth of a working group on this. IETF last call and the ensuing discussion will be plenty to nail down any of the questions that have been raised here. That's it.

A

L

I think the top-level type makes sense, so I do support that. On the question of where to dispatch to, and whether there is any point in a working group, I think the relevant question is: what do you plan to do beyond the top-level type? Do you plan to have any of these elementary stream formats standardized within the IETF? Or do you plan to have payload formats in AVTCORE carrying these? Or do you plan to describe them in SDP, and then MMUSIC?

E

Okay, I can try to answer that. At least one of the four formats which we have mentioned is not going to be under the IETF; it's going to be done by IEEE. The second one, hmpg, will probably fall out of the work that's ongoing in MPEG, which leaves the remaining two, the MIDI-based format and havc.

L

E

Not yet, but it's interesting you should bring up spatial haptics, because that is part of phase two of our MPEG CfP, and it's under the overall umbrella of MPEG-I, or MPEG immersive media. So once phase two of the CfP is over, we do expect something on spatial haptics to be standardized. But the short answer to your question is probably that it's too early to say.

A

D

So, two things. One is, I'm supportive of getting this top-level type done. That's my personal comment. One comment as IETF liaison to MPEG: this activity is by no means the most active, but also by no means the quietest, that MPEG has. There is a significant amount of industry interest visible that goes well beyond one startup company. Thank you.

C

Yes, I think the primary discussion of this will be on the media-types list. I think we don't need a working group to do this, but I do want to defer to the community for the decision on that. So I'm hearing mostly people thinking AD-sponsored is fine, and I'm good with that. And Murray being next, he'll tell you whether he's willing to be the sponsor or not, and he's a media type reviewer anyway. So I'm done.

O

A

P

P

We should move forward with some sort of top-level type, but the hint from Magnus's question, and from several other people that have worked in this space before, has been that there are some really complicated tradeoffs, because nothing's perfect in this space. Audio plus video is already a disaster to explain, when you use one and when you use the other, and this is going to expand that. And I think that it's not that this draft's complicated.

P

It's that the set of contradictory rules we've already set up in this space is going to be complicated, and there's going to be no way to get this draft to meet every single one of our existing rules simultaneously. And I think that complexity is one of the reasons I would argue that, given we already have very experienced media type people expressing that this shouldn't even be done at the IETF, you may not want to have that whole conversation on the IETF last call list, which is where it's going to end up.

P

P

A

B

What I would take from this, I think, with Cullen's last comment there, and it probably makes sense, is that the dispatch conversation needs to continue, because I don't think we've necessarily reached a resolution. So we probably need some discussion among the authors, chairs, and area directors in the very near future, and see if we can resolve that.

A

C

I'd

like

to

stick

one

quick

thing

in

in

response

to

culinary

yeah,

I

suppose,

and

the

ads

can

decide

to

ad

sponsor

anything

they

want

to,

but

one

of

the

reasons

we

have

dispatch

is

exactly

so

we

will

listen

to

what

dispatch

has

to

say

about

these

things.

So,

thanks

for

your

comments

on

that

and

yeshwand,

I

want

to

make

it

clear

to

you

that

the

message

I'm

getting

from

this

is

we're

going

to

do

this.

E

R

A

A

Q

T

Q

Q

Okay,

so

webrtc

is

still

the

best

media

transport

used

for

streaming

real

time

and

audio

and

video,

but

while

it

is

possible

to

use

other

transport

for

ingesting

webrtc

content

into

a

media

server,

using

webrtc

both

for

ingesting

and

delivering

the

media

allows

stream

to

have

several

good

properties,

but

mainly

you

can

work

on

browsers

for

both

the

publishing

side

and

the

viewer

side.

You

avoid

protocol

translation

between

something

that

is

not

webrtc

into

webrtc.

Q

If

you

have

to

do

that,

you

will

most

probably

cause

delay

and

have

to

increase

the

complexity

of

the

implementation,

because

you

already

are

supporting

webrtc

for

streaming

out

so

implementing

something

different

for

ingesting

and

doing

the

protocol

translation

is

going

to

be

more

difficult

to

implement.

I

just

have

webrtc

for

ingest,

and

in

most

you

will

most

probably

have

a

protocol.

Then

problems

do

with

the

codecs.

Q

For

example,

rtmp

does

not

support

opus

at

all,

so

you

will

have

to

do

transcoding

between

other

codecs

and

the

codecs

that

webrtc

supports

and

also

some

features

that

are

webrtc

only

you

will

not

be

able

to

support

end-to-end.

For

example,

some

servers

may

decide

to

just

do

rtx

or

flexfec

or

fec

end-to-end,

just

from

whatever

the

sender

supports

that

the

producer

supports

to

the

viewer.

Q

Also

they

are

complex

to

implement,

so

it

is,

they

will

not

likely

be

in

use

at

all

and

in

rtsp

that

this

could

be

the

closest

that

we

can

have

for

the

broadcasting

industry

and

is

not

compatible

with

the

webrtc

sdp

offer/answer

model

and

also

for

publishing

the

rtsp

record

and

doing

a

push

from

an

rtsp

server

is

not

very

widely

supported.

So

the

consequence

is

that

now

each

service

has

its

own

implementation

of

custom

ad

hoc

protocols.

Q

So

you

want

to

have

an

encoder

that

supports

several

webrtc

ingest

services.

Each

way

you

will

have

to

implement

one

protocol

for

one,

let's

say,

a

streaming

service,

so

this

is

probably

not

something

that

is

going

to

happen.

So,

at

least

in

our

opinion,

the

consequence

is

that

we

need

a

new

reference

signaling

protocol

for

this

use

case.

Q

Q

So

what

would

be

the

requirements

from

this

new

streaming

protocol?

I

mean

it

needs

to

be

simple

to

implement

and

also

simple

to

use

I

mean

and

to

be

able

to

set

up

or

to

provision

an

encoder

with

this

new

protocol.

It

will

have

to

be

as

easy

as

you

have

now,

with

an

rtmp

uri,

so

you

will

not

have

to

do.

Q

I.

You

should

only

need

to

point

your

encoder

at

a

uri

and

have

the

the

streaming

and

the

streaming

up

the

and

for

ingesting

the

use

case

is

quite

specific,

so

you

are

only

required

to

implement

a

set

of

webrtc

functionality,

mainly

that

you

only

need

to

support

unidirectional

flows

from

the

encoder

to

the

media

server.

Q

So

the

proposed

solution

is

something

that

is

really

simple.

It

is

just

doing

an

http

post

with

an

sdp

offer

from

the

encoder

to

the

media

server

and

receiving

the

sdp

answer

from

the

media

server.

With

this

sdp

offer/answer,

you

set

up

a

webrtc

session.

Q

Normally

by

any

other

of

those

both

sides,

authentication

and

authorization

will

be

supported

natively

by

http,

using

the

authorization

header

with

a

bearer

token.

So

it

is

just

exactly

what

I

mean

a

standard,

http

stuff

and

also

it

will

support

http

redirections

for

load

balancing.
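The exchange just described can be sketched roughly as below. This is only an illustration under stated assumptions, not the draft's normative text: the endpoint URL, the token value, the `application/sdp` content type and the helper name `build_ingest_request` are all made up for the example. The sketch only builds the request; sending it, following a redirect and reading the SDP answer from the response are left to the caller.

```python
from urllib.request import Request


def build_ingest_request(endpoint_url: str, sdp_offer: str, bearer_token: str) -> Request:
    """Build (but do not send) an HTTP POST that carries an SDP offer.

    The Authorization header uses the standard HTTP bearer scheme; the
    content type is an assumption for this sketch.
    """
    return Request(
        endpoint_url,
        data=sdp_offer.encode("utf-8"),
        headers={
            "Content-Type": "application/sdp",
            "Authorization": f"Bearer {bearer_token}",
        },
        method="POST",
    )


# Hypothetical endpoint and token, for illustration only.
request = build_ingest_request(
    "https://ingest.example.com/publish", "v=0\r\n", "secret-token"
)
print(request.get_method(), request.full_url)
```

The response body would then be treated as the SDP answer and applied to the local WebRTC session.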

Q

Yes,

you

create

a

peer

connection

and

you

get

the

offer

just

to

do

a

fetch

with

it,

then,

for

sending

the

post

to

the

media

server,

receive

the

answer

and

pass

it

to

set

remote

description

and

once

it

is

set,

you

can

control

the

state

of

the

feed

just

by

the

on

connection

state

change

events

received,

so

you

have

the

connected,

disconnected,

failed

and

closed.
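One way an encoder might turn those connection state events into a feed status is sketched below. The state names are the ones WebRTC defines for a peer connection; the status labels and the function name `feed_status` are assumptions chosen for this example, not something the draft specifies.

```python
# Feed status labels are hypothetical; only the keys are WebRTC state names.
FEED_STATUS_BY_STATE = {
    "new": "pending",
    "connecting": "pending",
    "connected": "live",
    "disconnected": "interrupted",  # may still recover on its own
    "failed": "ended",
    "closed": "ended",
}


def feed_status(connection_state: str) -> str:
    """Map a peer connection state to a coarse status for the published feed."""
    try:
        return FEED_STATUS_BY_STATE[connection_state]
    except KeyError:
        raise ValueError(f"unknown connection state: {connection_state!r}")


for state in ("connected", "disconnected", "failed", "closed"):
    print(state, "->", feed_status(state))
```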

Q

We

have

tried

to

reduce

the

complexity,

mainly

because,

if

it

is

going

to

be

implemented

by

native

encoders

and

hardware-based

say

solutions,

I

mean

trying

to

reduce

the

requirements

for

implementing

it

was

important,

so

we

have

restricted

that,

in

a

way

that

well

first,

the

sdp

bundle

is

always

required,

and

so

is

rtcp

muxing.
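A media server could check those two restrictions on an incoming offer along the lines below. This is a simplified sketch: it looks only for a session-level `a=group:BUNDLE` line and an `a=rtcp-mux` line in every media section, and the function name and sample SDP are assumptions made for the example.

```python
def uses_bundle_and_rtcp_mux(sdp: str) -> bool:
    """Return True if the SDP has a BUNDLE group and rtcp-mux in every m= section."""
    session_lines = []
    media_sections = []
    for raw in sdp.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("m="):
            media_sections.append([line])    # start a new media section
        elif media_sections:
            media_sections[-1].append(line)  # attribute of the current section
        else:
            session_lines.append(line)       # session-level line

    has_bundle = any(l.startswith("a=group:BUNDLE") for l in session_lines)
    all_muxed = bool(media_sections) and all(
        "a=rtcp-mux" in section for section in media_sections
    )
    return has_bundle and all_muxed


# Abbreviated, hypothetical offer for illustration only.
offer = "\r\n".join([
    "v=0",
    "a=group:BUNDLE 0 1",
    "m=audio 9 UDP/TLS/RTP/SAVPF 111",
    "a=mid:0",
    "a=rtcp-mux",
    "m=video 9 UDP/TLS/RTP/SAVPF 96",
    "a=mid:1",
    "a=rtcp-mux",
])
print(uses_bundle_and_rtcp_mux(offer))
```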

Q

Q

Q

There

has

been

some

discussion

if

this

should

be

made

a

bit

more

complex

to

be

able

to

control

the

state

of

the

stream

by

http

rest

or

something

like

that.

But

this

is

the

bare

minimum

that

is

required

in

order

to

have

this

working

and

with

yeah.

A

Q

I'm

not

aware

what

is

the

best

scene.

I

mean.

I

think

that

this

is

really

simple.

I

mean

the

draft

is

available

and

it

is

really

small.

So

I

think

that,

at

least

in

my

opinion,

it

should

be

whatever

is

best

for

having

a

fast

solution,

and

I

don't

expect

to

have

much

discussion.

F

Q

Mainly

because

if

I

am

going

to

speak

to

a

hardware

encoder

vendor

to

implement

it,

I

need

to

say:

please

implement

it,

so

it

is

possible

to

use

it

with

more

than

one

service.

I

mean

I

already

have

implemented

it,

but

if

it

is

not

a

standard,

it

would

be

just

another

thing

that

my

server

implements,

but

no

other

server

implements.

So

if

we

can

agree

that

having

this

as

a

specification

within

the

encoder,

well

they're

going

to

can

be

say

that

I

just

need

to.

F

A

U

U

Q

Yeah,

so

I

think

that

I

may

not

have

answered,

properly

or

completely

to

the

bernard

question

before

I

mean

that

the

situation

in

streaming

is

different

from

that

in

common

webrtc

cases

that

you

have

one

vertical,

that

you

control

the

clients

and

the

streaming

services.

Typically

in

streaming,

you

will

have

a

hardware

encoders

that

are

nor

are

even

that.

Q

Works

is,

for

example,

if

you

support

rtmp,

you

just

implement

rtmp,

and

you

are

able

to

talk

to

facebook

to

twitch

to

a

broad

range

of

services.

So

the

idea

here

is

the

same:

to

be

able

to

have

it

in,

for

example,

in

obs,

where

the

vendor

of

the

encoder

is

different

from

the

one

that

is

deploying

the

streaming

service.

Q

Q

It

is

yeah,

it

is

already

implemented

in

both

janus

and

medooze.

I

mean

in

the

millicast

streaming

platform,

and

we

have

implemented

it

in

an

obs

fork

that

we

are

trying

to

get

it

into

the

main

one,

but

the

complexity

is

compiling

libwebrtc,

but

apart

from

the

web

browser,

we

already

have

it

in

obs

and

are

trying

to

get

traction

with

the

people

that

implement

hardware

encoders.

W

I

just

wanted

to

say

I

think

this

is

a

good

thing

we

should

be

doing.

I

think,

there's

still

some

discussion

to

be

had

on

how

much

simpler

it

could

be.

I

think,

there's

still

some

optionalities

that

could

be

taken

out,

so

I

kind

of

think

that

publishing

it

as

is,

it

needs

another

round

of

discussion

in

terms

of

the

detail

from

my

point

of

view,

but

I

think

it's

eminently

implementable,

even

as

it

is.

O

This

is

intended

to

be

a,

I

know

you

wrote

a

replacement.

You

know

flash

standardization

replacement

for

rtmp.

Is

this

feature

equivalent

to

rtmp

as

used,

because

one

thing

I

did

notice

is

it

didn't

seem

other

than

you

know

the

sdp

proper.

It

didn't

seem

as

you

wrote

it

terribly

extensible.

O

O

Q

Yeah

well,

the

goal

is

not

to

replace

rtmp

I

mean

rtmp

or

the

other

broadcasting

protocol

can

be

used

for

different

things.

I

mean

I

don't

expect

this

to

replace

the

current

broadcasting

solution

for

ingest,

but

the

problem

is

that

if,

for