
From YouTube: IETF109-NETCONF-20201118-0500

Description

NETCONF meeting session at IETF109

2020/11/18 0500

https://datatracker.ietf.org/meeting/109/proceedings/

A

B

A

A

A

The hum window, which is the one you'll use for polling, is right next to the chat tab on the left side. There is no need to do any virtual blue sheet signing; Meetecho will record your presence. When you need to queue up to ask a question, make sure you click on the raise hand icon, and we will then call on you and put you in the queue to speak.

A

A

Right, and for Jabber, I have this link to use if you want to set up Jabber. Don't ask me for too many details; whatever information is there is probably what I used to set up Jabber. Also note that Jabber and the Meetecho chat window cross-post to each other, so if you are on Jabber, we will see it in the Meetecho chat window. Logs for the meeting will, of course, be available after the meeting.

A

A

A

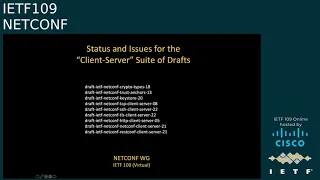

The client-server suite of drafts will go into working group last call once the security drafts listed above clear last call. We don't want to inundate the working group with more documents until we have cleared what's on deck. HTTPS notification is nearing working group last call; we'll talk about it when I present that draft. YANG-Push notification messages, again, is waiting on the HTTPS draft; we will resurrect it once we are ready to send HTTPS notification to last call.

B

B

C

So just a quick question on the meeting notes. I went to CodiMD, but it gives me a blank new page; it looks like I've in fact created it. I can add some meeting notes to that, but it doesn't have the normal structure that you would expect. Maybe that'll need some sorting out afterwards, unless you know.

A

A

And in order to minimize exchanging too many screens, we'll continue with the SZTP CSR bootstrapping draft, and then I'll take over, followed by Pierre and Thomas. For the non-chartered items, we have Peng Liu and Qin coming back with the drafts they had presented before, and then we have one new draft from Jan Lindblad, followed by Kent and Qin talking about a list pagination mechanism.

A

A

D

B

B

This is the update to the client-server suite of drafts since IETF 108: just a few small, high-level updates. In crypto-types, we added the password grouping to define a union between a cleartext password and an encrypted password, and you'll see that grouping is now being used in, I think, three of the other drafts. We also added feature statements for the encrypted formats, specifically password encryption, symmetric key encryption, and private key encryption. Those are the names of the features.
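A minimal sketch of the "union" idea described above, assuming a plain Python model rather than the YANG grouping itself. The class name `Password`, the helper `validate`, and the feature string `"password-encryption"` are illustrative assumptions, not taken from the drafts.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Password:
    """Either a cleartext password or an encrypted one, never both (hypothetical model)."""
    cleartext: Optional[str] = None
    encrypted: Optional[bytes] = None

    def __post_init__(self) -> None:
        # Exactly one branch of the choice must be present.
        if (self.cleartext is None) == (self.encrypted is None):
            raise ValueError("exactly one of cleartext or encrypted is required")


def validate(password: Password, server_features: set) -> bool:
    """Accept an encrypted password only if the server advertises the feature."""
    if password.encrypted is not None:
        # Assumed feature name, used here only for illustration.
        return "password-encryption" in server_features
    return True
```

A cleartext value is always acceptable in this sketch, while an encrypted value depends on the feature being advertised, which mirrors how a feature statement gates optional parts of a model.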

B

Okay, those are the names of the features and, of course, they control whether or not the server supports the encrypting of passwords, symmetric keys, and private keys, respectively. There is also a certificate expiration notification: we added a feature for controlling whether or not the server supports sending notifications when certificates are expiring. In trust anchors, and really for the remaining drafts, well, you'll see.

B

I guess you might even say that, to some degree, they were editorial: changing and improving the way the content was being presented, which was reflected in all the drafts. So that's essentially the change that was made in trust anchors, and in all the others as well. Also, in the keystore draft, Section 4, which is entitled "Encrypting Keys for Configuration", was pretty much entirely rewritten.

B

Please. Moving on, we have TCP client-server. Here, in the SOCKS GSS-API case, there was a field for a password, so we modified it to use the password grouping, and now the password may be in cleartext or encrypted in that configuration data model. We also added a "mandatory" statement that was missing.

B

B

That password could previously only be cleartext, and now it's using the password grouping, so either a cleartext or an encrypted password can be configured. In TLS client-server, in both the client authentication and server authentication for PSKs, it got converted from being a presence container to a leaf of type empty.

B

There were a number of FIXMEs that got cleaned up, and that was the main update. Okay, in the HTTP client-server draft, there were also some FIXMEs that got removed. In the HTTP client, when you're using basic authentication there's a password. It was previously just cleartext; now it's using the password grouping, so it can be encrypted. And strangely... oh okay, I see it now: hovering over the screen blocks the text of what's being presented. In both the NETCONF and RESTCONF client-server drafts...

B

In general, we've been waiting. Putting on my chair hat for a moment, Mahesh and I have been waiting for these first three drafts to stabilize from a SecDir perspective and also from a YANG doctor perspective. I think there's a YANG doctor review outstanding for these drafts, so we're hoping to get those updates completed for these first three core drafts, and then whatever cascading changes would occur, such as the ones you saw already.

A

A

A

B

B

B

B

Sorry for that: an edit to fix that small editorial comment that I left in the Security Considerations section. Next slide, please. Okay, so when this draft was presented last time, we had said that the authors had made every effort for the -00 draft to basically be ready for last call. It was already ready for last call. We had taken the time and effort to do everything, including the Security Considerations, IANA Considerations, and everything. We thought we were done and ready.

B

But then one of the authors had an exchange with another IETF contributor from a different company, not someone who's commonly in the NETCONF working group, regarding CRMF, which is a Microsoft format; it stands for Certificate Request Message Format. It's not technically a Microsoft format anymore, it's an IETF format, but it was originally supported by Microsoft. They wanted to ensure that CRMF was being supported, and so we thought that we were going to need to do an update for that.

B

B

However, to bullet point number three: the same comment from that author, sorry, IETF contributor, in a different working group spun off a number of other drafts. You'll notice in the LAMPS working group a couple of drafts by Russ Housley on updating the CRMF algorithms, and yet another one for the AES-GMAC algorithm.

B

A

A

A

A

A

A

A

We did add another example, the corresponding example for XML, in the draft. So with those two examples and the fact that we have completed the Security Considerations section... actually, I don't have this on the slide. So with those two changes, we believe that this document is also ready for working group last call, but rather than us chairs calling for it, we'll both step down and see.

C

A

C

So we've got 10 people that say they're happy if this goes to working group last call, and there's no one who's chosen not to raise their hand, out of 34 participants. So it's 11. I think that's fine. Yeah, it's going up slightly, so I think we can kick off the working group last call. I will work with Kent and Mahesh to work out exactly what the process should be in this particular case.

A

A

A

B

F

So next slide, please, Mahesh. I'll make a quick reminder of the goal of the draft. Then I'll show the main differences with the -00, trying to cover the most important comments that we have received on the mailing list since then. Then the main point is to shut off my mic and let you guys discuss, and take notes on this. So next slide, please.

F

Next slide, please, Mahesh. So, our first set of changes, based on the comments we received in the last month. We renamed, for obvious reasons, the fragmentation option to segmentation option, because that's basically what we do. In order to be consistent with the NETCONF distributed-notif draft, I changed the term generator ID to observation domain ID.

F

Then we reworked the applicability section of the draft to align with RFC 8085 on UDP usage guidelines. Mostly what we covered is congestion control, or lack thereof, dealing with MTU, and lack of reliability. Basically, what we did is to show that the context in which this protocol would be deployed

F

is aligned with the recommendations that are defined in the RFC. I did not list all the strict recommendations and guidelines of the RFC, but I've been sticking to the actual guidelines for the context of application of this protocol. Next slide, please. Then, we proposed some changes to the notification message header. What you can see first is that we stole a bit from the version field to introduce what we called an encoding space flag. When it is unset,

F

it means that the encoding type field is standard, meaning that the value in there defines an encoding that is standard. When this flag is set, it means that we are falling into the private encoding type space. What we mean by private is, for example, GPB, which is not a standard. What would go there is any encoding type that a vendor would support that is not a standard; the vendor would decide on its own which encoding type to use.
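A minimal sketch of how such a flag could sit next to the version and encoding type fields, assuming a 3-bit version, a 1-bit encoding space flag, and a 4-bit encoding type packed into one byte. These widths and the function names are assumptions made for illustration; the draft itself is the authority on the actual header layout.

```python
VERSION_BITS = 3  # assumed width; the flag was "stolen" from the version field
ET_BITS = 4       # assumed width of the encoding type field


def pack_first_byte(version: int, private_space: bool, encoding_type: int) -> int:
    """Pack version, encoding space flag, and encoding type into one byte."""
    if not 0 <= version < (1 << VERSION_BITS):
        raise ValueError("version out of range")
    if not 0 <= encoding_type < (1 << ET_BITS):
        raise ValueError("encoding type out of range")
    return (version << (1 + ET_BITS)) | (int(private_space) << ET_BITS) | encoding_type


def unpack_first_byte(byte: int):
    """Return (version, private_space, encoding_type) from the packed byte."""
    version = byte >> (1 + ET_BITS)
    private_space = bool((byte >> ET_BITS) & 1)
    encoding_type = byte & ((1 << ET_BITS) - 1)
    return version, private_space, encoding_type
```

With the flag unset, a receiver would look the encoding type up in the standard table; with the flag set, the same numeric value would only be meaningful together with vendor-specific knowledge.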

F

I agree that relying on the vendor documentation to figure out what your favorite vendor is sending to you would feel a bit archaic, so we have been having a discussion on whether we would need NETCONF to be able to retrieve a description of what the vendor is using as an encoding type. This is open for discussion. Personally, I don't care too much about non-standard encoding types, so I'm really open to that discussion.

F

I need those two fields in order to do a consistent job there. So that's the main reason. Another reason is quality of life. When I have those fields up there, I can easily do load balancing while preserving consistency per generator, so I can do load balancing based on the generator, and that eases distributed use of the collector.
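A minimal sketch of generator-consistent load balancing, assuming the balancing key is a 32-bit observation domain ID (the renamed generator ID) read from the header. The function name and the collector names in the usage note are hypothetical.

```python
import zlib


def pick_collector(observation_domain_id: int, collectors: list) -> str:
    """Map every message from one observation domain to the same collector."""
    if not collectors:
        raise ValueError("no collectors configured")
    # Assumed 32-bit identifier; hash it so that domains spread across collectors.
    key = observation_domain_id.to_bytes(4, "big")
    return collectors[zlib.crc32(key) % len(collectors)]
```

For example, `pick_collector(7, ["c1", "c2", "c3"])` returns the same collector on every call, so all segments and messages from that domain can be handled in one place without parsing the payload.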

F

F

There was quite a bit of discussion on how to deal with this private space. I already said that we can either rely on vendor documentation or write a NETCONF draft to retrieve that information. I would like you to discuss this, and honestly, we'll follow the decision of the working group. I will not fight against any decision that you guys would make on this. Sure, Kent, if you want to go ahead.

B

This is Kent, as a contributor. Regarding the version being zero, I do recommend it. In fact, there is a separate RFC, I don't know the number offhand, but there is an IETF recommendation that, for any enumerated field, both the zero and the max values are reserved values. So yes, both zero and, I guess, the value seven should be reserved values for us, if possible.

F

A

A

F

No, what I was trying to say is that, if you want, we can define one. I'm okay to provide a companion draft to do that. Personally, I don't really care about non-standard encodings, but if that's needed, if we consider here as a working group that basic, archaic vendor documentation would not work, then we'll do the work of providing this mechanism.

F

F

So if you want to poll the working group on how they want to deal with that, I'm fine with that. Currently the people I'm interacting with care mostly about the standard ones. The reason why we made this change was to deal with the fact that GPB will be supported by vendors and is not standard, so it felt weird to have it listed in the draft and given a code point in the standard encoding field. That was our way of dealing with that.

F

If you want to change this, I'm also open to this. We also had, for example, a suggestion to not use a space flag, but to reserve the last values of the encoding type field and let vendors use the reserved space. I wanted to make things more clear, and not have to use reserved, non-documented bits, so I did that for clarity reasons. If you want me to roll this back, I'm fine with this. I just would like to know.

H

G

I

F

F

I

Well, I don't know what AD reviews and other later reviews are going to produce as objections, and I don't know if there's a problem with how it's referenced, or something like that, whether it's documented or not. So hopefully you just put the enumeration back, and it'll be important for people to work with it. My original objection was just that there was no real reference given to a document, not that it shouldn't be there.

F

Actually, you might be right that if we take it out and use the approach where we reserve some values that would be used for private use, there would not be any reference to a non-standard encoding. But then I'm afraid that the question will once again be raised: how does an operator realize, in a multi-vendor and multi-release context, which box is sending what? This is the main problem with non-standard stuff.

G

A

C

I wasn't necessarily going to give an opinion as an AD on this; I think it potentially needs some further discussion. I had a question, really as an individual: is just saying GPB sufficient in terms of knowing what that encoding actually would be? Because I assumed that with YANG there are a couple of different ways that you can encode this data.

C

You can either have a generic GPB encoding of YANG data or, in some cases, I thought some people use specific GPB models generated from the YANG data. So is there an issue there, that with GPB the issue is not whether the encoding is known or not, but actually what that data will look like? Maybe that could be solved by being more specific about exactly what the encoding is. But I do worry about having something that says it's done in this way, but it's unspecified.

F

Yeah, I completely agree with you. The good thing is that for this draft it does not matter. I would like to answer with a "not my problem" answer, because with this draft I'm packing messages, I'm reassembling messages when they are split at the transport layer, and then I'm passing them down towards the big data people, who know, when they use a distributed subscription to connect to the box, how they are going to deal with that on their side.

G

B

As a contributor: there was a comment made about the https-notif draft, that it would also need to support private encodings if this were necessary, and I just wanted to state that I believe that's already the case, in two ways. First, it's using HTTP media types, and it does define, or I should say use, the standard media types for JSON-encoded YANG data and XML-encoded YANG data, but of course

B

private media types could be created and used. Secondly, when subscribed notifications are being used, for configured subscribed notifications, there's a YANG identity called encoding, I think, and currently there are sub-identities for JSON and XML, but of course private or other standard sub-identities could be defined as well. So I think it's already supported there to have private encodings for notifications in https-notif.

F

B

F

Last update: we shrunk the segment number space to 16 bits, because we did not need 32. Then I tried to bring more clarity on boxes that would rely on IP fragmentation instead of supporting the segmentation option. I will simplify this even more and basically do like IPFIX and say we should not do fragmentation.

F

F

Then I received a bunch of comments on the relationship between the last-bit flag of this option and the sequence number, mostly from Andy. I agree with all of them, and the requested clarifications will be made. You were asking for details, and I agree with you, it wasn't clear in some parts. I agree with all of the changes you were asking for, so you will find them in the -02. Next slide, please.
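A minimal sketch of collector-side reassembly using a segment number and a last-segment flag, assuming segments of one message are numbered from 0 and may arrive out of order. The class name and the 16-bit range check are illustrative assumptions; the exact bit layout of the option is defined by the draft, not by this example.

```python
from typing import Dict, Optional


class Reassembler:
    """Collects the segments of one message and joins them once complete."""

    def __init__(self) -> None:
        self.segments: Dict[int, bytes] = {}
        self.last_number: Optional[int] = None

    def add(self, number: int, is_last: bool, payload: bytes) -> Optional[bytes]:
        """Store one segment; return the full message when all segments are present."""
        if not 0 <= number < (1 << 16):
            raise ValueError("segment number does not fit in 16 bits")
        self.segments[number] = payload
        if is_last:
            # The last flag tells the receiver how many segments to expect.
            self.last_number = number
        if self.last_number is None:
            return None
        expected = range(self.last_number + 1)
        if all(n in self.segments for n in expected):
            return b"".join(self.segments[n] for n in expected)
        return None
```

The sketch shows why the relationship between the flag and the numbering matters: without the flag the receiver cannot know that it holds every segment, and without the numbers it cannot restore the order.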

F

Okay, so the current implementation status: during the hackathon we were working in an environment where, within the Swisscom lab, we had a Huawei implementation of this version, so we were following -01. Based on the feedback that I will receive, I will update accordingly. On the collector side, we have a Golang version of the collector and a C version of the collector.

F

The C version is being validated and integrated within pmacct right now. For our next steps, we will probably provide DTLS support for this. It's not our top priority, because in all our deployment scenarios we won't need it. Then I will apply the changes that you guys recommend, based on the discussions that we will have on the mailing list following this meeting. Thanks.

C

Okay, go ahead, Joe. Yes, it's actually on the previous issue, if we still have time. I'm not sure whether we need to discuss it further now; I think it needs further discussion on the list, and it can potentially get resolved during working group last call. I think the question was really about whether the IESG would accept a protocol where this is unspecified, and the answer is, I don't know. I think it will just depend on the AD reviews at the time, or the IESG reviews when that happens.

C

F

Yes, that helps a lot. So what I would suggest is that I remove that bit and reserve the last few values, so that I don't need any reference to anything, and we proceed this way. I also follow the comment that this stuff is too complicated for no reason. Do I do that in the -02?

F

I would not create a private space; I would just reserve values, so that the header will no longer change. If, based on the review, we can leave a reference to a value being GPB, then we just leave it there and that will just be a fixed value. And if GPB won't work because it's not standard, then I leave it in the reserved space, which is the usual thing that we define at the IETF.

F

For this reason, so that I don't overcomplicate stuff with this S bit, I leave room for GPB in the standard space. If we get a review further down, at the IESG review, that says "but GPB is not a standard, you cannot put it there", then I will leave GPB to be used in the reserved space that I would define.

C

So I don't think it matters whether GPB is a standard; I don't think that will be a problem from that perspective. I think it's just a matter of whether the specification is clear or not as to what's used. It may be that, effectively, if these are reserved, you can have some IANA space that actually defines what these fields are for the ones that aren't defined in the document, and hence that can be extended with future documents that specify this behavior, if required.

A

G

G

C

The only other question I have, and again I might be completely off the mark here, is what the observation domain IDs are tied to. Obviously, some line cards may have multiple separate NPUs, so I don't know if that's something that needs to be considered, or whether that is not an issue.

G

A

G

G

Exactly. If that is the intent, if that is what we are aiming for, then absolutely. For instance, in IPFIX they did not resolve that problem. There, the IDs were generated, and you could not map them down to the line card or to the network processors, and it was already sufficient to ensure data integrity. So for the data collection, it's not needed to map down to the specific network processor, but it is in order to troubleshoot further.

A

A

K

K

B

K

It notifies the transport protocol, encoding format, and security protocol, and then the NMS can subscribe to the YANG notifications according to its demand. For example, if it needs the UDP protocol, a binary encoding format, and the DTLS security protocol, then the server will send the notifications over UDP and also satisfy the other parameters or functions.

K

K

K

So, just to reply: the answer is that one of the principles set by the YANG-Push RFC is to minimize the number of subscription iterations between the subscriber and the publisher, and to discourage random guessing of different parameters by a subscriber. Our idea is to try to prevent the problem at the stage of the negotiation of the subscription, in order to minimize the number of iterations. So we think it can improve the efficiency and increase the success rate. And the second question is about the static pronoun.

K

And the third question is that sensor group seems a very vendor-specific capability, so we removed the two parameters: one is max node per sensor group and the other is max sensor group per object. We have taken them out in the latest version. These three questions are the three main questions discussed on the mailing list, so I think all of them are solved now. Okay, next slide, please.

B

G

L

L

L

A

C

L

L

I

L

A

L

C

C

So that's one comment on that, but the other question I had was in terms of the structure of the YANG. It looked like you could just define, for example, one transport that is supported, or one encoding, whereas I'd have thought that servers have different capabilities that they support, and hence it may be that the structure of the model needs to be more flexible. Otherwise, I don't understand why the YANG is only expressing one of those, rather than "this is the set of different transports or encodings that are supported".
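A minimal sketch of the "set of supported values" idea raised here, assuming the server advertises a set per category and the client lists its preferences in order. The function name, the category keys, and the example values in the usage note are hypothetical and not taken from the draft's YANG model.

```python
def negotiate(server_caps: dict, client_prefs: dict) -> dict:
    """Pick, per category, the first client preference that the server supports."""
    chosen = {}
    for category, preferences in client_prefs.items():
        supported = server_caps.get(category, set())
        match = next((p for p in preferences if p in supported), None)
        if match is None:
            raise LookupError(f"no common {category}")
        chosen[category] = match
    return chosen
```

For example, a server advertising `{"transport": {"udp", "https"}, "encoding": {"json", "xml", "binary"}}` and a client preferring `{"transport": ["udp"], "encoding": ["binary", "json"]}` would settle on UDP with binary encoding in a single step, without the client guessing parameters.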

L

B

B

L

So, current status: this draft has been presented in the previous two IETF meetings. In a previous IETF meeting, it was suggested to align with the ECA model. Also, we actually introduce a new subscription mode besides the periodic subscription and on-change subscription, so we need to better characterize this adaptive subscription.

L

In the last IETF meeting we also discussed this and actually got a lot of support. So in the latest version, we tried to remove the dependency on the ECA model. We remove the path target definition, clarify the XPath external evaluation node in the YANG model, and rewrite the usage example to align with these YANG model parameter changes.

L

L

So for people who don't know what adaptive subscription is: it is an extension to subscribed notifications for YANG-Push subscriptions. They support two different modes. One is periodic subscription, which allows you to publish the data periodically, and the other is on-change subscription, which allows you to publish data when the data gets changed or when a protocol operation changes the data. But in some cases, the server and client may support multiple

L

L

Take monitoring of the wireless signal strength, which can be weak or strong. Because the air interface resources are very expensive, when the wireless signal strength is very strong, we can collect the data at a lower rate, but when the signal strength is very weak, we can collect more data, since we need to have sufficient data to do the troubleshooting. So this can greatly reduce the data exported to the client.

L

This can provide the evaluation criteria, and the watermark is part of this evaluation expression, which can be used to express the condition to be satisfied and trigger the interval switching. Here we just give an example: when the value evaluated by the XPath external evaluation expression changes, the condition gets satisfied.
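A minimal sketch of watermark-triggered interval switching, assuming the condition is "signal strength below a watermark" and that the publisher switches between two fixed export periods. The function name, the dBm unit, and the period parameters are illustrative assumptions; in the draft the condition is expressed as an XPath evaluation rather than Python code.

```python
def select_period(signal_dbm: float, watermark_dbm: float,
                  fast_period_s: int, slow_period_s: int) -> int:
    """Return the export period to use after evaluating the watermark condition."""
    # Weak signal: publish more often so there is enough data to troubleshoot.
    if signal_dbm < watermark_dbm:
        return fast_period_s
    # Strong signal: publish less often to save air interface resources.
    return slow_period_s
```

Re-evaluating this on every sample lets the publisher change the period on its own, so the subscriber does not need to modify the subscription when conditions change.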

L

L

Here is the issue we discussed with Andy on the list, about the filter: mixing filter and trigger may not be a good design, so we made some changes accordingly. For example, we took out the data path target, since we don't think this is something needed, because it would impact or influence the event record output. In this model we also changed the naming: we changed the condition expression to the XPath external evaluation expression.

L

L

B

I

B

So, as a contributor: Andy, I'm kind of curious about that comment that you just made, because I think the reason for the periodic was that there are some values that just don't change very often at all, maybe once a day or even less often, but because they're periodically pushed, in case a subscriber comes in later, they get the value. But I guess there's also a sync-up that occurs, right?

B

L

L

G

I just wanted to follow up on Robert's comment. I was thinking the same. I think, ideally, for a periodic subscription, we could have a range, say from one to ten, and then basically the publisher decides, depending on the state, which value it's choosing for the export interval.

L

A

A

L

H

H

H

I have actually seen that several vendors have implemented their own proprietary mechanisms for providing a shorter way to convey this information, like a timestamp of last change, or some checksum of the config, or something that you can read in order to see if anything has changed at all in the device. We are using those mechanisms, and they are kind of nice.

H

They are better than nothing, but they are not quite good enough, because in many cases there have been small changes somewhere, but maybe not in the area that this particular client is interested in. So it will be reported as changed, but it hasn't changed in any area that this client cares about. So we are back to square one.

H

Essentially, if you go to the next slide, this mechanism also has the problem that it often, or sometimes, gives false alarms. You notice a change, so you issue a get-config and you get a complete reply, and you spend a lot of CPU time to compute: is this the same as last time?

H

H

Okay,

so

I'm

proposing

a

solution

where

we

say

that

we

have

a

concept

of

a

transaction

id

attribute

that

you

that

the

server

may

report

for

every

container

and

list

elements.

We

don't

want

to

have

this

on

every

leaf

and

everywhere,

but

on

containers

and

list

elements,

and

we

make

sure

that

whenever

there's

an

edit

config,

the

server

will

update

the

transaction

id

value

for

every

container

and

list

element

that

has

been

touched

by

this

transaction,

so

that

the

client

can

rely

on

this

transaction

id

being

something

new.

H

So

for

anything

that

is

touched

by

this

client,

in

an

edit-config

the

client

can

also

specify

this

transaction

id

and

then

the

server

will

apply

that

transaction

id

value

to

everything

that

has

been

touched,

but

also

the

client

during

a

get

config

can

specify

transaction

ids

for

what

it

expects,

to

say:

I

believe

the

contents

of

this

container,

or

this

list

element

has

this

transaction

id

and

if

it

matches,

there's

no

need

for

the

server

to

send

the

content

of

that

and

on

the

next

slide,

please.
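
The mechanism just described can be sketched as follows. This is a minimal illustration under stated assumptions, not the draft's encoding: the `Node` class, the `edit` and `get_config` helpers, and the dictionary reply format are all invented here. It shows two things from the talk: an edit stamps the new transaction id on every container and list element it touches (leaves carry none), and a get-config that supplies the ids the client already knows gets only the id back for unchanged subtrees.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Node:
    """A container or list element. Leaves live in `leaves` and carry no txid."""
    txid: str = ""
    leaves: Dict[str, str] = field(default_factory=dict)
    children: Dict[str, "Node"] = field(default_factory=dict)


def edit(root: Node, path: List[str], leaf: str, value: str, txid: str) -> None:
    """Set one leaf and stamp `txid` on every node along the path to it."""
    node = root
    node.txid = txid
    for name in path:
        node = node.children.setdefault(name, Node())
        node.txid = txid
    node.leaves[leaf] = value


def get_config(node: Node, known: Optional[dict] = None) -> dict:
    """Return the subtree, omitting content where the client's txid matches."""
    if known is not None and known.get("txid") == node.txid:
        return {"txid": node.txid}  # unchanged since the client last looked
    known_children = (known or {}).get("children", {})
    return {
        "txid": node.txid,
        "leaves": dict(node.leaves),
        "children": {
            name: get_config(child, known_children.get(name))
            for name, child in node.children.items()
        },
    }
```

A client would keep the reply from its initial sync and pass it back as `known` on the next poll, so only the touched interfaces come back with content.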

H

I

have

an

example

of

what

that

looks

like

so

here

we

have

an

initial

sync

where

the

client

is

issuing

a

get

config

and

with

a

filter

for

interfaces

in

NETCONF,

and

on

the

interfaces

it

says:

hey,

can

I

have

the

transaction

ids

for

this

please

by

issuing

this

etag

equals

question

mark.

Next,

please,

and

this

is

what

a

reply

might

look

like.

So

here

the

server

has

decorated

the

reply

with

these

transaction

etag

values,

and

you

can

see

this

def-something

on

various

containers

as

well.

H

I

think

that

was

all

my

slides

basically

go.

Let's

take

the

next

one

yeah,

okay

right,

so

in

an

edit-config

the

client

could

also

say

hey.

I

expect

the

transaction

id

of

this

interface

to

be

ghi5555,

so

if

it

is

go

ahead

and

run

this

transaction

and

delete

this

interface

in

this

case,

but

if

it

isn't,

if

one

of

these

transaction

ids

has

a

mismatch,

then

abort

the

whole

transaction

and

report

that

things

are

not

the

way

that

you

expected,

since

things

have

moved

since

you

last

synchronized.
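
The conditional edit described above might be sketched like this. It is an assumption-laden illustration: the flat path-to-txid dictionary, the `TxidMismatch` exception and the `conditional_delete` function are hypothetical names chosen here, and the value `ghi5555` is simply the one read out from the slide.

```python
from typing import Dict, List


class TxidMismatch(Exception):
    """Raised when the client's view of the server is stale."""


def conditional_delete(
    txids: Dict[str, str],     # server side: path -> current transaction id
    expected: Dict[str, str],  # client side: path -> transaction id it believes in
    delete_paths: List[str],
) -> None:
    """Delete `delete_paths` only if every expectation still holds."""
    stale = [p for p, want in expected.items() if txids.get(p) != want]
    if stale:
        # Abort the whole transaction before anything has been changed.
        raise TxidMismatch("stale view of: " + ", ".join(sorted(stale)))
    for path in delete_paths:
        txids.pop(path, None)
```

The check runs over all expectations before the first change is made, which is what makes a single mismatch abort the whole transaction rather than part of it.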

H

D

Yeah,

but

I

as

a

client

won't

know,

I

don't

have

any

assurance

that

what

I

have

changed

under

this

transaction

id

is

indeed

what

is

still

changed

when

I

do

like

a

get-config

right

after

I

make

my

changes

or

I

wait

three

seconds

or

three

minutes

right

I

mean

is

that

expected

or

is

that

a

hole

in

the

concept

right?

Because

you

don't

have

any

with

lock

everything's

atomic

right?

You

know

everything's

there

and

it's

atomic

with

this.

D

H

D

You're

saying

like

on

the

delete

in

the

I

was

talking

about

just

the

original

change

with

the

get

I'm

sorry.

I

was

back

on

the

previous

slide

when

I

entered

the

queue,

so

I

come

in,

I

do

an

edit

config

right,

I'm

not

doing

a

delete

operation

yet

right,

I'm

just

trying

to

figure

out

what

changed

right.

So

I

do

an

edit

config.

I

say

tag

foo

right.

D

E

H

H

But

what

this

is

about

is

to

ensure

that

when

a

client

has

a

view

of

the

state

of

the

server,

it

should

be

able

to

verify

that

view

quickly

by

saying,

okay,

I

know

this

about

the

configuration

and

these

are

the

tags

I'm

aware

of,

and

just

report

the

differences

versus

what

I

know.

So

that's

the

mechanism

I'm

looking

for

here.

D

H

A

B

E

My

point

is

that

we

have

something

very

similar

in

RESTCONF

and

it

would

be

good

to

understand:

is

this

actually

the

same

mechanism?

Or,

if

we

have

a

datastore

that

is

visible

both

on

RESTCONF

and

NETCONF,

do

we

have

to

support

two

similar,

but

not

the

same,

mechanisms?

So

describe

somehow

the

relationship

with

RESTCONF

ETags.

H

Yes,

this

was

definitely

greatly

inspired

by

the

ETags,

and

my

intention

is

that

the

server

implementation

should

be

able

to

be

joint

here,

but

things

are

still

early

in

this

draft

and

we

will

see

where

it

takes

it.

I

don't

want

to

guarantee

that

it

will

be

exactly

the

same

or

very

much

compatible

with

ETags.

But

yes,

it

is

the

same

mechanism

that

we

have

in

ETags

already

that

I'm

trying

to

describe

here.

A

I'll

take

that

as

a

yes.

So

again,

I

understand

the

optimization

in

terms

of

trying

to

tag

at

least

at

the

container

and

the

list

level,

but

couldn't

this

be

a

little

more

constrained,

where

you

just

say

for

a

given

config

or

a

transaction,

you

have

a

tag

and,

if

you're

trying

to

edit

it

and

if

the

tag

doesn't

match,

you

request

that

you

do

it

now.

A

H

Yes,

it's

up

to

the

client

to

decide

what

parts

of

the

transaction

it

really

cares

about

things

being

the

same.

It

can

say

for

this

part

of

the

tree,

I'll

just

go

ahead

as

traditional

config,

but

for

the

interfaces

list,

I'm

really

interested

to

make

sure

that

all

the

interfaces

that

I

touch

are

untouched

or

that

no

interfaces

have

been

passed

or

a

particular

aspect

of

the

details

of

one

interface

are

important.

It's

up

to

the

client

to

decide

where

the

tags

have

to

match.

B

So

as

chair,

can

you

go

ahead

and

bring

up

my

last

presentation

but

as

a

contributor

to

Jan,

I

would

recommend

trying

to

align

this

work

exactly

with

RESTCONF,

if

possible,

because

I

know

that

some

you

know

servers

that

present

both

RESTCONF

and

netconf

actually

build

one

of

those

interfaces

on

top

of

the

other,

and

I

think

typically,

RESTCONF

is

built

on

top

of

netconf

but

anyway,

I

guess

the

question

is:

why

couldn't

it

be

aligned?

B

Thank

you,

jin,

olaf

and

way

there

are

actually

also

other

respondents,

but

they

haven't

yet

contributed

and

so

they're

not

yet

listed

here,

but

hopefully

that

will

improve

next

next

presentation

next

slide,

please.

The

motivation

for

this

work

is

first

and

foremost

to

better

support

user-facing

client

interfaces,

interacting

with

data

from

potentially

large

lists.

B

Examples

of

potentially

large

lists

include

traffic

logs

or

really

any

time

series

data

which

might

include

audit

log,

but

in

general

those

are

kind

of

like

config-false,

op-state

type

data,

but

also

within

configuration.

There

are

some

very

large

lists,

sometimes

interfaces

or

firewall

rule

bases

can

grow

to

be

in

the

thousands

and,

of

course,

it's

all

very

manageable

today

with

existing,

you

know,

NETCONF;

I

mean,

already

the

client

gets

the

entirety

of

the

large

configured

list

and

then

handles

it

itself

in

its

own

memory.

B

B

E

B

The

little

arrows,

but

it's

it's

not

looking

quite

right

anyway.

Let

me

go

back

up

to

the

top

of

the

slide.

The

solution

proposal

is

to

introduce

to

both

NETCONF

and

RESTCONF

an

API

for

list

pagination.

There

are

five

control

points

that

have

been

discussed,

and

this

was

on

the

list.

So

I'm

a

bit

repeating,

but

since

this

is

the

first

presentation

introducing

the

work,

I

wanted

to

make

sure

there's

a

slide

for

it.

There's

the

ability

to

limit

the

number

of

results

that

are

returned

in

the

response.

B

Are

they

returned

in

the

forward

or

reverse

direction,

and

potentially

there's

the

ability

to

sort

the

results,

maybe

on

a

particular

node

or

in

SQL

terms

on

a

column

you

know

sort

on

a

particular

column

and

then

the

results

would

be

in

that

order

or

if

it's

an

ordered-by-user

list,

by

default

the

order

is

the

configured

order

of

that

list

and

then,

lastly,

there's

potentially

the

ability

to

filter

the

items.

B

Maybe

the

client

is

only

interested

in

zooming

in

to

a

particular

subset

of

the

data.

Now

these

control

points

are

actually

ordered

in

processing,

there's

a

processing

order.

It

is,

in

fact

the

reverse

order,

so,

first

the

results

are

filtered.

Filters

in

general

are

very

fast;

they're

almost

always,

well,

hopefully

the

node

or

the

leaf

that

you're

filtering

on

has

been

indexed

by

your

backend

database.

B

So

that

is

the

processing

order

and

for

those

that

are

familiar

with

SQL,

that

is

exactly

what

SQL

does

and

I

imagine

it

is

the

same

for

most

backend

databases,

but

that

is

in

fact,

what

this

author

list

is

doing

so

there's

a

number

of

authors

that

are-

or

you

know,

some

authors

are

more

implementation

oriented,

and

so

we

have

a

representation

of

different

backend

databases

and

we're

doing

prototypes

of

all

this,

to

ensure

that

it's

mappable

to

the

various

backend

databases

next

slide.

Please.
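
The processing order described above (filter first, limit last, the reverse of the order in which the control points were listed) can be sketched as below. This is a rough illustration, not the draft's API: the parameter names are assumptions, and since the transcript only captures limit, direction, sort and filter by name, the fifth control point is assumed here to be an offset.

```python
from typing import Any, Callable, Dict, List, Optional

Entry = Dict[str, Any]


def paginate(
    entries: List[Entry],
    where: Optional[Callable[[Entry], bool]] = None,
    sort_by: Optional[str] = None,
    direction: str = "forwards",
    offset: int = 0,
    limit: Optional[int] = None,
) -> List[Entry]:
    """Apply the control points in processing order: filter, sort, direction, offset, limit."""
    # 1. Filter: keep only the entries the client is interested in.
    result = [e for e in entries if where is None or where(e)]
    # 2. Sort: on one node (a "column" in SQL terms); otherwise keep list order.
    if sort_by is not None:
        result = sorted(result, key=lambda e: e[sort_by])
    # 3. Direction: walk the result forwards or backwards.
    if direction == "backwards":
        result = result[::-1]
    # 4. Offset (assumed fifth control point): skip the first entries.
    result = result[offset:]
    # 5. Limit: cap the number of entries returned in the response.
    if limit is not None:
        result = result[:limit]
    return result
```

For instance, with ten firewall rules numbered 1 to 10 where the odd ones are "drop" rules, asking for drop rules sorted by id, backwards, offset 1, limit 2 yields rules 7 and 5. Filtering before sorting keeps the sort working on the smallest possible set, which matches the SQL behaviour mentioned in the talk.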

B

So,

first,

how

important

is

it

to

iterate

over

stable

result

sets?

I

think

it

was

Jan

who

had

posted

a

comment

about

this

to

the

list,

but

essentially

should

something

like

cursors

or

snapshots

be

supported

and

just

sort

of

thinking

out

loud,

you

know,

for

configuration

with

RESTCONF,

at

least

and

in

the

last