From YouTube: IETF109-SAAG-20201119-0500

Description

SAAG meeting session at IETF109

2020/11/19 0500

https://datatracker.ietf.org/meeting/109/proceedings/

A

Can we move on to the working group summaries? I think most of these we can just page through. We've gotten reports from most people and, let's see, I've got a local copy up, so I can sneak ahead. EMU just sent one, which I do not believe made it into the slides yet, but that is available. If anybody has any additional comments they want to make about what has been going on in their working group, by all means, please join the queue.

A

B

B

A

C

A

A

A

I have attempted to go through them and extract the technical bits, and to work with the authors to make changes to address them. But I still have to do my final pass through the actual changes in the document, to see whether or not the review comments have actually been addressed. So that's still waiting for my additional review and follow-up, and would potentially either have more changes to the draft or go to the IESG.

A

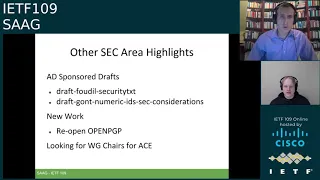

A

B

In addition to the ACE working group chair opportunity: in general, if you are interested in being a working group chair, opportunities like this always come up. If you let us know in advance, we'll keep you in mind when these opportunities do present themselves. So really, do let us know with a short private note, just saying, "Hey, at some point I'd be interested," and we'll see whether there's a match.

B

A couple of other area highlights: we've had a lot of discussion in recent weeks on various vulnerability disclosure guidance. We first had the discussion about the LLC, so we have a published policy for the IETF infrastructure. And most recently, I think we closed it early last week, we have now also documented how we do vulnerability disclosure in the IETF.

B

You can read it at the GitHub link there, and it should be posted relatively soon to the official website. The big addition beyond everything that we already do was, first, having it written down, but we also now have a last-resort alias that will go to Ben and me if the discoverer of a vulnerability can't find the right working group. The other thing Ben and I wanted to plug is that we keep a working list of what we call the common DISCUSS topics.

B

So if you're working on a document, or if you're reaching out and helping with security issues in another working group, we have this working tick list of things to consider as documents go forward, beyond all the official documentation we have. And if you have suggestions of other things to add to that list, we really do welcome feedback.

B

The other thing Ben and I wanted to quickly highlight: our pipeline grows and shrinks, and we've been putting in a concerted effort to make sure that we're working the documents as fast as we can. We know that we are a gating function to moving them forward. So we just want to highlight that we've been pushing hard, and we believe everything is through Pub Req as of these screen caps from a couple of days ago.

What we would also call out is that our reviews of these are agile. If you have a document with us in the pipeline, we would typically handle it first come, first served. But if you have some constraints, where you think there are dependencies on the documents, or the way the authors have resources requires that we look at something sooner...

B

A

Yes, I wanted to second that. It is very helpful to know if there's a particular document that has some additional constraints. I know we're both happy to try and work to meet those, but we have to know about them in order to do anything. And just to reiterate, the Publication Requested queue has gone to zero, but it used to be quite large. So there's a little bit of...

E

E

E

E

A

F

A

B

Absolutely. If you'll notice, the write-up of that is actually still on GitHub. We have not yet done the necessary steps to put things on the website itself. Publishing the key on one or more key servers is still one of those last steps we need to do, in addition to a little bit of editorial polish we still have. So that's on the tick list before we launch.

B

So Ben and I just really wanted to reiterate that, while we do a lot of document review, there's a tremendous amount of magic and effort that happens before things get to us, and that also helps us tremendously. That's only possible through the SecDir review process. Folks on that team really give of themselves, and they shoulder quite a heavy burden to do last call reviews, and they are there for the telechat reviews.

B

They are the resources we have when working groups want an early review and do that iteration, and it frankly makes the document quality so much better by the time it gets to the IESG. We can't thank that team enough for what they do, so we just wanted to quickly and publicly recognize all of the reviews that have happened, and who those reviewers have been, since the last time we got together at IETF 108. So again, thank you, everyone. You're really helping us and the community.

A

All right, so the next agenda item was this terminology question about "on-path" or "man in the middle," and what does this actually mean? If we go to the next slide: this was a thread that was started on SAAG by Michael Richardson, and I've got the link in the slides to the initial message of the thread. Michael also tried to do a summary as well, probably a few days ago.

A

At this point, though, the days are blurring together. But to reiterate some of that for this audience, if we go to the next slide, there were a few interesting points. Obviously, many of these terms that we bandy around don't actually have a precise definition. "Man in the middle" has been used for probably decades, and the landscape in which we try to use...

A

...when one thing would be a problem or when it would not, and to be able to talk about the distinctions, versus just "there's an attack" and "there's not an attack." There can be a lot of context around that, in terms of how severe it would be or in what cases it would be problematic. So, next slide.

E

E

A

But if we go to the next slide, there are some questions: is this really a useful taxonomy to have? Is there more detail in what's going on that we need to have terms to be able to talk about? And, of course, the pervasive question, perhaps even the theme of this talk: are these terms well defined and unambiguous?

A

H

This is obviously full of bikesheds, as we saw on the last slide, but it is real. I think it would be very important for the IETF in general to have a small set of terms that are usable currently across working groups. We've had old definitions and such, but I think doing something, and trying to finish it within the next nine months or a year, would...

A

A

A

Thank you. That would be quite helpful, and presumably the results of that can be input for Paul as we try to prepare a potential document for specific usage in the IETF, to summarize what we found from the literature. So, Jonathan, I assume you're done, so you can leave the queue. I don't think I have the option to do that.

A

J

Hi, I guess you can hear me; I see the little bar there. I'm a little bit confused about what problem we're actually trying to solve here. Is this a question of inclusive terminology? I thought that was where this came from, but it seems like there's also an issue of trying to come up with a finer-grained definition of what an in-the-middle attack is.

J

So I guess I'd like to understand that. If it's in terms of inclusive terminology, there's also this other work that's going on in the IETF terminology GitHub repository. I don't know exactly who's managing that, or what working group or whatever is dealing with that, but that's going on as well, and I'm seeing that cited externally.

A

Okay, just an administrative note: I do have, I think, one or two more slides, so I will cut the queue on this slide, and we can drain this queue and then go on a little bit more. To answer Jim's question now: the primary problem here is that when you say "man in the middle," not everybody is thinking the same thing, and we would like people to all be thinking about the same thing for any given term.

A

I

A

K

Hi, I wanted to suggest that, if we come up with some new terminology, it can actually also be used in formal analysis. I think it would be unfortunate if we have all these different fine-grained terms and then there's no equivalent way to use them in the underlying formal technologies for formal analysis, because nowadays we use those more and more often. So there had better be some mapping between them.

A

H

So yeah, just a quick answer to Jim Fenton. The other reason why having good terminology is important is not just so that we in the IETF can express security or understand it, but so that people reading papers that we write about our protocols do as well. A very good example: in a paper that I've written recently, I used "man in the middle," "machine in the middle," and "attacker in the middle," and got some interesting responses from security novices, but people who understand the Internet.

One, with "man in the middle," is they said, "Well, does the person have to be sitting there watching all the time?" So clearly we should make whatever that phrase is going to be clear that it's not a person, which is a completely understandable thing. But the other, and this has come up a lot with the questions around DoH, is that it's really not in the middle.

H

Almost all of these attacks are very, very near one edge or the other. It probably was in the middle 20 or 30 years ago, but now many of the ones that we are concerned about (I shouldn't say most) are in fact very near an edge. So saying "in the middle" hides some of the attacks that we actually care about, namely system administrators putting security-busting proxies near users.

A

A

So this is actually the last slide, so the queue is open again. Sorry for the strange split with the question slide. I think my overall takeaways here are that, yes, it's sounding like we do want to have a more fine-grained, more precise set of terminology that we can use, and there are definitely some constraints on how we should do that, to make it most usable for us and for others.

C

A

C

B

I just wanted to reiterate two of the other constraints we heard in the discussion from Jabber. I think Mohit was saying we should make sure we stare at the written guidance we already have in the IETF. And Jonathan noted, and Hannes reiterated, the idea that the academic literature may also have things to say, and we want to make sure what's there is consistent and that we don't publish something that conflicts.

A

L

All right, perfect. Well, thanks for having me. I will give a warning here: these slides are packed dense. My intent is not to go over them, but if you find me exceeding 0.8 eckerd, please yell and I will try to slow down. When it comes to some of these topics, I know that many people participated in some of the discussions that I'll be discussing. My intent is not to misrepresent things, so if you find something that you don't believe is accurately presented, please do correct me.

L

The only thing I would encourage is: could you save it to the end, to see how much it impacts things, just to help things progress? So with that, we can go ahead to the next slide. All right. So yes, this talk is intended for people designing, implementing, or using a protocol that uses certificates.

L

This is not meant to be the "how do you run your PKI for your organization" talk; it's more about how you run a PKI on the Internet. I'm not trying to tell you how to run a PKI on the Internet, but I'm trying to share some stories about what's worked and what's not worked. So, next slide. Part of where this talk came from was discussion about the use of what we colloquially call the Web PKI, and there was some confusion.

L

You know: what's the problem with using the Web PKI, and what are the gotchas? So this is interesting for you if you're not sure what the Web PKI is, you hate the Web PKI, or you love the Web PKI. And there is some implied thinking that needs to be done if you think mutual TLS is all that great or that it's an easy solution. So we can go ahead, next slide.

L

L

So you can go ahead, next slide, and I guess I don't need the covers. Here's the next slide. The first one that I'm going to talk about is the PKI that I'm calling PKI 0.1. It's sort of the alpha. It comes about with X.509 in 1988, and the important part to capture here, when we talk about what the X.509 PKI was, is that it was part of the Directory. It was merely an authentication protocol that fit into the overall X.500 series of specifications that describe how the Directory worked.

L

L

It was merely an asymmetric cryptographic exchange to authenticate to the Directory. After that, everything you needed would be in the Directory, or, if you needed to communicate with someone in the Directory, you could use it to look up their information and communicate with them there. Next slide.

L

It made use of X.509 certificates, but without the inherent dependency on the Directory. The first approach, as you see in RFC 1114, was that there was a way to name things, but it was mostly determined by RSADSI. They were going to run the PKI for everyone, and they would be able to certify what are known as organizational notaries, who would then be responsible, within the scope of that organization, for defining a namespace.

L

Now, not everyone was organizationally affiliated, so for those that were residential, RSA would also run a CA. And despite there being organizational notaries, the suggestion was that RSA would in fact run these as well in a practical sense, since running a PKI is hard. In the subsequent privacy enhancement RFCs, RFC 1422, it became a bit more complex.

L

Now, the source of information here, since you're not really using the Directory, comes from the message headers. Everything you need is in the message that you get, and you don't need to go through any lookups, because it's a very flat structure. You have the policy registration authority, the policy CAs, and the CAs beneath them, but you don't really get much deeper than that. Next slide.

L

So around this time, Netscape comes to town with SSL, kicking it off in November 1994. I would say its goal was rough consensus and running code, but they did a sidestep around the IETF because they were concerned it was going to take too long. So they threw up a rough draft spec and shipped a very beta copy of Navigator that implemented SSL.

The reason for doing it this way was that they were reportedly deeply concerned about what Microsoft would do in terms of bringing commerce to the web, so they wanted to be there first, to ensure that there wasn't a proprietary solution. Another part of this, though, was that they were aiming for minimal change to existing software.

L

It needed to work on the computers people had, with the software people had, and this largely meant doing things in userland, which is why it became the Secure Sockets Layer, with no other changes. They used X.509 because RSA said to use X.509, and that seemed like a good idea at the time; what else are you going to use? And there were very few external dependencies.

The very first version of Netscape merely checked against a list of issuers that were baked into the binary, and every certificate was directly issued by one of those issuers. There were no chains, not even in the protocol. There were no naming rules. In fact, there wasn't really anything going on whatsoever.
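
As a rough illustration of the flat model just described (a built-in issuer list, no chains, no naming rules), the check can be sketched as below. This is a minimal sketch, not Netscape's actual code: the `Certificate` record, the issuer names, and both function names are hypothetical, and signature verification is left out entirely.

```python
from dataclasses import dataclass

# Hypothetical stand-in for the issuer names compiled into an early browser binary.
BAKED_IN_ISSUERS = frozenset({
    "Example Root CA 1",
    "Example Root CA 2",
})


@dataclass(frozen=True)
class Certificate:
    """Illustrative record; a real certificate carries far more than two names."""
    subject: str
    issuer: str


def directly_issued_by_known_issuer(cert: Certificate) -> bool:
    """Accept only certificates whose immediate issuer is on the built-in list.

    There is no chain building: an intermediate CA never helps, because only
    the immediate issuer is consulted. Signature verification and any name
    checks are deliberately omitted from this sketch.
    """
    return cert.issuer in BAKED_IN_ISSUERS


def describe_for_user(cert: Certificate) -> str:
    """Text a user might be shown before deciding whether to continue."""
    return f"Issued to: {cert.subject}\nIssued by: {cert.issuer}"
```

A certificate issued through an intermediate fails this check even if the intermediate was itself issued by a listed CA, which is the practical meaning of "there were no chains."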

So in the early versions, there was nothing being checked. The idea was that users would manually inspect certificates and decide whether or not to continue. After they released the specification...

L

L

L

L

The ITU had proposed a number of changes that became X.509v3, in particular extensions. Now, the goal with PKIX, and again I hope I am not misrepresenting anything, was no presumed dependency on the Directory, and to allow multiple roots, without necessarily having ISOC run the root or having RSA run the root, with many ways to name things. There were some important goals set out in that first draft, which would become many of these RFCs: minimal configuration by users and minimal interactivity requirements.

L

Things needed to be able to be automated and verified with automated tools, and boy, golly gee, wouldn't it be nice to have automatable certificate enrollment and management using well-defined protocols? Now, that took a number of years from when that work started to when that work was specified, and in the meantime Netscape and Microsoft were waging a very exciting war. Next slide.

L

So this is what I call Web PKI versus Internet PKI: this period where the PKI that Netscape had deployed really started off with a presumption of user interactivity. They did add common name checking, and would later add subject alternative name checking, but really the assumption was that users would be inspecting, using the document information.

L

L

The Web PKI was focused on browsers, and it started off that way, but the move by Microsoft to bring IE into the operating system started to create what I would argue is the precursor to the Internet PKI, because they wanted to expose these services as general operating-system-level services, where anyone could write an Internet-enabled application, whereas Netscape started off really focused on the browser.

L

Netscape would add support for SSL to some of their other protocols, like SMTP and FTP, with respect to the Communicator product, but this wasn't how things started, and it blurred the lines between the Web PKI and the Internet PKI. Here's a set of CAs that can be used for a variety of protocols and a variety of Internet services, and it just works. Is it supposed to be just for the web? Is it supposed to be for anything? It was a bit ambiguous, and the selection of CAs was, admittedly, very weak.

L

It was mostly based on organizational reputation and business risk, not necessarily security risk. So you're a bank, you issue certificates, and you seem pretty trustworthy because you're a bank and there's a lot of regulation around banks. That was what made a lot of the early CAs. The audits wouldn't come until later. Next slide. I'm going to call this the precursor to the Web PKI. This is when things started to get really messy. The CA/Browser Forum, coming about in 2006 and 2007, started blurring things.

L

The CAB Forum started because CAs were unhappy that certain competitors were issuing certificates with only domain names. They felt that this was fundamentally the wrong way to do certificates, because they had started out with legal names, even though PKIX was really oriented around Internet naming. Browsers were unhappy with CAs in terms of just how widely divergent everything was. So a meeting was held, and it did not go the way the originators intended, because rather than forbidding domain validation, what ended up happening was the development of a new standard, EV.

Browsers were interested in combating phishing, Microsoft most of all with Internet Explorer, and so they proposed this EV standard. The reason why I call this Web PKI 0.9 is that it developed a PKI that was very focused on user interactivity, and very focused on web browsers and web-browser-specific needs. The semantics of an EV certificate outside of a web browser really don't make sense. Yes, there's some additional validation, but none of that is actually part of the certificate processing model of RFC 3280.

L

L

And so what I'm calling the birth of the Web PKI was when the CAB Forum adopted the Baseline Requirements. The context here is that the CAB Forum was created, and CAs (some CAs, I should say) were unhappy about domain validation in certificates and created this new EV standard. So now they want to come back to minimum standards and figure out what is the absolute bare minimum for certificates. The work on this, where it made progress, was incredibly contentious, and incredibly profane on the mailing list, I will say.

And then DigiNotar happened. DigiNotar happened in the fall of 2011, and it really shook trust in the system. The version that had been circulated, which had been quite controversial and which allowed for both domain validation and organizational validation, came about, and it also set up a number of policies for how certificates could be issued and when they would be issued. Most importantly, for example, the deprecation of 1024-bit certificates was in that very first version.

L

That way, they did not have to disable the 1024-bit code within their product until the majority of servers had migrated off their existing 1024-bit certificates. So it was a bit of a flag day, and a way of managing that. But importantly, it also marks a point where browsers really began to tell CAs what they can and can't do, and that's why I call it the birth of the Web PKI: it was an application community defining policies for the CAs and for the PKI.

L

So it would not be correct to talk about Web PKI 1.0 without talking about 2.0. What I'm calling Web PKI 2.0 is really the point at which people realized that there were in fact separate PKIs: that there was this concept of a Web PKI being a majorly different thing, that it wasn't just about the software verification quirks within browsers, but that there were in fact a different set of policies, expectations, and needs in user communities.

And so: Symantec, the largest CA. Their history of key material goes back to the days of RSADSI and VeriSign, and I do see some participants here who were around VeriSign in those days. They had some issues and ultimately got distrusted in browsers. But the problem was that the roots were embedded on virtually every device out there. Part of that was by design of RSA: in the early days, if you wanted to get a license for the RSA patent, you had to include RSA's root key material, which would become the VeriSign root.

Part of it, though, was also just "that's what everyone else is doing; it seems like a good idea." I call it the split because we saw a lot of issues with this. The classic example, of course, is a single web server providing a single API endpoint, as you see there, and a non-updatable device. A number of major media companies had set-top boxes and TVs that only trusted a few CAs, but all of their users used the same web server and all talked to the same endpoint, and now suddenly you had a conflict.

L

If I have an old set-top box, it doesn't work with new certificates, but if I'm using a browser, it does not work with these old certificates that are being deprecated, and so there wasn't a good way. Now you had to think about the situation: I have a single web server, I actually need different certificates for this, and because I need different certificates, I even need different domains as a way to distinguish which certificates to send.
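
One way to picture the "different domains for different certificates" workaround is a lookup from the requested hostname to the chain the server presents. This is only a sketch under assumptions: the hostnames and chain labels are made up, and it presumes the server learns the requested name somehow (for example from TLS Server Name Indication, which the talk does not mention).

```python
from typing import Optional

# Hypothetical hostnames and chain labels, for illustration only.
CERTIFICATE_BY_HOSTNAME = {
    # Name that only the non-updatable devices are configured to call.
    "legacy-api.example.com": "chain-from-old-root",
    # Name used by browsers and anything else that tracks current root stores.
    "api.example.com": "chain-from-current-root",
}


def select_certificate(requested_hostname: Optional[str]) -> Optional[str]:
    """Pick which certificate chain to present, keyed on the requested hostname.

    Returns None when no name was supplied or the name is unknown; the caller
    then has to fall back to some default or refuse the connection.
    """
    if requested_hostname is None:
        return None
    return CERTIFICATE_BY_HOSTNAME.get(requested_hostname.lower())
```

The point of the sketch is that the hostname is the only discriminator the server has, which is why the two client populations end up on separate domains even though one web server backs both.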

L

The other aspect, of course, was that this deprecation was not uniform across all of Symantec. Some operating systems would continue, and still to this day continue, to trust Symantec certificates, or trust them for other purposes, like email. So this also revealed that different protocols have different PKI needs and security needs. Some vendors decided that the security issues that affected Symantec in the Web PKI did not affect other protocols and other purposes, and so I call it Web PKI 2.0.

L

L

L

As for how we got here: the reason why I mention it is that throughout this history there were various points where we saw branches: branches in approach, branches in need, branches in what was supported. This affected the design of these different PKIs, the Web PKI and the Internet PKI, and what's capable in software. These branches have implications if you're trying to use certificates that generally work, and you have to think about what the answers are, because they have material impact. An example, next slide, is thinking about the connectivity model.

L

Next slide. Exactly, you can see Kerberos, right? This is my terribly hacky attempt at the classic Kerberos model, where the client talks to a server and also talks to the KDC, and the assumption here is that the server and the KDC don't have to talk, because the client acts as the mediator. So that's what I mean by the connectivity model. Next slide. X.509v1's connectivity model kind of assumed that everyone was talking to the Directory. X.509 was just a subset of the overall Directory protocols.

L

It was how you authenticated to the Directory, and you would use the Directory to find other certificates. Now, there was the allowance for out-of-band communication, but the idea here is that, say, CRLs would be stored in the Directory. If you needed to figure out certificate paths, those would be stored in the Directory. Everyone talked to the Directory. There was strong connectivity there, and equally there was strong connectivity between the client and the server. Next slide.

L

The Web PKI connectivity model is a little messy. We assume that the client can talk to the server, but one of the realities here is that we don't assume that the client can talk to the CA. It was realized very early on in many of the Web PKI implementations that, in fact, it's really hard to get a packet from point A to point B on the Internet reliably, especially from client computers, given everything from local firewalls to dodgy Internet connections that you don't really control.

L

So there isn't an assumed connection here from the client to the CA, but there is, to some hand-wavy degree in the modern Web PKI, an assumed connection to the browser vendor. You need to find out if you have new updates, so you've probably got a communication channel to talk to the browser vendor, and for the browser vendor...

L

L

L

This

is

somewhat

of

an

oversimplification,

but

with

PKIX,

the

RFC

2459,

3280,

5280

design,

there's

not

really

any

strong

assumptions

whatsoever.

Now

the

client

and

the

server,

we

presume

that

they

have

a

path

for

exchanging

messages,

but

everything

else

is

fair

game,

whether

it's

one

CA

or

many

CAs,

it's

a

bit

of

an

unreliable

connectivity.

Now

this

is

important

because,

in

order

for

many

of

the

security

guarantees

to

work,

you

actually

have

to

figure

out

what

is

the

actual

connectivity.

So,

next

slide.

L

So

an

example

of

this

right,

the

server

can't

talk

to

external

entities,

the

client

can't

talk

to

external

entities,

so

you

have

no

way

to

actually

fetch

or

deliver

revocation

data.

If

the

client

and

the

server

are

the

only

two

parties

that

can

talk

and

you

have

a

certificate

chain,

there's

no

way

to

get

that.

So

what's

your

solution?

Well,

a

solution

is

short-lived

certificates.

You

don't

have

to

worry

about

revocation

now;

you

just

let

the

lifetime

of

the

certificate

be

L

what

it

is.

The

downside

of

that

is

now

this

introduces,

into

your

PKI

and

your

protocol

design,

that

you

have

to

think

about

certificate

lifecycle

management.

You

also

have

to

think

now

about

how

third-party

CAs

in

that

relationship

work.

If

your

clients

don't

have

a

way

to

change

what

CAs

they

trust

and

your

servers

don't

have

a

way

to

change

what

CAs

they

trust.
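
The short-lived certificate approach mentioned above can be sketched roughly as follows. This is only an illustration under stated assumptions: the seven-day limit, the two-thirds renewal point, and the function names are hypothetical and do not come from the talk or from any specification.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy value: the longest lifetime still treated as "short-lived".
MAX_SHORT_LIVED = timedelta(days=7)


def is_acceptable(not_before, not_after, now, max_lifetime=MAX_SHORT_LIVED):
    """Accept a certificate on its validity window alone, with no revocation fetch."""
    if not_after - not_before > max_lifetime:
        # Too long-lived to rely on expiry instead of revocation data.
        return False
    return not_before <= now <= not_after


def needs_renewal(not_before, not_after, now, fraction=2 / 3):
    """Lifecycle management: renew once a fraction of the lifetime has elapsed."""
    return now >= not_before + (not_after - not_before) * fraction


issued = datetime(2020, 11, 19, tzinfo=timezone.utc)
expires = issued + timedelta(days=3)
print(is_acceptable(issued, expires, issued + timedelta(days=1)))
print(needs_renewal(issued, expires, issued + timedelta(days=1)))
```

The point of the sketch is the trade: the relying party never needs a path to a CA, but whoever holds the certificate now has to renew it on a schedule.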

L

L

There's

a

problem,

though,

with

assuming

that

OCSP

stapling

is

viable,

which

is

if

the

client-built

or

verified

certificate

path

is

different

than

what

the

server

sent,

then

those

OCSP

responses

the

server

sent

aren't

helpful,

and

in

order

to

solve

this

problem,

you

need

strict

requirements

on

how

certification

paths

are

built,

so

that

your

client

and

your

server

can

agree

on:

this

is

the

right

path.
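
The stapling mismatch described above can be illustrated with a small sketch. The certificate identifiers are hypothetical placeholder strings, and the dictionary stands in for stapled OCSP responses; no real OCSP parsing or TLS library is involved.

```python
def unverifiable_certs(client_path, stapled_responses):
    """Return the certificates in the client's path that have no stapled status."""
    return [cert for cert in client_path if cert not in stapled_responses]


# The server staples responses for the path it sent (hypothetical names).
server_sent_path = ["leaf", "intermediate-A"]
stapled = {cert: "good" for cert in server_sent_path}

# The client builds a different path, for example through a cross-signed intermediate.
client_path = ["leaf", "intermediate-B"]

print(unverifiable_certs(client_path, stapled))
```

When the client's path diverges from the server's, at least one certificate has no revocation status available, and a client with no other connectivity cannot go and fetch it.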

L

L

That

was

part

of

what

prompted

this

but

EAP-TLS

is

this

example,

right,

where

you're

bringing

a

client

onto

the

network,

they

don't

have

connectivity

and

you

need

to

bring

them

up,

and

this

creates

design

challenges

for

your

PKI

when

your

client

can't

talk

to

the

network.

A

real-world

example

of

this,

not

trying

to

promote

Google

products:

when

early

Chromebooks

were

released,

they

had

a

cellular

modem

in

them.

This

is

the

cr50s.

L

The

problem

with

that

is

in

order

to

use

that

cellular

modem,

you

need

to

activate

the

cellular

modem,

so

you'd

go

to

a

cellular

provider

in

this

case,

Verisign,

or

sorry,

Verizon's

website,

and

you

would

activate

your

cr-50

and

you

would

have

cellular

connectivity.

There's

one

problem

with

this:

Verizon

only

allowed

connectivity

to

their

servers,

they

forgot

to

allow

connectivity

to

Symantec's

OCSP

servers

and

so

Chromebooks

would

fail

to

connect

and

fail

to

be

able

to

activate

their

cellular

connection

because

they

had

no

ability

to

fetch

the

revocation

data,

and

for

a

brief

window

L

this

was

a

hard

failure

on

Chromebooks,

and

so

it

created

at

first

slow

connections

where

it

would

sit

around

for

60

seconds

spinning

and

then

also

potentially

hard

fail.

These

are

terrible

user

experiences

when

you

just

want

to

use

a

device,

and

this

affects

your

PKI

design,

because

these

constraints

change

how

you

design

your

protocol,

your

PKI.

Next

slide.

L

Another

thing

that

is

not

entirely

obvious

is

thinking

about

the

naming

scheme,

and

so

I

mentioned

the

Web

PKI

started

out

with

this

idea

of

show

it

all

to

the

users.

This

naming

scheme

really

leaned

heavily

on

the

idea

of

civil

legal

names,

with

the

idea

being

that

RSA

would

verify

the

name

for

some

value

of

verify

or

whoever

had

the

RSA

license

would

verify

and

that

these

would

all

be

trustworthy.

So

there

weren't

really

rules

on

verification.

With

PKIX,

L

there

was

a

strong

interest

in

how

do

we

build

something

for

the

Internet,

right,

DNS

names,

but

also,

with

the

legacy

X.509v1,

there

was

the

globally

unique

name,

the

distinguished

name.

It's

distinguished.

However,

naming

is

not

so

simple.

I

know

I'm

preaching

to

the

choir

here.

DNS,

of

course,

has

many

zones

and

can

involve

many

different

naming

authorities.

L

You

have

to

decide:

how

do

you

reflect

that?

Is

that

something

that

should

be

reflected

in

the

PKI?

You

know,

there

is

a

model

of

PKI

where,

for

every

DNS

zone

split,

there

is

a

potential

new

sub-CA

that

can

allow

delegation

and

control

just

within

that

namespace.

If

you

look

at

say,

the

early

drafts

of

PEM,

there

was

a

very

strong

coupling

of

restricting

those

organizational

notaries

to

a

very

specific

global

namespace,

which

is

the

organization,

but

then

allowing

them

free

rein

within

that.
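
The idea of restricting a delegated CA to one namespace can be sketched as a label-wise suffix check on DNS names. This is a simplified illustration in the spirit of name constraints, not a full implementation of any specification, and the function name is hypothetical.

```python
def within_namespace(dns_name, permitted_subtree):
    """True if dns_name equals permitted_subtree or sits beneath it, label by label."""
    name = dns_name.lower().rstrip(".").split(".")
    subtree = permitted_subtree.lower().rstrip(".").split(".")
    # Compare whole labels so that "badexample.com" does not match "example.com".
    return len(name) >= len(subtree) and name[-len(subtree):] == subtree


print(within_namespace("mail.example.com", "example.com"))
print(within_namespace("badexample.com", "example.com"))
```

A sub-CA scoped this way has free rein inside its own subtree and cannot issue for a name outside it, which is the coupling described above.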

L

So

another

way

to

think

about

it:

like

looking

at

the

DNS,

the

Web

PKI

was

a

global

naming

scheme.

You

didn't

have

to

work

with

the

coordination

of

any

of

the

zones.

Any

CA

could

issue

for

any

domain

name

on

the

internet.

That

is

wonderful

and

terrifying.

So

you

can

see

some

of

these,

but

also

you

have

to

think

about

what

it

means

for

services

and

protocols.

L

So

I

mentioned

here,

you

know,

should

an

HTTP

server

on

port

80

be

able

to

obtain

a

certificate

for

your

SMTP

server?

Just

because

I

control

the

web

server

doesn't

necessarily

mean

I

am

in

control

or

authoritative

for

the

SMTP

server,

and

if

I

can

obtain

a

certificate,

can

I

potentially

spoof

that

connection?

And

so

SRV

names

could

help

here.

That

is

certainly

one

option.

Another

option:

you

could

put

it

all

in

the

DNS

name;

you

could

use

extended

key

usages

that

have

semantic

name.

L

L

L

The

Web

PKI

took

a

very

different

turn:

it

started

off

as

roughly

one-year

certs,

because

that's

when

organizational

information

could

be

verified

and,

between

the

combination

of

domain

names

and

the

quest

to

provide

ever

cheaper

services,

certificates

started

getting

longer,

and

by

the

time

the

CA/B

Forum

came

about

and

adopted

the

Baseline

Requirements,

there

were

some

CAs

issuing

10-year

certificates

for

a

domain

name.

I

assure

you

domain

names

don't

usually

last

for

10

years.

L

L

So

I

mentioned

a

little

here

that

domain

names

can

change

more

frequently

than

annually.

We

can't

just

assume

a

one-year

cert.

Research

here

is

like

BygoneSSL,

that

looked

at

how

certificates

changed,

sorry,

certificates

did

not

change,

were

not

revoked,

even

though

control

of

the

domain

name

or

where

the

domain

pointed

was

changed,

and

so

this

creates

new

security

risks.

L

So,

as

I

mentioned

earlier,

you

know,

the

Web

PKI

that

Netscape

shipped,

that

became

the

Web

PKI

of

today,

really

was

just

a

quick

hack.

The

assumption

here

was:

get

it

to

market,

fix

it

in

post.

There

was

strong

confidence

that

IPsec

would

replace

all

this,

as

with

DNSSEC,

and

this

would

just

be

a

short

thing.

L

And,

of

course,

this

last

bit

of

"are

names

unambiguously,

interoperably

machine-parsable":

domain

names

turn

out

not

to

be

as

clear,

because

you

have

specifications

like

RFC

5280

talking

about

the

preferred

name

syntax,

and

then

you

have

the

running

code

that

allows

a

lot

more

than

that

to

hit

the

wire

and

actually

be

resolved.

The

wire.

L

L

I'm

not

going

to

try

to

solve

that

discussion

other

than

to

note

that

the

threat

model

from

CAs

being

able

to

do

that

global

issuance

is

slightly

tempered

by

the

fact

that

clients

can

change

how

they

do

their

DNS

resolutions,

or

potentially

their

IP

connectivity.

Will

BGPsec

and

DNSSEC

save

us?

Maybe.

PKIX

is

a

very

robust

model.

L

It

allows

the

federation

of

all

these

PKIs

to

match

from

the

bridge

and

all

of

these

wonderful

techniques,

so

that

you

can

be

very

selective

and

you,

unfortunately,

can

get

the

lawyers

involved

and

figure

out

how

all

this

works.

The

downside,

of

course,

is,

in

practice,

everyone

ends

up

federating

with

everyone,

and

you

have

no

idea

who

you're

trusting

and

why

you're

trusting

them,

and

this

is

practically

worked

out

in

the

Web

PKI.

L

So,

next

slide.

Another

one

is:

who's

the

policy

authority?

Who

decides?

Who

writes

the

rules?

This

is

something

that

I

think

causes

people

the

most

pain

when

thinking

about

Web

PKI

as

Internet

PKI,

because

why

should

browsers

set

the

rules?

And

this

is

because

it

is,

to

a

degree,

a

browser

PKI,

for

better

or

for

worse.

So,

in

order

to

achieve

the

security

and

interoperability

you

need,

you

need

folks

to

agree,

and

this

is

where

SDOs

can

help.

L

But

I

think

we

know

that

the

IETF

is

a

bit

shy

around

policy

and

for

good

reason.

So

you

need

a

policy

authority

and

I'm

not

trying

to

say

that

it

can't

be

an

SDO

just

that

in

general

it's

not

an

SDO,

because

you

need

to

be

able

to

say

that

x

is

not

secure

and,

if

I

say

x

is

not

secure

and

you

disagree,

I'm

missing

out