From YouTube: IETF109-WEBTRANS-20201116-0900

Description

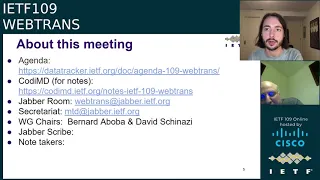

WEBTRANS meeting session at IETF109

2020/11/16 0900

https://datatracker.ietf.org/meeting/109/proceedings/

A

Slides

So the session is being recorded, and if you're here, you're automatically in the blue sheets. Please join the session Jabber room and use headphones (although I'm not doing that; I'm using an echo-cancelling speakerphone), and state your full name before speaking so we can get you into the minutes. David, do you want to give a few tips?

C

So the Meetecho tool makes sense, but it's not necessarily very intuitive, so you need to do separate things. If you want to speak, you first need to enter the queue, and then the chairs will call on you at some point. You'll have to manually leave the queue yourself when you're done speaking. Also, once we call on you, we'll just say so: we don't have a button to press to let you in like last time, so you need to enable your audio yourself by unmuting.

C

So that's the button here. Then, when you're done, you kind of stop by saying you're done, and then you mute and we'll move on to the next person. We encourage folks to use their camera if they're comfortable. It's a lot easier to understand each other, especially across different accents and everything, when you can kind of see the person you're talking to, but that's not a requirement by any means. Yeah, also.

A

Okay, the Note Well, just a reminder of IETF policies. You've probably heard this several times already if you've been attending other meetings. By participating, you agree to follow IETF processes and policies; definitive information is in the documents listed below and other BCPs. If you want more info, please talk to the working group chairs or the ADs.

A

So, for this meeting, the agenda is posted and it's up to date. We will want a volunteer for note-taker. I believe we have a volunteer for Jabber scribe, but we will want somebody to take notes. We have the CodiMD at this link, so it's easy to do, but we do need a volunteer so we can keep notes on what is about to transpire.

A

We really appreciate it. We really do. Okay, so here is the agenda we have. We have the preliminaries, which you've just talked about; we should probably do the agenda bash as well. David will handle the queue, and then we're going to have Will Law from the W3C WebTransport Working Group give us a little update.

A

Luke will give us some developer feedback from the WebTransport origin trial. Then we'll have Victor do the overview and requirements, Eric will do HTTP/2, and then Victor will do the QUIC and HTTP/3 drafts. Then we'll have the wrap-up and, hopefully, a bunch of time for discussion during this session.

A

Okay, well, I can give his slide, probably well enough for now, so I will do it. The W3C WebTransport Working Group has been established: the charter has been published, the working group has been created, and all that. They held meetings during W3C TPAC (two two-hour meetings), and now they have set up bi-weekly meetings in an alternating time slot of 7 a.m. or 4 p.m. Pacific. It's all on the W3C WebTransport wiki, so you can go and find out all about it.

A

There are lots of issues that have been filed by various people, including folks who are part of this meeting, so work is underway. If you're interested, please, please participate. All right, so now we're going to have some developer feedback. Luke will tell us a little bit about what he has experienced, and I also have a few observations to make. So, Luke, I'd like to hand the floor to you.

A

Everybody, Luke's gonna be giving feedback on the WebTransport origin trial. Here's some basic info about it. Basically, if you've got Chrome or Edge up to M88, like Canary, you can play with it. For the latest API, if you're just working from the API draft, I guess M87 or later is a good thing. There's various info on the web to get more experience with the API. But go ahead, Luke.

G

Yeah, so to that extent as well, there are a few hurdles to get the API going, but the stream part of the API, QuicTransport, works somewhat well (I'll go into some of the specific bugs), and the datagram component works as well. You have to do stuff with self-signed certs, but there are guides out there, which is great. So yeah, just a heads-up.

G

You know, like we're streaming this video call, or you'd be streaming or watching a video download, and anytime you have congestion, it just causes the queue to back up. Either when you're contributing to a website, like when you're creating a new stream (we use RTMP at Twitch), congestion causes back pressure, which increases latency. And then, on my half, I work on the distribution team, the CDN team; we're a low-latency live video CDN. Again, any time your network sputters just a little bit, it causes a roadblock and increases latency. So we really want to use QUIC, more than anything, to investigate how to solve this, and this has some interesting ramifications for how to do it. So, next slide.

G

The crux of our idea for how to do low-latency video is that we really want to break video into components such that, if there is congestion, they're independent of each other. So we want multiplexing: we want to have multiple requests in flight. And, I think more critically, we want to prioritize, such that if there is congestion, we make sure the important data gets there first. So we're using QuicTransport.
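To make the prioritization idea concrete, here is a minimal, hypothetical sketch (not Twitch's code and not a WebTransport API): pending chunks are tagged with a priority, and whenever congestion control grants a byte budget, the sender drains the most important chunks first, so under congestion it is the less important data that waits. The class names, priority values and budget are illustrative assumptions.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import List

@dataclass(order=True)
class Chunk:
    priority: int                        # lower value = more important
    seq: int                             # tie-breaker: FIFO within one priority
    stream: str = field(compare=False)
    payload: bytes = field(compare=False)

class PrioritizedSender:
    """Hypothetical sender that spends a congestion-window budget on the
    most important pending data first."""

    def __init__(self) -> None:
        self._heap: List[Chunk] = []
        self._counter = itertools.count()

    def enqueue(self, stream: str, priority: int, payload: bytes) -> None:
        heapq.heappush(self._heap, Chunk(priority, next(self._counter), stream, payload))

    def send(self, budget: int) -> List[str]:
        """Send whole chunks in priority order until the next one does not fit.
        Returns the stream names 'sent' (a stand-in for real network writes)."""
        sent = []
        while self._heap and len(self._heap[0].payload) <= budget:
            chunk = heapq.heappop(self._heap)
            budget -= len(chunk.payload)
            sent.append(chunk.stream)
        return sent

sender = PrioritizedSender()
sender.enqueue("video-delta", priority=2, payload=b"d" * 600)
sender.enqueue("audio", priority=0, payload=b"a" * 100)
sender.enqueue("video-keyframe", priority=1, payload=b"k" * 500)
print(sender.send(budget=700))   # ['audio', 'video-keyframe']
```

The delta frame stays queued until the next budget arrives; stopping at the first chunk that does not fit keeps lower-priority data from jumping ahead of a larger, more important chunk.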

G

You'd have to go into the area of, like, HTTP push, and you'd have to start mapping: well, I'm gonna have to make the client do these requests in this order, at this frequency. It also gets difficult when you want to have the same API for contributing and distributing video, and you have to deal with firewalls: if the client is pushing video, it's a different API than if the client is downloading video.

G

So it's really nice to have a QUIC-based API where it's just arbitrary streams. It doesn't matter who initiates, and it doesn't matter who's receiving; the API is symmetric in that regard. And QuicTransport is so simple. We don't need connection pooling like HTTP/3 transport, and there's almost no point in having fallback HTTP/2 transport support, because we just don't want to work with TCP.

G

I've gone through and filed quite a few bugs with the Chrome implementation, which is great. More or less, the state machine just needed to be able to handle a few cases better. Really, the only case that worked out of the box was remote-initiated unidirectional streams; those were great. Otherwise, there were always little subtle issues with every other configuration. So here are just a few tickets, if you want to go through them; I think most of them are being worked on.

G

Some of them are fixed. I know the one about using an entire CPU core is fixed; that was just a busy loop in the wake-up logic. But we've run into a few things like half-closed streams. For example, should the WebTransport API require half-close, should it just be vague, or does it defer to QUIC? Those are just a few things that need the draft to mention them explicitly so that implementations don't miss them.

G

So this is a little like a feature bucket list at the very end. Prioritization: we're really concerned about congestion control, and I know it's very hard to do, like a way to pick things out. And there are some issues with datagrams: if you try to send a high rate of datagrams, you end up fighting with the underlying congestion control mechanism, which you also don't have control over. But yeah, that's it.

G

I think I've given more fine-grained feedback by posting issues, at least on GitHub. And yeah, if anybody has any specific questions as well about what the origin trials are like... But it's been great so far, just being able to run QuicTransport in the browser. It's been a lifesaver, even before H3 support is, you know, ready.

A

One thing I think it's important to understand (and this is true of any use case) is whether it's a greenfield use case or whether people already have something they're doing it with. If they do, you have to understand what the bar is to get them to move from whatever they're doing now to what you want them to move to. So in this particular case, for these things, we see that developers today are using WebRTC: the data channel with RTP audio/video.

A

So in the case of remote desktop, typically they'll use the audio/video for screen sharing and then the data channel for the keyboard and mouse events and control. For gaming, we see client-server game streaming that is done exclusively with the data channel, so they send the audio/video and the control traffic for the keyboard and mouse over the data channel.

A

We've also seen gaming done with RTP audio and video, with the data channel being used for the keyboard and mouse as well. And I would mention that there are a number of developers who support both client-server and peer-to-peer use cases. For example, you could stream a game from the cloud, but you could also stream it from your game console to a mobile device. Or the remote desktop could be a remote desktop in the cloud, or you could just be doing remote desktop with your own desktop, for example.

So a bunch of these developers are supporting both client-server and peer-to-peer, and they'd like to have a single code base. They don't want to have to write a completely different implementation for peer-to-peer versus client-server, and that's one of the reasons they like WebRTC: it kind of gives them both. And yes, for the client-server case they need ICE, but in general it's more important to them to be able to write one code base than it is to get rid of ICE. I know one of the things we pitched about WebTransport is that we get rid of ICE, and the answer from a bunch of these developers is: I'll keep the ICE if I can keep doing peer-to-peer. So a lot of these folks have a strong interest in the peer-to-peer extension to WebTransport as well. A few other things.

A

So some of these folks were having issues with the data channel because of the way JavaScript back pressure is implemented in the data channel, so potentially offering them better back pressure was a very attractive aspect of WebTransport. As Luke just mentioned, it's not there for datagrams, but for reliable WebTransport it does solve a lot of the back-pressure problems kind of nicely. So you don't have to have basically what we saw with the data channels.

A

They were doing a lot of application-layer acknowledgement, but for datagrams you still need the app-layer acknowledgement. So in some ways, developers who are using datagrams in this scenario are not seeing a lower level of complexity with WebTransport. They were kind of hoping that WebTransport would allow them to get rid of the application-layer ACKing they were doing to make the data channel function, but they're not seeing that advantage; they still have to do their application-layer ACKing.
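A rough sketch of what that application-layer ACKing for datagrams can look like, assuming a hypothetical framing in which each datagram is prefixed with a 4-byte sequence number that the peer echoes back. It is transport-agnostic and calls no WebTransport API; anything not acknowledged within a timeout is reported as lost so the application can resend or drop it. All names are illustrative.

```python
import time
from typing import Callable, Dict, List, Tuple

class DatagramAckTracker:
    """Hypothetical app-layer ACK tracking for unreliable datagrams."""

    def __init__(self, timeout: float = 0.2,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout = timeout          # seconds before an unACKed datagram counts as lost
        self.clock = clock
        self.next_seq = 0
        self.in_flight: Dict[int, Tuple[float, bytes]] = {}  # seq -> (send time, payload)

    def wrap(self, payload: bytes) -> bytes:
        """Prefix the payload with a 4-byte big-endian sequence number."""
        seq = self.next_seq
        self.next_seq += 1
        self.in_flight[seq] = (self.clock(), payload)
        return seq.to_bytes(4, "big") + payload

    def on_ack(self, seq: int) -> None:
        """Called when the peer echoes `seq` back; duplicate or late ACKs are ignored."""
        self.in_flight.pop(seq, None)

    def collect_lost(self) -> List[int]:
        """Remove and return the sequence numbers that timed out."""
        now = self.clock()
        lost = [s for s, (sent, _) in self.in_flight.items() if now - sent >= self.timeout]
        for s in lost:
            del self.in_flight[s]
        return sorted(lost)

def unwrap(datagram: bytes) -> Tuple[int, bytes]:
    """Receiver side: split off the sequence number so it can be ACKed."""
    return int.from_bytes(datagram[:4], "big"), datagram[4:]

# Usage with a fake clock so the example is deterministic.
fake_now = [0.0]
tracker = DatagramAckTracker(timeout=0.2, clock=lambda: fake_now[0])
d0 = tracker.wrap(b"input-0")
tracker.wrap(b"input-1")             # this one is never ACKed
seq, body = unwrap(d0)               # the peer receives d0 and ACKs it
tracker.on_ack(seq)
fake_now[0] = 0.5
print(tracker.collect_lost())        # [1]
```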

A

The other thing is that they're asking about some basic DevOps issues. For example, say they bring down a gaming server: is there a way to migrate these WebTransport connections to another server? So there are just some practical aspects of clustering and draining that they wanted to understand how to do better.

A

Also: would it be widely supported in browsers? Of course, some of these questions I can't really answer right now. But they were a little bit concerned about the complexity of the protocol, pooling issues with HTTP/3, and whether it would interoperate; I'll talk a little bit more about that. QuicTransport, on the other hand, didn't worry them at all, because it's so simple.

A

If they were using a stock kind of server, they would have to implement their own server combination of HTTP/3 and HTTP transport, probably within a framework. And then the folks who were using Node discovered: oops, there's no QUIC module that's going to be available in any reasonable amount of time, due to, I guess, some blocking issues with OpenSSL. So they're kind of stuck with Python and aioquic. For the people who are comfortable with Python, that wasn't a really big deal, but for those who weren't, it was.

And then they would ask questions like: will HTTP/3 transport be supported widely by CDNs? You know, that's hard to answer. And then there were a few folks doing event streaming, for company events and the like, who are interested in enterprise, and this got interesting because a lot of these enterprises think of HTTP/2 as the thing they use as a transport of last resort.

A

So, you know, a lot of enterprises block UDP traffic. They don't even necessarily allow TCP on all ports, and the problem is that in this kind of enterprise, it's likely that QUIC wouldn't work. So we talked a little bit and said: well, we can do this HTTP/2 failover. The problem there was that if you're an enterprise that's that tightly controlled, you probably don't support HTTP/2 either, so it wasn't clear that HTTP/2 transport would really help them much with this enterprise problem.

Things are very different for the folks who are more on the consumer side, because they don't encounter a lot of these crazy firewalls, but this was just something out there. So, a bunch of questions among the customer base about HTTP/3 transport: how much take-up it would get in the ecosystem, and also interoperability, due to complexity.

C

Questions for Luke? So, yeah, perfect, cool. So, the first one: you mentioned that you weren't interested in an H2 fallback. Just out of curiosity, on a network that blocks UDP and QUIC, what would you use instead? Or would you just say "my feature doesn't work"? That's also a reasonable option; I'm just curious.

C

Well, I guess my question is: you know, if QUIC isn't there, you need something. You can't require QUIC, because some users don't have access to it. So then either you have a fallback inside, you know, underneath the JavaScript layer (that could be H2 transport), or you manually fall back, at your layer, to something else like HLS. I think they're both reasonable options.

G

Oh, so if we did H2 transport, I think the performance would actually be worse than falling back to a different protocol, based on the way we're prioritizing data, because we expect the prioritization to take effect. But if, under the hood, we're using TCP and, you know, the kernel doesn't prioritize, we're just gonna have more buffering.

C

Yeah, no, I think that, especially for use cases that are already on the market or in production today with something, it's natural to fall back to what they've already built. That makes sense, cool. And then I had a second question for you, Luke: you mentioned QuicTransport and preferring that to H3 transport. You know, one of the main questions we're trying to answer today is: do we want to build one of them? Do we want to build both of them? Do we want to build-

C

Okay, so from your perspective, if I'm understanding correctly, you're building your own stack from scratch, and in that scenario, also having to build H3 is more work for limited benefit. The main value-add would be pooling, and if you're not using it, you're getting more complexity with no benefit. Cool, thanks, that makes sense. And-

F

You cannot prioritize on your socket, but if you have priorities that need to cross a reverse proxy, you would have to communicate them, and HTTP provides a way to do that. So if you do not have any form of reverse proxy in front of your video server, I guess you're not interested. That's why I'm asking.

G

So we would use any form of H3 prioritization as an alternative, but yeah. Right now, we assign users to a host directly. If you go to Twitch and look at the network tab, you'll see that when you start a video session, you're assigned a specific host name, and that host is then able to prioritize traffic. From two hosts, we couldn't do it.

I

Thank you, David. So, just FYI, I think it's very likely that BBRv2 will become the default congestion control in QUIC at the end of Q1 2021. It's very close to done after a lot of work, so I am fairly optimistic about that, and I do think it's a much better congestion controller than either BBRv1 or Cubic. I highly support the direction of making a decision on these transports, because I think moving forward is important. On the fallback issue, based on my discussions with YouTube, they also do not need a fallback to TCP, because if they were to have a fallback, they would actually prefer to use HTTP/1.1 and their current solution.

I

That being said, as long as they can say "I only want to use QuicTransport if I actually get QuicTransport", I think they would be happy to use QuicTransport. Because they're importing our stack anyway, the implementation cost is low, but they would definitely not want to fall back to H2 transport. They would only want to fall back to H1.

J

I basically borrowed heavily from Google's example client code, but then wrote my own WebTransport server on top of quiche. That was really an experiment to see, for an existing stack, how much work it would take. That obviously leverages datagrams, and when I started this work, some of it was changes to the library to support datagrams. I kind of expected WebTransport might take some changes to the library too, but in the end it didn't. I was able to just modify our example client and server to speak QuicTransport.

J

So in that way, it was quite low impact. I suspect that for me, adding HTTP/3 transport to that toy server would have been quite simple too, because all the existing HTTP/3 heavy lifting would have been there. But the fact that I didn't do that is maybe telling: it's kind of a bit superfluous.

J

Again, I didn't need connection pooling in this toy example; all I was doing was echoing something back. It's not as complicated as video streaming or anything, but you can see how somebody who's coming kind of new into QUIC would want to do the easiest thing, in some respects. So yeah.

J

I think some of the questions I had, Luke, in terms of what work it took on the server side for you to implement this stuff, have been answered: it seems that you're quite targeted towards this use case, so I think I already got the answer there. So yeah, just cheers for sharing. And I do agree that it would be good for us to come to some answer on what people want from either QuicTransport or HTTP/3 transport, and how the fallbacks all-

K

So it'd be really interesting to see if we can find any data about that, or if it's just, you know, "hey, I've got a congestion controller built into my thing right now, so it's annoying to have this mode where it's not there." The only other thought that kind of comes with that is about the application-layer ACKs.

G

We haven't gone down this route, but one of the problems with congestion control for datagrams is that you don't know when the packets could be dropped by WebTransport. So you have to implement your own congestion control on top, and you kind of end up with almost two algorithms, taking the minimum of both of them.
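A minimal sketch of the "two algorithms, take the minimum" arrangement, under stated assumptions: the application runs its own simple AIMD window fed by application-layer ACKs (swapped in purely for illustration, not what any speaker said they use), and `transport_budget` stands in for whatever the underlying QUIC congestion controller would allow, which a browser API does not necessarily expose. All names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class AppCongestionWindow:
    """Hypothetical application-level AIMD window driven by app-layer ACKs."""
    cwnd: float = 10_000.0      # bytes
    min_cwnd: float = 1_200.0
    mss: float = 1_200.0

    def on_ack(self, acked_bytes: int) -> None:
        # Additive increase: roughly one MSS per window's worth of ACKed bytes.
        self.cwnd += self.mss * acked_bytes / self.cwnd

    def on_loss(self) -> None:
        # Multiplicative decrease, never below the floor.
        self.cwnd = max(self.min_cwnd, self.cwnd / 2)

def sendable_bytes(app: AppCongestionWindow, app_in_flight: int,
                   transport_budget: int) -> int:
    """The effective budget is the minimum of what both controllers allow."""
    app_budget = max(0, int(app.cwnd) - app_in_flight)
    return min(app_budget, transport_budget)

app = AppCongestionWindow()
print(sendable_bytes(app, app_in_flight=4_000, transport_budget=50_000))  # 6000
app.on_loss()
print(sendable_bytes(app, app_in_flight=4_000, transport_budget=50_000))  # 1000
print(sendable_bytes(app, app_in_flight=0, transport_budget=800))         # 800
```

Whichever controller is more conservative at a given moment limits the sender, which is exactly the double-counting the discussion describes.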

G

I didn't realize this until we talked about it on GitHub, but because datagrams don't have flow control, there are definitely states where the QUIC packet could be ACKed, yet the datagram was never actually delivered to the application. So I don't think you can use the underlying ACKs for the datagrams, unfortunately. Thank you. That's a-

C

All right, sorry, folks, I cut the queue after Alan, which I'm hoping everyone heard, because I wasn't sure; I might have been muted at the time. And Daniel, I see your video's on. If you want to speak, please join the queue using the hand-raise button, or, oh, you're just saying... All right, all right, cool, sounds good. All right, then we're going to go on to Victor's next presentation, and let's try to keep the conversation, or the comments, a little bit shorter.

F

Okay, apparently I didn't configure the camera in advance, so I'm sorry you can't see me. But I want to give an update on the one draft we've actually adopted: the WebTransport overview draft. The goal is roughly to sum up what the requirements in the WebTransport API are, and what common properties and semantics we expect from WebTransport protocols.

F

So QuicTransport at first didn't have any headers, and we had some clever tricks to get away with not sending them; that did not work. Then we added a very bespoke unidirectional header format called client indication, and that is what's currently in the Chrome implementation in the origin trial, and in the latest revision of the draft, which I have yet to upload because I also need to solve some issues with error handling.

F

There are actual, fully featured headers, for which I tried to basically replicate the HTTP fields wherever there was an equivalent HTTP field. The fields defined are origin, authority, path, and status, and the proposal is that, since all of the proposed transports have those headers, we should define those headers as a shared property of any transport.

C

Well, thank you, Victor. That was incredibly fast; that really brought us back to the agenda time. Yeah, I'm getting reports from a bunch of people that Meetecho is going through some serious technical issues. If you're trying to speak or join the queue and it's not working, please, like, say... oh, I was gonna say "say so in the Jabber", but apparently the Meetecho chat is busted as well. Go ahead.

J

Yeah, so my hat's available for free on the Snapchat filter thing; I'll send links around later. But the serious point: Victor, just a question about this change. Depending on the direction, like whether people want QuicTransport and not the rest of them, I just wonder if this proposal is still the thing you think is the best option. So it's going to be HTTP-style even if we don't use the HTTP-style transport? I wondered if you could clarify.

L

Okay, so I'll just give what's been going on since the last IETF. Some GitHub issues have been filed, and there's been a little bit of discussion; there are some outstanding PRs. Basically, all of H2 transport is kind of in a holding pattern, waiting for the working group to move forward on what we are doing with HTTP in general, because H2 is definitely, you know, sort of last in the pecking order in terms of transport. So if we're not going to do HTTP at all, we're not going to do HTTP/2.

L

There's an issue open about being able to open streams without additional round trips. For people who might need a refresher: the H2 and H3 drafts, because they can multiplex multiple WebTransport sessions on the same connection, have sort of a step where you open a stream that defines your session, and then you open additional streams that are kind of hanging off that session. So there's just an open issue to discuss: is there a way to do that in one round trip?

L

Can you send the new WebTransport streams referencing the CONNECT stream before you've received acknowledgement from the other side that the handshake is going to succeed? There's sort of an interesting interaction with H3 here, because in H3 the streams could also arrive out of order; in H2, they can't. So, if we allowed this in the H3 draft, the receiver could end up with a WebTransport stream that has no session established for it yet, if you were trying to do it in a single round trip. So I think this issue is still open.
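One way a receiver could tolerate the out-of-order case just described is to buffer streams whose session has not been established yet, up to a limit. This is a hypothetical sketch, not the draft's behaviour (the issue is still open): it assumes each incoming WebTransport stream carries the ID of the session it belongs to, and the class, method names and buffer limit are illustrative.

```python
from collections import defaultdict
from typing import Dict, List

class SessionDemux:
    """Hypothetical receiver-side demultiplexer for WebTransport streams.

    Streams that reference a session whose CONNECT stream has not been
    processed yet are buffered (up to max_buffered) instead of being refused.
    """

    def __init__(self, max_buffered: int = 16) -> None:
        self.max_buffered = max_buffered
        self.sessions: Dict[int, List[int]] = {}          # session ID -> accepted stream IDs
        self.pending: Dict[int, List[int]] = defaultdict(list)
        self.rejected: List[int] = []                     # stream IDs we would reset

    def _pending_count(self) -> int:
        return sum(len(streams) for streams in self.pending.values())

    def on_stream(self, stream_id: int, session_id: int) -> None:
        if session_id in self.sessions:
            self.sessions[session_id].append(stream_id)
        elif self._pending_count() < self.max_buffered:
            self.pending[session_id].append(stream_id)    # session not known yet
        else:
            self.rejected.append(stream_id)               # buffer full: refuse it

    def on_session_established(self, session_id: int) -> None:
        # Hand any early streams over to the newly established session.
        self.sessions[session_id] = self.pending.pop(session_id, [])

    def on_session_refused(self, session_id: int) -> None:
        # The handshake failed, so early streams for it are reset as well.
        self.rejected.extend(self.pending.pop(session_id, []))

demux = SessionDemux(max_buffered=2)
demux.on_stream(stream_id=8, session_id=4)    # arrives before session 4 exists
demux.on_session_established(4)
demux.on_stream(stream_id=12, session_id=4)
print(demux.sessions)                         # {4: [8, 12]}
```

The cap matters: without it, a peer could open arbitrarily many streams for sessions that never get established.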

We don't have a proposed resolution for it. Next slide: datagrams. There's still no definition of what exactly the datagram support looks like in H2 transport. Again, we're not working on it hard, because we're waiting for a nod from the working group here, I think, on the issue.

L

Okay, I haven't read the slide yet, so give me a second. I mean, I think (and this will maybe just feed into the broader discussion about HTTP versus QuicTransport), you know, the HTTP transports have this feature where you can multiplex sessions, right? Whereas QuicTransport doesn't have that feature.

L

So if you want to have multiple WebTransport sessions with QuicTransport, you have to open multiple connections, each one sort of independent. And, you know, I hear people saying "complexity" around the H3 draft, and I'm trying to put my finger on what the complexity is there. One aspect of it is this being able to pool or multiplex multiple sessions together. Obviously, H3 just has, you know, some additional complexity.

C

Point of order, as a chair: I have been informed by the IETF Chair that the Datatracker authentication VM completely fell over, and they're gonna reboot it. That means that at some point it's gonna kick everyone out of the meeting. It's gonna take two minutes to reboot, and then you're all gonna be able to join again. So I'm gonna say: let's keep going, let's keep talking, but if you're kicked out, go make yourself a beverage, come back in a few minutes, and we'll resume.

L

So QuicTransport allows you to have multiple streams on one connection, but it only allows you to specify a single WebTransport URI, whereas the H2 and H3 transports let you open multiple sessions within the same connection, using different WebTransport URIs and directing subgroups of streams towards these different virtual sessions within the connection. Like I said, I think that's a key difference between the two transport worlds, and I think it's also where some of the complexity comes from: when people point to H3 or H2 and say "oh, it's complex", I think that's where some of it's coming from.

F

So, just as a reminder, H3 transport is H2 transport, but over H3. It has datagram support through the QUIC DATAGRAM extension, and the specific mechanism for embedding datagrams into HTTP/3 is described in draft-schinazi-quic-h3-datagram. We are currently converging it with the design decisions in H2 transport. There is a pull request I wrote; everyone is encouraged to take a look at it.

F

It has been redesigned to use stream IDs as the identifier for WebTransport sessions. That is also a design decision that was in H2; it made more sense, and it allowed me to delete two paragraphs of text from the spec. So that's nice, and that's basically it. I made some other minor adjustments, but everyone is welcome to take a look at that pull request for all of the details.
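Since sessions are now identified by stream IDs, it may help to recall how a QUIC stream ID encodes its type (RFC 9000, Section 2.1): the least significant bit gives the initiator (0 for client, 1 for server) and the next bit gives the direction (0 for bidirectional, 1 for unidirectional). The sketch below assumes, for illustration, that a session is keyed by the client-initiated bidirectional stream that opened it; the helper names are hypothetical.

```python
def describe_stream(stream_id: int) -> str:
    """Decode the two low-order type bits of a QUIC stream ID."""
    if stream_id < 0 or stream_id >= 2**62:
        raise ValueError("QUIC stream IDs are 62-bit unsigned integers")
    initiator = "server" if stream_id & 0x1 else "client"
    direction = "unidirectional" if stream_id & 0x2 else "bidirectional"
    return f"{initiator}-initiated {direction}"


def can_identify_session(stream_id: int) -> bool:
    """Assumption: only client-initiated bidirectional streams open sessions."""
    return describe_stream(stream_id) == "client-initiated bidirectional"


def nth_client_bidi_stream(n: int) -> int:
    """The n-th (0-based) client-initiated bidirectional stream has ID 4 * n."""
    return 4 * n
```

Reusing the stream ID this way avoids allocating a separate session-ID space, which is one plausible reason the change let text be removed from the spec.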

F

Next slide: QuicTransport. QuicTransport actually got more updates, and I have not published a draft yet because I still need to fix the story around error handling and error codes. But the basic idea of QuicTransport, if you're not familiar with it, is that it's QUIC with a handshake in front of it. After you're done with the handshake, you can treat your WebTransport connection as if it were just a regular QUIC socket.

F

So it has an ALPN value that's dedicated to it and that's not HTTP, which allows us to avoid cross-protocol attacks. It has its own dedicated URI scheme, called quic-transport, that is, quic-transport://, and it has the same syntax as HTTP URLs, so we re... So, as I mentioned before, we used to have a very bespoke header format. We have a new format that's still bespoke, but the old one used numbers as keys.

F

Now it has strings as keys, and those follow roughly the same semantics as HTTP. The reason I want this is that having header names as strings instead of numeric keys is great for extensibility: there are lots of people rolling their own things on top of this, and it lets them add their own headers without having to fear any collision. Otherwise they'd have to work with numbers. That's the main reason.

F

It's still easy to parse: it's basically 16-bit length-prefixed strings everywhere. One of the main conceits of QuicTransport is that you get exactly one QUIC connection per instance of QuicTransport. That is suboptimal if you open a lot of WebTransport connections, because you do not get any pooling, but on the other hand, it allows you to do a lot of things like swapping in your custom congestion controller on the server side, etc.
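To make "16-bit length-prefixed strings everywhere" concrete, here is a minimal encoder and decoder, assuming a layout of (name, value) pairs in which each string is a 16-bit big-endian length followed by UTF-8 bytes. This layout is an illustrative assumption, not the exact wire format in the QuicTransport draft.

```python
from __future__ import annotations

import struct

# Assumed layout (illustrative only): a sequence of (name, value) pairs, each
# string sent as a 16-bit big-endian length followed by that many UTF-8 bytes.


def encode_headers(headers: list[tuple[str, str]]) -> bytes:
    out = bytearray()
    for name, value in headers:
        for text in (name, value):
            data = text.encode("utf-8")
            if len(data) > 0xFFFF:
                raise ValueError("string too long for a 16-bit length prefix")
            out += struct.pack("!H", len(data))  # 2-byte network-order length
            out += data
    return bytes(out)


def _read_string(buf: bytes, pos: int) -> tuple[str, int]:
    """Read one length-prefixed string at pos; return it and the next offset."""
    if pos + 2 > len(buf):
        raise ValueError("truncated length prefix")
    (length,) = struct.unpack_from("!H", buf, pos)
    pos += 2
    if pos + length > len(buf):
        raise ValueError("truncated string")
    return buf[pos:pos + length].decode("utf-8"), pos + length


def decode_headers(buf: bytes) -> list[tuple[str, str]]:
    headers = []
    pos = 0
    while pos < len(buf):
        name, pos = _read_string(buf, pos)
        value, pos = _read_string(buf, pos)
        headers.append((name, value))
    return headers
```

Because names are strings, an application can add its own header such as `x-my-app-token` without reserving a number, which is the extensibility argument made above.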

F

So this is an example of the quic-transport URL scheme, in case you do not remember it. I have not updated this slide, but all of the highlighted values are now sent completely in the handshake, so that's another update, or improvement. Next slide. As a reminder, this draft is actually implemented in Chrome.

There are instructions on how to do things with it, and I think Corey Bernard went over this earlier today, so I'm not going to go back into it now. Let's get to the WebTransport zoo.

F

The WebTransport zoo is where we look at all of our transports and decide which ones we want and which ones we don't. There are four options, which means there are two to the power of four (16) variants, and I think at this point we're largely arguing for three or four of them. But really there are three of the four: there is a hypothetical fallback transport, which I don't think is on the table at this point.

F

So, as an overview, there are two very easy axes you can split on: dedicated versus pooled, and QUIC versus TCP. Those are the defining characteristics of these transports. They used to be less defining, but with the latest updates to QuicTransport, the HTTP/3 transport, etc., I feel like they're the most important characteristics of all of them. Next.
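The two axes and the "two to the power of four" count can be checked with a short enumeration. The cell labels below are an assumption for illustration: the dedicated-over-TCP cell is taken to be the hypothetical fallback transport, and each of the four transports is either shipped or not, giving 2^4 = 16 possible variants.

```python
from __future__ import annotations

from itertools import combinations, product

# Assumed labels for the four cells of the grid; the dedicated-over-TCP cell
# is taken to be the hypothetical fallback transport.
TRANSPORTS = {
    ("dedicated", "QUIC"): "QuicTransport",
    ("pooled", "QUIC"): "HTTP/3 transport",
    ("pooled", "TCP"): "HTTP/2 transport",
    ("dedicated", "TCP"): "fallback transport (hypothetical)",
}


def transport_grid() -> list[str]:
    """Every combination of the two axes: 2 x 2 = 4 transports."""
    return [TRANSPORTS[(pooling, wire)]
            for pooling, wire in product(("dedicated", "pooled"), ("QUIC", "TCP"))]


def shipping_variants(options: list[str]) -> list[frozenset[str]]:
    """Every subset of the options that could be shipped: 2 ** len(options)."""
    return [frozenset(subset)
            for size in range(len(options) + 1)
            for subset in combinations(options, size)]
```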

F

So here, however, there have been some interesting developments at the API layer. One thing we did is update the API sometime in September (that's in the very latest versions of Chrome), where we noticed a redundancy. If you look at the old approach, you will notice that the choice of transport is indicated twice: once in the constructor name and once in the URL scheme, and of course that's redundant.

F

So we renamed the API entry point to WebTransport, and that had a very nice side effect. Before that, in order to implement all of the transports, we would have had to write code for all of those JavaScript classes, and that added a lot of cost. But now that this is entirely dispatched by URL, the problem of actually picking the transport moves down to the network layer, which removes the overhead of shipping multiple transports, both on a technical level and also, I feel, on a conceptual level.
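A minimal sketch of dispatching purely on the URL, assuming a single entry point that looks only at the scheme: quic-transport:// selects the dedicated QuicTransport path, and https:// is assumed here to select the pooled HTTP/3 or HTTP/2 path, whose concrete connection is left to the network layer. The scheme table and function name are hypothetical. Because quic-transport URLs share HTTP URL syntax, a generic parser like `urlsplit` extracts host and port for both schemes.

```python
from __future__ import annotations

from urllib.parse import urlsplit

# Hypothetical scheme table: the URL scheme alone decides the transport family.
SCHEME_TO_FAMILY = {
    "quic-transport": "dedicated",  # QuicTransport: one QUIC connection per session
    "https": "pooled",              # H3/H2 transport: the network layer picks the connection
}


def pick_transport(url: str) -> tuple[str, str | None, int | None]:
    """Return (family, host, port) for a WebTransport URL, dispatching on scheme."""
    parts = urlsplit(url)  # urlsplit lowercases the scheme
    family = SCHEME_TO_FAMILY.get(parts.scheme)
    if family is None:
        raise ValueError(f"unsupported WebTransport scheme: {parts.scheme!r}")
    return family, parts.hostname, parts.port
```

With this shape, adding or dropping a transport changes one table entry rather than adding a new constructor class.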

Martin, you.

H

F

With the new QuicTransport, there is no automatic fallback, because QuicTransport only exists over QUIC, and you basically get to do this manually. In the HTTP version, you have to delegate this to the browser, because the browser is the only entity that knows the state of your socket pools. If it knows whether you have H2 or H3, it will find the appropriate socket and open the session on that. Yeah.
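The delegation to the browser can be pictured as a pool lookup: only the component that sees every live connection can decide whether a new session rides on an existing H3 connection, an existing H2 connection, or a new one. Everything below is a hypothetical sketch under that assumption; real browsers also race and retry protocols, which is reduced here to a comment.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Connection:
    origin: str
    protocol: str  # "h3" or "h2"
    sessions: list[str] = field(default_factory=list)


class SocketPool:
    """Hypothetical browser-side pool; only the browser sees all live connections."""

    def __init__(self) -> None:
        self.connections: list[Connection] = []

    def open_session(self, origin: str, path: str,
                     preference: tuple[str, ...] = ("h3", "h2")) -> Connection:
        # Reuse an existing connection to the same origin, best protocol first.
        for protocol in preference:
            for conn in self.connections:
                if conn.origin == origin and conn.protocol == protocol:
                    conn.sessions.append(path)
                    return conn
        # Nothing to pool onto: stand in for dialing a fresh connection.
        # (A real browser would also handle H3 failing and falling back to H2.)
        conn = Connection(origin, preference[0], [path])
        self.connections.append(conn)
        return conn
```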

F

There is potential for a new API to add more control over what exactly happens, but the basic point I'm trying to make is that it's still much easier conceptually, because this is now just a knob you tweak, instead of completely different things that require completely different code paths.

F

So, just to clarify the difference conceptually: this is the very first version, how it looked before. On the next slide, you can see that, thanks to this, there is one less box and, what's more important, one less arrow, because a lot of the implementation expense here is in crossing the sandbox boundary. Next slide.

F

So I have repeatedly made the observation that as we've refactored both QuicTransport and the HTTP/3 transport more and more, they have become more and more similar semantically. There is some debate about this, but at this point I am basically convinced that the HTTP/3 transport exists primarily as QuicTransport with connection pooling. That kind of makes sense, because if you think about it, there is no really substantial difference that requires them to be different entities.

F

So one of the ideas I had: we have QuicTransport and the HTTP/3 transport, and currently we disambiguate them by scheme. That is a choice made by the client, and it makes them semantically different, but we could even delegate that decision to the server by offering both HTTP/3 and WebTransport as ALPN values.

F

Alternatively, it will establish that connection anew to that server. The reason I like this is that it allows us to make progress without making any assumption about which transports we're actually using. But I do believe we should still make progress on that, because this is logical, but we should still actually decide which transport we're shipping. Next slide.
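The idea of letting the server decide can be sketched as ordinary ALPN negotiation in the spirit of RFC 7301: the client offers both protocol IDs in one handshake, and the server picks the first one in its own preference order that the client offered. "h3" is HTTP/3's real ALPN token; the QuicTransport token and the function name below are hypothetical placeholders.

```python
from __future__ import annotations

# "h3" is HTTP/3's ALPN token; the QuicTransport token below is a made-up
# placeholder, not the value defined in the draft.
H3 = "h3"
QUIC_TRANSPORT = "example-quic-transport"


def select_alpn(client_offer: list[str], server_preference: list[str]) -> str | None:
    """Server-side choice: the first server-preferred protocol the client offered."""
    for protocol in server_preference:
        if protocol in client_offer:
            return protocol
    # No overlap; a real TLS stack would typically fail the handshake with a
    # no_application_protocol alert instead of returning None.
    return None
```

A client offering `[H3, QUIC_TRANSPORT]` would then get a pooled HTTP/3 connection from a server that prefers H3, and a dedicated QuicTransport connection from a server that prefers the latter, without the client having to choose up front.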

F

Now, there's a bottom layer, the protocol, which is the only part that's different between those two. If you're implementing the HTTP/3 transport, the marginal cost of implementing QuicTransport, for both the web browser and even the server (though the web browser is the only one that really has to ship both), is fairly minimal. The Google implementation is 2,000 lines of C++, and that's not that much: that's at least 10 times less than our HTTP stack.

F

That's approximately five times less than just the file that decodes all of the QUIC frames. It is very little code comparatively, and those 2,000 lines include the integration tests and the unit tests. The reason I believe QuicTransport is particularly appealing is that, if you think of the other elements in the ecosystem as HTTP/2 and HTTP/3, QuicTransport is kind of the HTTP/1 of WebTransport: it is the simplest thing that can work, and it is sufficient and optimal for a lot of cases.

F

For instance, if you're doing video streaming, you have a dedicated server you connect to, and you do not have any form of pooling that you care about, you will probably want QuicTransport; that is what Luke mentioned. This is also true, for instance, for YouTube. YouTube does not use HTTP/2 for video serving; it always uses HTTP/1, because HTTP/2 is just a