
From YouTube: IETF109-SPRING-20201118-0500

Description

SPRING meeting session at IETF109

2020/11/18 0500

https://datatracker.ietf.org/meeting/109/proceedings/

A

No, so otherwise... next slide, please. So, regarding the meeting: the minutes are collaborative. You can connect and contribute, and also correct whatever you want, including your name and your comments, so you don't have to wait for the minutes to be published; you can correct them right now. In terms of queue management...

A

It turns out that we have at least five or six documents related to that subject. So we have segment endpoint failure, and we probably need more coordination on that subject, because not all documents are aligned on terminology and framework, and we could also better align between the two working groups.


F

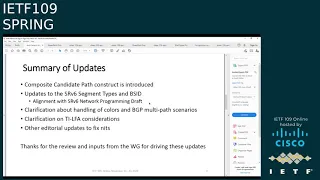

So the main part, I would think, is the composite candidate path construct, and we'll talk about it more in the next slide. Apart from that, there are updates to the SRv6 segment types and the SRv6 BSID in this document. This is for alignment with the SRv6 Network Programming draft, which is now almost through IESG evaluation.

F

These changes are related to the fact that we have behaviors associated with segments. So we have changes to allow optional specification of those behaviors and of the SID structure, for the segment types and for the SRv6 BSID as well. We can have multiple BSIDs associated with the same SR policy, with different behaviors. So we wanted to add some of those clarifications in this text. Then there were some questions about colors and the BGP multipath scenario.

F

So there is just a small piece of text added there, based on inputs, and I know there are editorial updates needed on the draft as well. Next slide, please. So, as I mentioned, one of the changes is the composite candidate path, and let's look at the motivation for this. Today, an SR policy has a dynamic or explicit candidate path, right? And let's say that we have an SR policy with a dynamic candidate path, and it has its objectives.

F

For example, color 1 could mean the blue plane and color 2 could mean the red plane, and this is to allow a flexible combination of the intended objectives. I want to stress, or highlight, that the unit of signaling from protocols (BGP, PCEP), and even from a model perspective, the YANG model, would remain the candidate path. Overall, the rules for candidate path selection, the preference, which one is active, and the overall framework of SR policy are not really changed. So we've had some discussions, and we welcome feedback and inputs on this as well.

Next slide, please. As part of this composite candidate path, there have been discussions on color usage and how it is managed, or how it should be managed.

F

Those based on BGP leverage this color. There are other mechanisms, like BSID, which do not leverage the color, and then there are other mechanisms in the draft which may or may not leverage, or use, color. I would say that these may be implementation-specific mechanisms that may be present.

F

So a discussion point for the working group has been: do we allocate or reserve a separate block for colors, as has been requested by some members, or do we allow the operator to manage this color space based on their deployment designs, also leveraging whatever is available in the implementations they are working with?

G

Oh, okay. So this is about the block allocation for dynamically instantiated SR policies. We've had exchanges on the mailing list about that. I am in favor of allocating a separate block of colors for dynamically created SR policies, specifically when they are created by a controller.
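The Python sketch below illustrates the separate-block option in a minimal way. It assumes a hypothetical reserved range that only controller-instantiated SR policies draw from. `ColorAllocator`, its methods and the range bounds are illustrative names and values; the draft and the working group have not defined any of them.

```python
from typing import Set


class ColorAllocator:
    """Hands out colors for dynamically instantiated SR policies from a reserved block."""

    def __init__(self, first: int, last: int) -> None:
        # SR policy colors are 32-bit values; the block must fit inside that space.
        if not (0 <= first <= last <= 0xFFFFFFFF):
            raise ValueError("block must lie inside the 32-bit color space")
        self.first, self.last = first, last
        self._in_use: Set[int] = set()

    def in_block(self, color: int) -> bool:
        return self.first <= color <= self.last

    def allocate(self) -> int:
        # Linear scan for the lowest free color; fine for a sketch.
        for color in range(self.first, self.last + 1):
            if color not in self._in_use:
                self._in_use.add(color)
                return color
        raise RuntimeError("color block exhausted")

    def release(self, color: int) -> None:
        self._in_use.discard(color)

    def check_operator_color(self, color: int) -> None:
        # Operator-configured policies must stay outside the reserved block.
        if self.in_block(color):
            raise ValueError(f"color {color} is reserved for dynamic policies")


alloc = ColorAllocator(4_000_000_000, 4_000_000_009)   # hypothetical block
print(alloc.allocate(), alloc.allocate())
```

The other option, operator-managed color space, would amount to dropping `check_operator_color` and letting each deployment decide its own ranges.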

H

With the composite candidate path, we are introducing one more level of ECMP, right, which is hierarchical: we already had IGP ECMP and per-segment-list weighted ECMP, and this is one more level of weighted ECMP. And on top of that, if there is a BGP service resolving over it which has multipath enabled, that will be the next level of the ECMP hierarchy. It's possible that some platforms may not be capable of doing so many levels of hierarchy, and it would be good if some text could be written about that.
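The hierarchy described above can be sketched as nested weighted choices, with each level picking deterministically from a per-flow hash. This minimal Python illustration rests on two assumptions. First, the policy, segment-list and next-hop names and weights are hypothetical. Second, SHA-256 stands in for whatever hash a forwarding platform actually uses.

```python
import hashlib
from dataclasses import dataclass, field
from typing import List


@dataclass
class Choice:
    """One weighted option at some level of the ECMP hierarchy."""
    name: str
    weight: int
    children: List["Choice"] = field(default_factory=list)  # empty = leaf


def pick(options: List[Choice], flow_key: str, level: int) -> Choice:
    # Stable per-flow hash, salted with the level so each level decides independently.
    digest = hashlib.sha256(f"{level}:{flow_key}".encode()).digest()
    point = int.from_bytes(digest[:8], "big") % sum(o.weight for o in options)
    for option in options:
        if point < option.weight:
            return option
        point -= option.weight
    raise AssertionError("unreachable: point is always below the total weight")


def resolve(options: List[Choice], flow_key: str) -> List[str]:
    """Walk the hierarchy top-down and return the chosen name at every level."""
    path, level = [], 0
    while options:
        chosen = pick(options, flow_key, level)
        path.append(chosen.name)
        options, level = chosen.children, level + 1
    return path


# Hypothetical composite candidate path: two constituent SR policies (weights 3:1),
# each with weighted segment lists, each segment list resolving over IGP ECMP next hops.
COMPOSITE = [
    Choice("blue-policy", 3, [
        Choice("sl-b1", 1, [Choice("nh-1", 1), Choice("nh-2", 1)]),
        Choice("sl-b2", 1, [Choice("nh-3", 1)]),
    ]),
    Choice("red-policy", 1, [
        Choice("sl-r1", 1, [Choice("nh-4", 1), Choice("nh-5", 1)]),
    ]),
]

print(resolve(COMPOSITE, "198.51.100.7->203.0.113.9"))
```

Each added level multiplies the state a platform must program, which is the capability concern raised here. A BGP service route with multipath enabled would add yet another level on top of these three.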

C

Yeah, I have a comment on the composite candidate paths as well. You introduce a new kind of candidate path; it's good, but it's a little bit hard to understand, and I'd be glad if you could add more text to describe it. Also, do we really need to specify that the endpoints of the composite candidate path must be identical? I'm not really sure about that.

J

I'm interested in whether they have implemented that independently. We are applying that for LDP MPLS at Deutsche Telekom; segment routing isn't operational there, so I didn't check whether this has been implemented by some of our vendors, and there are more out there which are not operational at Deutsche Telekom. I would just like to know. The idea is not so creative that others can't have had it too, if there are implementations already out there. Our operators like the functionality; that is something that I can tell. Okay, then please switch to the next slide.

J

Right, this is an example, and it calculates some paths: there are 4096 available, given all the interfaces and the parallel links, just think about that. The network shown is also symmetric in the lower part, which I didn't include in the picture. Then, suppose you start an MPLS traceroute with 32 multipath IP addresses, of course already behind the source router.

J

The result will be that some interfaces see two IP addresses routed across them and others see none. So what happens with RFC 8029 or RFC 8287 MPLS traceroute is that the source router will start to measure with another 32 IP addresses, and you will have the same effect again, and only 16 will go to router 110.
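A small simulation helps show why a fixed set of 32 probe addresses can miss links. This Python sketch assumes a single ECMP stage with 16 equal-cost links. SHA-256 stands in for a router's proprietary hash, and the router ID, link count and helper names are hypothetical. The sketch also computes the balls-into-bins expectation for an ideal uniform hash.

```python
import hashlib
from collections import Counter
from typing import List


def ecmp_link(dst_ip: str, n_links: int, router_id: str) -> int:
    # Stand-in for a router's (proprietary) ECMP hash: SHA-256 over the router ID
    # and the destination address, reduced modulo the number of links.
    digest = hashlib.sha256(f"{router_id}|{dst_ip}".encode()).digest()
    return int.from_bytes(digest[:4], "big") % n_links


def probe_distribution(n_probes: int, n_links: int, router_id: str = "R1") -> List[int]:
    # MPLS echo requests use 127/8 destination addresses; vary the last octet.
    hits = Counter(ecmp_link(f"127.0.0.{i}", n_links, router_id)
                   for i in range(1, n_probes + 1))
    return [hits.get(link, 0) for link in range(n_links)]


def expected_uncovered(n_links: int, n_probes: int) -> float:
    # Balls-into-bins: expected number of links that receive no probe at all.
    return n_links * (1 - 1 / n_links) ** n_probes


dist = probe_distribution(32, 16)
print("probes per link:", dist)
print("links with no probe:", dist.count(0))
print("expected with ideal uniform hashing:", round(expected_uncovered(16, 32), 2))
```

With 32 addresses over 16 links, even an ideal hash leaves about two links unprobed on average (16 × (15/16)^32 ≈ 2.03). Real hashes can do worse.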

J

As far as I can tell, and our staff continues to tell us this, in some cases you cannot address or test all interfaces with a traceroute from a start router to a destination router if you use ping as it is. The idea, which is shown on the next slide, please, is to add segment routing functionality. That would allow you, if the source router on the left-hand side adds a top label, for example the node SID...

J

Okay, I'm a bit tired, sorry, but the time to live has to be set correctly. Then you can forward up to 32 IP destination addresses to that router. You can ignore the ECMP stuff and enable IP packets with a full set of destination addresses to reach the router, and that can also be done repeatedly. You could do that with a packet sent to R120 or R121.

J

If that is of interest, here is what you need to do: we do not need any new protocol components, so MPLS OAM can stay as it is. This is a local improvement on routers. You of course need to adapt the implementation, and you need a different state machine inside the routers. We have implemented that, and our operators like it.

J

That is it. If there are comments, please go to the list, and I'd also appreciate it if vendors who have already implemented that would talk to me, of course, because I'm not sure whether I'm the only one. Right, yep. This is an individual draft. If you like the idea, please pick it up and start a discussion so that the work can be picked up. Thank you very much. If there are questions, of course, I'm happy to answer.

K

Hi, can you hear me? Yes? Okay. Hi, I'm Nagendra from Cisco, and I'll be presenting this draft on behalf of my co-authors. Next slide, please. Okay! So let me start with the problem that we are trying to solve with this draft. As I'm sure you've noticed, there are different types of segment IDs being proposed, with different forwarding instructions, or forwarding semantics, associated with these segment IDs.

K

So when segment routing is applied on the MPLS data plane, we are actually extending and leveraging the machinery defined in RFC 8029 for OAM purposes, like path validation, traceroute, etc. In order to do that, one of the primary requirements right now, as per RFC 8029, is to define a new Target FEC Stack sub-TLV for each type of segment ID. So this is more like a one-to-one kind of mapping.

K

So this basically requires a lot of standardization effort, and also either a domain-wide or a node-wide software upgrade, so that the initiator or the responder can understand what the sub-TLV is, parse the data included, perform the FEC validation, and respond back with a positive or negative response. This basically raises scalability challenges.

K

So we took one step back: instead of blindly defining new sub-TLVs, we thought about whether there is a scalable way to address this challenge, and that's what we did with this draft. Instead of defining, on a one-to-one basis, a segment-specific Target FEC Stack sub-TLV for each type of segment ID, we tried defining one generic segment ID sub-TLV that could be applied to validate different types of segment IDs.

K

So as part of this, we proposed a new sub-TLV that only carries the segment ID that needs to be validated. From the FEC validation point of view, there are two main things that any responding node will do: whenever the probe reaches a particular node, the responding, or validating, node performs two basic checks. One: is it the LSP endpoint for that particular segment ID? And two: did it receive the probe on the right incoming interface?

K

So, let's take an example where node 8 is assigned a prefix segment ID of 16008 for the default algorithm and a second prefix segment ID for flex-algorithm 128 (algo 128). When node 1 wants to validate any of these prefixes, let's assume that it wants to validate 16008.

K

It will simply include this segment ID in the sub-TLV of the MPLS OAM probe, the echo request, and node 1 will send the traffic. When node 8 receives it, like I mentioned earlier, it performs two basic checks: is it the LSP endpoint for 16008, and did it receive it on the right incoming interface?

K

So in this case, when node 1 wants to validate 9378, it will simply include 9378 in the MPLS probe and send the traffic. When node 8 receives it, it again performs the two basic checks: am I the LSP endpoint, and did I receive it on the right incoming interface? In this case, since 9378 is an adjacency segment, node 8 can receive the traffic either on link 1 or on link 2.

K

So this is another example, for an adjacency set, where instead of terminating both links on the same node, we're actually terminating them on different nodes. In this case, 9378 is load-balanced by node 7 to node 8 and also to node 88. Again, the same procedure can be applied here, and as long as the probe terminates on the right interface, we will get a positive response. Next slide.

K

So one thing I kept mentioning is that, as part of the validation, one of the checks we do is to ensure that we are receiving the probe on the right incoming interface. To do that, we introduced this. Again, this is a local implementation matter, but we included it.

K

The draft describes one approach where each node maintains a segment-ID-to-interface mapping table, which can easily be used to check whether the probe was received on the right incoming interface. In this table, each node has all of its local prefix SIDs along with the respective interfaces. For example, if it is a prefix SID associated with the default algorithm, then the node can receive the probe on any incoming interface. The table also includes the adjacency SIDs assigned by directly connected nodes toward itself. So, in this case, node 8 will include its prefix SIDs for algo 0 and algo 128, and also the adjacency SID assigned by node 7 toward node 8. You will notice that we are not required to maintain any other details, including node 8's own adjacency SIDs, or even the adjacency SIDs assigned by nodes 4, 5 or 6.
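The two checks and the SID-to-interface table can be sketched as follows. This minimal Python illustration assumes a dictionary keyed by SID. The entry layout, the interface names `link1`/`link2`/`link3` and the string results are hypothetical; they are not the RFC 8029 return codes.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional


@dataclass(frozen=True)
class SidEntry:
    kind: str                              # "prefix" or "adjacency"
    interfaces: Optional[FrozenSet[str]]   # None = any incoming interface is fine


def node8_table() -> Dict[int, SidEntry]:
    # Hypothetical table for node 8 in the example. The algo-128 prefix SID would be
    # one more "prefix" entry; its value is not reproduced here.
    return {
        16008: SidEntry("prefix", None),                             # algo 0 prefix SID
        9378: SidEntry("adjacency", frozenset({"link1", "link2"})),  # assigned by node 7
    }


def validate(table: Dict[int, SidEntry], sid: int, incoming_if: str) -> str:
    entry = table.get(sid)
    if entry is None:
        return "not-endpoint"        # check 1: not the LSP endpoint for this SID
    if entry.interfaces is not None and incoming_if not in entry.interfaces:
        return "wrong-interface"     # check 2: probe arrived on an unexpected interface
    return "ok"


table = node8_table()
print(validate(table, 16008, "link3"))  # ok
print(validate(table, 9378, "link2"))   # ok
print(validate(table, 9378, "link3"))   # wrong-interface
```

The table has no entries for node 8's own adjacency SIDs or for adjacency SIDs assigned by other neighbors, so the node never needs that state.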

K

So, in a nutshell, what we are trying to do is define one Target FEC Stack sub-TLV that can help us validate multiple types of segment IDs. One, it of course drastically reduces the standardization effort: we no longer need a one-to-one mapping, with its own Target FEC Stack sub-TLV, for each type of segment ID. Two, we drastically reduce the volume of information that needs to be included by the initiator, and also the volume of information that needs to be validated by the responder. And it is easily extendable to accommodate any future segment IDs, as long as we're able to use those basic checks. Next, please.

H

Basically, if you have multiple IGP domains and each domain has deployed a flex-algo, BGP-CT can be used to interconnect them. The flex-algorithm number can directly map to the color, and that color can directly map to the Transport Class Route Target that is carried in BGP-CT. That's how you get end-to-end connectivity for that particular flex-algo.

H

So in BGP-CT, in the NLRI portion, the prefix will be all zeros and the color will be encoded in the route target. To be able to advertise multiple of these color-only policies, the route distinguisher enables their advertisement end-to-end. We added some details on the use cases, and examples explaining how these use cases work. One is this data sovereignty use case.
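Here is a minimal Python sketch of why the route distinguisher matters for color-only routes. It assumes that flex-algo N maps to color N and models the transport-class route target as a plain string. The RD format, the originator address and the `CtRoute` type are hypothetical stand-ins for the real BGP-CT encodings.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

NULL_PREFIX = "0.0.0.0"   # color-only route: the NLRI prefix is all zeros


@dataclass(frozen=True)
class CtRoute:
    rd: str            # route distinguisher
    prefix: str
    route_target: str  # transport class, modelled here as a plain string


def color_for_flex_algo(algo: int) -> int:
    # Assumption for illustration: the operator maps flex-algo N to color N.
    return algo


def color_only_routes(originator: str, flex_algos: List[int]) -> List[CtRoute]:
    routes = []
    for algo in flex_algos:
        color = color_for_flex_algo(algo)
        routes.append(CtRoute(rd=f"{originator}:{color}",
                              prefix=NULL_PREFIX,
                              route_target=f"transport-class:{color}"))
    return routes


routes = color_only_routes("192.0.2.1", [128, 129])
rib: Dict[Tuple[str, str], CtRoute] = {(r.rd, r.prefix): r for r in routes}
print(len(rib))                          # 2: the RD keeps them apart
print(len({r.prefix for r in routes}))   # 1: without the RD they would collide
```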

H

The next update that we added is translating transport classes across domains. Imagine there are two domains where the colors do not match: for example, the red color in one domain has a different meaning in another domain, and the red color in one domain maps to a green color in the other domain. This could typically happen when the two are cooperating domains. It could also happen as a result of network mergers.

H

So an example has been added to explain how exactly this translation of colors across domains works. Basically, the idea is that the NLRI, that is, the prefix and the route distinguisher, remains the same, and the route target changes across domains so as to use the transport tunnels that map to the required intent.
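The translation step can be sketched as a per-border rewrite that touches only the route target. This Python illustration assumes a hypothetical mapping table at the border between the two domains and models route targets as strings. Rejecting unmapped transport classes is one possible policy; the draft does not state it.

```python
from dataclasses import dataclass, replace
from typing import Dict


@dataclass(frozen=True)
class CtRoute:
    rd: str
    prefix: str
    route_target: str   # transport class / color, modelled as a plain string


# Hypothetical border policy: domain A's "red" intent is called "green" in domain B.
COLOR_MAP_A_TO_B: Dict[str, str] = {"red": "green", "blue": "blue"}


def translate_at_border(route: CtRoute, color_map: Dict[str, str]) -> CtRoute:
    new_rt = color_map.get(route.route_target)
    if new_rt is None:
        # One possible policy (an assumption): refuse routes with no agreed mapping.
        raise KeyError(f"no mapping for transport class {route.route_target!r}")
    # Only the route target changes; the NLRI (RD + prefix) is carried unchanged.
    return replace(route, route_target=new_rt)


r = CtRoute(rd="192.0.2.6:10", prefix="203.0.113.6/32", route_target="red")
print(translate_at_border(r, COLOR_MAP_A_TO_B))
```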

H

So the solution is to define a low-latency transport class, use the latency metric intra-domain, and accumulate this intra-domain latency metric in BGP. There is this draft, draft-ietf-idr-performance-routing, which defines a new latency metric TLV in the AIGP (Accumulated IGP Metric) attribute. This accumulated latency metric works very similarly to the accumulated IGP metric, except that it accumulates the latency metric instead of the IGP metric. For example, in this rightmost metro, the latency metric is 10 for the lower path and for the upper path from ABR 7 to PE 6; then 10 plus 5 becomes 15. This continues on every border router: the latency keeps getting added onto the existing latency. When you receive, on PE 2 at the ingress, both advertisements for PE 6, from ABR 1 and ABR 2, the advertisement received from ABR 1 has a latency metric of 42 and the advertisement received from ABR 2 has a latency metric of 35.
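The accumulation and the selection at PE 2 can be sketched in a few lines of Python. Only the numbers 10 + 5 = 15 and the final values 42 and 35 come from the example. The `Advertisement` type is hypothetical, and real BGP best-path selection compares far more than accumulated latency.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Advertisement:
    via: str                  # border router the advertisement was received from
    accumulated_latency: int  # value carried in the AIGP latency TLV


def readvertise(adv: Advertisement, local_latency: int, new_via: str) -> Advertisement:
    # A border router adds the latency of its own domain segment before re-advertising.
    return Advertisement(new_via, adv.accumulated_latency + local_latency)


def best_path(ads: List[Advertisement]) -> Advertisement:
    # Only the accumulated latency is compared here; real BGP best-path selection
    # has many more steps and tie-breakers.
    return min(ads, key=lambda a: a.accumulated_latency)


step = readvertise(Advertisement("ABR 7", 10), 5, "next border router")
print(step.accumulated_latency)   # 15

at_pe2 = [Advertisement("ABR 1", 42), Advertisement("ABR 2", 35)]
print(best_path(at_pe2).via)      # ABR 2
```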

F

Okay, thanks, Shraddha, for the update. The draft, I believe, actually has three parts, or three components: it goes into use cases, it goes into requirements, and then it has a proposal based on BGP-CT. And I believe that for the problem statement there are several solutions available.

H

Yeah, so the requirements and the use cases: we can definitely look at them and discuss them. I believe the proposal is a different solution from the existing solutions: it uses a BGP-based mechanism to interconnect multiple domains. As far as I understand, the existing solutions are mostly label-stacking solutions, which do not use BGP as a mechanism to interconnect multiple domains.

F

Right, I understand that. My point was that there are these requirements and there are the use cases, and I believe all of this work is informational, because this is all about a design. Seamless MPLS was also informational. So my suggestion would be that, as a working group, we tackle the requirements and use cases first and try to capture what is there, so that it's not that there is only one solution.

E

Replying to Ketan's comment, I think we're looking at two distinct requirements here. One requirement is for coarse-grained TE between networks that value autonomy. Another is for fine-grained TE between networks that are much more tightly coupled. So I think that this draft should probably go forward.

N

Can you hear me? Yes? Yeah, I'm also responding to Ketan. I would say that if this document were targeted as a Standards Track document, then having a requirements document would be as important as having a solution, so that we are not coming up with too many solutions for the same problem. Given that it's targeted right now as Informational, I think it's fine for it to set out what its goals are and how it wants to achieve those goals.

N

I think the overhead of writing a requirements document is probably not the best way to deal with it. The other thing I'd like to point out is that we've gone over whether we should have hierarchy or not, and hierarchy has a lot of good sides to it: you do information hiding, and you don't blow up any one domain or, in the case of hierarchical controllers, any one controller.

F

That was a great presentation. I read the document and I strongly feel this should be adopted. I think it's very useful. I think you documented the use cases and requirements as well as the solution, the possibility of how to implement this. Given the way Seamless MPLS is used in backhaul, there's a huge potential for it.

F

I mean, I agree in substance with all that has been said, for sure. This draft is currently marked as Standards Track, so that was one part. The other part is that we definitely want to present all the choices and all the solutions. Therefore, if we are doing informational documents for the solution, then as a working group we should try to present the various options so that operators can make the choices.

F

I still feel that there is value in having the requirements discussed and worked on separately in this case, because it would again help whoever is looking at those informational documents to have a reference point to compare the pros and cons for their specific use cases.

E

A solutions document is all well and good. However, we shouldn't make the solution document block on the requirements document. I'm sorry, I said that wrong. It's all well and good to have a requirements document, but we shouldn't make the solutions document block on it. We have some bitter experience of solutions blocking on requirements documents for ridiculous amounts of time, and we don't want to repeat that experience.

P

Hi, can you hear me, Bob? Yes? Oh, great, many thanks, all. I will be presenting this draft on IOAM encapsulation in SRv6 networks on behalf of my co-authors. Next, actually. So, this draft has been around for quite some time, since October 2018. It was first presented in a working group session around November 2018.

P

So this is a very sweet spot: collecting the IOAM data from the segment endpoints, and not from all the nodes in the network. That is the focus. IOAM data fields have encapsulations that can be carried in various protocols, like NSH, Geneve, the IPv6 hop-by-hop header, IPv4 and MPLS; here we're talking about, and focusing on, SRv6 and data collection from the segment endpoints. To achieve that, no new IOAM encapsulation is defined here: we use the IOAM data fields defined in draft-ietf-ippm-ioam-data, but carry that information inside the SRH, within an SRH TLV. The draft defines the procedures for the ingress node, the segment endpoint node and the egress node, and we're going to go over these. The draft does not introduce any new procedure or IOAM encoding, which remains as defined in IPPM. With that, next slide, please.

P

In that case, the ingress implementation, when it performs the SRv6 encapsulation and adds the header, decides whether to add IOAM and adds a pre-allocated TLV to the packet. That pre-allocated SRH TLV is also deterministic in a segment routing network, because the segment list is known, so you know how many endpoints there are on the path. That's an advantage: it's deterministic. Then the endpoint node, based on local configuration, may insert its local IOAM data as the packet leaves the node.
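Because the segment list is known at the ingress, the size of the pre-allocated TLV can be computed up front. This Python sketch makes four assumptions:

- one node-data slot per segment endpoint;
- an 8-octet IOAM pre-allocated trace option header, as in the IOAM data-fields specification;
- a hypothetical 2-octet SRH TLV header;
- no SRH padding to an 8-octet boundary.

```python
from typing import List

# Illustrative constants (assumptions; check them against the IOAM data-fields
# specification and the SRv6 SRH TLV format before relying on them):
IOAM_TRACE_OPTION_HDR = 8   # Namespace-ID, NodeLen, Flags, RemainingLen, Trace-Type, Reserved
SRH_TLV_HDR = 2             # Type and Length octets of the enclosing SRH TLV
MAX_REMAINING_LEN = 127     # RemainingLen: 7-bit count of 4-octet units


def preallocated_tlv_octets(segment_list: List[str], node_data_words: int) -> int:
    """Octets for one IOAM node-data slot per SRv6 segment endpoint.

    node_data_words is the per-node data length in 4-octet units (IOAM NodeLen).
    Padding of the SRH to a multiple of 8 octets is not modelled.
    """
    words = len(segment_list) * node_data_words
    if words > MAX_REMAINING_LEN:
        raise ValueError("trace data would not fit the 7-bit RemainingLen field")
    return SRH_TLV_HDR + IOAM_TRACE_OPTION_HDR + words * 4


def fits_mtu(packet_octets: int, extra_octets: int, mtu: int) -> bool:
    return packet_octets + extra_octets <= mtu


segments = ["2001:db8::1", "2001:db8::2", "2001:db8::3"]   # known at the ingress
size = preallocated_tlv_octets(segments, node_data_words=2)
print(size)                        # 34
print(fits_mtu(1400, size, 1500))  # True
```

Because the size is known before the packet leaves the ingress, the MTU check can happen once there rather than at each endpoint.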

P

So there are a couple of things. One is that we would like to get working group feedback on this draft. We are also preparing this for working group adoption, so we welcome all questions and comments. One question for the chairs: we believe that the work should happen in SPRING, as it doesn't change any encapsulation defined in IPPM.

Q

So in the IPPM working group there is a working group draft called Direct Export, which basically does not require any information to be stored in the IOAM packet itself. This information is exported, as the name suggests, directly from each node that generates it to a collector.

Q

Also, in the IPPM working group meeting we had on Monday, we discussed another proposal, the hybrid two-step method, which is a combination of the IOAM trace option and Direct Export. Basically, it creates a follow-up packet which is out of band. Again, this is an advantage similar to Direct Export: in terms of network resources, especially for services critical to delay and packet loss, it does not require more bandwidth, as the IOAM trace option method does.

P

So, but that does not change the advantages of doing the data collection in the packet. We cannot decide that one way is better than the other, because in Direct Export the problem is the correlation of the packets at the controller: you have to collect these copies of the packet from everywhere and then correlate them.

P

So there are pros and cons, and this is why there are two methods defined. What you propose with the hybrid two-step is a very interesting proposal and, I think, is also sort of orthogonal. In terms of defining solutions, we cannot be cherry-picking one versus the other, because they both have their own space and advantages here.

P

The advantage is that we are collecting data only from segment endpoints, which are the important nodes, and we are collecting it only if the node supports the data. And because we are deterministic from the behavior point of view and from the pre-allocated space point of view, the MTU issue and a lot of other issues are addressed in that case. So I think it is simple. It is also a very sweet spot for SRv6 networks.

Q

More on MTU: hybrid two-step allows generating a sequence of follow-up packets from one packet, and actually it collects them as they follow the IOAM packet, so there is no additional information required for correlation. As for the challenge of doing correlation with Direct Export: yes, you are right. It requires additional information to be collected from the node, so that correlation can be done by the collector.
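The correlation burden on a Direct Export collector can be sketched as grouping exported records by packet identity. This Python illustration makes two assumptions. Each record carries a flow ID and a sequence number, which the Direct Export option can optionally include. Each record also carries a timestamp from synchronized clocks. The record type and node names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class ExportedRecord:
    node_id: str
    flow_id: int
    seq: int
    timestamp_ns: int


def correlate(records: Iterable[ExportedRecord]) -> Dict[Tuple[int, int], List[str]]:
    """Group per-node exports of the same packet and order them by timestamp.

    Ordering by timestamp assumes synchronized node clocks (an assumption)."""
    by_packet = defaultdict(list)
    for rec in records:
        by_packet[(rec.flow_id, rec.seq)].append(rec)
    return {key: [r.node_id for r in sorted(recs, key=lambda r: r.timestamp_ns)]
            for key, recs in by_packet.items()}


# Exports arrive at the collector interleaved and out of order.
records = [
    ExportedRecord("R3", 7, 2, 3_000), ExportedRecord("R1", 7, 1, 1_000),
    ExportedRecord("R2", 7, 1, 2_000), ExportedRecord("R1", 7, 2, 1_500),
    ExportedRecord("R3", 7, 1, 2_500), ExportedRecord("R2", 7, 2, 2_200),
]
print(correlate(records))
```

With in-packet collection, or with hybrid two-step follow-up packets, this grouping step is unnecessary, which is the trade-off debated above.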

E

First, I agree with everything that Greg said. I have some questions about your reliance on the SRH TLV field. It's clear how this would work in the situation where the SRH is the only mechanism you're using for traffic. Here it contributes a little bit to SRH bloat, but at least it's understandable.

P

So I think we can take it offline, but one point is that with IPv6, especially with the hop-by-hop option, you collect data from all the hops, and in this use case we are trying to collect data from the segment endpoints only. So that is the major difference: the data collection is from fewer places, and it is more deterministic in terms of how many segment endpoints we have. But we can take it offline.

T

So these segments are very similar to node segments, except for the type of forwarding behavior they represent. They are common and shareable building blocks: forwarding instructions for stateless P2MP tunnels. Next slide, please. So, let's look at an example. In this picture we have a multicast stream to four leaf nodes, L1 to L4, and we are using two P2MP chains, a red one and a green one.

T

Next slide, please. Next, please. I'll skip this example. So this is a diagram of the generic model of the bud SID behavior. We have an incoming packet P, and we replicate it to make a copy, P1, of packet P. We perform NEXT on the top SID, and then we forward P based on the next SID. For P1, we do a sequence of NEXT operations on all the P2MP chain SIDs, and then we process, that is, receive, this P1 locally.

T

This is the same diagram mapped to SR-MPLS. In the case where a packet has an innermost service label, the root needs to insert a special-purpose label, called the End of Chain label, between the service label and the P2MP chain labels. That way, when we pop the labels of P1, we know where to stop, and we can keep the service label with the packet for local processing. Next slide, please.
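The bud node's two copies and the role of the End of Chain label can be sketched with a label stack modelled as a Python list. The label names are hypothetical and the talk does not give the EOC value. This sketch also treats a stack whose next label is EOC as the end of the chain, which is an assumption.

```python
from typing import List, Optional, Tuple

EOC = "EOC"   # stand-in for the End of Chain special-purpose label (value not given in the talk)


def bud_node_process(stack: List[str]) -> Tuple[Optional[List[str]], List[str]]:
    """stack[0] is the top label, i.e. this bud node's chain SID.

    Returns (copy P to forward along the chain, copy P1 for local delivery)."""
    # P: NEXT on the top SID, then forward on the next SID.
    forwarded: Optional[List[str]] = stack[1:]
    if not forwarded or forwarded[0] == EOC:
        forwarded = None   # assumption: end of the chain, nothing left to forward
    # P1: NEXT through all remaining chain SIDs until the End of Chain label.
    local = list(stack)
    while local and local[0] != EOC:
        local.pop(0)
    if not local:
        raise ValueError("no End of Chain label in the stack")
    local.pop(0)           # drop EOC; the service label is now on top
    return forwarded, local


p = ["chain-L1", "chain-L2", "chain-L3", EOC, "svc-100"]   # hypothetical labels
fwd, loc = bud_node_process(p)
print(fwd)   # ['chain-L2', 'chain-L3', 'EOC', 'svc-100']
print(loc)   # ['svc-100']
```

Without the EOC label, the local copy would have no marker telling it where the chain labels end and the service label begins.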

U

Yes, right. So there are some topologies where this really doesn't work. The simplest one is the inverted-letter-Y topology, where you would normally consider a point-to-multipoint fork. There, you either have to send two copies down the root of the tree, or you have to return from one of the buds back up into the network. So while this works really nicely in all the examples you drew, I suspect it falls apart in other example topologies.

T

This mechanism, as I mentioned in the early slide on benefits, targets ring topologies and linear and semi-linear topologies. Granted, you won't be able to achieve the maximum, ideal traffic optimization in all topologies; one example would be the one you just gave. But in general, we believe that in certain types of topologies, and with certain constraints fed to the path computation, you should be able to [achieve it].

T

V

V

T

T

T

T

V

A

M

M

T

Yeah, that's actually in the... yeah. If you have a large number of leaves, you'll probably use a number of chains, and for your path computation you can specify a number of constraints. One would be the maximum hop count, to limit the end-to-end delay on a per-chain basis, right.
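One way to picture a per-chain hop limit is as a rule for splitting an ordered set of leaves into several chains. The greedy heuristic below is purely illustrative and is not the path computation the draft or any controller uses: it assumes the visiting order of the leaves is already fixed, that hop count stands in for delay, and that the per-leaf hop costs are known; `split_into_chains` and its parameters are hypothetical.

```python
from typing import List, Sequence


def split_into_chains(leaves: Sequence[str],
                      hops_from_root: Sequence[int],
                      hops_from_prev: Sequence[int],
                      max_hops: int) -> List[List[str]]:
    """Greedy, hypothetical chain splitting under a per-chain hop limit.

    Leaves are visited in the given order. Appending leaf i to the current
    chain costs hops_from_prev[i] extra hops; starting a new chain at leaf i
    costs hops_from_root[i]. A new chain is opened whenever appending would
    push the chain's hop count past max_hops.
    """
    chains: List[List[str]] = []
    hops = 0  # hop count from the root to the last leaf of the current chain
    for i, leaf in enumerate(leaves):
        if chains and hops + hops_from_prev[i] <= max_hops:
            chains[-1].append(leaf)
            hops += hops_from_prev[i]
        else:
            if hops_from_root[i] > max_hops:
                raise ValueError(f"{leaf} is unreachable within {max_hops} hops")
            chains.append([leaf])
            hops = hops_from_root[i]
    return chains


chains = split_into_chains(["L1", "L2", "L3", "L4"], [1, 2, 1, 2], [0, 1, 3, 1], 2)
# chains == [["L1", "L2"], ["L3", "L4"]]
```

A tighter `max_hops` yields more, shorter chains (less delay per chain, more replication at the root); a looser one yields fewer, longer chains.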

A

W

W

First, we think that redundancy protection is one of the mechanisms to achieve service protection, and service protection is one of the three techniques defined for Deterministic Networking (DetNet). The second reason we propose this work is that there is a new requirement on the network from services like cloud AR or cloud gaming. These kinds of services require stricter SLA guarantees, for example end-to-end reliability.

W

So this redundancy protection follows the principle of PREOF, which stands for the Packet Replication, Elimination and Ordering Functions originally defined for DetNet, and this document extends the capabilities of the SR paradigm to support this mapping. Next page, please.

W

W

And if one of the paths between the redundancy node and the merging node fails, the service won't be interrupted, since we have this redundant path. Currently, the merging node can also provide the ordering function, but so far it is defined as optional.
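Why a single path failure does not interrupt the service can be shown with a toy model of replication and elimination, assuming each packet carries a sequence number. Packets are represented only by those numbers, `replicate` and `merge` are hypothetical helpers, and the `ordering` flag is a crude stand-in for the optional ordering function.

```python
from typing import Iterable, List, Set


def replicate(seqs: Iterable[int], n_paths: int = 2) -> List[List[int]]:
    """Redundancy node: send one copy of every packet on each member path."""
    items = list(seqs)
    return [list(items) for _ in range(n_paths)]


def merge(arrivals: Iterable[int], ordering: bool = False) -> List[int]:
    """Merging node: eliminate duplicate copies by sequence number.

    With ordering=True the surviving packets are also returned in sequence
    order. Sorting the whole output only stands in for the optional ordering
    function; a real one would use a bounded reordering buffer.
    """
    seen: Set[int] = set()
    out: List[int] = []
    for seq in arrivals:
        if seq in seen:
            continue              # later copy of a packet already delivered
        seen.add(seq)
        out.append(seq)
    return sorted(out) if ordering else out


primary, secondary = replicate(range(5))
# The primary path fails after delivering packets 0 and 1.
arrivals = primary[:2] + secondary
delivered = merge(arrivals)       # every packet still arrives exactly once
```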

Next, please.

W

W

The redundancy segment is associated with the redundancy policy, which will be explained later, to steer the flow. Two new behaviors, End.R and End.M, are defined for these two segments, the redundancy segment and the merging segment. On the redundancy node, there is also metadata to be encapsulated, and it is removed on the merging node. So far, the metadata defined here are the flow identification and the sequence number.
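Adding and removing that metadata can be sketched with Python's `struct` module. The layout used here, a 32-bit flow identifier followed by a 32-bit sequence number in network byte order and placed in front of the payload, is a hypothetical choice for illustration only; the draft defines its own encoding and placement.

```python
import struct
from typing import Tuple

# Hypothetical metadata layout: flow ID (32 bits) + sequence number (32 bits).
_META = struct.Struct("!II")


def add_metadata(payload: bytes, flow_id: int, seq: int) -> bytes:
    """Redundancy node: encapsulate flow identification and sequence number."""
    return _META.pack(flow_id, seq) + payload


def strip_metadata(packet: bytes) -> Tuple[int, int, bytes]:
    """Merging node: read and remove the metadata, returning (flow_id, seq, payload)."""
    flow_id, seq = _META.unpack_from(packet)
    return flow_id, seq, packet[_META.size:]


pkt = add_metadata(b"hello", flow_id=7, seq=42)
flow_id, seq, payload = strip_metadata(pkt)
```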

W

W

The redundancy policy is a variant of the SR policy, and it includes more than one ordered list of segments between the redundancy node and the merging node. We defined it this way because the redundancy policy actually specifies the paths between the redundancy node and the merging node. So we assume that the last segment of each ordered list is always the merging segment.
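The two constraints just stated, more than one segment list and a merging segment at the end of each list, can be captured in a small data structure. `RedundancyPolicy` and its fields are hypothetical names for this sketch, and the SIDs are documentation-prefix placeholders.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RedundancyPolicy:
    """Hypothetical model: a variant of an SR policy holding more than one
    ordered segment list between the redundancy node and the merging node."""
    merging_sid: str
    segment_lists: List[List[str]]

    def __post_init__(self) -> None:
        if len(self.segment_lists) < 2:
            raise ValueError("a redundancy policy needs more than one segment list")
        for sl in self.segment_lists:
            # Assumption from the talk: the last segment is always the merging segment.
            if not sl or sl[-1] != self.merging_sid:
                raise ValueError(f"segment list {sl} does not end with the merging SID")


policy = RedundancyPolicy(
    merging_sid="2001:db8::100",
    segment_lists=[["2001:db8::a", "2001:db8::100"],
                   ["2001:db8::b", "2001:db8::c", "2001:db8::100"]],
)
```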

W

Yeah, this page and the following page show the pseudocode for the End.R and End.M behaviors. I won't go through them line by line. Maybe we can skip to the second following page; there is a figure there that I can explain. I think I will explain more about the redundancy node and the merging node.

W

So on the redundancy node, we will create two new SRHs. The segment lists encapsulated in these headers are triggered by the redundancy policy: one SRH will indicate the primary path and the other SRH will indicate the second path. And on the merging node, the...
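The replication step on the redundancy node can be sketched as producing one copy per segment list of the redundancy policy. This is not the draft's End.R pseudocode: the segment list is kept in traversal order rather than in the reversed SRH encoding, both copies carry the same flow ID and sequence number so the merging node can recognize duplicates, and `SRv6Packet` and `end_r` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SRv6Packet:
    segment_list: List[str]  # traversal order, a simplification of the SRH
    flow_id: int             # redundancy metadata
    seq: int
    payload: bytes


def end_r(payload: bytes, flow_id: int, seq: int,
          policy_lists: List[List[str]]) -> List[SRv6Packet]:
    """Hypothetical End.R sketch: one copy per segment list of the redundancy
    policy, each with its own new SRH and identical metadata."""
    return [SRv6Packet(list(sl), flow_id, seq, payload) for sl in policy_lists]


primary = ["2001:db8::a", "2001:db8::100"]
secondary = ["2001:db8::b", "2001:db8::c", "2001:db8::100"]
copies = end_r(b"data", flow_id=7, seq=42, policy_lists=[primary, secondary])
```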

W

A

E

W

W

It defines a pair of segments that... yeah, I will first answer your question. We define these two segments, and between these two segments, or rather between these two nodes, is where the redundancy protection functionality applies. Before the redundancy node or after the merging node, it is purely the ordinary SRv6 domain. So we would like to split this.

W

If we take this simple case, we would like to split it into three parts. The first part is from node 1 to the redundancy node: at node 1, the SRH only indicates the segment list from node 1 to the redundancy node and doesn't include the paths towards node 2. On the redundancy node, the SRHs specify the paths from the redundancy node to the merging node. And on the merging node, the segment list is replaced with a new segment list to node 2.
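The third part, on the merging node, can be sketched as follows: keep the first copy of each packet, drop the redundancy metadata, and swap in a new segment list toward node 2. The per-flow lookup table, the unbounded duplicate-tracking set, and the names `MergingNode` and `end_m` are assumptions of this sketch rather than details from the draft.

```python
from dataclasses import dataclass, replace
from typing import Dict, Optional, Set, Tuple


@dataclass(frozen=True)
class SRv6Packet:
    segment_list: Tuple[str, ...]  # traversal order, a simplification of the SRH
    flow_id: Optional[int]         # redundancy metadata (None once removed)
    seq: Optional[int]
    payload: bytes


class MergingNode:
    """Hypothetical End.M sketch for the merging node."""

    def __init__(self, egress_lists: Dict[int, Tuple[str, ...]]) -> None:
        self.egress_lists = egress_lists         # flow_id -> segment list toward node 2
        self.seen: Set[Tuple[int, int]] = set()  # unbounded here; real state would age out

    def end_m(self, pkt: SRv6Packet) -> Optional[SRv6Packet]:
        key = (pkt.flow_id, pkt.seq)
        if key in self.seen:
            return None                          # duplicate copy: eliminated
        self.seen.add(key)
        # Remove the metadata and replace the segment list with the one to node 2.
        return replace(pkt, segment_list=self.egress_lists[pkt.flow_id],
                       flow_id=None, seq=None)


node = MergingNode({7: ("2001:db8::2",)})
first = SRv6Packet(("2001:db8::a", "2001:db8::100"), 7, 1, b"x")
out = node.end_m(first)  # forwarded toward node 2 with the metadata removed
```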

E

E

W

W

Yeah, actually, I'm not quite familiar with this MTU problem, but I think I will reply to you later, once I understand the relation between the MTU problem and how we can avoid or solve it in our case. Sure, yeah, I will reply to you later. Okay, thank you.

C

A

A

Thank you very much for your understanding. We have a few minutes here for the YANG model. Maybe we could focus on the first two slides, because I don't think there is much point in discussing the details of the model at this point. And this is... No, this is... I don't know who's speaking. Hey.

O

A

O

O

This YANG model is based on the SR service programming architecture described in the IETF draft. Next slide, please. Yeah.

This YANG model covers the base SR service programming model, which is common to both SR-aware and SR-unaware services, and the YANG model for the SR-unaware service proxy. Next page, please.

This YANG model consists of four YANG modules, which are shown in the green boxes. The middle one is the base SR service programming module.

O

The bottom green box shows the SR-unaware service module, which augments the base SR service programming module. Similarly, any new SR-aware service could be modeled by augmenting the base SR service programming module. Next slide, please.

This is the base SR service programming YANG configuration model. It is a list of service programs indexed by name. The key attributes are highlighted in the red boxes.

O

The first one is the SR service programming behavior, which can be SR-aware, static proxy, dynamic proxy, etc. The second ones are the service type and service instance, which represent a service. In the SR-aware case, this is the exact service that is offered, and in the case of the SR-unaware service proxy, this is the service being proxied. The next one is the SID assigned or allocated for this SR service programming entry.
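The shape of that keyed list can be mirrored in a short Python sketch. The field names (`behavior`, `service_type`, `service_instance`, `sid`) and the behavior values are hypothetical stand-ins for the module's actual leaves and identities, which are not reproduced here; only the structure, a list keyed by name with these attributes, follows the slide.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict


class Behavior(Enum):
    # Example behaviors named in the talk; not the module's actual identities.
    SR_AWARE = "sr-aware"
    STATIC_PROXY = "static-proxy"
    DYNAMIC_PROXY = "dynamic-proxy"


@dataclass
class ServiceProgram:
    name: str              # list key
    behavior: Behavior
    service_type: str      # service offered (SR-aware) or proxied (SR-unaware)
    service_instance: str
    sid: str               # SID assigned or allocated for this entry


class ServicePrograms:
    """Keyed list of service programs, indexed by name like a YANG list."""

    def __init__(self) -> None:
        self._entries: Dict[str, ServiceProgram] = {}

    def add(self, sp: ServiceProgram) -> None:
        if sp.name in self._entries:
            raise ValueError(f"duplicate list key {sp.name!r}")
        self._entries[sp.name] = sp

    def __getitem__(self, name: str) -> ServiceProgram:
        return self._entries[name]


progs = ServicePrograms()
progs.add(ServiceProgram("fw1", Behavior.STATIC_PROXY, "firewall", "fw-inst-1", "2001:db8::f1"))
```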