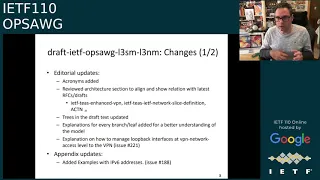

From YouTube: IETF110-OPSAWG-20210312-1200

Description

OPSAWG meeting session at IETF110

2021/03/12 1200

https://datatracker.ietf.org/meeting/110/proceedings/

A

A

Okay, welcome everyone to this fifth day of the IETF meeting, 110. This is the joint meeting of the Operations and Management Area Working Group. Your chairs today are Tianran, Joe, and myself, Henk. There is a notice here (next slide, please) that this meeting is being recorded. Everybody should be aware of that. Also, if you haven't seen this already, which is maybe unlikely on the fifth day, there is the IETF Note Well, under which we operate.

A

So if you are curious how IPR works, and about our anti-harassment and code of conduct rules, there are links here that you can follow in the slide material found on the datatracker. As you apparently all have read and agreed to that, we can go to the next slide, please, which is again about how to work through these meetings. If you have never done a virtual one, that's just informational text here. So, next slide, please.

B

A

Again, the other material should be apparent from the datatracker here. So, next slide, please, which is the agenda? No, sorry, it's the working group status already. So yeah, in the past iteration of four months since the last meeting, we did achieve some milestones and some goals here. RFC 8969 is now actually published. Thank you for all the great work, and to the authors for being so vigilant going through all the sections.

A

We have moved two publications to the IESG process. The TACACS+ module [unclear] very quickly, and I think that [unclear]. Finding geofeeds passed working group last call [unclear], so that is now in the queue to be moved forward and finished in the process. We adopted a lot of drafts. That is also very good to see. Two of them, actually three of them, but two of them are very much related, are from Michael.

A

A

Then, as you can see, a lot of things are happening at the end. Of course, we are joined with the open session of the ops area, so the last slot is going to our dearest AD here, Rob, and we will have the open mic, but that is probably mostly led not by the chairs but by the AD. So now we can actually move to the first presentation.

A

D

D

So this is one slide that provides a summary of the current status of the VPN common draft. The basic message is that we are ready. We received important comments and reviews for that one. One of the important reviews we received was from the YANG doctors, and basically no major issue was found by the reviewers.

D

There were some proposals to fix some parts, mainly editorial and minor stuff. We fixed those and released a revision of the document. In the last meeting there was a comment from Joe saying that the model should provide more annotations to ease its readability, because that is not in the document itself.

D

So what we have done is that we reorganized various parts of the model itself and included a lot of annotations, so that it is easier for people to digest. We think that what we have so far is really better and that we have more clarity on this part.

D

We also received some interesting comments from Julian and also from [name unclear] to include additional transport protocols, and that was really a valid comment. So we added that one and included the reference in the model. Even if our initial scope was not [unclear], I think it's really good to see that various use cases can make use of this model.

D

So that's one. We added these extensions and this reference to the model, and then we added more text to describe the various data nodes that we have in the draft. So for this one, we think that there are no open issues so far on the document, and the next step that we would like to suggest to the working group, and also to our chairs, is to initiate a working group last call for this.

E

F

Okay, so thank you. So now I'm going to give you [unclear]. Go to the previous slide, please. Yes, this one. Okay. So, as you know, the L3NM model has been here for a while, and we received a lot of feedback from implementations, and it's now also being taken close to production. So I think we are now very comfortable with the content. The work that we did for the latest version is, on the one hand, completing the editorial work that was needed.

F

F

We also received some comments from [name unclear] about that part. It was not understood how it needed to be done in the model. We didn't need to change the model itself; we just added a description of how you can manage the loopback interfaces at the VPN network access level. Also, we made some changes to the appendix so that the examples are given not only with IPv4 but also with IPv6 services. So the examples provided in the appendix are updated.

F

You can go there and check all the discussions, so this is just to give you a summary of the most important ones that have been incorporated into the latest version. We received requests to add additional attributes for static routes, and on how to handle the external connectivity, which was also present in the service model. So we incorporated a profile here to add it. It's a profile just because we have different solutions today, so we added a profile to cover that for better readability.

F

We reordered the leaves in the module. That was a suggestion made because, coming from the very early versions and adding new leaves, we needed to do this reorganization. Then there is the multicast use case. This model is also being used today, and is also being put into production, to model a multicast network for TV. A lot of input came from there, and we now have support for PIM and IGMP at both the VPN node and the VPN network access level.

F

At this [unclear] level, the support is full. Then there is the [unclear] security part, and some fields that came from the service model have been added to the network model, along with some generalization of the concept of maximum routes allowed. So, if you want to go to the next one, please. We are happy with the [unclear]. There is a small comment added in the GitHub a couple of days ago that we think is useful, and we already have a proposal to cover it. It is an enhancement.

F

So it does not change anything fundamental in the model; it's an enhancement. So we do think the document is ready for working group last call. There is some complementary work to this piece of work: the service mapping work in TEAS, which uses this model as a base, adds to it, and plugs in all the traffic engineering [unclear]. So go to TEAS for those discussions.

G

The document has followed the same structure as the L3NM, as Oscar has mentioned, to increase the readability and to help people understand the model. We have been editing several parts, including the connection and the routing protocols, and everything is explained in the document.

G

During the last months, we received the Routing Directorate review. The issues that they raised were solved, and we have a list of open issues, indicated in the Git repository, that we are working on. Some of those issues are listed here in this slide. The first one is related to the support of DiffServ MPLS from RFC 3270.

G

Issue 204 is related to the clarification of the EVPN flavors. The next one [number unclear] is related to split-horizon support at the VPN network access level, and finally there is the IP fast reroute support. So, next slide, please. In addition to the resolution of the issues from the Routing Directorate, we have closed additional issues, more related, as I commented before, to the EVPN support.

G

So we have included some Ethernet segment identifier parameters, control word, and a kind of configuration for the [unclear] support. We have also included the precedence type in order to define the availability of the paths. We have included in the document additional examples of how to use the L2NM, and we have included a node list in order not to limit the number of elements in the service.

G

G

H

So my question was just from a process point of view. I know you pulled the common stuff out into a separate module, and you're saying that that's ready for working group last call, and the L3NM model is too. My question is: is it okay to progress those two with this one still being open and actively developed? Are there going to be any issues in terms of wanting to subsequently churn stuff that is lower down in those specs?

H

D

Yeah, this is actually a good question, which we already discussed last time. The agreement we had is that we would try to stabilize the three documents by this IETF meeting, and if we fail to have an acceptable state of the L2NM, we will progress only the common one and the L3NM. So, at least on the authorship team,

D

What we decided is that the common one will be frozen for now. If there is any specific item that is induced or detected when we go into specific detail for the L2NM, it will be covered directly in the L2NM document itself. That's why we are confident in progressing only the two. There is no dependency on the L2 one.

H

H

D

We can continue to stretch that exercise, but it will never be perfect. So yeah, we can wait, but I personally don't see value in that. We have the common one. We have the L3NM, which exemplifies how we can use the common model. If anything is required at the L2NM level, it can just be handled in that one.

J

Thanks for this presentation. I just have a process question myself. I haven't noticed a lot of chat on these drafts in the group. Maybe I'm just not seeing it, or it's getting misfiltered in my email, but are the issues being resolved on list, or are they being resolved by the authors independently?

B

So, Med, before you jump in: that was going to be another one of the things I brought up, and that I'd asked previously. I see some of the GitHub work here, and yes, it's the official GitHub, but we need to bring some of these items back to the list as you discuss them in your author group and as you agree on resolutions. Some I saw come back around IETF 109, but to Eliot's point, I haven't seen a lot.

D

Yes, I think this is another point that we also discussed in a previous meeting, and yes, you are right. We have regular meetings that are organized every week among the people who are interested in this work. These meetings are advertised and sponsored by the [unclear] working group, and the information to connect is available.

D

At the working group level, yes, we sometimes discuss some of the issues on GitHub. For example, yesterday I sent a note to the list about one of the comments that we received from an implementer, on which we would like to hear more comments. For us, it is doubling the effort. I agree with you.

D

But what can be done, at least by our chairs, is that you can configure the GitHub to send a summary of the issues on a weekly basis, and then people can jump in if there is any point to be discussed further.

J

I

J

D

B

B

H

I think it's sometimes helpful to split the issues between ones of an editorial nature, which I think you can just handle, and ones that are a design change or something that requires consensus or agreement from the working group. I don't think you need to bring all the discussion back to the working group necessarily, but I do think it's really helpful to give the conclusion of how the issue was resolved.

H

If the text is one block of text, I think it's helpful to then copy that to the working group and say: look, this is the resolution; is anyone who's not been following the actual design meetings able to comment on that? I think it's helpful to get as much coverage of those as you go along, where possible.

C

K

Thanks. Yeah, largely what Rob and you said: if there is a decision made, that needs to be posted to the list, with a short summary of what the question was and what the decision was, and how it was arrived at. Obviously we don't need the whole discussion, but something like: hey, somebody raised this as a concern, we discussed it, we decided X, here's the issue if you want more background. That, I think, is reasonable.

B

And Med, did you want to comment on that? Nope? The last thing I had that wasn't covered: I jotted down the working group last calls, and we talked about that. On the expert review for L2NM: by the way, I did find those GitHub issues. They're actually under L3NM in the repository, the 200-level issues, so that was a little bit [unclear]. But for that expert EVPN review, we found someone in the Routing Directorate who did that?

B

D

B

L

L

This model is used between the service orchestrator and the network controller, and it's a similar interface to the L3NM and L2NM, as was just presented. On the right here is the modeling approach of this model. The underlying network has been modeled by RFC 8345, and the modeling approach of this model is augmentation.

L

L

L

M

M

M

M

M

J

M

M

So therefore it's not needed to cover that as well in IPFIX. As I said previously, the [unclear] registry should be corrected soon, and once that's done, the additional code points can be added there. I received positive feedback from MPLS as well, and I would again like to call for adoption in OPSAWG.

O

H

Okay, so just to check, and if Benoit is here, he probably has a definitive answer: am I right in thinking that anyone can add these attributes anyway? I think that registry allows anyone to add them. So I guess what I'm thinking is that we shouldn't set too high a bar. If these are useful fields to add to the IPFIX registry, then I think that's a useful thing to do. And [unclear] is going to comment.

H

I

Q

P

On IANA, anyone can register an information element. However, the real value comes whenever we've got multiple IPFIX information elements together. It's still worth publishing a document, I believe, to explain some background. And I agree with you that the bar should not be too high for adoption [unclear].

M

Yeah, exactly. Before I created this, I actually went directly to IANA and requested to add this, because the code points refer to existing RFCs. But IANA requested from me that there be an official document, and that a draft be present there, and therefore I created this draft.

P

H

B

J

J

Okay, so, what's happened since the previous IETF. Obviously, as I mentioned, it's now posted as a working group draft. Scott and I are already working on some editorial changes, just editorial changes, for -01, and I'll come to some of the bigger issues based on the feedback. Without further ado, let's get to the bigger issues. Next slide, please.

J

J

So, if you're looking for a fast RFC, this is not the draft. We don't know yet, for instance, based on some of the format work that's continuing, whether it will be necessary to discover one or multiple SBOMs out of a system, and so we're still trying to work that out. This amounts to, you know: do we have an array, or do we have a single object? It's not a big deal, but it matters if the SBOMs are going to live in different places.

J

How do you return that information in some reasonable way? The second major point that Scott and I discussed (this is one of three; there are a couple of others) is about what an SBOM delivers on its own. What an SBOM delivers to you, and what discovery of an SBOM delivers to you, is an inventory of the device. With that inventory, especially if you're using common names of software, [unclear].

J

J

J

Another question, in greater detail, is actually: is this thing vulnerable to a particular CVE? Is it patched, how is it patched, and what versions do you need in order to fix it? And there's a separate format called CycloneDX (this is the wonderful thing about standards: there are so many of them) that roughly covers the same ground.

J

The people that are working on the various proofs of concept for this technology have said that SWID isn't really useful in this context, but CycloneDX is, so we might just want to switch that. But our goal in the draft, just to be clear, is to be a little bit technology-neutral about all this. There are going to be a lot of formats.

J

We really want to rely on the HTTP Content-Type headers, Accept headers, and things like that, so that the information can come across in whatever way it can come across, but that requires a little bit of thought. And Scott, who I don't believe is here on the call, would say the other thing that we have to do is examine the work of ROLIE, which I'm not familiar with.

J

Oh, Scott is on the call. Scott, maybe you want to comment a little bit about ROLIE. I know we're running out of time, so if you want to comment briefly, maybe you could be a little bit clearer than I am. But this is another issue that we have to talk about, and that's really all I have.
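Editor's sketch: the speaker's idea of staying format-neutral by relying on HTTP Content-Type and Accept headers can be illustrated with a small piece of server-side content negotiation. This is only a minimal sketch under stated assumptions, not anything taken from the draft: the function names, the `SUPPORTED` table, and the choice of media types are illustrative, and a real server would follow the full HTTP content negotiation rules.

```python
# Hypothetical sketch: choose an SBOM representation from an HTTP Accept header.
# The media types and helper names below are illustrative assumptions,
# not something specified by the draft discussed in this session.

SUPPORTED = {
    "application/vnd.cyclonedx+json": "cyclonedx",
    "application/spdx+json": "spdx",
}


def parse_accept(header):
    """Return acceptable media types, highest q-value first (ties keep header order)."""
    prefs = []
    for index, part in enumerate(header.split(",")):
        fields = [f.strip() for f in part.split(";")]
        media_type = fields[0].lower()
        if not media_type:
            continue
        q = 1.0
        for param in fields[1:]:
            if param.startswith("q="):
                try:
                    q = float(param[2:])
                except ValueError:
                    q = 0.0
        if q > 0:  # q=0 means "not acceptable"
            prefs.append((-q, index, media_type))
    return [media_type for _, _, media_type in sorted(prefs)]


def choose_sbom_format(accept_header):
    """Return the media type to serve, or None if nothing acceptable is supported."""
    for media_type in parse_accept(accept_header):
        if media_type in SUPPORTED:
            return media_type
        if media_type == "*/*":
            # Client accepts anything: fall back to the first supported format.
            return next(iter(SUPPORTED))
    return None


chosen = choose_sbom_format(
    "application/spdx+json;q=0.5, application/vnd.cyclonedx+json"
)
```

With this approach the discovery mechanism does not need to know about SBOM formats at all; a new format only adds an entry to the table of supported media types.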

B

J

Yes, Jeff, it's a great question, and our problem in answering that question is that it is a matter of how the SBOM format is organized, and we're trying to be format-neutral. So that gets us into: well, suppose you've got one SBOM and you're referencing another SBOM, right?

J

How does that referencing work? I think we have to add an example of that sort of thing going on, but I need a live example, and right now the people who are doing the format work aren't yet up to that task. That's another reason why we have to slow-roll this. This is really live technology work across several different industries.

A

Hi, this is Henk, as an individual, no hats on, with a comment on the question of one or more SBOMs to be discovered. That is, I think, strongly related to the dependencies of SBOMs on each other. If they are somehow related, there might be dependencies between them, and you have to retrieve all of them. Some subsets of these might be from one authority, and so discovered at one location.

A

There might be more than one that already somehow depend on each other, which could be handled via a native nesting in the document; that would solve it. With binary stuff, I don't know, you could do a CBOR sequence; that's relatively easy. With other stuff, yeah: on the 3T SPDX side of the modeling that's happening under the NTIA umbrella, they have this artifact concept that also has some [unclear] in it, and again it can relate to other SBOMs. So yes, these things can point to each other.

A

A

So, if you go with an example like CycloneDX, I would at least make it three, the golden number, to show a little bit of diversity here. I'm fine with removing SWID as the top-level SBOM reference, because by itself, like a CVE, it's not so super useful. It will be used by other things. So that is, I think, totally fine.

J

J

O

So, talking about OpenMetrics, I need to talk about Prometheus for a second. Of course, Prometheus is where all of this is coming from. Prometheus is inspired by the original monitoring system within Google. It's a time series database. The protocol underlying this effort has been stable since 2014.

O

O

But it's still a competing thing, and so we wanted to have a neutral name for the whole effort. Having done networking for 15 years myself, I still consider the IETF and RFCs the gold standard of how to communicate standards on the internet, so that is also one of the founding goals of OpenMetrics: to basically do a really careful evolution of what we have. We had input from dozens of people. We have used OpenMetrics in production in Prometheus for three years now. We had outside vendors reimplement it from our reference code.
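Editor's sketch: for readers who have not seen the text exposition format that Prometheus and OpenMetrics use, the following shows roughly what a single counter looks like on the wire. It is a simplified illustration only; the helper names are made up for this example, label values are not escaped, and the authoritative rules are in the OpenMetrics specification itself.

```python
# Simplified illustration of an OpenMetrics-style text exposition.
# Helper names are hypothetical; label-value escaping and other metric
# types are deliberately left out of this sketch.

def render_counter(name, help_text, samples):
    """Render one counter family as a list of exposition lines.

    `samples` is a list of (labels_dict, value) pairs.
    """
    lines = [f"# TYPE {name} counter", f"# HELP {name} {help_text}"]
    for labels, value in samples:
        label_str = ",".join(f'{k}="{v}"' for k, v in sorted(labels.items()))
        selector = f"{{{label_str}}}" if label_str else ""
        # Counter samples carry the `_total` suffix in OpenMetrics.
        lines.append(f"{name}_total{selector} {value}")
    return lines


def render_exposition(families):
    """Join metric families and terminate the exposition with the EOF marker."""
    lines = [line for family in families for line in family]
    lines.append("# EOF")
    return "\n".join(lines) + "\n"


text = render_exposition([
    render_counter("http_requests", "Requests served.", [({"code": "200"}, 17)]),
])
```

Printing `text` gives a `# TYPE` line, a `# HELP` line, one sample line, and the closing `# EOF` marker, which is the general shape a scraper would fetch over HTTP.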

O

O

B

I

I

E

E

E

O

O

Yes, this is one thing which is basically ready to be used to transmit data. You might be implying that this is better split up into different parts within the context of the IETF. If I'm right with this assumption, there is absolutely no issue from our end. Getting feedback on how to do this properly is my main intention.

B

P

P

P

O

I agree that it's super icky to introduce new standards, and it's not very likely that the old standards will fully go away if you introduce new ones. We had this debate for quite some time before even starting this, but given the adoption even back then, we decided that there is a need in the industry to standardize on this, and we see quite some confusion around people not doing it precisely correctly, or not at all. So, yeah.

J

That's a question, and then a comment. One thing that we normally do in these processes is that the first thing we do is just document existing practice, so as not to perturb what's going on. You usually produce an informational document first and then a standard second, and the idea is that, if we want to do the evolution, people know what they're evolving from, from an IETF process standpoint.

J

I mean, usually that can be pretty lightweight, as long as you can write out the description, and I would absolutely support getting the informational document out quickly. You know what the wire format is today. You know what your information model is today. And then people can go on from that. The other thing that this group is usually pretty good about is understanding that there actually is an installed base, so they don't do crazy things.

J

Even though, as Benoit says, we do have all these other information models, if what you have is working for you, I don't think there's a lot of religion in the IETF on that point. We've just been talking, as a separate example, about Wireshark and how to standardize pcap and pcapng. So it's not a religious point, but that would normally be the path. Thanks.

O

O

O

There is mainly one other major initiative in the same area, which is OpenTelemetry, and the two groups are working with each other, with OpenTelemetry trying to achieve 100% compatibility with OpenMetrics, for the simple reason of adoption. It's probably going too far to give the complete dump of the history and everything right now, but we can do this on the mailing list or in whichever form, or I can go there now. I don't care.

J

K

Thanks, this is Warren. I think that it would be really good if we could get this documented. Clearly, it's very widely deployed, and having the official way to do it written down would be really good. But I think this needs to start off as an informational document, and, like Eliot and, I think, Benoit, I have some concerns about whether we're getting full representation from everybody who's actually participating.

K

O

B

I'd hate to do this, but we're going to have to cut the mic in order for us to get through the remaining three sessions and leave any time for the ops area. So I would ask Qin and Eliot, if you could, to take your additional comments to the list, and we're going to need to move on. But the good news is there's a lot of discussion and a lot of interest. So thank you, Richard, for coming to present, and let's keep this going on the mailing list. Thank you.

E

E

E

R

R

R

Now, what you would typically do for something like TCP (next slide) is, of course, take something like a packet capture somewhere in the network and then analyze that using a tool like, for example, Wireshark. You can still do that for QUIC and other encrypted protocols, but it's more difficult. Next slide.

R

QUIC encrypts a lot of its transport metadata as well. So if you want to do this, you would have to store the entire packet capture, including the very large payloads, and then also, of course, the TLS decryption secrets to get the final information out of there, which could lead to problems at scale. And there's a second long-standing problem with this approach. Next slide.

R

So, next slide. What we eventually ended up doing for QUIC was to take a different approach: instead of logging in the network or taking packet captures, we extract this information from the implementations directly, at all of the endpoints or, let's say, vantage points, because we can of course also look at intermediate devices as well.

R

This approach then allows us to log only the things that we actually need, which is better for privacy and also keeps the overhead lower. This is, of course, not a fantastic new idea. Most implementations have some kind of debug logging output, but the idea of qlog was to have a single way, a single format, a single schema that all the different implementations can reuse.

R

That

means

the

q

log

is

is

far

from

rocket

science.

Basically,

what

we

have

now

is

a

mapping

onto

json,

and

we

just

have

a

schema

that

defines

how

you

should

log

several

individual

event

types.

For

example,

what

should

the

receive

packet

look

like,

or

if

you

indeed

want

to

log

some

congestion

control

stuff?
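
Editor's illustration of the idea described above: a minimal sketch of logging individual event types as JSON under one shared shape. The field names (`time`, `name`, `data`) and the event names are assumptions chosen for illustration, not the schema defined in the qlog drafts.

```python
import json


def make_event(time_ms, name, data):
    """Build one log event as a plain dict (hypothetical field names)."""
    return {"time": time_ms, "name": name, "data": data}


def serialize_events(events):
    """Serialize events as newline-delimited JSON, one event per line."""
    return "\n".join(json.dumps(event, sort_keys=True) for event in events)


# Two illustrative event types: a received packet and a congestion control update.
events = [
    make_event(12.5, "packet_received", {"packet_number": 7, "length": 1200}),
    make_event(13.0, "congestion_metrics_updated", {"cwnd": 14600, "bytes_in_flight": 2400}),
]
log_text = serialize_events(events)
```

Because every implementation would emit the same shape, a single tool can parse `log_text` line by line regardless of which stack produced it.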

R

R

This

approach

turned

out

to

work

quite

well

for

QUIC

having

both

the

common

format

and

some

public

tools,

at

least

leading

to

most

of

the

QUIC

stacks,

currently

actually

outputting

the

format

directly.

There's

also

some

experience

with

large

scale

usage

by

Facebook

who

are

using

this

to

monitor

and

analyze

their

QUIC

deployments

at

scale.

R

So,

given

this

relative

success

for

QUIC,

we

are

now

moving

to

adoption

of

this

work

inside

of

the

QUIC

working

group

next

slide,

which

is

intended

to

be

part

of

the

recharter

of

the

working

group,

and

there

are

two

goals.

The

main

one

is,

of

course,

finalizing

this

for

QUIC

and

HTTP/3,

but

the

secondary

goal,

which

is

one

of

the

reasons

why

I'm

talking

to

you

right

now,

is

because

we

believe

that

qlog

can

be

used

for

more

than

just

QUIC

and

HTTP/3.

R

R

But

the

thing

is

that

I,

and,

if

we're

honest,

not

many

people

in

the

QUIC

working

group

are

all

that

into

this

type

of

work

or

all

that

experienced

with

these

types

of

formats,

so

we're

hoping

to

get

a

bit

of

feedback

from

you

on

these

things,

I'm

going

to

highlight

just

three

of

the

main

ones

that

I

think

might

be

most

relevant

to

you

all

here.

Next

slide.

R

The

first

one

is

that

we're

currently

using

JSON

as

a

serialization

format,

which

is

very

nice,

and

it's

very

flexible.

It's

also

not

the

most

performant

approach

that

you

can

have.

So

there

are

some

discussions

about

maybe

moving

this

to

something

else.

What

we've

currently

done

in

the

draft

to

kind

of

bypass?

R

R

So

what

we're

thinking

about

now

is

having

some

kind

of

a

sanitization

level

approach

where

you

have

different

use

cases

that

are

more

or

less

strict

in

how

to

sanitize,

and

we

give

some

guidelines

on

how

to

do

this,

which

hashing

to

apply

or

which

fields

should

be

left

out

completely.

Again,

this

is

something

that

I

think

you

here

have

probably

encountered

at

some

point

or

the

other

when

collecting

information.

It

would

be

interesting

to

hear

of

similar

approaches.
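
Editor's illustration of the sanitization-level idea described above: a minimal sketch in which each level names the fields to hash and the fields to leave out completely. The level names, field names, and the choice of salted SHA-256 are all assumptions for illustration; the talk does not specify them.

```python
import hashlib

# Hypothetical policy: per level, which fields are hashed and which are dropped.
POLICY = {
    "debug": {"hash": set(), "drop": set()},
    "internal": {"hash": {"src_ip", "dst_ip"}, "drop": set()},
    "public": {"hash": {"connection_id"}, "drop": {"src_ip", "dst_ip"}},
}


def hash_value(value, salt):
    """Return a truncated salted SHA-256 digest of the value."""
    digest = hashlib.sha256((salt + str(value)).encode("utf-8")).hexdigest()
    return digest[:16]


def sanitize(event, level, salt):
    """Return a copy of the event with the level's policy applied."""
    policy = POLICY[level]
    sanitized = {}
    for key, value in event.items():
        if key in policy["drop"]:
            continue  # field left out completely at this level
        if key in policy["hash"]:
            sanitized[key] = hash_value(value, salt)
        else:
            sanitized[key] = value
    return sanitized
```

A stricter level removes or obscures more fields, so the same log can be shared with different audiences without re-instrumenting the implementation.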

R

R

If

this

turns

out

to

all

work

quite

nicely

and

can

be

generalized,

the

idea

is

to

go

for

a

separate

working

group

down

the

line

that

helps

finalize

all

of

this,

but

for

now,

for

practical

reasons,

we're

going

to

keep

this

inside

of

the

QUIC

working

group,

where

the

work

will

start

in

a

couple

of

months.

So

I'm

hoping

some

of

you

might

be

willing

to

join

us

there

to

give

us

some

insight.

R

S

Thank

you

I'll

try

to

keep

this

quick,

I'm

going

to

apologize

for

not

being

familiar

with

your

material.

It's

the

first

time,

I'm

seeing

related

stuff.

The

two

comments

I'd

give

you

is

that

I

suggest

you

consider

looking

at

YANG,

not

specifically

because

you

think

that's

the

best

match

for

things,

but

one

of

the

features

that

the

language

is

enjoying

right

now

is

that

it's

a

modeling

language,

the

actual

serialization

formats

that

YANG

is

allowed

to

output

are,

you

know,

multiple,

so

you

can

get

JSON

format,

CBOR.

S

S

My

second

comment

is,

I

think

the

majority

of

your

work

is

going

to

be

figuring

out

how

to

define

a

common

header

that

can

be

used

for

the

exchange

model

for

passing

these

objects

around

once

you

have

that,

the

protocols

for

doing

subscriptions

for

things

of

interest

are

going

to,

I

think,

fall

out

from

there.

A

Okay,

also

making

this

very

quick

so

to

speak.

Sorry,

the

you

were

talking

about

pcap

with

TCP

old

school,

how

you

would

do

it.

Wireshark

was

an

icon

there.

There

is

an

effort

that

wants

to

document

pcap

here.

Unfortunately,

that

effort

was

a

little

bit

the

pipe

that

is

fueling

that

effort.

It

was

a

little

bit

congested

for

this

idea.

H

B

B

H

And

just

while

it's

going

on

the

background

here

of

why

I'm

asking

this

question

is

because,

obviously,

if

this

works

in

the

QUIC

working

group,

there's

obviously

a

lot

of

other

work

going

on

there,

and

it

may

be

that

the

people

who

are

interested

in

this

in

particular

may

find

that

they

get

swamped

with

other

stuff.

And

so

maybe

we

have

discussions

with

Martin

and

I

as

to

whether

splitting

up

another

working

group

for

this

work

makes

more

sense

or

whether

it

would

be

better

in

a

different

home.

B

Well,

having

a

do

not

raise

for

the

negative

is

probably

not

the

great

idea.

If

we

wanted

to,

we

could

do

it

as

if

we

were

like

we

could

say

the

opposite,

but

so

far

in

the

interest

of

time.

I'll

just

call

it

here.

12

hands

have

gone

up

in

support

of

interest

here.

So,

of

the

53

participants,

there's

clearly

some

interest

in

that.

T

T

T

This

can

really

help

network

operators

immediately

understand

the

root

cause

and

can

facilitate

automation

to

fix

the

underlying

issues

without

any

manual

intervention.

So

this

proposal

actually

focuses

on

extensions

to

IPFIX

for

exporting

the

dropped

packet

exception

information

in

IPFIX

format.

This

was

introduced

at

IETF

109.

T

T

T

We

have

added

section

4.2.2

for

forwarding

next-hop

ID

with

some

additional

description

and

an

L3VPN

network

example

on

how

to

populate

this

field

in

some

use

cases.

Additionally,

we

have

added

section

4.2.1

with

some

additional

justification

on

forwarding

exception

code.

T

T

S

Hi

very

brief

question:

after

looking

through

the

document,

so

I'm

seeing

some

of

the

codes

for

why

drops

are

happening.

Has

there

been

discussion

about

providing

drop

information

about

what

layer

it's

happening

at

like,

you

know,

maybe

a

layer

2

forwarding

exception,

layer

3

forwarding

exception,

etc.?

S

S

M

What

I

would

like

to

see

is

a

bit

more

on

why

you're

introducing

new

fields.

What

is

the

main

benefit

because,

for

instance,

the

IPFIX

entity

89

is

focusing

on

dropped,

forwarded

and

consumed

and

from

what

I

understood

is

your

main

motivation

is

basically

to

introduce

an

enterprise

bit

and

also

increase

the

code

point

space

for

dropped,

but

you're

not

referring

to

any

other

use

cases

like

forwarded

or

consumed.
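
Editor's illustration for the element discussed above: IPFIX Information Element 89 (`forwardingStatus`) packs a 2-bit status (unknown, forwarded, dropped, consumed) and a 6-bit reason code into one octet. The sketch below decodes a single octet under that layout; the function name is hypothetical, and reason codes are returned as raw numbers rather than looked up in the IANA registry.

```python
# The two most significant bits carry the status.
STATUS_NAMES = {0: "unknown", 1: "forwarded", 2: "dropped", 3: "consumed"}


def decode_forwarding_status(octet):
    """Split a forwardingStatus octet into (status name, reason code)."""
    if not 0 <= octet <= 0xFF:
        raise ValueError("forwardingStatus is decoded here as a single octet")
    status = (octet >> 6) & 0x3   # top two bits
    reason = octet & 0x3F         # low six bits
    return STATUS_NAMES[status], reason
```

With six bits for the reason, each status has at most 64 reason codes, which is the limit behind the request for a larger code point space for drops.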

M

U

It's

pretty

much

well

known

what

a

given

drop

code

means

and

what

is

its

behavior

on

the

box.

For

example,

on

a

given

box,

the

TTL

expiry

might

be

a

dropped

packet,

but

maybe

on

a

different

box

the

TTL

expiry

might

be

the

one

which

is

actually

a

consumed

exception

in

the

sense

that

the

packet

is

sent

to

the

control

plane,

which

will

respond

with

an

ICMP

unreachable.

U

So

in

that

sense,

what

we

kind

of

initially

thought

is

that

probably

it

is

the

drop

code

which

in

itself

will

explain

what

kind

of

exception

it

is.

But

in

addition

to

that

again,

what

we

also

considered

is

remember:

all

of

this

encoding

is

consuming

the

forwarding

path

bandwidth.

So

what

we

want

is

only

the

organic

data

which

only

the

data

path

knows

to

be

encoded

and

no

other

extra

information

which

can

theoretically

be

derived.

U

For

example,

if

a

given

type

of

code

can

implicitly

mean

that

it

is

dropped

or

consumed,

then

we

don't

want

to

have

an

extra

bit

and

consume

data

path

bandwidth

in

order

to

do

that

extra

encoding.

So

that

was

one

of

the

motivations

why

we

didn't

add

it,

but

at

the

same

time

I

do

understand

what

you're

also

indicating:

if

we

have

a

marriage

of

the

two,

it

kind

of

provides

a

backward

compatibility.

Where

I

mean

some

entity

might

not

want

to

report

both

of

them

together.

U

For

the

second

part,

I

think

the

concern

is

mainly

that

forwarding

status

in

the

current

form

is

very,

very

limited

and

again

what

we

are

trying

to

indirectly

do

by

forwarding

status

is,

we

are

trying

to

standardize

the

drop

codes

right

and

the

problem

is,

we

have

such

a

variety

of

ASICs

in

the

networking

domain

today,

with

each

of

them

having

their

own

proprietary

pipelines

right.

Some

of

them

use

a

hard-coded

ASIC

pipeline,

others

are

software

driven.

U

There

are

yet

others

which

are

microcode

driven

and

all

of

them

have

their

own

category

and

sets

of

exceptions

for

to

give

an

example.

On

the

juniper

side,

one

of

our

ASICs

reports

about

200

odd

exceptions

in

the

current

format

and