►

From YouTube: IETF110-DETNET-20210309-1430

Description

DETNET meeting session at IETF110

2021/03/09 1430

https://datatracker.ietf.org/meeting/110/proceedings/

A

A

Yesterday,

this

is

an

itf

meeting.

I

think

you

all

know

that,

and

I

most

are

familiar

with

the

rules

that

govern

our

participation

and

our

process.

If

you're

not,

please

go

look

up

the

note

well

everything

we

say

here:

it

becomes

part

of

our

public

record

and

it

is

being

recorded

we're

using

meat,

echo

you're

here

with

me.

So

of

course

you

know

that

and

meet

echo

automatically

handles

our

blue

sheets

and

it

does

have

a

chat

channel.

The

jabber

is

still

there.

A

If

you

want

to

go

directly

into

that,

note-taking

you've

seen

in

chat

we're

using

code

emd,

please

jump

in

and

help

us

take

notes

and

capture.

What

is

said.

That's

particularly

important

if

you

make

a

comment,

whether

it's

in

jabber

or

in

at

the

mic,

I'd

like

to

have

that

captured

comments

that

are

set

in

jabber

will

be

repeated

in

general.

A

If,

for

some

reason

you

don't

want

it

repeated,

that's

fine,

but

then

don't

add

that

to

the

minutes,

as

I

mentioned

yesterday,

the

tools

page

seems

to

be

having

a

little

bit

of

trouble.

So

you

might

want

to

go

to

data

tracker

I'll

point

out

that

the

data

tracker

now

has

the

htm

the

tools

format

html,

which

personally

works

much

better

for

me,

so

may

wish

to

go

there

we're

in

our

second

session.

A

We

have

a

joint

session

on

friday,

with

that's

being

hosted

by

pals,

it's

joint

with

mpls

in

spring,

and

it's

really

focused

on

the

mpls

label,

stack

and

pseudo

wire,

related

control,

word

and

oem,

and

how

those

things

are

going

to

progress

and

there's

lots

of

lots

of

work

going

on

in

that

area.

So,

if

you're

interested

in

that

topic,

I

suggest

you

join.

A

A

As

I

mentioned

yesterday,

we've

added

a

new

step

to

our

itr

disclosure

process.

Specifically,

if

you

join

a

as

a

contributor

or

as

a

co-author

to

a

working

group

document,

we

ask

that

you

make

an

ipr

statement

at

that

time.

We

reviewed

the

status

of

our

drafts

yesterday,

so

I'm

not

going

to

redo

that

today.

A

C

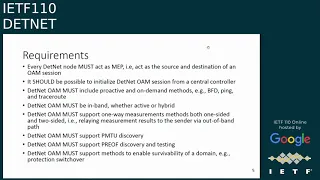

Okay,

so

we've

been

working

on

on

a

document

addressing

the

comments

and

discussions

that

we

had

since

previous

109

meeting

and

the

structure

is

the

same.

What

was

updated

is

that

we

added

the

requirements

section

that

previously

was

a

part

of

a

working

group

adopted

document

on

oem

over

mpos

data

plane,

and

it.

C

C

So

the

telemetry

information

can

be

the

process

can

be

separated

into

two

sub

processes.

One

is

a

trigger

and

originated,

and

the

second

is

a

collect

and

transport

for

the

network.

Analytics

iem

tracing

uses,

two

methods

that

characterizes

end-to-end

and

hot

by

hop

in

addition

for

iem

tracing

is

defined,

direct

export,

which

every

node

that

originates,

telemetry

information

exported

to

the

collector

separately

and

another

mode

of

collecting

transporting

was,

is

proposed

and

discussed

in

npp

and

working

group.

It's

a

hybrid

two-step.

C

It

is

using

the

follow-up

packet

that

follows

the

same

path

as

a

trigger

packet

using

the

same

encapsulation

and

collects

telemetry.

Why?

We

think

it's

important

to

discuss

it

because,

especially

for

the

net

net,

where

the

network

resources

dedicated

to

detmet

service

are

scarce

and

precious

their

method

of

collecting

and

transporting

on

path.

Telemetry

is

important

because,

as

you

see

for

iem

tracing

it,

it

embeds

the

telemetry

information

into

the

data

packet

itself

into

trigger

packet,

whereas

direct

export

and

hybrid

two-step

can

be

transported

out

of

that.

C

C

C

C

By

sharing

the

same

encapsulation

of

their

flow,

that

is

being

monitored,

active

oem

is

in

band,

and

so

thus

we're

able

to

do

interpretation

of

their

failure,

detection

or

performance

metric

collected

and

apply

it

to

their

network

analytics.

Another

requirement

is

that

oem

must

support

one-way

measurement,

whether

it's

a

one-sided

or

two-sided.

C

A

That

this

this

slide

and

this

section

of

the

document

we

didn't

get

a

lot

of

attention,

as

we

do

adoption

and

I'll

just

remind

people

that

they

can

make

their

comment

on

the

list

and

say

they

want

it

addressed

as

part

of

the

working

group

process.

It

doesn't

have

to

block

adoption

just

because

you

disagree

with

one

of

these

points,

but

it

is

good.

You

express

your

view

during

adoption.

C

C

A

A

I'd

like

to

just

ask

people

if

they

have

objections,

because

if

we,

if

we

do

the

tool

and

ask

for

objections,

we

don't

know

what

the

objection

is.

So

I

I

really,

I

think,

we'd

prefer

to

hear

what

the

objection

is,

whether

that

someone

types

it

in

jabber

or

says

it

at

a

mic.

I

think

those

are

good.

Now,

of

course,

we

are

going

to

do

this

on

the

list,

so

there's

always

an

opportunity

there.

A

Personally,

I

actually

have

some

technical

comments

on

the

document,

but

I

don't

think

it

blocks

adoption

so

I'll

make

those

comments

during

the

adoption

call

and

we'll

address

them

during

work,

normal

working

group

processing.

But

if

someone

has

an

objection,

probably

a

strong

one

it'd

be

great

to

hear

hear

from

them.

A

E

We

had

discussions,

particularly

in

the

rings

meeting

on

what

we

intend

to

you,

know

the

focus

of

the

draft

to

be,

and-

and

I

highlighted

the

keywords

here

that

are

copied

from

the

from

from

from

the

abstract,

which

means

we

are

proposing

changes

to

rcp

and

surf

for

a

better

alignment

with

ours

with

tsn.

We

called

it

rsvp

dash

tsn

in

the

document,

and

that

means

we're

limiting

the

scope

of

the

draft

to

to

those

changes.

E

E

A

A

A

E

E

Thanks

so

we

we,

we

clarified

the

use

cases

as

a

change

from

the

last

version.

We

now

present

two

use

cases

what

I

would

call

a

hybrid.net

over

to

your

center,

where

customer

network.

The

difference

is

that

we

have

in

in

one

case,

a

a

core

network

with

rsvp

support

and

the

other

ones.

We

don't

that's

a

difference

in

the

use

cases.

E

Section

three

is

now

focused

on

the

design

rationale,

which

are

the

former

sections

two

two

two

three

two

five,

so

we

merged

them

into

a

new

section

and

then

a

focal

section,

four

on

the

rsvp

tsn

proposal,

which

took

the

layer

into

actions

revised

from

the

previous

version.

So

it's

it's

a

revised

part,

but

it's

largely

taken

from

from

the

previous

draft

and

we

also

changed

the

api

descriptions

to

align

with

the

terminology,

which

was

another

comment

we

received

so

in

the

structure.

If

you

go

to

the

next

slide,

please.

E

Design

rationale,

section

three,

as

I

mentioned

before,

and

then

section

four

is:

is

it's

the

proposed

design

which

which

has

the

layer

interaction,

the

the

interactions,

the

the

api

descriptions

and

the

message

formats

will

come

in

the

next

version?

We

didn't

finish

them

in

time

for

the

deadline.

So

that's

the

last

subsection

of

section

four.

I

don't

know

why

the

numbers

went

wrong.

That's

obviously

not

section,

seven

there

but

never

mind.

That's

at

the

end.

E

Please

so

the

we've

received

feedback

both

in

the

in

the

in

the

109,

but

also

the

interviews

meeting

here

that

we

tried

to

address

in

the

new

version.

So

the

the

scenario

was

the

one.

You

know

what

type

of

scenario

we

had.

That's

why

we

revised

the

use

case

section

to

provide

their

hopefully

a

clearer

example.

E

Thanks

to

the

next

one,

please

so

the

next

steps

I'd

like

to

do

is

to

fill

in

the

message

formats

and

the

protocol

information,

as

I

didn't

get

that

done

in

time

and

obviously

would

like

to

seek

more

input

from

the

list

potential

which

brings

me

to

the

last

slide.

If

you

can

just

jump

to

that

one

already,

which

means

we

would

like

to

hear

feedback

comments,

potentially

anybody

who's

happy

to

help

us

co-authors

and

contributions

are

very

welcome.

E

F

E

F

Let

me

suggest

that

the

the

the

presentation

material

did

a

really

nice

job

on

covering

all

the

various

data

planes

that

would

work,

it'd

be

great,

rsvp

covered

all

of

those,

and

as

part

of

that,

could

I

suggest

you

find

an

acronym

other

than

tsm,

which

is

going

to

going

to

create

some

bad

implications

about

which

data

planes.

This

applies

to.

A

So,

unlike

david,

I

think

your

your

the

title

is

appropriate

because

you

really

are

limited

there,

but

I'm

going

to

go

back

to

a

comment

that

I

believe

we

made

at

the

last

couple

times

that

you've

talked

about

this

draft.

Is

we

don't

have

a

definition

of

what

rsvp

looks

like

for

debt

net

in

general

and

that's

for

all

the

use

cases

that

david

just

talked

about

where

for

ip

for

mpls

for

aggregation?

A

We

don't

know

what

that

looks

like

and

there's

a

a

large

body

of

work

we

can

build

on,

in

particular

the

traffic

engineering

extensions

to

rsvp,

and

I

know

that

you're

not

building

on

those

you're

building

on

inserve.

So

you

know,

what's

your

thought

about

addressing

these

base

capabilities

from

the

comment

before

from

david's

comment,

as

well

as

from

previous

meetings.

E

Yeah,

so

when

we

discussed

about

how

to

move

forward

because

the

camera

as

you

as

you

said,

was

made

during

the

last

meeting

to

go

for

an

rsvp

deadnet,

we

found

the

the

access,

at

least

for

us,

for

the

for

the

authors

that

we

had

too

large.

So

we

honed

it

down

to

tsn,

but

I

do

agree,

and

but

that

was

the

discussion

we

had

in

the

last

meeting,

but

there's

a

whole

body

of

work

to

be

done

on

that

net

view

and

rsvp.

E

If

you,

if

you

will,

but

it's

probably

that

we

didn't

want

to

take

that

on

so.

Hence

we

hung

ourselves

down

into

this

end,

but

it's

a

little

bit

kind

of

avoiding

the

work,

but

I

think

it's

maybe

also

starting

the

work.

So

we

wanted

to

be

concrete

from

the

from

the

tsn

angle

and

can

then

maybe

reopen.

You

know

that

body

of

work

or

that

discussion

once

you

know

we

move

forward

if

you

will

right

so

that

was

that

was

a

little

bit.

I

would

compromise

in

a

way.

G

Yes,

this

is

also

what

I

wanted

to

highlight,

so

I

I

think,

thank

you

very

much

for

the

update

based

on

that.

It

is

much

more

clear

what

was

your

focus

with

this

draft

and

I

I

would

like

to

join

the

previous

comments

that

the

title

might

be

misleading,

because

what

you

are

focusing

here

is

if

rsvp

is

used

for

that

net

signaling

and

you

are

using

graph

for

tsn

signaling

how

these

control

plane

signaling

can

interact

in

a

sub

network

scenario.

G

So

I

think

that

is

your

use

case,

and

this

is

pretty

well

described

in

the

updated

draft.

So

thanks

for

that,

but

I

think

also

that

this

is

something

that

must

be

highlighted

in

the

title

as

well,

that

you

have

a

special

focus

on

a

special

scenario

and

not

solving

the

control

plane

signaling

for

that

net.

E

The

best

thing

to

do,

instead

of

reflecting

the

actual

focus

in

the

draft,

so

that

one

I

do

agree

with,

I

said

I

mean

there

is

an

interest

to

come

back.

I

mean

I

discussed

this

with

the

with

my

courses

to

to

look

at.

You

know

the

larger

problem

still

of

control,

plane

signaling,

you

know,

but

it

would

be.

E

I

see

this

as

a

body

work

that

probably

goes

beyond

the

competences

that

we,

the

three

of

us,

have

and

that's

why,

in

the

meantime,

we

would

probably

like

to

just

stick

to

it.

We

can

just

reflect

that

by

narrowing,

also

the

scope

clearly

in

the

title

and

renaming

the

title,

but

certainly

being

interested

in

addressing

the

conor

open,

signaling

problem

at

some

point,

as

contributors.

D

F

F

E

F

D

F

A

I

A

One

comment

about

I'm

sorry

for

the

delay.

One

comment

on

on

the

slide

you

say:

dettnet

has

scoped

a

single

administrative

domain.

It

also

can

support

a

closed

group

of

administrative

control,

so

it

could

include

cooperating

administrative

domains.

So

I

think

you

yours

your

comment

there.

That

sub-bullet

is

not

aligned

with

our

charter,

so

just

be

aware

of

that.

I

I

The

conclusion

of

conclusion

is

that

nowadays,

we

are

short

of

a

common

and

a

simple

method

to

provide

this

loneliness

service

in

large-scale

sp

networks,

and

our

method

mentioned

in

the

draft

show

a

potential

solution

that

is

both

simple

and

scalable.

Meanwhile,

it

does

not

need

the

type

factorization.

I

I

So

we

think

that

they

should

know

both

in

the

network,

and

in

this

case

the

main

problem

is

to

decrease

the

microburst.

In

our

mechanism.

We,

it

uses

flow

shipping

on

the

edge

and

on

the

intermediate

nodes,

we

do

the

interface

graduated

shipping,

we

change

the

regular

behavior

or

on

the

router.

It

is

to

say

that

the

router,

following

all

the

traffic

as

soon

as

possible

in

our

maximum,

we

use

the

shipping

method

in

the

tsn

so

that

the

traffic

will

be

forwarded

in

out

of

the

way

next

week.

Please.

I

Comparing

the

cancer

mechanism,

our

mechanism

is

simpler

and

more

scalable.

Of

course,

we

have.

Some

disadvantage

is

about

the

uncertainty,

everything

that,

in

our

maximum

uncertainty,

perhaps

is

higher,

but

okay,

when

the

uncertainty

is

higher,

perhaps

some

of

the

final

use

case

cannot

be

exported

in

our

mechanism

next

page,

please.

I

We

think

that,

for

example,

in

a

network

that

the

critical

traffic

is

not

too

much

or

low

density

traffic

is

not

that

critical

and

the

perhaps

the

the

first

step,

the

loaded

traffic.

The

note

that

critical

will

be

would

appear

in

the

large

scale

as

united

workforce,

and

we

also

think

about

to

do

this

approximate

evaluation

of

the

society.

I

We

need

to

check

whether

the

mechanism

we

can

work

can

work

for

those

low

latency

traffic.

They

are

not

was

that

critical

and

we

think

that

the

replication

and

elimination

method

in

testing

can

have,

because

in

our

mechanism

perhaps

we

cannot

ensure

that

a

hundred

percent

losses,

this

packed

replication

of

the

elimination,

private

hype

to

grow

some

packets,

that

have

lost

the

network,

and

this

is

the

last

page.

I

think

if,

if,

if

you

have

any

problem

in

any

question,

you

can

talk

now.

F

Yeah,

so

looking

at

the

description

of

this,

the

approach

looks

a

lot

like

diffserv,

where

the

flows

are

individually

policed

at

the

edge

and

then

there's

a

flow

aggregate

traffic

traffic

conditioning

conditioning

in

the

core.

The

scalability

comments

are

definitely

on

the

mark

is

that

that

was

one

of

the

reasons

that

diffserv

was

designed.

The

way

it

is

I'd

encourage

of

the

authors

to

take

a

look

at

a

diffserv

framework,

that'd

be

rfc

2475

place

to

start.

A

I

A

E

K

I

understand

that

that's

what

has

to

be

done,

maybe

one

day

meet,

echo

will

have

a

button

for

us

to

push

to

move

someone

else's

slides,

hi

everyone

jakob

stein,

it's

nice

to

be

back

in

the

itf

after

a

long

time.

Next,

next

slide,

please

the

reason

I

haven't

been

coming

to

the

itf

for

a

long

time

is

I've

been

wasting

my

time

with

5g

exhale

transport,

and

I

really

could

use

this

time

to

plug

a

book

which

just

came

out,

but

I

won't

do

so.

There

are

problems

having

to

do

with

delays.

K

I'm

sure

you

all

know

when

doing

backhaul

of

mobile

and

it's

gotten

much

worse

in

5g

and

people

have

thought

that

perhaps

tsn

or

detnit

mechanisms

are

can

be

adapted

to

solve

this

problem

and

it

turns

out

it

doesn't

work

very

well.

In

particular,

I'm

talking

about

qbv,

which

I

realize

is

not

a

death

net,

it's

more

of

a

tsn

approach,

but

I

have

to

define

my

first

line

here.

K

The

mechanism

I'm

talking

about

is

for

what

I

call

a

time.

Sensitive

packet

flow,

where

I

mean

by

time

sensitive

simply

that

the

packets

have

to

be

delivered

within

only

a

little

bit

more

than

the

physically

possible

minimal

time

to

get

through

the

network,

and

when

you

try

to

use

mechanisms

that

do

time

gating,

it

turns

out

that

they

don't

scale

and

if

you

actually

try

to

give

an

upper

bound,

the

upper

bound

is

much

higher

than

what

is

physical,

physically

possible.

K

And

if

you

don't

plan

it

correctly

and

it's

almost

impossible

to

play

it

correctly.

The

efficiency

on

the

line

goes

down

and

we're

wondering

if

there

is

a

mechanism

that

doesn't

suffer

from

all

of

these

problems

and

it's

interesting

by

the

way

that

the

802.1

people

themselves,

the

tsn

people

in

their

cm

document

for

exalt,

recommend

frame

preemption,

but

don't

recommend

their

own

mechanism

for

for

this

this

case

and

which

I

think

is

basically

because

it

doesn't

scale

very

well.

Can

I

have

the

next

slide.

Please.

K

It

has

to

go

to

and

scheduling,

logic

which

decides

which

packet

of

all

those

packets

that

have

to

be

transmitted

over

the

output

port

should

be

first,

and

I

just

want

to

mention

that

when

you

think

about

it

in

terms

of

segment,

routing

and

tsn

or

deathnet,

rather

than

saying

which

port

and

which

packet

to

send

over

that

port,

you

can

think

of

it

as

where

to

send

the

packet

and

when

to

send

the

packet.

In

other

words,

forwarding

is

basically

saying

given

this

packet,

it

just

arrived.

K

K

So

what

am

I

proposing?

I'm

proposing

an

alternative

to

using

qbv

and

similar

mechanisms

that

relies

on

using

a

stack

data

structure

added

to

the

packet

in

the

packet

headers

I'll

mention

in

a

moment.

What

has

to

be

in

that

stack?

There

are

two

options.

One

is

only

for

the

scheduling

problem

once

one

for

the

both

for

the

forwarding

and

the

scheduling

problem,

and

the

nice

thing

about

this

alternative

is

that

it

can

be

optimized

in

a

scalable

way

it

can.

Its

configuration

can

be

adapted.

K

In

other

words,

you

can

add

a

new

flow

without

having

to

start

touching

a

lot

of

network

elements,

a

lot

of

routers

in

the

network,

during

which

time

you'll

have

inconsistencies

and-

and

you

might

have

packed

quote-

either-

be

lost

or

fail

to

meet

their

deadlines,

and

it

can

get

down

to

very,

very

close

to

the

physical

delay

and

the

mechanism

using

a

stack.

It

has

sort

of

an

analogy

with

segment

routing.

K

K

K

But

it

hasn't

been

that

long

in

the

system,

so

you

might

decide,

let's

use,

what's

called

earliest

deadline

first

or

edf,

and

that

simply

puts

the

packet's

deadline,

the

absolute

time

that

it's

supposed

to

reach

the

other

end

of

the

network

or

the

final

host,

and

that

sounds

better.

It

is

better,

however,

that

obviously

prioritizes

packets

with

an

earlier

final

dead

time

deadline

in

each

router

along

the

way,

without

knowing

how

far

it

is

from

this

router

to

the

final

destination.

K

So

you

might

have

a

case

where

you

are

prioritizing

a

packet

which

is

just

has

one

more

hop

to

go

over

another

packet,

which

has

which

looks

like

it

has

more

time,

but

actually

has

a

long,

a

large

number

of

routers

to

get

to

traverse

so

both

lis

and

edf,

and

many

other

variations

where

you

put

one

time

into

the

pocket,

aren't

good

enough

next

slide.

Please.

K

So

if

for

each

router,

you

traverse

you

pop

the

top

of

the

stack,

you

look

at

that

time

and

you

do

some

kind

of

scheduling

which

might

be

edf

or

just

in

time

or

something

I

call

pedf

look

at

the

draft

or

any

other

mechanism

and

of

course

you

need

some

mechanism

for

figuring

out

these

deadlines.

I'm

going

to

show

you

one

in

the

next

slide,

which

I

mentioned

the

draft,

but

certainly

not

the

only

mechanism,

and

I

refer

to

other

ones

in

the

draft

as

well.

K

K

And

why

do

I

say

it

is

analogous

to

segment

routing,

because

if

I'm

already

putting

a

stack,

which

has

an

entry

for

every

router

to

be

traversed

into

the

packet,

I

might

as

well

combine

it

with

segment

routing

and

have

both

the

forwarding

decisions

and

the

scheduling

decisions

in

the

same

stack.

In

other

words,

I

put,

I

can

put

a

stack

in

where

every

entry

in

the

stack

has

two

sub

entries,

one:

a

forwarding

sub

entry.

Basically,

where

do

you

send

the

packet

and

one

is

scheduling,

sub

entry

which

says

when

to

send

the

packet?

K

K

Here's

a

simple

example

to

give

you

the

idea

why

these

numbers

are

what

they

are.

You

can

see

in

the

draft.

However,

the

idea

is

the

host

in

this

case,

I'm

do

using

it

like

in

segment

routing

rather

like

in

sort

routing

source

routing.

So

you

actually

see

the

header

which

is

being

inserted

only

after

the

first

router,

and

it

has

the

number

of

entries

as

the

number

of

routers

minus

one,

but

basically

you

will

see

the

stock

there.

K

The

srtsn

stack,

if

you're

doing

both

where

you

have

both

the

forwarding

sub

entry

and

the

scheduling

sub

entry

and

each

time

one

is

popped

until

you

get

to

the

final

one

and

you

can

even

save

a

bit

by

having

a

special

code

for

a

for

bottom

of

stock

rather

than

wasting

a

bit.

But

that's

we

don't

really

have

to

go

into

that

right

now.

Next

slide,

please

I

realize

I

only

have

one

minute.

K

K

Actually

I

didn't

give

you

one

extra

bit

here,

because

if

you

do

that

trick,

I

mentioned

before,

but

the

bottom

of

stock.

You

don't

need

that

plus

one

at

the

end,

but

you

actually

in

many

cases

for

the

kinds

of

networks

where

this

is

important,

where

you

have

several

hundred

routers

and

the

maximum

amount

of

time

that

a

packet

can

go

through.

The

network

is

on

the

order

of

milliseconds.

K

This

can

end

up

being

16

bits

or

maybe

32

bits

if

you're

really

going

to

squander

them,

which

means

that

between

four

and

eight

hops

only

require

as

much

room

as

a

single

ipv6

address.

Next

slide.

Please,

and

to

sum

up

what

I'm

asking

from

this

working

group-

and

I

asked

something

similar

to

this

spring

working

group-

and

I

was

going

to

ask

pce

as

well,

but

they

didn't

get

me

into

their

schedule,

is

where

should

this

work

be

done?

It

is

similar.

It

has

elements

of

segment

routing.

K

It

has

elements

of

time

sensitivity

of

the

ditnet

variety

and,

of

course,

requires

an

optimization

algorithm

and

by

the

way

it

was

interesting

to

hear

just

before

about

rsvp.

I

also

have

a

non-centralized

mechanism

where

you

don't

have

to

do

measurement

of

the

link

delays

ahead

of

time,

but

rather

send

an

rsvp

like

packet

ahead

of

actually

several

of

them

and

measure

the

the

acquire,

timestamps

and

sort

of

measure

the

delays

as

you

go,

but

simply

I'm

asking

for

the

chairs

to

coordinate

where

and

if

they

want

this

work

to

progress.

A

Okay,

we

didn't

leave

time

for

questions

because

we

do

have

one

more

slot

and

we're

rapidly

running

out

of

time,

we'll

take

the

action

to

coordinate

so

absolutely

and

it

seems

like

there's

some

interest,

because

we've

got

good

discussion

on

the

list,

which

is

always

nice

to

see

on

a

new

draft.

With

that

we're

going

to

move

to

the

last

presenter.

H

H

H

H

H

H

H

H

H

H

A

F

The

advice

I

would

give

to

the

authors

is

to

split

the

mechanism

away

from

the

location

of

the

bits,

and

then

I

think

the

crucial

question

of

the

debt

net

working

group

is

whether

the

problem

that

the

authors

propose

to

solve

is

something

that

the

debt

network

group

wants

to

take

on.

In

which

case

a

plausible

path

forward

would

be

to

work

on

the

mechanism

here

and

then

go

look

for

the

bits

elsewhere

and

I

can

think

of

at

least

four

places

where

one

might

be

able

to

find

bits.

L

L

Right

so

from

my

perspective,

certainly

the

normative

thing

that

that

that

we

require

would

be

an

encapsulation

for

the

interoperability

right,

and

I

think

that

applies

both

to

yakov

and

our

draft,

and

you

know

I

I

can

just

observe

that

would

be

the

similar

type

of

you

know.

Encapsulation

that

was

done

in

support

of

prior

in

that

matter.

Allow.

F

L

K

K

J

Well,

it

seems

to

me

that

yeah

yeah

sorry

is.

It

seems

to

me

that

this

is

definitely

a

problem.

That

net

has

the

largest

interest

in

it

being

the

the

working

group,

that's

working

on

the

most

critical

form

of

of

time-related

communications,

and

so

I

think

it.

This

is

really

the

place

to

at

least

start

this

piece

of

work.

A

Okay,

thank

you

I'll

point

out

I'll

point

out

that

to

date,

we

as

a

working

group

have

only

taken

on

informational

documents

related

to

queuing,

not

standards

track

because

we're

that's

outside

our

current

charter

and

more

than

that,

the

transport

area

typically

owns

queuing

mechanisms.

I

don't

know

of

any

case

where

a

queuing

mechanism

has

been

defined

in

the

routing

area.

A

The

definition

of

that,

though,

is,

if

is

not

outside

the

scope

of

the

ietf,

and

if

the

ads

and

the

transport

area

agree

that

you

know

the

right

place

for

it

to

be

done

is

that

net?

You

know

we'll

follow,

but

we

are

being

very

careful

as

chairs

to

stay

within

our

charter

and

to

coordinate

with

the

transport

area

on

the

definition

of

new

queuing

mechanisms,

referencing

existing

queuing

mechanisms

and

how

they

operate

with

debt

net

and

whether

they

need

special

bits

on

the

wire.

A

F

Wearing

my

transfer

working

group

hat

as

we're

likely

to

be

one

of

the

places

where

the

big

hunting

goes

on,

the

expertise

on

cyclic

cueing

is

very

clearly

in

debt

net,

not

in

the

transport

area

working

with

uncomfortable

going

looking

for

bits.

If

the

mechanism

is

defined

here,

I'm

not

comfortable

being

the

primary

venue

for

defining.

M

Uma

hi,

so

thanks

thanks

states,

I

think

my

my

opinion

is

basically

the

problems.net

has

been

talking

over

a

period

of

time

with

respect

to

bounded,

latency

and

also

the

jitter

case.

This

draft

need

to

be

solved

in

that

net

and

also

it's

not.

This

is

like

you

know

that

net

is

more

focused

on

p

e

to

p

e

k

s

not

to

end

to

end

so

to

speak

from

what

psn

tsv

working

group

is

doing.

A

Okay,

stuart:

are

you

still

in

queue?

I

assume

you're

out

and

all

right?

Well.

Thank

you

all

for

the

discussion,

as

I

mentioned

before,

a

big

issue

with

queuing

is

our

charter

and

that

queuing

isn't

done

in

the

routing

area

traditionally

in

the

ietf,

but

we'll

we'll

work

to

find

a

home,

and

so

we'll

work

with

the

transport

area

chairs,

which

includes

david

and

also

with

the

with

the

area

directors,

who

you

know

the

isg

says

where

work

goes

so

we'll

work

with

them

on

that

and.

L

Can

I

just

jump

in

with

a

question

there

not

about

the

queuing,

but

you

know

if

I

observe

yarkov's

draft,

which

I

find

very

interesting

from

the

perspective

of.

Actually,

you

know

in

contradiction.

What

some

people

in

spring

thought,

I

think,

is

an

ideal

case

of

exploiting

segment

routing,

whether

it's

mpls

or

v6.

We

we

haven't,

you

know,

done

sr

as

a

forwarding

plane

in

that

net.