From YouTube: IETF110-OPENPGP-20210311-1430

Description

OPENPGP meeting session at IETF110

2021/03/11 1430

https://datatracker.ietf.org/meeting/110/proceedings/

A

B

I think we can just pull up the GitLab issue tracker and give people a chance to talk about it if we have the time, but the presentations that we have are going to consume most of the meeting. So, any other agenda bashing, or shall we move on to a discussion of the overall plan, just so we're all on the same page?

B

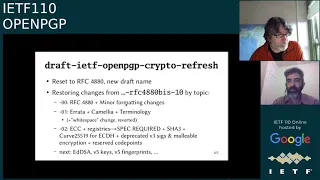

We picked a new draft name: it's now draft-ietf-openpgp-crypto-refresh, and we are trying to restore the changes that were in 4880bis-10 topic by topic, so each revision tries to address one topic. So far, we've had three revisions. -00 is basically RFC 4880 with some minor formatting changes. -01 fixed all the published errata, added the Camellia cipher, and updated some terminology, along with one change about whitespace.

B

We brought in Curve25519 for ECDH, we deprecated some of the older stuff that people shouldn't be using anyway, and we reserved a bunch of code points for what had been in 4880bis-10. For the upcoming revision, I guess Paul can maybe speak to this later, but I think we're talking about staging EdDSA and maybe v5 keys and v5 fingerprints in the next one. And thank you to everyone who has reviewed these changes on the list.

B

It has been really useful to get a sense of what the working group thinks about them. It's pretty clear that we will fix up some parts of it. I see Yoav's comment there about just how legacy the stuff is that we are trying to put in here, but yeah, it's 2021. Let's get OpenPGP up to date. So that's sort of the direction that the working group is going in. Next slide, Stephen, just about the mechanics for how we're doing it.

B

So there are opportunities to do diffs and comparisons between those three documents. As Paul mentions in the chat, rfcdiff is useful for comparing these things. I actually find that a plain diff of the markdown sources is also useful for comparing two different documents. And if anybody wants to talk later, probably not in this group but in a separate conversation, I'm happy to walk you through it. If you're interested in trying to help edit or propose changes, I can help you with some of that. We're using the issue tracker where possible.
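As a rough illustration of diffing the markdown sources of two draft revisions, here is a minimal Python sketch built on the standard library's `difflib`. The two snippets and the file labels `rev-a.md` and `rev-b.md` are made up for the example; they are not text from the actual drafts.

```python
import difflib


def markdown_diff(old_text: str, new_text: str,
                  old_name: str = "rev-a.md",
                  new_name: str = "rev-b.md") -> str:
    """Return a unified diff between two markdown sources as one string."""
    old_lines = old_text.splitlines(keepends=True)
    new_lines = new_text.splitlines(keepends=True)
    return "".join(difflib.unified_diff(old_lines, new_lines,
                                        fromfile=old_name, tofile=new_name))


# Hypothetical snippets standing in for two revisions of a draft section.
OLD = "# Ciphers\n\n- TripleDES\n- AES-128\n"
NEW = "# Ciphers\n\n- TripleDES\n- AES-128\n- Camellia-128\n"

if __name__ == "__main__":
    print(markdown_diff(OLD, NEW))
```

A line-based diff like this works best when each revision keeps the same line wrapping, so that only real changes show up.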

B

B

A

It's probably worth emphasizing that we're not really in a position where the working group is open for fresh new ideas just now. Really, what we're doing is playing kind of catch-up on the set of diffs versus 4880 that we already nearly know for sure that we want to include, but we're making sure we get consensus on those before we go forward. So it's slightly a kind of bookkeeping exercise to some extent, but with technical parts.

A

But the main thing is: if you have a super good idea for something new to do with PGP, now is not really a good time. By all means, drop it on the list, but expect that we might say, let's get to that after we've already got a bit of success with the current work. Just want that to be clear. Yep. And if there are no questions on the plan, then we will move to key extraction.

C

In practice, this means that the victim will not check the key fingerprint before using the key. Next slide, please. So the requirement on key decryption is relevant because in OpenPGP the private key is not fully encrypted. Here you can see the schema of an RSA key, for instance, and when you encrypt the key, only the fields in grey are both encrypted and authenticated.

C

So those include the secret key parameters, and if an attacker tampers with the encrypted data, then the key decryption will fail. But there are also some parameters that are stored in cleartext, and in the case of RSA those are the public modulus n and the public exponent e. If the attacker corrupts those values, then key decryption will work fine. Klíma and Rosa already noticed this partial integrity check issue in 2001, and they exploited it.

C

They showed how an attacker could corrupt the public parameters of a DSA key, then wait for the victim to sign using the corrupted key, and from the faulty signature extract the DSA secret exponent. It looks like this. Now, at the time, OpenPGP was not fixed to address this specific attack vector.

C

So we have looked at the same attack idea again, and we have found that it's not just DSA keys: any key type is potentially vulnerable to this kind of key corruption attack. Specifically, when it comes to faulty signature attacks, DSA, EdDSA and RSA keys can be directly compromised. The attacker needs to corrupt the key only once, and then it takes as little as one faulty signature to extract the secrets, but also any elliptic curve

C

So, aside from these attacks that target signing, we've also found some attacks that exploit decryption, but those are a little bit more involved. We will be sharing more information about the decryption attacks, as well as all the mathematical details of the signing attacks, soon enough. We are finishing up a paper, and we will be publishing it and sharing it with the community.

C

For instance, a validation method that does not work is trying to use the key, say to sign and verify a message or to encrypt and decrypt a message. Depending on the key type, even if the operation is successful, you have no guarantee that the private and public parameters correspond or are strong enough. So instead, to carry out private key validation, one should really check the mathematical relationship between the different parameters, and this means carrying out some algorithm-specific checks. In the case of RSA, these are straightforward enough.
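For RSA, a check of the mathematical relationship between the cleartext public values and the decrypted secret values might look roughly like the sketch below. It assumes the secret parameters follow the RFC 4880 layout (d, p, q, and u as the inverse of p modulo q). The function name and the toy numbers are illustrative only, and the sketch deliberately does not test primality or key strength, which a real validator would also need to consider.

```python
import math


def rsa_key_is_consistent(n: int, e: int, d: int,
                          p: int, q: int, u: int) -> bool:
    """Check that public (n, e) match the secret values (d, p, q, u)."""
    if p <= 1 or q <= 1 or p == q:
        return False
    if n != p * q:                        # modulus must be the product of the primes
        return False
    # Carmichael's lambda(n) = lcm(p - 1, q - 1)
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)
    if e <= 1 or math.gcd(e, lam) != 1:   # e must be invertible modulo lambda(n)
        return False
    if (e * d) % lam != 1:                # d must be the inverse of e
        return False
    if (u * p) % q != 1:                  # u must be the inverse of p modulo q
        return False
    return True


if __name__ == "__main__":
    # Toy key, far too small for real use.
    p, q, e = 61, 53, 17
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)
    d = pow(e, -1, lam)                   # modular inverse, Python 3.8+
    u = pow(p, -1, q)
    print(rsa_key_is_consistent(p * q, e, d, p, q, u))       # consistent key
    print(rsa_key_is_consistent(p * q + 2, e, d, p, q, u))   # corrupted modulus
```

The point of the design is that a sign-then-verify round trip is never used as evidence: only the algebraic relations between the parameters are checked.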

C

We have reviewed a number of popular OpenPGP libraries, and none of them is safe against all of the attacks that we've found. So even if validation is implemented, it's often missing some key checks. And in terms of end-to-end attacks, we have found two real-world applications that are vulnerable to these key corruption issues.

A

A

D

B

So I just wanted to say thanks to the researchers, to Lara and Kenny and your colleagues who worked on this, for bringing this to the attention of the working group. It sounds like you did a pretty good review of existing implementations, but obviously there may be implementations that you don't know about, so being able to talk about it here should hopefully give people a heads-up if they have an implementation that they haven't published. Much appreciated for bringing that to the group.

C

A

A

F

So why should we do that? We needed a way to verify our implementation, right, and if we have to do the work anyway, we can make it useful for others too. This is in line with our mandate of improving the ecosystem, and it's good for other implementations too, because writing tests is a lot of work and they get free tests. And there are secondary effects too.

F

So the tests are black-box tests, and there are two kinds of tests. The first kind I call consumer tests, where artifacts are produced by the test suite and then consumed by all the implementations. The other kind are producer-consumer tests, and here the artifacts are also produced by the implementations being tested, so you get this nice interop matrix. The test suite uses an interface called SOP, the Stateless OpenPGP interface, that has been extracted by dkg, and you can see some examples.

F

So here we generate a key, then we use it to encrypt some data. Data is read from standard in, and the ciphertext is produced on standard out. To decrypt, you do the same: you feed the ciphertext on standard in and get the plaintext on standard out. Currently the test suite just uses five very simple operations and gets out a lot of information that way. On the right, you see an example test.
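The generate, encrypt, decrypt flow over standard in and standard out can be sketched as a small Python driver. The subcommand names (`generate-key`, `extract-cert`, `encrypt`, `decrypt`) follow the Stateless OpenPGP command-line draft as I understand it; the binary name `sop`, the user ID, the file names, and the injectable `run` parameter are assumptions made for this example, not part of the test suite.

```python
import os
import subprocess
import tempfile
from typing import Callable, List

Runner = Callable[[List[str], bytes], bytes]


def run_process(argv: List[str], stdin: bytes) -> bytes:
    """Run a command, feed it stdin, and return what it wrote to stdout."""
    result = subprocess.run(argv, input=stdin, capture_output=True, check=True)
    return result.stdout


def sop_roundtrip(sop: str, plaintext: bytes,
                  run: Runner = run_process) -> bytes:
    """Generate a key, encrypt to its certificate, then decrypt again."""
    with tempfile.TemporaryDirectory() as tmp:
        key_path = os.path.join(tmp, "alice.key")
        cert_path = os.path.join(tmp, "alice.cert")

        # Key material comes back on stdout; keep it in a file for later use.
        key = run([sop, "generate-key", "Alice <alice@example.org>"], b"")
        with open(key_path, "wb") as f:
            f.write(key)

        # The certificate (public part) is derived from the key on stdin.
        cert = run([sop, "extract-cert"], key)
        with open(cert_path, "wb") as f:
            f.write(cert)

        # Plaintext in on stdin, ciphertext out on stdout, and back again.
        ciphertext = run([sop, "encrypt", cert_path], plaintext)
        return run([sop, "decrypt", key_path], ciphertext)
```

With a SOP implementation installed under the assumed name, `sop_roundtrip("sop", b"hello")` would be expected to return the original bytes. Passing a different `run` callable makes it possible to exercise the driver without any implementation installed.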

F

Every test has a heading, and it has a stable link that you can refer to in bug reports. Then you get a description and maybe additional artifacts like certificates. There is a little button next to artifacts, and if you have the right font, you also get this little magnifying glass in the button. If you click on that, you get taken to a packet dumper to inspect the artifact.

F

Most tests start with the base case, to make sure we are all on the same page, and then there are some variants. Some of the variants, where the interpretation or the expectation is not clear, don't have any expectations, so you get a kind of white row. And sometimes, mostly in producer-consumer tests, the producer fails to produce an artifact, or the produced artifact does not meet the expectations, so you can get a cross mark there too.

F

So this is an example of a signature verification test. Niibe started looking into encodings of ECC artifacts, and to aid that I decided to write the test. The test verifies an EdDSA signature, and it starts with the base case. That's green for all implementations except for GnuPG 1.4, which does not do ECC. Then you see a variant where the s value of the signature is zero.

F

This is an example of a producer-consumer test, or maybe we should call it a scenario test, and this models key generation, encryption and decryption. So you generate a key with implementation A, encrypt for that key with implementation B, and then decrypt it with implementation A, and you can see.
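The generate-with-A, encrypt-with-B, decrypt-with-A scenario naturally yields a pass/fail matrix over all pairs of implementations. The sketch below shows only the shape of that loop. `ToyImpl` is a hypothetical stand-in with a made-up padding quirk and an XOR "cipher" that is not real cryptography; the actual test suite drives real implementations instead.

```python
from typing import Dict, List, Tuple


class ToyImpl:
    """Stand-in for an implementation under test; NOT real cryptography."""

    def __init__(self, name: str, pad: int = 0):
        self.name = name
        self.pad = pad  # hypothetical quirk: trailing bytes added and stripped

    def generate_key(self) -> int:
        return 0x5A  # toy "key": a single XOR byte

    def encrypt(self, key: int, data: bytes) -> bytes:
        return bytes(b ^ key for b in data) + b"\x00" * self.pad

    def decrypt(self, key: int, ciphertext: bytes) -> bytes:
        body = ciphertext[:len(ciphertext) - self.pad]
        return bytes(b ^ key for b in body)


def interop_matrix(impls: List[ToyImpl],
                   message: bytes = b"hello") -> Dict[Tuple[str, str], bool]:
    """Map (key owner, encryptor) to whether the round trip succeeded."""
    matrix = {}
    for a in impls:                 # a generates the key and decrypts
        key = a.generate_key()
        for b in impls:             # b encrypts for a's key
            try:
                ok = a.decrypt(key, b.encrypt(key, message)) == message
            except Exception:
                ok = False          # a crash counts as a failed cell
            matrix[(a.name, b.name)] = ok
    return matrix


if __name__ == "__main__":
    for pair, ok in interop_matrix([ToyImpl("A"), ToyImpl("B", pad=1)]).items():
        print(pair, "pass" if ok else "fail")
```

In this toy setup each implementation interoperates with itself but not with the other, which is the kind of pattern a matrix makes visible at a glance.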

F

So I consider that a success. We improved implementations across the board, not only Sequoia, in accordance with our mandate, and we also improved the understanding of the ecosystem. During our implementation effort, we asked those questions: how are implementations handling this or that? And we just wrote the test to find out.

F

And if you look at the chart on the right, a significant percentage of the points you see are about algorithm support. Then there are problems. For example, if you consider signature subpackets, it's unclear what implementations should do if there are multiple, maybe conflicting, subpackets.

F

It's sometimes not clear how to handle missing subpackets, such as missing timestamp subpackets; what to do if a signature's creation time is in the future; what if the signature's creation time predates the signing key's creation time; or what if, at the time the signature was supposedly created, the signing subkey was not bound or was revoked, or the certificate was for some reason not valid. And there are unknown packets.
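To make the timestamp questions concrete, here is a sketch of one possible strict policy. It is only an illustration: the talk stresses that the expected behaviour is unclear, and the function name, the `tolerance` parameter, and the wording of the messages are all assumptions of this example.

```python
from datetime import datetime, timedelta, timezone
from typing import List


def check_signature_times(sig_created: datetime,
                          key_created: datetime,
                          now: datetime,
                          tolerance: timedelta = timedelta(0)) -> List[str]:
    """Return a list of timestamp problems; an empty list means none found."""
    problems = []
    if sig_created > now + tolerance:
        problems.append("signature creation time is in the future")
    if sig_created < key_created:
        problems.append("signature predates the signing key")
    return problems


if __name__ == "__main__":
    utc = timezone.utc
    key_time = datetime(2021, 1, 1, tzinfo=utc)
    now = datetime(2021, 3, 11, tzinfo=utc)
    # A signature dated before the key existed is flagged.
    print(check_signature_times(datetime(2020, 6, 1, tzinfo=utc), key_time, now))
```

Returning a list of findings rather than a single boolean leaves the accept or reject decision to the caller, which seems appropriate while the expectations are still open.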

F

Finally, the really bad news: many implementations accept signatures from weak algorithms. A third of implementations accept and successfully verify MD5 signatures. Five of nine implementations are fine with SHA-1 signatures, and four of nine are happy with RIPEMD signatures. And just for fun, I included a test that takes signatures over the SHAttered collision, and five of nine implementations are fine with that.
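A verifier that refuses weak hash algorithms can be sketched as a simple lookup on the hash algorithm ID carried in the signature. The IDs below are the ones assigned in RFC 4880; which of them to reject, including rejecting SHA-1 outright, is a policy choice of this sketch rather than something the talk prescribes, and the names `REJECTED` and `hash_acceptable` are made up for the example.

```python
# Hash algorithm IDs as assigned in RFC 4880, section 9.4.
HASH_NAMES = {
    1: "MD5",
    2: "SHA1",
    3: "RIPEMD160",
    8: "SHA256",
    9: "SHA384",
    10: "SHA512",
    11: "SHA224",
}

# Policy assumed for this sketch: refuse the three older hashes.
REJECTED = {1, 2, 3}


def hash_acceptable(algo_id: int) -> bool:
    """Accept only known hash algorithms that are not on the reject list."""
    return algo_id in HASH_NAMES and algo_id not in REJECTED


if __name__ == "__main__":
    for algo_id, name in sorted(HASH_NAMES.items()):
        print(name, "accept" if hash_acceptable(algo_id) else "reject")
```

Unknown IDs are rejected as well, so a signature using an unassigned value cannot slip through by default.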

F

So if you want to add tests or propose tests, talk to me or open an issue. Most artifacts are generated on the fly using Sequoia, but some artifacts are just stored as test data. If you generate artifacts, please use the common test keys, the Alice and Bob keys. And if you want to get your implementation tested, that's really easy: you just need to implement the Stateless OpenPGP interface, and then we can plug it into the test suite. To do that, just talk to me.

F

F

F

B

Yeah, I just wanted to thank Justus for the presentation and for the tremendous amount of work that's gone into this. I think it's actually highlighted a lot of stuff, and I know that many bugs have been fixed as a result. We have a little bit of time for questions, but we're a little over time right now. Do folks have anything they want to raise?

B

You can just put yourself in the queue by raising your hand. I want to encourage people to take a look at that: if you're in the working group and you have not looked at the interop test suite, you're missing out. This is something that I think is really useful for implementers, and for those of us who are working on the spec itself, to get a sense of where things might be confused or confusing. So, thank you, Justus.

G

But for ECC, the EC point is encoded into an MPI. I think that is the cause of many troubles. For curves whose format is big-endian, it is okay, but these days modern ECC uses a little-endian format, and because of that we have the zero-removal problem: any existing implementation which supports Ed25519 has to handle zero removal.
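The zero-removal problem can be reproduced in a few lines. An MPI, as defined in RFC 4880, is a two-octet bit count followed by the big-endian magnitude with leading zero bits stripped, so a fixed-length byte string that happens to begin with a zero octet comes back shorter after a round trip and must be padded again. The helper names and the sample value below are made up for the illustration.

```python
def mpi_encode(value: bytes) -> bytes:
    """Encode bytes, read as a big-endian integer, as an OpenPGP MPI."""
    n = int.from_bytes(value, "big")
    bits = n.bit_length()                       # leading zero bits are not counted
    body = n.to_bytes((bits + 7) // 8, "big")   # leading zero octets are dropped
    return bits.to_bytes(2, "big") + body


def mpi_decode(mpi: bytes) -> bytes:
    """Return the magnitude octets of an MPI, without any re-padding."""
    bits = int.from_bytes(mpi[:2], "big")
    return mpi[2:2 + (bits + 7) // 8]


def pad_left(value: bytes, size: int) -> bytes:
    """Restore the zero octets that the MPI encoding removed."""
    return value.rjust(size, b"\x00")


if __name__ == "__main__":
    # Hypothetical 32-octet value whose first octet is zero.
    native = b"\x00" + b"\x9a" * 31
    decoded = mpi_decode(mpi_encode(native))
    print(len(native), len(decoded))            # one octet has gone missing
    print(pad_left(decoded, 32) == native)      # padding recovers the original
```

An opaque octet-string encoding avoids this step entirely, since the length of the value is preserved as is.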

G

Yes, yes. And next slide, please. So we have another approach, the per-curve approach: defining a data format for each curve. Next slide, please. And the best one would be just an octet string, but it would require a new algorithm number. Next slide, please. So my conclusion is that SOS is a compromise to introduce other modern curves of ECC without diverging from standard implementations.

A

H

If we adopted that as the approach throughout the IETF, then you push the whole onus of all this tagging and bagging onto the creators of the new algorithms. We don't need to revisit this, because the only thing that the coder ever needs to deal with is the seed. So, just a different way of looking at it.

B

I

Hi. So I'm not sure if there's any point in rushing through this, but just a generic thing for the people who haven't followed the list and who are now in the working group meeting here. What we've done, as Daniel said in the beginning, is that we are pulling in a lot of changes in digestible chunks, for people to reconfirm the consensus, so that we can get to a state where everybody agrees on the bis document. That is, for instance, Yoav,

I

why you are seeing Triple DES as a MUST algorithm: because we haven't gotten to the part where we're going to rewrite that section again. That is scheduled to be in the next or the second-next update to the draft. So in general, I'm mostly interested in whether people see anything in the process so far that we can improve on.

I

So we're basically trying to present this in little chunks, with one or two weeks of time for the working group to give us feedback, and then move on to the next one, in the hope that once we've consumed all the items that you see listed here, we are basically in a similar position as the older bis document, but we have confirmed the consensus on all of these.

A

I

The previous presentation is interesting in that, if we would pull this into this bis document, then we'd have to see, because that would help us in specifying some of the newer curves that we're about to merge in. We would have to do that in this newer way.