
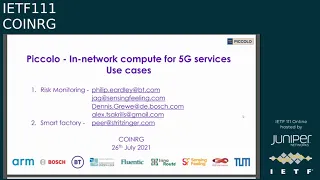

From YouTube: IETF111-COINRG-20210726-2300

Description

COINRG meeting session at IETF111

2021/07/26 2300

https://datatracker.ietf.org/meeting/111/proceedings/

A

Okay, it's four o'clock. Good day, good morning, good evening, very late into the evening, and thank you to all of you who are in unusual time zones and are up well beyond when you are normally up. You are attending COIN, the Computing in the Network Research Group, and we welcome you on behalf of Jeffrey and Marie-José and myself, otherwise referred to as JEM, and you are participating in the IETF.

A

Despite what that link says at the very top of this page, if you are not speaking, please be muted. If you are presenting, feel free, if you're comfortable and have the bandwidth, to display video, although it's not required. And of course we would love for folks to participate in the collaborative note-taking tool, which is not only specified here as a URL but is built into this tool, the Meetecho tool. I personally am not participating in the Jabber, but again, everything has been integrated into this tool.

A

A

B

B

B

Dirk Kutscher will introduce this. This is related to compute-first networking. And then we have three presentations about use cases. The first one is from Dirk Trossen, based on their project between BT, Huawei, and Cambridge, and they will introduce their insights or findings on use cases. And then the second is a.

C

Those are the two that are very high in terms of where they are in the stack, and they've been there for quite a long time. They're RG documents, and we intend, probably in the next months, to maybe push them to last call and, you know, push them into the open. In the stack there's a new draft, which is the case study from Xavier, and then there's a bunch of other documents. Some of them are expired. Some of them need care.

C

C

Oh, you're already in the presentation. Okay, I had a slide on some of the milestones, so maybe it's going to be presented later. Yeah, I would like them. Okay, we've basically addressed all our milestones. I would say, if I want to see the glass half full, that we are doing really well.

C

C

You know, we've been there for two years now, and we, the JEMs, would like to see if we need new ones, which ones have been achieved, and which ones need to be updated. So again, without further introductions from us, we would like to go into the presentation, the first one, because of time. Well, actually, everybody that's presenting, I think, is in a bad time zone.

C

D

C

D

Okay, so onto the second slide. Thank you. So this is about automotive risk monitoring. The idea of the use case is that it's a real-time assessment of situational risk based on knowledge of the vehicle and the behavior of the occupants, about what's happening outside the vehicle, and maybe about other things like the weather or the road conditions.

D

D

So next slide, please. Okay, so this is how you might try and do it today, or kind of a default way you might do it today. There's video processing, which is separate from the vehicle data systems, both going into a cloud-based analytics engine. The visual processing will be done either on the camera or, if there are several cameras in the car, then the visual processing might be combined across the cameras.

D

D

So another way you might have flexibility is that you can update and alter the algorithms, some machine learning algorithms, more easily. Often the software in these systems is tightly coupled to particular hardware in the vehicle, so this would enable more flexibility there. You might also be able to have different kinds of algorithms there, depending on what sort of vehicle it is.

D

D

D

D

That's on top of that, and that does things like resource allocation and reporting from containers and attestation. And then Sensing Feeling, which is another small company in the UK, who have various vision processing products today. So they're looking at changing that side of things and adapting which elements are done in the vehicle and which in the network.

D

D

So that's control of things like resource control, being able to flex that and adapt the algorithms there, or the frame rate of the cameras or whatever, according to resources. Things about delivery of functions to the nodes and what sort of functions they might be, and distribution of the functions, so things under the sort of general orchestration heading. Thinking about what control or knowledge the application might have

D

of what the network's up to and, kind of the flip side of that, what additional functions an edge network might want to offer, so maybe privacy filtering or, notably, scoped machine learning algorithms. Things under the general security and privacy heading, which I've touched on. And then, bearing in mind this is a vehicle, just the general context of mobility, how you handle mobility and the problems associated with that.

D

So that was all I wanted to just quickly run through on that particular use case, with just one slide at the end about the project. Yes, so that's it for me. I can either take questions now, quickly, or Peer can talk and then we have the questions at the end. But I see, Kireeti, you're in there. Hey, can you.

G

G

G

You expect that your networking works because of the wired network inside the car, and when it's in the network, your connectivity may either actually go down or experience fluctuation. So in designing the balance between what happens in the edge node and the Piccolo node, I think about the safety of the car, from the point of view that there's a function that I really need to be secure.

G

D

G

D

A

H

H

C

H

H

So first, let me introduce what we want to achieve here. Normally factories are built kind of on the drawing board, and then basically everything is programmed by hand: all the movement of all the pallets and all the processes, everything is kind of handcrafted in PLC programs. That is not very flexible and also wastes a lot of effort.

H

So the goal we want to achieve here is plug and produce. You actually stick together your conveyor belt system, and then it self-learns its topology and routes pallets in a smart way, in an optimized material flow, basically. And that is built into the conveyor belt system, not using an external server. So that's how such an assembly line looks, and we'll just skim over it. It goes in circles.

H

In the use case, we are actually distributing the pallets between these spooling machines. These are the machines that actually spool electric motors. Each machine can spool six motors, and sometimes not all six work, so we have to be flexible in distributing the pallets, and also for different kinds of motors. So that's actually a predecessor system, where we have little nodes connected.

H

I just want to show you what this movement looks like. You have these pallets, they can go through corners, and they are taken by these conveyor belts. I cut this short because of the time constraints. So let's head back to the conveyor belt system. So that's the floor

H

Plan of the factory, with just the conveyor belt and everything else left out. Again, we are focusing on these spooling machines, and the blue dots that you see up here are the embedded nodes that we attach to the conveyor system, which are later sold together with a conveyor belt system. Or that's the plan, actually, to make it a product of Bosch Rexroth, who makes these conveyor belt systems.

H

And so, if you take away the conveyor belt system, we have a kind of mesh network architecture. You can daisy-chain these nodes, and some are connected to a switch, and that's kind of a typical network topology we would have in practice. People don't want to depend on the topology, and the system that we implement is using message passing, kind of like an actor-based system, because we are using the Erlang programming language and Erlang systems. So we are running Erlang on all these little nodes.

H

So we have processes, and we have these nodes, which are the actual compute nodes, connected to a network, and the processes send messages. That's basically all we care about: we can build everything with processes and messages. The big question is: if you have a complicated system and a complicated topology, how do we automatically map these processes to nodes so everything works smoothly within the necessary constraints? That's our core question, and here we have basically two modes of operation.

H

So if you look at our network from before, the static mapping is relatively local. This is for the control loops that actually control the neighboring things and make sure that nothing collides, on the lowest level. The dynamic mapping is basically the whole network: we want to use all the nodes.
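The two mapping modes described above could be sketched roughly as follows. This is only an illustration under stated assumptions, not the speaker's Erlang-based system: the `Node` class, the abstract "compute unit" costs, and the greedy "most free capacity" rule are all hypothetical choices made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    capacity: int  # abstract compute units (an assumption for this sketch)
    processes: list = field(default_factory=list)

    def free(self) -> int:
        return self.capacity - sum(cost for _, cost in self.processes)


def place(nodes, static, dynamic):
    """static: {process: (node_name, cost)}; dynamic: {process: cost}."""
    by_name = {n.name: n for n in nodes}
    # Static mapping: control loops stay on the node next to their hardware.
    for proc, (node_name, cost) in static.items():
        node = by_name[node_name]
        if node.free() < cost:
            raise ValueError(f"{node_name} cannot host pinned process {proc}")
        node.processes.append((proc, cost))
    # Dynamic mapping: largest process first, onto the node with most room.
    for proc, cost in sorted(dynamic.items(), key=lambda kv: -kv[1]):
        node = max(nodes, key=Node.free)
        if node.free() < cost:
            raise ValueError(f"no node has room for {proc}")
        node.processes.append((proc, cost))
    return {p: n.name for n in nodes for p, _ in n.processes}


nodes = [Node("n1", 4), Node("n2", 4), Node("n3", 4)]
mapping = place(
    nodes,
    static={"loop_a": ("n1", 2), "loop_b": ("n2", 2)},
    dynamic={"router": 3, "topology": 2},
)
print(mapping)
```

Here the pinned control loops never move, while the remaining processes may land on any node in the network. A central function like `place` is exactly the kind of orchestrator that the talk asks about distributing.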

H

So we can actually have this in small embedded systems, but I'll skip the details here. Our main research question here is: can the orchestrator that maps all this computation to these nodes be distributed in the same sense as the computation?

H

A

H

So for the current system, which only controls the conveyor belts like you have seen in the video, tens of milliseconds or five milliseconds and soft real time would be sufficient, because nothing bad happens if you're slow. It's just that the pallet doesn't move immediately.

H

But if you want to do combined axes, basically, there are conveyor belt systems that can precisely control the position of the pallet, and if you want to synchronize this with a robot arm, you need to go to sub-millisecond hard real-time requirements. That would be an extension of the use case.

C

E

E

E

To be honest, so this was the actual seminar that took place earlier in June, fully virtual, unfortunately, but we organized it in a way that we almost ran it around the clock, which put some particular burden on colleagues in the U.S. and Asian time zones. So I think today is a bit of payback for the Europeans. And if you don't know, Dagstuhl is a computer science convention center in a remote place in far west Germany.

E

So is there space for a new approach for doing that, one that goes beyond what we're already doing, so packet flow processing or just overlays? The idea was a bit of learning from distributed computing systems, trying to take advantage of recent trends in hardware and software technologies, and trying to shape a research agenda, essentially. I think it was a really interesting event. Thanks to everybody who was there and put in all the time.

E

E

So not everybody is on there, but I think you're going to see some familiar faces, including two COINRG co-chairs. Okay, the program at a glance. It's difficult to capture this fully, but these are the talks we had on the agenda. And if you have been to Dagstuhl, you know that there are many additional things happening, and the value is often more in the discussions that these talks evoke.

E

Also some quite specific insights into what is possible with current technology. Peer also introduced his ideas, and in the end we also discussed a bit what could actually be nice, more substantial research ideas or PhD topics, for example. This is a very eclectic collection here. I just picked some highlights that I think should give you some idea of what has been discussed. So in general, why is this relevant?

E

E

And if you look at the hardware trends and the hard facts, basically, compute and communication are on different cost-performance trajectories. So can you leverage this for building systems that make the most out of these two domains?

E

And so I picked this one use case topic here, because we heard about the others before, and that is health in general. For example, health sensing: making use of all these sensors that we have, fitness sensors, pacemakers, and so on, and trying to share the analytics results of this data in a federated system.

E

So not upload everything to the cloud and do it there, but do it in a more distributed way, with federated principal component analysis, for example, or Bayesian inference systems. In addition, now in the pandemic we are doing lots of contact tracing, and there are different systems on the market. Some of them are more centralized, others more decentralized.

E

So in Germany we have a fairly decentralized system. We are largely happy with it, but it could actually be better because, due to this decentralized nature, you cannot use the data to its full potential. It would be nicer if you could use the data to have a bit more specific insight, like prevalence in some areas, tracing particular virus variants, and so on, but without giving up the privacy preservation.

E

Compute-first networking ideas. Then for the facts: if you look at how servers and switches develop and how they differ, we know that you can do a lot in servers, and servers are getting better and more efficient in access to the network, with better hardware support and so on. Switches, on the other hand, are also getting smarter and more programmable, but even with programmable data planes, what you can actually do with them today is still quite limited.

E

So it's not a Turing-complete programming platform, and so these are still different systems. Specifically, we looked a bit into P4 programming, and there are many exciting opportunities, but also many constraints and difficulties. One thing I think we concluded was that you can use this for some things, but not as a general in-network computing platform.

E

E

There was also the question of what the areas are that you may want to consider. Of course, you have to consider that there are control loops that are really critical, that have to run in the car, for example, so time-sensitive and safety-critical things. So, some questions. SmartNICs are also enabling us to do things more efficiently, with FPGAs, for example, as I mentioned before.

E

E

Maybe with a more clean-slate approach, you could also arrive at a more lightweight design, where maybe orchestration doesn't have to play such a heavy role in the whole system. So maybe you can actually have a more decentralized, more self-organized way in these systems. One tool for that which we also looked into was in-network telemetry: basically adding more information to the actual communication that is used for distributed computing, for example.

E

So, like feedback, congestion, or load information, these kinds of things, which would enable the nodes themselves to make some smarter decisions in the end. Then there were also some interesting contributions on rethinking communication and computation abstractions more fundamentally, for example, starting from the idea that you would always communicate in a broadcast way.
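As a rough illustration of the telemetry idea mentioned above, where load information rides along with the application messages so that nodes can decide for themselves, consider the following minimal sketch. The `Message` and `ComputeNode` classes and the "least known load" rule are hypothetical and were not presented at the seminar.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    payload: str
    telemetry: dict = field(default_factory=dict)  # node name -> load in [0, 1]


class ComputeNode:
    def __init__(self, name, load):
        self.name = name
        self.load = load
        self.known_load = {}  # what this node has learned about others

    def send(self, payload):
        # Piggyback this node's own load on the outgoing message.
        return Message(payload, {self.name: self.load})

    def forward(self, msg):
        # Learn from the telemetry, then append own load before forwarding.
        self.known_load.update(msg.telemetry)
        return Message(msg.payload, {**msg.telemetry, self.name: self.load})

    def pick_target(self, candidates):
        # Unknown nodes are treated as fully loaded (a conservative assumption).
        return min(candidates, key=lambda n: self.known_load.get(n, 1.0))


a, b, c = ComputeNode("a", 0.9), ComputeNode("b", 0.2), ComputeNode("c", 0.5)
msg = b.forward(a.send("job-result"))
c.forward(msg)
target = c.pick_target(["a", "b"])
print(target)
```

No orchestrator is consulted here: node `c` learns the load of `a` and `b` only from traffic it already handles, which is the lightweight, self-organized flavor the speaker alludes to.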

E

And coming to the end: there was a lot more, of course, but I just want to keep to time here. So our provisional summary is that there are trends in hardware development that are important to understand. Multi-core systems are also kind of limited because of thermal and power issues, and there is an evolution of specialized hardware support both on the switching and the server side.

E

E

C

C

J

Yes, I will spare you the video. It's two o'clock in the morning here, so you don't want to see my face right now. Computer is not working. As mentioned, the project has been set up between Huawei, BT, and Cambridge University. The CFN project team includes Jon Crowcroft, Richard Mortier, Phil, and Peter Willis on the BT side, and a bunch of PhD students at Cambridge University.

J

J

We investigate use cases; that's what we started with. The project started with some delay. Jeffrey, the co-chair, who was crucial in setting up the project, handed this over to me last year, and that led to some delays, together with COVID. So we started effectively at the beginning of the year, end of last year, roughly. We are currently in the use case stage, which led to requirements for node and network technologies, which we have gathered so far.

J

Next steps will be to develop key networking and node technologies, and also to provide a demonstration of key benefits for a selected use case. This presentation today is about the use case part, fitting the agenda of today's meeting generally. We apply a taxonomy to the use cases that we looked at, first, obviously, looking at a brief description of the use case.

J

What are the services that are used and provided in that use case? What are the drivers, what drives the need for the solutions in the particular use case? We also had aspects which I will not show today: expected economic value, with references to market reports, and time to demand, so when is the solution required that will rely upon some of the novel features we identified, in the short, mid, or long term. Mainly it is the mid and long term.

J

So the first one we looked at is distributed data storage, as a meta use case, if you will, because it's being utilized in some of the other use cases as well. There's a need for a vendor- and service-provider-independent data ecosystem: marketplaces that are open to all at a reasonable cost, with low entry barriers for storing data.

J

The services here are distributed consensus systems, or DLTs, to some extent, that provide discovery functionality to match constraints such as hardware capabilities, utilized security mechanisms, hash algorithms being used, or the proof-of-work pattern that's being utilized, allowing me to find and discover the right miners that match those constraints and perform the transactions. That includes the multipoint sending of constraint-based requests to a selected group of miners and, ultimately, the actual computation over a proof-of-work, proof-of-stake, or some of the other mechanisms that are being utilized.
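The discovery step described above, finding miners that match a set of constraints and then sending a request to that selected group, might look roughly like the sketch below. The `Miner` fields, the constraint names, and the `multipoint_send` stand-in are assumptions made for illustration and do not correspond to any real DLT client API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Miner:
    name: str
    hash_algorithm: str
    consensus: str      # e.g. "proof-of-work" or "proof-of-stake"
    memory_gb: int


def discover(miners, *, hash_algorithm, consensus, min_memory_gb):
    """Return the miners that satisfy every constraint."""
    return [
        m for m in miners
        if m.hash_algorithm == hash_algorithm
        and m.consensus == consensus
        and m.memory_gb >= min_memory_gb
    ]


def multipoint_send(request, group):
    # Stand-in for network-level multipoint delivery: one copy per miner.
    return {m.name: request for m in group}


miners = [
    Miner("m1", "ethash", "proof-of-work", 16),
    Miner("m2", "ethash", "proof-of-work", 4),
    Miner("m3", "sha256", "proof-of-work", 32),
    Miner("m4", "ethash", "proof-of-stake", 16),
    Miner("m5", "ethash", "proof-of-work", 8),
]
group = discover(
    miners, hash_algorithm="ethash", consensus="proof-of-work", min_memory_gb=8
)
deliveries = multipoint_send({"tx": "example"}, group)
print(sorted(deliveries))
```

In this sketch the matching happens in one place. The talk's point is that the network itself could help with both the matching and the multipoint delivery.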

J

J

COVID has brought some of these issues to light last year, in places like Germany, for instance. There is growing value in data gathering and sharing. Data is an important asset, and hence data storage, maybe in a distributed manner, becomes crucial, as I mentioned. Also regulations such as GDPR, which pushes things like locality quite heavily, and also purpose-oriented storage, is one of the key issues around data.

J

So the services here are generally AV communication, computational cores, and sensor gathering and fusion. The ones we specifically listed, which again partially overlap with what Phil presented for the Piccolo version before, are positioning through distributed AI, localized object recognition for level 5 driving, localized dynamic high-precision maps, predictive accident avoidance, predictive vehicle flow management, and the virtual black box. Those are some of the example services that use a mixture of AV communication and computation.

J

Of course, as I mentioned before, the key in all of these services is to provide a reaction capacity to the virtualized services with fast rerouting, given the high dynamics that may happen between some of the distributed service endpoints, and the needed capacity for filtering and pre-processing.

J

Compute-aware traffic steering is a key aspect, to ensure that the service instance the traffic is being routed to is, for instance, not overloaded, or has as short a delay as possible, depending on what service we are talking about. These are some of the key aspects in the services that we observed.
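A minimal sketch of the steering decision described above follows, assuming each service instance reports a load figure and a measured delay. The `Instance` class, the 0.8 overload threshold, and the fallback rule are hypothetical choices for this example, not something specified in the talk.

```python
from dataclasses import dataclass


@dataclass
class Instance:
    name: str
    load: float      # utilization in [0, 1]
    delay_ms: float  # measured delay to this instance


def steer(instances, max_load=0.8):
    """Pick the lowest-delay instance among those that are not overloaded."""
    usable = [i for i in instances if i.load <= max_load]
    if not usable:
        # Every instance is overloaded: fall back to the least loaded one.
        return min(instances, key=lambda i: i.load)
    return min(usable, key=lambda i: i.delay_ms)


instances = [
    Instance("edge-1", 0.95, 2.0),
    Instance("edge-2", 0.40, 5.0),
    Instance("cloud", 0.10, 40.0),
]
chosen = steer(instances)
print(chosen.name)
```

The nearest instance is skipped because it is overloaded, and the next-closest one is chosen. A delay-insensitive service could instead rank by load alone, which is the "depending on what service" caveat from the talk.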

J

Drivers generally are the level 5 driving requirements in the various worldwide regions. There are some differences, but overall they're rather similar, and you find these services across all of those requirements, if you will. The last area is digital twins, which I defined here with a quote from the Digital Twin Consortium: a digital twin is a virtual representation of real-world entities and processes, synchronized at a specified frequency and fidelity.

J

So it consists of data, computational and representational models, and service interfaces. The services are similar to the V2X ones we saw before, so again, generally AV communication, computational cores, and sensor data gathering and fusion, but maybe with somewhat different goals, if you will, in the digital twin.

J

J

And that means you often need to optimize the communication as well as the computation pipeline. An example of that is a live AV feed with feature extraction at a centralized unit, where the computation pipeline optimization may be more important than the communication optimization.

J

In that case, drivers here are again several vertical industries, such as manufacturing, automotive, supply chain, aerospace, defense. That's a very, very long list. There's an emergence of a number of industry initiatives. I mentioned before the Digital Twin Consortium, which was already quoted, and the IDTA and Gaia-X are some of the other initiatives that work on various aspects, Gaia-X very much on aspects like data center interconnect and, obviously, data storage aspects as well.

J

J

The announcement of computation within and across domains, also the need for delegated announcement, and then maybe even pre-announcements, are being derived from some of the use cases. Then the interconnection: a lot of these communications we see happening in what's captured in RFC 8799 as limited domains, which are very stakeholder-specific, for some of the stakeholders that I mentioned before in the vertical industries that are involved in the initiatives. But there is obviously a requirement to interconnect across limited domains, where, in particular, the public internet comes into play.

J

Also, requirements that allow us to bind to available computational instances based on dynamic constraints. Both Phil and Peer talked about this before in their talks, about being able to build dynamic relationships between the services as they are being deployed in the network. The constraints here may obviously vary per use case, but may also change over time, and hence these bindings may exhibit dynamic binding behavior as well. Collective communication patterns that are potentially even request-specific can be found in a number of the use cases.

J

In distributed reasoning, as I mentioned before. IPv6 support, making this work in an IPv6 environment through suitable potential extensions, is another requirement we derived. Some of the requirements, I wanted to note here to make a link, are already captured in the existing use case draft, as I mentioned before.

J

That brings me, finally, to the next steps. For COIN, the next step from our side is to consider the integration of the use cases and requirements into the existing use case draft. That's quite easy, given that I'm one of the co-authors. I wanted to bring this to discussion with the other co-authors for the next iteration, to maybe bring some of the insights from the CFN work in there, to see

J

What's missing. Some of the use cases are already in there in part, so it should be quite easy. For the CFN project, as I mentioned in the objectives before, the next step is to pick a use case of choice. We haven't done that yet, so which one would be worked with in terms of the system architecture and the demonstrator. The demonstrator is planned for next year; the system architecture work will happen this year. So that's something maybe also for another update to the group once we have more to share. Good. Thank you.

C

Yeah, thank you very much. I think it's a great idea that you want to include this in the use case document. By the way, we're going through a lot of use cases in this meeting, and I think the goal of the minutes will also be to organize them in such a way that we have a better view of all of this and move a lot of discussion to the list.

C

A

Consortium, so I wondered if you think that some of those are organizations that we should hear from, due to their interesting perspectives that are either adjacencies or overlap with some of the goals here. What are your thoughts there? And does the CFN project also interact with those consortia as well?

J

Well, the CFN project only through the partners, indirectly, so not as a project itself, as the project itself is a research project. We have some involvement in some of the initiatives, and Gaia-X is something where Huawei is very active. One of the ones that I didn't mention, and I don't know exactly why I left them out, because it might be quite interesting, is the IIC, the Industrial Internet Consortium, where the industrial ledger

J

I have to use the right word, task group, I think it is. It's currently preparing a white paper that may be quite interesting. That's something that we initiated in that particular group, where we presented some work on the impact of DLTs on networks, and that has to do with the way DLTs work. We specifically looked at Ethereum as one of the examples there: does it really work the way we expect it to work? What are some of the issues that are caused by the involved network technologies?

J

Are there any ways to improve on that? We picked the IIC because it had that dedicated ledger group, and the ledgers themselves are quite important in the IIC because of the aspect of, again, data storage. These are maybe some of the discussions that may be quite interesting for this group as well. So I think that in the data space there may be something.

A

J

Well,

we

started

with

ethereum,

just

because

of

you

know

it's

quite

easy

for

us

to

study.

I've

also

looked.

I

actually

had

a

call

with

janus

a

couple

of

months

or

weeks

ago,

and

filecoin

is

another

one

because

of

some

of

the

changes

that

we

find

is

quite

interesting

as

an

evolution

from

ethereum

to

filecoin.

So

it's

not

specifically

targeted

filecoin

and

all

these

platforms

are

all

part

of

this

distributed

data

storage

and

and

and

also

the

question

of

how

the

network

can

help

with

any

of

that.

J

So

some

of

the

the

the

problems

we

outlined

in

the

operation

of

ethereum

really

have

to

do

with

some

aspects

of

of

of

net

network

issues

that

we

all

know

like

non-routability

to

miners.

Even

the

miners

have

announced

themselves

and

and

the

associated

problems

that

has

right

on

the

operations

of

the

of

the

dlt.

Now

filecoin

has

specific

mechanisms

to

tackle

some

of

those

at

the

overlay

level,

which

is

the

reason

why

we've

also

looked

at

it

as

a

as

a

potential.

A

C

Okay,

so

thank

you

sorry,

sorry

for

talking

over

you,

okay,

so

thank

you

very

much

dirk.

The

next

is

at

first

it

was

supposed

to

be

the

presentation

of

a

draft,

but

when

we

started

talking

to

xavier

we

figured

out

it

was

also

a

new

use

cases

and

your

use

case,

and

it

was

it

is

for

mobile

virtual

networks.

So

xavier,

please

go

ahead.

L

But

actually

this

use

case

uses

three

well-known

technologies.

You

know

p4

for

data

plane,

programming,

5g

as

underlying

network

and

5g

lan

as

virtualization

technology,

and

the

the

goal

is

to

initially

study

the

the

problem.

You

know

without

too

many

moving

parts,

because

all

those

technologies

are

known,

but

this

is

a

starting

point

and

we

can

look

at

expanding

the

programming

aspect

and

look

at

you

know

how

p4

can

be

used

for

you

know

more

more

things

than

than

than

today.

L

L

First

data

plane

programming

can

be

used

by

tenants

to

control

increasingly

complex

virtual

mobile

networks,

so

we

need

to

cope

with

the

evolution

of

future

mobile

networks

towards

more

and

more

customized

environments.

So

we

can

expect

very

diverse

and

granular

network

services,

including

computing

services,

and,

I

would

say,

beside

besides

handling

complexity.

L

L

L

L

So

now

we

we

can

look

at

some

aspects

of

the

underlying

network

right.

So

two

points

mostly

I

mean

first,

you

know

the

the

p4

program

or

it

needs

to

be

deployed

on

physical

nodes

and

possible

locations.

Include

you

know,

mostly,

these

user

plane

functions,

so

you

have

anchor

user

plane

functions

represented

here

which,

which

are

the

at

the

edge

of

the

5g

domain,

or

you

can

have

intermediate

upfs

as

well,

and

you

can

even

you

know,

deploy

some

p4

programs

or

fragments

or

programs

on

mobile

nodes.

L

L

So

the

that's

you

know

that

leads

us

to

the

requirements

and

research

challenges.

The

first

p4

programs

will

need

to

be

split

or

distributed

because

data

paths

are

distributed

with

no

central

node

in

the

general

case

right.

So

this

can

be

an

input

to

the

coinrg

draft

on

p4

distribution,

which

is

in

reference

here

in

particular

in

in

this

use

case.

You

know,

distribution

is

not

for

scaling

purposes

or

performance.

L

It

is

needed

to

process

all

packets

right

and

a

second

point

is

a

multi-tenancy

support

or

second

requirement.

I

would

say,

multiple

5g

lans

can

share

the

same

infrastructure

and

the

mtpsa

paper

mentioned

earlier,

and

other

related

studies

provide

useful

solutions

in

this

space

and

the

fact

that

programs

may

be

distributed

and

multi-tenant

at

the

same

time

could

also

add

some

additional

challenge.

L

A

third

point

is

about

mobile

network

awareness.

Like

a

p4

program

could

interact

with

a

mobile

network

system

and,

for

example,

it

could

learn

parameters

associated

with

the

flow.

It

could

also

influence

the

processing

of

a

flow

by

the

5g

system.

So,

for

example,

based

on

some

processing,

you

could

select

a

particular

slice

or,

for

example,

and

a

fourth

point

or

fourth

requirement

is

mobility.

L

Support,

since

you

are

in

a

mobile

system,

p4

programs

would,

in

this

case,

need

to

migrate

in

order

to

follow

data

flows

when,

when

mobile

devices

move

from

one

attachment

point

to

the

to

the

next

and

finally,

there

are

security

risks,

including

overusing

network

resources,

injecting

traffic

and

accessing

you

know,

traffic

from

other

users

right.

So

here

I

like

just

to

summarize

data

plane

programming,

you

know

in

this

use

case

is

a

way

for

tenants

to

control

virtual

networks

over

various

underlying

networks

and

as

a

starting

point

we

can

use.

F

L

L

So

we

you

know

related,

for

example,

to

new

data,

plane,

programming

approaches

or

maybe

improving

p4,

as

that

has

been

mentioned

in

the

dagstuhl

seminar

summary,

and

also

you

know,

and

after

that

you

know

also,

we

could

look

at

other

underlying

networks.

You

know

data

centers,

especially

and

of

course

we

could

study

the

impact

of

those

changes

on

the

requirements

and

challenges.

C

Thank

you

very

much

xavier.

I

can

summarize

a

little

bit

what

was

discussed

with

the

use

case,

authors

that

maybe

this

could

be

one

of

the

use

cases.

Then

that

would

you

know

we

would

need

to

talk

about.

Does

it

still

need

its

own?

Its

own

draft

there's

also

a

question

from

dave

oran.

Do

you

see

the

question

in

the

chat

xavier?

C

Yep

so

the

question

I

will

read

it

is,

he

says,

doesn't

want

an

answer

now,

but

maybe

you

can

give

ideas

of

the

answer

about

a

p4

virtual

5g

network.

He

says

that

we've

had

virtual

router

technology

on

conventional

routers

for

20

years,

but

nobody

could

figure

out

how

to

deal

with

the

required

isolation,

properties

or

the

ability

to

properly

soft

or

hard

partition.

The

resources

and

actually

the

question

is-

is

p4

making

this

easier

or

harder.

L

But

so

I

think

that

you,

you

would

need

some

form

of

interface

between

I

mean

the

integration

between

the

mobile

network

and

the

and

the

p4

switch

would

need

to

be

controlled

and

under

the

control

of

the

of

the

operator.

In

order

to

to

to

avoid

the

security

issues,

I

didn't

think

I

didn't

see

any

big

blocking

issue,

but

I

may

not

have

thought

of

everything.

So

thank

you.

A

And

our

next

talk

is

chris

who'll

be

speaking

to

us

in

fact

giving

us

a

preview

of

his

paper.

That's

been

accepted

to

infocom

2021

and

chris

you're

welcome

to

start

sharing

your

screen.

While

I

give

a

little

bit

of

background

about

you,

chris

is

a

phd

student

who's

finishing

up

this

year,

so

for

all

of

you

out

there

who

are

looking

for

talented

technologists

chris

will

be

on

the

market

shortly.

A

M

It

may

be

able

to

satisfy

some

of

the

requests

with

the

store,

the

data

that

is

already

in

the

system,

thus

being

able

to

actually

provide

very

good

servicing

in

some

cases

and

some

it

may

not,

but

as

as

it

showed

up

until

this

point,

it's

actually

it

can

improve

computing

systems

by

quite

a

lot.

So,

as

we,

you

know,

as

as

everyone

here

knows,

any

computing

system

is

generally

made

of

at

least

like

a

processing

like

processor,

main

memory

and

io

right.

M

M

Of

course,

making

the

differentiation

between

cache

and

storage-

that

is

sorry.

Oh,

I

accidentally

skipped

a

few

slides

there.

Okay,

so

yeah

I

developed,

store

processing

for

that

reason,

which

is

like

it

was

developed

for

the

reverse

flow.

As

I

said,

of

data

and

for

service

adaptability,

then,

for

this

to

be

implemented,

I

used

a

two

definitions

of

timing

for

data

to

be

stored,

which

is

the

freshness

period

and

shelf

life.
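
[Editor's sketch, not from the talk: one possible reading of the two timing definitions mentioned above. It assumes that a stored item counts as "fresh" until its freshness period elapses, remains usable but "stale" until its shelf life elapses, and is "expired" afterwards. The class name, field names and state names are hypothetical.]

```python
from dataclasses import dataclass


@dataclass
class StoredItem:
    """A stored data item with two timers (hypothetical model)."""
    name: str
    stored_at: float         # time the item entered the store, in seconds
    freshness_period: float  # assumed: how long the item counts as fresh
    shelf_life: float        # assumed: how long the item may be kept at all

    def state(self, now: float) -> str:
        """Classify the item by its age at time `now`."""
        age = now - self.stored_at
        if age <= self.freshness_period:
            return "fresh"
        if age <= self.shelf_life:
            return "stale"
        return "expired"


item = StoredItem("sensor-reading", stored_at=0.0,
                  freshness_period=10.0, shelf_life=60.0)
print(item.state(5.0), item.state(30.0), item.state(61.0))
```

[Under these assumptions a request could be answered from the store while the item is fresh or stale, and would have to be recomputed or refetched once it has expired.]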

M

M

This

is

so

this

represents.

The

schematic

basically

represents

an

internal

algorithm

for

the

for

how

storage

would

work.

Basically,

before

this,

the

it

was

pretty

dumb

the

system,

then

we

implemented

some

more

intelligence

in

the

fact

that

it

started

having

some

feedback

loops

and

it

started

looking

at,

and

this

was

already

implemented,

which

is

basically

function,

instantiation

and

a

feedback

loop

for

feedback

for

that

it

was

towards

the

cloud

and

it

was

implemented

without

storage

beforehand.

M

So

yeah

the

labels

themselves

offer

the

ability

to

track

performance,

the

property

of

data

and

the

data

placement

statistics,

which

is

so.

This

is

on

top

of

the

freshness

period

and

shelf

life,

and

it

provides

a

means

for

other

strategies

to

be

implemented

on

top

of

it,

so

that

the

system

can

actually

use

them

to

their

to

its

advantage

and

to

those

to

the

certain

user's

advantage.

Of

course

it

makes,

of

course

it

makes

the

system

aware

of

the

data

context

and

it

can

improve

performance

exactly

because

of

that.

M

M

M

Thus,

from

here,

we

basically

decided

that

we

have

we.

We

have

to

have

a

structure

within

our

date.

How

should

I

say,

data

population

right?

So

all

the

data

are

individually

hashed.

They

have

different

identifiers,

but

at

the

same

time

they

all

come

with

labels,

which

can

basically

classify

the

data

and

make

it

easier

for

different

entities

within

the

medium

to

both

identify

and

use

the

data

and

more

effectively.
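
[Editor's sketch, not from the talk: a minimal illustration of data that is individually hashed and also carries labels. It assumes SHA-256 over the payload as the identifier and a plain in-memory index from label to identifiers; the actual hashing scheme and label format used in the work are not stated in this transcript, and all names here are hypothetical.]

```python
import hashlib
from collections import defaultdict


class LabeledStore:
    """In-memory store: content-hash identifiers plus a label index."""

    def __init__(self):
        self._items = {}                   # identifier -> payload
        self._by_label = defaultdict(set)  # label -> set of identifiers

    def put(self, payload: bytes, labels):
        """Store a payload under its hash and index it by each label."""
        item_id = hashlib.sha256(payload).hexdigest()
        self._items[item_id] = payload
        for label in labels:
            self._by_label[label].add(item_id)
        return item_id

    def find(self, label):
        """Return the identifiers of all items carrying `label`, sorted."""
        return sorted(self._by_label.get(label, set()))

    def get(self, item_id):
        """Return the payload stored under `item_id`, or None if absent."""
        return self._items.get(item_id)


store = LabeledStore()
frame_id = store.put(b"frame-1", ["video", "camera-3"])
store.put(b"reading-7", ["sensor"])
print(store.find("video") == [frame_id])
```

[The hash gives each item a distinct identifier, while the labels let a function look up a whole class of data without knowing individual identifiers.]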

M

We

then

had

a

prototype

evaluation

that

was

using

a

google

file

system

for

timings

and

like

local,

basically

local