From YouTube: IETF111-GNAP-20210726-1900

Description

GNAP meeting session at IETF111

2021/07/26 1900

https://datatracker.ietf.org/meeting/111/proceedings/

A

A

A

C

A

A

A

A

This was Christina. All right, thank you very much, Christina, for taking notes. You can use any old Emacs, or you can use the CodiMD. The link is on this slide, and it's already set up with some material filled in, so that would be even more convenient. If you're doing it offline, please send the minutes to Leif and myself after the meeting.

D

A

A

A

E

E

Please go ahead. Okay, next slide. I should introduce myself: I'm the chief technology officer of a nonprofit called Patient Privacy Rights. It's a volunteer position, and I also lead an implementation of self-sovereign technology protocols built around some of the standards that I'll be talking about today and that we're working on in GNAP.

E

I want to talk about the human rights perspective on protocol design, where we're not just talking about privacy for the individual but about the impact on society: the efficiency that is increasingly shifting power not to self-sovereign people through decentralization but actually to the sovereign corporations and governments. To deal with that, protocols are essential. Standardized data models like verifiable credentials and decentralized identifiers are great. They are useful, but they do not have a primary impact on the human rights perspective.

E

I'm going to describe, in one slide, what aspect of self-sovereign identity I think matters: the issue of separation of concerns. We're familiar a bit with the GDPR, the legal difference between a controller and a processor, and the separation of concerns of not lumping them together in the standard sort of Facebook model, if you would. And then: how do you do controls, and what's going on in W3C and DIF? As I said, I hope to have 10 or 15 minutes for discussion. Next slide, please.

E

So what are the human rights concerns? It made me think of the Tyrell Corporation in the movie Blade Runner, where the problem was that it was really impossible for humans to regulate what a corporate entity did once it became a thing of its own. The kinds of concerns we have are things like facial recognition, which I'm calling ambient authentication. It's been banned in a couple of jurisdictions, but it's still out there.

E

E

Next slide, please. I'm not going to dwell on this slide. I'll simply say that self-sovereign identity could be thought of as an identity that only you control. There's no third party, no domain controller or anybody else, and it basically has a public component, the decentralized identifier and the DID method, and in the DID document, the things that are controlled by you.

E

E

Everything else is a processor. In other words, everything other than your identity, whether it's a nation or a vendor, is basically a sovereign as far as you're concerned. Sometimes you have a choice, but very often you don't have a choice of sovereignty unless you want to be a refugee. And the control models, which I'll say a little bit more about in a second, are either direct control through possession or messaging-type control.

E

E

So what's my picture? What do I want you to keep in mind as we go to the discussion section? We originally had the narrow waist being the Internet Protocol, and then you see all those alternatives: email, WWW, and phone on one side; copper, fiber, and the physical layer on the other. What we ended up with, partly as a direct result of OAuth, is a platform narrow neck, where we get to choose our "sign in with Facebook" or "sign in with Google".

E

E

So specifically, we can bring up these five W3C and DIF protocol work groups. The one that I'm most involved in, and Justin is as well (I don't know about the others, because I don't attend all of them), is the verifiable credential API protocol work. There's also the encrypted data vaults and identity hubs work, which has been around for a long time.

E

E

You have my contact info, and I really welcome a continuation of the discussion, as well as help in implementing GNAP in the particular way that we're trying to do it in the HIE of One example implementation of various standards, including DIDs and VCs, in the healthcare context. HIE of One stands for health information exchange of one, so it is this idea that you can have a self-sovereign agent. So the questions are, as you can read them yourselves: how many authorization protocols does the internet need at that narrow waist?

E

Do we need something other than GNAP in the narrow waist there? How do we feel about compatibility with OAuth in terms of human rights, and particularly things like client credentials that are often associated with OAuth? Do we understand and recognize the difference between self-sovereign agents and fiduciary agents?

E

Both are important to human rights, and if people want to discuss that, I'm happy to go into it. How do we detach the chain-of-custody type protocols that, like I say, treat every transaction as a potential pre-crime event, without actually introducing the biometrics that we have associated with analog credentials in the past? And finally, the big question is: should GNAP be seen as the narrow waist of self-sovereign identity?

F

All right, I think I'm sending; it says I am. Okay. So, Adrian, first off, thank you so much for bringing this in. As Adrian mentioned, I'm also tangentially involved in a few of the efforts that were discussed here, including the awkwardly named VC HTTP API alphabet soup, but also the Self-Issued OpenID Provider and a few other things. So to me.

F

So first, Adrian, I want to say I really appreciate your perspective in asking this question: is this security protocol the narrow waist? I think that's a very interesting way to look at it, but my personal perspective is that it is equally important to always ask the question of where these things fit together.

F

So, on those layered diagrams, a lot of times the important questions to get answered are: what do the interactions between those layers look like? That does, of course, have to be very clear when you're at the narrow waist, because you've got the two most important boundaries sitting above and below you. But with a security layer protocol like GNAP, you've got to focus on.

F

This is an API for getting cryptographic credentials moved around using web protocols. In short, there are a number of different ways that GNAP and the VC HTTP API could fit together, and they all have different bearings on where you view the centrality of the protocol. For example, since it is an API, GNAP can be used to protect calls to it. It could also be used in the process of gathering the credentials that would be used in the API.

F

So getting a user involved is something that GNAP and OAuth do; that's kind of one of their main selling points, if you will. And so, depending on which direction you're approaching the problem from, GNAP could look like a narrow waist, or it could look like just another function in the overall structure.

F

E

Very briefly, because I hope we have at least another perspective: two components to your question. Number one, I'm primarily interested in delegation as a human right. We all understand why we get to choose our defense lawyer when facing the court or the state, and why we get to choose our doctor when we face the hospital or the drug vendors. And so as we think about those different layers, GNAP might or might not, and you were very clear as to what you meant.

E

Is there really only one above and below, or is GNAP spread into the other layers of the hourglass? I think if at any layer we ignore delegation, we are introducing the potential for a human rights problem, and so that's the direct answer to your question. It's delegation all the way down, to use the turtles analogy.

E

The second part is, I am basically looking to potentially either take up within the GNAP group an appendix that discusses these five protocols, or whatever the right number is, that are being worked on on top of DIDs and VCs, and either literally say about them what we said about OAuth, which is "here's what they don't do that needs to be done in GNAP", or help me start another work group. Hopefully many of us here will join this, and I'm new to IETF.

E

G

My question is: there is nothing in OAuth itself that actually requires that relationship. There's no requirement for client credentials at all, and there's dynamic client registration, which is in OAuth, which would allow someone to show up without any prior relationship and use a "sign in with Facebook" button or access data.

G

E

That Justin was an editor for, and I was a co-founder of five years ago, and you cannot get there from here. Now, that's my opinion, you know, as an advocate and not a geek, if Justin wants to pipe in with his opinion as to what failed about UMA 2 and the HEART work group as a profile of OAuth and UMA.

B

C

Oh, go ahead, Robin. Okay, sorry. Thanks, Kathleen. Adrian, thank you again for bringing this. I guess my question is a very simple one: what is it in this model that would prevent a relying party from simply saying to the individual, "Here are all the pieces of data that I require about you; otherwise, you can't access the service"?

E

Again, it's the issue of delegation. As long as we are careful that it becomes obvious when the relying party is preventing you from choosing your defense lawyer or from choosing your doctor. I happen to be a physician, though I've never practiced medicine, and I only had a license for like 15 years and decided it wasn't worth the money every year. So I'm a geek, I'm an engineer by nature. But yes, that's basically what has to happen now.

E

A

B

F

All right, hi everybody. I'm Justin Richer, joined also by Aaron Parecki and Fabien Imbault, and I will be doing the editors' presentation today. Aaron and Fabien, please feel free to jump in at any point. Let's get started. So first off, we're going to talk about the changes in the drafts. We are now a two-draft working group, which is, you know, a hundred percent.

F

The mix-up attack. And I also want to focus on some features that have been removed and changed significantly since the draft at the last meeting, and then we're going to end by talking about our next set of big topics, kind of where we're going next, and the status of implementations that we, the editors, know about. And we'd like to really start calling preview there.

F

We would like to start calling out more people who are implementing GNAP, implementing parts of GNAP, and that kind of thing. You can go through the rfcdiff if you want the detailed text changes for the core draft. The resource server draft is functionally brand new, except that a lot of that text actually came from the core draft to begin with.

F

So a lot of the sections that you see deleted from the core draft version 4 have wound up in the resource server draft and then been expanded on, but more on that in just a bit. Next slide, please. There's been a lot of work. Over the last few months we have merged 36 pull requests against core and 10 against the resource servers draft, and you can use those URLs there to go.

F

Look at GitHub and see the exact text changes for all of those. I'm now going to talk through what the actual changes were. We're not going to go diving deep into every individual pull request, especially because some of them undid the changes that earlier ones did, because this is an iterative process. But I do want to try to thoroughly cover the stuff that's changed since last time. So, the discovery mechanism in the core draft. These are, by the way.

F

So this is the stuff that allows a client instance to do a pre-flight call to the auth server and figure out different bits and parameters and things like that. As most in the group know, this type of discovery in GNAP is functionally optional because, as the name suggests, the negotiation nature of the protocol allows the different parties to say, "Here's what I can do, here's what you can do. How can we, you know?"

F

F

Anyway, this discovery mechanism is fairly lightweight, and the changes here are relatively minimal, but they're reflecting other changes that we made down the line, including this next section about subject identifiers. I have to give huge credit to Fabien on this. He did a lot of great work clarifying how subject identifiers work inside of GNAP: this whole notion of being able to identify who the user is and potentially who the resource owner is. We've got some open issues for clarifying that language, but identifying the person.

F

That's there really was an important driving use case for starting GNAP, because it allows GNAP to say, without additional APIs, just who's here right now. And we are aligning with the SecEvent working group, which has a subject identifiers draft that I believe is in last call or just out of last call, hopefully. Yaron?

F

Please. It's on its way, and so we aligned with some of the latest changes from there that the editor had put in for supporting DIDs, for example, as a format. That's something where, as Adrian mentioned, the DID community is pushing more stuff out there, and aligning with that ongoing format.
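
As a rough illustration of what a subject identifier with a DID format might look like next to an opaque one, here is a small Python sketch. The member names (`format`, `id`, `url`) follow the Subject Identifiers work as best recalled and should be checked against the current draft; the helper `subject_value`, the lookup table, and all identifier values are hypothetical and exist only for this example.

```python
# Which member carries the identifying value for each format.
# Assumption: taken from the Subject Identifiers draft as recalled; verify before use.
ID_MEMBER = {"opaque": "id", "did": "url"}


def subject_value(sub_id: dict) -> str:
    """Return the identifying value of a subject identifier object."""
    fmt = sub_id["format"]
    if fmt not in ID_MEMBER:
        raise ValueError(f"unsupported subject identifier format: {fmt}")
    return sub_id[ID_MEMBER[fmt]]


# Hypothetical identifiers, for illustration only.
opaque_subject = {"format": "opaque", "id": "J2G8G8O4AZ"}
did_subject = {"format": "did", "url": "did:example:123456"}

print(subject_value(opaque_subject))
print(subject_value(did_subject))
```

The point of the shared `format` member is that a client instance can handle a new identifier type, such as a DID, without a separate field for each kind of user handle.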

F

F

Some of these ended up getting overcome by other changes, which we'll cover in just a moment, as the GNAP core protocol itself got kind of cleaned up. Regardless, there was a lot of stuff that got changed around the smaller details of how the cryptography stuff works. Next slide, please.

F

F

If I try to remember that, but they came along and were like, "Hey, you're not mentioning Cache-Control here on any of these, you really should, and not only that, but here are all of the places where it should be." Those types of things are a small contribution in terms of the number of lines changed, but it is immensely valuable to the working group, and the editors absolutely love seeing this. So thank you so much for that.

F

This is because this is an important detail for implementers that we either would have noticed eventually, or somebody would have tripped up and made a mistake, and then we would have noticed too late, basically. So thank you for that. A big change, which we're going to cover in its own discussion, is that we extracted the portions of the core specification that talk about resource server communication out into its own separate spec.

F

F

What's really happening here, and this change of language is meant to reflect this, is that the user is providing some amount of interaction with not just the authorization server but potentially a bunch of other components that the authorization server has different kinds of relationships with, and the client can facilitate that connection like that.

F

F

I'm not saying that necessarily the VC HTTP API should be in GNAP core as an option, but that's the kind of thing that it's really important we allow for, that we don't accidentally box out of the realm of possibilities here. Because if we do box it out, then somebody's just going to come up with a really weird way to patch it back in, which is exactly what's happening with OAuth and OpenID Connect and the VC HTTP API stuff right now. So we need to be aware of this. Okay.

F

This notion of privileges was brought up by some as something that really is orthogonal to these other things. Now, this is a description of the kind of thing that you're asking for in your access token, not necessarily what goes into the token itself, because that is opaque to the client instance. So this is part of the client's request saying, "I know that to call this API, this is a privilege that I want to be able to have on this API."
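
To make the idea of asking for a privilege concrete, here is a minimal Python sketch of the access portion of a grant request. The field names (`access_token`, `access`, `type`, `actions`, `locations`, `privileges`) reflect one reading of the core draft's access request and should be checked against the draft itself; every value, and the helper `requested_privileges`, is hypothetical.

```python
import json

# Hypothetical access request: all values are made up for illustration.
access_request = {
    "access_token": {
        "access": [
            {
                "type": "photo-api",
                "actions": ["read"],
                "locations": ["https://rs.example/photos"],
                "privileges": ["admin"],
            }
        ]
    }
}


def requested_privileges(request: dict) -> list:
    """Collect every privilege named across the requested access rights."""
    rights = request["access_token"]["access"]
    return [
        privilege
        for right in rights
        if isinstance(right, dict)  # skip anything that is not a structured right
        for privilege in right.get("privileges", [])
    ]


print(json.dumps(access_request, indent=2))
print(requested_privileges(access_request))
```

Note that this describes what the client instance asks for. What the authorization server actually puts into the token stays opaque to the client instance, as described above.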

F

F

We have had some editorial changes, which we'll see in a moment, that try to clarify and expand that language. That's going to be an ongoing effort for this. And our sister group, OAuth, is working on Rich Authorization Requests, which is a backport of this feature to OAuth 2, and that is nearing last call in that space as well.

F

So we're trying to sync this language between the two so that the same concepts can be applied in both spaces, and the privileges field is a part of that. We added a new parameter for the mix-up attack; I'll talk about that attack in just a bit. And then the editors got bored and deleted a bunch of stuff. No, we had actual ongoing conversations about what people were using, what, like why?

F

Each thing in the protocol was in there. We're getting to the point where the protocol is starting to stabilize. The core is really stabilizing, so now's the time to really look at all of the stuff that's grown around it and ask: Okay, why do we have this piece? Why are we doing this? Does it still make sense? Like I was saying before about the user handle: now that we've got this new opaque subject identifier, it probably doesn't make sense to have a separate field for that anymore.

F

That's the kind of thing we're going to start really looking at, and I'll talk more about the specifics of the removed features towards the end of the presentation when we get there. Next slide, please. A whole bunch of editorial changes, some of them small, some of them big. One of the biggest ones, though, was fixing up the examples, especially the cryptographic examples. We now have a script that will generate properly formatted and signed examples for much of the spec.

F

The examples started as me copying and pasting from my personal implementation, and then a lot of things got edited by hand, and people noticed that the signatures weren't actually valid anymore, or that in at least one case we didn't even have valid JSON in a spot. So, anyway, a lot of that's being cleaned up. Obviously, there are probably still mistakes.

F

We want to know where they are so that we can fix them, or you can fix them. There was also a bunch of typos. Aaron actually did a bunch of work updating and clarifying the diagrams that are throughout the document, including a new foundational diagram right at the beginning of draft six that says, "These are the major players and what they mean to each other."

F

F

F

F

F

The idea with splitting this up was to have things that face a client instance in one document and things that face a resource server only in another document, because these legs of the triangle can vary independently of each other. Fundamentally, what it gets down to is that I can do things to interact with users and get tokens and have different client deployments, and do all of that in a completely different space from the decisions I need to make about what I put in my access token.

F

Am I using a structured format? Am I using references? Am I doing introspection? Am I doing some shared memory bus thing? What does the token even represent? The client doesn't need to know about those decisions in order to do its functions, and the resource server doesn't need to know about what the client's doing in order to decide whether the access token it gets is good or not. So separating that decision space into these two documents was really key.

F

So if you can actually go back a slide, there's a couple of bullet points. Thanks. What this functionally means is that we took resource set registration and resource server introduction, this dynamic introduction, into a different draft. Token introspection is in there because this is something that is designed to face resource servers. There are open questions of whether clients should be allowed to do that and how that gets exposed. And also, Yaron's got.

F

F

D

A

D

For that, the only thing that's very well defined is JWT, of course. But since, exactly as Adrian was explaining before, we are also trying to achieve delegation and advanced types of scenarios, which basically require a bit more advanced cryptography type of requirements, that's at least an open issue. And so far what we've got in the document, from what Yaron explained as well in the issues, is just some links, but they're not official standards right now.

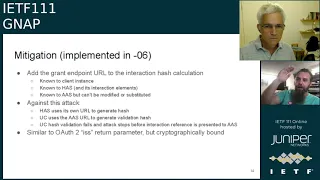

F

All right, thanks. If you can actually go a couple of slides forward, please. Thank you. So now I'm going to talk about the mix-up attack. It was identified by a couple of researchers, and because I am a terrible person, I forgot to copy their names into the slides. I'm sorry. If somebody could actually add that to the notes, that would be appreciated.

F

So sorry about that. This is related to the OAuth 2 mix-up attack, if you're familiar with it. It basically works by getting a client that knows how to talk to multiple authorization servers to ask for a token at one AS but get the user to interact at a different AS, and have an attacker come away with an access token.

F

Now, out of the box, GNAP is already in a better state than OAuth 2, because we don't have bearer secrets as a fundamental building block. So, assuming the private keys themselves are not compromised, it's not possible for an attacker to just easily impersonate a good authorization server and convince a client that they're talking to someone else.

F

You can ask for a bearer token, but if you're not asking for a bearer token, then the mix-up attack succeeds, but it succeeds in a detectable way, because the client will not get back a usable access token, and so the client is going to notice that the access token doesn't work. In OAuth 2's mix-up attack, and if you're using bearer tokens in GNAP, the attacker gets a copy of the access token, and so does the client. So the client doesn't actually know this.

F

Being able to detect these conditions is as important as being able to prevent these conditions in a lot of ways. So, next slide. I'm going to talk through how this works. I will say that there's a text write-up on the next slide as well. I'm going to talk through the diagram, but I encourage people to follow along with the text.

F

So the user starts off talking to their client instance. This is a diagram from the researchers. The players here are the end user; the client instance; AAS, which is the attacker's authorization server, so this is the bad authorization server; and HAS, which is the legitimate authorization server.

F

F

F

F

F

The attacker can disguise themselves enough to phish a user for this, so they interact, they approve it, and all of that comes back with the validation hash and the interaction reference back to the client. We're down in step nine now. At this point, and here's where the attack hits, all of the information needed to make that validation hash was the client nonce, the server nonce, and the interaction reference.

F

The honest authorization server has all of these. The attacker has the client nonce and the server nonce, because it proxied those values back in steps 2 and 4 above. What that means is that the validation hash in step 9 actually does validate, even though the initial request going to one server is different from what would be expected by the honest authorization server now.

F

Here's where the fallout of the attack starts to happen: the client forwards that interaction reference, signed with the client's key, to the attacker's server, and then the attacker sends that interaction reference on. It proxies that again, signed with the attacker's key, so it's not impersonating the client to the honest authorization server, and gets back an access token. Here, if it's a bound access token, the attack stops: the attacker gets a token, the user doesn't.

F

Maybe they call and complain. Maybe they don't notice. But at this point the attack is over, because the attacker has access approved by the client. So, in summary, this is really substituting a client: getting a user to approve a client that they don't think that they're approving, and the access token being delivered somewhere else.

F

F

F

But what we realized in looking at this attack in GNAP is that with this validation hash that we already had, we could actually mix in the grant request URL and have the same effect without having to add additional values on the wire, which an attacker could then possibly sit on that redirect and steal. So what happens?

F

Is you now mix together the client nonce, the server nonce, the interaction reference, and the grant endpoint URL. Mix those together, which means that when that hash is initially generated, the honest authorization server is going to use its own grant URL. It's not being told what that URL is on the way in by anybody. It's going to be using its own grant URL that the attacker called the client to do.
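
The effect of mixing the grant endpoint URL into the hash can be sketched in Python. This is an illustration under stated assumptions, not the draft's normative text: the newline separator, the SHA3-512 digest, the base64url encoding, the function name `interaction_hash`, and all nonce, reference, and URL values are assumptions made for the example.

```python
import base64
import hashlib


def interaction_hash(client_nonce: str, server_nonce: str,
                     interact_ref: str, grant_url: str) -> str:
    """Hash the four values, newline-separated, and base64url-encode the digest."""
    base = "\n".join([client_nonce, server_nonce, interact_ref, grant_url])
    digest = hashlib.sha3_512(base.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


# Hypothetical values, for illustration only.
CLIENT_NONCE = "client-nonce-123"
SERVER_NONCE = "server-nonce-456"
INTERACT_REF = "interact-ref-789"
HONEST_AS = "https://honest-as.example/gnap"
ATTACKER_AS = "https://attacker-as.example/gnap"

# The honest AS computes the hash over its own grant endpoint URL.
hash_from_honest_as = interaction_hash(
    CLIENT_NONCE, SERVER_NONCE, INTERACT_REF, HONEST_AS)

# The client was steered to the attacker's AS, so it recomputes the hash
# with the URL it actually sent its grant request to.
hash_expected_by_client = interaction_hash(
    CLIENT_NONCE, SERVER_NONCE, INTERACT_REF, ATTACKER_AS)

mixup_detected = hash_from_honest_as != hash_expected_by_client
print("mix-up detected:", mixup_detected)
```

Because the proxied nonces and the interaction reference are identical on both sides, only the URL differs between the two computations, and that difference is what lets the client instance reject the response.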

F

F

Yes, this is still putting weight on the client to protect itself, and, as we know, client developers will do everything to avoid having to do more work. But this is a very simple addition to code that was already required of compliant client software. So there are no additional parameters you have to check, there's nothing more you have to send, nothing more.

F

You have to protect. It's all information that you already have, and we validated this approach with the researchers who were looking at that. They confirmed that this does in fact cut off the attack. So this has been implemented in draft six, and the PR that implements this was remarkably short, because it simply said: add this URL into this mix, and everything else should fall from that.

F

F

F

F

So, oh yeah, I forgot about this wrap-up. Basically, any redirect-based protocol is going to be inherently phishable. It's the nature of the space that we work in, and there are related phishing attacks if you're not using the finish feature as part of your interaction, because there's no interaction reference that you can protect in that.

F

But that's part of the trade-off of being able to do this with different kinds of clients. And while this is made easier by a dynamic world, it is still very possible even with static clients, and we've seen this in the wild in the OAuth world, with people using self-service client registration to register things that, to a human, look a lot like Google Documents but aren't. So, next slide, please.

F

So we removed a number of features in drafts five and six, the first of which is the signature methods. The editors put out a call to the list a while back about this. We kept HTTP Message Signatures, which is still progressing in the HTTP working group. We kept MTLS, based on the concepts from the OAuth MTLS work.

F

We dropped the OAuth proof of possession, partially because that draft has long since expired and it is probably going to be replaced by a new draft based on HTTP Message Signatures in the OAuth working group. That's in adoption call in OAuth right now. We also dropped OAuth DPoP. DPoP was never meant to be a signature method for general HTTP signatures.

F

F

So we don't actually have any listed as must-implement yet, so mandatory-to-implement is going to be a different thing. We didn't add that language yet. There was some discussion about this, and the feeling so far, and I'm not going to go so far as to say that this is the consensus, seems to be that HTTP Message Signatures will be the mandatory-to-implement, for at least kind of a, you know...

F

F

But, you know, this is something where we've got to figure out what we're going to do with these things. So thank you, though; important question. Capabilities was sort of a pseudo-discovery mechanism. I still like it as an individual. I think it's clever. But the thing is, we looked at it and nothing was using it, and it's really dangerous to have something in a security protocol that you think somebody will use for extending, without actually exercising it.

F

F

Similarly, the existing grant. This was a way to make a new grant request based on a separate grant. So this isn't about updating a grant to get new rights or new access tokens, it's not about step-up or step-down, and it's not about reading or managing an existing thing. It's about saying: give me something new in the context of something else. It was one of those things that always kind of confused people, and people weren't quite sure what was going on.

F

But once again, we didn't really see a push that needed this right now, so what the editors have done is pull it out from the core spec. What we would like to see is that, if people want this, we actually attack this as a holistic feature. Right, I think Adrian's trying to jump on the queue.

H

E

A

E

Sorry, what I'm saying is relative to the resource owner interacting with the resource server. I know of only two ways to do this: either there's a pre-registration of an authorization server that the resource server has to agree to, for the self-sovereign users, you know, for self-sovereign delegates' authorization servers, or the resource owner has to get a capability.

F

F

There's this security notion of a capability, where it's a credential with its destination, all sort of bound up into one thing. For a really sort of dumb version of it, think of it as a URL with an OAuth token on the end: you have everything you need to be able to just go to that magic URL and just use it. That's not what the capabilities negotiation array was about at all, and so that's another good reason to remove that.
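The "URL with a token on the end" picture can be sketched in a few lines. This only illustrates the analogy from the talk and is not anything GNAP defines; the `token` query parameter name, both function names, and the example URLs are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def make_capability_url(resource_url: str, token: str) -> str:
    """Bind a destination and a credential into one string (hypothetical format)."""
    parts = urlsplit(resource_url)
    query = parse_qsl(parts.query) + [("token", token)]
    return urlunsplit(parts._replace(query=urlencode(query)))


def split_capability_url(capability_url: str):
    """Recover the destination and the credential from the single string."""
    parts = urlsplit(capability_url)
    pairs = parse_qsl(parts.query)
    token = next((value for key, value in pairs if key == "token"), None)
    rest = [(key, value) for key, value in pairs if key != "token"]
    return urlunsplit(parts._replace(query=urlencode(rest))), token
```

Whoever holds the combined string has both where to go and the right to go there, which is the property being contrasted with the removed capabilities array.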

F

What Adrian's discussing, though, this notion of how we dynamically introduce resource owners into this process, is exactly the kind of thing that the major editorial changes around the interaction and authorization gathering section were really about, and so that was all very key to that part of the discussion. So yeah, hopefully that answered the question. Hopefully Adrian's safe, because that did sound like a fire alarm. Thank you. That's all good!

F

F

Underspecified what-if feature, with the hope that, if this really does need to be used, we can bring it back in a way that's more robust and makes more sense as sort of a holistic approach. Just like the existing grant thing: I think it was Yaron who brought up the notion of, what if we had a grant identifier to use there instead? Those are the kinds of questions that should be asked all together, in the notion of: what is this feature?

F

F

So, where do we go from here? One of our current discussions on the mailing list is key rotation. Should we define a mechanism as part of GNAP, but also enable existing mechanisms? Some things that people do today are, like, host your keys at an external URL and have the other party fetch whatever keys are at that URL. How do we want to manage this kind of stuff?
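The "host your keys at an external URL" pattern mentioned here can be sketched as follows. This is one existing approach shown for illustration, not a mechanism GNAP defines; the `{"keys": [...]}` document shape, the `kid` lookup, the in-memory `HOSTED` store standing in for an HTTP fetch, and all names and URLs are assumptions of the sketch.

```python
from typing import Callable, Dict, List, Optional

KeySet = Dict[str, List[Dict[str, str]]]

# Stand-in for documents served over HTTPS (hypothetical URL and key IDs).
HOSTED: Dict[str, KeySet] = {
    "https://client.example/keys": {"keys": [{"kid": "key-2021-06", "kty": "OKP"}]},
}


def fetch_key_set(keys_url: str) -> KeySet:
    # A real verifier would perform an HTTP GET here; this sketch reads memory.
    return HOSTED[keys_url]


def find_key(fetch: Callable[[str], KeySet], keys_url: str, kid: str) -> Optional[Dict[str, str]]:
    """Look up the key a message claims to be signed with, by key ID."""
    for key in fetch(keys_url).get("keys", []):
        if key.get("kid") == kid:
            return key
    return None


def rotate(keys_url: str, new_key: Dict[str, str]) -> None:
    # Rotation is just publishing a new document at the same URL.
    HOSTED[keys_url] = {"keys": [new_key]}
```

In this pattern the other party never stores the key itself, only the URL, so rotating keys needs no protocol message at all; whether GNAP should rely on that or define its own mechanism is the open question raised in the talk.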

F

F

F

One of the other things that we need more text and more discussion on is how these different components relate to each other. What are the assumptions that we're making about these different components? Like I mentioned previously, Aaron started that with that sort of introductory diagram and the text around it. Fabien's done a lot of really great work on the entities and roles section. We need to keep expanding that so that it's more clear, like this.

F

This entity trusts this other entity for this purpose in this context. It's that type of transitive discussion that needs to be encoded in the draft. Right now there are trust relationships, and they are mentioned in there, but they are not as explicitly described as they could be. So we know that that's something that needs to happen.

F

F

We need to just go make those registries. It's a lot of mechanical stuff there, but we think that we've got a decent feel for the fields that are clear candidates for extensibility through a registry, versus those that really should be locked down more specifically. And there are related issues about guidance to extensions: what does it make sense for an extension to be able to say here? How does it fit in with the other functions?

F

F

I don't think I actually fixed the discovery document; I'll have to go do that right after the call. But there are implementations that I know about, or that the editors know about, in Python, PHP and Rust in the works, some of them from us, some of them not from us. There are also more implementations of our core dependencies, specifically HTTP Message Signatures and security event identifiers.

F

You know, we know this, but we do think that it is a very, very good time to start implementing this, to start trying it out, to start actually fitting it into different spaces. I've reached out to a couple of the groups that had worked on early implementations of the XYZ proposal, from before the GNAP working group, about updating to GNAP for their systems and following the draft, and so we'll see where we go from there. Oh, Robin, bike shedding very shortly.

F

F

F

A

A

Please give it a read, not as detailed as you would for working group last call, but let's catch what the missing features are. Also, we used to have too many ways of doing signatures. We still have a few too many other things that are duplicate, that are redundant, that can be removed and maybe come back as extensions, or maybe not. So a quick read by everybody here would be highly appreciated.

A

B

I

A

I

I

So I have a proposal to explain how this should be done. Now, I would also like to address another message, which is one finding about the current document that I believe is important. In section seven today it is stated: "In GNAP, the client instance secures its request to the RS by presenting an access token."

I

B

J

F

Yeah, this is to answer Jamie. That's a really interesting idea. The resource access rights and OAuth 2's Rich Authorization Requests have discussed this notion of registering the types somewhere, and the consensus has been that we don't want to require registration of types, but having a catalog would be an interesting one. Now, that's not usually what an IANA registry is used for, so