From YouTube: IETF112-COINRG-20211111-1200

Description

COINRG meeting session at IETF112

2021/11/11 1200

https://datatracker.ietf.org/meeting/112/proceedings/

B

B

I think everybody is aware of this, and for this group, yes, it's important. Also, you know that we're recording this, because we are still virtual, and I guess even when we're no longer virtual we'll do it. What's also very important is the disclosure of intellectual property, which is on the next slide or so, and the code of conduct, which is also very important; I think a few of us have had experiences that show us how important it is. Eve?

B

B

So we have a very packed agenda, and I'm already two or three minutes into this, so we're going to go very fast. We're very, very happy to have Scott Shenker present the Extensible Internet, which was a CCR paper and raises a lot of interesting questions about the future of the Internet, and is also connected to some of the questions that we've had in this group. So we're very lucky with this. Then we have the very interesting in-network aggregation paper.

B

Then also the information-centric dataflow for distributed computing from Dirk. And we had, well, it's called "ideas", but actually there's only one. Alessandro, because it's a holiday, is not going to present, so I'm going to present this. It's about an operating system for distributed applications. In COIN we are very big on thinking that the Internet is moving to be more like a computer board, and if you have a computer board, you need an operating system. Next slide. Then we have the drafts update.

B

Obviously a research group is not only about doing drafts, but we do have very interesting work happening in the drafts within this research group, and we would like to give the authors a chance to talk about the ideas: use cases, again to show that there's a way to use these things; transport protocols, because this has been an issue once you start putting computing in the system; and then security and privacy, again a big issue.

B

B

Here, this is good. If you're here, you found the Meetecho, which is good. The live minutes are integrated into Meetecho, in CodiMD. The mailing list: well, if you're here, you probably know about our mailing list; if you don't, well, please sign up. And we have a ton of documents.

B

Dirk, your document expired; that needs an update, because it's an RG document. We have two RG documents. We have, again, new drafts. I think this slide needs an update, because we also have the new draft from China Mobile, and we have a ton of other drafts that are at different levels of maturity. This is something that we will raise on the list: the idea that we need to do something about it.

B

The milestones: we've done a lot of them. I'll go very fast, because I know I'm one minute over. We'll go very fast to the last thing, which is that we need a milestone review, and we'll do it. And now we have the presentations, and the first one is Scott Shenker from Berkeley on the Extensible Internet. So, Scott, thank you very much for doing this, because it's so early in California.

F

F

So the core subject of the talk is really about architectural change. When I say the word architecture, I'm really referring to the arrangement of the data plane functionality, that is, the layers and the basic functions they've been assigned. It's not about specific protocols: IPv4 to IPv6 is not an architectural change in my lexicon. Nor is it about the control plane.

F

Now, there have been decades of architectural research trying to make changes to the basic architecture, but I think it's pretty clear that after over 20 years of clean-slate architectural research there's been no discernible architectural impact, and the public Internet, at least in the eyes of the researchers I've talked to, seems doomed to architectural stagnation. We've tried it; we just don't see any movement. But that's not what the hyperscalers think. The cloud and content providers are building their own large private IP-based networks.

F

They've got many points of presence and, what's relevant to this working group, these points of presence apply extensive in-network services: flow termination, caching, load balancing and so forth. These services have had a very significant impact on customer latency and reliability, so they put a lot of money into it because they actually see very tangible benefits in their user community.

F

F

F

F

On the other hand, it provides the service model to hosts. That is, this best-effort packet delivery is what anything you want to do on a host gets; that's the service that you see and have to build on. So it has to support all application requirements, and these requirements are becoming more stringent, which is a reason to change the architecture. That's exactly why these hyperscalers have built out their own networks to extend the architecture for their own purposes, so they can meet these requirements.

F

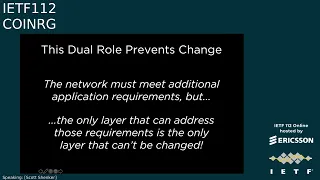

But it's this dual role that prevents change, because you have demands coming from above and constraints coming from below, and they are meeting in the middle in a single layer. So the network must meet additional application requirements, but the only layer that can address those requirements is also the only layer in the architecture that can't be changed.

F

So that's the nub of the problem: they coincide in the single layer, both the demands and the constraints. So the second question is: how can we overcome this barrier? This is where the Extensible Internet proposal comes in. It's really very simple: you use the current IP protocol unchanged, you don't do anything to it, but you do introduce a new layer above it. We call it the service layer, or L3.5, and the service layer offers new in-network services to hosts. So the relevance to this working group is these in-network services.

F

F

So why does this solve the problem? Because it decouples layer 3's two roles. Currently it's the interface to both L2, which gives it the constraints, and L4, which gives it the demands. In the EI, L3 is still the interface to L2, and it does a perfectly good job of that, but L3.5 is the interface to the hosts, and there's no reason for L3.5 to be in every router.

F

F

All the service layer communication is tunneled over IP, and the source specifies which service to invoke, using the tunneling protocol, when the packet gets to the service node. It's this ability for clients to signal to the service node what service they want that allows this to go beyond what the hyperscalers are doing, which needs to be backwards compatible, to actually being able to offer services like multicast, where the host needs to know that you've changed the semantics of what they've asked. So this is the basic outline of the design.
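To make the signaling step concrete, here is a minimal sketch of how a source might name the service it wants inside a payload tunneled over IP. The header layout (a 2-byte service ID and a 2-byte length), the service IDs, and the function names are all assumptions made for illustration; the talk does not specify a wire format.

```python
import struct

# Hypothetical L3.5 header: 2-byte service ID + 2-byte payload length.
# This layout is an assumption for illustration, not the EI wire format.
HEADER = struct.Struct("!HH")

# Illustrative service IDs only; the talk does not define a registry.
SERVICES = {1: "multicast", 2: "ddos-protection"}


def encapsulate(service_id: int, payload: bytes) -> bytes:
    """Host side: prepend the service-layer header before tunneling over IP."""
    return HEADER.pack(service_id, len(payload)) + payload


def dispatch(datagram: bytes) -> tuple[str, bytes]:
    """Service-node side: read the header and pick the service module."""
    service_id, length = HEADER.unpack_from(datagram)
    if service_id not in SERVICES:
        raise ValueError(f"unknown service id {service_id}")
    payload = datagram[HEADER.size:HEADER.size + length]
    return SERVICES[service_id], payload
```

The point of the sketch is only that the host explicitly names the service, so the service node never has to guess what semantics were requested.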

F

F

Now, the key point here is that all these in-network services that I talk about are in software, so standards are not detailed written specifications; they're open-source code. There are three necessary software components on the service node. One is the service modules, one for every service, whether it's multicast or DDoS protection.

F

There is a service module that's implemented and running on a service node, and it runs in a standardized execution environment. This execution environment has a very simple set of primitives (packet in/out, ephemeral storage, stable storage, maybe one or two others), but it's a write-once, run-anywhere environment: if you write your service module to run inside this environment, it can run on any service node. And then, of course, in the service node you need some kind of runtime or orchestration to scale up, scale down, and recover from failures.
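As a rough illustration of the primitives listed above, the following sketch models an execution environment with packet in/out, ephemeral storage, and stable storage, plus a toy multicast module written against it. The class and method names are hypothetical; the talk only names the primitives, not an API.

```python
from abc import ABC, abstractmethod


class ExecutionEnvironment:
    """Hypothetical environment exposing only the primitives named in the talk."""

    def __init__(self):
        self.ephemeral = {}   # ephemeral storage: may be lost on restart
        self.stable = {}      # stable storage: assumed to survive restarts
        self.outbox = []      # packets handed back to the node for sending

    def packet_out(self, dest, payload):
        self.outbox.append((dest, payload))


class ServiceModule(ABC):
    """A module only sees the environment, so it can run on any service node."""

    @abstractmethod
    def packet_in(self, env, src, payload):
        ...


class MulticastModule(ServiceModule):
    """Toy multicast: the payload is b'<group>:<data>' (an assumed format)."""

    def packet_in(self, env, src, payload):
        group, _, data = payload.partition(b":")
        for member in env.stable.get(group, []):
            if member != src:
                env.packet_out(member, data)
```

Because the module touches nothing except the environment object, the same code could in principle be loaded on any node that implements those primitives, which is the write-once, run-anywhere property described above.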

F

This doesn't need to be standardized. There'll be open-source versions available, whether it's Kubernetes or OpenStack or whatever comes next. And when I talk about in-network services, this is limited computation. This is not where you run your machine learning jobs. This is basic packet forwarding, payload processing, simple functions like caching. So it's more complicated than just simple IP forwarding, but it's not general computation; it's fairly limited. And these service nodes don't just have to be generic processors.

F

They can have secure enclaves, they can have hardware accelerators, but everything you do has to run on a commodity processor; if there happens to be an accelerator, that can be used to get better performance. Now, choosing the services: this is where you have some kind of governance process, whether it's the IETF or something else. It's way too early, but there's some body that decides what the set of public services and their implementations is, and all of these public services are run on all service nodes.

F

That is, if you're offering Internet service, so you're supplying a service node, you have to download all of the service modules in the service model that have been approved. This is really the biggest change with EI: EI brings this deployment model. Because they're approved software modules, they can be rolled out; they can be deployed. There's no per-vendor, per-domain decision process of: is Cisco going to support this, is Juniper going to support this, is AT&T or Deutsche Telekom going to deploy it?

F

F

You can also incorporate other frameworks that are becoming quite popular. They can be running on these service nodes: Istio, and then OPA for the policies, various kinds of telemetry frameworks, and then support for radical new architectures, something like ICN, whether it's the DONA style or the NDN style. This now just becomes a service. You just roll it out; you're not replacing all the routers. It's just a piece of software. There'll be host support, obviously, for all the services, and this just gets rolled out like another standard.

F

F

F

Given the failures, why do we think this might succeed? There are three reasons. One, backwards compatibility: IP just continues to be used. If you don't want to change anything, you just use IP. Maybe 20 years from now that will go away, but when we roll EI out, nothing changes about IP. As for the kind of resources you need, you just need the service nodes, which could use edge computing or existing PoPs. They have these facilities out there. We've talked to some carriers and they say,

F

"Oh, we could run this tomorrow; we've got all these facilities available." Obviously there's more involved, but this isn't talking about major capital outlays. The second reason is that fear is a great motivator. The Internet architecture has resisted change, and Mark Handley has this great essay on why the Internet just barely works. His reasoning is, basically, why should it change unless it has to? But what's implicit in his essay is that there's no alternative, that the Internet is just there.

F

F

Not in hardware. And the point is that the private networks have proven every aspect of this conjecture: they're up and running, they're running at scale, they're running with real traffic at an unimaginable scale. So EI is really the architecturally coherent version of the approach these private networks have already proven to work. That's why we think it might succeed.

F

So my last real slide is: where are we? We built a prototype; really it's Murphy McCauley who has built the prototype while teaching four classes a week, so progress has been a little slower. But we want to finish development, which should be in a couple of months, deploy it on FABRIC and other testbeds, and engage the community by providing this test.

F

But we say: if you have a new service, we can deploy it; if you want to write applications on top of these services, like pub/sub or whatever, you can do that too. We're continuing our discussions with industry, and we'd love to have your participation, "you" here meaning as individuals, as a research group, or the broader community. With that, thank you, and I'll take your questions. Eve, why don't you kick it off?

A

C

Thank you, Eve, and thank you, Scott, for this. As you said, this is the second time I've heard this, and finally it's sinking in a little bit. My question, which draws on some of the stuff in the chat, is about the layering here, and in particular the relationship with transport protocols. In your very simple example of source, SN, SN, dest, would you see the transport protocol running end to end, so source to destination?

F

So there will definitely be an end-to-end reliability layer, because for reliability we want the failure of a service node to be no more serious than the failure of a router today. So that'll definitely be end to end. We view IP as providing a pipe; whether the pipe that goes between these two implements some kind of reliability is an open question, but it's not mandated by the architecture. The essential transport is end to end, where congestion control is done.

C

Right, because I think this sort of factors into things like transport layer encryption as well, and whether the SNs are able to access the data to do anything unless those transport sessions are terminated and restarted. And this is kind of making me wonder. I don't dispute at all your points about needing to introduce some additional layering to get the development, and I'm just wondering whether this really should go in at layer 4.5 rather than at 3.5. But I'll let others talk.

F

Well, this is a very active area of investigation for us. Our current thinking is, first of all, that all the pipes will be encrypted at the low level, the service-node-to-service-node pipes, but that's trivial. At the end-to-end transport layer there will be encryption, but we want to have an option for the endpoints to say: here are the parts that we are willing to

F

Let the intermediate node see, versus here are the parts that they can't. So they can decide: if they want to take advantage of caching, then they might expose certain aspects, but if they want to keep certain material private, they don't. We want to leave that flexibility to the application itself.

I

I

Isn't that a barrier to entry, though? I'm not really quite understanding the reason for doing so, because also the culture asks the right question: who has the incentive to really deploy this? If I have to ramp up my SN deployment by essentially running every public service on it, isn't that preventing me from just quickly rolling out my own SN, where all I want to do is run my service on it, because it's a low barrier to entry?

F

Our point is that, once you support the execution environment, implementing these other services is simple. It does require extra resources, but if you don't make it uniform, then... you know, the beauty of the growth of the Internet is that you knew that wherever you plugged in, you had a set of services

F

You could depend on. If we now go to the space of IP options, which, you know, is no fun, that's not going to work. So that really is critical to this: that you can build an application that relies on a public service, and that will be available wherever the user is. That's a critical part of the proposal. And given that it's not about changing equipment, it's just about the scaling of your service nodes, we think there's a chance that that's gonna

F

F

F

F

So there might be a particular stack that says: okay, you're going to get an IP-like service and, on top of it, you're going to get this kind of transport service, and on top of that there's going to be this kind of DDoS prevention, and whatever, as a set of what we might think of as individual features.

F

E

F

Yeah, so in our current implementation, what we would do is say: well, if this is the composition, then we would have that ball of code. I mean, take those several different pieces of code, merge them together and then have them run as a single service module, so that we've actually made sure that the code bases work together. So we're not doing chaining; it is actually, we decide to put it

F

J

F

So I think for both of them it is the extensibility. For service chaining, you tell it to go through various boxes, but the network is not making you a promise of "I will deliver multicast packets for you." It is "go through this box; this box can do something." Service chaining doesn't give you any global service definition; that's your job.

F

You have to sort of... if you say you go through a firewall and then go to this and go through that, you're the one figuring out, eventually, what that's giving you. So I think that's the difference. Something like DDoS prevention, we're architecting it to be a service-wide thing: it's not just a single box, it's not just a scrubbing box. So that's what I think the key difference is. I don't know whether that helps.

A

D

F

So, yes, we will have some kind of discovery process for service nodes to discover each other, but if you want to have an SDN-like control for a domain to have the service nodes know about each other, that would be fine. I was completely silent on the control plane for this; we're open to a wide variety of ways in which you're going to manage your domain.

A

B

A

F

A

K

Here we view our work in the data center, so we have the flexibility to customize everything, and it's different from the Internet. I'm happy to introduce our work, in-network aggregation for multi-tenant learning. This is joint work with colleagues from Tsinghua University and the University of Wisconsin-Madison.

K

Machine learning algorithms are used in various scenarios, such as natural language processing and computer vision. With the increasing size of the dataset and the model, the algorithm is implemented as a distributed system. The parameter server (PS) architecture is a typical architecture that can support this kind of distributed system. In the PS architecture

K

So recently, the trend of in-network computation provides an opportunity to solve this problem. Programmable switches offer in-transit packet processing: the switch pipeline has registers which can store network state, and user-specified programs can be loaded to customize the packet processing.

K

There is existing work that applies in-network aggregation to distributed training, SwitchML. This work targets a single-rack setting: there is a rack connecting multiple workers, and the PS is offloaded to the switch, so the workers' gradients are aggregated in the switch. To support multiple jobs, the switch resource is statically partitioned and assigned to each job.

K

So we propose our solution with three key goals. The first goal is that we should maximally use in-network computation for performance gain. Targeting the production network, the solution should support multiple simultaneous jobs efficiently, and it should also support a multi-rack topology.

K

K

So the assignment uses a hash function to hash the job ID and sequence number, so packets from one job with the same sequence number, but from different workers, would be hashed to the same position and get aggregated there. The aggregation result is sent to the PS. Then the PS returns the result to the switch.

K

K

First, because the aggregator assignment is decentralized, it's possible to have hash collisions. For example, job 2's gradient packet is hashed to an aggregator which is occupied by another job, job 3. In this case, all the packets would pass through the switch and arrive at the PS. The PS does the aggregation and sends the result back to the switch.
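The hash-based assignment and the collision fallback described above can be sketched as follows. This is a simplified model, not the ATP implementation: the class name, the use of CRC32 as the hash, the assumption that every job has the same number of workers, and releasing the slot as soon as aggregation completes are all simplifications made for illustration.

```python
import zlib


class Switch:
    """Toy model of decentralized aggregator assignment with PS fallback."""

    def __init__(self, num_aggregators, workers_per_job):
        self.slots = [None] * num_aggregators
        self.workers_per_job = workers_per_job  # assumed equal for all jobs

    def on_gradient(self, job_id, seq, value):
        # Every worker computes the same slot from (job ID, sequence number).
        idx = zlib.crc32(f"{job_id}:{seq}".encode()) % len(self.slots)
        slot = self.slots[idx]
        if slot is None:
            slot = self.slots[idx] = {"key": (job_id, seq), "sum": 0, "count": 0}
        if slot["key"] != (job_id, seq):
            # Hash collision: slot is held by another job, so pass through.
            return ("forward_to_ps", value)
        slot["sum"] += value
        slot["count"] += 1
        if slot["count"] == self.workers_per_job:
            self.slots[idx] = None  # simplification: free the slot right away
            return ("aggregated", slot["sum"])
        return ("consumed", None)   # packet absorbed by the switch
```

With a single aggregator slot, a second job's packet necessarily collides and is forwarded to the PS, while the first job still completes in the switch.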

K

There is another case of inconsistency, or membership inconsistency. It causes incomplete aggregation. For example, the first packet is sent to an aggregator which is reserved by job 3, so this packet passes through to the PS. But at this time job 3 completes its aggregation and deallocates the switch memory, the aggregator. The remaining packets are sent to the aggregator and aggregated there. So both the switch and the PS have a partial result. They are waiting for each other. This is a deadlock, and what's worse

K

Is that there is no return packet of results to deallocate this switch memory, causing a memory leak problem. So we design retransmission at the end host with deduplication in the switch. Packet A is stuck on the switch and the PS, but its following packets may be successfully aggregated and returned. So if the sender observes duplicated ACKs, the result packets, it would retransmit the packet that is missing the result; all workers would retransmit A, so

K

K

So with this design, we have a correct protocol that can guarantee correctness. To support multi-rack aggregation, we need an aggregation hierarchy in the topology. Ideally, this aggregation hierarchy can be of many levels. We consider the data center topology: in the data center topology, the core network has multiple paths and it uses non-deterministic routing.

K

Currently we have a two-level aggregation design, so all the packets would aggregate at the workers' ToR first, then they are sent to the PS's ToR, and the second-level aggregation happens at the PS's ToR. The final result is sent to the PS. In this multiple-racks part, we also overcome another challenge, because of the higher-level switch. This is the aggregation hierarchy from the previous page.

K

So in each aggregator we have a bitmap, where each bit denotes one of its children. So there is one bit in switch 2 which indicates switch 0. But the one bit here cannot denote all the possible aggregations of its workers, because there are two workers.

K

So the previous design also overcomes other challenges. One is reliability: the retransmission with deduplication can also handle packet loss correctly. When packet loss happens, the host retransmits, and the bitmap in the switch can guarantee exactly-once aggregation. We also redesigned the congestion control. The essential problem is what the congestion signal should be, because many packets are consumed in the switch, so they do not have a round-trip time. So there is no RTT.
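A small sketch may help show why a per-aggregator bitmap gives exactly-once aggregation under retransmission. The class below is an illustrative model under the assumption that each worker owns one bit; it is not taken from the ATP code.

```python
class Aggregator:
    """Toy aggregator: one bit per worker records whose value was added."""

    def __init__(self, num_workers):
        self.full = (1 << num_workers) - 1  # bitmap value when all have arrived
        self.bitmap = 0
        self.total = 0

    def add(self, worker, value):
        """Add a worker's value once; return False for a duplicate."""
        bit = 1 << worker
        if self.bitmap & bit:
            return False        # retransmitted packet: already counted
        self.bitmap |= bit
        self.total += value
        return True

    def complete(self):
        return self.bitmap == self.full
```

A retransmitted packet from worker 0 finds its bit already set and is ignored, so the sum is unaffected no matter how often the host retransmits.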

K

We use ECN as the congestion signal and use AIMD for congestion control at the end host. The switch can only compute on integers. It does not support floating-point arithmetic, so we do conversion: we scale the floating-point numbers to integers by a scaling factor. And because we scale the floating-point numbers, it's possible to have overflow at the switch. To handle this problem, we reuse the fallback mechanism when the switch detects overflow.
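The float-to-integer conversion and overflow fallback can be illustrated with a short sketch. The scaling factor of 2^16, the 32-bit register width, and the function names are assumptions chosen for the example; the talk does not state the actual values.

```python
SCALE = 1 << 16            # assumed scaling factor
INT32_MAX = 2**31 - 1      # assumed 32-bit signed switch registers
INT32_MIN = -(2**31)


def to_fixed(x: float) -> int:
    """Host side: scale a float gradient to an integer."""
    return round(x * SCALE)


def from_fixed(n: int) -> float:
    """Host side: convert the aggregated integer back to a float."""
    return n / SCALE


def switch_add(acc: int, n: int) -> int:
    """Switch side: integer add that signals overflow instead of wrapping."""
    s = acc + n
    if not INT32_MIN <= s <= INT32_MAX:
        raise OverflowError("aggregator register overflow")
    return s


def aggregate(gradients):
    """Try in-switch integer aggregation; fall back to float sum at the PS."""
    acc = 0
    try:
        for g in gradients:
            acc = switch_add(acc, to_fixed(g))
        return from_fixed(acc), "switch"
    except OverflowError:
        return sum(gradients), "fallback"
```

Small gradients are summed as integers in the switch, while a sum that would exceed the register width takes the same fallback path to the PS that is used for hash collisions.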

K

K

Sometimes the performance gain is very significant. ATP can benefit network-intensive workloads more than computation-intensive workloads. Comparing ATP with ring all-reduce with hardware acceleration, ATP is slightly better than ring all-reduce, but more importantly, it only uses half of the bandwidth that ring all-reduce uses.

K

K

K

Okay, there are more evaluations. So in ATP we co-designed the host and the switch logic. The switch service is best effort; it has dynamic resource allocation. The host networking stack has fallback mechanisms for the correctness guarantee, and also reliability and congestion control for ML jobs. Such a design can provide performance, scale and correctness, and we achieve our goal of multi-tenant and multi-rack support.

K

So there is a takeaway. Usually when we do in-network computation, we can get a very significant performance gain; the switch computes much faster than a server. But the correctness guarantee is very difficult, because in-network computation introduces new semantics into the network. For example, packets can be consumed instead of lost. Then hosts need to distinguish these cases, and usually we do switch and host co-design.

K

B

L

So in COIN, so far, in my view, we have mainly been discussing two strands of work. One is coming from data plane programmability and then seeing how this could be put to a useful purpose for distributed applications, improving their performance and so on, and maybe also evolving some protocols to support certain use cases better.

L

The other strand, you could say, is coming from distributed computing, where we say: okay, what can we learn from distributed computing, and how does it affect our view on networking? And maybe then re-imagine the relationship and, in the end, have more programmable systems, also like the ones that Scott talked about. So I think the thesis of this group in general is maybe: is there some confluence at the end of these two strands?

L

I invite you to check out the paper and the demo video after this. In distributed computing, we know that there are many different types of interactions: simple message passing, remote method invocation, dataset synchronization, key-value stores and so on. I think some IETFs ago we presented a system that we called Compute First Networking; that was essentially a, yeah, Turing-complete, really general distributed computing system based on ICN.

L

L

Can this fundamental paradigm be used to implement batch as well as stream processing? In stream processing, conceptually, you look at each data object independently in an unbounded stream of data. In batch processing, you group data, and typically the systems allow you to implement groupings dynamically, based on some predicate or some time window specification and so on.

L

So windowing is a common concept here that allows this grouping and slicing, and this can also lead to situations where you have something like a predicate that allows you to put one data object into multiple windows, for consumption by different functions, for example. But you can also split this up in different ways.
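One common way a single data object ends up in multiple windows is sliding time windows, where the window size is larger than the slide. The sketch below is a generic illustration with integer timestamps; the function name and parameters are made up for the example and are not from IceFlow or any specific dataflow system.

```python
def sliding_windows(timestamp: int, size: int, slide: int) -> list[int]:
    """Return the start times of all windows [start, start + size)
    that contain `timestamp`, for windows starting every `slide` units."""
    start = timestamp - (timestamp % slide)   # latest window start <= timestamp
    starts = []
    while start > timestamp - size:           # window still covers the timestamp
        starts.append(start)
        start -= slide
    return sorted(starts)
```

With a size of 10 and a slide of 5, an object at time 7 belongs to the windows starting at 0 and at 5; with size equal to slide (tumbling windows) each object lands in exactly one window.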

L

L

You

can't

really

predict

the

processing,

transport

delays

and

so

on

and

typically

what

you

try

to

achieve

is

that

you

match

the

production

rate

with

the

input

rate,

and

so

the

task

of

a

data

flow

system

then,

is

to

adjust

the

processing

graph

to

the

application

requirements

and

the

data

production

rate,

and

you

can

see,

there's

all

like

some

kind

of

variable

performance

and

systems

can

be

compared

by

how

well

they

keep

up

with

the

offered

load.

L

Perhaps

there

are

a

couple

of

really

widely

used

implementations,

so

Apache

Beam

is

basically

the

unified

programming

model

that

many

dataflow

implementations

use,

so

sometimes

they're

called

runners.

So

you

may

have

heard

about

Apache

Flink,

Spark,

of

course.

Google

Cloud

Dataflow

is

a

product

and

so

on,

and

the

picture

here

on

the

right-hand

side

depicts

the

architecture

of

a

system.

I

think

it's

probably

inspired

by

Flink,

where

on

the

bottom

here

you

see

the

nodes.

L

What

is

called

task

manager

here,

so

this

could

be

something

like

a

compute

node

in

your

network,

which

is

offering

several

slots

for

computation,

and

this,

like

the

job

manager

on

the

top

right

here,

is

kind

of

orchestrating

this

whole

system.

So

it's

kind

of

having

an

overview

about

the

available

slots

and

is

responsible

for

allocating

tasks

for

certain

jobs

and

then

also

managing

the

connectivity

between

those

jobs.

L

L

You

know

matching

of

like

your

compute

performance

with

the

network

performance

and

having

also

really

responsive

systems.

In

the

end,

you

don't

have

this

full

visibility

of

both

the

computing

and

the

networking

resources.

Now,

in

the

system

I

wanted

to

talk

about

today,

which

we

call

IceFlow,

information-centric

dataflow,

we

assume

that

we

have

a

network

of

nodes

and

in

ICN

we

name

everything,

so

the

assumption

here.

L

They

would

also

be

announced

in

this

routing

system

and

so

we'll

be

able

to

construct

compute

graphs,

and

what

we

do

in

this

system

here

is

that

we

are

kind

of

not

establishing

connections

to

functions

or

to

nodes.

We

are

actually

just

asking

for

input

data,

and

so

when

there

is

new

input

data,

this

triggers

computation

at

the

downstream

function

and

so

on.

L

They

return

named

data

objects

with

the

usual

ICN

properties,

so

they

are

immutable,

they

can

be

cached

and

they

can

be

authenticated,

encrypted,

and

so

on,

and

so

the

interesting

challenge

here

is

that

we

have

asynchronous

data

production.

So

we

have

to

know

when

data

is

available

so

like

push

semantics,

which

is

typically

not

idiomatic

in

ICN,

and

then

you

have

to

think

about

flow

control.

So

how

do

you

couple

consumers

and

producers?

And

then

in

the

ICN

system,

typically,

you

publish

data.

That

means

you

make

data

available.

L

L

We

are

using

an

ICN

technology

called

dataset

synchronization

for

that,

where

so,

logically,

the

producers

produce

data

under

a

known

prefix

and

consumers

can

subscribe

to

that

prefix

and

then

they

would

learn

when

there

is

new

data

under

that

prefix,

and

then

they

can

decide:

okay,

I'm

interested

in

text

to

lines

object

one,

and

then

I

can

fetch

that.
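The publish, subscribe, and fetch steps described above can be sketched with a toy in-memory model. This is only an illustration under stated assumptions: the classes PrefixStore and Subscriber, their methods, and the example prefix are hypothetical, and the code does not use or imitate the API of any real ICN or dataset synchronization library.

```python
from typing import Dict, List, Set


class PrefixStore:
    """Toy stand-in for a producer that publishes named data under one prefix."""

    def __init__(self, prefix: str) -> None:
        self.prefix = prefix
        self._objects: Dict[str, bytes] = {}

    def publish(self, suffix: str, content: bytes) -> str:
        name = f"{self.prefix}/{suffix}"
        # Named data objects are immutable, so a name is never reused.
        if name in self._objects:
            raise ValueError(f"{name} already published")
        self._objects[name] = content
        return name

    def names(self) -> Set[str]:
        return set(self._objects)

    def fetch(self, name: str) -> bytes:
        return self._objects[name]


class Subscriber:
    """Toy consumer that learns which names are new under a prefix."""

    def __init__(self, store: PrefixStore) -> None:
        self.store = store
        self.known: Set[str] = set()

    def sync(self) -> List[str]:
        # Compare the producer's name set with what this consumer already knows.
        new_names = sorted(self.store.names() - self.known)
        self.known.update(new_names)
        return new_names


if __name__ == "__main__":
    store = PrefixStore("/example/text-to-lines")
    store.publish("1", b"first line")
    consumer = Subscriber(store)
    for name in consumer.sync():
        # The consumer decides which of the new names it wants to fetch.
        print(name, store.fetch(name))
```

The key property shown is the loose coupling: the consumer never connects to a function or node, it only learns names and then asks for the data it is interested in.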

L

So

there

are

implementations

of

this

concept

like

PSync

in

ICN,

which

you

may

have

heard

about,

and

so

that

means

that,

on

the

very

low

layer,

consumers

have

to

kind

of

send

update

interests

to

learn

about

new

names

periodically,

and

then

from

an

application

perspective.

L

L

You

know

runtime

information

and

configuration

information.

So

what

is

the

static

flow

graph?

What

is

the

actual

dynamic

flow

graph?

What

are

available

compute

slots

in

the

system

and

so

on,

but

also

implementing

this

loose

coupling

between

consumers

and

producers?

So

we

have

conceived

something

that

we

call

consumer

reports,

where,

basically,

we

publish

what

windows

have

been

processed

by

each

consumer,

and

so

this

is

also

an

organized

data

structure

in

an

ICN

way,

and

we

also

use

this

data

set

synchronization

scheme

to

share

this

information.
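The consumer reports idea can be sketched as a small calculation: if each consumer publishes which windows it has processed, anyone reading the reports can see how far each consumer lags behind production. This is a minimal sketch under assumptions; the report layout (a set of window numbers per consumer) and the function names backlog and lagging_consumers are hypothetical and are not the data structure used in the system presented.

```python
from typing import Dict, List, Set


def backlog(produced: Set[int], reports: Dict[str, Set[int]]) -> Dict[str, int]:
    """Count, per consumer, the produced windows it has not yet reported as processed."""
    return {consumer: len(produced - done) for consumer, done in reports.items()}


def lagging_consumers(
    produced: Set[int], reports: Dict[str, Set[int]], threshold: int
) -> List[str]:
    """Return the consumers whose backlog exceeds `threshold` windows."""
    lag = backlog(produced, reports)
    return sorted(name for name, count in lag.items() if count > threshold)


if __name__ == "__main__":
    produced_windows = {1, 2, 3, 4, 5, 6}
    consumer_reports = {
        "consumer-a": {1, 2, 3, 4, 5},  # nearly caught up
        "consumer-b": {1, 2},           # falling behind
    }
    print(backlog(produced_windows, consumer_reports))
    print(lagging_consumers(produced_windows, consumer_reports, threshold=2))
```

Because the reports are themselves published data, a producer can read them in the same way that consumers read its output.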

L

Okay,

just

very

quickly

so

this

approach

allows

us

to

deal

with

congestion

control

and

a

proper

receive

window

configuration

in

a

different

way,

so

we

can

really

adapt

like

the

interest

rate,

for

example,

to

our

actual

processing

speed,

so

avoiding

that

we

ask

for

too

much

data

that

we

cannot

process

in

real

time,

and

observing

the

performance

of

my

downstream

consumers

could

be

a

trigger

for

an

upstream

producer

to

initiate

scaling

out

by

creating

a

new

subgraph.

So

if

I'm

constantly

realizing

that

my

downstream

cannot

keep

up.

L

L

L

L

This

system

would

basically

need

additional

infrastructure,

so

like

a

name-based

routing

infrastructure,

for

example,

and

there

are

solutions

for

that

that

in

principle

work,

but

maybe

in

terms

of

research,

this

system

could

also

be

supported,

perhaps

better

by

a

routing

system

that

gives

you

more

information

so,

for

example,

resource

utilization

information

directly

and

not

only

reachability

information

and

so

for

COIN,

we

think

this

could

be

an

example

for

like

new

protocol

work,

so

I'm

not

saying

that

this

is

the

best

way

of

doing

this.

L

So

this

is

has

to

be

something

that

you

know

ends

up

in

a

protocol

spec.

But

it's

an

interesting

example

of

how

you

can

break

up

overlays

and

leverage

systems

like

ICN

to

do

that,

and

so

today

I

talked

about

data

flow,

but

you

can

imagine

that

other

interaction

classes

could

be

promising

as

well,

so

other

systems

maybe,

like

Kafka-like

publish-subscribe

broker

systems,

and

so

on.

With

that,

thanks

for

your

attention.

Are

there

any

questions?

A

A

So

I

think

in

the

interest

of

time

we

will

go

forward

in

the

program.

We

had

segmented

the

program

into

you

know,

papers

that

have

been

published

elsewhere

and

that's

been

really

fruitful.

Thank

you

all

for

your

presentations.

The

next

section

is

gonna

be

brief.

It's

sort

of

new

ideas

section

and

then

we're

gonna

get

to

the

drafts

and

draft

updates,

and

then

a

new

draft.

A

B

A

A

B

B

B

That's

been

put

together

by

a

large

group

of

people

and

it's

a

Europe-wide

project

and

the

idea

started

from

essentially

a

lot

of

us

actually

a

lot

of

people

on

the

moda

team

or

will

be

when

we

once

we

see

the

slides

are

very

familiar

to

this

group,

because

a

lot

of

them

are

involved

and

one

of

the

ideas,

oh,

my

slides,

are

coming.

Thank

you.

Thank

you

so

much

Eve

and

the

the

id

and

we

can

go

directly

to

the

first

slide.

B

B

Everything

is

fragmented

and

for

essentially

in

IoT,

what

people

do

is

they

put

a

sensor

somewhere,

they

put

a

gateway

in,

they

connect

to

the

cloud,

and

they

claim

that

the

problem

is

solved.

The

problem

is

not

solved

because

the

minute

that

you

want

to

start

having

applications

and

services

and

artificial

intelligence

that

cover

more

than

one

thing.

You're

in

agriculture.

Well,

you

may

want

to

take

decisions

that

are

based

on

the

market,

but

then

how

does

that

work?

When

you

need

to

det?

B

You

need

for

that

to

know

or

how

much

disease

do

you

have

in

your

farm

or

maybe

what's

the

building

temperature,

because

that

will

increase

or

decrease

depending

on

you

know

the

time

of

year

or

the

time

of

day,

and

it

will

increase

or

decrease

your

production,

which

yeah

will

go

back

to

your

modeling

of

decision,

and

now

you

would

the

minute

you

do

that.

Well,

you

have

people

involved.

You

have

a

ton

of

different

systems

that

don't

talk

to

one

another.

Next.

B

So

oops

so

essentially

in

this

fragmented

environment,

the

application

development

has

very

very

important

pain

points.

So

all

these

fragmented

systems

that

require

overlays

multiple

gateways,

different

cloud

applications,

the

different

cloud

providers

that

don't

talk

to

one

another

and

there's

issues

always

of

that

in

security

and

privacy,

because

there's

data

privacy,

there's

digital

sovereignty,

there's

multiple

customers

who

are

involved

and

it

creates

a

big

problem.

B

Data

valorization

is

key.

I

think

Scott

mentioned

that,

you

know,

you

use

open

source

approaches,

and

this

is

exactly

what

we

want

to

do,

because

in

fact

it

is

not

the

algorithms

or

the

software

that's

worth

a

lot.

It's

actually

the

data

itself,

and

so

we

want

to

make

sure

that

we

can

actually

maximize

data

valorization

by

acting

on

it

inside

the

network.

Next

slide.

B

B

B

So

the

format

didn't

work

very

well

here,

the

main

mode

of

functionality.

I'll

go

fast,

because

I

don't

want

to

go

into

everything

this,

but

obviously

we

want

to

do

some

orchestration.

There

was

discussion

about

orchestration

before,

but

we

feel

that

we

can

actually

look

into

this

and

again

on-device

computing

over

a

heterogeneous

system

we

want

to

have

if

we

can

as

much

as

possible

reusability

and

that's

actually

a

problem

on

those

verticalized

applications

is

if

you

change

vendor

or

if

you

change

supplier.

B

Your

system

doesn't

work

anymore

because

they

use

different

protocols,

different

semantics,

different

everything.

You

would

like

to

have

modularity

as

a

design

choice.

Usually,

when

I

teach

my

class

on

distributed

systems,

I

talk

about

the

Lego

blue

brick

approach,

so

that

you

know

we

want

to

have

a

lot

of

Lego

bricks

and

we

connect

them

when

we

need

them

and

we

don't

use

them

when

we

can't

or

when