►

From YouTube: IETF112-PRIV-20211110-1600

Description

PRIV meeting session at IETF112

2021/11/10 1600

https://datatracker.ietf.org/meeting/112/proceedings/

C

B

B

B

B

B

D

B

B

B

B

F

Fantastic,

I

actually,

despite

what

it

says,

on

the

on

the

agenda,

I'm

going

to

merge

the

discussion

of

the

things

we're

trying

to

accomplish

with

the

discussion

of

technology,

because

I

think

it's

easier

to

understand

the

motivating

cases

so

I'll

just

fold

them

right

in

rather

having

a

separate

use

cases

presentation

so

I'll

say

I

sent

a

link.

I've

written

up

quite

a

bunch

of

interactive

material

on

this.

F

So

if

people

want

to

read

that

I

sent

a

link

in

the

java

chat

and

obviously

something

is

unclear.

Please

stop

me

and-

and

you

know,

ask

so:

okay,

let's

move

on.

So

let's

just

start

so

here's

just

like

the

traditional

like

this

is

what

I'm

going

to

talk

about

kind

of

in

order.

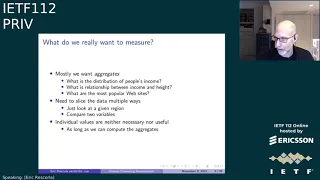

First,

I

want

to

talk

about

like

the

kinds

of

things

like

one

actually

wants

to

measure

in

these

typical

settings.

F

For

the

protocol

that

we

sort

of

developed

that

are

hoping

to

you

know,

pull

in

in

this

work,

that's

what

all

aside

posting

I'm

planning

to

do

so,

there's

a

lot

of

situations

where,

like

one

would

like

to

learn

about

people

right,

you

know

we

have

like

the

the

census

or

you

know,

there's

a

lot

of

public

research.

You

know,

and

you

learn

things

like

demographics,

and

you

know

people's

income.

You

know,

maybe

they

have

medical

issues.

F

You

know

companies

want

to

do

product

development,

so

see

what

features

that

you

know.

People

use

and

don't

use

how

much

they

use

them

like.

Are

the

products

not

working

in

some

way?

And

then

you

also

want

to

take

like

behavioral

measurements

like

like,

so

you

know,

say

you

want

to

discover

like

new

websites

that

no

one

knows

about

or

what

information

people

care

about,

so

you

can

tune

your

product

to

be

like

more

like

what

people

actually

want.

F

So

I

mean

there

was

a

good

example

of

this.

On

the

other

day,

when

brave

did

some

posting

about

like

their

research

engine

and

how

they

like

want

to

discover

websites

for

the

search

engine,

so

so

all

these

problems

involve

like

collecting

data.

The

information,

of

course,

is

like

very

useful,

but

it

also

is,

you

know,

can

be

very,

very

sensitive.

You

know

people

often

don't

want

to

and

shouldn't

have

to

disclose.

You

know

their

medical

issues

in

order

in

order.

F

Want

to

know

you

know

where,

where

funding

should

be

targeted,

for

instance,

but

we

don't

want

to

know

what

individuals

have

like

you

know,

medical

conditions,

that's

exactly

this

right

same

thing

is

true

for

your

income,

official

orientation,

all

those

things

someone

is

unmuted,

amazed

peter.

If

you

could.

I

know

it's

not

me,

because

I'm

not

typing-

and

you

know

it

turns

out

that,

like

not

only

the

things

you

naturally

think

of

sensitive

sensitive

but

even

like

much

less

sensitive

data

can

be

very

appealing.

F

And

of

course

this

is

like

how

ad

targeting

works,

and

it

turns

out

that

there's,

like

a

lot

of

a

lot

of

like

evidence

that

you

can

put

like

less

sensitive

data

together.

This

is

often

called

like

high

dimensional,

sparse

data

sets

and

figure

things

out

that

people

would

be

surprised,

and

so

I

had

this.

This

is

you

know

from

an

article

a

few

years

ago

about

you

know,

target

inferring.

You

know

a

girl

was

pregnant

by

like

looking

at

her

other

other

person

behavior.

F

So

there's

been

a

lot

of

you

know,

research

on

this,

and

one

would

hope

to

do

better

and

so

like

the

historical

way

that

you

know

one

does.

These

things

is

that

you

just

gather

all

the

data

and

then

you

promise

not

to

disclose

it,

and

you

know

this

is

like

not

working

out

super.

Well,

you

know

data

breaches.

F

There's

this

the

famous

case

of

the

census,

information

we

use

for

targeting

japanese

americans

during

world

war

ii,

and

so

generally,

you

better

have

a

system

that

does

not

involve

just

like

trusting

someone

to

like

handle

the

data

appropriately.

So

the

good

news

is

that

actually,

the

data

that

you

want

to

know

is

not

necessarily

sensitive.

The

data

you

want

to

know

is

usually

what's

called

aggregates.

F

So

say

you

wanted

the

distribution

of

people's

income,

maybe

in

a

particular

region,

or

maybe

you

want

to

look

at

their

relationship

between

like

income

and

height

which,

by

the

way

there

is

one

or

you

want

to

know

what

the

most

popular

websites

are.

But

I

don't

care

like

what

lists

any

individual

goes

to.

What

I

care

about

is

like

what

says

people

aggregate

go

to

and

in

fact

it's

often

not

useful

to

learn

the

websites

that

an

individual

goes

to,

because

maybe

a

lot

of

them

are

like

cardinality.

F

Often

you

need

to

like

slice

the

data

in

multiple

ways,

so

you

say

look

I

just

want

to

look

at

a

given

region

or

when

I

compare

two

variables.

I

want

to

regress

them

against

each

other.

So

in

these

cases

you

know

it's,

as

I

say,

it's

actually

not

useful

to

have

to

have

the

individual

data

beyond

what

lets

you

compute

these

aggregate,

metrics

and

and,

of

course,

it's

very

harmful

to

digital

data.

F

If

you

misuse

it

and

from

the

perspective

of

a

researcher,

not

only

you

know,

it's

not

just

a

matter

of

it's

not

just

a

matter

of

of

harmful,

but

it's

a

matter

of

dangerous,

because

now

you

have

to

have

all

sorts

of

controls

and

procedures

around

hand

on

the

data

that

make

it

very

hard

to

work

with,

and

of

course

also

it

makes

it.

People

unwilling

to

you

know,

share

the

data

with

you

if

they

know

if

they

think

you're

not

gonna

handle

responsibly.

F

F

F

I

want

to

tell

you

a

little

bit

about

the

kinds

of

kind

of

output

measurements.

You

might

want,

there's

a

number

of

common

measurement

tasks

that

that

we're

hoping

to

achieve

in

this

working

group.

So

the

first

is,

what's

often

called

a

simple

aggregates.

This

is

the

stuff

you

would

like

learn

in

an

interest

s

class

of

you

know.

You

know

single

figures,

group

statistics

that

capture

some

data

so

mean

median

sum

histograms.

F

That

turns

out

to

be

a

very

useful

technique

for

a

number

of

number

settings

so,

like

I

said

you

can

notice

it.

These

are

all

like

the

aggregate

things

I

don't

depend

on

any

anybody's

individual

data.

So

we

just

like

to

find

some

way

to

gather

those

these

aggregates

without

having

to

be

infected

by

people's

individual

data,

which

is

like

basically

toxic

waste

at

some

level.

F

So,

like.

Let

me

give

you

like

one

motivating

use

case,

it's

very

useful

to

know

what

kind

of

sites

users

visit,

because

then,

if

you're

like

a

web

browser,

so

that

our

backlight

use

case,

if

you're

like

web

browser,

you

like

to

know

what

kinds

of

sites

people

visit.

So

you

can

make

your

web

browser

work

well

on

those

sites,

and

so

you

can

spend

and

spend

time

saying:

okay,

but

people

like

really

watch

a

lot

of

videos

like

making

video

where

they

work

is

important.

F

Now

we

have

like

some

data

on

this

because

we

collect

like

mechanical

data

but

like

for

obvious

reasons,

it's

unattractive

to

know

like

what

topics

any

individual

is

interested

in,

because

on

this

topic,

some

of

those

topics

are,

you

know,

implicate

information.

They

don't

want

us

to

have,

and

you

know

it's,

it's

very

difficult

to

obviously

know

exactly

what

these

people

go

to

as

problematic,

but

even

knowing

the

interest

people

have

is

problematic.

F

So

that's

like

one

motive

in

this

case,

another

one

of

the

use

case

again

for

a

browser

is

to

see

which

websites

are

having

problems

of

one

kind

or

another.

In

some

cases,

these

problems

are,

I

guess,

innocuous,

so

like

web

compatibility

is

a

big

problem.

Some

sites,

just

don't

render

properly

and

like

mozilla,

operates

like

a

thing

which

you

can

press

in

the

upper

right

hand

corner

of

your

browser

somewhere.

F

That

says,

like

this

site

is

broken

from

me

but

like,

but

we

depend

pretty

heavily

on

people

volunteering

information

because

we

obviously

don't

want

to

collect

what

url

everybody's

going

to.

This

is

like

a

bigger

problem

for

surprisers

with

smaller

market

shares,

because

things

will

often

work

on

like

one

engine

or

not

another.

So

in

many

cases

we

can

detect

breakers

on

the

client.

F

We

know

that

something's

wrong,

like

they

try

to

use

a

property,

we

know

it

doesn't

exist

or

the

user

is

saying

reload

constantly

like

rage

clicking,

but

you

can't

do

anything

about

it

because,

like

the

browser

knows,

but

it

can't

tell

us-

and

so

that's

one

example.

Another

example

is

that

we

know

there's

a

lot

of

like

what's

called

fingerprinting

going

on.

F

So

a

lot

of

web

tracking

happens

with

cookies,

but

there's

a

lot

of

which

happens

without

cookies

and

and

so

what

you

do

is

you

like

have

a

bunch

of

javascript

apis

and

you

can

measure

how

the

browser

behaves

under

the

job

sheet

apis.

So

an

example,

people

often

talk

about

is

what's

called

canvas

fingerprinting

where

you

like

render

some

fonts

and

then

you

read

back

from

the

canvas,

and

that

gives

you

information

about

like

the

gpu,

that

machine

uses

machine.

F

So

you

can

use

this

to

build

up

a

like

single

value

which

you

can

use

use

like

follow

the

user

on

the

internet.

So

this

is

like

a

big

front

tracking

that

is

not

addressed

by

the

kinds

of

you

know.

Third-Party

cookie

blocking

the

browsers

like

firefox

and

chrome.

Do

this

is

again

off

detectable

on

the

client

because

you're

like?

Why

is

this

person

like

doing

a

lot

of

canvas?

F

So

we're

stuck

in

the

situation

where,

where,

if

you

have

like

the

sort

of

accurate

information

about

which

sites

are

having

problems,

you

could

just

think

about

it,

could

you

go

to

the

site

and

you

could

like

download

the

script

and

find

it

yourself,

but

but

doing

that

would

entail

collection,

browsers

are

still

very

problematic.

One

thing

I

would

say

is

people

often

say

like:

why?

Don't

you

just

do

a

scraper,

and

you

certainly

can

do

that

sometimes.

F

But

there

are

two

problems:

one

is

building

a

scraper

that

collects

that

much

information

is

very

expensive

and

the

second

is

that

it's

very

easy

to

detect

when

someone

has

a

scraper

and

if

so,

especially

in

these,

in

these

fingerprinting

cases,

they

could

just

send

you

different

different

data.

There's

a

lot

of

fingerprints.

So

again,

the

problem

statement

is

to

collect

on

the

sites

where

the

client

is

seeing

some

issue,

but

only

to

see

the

hot

ones

and

only

to

see

and

not

see

individually.

F

And

I'm

just

going

to

preview

on

the

the

rest

of

the

people.

Here

were

talking

about

a

number

of

use

cases.

I

guess

I

I

hate

this

first

one,

so

I

should

have

like

remove

that.

My

slides

got

changed,

but

there's

a

bunch

of

talk,

I

think

other

use

cases

involving,

but

some

advertising

work

and

also

some

work

on

covenant.

Exposure

notification

measurement

so

you'll

see

those

later,

but

I

mean

this

should

give

you

a

sense

of

breath.

F

So

it's

important

to

recognize.

There

actually

are

two

kinds

of

privacy

threats

with

these

kinds

of

data

collections.

The

first

is

when

you

collect

sensitive

data

and

it's

directly

tied

to

identifying

information,

so

you

say:

look

I,

like

you

know,

did

a

survey

and,

like

I

called

people

on

the

phone,

and

they

told

me

you

know

they

tell

me

this

sensitive

stuff

and

now,

like

I

have

the

phone

number

and,

like

you

know,

they

told

me

like

you

know

whether

they

have

a

particular

medical

condition.

F

F

The

client

like

and

it

encrypts

the

data

to

the

collector,

and

then

you

have

some

proxy

in

the

middle

that

removes

all

the

metadata

like

the

ip

address,

and

so

this

this

avoids

the

collector

like

seeing

that

meta

information

but

still

gets,

and

because

that

is

encrypted,

the

proxy

never

sees

the

report

so

that

these

things

are

split

up

and

we've

talked

about

the

trust

model.

For

that.

So

I

won't

go

into

that

in

much

detail

and

so

the

number

of

ways

to

do

this.

F

It's

really

good

for,

like

sort

of

boosting

the

privacy

of

semi-sensitive

data

like

data

you

collect

anyway,

you

say

well

like

I

wish

it

had

the

ip

address

you

can

get

rid

of

it,

and

so

it's

very

common

now

for

like

browsers

to

collect

telemetry

and

we

have

the

ip

address

which

you

just

throw

away,

and

we

agree

not

to

have

that

involved.

There

are

also

a

bunch

of

cases

where

you

want

to

collect

individual

values

and

these

free

form

data

blobs

that

you

want

to

really

dig

into.

F

F

F

F

Maybe

it

doesn't

depend

on

computational

assumptions,

but

when

you

put

the

shares

together,

they

of

course

represent

the

entire

value.

So

what

you

do?

Each

client

sends

like

one

share

to

one

server

another

share

another

server

and

then

the

servers

take

the

shares

themselves

and

they

aggregate

them.

They

compute

the

aggregate

value

but

again

you're

just

working

on

the

partial

data,

so

you're

not

learning

anything,

and

then

you

could

take

the

aggregate.

F

F

So

let

me

just

pause

for

a

second

before

I

talk

at

the

crypto,

which

is

the

trust

model,

because

this

is

like

really

important.

I

know

this

comes

up

a

lot.

We

talk

about

these

systems,

so

the

client's

requirement

is

the

two

servers

do

not

collude.

If

it's

a

service

clue,

they

can

be

digital

values

and

it's

game

over

right,

and

so

this

is

very

hard

to

operate

between

people,

obviously

and

and

the

client

has

to

trust

exactly

one

of

them.

The

client

is

great.

F

The

client

trusts

both,

but

as

long

as

one

of

them

doesn't

cheat,

it's

fine

and

you

could

do

n

servers,

but

two

is

the

most

common

number.

Obviously,

the

servers

also

have

to,

for

various

reasons,

do

a

little

bit

of

enforcement

about

like

minimum

batch

sizes

and

query

limits

and

stuff

like

that.

To

avoid

some

attacks

that

we

will

talk

about

here

for

the

collector's

requirement,

both

servers

have

to

actually

be

executed

protocol

correctly

because

either

server

can

like

distort

the

results

that

they

don't.

F

But

again,

this

is

only

a

correctness

requirement

that

only

one

server

is

required

to

behave

correctly

from

the

client's

perspective.

So

I

just

want

to

recognize

right

up

front.

It's

like

difficult

to

verify

from

the

client's

perspective

that

the

servers

aren't

colliding.

That

conclusion

could

happen

through

side

channels

depending

on

the

architecture.

Sometimes

the

sideshows

are

small

signs

are

big.

You

can

do

point

in

time

audits

to

verify

that

someone

is

behaving

correctly,

but

you

can't,

but

it's

like

not

possible

for

them.

F

You're

not

colliding,

and

I

just

want

to

like.

So

I

want

to

highlight

that,

but

I

also

want

to

say

that,

like

this

is

like

a

very,

very

common

scenario

on

the

internet,

where

you,

where

people

have

data-

and

you

have

to

trust

and

behave

correctly-

I

mean,

if

you

think

about

like

you

know

your

data

is

in

gmail.

F

Like

you

know,

google

has

like

your

entire

email

record

right

and

you're

pressing

them

behave

correctly,

and

you

know

even

you

know,

even

if

the

software's

running

on

your

machine,

like

you

know,

generally

people,

think

of

that

as

behaving

correctly,

but

like

your

ability

to

verify

the

software

running

on

your

device,

extraordinarily

limited

and

so

like,

while

like

it

would

be

great

to

have

a

situation

in

which

you

never

had

to

trust

anybody.

That's

simply

not

the

situation.

F

Most

of

us

find

ourselves

in

so

we're

talking

about

here

is

trying

to

like

alleviate

the

situation

of

trust

people

and

make

the

number

of

people

have

the

trust

smaller

or

the

number

of

people

have

to

cheat

larger.

I

guess

we're

not

talking

about

a

limiting

trust

entirely.

It's

not

possible

the

state

and

our

technological

development,

and

so

we're

trying

to

improve

the

situation,

but

we're

not

trying

to

like

boil

the

ocean.

F

So

I

want

to

talk

like

very

briefly

about

like

one

cryptographic

protocol

to

give

you

a

sense

of

like

the

situation.

This

is

sort

of

the

one

that

started

it

off.

It's

called

prio

and

it's

useful

for

computing,

like

numeric

aggregates,

like

sum

and

mean

that

kind

of

thing

and

like

this

is

like

the

one

that's

going

to

most

apprehensible

and

like

we're

going

to

punt

the

crypto

to

cfrg.

But

this

one

is

like

understandable,

like

normal

humans.

F

So

we

assume

each

client

has

some

value

like

the

numeric

value

and

like

called

x

of

I

right,

and

so

the

client

does.

Is

the

client

splits

up

that

value

in

the

following

way?

It

generates

a

random

value,

sorry

about

the

fancy

math,

but

basically

a

random

value

is

smaller

than

a

prime

and

then

it

basically

does.

It

sends

server

one

like

the

value

minus

the

random

value

module

the

prime

and

it

sends

server

to

the

random

value.

F

It's

like

you

see

it's

quite

easy

to

convince

yourself

that

if

you

know

that

oh

no,

the

random

value

is

not

enough

and

knowing

and

knowing

that

the

subtraction

is

not

enough,

and

that

is

sufficient.

So

now

each

server

takes

all

the

shares

I

get

from

everybody

and

they

add

them

up

right

and

again

like

because,

because

because

because

these

are

like

information,

theoretically

they're

not

only

anything

and

then

they

and

then

they

basically

exchange

the

exchange,

the

sums

or

really

like

one

sentence

on

the

other.

Probably.

F

So

this

is

like

this

seems

like

really

boring

and

like

kind

of

obvious

and

but

it's

actually

fantastically

powerful,

and

the

reason

is

because

there's

a

lot

of

things

you

can

actually

compute

with

just

the

sum

thing.

As

long

as

you

encode

the

data

properly,

you

can

compute

all

kinds

of

things

as

sums,

so

like

arithmetic

mean,

is

obvious.

That

sum

divided

by

count

product

you

compute

product

by

doing

some

of

the

logs

geometric

means

coming

from

product.

F

You

can

do

variance

and

standard

deviation

by

computing,

some

some

of

the

values

of

the

squares

there's

a

but

there's

a

bunch

of

fancy

stuff

for

doing

like

or

and

min

max,

and

even

ordinary,

obviously

squares.

So

the

trigger

is

just

finding

the

writing

coding

and

there's

like

papers

now

about

how

to

do

all

this

stuff.

F

F

Unless

you

qualify

yourself

and

you

just

live

with

it,

you

have

to

live

with

the

noisy

data

right,

because

people

don't

lie

consistently

and

then

there's

like

consistent

completely

ridiculous

data,

or

I

like

say,

like

I'm,

a

kilometer

tall

or

worse,

I

say

like

I'm

negative,

kilometer

tall

right

and

ordinarily.

What

you

do

is

you

just

like

have

some

filter

mechanism

where

you

said

you

know

you

just

said

like

well,

I

I

I

just

reject

anything.

This

is

a

kilometer

tall

right

and

but

like

with

the

pre

of

it

is

encrypted.

F

You

can't

do

that

right

and

so,

and

so

instead,

what

you

do

is

like-

and

this

is

the

fancy

math

part.

Each

submission

comes

with

the

zero

knowledge

proof

of

validity,

and

the

proof

says

something

like

this

height

report

is

like

between,

like

100

and

200

centimeters

right,

the

servers

work

together

to

value

the

proof

and

you

only

aggregate

the

submissions

that

have

valid

proofs

right

so,

like

you

have

to

trust

me.

F

This

part

works

but,

like

this

part

works,

but

it's

important

remember

that

this

part

believing

this

part

works,

does

not

we're

not

necessarily

required

for

bullying

privacy

if

the

privacy

claim

caused

the

previous

things,

the

youtube

with

zero

knowledge.

Please

don't

pick

the

data

so

okay,

so

like

going

back

to

my

use

cases

right

say

I

want

to

collect

these

user

interests

right.

F

So

basically,

what

you

call

user

interest

thing

like

preo

is

that

every

user

interest

is

a

bucket

and

you

have

like-

I

don't

know:

100

200,

500

buckets

right

and

the

client

individually

reports

time

spent

in

each

bucket.

You

have

to

report

the

ones

that

are

zero

too

by

the

way.

Otherwise

you

can

just

look

at

which

buckets

are

reported,

and

then

you

use

prior

somewhat

and

you

end

up

with

a

bunch

of

sums

one

for

each

bucket

and

now

you

know

exactly

how

much

your

time

was

spent

on

each

bucket

for

each

bucket.

F

But

you

don't

anybody's

individual

time

spent

and,

as

I

know

you

can.

Oh,

you

can

also

report

t

squared.

You

could

be

standard

deviation

as

well,

so

this

is

like

a

pretty

straightforward

application

or

something

like

preamp,

but

there's

like

a

whole

pile

of

use

cases

that

basically

come

into

this

okay.

So

even

more

fancy

is

a

protocol

called

heavy

hitters.

Well,

actually

they

didn't

name

it

so,

like

we've

been

calling

it

hits

and

the

idea.

F

F

F

Could

I

just

giving

you

examples

or

something

we're

just

giving

you

submissions

that

like

have

one

piece

of

information,

but

you

can

also

tag

the

submissions

of

the

demographic

data

because,

like

birthdayers

of

code

results-

and

these

are

talking

about

right

and

those

get

passed

on

all

the

way

to

the

aggregators

right-

and

this

is

like

this-

is

notionally

safe,

because

you

say

that

the

non

nonsense

information

is

safe

or

you

you

only

click

nonsense,

information

and

then

they

owed

it

as

encrypted.

But

then

you

can

say:

okay

computing

aggregate

over

the

subsets.

F

So

that's

like

a

very

powerful

today.

That's

one

reason

why

this

is

like

a

powerful

technique

and

ways

that

like

ohio,

is

not,

but

of

course

it

means

that

repeated

queries

can

be

used

to

reduce

values

by

like

querying

for

the

subset

like

includes

them

and

then

excludes

them.

There's

defenses

against

this

on

having

minimum

batch

sizes,

anti-replay

randomization

for

differential

privacy.

This

is

a

piece

of

work.

F

F

So

what

is

the

state

of

the

play

here?

A

number

of

us,

some

of

the

people

you're

representing,

have

basically

developed

a

generic

protocol

that

is

designed

for

doing

privacy

pure

measurement.

What

I

mean

by

generic

is

that

it's

a

framework

protocol

that

then

you

can

plug

in

you

know

individual

cryptographic

technologies,

so

it's

compatible

with

the

basic.

What

these

things

called

verifiable

should

be

application

functions

which

we'll

be

talking

about,

but

initially

it's

tuned

to

work

with

prio

and

heavy

hitters.

F

It's

built

on

top

of

https.

It's

going

to

smell

a

lot

like

you

know

any

rest

kind

of

protocol

like

acme

or

whatever

you've

seen

before,

and

so

it's

easy

to

implement

with

physical

services,

infrastructure

and

and

other

people

working

on

this,

like

you

know,

having

work

from

regular

infrastructure

to

design

and

work.

Well

with

this,

so

the

so.

F

I

think

there's

a

number

of

different

playing

modes

it's

compatible

with

one

of

which

is

that

you

know

the

collector

and

the

leader

are

the

same

person

and

they're

trying

to

do

it.

The

data

collection

and

they

outsource

the

helper

job

to

one

other

person

so

that

they

can

make

guarantees

about

the

privacy

of

the

system.

Another

possibility

is

the

whole

is

a

whole.

Like

leader,

collector

helper

box

is

like

a

service.

That's

provided

people.

F

The

trust

model

here

you'd,

be

assuming

is

that

the

clients

have

to

know

what

the

helpers

leader

are,

and

so

the

clients

know

who

the

who,

who

the

data

is

being

encrypted

for,

and

so

they

can

make

their

own

assessment

of

whether

or

not

they

trust.

One

of

those

people,

though,

of

course

in

a

real

world

scenario.

F

So

you

know

people

are

actually

going

to

ask

like

what

like

the

situation.

Oh

hi

is

because

oh

hi,

as

I

mentioned,

is

useful

for

many

of

these

kinds

of

settings.

These

are

complements

and

not

substitutes.

So

you

know

good

cases

for

ohi

are

like,

as

I

say,

sort

of

standing

sensitive

data,

this

kind

of

rich

freeform

data

that,

like

you,

couldn't

really

you

know,

aggregate

this

way.

Anything.

F

You

can

use

ohio

talk

to

ppm

server,

that's

like

boosting

the

privacy

of

the

system.

So,

like

you

say,

okay

well,

I

do

want

to

collect

this

data,

but

I

actually

store

an

ip

address,

so

you

can

remove

that

as

well,

and

so,

in

fact,

I

think

you'll

see

you'll

see

some

of

the

some

of

that.

Like

later

talks,

you

can

also

use

sort

of

a

front-end

proxy

server

to

do

a

bunch

of,

like

you

know,

kind

of

misuse.

F

Detection

of,

like

you

know,

spamming,

attacks

and

stuff

like

that.

So

so

I

think

these

are

a

complimentary

techniques,

not

but

not

not

competitive

techniques,

which

is

why

you

see

some

of

the

same

people

working

on

them.

I

don't

know

why.

Oh

yeah

right,

I

was

like.

Why

do

I

have

two

more

slides,

so

I'm

now

done.

I

think

I

hit

my

target

time

target

quite

well.

I

have

a

little

time

for

questions

which

I'd

be

happy

to

take.

F

E

Boss,

thanks

good

presentation,

I

enjoyed

you

laid

it

out

really

well,

the

one

thing

that

I'd

like

to

hear

more

about

is

sort

of

the

deployment

scenario,

for

example

on

slide

14,

don't

go

back

you

you

specifically

said

the

client

wants

to

report

some

value

right.

So

would

your

expectation

be

that

application,

authors

and

and

servers

would

sort

of

make

use

of

the

same

way

that

sort

of

ohio

is

considering

being

deployed

by

you

know

various

organizations

to

get

into

stuff

or

that

you

know

doe

is

being

used

for.

E

F

I

think

so

I

think

I

think

the

most

likely

settings

for

this

initially

will

be

the

kind

of

like

kind

of

measurements

that,

like

people

are

already

taking

via

the

software

disseminate.

So

you

know

things

like

browser

telemetry.

You

know,

I

think

the

the

case

of

the

case

of

the

the

tim.

What

we

talk

about

next

involves

measurement

of

covert

exposures

and

yeah.

Those

things

are

all

being

done,

like

sort

of

sort

of

like

you

know

automatically.

F

You

know,

potentially

by

asking

these

are

automatically

by

the

software.

I

think

there's

some

possibility

that

in

the

future

you

know

you'd

see

like

this

used

for

surveying

for

direct

surveying.

Where

you

say,

okay,

you

know,

are

you

willing

to

participate

in

surveys

and

then

we'll

we'll

write

down

the

client?

And

you

can

do

it,

but

I

mean,

like

you

know

the

the

I

mean

I

think

yeah.

You

know

these

are

pull.

F

F

Those

are

very

dangerous

right

because,

if

you

like

collect

like

every

url

somebody

goes

to,

if

this

is

behaving,

then

there's

a

real

probability

that

you

learn

a

bunch

of

like

say:

google

docs,

you

know

capability

urls

or

you

learn.

You

know

you

know

some

document

quality.

This

is

like

my

plan

to

buy

company

a

you

know

so

yeah.

B

F

I

I

All

right,

thank

you,

okay,

so,

let's

get

started.

My

name

is

tim

and

I'm

an

engineer

at

the

internet

security

research

group

we're

the

non-profit

that

operates

the

let's

encrypt

certificate

authority,

not

to

be

confused

with

the

irs

g.

Incidentally,

the

dilemma

that

mr

riskorla

just

introduced,

in

which

the

essential

function

of

gathering

telemetry

from

the

field

introduces

significant

privacy

risks

for

users

is

of

great

interest

to

the

isrg.

I

Given

our

mission

of

reducing

barriers

to

secure

and

private

communications

on

the

internet

next

slide,

please

so

echo

covered

that

you

know

the

ways

in

which

telemetry

is

a

privacy

risk

for

users

and

the

you

know

the

implied

benefits

to

users

of

using

these

new

technologies.

But

I

think

it's

worth

noting

that

many

data

collectors

also

want

to

do

the

right

thing

and

respect

the

privacy

of

their

users.

Besides

that

being

a

decent

thing

to

do.

I

The

large

amount

of

personal,

identifying

information

stored

by

conventional

telemetry

systems

is

a

significant

liability

for

the

data

collector.

There's

new

privacy

regulations

emerging

in

various

jurisdictions,

all

the

time

which

require

expensive

and

complicated

controls

around

user

data

and

all

that

pii

makes

for

a

very,

very

tempting

target

for

attackers.

I

I

So

we

envision

running

a

standards

compliant

aggregator

as

a

service,

with

the

same

focus

on

automation

and

ease

of

integration

that

drive.

Let's

encrypt.

We

expect

that

some

customers

will

want

to

run

their

own

aggregator

and

have

it

work

with

ours,

but

others

will

want

to

avoid

running

any

servers

at

all

and

will

instead

choose

two

existing

aggregators,

say

one

run

by

isrg

and

one

run

by

some

other

organization

or

a

company

that

chooses

to

participate.

I

So

in

support

of

that,

we

are

hoping

to

provide

an

open

source

implementation

of

a

ppm

aggregator

with

the

aim

of

making

it

easy

for

some

data

collector

to

interoperate

with

isrges

aggregator

or

anybody

else's.

So

hopefully

it

ends

up

being

a

matter

of

grabbing

a

container

image

from

some

public

registry.

I

We

are

also

aiming

to

provide

open

source

client

libraries

targeting

like

a

variety

of

languages

and

frameworks

chosen

to

you

know,

facilitate

adoption

for

the

most

likely

interested

parties.

So

you

know

you

can

imagine

like

a

swift

sdk

for

ios

apps

javascript

for

web

app

for

a

single

page

application

on

the

web

and

so

on.

I

So

right,

so

with

this

goal

in

mind,

an

open

standard

through

the

ietf

is

acutely

valuable,

because

a

proprietary

single

vendor

solution

wouldn't

be

terribly

useful,

since

the

privacy

guarantees

are

contingent

upon

independent

and

non-colluding

aggregators

next

slide.

Please

thank

you.

So

we

talked

a

lot

about.

You

know

the

benefits

of

these

new

technologies,

but

there

are

some

trade-offs:

some

drawbacks

to

these

systems.

So

for

one

thing

there.

I

So

it's

more

likely

more

likely

to

fail

somewhat

necessarily.

Second,

the

verification

of

the

proofs

that

introduced

earlier

do

introduce

some

computational

network

overhead

and,

in

particular,

we'll

introduce,

depending

on

which,

which

protocol,

which

vdaf

is

in

use,

will

introduce

potentially

multiple

rounds

of

communication

between

the

aggregating

servers.

I

Finally,

metrics

gathered

under

these

schemes

are:

these

skins

are

necessarily

less

flexible

than

conventional

telemetry

systems.

You

can't

make

arbitrary

post-hoc

queries.

Sorry,

you

can't

make

arbitrary

queries

post-hoc

against

your

corpus

of

data.

You

have

to

know

up

front

before

you

begin

collecting

any

data.

What

are

the

aggregations

you're

interested

in

computing?

This

has

to

do

with

the

construction

of

the

proofs,

as

well

as

enforcing

some

of

the

privacy

guarantees

of

the

system.

I

So

so

en

explorer.

Verifications,

of

course,

is

the

system

by

which

mobile

devices

can

sort

of

anonymously

exchange

with

each

other

to

a

cove

exposure.

Enpa

allows

back

anonymously

and

privately

back

hauling

that

data

to

your

regional

public

health

authority

so

that

they

can

get

information

on

how

many

people

are

getting

the

notifications,

how

many

people

are

on

their

mobile

devices,

how

many

people

are

interacting

with

them

and

all

sorts

of

interesting

metrics

about

the

spread

of

code

itself,

as

well

as

the

effectiveness

of

the

of

the

en

system.

I

So

this

is

currently

deployed

in

13,

u.s

states

and

the

district

of

columbia,

and

at

the

moment

it's

gathering

2.1

million

measurements

per

hour.

We

also

heard

last

night

if

there

was

one

more

interesting

number,

that

sometime

over

the

night,

we

gathered

12

billion

individual

metrics

that

have

been

aggregated

since

the

system

launched

we're

also

about

to

deploy

this

internationally.

So

beyond

the

united

states,

so

we're

hoping

to

soon

turn

this

on

in

four

states

in

mexico.

Okay,

I'm

already

well

over

time,

so

I

will

see

the

floor.

B

J

Okay.

So

I'm

going

to

talk

very

briefly

about

some

of

the

use

cases

in

advertising,

specifically

the

conversion

measurement

one.

I

think

charlie's

going

to

follow

up

with

some

more

details

on

on

this

one.

So

conversion

measurement

is

something

that

happens

on

the

web

quite

a

bit

when,

when

someone

shows

an

advertisement,

it's

kind

of

nice

to

know

if

that

advertisement

is

having

the

intended

effect.

J

J

So

the

way

this

works

today

is

pretty

simple:

we

assign

a

user

an

identifier,

so

everyone

gets

their

own

unique

identifier

and

every

time

they

visit

a

website

and

an

advertisement

is

shown

or

they

do

something.

Then

we

just

create

a

little

little

log

record

that

records

all

the

details

from

the

context

and

the

user

identifier

time

stamps

all

those

sorts

of

other

things.

J

And

then

you

look

at

the

log

and

you

can

answer

all

sorts

of

questions

about

what

people

have

done.

It's

it's

really

great

for

getting

the

information

that

you

need.

It's

all

quite

precise

and

leaving

aside

all

of

the

complications

of

anti-fraud

and

all

those

sorts

of

other

things

you

can

answer

all

the

questions

that

you

have

fairly

precisely.

J

The

current

status

of

this

work

is

that

there's

lots

and

lots

of

requirements

that

are

coming

through.

This

is

obviously

a

lot

more

complicated

when

it

comes

into

practice.

There's

lots

of

ideas,

people

have

all

sorts

of

wonderful

proposals

and

some

competing

requirements,

but

a

lot

of

the

really

promising

ideas

include

something

like

what

ekka

described

earlier.

J

B

K

Let's

see

if

this

works

great,

you

guys

can

see

it

yep,

okay,

great

hello,

everyone.

My

name

is

charlie

harrison.

I'm

a

software

engineer

working

at

google

looking

at

ppm

like

solutions

for

doing

ads

measurement

on

the

web

to

satisfy

similar

use

cases

that

martin

was

just

talking

about

so

I'll

kind

of

breeze

through

some

of

the

background.

K

Is

that,

like

many

ads

use,

cases

are

actually

like

totally

fine

with

aggregate

data

and

they

don't

actually

need

to

track

you

kind

of

around

the

web

and

learn

all

the

data

exactly

we,

we

could

be

fine

with

aggregate

data

in

many

cases,

so

I

I

want

to

go

over

just

in

a

little

bit

more

detail.

How

attribution

measurement,

which

is

also

called

conversion

measurement,

happens

today

with

cookies,

essentially,

and

I'm

sure

this

is

going

to

be

familiar

for

many

people.

K

So

you'll

read

this

cookie

when

an

ad

is

placed

and

you'll

also

read

this

cookie

when

there's

a

conversion

like

when

you

buy

something

later

on

down

the

road

after

you've

seen

an

ad

for

it

and

so

right

now,

this

cookie

id

is

used

as

the

join

key

to

join

these

two

cross

site

events

right

and

but

they

they

they

join.

Arbitrary

events,

so,

like

all

of

your

browsing,

can

be

linked

up

to

this

one

cookie

in

theory.

K

How

could

it

be

improved?

We

could

internally

join

this

data

in

the

browser.

So

when

you

see

an

ad,

we

could

register

something

in

like

custom,

new

browser

storage

and

when

you

buy

something

that

was

like

pointed

to

by

that

ad.

That

would

join

up

with

that.

With

that

event

in

the

internal

browser

storage-

and

you

could

have

a

communication

path

from

the

browser

to

something

like

ppm,

where

we

we,

you

know,

we

have

this

data,

we

want

to

compute

something

like

a

histogram.

K

Maybe

we

want

to

learn

something

like

you

know:

what

are

the

counts

of

conversions

like

purchases

per

ad

campaign,

so

the

x-axis

is

ad

campaign.

The

y-axis

is

the

number

of

conversions

we

can.

We

can

use

ppm

to

kind

of

generate

data

data,

share,

split

that

encodes,

that

histogram

contribution

send

that

up

to

ppm

and

the

ad

tech

could

learn.

Aggregate

statistics

like

just

a

histogram

of

of

the

of

the

counts,

and

this

doesn't

reveal

any

user

data

directly.

K

K

So

that's

something

we're

looking

into

there's

a

lot

of

interesting

research,

that's

related

to

kind

of

the

heavy

hitter

stuff

about.

How

do

we

report

these

histograms

when

they're?

Like

really

really

really

big,

like

you,

are

running

millions

of

campaigns

or

you

want

to

do

like

all

sorts

of

different

crosses

we're

really

interested

in

systems

that

can

help

train

machine

learning

models

in

some

cases

there's

there

are

results

that

show

that

even

just

aggregate

histograms

could

be

used

to

train

like

logistic

models.

K

But,

like

are

like

we're

looking

into

more

sophisticated

mechanisms.

There's

you

know

in

rather

than

conversion

measurement.

We

could

look

at

reach

measurement,

which

is

asking

like

how

many

distinct

users

saw

my

ad

across

many

different

websites.

So

this

is

kind

of

like

removing

a

like

remove,

duplicates

operation,

and

you

know

we

we're

we're

interested

in

exploring

like

you

know.

K

B

M

L

All

right

so

yeah

this

is

this

is

pretty

different,

so

ecker

talked

about

protocol.

We

had

a

couple

talks

about

use

cases,

I'm

here

to

talk

about

kind

of

the

substrate

and

the

the

label

we

are

using,

for

this

is

verifiable,

distributed

aggregation

functions

thanks

to

chris

patton.

For

that

that

name

context

here

is

a

like

many

things

in

the

in

the

ietf.

We

need

some

complicated

crypto

for

this,

and

so

we're

doing

some

parallel

work

in

cfrg,

and

it

goes

alongside

the

crypto.

L

Now,

if

you

look

at

the

draft,

it's

kind

of

in

two

parts,

we

define

an

api.

That

is,

the

abstraction

ppm

is

supposed

to

rely

on.

So

the

idea

is

that

we

have

multiple

instantiations

that

do

various

flavors

of

this

private

aggregated

measurement,

dance

that

all

behave

in

a

close

enough

way

that

we

can

define

a

common

api

over

them

and

build

protocol

around

that

api,

and

this

provides

a

way.

L

You

know

that

the

computation

of

that

aggregate

is

distributed

over

those

aggregators

and

the

privacy

properties

of

the

individual

measurements

are

assured

by

non-collusion

among

those

aggregators

and

finally,

it's

verifiable

in

the

sense

that

the

aggregators

can

check

that

the

inputs

have

meet