From YouTube: IETF112-AVTCORE-20211112-1200

Description

AVTCORE meeting session at IETF112

2021/11/12 1200

https://datatracker.ietf.org/meeting/112/proceedings/

A

A

A

B

You do need a Datatracker login, and hopefully that all worked; I know it was problematic earlier in the week. You can join the session Jabber room via the meeting agenda, although I'm not sure why you'd need to. Please use headphones or an echo-canceling speakerphone, and state your full name before speaking so that we can get it in the notes.

B

B

B

B

B

B

A

A

As of about an hour ago, this is incorrect. We needed an updated draft because we decided to change it to Experimental, and that was published this morning. So now I will do the write-up on that, and hopefully that should get done. We dropped TETRA a while ago. We should probably drop this from the draft status too, next time.

A

A

Oh, multiplexing, right. Yes. But I think all our other milestones are going to be discussed here today. VVC is both adopted and in its first working group last call. There were a lot of comments, mostly from the VVC community itself, so it's great that they're participating here, and we'll definitely be talking about that later.

B

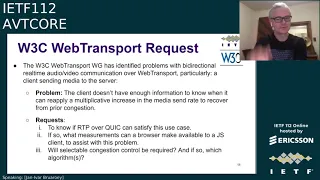

All right, so now I'm going to talk, briefly I hope, about recent liaison statements. We have a request from the W3C WebTransport Working Group, and Jan-Ivar is here to talk a little bit about that. Then we have a liaison from ISO/IEC JTC 1/SC 29 Working Group 3 about green metadata. So, Jan-Ivar.

E

E

B

Yeah, I would caution, Jan-Ivar, that RTP over WebTransport is a little bit different from RTP over QUIC, because it's not as tightly coupled, so maybe we'll get into that in the session to follow. But can you raise some of these issues in the discussion that follows, and we'll try to see where to go from there? Does that make sense?

B

A

G

Can you hear me? (Yes, we can.) Yes, I can speak on that. My colleagues worked on this, so if it's okay, I can go through it. (Please.) So this is from WG 3, MPEG Systems, and they would like to provide an update on the progress of the standard ISO/IEC 23001-11, Energy-Efficient Media Consumption (Green Metadata). There have been first and second editions, in 2015 and 2019, and the third edition is currently at committee draft status. Just as an overview in general:

G

This standard provides various types of metadata, and there are these four bullets. They enable management of decoder power consumption or display power consumption. For offline applications, or for adaptive streaming or DASH applications, it provides media selection metadata, and also quality recovery for video encoding power consumption. So different kinds of metadata are provided in this specification. Recently, the third edition of this standard, which is pointed to in this LS, provides a bunch of new features.

G

For example, as these three bullets say, there is now interactive signaling for remote decoder power reduction, which gives better power reductions. VVC SEI messages for carrying green metadata related to complexity metrics have also been added, as well as VVC SEI messages that can carry metrics for quality recovery after low-power encoding. So a bunch of new features have been added, and we hope that this information is of interest to this group and that we can consider using it in this group.

G

These different metadata all have very different shapes and forms. Some are for live applications, some are for adaptive streaming applications. Some go in the forward direction, from sender to receiver, and some go from the receiver side to the sender side. So my imagination is that the metadata that is to be transferred from the receiver to the sender side, especially where you know some container formats and so on, is something that might be of interest.

C

G

G

H

G

B

A

Yeah, I mean, I guess one of the elements is the receiver-to-sender feedback. So if somebody is interested in submitting a draft on that, I think we could certainly be interested in it, and we can take up whether the working group wants to do it. But certainly, I think any sort of receiver-to-sender feedback over RTCP is in scope for this group.

A

G

H

So, just one remark: if someone were interested in this type of stuff, then of course the forward direction is also something to look at. As far as I understand, a lot of this can get piggybacked into the codec bitstream using SEI messages and such, but it could also be multiplexed by simply creating its own payload format, and that way it may also be applicable to non-MPEG standards.

H

I

I know we had the RMCAT group, which tried to do a bunch of algorithms, and I'm not sure those algorithms directly apply to QUIC. So I'm not suggesting we take the work there, but I was wondering if we should maybe have a broader conversation about where we do media congestion control over QUIC, and whether that's RTP-specific or a more general conversation.

B

A

J

J

J

Both of the drafts use the unreliable datagram extension with a flow identifier to demultiplex different RTP sessions. Our draft focuses a bit more on congestion control and on the interface requirements for QUIC implementations and congestion control. And yeah, both drafts use SDP for signaling.
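The demultiplexing idea described here can be sketched roughly as follows. This is only an illustration and not taken from either draft: it assumes the flow identifier is carried as a QUIC variable-length integer (the encoding defined in RFC 9000, Section 16) in front of the RTP packet inside each datagram payload. The helper names `wrap_rtp` and `unwrap_rtp` are hypothetical.

```python
from typing import Tuple


def encode_varint(value: int) -> bytes:
    """Encode an integer as a QUIC variable-length integer (RFC 9000, Section 16)."""
    if value < 0:
        raise ValueError("varint must be non-negative")
    if value < (1 << 6):
        return value.to_bytes(1, "big")                      # prefix bits 00
    if value < (1 << 14):
        return (value | 0x4000).to_bytes(2, "big")           # prefix bits 01
    if value < (1 << 30):
        return (value | 0x8000_0000).to_bytes(4, "big")      # prefix bits 10
    if value < (1 << 62):
        return (value | 0xC000_0000_0000_0000).to_bytes(8, "big")  # prefix bits 11
    raise ValueError("varint too large")


def decode_varint(data: bytes) -> Tuple[int, int]:
    """Decode a varint from the start of data; return (value, bytes consumed)."""
    if not data:
        raise ValueError("empty buffer")
    length = 1 << (data[0] >> 6)          # top two bits select 1, 2, 4, or 8 bytes
    if len(data) < length:
        raise ValueError("truncated varint")
    value = data[0] & 0x3F
    for byte in data[1:length]:
        value = (value << 8) | byte
    return value, length


def wrap_rtp(flow_id: int, rtp_packet: bytes) -> bytes:
    """Hypothetical helper: build a datagram payload as flow ID followed by RTP."""
    return encode_varint(flow_id) + rtp_packet


def unwrap_rtp(payload: bytes) -> Tuple[int, bytes]:
    """Hypothetical helper: split a datagram payload into (flow ID, RTP packet)."""
    flow_id, consumed = decode_varint(payload)
    return flow_id, payload[consumed:]
```

A receiver would call `unwrap_rtp` on each datagram and hand the RTP packet to whichever session is registered for that flow identifier, which is how several RTP sessions could share one QUIC connection.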

K

K

J

Okay, yeah. That is because with UDP, one would typically run different RTP sessions over different UDP ports and identify them by that. In QUIC, we can have the RTP sessions in one connection, and then try to use all of the information we have available in that one connection for the different RTP sessions at the same time, for example the congestion control information.

I

Yeah, I mean, there was a comment in the chat about not understanding what "RTP session" meant in this context. RTP session has a very well-defined meaning in the RTP spec, and we need to make sure that what's meant here is consistent with that meaning, and that it's not just being used as an alternative to the SSRC, for example, for demultiplexing users.

I

J

Okay, then maybe I'll continue with the presentation on congestion control first, and we keep that for a later discussion. So today we want to focus on congestion control, and we identified a couple of questions which come up when we do RTP over QUIC. The first is that we have congestion control in both QUIC and RTP, and QUIC suggests using an algorithm similar to NewReno.

J

J

J

J

We would like to use the QUIC connection stats, which are already available in the transport protocol, to reduce the RTCP overhead. In QUIC, we have the datagram draft, which allows an implementation to expose the datagram acknowledgments to the application, and we're using this to identify which RTP packets have arrived at the receiver.

J

We keep track of the sent RTP packets, in which datagrams they were sent, and at what time they were sent, so we have the send timestamp. As soon as an acknowledgment arrives, we know that this RTP packet has been received. We then also use the RTT samples provided by the QUIC connection statistics to infer a receive timestamp at which the packet arrived at the receiver, by just adding half of the last known RTT to the send timestamp we kept track of earlier.
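The bookkeeping described here might look roughly like the sketch below. It is a minimal illustration under stated assumptions, not the presenters' implementation: the `AckTracker` class, the datagram identifiers, and the callback names are all hypothetical, and the QUIC stack is assumed to report acknowledged datagrams together with its latest RTT sample. Note that adding half the RTT assumes the forward and return paths have equal delay.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class SentRecord:
    rtp_seq: int        # RTP sequence number carried in the datagram
    send_time: float    # local send timestamp, in seconds


class AckTracker:
    """Maps acknowledged QUIC datagrams back to RTP packets (hypothetical helper)."""

    def __init__(self) -> None:
        self._in_flight: Dict[int, SentRecord] = {}

    def on_sent(self, datagram_id: int, rtp_seq: int, send_time: float) -> None:
        # Remember which RTP packet went into which datagram, and when.
        self._in_flight[datagram_id] = SentRecord(rtp_seq, send_time)

    def on_ack(self, datagram_id: int, latest_rtt: float) -> Optional[Tuple[int, float]]:
        # Called when the QUIC stack reports a datagram as acknowledged.
        record = self._in_flight.pop(datagram_id, None)
        if record is None:
            return None  # unknown or already-acknowledged datagram
        # Estimated arrival time: send time plus half of the last known RTT.
        inferred_receive_time = record.send_time + latest_rtt / 2.0
        return record.rtp_seq, inferred_receive_time
```

The `(sequence number, inferred receive time)` pairs produced by `on_ack` could then be fed to a congestion controller in place of receiver-generated RTCP feedback.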

J

J

L

Yes, I had a question about the timestamp feedback. From what I understand, the purpose of that in RTCP is for congestion controllers to understand the one-way delay and the variance in one-way delay, and those delay-sensitive algorithms can use that high-fidelity one-way delay inference. But if you're trying to synthesize that using round-trip times, it seems like you've lost the one-way delay aspect, which may be important for some of those congestion control algorithms.

J

J

We configured a bandwidth limit of one megabit per second, and we ran the experiments with different one-way delays. After 60 seconds of video transmission, we reduce the available link capacity to 500 kilobits per second to see how the algorithms and the transport react to this. Our application logs incoming and outgoing RTP and RTCP packets for analysis afterwards, and to calculate PSNR and SSIM statistics on the raw video files. Next slide, please.

J

B

M

B

J

So everything goes through both congestion controllers, and we see that in the first part of the video transmission it seems to behave very similarly to the previous slide, but that is probably due to application-limited transmission. As long as SCReAM is sending less data than NewReno would, we are in this application-limited state where NewReno doesn't really do much yet. That changes as soon as the link capacity is reduced to 500 kilobits per second for 30 seconds.

J

We see that both congestion controllers try to adapt to the new link capacity, and the SCReAM target bitrate drops to almost zero. The ramp-up also does not happen at all in the case of a one-millisecond delay. It may be that it happens much later if we looked at it for a longer time, but this is already not really usable, so we didn't investigate that further. In the second case, our one-way delay was 15 milliseconds.

J

J

We generate the feedback at a fixed interval, and we think that the instability in the last experiments is due to this fixed generation of feedback, which may lead to some acknowledgments from QUIC arriving after we generate the next feedback. Even though the RTP packet has already been received by the receiver, due to the delayed and aggregated ACKs they arrive a little bit too late at our sender, so some feedback is not included. That leads to feedback that is less precise than what RTCP could provide.

J

We ran all the experiments again with a second stream opened in QUIC, this time not datagrams but a real QUIC stream, which sends constant data. In this case we see that the application probably needs some prioritization between real-time data in QUIC datagrams and background traffic within the same connection. In our experiments, we see that the target bitrate quickly degrades, and it might even starve because of the background data. So there's probably some better scheduling or prioritization necessary.

J

J

And then the last point is that some prioritization is necessary, as we've seen on the last slide. In the future, we plan to do some more experiments with different congestion control algorithms, different forms of competing traffic, and different network topologies. We would also like to try to find some way to do better prioritization than no prioritization at all, to see how we can use one QUIC connection for real-time data and non-real-time data at the same time.

N

Yeah, this is really good work; I'm glad to see this kind of moving forward. I guess I have three points here where I think it would be good for us to try to limit scope. One would be: is there anything besides receive timestamps that we need in order to have the same information that RTP over UDP would have? Because I think if we get there, then we can feel pretty confident that we have everything at the protocol level.

N

Everything you need, that is, to do good real-time congestion control. The second thing is that I think trying to prioritize real-time and non-real-time right now might be a lot, and we might just say: open a different QUIC connection for non-real-time stuff, because that's how it works today, and that's where we stop. That might be a good milestone: saying that we have basically just replaced the entire congestion controller.

N

If we take the RTP flows we do today and get that working over QUIC, I think that would be a pretty good accomplishment. And then the last thing, which we haven't really talked about here, is overhead: how many more bytes are we putting on the wire for each RTP packet, and where are we sending redundant RTCP feedback? It would be good to dive into that and see what those numbers are, and then we can start thinking about whether there is anything that can be done to mitigate that.

A

P

P

A

Q

Q

Q

R

Q

Yeah, because, for example, I doubt that we can just disable the QUIC congestion control and trust an application running in JavaScript to behave properly, so we will have to find a way to have both working simultaneously. I think it was proposed at some point to have some circuit breakers, just in case.

F

B

I guess the one thing that potentially wouldn't be exposable is the detailed ACK data, because in a browser you basically can't assume that the ACKs reflect what the application got. But maybe the timestamp and other info from the ACKs could be provided up, I guess. And originally in WebTransport we did have the concept of adding a congestion control selector to the constructor, and I guess, to Justin's point, that would be at the QUIC connection level.

B

J

O

The question I have, or the suggestion, is this: this draft did not actually flag AVTCORE in the file name, and I've been having some conversations on the Media over QUIC mailing list about another draft that's relevant here and that also doesn't have AVTCORE in the name. It was focused on the SDP for RTP over QUIC, but recognizing that that was going to have, you know...

O

O

You all can think about that. And yeah, sure, the discussions we've been having on my draft in this space, over on the MoQ mailing list, are, I think, definitely ready to move into AVTCORE or a group like that, rather than kind of an ad hoc overall Media over QUIC discussion. Yeah, I mean.

A

I

Yeah, hi. So there's a bunch of discussion in the chat about short-term and long-term approaches, and I think that's important. I think we should make sure we have that broader architectural conversation about where we want to go, rather than necessarily just sleepwalking into reusing RTP here.

I

The actual reason I got up to the mic was the comments about using separate connections to avoid having the congestion control and prioritization discussion. Separate connections cause quite a lot of pain in the current model, and we should have a deliberate discussion about whether we think that's acceptable pain, or whether we want to make a conscious effort to reduce that and try to make everything run over a single connection, even if it means more congestion control and prioritization work.

C

J

C

J

Yeah, that's probably true. Our current test setup is kind of inspired by the test cases for evaluating congestion control from RMCAT, but it doesn't completely implement all of them. As I said, we would like to do more experimentation with that, and then we should probably also include bidirectional streams there.

A

N

O

N

I wanted to add on to Colin's point, where he mentioned that maybe we should take on prioritization now. I think that for any specific work in this area, we probably want to have a good sense of what our goals are: what would count as good enough, what would count as a reasonable v1?

N

What things do we think we have to do? I think this author has shown you can get some results even now, just by encapsulating RTP within QUIC datagrams. But what would be necessary for us to consider this something that we want people to deploy, whether it's overhead, whether it's packet rate optimization?

N

A

Okay, and we're kind of over time, but this is an area with a lot of interest, so I figured we would let it go over. Thank you all. I guess discussion should probably continue on the AVT list or the MoQ list, or both; if you're interested, you should probably be subscribed to both. But hopefully we can try to converge on where we're having the discussion.

A

Q

Okay, so I published a new draft for Cryptex that makes two clarifications. One was about authentication after encryption, and the other was what the meaning of the cryptex attribute is when it is present with BUNDLE. It does not change anything in the draft; these are just clarifications that were needed because it was not clear before. Regarding the implementation status, we still have only two implementations currently. One is done by you, Jonathan, and...

Q

H

H

I

I think the real test will come when the thing goes out to publication request and all these security guys come out of the woods and talk about the newest trend that they want to see in the security sections, and similarly, maybe, the congestion control guys; I don't know. So what we will be doing is, we'll publish one more revision of the VVC draft pretty soon. I gave you a target date of mid-month, but we will do that earlier.

H

I think probably as soon as later today. Then we suggest that maybe another working group last call is adequate, since the text changes are not insubstantial, although they are deep down in the details of where the VVC codec maps to the offer/answer, mostly. After that working group last call, I think we're ready for the publication request. Next slide, please.

H

Thanks, Bernard. Now, this was an issue that's more of a generic question, triggered by a comment from Bernard, where he said there are no complete examples of the SDP offer/answer negotiation in the draft. That was in the context, I think, mostly of the scalability-related, rather complex offer/answer scenarios that this draft would allow. And that raises a somewhat philosophical question of what good examples do.

H

I think that people simply take the examples as shortcuts for the way things are to be done and don't really get a full understanding of what's going on. We had this discussion before, a few years ago or a decade ago or so, and for the HEVC payload format we later on decided not to go for full examples. I just want to kind of reconfirm that that's the right thing to do.

H

H

B

B

H

I completely agree. I think the disagreement, if there is any, I don't know, is about what the cure to this problem is. I think the cure is not to put oversimplified examples in a draft that will be copy-pasted, that may be known as correct but may not be known as applicable for the particular problem that people have, and that people go away from.

H

H

H

L

Yeah, I don't think you need examples to spoon-feed people; I don't think that's necessary. But in your view, do you think that the current examples give the high-order bit of what the most important parts of the draft are that we're trying to convey in their SDP? I think that's the useful part, because there's usually a lot of text, and in drafts that have many parameters and many rules on all the parameters, it's hard to follow.

L

H

L

L

H

H

We haven't revved the other working group draft. This tradition of keep-alive drafts, I consider, is just not a good thing. So unless you chairs tell me that we should run keep-alive drafts for bookkeeping purposes or something like that, we're not going to do it. However, the draft is still on our internal agenda, and we are committed to dealing with that.

H

However, the way we want to do that, and that was, I think, agreed on last summer, is that we'll deal with it once we have learned the lessons, and that's especially the lessons I'm expecting on the security side during the IETF last call. So in other words, what we suggest to do is: we will produce version two after we've got the VVC draft through the last call, and then we'll be ready very, very quickly for working group last call of the EVC draft.

H

A

H

H

I

C

M

M

I was around for the birth of that as a combined Department of Defense and vendor working group; the working group was called the Interoperability Control Working Group. While the SCIP protocol began in 1994, there were predecessors to it. We go back to the PSTN days of early communications that the DoD would do over PSTNs, which then moved into ISDN and migrated into IP, all handled with a community of interest.

M

M

The SCIP standards are currently available to participating government and military communities and select OEMs of network equipment and call management servers that support SCIP. Government and business entities must request access to relevant information, and access to SCIP standards is based upon a need to know. Now, devices that implement SCIP standards transparently operate over digital carriers. SCIP is an application-layer protocol; it doesn't function down in the network layer of the devices. Most commercial network and security community personnel are not aware of SCIP, and this can cause problems.

M

One result is the SCIP media subtype being removed from the SDP of a standard SIP message. So the lack of awareness among the network and security community has become a larger issue as the use of SCIP grows over more commercial carriers and as network security devices become more restrictive of unknown media. As a side story to that, my first exposure to network security was way back in the early Cisco days of building a firewall based on IP addresses.

M

M

We have come across issues where the SDP doesn't contain the transport that we need. So the draft RFC submitted to the IETF is designed to provide information to network equipment OEMs, network administrators, and security personnel, and to help SCIP succeed over commercial networks, because SCIP relies on commercial equipment within the network infrastructure to operate. And as security policies have changed, devices have changed from being where you go in and decide what you're going to not let into your network.

M

While it is a gateway protocol, RFC 4040 is meant to take IP traffic and an ISDN BRI network 64K UDI channel and build an end-to-end bit-integrity network for the users to use however they want. So RFC 4040 defines a clear channel; it just happens to traverse from IP to ISDN 64K UDI. SCIP is to be treated like that on a pure IP network.

M

This RFC is needed to provide additional information for those media subtypes, where we include the media type description, the payload format, RTP header fields, payload format parameters, and the SDP declaration as a mapping to the SDP, and it provides mapping examples, which you'll see on the next slide, I believe. Next slide.
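To make the SDP mapping idea concrete, here is a small hedged sketch of how a piece of network equipment might recognize a SCIP media subtype in an offer rather than stripping it as unknown. The example SDP is purely illustrative: the addresses, ports, payload type numbers, and clock rates are assumptions chosen for the example, not values quoted from the slides, and the helper names are hypothetical.

```python
from typing import Dict, Tuple

# Illustrative SDP only; ports, payload types, and clock rates are assumed values.
EXAMPLE_SDP = """\
v=0
o=- 0 0 IN IP4 192.0.2.1
s=-
c=IN IP4 192.0.2.1
t=0 0
m=audio 50000 RTP/AVP 96
a=rtpmap:96 scip/8000
m=video 50002 RTP/AVP 97
a=rtpmap:97 scip/90000
"""


def rtpmap_entries(sdp: str) -> Dict[int, Tuple[str, int]]:
    """Return {payload type: (encoding name, clock rate)} for each a=rtpmap line."""
    entries: Dict[int, Tuple[str, int]] = {}
    prefix = "a=rtpmap:"
    for line in sdp.splitlines():
        line = line.strip()
        if not line.startswith(prefix):
            continue
        # Line shape: a=rtpmap:<payload type> <encoding name>/<clock rate>[/<params>]
        pt_text, _, encoding = line[len(prefix):].partition(" ")
        parts = encoding.split("/")
        entries[int(pt_text)] = (parts[0], int(parts[1]))
    return entries


def uses_scip(sdp: str) -> bool:
    """True if any rtpmap entry names the 'scip' subtype (case-insensitive)."""
    return any(name.lower() == "scip" for name, _ in rtpmap_entries(sdp).values())
```

A device that knows the subtype from a published registration could pass such media lines through instead of removing them from the SDP.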

M

M

M

M

That unawareness is partially tied to the fact that the community this serves is an already-standing community that already has an expanding set of standards in which to operate. Operating in that environment produces the situation where, while we're defining the end products, we don't have any way to let the people in the middle know what we do. So the draft RFC increases the IETF's awareness of the SCIP working group and its efforts to achieve international interoperability.

M

So we have to look at our problem as not just being the end products having interoperability, because everyone else who carries traffic, who is responsible for defining what's on the network, who is responsible for building equipment that implements security plans, needs the knowledge to know how to do that. It even goes down as far as the procurement people.

M

If I, as a network designer, want to support this protocol on my network, how do I reference that to a procurement rep so that they can then write a request for quote to vendors, saying this is what your product needs to support? So, as in the last bullet, the RFC provides the information about SCIP and the SCIP working group community that system and network architects, administrators, security personnel, OEMs, risk analysts, and procurement personnel need for SCIP to be included in the system security life cycle.

M

M

It's basically building a channel by which the end devices will negotiate what they're going to do: they're going to negotiate applications, they're going to negotiate security parameters. So its format itself is not as clearly defined as if you were carrying video traffic or G.729, or something like that.

A

M

B

M

M

I would ask, though: where would something like this go? Because in the end, it is the standard that's needed for end products to communicate in the way that they were designed: the recognition that the far-end product is a compatible one, and then, working over a clear channel, establishing what each of the users is capable of doing and wants to do.

B

B

B

B

K

K

M

S

Good. A question regarding the beginning of the earlier slides for this: there was, as I mentioned, the TSVCIS specification. It's kind of in the same ballpark, if you will: similar, but not the same as what we're doing. I was kind of curious how approval for that was processed through the group.

A

A

T

I would actually suggest talking to the media type reviewers for that. I know Murray is one, though he wasn't one at that time, so it might be something where you need to ask. But I actually got in the queue to ask a different question, which is actually on this slide, if I can point to this. From my understanding of your description, in addition to the two media types that you have in there, you do have data streams, which you describe in this slide as clear-channel data.

T

M

T

A

H

I

I

Hi. We seem to be going a little bit backwards in terms of the way the IETF handles these sorts of documents. I was very surprised to see all the controversy about the spec that Stefan was just talking about, because we've done lots of payload formats which have had various degrees of difficulty in accessing the specifications over the years. So, process-wise.

I

M

I

T

R

Yeah, just on that point: if you look, BCP 97 is under revision in the IESG for exactly this reason. We had a number of documents come to us that we were not able to evaluate, because the specification to which they referred was not available to us. I think the proposal is exactly what I think Colin just said: as long as the reviewers, the people who need to review it and approve it, can get access to it, to make sure the wrapping specification is right.

R

M

C

M

B

B

U

Also,

it

was

noted

that

the

sram

format

itself

is

has

some

interest

outside

rtp.

So,

for

instance,

you

could

you

could

use

web

transport

or

rtc

data

channel

with

webcodex

and

still

use

SFrame

in

between

to

do

end-to-end

encryption

so

that

that

means

that

we

we

want

integration

with

RTP,

but

we

also

want

SFrame

to

be

used

outside

of

RTP.

U

In

addition

to

that,

on

the

W3C

side,

so

the

API

level,

the

WebRTC

working

group,

adopted

the

WebRTC

Encoded

Transform

as

the

first

public

working

draft

and

the

functionality

already

shipped

in

Chrome

since

maybe

a

year,

and

it's

also

enabled

by

default

in

Safari

Tech

Preview,

and

it

might

be

also

in

in

the

queue

for

other

browsers

as

well.

So

progress

is

being

made

to

add

support

to

allow

webpages

to

to

use

SFrame

or

to

implement

end-to-end

encryption

into

browsers,

so

the

WebRTC

Encoded

Transform

is

definitely

used

for

end-to-end

encryption.

U

It

was

also

used

for

emulating

emulating

RED

if,

if

it's

not

available

in

the

browser,

so

so

we

are

seeing

that

solutions

are

in

browsers,

are

more

and

more

using

that

and

we're

seeing

that

solution.

Existing

WebRTC

solutions

are

using,

like

Google

Duo,

FaceTime,

Webex;

they're

all

adding

progressively

support

for

end-to-end

encryption

and

they're

all

doing

that

with

different

SFrame-like

formats

and

flavors.

U

So

there's

no

interoperability;

it's

not

the

same

kind

of

technology.

It's

very

related,

but

it's

not

exactly

the

same.

Some

workarounds

are

being

used,

and

so

so

it's

not

great

and

it's

not

great,

because

it's

a

security

privacy

technology

and

usually

having

just

one

well

identified

piece

of

technology

to

do

that

is

better.

So

next

slide.

U

Based

on

that,

my

understanding

is

really

that

we

need

to

make

progress.

I

think

we

already

had

a

similar

slide

one

year

ago

about

that

saying:

hey

things

are

starting

to

evolve

end-to-end

encryption

is

being

shipped

and

we

need

to

make

progress,

and

it's

even

more

of

a

case

now

so,

and

it's

particularly

particularly

the

case

for

SFrame

within

the

WebRTC

ecosystem.

For

WebTransport

and

data

channel,

we

still

have

some

time,

but

for

WebRTC

ecosystem.

U

It's

it's

really

getting

fat

there,

so

that

means

SFrame

RTP

integration

and

SFrame

SDP

integration.

My

understanding

in

the

past

was

that

AVTCORE,

for

instance,

would

be

responsible

for

doing

the

SFrame

RTP

integration,

but

we

are

seeing

email

threads

on

the

SFrame

working

mailing

list

about

that.

So

first

question

that

I

have

to

the

AVTCORE

working

group.

No

previous

slide.

My

first

question

would

be

to

to

be

clear

about:

where

should

that

work

go

or

where

should

it

be

worked

on?

U

A

I

U

Okay,

so

so

it's

great

we're

in

AVTCORE,

we're

discussing

that.

So,

let's

try

to

make

progress.

So

at

last

meeting

we

we

discussed

a

lot

the

packet

versus

frame

issue

and

I

think

that

there

are

common

requirements

in

both

cases.

So

if

we

put

the

packet

versus

frame

issue

aside,

we

can

try

to

focus

on

common

requirements

and

that's

what

we

try

to

do

in

the

next

slides

next

few

slides

so

yeah

the

SFrame

working.

U

There

were

good

discussions

on

the

SFrame

working

group

mailing

list

about

various

approaches,

and

basically

I

think

we

we

know

that

middleware

middleware

being

like

SFUs,

network

intermediaries

or

browsers,

cannot

inspect

encrypted

or

transformed

packet

payloads,

but

they

still

especially

SFUs,

they

still

need

information

to

route

and

complete,

transform

packets

separately

and

that's

especially

the

case

in

SVC

simulcast

cases,

but

it's

already

the

case,

for

instance

in

in

non-simulcast

cases.

U

So

one

question

was

from

the

mailing

list:

whether

it

should

be

possible

just

from

RTP

inspection

to

determine

that

content

is

encrypted

or

transformed,

and

if

so,

where

should

the

information

be

located?

Should

it

should,

should

it

be

just

a

payload

type,

should

it

be

a

dedicated

header

extension?
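
The question above, whether RTP inspection alone can tell a middlebox that the content is encrypted, can be illustrated with a minimal Python sketch. The fixed RTP header layout is the standard one; the set of "opaque" payload type numbers is purely hypothetical and would have to come from signaling, which is exactly the open point in this discussion.

```python
import struct

def parse_rtp_header(packet):
    """Parse the 12-byte fixed RTP header (version, X bit, marker, PT, ...)."""
    if len(packet) < 12:
        raise ValueError("RTP fixed header is 12 bytes")
    b0, b1, seq, ts, ssrc = struct.unpack("!BBHII", packet[:12])
    return {
        "version": b0 >> 6,                 # top two bits
        "has_extension": bool(b0 & 0x10),   # X bit: a header extension follows
        "csrc_count": b0 & 0x0F,
        "marker": bool(b1 & 0x80),
        "payload_type": b1 & 0x7F,          # dynamic PTs are bound via SDP
        "sequence_number": seq,
        "timestamp": ts,
        "ssrc": ssrc,
    }

# Hypothetical: payload type numbers the signaling marked as opaque/encrypted.
OPAQUE_PAYLOAD_TYPES = {120}

def payload_is_opaque(packet):
    """True if the packet's payload type was signaled as opaque (assumption)."""
    return parse_rtp_header(packet)["payload_type"] in OPAQUE_PAYLOAD_TYPES
```

With a payload type approach the decision needs only the fixed header; with a dedicated header extension the middlebox would additionally check the X bit and walk the extension block.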

U

I

I

U

So

currently,

in

the

current

and

deployed

environments,

the

payload

type

is

defining

the

codec,

so

let's

say

H.264,

VP8,

VP9,

and

we

need.

We

need

at

some

point

this

information

anyway

and

that's

how

it's

being

deployed.

But

we

could

also

say

that

you

know

that's

wrong

and

the

payload

type

should

say.

Oh

it's

encrypted

content,

or

it's

content

that

you

you

do

not

need

to

care,

it's

opaque

and

that's

one

possibility.

Q

Yeah

I

mean

the

only

thing

is

that

it

was.

I

mean

it

was

right

there

in

the

in

some

discussion

of

the

mailing

list

that

maybe

yeah

signaling,

that

in

the

SDP

that

the

content

was.

Even

if

it

is

yes

and

we

don't

change

the

packetization

of

the

codec,

just

encrypting

the

the

payload

yeah,

the

the

payload

of

the

the

of

the

RTP

packet,

that

it

could

go

from

there

within

the

the

same

payload

type.

But,

for

example,

me.

Q

R

Q

It

could

be

done

in

a

different

way.

So,

yes,

we

were

seeking

guidance

about

exactly

if

if

this

could

be

an

option-

or

we

should

just

er

if

we

see,

for

example,

shape

and

sending

an

RTP

packet

with

the

payload

type

number

for

VP8,

then

the

payload

must

be

VP8,

and

an

SFU

or

whatever

it

can

inspect

it

and

expect

to

have

the

the

correct

payload

in

there.

I

So

I

think

that

there's

some

separate

issue

issues

here

I

mean

one-

is

how

you

signal

it,

and

one

is

what

is

the

payload

format?

You

know

the

the

the

the

the

question

on

the

slide

about

here?

Is

this

located

in

the

the

payload

type

or

within

the

RTP

payload

that

I

mean

they're

the

same

thing,

so

you

may

are

you

asking

about

the

way

that

is

included

into

the

packet

or

the

way

the

data

is

the

way

the

contents

of

the

packets

are

signaled.

U

U

I

B

C

B

R

B

So

I

know

there's

there's

that

issue

because

really

the

the

encrypted

payload

is

really

an

end-to-end

thing

and

SDP

really

isn't

about

that.

It's

hop

by

hop,

but

I

think

it's

a

little

bit

dangerous

to

assume

that,

because

you've

negotiated

a

particular

header

extension

that

everything

will

work

end

to

end

with

an

encrypted

payload,

because

there

could

be,

as

we

just

talked

about

with

the

B2BUAs,

right?

There

could

be

somebody

in

the

middle

who's

looking

at

this

and

if

the

SDP

doesn't

tell

it

that

they

give

it

any

inkling

that

it's

encrypted.

B

A

Oh

yes,

I

mean

it

seems

to

me

like

conceptually

what

this

actually

should

be.

I

mean

insofar

as

everything

in

the

RTP

session

will

be

SFrame,

which

I

think

is

what

people

probably

want,

because

you

don't

because

I

think

having

this

be

mixed

is

a

security

and

you

know

nightmare.

I

think

what

this

actually

is

a

profile.

Much

like

SAVP

has

a

profile.

A

This

is

a

new

transformation

like

SAVPF,

which

I

mean.

I

don't

know

if

you

just,

I

mean

whether

you

actually

negotiate

it.

That

way

for

backward

compatibility

reasons

is

a

separate

issue,

but

I

feel,

like

you

know,

that

might

be

at

least

conceptually

the

cleanest

way

to

approach

it

and

if

we

have

to

hack

something

to

get

it

to

work

with

browsers

or

whatever

so

be

it.

A

A

P

They

think

it's

a

hint

to

the

SFU.

It's

saying

that

you

know

you

know,

don't

look

into

the

packet

further,

because

it's

end-to-end

encrypted.

You

don't

get

anything

versus

something

like

if

it's.

If

it

is

not

an

end-to-end

encrypted

thing,

you

can

look

into

the

packet

to

see,

for

example,

audio

level

or

something

like

that,

and

hence

having

some.

We

need

to

kind

of

look

it

in

the

picture

of

the

signaling

and

the

media

to

make

a

decision

just

looking

at

RTP.

L

Yeah,

I

think

conceptually,

I

agree

with

Jonathan

that

the

first

thing

I

thought

conceptually

was

also

profile

level

things

like

that

SAVP

or

something

like

that.

If

I

was

an

originalist,

that

might

be

the

right

precedent

model

to

use.

However,

I'm

not

an

originalist,

and

I

don't

believe

that

what

was

decided

long

ago

should

impact

everything

today,

and

I

think

I

would

argue

that

SAVP

has

caused

a

lot

more

damage

than

than

good

and

there's

been

a

lot

of

problems

with

things

like

stuff

for

security.

L

I

would

I

would

caution

against

trying

to

do

what

logically

makes

sense

is

to

make

a

new

profile

out

of

this,

but

I

think

that's

that's,

probably

a

mistake

that

rehashes

a

lot

of

the

mistakes

that

those

older

profiles

cause.

What

makes

more

logical

sense

to

me

is

this

is

just

an

encapsulation

like

like

RED

or

FEC

or

anything

else,

it's

an

encapsulation

of

of

some

other

payload.

L

So

I

think

it

makes

more

logical

sense

to

declare

all

the

real

payloads

and

then

also

declare

the

encapsulated

types

and

then

what

you

actually

send

on

the

wire

is

the

encapsulated

type.

So

it

would

be

a

different

payload

type

number

and

it

would

just

be

an

encapsulation

of

some

other

payload

type

that

is

also

in

the

SDP,

but

won't

appear

on

the

wire

because

you're

not

actually

sending

that

format

on

the

wire.
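
The encapsulation idea described above (declare the real payloads, declare the encapsulated types, and send only the encapsulated type on the wire) might look roughly like the following Python sketch. The `SFRAME` encoding name and the `apt=` binding are illustrative assumptions borrowed from how RTX associates payload types; no such syntax was agreed in this discussion.

```python
import re

# Hypothetical SDP fragment. "SFRAME" and "apt=" are assumptions for
# illustration only; 96 and 98 are declared but would not appear on the wire.
SDP = """\
m=video 9 UDP/TLS/RTP/SAVPF 96 98 120 121
a=rtpmap:96 VP8/90000
a=rtpmap:98 VP9/90000
a=rtpmap:120 SFRAME/90000
a=fmtp:120 apt=96
a=rtpmap:121 SFRAME/90000
a=fmtp:121 apt=98
"""

def parse_payload_types(sdp):
    """Return {pt: {"name": str, "apt": int or None}} from rtpmap/fmtp lines."""
    table = {}
    for line in sdp.splitlines():
        m = re.match(r"a=rtpmap:(\d+) ([^/]+)/", line)
        if m:
            table[int(m.group(1))] = {"name": m.group(2), "apt": None}
            continue
        m = re.match(r"a=fmtp:(\d+) apt=(\d+)", line)
        if m and int(m.group(1)) in table:
            table[int(m.group(1))]["apt"] = int(m.group(2))
    return table

def describe(pt, table):
    """Name the format for a payload type, resolving one level of wrapping."""
    entry = table[pt]
    if entry["apt"] is None:
        return entry["name"]
    return f'{entry["name"]} wrapping {table[entry["apt"]]["name"]}'
```

Under these assumptions, a receiver that sees payload type 120 learns both that the payload is an encapsulation and which declared codec sits inside it, without a new RTP profile.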

I

I

It

strikes

me

as

a

wrapper

format.

Much

more

like

RED

or

the