From YouTube: IETF112-QUIC-20211110-1200

Description

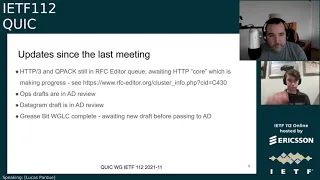

QUIC meeting session at IETF112

2021/11/10 1200

https://datatracker.ietf.org/meeting/112/proceedings/

A

And would anyone like to volunteer to help Robin with the note-taking today? Robin will be presenting for 15 minutes, but beyond that, whoever would like to dive in will be very welcome. I see Watson's volunteered. Thank you, Watson, for Jabber scribe. Yeah, if we could get someone to assist with the note-taking, that would be very welcome too.

A

A

Jonathan, thank you very much. You were the person I had in mind to ask, so I'm glad you did it first. Right, then, let's get started with the session. Hello, everybody. This is the QUIC working group session. If this is not the session you intended to join, please leave now. I'm Lucas, I'm one of the co-chairs. Matt, who's just putting his video on, is my fellow co-chair. This is the first session, I believe, that Lars hasn't chaired a QUIC session.

A

A

Like I said, we wanted to go through the usual Note Well, etc., which I'll come on to next, but also give a little bit of an update from the chairs about, you know, what the working group has been up to since the last meeting, and maybe the direction of the group. So, you know, we've delivered RFC 9000 and people are a bit fragmented across different drafts. We've lost a little bit of focus, but the working group is still active, so we just wanted to keep people up to date.

A

This covers the IETF policies on topics such as patents or code of conduct, and how you participate in the IETF. So this is important for understanding your contributions to this working group and the IETF in general. So please, please do go and attend to that. We also wanted to give some special attention to the IETF code of conduct this time around. So, you know, RFC 7154 lays out some of the expectations for participants in the IETF.

A

I'd like to say that the QUIC working group has got a good track record of being able to function and have constructive discussions on things, so it doesn't hurt to remind ourselves that we should keep that up, and I look forward to a healthy discussion during this session. In terms of administrivia for this meeting, we've got the note-takers. Thank you, Robin and Jonathan.

A

The blue sheets are automatically taken care of now by Meetecho, so that saves a chore. The chat will be by Meetecho or Jabber, all integrated again, no problem. For AV, if you would like to present and/or participate in the queue to ask questions or clarifying questions or make comments, we're using Meetecho. People should be familiar with that by this point in the week.

A

But if you're not, there are several icons in the top left-hand corner of the screen. You have the raised hand to get in the queue when it's open. We will be closing the queue at some points, depending on how we manage time. You can also unmute your microphone or your video. For presenters, we're going to go with the screen share tool built into Meetecho today. So when it's your slot,

A

You can click the page icon to request to share slides, and it'll be done by Meetecho, which is a little bit more friendly to people's streaming bandwidth. Or, if you really need to, use share screen, or we can have the chairs share the slides for you. And the chairs will be running the queue, and so my glamorous assistant Matt will be helping me on that front. But down to the order of business today. Just a recap of the agenda: like I said, we've got some chair updates.

A

A

Mary will be presenting on behalf of a few folks that have been working on that and had a side meeting a couple of weeks ago, and that'll be the bulk of our time in that section. And then, as time permits, we'll look at some of the things here, like receive timestamps, QUIC v-next, whatever that might be, or the 0-RTT BDP. So before we get started on the agenda in earnest, is there any bashing that anyone would like to do?

A

A

If you recall, a few months ago, as a group, we decided that, now that RFC 9000 was out, which is QUIC version 1, the HTTP/3 ALPN, which is the label h3, was allowed to be deployed on the internet, and I believe we've seen some decent amount of live deployments and interoperability on the public internet, which is great. So I don't see those specs being in the RFC.

A

A

If you haven't seen them, please do check them out and let us know. Otherwise, if we don't hear pushback, we'll direct people to incorporate the changes as and when needed. The ops drafts, the applicability and manageability, are in AD review. So thank you to everyone for the feedback during the various rounds of working group last call, and to the editors for working with people on that to get it done.

A

That's appreciated. The datagram draft also passed working group last call, and that's also in AD review. And the grease bit working group last call is completed. We had some feedback; there are a few minor changes there, so we're just awaiting a new draft, and then we'll do our shepherd write-up and pass that on to the AD. So thank you. And Martin's just posted in the chat that he'll be having an update inbound. Brilliant. Some related work.

A

A

A

I hope I've addressed the points. These are all of the side meetings in the IETF side meetings calendar, so please check that for the actual correct date and time and actual joining instructions, so there'll be a link to it. It was on Zoom yesterday. So that isn't, you know, necessarily strictly anything on QUIC; we're still trying to figure out things in that group. That may be,

A

You know, what are the requirements for using QUIC versus modifying QUIC, and trying to understand if any work needs to come back here or not. So for anyone interested in that, there's some healthy discussion there. It's kind of interesting. Oh, and Robin also mentioned there's a side meeting today about OpenSSL and its QUIC support for doing stuff. So if anyone's interested in that, again, check out the side meeting calendar, because all the details will be there.

A

A

The focus areas are kind of three: the maintenance and evolution of the QUIC specs, as and when we need to do things that, you know, improve upon or fix or iterate upon drafts that have been adopted items and been published. So that should be fairly obvious. Other things are about supporting the deployability, which relates to drafts like the ops drafts or qlog or load balancers, that level of things.

A

So we can support non-protocol-specific work items too, if they make sense for us to do. And then new extensions to QUIC: datagram's an example of an extension, and we've got another one coming up later in this session. But as I mentioned, DNS over QUIC is an example of how an application protocol could use QUIC, and that work was done outside our working group.

A

It could be done here if we really need it, if a suitable home couldn't be found, but I would hope that most of the discussion in such specifications relates to the application protocol, and that we have an awareness of the work that's happening there, so, you know, people from this working group can go and review with their QUIC hat on and maybe give some feedback about those aspects of things. And then defining new congestion controls is out of scope.

A

We've cleared things from our agenda and we have some more time, but we also have a clearer idea of the problems, and people have come together for a more unified proposal. So we'll see what the working group thinks on that, and the chairs will be taking an assessment about whether we think that work is worth adopting in its current form.

B

A

C

There we go. Cool. So yeah, we're kind of hoping to wrap up the ACK frequency draft in the relatively near future. There's one fairly substantial design issue that's outstanding that I'm going to highlight in these slides, but first I'm going to start with kind of an overview of where the frame is at, and talk through that, and if there are any questions, obviously feel free to interject.

C

C

So that's the value you'd like the peer to delay an acknowledgement for, sorry, delay an acknowledgement for upon receiving an ack-eliciting packet. Immediate acknowledgements are totally different. Yes, sorry, I need to do something later. Thanks. So, the Ignore CE field is ignoring Congestion Experienced. This was added as a result of conversations about how one might use ECN marking in different contexts.

C

C

The framing and problem statement were much better than mine was, and so the issue is that one ACK is sent immediately today, just like with v1, but after that the next ACK will not be sent until the ack-eliciting threshold or the ACK delay is hit, and there are a lot of situations where that might slow down the packet threshold loss detection. So the kind of example is: you have a drop, you send an immediate ACK, and then you don't send another ACK for, say, 10 or 20 packets.

C

It's pretty easy to hit a situation where, you know, if you sent another ACK two packets later, you could have immediately declared a loss and moved on, but instead you're waiting, say, like a quarter RTT for the time threshold to hit. In data centers this kind of could be worse, unfortunately, because data centers tend to have alarm granularities which are not amazing, and commonly the thresholds we're talking about, a quarter RTT and an eighth of an RTT, are literally unachievable in, like, a micro center.

C

I'm sorry, a microsecond-scale data center networking environment. So this probably is actually a worse problem in a data center than on the public internet. But anyway, that's kind of the outline of the problem, and the most important conclusion is that loss detection latency has the potential to be worse than in QUIC v1.
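As a rough illustration of why sparse acknowledgements can delay loss detection, here is a minimal sketch of the two sender-side loss tests described in RFC 9002. The constants 3 and 9/8 are the values RFC 9002 recommends; the function and parameter names are illustrative assumptions, and the sketch simplifies the real rules (for example, it omits the timer granularity floor, and `rtt` stands in for the larger of the smoothed and latest RTT).

```python
K_PACKET_THRESHOLD = 3      # RFC 9002 recommended kPacketThreshold
K_TIME_THRESHOLD = 9 / 8    # RFC 9002 recommended kTimeThreshold


def lost_by_packet_threshold(packet_number: int, largest_acked: int) -> bool:
    """True once a packet sent at least K_PACKET_THRESHOLD later has been acked."""
    return largest_acked >= packet_number + K_PACKET_THRESHOLD


def lost_by_time_threshold(time_sent: float, now: float, rtt: float) -> bool:
    """True once the packet has been outstanding for at least 9/8 of an RTT."""
    return now - time_sent >= K_TIME_THRESHOLD * rtt
```

If the receiver holds its next ACK for 10 or 20 packets, `largest_acked` does not advance at the sender in the meantime, so the packet-count test cannot fire and the sender falls back on the slower time-based test.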

C

So the proposal here is to communicate the reordering threshold to the receiver, instead of this Ignore Order that we have right now, and the algorithm comes down to this: the receiver needs to send an immediate acknowledgement whenever they're missing packets somewhere between the largest acknowledged value that has been sent in a previous acknowledgement

C

minus the reordering threshold, and the largest acknowledged that, you know, currently has been received at this moment from a more recently received packet, minus the reordering threshold. So that guarantees that, if there's basically any packet in that range that is missing, assuming that the peer has correctly communicated their reordering threshold to you, that means that they can immediately declare a loss the moment they receive your immediate acknowledgement.
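The receiver-side rule described here can be sketched roughly as follows. This is only an interpretation of the spoken description, not the text of the draft or the PR: the function name, the parameter names, and the exact choice of range bounds are assumptions. Treating a threshold of zero as "never send early because of reordering" follows the presenter's remark that zero keeps the existing Ignore Order behaviour.

```python
def should_ack_immediately(received: set[int],
                           largest_acked_sent: int,
                           reordering_threshold: int) -> bool:
    """Return True if a gap has just become declarable as lost by the peer.

    received: packet numbers received so far (hypothetical bookkeeping).
    largest_acked_sent: Largest Acknowledged value in the last ACK we sent.
    reordering_threshold: packet-count threshold communicated by the peer.
    """
    if reordering_threshold == 0 or not received:
        return False  # assumed: zero means reordering never triggers an ACK
    largest_received = max(received)
    # Packets at or below (largest_acked_sent - threshold) were already
    # declarable from the previous ACK, so only the newer range is checked.
    low = max(0, largest_acked_sent - reordering_threshold + 1)
    high = largest_received - reordering_threshold
    return any(pn not in received for pn in range(low, high + 1))
```

For example, with a threshold of 3, a previous ACK covering packets 0 to 2, and packet 3 missing, receiving packets 4 and 5 does not trigger an ACK, but receiving packet 6 does, because the sender can then declare packet 3 lost as soon as the ACK arrives.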

C

So ideally, this gives you the best of both worlds. The major negative is that it definitely increases implementation cost by a slight margin, because you have to implement this algorithm right here. So yeah, that's the item to discuss. Do people have thoughts? This PR has been kind of out there for a while, as has the issue. Yeah, Christian, please go.

F

Should we skip? Can you hear me now? Can you hear me now? Yeah? Okay, that's a weirdness with the microphone, too many buttons. Yeah, I'm not sure. I mean, you're proposing to add a reordering threshold based on packet counts, and I am not sure that's the right tool. The reason I say that is that we have tried a lot of that.

F

C

F

C

I would agree there are circumstances where this is not actually a helpful mechanism, and so we did maintain the existing kind of Ignore Order functionality: if you send a reordering threshold of zero, that basically means the existing Ignore Order functionality. Yeah, I think it really depends on the circumstance.

C

So this, I guess, is really about giving the sender, which, as described in the recovery draft, the loss detection and congestion control draft, there are two essential mechanisms for declaring loss there, right? One is the reordering threshold in packet counts and one is in time, and so this is giving the sender the maximum number of tools to express sort of those two mechanisms, between the ACK delay and the reordering threshold. But you're right that this may not always be applicable.

F

F

F

Now, the packet sequence number is something which is very hard to use in practice, because first, I mean, the size of the loss in packet sequence numbers varies with the congestion window, and second, it also depends on things like number skipping by the sender. And so what you have there: you have a primary ACK delay; you might want to have a reordering delay or something like that.

C

C

B

If it's all right, I mean, I think I don't quite understand the issue that Christian's having at a high level. Basically, packet threshold is already there, and the problem with the ACK delay is that we effectively did not have packet threshold loss detection anymore, and what this proposal does is it brings it back.

B

So it's not that we are bringing a new mechanism here; we are simply reinstating a mechanism that we lost because of this extension. Does that make sense to you, Christian? I'm not quite, maybe. I mean, I'm just saying we'll have to discuss this on the issue, and others, just bringing it up.

B

B

D

Okay, so, I think maybe I can understand what you're trying to do. I'm not sure about the first subtraction here. I'd like a picture, because I'm not smart enough to do this. The purpose of this discussion was so that people could understand what's going on, and I suspect this is probably something along the lines of the right solution here, but I don't know, and so that way.

D

C

G

Oh, thanks, Ian. I think reordering threshold is better than Ignore Order. This gives you a little bit more options than, you know, just true and false. But, I think, what Christian said, I think I agree with him. So if there's a reordering threshold, it can be either in time or in packets. I think maybe we can make this field either two fields, or make a way to distinguish whether we are setting this in time or packets, but.

C

B

Yeah, I don't think we have, I mean, the PR for this is not fully fleshed out yet. I think that's definitely good feedback. I think we need to have something that's more precise in terms of what an endpoint does if they don't have anything better that they can use. Having a default in there is going to be very useful, and I think we can default back to QUIC v1 defaults, but it'll be good to state that explicitly so that people know what to do.

I

Hi, thanks for doing this, and I do see it progressing, that's really good. I have two questions. The first one I think you've already touched on, which I think there is some element of safety in here. If you get these numbers wildly wrong, then there is a congestion control implication, so I would really love to see some sort of safety considerations or recommended maximums or something. Maybe we can discuss that later on the issue. Does that seem reasonable?

C

Yeah, yeah, we can either discuss it on this issue or on other issues. Yeah, I'm happy. It needs to be discussed, I think we all agree. I just want to make sure we get the right text in there, and that it applies to all the various mechanisms we have, and it's kind of a global, like, recommendation. So I think we're all on the same page; it's just a matter of making sure we actually get the right text written down. So yeah. That's.

I

C

I

C

I

And then my second point is even more crazy than, I think, what Vidhi raised. I mean, I'm not convinced that Ignore CE is in any way what the people who are designing L4S are imagining, and I wonder what the impact of actually deploying an Ignore CE thing, without some very strong guidance, would be on the whole L4S thing.

B

To add something to that quickly, Gorry: Ignore CE is not really designed to handle L4S. It's more of an escape hatch for L4S, in the sense that if you don't have any ACKs coming back, you don't have any accurate ECN feedback, and so this sort of currently defeats any accuracy that you might want to do. So it's an escape hatch. It's not precisely defined as a solution for L4S, just to be clear.

I

A

I ask you politely to keep up this healthy discussion on the list and the issues. Thank you, Ian, for the presentation. Your last slide talked about working group last call. It seems there's still, well, this design issue and a few more issues to go. Another question the chairs are probably asking is about implementation and interoperability of ACK frequency.

A

I know there has been some, but if other people are doing that, that would help us understand how mature this draft is and how good it is. But beyond that, I think we'll need to move on to the next slide. So once again, thank you, Ian. You're welcome, thanks. Robin's up next with qlog. Do you wanna, there we go, the system works. Thank you. Go ahead, Robin.

J

Yeah, I'm intelligible, I hope, since we had a few issues with that on Monday. So it's a short update on qlog, because not that much happened for the latest draft in the past three months. The main thing we did was move to a different serialization format, and just to give a little bit of context on that: as you know, we use JSON for the main format, which is very nice, it's well supported. The main downside is that it's not very robust in most of the parsers. For example,

J

Here, if you would forget the final square bracket in this example, most of the parsers would simply break; they wouldn't even give you a partial result. This means typical JSON is not very usable for streaming cases, because there you often might not have the option to properly finish the JSON file, or, let's say, your implementation crashes before it can finish the full log.
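The missing-bracket failure is easy to reproduce. The following is a small sketch using Python's standard `json` module; the event contents and the helper name `try_parse` are made up for illustration and are not the example from the slides.

```python
import json

# A tiny stand-in for a log: an array of events (contents are illustrative).
complete = '[{"name": "packet_sent"}, {"name": "packet_received"}]'
truncated = complete[:-1]  # the writer stopped before emitting the final "]"


def try_parse(text: str):
    """Return the parsed events, or None if the text is not valid JSON."""
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None  # no partial result: both complete events are lost
```

Here `try_parse(complete)` returns both events, while `try_parse(truncated)` returns `None` even though every individual event in the text was written out in full.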

J

So what we did initially was define a separate additional format next to that, for which we chose newline-delimited JSON, which is exactly what it sounds like: instead of having proper JSON, you just have each JSON object as a separate line, and you can just use the newline as a delimiter, which is very simple and works quite well.
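A reader for newline-delimited JSON can be sketched in a few lines. This is an illustrative sketch only (the function name and the choice to silently skip bad lines are assumptions), but it shows why a log cut short by a crash still yields every event that was fully written.

```python
import json


def parse_ndjson(text: str) -> list[dict]:
    """Parse one JSON object per line, skipping blank or unparseable lines."""
    events = []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        try:
            events.append(json.loads(line))
        except json.JSONDecodeError:
            continue  # for example, a final line cut short by a crash
    return events
```

Given three lines where the last one is truncated mid-object, the first two events are still recovered.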

J

Quite doable. If you're already doing NDJSON, it's very easy to move over to JSON text sequences in practice, and we also tested various existing tool suites or tools that people were using for qlog, like jq and all kinds of Linux-based text processing tools, and they all seem to work just fine with this new format. And so that's the reason why we decided: let's go for it. And so that's the main change in draft one: we moved from NDJSON to this. With that, a few additional minor changes: we have semantic versioning.
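For comparison with NDJSON, here is a minimal sketch of JSON text sequences as defined in RFC 7464, where each record starts with the ASCII record separator (0x1E) and ends with a newline. The helper names are assumptions for illustration, and this is not taken from any qlog tool.

```python
import json

RS = "\x1e"  # ASCII record separator that starts each record (RFC 7464)


def encode_json_seq(events: list[dict]) -> str:
    """Serialize events as RS + JSON text + LF, one record per event."""
    return "".join(f"{RS}{json.dumps(event)}\n" for event in events)


def decode_json_seq(text: str) -> list[dict]:
    """Split on RS and keep every record that parses as JSON."""
    events = []
    for chunk in text.split(RS):
        chunk = chunk.strip()
        if not chunk:
            continue
        try:
            events.append(json.loads(chunk))
        except json.JSONDecodeError:
            continue  # a truncated trailing record is dropped, not fatal
    return events
```

The move from NDJSON is small in this sketch: the only structural difference is the leading separator byte on each record.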

J

J

We are still supporting both JSON and JSON text sequences, so non-streaming file-based and streaming, let's say. But if you were for some reason waiting for this change to be made to update your own implementation, I think it's fair to say that we'll likely stay with this streaming format. So if you were waiting for this, you can probably go ahead and start implementing that.

J

So that's since last time. What are we planning for the near future? By IETF 113, we're hoping to mainly do some editorial work, and the main thing there is that in the current drafts, for all of our events, we've tried to properly define them: which fields are on which events and which type those fields are.

J

But for those we are currently using the TypeScript data definition format, and here we have a very similar situation to NDJSON: it's usable, but it's not really well defined anywhere, and it's not an RFC. And so here again we also want to make the move to something a bit more IETF-centric, which is called the Concise Data Definition Language, CDDL. As you can see, it's very similar in concepts.

J

So the goal there is to hopefully have something like, in tools like qvis, that you upload a qlog file, and then it can tell you exactly if you have any errors in there: misspelled fields, wrong types of fields, and so on and so forth, which is something we've seen surface a little bit in the past months for some people. So that's kind of the idea. It's both to make everything a bit more neat, IETF style, but also to be more robust towards the future when we start adding new stuff.

J

Our main goals are to basically flesh out what we have. We are a bit lacking in TLS and QPACK stuff at this moment, so we want to add those things, and then we have a few events that need to be extended or touched up to be a bit clearer. And then there are some proposals to add more high-level, generalized stuff, like indicating which CPU or which thread a certain event comes from, that kind of stuff that other implementations, like Microsoft, use in their custom logging format.

J

That might be useful down the line, and that's basically it. Last time I had in my slides, like, a desire to have a global design guideline or a design framework, so that people could add new events and new protocols even to qlog, and so on and so forth. And since then we've had a lot of discussion about that, and I think most people there agree that this is a bit of a utopia, that it would be quite difficult to achieve this.

J

We need to think about how to indicate which protocols and which events are in which file, and how to make it possible to change existing events, for example, add new transport parameters into the existing transport parameter event that we have, and so on and so forth. And then we should also have a discussion about, let's say, we want qlog events for WebTransport.

J

How do we do that? Is that a separate draft? Is it a fully separate thing? Where does that live, and so on and so forth? So a few of those practical issues. Those are not things that are very pressing at this moment, but it would be nice to have an idea of what people think about this, or to have some proposals for this by IETF 113, so that we can start the discussion in earnest by then.

K

J

K

Right. I mean, if there's only one format defined, then, for example, I can create a tool that only supports that format. But if there are two formats being defined, I'm, you know, forced to write a converter, or at least write a test to support both of those two, and that's a complexity, I think, for everyone, every other one.

A

J

H

J

You have multiple: if you have the normal JSON one, we can append separate traces as individual objects. That's much more difficult in the streaming format. If you want to do that in the streaming format, you would have to indicate for each individual event to which sub-trace it belongs. Otherwise, it's impossible to de-multiplex them afterwards.
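The de-multiplexing point can be illustrated with a short sketch: if every streamed event carries a tag naming its sub-trace, a reader can regroup the events afterwards. The field name `trace_id` and the function name are hypothetical, chosen only for this example, and are not claimed to be what qlog specifies.

```python
from collections import defaultdict


def demultiplex(events: list[dict], key: str = "trace_id") -> dict[str, list[dict]]:
    """Group streamed events by the sub-trace tag each one carries."""
    traces = defaultdict(list)
    for event in events:
        # Events without a tag cannot be attributed to any sub-trace.
        traces[event.get(key, "unknown")].append(event)
    return dict(traces)
```

Without such a per-event tag, all events from an interleaved stream would land in the single `"unknown"` bucket, which is the problem being described.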

J

A

Oh well, it doesn't seem like we've got anyone else in the queue. There's some good progress here and some good progress to come. I did see in the chat that Christian mentioned multipath support. I think, you know, sometimes defining these events takes some thinking, but it isn't too hard. It's more the,

A

What makes sense to put in, and what are people willing to implement? Because you can define everything, and if no one implements them, then you can end up with some interop issues, like we found in the hackathon last week when we were consuming each other's qlogs. So I think there's some good work to come, and I look forward to that progress in the future.

J

Just for multipath, I was intentionally waiting a bit for the unified proposal, right, to know what direction we're going, and once that's settled down, I think it should be relatively easy to add at least provisional multipath events and then also have some basic, let's say, qvis tooling around that to help with multipath debugging.

A

H

H

We went around in circles for a bit on it because it was too complicated. Then MT came around with a simpler design that folks liked; that's in the draft today. And then the question is: where do we go from here? So far, with the new simplified design, I haven't heard anyone say they dislike it, so we're in good shape.

H

We still have a lot of minor issues on the document. It's more that just the editors haven't been focusing on it, so we haven't made good progress on those. But, like, all the ones, I did a quick pass on those last week, and it really looked like there was agreement on how to resolve all of them.

H

The question is kind of: what is the status with implementations? For the longest time we didn't have one. Just on Monday, I implemented it in our stack, not the compatible part, but at least the version downgrade part, and it was very straightforward. And kind of the question for the working group is: where do we want to go from here?

E

H

Want to wait until we have another version, so we can, like, test the compatibility bits at scale before we ship this? We'd kind of like to get some sort of a timeline, because, you know, if this needs to get published soon, then it's worth it for the editors to prioritize, like, fixing these editorial things. Otherwise we can kind of kick this can down the road until another version. So, thoughts? Questions? What do we do now? This is the end of my presentation.

H

Crickets. So, one thing is, please interop with my server. I put it on the general channel of the Slack. Just want to make sure that, you know, I implemented it correctly. But apart from that, given that there's not too much excitement about this, I guess maybe we just kind of kick the can down the road a bit further. Matt, do you have any thoughts?

L

Yeah, I was just gonna, you know, hearing the deafening silence from the working group. I think, like, everyone agrees this is something we need, but it doesn't seem like there's a lot of implementation appetite at the moment. I think for something like this, we do want implementations and interop before we, like, you know, kind of shepherd it through. So just everyone keep that in mind: if you see this being something that you want in the future, please implement it sooner rather than later.

B

Hey, thanks for the presentation, David. Just very quickly: it seems to me that it might actually be useful to get some implementation and deployment experience along with a different version. So I wonder if the QUIC v2 discussion that we are about to have later might play into how quickly we move on this draft.

H

That

that

makes

sense,

because

if

we

have

a

v2

that

is

compatible

with

the

first

one

that'll

help

us

to

use

this

at

scale.

Also,

we

won't

be

able

to

ship

a

v2

without

a

version

negotiation

mechanism

with

v1

so

like

yeah,

maybe

these

become

a

joint

cluster

and

I

think

the

QUIC

v2

is

on

the

as

time

permits.

So

in

that

case

I'll

just

say,

let's

punt

for

now,

but

let's

see

what

comes

out

of

the

v2

discussion.

E

Too

many

choices,

and

I

agreed

with

that

and

then,

of

course

the

other

concerns

there

weren't

a

ton

of

implementations,

particularly

on

the

server

side

and

no

interop

activity

to

date

that

I'm

aware

of

so

some

things

have

happened

here.

Christian

Huitema

has

been

incredibly

valuable

in

fixing

the

now

very

much

misnamed

stream

cipher

algorithm

into

something

that

is

a

little

more

secure.

E

There's

an

issue

in

the

GitHub,

if

you

want

to

weigh

in

on

this,

but

essentially

you'd

be

getting

longer

CIDs,

the

only

benefit

would

be

fewer

crypto

passes,

which

seems

like

a

not

that

strong

advantage,

and

then

that

would

dramatically

simplify

the

document

essentially

have

a

like

a

plaintext

version

and

the

ciphertext

version

of

the

same

thing,

so

that

would

be

as

simple

as

you

could

get.

I

really

would

like

to

split

the

load

balancer

portion

of

this

and

the

retry

service

portion

of

this.

E

I

view

them

as

entirely

orthogonal

with

each

other.

The

load

balancer

thing's

essentially

version

invariant

with

a

few

caveats

and

the

retry

service

thing

really

is

not,

and

I'm

getting

proposals

for

things

like

stateless

reset

offload

and

so

on,

and

they

all

just

have

like

the

common

theme

of

middlebox

coordination.

But

I

don't

know

if

that's

a

strong

enough

thread

to

hold

these

two

things

together.

E

I've

also

just

kind

of

become

enamored

of

the

idea

that,

like,

smaller

drafts

are

better

because

people

read

them

and

when

there's

a

lot

of

ancillary

stuff

that

half

the

people

don't

care

about,

it

just

makes

things

worse.

So

I

don't

know

if

people

have

strong

opinions

about

that,

I'll

be

happy

to

hear

them.

Then

after,

like

if

all

this

goes

according

to

plan,

then

we

do

another

editorial

pass.

Then

it'd

be

done

as

it's

going

to

be

done.

C

Ian

I,

the

only

thing

I

had

to

share

is

that,

now

that

we've

kind

of

worked

our

way

through

our

backlog

of

idea

of

QUIC

work

in

other

areas,

we

are

very

excited

to

hopefully

take

this

on

in

the

first

half

of

2022,

but

there's

still

some

details

to

be

worked

out

in

terms

of

who's

going

to

do

the

work

and

everything,

but

the

use

cases

are

there

and

everything,

but

that

being

said,

to

be

clear,

C

That's

like

the

stream

cipher

protocol

with

like

the

key

rotation

and

all

of

those

sort

of

mechanisms,

the

key

exchange

portion

and

all

that

stuff.

I

don't.

We

probably

will

support

at

some

point

in

the

future,

but

that's

like

more

of

a

year

from

now

or

more

probably

sort

of

thing.

So

I

think

that

probably

supports

the

idea

that

at

least

in

our

case,

you're

gonna

get

a

deployment

on

one

of

them

a

lot

earlier

than

you're

going

to

get

deployment

on

the

other

half.

But

I

don't

know.

E

Are

you

talking

about

the

retry

service

versus

the

load

balancing?

Yes,

sorry,

that's

right.

Okay,

yeah.

All

right,

super.

Thank

you.

Yeah,

I

mean,

like,

that's

the

other

thing

like,

if

we

get

a

lot

of

implementations

in

one,

I

anticipate

the

implementations

of

one

of

those

components

versus

the

other

to

be

very

asymmetric,

and

if

one's

ready

to

go

I'd

just

like

to

move

it

and

at

some

point

I'll

produce

a

mock-up

of

what

these

two

things

would

look

like

when

people

shoot

at

it.

Lucas.

A

E

I

brought

it

up

like

a

long

time

ago

and

got

like

I

think

it's

like

five

or

six

weeks

ago

and

got

some

negative

like

a

little

bit

negative

pushback;

it

wasn't

super

strong.

I've

become

more

convinced

of

this,

so

I'm

bringing

it

up

again,

and

you

know

I

don't

think

I've

really

discussed

this

on

the

list

much

recently.

A

Okay,

that's

cool.

I

think

that

the

list

is

a

good

venue

to

continue

that.

I

think,

chair

hat

off,

in

my

personal

opinion,

if

splitting

them

is

going

to

help

make

some

progress

on

the

one

thing

that

people

really

do

want

to

implement,

that

seems

like

a

benefit,

but

I'm

not

fully

au

fait

with

LB

to

know

what

the

potential

downside

might

be

for

that

split.

E

I

mean

they're

functionally

completely,

I

mean

they

can,

in

theory,

be

implemented

on

the

same

device,

but

there's

no

real

relationship

between

the

two;

they're

together

because

they

were

again

under

the

theme

of

middlebox

cooperation,

which

is

a

pretty

weak

thread,

in

my

view.

So

there's

a

comment

in

the

chat

about

they

missed

the

CFRG

email.

That's

because

we

didn't

actually

go

directly

to

CFRG

upon

the

advice

of

the

security

ADs.

E

So

I

have

like

two

concerns

with

the

maturity,

beyond

just

kind

of

my

ideal

path

and

how

this

proceeds

I

to

consider

my

maturity.

Number

one

is

that

the

document

is

written

in

a

way

that

it's

implementable,

because

obviously

I

knew

what

I

meant

when

I

implemented

it,

which

doesn't

mean

a

ton,

and

then

the

second

thing-

and

I

hope

that

especially

Google's

intentions

are

helpful

here,

like

actually

trying

to

deploy

this

thing

and

developing

some

like

we

have.

E

We

have

sort

of

a

configuration

model

that

has

some

assumptions

and

it'll

be

really

nice

to

like

test

that

against

actual

production

somehow.

So

I'm

really

eager

to

hear

like

what

the

issues

are

with

Google

and

like

what

the

rough

edges

on

sort

of

the

assumptions

we

make

on

how

to

configure

things

and

like

what

the

pain

points

are.

E

I

know

that

my

co-author,

Nick

Banks,

has

like

been

working

with

Azure

on

this

stuff

and

it

hasn't

gone

super

well

in

terms

of

getting

them

to

provide

good

feedback.

So

those

are

really

the

only

reasons,

other

than

just

like

the

cleanup

in

the

draft.

Those

are

the

two

things

where

I

just

don't

want

to

like

hit

the

WGLC

button

right

now.

A

N

Okay,

hello,

everybody.

So

yeah,

I'm

Mirja,

I'm

presenting

a

new

draft

here,

but

effectively

this

is

not

new

work.

There

has

been

previously

three

different

drafts

and,

as

you

can

see

here

on

this

new

draft,

we

have

a

set

of

authors

that

actually

combines

the

authors

from

the

previous

drafts.

So

that's

the

content.

N

So,

let's

move

to

the

next

I

have

to.

I

can

just

control

this

myself,

okay

yeah.

So

what

happened

so

far?

Lucas

already

mentioned

that

we

had

an

interim

meeting

about

a

year

ago

where

we

talked

about

QUIC

use

cases

mainly

requirements,

and

since

then

there

was

a

lot

of

work

and

people

have

been

working

on

implementations.

N

There

were

three

different

proposals

and

in

order

to

move

forward

from

here,

we

somehow

needed

to

agree

what

the

right

way

is

to

move

forward

like

combining

those

proposals

and

getting

one

way

out

of

this.

So

I

got

in

touch

with

all

the

authors

from

all

the

drafts,

and

there

was

already

a

lot

of

agreement

about

what

to

do,

and

everybody

was

like

so

on

board

for

having

one

unified

solution

immediately.

N

So

we

had

a

side

meeting

only

a

few

weeks

ago,

where

we

discussed

how

to

move

forward

here

and

as

a

result

of

that,

we

recently

published

this

new

draft,

which

does

take

parts

of

the

other

three

drafts.

So

this

presentation

will

give

you

an

overview

of

what's

the

focus

in

the

new

draft

and

what's

in

the

new

draft-

and

this

is

the

most

important

outcome

from

all

the

discussion

we

had-

is

that

the

new

draft

is

really

focusing

on

the

core

components.

N

So

that

means

the

current

draft

as

a

core

component

has,

of

course,

the

negotiation

of

a

new

extension.

It

has

a

minimal

set

of

path

management

that

is

needed

about

setting

up

and

closing

new

paths

or

old

paths.

In

that

case,

it

talks

a

little

bit

about

scheduling,

but

that's

really

minimal

at

this

point.

We

probably

need

a

few

more

words,

but

don't

want

to

talk

about

it

extensively,

only

what's

needed

to

make

it

work,

and

then

it

talks

about

how

to

actually

transmit

and

retransmit

packets.

So

that's

it.

N

That's

the

core.

Everything

else

that

has

been

previously

discussed,

like

more

advanced

scheduling

mechanisms

or

unidirectional

paths,

address

discovery

or

any

kind

of

QoS

handling,

and

these

are

things

that

could

come

up

later

on

in

additional

drafts

or

could

be

additional

extensions

on

top

of

that.

N

So

the

other

thing

that

we

had

like

very

broad

agreement

on

is

that

one

of

the