From YouTube: IETF112-V6OPS-20211111-1430

Description

V6OPS meeting session at IETF112

2021/11/11 1430

https://datatracker.ietf.org/meeting/112/proceedings/

B

So, it's because we feel that our draft is now ready for working group last call. We made two changes: there were two places in the draft that were not ready, and we removed both. The first was Section 4.2, about the issue of the security of the stateful technologies, and I opened a new individual draft for that. It is titled "Scalability of IPv6 Transition Technologies for IPv4-as-a-Service", and here is a link to the -00 version.

It has real content: it contains the scalability results of iptables, which is of course a NAT44 and not a stateful NAT64 implementation, but it serves as a sample for the NAT64 scalability test, and I would like to present it right after this draft. There is another draft, but it is just a placeholder. It is about the benchmarking of all five IPv4-as-a-Service technologies, which were referred to in Section 5. So now I think there are no unfinished parts.

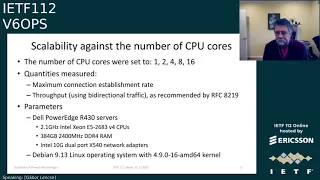

So I did so. But the main goal is to serve as a sample, so let me say a few words about what I measured. There were two kinds of measurements. The first is scalability against the number of CPU cores: how the performance of iptables scales up with the number of CPU cores. The other is scalability against the number of concurrent sessions: of course, when the number of connections increases, we expect the performance to decrease somewhat. The entire description of the methodology is in the draft linked below.

I measured the maximum connection establishment rate and the throughput using bidirectional traffic, as recommended by RFC 8219. I used PowerEdge servers with 2.1 GHz Intel Xeon CPUs; the clock frequency was set fixed to 2.5 GHz. They have quite a significant amount of RAM, 384 GB, and I used 10 Gbit/s dual-port network cards and a somewhat old Linux version and Linux kernel.
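RFC 8219 reuses the RFC 2544 notion of throughput: the highest rate at which the device handles all offered traffic without loss. A common way to find such a maximum, for throughput or for the connection establishment rate, is a binary search over the offered rate. The sketch below is only an illustration of that technique, not the tester used for these measurements; `trial(rate)` is a hypothetical callback standing in for one test run, and the search assumes pass/fail behaves monotonically with the rate.

```python
from typing import Callable


def max_passing_rate(trial: Callable[[int], bool], low: int, high: int) -> int:
    """Return the highest rate in [low, high] for which trial(rate) passes.

    Assumes trial(low) passes and that once a rate fails, every higher
    rate fails too (monotonic behaviour).
    """
    while low < high:
        mid = (low + high + 1) // 2  # round up so the search always progresses
        if trial(mid):
            low = mid                # mid passed: the answer is at least mid
        else:
            high = mid - 1           # mid failed: the answer is below mid
    return low


# Hypothetical device under test that passes every trial up to 273,500 conn/s.
def fake_trial(rate: int) -> bool:
    return rate <= 273_500


print(max_passing_rate(fake_trial, 0, 1_000_000))  # 273500
```

Each step halves the search interval, so roughly log2(high - low) trials are needed per search; the talk reports median, minimum and maximum values over repeated runs.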

B

So here are the results. The first line shows the number of CPU cores, which always doubles; it was set using a kernel command-line parameter, so only this number of CPUs were on and the others were off. The following lines show the median, minimum and maximum connections per second. All the units are thousands, so with a single CPU core, 273,500 connections were established per second. As you can see, when we double the number of CPU cores, the performance does not quite double, but it scales up quite well, I feel. At the end, with 16 cores, it is about 10 times as much as with one core, so I think it is not a bad scale-up. Row number five shows the relative scale-up. Of course, it is 1 with one CPU core, and with two it is 0.83, but of course it's...
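The relative scale-up in row five can be recomputed from repeated measurements. This sketch assumes the definition implied by the talk, relative scale-up(n) = median(n cores) / (n * median(1 core)), so perfectly linear scaling gives 1.0. The numbers are invented for illustration, loosely matching "about 10 times as much with 16 cores"; they are not the measured data.

```python
from statistics import median


def relative_scaleup(runs: dict[int, list[float]]) -> dict[int, float]:
    """Map each core count n to median(runs[n]) / (n * median(runs[1]))."""
    single_core = median(runs[1])
    return {
        cores: median(values) / (cores * single_core)
        for cores, values in sorted(runs.items())
    }


# Hypothetical repeated results, in thousands of connections per second.
runs = {1: [98.0, 100.0, 102.0], 2: [166.0, 168.0, 170.0], 16: [990.0, 1000.0, 1010.0]}
for cores, factor in relative_scaleup(runs).items():
    print(cores, round(factor, 3))
# prints: 1 1.0 / 2 0.84 / 16 0.625 (one pair per line)
```

Using the median of each set of runs, rather than a single run, keeps one outlier trial from distorting the ratio.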

B

Here, too, there are median, minimum and maximum values, and it also scales up very similarly, a tiny bit better, maybe the equivalent of 11 cores, but it is very similar. So that was the scalability against the number of CPU cores. The other one is the scalability against the number of concurrent sessions. The number of concurrent sessions was set by using different numbers of source and destination port number combinations, and I usually increased the number four-fold, except in the last case.
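Setting the number of concurrent sessions through port combinations works because, with fixed source and destination IP addresses and a fixed protocol, each distinct (source port, destination port) pair becomes a separate connection-tracking entry. The sketch shows only that arithmetic; the port ranges are hypothetical and not the ones used in the measurements.

```python
def session_count(src_ports: range, dst_ports: range) -> int:
    """Number of distinct (source port, destination port) pairs, i.e. sessions,
    assuming fixed IP addresses and protocol."""
    return len(src_ports) * len(dst_ports)


# Hypothetical ranges: doubling both port ranges quadruples the session count.
base = session_count(range(10_000, 11_250), range(20_000, 21_000))
quad = session_count(range(10_000, 12_500), range(20_000, 22_000))
print(base, quad, quad // base)
```

Doubling both ranges is one convenient way to get four-fold steps; the talk does not say exactly how the ranges were chosen.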

B

You will see why. I also increased the hash table size proportionally with the number of concurrent sessions, except in the last two cases, and some non-uniform memory access (NUMA) issues also had an influence. If you look at the last case, you will see why. I measured the same two quantities, the maximum connection establishment rate and the throughput using bidirectional traffic, as recommended by RFC 8219, and here are the results.

As you can see, we always increased the hash table size four times, except in the last case. At 100 million sessions the hash table size is 2^27, and at 400 million it is just 2^28, because the system did not allow me to set a higher value, so it was not increased four-fold in the last column. You can see both the median connections per second and the median throughput values, relative to the first column.
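The hash table sizes quoted here follow a simple pattern: 2^27 buckets for 100 million sessions, and a cap at 2^28 where the system refused a larger value. The sketch below expresses that pattern under an assumption not stated in the talk: that the size is the smallest power of two not below the session count. On Linux the netfilter connection-tracking table size is typically set through the `nf_conntrack` module's `hashsize` parameter, but the exact procedure used in the measurements is not given here.

```python
def hashsize_for(sessions: int, cap_exponent: int = 28) -> int:
    """Smallest power of two >= sessions, but never above 2**cap_exponent.

    The cap mirrors the 2**28 limit mentioned in the talk; the power-of-two
    rounding is an assumption made for illustration.
    """
    if sessions < 1:
        raise ValueError("sessions must be positive")
    exponent = (sessions - 1).bit_length()  # ceil(log2(sessions)) for sessions >= 1
    return 2 ** min(exponent, cap_exponent)


for sessions in (100_000_000, 400_000_000):
    size = hashsize_for(sessions)
    print(f"{sessions:,} sessions -> 2^{size.bit_length() - 1} buckets")
```

With the cap in place, the 400 million case ends up with about 1.5 sessions per bucket instead of under one, which is consistent with the extra degradation reported for the last column.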

B

In the second column, some degradation can be observed as well. As Ron suggested, it is very likely due to the effect of the cache: in the first column the level 3 cache still helps somewhat, but not after that. From the second column on, in the consecutive columns, the values are very, very stable. Of course, there is a very small decrease in the last-but-one column, and there is some decrease in the last column, because I could not increase the hash table size any more, and of course there were NUMA issues. So for this reason there was some degradation, but in general we can say that, using hashing, we can achieve good scalability.

Of course, it is also true that stateful operation has its price. We have seen it in the CPU core scalability, and we can also see it here, because it requires a lot of memory. With other solutions we don't need such an amount of memory. We can also see that when we approach the limits of the system, for example memory, it becomes a problem and the performance becomes a bit lower. So this is the price of stateful operation.

I have prepared another slide with my questions, and my question is: what is your opinion? Are these results useful and sufficient for network operators, so that using these results you could make a good decision? And even more important: would the same method be appropriate for stateful NAT64 scalability measurements? Because if you say it is okay, then I will start measurements with it, and the first implementation is Jool. What do you think of Jool? Is it a good idea to test Jool?

A

E

We realized that the title may lead people to believe that we present a new solution in this draft, which is not the case. This is a deployment guideline draft, so all the solutions we discuss are existing ones, in existing RFCs. That is the first clarification I would like to make. Also, the title may lead people to believe that we favor Layer 2 and Layer 3 host isolation all the time. This is also not the case. We advocate Layer 2 and Layer 3 isolation only in the suitable cases, and the draft clearly says in which cases isolation is suitable and in which cases it is not. So these are the two clarifications I would like to make about the draft.

So first, I would like to talk about why we wanted to write such a draft. It is because we realized that many operators are finally starting to deploy IPv6. In this process they started to care a lot more about Neighbor Discovery, and they found that the issues and solutions are distributed across many RFCs, so they would have to read many RFCs to understand all the issues and get comfortable with ND. From talking with them, we decided that it would be useful to collect all the issues and solutions in a single place for easy reference. We acknowledge that RFC 6583 and, recently, RFC 9099 help, because these two RFCs do talk about ND issues. The second RFC actually talks about IPv6 security issues, but it covers some ND issues. Nevertheless, they are either a little bit old or not complete on ND; for example, RFC 9099 does not cover Wireless ND (WiND).

However, there is no draft saying how to avoid the issues, and if you can avoid the issues, it is simpler than dealing with an issue and then deploying a solution for it. It is for this reason that we wrote this draft, and this part is kind of like a simplified table of contents. For example, if you are isolating hosts and your service relies on, for example, mDNS, then mDNS is not going to work. So we do recognize that isolating hosts has its applicability in some places, and in some places it is not good. So we do discuss where you should be isolating and where you should not.

Unique prefix per host: in our draft we call it L3 isolation, because from our discussions, even among the co-authors themselves, and also from the private comments we sought from some experts in the working group, we found that many people mix these two things together. L2 isolation is, I think, simple: it is like putting a host, for example, on a P2P link by itself.
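To make the distinction concrete: with a unique prefix per host (RFC 8273), each host gets its own prefix, typically a /64, even when the hosts share one link, whereas L2 isolation only controls which nodes can reach each other on the link. The sketch uses Python's standard `ipaddress` module to hand out one /64 per host from a pool; the documentation pool `2001:db8:0:100::/56` and the host names are hypothetical.

```python
import ipaddress


def assign_per_host_prefixes(pool: str, hosts: list[str]) -> dict[str, ipaddress.IPv6Network]:
    """Give each host its own /64, taken in order from `pool`."""
    available = ipaddress.IPv6Network(pool).subnets(new_prefix=64)  # lazy generator
    allocation = dict(zip(hosts, available))
    if len(allocation) < len(hosts):
        raise ValueError("pool too small, or duplicate host names")
    return allocation


prefixes = assign_per_host_prefixes("2001:db8:0:100::/56", ["host-a", "host-b", "host-c"])
for host, prefix in prefixes.items():
    print(host, prefix)
```

Nothing in this allocation says anything about the link layer: the hosts could still sit on one shared link, which is why unique prefix per host and L2 isolation are treated as separate things.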

E

Some people kind of feel that if the host is on its own link, then it will also get its own subnet. But this is not the case. Here we put a picture that we took from RFC 7668. In this picture, the 6LN is the 6LoWPAN node and the 6LBR is the router, and you can see that all the hosts are clearly on a point-to-point link to the router, so they are isolated in L2. But the authors of RFC 7668 decided that, for whatever reasons, it is better to put all of them in the same subnet. So again, this is a clear example that just because a node is on its own link, for example a P2P link, does not mean it will necessarily be in its own subnet.

Many people implicitly assume that once the hosts are isolated in L2, that settles it, but strictly speaking there are corner cases where the hosts may not be isolated, or vice versa. We also found that some other drafts advocate Layer 2 isolation, for example P2P Ethernet, and the author assumes that it will automatically imply a unique prefix per host. We would just like to point out that there are corner cases like this one, where these two things are different. I think this may be a useful notion for people, for an easier understanding of ND.

In this graph, we clearly analyzed the pros and cons of both L2 isolation and unique prefix per host, and where they are applicable. I would especially like to mention that before unique prefix per host became RFC 8273, there was actually a lengthy debate about its merits, and at that time many experts expressed very good insights about ND. But those insights are dispersed across more than 100 messages, and they are not easy for people to find, because people may read the RFC but may not go back to the historic mail archives. So while we were writing this draft, we went to this issue, carefully read all those messages, and summarized the valuable insights from the hundred-plus messages into the draft. I think this will help people get some of the insight from the experts and understand the pros and cons of unique prefix per host better. So this is another one.

Overall, this draft discusses how to avoid ND issues and provides deployment guidelines. We believe this is a useful service to the community. On my last slide, I would like to give a suggestion on how to read the draft, because it is a long one.

A couple of people have read the draft, but we believe many people may not yet have had a chance to read it. Based on discussions with other commenters, we realized that, again, some people think, because of the title, that we are advocating host isolation everywhere, and I think people may get alienated from the draft immediately. Here I would like to clearly state that this is not the case. Host isolation has its merits in some places but may have disadvantages in others, and we do have some guidelines on where to apply host isolation. It is also important not to assume that L2 isolation automatically implies a unique prefix per host. These are two different things; if you feel they are the same thing, then our draft may appear a little confusing, like: okay, why are you...?

We also acknowledge, or at least, I don't want to speak for my co-authors, but at least for myself, that I am not a well-known ND expert like some of the people in this working group. So sometimes, when people have a disagreement, they may think: oh, these guys are newbies and don't know what they are talking about. Please bear with us a little. With that, I would like to ask the community to please review and comment. We will really appreciate it, because we put a lot of effort into this, and we believe this draft is a good service to the community. We didn't advocate any solution; we don't have our own agenda. We just want to help people understand ND more easily.

I would like to thank Ted Lemon, Brian and Michael for their comments on the mailing list, and also the private commenters, whom we appreciate. If you feel you have something to add, you can also contribute to the draft. We welcome your contributions, and if you want to be a co-author, we are also open to that. That's it. Thank you.

G

All right, yeah, good presentation. Thanks for putting your effort into this. My comment is related to your original point that it is really hard to find all the information, because it is scattered around a bunch of drafts and you don't know where to look. There is an IAB RFC that I think would be good to reference: RFC 4903, "Multi-Link Subnet Issues". The IAB did an evaluation of the issues around multi-link subnets and at least wrote those up. Again, it did not... well, I guess you can read it and decide whether you think it actually makes any recommendations or just highlights issues. I think it is another good reference to add to your list, because it points out some of the things you are pointing out, and maybe some others that you don't have in your draft. I scanned through your draft but didn't read all of it; I did look at your list of references, though, and noticed it wasn't in there.

E

F

Okay, okay, great! Yes, thank you so much for doing this work. I am working with lots of enterprises that we have been trying to get to implement IPv6, and this is a big area of confusion and problems. I will have people I know with very large implementations also take a look at this and see, from the perspective of private managed networks, where this makes sense, and we will give you feedback. But thank you for doing this.

C

D

Yeah, thanks a lot for doing this, very interesting. I am particularly interested in what you call Layer 2 isolation. I'm just curious: if you talk about something like private VLANs, from the router's perspective it is still a single interface, and it is just your switching infrastructure preventing peer-to-peer communication. How does Layer 2 isolation help with ND-related issues in this case? But if it is different interfaces from the router's perspective, I'm curious how well it would scale, because you might currently have thousands of hosts in a VLAN, and you would start creating interfaces on the router for each host. I don't know if that exists, because if I look at this from an enterprise perspective, I currently have a VLAN and I would like to see something like a prefix per host and so on, but I am not sure that technology actually exists.
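The scaling concern can at least be quantified on the addressing side. With one /64 per host, the pool must contain a /64 for every host in the former VLAN; the sketch computes the longest pool prefix that still fits a given number of hosts. It says nothing about how many interfaces or neighbor-cache entries a router can handle, which is the harder part of the question, and the host counts are hypothetical.

```python
def pool_prefix_length(hosts: int, per_host_prefix: int = 64) -> int:
    """Longest pool prefix length that still contains one /per_host_prefix per host."""
    if hosts < 1:
        raise ValueError("hosts must be positive")
    extra_bits = (hosts - 1).bit_length()  # ceil(log2(hosts))
    return per_host_prefix - extra_bits


for hosts in (250, 1_000, 4_000, 10_000):
    print(f"{hosts:>6} hosts -> /{pool_prefix_length(hosts)} pool")
```

For example, a VLAN of 4,000 hosts would need a /52 pool if every host gets its own /64.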

D

E

Even if you do L2 isolation, other hosts on another P2P link may still poison the router. I completely agree with you. So my first response is: just because you do L2 isolation does not mean you automatically solve many ND problems. Whether you use L2 isolation or a unique prefix per host, you actually also need to enhance the router. For example, if we look at the L2 isolation case and at which solution makes use of it, a case in point would be WiND, Wireless ND. Wireless ND actually introduces quite a lot of new functionality in order to avoid some of the problems you just mentioned. That is my first response.

My second response is about introducing, for example, many interfaces. This may be the case, and it may be something undesirable, but in our draft we largely talk about where it is appropriate and where it is not, and if introducing too many interfaces becomes a problem, then I think our draft will recommend that you don't do it. I hope I responded to your points.

D

E

Well, again, if we go back to the debate before UPPH became an RFC, I think the authors of RFC 8273, for example, said that implementations exist. However, how exactly such an implementation should work was not clearly specified in any RFC, that is, what additional functionality the router or the host needs. For example, in WiND they specify address registration, but in the case of UPPH, what exactly the router does is actually not specified. I actually feel that maybe we need a draft, and perhaps the P2P Ethernet draft is that draft. Personally, I think it can actually be the missing specification of RFC 8273. I don't know whether its authors agree with this or not.

E

H

I'm slightly concerned by how much WiND has been thrown around in the last few minutes. More generally, I also think it is probably going to be more confusing for operators to have all the 6lo material mentioned in here. I know Pascal is on the call, but the stateful 6lo material, the stateful registration that 6lo does, was discussed in 6man in the past. You might want to go review some of those discussions.

So yeah, this 6lo diagram doesn't appear in the draft, but there are lots and lots of WiND recommendations in it. To me it looks like the draft sort of... yeah. I mean, you do mention that it is appropriate for 6lo cases, for LLN cases, but I am not entirely sure it is going to be clear to an operator that this is not available for laptops and whatever, generally speaking.

E

J

Yes, it's because of my source, I'm sorry. Yeah. I think we never really resolved whether WiND is applicable to [unclear]. There were very few discussions; there was never really a call for adoption. There was not enough discussion to justify that any decision was actually taken, and really we would need to study the problem at 6man, which was never done.

F

J

H

A

I think we are all aware of the current condition of the Hop-by-Hop extension header. Many providers drop packets that contain a Hop-by-Hop header because it can be used for DoS attacks. We are finding some new uses for it, but we really can't use it because of its status as a DoS vector. So there will be a joint meeting between V6OPS and 6MAN tomorrow to discuss how we can fix that problem.