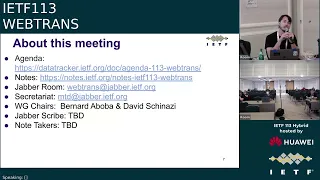

From YouTube: IETF113-WEBTRANS-20220324-1330

Description

WEBTRANS meeting session at IETF113

2022/03/24 1330

https://datatracker.ietf.org/meeting/113/proceedings/

B

C

A

A

A

D

This is our first in-person meeting since we formed the working group in March of 2020, because we had an in-person BoF and then we were virtual. So now you know how tall some of you are, and we're looking forward to seeing more folks at, hopefully, the coming IETFs. We still have a lot of folks remote.

D

D

The usual tips for remote meetings haven't really changed, but things have changed for the in-person folks since we were last in person. In particular, if you want to join the mic line, you need to do it in the online tool. Otherwise, you'll just be standing at the mic, stretching your legs. You can use either the onsite or the remote version; they both work for that.

D

And yeah, if you are in the room, please join it as well, because that's the only way we populate the blue sheets, which is important. The buttons for Meetecho are roughly the same: you press the hand to join the queue, and then you can unmute once it's your turn. Please stay muted until that's the case.

D

The Note Well: it's Thursday, so most of you have probably seen it, but some folks might be joining for their first session today. Everything we do at the IETF is covered by the Note Well, and if you haven't read it, you really should. It said that you had to read it to register, so hopefully you at least glanced at it. In particular, anything you say has implications with regard to copyright and patents, and the IETF also has a code of conduct that the chairs will be enforcing.

D

It pretty much boils down to "be nice", which is what everyone has been doing in this working group so far, so we're really happy about that. Let's keep it that way. All right, some more links. We would like volunteers, as usual, for a Jabber scribe and a note taker. Do we have volunteers, please? Thank you, Lucas, for Jabber scribing. Who would like to be our note taker, please?

D

D

For taking notes, awesome, thanks. And yeah, you can collaborate, both of you, on the usual thing that's accessible from the IETF agenda page. Awesome, thanks. Right. Our agenda today is me talking for a while; that's done. Then we're going to have a W3C update from Will. We are then going to talk about WebTransport over HTTP/3, and then WebTransport over HTTP/2, and wrap up. Would anyone like to bash the agenda?

D

E

Okay, thank you. Good morning from California. I'm Will Law from Akamai, and I'm representing the W3C group looking at developing the API for WebTransport for browsers. I also represent Jan-Ivar Bruaroey, my co-chair. So, just a quick update for you, three slides: progress since November the 9th, the last time we reported. The status of the spec is that it is now a Working Draft. The latest version is March 11, 2022, and there's a link in the slides here so you can go to it.

As chairs, we've set a somewhat optimistic timetable for the year. It's outlined here, with Candidate Recommendation coming up awfully quickly. The main blocker here is that by July 31st we had the idea of reaching Proposed Recommendation, which requires at least two independent implementations. Chrome has been in production since M97. However, Mozilla has not yet signaled a date, so July 31st is somewhat challenged at this point, and I would encourage browser vendors other than Chrome to please step up here.

We have a charter valid through September 30th. We will extend that charter if we're not able to get the second implementation by that date. Milestones: we've adjusted our milestones to match the release process, so we have a new milestone now called Candidate Recommendation. It contains the issues that need to be resolved before we can proceed to that stage. Minimum Viable Ship, the prior one, has a few issues remaining.

E

E

Some of the resolutions: when establishing a session, clients should not be providing credentials (I've got issue links there, so you can go through and read the details); blocking ports on Fetch's bad ports list; adding smoothed RTT and RTT variation to the WebTransport stats, with the definitions coming from elsewhere; packets lost in the WebTransport stats; and extra requirements on server certificate hashes. On WebTransport getStats, we've added expired outgoing, dropped incoming, and lost datagrams, and decided that stream stats will be an individual getStats method on each stream instance.

E

You know, "require unreliable" versus "request reliable", for example. Content Security Policy has also been a long-running issue, and there was recently a decision to break compatibility with the early version of Chrome, in the hope that it's better to do it now, as there are not that many users, and that it will be more stable going forward. Datagram priority over streams: there's been a change. The priority algorithm had some guidance, and it's been replaced with normative language that the user agent should make sure that datagrams get out quickly.

E

The last one was very absolute: datagrams always go out first, and there were some potential issues there. Current issues under debate: bikeshedding on names for stream stats, which should be resolvable. Connection pooling: there are new issues raised around whether it's on or off by default, and how to pass that in the constructor. And then, lastly, priorities between streams: is this necessary? This is also a question for the IETF.

E

G

Go ahead. So Will's asking about priority here, which I think is probably one of the more interesting questions that we could discuss here. There's potentially something we could do. We could take the HTTP priorities thing and just say, well, there are eight levels, and off we go. Or we could take a leaf out of the WebRTC book; I think they have four.

E

No is the answer; they don't have to be precisely identical in feature set. I think there's an intent that they should deliver the majority of the capability, but Chrome has certainly shipped without these features, and that's acceptable. They're getting added post-ship. So I think the requirement is that we want basic functionality: being able to connect, establish streams, and send datagrams.

E

D

I

Alan Frindell. With respect to priority, I like the HTTP scheme a lot in terms of the eight lanes. Of the different priority schemes we've looked at, at least in HTTP over time, I think this one has the right balance of flexibility, power, and simplicity, and I'm not sure creating another one would be really useful. So, finding a way to adapt that as a model, there are two pieces. One would be, if WebTransport adopted it, would we add signaling to the wire protocol so that the browser side can set the priority on streams that the server is sending? The other is just on the API side: can the browser set priorities on the streams that it is sending? That strictly does not require wire format changes; it's just between the API and the sending implementation. Thanks.
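
To make the API-only option concrete, here is a minimal Python sketch of a purely local, sender-side scheduler with eight urgency lanes, loosely modeled on the urgency parameter of HTTP extensible priorities (0 is most urgent, 7 least, default 3). The class name `SendScheduler` and its methods are hypothetical; they are not taken from any WebTransport draft or browser API, and nothing here is signaled on the wire.

```python
from collections import deque
from typing import Deque, Dict, List, Optional

NUM_URGENCY_LEVELS = 8   # urgency 0 (most urgent) through 7 (least urgent)
DEFAULT_URGENCY = 3      # default urgency, as in HTTP extensible priorities


class SendScheduler:
    """Hypothetical local scheduler: picks which stream sends next."""

    def __init__(self) -> None:
        # One FIFO "lane" of stream IDs per urgency level.
        self._lanes: List[Deque[int]] = [deque() for _ in range(NUM_URGENCY_LEVELS)]
        self._urgency: Dict[int, int] = {}

    @staticmethod
    def _check(urgency: int) -> None:
        if not 0 <= urgency < NUM_URGENCY_LEVELS:
            raise ValueError("urgency must be in 0..7")

    def add_stream(self, stream_id: int, urgency: int = DEFAULT_URGENCY) -> None:
        self._check(urgency)
        if stream_id in self._urgency:
            raise ValueError("stream already registered")
        self._urgency[stream_id] = urgency
        self._lanes[urgency].append(stream_id)

    def set_urgency(self, stream_id: int, urgency: int) -> None:
        # Move the stream from its current lane to the new one.
        self._check(urgency)
        old = self._urgency[stream_id]
        self._lanes[old].remove(stream_id)
        self._lanes[urgency].append(stream_id)
        self._urgency[stream_id] = urgency

    def remove_stream(self, stream_id: int) -> None:
        urgency = self._urgency.pop(stream_id)
        self._lanes[urgency].remove(stream_id)

    def next_stream(self) -> Optional[int]:
        """Most urgent non-empty lane first, round-robin within that lane."""
        for lane in self._lanes:
            if lane:
                stream_id = lane.popleft()
                lane.append(stream_id)
                return stream_id
        return None
```

Because the scheduler only decides the local sending order, an application could change a stream's urgency at any time without the peer being involved, which is the property Alan points out.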

D

J

D

D

K

I was mostly under the impression that priorities, at least outside of those communicated on the wire by HTTP, are typically considered an API concern. So personally, unless we intend to provide a mechanism for end-to-end signaling, I don't think there is much to be done in the working group, since, as far as I can see, it's mostly an API problem. That is, it is about how web applications signal priorities to the browser's network stack rather than to the peer.

G

Yeah, I'm going to agree with Victor here. I think that if an application wants to do the signaling, it can use WebTransport for that. That's probably the most obvious thing to do here, and then it becomes a purely API consideration. And to Alan's point, I think that the extensible prioritization scheme scares me, probably, for something like WebTransport.

D

D

D

D

D

K

K

So now, WebTransport over HTTP/3 still has some issues that are not resolved. One of them is the question of whether we need to support a GOAWAY-like mechanism for draining sessions, and the reasoning behind why we would need it in the protocol, as opposed to needing it in the application, where the application can handle it by itself.

K

K

D

K

K

I

Alan Frindell. I think when you think about it in the context of HTTP/2, where all of the WebTransport streams are not visible at the HTTP session layer, an HTTP/2 GOAWAY would have no impact on whether a WebTransport endpoint could continue creating new WebTransport streams within that established HTTP/2 stream. I think we should preserve those semantics for HTTP/3. So if you receive a GOAWAY, it means no new WebTransport sessions, but you can still create WebTransport streams if you want to.

K

D

D

K

K

At some point, Alan suggested that we might want to keep the internal streams open even when the session is closed. When we discussed this a year ago, the conclusion was to revisit it once we have more implementation experience. My current impression, after having more implementation experience, is that having the semantics where closing the control stream automatically terminates everything in the session is much easier to follow and much easier to manage in terms of resource life cycle. Also, if we allow that, I am not sure how it would look for HTTP/2, where everything is on a single stream. So I think we should keep the current semantics, where closing the CONNECT stream automatically terminates everything.
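
The resource life cycle argument can be illustrated with a small Python sketch in which the session owns its streams and closing the CONNECT stream resets all of them in one place. The names `Session`, `StreamState`, and `close_connect_stream` are hypothetical and only model the semantics described above; this is not code from any implementation.

```python
from enum import Enum
from typing import Dict


class StreamState(Enum):
    OPEN = "open"
    RESET = "reset"


class Session:
    """Hypothetical WebTransport session that owns its streams."""

    def __init__(self, session_id: int) -> None:
        self.session_id = session_id
        self.closed = False
        self.streams: Dict[int, StreamState] = {}

    def open_stream(self, stream_id: int) -> None:
        # No new streams once the CONNECT stream is gone.
        if self.closed:
            raise RuntimeError("session is closed")
        self.streams[stream_id] = StreamState.OPEN

    def close_connect_stream(self) -> None:
        """Closing the CONNECT stream terminates every stream in the session."""
        self.closed = True
        for stream_id in self.streams:
            self.streams[stream_id] = StreamState.RESET
```

With this shape there is a single point where all per-session state is torn down, so no stream can outlive the session that created it.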

K

L

I think I would generally agree with having killing the CONNECT stream kill everything else. It seems like, if you don't do that, what you're effectively creating is an implicit version of the GOAWAY we wanted for a WebTransport session a couple of slides ago. So it seems safer to make that an explicit GOAWAY if you want those semantics, and then still have your control stream around if you need control messages, rather than having the control stream disappear and trying to assign some sort of meaning to that.

K

M

K

M

Yeah, I mean, it depends on what protocols you're running. In some cases it might be that you actually want to finish sending. It comes down to the assumption about whether you're relying on the higher-level protocol built on top of WebTransport, or on WebTransport itself, to do the close, and which kind of semantics you want. So it's a bit... yeah.

K

D

G

So if we're thinking about the HTTP/2 version of this one, if you close the CONNECT stream, you have also just shut off your ability to send any more stream data of any type. I think you're also prevented from receiving it, in a sense; probably not. So I tend to think that once we've closed the stream, everything else is effectively orphaned at that point.

D

Hey Victor. Speaking as an individual contributor, or rather as a MASQUE enthusiast: in the HTTP datagrams draft that we have over in MASQUE, when you close the CONNECT stream, you can no longer send datagrams. So my personal take would be that it just makes everything easier if, when you close the CONNECT stream, everything's done.

K

M

Magnus Westerlund. One question I have is about receiving the data. If you're basically sending the rest of the data you have and then you hit close, is there a risk that the receiver will get the close before the other data has arrived, and then reject it? I would be a little bit careful about that particular use case.

D

M

M

K

To clarify, there are effectively three ways you can close the CONNECT stream. You can close it by sending a close-session capsule, which gives you the status code. You can close it by just sending a FIN, which is equivalent to sending an empty capsule. And you can reset it. This issue roughly covers all three of those cases. But yeah, in general.

G

So, in terms of the effect on a WebTransport session, it should be nothing. It may affect what the browser does with future requests, but that's not something that WebTransport necessarily has to concern itself with: the broader questions about how WebTransport interacts with connections.

L

So, let's talk about pooling. We decided a while back that we would like to support pooling, and that means we want to be able to have more than one WebTransport session within a given HTTP/3 connection. I've got a little side note here that this is really not that much of an issue for H2, because each session is fully encapsulated within one CONNECT stream, and H2 is already perfectly capable of having more than one CONNECT stream, and so a lot of the layering...

L

So, coming back to the pretty picture that we looked at last time, this is, visually, what we think we're doing. We had this up in the context of flow control for H2, but essentially, within HTTP/2, every WebTransport session is within a CONNECT stream, and you can have multiple of those. Pooling done.

L

H3 is a little bit more interesting, because you have WebTransport sessions where, here, we've kind of broadened this box. This is all a little bit abstract, so we don't need to get too far into the details of this diagram. But what we're really trying to convey is that we've got these different native HTTP/3 streams that are serving different purposes on behalf of that WebTransport session.

L

So the proposal that I'm going to make with these slides, for discussion, is that we do the necessary things to keep any individual WebTransport session from unfairly dominating the H3 connection, and call it a day. Explicitly, that means we're not going to do anything to try to preclude future enhancements to this.

L

What's left, though, is some sort of control on the maximum count of sessions that you can make, right? If I wanted to open a million WebTransport sessions within my H3 connection, that might not leave any room for non-WebTransport requests to go on there. And also the count of streams for each session, for the same reason: because the streams are native H3 streams, those are kind of entangled.

L

We do have a note in both H3 and, actually, also in H2 right now that says we don't go out of our way to provide any limit on the number of WebTransport sessions that you can open, and that instead there are two different ways that the server, or the receiver of that, can just say, "Nope, this isn't happening," and reject it.

L

L

L

The way that we generally do that, for example within QUIC, is that we have a MAX_STREAMS frame that adjusts the limit on the number of streams, and then we have MAX_DATA and MAX_STREAM_DATA. And, of course, all of these have their associated blocked frames as well, to help with debugging and other issues like that.

L

We have this nice property here: because WebTransport over H3 is already using native H3 streams, those already have a flow control limit on them from QUIC, which is that MAX_STREAM_DATA frame. So we don't actually need a new one there; it is already present. And if we do the max WebTransport sessions setting, then we don't need any other limit for total session count.

L

If we go back to our pretty picture from before, I've added some vaguely shaped annotations to try to help visualize what this means. This may or may not be meaningful; if it is not, no worries. The first one is down at the bottom there: we've got our setting for max sessions, and I've put that around the fun little dot dot dot.

L

L

H

L

I don't have a strong aversion to keeping track, within a WebTransport session, of the amount of data that you're allowed to send, and having a limit be communicated from the other end about that. But it could also be that I'm not correctly internalizing how painful that would actually be. I see David is not standing up. Yeah.

D

H

Yes, so of course you can read the data out of the stream and then have all your buffers at the WebTransport layer. So I guess my question is: how do we account for missing packets or missing frames on a stream? In QUIC flow control, the maximum offset that you have received would count for flow control.

H

Do we then say that we don't care about this, or that we let the QUIC layer handle it and we have this totally separate flow control on the WebTransport layer? I haven't fully thought this through, because we are now reusing the QUIC flow control from this stream on the WebTransport layer. If there's any mismatch there, I would need some more time for that.

D

G

L

G

Knowing that enforcement is going to be imperfect at that point, I certainly don't want to have it with its tentacles deeply embedded in the QUIC stack in order to get the values that the QUIC stack is using. So I think I'm all for saying, okay, maybe we just don't do that one.

L

If we're not doing that, right, I mean, the reason we have a lot of those tiers is to allow people to be more restrictive, and therefore allow any individual stream to use that entire limit without having to commit to being willing to devote the resources for the sum of all of the streams. But it's entirely possible that you can tackle that elsewhere anyway.

G

G

You must provide this many streams, but that doesn't mean that you're going to get that many streams. You can just count how many streams you've got at the moment. There's a little bit of trickiness in terms of the accounting, but I think we can make that work. And, of course, if the QUIC layer isn't able to issue new streams faster, then you won't be able to get up to this limit. But that's okay.

L

And for what it's worth, I think that's probably a good thing, in the same way that MAX_DATA gives you a way to say, "I'm not willing to take the sum of all of them in total; I want something smaller than that." You could always say at the QUIC layer, "I'm only willing to take this many streams, but each WebTransport session is able to use all of them," which does not then commit you to the sum of all of your WebTransport sessions in stream count.
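
The accounting being discussed can be sketched as two nested limits: a connection-wide stream limit enforced by the QUIC layer and a per-session limit enforced by WebTransport. The Python below is a simplified model with hypothetical names (`PooledConnection`, `try_open_stream`). It treats both limits as counts of concurrently open streams, whereas QUIC's MAX_STREAMS is a cumulative limit, so it should be read as an illustration of the idea rather than of the wire behavior.

```python
from collections import defaultdict
from typing import DefaultDict


class PooledConnection:
    """Hypothetical model of pooled sessions sharing one connection's streams."""

    def __init__(self, connection_max_streams: int, session_max_streams: int) -> None:
        self.connection_max_streams = connection_max_streams  # QUIC-level limit
        self.session_max_streams = session_max_streams        # per-session limit
        self._open: DefaultDict[int, int] = defaultdict(int)  # session -> open streams

    def try_open_stream(self, session_id: int) -> bool:
        # A new stream must fit under both the connection and the session limit.
        if sum(self._open.values()) >= self.connection_max_streams:
            return False
        if self._open[session_id] >= self.session_max_streams:
            return False
        self._open[session_id] += 1
        return True

    def close_stream(self, session_id: int) -> None:
        if self._open[session_id] <= 0:
            raise ValueError("no open streams for this session")
        self._open[session_id] -= 1
```

Setting `session_max_streams` equal to `connection_max_streams` corresponds to the case described above, where each session may use the whole connection limit without the receiver committing to the sum across sessions.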

L

G

L

I

Alan Frindell. I think I hear the concern that MAX_DATA is hard; maybe, I don't know that I want to say impossible, but much harder than streams. So I think maybe what makes sense is that we separate this out and table MAX_DATA for now. But I'm left a little uncomfortable thinking that one session can cannibalize QUIC's entire flow control and leave other sessions blocked, so it would be good to have a solution. But, you know, how complicated does it need to be? Is it worth implementing?

D

D

Okay. For limiting max streams, it sounds like this might be hard, but we want to try. So I'm getting consensus: let's write a PR to do this, have someone implement it, and see from there. If you disagree, please jump up to the mic. For MAX_DATA, I'm getting a sense that most folks think it's too hard, but Alan thinks it might be possible.

D

Personally, as well as as chair, I don't love the idea of keeping an issue open with no clear resolution point. So what I would suggest would be to close the issue, and if someone has a design that they've implemented, they can bring it to the working group and say, "I figured out how to do this."

D

I

Just to repeat what I said in the chat: I'm not any more sure than anybody else that it's possible, but I am interested in spending some more time, or also having the group spend more time, exploring whether it is possible without bending over backwards or doing something that's not worth implementing. I personally like to keep issues open until they're really resolved, because otherwise they might get lost, but I'll let other people decide how we want to handle it administratively.

D

My take is, if there's something that no one has an intuition on how to solve, I don't see what leads us to closing it if we keep it open. I'd rather have us fail closed: if no one proposes anything, the answer is going to be that we're not doing it. So I would put the burden on whoever has a proposal. And, you know, we're at a point on this document where anyone can file an issue. So if anyone comes up with a proposal, then they open an issue.

I

No, it's okay. I mean, go ahead and close it if that's what we want to do. I will probably forget about it. We will all forget about it. Probably sometime after we ship it, somebody will report a very complicated bug where we've hit this issue and complain, and then we'll kick ourselves for not having spent more time on it.

D

L

L

So, since 112 we've landed a bunch of the changes that we talked about then. However, for a bunch of the changes that we talked about then, the resolution was to wait for the HTTP datagram design team in MASQUE, which has now concluded. So that is fantastic; we are unblocked on that. The remaining outcome from that, which we need to decide what to do with, is capsules.

L

So that's going to be where, I suspect, we'll spend the bulk of our time today. Before we do that, there is one other issue, which I think Martin filed, that I wanted to bring up so we can all talk about it a little bit and see if there's any intuition from anyone that would prevent what seems like the right answer to me.

L

L

Neither side can send any WebTransport frames at all; you're stuck for that entire RTT. And I think Martin very rightly points out that that's basically just delaying things arbitrarily. For any frames that you're allowed to send by the flow control limits, et cetera, so any frame that would otherwise be legitimate, why should we prevent you from sending them? So the proposal here is: allow sending them. Don't force people to have extra RTTs, or trips as opposed to round trips, and let everything get going a little bit sooner. If you want to wait, nothing stops you from waiting; that can be a nice, simple, easy way to implement it.

L

But if somebody wants to put in the effort to correctly send frames to allow things to get going sooner, they can, especially since WebTransport is focused on allowing the server to also initiate WebTransport streams. If one of the first things that you're planning on doing is having the server open a number of streams, then it helps to have it be able to send those frames without waiting for the CONNECT response to get to the client, and then for frames to get back from the client to the server, so that it can then open.

L

L

So the datagram design team is now complete. Thank you, David, and a bunch of other fine folks who participated there and helped get that out in time so that we could talk about this here. Previously, in H2, we defined TLVs for every WebTransport frame, and now that capsules are a thing, we could potentially be registering them there instead. Instead of our own registry, where we keep our list of WebTransport frames to use with H2, we could register them in a different registry, where we keep the list of capsules.

That brings up the somewhat obvious question: great, so what do we get if we use capsules? This is our current list of frames. We arrived at this a couple of meetings ago. It's basically the minimal subset of things that we need to send on the CONNECT stream so that WebTransport works.

L

If we use capsules, there already exists this cool capsule called DATAGRAM, and we could potentially just use that instead of having it be WT_DATAGRAM. The definition is the same. The wire format is the same. The semantics are essentially the same, with a little bit of a caveat that we'll talk about a little bit later.
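
For readers less familiar with the TLV shape being discussed, the following Python sketch encodes and decodes a capsule as a type and a length, each a QUIC variable-length integer, followed by the value. That layout is my understanding of the capsule format in the HTTP datagrams draft. The function names are hypothetical, and the capsule type values used in any example (such as `0x1234`) are purely illustrative, not registered values.

```python
from typing import Tuple


def encode_varint(value: int) -> bytes:
    """Encode a QUIC variable-length integer (1, 2, 4, or 8 bytes)."""
    if value < 0:
        raise ValueError("varint must be non-negative")
    if value < 1 << 6:
        return value.to_bytes(1, "big")
    if value < 1 << 14:
        return (value | 0x4000).to_bytes(2, "big")
    if value < 1 << 30:
        return (value | 0x80000000).to_bytes(4, "big")
    if value < 1 << 62:
        return (value | 0xC000000000000000).to_bytes(8, "big")
    raise ValueError("varint too large")


def decode_varint(data: bytes, offset: int = 0) -> Tuple[int, int]:
    """Return (value, next_offset); the top two bits give the length."""
    first = data[offset]
    length = 1 << (first >> 6)
    if offset + length > len(data):
        raise ValueError("truncated varint")
    value = first & 0x3F
    for byte in data[offset + 1:offset + length]:
        value = (value << 8) | byte
    return value, offset + length


def encode_capsule(capsule_type: int, value: bytes) -> bytes:
    """Type (varint), length (varint), then the value bytes."""
    return encode_varint(capsule_type) + encode_varint(len(value)) + value


def decode_capsule(data: bytes, offset: int = 0) -> Tuple[int, bytes, int]:
    """Return (capsule_type, value, next_offset) for one capsule."""
    capsule_type, offset = decode_varint(data, offset)
    length, offset = decode_varint(data, offset)
    end = offset + length
    if end > len(data):
        raise ValueError("truncated capsule")
    return capsule_type, data[offset:end], end
```

Because every capsule carries its own length, a receiver that does not recognize a type can still skip over it, which is part of what makes a shared registry between H2 and H3 workable.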

L

We've just talked about removing WebTransport MAX_DATA from that list. So the reuse here, on the later part of this slide, was previously going to be WebTransport MAX_STREAMS along with the blocked variant, as well as WebTransport MAX_DATA along with the blocked variant. That would now just be WebTransport MAX_STREAMS. But the point is the same: if there's anything from H2 that we need to reuse in H3, we can just use it. It's great, it's happy, we're in a shared registry already anyway. No problems there.

L

The thing that I think we want to discuss is whether the end-to-end nature of these capsules is going to be an issue. Is that a good thing? Is that a bad thing? My understanding is that the TLVs we were previously defining for WebTransport over H2 frames were not end to end. They would be consumed by whoever is terminating that particular H2 connection.

L

And imagine a scenario where not everybody wants to implement or support WebTransport over H2, but we do think that we need it, maybe on the actual connection over the last mile of the internet to whatever client is connecting, because it may not have access to QUIC on some percentage of networks.

L

You could imagine that an intermediary would allow a client to fall back to WebTransport over H2 but still want to speak WebTransport over H3 to whatever upstream or origin it's going to be talking to, and it would be doing that for all of its clients. So it would be talking H3 upstream all the time, and it would be talking H3 downstream to the client most of the time, but sometimes some of the clients are going to need to fall back to H2, and we want that to work.

L

L

I

Alan Frindell. Just to repeat what I just put in the chat: the DATAGRAM capsule is end to end as a concept, but it's not transmitted at each hop as a capsule, right? When you go through a hop and it came in as a capsule, but the other end supports a native datagram concept, then the intermediary is allowed to convert. And so some of the capsules... I don't know if you want to go back to the slide.

L

G

So I think Mark Nottingham's review of the datagrams draft asked the question of whether capsules are a premature something-or-other, generalization or abstraction. And I think it's this, here, that highlights the challenges of using capsules for anything. I think you can simply look at the datagram thing in isolation and go, "Yeah, that makes a fair bit of sense. We can pull those out and we can forward them however we choose." But then I'm looking at the stuff you're doing with streams here and thinking.

L

I think there's something in the sense that, as Alan pointed out, there is a way, and DATAGRAM is actually an example of that. The HTTP datagrams document says that each capsule type can define the translation that should be done, potentially, when running over different transports, and the only capsule that is defined also defines one of those, to say that DATAGRAM is potentially broken out into being a native datagram. So that concept is appealing, and it's maybe possible to do that for each of these things.

D

D

Everything

looks

completely

different

and

the

I

think

what

it

comes

down

to

is:

is

WebTransport

over

HTTP/3

the

same

protocol

as

WebTransport

over

HTTP/2

and

1.1,

or

are

they

different

protocols

and

by

protocol

I

mean

http

upgrade

token,

perhaps

and

and

where

this

gets

really

interesting

is

let's

say

you

have

a

scenario

where

you

have

two

h2

hops

and

an

intermediary

in

the

middle

you

would

like

capsules

are

great

there.

You

want

everything

to

be

end

to

end.

D

You

have

a

server

that

terminates

the

client's

WebTransport

over

HTTP/3

connection,

and

then

it

turns

around

and

becomes

a

web

transport

client

over

http

2

to

to

the

back

end

for

lack

of

a

better

word,

and

at

that

point

the

capsules

are

end

to

end

from

the

client

to

the

first

node

and

from

the

first

node

to

the

second

node,

in

that

you

have

three

ends

or

four

to

this

protocol,

as

opposed

to

two

that

kind

of

makes

it

make

sense

to

me.

This

feels

consistent.

D

It

actually

like

I

I

we

had

another

discussion

of

like,

should

they

have

different

http

upgrade

tokens.

It

kind

of

pushes

me

in

the

yes

bucket

for

that

a

little

bit,

but

that

makes

it

all

of

this

kind

of

self-consistent

and

kind

of

clean

and

reasonable

to

me.

MT,

does

this

make

any

sense

to

you?

G

That

is

amazingly

slow.

That

makes

sense.

I

I

think,

that

the

sort

of

minimization

that

comes,

if

you're,

if

you've,

got

that

sort

of

intermediary,

that

goes

h2

to

h3,

or

vice

versa

and

you're

doing

the

web

transport

thing,

then

that

intermediary

needs

to

understand

web

transport

pure

and

simple,

up

and

down,

back

and

forth.

Yep,

there

are

still

problems

that

we

don't

have

solved.

G

In

that

scenario,

though,

and

that's

where

it

starts

to

get

really

interesting

if

the

first

hop

has

no

constraints

on

on

creating

streams,

but

the

second

hop

does

and

you

you

have

a

stream

come

in

at

the

intermediary

and

the

intermediary

cannot

create

the

outgoing

stream.

What

is

it

going

to

do?

Yeah

exactly

the

sort

of

thinking

that

I'm

going

through

right?

How

the

hell

do

you

deal

with

that?

D

And

just

to

make

sure

I'm

understanding

you

right

well,

first

off

I

I

agree

like

that

kind

of

back.

Well,

it's

a

form

of

back

pressure,

just

17

different,

like

degrees

of

back

pressure,

50

shades

of

back

pressure,

if

you

will.

Thinking

about

it,

though,

that,

like

this

issue

at

hand,

whether

we're

using

capsules

or

something

or

any

possible

framing

for

this,

we

still

have

to

solve

that

right

like,

unfortunately,

if

you

have

an

intermediary,

no

matter

what

we

have

that

problem.
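One way to picture the back pressure being discussed is an intermediary whose outgoing hop allows fewer concurrent streams than its incoming hop. The sketch below is only an illustration under that assumption: `StreamRelay` and its methods are hypothetical names, and queueing is just one possible policy (an intermediary could equally reset the incoming stream).

```python
from collections import deque


class StreamRelay:
    """Toy model of an intermediary with a limit on outgoing streams."""

    def __init__(self, outgoing_limit: int):
        self.outgoing_limit = outgoing_limit
        self.open_outgoing = 0
        self.pending = deque()  # incoming stream IDs waiting for capacity

    def on_incoming_stream(self, stream_id: int) -> bool:
        """Return True if an outgoing stream could be opened right away."""
        if self.open_outgoing < self.outgoing_limit:
            self.open_outgoing += 1
            return True
        # No capacity on the second hop: hold the stream (back pressure).
        self.pending.append(stream_id)
        return False

    def on_outgoing_closed(self):
        """Free one slot; return the queued stream ID that takes it, if any."""
        self.open_outgoing -= 1
        if self.pending:
            self.open_outgoing += 1
            return self.pending.popleft()
        return None
```

Whatever framing is chosen, the intermediary still has to pick some policy like this when the two hops disagree on limits.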

G

If,

if

it

stays

in

the

stream

and

can

stay

in

the

stream,

then

it's

not

a

problem

if

it,

if

it

has

to

be

lifted

out

and

the

constraints

are

different,

then

then

that's

not

going

to

be

the

case.

So

if

you,

if

your

intermediary

was

just

tunneling

the

effectively

byte

string

back

and

forth,

then

then

that

would

be

fine.

I

think

it

wouldn't

be

constrained

in

any

way

and

the

the

constraints

on

the

number

of

streams

that

you

have

would

be

fully

end

to

end.

I

Alan

Frindell,

I

was

when

you

talked

about

that

stream

problem

going

through

intermediaries.

It

reminded

me

that

we

have

had

that

problem

with

I'm

going

to

say

the

p

word.

Everyone

cover

your

ears

if

you

don't

want

to

hear

it:

push,

server

push.

We

had

that

problem

where

the

one

hop

thought

it

could

create

new

pushes

and

it

got

to

a

proxy

that

no

longer

had

available

streams

so

and

it's

yeah

it's

kind

of

a

hard

problem

and

in

the

in

the

h2

version

of

web

transport.

F

Hello,

Lucas

Pardue,

speaking

for

myself,

yeah,

I

made

a

comment

in

the

Jabber.

I

think,

like

my

understanding

of

what

Martin

said

about

the

stream

impedance

between

creation

on

one

side

of

an

intermediary,

and

the

other

seems

just

to

be

something

that

exists

already

today

to

me

anyway.

If

maybe

maybe,

if

there

is

a

problem,

we

could

solve

this

with

contexts

and

capsules

would

let

us

do

that

if

we

really

wanted

to

so

I

don't.

I

don't

see

a

humongous

issue

here

myself

that

is

different

than

the

problem

we

already

have

today.

L

Once

upon

a

time,

we

had

a

conversation-

and

I

think

this

echoes

a

lot

of

what

Martin

just

said

in

the

in

the

chat,

which

is

we

talked

about

kind

of,

do

we

define

these

things

that

we're

sending

over

the

CONNECT

stream,

and

capsules

have

this

cute

way

to

say

you

know

it's

a

datagram

when

you're

on

a

transport

that

supports

datagrams

split

it

out

to

be

a

datagram,

we

could

make

the

webtransport

stream

capsule

do

the

same

thing

and

say

when

you're

on

h3

split

it

out

when

you're

on

h2

don't.

D

Thanks

David

in

the

queue

again

as

an

individual,

I

agree

with

Eric

here

just

wanted

to

reply

to

Martin's

point

like

what

is

this

bias

and

adding

redundant

lengths.

So

I'm

looking

at

the

h2

draft

and

it

like,

because

you

know

we

have

these

frames

and

I

think

it's

reasonable

that

we

might

want

to

add

a

future

frame

for

an

extension

later.

D

These

are

self-describing,

and

so

we

have

a

sequence

of

TLVs

on

the

data

stream,

which

starts

to

look

like

something

I

know

so

like

we

don't

have

any

redundant

lengths.

These

are

literally

wire

format

compatible

with

capsules.

The

only

question

is

do

we

share

types?

Is

this

one

IANA

registry

or

two

IANA

registries,

and

for

the

reason

that

Eric

mentioned

earlier,

having

some

of

them

be

the

same

everywhere

and

some

of

them

be

popped

out

in

QUIC

for

there?
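The "sequence of TLVs on the data stream" can be illustrated with a small parser. This is a sketch that assumes the capsule layout of a variable-length integer type, a variable-length integer length, and then that many payload bytes, with integers encoded as QUIC variable-length integers; `read_varint` and `parse_capsules` are hypothetical helper names, not an API from any library.

```python
def read_varint(buf: bytes, pos: int):
    """Decode one QUIC variable-length integer starting at pos.

    The two high bits of the first byte give the encoded length
    (1, 2, 4 or 8 bytes); the remaining bits are the big-endian value.
    Returns (value, new_pos).
    """
    if pos >= len(buf):
        raise ValueError("truncated varint")
    first = buf[pos]
    length = 1 << (first >> 6)
    if pos + length > len(buf):
        raise ValueError("truncated varint")
    value = first & 0x3F
    for i in range(1, length):
        value = (value << 8) | buf[pos + i]
    return value, pos + length


def parse_capsules(buf: bytes):
    """Split a byte string into a list of (type, payload) capsules."""
    capsules = []
    pos = 0
    while pos < len(buf):
        ctype, pos = read_varint(buf, pos)
        clen, pos = read_varint(buf, pos)
        if pos + clen > len(buf):
            raise ValueError("truncated capsule payload")
        capsules.append((ctype, buf[pos:pos + clen]))
        pos += clen
    return capsules
```

Because each element carries its own type and length, a receiver can skip types it does not recognize, which is what makes adding a future extension frame practical.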

G

That

is

actually

a

pretty

good

strategy

and

if

your

intermediary

doesn't

need

to

do

it

doesn't

advertise

support

for

http

3

version

of

web

transport

if

it

cannot

guarantee

end-to-end

QUIC

and

http

3

all

the

way,

I'm

losing

my

brain.

It's

really

good

at

this

time

in

the

morning.

If

it

can't

guarantee

end

to

end,

then

it

doesn't

doesn't

offer

it

and

the

fallback

here,

which

is

the

h2

one

which,

by

the

way,

probably

works

on

HTTP/1.1

as

well

adequately.

L

I

think

that's

absolutely

true,

but

when

we're

looking

at

the

other

things

that

we

get

from

that,

I

think

what

is

our

litmus

test

for,

should

this

be

a

capsule?

Should

it

be

end

to

end

or

not?

And

if

not,

what

is

the

litmus

test,

because

I

think

which

IANA

registry

do

we

want

it

in?

I

mean

that's

nice

and

I'm

happy

like

I

don't

care

what

IANA

registry

we

put

things

in,

but

it

seems

as

though,

if

we're

like

great

this

is

nice.

L

Yeah,

I'm

certainly

willing

to

do

that

and

you

know

happy

to

do

that

with

other

folks

if

we

want

to

sit

down

together

and

and

talk

about

it

on

a

call

somewhere

and

and

brainstorm

a

little

bit

while

we

do

it

but

yeah

the

other

thing

just

in

case

Victor

wasn't

looking

at

the

chat.

I

know

we

talked

about

this

a

little

bit

before,

but

I

wanted

to

make

sure

if

any

reaction

there,

since

a

lot

of

what

we're

just

discussing

kind

of,

has

impacts

for

how

h3

looks.

D

Would

you

be

willing

to

lead

this

design

team?

Sure.

Indeed,

thank

you.

So

we'll

send

an

email

to

the

list

and

if

you

are

interested

in

joining,

please

reach

out

to

the

chairs

and

then

we'll

have

the

design

team

figure

this

out

for

us

and

in

an

ideal

world,

if

that

that

would

happen

in

the

near

future,

we

could

have

an

interim

to

discuss

it

similar

to

what

we've

been

doing

over

the

last

three

months

at

a

very

different

working

group.

O

Yeah,

Jonathan

Lennox,

this

is

Luke

Curley,

who

said

he

missed

the

initial

priorities

discussion,

but

raised

an

interesting

point

in

the

chat,

since

we

seem

to

run

out

of

everything

else

to

talk

about

that.

He

has

a

MoQ

style

scenario

where

he

wants

to

be

able

to

prioritize

new

things

he

sent

over

old

things

he

sent,

which

is

hard

to

do

with

a

small

fixed

set

of

priorities.

O

So

I

guess

I

know

the

priorities

API

is

not

in

scope

of

this

working

group

per

se,

but

it's

something

for

people

who

are

designing

mechanisms

like

that

to

keep

in

mind

like

whether

that

means

I

mean

it

sounds

like

his

implementation

just

has

you

know

64

bits

for

priority

and

just

names

his

priorities

after

his

stream

IDs.

I

Talk

about

the

priorities

and

then

make

my

other

point

too.

So

I

think

in

terms

of

luke's

scenario,

you

don't,

if

you

really,

all

you

want

is

to

switch,

you

know,

your

mode

from

FIFO

to

LIFO.

Maybe

the

easiest

way

to

do

that

is

have

a

single

message

that

goes

over

your

web

transport

application