►

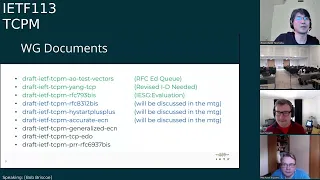

From YouTube: IETF113-TCPM-20220323-1330

Description

TCPM meeting session at IETF113

2022/03/23 1330

https://datatracker.ietf.org/meeting/113/proceedings/

A

A

B

A

A

Okay, hello, everyone. I think I can hear you and you can hear me. This is the TCPM working group meeting, the TCP Maintenance and Minor Extensions working group, and this working group has three co-chairs. My name is Yoshi Nishida, and we have Ian here and Michael here, everyone remote. Just in case: this session is being recorded, so it will be published eventually. If you are remote, make sure you have already cleaned your room, otherwise it will be published.

A

Okay, and this is the usual Note Well. I think you have seen this several times already. In a nutshell, it describes how to participate in and contribute to the IETF, and which points you should be aware of. If you have any concerns about participating in or contributing to the IETF, please read this slide very carefully. You can find the same content on the IETF web page; you can search for it and find it very easily.

A

A

A

A

A

And this is the agenda for today's meeting. We start with the working group status, and after that we have three presentations on working group documents. First, Praveen will talk about the HyStart++ draft, and then Vidhi will talk about the CUBIC draft. After that, Bob will talk about the AccECN updates. After the working group document presentations, we have three presentations on non-working-group documents.

A

C

Thank you, yeah. I'm really excited. Obviously I'm extremely familiar with QUIC and with congestion control work like BBR and such, and I'm excited to get better visibility into the innovations in TCP and to help wherever I can to make sure that TCP is as awesome as QUIC.

A

A

This draft has been on hold for a while, and the reason is that this draft depends on Accurate ECN, and we have had a very long discussion on the AccECN draft. That's why the Generalized ECN draft has been suspended for a long time. But I think AccECN is now getting mature and is close to being finalized, so I think we can start proceeding with the Generalized ECN draft very soon. That is the expectation.

A

And for the EDO draft, Joe sometimes updates the draft and sometimes sends a message to the mailing list, but the problem with this draft is that we don't get much feedback on it, so it's very difficult for the chairs to think about how to proceed with it. I would like to talk about this draft a little bit more on my final slide, so I will come back to it later. And the final one is the 6937bis draft, and this draft has been inactive for a while.

A

E

So this is Richard, just a general observation. I don't think we need to wait with Generalized ECN on any of the other stuff, really quite the opposite. I would really appreciate it if Generalized ECN would proceed. Perhaps we can even start thinking about going to working group last call because, quite frankly, I don't see any technical discussion going on around that, while there is also technical merit to be had from Generalized ECN.

A

F

G

H

A

A

A

So this is my final slide. I'd like to talk about the EDO draft a little bit more. As I mentioned, the problem with this draft is that we have very little feedback on it. What I'm thinking right now is that we could have some specific reviewers for this draft; if we can get very detailed feedback on the draft from the reviewers, we will have a very good idea about how to proceed with it.

A

A

Okay, yeah. If not, after some discussion among the chairs, we might contact some of you to ask for a review; that's the plan. Also, if you have some implementation experience with the EDO draft, or if you plan to implement it, please tell us: that kind of information will also be very useful for us in thinking about how to proceed with this draft.

A

So we appreciate your cooperation. In the meantime, I would also like to think about how to proceed with SYN option extension. Joe has one proposal about SYN option space extension, and the problem with this draft is also that we have very little feedback on it. Joe is saying that he is thinking about submitting this draft to the Independent Stream, but I'm not sure this is a great idea.

A

One reason is that option extension is very fundamental to the TCP spec, so I'm not sure whether having this kind of proposal in the Independent Stream is a great idea or not. But we still don't have specific feedback on this point, so we don't know whether this is okay or not, or whether we should adopt it because it is a great idea. We would also like to think about how to extend option space in general, not only about those specific proposals.

A

A

I

Yeah, I would say that options extension in general, and in the SYN especially, is something I've been interested in for a long time. Ideally, I think the working group would handle this and arrive at a consensus solution. I think the Independent Stream path is a last resort, if the working group doesn't have the interest or energy to do anything.

A

J

Hi, Martin Duke, Google. Yeah, I agree with Wes. It would be nice to get this done. If it's a question of bandwidth, where people want to review it but there are too many other things in TCPM right now, then maybe we can make a commitment to, I don't know, adopt it and then clear some space so that people have the time. Or if people just aren't interested, that's maybe a different issue. So I'm not sure what the nature of the reluctance is.

E

So this is Richard. From my observations, a couple of years ago we had this push to get more option space, and it was quite high on the agenda. But since then we didn't really find anything that had an immediate need for extended options right away.

E

However, I would support what Martin just said. I think we should discuss adopting this and having it on the working group's agenda, because eventually it will come up again, and then it will be more urgent than ever. At least at that time, I would expect people will have some bandwidth to contribute and review.

E

J

So I do want to clarify that I was asking a question, not making a statement: is the lack of reviews indifference or bandwidth? If the question is bandwidth, then I think we can make time for this and maybe reduce our document intake or whatever, and then put it as, like, the last milestone, the furthest-out milestone. Whereas if people just don't care, then we shouldn't string Joe along. Thanks.

K

K

K

At that point, we got some feedback and we started looking into jitter resiliency, and we ended up simplifying the algorithm a lot. Instead of trying to compensate for an early exit from slow start, we added detection of spurious exits to be able to resume slow start. So imagine a network where there is a lot of jitter: a delay-increase algorithm would sometimes kick in spuriously and cause us to exit slow start. At that point we enter something called the Conservative Slow Start phase.

K

So what is Conservative Slow Start? It is basically a series of rounds where we are trying to determine whether the exit was spurious due to a delay spike. The way we do that is: when we enter CSS, we capture the current round's min RTT at that point, and then for a series of rounds we see whether any of the delay samples are lower than the captured RTT.

K

That tells us whether the delay increase was spurious or not. If we determine that the exit was indeed spurious, we basically resume HyStart++, which means regular slow start resumes as well as the detection of delay increase, and the entire algorithm is basically reset and restarts. The advantage of this is that if the network does have jitter, slow start will still give you good performance and an exponential window increase.

K

K

Sorry for the small font size here, but this is the summary of the current algorithm in draft version 04. Basically, in slow start we adjust the congestion window according to RFC 5681, but we take RTT samples. Each round is approximately an RTT; we remember the last round's min RTT, we compute the current round's min RTT, and we use a threshold, for which there is a lower clamp and an upper clamp.
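
As a rough illustration of the clamped threshold described above, here is a minimal Python sketch. The three constants and the `>=` comparison are assumptions recalled from the published HyStart++ specification; the talk itself only mentions "a lower clamp and an upper clamp", and the function names are made up for this example.

```python
# Sketch of a clamped delay-increase threshold (not the draft's normative text).
MIN_RTT_THRESH = 0.004   # lower clamp in seconds (assumed value)
MAX_RTT_THRESH = 0.016   # upper clamp in seconds (assumed value)
MIN_RTT_DIVISOR = 8      # fraction of last round's min RTT (assumed value)


def rtt_thresh(last_round_min_rtt: float) -> float:
    """Take a fraction of last round's min RTT and clamp it to both bounds."""
    fraction = last_round_min_rtt / MIN_RTT_DIVISOR
    return max(MIN_RTT_THRESH, min(fraction, MAX_RTT_THRESH))


def delay_increased(current_round_min_rtt: float, last_round_min_rtt: float) -> bool:
    """True when this round's min RTT exceeds last round's by the threshold.

    The comparison operator (>=) is an assumption; the talk says "greater than".
    """
    return current_round_min_rtt >= last_round_min_rtt + rtt_thresh(last_round_min_rtt)
```

With these assumed values, a 10 ms path uses the lower clamp (4 ms), an 80 ms path uses 10 ms, and a 400 ms path is capped at 16 ms.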

K

But basically, if the current round's min RTT is greater than the last round's min RTT plus the threshold, then we exit slow start. We also capture something called the CSS baseline RTT. That's the RTT I was talking about that will help us determine whether the exit was spurious or not. Then the Conservative Slow Start phase lasts a few rounds; currently, the draft recommends five rounds based on experimentation. And then for each ACK in CSS.

K

D

D

K

The exit was actually spurious and we just resume slow start and HyStart++. But if we complete all of CSS, all those rounds, and we don't see the min RTT go lower than our captured RTT, then we basically enter congestion avoidance. So that was actually a correct exit, and there is a consistent delay increase.
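
The Conservative Slow Start decision described above can be sketched as a small per-round check. This is only an illustration under stated assumptions: the phase names, the `CssState` type, and the choice to compare once per round (rather than per RTT sample) are invented for the example; the five-round limit is the value mentioned in the talk.

```python
from dataclasses import dataclass

CSS_ROUNDS = 5  # the talk says the draft currently recommends five rounds


@dataclass
class CssState:
    baseline_min_rtt: float  # min RTT captured when CSS was entered
    rounds_done: int = 0     # completed CSS rounds so far


def on_css_round_end(state: CssState, round_min_rtt: float) -> str:
    """Return the phase to be in after one CSS round completes."""
    if round_min_rtt < state.baseline_min_rtt:
        # Delay dropped below the baseline: the exit was spurious,
        # so regular slow start (and delay-increase detection) resumes.
        return "slow_start"
    state.rounds_done += 1
    if state.rounds_done >= CSS_ROUNDS:
        # The delay increase persisted for all rounds: a correct exit.
        return "congestion_avoidance"
    return "css"
```

A caller would create a `CssState` with the captured baseline on entering CSS and call `on_css_round_end` once per round with that round's min RTT.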

K

K

So we did make a few changes in the draft. I want to bring up a couple of things that were raised on the mailing list. Randall had proposed that, instead of setting the CSS baseline RTT to the current round's min RTT, it be set to the last round's min RTT plus the RTT thresh. We did experiment with the idea, but it showed poor performance when jitter was present.

K

So I think it was an optimization for when there's no jitter, but in the presence of jitter it did not work well. And I think Neal had suggested that we use a different mechanism for determining the window end, basically using strictly greater than instead of greater than or equal to. I think his comments were aimed at cases where we could be app-limited, but we found that this also gives an inaccurate computation of the end of a round in many cases. So we did not make this change.

K

So the only logic change that we made in draft 04 was removing the dependency on the low ssthresh. At this point, the only trigger for computing and measuring the RTT values and looking at delay increases is basically that enough RTT samples have been taken in the round, which currently.

O

B

K

So at this point we have addressed all the outstanding reviews. I think we got reviews from Bob, Jeremy, and Neal so far, and we answered questions on the mailing list as well, which came in from Randall and Neal. To our awareness, there are at least three implementations in production use. The Windows TCP CUBIC implementation has been using this for more than two years, I think maybe more than three years at this point. Cloudflare's QUIC library, quiche, uses it in.

P

P

P

K

P

A

A

A

K

D

D

D

So I have captured some of the updates in these slides, but there are many more. These are just some of the updates that I thought would be interesting to include in the slides. Everything that has been changed, including the ones that I have not added here, is present on the GitHub.

D

D

So, since the last meeting we made some changes, and I would like to discuss detecting spurious congestion events and reacting to them. We have now categorized them into two kinds of events: one is spurious timeouts, and the other is spurious loss detected by acknowledgments. The first one is relevant for TCP, and there's already a Standards Track RFC that specifies the response for restoring the congestion window and for adapting the timer to avoid any future spurious timeouts.

D

So there's RFC 4015 that specifies all of that. Then we have the spurious losses detected by acknowledgments. This is relevant for both TCP and QUIC. In TCP you can use timestamps and duplicate SACKs to detect these. For QUIC it's quite easy: you can detect it by seeing any packets that were marked lost but were acknowledged after they were marked lost. So that's a spurious loss. And then for these events.
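
The QUIC-style detection mentioned above (a packet is acknowledged after it was already marked lost) can be illustrated with a small bookkeeping sketch. The `LossTracker` class and its method names are hypothetical and not taken from any QUIC library or from the draft; the sketch only detects the event and leaves the congestion response out.

```python
class LossTracker:
    """Remembers packet numbers declared lost and flags late acknowledgments."""

    def __init__(self) -> None:
        self.declared_lost: set[int] = set()
        self.spurious_losses = 0

    def on_packet_declared_lost(self, packet_number: int) -> None:
        self.declared_lost.add(packet_number)

    def on_ack(self, acked_packet_numbers) -> list[int]:
        """Return the acked packets that had already been declared lost."""
        spurious = sorted(pn for pn in acked_packet_numbers if pn in self.declared_lost)
        for pn in spurious:
            # The packet did arrive, so it is no longer considered lost.
            self.declared_lost.discard(pn)
        self.spurious_losses += len(spurious)
        return spurious
```

A non-empty return value from `on_ack` is the signal that the earlier loss declaration, and any congestion response taken for it, was spurious.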

D

So you just have to be a little careful with that. Then Neal actually made the next update, clarifying the meaning of application-limited. There was some question about what application-limited means, and we have added text clarifying that. And then there was a request or question from Yoshi about RFC 7661.

D

D

It's looking at how many bytes are acknowledged in an RTT, in one, two, or three consecutive RTT rounds. Because it's based on the pipe value, which is what is acknowledged in an RTT, it kind of validates the receive window, because you always check the receive window before sending the data. So we have added text to make it a little clearer why it is safe to use even when the congestion window grows beyond the receive window.

D

B

P

Yeah, Gorry. First, I was going to talk to the previous slide and just say I'll read the text again. I think I was happy with what was done on the 7661 thing; I think they did the right thing for this document, so I don't see that as an issue. I'll read the text carefully, I promise, and give feedback if I see anything. So, from my point of view, let's look at the last slide.

A

Q

D

A

L

L

Thing means that the IETF cannot do this before CUBIC undergoes all of this evaluation. And while I was instrumental in establishing this process, and so I wish that deployment had happened that way, it didn't, right? People deployed CUBIC and it worked well enough, and more people deployed CUBIC, and now it's basically ubiquitous. I sort of see two ways forward, right? Either we can acknowledge that and say,

L

Well, you know, this didn't happen in the way we ideally would have envisioned it to play out, but the reality is that it is running the traffic on the Internet, and therefore maybe it should be PS. Or we can say we're not willing to do that and we're going to stick with NewReno. Personally, obviously, I'm very biased here, because I think this should be a published standard.

L

But I think it would be good to sort of... That seems to be the fundamental objection, right? That it didn't do what we expect, specifically of new congestion control algorithms, which it arguably isn't really either, but the process that we expect this stuff to undergo, it didn't follow that, right? I will point out.

P

P

This is not totally consistent with other RFCs, but it is running code. It is out there. It is the consensus of what to do, and that's what this document is. If we have a process for doing that, then I think we should go that way. If we don't, then I think we need to invent one, because we need to get this document published.

P

L

L

But we're now in a situation where the people who work on new congestion control schemes actually are in control of endpoints directly, which wasn't the case back then, when it was mostly academic work that smart people did, but they didn't have the ability to deploy at scale, which we now have, right? So it's a very different world now, 15 years later, where we actually have the ability to do A/B testing over the real Internet and get data back, and all of that.

L

A

A

This is Proposed Standard, and I also think there is some kind of aggressiveness in the algorithm described in the draft. I don't find a particular reason why we are attacking CUBIC specifically. There are several drafts, and all drafts should be treated equally; otherwise it's not very fair. So if we set a very high bar for the CUBIC draft, I think we should set it for all others of that kind.

A

D

K

If I were writing a new TCP implementation, or even a QUIC implementation, having CUBIC as the standard to implement would make sense, because we know the limitations of NewReno, right? So it doesn't make sense for new implementers not to find this as a Proposed Standard RFC. That's my take on it as an implementer. If I was looking.

K

D

A

J

Just very quickly: I feel like we're getting maybe away from the 8312 discussion a bit, but regarding the gates for congestion control, the bar that we are setting, it feels like that bar is now essentially high enough that the correct way to deploy new congestion control is to go work for one of those companies that Lars mentioned, deploy at scale, and then come to the IETF and say, "See, it works," which maybe is not.

J

S

A

A

A

A

A

A

A

A

N

E

D

A

L

Out of the queue. Yeah, I just wanted, for the authors' team, to ask, because we have had the second working group call now, right, whether people are okay with publishing the version as is; that would be useful to know. And if the answer there is no, it would be very good to know what exactly should be added to the document.

L

I know that Gorry suggested earlier that we add a paragraph that said this document didn't follow the process outlined in whatever the RFC was, but that the IETF discussed this and, I want to say approved, but at least acknowledged that it's not standing in the way of this publication, or something like that. If there are other things that people want to add, that would also be good to know.

L

I would like to ask the chairs, for these suggested edits, to ask a bit more actively whether there's consensus for each addition. Otherwise we are sort of again in a situation where there's this grab bag of things that people want to add, and we don't really know what has consensus and what hasn't. Thank you. Okay.

J

If I recall the discussion, unless I missed something, the only person who came to the mic and said it should be Experimental was Lars, attempting to be a sock puppet for Markku, kind of trying to express his opinion without him being here. So if either of the one or two people who support Experimental rather than Proposed Standard could actually say something about it, I think it's customary at least to give them an opportunity to state their position.

J

A

A

D

Q

Okay, so I did not want to make this complicated, and I haven't followed the last emails from Markku anymore, but I remember that there were things about having to update, probably, RFC 3168 about something, and some procedural things that he had heavy disagreement about. Now, I don't remember if that was all resolved or not. And, I mean, okay, I'm fine with publishing without having that thing in. I don't want to make it complicated.

Q

I really don't. But if it's possible to state why there is a procedural problem here and why that thing gets published nevertheless, other than just saying "okay, it's published because there is code and it is deployed", I mean, if we could be a bit more elaborate than just saying something's fishy about this RFC, then I think this would be valuable. If we cannot, I'm not going to make a point about that. I mean, it's okay. I think the primary thing is that this should be a PS.

A

F

A

D

A

F

F

A

A

F

F

Next. Okay, so, recent draft history: there was a long hiatus after July '21, but then, like all good buses, they come in threes, and so in the February-March time frame we've had three. There are links there to the summaries of the diffs in each of them, which I'm not going to go through in these slides, but there are spare slides at the end that do. And thanks to Ilpo, Vidhi, Gorry, Richard, and myself, actually, for noticing some unclear parts.

F

F

Just briefly, news on implementation: there are two main implementations, one in Linux and one in FreeBSD. The Linux one is reasonably up to date; it's a couple of draft versions out of date, but there aren't that many things to do. Richard has been implementing in FreeBSD; there's a link to his implementation there.

F

F

F

So there are a number of cases where you might not send ECN-capable packets, because there's a handshake at the start that checks whether there's any mangling going on. If there is mangling, you still remain in AccECN mode, but you don't set ECN-capable on the packets that you send out. But then Vidhi wondered: well, even if you're not sending ECN-capable packets, what if you get congestion marking feedback coming back? And there are three possible ways.

F

In all cases, you would turn off sending ECN-capable packets, but there's a difference in how we think you should respond to congestion feedback if it comes in, as shown in the right-hand column, and we'd like some discussion, either now or on the list, of whether the rationales for these make sense. So the first one is if, during the handshake, you see some sort of illegal transition going on, like you send a non-ECT packet and you get back feedback.

F

F

F

If you see any feedback, even though you wouldn't expect to see it, right. In the B case, if you see continuous congestion feedback, then you don't respond to it, especially if you're not sending ECT; but even if you are, that probably implies there's mangling going on that's asserting congestion experienced all the time. And the third case, C.

F

F

There were just two cases where I changed lowercase "recommended" to uppercase, and I think they were justified. One is where it already said in the introduction that it's recommended to implement SACK and ECN++ with AccECN, and I made that uppercase. Similarly, there was one saying it's strongly recommended to test the path traversal of the AccECN option, and given it already said "strongly", I figured it was quite reasonable to make it normative there. But if anyone wants to object to that, please do so on the list or now at the mic.

F

F

We've just been conservative. And the third case is a bit of fallout from when we changed the TCP option, the AccECN TCP option, so that we had two different TCP option orders. If you remember, we went through that episode of changing it, and it was noticed that we hadn't changed the initialization of the two fields from how it was originally, when we only had one type of option, so we'll change that. Okay, next slide.

F

We ought to update that advice as well, but there is an argument that the advice in RFC 3449 isn't normative, so maybe we're not updating it. So I don't mind going with what Gorry says, but maybe other people want to chip in on that point. Whatever happens, we're going to try and change the text to outline the problem and discuss possible ways forward, without recommending any changes and without updating 3449.

F

Through the experience of Richard's implementation and Ilpo's, we found that actually sending the AccECN option is the easy part of the code, and handling the receipt of it is the difficult part. Whereas the draft currently says that if you don't want to implement the AccECN option, or if you don't want to send it, at least consider receiving it, we want to change that round, too.

F

Other than dealing with the upcoming changes, one of which is still working through that filtering section with Gorry and then writing text for that: please could the people who asked for the recent changes just confirm on the list whether they're okay or not. Otherwise, I think we're ready for working group last call and, as I mentioned earlier, Generalized ECN depends on this one.

H

F

F

So I definitely don't think this contravenes that principle. But if you've said this on the list, I can respond there when I've fully understood what you mean. On the other case, I also don't see how the ACK rate request relates to Generalized ECN. It seems that Generalized ECN is a different thing: it's trying to make control packets ECN-capable, and I don't see how that relates directly to the ACK rate, but.

P

Okay, thanks for the RFC 3449 proposal on the list; I'm very happy with the direction that's going. I'm sure the rest of the document's pretty ready. The one thing I objected to when reading, which you did not pick up on, was the "strongly recommend". I'm not sure our AD would be that happy having a "strongly recommend".

A

B

A

C

M

M

Okay, thank you. My name is Carles Gomez, and I'm going to present the latest update of the draft entitled "TCP ACK Rate Request (TARR) Option". My co-author is Jon Crowcroft from the University of Cambridge. First of all, let's take a look at the motivation for this draft. Delayed ACKs is a widely used mechanism which is intended to reduce protocol overhead.

M

However, in some cases it may also contribute to suboptimal performance. We can identify two types of scenarios here. The first is the so-called large congestion window scenarios, that is, when the congestion window size is much greater than the MSS, where saving up to one of every two ACKs may be insufficient.

M

Then there are also so-called small congestion window scenarios, that is, with a congestion window size up to the order of around one MSS. For example, this would be in data centers, where the bandwidth-delay product is up to the order of one MSS, and in this case delayed ACKs will incur a delay much greater than the RTT; and also in transactional data exchanges, or when the congestion window has decreased. The ability to request immediate ACKs may help avoid idle times and allow faster congestion window growth. So, about the status of the draft.

M

So, let's go through the updates in -03. The first one is in the main format of the option. Previously we had a six-byte format, and now it's just five bytes. Basically, we have removed the field called N. This was intended so that, when R was set to zero, the sender could request immediate ACKs for the next N segments. However, it was found that this was mostly redundant: it's possible to produce the same effect without an explicit field called N. And then also in the new format.

M

The

size

of

the

r

field,

which

corresponds

to

the

ack

raid

requested,

has

a

size

of

six

bits

and

also

there's

one

new

bit,

which

would

be

at

the

moment

reserved.

This

is

called

v

so

for

the

r

field

there

there

are

like

two

possible

encodings

on

the

table.

The

first

one

would

be

like

the

straightforward

approach,

which

is

the

binary

encoding

of

the

requested

ack

rate

by

the

maximum

value

of

r.

Since

we

have

64.
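The five-byte layout described here, with a six-bit R field and a one-bit V field, can be sketched in a few lines. This is only an illustration under stated assumptions: the option kind, the experiment identifier value, the function names, and the position of R and V inside the last byte are hypothetical and are not taken from the draft.

```python
import struct
from typing import Tuple

# All constants below are illustrative assumptions, not values from the draft.
OPTION_KIND = 254            # a shared experimental TCP option kind (RFC 6994)
HYPOTHETICAL_EXID = 0x00AC   # placeholder 16-bit experiment identifier
OPTION_LENGTH = 5            # Kind + Length + ExID (2 bytes) + 1 byte for R and V
R_MAX = (1 << 6) - 1         # a six-bit field can carry values 0..63


def encode_option(r: int, v: int = 0) -> bytes:
    """Pack R (six bits) and V (one bit) into a five-byte option.

    Assumed bit layout of the last byte: RRRRRRV0 (lowest bit unused).
    """
    if not 0 <= r <= R_MAX:
        raise ValueError("R must fit in six bits (0..63)")
    if v not in (0, 1):
        raise ValueError("V is a single bit")
    last_byte = (r << 2) | (v << 1)
    return struct.pack("!BBHB", OPTION_KIND, OPTION_LENGTH,
                       HYPOTHETICAL_EXID, last_byte)


def decode_option(option: bytes) -> Tuple[int, int]:
    """Return (R, V) from a five-byte option built by encode_option."""
    kind, length, exid, last_byte = struct.unpack("!BBHB", option)
    if (kind, length, exid) != (OPTION_KIND, OPTION_LENGTH, HYPOTHETICAL_EXID):
        raise ValueError("not the option this sketch understands")
    return last_byte >> 2, (last_byte >> 1) & 1
```

With a plain binary encoding like this one, six bits limit the requested rate to 63, which is the maximum value discussed later in this session.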

M

M

First,

we

state

that

a

TARR-option-capable

receiving

TCP

SHOULD

modify

its

ACK

rate

to

one

ACK

every

R

received

data

segments

from

the

sender,

and

this

used

to

be

a

MUST

in

previous

versions.

However,

it's

been

modified

to

SHOULD,

because

actually

there

may

be

several

reasons

why

a

receiver

might

not

be

able

to

satisfy

the

request.
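The receiver behaviour stated here, one ACK for every R received data segments, can be sketched as a simple counter. This is a simplified model under assumptions: the class and method names are hypothetical, the default of one ACK every two segments stands in for ordinary delayed ACKs, and a real receiver may decline the request for the reasons mentioned above.

```python
class AckRateReceiver:
    """Toy model of a receiver that honours a requested ACK rate."""

    def __init__(self, default_rate: int = 2) -> None:
        self.rate = default_rate   # send one ACK every `rate` data segments
        self.pending = 0           # data segments received since the last ACK

    def on_rate_request(self, r: int) -> None:
        # Assumption: only requests with R >= 1 change the rate in this sketch.
        if r >= 1:
            self.rate = r

    def on_data_segment(self) -> bool:
        """Count one data segment; return True if an ACK should be sent now."""
        self.pending += 1
        if self.pending >= self.rate:
            self.pending = 0
            return True
        return False
```

For example, after `on_rate_request(4)`, every fourth call to `on_data_segment()` returns `True`.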

M

So

I

don't

know

if

there

may

be

comments

on

this.

Also,

there

was

another

suggestion

to

aggregate

this

option

with

others

as

in

Yoshi's

draft,

and

another

clarification

is

that

a

tcp

segment

carrying

retransmitted

data

is

not

required

to

include

TARR

options.

So

if

the

original

segment

carried

the

TARR

option,

the

retransmission

is

not

required

to

also

carry

the

same

TARR

option,

and

then

there

have

been

several

comments

about

the

ignore-order

feature

here.

This

was

suggested

once

in

a

previous

tcpm

meeting.

M

The

idea

behind

the

feature

would

be

to

allow

a

sender

to

tell

the

receiver

that

it

has

some

tolerance

of

out-of-order

data

packets.

So

then

it's

not

necessary

for

the

receiver

to

send

immediate

ACKs

when

there

are

reordered

data

packets.

However,

it

seems

like

the

benefit

that

is

seen

from

this

feature

is

not

so

clear

or

not

so

significant,

so

the

proposal

that

we

have

like

on

the

table

for

the

next

update

of

the

draft

would

be

to

just

remove

the

feature.

J

J

M

M

A

M

Yeah,

I

don't

know

if

maybe

Bob

would

like

to

reply

himself,

but

it

seemed

like

some

people

mentioned

that

the

maximum

value

of

63

could

be

fine.

However,

Bob

considered

like

the

future,

so

when

this

option

might

be

used

several

years

from

now,

and

perhaps

the

capacity

of

links

changes,

and

so

on.

So

I

don't

know

if

maybe

Bob

would

like

to

also

add

something.

G

A

P

M

Yeah

thanks

for

the

consideration,

so

perhaps

the

point

that

Bob

had

was

considering,

maybe

not

today's

scenarios,

but

maybe

future

scenarios.

So

the

idea

was

being

able

to

support

like

larger

values

but

yeah.

That

may

be,

of

course,

something

to

take

into

account

that

also

a

very

large

value

may

have

issues,

as

you

just

explained.

Thank

you.

Yeah.

A

A

N

Okay,

hello,

this

is

pony

from

China

Mobile

and

I'd

like

to

present

the

Multipath

TCP

robust

session

establishment,

and

since

this

work

has

been

presented

in

the

past

years,

I'd

like

to

have

a

recap

of

it.

First,

this

document

wants

to

solve

the

problem

of

connection

setup

failure

that

is

essentially

caused

by

establishing

a

connection

only

on

default

paths

with

unknown

path.

Letters

when

we

use

a

multipath

protocol,

the

session

will

be

established

in

a

sequence

and

the

default

path

is

selected

as

the

first

one

by

default.

Usually,

maybe

Wi-Fi.

N

If

there

is

no

wi-fi

signal,

4G

or

5G

access

will

be

selected.

However,

when

the

wi-fi

signal

is

bad

and

the

delay

is

large,

the

default

path

can't

be

established,

which

will

also

affect

the

link

of

the

second

connection.

So

this

problem

is

a

real

one

and

has

been

occurring

during

multipath

protocol

deployment

and

implementation.

N

N

It

is

a

set

of

extensions

to

regular

MPTCP

and

MPTCP

version

1

and

is

designed

to

provide

a

more

robust

establishment

of

MPTCP

sessions.

It

has

four

methods,

including

TIMER,

SIM,

eSIM

and

IPS.

It

also

presents

a

design

and

the

protocol

procedure

of

the

combination

scenario.

In

addition

to

these

standalone

solutions,

for

example,

the

combination

of

SIM

and

IPS

and

the

combination

of

TIMER

and

IPS.

So,

a

very

short

recap

of

those

methods.

N

Resilience

against

network

outage

is

achieved

by

modifying

the

SYN

retransmission

timer.

If

one

path

is

defective,

another

path

is

used.

The

SIM

one

provides

the

ability

to

simultaneously

use

multiple

paths

for

connection

setup

and

RST

is

used

to

terminate

connection

setup

on

other

paths

when

connection

has

been

established

on

the

first

path.

There's

also

eSIM,

which

provides

the

ability

to

simultaneously

use

multiple

paths,

and

MP_JOIN_CAP

is

used

for

decreasing

overhead,

merging

all

simultaneously

established

paths

without

a

join

process.

N

So

this

draft

has

been

presented

several

times

from

IETF

106,

and

the

last

presentation

was

in

IETF,

for

the

iso

is

negotiating

with

chairs

the

possibility

for

getting

rid

of

the

ipr

blocking

issue

towards

adoption

of

it.

So

the

criteria

is

something

that

authors

can

work

on

by

talking

to

the

other

people

who

need

the

publication

of

the

unwanted

the

update.

For

instance,

a

presentation

from

the

network

operator,

rather

than

dealing

with

test

results

of

the

suggested

mechanism,

would

be

interesting

input

to

the

working

group.

N

So

in

this

case

I

found

this

work

was

really

valuable

and

also

planned

for

the

joint

testing

work,

but

due

to

COVID,

it

is

too

hard

for

us

to

find

a

place

to

do

it.

So

I

checked

the

test

before

and

it

showed

the

obvious

effect

and

efficiency

of

this

method

and

there's

also

the

three

start

from

106.

You

can

see

the

demo

and

the

hackathon

will

be

done

in

N

IETF

107

and

108,

so

I

just

reviewed

the

overall

work,

the

draft,

hackathon,

demo

and

the

ipr

disclosures

with

the

license.

This

draft

is

mature

enough

and

complete.

I

wish

my

joining

without

ipr

issue

could

help

to

promote

this

work.

So

I'd

like

to

ask

if

we

could

call

for

adoption

of

this

draft

thanks

and

any

comments.

C

A

A

This

is

yeah

good

things

having

support

is

very

good

thing,

but

what

we

really

expect

is

you

know

showing

some

experience

or

operational

experience

by

implementation

experience,

and

then

you

can

demonstrate

that

this

is

a

great

idea

and

then,

at

the

same

time,

since

you

are

not

also,

we

can

know

how

you

deal

with

the

ipr

issues

and

that

kind

of

information

will

be

very

useful

for

us

to

think

about

how

to

proceed

around,

but

just

supporting

is

just

one

step,

but

we

need

more

steps.

That's

my

comment.

G

So

you

mentioned

that

the

idea

can

also

be

applied

to

MP-DCCP

and

MP-QUIC.

Doing

connection

setups

simultaneously

or

via

timers

is

something

which

is

done

in

SCTP,

though,

so

there's

prior

art

for

other

protocols.

Does

your

IPR

also

cover

MP-QUIC

and

MP-DCCP,

or

is

your

IPR

specific

to

MPTCP?

G

A

Okay,

yeah,

I

think,

as

I

mentioned,

that

you

know

showing

support

from

you

is

very

good

and

for

the

draft,

but

it's

still

a

little

difficult

for

you

know.

We

can

think

this

is

ready

for

working

group

adoption

because,

as

I

mentioned,

no,

I

want

to

see

more

broad

support

or

you

can

show

some

more

solid

test

results

or

implementation

experiment.

Then

you

can

convince

people

that

this

is

a

good

item

for

this

working

group.

Right

now,

it

was

just

one

support,

so

I

think

we

need

more

supports

or

more

experiments.

O

Okay,

we'll

try

to

make

it

a

bit

faster

than

planned

to

leave

some

room

for

a

discussion,

because

that's

really

the

intent

of

presenting

our

work

here

in

the

TCPM

so

yeah.

First,

I

would

like

to

thank

TCPM

for

giving

us

the

opportunity

to

present

this

draft

for

the

first

time

within

the

IETF.

So

this

draft

is

called

TCPLS:

Modern

Transport

Services

with

TCP

and

TLS,

and

you

might

be

wondering

whether

TCPLS

is

an

acronym.

O

O

So

those

were

the

main

benefits

of

MPTCP,

but

it

also

had

some

issues.

So

the

address

exchange

mechanism

was

not

really

secure,

although

it

improved

in

v1

and

it

used

tcp

and

the

tcp

options

which

are

prone

to

middlebox

interference,

and

so

that

really

drove

the

design

of

mptcp

and

made

the

design

a

bit

more

difficult

to

make.

So

MPTCP

also

proved

to

be

maybe

difficult

to

implement,

and

I

think

we

can

take

the

seven

year

journey

from

this

v0

specification

to

mainline

linux

as

an

example.

O

As

an

example

of

that,

of

course,

many

things

happen

in

that

time

frame.

But

still

it's

a

long

time,

so.

Another

important

protocol

that

was

more

recently

designed

is

QUIC,

which

started

in

2016

with

the

goal

of

designing

a

UDP-based

transport

protocol

and

of

which

the

first

version

of

the

protocol

was

shipped

last

year,

actually,

and

it

enabled

stream

multiplexing

without

head-of-line

blocking

and

connection

migration

and

failover

as

well.

O

So

I

think

an

important

point

that

we

need

to

look

at

today

is

that

we

know

that

tls

is

the

most

used

protocol

atop

tcp

and

the

latest

version

is

even

expanding

the

use

of

encryption

to

extend

the

tls

protocol

with

encrypted

tls

records

and

encrypted

extension

and

together

with

the

fact

that

tcp