From YouTube: IETF113-NMRG-20220324-0900

Description

NMRG meeting session at IETF113

2022/03/24 0900

https://datatracker.ietf.org/meeting/113/proceedings/

B

Jérôme and myself, the co-chairs, are participating remotely, and we have a so-called local backup, Diego Lopez, whom you can see in the room sitting next to the screen. So in case there are any issues, or we need to handle anything happening in Vienna, we will rely on Diego for that. But the meeting will happen in Meetecho, seeing that everyone is connected here. We will use the queue from Meetecho, and we will synchronize with the queue in the room.

B

So this is the NMRG meeting for IETF 113, and we need to go through a few Note Well slides before we go into the agenda. On intellectual property: the IRTF follows the IETF intellectual property rights disclosure rules. By this we mean that, by participating in the IRTF, you agree to follow IRTF processes and policies.

B

If you participate in person and choose not to wear a red "do not photograph" lanyard, then you consent to appear in such recordings. If you speak at the microphone, appear on a panel, or carry out any official duty as a member of IRTF leadership, then you consent to appearing in recordings of you at that time. If you participate online and turn on your camera and/or your microphone, then you consent to appear in such recordings.

B

On privacy and code of conduct: as a participant in or attendee to any IRTF activity, you acknowledge that written, audio, video and photographic records of meetings may be made public. Personal information that you provide to the IRTF will be handled in accordance with the privacy policy that you can find at the following link. As a participant or attendee, you agree to work respectfully with other participants.

B

So thank you for your attention during these introductory slides. Now we will officially start, let's say, the content of our meeting. Just before going forward, some online meeting etiquette: be aware that this session is being recorded. Please keep your audio muted and video off when not presenting or speaking. When speaking, please start by stating your name clearly; it's very important for the meeting minutes and for the audience. Thank you.

B

The main topic of discussion for today will be network digital twins. We have provisioned enough time, and we hope to have an insightful discussion on this emerging topic. We will have several presentations. The first one will be from Jordi and Albert, about how to build a digital twin, comparing different approaches to that; I am really looking forward to this presentation. Then we also have, from Shang, on behalf of the other co-authors, a presentation on the research group draft "Digital Twin Network: Concepts and Reference Architecture", and this presentation is in the slot.

B

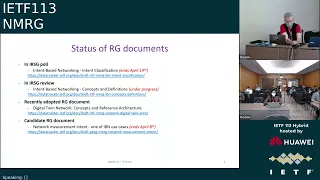

So in IRSG poll we have the intent-based networking intent classification document, so the IRSG members need to express their view on the document. This poll will end on April 14th, so it's coming pretty soon. The next step, if the outcome is positive, will be to proceed towards an IESG conflict review, so this is progressing towards RFC publication.

B

The second document is in IRSG review; this is the step just before the IRSG poll. It is the intent-based networking concepts and definitions document. This is still in progress: we received the review from the IRSG member, and this is going through interactions with the co-authors to address the comments and then be able to proceed.

B

We have also recently adopted a research group document, "Digital Twin Network: Concepts and Reference Architecture", so we will have more insight on this document during the technical topic later on in this session. Also, as you may have seen on the mailing list, we have started a call for research group document adoption for the document on network measurement intent, which is one of the IBN use cases of NMRG.

B

If not, let me continue. As you may have seen in the agenda, for the topic on IBN we will not go into an in-depth technical discussion, but we wanted to show that this topic is also progressing in a number of other groups beyond IRTF and IETF, and that there are more and more standardization, open source, and also research activities on this topic. This is just, let's say, a broad overview.

B

So what we have from other groups: for example, in the Linux Foundation, there is the ONAP project, where several IBN use cases are being developed as part of the different ONAP releases. At the link provided you can see, let's say, an overview of the different intent-based networking use cases appearing in the different releases, and you can also browse the ONAP wiki, looking for "intent-based".

B

You can find those different use cases and more details about who is involved and the exact topic. It's interesting to see that, for several years, ONAP has been continuously pushing to have intent-based networking use cases be part of the technologies developed in ONAP. In ETSI, there is the Zero-touch network and Service Management (ZSM) ISG, and Diego has recently been appointed as the new chair of this ISG.

B

So if you have any questions on this group, you can also turn to Diego. There are a couple of activities in this ISG related to intent-based networking. There is a group report, Group Report 11, which targets intent-driven autonomous networks. It's a study to try to understand the different definitions, techniques and mechanisms of intent-driven aspects in relationship to zero-touch management, and this document is triggering a lot of interesting discussions, so I invite you to try to read it. It's part of an open draft.

B

It's publicly accessible. There is also a proposal for a proof of concept on automation of an intent-based cloud leased line service. This is a proposal by members of this ISG about using the ZSM specification in the specific context of an intent-based cloud leased line service. In the ITU, you also have a focus group on autonomous networks, and in this focus group there is a set of activities.

B

These include use cases or PoCs also related to intent-based networking. Not all the activities are related to intent, but you can find a few of them. The main document you can find is the one on the PoC and proposals for the Build-a-thon, where you can see the latest teams willing to propose activities related to that as part of the focus group activities.

B

Finally, you also have the TM Forum Autonomous Networks project. They have an interesting set of documents specifically addressing intent-based networking, for instance IG1253, "Intent in Autonomous Networks". You can find the latest draft; I think you just need to have a login on TMF to access it. It's also part of a broader set of documents, which I will quickly show. This is an interesting development because, as you see, there are several aspects they really want to specify in relationship to intent.

B

So the document I was mentioning is the one here in the middle, but you see that they also want to go into more modeling aspects, different capabilities, API development, etc. These are ongoing activities as part of this Autonomous Networks project, which I think is a very interesting development. More on the, let's say, events or research side, there are also a number of activities ongoing; again, this is not exhaustive.

B

So I think this could be an interesting experience, also for research participants, to really have a more practical use of intent-based networks. There are also more and more papers and special issues in the literature. Again, this is just an extract of some of the recent papers, essentially published last year. As you can see, there are different special issues in different journals, but also some articles, either survey-type articles or dedicated approaches, giving a global view on intent-based networking.

B

We have received a draft on this notion. It's also linked to the AI document. There could also be two interesting projects, so we are trying to see how to organize a meaningful proposition from the research group to have a good discussion on this topic. We will see what will come, but we are trying to have something before the summer on this. As for the next, let's say, plenary meeting: currently we plan to go to the next IETF, but the feasibility remains to be seen.

C

So I know that this document has been here for a while. Just to make it clear, this is a shared document that we work on collaboratively. It is not a draft yet; it is just a Google document. Actually, we thought that it was better to work on it that way. Approximately, not exactly one year ago but almost, we decided to first freeze the challenges that we want to document in this document.

C

Actually, this was a temporary freeze because, of course, we are not saying that we will be exhaustive and have all the challenges; we may miss some of them, and new ones can also come out due to some context. But at least we wanted to freeze the list in order to really progress, because at that time we had some bullet points for challenges. We had a lot of ideas, actually, but nothing very strict.

C

So we presented this list, and then we asked some particular contributors, who were quite willing to take the lead with me, to try to consolidate all the input we got regarding the different challenges. We defined a template that you can see here. Basically, for each challenge we try to have some motivation; a very quick state of the art, not a big in-depth survey but at least a quick survey regarding the challenge; and, in particular, to highlight what the remaining problems are.

C

Because in many cases we have already tried to use AI for the challenges: it works, it does not work well, and so on. So we try to highlight what the remaining problems are, and maybe some really recent results or orientations that we could investigate. To be honest, I think it was quite successful, because we had a lot of challenges that were well documented, so I really want to thank all the contributors here.

C

And also the, let's say, editors that helped me to put that in a nicer way. At the time we had a quite detailed description of the challenges, with a lot of references, and it was very good to structure a bit the ideas that you put behind each challenge. There are only two challenges for which we didn't really have input. One is about acceptability, but it's actually what I would call a meta-challenge, because we have some sub-challenges that are also related to acceptability.

C

I don't think this is a big issue. The other one is about the human in the loop. We had a lot of discussions saying that we need to have the human integrated with AI, even in our domain, for operating networks, and we actually did not really describe this challenge at that time, and still have not today.

C

I will go a bit more into the details. Here is the initial list of challenges that we had in v3, so version three. As you may remember, we tried to categorize the challenges a bit. We had four criteria. One is more related to problems that relate to the AI technique itself. In other words, it's when, for example, we really need to work on the algorithm or method of AI to fulfill the needs of a network management problem or challenge we have.

C

We have identified a lot of problems related to data: access to data, or presenting data in a relevant way for our needs. We have also seen that there is actually one particularity of our domain, which is that in many cases we want to use AI not only to predict some value and so on, but also to really take decisions, or at least guide or help in decisions, or take actions.

C

This makes the focus and the constraints a bit different. We also had a lot of discussion regarding acceptability: what would be the obstacles to using AI for, let's say, operating networks? There are a lot of issues: we are used to having all our procedures, and we don't want to, let's say, let an AI automatically run the network, and so forth. We had a lot of discussion on that, of course.

C

We had this particular, let's say, criterion that I call a challenge, but I know that's not a good term. Anyway, we have this list; as you can see here, there are challenges from areas like AI, data management and so on. I will not go through each of them, of course; it's not the goal here. But then there were the reviews, what I call here the review.

C

Basically, the outcome of the review from v3 to v4 is that we observed that many of these challenges, which are mostly in, let's say, the first column here, are related to a single, let's say, main problem. Of course, a challenge may be somewhat due to data and maybe to AI techniques. Still, as you can see here, we had tried to somehow evaluate each of them.

C

It was all quite focused, and it does not really make sense to, let's say, force the challenges to be multi-criteria. Maybe it's good just to say that we focus on the main criterion that characterizes each challenge, and we try to organize the document a bit around that. Also, for the, let's say, AI for network management actions, it's actually mostly related to the AI technique that would run behind; it's some kind of, let's say, sub-level of the AI category.

C

What is really important to see here is that in the previous document, the v3 version, we had this list of challenges, which was actually a big table with one row per challenge described. Now we have restructured it a bit based on the different, let's say, categories. The first is more related to the AI techniques that need to be extended or worked on for network management, and here you see a list of five problems that basically correspond to the challenges that you had before.

C

One refers to the problem of how we can precisely define a network management problem to be able to find the right set of techniques, I mean AI techniques, that we could use to help us. One is about how we evaluate the performance of produced models, not only from an AI perspective.

C

We could, let's say, include network-specific metrics in the AI algorithm itself. There is also how we can have AI in the network, and the challenge of using AI for planning actions for operating the network, and finally distributed AI. I will go back to this a bit later, because actually we have it just as a placeholder now; we don't have a challenge really well described here.

C

Then we have all the things related to data. AI is driven by data, and in our case we may need specific data, or need to know how we collect data; this is also a problem. It's not only about using data, but about getting data, extracting knowledge and sharing data. All of that is now included in this part. Then we have the acceptability of AI: how we can explain the decisions to network operators, or how we can ensure that when we have an AI prototype working in the lab environment, we can move it to the real network.

C

We have removed some parts. We don't have the use case part any more. Just to recall, it has never been an objective for this document to list or detail use cases, and we really insisted before on not filling out this section until we are satisfied with, let's say, the description of the challenges. I think for now we could even skip this part; we don't need to have these use cases at this time.

C

Anyway, for each challenge we give some illustrative use cases or applications. Of course these are not detailed use cases, but this way the document is not completely theoretical. We still provide some examples to highlight and explain the different challenges, so we still have some that are more in line with the challenges, but not really detailed use cases with detailed procedures and so on. That's something that we think we don't want now, and the same goes for directions and recommendations. Somehow this will be a kind of another document.

C

Okay. Of course, we now have an introduction, where the idea is to show that we cannot say we will not use AI for network management. It does not mean that we will use AI for everything in network management, but somehow it's something that we cannot avoid, that we need to have. We highlight that with some, let's say, problems, very briefly, because we have a dedicated section for that. We also try a little bit to disambiguate between AI and machine learning here.

C

The issue is that most of the challenges, to be honest, were written with machine learning in mind. So personally, I try a bit to make it more generic. It's not always easy, because some are really still very machine-learning oriented. I think it's not a problem, but at least in the introduction we try a bit to, let's say, disambiguate.

C

Obviously, it's not only about machine learning: there are some more generic challenges that can be, let's say, applied to different AI fields, and others that are more, let's say, oriented to machine learning. So we try to clarify that. We are still reviewing this part, to be honest, and I hope that we come up with a nice version.

C

We have this section about the difficult problems in network management. Previously we had basically a list of bullet points. There were a lot of problems covered in the document, since everyone added bullet points, and then we tried to organize them a bit. So the idea is not to give an exhaustive list, again, but to give some examples that we could use, maybe, when describing challenges. We then tried to categorize them according to five criteria.

C

Here, as you can see, for example, one is about a very large solution space; this can help to characterize a difficult problem. Some are related to uncertainty and unpredictability of what will be the environment or the context in which your solution will be applied. Some are guided by the need to deliver solutions in real time, or there are problems that depend heavily on data, of course, when you may need to analyze data from different sources. And there is one of which I'm personally not a big fan.

C

That one is about the need to be integrated in human processes. I think this is not really something that comes directly from the problems you want to tackle. You need to integrate with human processes because of the procedures that you have, not because of the problem that you want to tackle. So this is more, let's say, a constraint that we add on top of our solutions than a property of the problems you want to solve.

C

For example, here we have some examples below, like computation of optimal classification of network traffic. If you don't need humans, it should be possible; of course, that's not always the case, but it's not, let's say, a constraint that comes directly from the problem. Of course, for now we have only two problems that are described in a more, let's say, textual way.

C

So what remains? The first issue is the human-in-the-loop challenge. As you see, the current description is very lightweight; it's what is in italics here. So the question is: should we keep it, or should we omit it from the document?

C

As I said, the goal is not to provide a full and exhaustive list of challenges; some others may still come out. Now, with this new organization, for each, let's say, big category we have a kind of introduction. For the challenges related to AI techniques, for example, we have an introduction trying to give a bit the scope of all the, let's say, sub-challenges. The, let's say, human sub-challenge would probably be part of the AI techniques, the ones that must integrate the human, so it could somehow be put in the introduction.

C

Of course, then you have a lot of other, let's say, editorial parts. We need to complete the introduction, conclusion and so on, and we need to add references and so on. The idea then is, and I know that I already promised this a bit before, to transfer this Google document into a draft; it's something that is still on my mind. I hope that we'll be able to do that with this Google document, and that task is really for me.

C

Also, on how to progress now: up to now we have had a lot of contributors. It was really great; we had a lot of ideas. Now I would like to go with a smaller editorial team, maybe a more, let's say, focused team really working on the whole document, not only on specific challenges, to do all the editorial parts, all the missing elements and so on. I think it would be very important.

C

After the review of the document by this small editorial team, which will really be an important step, we will make some changes, and hopefully by mid-May we will be able to deliver a nice document that can go for review by everybody. But, of course, as the link is open, if anyone is already interested in giving us some comments, it's really open, so do not hesitate to already write comments before then. And yeah, that's it for me. Any comments or questions?

B

I like your approach. For the document itself, what do you intend to describe in the series of problems? I mean, what will be the focus of this section of the document? Will it be more on the criteria, or will it be more on trying to find some problems that meet the different criteria, C1 to C5?

C

Okay, so actually these criteria were a bit a kind of, let's say, reverse engineering: they were based on what had already been provided in terms of problems. So yeah, the idea of using these criteria is just to help us to try to somehow have problems that fulfill several criteria.

C

The idea of the document, of course, is not the description of the problems themselves. But some problems are used in the challenge descriptions that we have already. Actually, many people contributed to describing challenges and gave an example each time, so the idea is that we should take these examples and put them in the first section. Of course, many examples are similar, so they are quite transversal.

C

We try to show why these problems are very hard, and these criteria should help to understand why they are very hard. Then, in each section, you can refer to this, let's say, big problem when you describe why something is a challenge. For example, using lightweight AI in the network is a challenge because we have some resource limitations and constraints in terms of time, so we need the AI working at the scale of, like, minutes, and so on.

C

I think there is no, let's say, limit on the challenges that we could put for network management, of course. So, yes, we have challenges, maybe to resolve some problems that you already have today, or maybe we want to integrate AI to solve new challenges, some new problems we will have in network management that we do not have already.

C

I think this is actually part of acceptability. You know, acceptability is the main challenge, and then you have sub-challenges. Maybe this is a good challenge to have here, because, if you have time to look at the document, I tried to add this idea that we already have procedures and we already have solutions. We cannot just say that, from today to tomorrow, we change everything; we need to in some way integrate AI incrementally.

C

In production systems, actually, this is how we move from, let's say, lab solutions to solutions in the real network. And yes, there are some challenges, and we try to figure out what the orientations could be as well. Of course, I think we will maybe have questions on that too in the next session, when we go to digital twins. I think that's also a possibility.

C

Research on digital twins should help to integrate AI incrementally into production systems, because you can test it in, yeah, digital twins. So this is something that appears here as well, so yeah, your remark is fully valid. If you are interested in this, you can focus on this section and give me some feedback if you want. I will be very happy to take it, just to know if this is in line with what you said before.

E

Okay, so I will start. Thanks for inviting me for this talk; I hope that you find it interesting. What we're going to talk about is how you can build a digital twin. We will compare a bunch of technologies and try to understand what the pros and cons are. So next slide, please.

E

So this is a digital twin. I think that I don't need to describe the concept further, right? It is a digital representation of a networking infrastructure, and it has already been documented in drafts and many papers. Instead of focusing on what the digital twin is, we will focus more on how we can build it. So next slide, please. So, next one.

E

So I think that the first question we need to answer ourselves whenever we want to discuss the digital twin, and specifically when we want to discuss how to build it, is: what are the inputs and the outputs? It's very hard to have a meaningful discussion on the network digital twin if we don't first of all discuss that. If we agree that it's a box, then it has some inputs and some outputs, and that's the very first thing.

E

So in order to answer the question of how we can build a digital twin, we have decided to go for these inputs and outputs. As inputs we have, first, the network configuration, which means: this is my network topology; I have this type of queues; I have this type of scheduling policies, like strict priority or weighted fair queuing; I have this routing protocol; I have an overlay routing protocol with segment routing; and so on. That's the network configuration.

E

Configuration

for

the

traffic

load

is

exactly

which

is

what

are

the

packets

that

are

entering

into

my

network

right?

How

many

users

I

have,

which

type

of

traffic

they

send:

do

they

send

voice

over

IP,

video

on

demand,

or

data

traffic,

or

backup

traffic,

and

so

on?

So

those

are

the

two

inputs

and

at

the

output,

what

we

propose

is

that

it

is

the

resulting

network

performance.

E

So

if

I

have

a

network

which

has

this

particular

configuration

with

that

topology

and

this

particular

router

equipment

and

switches,

and

so

on,

and

I

load

it

with

this

particular

traffic,

this

is

the

performance

that

I

will

get

and

the

performance

can

be

measured

through

what

will

be

the

delay

of

the

flows

from

the

users?

What

will

be

the

delay

of

the

voice,

video

on

demand

traffic?

How

many

losses

I

will

have

in

my

network?

What

will

be

the

link

utilization?

What

will

be

the

utilization

and

so

on?
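
A minimal Python sketch of the box just described may make the inputs and outputs concrete. Everything here is a hypothetical illustration, not the speaker's implementation: the names `NetworkConfig`, `TrafficDescription`, `PerformanceReport` and `predict` are assumptions, and the toy `predict` only derives link utilization from a traffic matrix and fixed paths. Delay and loss, which the talk also lists as outputs, are left out because they would need a real queueing model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NetworkConfig:
    # Input 1: the configuration (hypothetical, heavily simplified).
    # link (src, dst) -> capacity in Mbit/s
    link_capacity_mbps: dict
    # (ingress, egress) -> ordered list of nodes chosen by the routing
    routing: dict
    scheduling_policy: str = "strict-priority"


@dataclass(frozen=True)
class TrafficDescription:
    # Input 2: a description of the traffic, not the packets themselves.
    # (ingress, egress) -> offered load in Mbit/s
    demand_mbps: dict


@dataclass(frozen=True)
class PerformanceReport:
    # Output: a description of the resulting performance.
    link_utilization: dict  # link -> fraction of capacity in use


def predict(config, traffic):
    """Toy twin: add up the demand routed over each link."""
    load = {link: 0.0 for link in config.link_capacity_mbps}
    for pair, mbps in traffic.demand_mbps.items():
        path = config.routing[pair]
        for link in zip(path, path[1:]):
            load[link] += mbps
    return PerformanceReport(
        {link: load[link] / cap for link, cap in config.link_capacity_mbps.items()}
    )


config = NetworkConfig(
    link_capacity_mbps={("a", "b"): 100.0, ("b", "c"): 100.0},
    routing={("a", "c"): ["a", "b", "c"], ("a", "b"): ["a", "b"]},
)
traffic = TrafficDescription(demand_mbps={("a", "c"): 30.0, ("a", "b"): 20.0})
report = predict(config, traffic)
print(report.link_utilization)
```

With these made-up numbers, link a-b carries both demands (50 of 100 Mbit/s) and link b-c carries only the a-to-c demand (30 of 100 Mbit/s).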

E

It

is

very

important

to

note

that

we

are

not

claiming

that

those

are

the

right

inputs

and

outputs.

Those

are

the

ones

we

are

considering

in

my

group

to

answer

the

question:

how

we

can

build

it

and

to

see

which

are

the

challenges

ahead

and

they

are

relevant

for

the

sake

of

the

discussion.

I

think

that

those

inputs

and

outputs

can

be

challenged

and

we

can

have

a

discussion

on

whether

those

are

not

the

right

ones,

and

maybe

there

are

better

ones.

E

Then

why

do

these

inputs

and

outputs

make

sense,

at

least

to

me?

Because

if

you

take

a

real

network

infrastructure

and

you

treat

it

as

a

box

also.

It's

super

stupid,

what

I'm

going

to

say,

okay,

because

you

guys

are

running

networks

and

building

them,

but

let

me

explain

them

from

this

angle.

At

the

end,

a

network,

if

we

assume

that

it's

a

transit

network,

what

you

have

is

data

packets

that

are

getting

into

the

network

and

data

packets

that

are

getting

out

of

the

network,

right?

E

If

that's

a

transit

network,

so

we

have

traffic

going

from

ingress

to

egress.

Now

this

traffic

is

packets,

but

at

the

end

they

can

be

voice

traffic,

video

traffic

and

so

on.

And

then

you

have

some

sort

of

administrator

which

can

be

a

network

management

platform

or

a

controller

which

is

applying

a

configuration

to

the

network.

Okay

or

the

network

is

already

configured.

The

configuration

is

everything

that

is,

E

you

know,

in

the

configuration

file

of

each

and

every

network

device

and

then,

if

you

assume

that

you

have

some

sort

of

telemetry

platform,

what

you

have

is

performance

metrics,

so

you

can

measure:

okay,

this

is

the

delay

for

this

type

of

flows.

This

is

my

utilization.

This

is

my

losses.

This

is

my

link

utilization,

okay.

So

this

is

how

we

see

a

network.

Of

course,

not

all;

some

networks

consume

and

produce

traffic.

E

You

are

applying

exactly

the

same

configuration

to

the

performance

network

digital

twin,

exactly

the

same

as

you

have

in

the

real

network,

but

instead

of

using

the

real

traffic

to

test

the

network

digital

twin,

you

input

a

description

of

the

traffic

okay

because

it's

not

a

real

network,

so

you

don't

put

packets

into

the

digital

twin,

but

you

put

a

description

of

what

these

packets

look

like:

how

many

flows

do

I

have,

how

many

voice

or

video

on

demand

flows

I

have,

what

is

the

rate

for

these

video

on

demand

flows?

E

How

many

users

I

have,

what

is

the

dynamic

behavior,

the

temporal

behavior

of

the

users,

because

maybe

at

night

I

have

more

traffic

than

during

the

day,

because

that's

a

residential

network

and

so

on.

So

those

are

the

two

inputs

to

a

network

digital

twin,

okay,

and

then

the

output

is

not

the

packets,

because

we

are

not

putting

packets

into

the

digital

twin.

What

we

are

putting

is

a

description

of

the

traffic

and

what

we

get

is

a

description

of

what

will

be

the

performance

of

that

network.

E

So

I

don't

want

to

discuss

which

are

the

use

cases

of

a

performance

digital

twin.

I

don't

think

that

that's

the

focus

of

this

presentation,

but

I

think

that

it

is

very

hard

to

have

a

meaningful

discussion

on

how

you

can

build

something

if

we

don't

see

why

that's

relevant

right.

So

let

me

go

super

quickly

over

a

few

use

cases.

E

If

I

change

this

right?

If

the

autonomous

system

does

not

have

a

digital

twin

that

will

tell

it,

"okay,

if

you

do

this,

the

performance

will

be

bad,

so

don't

do

it",

it's

very

hard

for

your

autonomous

system

to

decide

what

to

do,

right?

So,

basically,

that's

the

main

goal

of

our

performance

network

digital

twin.

So

here

we

have

a

set

of

use

cases,

but

on

the

paper

listed

below

you

have

way

more

use

cases

you

can

check

them.

E

For

instance,

you

can-

and

I'm

assuming

here

that

this

digital

twin

is

deployed

with

a

traffic

telemetry

platform,

and

a

network

management

platform

which

are

not

easy

to

implement

or

deploy,

but

I

assume

that

they

are

already

there

and

that's

out

of

the

scope

of

my

presentation,

but

you

can

answer

questions

such

as

"what

if".

So,

let's

say

that

I'm

employed

in

a

company,

I'm

running

the

network

and

my

company

is

thinking

about

acquiring

another

company.

So

I

can

ask

my

network

digital

twin.

E

What

will

be

the

impact

on

the

session

establishment

time

and

so

on?

Or

you

can

ask

questions

such

as

okay,

can

I

support

new

user

SLAs

with

exactly

the

same

resources

I

do

have

or

do

I

need

to

buy

new

network

equipment,

or

maybe

I

need

to

upgrade

the

link

okay.

So

this

is

a

set

of

use

cases.

You

have

more

in

the

paper

if

you

are

interested

so

next

slide,

please,

okay!

E

What

is

the

scheduling

policy

you

are

using?

Any

arbitrary

scheduling

policy

will

work,

like

strict

priority,

weighted

fair

queuing,

deficit

round

robin,

and

so

on;

the

queue

length;

and

then

other

features

such

as

ECMP,

LAG,

and

so

on.

I

know

that

some

of

these

features

are

very

old

and

our

goal

is

to

academically

show

whether

this

can

be

built.

Then

the

real

features,

I

think

it's

up

for

discussion

for

people

that

have

way

more

knowledge

on

how

the

industry

works.

E

Okay

and

the

traffic

load,

as

I

was

saying,

you

have

the

traffic

matrix,

which

is

how

much

bandwidth

I

have

from

one

ingress

point

to

one

egress

point

and

then

also

we

support

flows,

meaning

that

we

are

assuming

that

the

performance

network

digital

twin

will

support

flows,

meaning

that

we

have

this

amount

of

flows

that

start

here

and

here

and

then

each

flow

has

a

different

type

of

traffic

like

voice

over

IP,

video

on

demand,

web

and

so

on,

and

we

don't

need

these

flows

to

be

described

as

a

five-tuple.
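
As a rough illustration of the two traffic inputs mentioned here, the Python sketch below describes traffic as a list of flows (ingress, egress, traffic type, rate) and aggregates them into a traffic matrix. The `Flow` class, the `traffic_matrix` helper, the type labels and the rates are all assumptions made for the example, not something taken from the talk.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    # A flow is identified by where it enters and leaves the network and
    # by its traffic type; no five-tuple is needed (hypothetical fields).
    ingress: str
    egress: str
    traffic_type: str  # e.g. "voip", "vod", "web"
    rate_mbps: float


def traffic_matrix(flows):
    """Sum the flow rates per (ingress, egress) pair."""
    matrix = defaultdict(float)
    for flow in flows:
        matrix[(flow.ingress, flow.egress)] += flow.rate_mbps
    return dict(matrix)


flows = [
    Flow("r1", "r3", "voip", 0.5),
    Flow("r1", "r3", "vod", 8.0),
    Flow("r2", "r3", "web", 2.0),
]
matrix = traffic_matrix(flows)
print(matrix)
```

Keeping the per-flow list next to the aggregated matrix means a twin could report performance per flow or per traffic type, not only per ingress and egress pair.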

E

So

now,

let's

try

to

build

this

box

with

a

simulator

okay.

So

next

slide

please

so

here

what

we're

doing

is

we're

taking

this

box.

Okay

and

this

box

is

actually

a

simulator

okay,

it's

running

C

code

or

whatever

language

you're

using

and

it's

implemented

using

a

simulator.

So

next

slide.

E

Okay,

so

we

have

actually

we

did

that

and

for

that

we

use

the

OMNeT++

simulator.

That's

a

discrete

event

simulator.

Basically,

what

a

discrete

event

simulator

does

is

simulate

the

propagation,

transmission

and

forwarding

of

each

and

every

packet,

and

actually

the

forwarding

of

each

and

every

packet

is

what

is

considered

inside

the

simulator

as

a

discrete

event.

So,

basically,

a

simulator

is

code

which

takes

all

these

events,

and

you

know,

goes

through

all

of

them

and

tries

to

understand

what

happens.
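
To make the idea of walking through per-packet events concrete, here is a minimal Python sketch of a discrete event loop for a single link with one FIFO queue. It is only an illustration under simplifying assumptions (one link, infinite buffer, fixed propagation delay) and does not use or imitate the OMNeT++ API; the function name `simulate_link` and its parameters are hypothetical.

```python
import heapq


def simulate_link(packets, rate_bps, prop_delay_s):
    """Return the per-packet delay over one FIFO link.

    packets: list of (arrival_time_s, size_bits) tuples.
    """
    # Every packet arrival is an event; events are processed in time order.
    events = [(t, i, "arrival") for i, (t, _) in enumerate(packets)]
    heapq.heapify(events)
    link_free_at = 0.0
    delays = [None] * len(packets)
    while events:
        now, i, kind = heapq.heappop(events)
        if kind == "arrival":
            # The packet waits in the queue while the link is busy.
            start = max(now, link_free_at)
            link_free_at = start + packets[i][1] / rate_bps  # transmission
            heapq.heappush(events, (link_free_at + prop_delay_s, i, "delivery"))
        else:
            # Delivery event: record the end-to-end delay of this packet.
            delays[i] = now - packets[i][0]
    return delays


# Two 1000-bit packets on a 1000 bit/s link with 0.25 s propagation delay.
delays = simulate_link([(0.0, 1000), (0.5, 1000)], rate_bps=1000, prop_delay_s=0.25)
print(delays)
```

The second packet arrives while the first is still being transmitted, so its delay includes 0.5 s of queueing on top of transmission and propagation. The cost of this approach is visible even here: the loop handles two events per packet, so the work grows with the number of packets simulated.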

E

Discrete

event

simulators

are

very

well

known

in

networking.

There

are

way

more;

OMNeT++

is

just

one

of

them.

There

are

also

Network

Simulator

2

and

3,

GNS3,

Cisco

Packet

Tracer.

So

there

are

a

bunch

of

them,

but

all

of

them

they

work

under

the

same

principle,

which

is

they

simulate

what

happens

with

each

and

every

packet.

So

if

you

take

one

simulator

and

you

try

to

build

this

kind

of

box,

you

will

find

that

the

accuracy

is

very

good.

E

Now

what

is

accuracy?

And

this

was

one

of

the

questions

that

Jerome

was

sending

to

the

list,

right?

But

accuracy

here,

I

mean,

if

I

take

a

real

network

and

I

apply

a

configuration

and

I

load

it

with

a

certain

amount

of

traffic

and

I

measure

in

the

real

network.

What

is

the

delay,

for

instance,

for

a

particular

flow,

and

then

I

do

the

same

with

a

simulator,

the

difference

between

the

real

delay,

measured

at

the

real

network,

and

the

delay

measured

at

the

simulator.

E

That's

what

I

call

accuracy,

okay,

and

the

higher

the

error,

the

less

accurate