►

From YouTube: IETF113-IPPM-20220321-0900

Description

IPPM meeting session at IETF113

2022/03/21 0900

https://datatracker.ietf.org/meeting/113/proceedings/

A

C

C

C

C

C

C

C

All

right,

looking

at

our

agenda,

we

are

spending

the

first

chunk

on

the

primary

working

group

documents

that

we

have

that

are

active.

We

have

a

bunch

of

documents

that

are

in

later

stages,

they're

with

the

isg

or

they

are

in

the

rfc

editor

queue

where

we've

been

very

productive.

So

thank

you

to

everyone

in

the

working

group

for

that,

but

today

we'll

go

through

some

of

the

protocols,

starting

with

some

of

the

more

newly

adopted

protocol

work

and

then

getting

some

updates

on

iom,

as

well

as

explicit

flow

measurements

and

srpf.

F

Good

morning

everybody

so

we've

got

the

capacity

measurement

protocol

to

talk

about

this

morning.

We've

got

a

working

group

draft

and

one

which

we've

updated

lynn

chaviton

my

long

time

colleague

and

I

are

working

on

this

together-

we're

looking

for

more

help

and

review

from

the

working

group.

So

next

slide.

Please.

F

We

have

the

test

stream

and

that

still

has

a

feedback

path

with

the

either

the

measurements

or

the

commanded

test

rates

to

use

for

the

next

50

milliseconds

or

so

so.

We've

got

a

continued

round-trip

relationship

going

on

throughout

the

operation

of

the

protocol

and

we

actually

set

bits

in

the

load

pdus

to

stop

the

test,

stop

one

and

stop

two

from

the

server

and

client

respectively,

and

so

that's

how

we

basically

turn

things

down

at

the

end

of

the

test.

Duration.

F

F

Good

suggestions,

like

the

suggestion

to

include

a

randomized

payload

option

and

also

how

that

might

be

implemented.

So

we've

tested

the

performance

of

that

and

the

code

suggests

performance

of

the

code.

It

suggests

very

little

compressibility

of

packet

payloads,

so

so

that's

good.

In

fact,

we

got

surprisingly

low

rates

and

in

some

of

the

tests,

where

you

know

much,

higher

rates

were

claimed.

F

F

You

don't

have

to

allocate

five

gigabits

to

everybody,

big

big

savings.

There

we

have

an

optional

stop

for

start,

I'm

sorry

start

rate

in

the

load

adjustment

algorithm.

Now

the

fixed

rate

option

remains

so

that

basically

means

that

if

you're

trying

to

achieve

gigabit

rates,

you

might

start

at

500

megabits

and

test

your

search.

Your

way

up

from

there

also

we've

got

backward

compatibility.

F

F

I

think

if

we

tried

to

implement

that-

and

in

section

four

I

took

out

all

the

parameters

that

were

basically

referred

to

or

were

redundant

with,

rfc

1997,

the

the

metric

and

method

and

trim

down

we've,

basically

trimmed

down

our

proposal

to

four

security

modes,

the

two

that

we've

got

implemented,

unauthenticated

and

password

and

we've

got

a

secure

setup

exchange.

So

that

would

just

be

the

first

part

of

the

setup

and

then

you

know

the

classic

secure

all

the

things.

That's

the

last

purpose.

F

F

I

mentioned

the

four

modes:

if

you

want

more

modes

say

so,

and

protocol

9

allows

for

a

new

load

adjustment

algorithm

with

more

robustness

to

all

sorts

of

problems,

because

the

feedback

we

got

is

is

people

said,

look

you

guys

say

you're

measuring

maximum

capacity.

So

please

always

do

that.

You

know,

even

even

if

you

have

to

put

more

load

in

the

channel,

do

it.

F

C

A

H

F

C

C

C

C

I

C

J

J

K

L

B

C

L

L

Yeah,

I'm

sorry,

I

hope

I

didn't

nobody

didn't

screw

anything

up

there,

but

the

implementation

is

in

go

and

it

is

has

been

tested

as

far

back

as

government

1.16,

presumably

to

work

with

earlier

versions.

I

just

haven't

tested

with

it,

so

not

that

it

won't

work,

but

this

is

sort

of

our

backwards

version.

L

L

Finally,

as

usual

with

this,

with

any

implementation

and

interoperation

test

and

work

is

to

take

advantage

of

this

experience

to

clarify

ambiguities

in

the

protocol

and

to

get

those

rounded

out,

and

that's

one

of

the

things

that

I

think

kristoff

will

talk

about

as

he

goes

into

talking

about

the

protocol

itself

and

the

changes

that

we've

made.

So

I

don't

want

to

steal

his

thunder

but

feel

free

as

we

go

forward

at

the

end

of

the

talk.

L

J

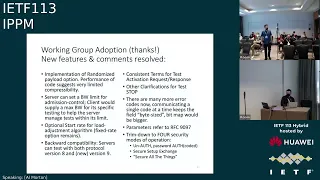

Thank

you

will

that

was

great

so

over

to

the

major

changes

in

the

itf

draft.

Thank

you

very

much

for

the

working

group

group

adoption.

So

during

this

presentation,

I

want

to

address

mostly

the

two

biggest

discussion

points

which

were

around

what

is

working

conditions

and

how

can

we

interpret

responsiveness

results?

J

J

We

are

trying

to

explore

how

the

network

behaves

when

it

is

under

traffic

patterns

that

end

users

actually

generate,

and

so

we

use

http,

2

or

http

3

in

the

future,

with

standard

congestion

controls

that

way,

we

create

the

realistic

part

of

the

network,

responsiveness,

working

condition,

and

how

can

we

push

it

to?

The

worst

case

scenario

is

by

creating

multiple

bulk

http

requests

like

now.

J

J

However,

we

want

to

create

what

we

call

a

stable

buffer

load

situation

so

that

we

can

actually

measure

it

over

a

certain

duration

of

time,

and

so

we

create

this

kind

of

stable

buffer

load

situation

by

creating

multiple

http

requests.

And

so

we

really

push

the

network

into

a

worst

case

scenario,

but

even

by

creating

those

multiple

bulk

http

requests.

J

J

Now

that

is

true,

definitely

and

each

network

point

between

your

client

and

the

server

has

the

potential

to

expose

buffer

bloat

and

to

to

have

buffer

bloat.

However,

buffer

blows

can

also

happen

in

the

end

host

sets

the

entire

networking

stack

from

ip

all

the

way

up

to

http

can

be

subject

to

buffer

load,

and

so

each

of

these

points

in

the

in

the

networking

stack

layer

has

the

potential

to

create

buffer

bloat,

and

so

because

our

methodology

is

using

http.

J

J

So

one

of

the

questions

during

the

adoption

call

was

well,

if

I'm

having

let's

say

I'm

measuring

responsiveness,

and

I

create

this

load

generating

connection

between

the

client

and

the

server

here

in

red

right

and

it's

filling

the

pipe

and

it

exposing

it

is

exposing

buffer

bloat

on

the

right

side.

Those

blue

boxes

that

I

draw

here-

let's

say

the

buffer

load-

is

happening

in

this

in

these

sections,

the

http,

the

tls

and

the

tcp

connection.

J

J

C

N

N

J

G

J

Thank

you

for

this

question,

so

I

agree.

It's

that's

imitating

multiple

different

clients

and

if

I

say

clients

I

mean

different

different

devices,

is

unfortunately

not

possible

with

this

kind

of

a

test.

We

would

need

to

have

some

inter-device

synchronization

and

communication

to

start

the

test

and

then

have

them

all

create

these

kind

of

http

bulk

data

transfers

at

the

same

time,

and

doing

that

is

not

possible

without

without

a

protocol

to

talk

between

the

devices

and

it's

from

our

perspective,

currently

out

of

scope

for

this

draft.

J

F

F

For

example,

you

know

where,

in

the

in

the

metric

and

method

that

the

protocol

I

just

talked

about,

supports

that's

a

maximum

iplayer

capacity.

So

there's

still,

I

think,

a

little

little

ambiguity

in

the

terminology

that

you

might

be

able

to

root

out.

Also,

I

didn't

notice

in

the

draft

any

discussion

of

the

effects

of

congestion

control.

Algorithms,

you

know

sort

of

the

older

ones

are

more

likely

to

fill

the

buffers,

and

some

of

the

newer

ones

have

the

goal

of

of

not

doing

that.

F

So

you

know

you're

going

to

get

different

levels

of

working

conditions

from

those

and

and

and

as

ignacio

says,

with

multiple

clients

you're

going

to

get

kind

of

a

mixture

of

those

congestion,

control,

algorithms,

potentially

so

there's

you

know,

there's

some

some

things

to

talk

about

here

and,

and

you

know,

unfortunately,

I

don't

think

we

can

shut

the

door

on

on

all

these

discussions.

Quite

yet,

but

thanks

for

putting

your

work

together,

appreciate

it.

J

Thanks

a

lot

for

your

feedback,

al

yeah

we'll

definitely

try

to

clean

up

some

parts

of

the

capacity

wording

and

I'll

take

your

feedback

and

go

another

pass

on

capacity

and

the

wording

we're

making

sure

that

we

use

the

right

terminologies

there

and

I

like.

Actually

the

suggestion

aren't

having

a

discussion

about

congestion,

controls

I'll,

add

a

subject

section

for

that

as

well.

C

O

O

O

O

O

O

O

O

O

O

O

C

C

P

M

Draft

so

about

the

data

integrity.

We

have

clarified

the

scope

of

the

document

so

basically

now

the

integrity

protection

is

on

iom

data

fields

and

not

including

headers.

For

obvious

reasons,

we

have

shared

on

the

mailing

list

and,

as

a

consequence,

the

algorithms

were

returned

to

be

more

generic,

and

so

they

work

for

currently

defined

iom

option

types

and

so

for

future

defining

and

also

as

a

direct

consequence.

M

The

direct

export

option

type

is

not

included

anymore

because

it

doesn't

fit

because

if

you

see

the

define

the

defining

of

such

option

type,

it

doesn't

contain

any

ion

data

field

per

c.

Okay.

So

if

you

really

want

to

go

that

way

and

have

protection,

I

think

we

could

have

a

per

hub

verification,

but

not

sure

if

you

want

to

go

that

way.

So

we

can

just

discuss

that

later

on

the

mailing

list,

and

so

the

update

also

includes

some

editorial

changes.

M

M

C

A

Was

that,

given

that

this

document

is

really

a

document

that

was

inspired

by

the

working

group

originally

right,

where

people

said

well

yeah?

Well,

even

though

we're

in

a

limited

domain,

we

do

want

to

have

a

dedicated

way

to

go

and

ensure

integrity

above

and

beyond

what

you

can

go

and

do

with

the

underlying

transport,

if

you're

riding

on

on

top

of,

say,

v6

and

would

be

able

to

use

authentication,

headers

and

the

likes.

And

so

we

would

really

appreciate

hearing

back

from

people

that

well

originally

driving

the

the

questions.

A

A

Okay,

I

think

it's

not

so

much

of

a

of

a

security

review,

because

I

think

the

methods

that

we

are

using

are

well

well

known

and

state

of

the

art.

There's

nothing

really

new

or

inventive.

Here,

it's

more

like

application

appliance

well

donor

to

the

problem,

but

let's

see

whether

people

consider

that

is

well

usable,

useful

in

the

context

of

the

the

original

problem

that

people

had

in

mind.

J

A

My

bad

right,

so

the

document's

been

pretty

stable.

We've

not

really

heard

anything

back

and

sorry,

gentlemen.

I

I

forgot

to

add

the

beer

reference

or

not.

I

didn't

forget

that

the

beer

reference

I

forgot

to

publish

the

draft,

the

the

o1

version

before

the

cut

off

so,

which

is

why

I

added

the

github

reference

I'll

I'll

push

that

out

today

or

well.

A

D

A

D

A

I

I

F

C

Q

R

R

R

R

This

case

we

have

the

measurement

of

all

the

packets

from

one

side

and

a

percentage

of

the

package

from

the

side

with

more

packets

and

the

one-way

particulars

that

are

made

in

the

best

options.

In

our

opinion,

using

it

to

be,

the

square

beat,

is

common

to

the

option

that

we

can

have

before

this

measurement

and

two

different

bits

for

the

second

part

of

the

measurement.

The

loss

event

need

the

reflection

square.

R

Okay,

the

number

of

groups

and

the

companies

that

works

about

this

top

is

is

incremented

from

last

time

because

the

university

of

technion

joined

us

in

this

kind

of

work

and

in

technology.

If

your

caller

from

huawei

manage

the

contact

with

his

institutional

research.

So

we

are

a

free

university.

Three

different

research

groups

that

explore

this

kind

of

methodology

for

measurement

in

the

network.

R

Last

update,

so

it

is

a

meeting

we

don't

present

the

draft

updates,

because

we

are

waiting.

The

very

extensive

revision

that

the

ike

coons

from

akin

university

terminate

only

last

thursday,

and

so

we

are

waiting,

is

a

revision.

I

thanks

ike

for

his

work

and

the

work

of

his

research

group

in

the

university

because

he

implemented

all

the

algorithm

described

in

this

draft.

So

we

have

a

new

implementation,

totally

separate

from

the

others,

so

he

tested

also

the

clarity

of

the

description

of

the

algorithm

and

he

suggested

some

updated.

R

The

main

tool

is

about

the

tv

description,

the

routing

packet

loss,

because

he

asked

to

clarify

better

the

token

mechanism

that

maintains

the

throughput

of

the

measurement

equal

in

the

two

directions:

the

discussion

about

the

trade-off

of

the

duration

of

the

measurement,

because,

if

the

measurement

the

period

of

the

measurement

is

longer,

we

have

less

measurement

and

is

is

short.

We

measure

less

packets

for

the

packet

loss,

so

there

is

a

trade-off.

There

is

a

little

bit

discussion

that

is

better

to

describe

in

the

draft.

R

R

R

There

are

many

options,

it

depends

from

the

protocol.

For

example,

some

protocol

have

only

two

bits,

so

only

one

bit

can

be

used

for

packet

loss.

This

is

a

little

bit

the

problem

because

with

them

one

bit,

the

measurement

are

more

less

precise

for

the

delay.

One

bit

is

a

sufficient,

because

both

spin

bit

and

delay

beat

also

in

the

hidden

version

needs

only

one

beat.

R

R

So

the

conclusion

is,

there

are

quite

a

big

work

about

this

topic.

There

are

some

sibling

draft

in

the

ppm

working

group,

one

in

particular,

that

is

about

putting

the

probe

not

in

the

network,

as

we

thought

at

the

beginning

of

the

work,

but

also

in

the

end

user

device,

in

order

to

have

an

end-to-end

measurement

in

a

very

simple

way,

also

for

methodology

like

a

kubit

that

is

not

an

end-to-end

methodology.

R

So

it's

very

convenient

and

it's

possible

to

measure

end

to

end

and

combining

this

measurement

with

probe

in

the

network

is

possible

to

split

the

measurement

and

to

locate

the

problem.

If

there

is

other

sibling

draft

are

presented

in

other

working

group

in

the

co-op

working

group

in

particular,

the

last

one

in

the

past

was

present

in

quick

working

group,

and

there

are

one

draft

also

in

tcpa

proposal.

R

R

R

R

There

is,

in

our

opinion,

a

little

bit

difference

about

the

packet

loss

because

for

quick

we

prefer

cubit

and,

albeit

for

tcp,

cubit

and

rbit,

because

the,

albeit

is

a

simple

as

a

simple

implementation,

and

there

is

a

less

measurement

delay

their

bit

detector's

losses

also

for

all

accurate

tcp

packets.

It

is

a

protocol

independent,

so

it

not

depends

from

the

implementation

of

the

protocol.

So

there

are

strengths

and

the

weakness

in

the

bot,

but

in

our

opinion,

for

quick

is

better,

albeit

as

second

was

beat.

R

C

R

No

because

we

decided

to

start

from

a

ppm

also

with

some

materials

that

are

agree

with

this

approach

and

after

we

put

this

idea

inside

the

specific

protocol

working

group

for

copper,

the

the

draft

is

active.

We

presented

in

the

last

interim

meeting

where

people

represented

is

a

quite

good

interest

from

the

working

group

for

quick

in

particular

in

past,

was

presented.

C

Do

do

we

do

we

want

to

essentially

publish

this

without

any

adopters,

necessarily

or

or

if

we

had

some

adopter

lined

up.

That

was

that

we

thought

would

come

in

relatively

soon.

Then

we

could

see

if

there's

any

feedback

from

that

protocol

or

group

before

we

kind

of

finalize

and

publish

this

document,

but

that's

not

necessary.

C

C

P

P

P

So

there

is

either

zero

or

one

we

added

a

sub

dlv

for

srv6,

it's

a

structure,

sub

tlv

and

some

small

minor

editorial

changes,

and

we

have

no

open

issues

currently,

so

the

structure

srv6

segments

tlv

basically

identifies

the

structure

of

the

128-bit

srv6

seat,

so

128

bits,

some

bits

can

be

for

the

node,

some

for

the

function

length

and

some

for

the.

So

it

just

identifies

that

this

is

in

line

with

other

drops

and

repsis

for

a

srv6.

P

P

P

P

C

P

C

P

C

S

S

We

have

a

primary

client

primary

server,

secondary

client,

secondary

server

architecture

and

yeah.

The

draft

mentions

a

rational

for

it.

This

is

a

summary

mentioned

in

our

appendix

we

give

one

possible

way

of

registration

protocol

and

we

keep

the

option

open

to

enterprises

to

either

use

this

or

have

their

own

registration

protocol

so

well.

There

is

a

flow

between

primary

client

and

the

primary

server

where

well.

S

Well,

this

secret

is

later

shared

with

with

the

secondary

server

and

the

secondary

clients.

We

had

received

some

comments

in

the

side

meetings

with

sharing

the

secret

with

the

secondary

clients,

and

we

had

taken

care

of

it

by

generating

client

specific

keys.

So

what

primary

client

does

is

it

generates

generates

the

client

specifically.

S

Keep

the

mic

up

your

mouse,

so

what

the

primary

client

does

is.

It

generates

the

client

specific

keys

by

doing

a

kdf

with

the

info

parameter

as

the

client

ip

and

generates

a

specific

client

generate

the

specific,

secondary

client

keys

and

for

sharing

all

these

keys.

We

are

using

tls

as

a

means

so

yeah.

S

S

C

S

T

F

S

Q

S

Q

Q

I

think

that

when

we

enterprises

finally

do

get

ipv6

networks,

which

we're

dragging

our

heels

on

terribly

on

right

now

that

we

will

have

other

pieces

of

information

in

other

extension

headers,

and

I

would

not

like

to

see

a

different

solution

for

each

one

of

those

that

we're

going

to

deploy

in

the

future.

So

those

are

my

three

points

and

we're

excited

about

this

development.

I

hope

it

can

continue.

T

Yeah,

thank

you

so

much

mike

and

one

thing.

I

know

that

I'm

going

to

just

put

a

boost

out

mike

and

I

have

been

working

with

a

lot

of

the

federal

government

in

the

united

states

to

help

them

do

their

ipv6

address

planning,

because

ipv6

planning

at

enterprises

has

lagged

a

great

deal

and

and

we're

hoping

actually

to

get

some

of

these

federal

agencies

to

talk

next

time,

but

we're

working

with

at

least

three

different

agencies.

T

F

Just

good

timing,

I

guess-

and-

and

you

know

in

today's

environment,

it

makes

a

lot

more

sense

to

have

an

encrypted

version

of

it.

So

just

offering

my

support

for

this

direction

draft

a

long

time

ago,

you

know

I

I

I

appreciate

that

they've

got

a

lot

of

work

to

do

here

and

it

should

be

interesting

to

see

it

complete

thanks.

B

Martin,

duke

google,

no

hats

on

yeah

like

encryption's

good,

so

thank

you.

If

we

do

adopt

this,

I

think

it'd

be

good

to

get

really

early

sec

area

review

of

this.

I

don't

know,

I

mean

you've

mentioned

some

cryptographers

a

bunch.

I

don't

know

who

those

people

are,

but

we

should

probably

run

this

through

suck

area

sooner

rather

than

later.

C

C

B

U

U

It's

a

method

to

collect

and

transport

on

path,

telemetry

information

it

can

equally

be

used

in

a

point-to-point

or

point-to-multi-point

cases,

and

the

point

of

multi-point

cases

is

a

tricky

one

because,

as

you

understand,

the

replication

of

packets,

if

packet

includes

telemetry

information,

inherently

leads

to

replication

of

upstream

collected

telemetry

in

some

environments.

That

is

not

a

big

concern,

but

some

networks

are

especially

for

the

services

that

a

premium

service

and

use

guarantees

for

like

out

reliable,

low

latency

services

that

that

becomes

challenging.

U

S

U

Another

advantage

of

what

can

be

seen

as

advantage

of

this

method

is

that

the

collection

can

be

done

out

of

band

so

which

means

that

it

follows

their

topological

path

but

uses

a

different

class

of

service

so

not

to

use

the

same

bandwidth

allocated

for

the

data

flow

that

is

monitored

and

again

in

many

environments.

In

many

cases

that

would

be

advantageous

because

it

would

not

use

their

premium.

Bandwidth

next

slide

please.

U

We

had

discussions

and

we

added

some

fields

for

ease

of

parsing,

and

it's

expected

that

it's

processed

at

their

same

notes

that

are

generating

information

and

each

node

when

it

receives

the

trigger

packet,

originates

information

and

holds

it

for,

according

to

the

local

policy

for

the

follow-up

package,

one

of

their

options

that

it

gives

us

is

that

we

can

export

raw

measurements

for

each

trigger

packet

or

measurements

can

be

statistically

processed

locally

and

then

collected

using

their

follow-up

packet

next

slide.

Please.

U

U

Transit

ingress

node

originates

the

follow-up

packet

and

then

each

transit

node

adds

more

information.

If

the

mtu

is

about

to

be

exceeded,

then

it

uses

their

encapsulation

of

preceding

follow-up

packet

and

starts

additional,

generates

additional

packet

so

and

then

they

arrive

at

the

egress

node

next

slide.

U

So

that

was

the

case

of

for

point

to

point,

and

this

diagram

reflects

the

theory

of

operation

for

point

to

multipoint,

and

so

because

we

identify

the

flow

we

don't

have

to

copy

the

packets

and

they're

replication

node

only

generates

a

new

follow-up

packet

that

collects

telemetry

information

on

downstream

of

their

multicast

tree

slide.

Please.

U

And

this

is

very

interesting

mode

that

was

suggested

by

pascal,

so

that

it

works

upstream

and

their

follow-up

packet

is

generated

by

egress

node

of

their

for

the

flow

and

then

as

sent

in

return

path,

tracing

the

same

path

of

the

monitored

flow

to

the

ingress

so

which

might

be

useful

for

cases

when

the

ingress

node

needs

to

be

aware

of

the

performance

and

can

influence

the

network

by

selecting

certain

scenarios.

One

of

the

possible

scenario

was

the

wireless.

U

U

U

U

U

U

So

their

key

element

is

their

time

unit

or

pam

interval,

and

then

we

differentiate

the

error

interval.

The

interval

when

their

metrics

exceeds

the

optimal

thresholds,

predefined

and

error-free

interval

when

their

performance

is

below

optimal

or

better,

not

below,

but

below

the

threshold,

so

not

exceeding

their

optimal

thresholds,

and

there

is

no

defect

being

detected.

U

With

error

error

interval,

we

can

identify

the

severely

errored

interval

so

and

define

it

would

be

proposed

to

define

it

as

an

interval

where

their

performance

net

metric

exceeded

critical

level

previously

predefined

or

defect

was

detected.

You

can

notice

that

a

severely

arrowed

interval

is

a

subset

of

error

interval.

S

U

U

U

U

So

we

identified

the

items

for

further

discussion

and

future

work

that

will

be

outside

of

the

scope

of

this

draft

and

we

welcome

our

inputs

and

contributions

and

collaboration

next

slide.

Please

so

welcome

comments,

discussion

and

we

think

that,

as

a

merge

of

the

work

that's

been

discussed,

we

would

like

ask

the

chairs

to

consider

working

group

adoption.

C

C

I

I

C

S

C

A

A

The

first

one

is

on

using

either

type

a

protocol

and

identification

to

carry

iom

data,

and

that

is

largely

for

protocols

like

gre

or

we

can

use

that

for

geneva

as

well.

The

second

document

is

for

raw

export

of

iom

data.

Take

the

the

ium

data

blob

and

export

it

using

a

fix,

very

simple

method

to

go,

and

at

least

have

one

standard

means

to

go

and

get

the

data

out.

So

the

two

drafts

are

old,

mature

both

of

them

started

in

in

2018

and

they've

been

tagging

along.

A

A

We

would

not

even

need

to

go

and

do

an

rfc

for

that

in

order

to

go

and

get

the

additional

code

points,

but

I

think

it's

better

if

we

do

it

that

way,

because

that

means

people

are

aware

of

it

and

it's

it's

well

documented

how

they

want

to

go

and

be

done.

The

second

thing

is

a

draft

that

brian

weiss

wrote.

Originally

a

couple

of

protocols

use

an

either

type

to

identify

a

particular

header

geneva's.

A

A

A

Direct

export

is

iom,

information

or

flags

that

tell

a

node

to

go

and

extract

the

data

and

then

eventually

ship

it.

How

it's

been

shipped

direct

export

doesn't

tell

you

right.

So

direct

export

is

about

flags

and

iuem

on

the

wire

that

tell

a

node

what

to

do.

Raw

export

is

what

rapper

you

want

to

go.

Well,

some

means

in

ipfix

to

go

and

get

us

a

quote:

standardized

effort

to

go

and

get

data

off.

The

note.

C

A

A

R

A

A

C

I

That's

too

quick.

This

topic.

We

talk

about

performance

management

on

a

lab,

including

two

drops

for

stamp

at

the

tm

extensions

lag,

provides

multiples

to

combine

multiple

physical

links

into

a

single

logical

link.

Usually

when

forwarding

traffic

over

lag,

the

hash

based

motion

is

used

to

load

balance.

The

traffic

across

the

member

links

link

delay

of

each

member

links

varies

because

of

different

transport

paths.

To

provide

low

latency

service

for

time

sensitive

traffic,

we

need

to

leastly

steer

the

traffic

across

the

lag

member

links

based

on

the

link,

delay,

loss

and

so

on.

I

That

requires

a

solution

to

measure

the

performance

metrics

of

every

member

link

of

lag,

existing

active

pm

methods

around

a

single

test

session

over

the

aggregation

without

the

knowledge

of

each

member

link.

This

will

make

it

impossible

to

measure

the

performance

of

a

given

physical

member

link.

The

measured

traffic

management

metrics

can

only

reflect

the

performance

of

one

member

link

or

an

average

of

all

the

member

lengths

of

the

lag

to

solve

this.

We

followed

the

similar

idea

of

rfc

71

and

30

the

bfd

on

that

next,

please.

I

And

to

measure

the

performance

matrix

of

every

member

link

of

a

lag,

not

multiple

sections

need

to

be

established

between

the

two

endpoints

that

are

connected

by

the

lab.

These

sessions

are

called

micro

sessions.

The

micro

sessions

need

to

associate

with

the

corresponding

member

links,

for

example,

when

the

reflector

receives

a

test

packet,

it

needs

to

know

from

which

member

link

the

packet

is

received

and

correlated

with

a

micro

session.

I

I

This

shows

the

omp

and

the

tvamp

extensions,

including

control

message

and

the

test

packet.

We

add

two

new

control

messages,

the

request,

the

om

micro

sessions

and

the

requested

tv

micro

sessions

and

in

the

test

packet.

We

add

sender,

micro

session

id

and

the

reflector

micro

section

id

both

ids

are

locally

assigned

next.