►

From YouTube: IETF92-LWIG-20150325-1520

Description

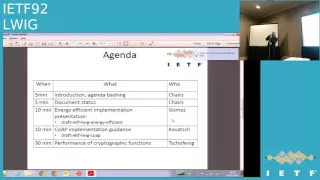

LWIG meeting session at IETF92

2015/03/25 1520

A

A

So

here's

a

little

there's

a

link

to

the

meeting

material

and

the

jabba

room

and

the

blue

sheets,

or

should

be

going

around

and

again.

If,

if

you

get

up

to

the

might

to

speak,

please

speak

clearly

and

state

your

name.

Thank

you!

So

here's

the

agenda,

I,

don't

the

any.

Do

we

need

to

do

any

agenda

bashing

on

this?

Anyone

have

any

other

items.

They

want

to

talk

about.

A

Okay,

hearing

on

so

I'll

just

quickly

give

a

quick

update

on

the

document

status.

So

the

l

word

cellular

document.

So

there's

a

working

group

last

call

issued.

There

was

little

response

on

the

mailing

list,

but

there

are

no

objections,

so

assume

that's

a

tacit

approval

and

so

therefore

will

advance

the

document

with

the

energy

efficient

draft.

There's

a

presentation

today

from

Carlos

is

he

here,

yep,

yeah

and

I?

Guess

that'll

I

think

he

asks

at

the

end

whether

he

thinks

is

ready

for

work

and

written

as

cool

as

the

eye

could

be

too

minimal.

A

A

There's

a

couple

of

expired

documents:

there's

a

el

hueco

app,

which

I

think

Matthias

is

just

going

to

give

a

a

quick

presentation

on

all

those

are

going

to

present

on

each

it's

going

to

make

some

discussion

on

this

and

TLS

minimal.

Again.

I

think

this

is

kind

of

just

a

hangover

from

where

we

were

in

Honolulu,

really

so

I,

don't

that

has

been

any

change

to

that,

not

Allison

shaking

his

head.

Okay,

so

the

first

presentation

is

from

Carlos

Gomez.

C

Thank

you

good

afternoon.

My

name

is

Carlos

Gomez

and

I'm,

going

to

present

an

update

on

the

draft

entitled

energy

efficient

implementation

of

ITF

constraint.

Protocol

suite

also

known

as

the

energy

efficient

draft.

Okay.

Let's

take

a

look

at

the

status

of

the

document.

This

draft

was

last

updated

around

three

weeks

ago.

This

last

version,

which

is

dash

02,

intends

to

incorporate

the

feedback

collected

during

the

last

ITF

meeting

in

Honolulu,

in

which

there

were

several

useful

comments

given

by

people.

C

So

let's

take

a

look

little

bit

of

more

detail

on

these

changes

in

section

3,

which

is

the

section

which

focuses

on

radio

duty

cycling

techniques,

there

is

a

new

subsection,

3

dot

3

entitled

through.

This

is

for

completeness

because,

for

instance,

in

in

the

same

section,

there

was

section

3

dot

to

focusing

on

the

impact

of

radio

duty

cycling

on

latency

and

buffering.

C

So

we

have

added

this

section

on

throughput,

acknowledging

in

the

text

that

this

is

not

typically

a

key

concern

in

constrained

no

networks.

However,

it's

still

important

in

some

services,

such

as

over

here

software

update

or

also

when

sensors,

which

are

offline,

mostly

have

some

short

opportunity

for

transferring

measurements

which

have

been

accumulated

during

this

offline

period.

C

Also,

we

state

the

radio

duty

cycling

leads

to

yet

another

trade

off

time

is

between

energy

consumption

and

throughput.

Another

change

is

new

subsection

3,

that

forum

titled

radio

interface

tuning.

However,

most

of

the

text

here

is

text

which

was

previously

in

section

3,

the

two

in

the

last

draft

version,

and

this

change

has

been

done

mainly

to

achieve

a

better

document

organization

and

also

there

is

some

additional

sentence

to

to

make

a

more

smooth

transition

to

the

next

subsection.

C

So

this

next

subsection

is

three

dot:

five,

which

corresponds

to

the

old

section

303.

This

is

the

section

focusing

on

the

Power

Save

services

available,

in

example,

low-power

radios

and

the

main

change

in

this

section

is

in

subsection,

3

dot,

five

dot,

one

in

which

we

have

deleted

the

V

in

the

title.

If

the

title

they're

used

to

to

be

bullied,

it

could

read

powersave

services

in

802

11

b.

C

C

Ok,

another

section

with

some

changes

in

section

6,

which

focuses

on

application

layer.

Now

there

is

some

structure

added

to

the

document.

The

sense

that

previously

there

were

no

subsections

here

now

we

have

tried

to

organize

a

bit

better.

The

content

with

these

three

subsections,

six

dot,

one

entitled

energy-efficient

theatres

in

coop,

62,

title

sleepy

note,

support

and

six

dot

3,

which

focuses

on

coop

timers.

C

The

content

in

subsection

6

dot

1623

is

the

same

as

in

the

previous

drug

version,

and

the

changes

are

mostly

in

subsection

6,

the

two

where

we

have

other

a

reference

to

the

publish-subscribe

draft.

Also

we

mentioned

the

OMA

I'd,

wear

them

to

mq

load

and

also

we

mentioned

the

one

in

2m

quad

binding

with

an

application-layer

mechanism

for

slipping

notes.

C

C

Nevertheless,

there

still

we

have

incorporated

a

comment

from

Dominique

who

mentioned

that

it

could

be

useful

positive

to

have

good

interfaces

between

layers

anyway

and

other

minor

changes

have

been

applied

throughout

the

document,

mainly

verifications

also

editorial

improvements

and

a

couple

of

new

references

which

have

been

added

so

after

these

changes.

We

believe

that

the

document

is

mostly

ready,

so

you

would

like

to

ask

the

chairs

weather

is

possible

to

issue

a

working

group

last,

all

okay,.

A

B

I

read

the

document.

I

find

it

excellent,

very

lots

of

useful

information.

Very

good

work.

I

have

to

suggestions,

one

is

for

15

dot

for

in

you

describe

the

TCH

mechanism,

I

think

unless

you

have

another

opinion,

just

to

be

fair,

the

SME

should

be

mentioned

as

well.

It's

not

this

quiet

I

know

that

there

are

six

dish,

work

group

working

groups,

as

knows

its

image

working

group.

B

So

I

don't

know

if

you

have

any

opening

that

the

industry

care,

but

the

SME,

if

it

doesn't

then

find

drop

jessamy

but

I'm

mature

and

the

second

appointment.

Oh

yes,

you

mentioned

Bocelli

in

the

document

as

well

and

as

a

cover

of

a

dect

ule

draft

I.

If

time

permits,

I

would

love

to

be

able

to

add

a

section

on

on

deck

tivoli

as

well

just

to

have

a

complete

picture

so

I

know

then

I

take

the

onus

of

providing

text.

We

try

to

do.

Okay,.

C

A

B

D

D

B

A

D

Okay,

I

wanted

to

talk

a

little

bit

about

performance

analysis.

I

did

with

a

co-worker

of

mine,

manuel

and

excite

so

a

little

bit

about

the

motivation

of

why

I

had

been

actually

working

on.

This

was

the

meanwhile

expired.

Elevate

document

initially

contains

guidance

and

also

performance

figures.

So

if

you,

if

you

look

up,

try

to

find

the

expired

version,

that's

what

you're

going

to

find

in

there,

but

in

the

meanwhile,

after

the

work

was

started,

the

dice

group

was

formed

in

air.

D

So

data

we

would

like

to

have

is

we

would

like

to

know

what

is

that

the

code

size

typically

coat

will

end

up

in

flash

and

how

much

code

size

to

the

different

extensions

actually

need

the

message

size

and

a

communication.

Overhead

is

important

to

some

of

you

specifically,

if

you

use

some

of

the

low-power

radio

technologies,

who

have

a

very

small

n,

tu

sais,

but

a

lot

of

extension

work

going

into

that

to

reduce

the

size

of

the

messages.

Overall,

cpu

performance

is

important.

D

D

Don't

they

want

to

have

a

rough

estimate

on

what

they

can

expect

to

actually

choose

the

right

platform

rather

than

trying

everything

out

themselves,

which

is

a

very

time-consuming

process,

just

telling

them

use

TLS,

and

some

of

the

extensions

is

not

not

enough

I

believe

so.

I

said

there

is

already

something

in

English

dls

minimum

a

minimal

document,

namely

we

have

code

size

of

various

basic

building,

blocks,

cryptographic,

functions

and

so

on:

hey

sm1,

libraries

etc,

but

only

from

from

one

single

stack.

So

we

ideally

want

to

have

data

from

from

different

implementations.

D

So

we

have

more

referencing

representative

coverage,

there's

memory

information

in

the

oven

on,

unfortunately,

only

for

the

British

etsy

credential

version,

nothing

for

the

elliptic

curve,

cipher

suites,

that

we

also

recommend

in

a

profile

document

and

then

for

a

communication.

Overhead

there's

only

very

high

level

information

they're,

mostly

focusing

on

the

record

layer.

Okay.

D

A

D

What

boards

and

processes

I

use

and

then

talk

about

the

actual

performance

data,

and

so

that

the

material

in

the

slides

that

should

give

you

enough

information

to

actually

redo

the

test

year

on

your

own

to

bury

frightened

okay.

So

the

focus

was

my

focus

was

on

road

trip,

though,

at

this

point

in

time.

Not

the

product

release

changes,

although

I

will

sort

of

talk

about

at

the

end

of

the

presentation.

Talk

about

the

relevance

for

the

dls

exchange

as

well.

D

So

I

covered

the

classically

curves

and

also

did

some

preliminary

work

on

the

curves

that

dan

bernstein

proposed

curve,

255,

19

and

particularly

focused

on

runtime

performance

rather

than

energy

consumption

or

code

size,

there's

a

little

bit

of

ram

usage

in

there.

But

it's

it's

yeah.

It's

not

in

that

fully

complete

data,

as

you

will

see

in

a

second

I,

didn't

use

any

hardware

acceleration

on

the

surface

messed

up

the

the

the

data

I

used

open

source

code,

namely

I

used

the

polarizer

cell

stack

on

which

has

been

renamed

to

embed

pls

ack.

D

D

B

Rihanna's

carolyn

just

a

question

on

this

because

you

raise

it

the

plenary

as

well,

and

I'm

just

wondering

if

this

is

an

area

where

we

could

do

some

further

development.

I'm

just

wondering

it.

You

know

can-can

people

who

are

versed

in

security,

maybe

school

us

a

little

bit

on

what

constitutes

a

good

source

of

entropy,

and

is

there

a

very

cheap

solution

that

perhaps

could

be

you

know,

maybe

you're

measuring

thermal

noise

or

something

and

just

attach

it

to

a

GPIO

pin?

What's

the

you

know,

what's

the

most

cost-effective

way

to

do

that?

D

Should

that's

it

that's

a

good

point,

so

there's

this

IDF

RFC

that

provides

some

information

about

that,

but

many

of

the

recommendations

or

descriptions

in

their

focus

more

on

systems

that

have

already

some

hardware

components

in

it.

I

can

be

used,

so

it

might

be

good

to

actually

invite

someone

to

just

give

a

talk

to

us

and

performance

a

little

bit.

Ideally,

what

you

want

to

have

is

a

microprocessor

that

has

already

a

random

number

generator

on

board,

and

so

you

don't

need

to

worry

about

it

and

those

exist.

B

Maybe

I

can

give

part

of

an

answer

to

that.

It's

pretty

easy

to

measure

a

thermal

noise

in

a

resistance

or

diet

Junction

a

DA

to

D

converter.

You

need

to

have

some

gain.

So

if

you

have

an

operational

amplifier

around

your

microcontroller,

you

can

use

your

ID.

It

is

to

measure

no

reason,

that's

a

very

good

random

number

generator.

You

just

need

to

be

careful

that

you

don't

inject

electronic

noise

from

the

power

supply

into

your

thermal

noise,

because

then

you

get

correlation

with

the

software

activity

of

the

node.

D

So

what

I,

what

I

did

on

so

obviously

I

have

to

use

the

platform.

I

have

to

use

some

processes

and

so

they're

different

arm

processes

assume

a

main.

Oh,

so

there's

a

cortex,

a

series

which

you

find

your

mobile

phone

tablet

into

your

home.

Rather

a

dose

I

haven't

used,

they

have

high

performance,

they

would

have

no

problem

at

all

with

any

of

the

crypto

that

you

see,

which

is

loved

it.

So

I

used

the

cortex

and

processes

which

you

find

on

many

IOT

devices.

D

D

D

Yeah

ECC

doesn't

mean

a

little

coffee.

Okay

and

of

course

I

didn't

use

just

a

bra

processors,

but

instead

on

bots

and

emma

ports

have

some

of

me,

so

you

can

actually

look

at

them.

The

nice

thing

about

them

is

that

normally

for

for

experiments,

you

already

have

all

the

bidding

the

pins

there,

so

you

can

even

sense

us

on

these

sports

and

so

on.

So

you

can

actually

ready

ready

to

use

them

rather

than

building

your

system.

D

So

so

it

said

previously,

I

have

basically

the

full

range

of

coverage

and

I

try

to

also

have

different

boards

with

different

cpu

speed,

so

that

there's

a

little

bit

of

difference.

I

had

I

noticed

that

picture

of

my

desk

program.

If

and

as

I

explained

later,

if

you

don't

have

enough

RAM,

then

you

will

not

be

able

to

use

some

of

the

optimizations

and

then

you

are

unfortunate

state.

We

talk

about

that

later.

D

So

they

have

these

funny

names

and

the

number

indicates

the

key

length

so

we're

talking

about

Keela

in

a

second,

the

second

type

of

curves

I'll

have

to

look

into

the

college

curves

followed

by

the

German,

bring

pool

curves,

which

also

standardized

in

as

an

IDF

RFC

and

as

I

said

curve

255,

not

19,

which

is

a

more

recent

one.

Currently

under

discussion

and

standardization

efforts

are

ongoing.

So

we'll

see

those

from

a

recommendation

point

of

view.

Coop

coop

recommends

the

NIST

curve.

200

256

are

one.

A

Is

a

question

that

nobody

in

this

room

cannot

so

yeah

exact?

However,

there

is

an

RFC

RFC

60

mg,

which

for

well

as

some

of

these

curves,

actually

shows

that

they

can

be

constructed

from

information

that

was

available

before

the

year

nineteen

ninety-four.

So

if

you

know

about

petland

lifetimes

that,

if

you

are

not

a

lawyer,

this

should

give

you

some

influence.

If

you

are

a

lawyer,

you

know

that

law

little

bit

little

bit

more

complicated

anyway.

A

D

Definitely

yeah:

okay,

yeah.

The

note

that

the

end,

the

pips

related

specification

also

gives

them

a

different,

sometimes

gives

them

a

different

names.

So

you

see

p

and

then

followed

by

the

key

line,

speed

256

so

depending

on

which

document

you

read

you

you,

you

need

to

figure

out

which

what

it

mastery

so

the

Cobras

curves,

are

easy

to

recognize,

but

it

because

they

have

the

cave

at

the

end

after

the

number

versus

they

are

in

case

of

the

nice

curves.

They

were

so

I'm

talking

also

about

a

few

optimizations.

D

Those

are

the

first

optimization

and

you

see

the

references

here

for

further

details.

They

are

already

described

in

case

of

the

NIST

optimization

already

described

in

this

specification

itself.

So

that's

a

obviously

an

obvious

obvious

thing

to

do

and

what

is

specific

really

does

are

in

this

is

actually,

if

you

look

at

the

the

curse

they

some

of

them

are

specially

constructed

and

the

primes

are

specially

constructed

in

such

a

way

that

it

makes

the

point

multiplication

scalar

multiplication

faster

and

that's.

Why

why

you

see

a

difference

between

the

performance

of

all

these

different

curves?

D

And

so

you

need

to

look

at

if

you

want

to

know

more

about

this

optimization,

you

go,

go

and

click

the

links,

so

NIST

optimization

basically

uses

the

structure.

This

special

structure

of

the

prime,

the

fixed

point

of

dimensional

optimization,

bring

computes

points

because

it

uses

a

more

efficient

way

of

doing

the

multiplication

and

then

the

reduction.

So

what

what?

In

naively,

what

you

would

do

is

I'm

like

in

general,

like

you,

you

multiply

numbers

anything.

D

Like

techniques

like

the

Montgomery

letter,

you

may

have

working

in

other

working

groups

and

finally,

there's

a

run

time

technique

and

called

a

windowing

effects

or

a

fixed

by

the

optimization

can

be

done

up

front.

Basically

up

front,

if

you

know

what

so

it

depends

on

on

the

exchange.

Work

in

many

cases

can

be

done

up

front

and

the

window

technique

is

it's

also.

It's

also

for

efficient

exponentiation,

but

it's

a

runtime

technique.

D

It

doesn't

offer

such

a

men's

performance

benefit

and

those

are

a

parameter

sort

of

window

technique,

I

list

them

as

a

W

and

the

values

are

from

two

to

seven

sort

of

and

the

other,

the

other

ones,

the

NIST,

optimization,

fixed

point,

optimizations,

Ida,

enabled

or

disabled.

You

will

see

the

impact

of

doing

that.

Ok,

so

I

looked

into

I'm

sort

of

the

cipher

suites

that

are

standardized

that

are

also

recommended.

D

They

are

using

diffie-hellman

exchange

if

emerald,

if

you

had

an

exchange,

easy

th,

e

and

and

of

course,

a

signature,

the

signature

mechanism

ecds

a-

and

so

if

you

look

at

that,

the

elliptic

curve

digital

signature

algorithm,

which

is

sort

of

the

elliptic

curve,

variant

of

the

ESA

or

sometimes

quite

ESS-

and

you

will

find

it

in

this

cipher

suite

that

and

the

one

that

is

shown

here.

The

second

bullet

may

be

familiar

to

you,

because

that's

the

one

recommended

also

in

court

in

consequently

also

named

aditya

this

profile

document.

D

What

and

that

issue

came

up

just

at

the

sea

of

achieve

our

meeting.

If

you

use

on

the

ECE

as

a

signature

mechanism,

it

has

some

of

the

pool

of

randomness

property.

So

it's

actually,

the

implementation

uses

in

a

randomized

hashing

technique,

which

is

described

in

this

RFC

69

79,

which

prevents

that

you

lead

your

private

key.

D

If

you

use

the

same

random

random

number

of

order

for

a

construction

sweet,

it's

just

a

way

how

this

digital

signature

algorithm

works,

because

it's

based

on

algemeine

and

it's

just

different

than

what

you

know

from

from

the

RSA

keys

in

case

you

have

no

critic.

Co-Op

always

recommends

for

the

elliptic

curve

variants

of

the

public,

key

variance

to

use

the

diffie-hellman

exchange

along

with

it,

and

particularly

the

ephemeral,

if

you

have

an

exchange,

and

so

I

included

that

as

well,

because

it

obviously

contributes

to

the

overall

performance

and

complex

complexity

of

the

whole

thing.

D

Okay-

and

this

is

an

expansion

of

the

slide

I've

shown

during

the

plenary

on

the

key

lens,

so

needless

to

say,

that

there

is

a

trade-off

between

performance

and

security

here,

and

so

you

see,

you

need

to

pick

the

right

key

size

for

the

symmetric

algorithms,

but

as

well

as

for

the

ECCC

or

ECG

as

a

and

those

values

sort

of

this.

The

mapping

from

symmetric

key

performance

to

this

asymmetric

algorithms

is

basically

taking

from

alves

RFC

4492.

D

So

you

need

to

make

sure

that

the

right

things

are

that

the

things

match

match

up,

and

so,

if

you

look

at

the

recommendation

that

the

youth

a

document

the

TLS

bcp

recommends,

then

we

are

at

the

round

230

something

it's

for

ECC,

depending

on

which

other

recommendation

you

look,

it's

a

little

bit

higher,

sometimes

a

little

bit

lower.

Just

to

give

you

a

right

rough

guess.

A

Just

quickly

add

to

that,

so

when

we

did,

the

recommendations

for

I

should

mandatory

to

implement

decision

for

co-op.

We

also

looked

at

the

expected

life

time

of

Internet

of

Things

devices

and

and

assume

these

have

along

your

lifetime.

Then

your

garden-variety,

the

IT

system,

so

we

went

with

a

conservative

side

there

and

yonder

256

plus.

B

D

D

It

I

think

it's

the

other

way

around

in

RSA,

if

I'm,

not

if

I

remember

correctly.

So

even

so,

you

need

to

actually

be

careful

what

you

compare,

because

RSA

can

actually

be

faster

computationally

if

you

just

look

at

the

right

operations.

Of

course,

from

a

key

sighs

point

of

view,

what

you

transmit

over

the

wire

easy

see

will

have

an

advantage,

as

you

have

seen

on

a

previous

slide.

D

If

you

go,

if

you

go

back

and

that's

actually

why

the

recommendation

is

to

use

ECC

because

of

that,

the

shorter

keel

and

not

so

much

because

of

the

performance,

because

if

you

compare

let's

say

112,

you

have

230

3

bits

keysight's

for

ECC,

but

you

need

to

use

2014

8

bits

of

RSA

keys.

For

example,

that's

what

is

stable

from

this

RFC,

the

dimension

should

ok.

D

The

brain

food

curves

are

much

much

slower

than

slower

than

all

of

the

other

curves.

So

if

you

want

to

get

performance

out

of

the

IE

device,

that's

probably

not

done

a

good

approach

to

use

those.

They

have

some

benefits

because

they

are

the

reason

why

they

are

slower

is

because

they

use

they

don't

use

specially

crafted

our

purchase

and

primes,

but

the

instead

generate

them

randomly,

which

of

course,

then

you

can't

use

a

special

structure

because

it's

randomly

chosen.

So

that's

that's

a

downside,

but.

D

Example

in

smart

grid

deployments

in

Germany,

so

those

will

have

if

you

have

a

regulatory

mandate

to

use

the

specific

that

specific

curved

and

then

you

have

to

live

with

it.

The

performance

for

the

based

on

the

bit

the

bit

lines

of

the

doctor

curves,

it's

obviously

not

linear,

and

so,

if

you

go

beyond

256

because

you

have

maybe

a

very

long

lifetime

of

your

IT

device,

you

need

to

crank

up

a

pretty

massively.

As

you

will

see,

the

easy

th

and

the

ecd

h.e

is

only

there's

only

a

minor

difference.

A

D

C

D

Good

question

good

question

so

on

the

implementation

here

it

takes

into

account

timing

such

an

attack

on

all

of

the

implement.

All

of

the

the

code

is

constantly

one

forward

to

mesh

net

and

body

what

it

what

hasn't

been

or

the

aspect

that

hasn't

gotten

a

lot

of

attention

is

on

other

types

of

cyclin

attacks.

So

that's

something

to

look

into

by

timing

such

an

attack

that

in

taking

care.

B

D

Let

me

let

me

skip

that

slight

and

put

to

them

to

the

number.

So

I

started

the

m3

m4,

which

I

have

a

better

performance.

I

hope

you

can

see.

That

is,

you

need

to

click

on

the

on

the

webpage,

so

I

here

I

have

miss

Kirsten

kovats

curves

are

with

a

device,

it's

an

ECB,

SH

signature

operation,

so

the

faster

operation

and

before

device

before

nxp

APC

1768,

all

up

all

of

the

optimizations

that

I

showed

previously

enabled

went

to

the

maximum.

So

what

happens

then

is

that

operation

takes

and

those

are

milliseconds?

D

D

D

D

D

D

So

if

you

have

the

chance

to

design

your

your

overall

system,

so

obviously

you

want

to

minimize

the

verification

operation

on

the

on

the

IOT

device

and

try

to

do

the

signature

operation,

if

that's

possible,

but

it

will

look

later

at

what

what

that

means

for

TLS

on

and

four

for

the

be

256

curve,

and

we

have

previously

hard

for

the

signature

of

122

milliseconds

and

now

we

are

talking

about

458.

So

one

and

50

milliseconds

is

its,

of

course,

on

something

the.

D

That's

true,

that's

true,

it's

very

fun

copy

paste

should

be

a

super,

strict

I

need

to,

and

it's

a

big

step.

Okay,

so

you

see

you

see

I'm

sort

of

the

absolute

values

and

sort

of

what

what

you

can

expect

and

obviously,

of

course,

if

you

look

at,

if

you

crank

up

the

key

size,

then

do

221.

Then

we

already

in

a

like

almost

one

and

a

half

seconds

for

the

verification

operation,

which

is

quite

a

bit.

D

And

something

on

a

brain

focus

on

so

this

is

the

same

theatre

operation,

the

faster

one.

If

you

compare

that

this

is

the

256

curve,

and

if

you

compare

that

the

122

that

we

have

seen

previously

against

the

brain

poker

proved

674

that

and

gives

you

an

indication

that,

obviously

it's

all

ready

for

a

signature

operation

is

much

much

slower,

okay

and

in

and

for

the

verification

operation

more

than

almost

three

seconds.

That's

quite

tell

you

something

in

in

for

200

512like

more

than

10

seconds

it's

its

massive.

D

D

A

D

So

better

avoid

that

duh.

So,

as

I

said,

the

window

parameters

are

is

one

of

those

optimizations

that

helps

to

improve

the

performance.

It's

the

one

that

has

the

least

impact,

but

already

are

here

with

the

signature

operation

it

and

I

turned

off

the

or

cranked

frankly

to

the

minimum

and

the

minimum

window

size.

Then

you

see,

the

difference

is

here

from

66

are

milliseconds

to

127.

So

it's

already

has

some

some

impact

if

you

disable

dose,

for

whatever

reason

it.

The

NIST

performance.

Optimization,

however

eyes,

that's

a

completely

different

story.

D

So

here

are

we

compare,

so

those

are

the

different

processes

now

also

also

from

the

same

mm

three

and

four

category,

but

as

soon

as

you

see

in

the

top,

this

is

actually

the

easy

eh.

This

is

the

diffie-hellman

handshake.

Now

here,

on

the

left

hand

side,

you

see

the

optimization

this

a

verte

on

the

right

hand,

side

you

see,

enabled

only

the

nist,

optimization

and

arid

all

the

other

optimization

data

to.

B

D

And

you

see

the

difference,

for

example,

if

you

pick

the

b1,

I

d-do

curve

on

the

right

hand,

side

638,

milliseconds,

but

hellman

&,

chick

versus

almost

six.

Second,

if

you

disable

so

obviously,

and

even

in

this

knit

using

the

special

structure.

So

if

you

already

used

in

this

curve,

then

you

better

use

utilizes

special

structure

of

that

curve,

rather

than

then

disabling.

D

That

sort

of

optimization

which

understand

it

but

allow,

but

the

performance

impact

would

be

will

be

clearly

visible,

and

so

those

are

now

I'm

for

them

for

the

login

processes

and

there

the

stories

are

more

complicated,

so

here

I

disabled,

all

the

optimizations,

basically

making

it

a

slow

as

possible,

and

the

diffie-hellman

exchange

affirmative

in

Hammond

exchange

so

ready.

If

I

do

that

already

for

b92

I

have

more

than

five

seconds

run

time

for

that

product.

If

you

have

an

exchange,

obviously

not

a

notic

waiting.

D

D

This

is

the

signature,

verify

operations

and

also

for

the

same

process

up

like

signature

operation

more

than

two

seconds

verified

operation.

What

is

192

curve

verify

up

the

operation

more

than

five

seconds,

so

it

takes

a

little

bit

of

time

yeah,

but

if

I

am

enable,

however,

all

the

optimizations,

of

course

it

gets

better,

so

here's

the

handshake

performance

and

or

everything

in

a

bit

all

optimizations.

So

if

you

look

at

that

again

at

the

be

192

curve,

then

we

are

about

800

milliseconds.

So

that's

much

better.

D

It's

it's

much

much

still

much

much

lower

than

than

m3

m4

that

we've

looked

into

previously

but

gets

into

a

range

that

is

more

acceptable

and

you

can

look

at

the

detail,

values

other

words

if

for

the

easy

days

so

that

the

performance

of

the

lip

of

the

EV

Hellman

is

roughly

is

similar

to

the

performance

of

the

verify

operation,

the

signature

verification

operation

there.

Of

course

the

sign

operation

is,

is

much

much

better

here

for

the

pinna

192

I'm

225

milliseconds.

D

So

let's

begin

I'm

pretty

good

with

all

performance

enable

and

845

for

the

for

the

corresponding

verify

operation.

So

almost

four

times

four

times

the

cost

of

the

verify

compared

to

the

senior

operation.

Ok,

so

the

CPU

are

obviously

has

impact

on.

So

if

you

compare

the

faster

m3

with

a

slow

industries,

a

19-6

megahertz

versus

answer,

two

megahertz,

you,

you

see,

you

see

some

some

impact,

so

it's

not

earth-shaking

could

be

at

least

some

of

the

optimizations,

but

the

difference

here

would

be

in

so

I

used

the

P

192.

D

D

Okay,

and

so

here

you

see

sort

of

a

comparison

among

the

different

ports.

You

see

most

of

them,

so

those

are

in

three

and

then

four.

So,

if

you

oh

of

course,

if

you

increase

the

key

lens,

then

of

course

it

messes

up

there

at

the

statistics.

But

if

you

just

look

at

the

the

first

three

bars

for

each

for

each

processor,

then

it's

it.

There

is

a

difference,

but

it's

not

it's

not

so

dramatic

and

all

of

them

have

a

fairly

good

performance

finger.

A

D

D

Optimizations

in

neighborhood,

okay,

the

curve

are

25,

519

I'm,

so

look

looked

into

that

one

as

well,

and

so

here

I

on

the

far

left.

There's

this

cortex

m0

with

48

megahertz

are

called

KL

46

set

and

there

are

three

different

performance

or

three

different

or

data

collected

for

three

different

implementations,

the

first

column,

the

biggest

one

is

our

implementation,

not

optimized

for

the

elliptic

curve

diffie-hellman,

and

it

was

roughly

one

and

a

half

seconds

for

the

photo

curve

for

that

then

Bernstein.

D

It's

that

has

550

milliseconds.

So

it's

obviously

a

completely

different

scale

and

to

have

the

comparison

with

what

I

had

shown

previously

and

to

compare

this

with

Miss

be

256

version

that

I

I

had

shown

a

few

slides

ago.

That

has

1145

milliseconds.

So

if

you

compare

that

so

at

the

moment,

sort

of

the

curve

on

25

519

implementation,

we

have

is

actually

slower.

D

The

nispom

demonstration,

but

the

reason

for

that

is

because

it

hasn't

the

coat

doesn't

take.

The

big

number

implementation

doesn't

take

into

account

the

special

structure

of

that

curve,

and

that

makes

all

these

curves

different.

So

that

obviously

doesn't

help

you.

If

you

have

an

unorganized

version,

then

these

new

curves

don't

get

you

anywhere.

D

However,

if

you

optimize

it,

even

not

if

you

don't

optimize

it

for

that

target

platform

for

the

right

target

platform,

that

already

makes

a

huge

difference,

and

so

it's

twice

as

fast,

which

is

which

is

good,

and

if

we

look

on

the

picture

of

the

APC

70

68,

which

is

the

m3.

You

see

that

the

Google

implementation

on

our

platform

has

94

the

diffie-hellman

II

before

the

diffie-hellman

exchange

has

94

milliseconds,

which

is

actually

pretty

good.

D

A

D

B

D

Only

the

deployment

version,

because

the

work

on

the

ellip

down

the

signature

version

is

still

ongoing,

so

the

progress

is

a

little

bit

different.

There

is,

however,

if

you

go

into

assembly,

optimizations,

there's

one

other

library

that

I

looked

into

the

micro

ECC

library,

and

that

has

also

the

code

for

for

the

nice

curves,

and

you

can

see

it's

unlike

the

code.

D

The

bowl

ssl

code

has

no

assembly

arm

assembly

optimizations

in

there,

and

it

turns

out

that

you

can

actually,

if

you

by

hand

craft

the

code

normally

which

think

the

compiler

is

much

better

at

producing

optimized

code.

But

in

fact,

in

this

specific

case,

it

isn't

done.

So,

if

you

look

at

the

numbers,

there's

a

huge

difference

between

sort

of

the

runtime

of

the

numbers

that

I

showed

and

what

my

crazy

seat

provides

as

the

fact

that

two

or

three

with

those

optimizations

understand

the

optimization.

D

B

D

A

B

D

I'm

cancer

I

quickly,

so

we

also

looked

at

the

memory

consumption.

We

only

measure

the

heat

and

not

the

stack,

but

the

heap

is

is

what

it

memory

comes

in.

Our

the

heap

is

where

we

have

most

of

the

memory

consumption

rather

than

the

stack

because

of

the

way

how

the

program

is

written.

So

the

floating-point,

the

fixed

point,

optimization

consumes

a

little

bit

of

RAM

here

is

the

the

fixed

point.

Optimization

is

this

a

bird

but

as

you

can

see,

these

elliptic

curves

are

complications.

D

D

If

you

any

cable

on

the

floating-point

optimization,

then

it's,

it

obviously

increases

because

you,

the

whole

idea

is

that

you

cashpoints,

and

so

we

are

talking

about

for

the

256,

we're

doubling

the

RAM

usage

in

for

higher

for

close

with

a

high

and

before

long

bit

lines.

You

know

like

it

gets

in

over

14

kilobytes

for

the

521

bit.

D

So

the

summer

here

is,

if

you,

if

you

want

the

optimizations,

there's

a

trade-off

with

the

RAM

usage,

so

you

obviously

be

a

price

here,

but

I

think

that

the

benefit

of

enabling

those

optimizations

are

pay

off,

because

you

obviously

get

so

here.

This

figure

shows

on

the

heap

usage

in

invites

compared

to

enabling

the

optimizations

and

disabling

so

there's,

obviously

some

difference,

but

on

the

next

slide

on

the

performance

benefit

that

you

gain

is

basically

you

and

the

summary.

A

D

You

increase

fifty

percent

of

the

RAM

usage.

You

actually

get

an

increased

performance

by

a

factor

of

eight

or

more

so

I

would

say,

go

for

them

go

for

the

performance

in

this

case

and

then

I'm

applying

there

besides

you.

So

this

is

this.

Those

are

operations

but,

of

course,

when

you

look

at

the

TLS

exchange,

there's

not

one

only

one

at

least

the

cipher

suites

that

we

are

chosen.

D

So

what

you

need

is

a

couple

of

things,

and

so

you

need

to

add

up

the

numbers

to

actually

get

the

whole

thing

on

ignoring

sort

of

hash

complications

or

or

or

the

round-trip

delay

as

well

or

maybe

other

things

that

so

you

would,

you

would

have

to

add

on

for

the

ordinary

exchange

for

it

on

eccie

service

weeks.

The

ECE

is

a

verification,

the

ETD

HH

computation

and

then

ecbs

a

signature.

So

you

have

to

add

those

up

and

what

you

didn't

get.

D

For

example,

here

I

had

a

number

of

based

on

under

NBC

7068

with

the

curve

are

be

2

to

4.

I'm

sorry

between

the

ones

that

you

accomplished,

something

like

700,

something

milliseconds

and,

of

course,

I'm.

You

may

need

to

add

a

few

additional

arms

signature

verifications

if

you

actually

use

the

sort

of

the

public

key

infrastructure,

so

this

doesn't

apply

to

the

Rope

up

the

key

mode,

but

but

it

applies

to

what

what

is

called

the

certificate

mode

in

the

specification.

D

Needless

to

say,

at

one

quick

word

about

symmetric

key

cryptography,

because

I

said

it's

super

fast

I've

skipped

skip

that

slide.

It's

just

an

introductory

of

what

what

that

means.

So,

if

you

look

at

here

I'm,

I

have

this

m0

sort

of

the

really

slow,

processor

and

again

milliseconds

here.

So

if

you

look

at

the

shower

256

2.2

millions

for

1024-bit

a

hash

over

120

1024

bit.

Well,

you

two

point:

two

milliseconds

it

let

it

go

back

just

like

once.

D

Yeah

150

punishment,

okay

and

the

same

for

RSA

I

use

the

same

value;

okay,

one

point

and,

for

example,

AES

CCM,

128

the

same

length,

five

point,

eight

milliseconds,

so

obviously

like

symmetric

cryptography,

you

don't

need

the

hardware

acceleration,

no

problem.

How

do

I

acceleration

me

or

the

hardware

ES

and

hardware

may

provide

you

other

benefits

in

terms

of

sight,

Chandler

assistance,

but

from

the

for-

point

of

view,

not

an

issue.

D

X1

faster

processor,

obviously

like

the

m3,

a

SCCM,

1,

2

128,

one

point:

seven

milliseconds

obvious

are

not

something

to

worry

about,

and

my

conclusions

like

so

needless

to

say

that

the

ECC

crypto

needs

some

time

and

we

have

chosen

intention

at.

You

also

include

that

as

those

that

recommended

cipher

Suites

and

had

chosen

a

one

that

has

a

diff,

you

have

an

exchange

lee

as

well

in

femoral.

D

So

what

the

exact

value

is

that

one

needs

to

worry

about

the

bench

really

on

the

actual

application,

because

the

good

thing

about

dls

is

it.

Does

the

heavy

lifting

at

the

beginning

and

then

is

established

as

the

session

Keys,

and

we

may

at

some

time

in

the

future,

may

need

to

refresh

keys,

but

then

in

the

meanwhile,

in

a

time

in

between

you

run,

you

work

with

the

symmetric

keys,

which

are

obviously

super

fast.

D

So

what

the

exact

performances

depends

on

as

you've

seen

on

a

number

of

different

factors,

and

so

it's

not

a

simple

answer,

but

someone

who

works

on

a

specific

application

has

to

then

think

about

like

what's

the

acceptable

delay

for

the

user

when

is

set

it

up

like

is

this.

Is

this

the

setup

that

we

do

when

the

user

unpacks

the

device

and

pushes

the

button,

and

it

talks

to

some

server

where

maybe

a

longer

interaction

delay

may

be

acceptable,

or

are

we

talking

about

something

where

it's

a

real

time?

D

Okay,

so

in

terms

of

next

step,

so

we

obviously

I've

seen

it

in

in

one

of

the

introductory

slides

that

I

there's

some

other

data

that

I

would

like

to

collect,

particularly

on

energy

efficiency,

which

I

haven't

done

yet

and

verify

some

of

the

other

data

and

do

more

than

a

new

curves

that

the

CF

Archie

is

looking

into

so

I'm

doing

all

that

stuff

is

quite

time-consuming

as

you

as

you

can

imagine

it,

because

I

basically

needs

to

compile

the

code

download.

The

thermal

image

run.

D

D

A

B

Simpson,

a

quick

question:

I

was

surprised

to

see

that

the

performance

for

GCM

was

better

than

CCM

and

I.

Think

the

reason

that

most

of

the

lightweight

world

has

centered

around

CCM

is

simply

because

it's

a

nard

we're

so

often

did

you

get

any

numbers

on

RAM

or

code

usage.

If

you

don't

use

hardware

acceleration

for

GCM

vs,

DCM

I.