From YouTube: IETF98-IRTFOPEN-20170327-1520

Description

IRTFOPEN meeting session at IETF98

2017/03/27 1520

A

B

B

A

A

A

E

E

E

E

You've all, I think, seen a slide that Lars has put up many times before, where he shows you that we're under the same IPR policy as the IETF. So note that, and there's a little bit more detail to it if you look at the slides. I have a slide coming up that will tell you where these slides are, but you can find them in the agenda in the datatracker. I've just uploaded a new copy.

E

A

E

Okay, so, yeah: the IRTF IPR policy. Read it at your leisure, but bear in mind that we are under IPR rules according to RFC 3979 in terms of disclosures, etc. On remote participation: we do have a lot of people on Meetecho, and I will try to manage if people want to join the queue. Hopefully we have somebody who would be willing to watch Jabber and let us know if somebody asks a question there. Can I get a hand for that?

E

Thank you, Matt. Okay, and as you probably know, we have mailing lists, irtf-announce and irtf-discuss; join those. The prize talks are by Yossi Gilad and Alistair King, coming up on the stage. So Lars handed off the chair duck to me this morning, and I just wanted to say about Lars that he has been an astoundingly great IRTF chair.

E

E

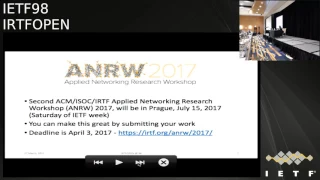

There's a workshop. This will be the second of them, and it will be held at the same venue as the IETF in Prague, on the Saturday before IETF week. We really want great submissions. This is up to you: submit papers for this. The deadline and the location for submitting papers are there: April third. I will eventually get used to the mic.

E

On the IRTF research groups: we have about ten groups. Seven of them are meeting this week; I've listed them. There are groups that are not meeting, but some of them are having interim meetings: Crypto Forum and GAIA, Global Access to the Internet for All. And important news is that this IETF's technical plenary is on the theme of Human Rights Protocol Considerations, and one of the chairs, Niels ten Oever, and the first chair of the IRTF, Dave Clark, are speaking to us about this. It should be very interesting and lively.

E

It's been a little bit quiet in the stream, just one publication, but a number of others are queued up. We concluded a couple of research groups after the last meeting: SDNRG and the provisional, or proposed, network machine learning group. As always, contact me about your interest in forming new groups or any comments you have on the existing state of the area. With that I'm going to turn the mic and the floor over to Yossi first, for the talk about the path-end validation extension to the RPKI.

F

Hello. I'll present "Jumpstarting BGP Security", and this is joint work with Avichai Cohen, Amir Herzberg, and Michael Schapira. This talk is all about inter-domain routing and today's de facto protocol for that, which is BGP. First, let me start off by motivating a bit the problems in BGP. BGP carries no authentication over the data, so let's consider the following case, which shows how BGP works. We have Boston University at AS 111, and BU has a prefix.

F

Okay, so BU has the prefix 168.122.0.0/16, and it advertises that prefix to its neighbor, AS X. Now AS X learns a route to reach the IP addresses within that prefix, so it would send its data packets down to BU. Not only that: AS X also relays that advertisement onwards to its own neighbor. It appends its identifier to the route, and now its neighbor learns a route to reach the IP addresses within that prefix.

F

Now, since BGP carries no authentication, the attacker can actually provide an announcement for the same IP prefix, and now the victim has two routes to choose from. It can either route its traffic to AS X, then reaching BU, or it can route the traffic to the attacker; both claim to reach the same prefix. So what would the victim do in this case? Well, it would choose to go with the shorter route, so the attacker would actually intercept traffic coming from the victim.
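
The choice the victim makes here can be sketched in a few lines of Python. This is a simplified illustration only: it compares AS-path length and nothing else, whereas real BGP route selection also weighs local preference and other attributes. The names `best_route`, `legit`, and `hijack` are hypothetical; AS 111, AS X, and the attacker AS 666 are the ones used in the talk.

```python
def best_route(announcements):
    """Pick the announcement with the shortest AS path (first one wins ties)."""
    return min(announcements, key=lambda ann: len(ann["as_path"]))

prefix = "168.122.0.0/16"

# AS paths are listed from the nearest AS to the claimed origin.
legit = {"prefix": prefix, "as_path": ["ASX", "AS111"]}  # via AS X to BU
hijack = {"prefix": prefix, "as_path": ["AS666"]}        # attacker claims to originate

chosen = best_route([legit, hijack])
print(chosen["as_path"])
```

With no authentication, nothing distinguishes the two announcements except their length, so the one-hop path announced by the attacker is selected.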

F

So in order to mitigate these problems, called prefix hijacks, there's a new mechanism that was standardized called the Resource Public Key Infrastructure, or the RPKI. The RPKI maps IP prefixes to the organizations that own them. It facilitates two things: it provides origin authentication in order to prevent hijacks, and it also lays the cryptographic foundation for more sophisticated mechanisms like BGPsec that prevent more advanced attacks. I'll talk about both of these in this talk.

F

Let me start off by talking about how the RPKI is deployed and performs origin authentication. The way the RPKI is usually deployed is that the administrator deploys a sort of general-purpose local cache machine in its AS, and that machine syncs with the globally available repositories. These repositories hold the Route Origin Authorization records, or ROAs, which provide this sort of authenticated mapping between an IP prefix and the AS number that's allowed to originate a route to that prefix.

F

So the local cache retrieves these ROAs and then verifies the signatures over them. If the signatures are valid, it creates a sort of whitelist filtering rule. In this case, the IP prefix 168.122.0.0/16 should only originate from AS 111. Then it goes on and deploys these rules onto the routers.
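
A minimal sketch of the resulting filter, assuming the cache has already verified the ROA signatures and reduced them to (prefix, maximum length, origin AS) entries. The `ROAS` table, the maximum length of 16, and the placeholder AS number 64500 standing in for AS X are assumptions for illustration; the valid / invalid / unknown outcome follows the usual origin-validation convention rather than anything stated in the talk.

```python
import ipaddress

# Whitelist derived from validated ROAs: (prefix, max prefix length, origin ASN).
# Only the prefix and AS 111 come from the talk; the max length is assumed.
ROAS = [(ipaddress.ip_network("168.122.0.0/16"), 16, 111)]

def origin_validation(prefix, as_path):
    """Classify an announcement as 'valid', 'invalid', or 'unknown'.

    as_path is ordered from the nearest AS to the origin (last element).
    """
    net = ipaddress.ip_network(prefix)
    origin = as_path[-1]
    covered = False
    for roa_net, max_len, asn in ROAS:
        # Skip ROAs that do not cover the announced prefix.
        if net.version != roa_net.version or not net.subnet_of(roa_net):
            continue
        covered = True
        if asn == origin and net.prefixlen <= max_len:
            return "valid"
    # Covered by some ROA but no match: reject. Not covered at all: no opinion.
    return "invalid" if covered else "unknown"

print(origin_validation("168.122.0.0/16", [64500, 111]))
print(origin_validation("168.122.0.0/16", [666]))
```

A router applying these rules would drop the second announcement, since AS 666 is not an authorized origin for a prefix that a ROA covers.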

F

F

Well now, given that the victim observes these databases, the RPKI ROAs, it can find that AS 666 is actually not authorized to originate a route for that IP prefix. So the route from the attacker is invalid and is discarded, and traffic would flow down the correct path. But the RPKI does not prevent all attacks. Specifically, if the attacker is sophisticated, they don't have to claim to originate the IP prefix.

F

They can claim to be directly connected, to have this false link to the true origin, so they can use the true origin in the announcement. Now the victim again receives two routes, and in this case the RPKI does not provide any data that allows it to identify that the attacker's route is false. So really the victim can't tell the difference, and it would choose to route its traffic to the attacker, because the route in that case is shorter.

F

So how do we protect inter-domain routing? Well, the current paradigm has two steps. Step one is to deploy the RPKI and so prevent hijacking, and the second step is to deploy BGPsec. BGPsec protects against these false paths that attackers might try to claim in their advertisements. The way it works is, well, consider the BGP router at AS 111. BGPsec adds this new attribute called the secure path.

F

Now the origin, AS 111, would sign the announcement that it sends to its neighbor, AS X. AS X would then need to do two checks. Check number one is that AS 111 is the actual legitimate origin for that prefix, and the second check is that the origin actually approved the next hop. If that is so, then AS X would add the next hop, its neighbor, to the route and sign the new announcement.

F

So now the announcement actually has two signatures on it, and when AS Y receives it, it would check that the origin is correct, then that the link between AS 111 and AS X exists, and also that the link between AS X and AS Y exists. So basically the number of signature verifications would be the number of hops.
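
The chaining of signatures, one per hop, can be illustrated with a toy model. This is explicitly not the BGPsec wire format or its cryptography: BGPsec uses public-key signatures with keys certified through the RPKI, while the sketch below substitutes HMAC with a made-up key table purely so that it runs with the Python standard library. All function names and keys are hypothetical.

```python
import hashlib
import hmac

# Stand-in keys (assumption). Real deployments use per-router key pairs
# certified in the RPKI, not shared secrets.
KEYS = {"AS111": b"key-111", "ASX": b"key-x", "ASY": b"key-y"}

def sign_hop(prefix, signer, next_as, prev_sig):
    """Signer commits to the prefix, the next hop, and everything signed so far."""
    msg = "|".join([prefix, signer, next_as]).encode() + prev_sig
    return hmac.new(KEYS[signer], msg, hashlib.sha256).digest()

def announce(prefix, hops):
    """hops = [origin, ..., receiver]; returns a list of (signer, next_as, sig)."""
    secure_path, prev = [], b""
    for signer, next_as in zip(hops, hops[1:]):
        prev = sign_hop(prefix, signer, next_as, prev)
        secure_path.append((signer, next_as, prev))
    return secure_path

def verify(prefix, secure_path):
    """Check every link; return how many signature verifications were needed."""
    prev, checks = b"", 0
    for signer, next_as, sig in secure_path:
        checks += 1
        expected = sign_hop(prefix, signer, next_as, prev)
        if not hmac.compare_digest(sig, expected):
            raise ValueError(f"bad signature on link {signer}->{next_as}")
        prev = sig
    return checks

path = announce("168.122.0.0/16", ["AS111", "ASX", "ASY"])
print(verify("168.122.0.0/16", path))
```

For the path from AS 111 through AS X to AS Y there are two signed links, so the receiver performs two verifications. Reusing AS 111's signature for a different next hop fails the check, which is what blocks the false-link attack when every AS on the path participates.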

F

What are the benefits of BGPsec under partial adoption? Well, consider the same topology. Now all the ASes in blue adopt BGPsec, so almost all the good guys adopt, except for AS X. That means that AS 111 actually can't advertise its prefix in BGPsec, because AS X does not know how to handle secure paths. So, in a sense, AS X actually breaks the BGPsec path, and it would force AS 111 to advertise its prefix through legacy BGP.

F

So now the attacker can do the same attack as it did before. It only needs to circumvent the RPKI, because the victim receives a legacy BGP message, and therefore traffic would again flow to the attacker. So really, it was shown that under partial adoption BGPsec provides only meager benefits. So in this talk our goals are the following.

F

On the security side, we would like to protect against these forged-origin attacks in BGP advertisements, and we would like to provide significant security benefits even under partial adoption of our protocol, in contrast to BGPsec. On the deployment side, we'd like to do only minimal computations and have them be done only offline and off the router.

F

So we're proposing path-end validation, and the way path-end validation works is very similar to the RPKI. You have a database, and that database allows an AS to also list its neighbors. In our case, AS 111 would also register that it is linked with AS X. If that database covers all the neighbors, then the victim can now identify that there is no link between AS 666 and AS 111, so it would know to discard that false route and send the traffic along the correct path.
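
The check described here only looks at the last link of the path. A minimal sketch, assuming the validated records have been reduced to a mapping from an origin AS to the set of neighbors it registered; the `PATH_END` table and function name are hypothetical, and the treatment of unregistered origins as acceptable is an assumption of this sketch.

```python
# Path-end records: origin AS -> neighbors it approved. Only the
# AS 111 / AS X link comes from the talk; the data structure is assumed.
PATH_END = {"AS111": {"ASX"}}

def path_end_valid(as_path):
    """as_path is ordered from the announcing neighbor to the origin (last)."""
    origin = as_path[-1]
    approved = PATH_END.get(origin)
    if approved is None or len(as_path) < 2:
        return True  # origin not registered, or no last link to check
    # Accept only if the AS adjacent to the origin is a registered neighbor.
    return as_path[-2] in approved

print(path_end_valid(["ASX", "AS111"]))    # genuine route via AS X
print(path_end_valid(["AS666", "AS111"]))  # attacker claims a direct link
```

Because only the final hop is checked, a path such as `["AS666", "ASX", "AS111"]` still passes; the attacker has to lengthen its claimed path by one hop to get through, which is the trade-off the talk goes on to quantify.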

F

Let me briefly summarize the results that I'm going to show you later: how much security would this small additional mechanism buy us? This diagram shows the attacker's success rate on the y-axis, and on the x-axis it compares between different protocols. The blue bar indicates just vanilla BGP, that is, what happens when we have no authentication. The attacker can do hijacks and get about fifty percent of the traffic to the hijacked network.

F

If we add the RPKI on top of that, and we have origin authentication, the attacker now gets about thirty percent of the traffic. Doing path-end validation, you get further down, to just below fourteen percent, while the purple bar shows what happens in the best partial-adoption scenario for BGPsec. That means that everyone adopts BGPsec, everyone supports it, but legacy BGP messages are still allowed, so the attacker can still advertise in legacy BGP.

F

F

Just to provide the intuition as to why this would buy us significant benefits: the reason is that the average path length between ASes on the Internet is actually quite short, about four AS hops on average. That seems to suggest that if the attacker is forced to add another hop to its route, then its route is going to be much less attractive.

F

F

Finally, we evaluated the security benefits that such a mechanism would buy us on today's Internet, and in order to do that we used a simulation framework. We have the Internet AS-level graph from CAIDA, and in each iteration of our simulation we pick an attacker and victim pair. We assume that the victim adopts both: the RPKI, so it registers its prefix in the database, and it also registers in the path-end database.

F

F

So let me show you some results. In this graph you can see the attacker's success rate on the y-axis, and on the x-axis there is the number of top ISPs, so the largest ISPs, that are applying the path-end validation filtering rules. There are two lines here. The blue line shows what happens when the attacker does the next-AS attack.

F

That is, when the attacker claims to be directly linked with the legitimate origin of the prefix, which is precisely the attack that path-end validation was meant to mitigate. You can see that the more adopters you get, the more the attacker's success rate goes down, and even with 20 adopters the attacker should no longer perform this attack. Instead, it should claim to be connected to a neighbor of the legitimate prefix owner and so circumvent path-end validation. That would give it about a fourteen percent success rate, regardless of the number of filtering nodes.

F

So that is the point where the attacker should switch. Just to give you context, the dotted red line here shows what you would get with the RPKI, while the green line shows the best that you could hope for with BGPsec under partial adoption. Again, that is what happens when everyone adopts BGPsec but legacy BGP messages are still allowed to be advertised, so BGP wasn't deprecated yet. That's for comparison.

F

Another interesting result we found was that a local deployment can provide protection for local traffic. This is particularly important since many clients fetch content from nearby. Now, on the x-axis, this graph plots deployment just at the North American ISPs, and this time, for the attacker and victim pairs, we picked just ASes within North America. As you can see, quite quickly, even with only the top 10 ISPs adopting, the attacker's success rate doing the next-AS attack,

F

that goes down really quickly, and so the attacker should switch to doing the two-hop attack, which gives almost the same success rate as the best you could hope for with BGPsec. We've also seen similar patterns in Europe, so this is not just for North America. Finally, you might ask: why shouldn't I authenticate more than the last hop? Why shouldn't I also authenticate the neighbors of my neighbors and list them in this database?

F

Well, doing so is much harder, because while the operator knows the ASes that are directly linked to it, it might be harder to know the ASes that these neighbors are linked to. Furthermore, and you can see it here on the graph, I would argue that it doesn't buy you that much additional benefit. On the x-axis you can see how many hops from the actual origin the attacker claims to be, so different numbers of false hops in the advertisement, and on the y-axis,

F

the attacker's success rate. So if there is no authentication, with just vanilla BGP, the attacker can claim to own the prefix, so it can do a hijack and get about a fifty percent success rate. If the RPKI provides origin authentication, it goes to about thirty percent. Path-end validation gets you to fourteen, and two-hop validation really doesn't give you much benefit further from that.

F

We have some more results in the paper. In particular, we found that large content providers are actually better protected by path-end validation. We also found that path-end validation could have mitigated some high-profile incidents that occurred in the past. Finally, we also proved that it is security monotone: if an AS decides to do filtering, that means that no other AS can end up with a worse route as a result. That's not true for all routing security mechanisms.

F

Just to conclude my talk: I presented path-end validation, which can significantly improve inter-domain routing security even under partial adoption, while circumventing all the deployment hurdles that BGPsec has. So we advocate extending the RPKI to allow this option of an AS registering its neighbors, given the similar, actually identical, deployment strategy. Finally, if path-end validation is standardized, we recommend some efforts, financial or regulatory.

F

D

Russ White, LinkedIn. We may all have the same question here, and Doug may say the same thing, so I'll just suggest something. You said, if you get providers to deploy this. I would actually suggest, well, you might want to talk to Leslie Daigle, if she's over there someplace. She's leaving the room; run, Leslie, run. We have been working as a small group on some BGP security stuff similar to this. You might want to go back and look at the soBGP work.

D

You may have already looked at it, and psBGP, which are all kind of in the same area to some degree. My only suggestion would be that you'll never, ever, ever, and he's going to tell you the same thing when he gets up here, get transit providers to deploy this. It won't happen. Your best hope is to get people like LinkedIn, Microsoft, Google, Facebook, the big eyeball providers on the edges, to deploy this, people who are directly connected to real customers.

D

D

However, as LinkedIn, I can tell you who my upstreams are. So if you're ever going to have a hope of getting this type of thing off the ground, it's probably going to have to come from the edge in, with people like LinkedIn advertising, in the RPKI or some other way, who their upstreams are. You're never going to get Level 3 to admit to the public that I'm connected, that I'm using them as my upstream, right?

F

D

H

F

F

F

H

F

H

I

Hi, Eric Osborne, Level 3. I admit to not having read the paper, so I'm not going to ask you a question; I'm going to ask if the paper covers it, because I think I missed it in the presentation. It's easy to fake out the RPKI, because if you're the attacking AS, AS 666, you just add an AS path that says 111 behind it, right? If I am 666 and I say X, 111, haven't I defeated this whole thing? Does the paper explain it? Then I'll go away.

F

J

Okay, Oliver Borchert, NIST. I have two questions. The first one: if I understand you right, then the database that I have to maintain is fairly large, because I don't only have one originator. I have many originators, and for every originator I have to store and parse its particular peers. Therefore the policy processing becomes, I would imagine, bigger. So the interesting thing here would be: what is the processing overhead just for the additional policies that you now have to inject into the router?

J

That's number one. Number two: your graph shows, basically, that you are only better for one, two, and three hops, pretty much. The average path length, as far as I remember, is somewhere around 3.7 or something like that, so the AS that is attacking, AS 666, already has a punishment of one hop. Therefore it doesn't win over three hops; it only wins over two hops, which basically makes the path relatively short. So the other question is: what is the gain that you make?

J

F

J

F

F

So the number of AS numbers on the Internet is actually smaller than the number of prefixes. I'm not saying it won't have an overhead, but the overhead that you're paying for the RPKI, the amount of state that you need to keep for doing the RPKI filtering, is actually about an order of magnitude more than what you need for this mechanism. So...

F

K

K

K

F

So what we really sort of imagined there is the ASes at the core of the Internet doing the filtering, not so much registering themselves in the database. Well, if you are a content provider, then you're not providing any transit services, right, but it is in your interest to deliver your content quickly, right?

F

K

F

K

G

K

F

C

F

F

F

C

F

C

F

And so I will reach my goal, right? So this actually comes into play in our simulation. We did take into account the inferred business relationships between these ASes. So if an AS receives an advertisement from its customer, we did assume that it is going to take that over the others. Basically, my...

C

F

C

F

C

C

F

Okay, so in the sense that ten percent of the Internet might consist of very important users? Yeah, so we didn't weigh the ASes by any metric; we basically counted the number of ASes. That would be an interesting thing to add to the simulation framework, in order to rank the attacks. Yeah, I don't know; I have no intuition about whether this ten percent would be the best ones or not. I'd be happy to check.

E

L

Hey, good afternoon. You guys can hear me all right? Yeah. So I'm Alistair King from CAIDA; this is the Center for Applied Internet Data Analysis at UC San Diego. I have the real pleasure of talking to you this afternoon about BGPStream. This is a software framework that we've developed for the historical analysis and real-time monitoring of BGP measurement data, and this is work that we presented last year at the Internet Measurement Conference in Santa Monica. So, BGPStream.

L

As I said, it's a set of open-source libraries, APIs, and tools for historical and real-time BGP data analysis. It is open source; it's available now, and it's been released for about a year and a half. We have had several publications from other authors that have already used it. I think Yossi actually used it for some of his research. So a big part of this presentation is really going to be an encouragement to this community to go out and look at it.

L

Use it for your research, use it for, maybe, your operations, and also we're really wanting feedback from this community about things you would like to see changed and other things like that. So with BGPStream we've really tried hard to come up with a simple way to do potentially really complex analysis of BGP measurement data. We've designed it for use by both researchers and operators, so we'd really like some feedback from the operator,

L

you know, real boots-on-the-ground side of things here. We're certainly happy as researchers, but on the other side of things we're looking for some real feedback as well. I'll also show you how, when you're using BGPStream for doing research, for doing analysis, it really facilitates experimental reproducibility and repeatability. And, as I've said, it'll do real-time monitoring just as easily as it'll do historical analysis of BGP data. So first, you may be wondering: why create this software? What is it doing?

L

What's the need that it's filling? As researchers, and especially researchers who are looking at BGP data, we make use of a ton of this existing BGP measurement data. There are these two major collection projects in this space; you may have heard of them: Route Views, from the University of Oregon, and then the RIPE RIS collection effort. And so between

L

these two, they've been collecting data, each of them actually, for over 15 years now, and they have something like 20 terabytes, maybe more, of collected MRT data for the last decade and a half. So we've been using this data a lot. But when we started at CAIDA developing these large-scale platforms for doing real-time data analysis with BGP, we really found that there was a real lack of good tooling for processing and analyzing BGP data. And so when we would go and try to do this,

L

the state of the art in this case would be something like: go to the Route Views website, browse around, find the file that I want, download it to my server, then use bgpdump to convert that to ASCII, pipe it through some kind of parsing script that I just hacked together for the purpose of my analysis, and then, of course, rinse and repeat for all of the files that I was interested in. All right. So this really is just a small snapshot of

L

the type of thing that we're trying to make easier with BGPStream, but it's also capable of doing a whole lot more than that, which I'll show you throughout this presentation. Okay, so how does BGPStream work? If we go up to a really, really high level, BGPStream, the framework, is a distributed framework. It's comprised of two main components. The first is the metadata broker. This is a web application that we are running an instance of at CAIDA, and this can be easily replicated elsewhere.

L

I'll talk about that a little bit later on. Then the other component is a set of user libraries; this is software that's run by users on their machines. So what happens? The metadata broker, this web application, has this background process that's continuously crawling and indexing the data that's available on these public data archives. The instance that we're running at CAIDA is continuously crawling the Route Views and RIPE RIS archives, and it's able to serve queries about which data is available there.

L

I just want to stress here: this metadata broker service that we're running isn't storing actual BGP data. It's storing the metadata about what's available, and it's not serving BGP data to users. The way that the actual data gets served is instead through the user library. So this is software running on users',

L

you know, on your machine, doing the analysis that you want. Another way of looking at this framework, maybe in a more rigorous fashion, is if we stack it up. At the bottom, in this layer 1 here, we have the data providers, Route Views and RIPE RIS, and then the metadata providers, of which our metadata broker web service is an instance. Then in the center here we have libBGPStream.

L

This is the C library that is really the core of the BGPStream framework. It's responsible for going to the metadata providers, going to the data providers, retrieving the data, and then demultiplexing that into a single stream for this top layer, this layer 3, which is your applications. These are users' applications written in C using the C API, or in Python, and then we have some other tools that make it easier to do large-scale, complex analysis of BGP data in real time. So I want to point out:

L

the blue boxes in this diagram, which is a diagram from the paper, are software that we've developed as part of the BGPStream framework. The orange things are third-party components that we're using. And anything in a blue box that's also marked with a star is software that we've already made publicly available as open source.

L

So, in the center of the stack here, as I mentioned, we have libBGPStream. This is the core part of our framework. Before I talk about this, I wanted to say that in BGPStream we are currently only supporting the MRT data format. This is the de facto standard, at least currently, for capturing and storing BGP measurement data. There are absolutely efforts to replace MRT with other things, so we have BMP, and we're currently working in a collaboration with Cisco and their OpenBMP guys to add BMP support to BGPStream.

L

That said, for the moment there's this wealth of MRT data available, as I say, with these 15 years of MRT data, and it's continuing to be collected; as we speak, more and more MRT data is being collected. So for the rest of this presentation, when we talk about BGPStream, the assumption is going to be that we're processing MRT data. Okay, so, libBGPStream.

L

It demultiplexes this data, which is coming from potentially multiple sources, into a single stream. In this way, you're able to configure BGPStream to retrieve data from, for example, all of the RouteViews and all of the RIS collectors, which ends up being something like 300, maybe 400, BGP peers' worth of data, all demultiplexed into a single stream for your application to process. An important step in this demultiplexing is sorting.
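
As a rough illustration of the sorting step described here, the following minimal sketch interleaves two already time-ordered sources into one time-ordered stream. It is not libBGPStream code: the `Record` type, the `merge_sorted` helper, and all sample values are hypothetical, and it assumes each source is already sorted by timestamp.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Record(NamedTuple):
    """Hypothetical stand-in for one timestamped record from a collector."""
    timestamp: int   # seconds since the epoch
    collector: str   # which collector produced the record
    payload: str     # placeholder for the actual BGP data


def merge_sorted(*sources: Iterable[Record]) -> Iterator[Record]:
    """Interleave several time-ordered sources into one time-ordered stream."""
    # heapq.merge assumes every input is already sorted by the given key.
    return heapq.merge(*sources, key=lambda rec: rec.timestamp)


# Made-up sample data for two collectors.
rrc06 = [Record(100, "rrc06", "update A"), Record(160, "rrc06", "update C")]
jinx = [Record(130, "route-views.jinx", "update B"),
        Record(190, "route-views.jinx", "update D")]

merged = list(merge_sorted(rrc06, jinx))
for rec in merged:
    print(rec.timestamp, rec.collector, rec.payload)
```

Because the merge is lazy, an application consuming the stream only ever sees records in timestamp order, regardless of how many sources feed it.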

L

So, as I mentioned, libBGPStream is the C library. It has a C API, and we've really tried to make it as usable and intuitive as it can be for a C API. We expose two types of data structures. The first is a record. A record is really just a thin wrapper around the MRT record. The reason we add this wrapper is so that we can add some metadata.

L

That is metadata that's not found in the MRT itself: for example, the name of the collector that this record came from, and the collection project. Then, these MRT records that we're wrapping can contain information for multiple prefixes or multiple peers. Take the example of a RIB dump, a routing table snapshot that comes from a collector: a RIB dump record inside MRT can actually contain information as seen by multiple peers.

L

So here is some code that will instantiate an instance of BGPStream using the C API. The highlighted part here is perhaps the most interesting, if you can see it on these screens. First of all, we add a filter that says: I only want data from the RRC06 collector, which is a RIPE RIS collector, and I also want data from the RouteViews Jinx collector. Next I add a filter that says: only give me the updates information. So implicitly it's saying, don't tell me about the RIB dumps, the table dumps.

L

Only give me the update stream from these two collectors. Then, of course, we specify a time range that we're interested in processing data for. Once we've done this instantiation and configuration, we then have this set of nested while loops, where we first iterate through the records from the stream, and then, for every record, we iterate through the elems of that record. So the overall structure of this API is going to be reminiscent of something like libpcap.
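
The configure-then-iterate pattern described here can be sketched without the real library. The example below is a self-contained toy model, not the libBGPStream or PyBGPStream API: the `Record` and `Elem` classes, the `filtered` helper, and every value are hypothetical, and plain `for` loops stand in for the nested while loops on the slide. Per-record items are called elems in this sketch.

```python
from dataclasses import dataclass, field
from typing import Iterable, Iterator, List, Set


@dataclass
class Elem:
    """Hypothetical per-peer, per-prefix item extracted from a record."""
    peer_asn: int
    prefix: str


@dataclass
class Record:
    """Hypothetical thin wrapper: record content plus added metadata."""
    project: str       # collection project (metadata added by the wrapper)
    collector: str     # collector name (metadata added by the wrapper)
    record_type: str   # "updates" or "ribs"
    timestamp: int
    elems: List[Elem] = field(default_factory=list)


def filtered(records: Iterable[Record], collectors: Set[str],
             record_type: str, start: int, end: int) -> Iterator[Record]:
    """Yield only records matching the collector, type, and time filters."""
    for rec in records:
        if (rec.collector in collectors
                and rec.record_type == record_type
                and start <= rec.timestamp <= end):
            yield rec


# Made-up input; ASNs and prefixes come from documentation ranges.
records = [
    Record("ris", "rrc06", "updates", 100, [Elem(64496, "192.0.2.0/24")]),
    Record("ris", "rrc06", "ribs", 110, [Elem(64496, "198.51.100.0/24")]),
    Record("routeviews", "route-views.jinx", "updates", 120,
           [Elem(64497, "192.0.2.0/24"), Elem(64498, "203.0.113.0/24")]),
    Record("ris", "rrc00", "updates", 130, [Elem(64499, "192.0.2.0/24")]),
]

seen = []
# Outer loop: records from the stream. Inner loop: elems of each record.
for rec in filtered(records, {"rrc06", "route-views.jinx"}, "updates", 0, 200):
    for elem in rec.elems:
        seen.append((rec.collector, elem.peer_asn, elem.prefix))
        print(rec.timestamp, rec.project, rec.collector,
              elem.peer_asn, elem.prefix)
```

The RIB record and the record from the third collector are dropped by the filters, so only update elems from the two selected collectors reach the inner loop.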

L

As well as C, of course, we have Python to make life a little bit easier. In the paper we use the Python bindings to implement an analysis of a commonly studied, or at least in the past commonly studied, facet of BGP: AS path inflation. The point here, on the slide and with this implementation, wasn't to say: hey, we studied AS path inflation, it's interesting! No.

L

On top of this, not only do I have my analysis logic in my code, I've also embedded the data specification. So, as the usual disorganized researcher, I come back to this a year later and I don't need to remember what data I processed to get these results; it's actually embedded in that same script. Not only can I remember, but now I can hand the script off to another researcher, who can take it and use it to reproduce and repeat my experiment.
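
For readers unfamiliar with AS path inflation, here is one common way to quantify it, sketched under stated assumptions rather than taken from the paper's script: for each (monitor AS, origin AS) pair, compare the shortest AS path observed in BGP with the shortest path in the undirected AS graph built from all observed paths. The input paths are made up, and all function names are hypothetical.

```python
from collections import defaultdict, deque
from typing import Dict, List, Set, Tuple

# Hypothetical AS paths: monitor AS first, origin AS last.
as_paths: List[List[int]] = [
    [64496, 64500, 64501, 64510, 64510],  # origin prepends itself once
    [64496, 64502, 64511],
    [64497, 64502, 64510],
    [64497, 64500, 64501, 64511],
]


def collapse(path: List[int]) -> List[int]:
    """Remove consecutive duplicates caused by AS-path prepending."""
    return [asn for i, asn in enumerate(path) if i == 0 or asn != path[i - 1]]


def build_graph(paths: List[List[int]]) -> Dict[int, Set[int]]:
    """Build an undirected AS adjacency graph from the observed paths."""
    graph: Dict[int, Set[int]] = defaultdict(set)
    for path in paths:
        hops = collapse(path)
        for left, right in zip(hops, hops[1:]):
            graph[left].add(right)
            graph[right].add(left)
    return graph


def shortest_hops(graph: Dict[int, Set[int]], src: int, dst: int) -> int:
    """Breadth-first search returning the hop count between two ASes."""
    if src == dst:
        return 0
    visited = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        for neighbor in graph[node]:
            if neighbor == dst:
                return dist + 1
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, dist + 1))
    raise ValueError(f"no path between AS{src} and AS{dst}")


def path_inflation(paths: List[List[int]]) -> Dict[Tuple[int, int], int]:
    """Extra hops of the best BGP path over the graph shortest path."""
    graph = build_graph(paths)
    best_bgp: Dict[Tuple[int, int], int] = {}
    for path in paths:
        hops = collapse(path)
        pair = (hops[0], hops[-1])
        length = len(hops) - 1
        best_bgp[pair] = min(length, best_bgp.get(pair, length))
    return {pair: bgp - shortest_hops(graph, *pair)
            for pair, bgp in best_bgp.items()}


inflation = path_inflation(as_paths)
for (monitor, origin), extra in sorted(inflation.items()):
    print(f"AS{monitor} -> AS{origin}: {extra} extra hop(s)")
```

Keeping the input specification and the analysis in one script, as the speaker describes, is what makes a run like this repeatable by someone else.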

L

So we end up with this data set of traceroutes that are taken during a blackholing event, and then traceroutes that are taken after the blackholing has finished, and we're able to compare the results we see between them. The results in and of themselves are interesting; if you're interested in those, take a look at the paper. But the point here again is what we were able to do using these Python bindings and the real-time monitoring capabilities of BGPStream.

L

We actually found that for ninety to ninety-five percent of these blackholing events, we were able to probe the blackholed prefix while the blackholing was still in effect. In this way we're able to combine this passive control-plane data with timely active data-plane measurements and look at these transient routing policies in effect.

L

So I've talked about our C API and our Python API. We also make available a command-line tool that we call BGPCorsaro. This is a plugin-based tool, and its job here in the BGPStream framework is to allow users to do continuous, real-time monitoring of BGP, but in more of a production or operational capacity. What it does is continuously monitor the BGP data.

L

That is, the BGP data that's coming in from these public data sources. It then runs some analysis logic and outputs some aggregate statistics in regular time bins. This is probably better demonstrated through an example: we make available with BGPCorsaro a sample plugin.

L

This one we call the prefix monitor. You give this plugin a set of IP ranges (this may be your network, something that you're interested in monitoring), and it then watches the BGP data as it comes in, tracking only two statistics. One is the number of prefixes that are announced from that address space, and two is the number of origin ASes that are announcing those prefixes.
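
The two statistics just described can be illustrated with a small toy. This is not the BGPCorsaro plugin: it simply counts, per time bin, the distinct prefixes overlapping the monitored ranges and the distinct origin ASes announcing them, and it does not maintain routing-table state across bins. The bin length, the monitored range, the announcements, and the `prefix_monitor` function are all assumptions made for illustration.

```python
import ipaddress
from collections import defaultdict

BIN_SECONDS = 300  # assumed bin length
monitored = [ipaddress.ip_network("192.0.2.0/24")]  # hypothetical range

# Hypothetical (timestamp, prefix, origin ASN) announcements.
announcements = [
    (10, "192.0.2.0/24", 64500),
    (20, "198.51.100.0/24", 64501),   # outside the monitored range
    (310, "192.0.2.0/24", 64500),
    (320, "192.0.2.128/25", 64511),   # more specific, different origin
]


def prefix_monitor(announcements, monitored, bin_seconds):
    """Return {bin_start: (unique prefixes, unique origin ASes)}."""
    prefixes = defaultdict(set)
    origins = defaultdict(set)
    for timestamp, prefix, origin_asn in announcements:
        network = ipaddress.ip_network(prefix)
        # Ignore announcements that do not touch the monitored space.
        if not any(network.overlaps(m) for m in monitored):
            continue
        bin_start = timestamp - timestamp % bin_seconds
        prefixes[bin_start].add(network)
        origins[bin_start].add(origin_asn)
    return {b: (len(prefixes[b]), len(origins[b])) for b in sorted(prefixes)}


stats = prefix_monitor(announcements, monitored, BIN_SECONDS)
for bin_start, (n_prefixes, n_origins) in stats.items():
    print(bin_start, n_prefixes, n_origins)
```

In this made-up data the origin count rises from one to two in the second bin, which is the kind of change that stands out when the per-bin counts are plotted over time.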

So we took this plugin, took BGPCorsaro, and used it to look at a hijacking attack that was first reported by Dyn Research in January 2015.

L

Here we have the number of ASes that are announcing those prefixes, and you can clearly see these four spikes where the number of origin ASes goes from one to two. Indeed, the spike on the seventh is exactly the event that Dyn Research was reporting on in this blog post here. We dug more into the data and found this Romanian AS that's also announcing GARR's address space here. So again, the point here isn't: hey, we have a perfect hijack detection tool. It's: hey!

L

And then, of course, we have 15 years of BGP data. This turns out to be a lot of data. We said, hey, maybe this is big data, so we took the Apache Spark framework and BGPStream, put them together, and made them work happily together. In the paper we present some results from this analysis, and I'm going to go really quickly through a couple of the results we got out of this. But again, the point here is this.

L

We did the work to see how you can use BGPStream in this big data environment, and we've also made available some of these scripts as templates that you can use for using BGPStream in this way, for longitudinal analysis of BGP measurement data. So, just really quickly, some results from this analysis. Of course, there's the size of the routing table over time. But here is what actually kind of surprised me.

L

And maybe this shouldn't have been surprising: we ended up needing to use this routing table size metric to normalize a lot of our other analyses, and it turns out that this heat map shows it really clearly. This is the size of every peer's routing table over time, and you can see the expected curve there at the top, and then there's this other mess at the bottom. These are what we call the partial peers.

L

So in this publicly available data, there are many peers that are only sharing a fraction of the global routing table. In order to get this number for the size of the routing table, so that we can then normalize our other analyses, we have to exclude these partial peers; otherwise they end up skewing the calculation.
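
A small numeric sketch shows why partial peers skew the estimate. The threshold used to decide that a peer carries a full table is an assumption chosen for illustration, not the criterion from the paper, and the peer names and table sizes are made up.

```python
from statistics import mean

# Hypothetical per-peer routing table sizes (number of prefixes).
table_sizes = {
    "peer-a": 600_000,
    "peer-b": 590_000,
    "peer-c": 610_000,
    "peer-d": 12_000,   # partial peer
    "peer-e": 150,      # partial peer
}

FULL_FEED_FRACTION = 0.75  # assumed cutoff relative to the largest table


def full_feed_peers(sizes, fraction):
    """Keep peers whose table is at least `fraction` of the largest one."""
    largest = max(sizes.values())
    return {peer: size for peer, size in sizes.items()
            if size >= fraction * largest}


full = full_feed_peers(table_sizes, FULL_FEED_FRACTION)
naive_size = mean(table_sizes.values())  # skewed by the partial peers
robust_size = mean(full.values())        # partial peers excluded

print("all peers:", naive_size)
print("full-feed peers only:", robust_size)
```

With the two partial peers included, the average drops to roughly 60 percent of the full-table figure, so anything normalized by it would be inflated accordingly.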

L

So if we first look at before the red lines here, you can see this almost flat, linear growth in the overall