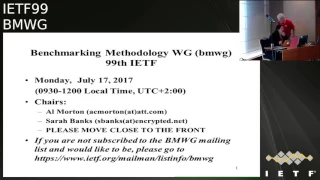

From YouTube: IETF99-BMWG-20170717-0930

Description

BMWG meeting session at IETF99

2017/07/17 0930

https://datatracker.ietf.org/meeting/99/proceedings/

B

A

A

A

C

A

B

G

G

C

This is our working group meeting for IETF 99. If you're not subscribed to the mailing list and you'd like to be, grab these slides, follow that link, and join us on the mailing list. How many people are attending BMWG for the first time? Quite a few. Thank you. About seven or eight, very good. So welcome, welcome. You'll find that this is a really easy group to join.

C

If you have some testing background, there's not that much literature that you absolutely need to have read to be able to join our discussion. There are a few RFCs that you will see and hear as frequent references, and those are the ones you can start out with to get a good background. And actually, come and talk to either one of us afterwards.

C

C

A

C

We have several documents here that describe the rules for IPR disclosure regarding contributions. Contributions are oral statements, or written or electronic communications, made at any time or place, which are addressed to any of these bodies: the IETF plenary, the IESG, any IETF mailing list, any working group (which we are right now), any birds of a feather session.

C

Especially the I-D function and the RFC Editor. All contributions are subject to RFC 5378 and RFC 3979, updated by RFC 4879. So we basically ask that everyone be aware of these things. When you registered for the meeting, you checked the box that said you were aware, and as a result we're expecting everyone to follow the rules and understand what they mean.

C

All right, so on to the real work. Here's our agenda. We haven't yet asked for volunteers as note-takers. Volunteers? Okay, Marius, thank you. Anybody else? We usually try to get two, so that while one note-taker is speaking, another can help out. Anybody else want to volunteer? All right.

C

Well, we'll hope to get some help with that. In fact, feel free to join the Etherpad, the details of which I can't easily provide because my PC's locked up here, but we can get that going. So here's our plan. Oh, we still don't have a Jabber scribe, right? Okay, Sarah is going to take care of Jabber. We've talked about IPR. The blue sheets are circulating, and I noticed that you need to sign the blue sheet, please.

C

So it's right up front here. Try to keep the sheet in the back, and if anybody walks in the door, hand it to them and suggest that they sign, as all the rest of us have done. That would be great. All right, so here's our agenda. We're first going to talk about the working group drafts and the status of the working group.

B

C

C

We had good support at the last meeting for adopting it. We had many people say on the mailing list that they would support it, but only a few said that they would actually review the document when I reminded people that that's a part of supporting the document. Writing one word of support in an email reply doesn't get it over the edge. The truth is we need expertise in these areas to be able to complete the work in a manner that's going to be useful to the industry.

C

So that's the kind of thing we're looking to see demonstrated in the review comments. When you say that you support a draft, that you've reviewed it, and that you've had this question about it, that's how you demonstrate that. So we've got that draft, and it is moving forward toward adoption; that's the best way to put it now. Then we've got several other drafts which have been proposed.

C

One is on service function chaining; Kim is here to present that. Then we had this other one, considerations for benchmarking network virtualization platforms in the data center environment, and that's unfortunately going to be wiped off the agenda today. The presenters weren't able to join us, and there hasn't been an update on that either, but we did

C

They would come through and join us. So then, after the drafts that we discuss today, we'll have a rechartering discussion. We'll put down our ideas, get some feedback from the group, and then discuss a schedule for rechartering, as we have very nearly completed all of our chartered work items, and that's a good thing. The question is: do we have enough work to continue? I mean, personally, I think so. So then.

C

The final thing we'll do today, and this is the kind of thing that we've been able to do a little bit when we have a long session like this, is to take a look at some related presentations and topics from other organizations. For example, last summer we heard from a software-based traffic generator design team, the MoonGen traffic generator.

C

They were here with the Applied Networking Research Workshop last year and then stayed on to give our group a talk about this MoonGen traffic generator. Then we also heard from the FD.io continuous integration testing team about the kind of work that they were doing to test the Cisco, now FD.io, open-source VPP virtual switch. So this year, what we're going to hear is a talk about the OPNFV VSPERF project.

C

We've heard about the project here many times, because that group has made contributions to our work in the area of test setups and configuration parameters for repeatability. Now we're going to hear a bit about what they've done with recent testing and the results that they've collected. There are, of course, implications for our future work, and I'll try to emphasize that as I go through.

F

C

C

C

C

Through the IESG the same week, which made it very interesting, with lots of traffic on all this. Then, most recently, we've gotten IESG approval of the data center benchmarking drafts, which we were working on here for quite a while, and that caused a lot of commentary and additional feedback. So now the working group is really seeing all this feedback. It's really quite extensive, and it informs us in ways that we need to know in order to get our drafts through more efficiently.

C

C

C

On benchmarking. And look, the very next one that's going to be announced, probably sometime today, I would guess, or this week at least, is RFC 8172, on VNF (virtual network function) and NFVI (network function virtualization infrastructure) benchmarking considerations. This was the first step in our working group charter pivot, to begin to take on the benchmarking of virtualized network functions, which was a key step in our rechartering activity the last time around. We've previously done benchmarking of physical network devices with their dedicated hardware, but now we're expanding our focus to virtual network functions and their infrastructure. So this was the first step, and I'm glad to get that complete. If you're beginning to work in this area, this is an excellent draft.

C

Considerations and contributions there to consider as we begin this. So we'll have a discussion of rechartering; that's our charter update discussion. And very soon we'll have our supplemental BMWG page announced. Sarah's been working on that and has created the place where we'll sort of keep our

C

Interesting information. For people who haven't been able to attend a meeting, it has the kinds of things that I said when we got going here: how easy it is to join the group and what you need to do first, second, and third, things of that nature. In the past we've included reviews of particular documents there, analyses, comparisons, and so forth. So it's been useful to have our own supplemental page, and unfortunately Comcast decided that they would no longer provide web pages for residential customers.

C

C

Going to have this discussion. So I think I will hold up the presentation there and ask if there are any questions about the status of the working group or its direction. No? Good. Okay. So, yeah, it's been, more or less, a very successful last year or so in getting all of these drafts moved forward, and that helps us make space in our attention span and our working group agendas to be able to adopt some good work now. That's why we're beginning to consider these things in earnest.

F

With feedback from Al. The last time this went out for working group last call, there were some comments that came in, and those were addressed. When we uploaded the draft, Al caught something that maybe I should have, which was: hey, it's not clean. When I do reviews for the OPS Directorate, I'm usually the first one to pick on nits, so I found it very ironic that

I

F

F

So, in any event, we've gone back through and cleaned up those nits. That was, I believe, submitted two weeks ago, so it was under the deadline. At this point, I think we're asking the working group to do another working group last call. It's been a long time coming. This draft has been thoroughly reviewed and worked on for quite some time. So if you're new, you might be wondering: wait, what, it's going to working group last call? But it's been around for some time.

F

C

So, first off, any comments from the attendees about this SDN controller work? Okay. Well, as I mentioned to Sarah before, I have a couple of comments, and one of those is related to one of the drafts that we've just got approved and basically all the way through the publication process. That is the considerations for benchmarking of VNFs and related infrastructure, which will be RFC 8172.

C

J

A

C

Draft. The way this metric matrix works is this: we have these different phases of communication listed in the first column, which are activation, operation, and deactivation. So you can think of it like setting up a path, then evaluating the performance of that path in terms of its throughput, transmission rate, latency, loss ratio, and reliability.
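The matrix described here can be sketched as a small data structure. This is only an illustration, not something shown in the meeting: the three phases come from the talk, while the column name "speed" is an assumption (the speaker names accuracy and reliability, and the third criterion is taken here to be speed). The benchmark names and their placement in cells are hypothetical.

```python
from itertools import product

# Rows: the phases of communication named in the talk.
PHASES = ("activation", "operation", "deactivation")
# Columns: "speed" is an assumed name; accuracy and reliability are spoken.
CRITERIA = ("speed", "accuracy", "reliability")


def empty_matrix():
    """Return a 3x3 matrix with an empty benchmark list in every cell."""
    return {(phase, crit): [] for phase, crit in product(PHASES, CRITERIA)}


def add_benchmark(matrix, phase, criterion, name):
    """File a benchmark name under one (phase, criterion) cell."""
    if (phase, criterion) not in matrix:
        raise KeyError(f"unknown cell: {phase}/{criterion}")
    matrix[(phase, criterion)].append(name)


def uncovered_criteria(matrix):
    """List the columns that have no benchmark in any phase."""
    return [c for c in CRITERIA
            if not any(matrix[(p, c)] for p in PHASES)]


# Illustrative placement only; a real draft decides where each metric belongs.
matrix = empty_matrix()
add_benchmark(matrix, "activation", "speed", "path setup time")
add_benchmark(matrix, "operation", "speed", "throughput")
add_benchmark(matrix, "operation", "speed", "latency")
add_benchmark(matrix, "operation", "reliability", "loss-free run duration")
print(uncovered_criteria(matrix))
```

A check like `uncovered_criteria` is one simple way to notice that a whole column of the matrix has been left out of a draft's coverage table.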

A

C

C

A

C

Here is the additional column. This is the matrix that comes from an ANSI standard, ANSI X3.102, and it was originally designed for data communications evaluation a long time ago, back in the eighties. But it's been very effective to apply it to our work here, and we've done that now in two drafts: the SDN controller draft and also the VSPERF contributions from OPNFV into BMWG, into our work here. We

C

Tests that this project has designed. So, in making sure that these citations were correct, I looked into the controller performance draft, and this is one of Sarah's drafts that she's just discussed here. In fact, what I noticed was that the accuracy column was missing, and I originally thought that was just a synchronization issue between, this is the terminology

C

Draft, where the benchmarks and other terms are carefully defined, and then the draft on methodology, and that's what this one is. So here's the section in Sarah and her author team's draft on controller asynchronous message processing rate. So imagine a case here where you have many switches connected to a controller and they all

D

C

Association with the controller, where the controller is going to accept their request to establish a flow and then give back the information necessary to insert in their flow table, to be able to accommodate that flow in the future on a switch-by-switch basis. So as a flow enters the network that's controlled by the controller, it could see many of these packet-in messages, and it's important to know, for a given number of switches, how many of these flow requests, or packet-in in OpenFlow parlance,

C

Can they accommodate. But one of the things we learned in the process of this is that many of the original controller benchmarking tools didn't pay attention to the packet-in (which is like a flow update request message) loss ratio. If a request came in and it was dropped or not honored, then a flow would be sitting there wanting to continue to send packets, but with no way to do it. That's really bad for network operators, yet somehow this was overlooked.

D

C

K

C

The loss. So there are really two kinds of parameters here, and the point was to try to capture both of them. The old benchmarking tools have typically characterized the maximum message processing rate. But what we'd like to add, and we've begun to do that based on comments here, is to characterize the rate where there's no message dropping, so zero loss ratio. That's always going to be less than the maximum; with frame dropping, there could be as much as 10 percent or 20 percent frame loss.
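The difference between the two parameters can be shown with a short sketch. This is a hypothetical post-processing step, not the draft's procedure: each trial is assumed to record the offered packet-in rate, how many requests were sent, and how many were answered by the controller. The `Trial` type, the function names, and the sample numbers are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    offered_rate: float  # packet-in messages per second offered to the controller
    sent: int            # requests sent during the trial
    answered: int        # requests the controller actually responded to

    @property
    def loss_ratio(self):
        """Fraction of requests that were dropped or never honored."""
        return (self.sent - self.answered) / self.sent

    @property
    def processing_rate(self):
        """Responses per second the controller delivered in this trial."""
        return self.offered_rate * self.answered / self.sent


def max_processing_rate(trials):
    """Highest response rate seen in any trial, regardless of loss."""
    return max(t.processing_rate for t in trials)


def zero_loss_rate(trials):
    """Highest offered rate at which every request was answered, or None."""
    rates = [t.offered_rate for t in trials if t.answered == t.sent]
    return max(rates) if rates else None


# Made-up sample data: loss appears once the offered rate passes 2000/s.
trials = [
    Trial(1000, 10000, 10000),
    Trial(2000, 20000, 20000),
    Trial(3000, 30000, 27000),
    Trial(4000, 40000, 32000),
]
print(max_processing_rate(trials), zero_loss_rate(trials))
```

In this sample the maximum processing rate is reached in a trial that also loses 20 percent of the requests, which is the situation the speaker says the older tools did not report.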

C

C

C

C

E

C

M

C

F

I think they're really good changes. I don't think the other authors are going to have an issue with this, so I think it's minor to add it. But especially if you have new folks reviewing, I think it's a lot cleaner to go in with a clean draft. Yeah, fine. It'll only take a day or two, and we'll upload. Okay.

C

C

C

All right, so our next item is a draft on benchmarking methodology for Ethernet VPNs and provider backbone EVPNs. Sudhin Jacob is here from Juniper, and we have a related draft here that's run through many iterations. So, Sudhin, I invite you to come up and make your presentation of the latest.

N

C

D

D

O

N

Hi, good morning, everyone. This is benchmarking of EVPN and PBB-EVPN. This is the new RFC, which came a year and a half back, RFC 7623. It's now widely deployed in the provider arena, and the main feature of PBB-EVPN is that you can have both routers forwarding, compared to VPLS, where you have to run either spanning tree or it works as active and standby.

N

N

So, as I explained, the comment from the last IETF was that they want Type 5 routes. The Type 5 route was not a part of the RFC; it came as a separate draft, because there are a lot of drafts going through the BESS working group. EVPN and PBB-EVPN are like two RFCs with the vanilla features.

N

They add a lot of other things. For example, they want to run VPWS over EVPN, E-Tree, E-LAN, and different kinds of services, so they spin off a new draft. The Type 5 route itself is a draft which is adopted by the IETF BESS working group. So they want that also to be benchmarked as part of EVPN when we benchmark EVPN. They asked: okay, why can't you do that?

N

So I incorporated that as one of the parameters, because we have defined the parameters. Now, the problem is that EVPN is implemented by different providers, and it is very difficult, in the test community as well as when you buy this product or the services rendered by different vendors, to have an apples-to-apples comparison. Say vendor X or service provider X is offering this: how do I rate it? There should be some standard.

N

Some core parameters have to be measured. That's the motivation behind this draft. The Type 5 route was asked for at the last IETF, so we incorporated that as one of the parameters. So this is the test setup. The DUT is one of the multihomed PEs. This is a typical EVPN deployment scenario. It will be active-active, because that is the most deployed scenario, where both routers will be forwarding.

N

N

N

Is that what you're asking? Yeah. In a test scenario we have to have a DUT, where you run the test and you measure the parameters. But to place the DUT, you need to have other network elements there, and the traffic being pumped, bidirectional or unidirectional. That's why the router tester, like Ixia or Spirent, will be there. So the DUT is the reference point.

M

N

It's mentioned there in the test setup. It clearly mentions the reference point, whether unidirectional or bidirectional traffic is sent, and the frame rate; all the details are clearly there, I think. This was raised two IETFs back, so we have added all those points, the frame rate and all those things, which are clearly mentioned. Layer 2 frames are what we are sending. So I looked at the procedure

M

N

It's clear... I'm just saying that, for me, it's not. Okay. No, actually, in that test setup section it was mentioned; I mean, that is the reference. The question came up, so we added it explicitly. If that is not clear, you people look into it once again, and we'll definitely look into it, because the test setup section is where the explanation is given. Because usually the one

M

Of the devices is the device under test. Maybe you should, I don't know, put a frame around it. One of the solutions I can think of is a frame around anything that's outside the DUT: a box that says "tester", and then explain that the tester is a multitude of machines, like R1, RR, P, CE, and MHPE. That's how I would try to take care of it, because otherwise it's really confusing.

M

F

To add to that, Sudhin, a couple of things. Having your R1 and your CE-1/tester, right, I think a lot of us, when we see multiple routers on a diagram, think those are all being tested. I see your point: it's not. But I also see Marius's point. I think we talked a little bit about that yesterday.

F

Reading back through the draft with your test hat on, with fresh eyes (and I realize that can be a little harder when you're the author), reading back through and saying, "I'm going to go sit down and test this; does this make sense?", I suspect you'll get a little more appreciation for what Marius is saying.

N

Point noted. For the test setup and the DUT details, we will make it more explicit with bullet points. Okay, thank you. Thanks, Marius, we definitely appreciate your points. I think I can move to the next one. Thank you. So these are the parameters which we defined, for the basic EVPN.

N

So, MAC learning. There are different types: local learning and remote learning. When the MAC is sent from one direction, that is local learning. Then, when the MAC is coming from the remote router, we measure the time taken to learn a certain number of MACs, and also when you are sending bidirectional traffic.

N

And what is the amount of time? This is repeated at intervals and plotted, because these MACs are advertised by BGP. It's unlike VPLS, which is data-plane learning; here the MAC details are advertised to other routers as a Type 2 route, because they have defined certain NLRIs in EVPN.

C

N

J

N

Actually, this is via a layer 2 bridge. Local learning is the traffic which is sent from here and reaches here, the DUT. Because it's a multihomed scenario, the traffic will be reaching here. We measure the time taken to learn the local MACs and the time taken to advertise them to this remote router. With local learning, the MAC will be in the CAM table, or the local MAC table; from there it has to go to BGP, and BGP has to advertise it.

N

That depends on how fast the BGP update goes, and that is where the delta comes into the picture: the learning rate and the advertisement rate will be different. For learning, we follow the previous RFC, which explained how the MAC has to be learned; that method we use. The advertisement is measured from the BGP advertisement: how long it takes the device to advertise N MACs, or X MACs, to R1. So that's for local.

N

Give me the next one. Remote learning: traffic is sent directly to R1, because the tester is connected directly to R1. R1 will learn the MACs and advertise them to here. So the same thing happens in reverse here: the local MACs in R1 will be advertised in BGP, so they will arrive here as a BGP advertisement. Then, from the EVPN database, they will be populated into the local MAC table. Okay.

G

N

C

So then, the fundamental answer to the question which I think Marius was raising is that really all we need to do in order to accomplish MAC learning is to generate packets with MACs that need to be learned. Then, to measure the benchmarks, we're going to be looking at the control plane interactions between the devices in your setup in order to determine when a MAC has been learned and when it's been advertised. Is that accurate?

N

Yep, that's the same way I'm doing it, because BGP itself has a serialization delay: it has to pack the MACs into the NLRI as per the RFC and send it. So that is where the actual benchmarking has to come in: how fast the device, various vendors' devices, can work through it.
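The local-learning measurement discussed above can be sketched as follows. This is a hypothetical post-processing step, not the procedure from the draft: it assumes the tester has recorded, per MAC address, a timestamp for when the DUT learned the address and a timestamp for when the matching BGP advertisement was seen at the remote router. The function name and the sample timestamps are made up, and the MAC addresses are documentation values.

```python
def time_to_nth(events, n, start):
    """Seconds from `start` until n distinct MACs have an event timestamp.

    `events` maps a MAC address string to the time (in seconds) at which
    the event of interest was observed for that address.
    """
    if n < 1 or len(events) < n:
        raise ValueError("not enough MACs observed")
    return sorted(events.values())[n - 1] - start


# Made-up observations for four MACs; the first frame is sent at t = 0.0 s.
start = 0.0
learned = {            # when each MAC showed up in the DUT's local MAC table
    "00:00:5e:00:53:01": 0.10,
    "00:00:5e:00:53:02": 0.20,
    "00:00:5e:00:53:03": 0.30,
    "00:00:5e:00:53:04": 0.40,
}
advertised = {         # when the advertisement for each MAC was seen remotely
    "00:00:5e:00:53:01": 0.15,
    "00:00:5e:00:53:02": 0.30,
    "00:00:5e:00:53:03": 0.45,
    "00:00:5e:00:53:04": 0.60,
}

n = len(learned)
learn_time = time_to_nth(learned, n, start)
advert_time = time_to_nth(advertised, n, start)
print("learning rate (MACs/s):", n / learn_time)
print("advertisement rate (MACs/s):", n / advert_time)
print("delta (s):", advert_time - learn_time)
```

Repeating this over several intervals and plotting the two rates would expose the delta between learning and advertisement that the presenter describes.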

L

N

In this test I'm discussing the MAC. The DUT is the reference point, Jim. You have the local learning, which is there, and the remote routes coming from R1, which I have to populate into my MAC table. Those come as Type 2 routes, because R1 will be advertising to the DUT as Type 2 routes. I have to receive those routes, then I have to put them in the MAC table.

L

L

E

L

L

The control plane is good. So one other comment about this: in the multihoming active-active environment, you may never get a MAC advertisement from MHPE, just the ESI advertisement that is equal to the one on the DUT. So when you benchmark and say how quick this is: if you remove the ESI of MHPE, you invalidate all the MACs at R1 that have been learned through it. So it's possible you never get the MAC advertisement, the Type 2, from both.

P

L

N

N

No, that is one of the triggers in benchmarking. This is the learning rate. Bringing down the ESI, so that the Type 4 withdrawal comes, is not there, because this is learning. It's a vanilla test, not a trigger, so that is not added. ESI withdrawal is not added.

L

F

F

I hear the feedback that maybe you should consider the withdrawal scenario, and I think you should also explicitly consider active-active and non-active-active in both scenarios. I hear you that this is about learning, but there is no learning without withdrawal at the end of the day anyway. I agree that learning might be its own discrete task, but I think withdrawal is also very important to measure. We all do it anyway: when we're testing for vendors, we do it for our customers.

N

Jim, actually, we added that as a link failure, because in provider networks link failures are frequent. So we added link failure, local and remote, for benchmarking, because the ESI is withdrawn there. That is where MAC flush is a parameter; I'm coming to that next. The local link failure is as good as an ESI failure, locally as well as remotely. So how fast is it getting flushed? That is what we measure.

N

N

L

Now, just go back to the picture for a second. I guess, as an operator, this is what I want to know: if I had an active-backup scenario where I was using the DUT as my active to send traffic to R1, and R1 to the DUT, and MHPE2 is my backup, and then I crash the DUT, how long does it take my service to come back up?

L

N

N

That is covered in the link failure scenario, which happens in provider networks. Cutting the link indirectly refers to cutting off the ESI. So for MAC flush, two scenarios are covered. One is the local failure, which is frequent in the provider environment, in the metro ring, and the other is the remote failure: how fast is it flushing the routes? The Type 2 withdrawal is the important thing; otherwise traffic will be black-holing.

F

From what I recall reading, you're covering MAC flush on the DUT. That's explicitly, I think, not what he's saying. And I think, again, we can take it to the list, or talk about it afterwards, because I think we're starting to rathole a little here. But this is all, again, from the perspective of the DUT. That's not what he's saying, right? It's for the other PE to come in and go through the DF election.

D

F

I think that's the conversation that maybe we should have afterwards, if you're open to hanging around for a little bit, and we can talk about how to potentially add that in. But I do think it's a gap to put out the draft as an RFC without that in there. I think it's something I'd like to hope we can convince you to consider. Okay.

N

PE failure. Okay, fine, I'll get back to you. Let me complete this and I'll get back to you. Just give me a second. So now, MAC aging; MAC learning we covered. MAC aging is a normal aging scenario. We scale to N MACs; we are not fixing N, it is up to the tester to choose N. So scale, and stop the traffic.

N

Then we measure how long it takes to flush those N MACs from the table. The same applies to the remote MACs, which you learn as Type 2 routes from the remote router: the remote traffic is stopped, so the MACs have to age out on the remote router, and it has to send the Type 2 withdrawal. When the Type 2 withdrawal comes, the DUT has to flush.

N

It

has

to

signal

the

local

Mac

table

and

you

know,

remove

all

the

Macs

from

that

table

so

because

that

is

where

one

important

parameter,

because

if

the

withdrawal

didn't

come,

if

the

patrol

there

is

a

problem.

So

the

issue

is

like

your:

even

though

the

traffic

is

stopped,

the

Mac

it

remains

there

and

the

black-holing

will

be

there.

I

L

Once

you

age

out

right,

you

know,

I

would

just

say

that

you

know

because

again

back

to

the

optimization,

like

the

ESI,

optimization,

if

a

whole

bunch

of

MACs

time

out

associated

with

certain

Ethernet

segment,

you're

not

going

to

get

individual

withdrawals

for

that

you're

going

to

get

the

withdrawal

for

the

ESI

and

then

you're

gonna

invalidate

everything

until

you

know

your

MAC

table,

hey,

invalidate

these.

You

know

please

max

this

part.

Okay.

N

N

You

know,

that's

my

MAC

concept

is

there,

so

it

will

advertise

the

MAC

and

IP

to

the

remote

routers

so

that

you

know

in

the

inter-VLAN

routing

so

unnecessary

ARP

flooding

and

all

does

out,

which

is

not

there

in

the

conventional

VPLS,

so

interests

in

the

VM

mobility

and

lot

of

other

features

are

dependent

on

this.

So

it

depends

on

the

Box,

how

you

can't

know

how

many,

because

it

depends

MAC

plus

IP,

that

NLRI

payload,

so

how?

N

F

Before

you

keep

going,

can

I

ask

for

next

meeting

when

you

bring

these

slides

back

in

with

updates

presuming

you're

gonna

present

next

in

Singapore,

could

you

put

the

diagrams

the

diagram

that

you

had

originally?

Can

you

put

them

in

on

the

side

and

then

highlight?

What's

where?

Oh

okay,

because

a

lot

of

times

you're

saying

the

sender

and

while

I

think

I

can

guess

what

the

sender

is

it'd

be

nice

I

think

that's

part

of

the

problem

too

Mary.

N

F

Go

back

now,

I

realize

that

you

need

to

do

it

next

time.

I

just

need

one

of

these

slides

that

you've

been

going

through

where

you're

talking

about

learning

and

flushing,

and

you

put

down

and

then

specifically

call

out

where

and

what,

because

I

think

you

and

through

the

questions

that

Jimmy's

asking

you're

doing

it

with

a

highlighter

on

the

screen

here,

but

unfortunately

for

the

Meetecho

folks,

it's

not

carrying

through.

D

C

That

the

actual

measurement

and

benchmarks

are

understandable

and

and

if

you

an

executable

person

and

if

and

if

you

can

reference

documents

from

the

RFC

that

you're

referring

to,

if

there's

figures

there

that

then

that

may

be

sort

of

place

and

starting

to

available

to

incorporate

these

figures

so

that

we

can

so

that

everyone

can

understand

these

test.

Styles

I

think

you

know

we

all

sort

of

understand

the

concepts

here.

Certainly,

but

the

unique

details

of

EVPN

are

not

my

expertise.

C

N

F

Right

but

Sudhin,

I'll

point

out

there's

a

lot

of

pushback

to

the

feedback

you're

getting,

but

if

you're

getting

the

feedback

it

tells

you,

I

think

that

it's

the

way

you're

describing

things

here

and

the

way

it's

reading

in

the

draft,

is

it

I

don't

think

coming

across

as

crisply

and

cleanly

as

you

expect

it

is,

and

if

we're

struggling

with

it,

then

other

folks

who

read

the

RFC

would

struggle

with

it

too.

So

you

know,

let's

circle

back

to

this,

based

on

the

discussion

we

had

yesterday,

but.

N

No

fine-tuning

and

it's

you

know

the

things

things

has

to

be

as

Mary

said,

as

we

find

you

do,

that

fine

tuning,

it

was

Sir

I'd.

It

has

done

because

maybe

you

know

certain

things

as

Jim

also

told

the

fine

tuning

will

be

done

at

us

and

in

the

presentation,

like

giving

you

small

couple

details

in

the

sender

receiver

that

also

okay.

F

Sudhin,

not

to

nitpick

but

I

think

it's

a

little

more

than

just

fine-tuning,

I

think

a

good

chunk

of

the

methodology,

while

you're

doing

the

test

of

what's

what

and

what's

where

that

is

missing

from

the

test

cases,

so

I

think

it's

a

little

more

than

just

fine-tuning,

I

think

there's

a

good

amount

of

surgery.

F

N

F

So

so

I'll

also

point

out

I

don't

know

that

I'm

as

familiar

as

Jimmy

is,

but

this

is

not

my

first

rodeo

with

EVPN

and

I

still

was

a

little

unclear

as

to

where

you

were

going

with

a

good

amount

of

test

cases.

Sometimes

it's

just

not

clear

and

I'm

making

assumptions,

and

sometimes

when

you're

talking

in

here,

they

turn

out

to

be

the

right

assumptions.

But

I

shouldn't

have

to

assume

the

document

should

be

very

clear

and

straightforward

as

to

what

you

should

be

doing.

N

F

N

D

M

Maya

so

I'm

just

trying

to

echo

Sarah

here

it's

it's

not

just

fine-tuning

it's

like

a

spring

cleaning.

It

needs

a

lot

of

changes,

not

just

fine-tuning.

It

has

to

be

clear

to

you

that

the

the

draft

at

this

point

is

not

in

a

stage

to

be

adopted.

It's

it's

unclear,

sound,

clear

to

people

that

are

very

familiar

with

EVPN.

It's

unclear

to

do

also

everyone

else,

so

it

needs

a

lot

of

changes,

not

just

like

fine-tuning

that

has.

M

N

M

N

Sorry,

if

I'm

rude

but

I'm

just

you

know

just

I

want

to

know,

like

you

said

like

you

know,

it

has

to

be

clean.

Quote

me

an

example

because

then

it

will

be

helpful

for

me,

which

is

which

is

a

viewpoint

which

that,

because

you

know

you

state

okay,

it

has

to

be

clean.

It

has

to

be

done.

That's

a

word.

I

accept

the

feedback.

M

N

D

N

That

you

know

I'm

at

ease.

It's

like

a

fine-tuning

as

we're

cleaning

up

all

this.

That's

a

term

I

used

it.

Okay,

don't

misquote

me,

I

mean

I

respect

your

feedback,

but

the

thing

is

fine-tuning.

It

means

all

the

things

has

to

be

corrected.

That

is

what

the

English

is

about,

which

I

understand

it.

I

respect

your

feedback.

Definitely

it

will

be

done

that

that's

a

commitment,

I

told.

E

N

So,

and

if

you

can

you

point

at

me,

I

have

noted

that

this

is

a

things

has

to

be

done,

I

respect

that

it

will

be

taken

care

because

each

feedback

I

have

taken

very

seriously

I've

gone

back

to

that

and

have

done

it.

So

I

will

go

through

that

in

a

different.

You

know,

because

the

the

test,

what

the

EVPN

testers,

which

I

sat

with

and

you

know

the

parameters

which

I

have

given,

so

they

were

able

to

understand

it,

but

the

feedback

which

I

got

from

you

guys

is

different.

F

F

I

remember

it

was

a

mess

and

it

took

somebody

really

sort

of

saying

you

know

very

comic:

wake

up,

here's

how

it's

done

so

could

I

ask

in

the

spirit

of

him

being

new

and

alleviating

some

of

the

pushback

that

we're

getting

on

the

feedback

here,

I've

agreed

to

and

I'm

wondering

if

you

would

I

think

you're

a

fantastic

writer

and

you've

gone

through

the

process

really

well.

Could

you

take

a

look

at

the

diagram,

the

first

diagram

in

the

in

the

document

and

provide

surgical

feedback

around

that

here's

what

doesn't

make

sense

to

me?

F

Here's

what

does

because

I

think

he's

really

struggling

with

what

I'm

hearing

is

I'm

not

entirely

sure,

where

give

me

some

examples

of

what

you're

talking

about.

So

if

you

take

the

first

diagram,

I'll

take

the

first

use

case

and

I

think

between

the

two

of

those

and

we

can

even

talk

before

we

send

it

over

sure.

M

I

would

I

can

come

up

with

a

solution

to

what

I

am

trying

to

correct.

Of

course,

I

don't

have

the

the

EVPN

background

that

maybe

is

very

critical,

but

I

I

can

also

ask

for

help.

We

have

people

that

are

experts

in

EVPN

and

the

the

thing

is,

it's

I

think,

as

you

are

saying,

even

for

people

that

know

the

stuff,

don't

it

doesn't

make

sense

right.

F

N

I'm

sure

constructive

way

I

mean

so

then

it

would

be

easy

because

he

will

be

thinking

something

in

the

mind,

so

I

am

assuming

okay.

This

is

what

he

assumes

it

so

because

I

was

thinking

in

the

mind

where

EVPN

actual

scenario

which

I

testing

so

I

was

thinking,

assuming

that

maybe

that

is

a

delta

between

us.

If

you

can

come

up,

you

don't

need

to

go

deep

into

the

EVPN,

but

this

is

a

delta,

which

is

there

in

the

you

know,

transforming

the

idea

into

that.

M

Seems

like

given,

from

the

first

time

I

reviewed

the

document,

I

told

you

pretty

like

simple

things:

it

wasn't

very

technical.

It

was

most

of

almost

about

anything

and

like

there

was

a

lot

of

pushback,

so

I

think

if

you,

if

you

can

try

to

I

don't

know

I

dig

deeper

into

the

feedback

that

and

then

consider

it

some

more

and

then

make

make

sure

that,

like

it

actually

got

covered

because

you

would

say

you

were

saying

this

got

covered.

This

got

covered.

N

And

then

okay

see,

this

is

like

you

know,

give

and

take

like

I

mean

like

if

you