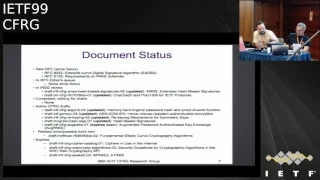

From YouTube: IETF99-CFRG-20170718-1550

Description

CFRG meeting session at IETF99

2017/07/18 1550

https://datatracker.ietf.org/meeting/99/proceedings/

D

The other thing the chairs would like to say is that we started the Crypto Review Panel last September. We were slow getting up to speed and getting the panel reviewing documents, but we have had lots of very good reviews. Recently, GCM-SIV got three or four reviews. I think even Kenny and I were surprised at how much interest there was in this, so I think things are working well. We're looking forward to using the crypto panel more in the future.

B

Okay, so first up we have Stanislav, who is going to update us on rekeying. Stanislav, I think we allocated ten minutes in the agenda for your talk. And, this is for all speakers, please try to stay inside the pink box; that's where the video will find you. I'll be driving the slides, so just ask for the next slide each time. Okay, thank you.

E

When we process data, we need some limits to be taken into account. First of all, these are just general internal properties: estimations for the ciphers themselves, in case they have some potential vulnerabilities. And most important of all are side channels, the newest methods of such attacks. So, for example, there was a paper recently about TEMPEST attacks on AES, where the attack took only 50 seconds to get an AES key from an OpenSSL implementation.

E

So, taking these problems into account, we have to limit the key usage, the key lifetime, and when our limits are reached, we have to change keys. Of course, we can just do a new handshake, a new key establishment, but that can be quite expensive. So in a lot of situations we prefer to update the keys, that is, to do rekeying.
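The idea of limiting key usage and then updating the key, instead of running a new handshake, can be sketched as follows. This is only an illustration under stated assumptions: the class name `RekeyingState`, the byte limit and the HMAC label are hypothetical, and the HMAC-SHA-256 ratchet is a generic one-way key update, not one of the specific mechanisms from the rekeying draft.

```python
import hashlib
import hmac


class RekeyingState:
    """Tracks how much data a key has protected and updates the key at a limit."""

    def __init__(self, initial_key: bytes, max_bytes_per_key: int):
        self.key = initial_key
        self.max_bytes_per_key = max_bytes_per_key  # hypothetical usage limit
        self.used = 0        # bytes protected under the current key
        self.generation = 0  # how many key updates have happened

    def _next_key(self) -> bytes:
        # One-way update: the next key is derived from the current one,
        # so no new handshake is needed. The label is an assumption.
        return hmac.new(self.key, b"illustrative rekey label", hashlib.sha256).digest()

    def key_for(self, message_len: int) -> bytes:
        """Return the key for the next message, rekeying first if the limit would be exceeded."""
        if self.used + message_len > self.max_bytes_per_key:
            self.key = self._next_key()
            self.generation += 1
            self.used = 0
        self.used += message_len
        return self.key


state = RekeyingState(b"\x00" * 32, max_bytes_per_key=1024)
k1 = state.key_for(600)  # fits under the first key
k2 = state.key_for(600)  # would exceed 1024 bytes, so the key is updated first
k3 = state.key_for(100)  # still within the second key's limit
```

In this sketch a single message larger than the limit is still processed under one fresh key; a real protocol would need to decide how to split or reject such messages.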

E

A good example here is the rekeying procedure in TLS 1.3, the key update procedure, and I think that this is a good example of how, when and in which situations rekeying must be done. Next slide, please. So the main objective is to prepare a document with a menu of choices for the developers of protocols, one that contains a set of secure and efficient procedures that solve rekeying tasks in the most relevant cases.

E

Of course, this document shouldn't be redundant, and it should contain general recommendations and principles for choosing one rekeying mechanism or another, along with some specific parameters. We consider both external and internal rekeying methods, parallel and serial, hash-based or cipher-based, with and without master keys. For the vast majority of them, the security increase is about quadratic, so the key lifetime can be increased approximately quadratically in the most relevant models. Next slide, please. So the work started after the Seoul meeting.

E

There was a proposal from the CFRG chairs to create such a document. There was a talk on rekeying at the Seoul meeting, we had a discussion, and then work started on a 00 draft. The 01 draft appeared before the Chicago meeting, and in Chicago we had a one-hour side meeting on rekeying, where we made some important decisions about the document. Next slide, please. Next, I think.

E

E

We added a remark to the draft, and it is also here on the slide, that this must not be used as a method to prolong the life of broken ciphers. It's just a security margin, a safety margin against possible future attacks. So it mustn't be used to prolong the life of something already vulnerable.

E

Some words about post-quantum issues were added: no post-quantum issues can be solved by rekeying, because if we talk about Grover's algorithm, two or four blocks are sufficient to mount an attack, so rekeying couldn't help. And some words were added about the reasons for rekeying that remain even for ciphers with large block sizes: side-channel resistance, PFS and, again, the safety margin. Next slide, about the recommendation guidelines.

E

We rearranged the document so that the recommendation part and the motivation part come before the mechanisms themselves. There was also a decision not to consider any related questions for stream ciphers, because the models are quite different. The next item is about the mechanisms themselves: there were a number of minor alterations to constants in the internal rekeying for CTR mode. The most important addition was about CCM mode: there was a proposal to also consider CCM mode with rekeying, and I'll return to this question a bit later. Next slide, please.

E

So after the Chicago meeting we had four more versions; the current version is 05. We had two major revisions after lists of alterations from several reviewers. Of course, we will be happy to have any reviews of or alterations for this version. Next slide. We hope that the structure, the principles and the main recommendations in this version are quite close to being ready, but we still have a lot of important questions to be solved.

E

First of all, as I mentioned before, internal rekeying for CCM. Now we have a proposal for a mechanism for internal rekeying for CCM. It's added to the draft, but there's a problem with it: this is now the only mechanism that doesn't have a security proof. For all other mechanisms, we either have full security proofs or they can be obtained by some

E

slight modification of the existing proofs, because the principles are the same. But for CCM, because of authenticity issues, we can't get security proofs using the ones we have now, so it must be done from scratch. So we need help here, and if someone would like to help, that would be great. For now we think that if we are unable to obtain security proofs for CCM with rekeying, we need to exclude the mechanism, because we don't want something without a security proof, explicit or implicit, to be in the document.

E

Of course, we will need to add test vectors. I would also ask for alterations and concerns about the current version: first of all, opinions about whether all the concerns from the Chicago meeting have been properly addressed, and any new comments, above all about the recommendations and use cases for protocols, for instance from the IETF. I think it will be very important to have as much feedback about the recommendations from the developers of protocols as we can. Next slide. So, thank you very much. Questions, suggestions?

B

E

I don't think it's in the scope of the draft. In my opinion, the document is about ciphers that don't have any, even theoretical, problems. So I don't think that this must be in scope for new protocols, and I don't think that anything should be strengthened with rekeying if it has some theoretical problems. So in my opinion this must be out of scope, but it's only my opinion. We don't have to add such thoughts to the document itself.

E

So, are there any ciphers in use that are not broken and are 32-bit or 64-bit? My opinion is that if the real security, given the known attacks, is the same as the a priori security, then everything is okay with the cipher. So say we have, for example, a 32-bit cipher. Of course we have some a priori limitations on its usage, but if we don't have any attacks that lower the theoretical security, then we can understand that the cipher is still usable and it can be considered.

E

G

G

So, I mean, actually going out of your way to apply this mechanism so that you can use 3DES seems like a questionable life decision. But nonetheless, I think it is almost a canonical example of what this mechanism can do: it can take a cipher where the best attacks are generic attacks, but the generic bounds are not great, and make them more comfortable.

E

B

Maybe we could ask for a quick show of hands from the audience, now, putting you guys on the spot: who has read the draft? Oh, that's fantastic, great. Are there any... yeah, you should get your hands down first. Are there any volunteers to do a thorough review of the draft on behalf of CFRG, now that it's reaching a fairly mature state? Any?

B

B

B

H

Okay, so I presented this draft at SAAG at the last IETF, and we were asked to bring it here. It's substantially updated. So what I'm gonna do, for people who didn't see my SAAG presentation, is go over what exactly a verifiable random function is and why we should standardize them. I'm going to talk about the applications; since that meeting I have more information about what the applications of this primitive are, because people are using it in ways that I didn't know about before. And then I'll talk about

H

what's new in this draft. Okay, so to get an idea of what a verifiable random function is: we all know what a hash function is. It doesn't have a key. You take an input, it gives you an output, and to verify that the hash was done correctly, you just recompute the hash yourself. Okay, so there's a verification mechanism for this hash function. Next slide. A PRF has a symmetric key. The holder of the key can compute the hash, but if you don't hold the key, you cannot verify that the hash was computed correctly, right?

H

So that means that there's no one-to-one relationship between the input and the output with a pseudorandom function. A VRF is also a keyed hash function. Next slide. It's a keyed hash function, but this time we have an asymmetric key. So the way to think about this is as sort of the public-key version of a keyed hash function. What happens is that, in order to compute the hash, you use the secret key, so only the hasher can compute the hash, but anyone can verify that the hash was computed correctly.

H

So, we're gonna see that that gives you a one-to-one relationship between inputs and outputs if you know the public key. That's what we want from a VRF. Cool, okay, great. So how does this work? I want to go over the API of how a VRF works. What happens is the verifier holds the public key and the hasher holds the secret key. The verifier sends his input over to the hasher, and the hasher computes a value called the proof.

H

The way that's computed is by taking the secret key and the input and computing some value called the proof. Okay, so only the hasher can do this. Now, the verifier needs to verify that this hash is correct, so it's going to run the verify function using the public key, the input and the proof.

H

If that comes out valid, then he can get the actual VRF hash output, which is just derived directly from the proof. You can think of it as just taking the proof and hashing it in some way, and that gives you the VRF hash output. Okay, so the way you verify is with the proof, but the actual hash output is computed using this proof-to-hash function on the proof itself. Notice that the proof-to-hash function is not keyed. Okay, so the secret key is for the proving function.

H

The public key is for the verify function. Okay, so what are these things useful for? The way we came to this is through NSEC5, which is solving a zone enumeration problem in DNSSEC. It's also used for key transparency in the CONIKS project and all sorts of derivatives of that project. In both cases, the VRF is used to prevent dictionary attacks. Okay, we're gonna see exactly why this works.

H

Since then, I also found that it's being used by a cryptocurrency called Algorand, and this use case is a little bit different: what they're doing is randomized selection that can be verified. I'm not going to talk about this application in this presentation, but I'm happy to take questions about it afterwards. After we started talking about VRFs at various venues, we started to find various implementations, slightly different implementations, of the same underlying idea. Okay, so it comes from the Chaum-Pedersen proofs.

H

So now let me show you the uniqueness property, which is one of the key properties of a VRF. What uniqueness says is that there's a one-to-one relationship between the input and the hash, as long as you know the public key. Okay? So if you think about SHA-256, if I hash X, I'm always going to get Y; it's not going to come out to a different value

H

every time I do the hashing. A VRF will give you the same property, that every input maps to a unique output given the public key. Okay, and that's why you can use a VRF the same way you might use a hash function: you know that there's a one-to-one relationship between the input and the output. More formally, what this says is that even an adversary that knows the secret key cannot find two

H

two outputs that map to the same input. Okay, so there can't be two outputs that map to the same input. We know that there's a one-to-one relationship between the input and the output, even if you know the secret key, and that's the same as with SHA-256, right? We have this type of relationship with that. Similarly, we want to have collision resistance, even in the face of an adversary that knows the secret key. In this case, if the public key is fixed, even an adversary

H

that knows the secret key can't find two inputs that go to the same output. So these two properties give us a nice one-to-one relationship that allows us to use VRFs like hash functions in hash-based data structures. Basically, you can substitute your hash function with a VRF. Okay, but why would you want to use a VRF? It's due to this last property, which is pseudorandomness. What it says is that if someone gives me a VRF hash output, I have no idea what input it corresponds to. Okay?

H

So if I just get the hash value, notice what's happening here: the hasher is computing the proof, then computing the hash by taking proof-to-hash of the proof, and he gives me the VRF hash output. I, as the adversary, have no idea what input this hash corresponds to. I cannot do a dictionary attack, where I take all the inputs in my dictionary, hash them and see if they match this hash value that was given to me. I cannot do this because I don't have the secret key. Okay?

H

So that's why a VRF is actually useful in a lot of these use cases: because it prevents dictionary attacks. Okay. If this isn't clear, please ask me questions in the question-and-answer session, but this is the really important thing: it acts like a hash function that stops dictionary attacks, and this is just the formalization of what I just said. So these two use cases, which are where we started with this, are about preventing dictionary attacks.
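The difference between an unkeyed hash and a keyed one under a dictionary attack can be sketched as below. This is an illustration under loud assumptions: HMAC-SHA-256 stands in for the VRF hash output only to show the effect of the secret key. HMAC is a PRF, not a VRF, so it gives none of the public verifiability discussed in the talk. The dictionary, the key and the function names are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical list of guesses an attacker would try.
DICTIONARY = [b"www", b"mail", b"ftp", b"vpn", b"intranet"]


def plain_hash(name: bytes) -> bytes:
    """Unkeyed hash: anyone can recompute it offline."""
    return hashlib.sha256(name).digest()


def keyed_hash(secret_key: bytes, name: bytes) -> bytes:
    """Keyed stand-in for a VRF output (assumption: HMAC, so no public proof)."""
    return hmac.new(secret_key, name, hashlib.sha256).digest()


def dictionary_attack(target: bytes, hash_fn):
    """Hash every guess with hash_fn and return the guess that matches, if any."""
    for guess in DICTIONARY:
        if hash_fn(guess) == target:
            return guess
    return None


secret_key = b"known only to the hasher"
seen_plain = plain_hash(b"vpn")               # value observed by the attacker
seen_keyed = keyed_hash(secret_key, b"vpn")   # same record, keyed hashing

recovered = dictionary_attack(seen_plain, plain_hash)  # offline guessing works
blocked = dictionary_attack(seen_keyed, plain_hash)    # no key, so no guess matches
```

Without the secret key the attacker has to ask the hasher about every guess, which turns an offline attack into online queries that can be rate-limited or logged.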

H

I just want to briefly show you kind of what the idea is here, forgetting about VRFs for a second. And I'm sorry, there's a public key and a secret key on this slide; they shouldn't be there. In the normal case, we have a hash-based data structure and we have the root of the data structure. The querier will send a query to the hasher, and he'll give him some sort of information from the data structure. In this case I'm drawing a Merkle tree, so he's getting the Merkle path. Anyway, it doesn't matter.

H

He gets some information about the data structure, and he can verify that his input is in the data structure. But if he does this a bunch of times, he starts to learn a lot about the data structure, right? With the second input he gets another branch, and you can imagine he keeps doing this and he can get the whole Merkle tree, and so on. Then he can start to do things like dictionary attacks to try to learn what exactly is present in or absent from this data structure.

H

Okay, so this is where VRFs come in. If, instead of using a regular hash function, we use VRF hashes, then now, when I want to get the answer to a query, I have to ask the hasher to answer the query for me. I'll still get the part of the data structure that I'm querying about, but I'll also get this proof that only the hasher can compute. Okay, and then I'll use that proof to verify,

H

first of all, that the hasher answered me correctly. So I'm going to take the proof, the input and the public key and run the verify function. Then I'm going to get the hash value and check that the hash is in the data structure. So it's just like what you would normally do, except you first need to run the verify function and reject if verify fails. Okay, so this property ensures that the hasher cannot lie to you about the output, and the VRF

H

pseudorandomness property ensures that, because you can't compute hashes on your own, you can't start reversing hashes and trying to enumerate everything in this data structure. That's what we're using it for in NSEC5, and that's how it's being used in CONIKS and key transparency and all these other applications. Okay, so what's new in this draft? There are two VRFs specified in this draft. The more efficient one is the elliptic curve VRF. When we were designing this, we wanted it to be fast.

H

We wanted proofs to be very short, because the proofs are the things that are on the wire. As you can see here, the thing that's traveling around on the wire all the time is the proof, so we really don't want that to be excessively long; for various applications this is important. In order to optimize all this stuff, we did very careful security proofs. You can see at the bottom of the slide I have a link to a paper that we have on the crypto ePrint archive.

H

You can see all the security proofs for all of the VRFs that are specified in this draft. So everything we specify comes with a security proof that you can find there. In terms of the elliptic curve VRF, the way we wrote it is we wrote the general VRF, and then we have ciphersuites. So what curve you want to use and what hash function you want to use are specified in the ciphersuites. For this particular draft,

H

we were very careful to deal with curves with cofactor greater than 1. The Ed25519 curve has a cofactor greater than 1, so we were careful to make sure that we did everything correctly with the cofactor. Our security proofs were also updated to include the cofactor. So that's the one major change that we made here. The other thing that we did is add a key validation function.

H

If you saw my presentation at SAAG, what we were saying there was that if the public key was given to you by a trusted entity, then the VRF was secure. Okay, so if you trust the guy who gave you the VRF public key, then the VRF was secure. With this draft, we found a way to get around that requirement. So now you can validate that the public key that was given to you for the VRF is actually a good public key for a VRF. And why would you care about this, right?

H

Because the adversary is the one who is potentially choosing the public key in some applications, so we want to have a way of verifying that the public key is secure. Okay, so I'm gonna wrap up really quickly with the elliptic curve VRF, just to show you what it is at a very, very high level. Okay, so our secret key is x and our public key is G to the x. What do we do to hash an input? We take the input and we hash it to a point on the curve.

H

That's H. Then we raise H to x, where x is the secret key; that's gamma. And then, if you look at the corner of the screen over here, you can see that the actual hash output is just the hash of this gamma. So we take H to the power of x and compute the hash of that; that's the VRF output. Okay, now I need to tell you what the VRF proof is. The VRF proof is this value gamma (sorry, I said alpha, but I meant gamma).

H

This value gamma is part of the proof, and then there are these two values c and s. What are these things? They are a zero-knowledge proof that gamma and the public key have the same discrete log, so they're raised to the same exponent. Okay, so we attach the zero-knowledge proof that this is the case, and that proves to you that gamma is a valid hash of the input, right? Because gamma is H to the x. So you verify that gamma is H raised to the power of x.

H

You know H, you don't know x, but you use this zero-knowledge proof to verify that. Okay. This is, by the way, the pattern that you see in all the different implementations that we found in different places; the details are in exactly how you specify the cofactor and so on, which we've done in this draft. Okay, so that's it.
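The pattern just described (hash the input to a group element H, compute gamma = H^x, attach values c and s proving that gamma and the public key share the same exponent, and derive the output by hashing gamma) can be sketched as below. This is a toy under loud assumptions, not the draft's elliptic curve VRF: it uses the multiplicative group modulo a tiny safe prime instead of an elliptic curve, the parameters `P`, `Q`, `G` are insecure illustration values, the encodings and function names are hypothetical, and there is no cofactor handling beyond a subgroup check.

```python
import hashlib
import secrets

# Toy group (INSECURE sizes): P = 2*Q + 1 with P and Q prime.
# G = 4 is a square mod P, so it generates the subgroup of prime order Q.
P, Q, G = 2039, 1019, 4


def _enc(*ints: int) -> bytes:
    """Fixed-width encoding of group elements for hashing (assumed format)."""
    return b"".join(i.to_bytes(4, "big") for i in ints)


def hash_to_group(alpha: bytes) -> int:
    """Map an input to a non-identity element of the order-Q subgroup."""
    d = int.from_bytes(hashlib.sha256(b"h2g" + alpha).digest(), "big")
    base = 2 + d % (P - 3)  # base in [2, P-2], so base is not +-1 mod P
    return pow(base, 2, P)  # squaring lands in the subgroup of squares


def _challenge(*ints: int) -> int:
    """128-bit challenge: half of a SHA-256 output."""
    return int.from_bytes(hashlib.sha256(_enc(*ints)).digest()[:16], "big")


def keygen():
    x = 1 + secrets.randbelow(Q - 1)  # secret key in [1, Q-1]
    return x, pow(G, x, P)            # public key is G^x


def prove(x: int, alpha: bytes):
    """Return the proof (gamma, c, s) for input alpha under secret key x."""
    h = hash_to_group(alpha)
    gamma = pow(h, x, P)
    k = 1 + secrets.randbelow(Q - 1)  # fresh nonce for the proof
    c = _challenge(G, h, pow(G, x, P), gamma, pow(G, k, P), pow(h, k, P))
    s = (k - c * x) % Q
    return gamma, c, s


def verify(y: int, alpha: bytes, proof) -> bool:
    """Check that gamma = H^x for the same x as in the public key y = G^x."""
    gamma, c, s = proof
    if not 1 < gamma < P or pow(gamma, Q, P) != 1:
        return False  # gamma must be a non-identity subgroup element
    h = hash_to_group(alpha)
    u = pow(G, s, P) * pow(y, c, P) % P      # equals G^k for an honest proof
    v = pow(h, s, P) * pow(gamma, c, P) % P  # equals H^k for an honest proof
    return c == _challenge(G, h, y, gamma, u, v)


def proof_to_hash(proof) -> str:
    """Unkeyed hash of gamma: the actual VRF output."""
    return hashlib.sha256(_enc(proof[0])).hexdigest()
```

A verifier would call `verify(public_key, alpha, proof)` first and only then use `proof_to_hash(proof)`. Because gamma depends only on the key and the input, two proofs for the same input give the same output even though the nonce differs, which is the uniqueness property from earlier in the talk.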

B

I

H

G

J

Bryan Ford, EPFL. First, I just wanted to support this general effort. I think VRFs are indeed extremely useful. And actually, another application case I'm familiar with: some very nice distributed password protection protocols and algorithms, by Gregory Neven and some others, use VRFs, as far as I know. So that's, I think, yet another interesting application area you can add to the list. Just a question on the trusted versus untrusted public key and the verification function.

H

H

H

J

Okay. I understood Decaf was mostly a mechanism to make sure that you don't get burned by the cofactor in doing arithmetic, so that you canonicalize all points that are the same modulo the cofactor. But I'm not an expert on Decaf, so you could be exactly right. So, yeah.

L

H

So I don't know the numbers for how fast the operations are; for some reason I didn't get them before this meeting. But in terms of the proof: gamma is one elliptic curve point. So if you do point compression, which is what's in the draft, that's 256 bits if you use Ed25519. c is half of a SHA-256 output, so it's 128 bits. Getting this down to 128 bits, we cut it in half.

H

B

K

B

We like to do hums in the IETF, and I believe we do here too, so I'm gonna ask two questions. One: should we adopt this? I say "we'd like to adopt this" and you hum; and then "we should not adopt this", and then you hum. Okay. So let's start with: please hum if you think we should adopt this draft as a CFRG document. And for the opposite: if you think we should not adopt this as a CFRG document, please hum now or forever hold your hum. Okay, I think we're

B

B

J

So this is based on something we've been working on for quite a while now, in various forms. The new development is basically that we finally wrote the first draft of an actual attempt at an actual spec for a simple collective signing algorithm. I won't get deep into the technical details; for that, read the draft if you haven't already. I want to mainly kind of motivate it and talk about applications and why

J

this is interesting, because at this stage we're just trying to see if there's support for working on standardizing this. Now, this is one of the many types of cryptographic algorithms that are motivated by splitting trust and avoiding single points of failure, by building constructions that split trust more widely, so that no single compromised node or participant can compromise the whole system. And collective signing, or aggregate signing, or multisignatures:

J

you have a lot of different variations and several different names. This draft is one of a kind of constellation of possible ways to design collective signatures or multisignatures, but the basic idea is that you have multiple independent parties who want to collaborate to validate and sign some message or statement of some kind. Often this is a threshold group of some kind, t of n. It doesn't have to be; there can be more complex predicates, like, you know, t1 of n1.

J

J

You know, ensuring that many witnesses have seen something, and also eliminating single points of failure. A nice property of Schnorr signatures of the type that RFC 8032 just standardized is that they support this kind of multisignature very easily and efficiently. Again, without getting too much into the technical details, this is just kind of an illustration of the basic way this works. Any Schnorr signature is formally a challenge-response Sigma protocol, which basically operates in three stages.

J

The signer creates a Schnorr commit: it basically picks a random value, little v, and computes G to the v to produce the public commit, big V. It uses a hash function to turn that into a challenge, and then computes a response, which is mathematically related to the private key, which is little k in this case. The classic Schnorr signature is the challenge and the response. Now, RFC 8032 uses the slightly different (R, S) signature format, which is equivalent; formally, the difference doesn't really matter.

J

It's just a representation thing. So the fundamental modification to support multisignatures is quite simple, at least in the basic form. You have multiple signers now; you can have any number. Each signer has to create their own Schnorr commit, so each signer picks a random secret, little v1, little v2, computes a corresponding public commit, big V1, big V2, and sends it to somebody. Let's call it the leader, but it can be anyone; no one has to be specially trusted here.

J

Someone basically combines all the commits together and computes a challenge. I should mention that everybody needs to verify the challenge; there's a bug in the first draft there, which is being fixed, and that's important. So everyone can compute, or recompute and verify, the challenge, and then everybody computes a response corresponding to their part of the commit. And then, when you combine them together in the right way, out comes basically a single standard Schnorr signature, and this can be in either challenge-response form or (R, S) form.

J

The draft is written with (R, S) form to be consistent with RFC 8032, and so it kind of just works. Basically, you can then verify one of these collective signatures against the combined public key of all the signers, all at once, with basically just the cost of a single signature verification. So this is nowhere near new; there's tons of theoretical background on this and on different variants of this scheme.
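The basic flow described above (each signer commits, the commits are combined, one challenge is derived, each signer responds, and the responses are added into a single signature) can be sketched as below. This is a toy under loud assumptions, not the draft's algorithm: it works in the multiplicative group modulo a tiny safe prime rather than on the RFC 8032 curve, the parameters `P`, `Q`, `G` are insecure illustration values, the function names are hypothetical, all signers run inside one function instead of exchanging messages, and the plain product of public keys used here is known to be open to rogue-key attacks unless keys are validated in some other way.

```python
import hashlib
import secrets

# Toy group (INSECURE sizes): P = 2*Q + 1 with P and Q prime; G has order Q.
P, Q, G = 2039, 1019, 4


def challenge(V: int, K: int, msg: bytes) -> int:
    """Hash the combined commit, combined public key and message into a challenge."""
    data = V.to_bytes(4, "big") + K.to_bytes(4, "big") + msg
    return int.from_bytes(hashlib.sha256(data).digest()[:16], "big")


def keygen():
    k = 1 + secrets.randbelow(Q - 1)  # private key, "little k"
    return k, pow(G, k, P)            # public key G^k


def commit():
    v = 1 + secrets.randbelow(Q - 1)  # secret commit, "little v"
    return v, pow(G, v, P)            # public commit, "big V"


def aggregate(elements) -> int:
    """Combine group elements (commits or public keys) by multiplying them."""
    acc = 1
    for e in elements:
        acc = acc * e % P
    return acc


def respond(v: int, k: int, c: int) -> int:
    """One signer's response to challenge c."""
    return (v + c * k) % Q


def collective_sign(private_keys, msg: bytes):
    """Run all signers locally; returns the combined public key and an (R, S)-style pair."""
    K = aggregate(pow(G, k, P) for k in private_keys)
    commits = [commit() for _ in private_keys]
    V = aggregate(Vi for _, Vi in commits)
    c = challenge(V, K, msg)  # in a real run every signer recomputes and checks this
    r = sum(respond(v, k, c) for (v, _), k in zip(commits, private_keys)) % Q
    return K, (V, r)


def verify(K: int, msg: bytes, sig) -> bool:
    """Single Schnorr-style check against the combined key: G^r == V * K^c."""
    V, r = sig
    return pow(G, r, P) == V * pow(K, challenge(V, K, msg), P) % P
```

Verification does one check regardless of how many signers took part, which is the cost property mentioned in the talk. A usage example would be `K, sig = collective_sign([keygen()[0] for _ in range(3)], b"statement")` followed by `verify(K, b"statement", sig)`.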

J

I won't go through these; I'm just going through some applications. Actually, a year or two ago, I can't remember how long ago, I presented this paper, where we were experimenting with making this really scale and applying it as a transparency mechanism. Basically the idea is that you can take kind of any security-critical authority service, maybe the DNSSEC root zone, or a CA, or any protocol

J

you like. If you want to ensure that, if it does something wrong, a lot of people will know, that they will know the complete collection of everything the authority signs, so that the authority can't be coerced into secretly signing some backdoored, evil something-or-other without anybody knowing, well, you attach this group of witnesses, so that the witnesses have to sign too and make sure everything that gets signed gets logged, for better or worse. So, without getting into the details again,

J

Basically, we demonstrated that with the right protocols you can make this extremely scalable: thousands or tens of thousands of participants can work together to collectively sign messages in a matter of a few seconds. I don't think that any immediate application is going to really need that scalability.

J

But it's nice to know we can get it when we want it. Verification cost, in terms of CPU time, goes down by orders of magnitude, depending on the number of verifiers, as you would expect, and of course you also get significant signature size savings. Now, one caveat: the reason that the collective version is not absolutely constant time, as you might expect based on the simple way I explained it, is that in a realistic collective signing scheme,

J

you've got to have a way to tolerate participants that have failed or are not online at the time you want to sign, and so you have to be able to adjust the group of signers down to some set of signers that still satisfies a threshold. So in the design proposed in the draft, we basically have a standard Ed25519 signature plus a bitmask of the actual participants, and the bitmask is part of the signature,

J

in that it's fully checkable: any attempt to change the bitmask will invalidate the signature. But we basically need one bit per participant, so it's still linear space, but way, way smaller.
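A verifier's handling of the participant bitmask might look roughly like the sketch below. The assumptions are mine, not the draft's: a set bit means "this signer took part", bits are numbered least-significant first within each byte, the roster lives in a toy group rather than Ed25519, and all function names are hypothetical.

```python
# Toy Schnorr group for illustration only (far too small to be secure).
P, Q, G = 2039, 1019, 4
ED25519_SIG_BYTES = 64  # size of one standard Ed25519 signature


def mask_bytes(n: int) -> int:
    """Bytes needed for one bit per participant."""
    return (n + 7) // 8


def participants(mask: bytes, n: int) -> list[int]:
    """Indices of roster members whose bit is set in the mask."""
    return [i for i in range(n) if (mask[i // 8] >> (i % 8)) & 1]


def aggregate_key(roster: list[int], mask: bytes) -> int:
    """Combine only the public keys of the signers named in the mask."""
    agg = 1
    for i in participants(mask, len(roster)):
        agg = agg * roster[i] % P
    return agg


def meets_threshold(mask: bytes, n: int, threshold: int) -> bool:
    """Accept only if enough of the roster actually signed."""
    return len(participants(mask, n)) >= threshold


def collective_size(n: int) -> int:
    """Total bytes: one signature plus the bitmask."""
    return ED25519_SIG_BYTES + mask_bytes(n)


roster = [pow(G, x, P) for x in (3, 5, 7, 11)]  # public keys of four signers
mask = bytes([0b1011])                          # signers 0, 1, and 3 took part
print(participants(mask, len(roster)), meets_threshold(mask, len(roster), 3))
print(collective_size(1000), "bytes versus", 1000 * ED25519_SIG_BYTES)
```

Because the verifier derives the aggregate key from the mask, flipping any bit changes the key the signature is checked against, which is one way the mask ends up being checkable. The size comparison is the linear-but-much-smaller point: one bit per participant instead of 64 bytes per participant.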

And although it's not really the emphasis of the draft, and this is optional, we can make this extremely scalable with tree-structured communication, but that's probably not necessary

J

for most small-scale applications, so we'll get into that. Other use cases: the cryptocurrency community has expressed quite a bit of interest in this for compressing the size of transactions by aggregating a bunch of transaction signatures into a smaller space. Again, I won't get into the details.

J

So for details, see the paper; I'm just kind of laying out some of the applications we think are interesting, and I'm sure there are others. I would welcome suggestions: if you know of other applications that I missed, please let us know. Anyway, again, I won't get into a lot of detail on the draft itself.

J

We're not wedded to any particular format. Now, there are plenty of questions, so if the group decides that this is a worthwhile direction to try to standardize, there are of course some issues to be discussed and decided. This is certainly an incomplete list, but these are some of the questions that we already know about. One is that there's a trade-off involving absolutely strict non-malleability of the signatures, which would certainly be desirable for various reasons.

J

Malleable signatures have burned various people in the past, and so, yeah, we would like non-malleability. On the other hand, we can't get strict non-malleability as well as protection against a certain type of internal DoS attack. If somebody goes offline at the wrong time during the signing process, do you have to restart from scratch, or can you continue? If you want to be able to continue, you need some limited degree of malleability. So that's a trade-off, potentially to be discussed.

J

Another important topic is the way to defend against well-known related-key attacks. If I just use the simple algorithm I mentioned and take any collection of public keys, then I'm hosed. It's not secure, because an adversary can compute a malicious public key related to the honest nodes' public keys to cancel them out. That's not good. The standard way to do

J

this is just to make sure that everybody who has a public key in one of these groups has proved knowledge of the corresponding private key. That's kind of standard cryptographic practice anyway; if you're using PGP keys to feed this thing, you're automatically covered. But it's an important caveat.
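The cancellation attack and the proof-of-knowledge defense can be illustrated with a small sketch. Everything here is an assumption for illustration: the toy group parameters, the hypothetical helper names, and the choice to model "proving knowledge of the private key" as an ordinary Schnorr signature over a fixed label.

```python
import hashlib
import secrets

# Toy Schnorr group for illustration only (far too small to be secure).
P, Q, G = 2039, 1019, 4


def challenge(commit: int, pub: int, msg: bytes) -> int:
    data = f"{commit}|{pub}|".encode() + msg
    return int.from_bytes(hashlib.sha512(data).digest(), "big") % Q


def sign(secret: int, pub: int, msg: bytes) -> tuple[int, int]:
    """Plain single-signer Schnorr signature checked against `pub`."""
    v = secrets.randbelow(Q - 1) + 1
    commit = pow(G, v, P)
    return commit, (v + challenge(commit, pub, msg) * secret) % Q


def verify(pub: int, msg: bytes, sig: tuple[int, int]) -> bool:
    commit, s = sig
    return pow(G, s, P) == commit * pow(pub, challenge(commit, pub, msg), P) % P


# An honest signer publishes a normal public key.
honest_secret = secrets.randbelow(Q - 1) + 1
honest_pub = pow(G, honest_secret, P)

# The adversary publishes G^a divided by the honest key, cancelling it out.
rogue_secret = secrets.randbelow(Q - 1) + 1
rogue_pub = pow(G, rogue_secret, P) * pow(honest_pub, -1, P) % P

# The naive aggregate collapses to G^a, so the adversary can sign alone.
agg_pub = honest_pub * rogue_pub % P
forged = sign(rogue_secret, agg_pub, b"evil statement")
forgery_accepted = verify(agg_pub, b"evil statement", forged)


# Defense: every key must come with a proof that its owner knows the secret.
def prove_possession(secret: int, pub: int) -> tuple[int, int]:
    return sign(secret, pub, b"proof-of-possession")


def check_possession(pub: int, proof: tuple[int, int]) -> bool:
    return verify(pub, b"proof-of-possession", proof)


honest_ok = check_possession(honest_pub, prove_possession(honest_secret, honest_pub))
print(forgery_accepted, honest_ok)
```

The adversary does not know the discrete log of `rogue_pub`, since that would require the honest signer's secret, so it has no way to produce a valid proof for that key, and a roster that demands such proofs would reject it before aggregation.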

There is another, alternative way of doing this.

J

If we expect that list to get very big, and we care about the amount of state that the verifier has to know in its root of trust, then we have ways of changing that, again at some cost in complexity and stuff. And there are probably going to be more issues, so I wanted to leave it at that. At the moment the main priority here is to get feedback on, you know, kind of

J

B

M

J

N

Phillip Hallam-Baker. Yeah, I'm very interested in this. I mean, I've not managed to get my head round the crypto yet. What I would like, and what I could really use, is this: at the moment, for encryption, I can split a Diffie-Hellman key into two. I can put a private key in a trusted platform module inside the phone that is unique to the device and never leaves it, and then have a second encryption going on at the application level, so that I don't need to trust what the manufacturer put into the device when it was made.

J

N

J

N

J

That's a subtlety. Whether you need a different verifier is a subtlety that maybe should be added to the issues list. It could be designed so that the Ed25519 verifier core, the core 64-byte Ed25519 signature, is exactly compatible with classic Ed25519. That would have certain downsides, because

J

it would prevent us from messing with what goes into the hash and stuff; we wouldn't get to do that. That would exclude the possibility of adding the hash of the bitmask in there to enforce strict non-malleability, and certain things like that. But that is an option that could be considered, and I'm very open to that. Yeah.

N

I mean, if you can do that, and I can do it as a die, I will take any of the other compromises, because at the moment, with the only way that I can create a signature like that, basically as soon as the secret that was used to create it is known, well, you blow up the whole system. So I'd be very interested if you could. Okay.

J

O

Richard Barnes. Right, it seems like we have a combination here in this draft of some cryptographic constructions and some protocol considerations, and a lot of these issues are kind of at the intersection of those things, and there might be some disjoint pools of expertise in terms of the people we need to review this. I mean, do you think that there is, I thought that's what

K

J

J

The cryptographic part is basically already done and defined and formalized and proven in the collection of papers I cited on an earlier slide. We really haven't added anything to that. The only subtlety is the bitmask and how that interacts with the standard cryptographic construction there.

J

It would be worth looking at that a little bit more closely than we have, but I'm pretty sure that it doesn't fundamentally change anything on the crypto side. So this is mostly intended to be about just defining a standard, usable format in a concrete rather than abstract form, so that I can actually build a signer and verifier for it